Optimizing Brain-Computer Interface Interaction with Augmented Reality

MAR 5, 2026 | 9 MIN READ

Generate Your Research Report Instantly with AI Agent

PatSnap Eureka helps you evaluate technical feasibility & market potential.

BCI-AR Integration Background and Technical Objectives

Brain-Computer Interface (BCI) technology has evolved significantly since its inception in the 1970s, transitioning from basic signal detection systems to sophisticated neural control platforms. Early research focused primarily on medical applications, enabling paralyzed patients to control external devices through thought alone. The field has witnessed remarkable progress in signal acquisition, processing algorithms, and real-time interpretation of neural signals.

Augmented Reality (AR) technology has similarly undergone rapid development, evolving from rudimentary overlay systems to immersive mixed-reality environments. Modern AR platforms leverage advanced computer vision, spatial mapping, and rendering technologies to seamlessly blend digital content with physical environments. The convergence of these two technologies represents a paradigm shift toward more intuitive and natural human-computer interaction.

The integration of BCI and AR technologies addresses fundamental limitations in current interface paradigms. Traditional AR systems rely heavily on manual input methods such as hand gestures, voice commands, or physical controllers, which can be cumbersome and limit the naturalness of interaction. BCI technology offers the potential to eliminate these intermediary steps, enabling direct neural control of AR environments.

Current market drivers include the growing demand for hands-free computing solutions across healthcare, gaming, education, and industrial applications. The global BCI market is projected to grow significantly, while AR adoption continues to expand across consumer and enterprise sectors. This convergence creates unprecedented opportunities for developing more accessible and efficient human-machine interfaces.

The primary technical objective centers on establishing seamless, low-latency communication between neural signals and AR rendering systems. This requires developing robust signal processing algorithms capable of interpreting complex neural patterns in real-time while maintaining high accuracy and reliability. Signal-to-noise ratio optimization remains critical for practical implementation.
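Signal-to-noise ratio is conventionally reported in decibels as 10·log10(P_signal / P_noise), where each P is a mean power. A minimal sketch, assuming the signal and noise components are available as separate sample lists (as in a synthetic benchmark); the function names, sampling rate, and the deterministic stand-in for noise are all hypothetical:

```python
import math

def mean_power(samples):
    """Average power: the mean of the squared sample amplitudes."""
    return sum(x * x for x in samples) / len(samples)

def snr_db(signal, noise):
    """Signal-to-noise ratio in decibels: 10 * log10(P_signal / P_noise)."""
    return 10.0 * math.log10(mean_power(signal) / mean_power(noise))

fs = 250  # assumed sampling rate in Hz
# Unit-amplitude 10 Hz sinusoid over one second: mean power 0.5
signal = [math.sin(2 * math.pi * 10 * n / fs) for n in range(fs)]
# Deterministic alternating "noise" with mean power 0.05 (stand-in for real noise)
noise = [math.sqrt(0.05) * (1 if n % 2 == 0 else -1) for n in range(fs)]
print(round(snr_db(signal, noise), 2))  # 10.0, since the power ratio is 10
```

Every 10 dB corresponds to a tenfold power ratio, which is why small dB gains from better electrodes or filtering can matter substantially for decoding.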

Secondary objectives include creating adaptive learning systems that can personalize BCI-AR interactions based on individual neural patterns and usage behaviors. The development of standardized protocols for BCI-AR integration is essential for ensuring interoperability across different hardware platforms and software ecosystems.

Long-term goals encompass achieving natural, intuitive control mechanisms that feel as effortless as traditional thought processes. This includes developing predictive algorithms that can anticipate user intentions, reducing cognitive load and improving overall user experience. The ultimate vision involves creating transparent interfaces where the boundary between thought and digital interaction becomes imperceptible.

Market Demand for BCI-Enhanced AR Applications

The convergence of brain-computer interfaces and augmented reality represents a transformative technological frontier with substantial market potential across multiple sectors. Healthcare applications demonstrate the strongest immediate demand, particularly in neurorehabilitation and assistive technologies for individuals with motor disabilities. Medical institutions increasingly seek BCI-AR solutions that enable paralyzed patients to interact with digital environments through neural signals, creating immersive therapeutic experiences that accelerate recovery processes.

Gaming and entertainment industries exhibit rapidly growing interest in BCI-enhanced AR applications, driven by consumer demand for more immersive and intuitive interaction paradigms. Early adopters in this sector are exploring neural control mechanisms for gaming interfaces, where users can manipulate virtual objects and navigate augmented environments through thought patterns alone. This market segment shows particular enthusiasm for applications that eliminate traditional input devices, creating seamless mind-to-digital experiences.

Industrial and manufacturing sectors present significant opportunities for BCI-AR integration, especially in complex assembly operations and quality control processes. Manufacturing companies are investigating hands-free AR interfaces controlled by neural signals, enabling workers to access technical documentation, receive real-time guidance, and control machinery while maintaining full use of their hands for physical tasks. This application area addresses critical productivity and safety concerns in industrial environments.

Educational technology markets demonstrate growing demand for BCI-AR solutions that enhance learning experiences through direct neural feedback mechanisms. Educational institutions and training organizations are exploring applications that monitor cognitive load, attention levels, and learning states in real time, enabling personalized AR content delivery based on neural responses. This approach could substantially improve how skills are acquired and how knowledge is retained.

Military and defense applications represent a specialized but high-value market segment, where BCI-AR systems can provide tactical advantages through enhanced situational awareness and rapid information processing. Defense contractors are developing neural interfaces that allow personnel to control multiple AR displays simultaneously while maintaining operational effectiveness in complex environments.

The overall market trajectory indicates accelerating adoption across these sectors, driven by advances in neural signal processing, miniaturization of BCI hardware, and improved AR display technologies. Market barriers include regulatory approval processes, particularly in healthcare applications, and the need for standardized protocols ensuring reliable neural signal interpretation across diverse user populations.

Current BCI-AR Interface Challenges and Limitations

The integration of brain-computer interfaces with augmented reality systems faces significant technical barriers that currently limit widespread adoption and optimal performance. Signal acquisition represents one of the most fundamental challenges, as current BCI technologies struggle with low signal-to-noise ratios and inconsistent neural signal quality. Non-invasive methods like EEG provide limited bandwidth and spatial resolution, while invasive approaches, though offering better signal fidelity, present substantial safety and practical implementation concerns for consumer applications.
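One widely used software mitigation for noise shared across a multi-channel EEG recording is the common average reference (CAR): at each time sample, the mean across all electrodes is subtracted from every electrode, removing components that appear equally on all of them. A minimal sketch, assuming channels arrive as equal-length lists of samples; the function name and example data are hypothetical. CAR cannot recover spatial detail the electrodes never captured, so it complements rather than replaces better acquisition hardware.

```python
def common_average_reference(channels):
    """Re-reference multi-channel data to the instantaneous mean of all channels.

    channels: list of per-electrode sample lists, all the same length.
    """
    if not channels:
        raise ValueError("need at least one channel")
    n_samples = len(channels[0])
    if any(len(ch) != n_samples for ch in channels):
        raise ValueError("all channels must have the same number of samples")
    n_channels = len(channels)
    # Mean across electrodes at each time point (the common-mode component)
    means = [sum(ch[t] for ch in channels) / n_channels for t in range(n_samples)]
    # Subtract that shared component from every electrode
    return [[ch[t] - means[t] for t in range(n_samples)] for ch in channels]

# Hypothetical: three electrodes whose local activity sums to zero at each sample,
# each contaminated by the same interference offset of 5.0
local = [[1.0, 2.0, 3.0], [0.0, 0.0, 0.0], [-1.0, -2.0, -3.0]]
recorded = [[v + 5.0 for v in ch] for ch in local]
cleaned = common_average_reference(recorded)
```

In this toy case the interference is removed exactly; with real data, CAR also removes any genuine brain activity that happens to be shared by all electrodes.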

Latency issues pose critical obstacles for seamless BCI-AR interaction. Current systems typically exhibit delays ranging from 100 to 500 milliseconds between neural signal detection and AR response execution. This latency creates a disconnect between user intention and visual feedback, leading to frustration and reduced system usability. The computational overhead required for real-time neural signal processing, combined with AR rendering demands, exacerbates these timing constraints.
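A simple way to reason about these delays is an end-to-end latency budget: sum the per-stage delays and check which stage dominates. The sketch below uses hypothetical stage names and millisecond values chosen only to fall inside the 100 to 500 ms range above; they are not measurements. Counting the whole EEG analysis window as delay is a simplifying assumption (overlapping sliding windows can shorten the effective wait), and the 250 ms target is likewise assumed.

```python
def latency_budget(stages_ms, budget_ms):
    """Return total latency, whether it meets the budget, and the largest stage."""
    total = sum(stages_ms.values())
    dominant = max(stages_ms, key=stages_ms.get)
    return total, total <= budget_ms, dominant

# Hypothetical per-stage latencies (ms) for one neural command
stages = {
    "eeg_analysis_window": 200.0,     # samples buffered before a decision is made
    "filtering_and_features": 5.0,
    "classification": 10.0,
    "wireless_transfer": 20.0,
    "ar_frame_render": 1000.0 / 90,   # one frame at an assumed 90 Hz refresh rate
}
total, ok, dominant = latency_budget(stages, budget_ms=250.0)
print(f"{total:.1f} ms, within budget: {ok}, dominant stage: {dominant}")
# 246.1 ms, within budget: True, dominant stage: eeg_analysis_window
```

With these assumed numbers, rendering and classification are minor contributors; shortening the analysis window, at some cost in decoding accuracy, would be the main lever.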

Calibration complexity presents another major limitation, as BCI systems require extensive user-specific training periods that can span several hours or days. Individual neural patterns vary significantly between users, necessitating personalized algorithms and frequent recalibration sessions. This requirement creates substantial barriers to user adoption and limits the technology's practical deployment in commercial environments.
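One way to reduce recalibration is to let the decoder adapt during use. The sketch below is a hypothetical nearest-class-mean decoder that nudges each class mean toward newly labeled samples with an exponential moving average, so a short initial calibration can be refined over time. It assumes labels are available during use (for example from cued trials or selections the user confirms); that assumption carries most of the weight, and the class names, features, and rate are illustrative.

```python
import math

class AdaptiveNearestMean:
    """Nearest-class-mean decoder whose class means track drifting neural features."""

    def __init__(self, initial_means, rate=0.1):
        # initial_means: dict mapping label -> feature vector from a short calibration
        self.means = {label: list(vec) for label, vec in initial_means.items()}
        self.rate = rate  # fraction of the gap closed per labeled sample

    def predict(self, x):
        """Return the label whose mean is closest (Euclidean) to feature vector x."""
        return min(self.means, key=lambda label: math.dist(x, self.means[label]))

    def update(self, x, label):
        """Move the mean for `label` a fraction `rate` of the way toward x."""
        mean = self.means[label]
        self.means[label] = [m + self.rate * (xi - m) for m, xi in zip(mean, x)]

decoder = AdaptiveNearestMean({"left": [1.0, 0.0], "right": [0.0, 1.0]}, rate=0.5)
drifted = [1.2, 1.0]             # a "right" trial after the user's features have drifted
before = decoder.predict(drifted)  # initially misread as "left"
for _ in range(3):
    decoder.update(drifted, "right")
after = decoder.predict(drifted)   # now decoded as "right"
```

A smaller `rate` adapts more slowly but is less sensitive to occasional mislabeled samples.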

Accuracy and reliability concerns persist across current BCI-AR implementations. Classification accuracy for neural commands typically ranges between 70% and 85% under optimal laboratory conditions, but degrades significantly in real-world environments due to artifacts, user fatigue, and environmental interference. This performance variability undermines user confidence and limits practical applications to simple command sets.
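Accuracy alone understates what errors cost. A standard way to combine accuracy P, number of commands N, and selection time is the Wolpaw information transfer rate, with bits per selection B = log2 N + P log2 P + (1 - P) log2((1 - P)/(N - 1)); the model assumes equally likely commands and errors spread evenly over the other N - 1 commands. A sketch with hypothetical function names; treating at-or-below-chance accuracy as zero bits is a convention assumed here.

```python
import math

def wolpaw_itr_bits(n_classes, accuracy):
    """Bits conveyed per selection under the Wolpaw information-transfer-rate model."""
    if n_classes < 2:
        raise ValueError("need at least two classes")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must lie in [0, 1]")
    if accuracy <= 1.0 / n_classes:
        return 0.0  # assumed convention: chance-level or worse carries no information
    if accuracy == 1.0:
        return math.log2(n_classes)  # error term vanishes; avoids log2(0)
    p, n = accuracy, n_classes
    return math.log2(n) + p * math.log2(p) + (1 - p) * math.log2((1 - p) / (n - 1))

def itr_bits_per_minute(n_classes, accuracy, seconds_per_selection):
    """Scale bits per selection to bits per minute."""
    return wolpaw_itr_bits(n_classes, accuracy) * 60.0 / seconds_per_selection
```

For four commands, 85% accuracy yields about 1.15 bits per selection, while 70% yields about 0.64 bits, so the drop across the range quoted above roughly halves the usable information per selection.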

User comfort and wearability constraints further restrict current BCI-AR systems. Existing hardware configurations often require cumbersome headsets, multiple sensors, and extensive setup procedures. The physical burden of wearing multiple devices simultaneously, combined with concerns about electromagnetic interference between BCI sensors and AR displays, creates ergonomic challenges that impede long-term usage scenarios and mainstream market acceptance.

Existing BCI-AR Integration Solutions

01 Signal acquisition and processing methods for brain-computer interfaces

Brain-computer interface systems require effective methods for acquiring and processing neural signals. These methods involve capturing brain activity through various sensors and electrodes, then applying signal processing techniques to extract meaningful information. Advanced algorithms are used to filter noise, amplify relevant signals, and convert raw brain data into interpretable formats that can be used for control commands or communication purposes.
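A common first step in turning raw EEG into interpretable features is to remove the constant (DC) offset and measure power in a frequency band of interest. The sketch below does this with a naive discrete Fourier transform so it runs with the standard library alone; production pipelines would typically use FFT-based spectral estimates and proper band-pass filters. Function names, the sampling rate, and the synthetic signal are hypothetical.

```python
import cmath
import math

def remove_mean(x):
    """Subtract the average value, removing the constant (DC) offset."""
    m = sum(x) / len(x)
    return [v - m for v in x]

def band_power(x, fs, f_lo, f_hi):
    """Unnormalized periodogram power in [f_lo, f_hi] Hz via a naive O(n^2) DFT."""
    x = remove_mean(x)
    n = len(x)
    total = 0.0
    for k in range(n // 2 + 1):
        freq = k * fs / n  # frequency of DFT bin k
        if f_lo <= freq <= f_hi:
            coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            total += abs(coeff) ** 2 / n
    return total

fs = 250  # assumed sampling rate in Hz
t = [i / fs for i in range(fs)]  # one second of samples
# Synthetic channel: DC offset + strong 10 Hz rhythm + weaker 25 Hz component
raw = [3.0 + 2.0 * math.sin(2 * math.pi * 10 * ti) + 0.5 * math.sin(2 * math.pi * 25 * ti)
       for ti in t]
alpha = band_power(raw, fs, 8, 12)   # band containing the 10 Hz rhythm
beta = band_power(raw, fs, 20, 30)   # band containing the 25 Hz component
```

Band powers like `alpha` and `beta` are typical inputs to the classifiers described in the next items.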

- Machine learning and pattern recognition for brain signal interpretation: Machine learning algorithms and pattern recognition techniques are essential for interpreting brain signals in interface systems. These methods enable the system to learn from training data and recognize specific brain patterns associated with different intentions or commands. Deep learning models and neural networks can be trained to classify brain states, decode motor imagery, and translate neural activity into actionable outputs with high accuracy.

- Feedback mechanisms and adaptive control systems: Effective brain-computer interfaces incorporate feedback mechanisms that provide users with information about system performance and enable adaptive control. These systems can adjust their parameters based on user performance and changing brain states. Feedback can be visual, auditory, or tactile, helping users improve their control over the interface through practice and real-time adjustments to enhance interaction efficiency.

- Multi-modal integration and hybrid interface architectures: Advanced brain-computer interfaces often combine multiple input modalities and hybrid architectures to improve performance and reliability. These systems may integrate electroencephalography with other biosignals or combine brain signals with conventional input methods. Multi-modal approaches can compensate for limitations of single-modality systems and provide more robust and versatile interaction capabilities for diverse applications.

- Application-specific interface designs and user training protocols: Brain-computer interfaces are designed for specific applications such as assistive technology, rehabilitation, gaming, or communication systems. Each application requires tailored interface designs and user training protocols to optimize performance. Training methods help users develop the mental strategies needed to generate consistent and distinguishable brain patterns, while interface designs are customized to meet the specific requirements of the target application domain.
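As an illustration of the multi-modal bullet above, consider a hybrid selector in which an eye tracker and an EEG decoder each output a probability over the same AR targets. A minimal late-fusion sketch multiplies the two and renormalizes. That rule is exact Bayesian fusion only under two assumptions stated here: the modalities are conditionally independent given the intended target, and all targets are equally likely beforehand. Target names and probabilities are hypothetical.

```python
def fuse_modalities(*modalities):
    """Combine per-target probabilities from several input modalities.

    Assumes conditional independence and a uniform prior over targets, under
    which the posterior is proportional to the product of per-modality posteriors.
    """
    if not modalities:
        raise ValueError("need at least one modality")
    targets = list(modalities[0])
    scores = {t: 1.0 for t in targets}
    for probs in modalities:
        for t in targets:
            scores[t] *= probs[t]  # raises KeyError if a modality omits a target
    total = sum(scores.values())
    if total == 0.0:
        raise ValueError("modalities assign zero joint probability to every target")
    return {t: s / total for t, s in scores.items()}

gaze = {"menu": 0.5, "close": 0.4, "help": 0.1}  # hypothetical eye-tracker output
eeg = {"menu": 0.3, "close": 0.6, "help": 0.1}   # hypothetical EEG-decoder output
fused = fuse_modalities(gaze, eeg)
best = max(fused, key=fused.get)
```

Gaze alone would pick "menu"; combined with the EEG evidence, "close" wins with a fused probability of 0.6, showing how one modality can compensate for ambiguity in another.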

02 Machine learning and pattern recognition for brain signal interpretation

Machine learning algorithms and pattern recognition techniques are essential for interpreting brain signals in interface systems. These methods enable the system to learn from training data and recognize specific brain patterns associated with different intentions or commands. Deep learning models and neural networks are employed to improve accuracy and reduce latency in translating brain activity into actionable outputs, enhancing the overall user experience.

03 Feedback mechanisms and closed-loop control systems

Effective brain-computer interfaces incorporate feedback mechanisms that provide users with real-time information about their brain activity and system responses. Closed-loop control systems enable bidirectional communication between the brain and external devices, allowing for adaptive adjustments based on user performance. These systems enhance user training, improve control accuracy, and facilitate more natural interaction between the user and the interface.

04 Hardware design and electrode configurations

The physical design of brain-computer interface hardware plays a crucial role in system performance. This includes the development of specialized electrode arrays, sensor configurations, and wearable devices that can reliably capture brain signals while maintaining user comfort. Innovations in materials science and miniaturization enable the creation of more portable and user-friendly interface devices with improved signal quality and reduced interference.

05 Application-specific interface systems and control paradigms

Brain-computer interfaces are being developed for various specific applications, each requiring tailored control paradigms and interaction methods. These applications range from assistive technologies for individuals with disabilities to gaming, virtual reality, and robotic control. Different control strategies are implemented based on the specific requirements of each application, including motor imagery, visual evoked potentials, and cognitive state monitoring to enable intuitive and efficient user interaction.
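For the visual-evoked-potential paradigm, a common variant in AR is the steady-state visual evoked potential (SSVEP): each selectable target flickers at its own frequency, and the attended target appears as extra EEG power at that frequency and its harmonics. Published SSVEP decoders often use canonical correlation analysis; the sketch below swaps in a simpler power-based detector built on the Goertzel algorithm, which computes the spectral power at one chosen frequency. Function names, candidate frequencies, and the synthetic signal are hypothetical.

```python
import math

def goertzel_power(x, fs, freq):
    """Squared DTFT magnitude of x at `freq` Hz, computed with the Goertzel recurrence."""
    omega = 2.0 * math.pi * freq / fs
    coeff = 2.0 * math.cos(omega)
    s_prev, s_prev2 = 0.0, 0.0
    for sample in x:
        s = sample + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    # After the loop, s_prev and s_prev2 hold the last two recurrence states
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2

def detect_ssvep(x, fs, candidates, harmonics=2):
    """Return the candidate flicker frequency with the most power at its fundamental and harmonics."""
    def score(f):
        return sum(goertzel_power(x, fs, h * f) for h in range(1, harmonics + 1))
    return max(candidates, key=score)

fs = 250  # assumed sampling rate in Hz
# Synthetic one-second recording while attending a 12 Hz target, plus an unrelated 7 Hz rhythm
eeg = [math.sin(2 * math.pi * 12 * n / fs) + 0.5 * math.sin(2 * math.pi * 24 * n / fs)
       + 0.3 * math.sin(2 * math.pi * 7 * n / fs) for n in range(fs)]
detected = detect_ssvep(eeg, fs, candidates=[8, 10, 12, 15])
```

Goertzel is attractive here because only a handful of frequencies need to be evaluated, which keeps per-decision computation small on a head-worn device.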

Key Players in BCI and AR Industry Ecosystem

The brain-computer interface and augmented reality integration field represents an emerging technology sector in its early developmental stage, characterized by significant growth potential but limited commercial maturity. The market remains relatively nascent with fragmented players ranging from tech giants to specialized startups and academic institutions. Technology maturity varies considerably across participants, with established companies like Apple and Hyundai Motor leveraging existing hardware capabilities, while specialized firms such as Cognixion Corp., MindPortal, and Naqi Logix focus on dedicated BCI solutions. Academic institutions including Tsinghua University, Beijing Institute of Technology, and Zhejiang University contribute foundational research, while companies like SenseTime provide AI infrastructure support. The competitive landscape reflects a convergence of multiple disciplines, with participants approaching the challenge from different technological angles, suggesting the field is still defining optimal integration pathways between neural interfaces and augmented reality systems.

Apple, Inc.

Technical Solution: Apple's AR frameworks, including ARKit, provide robust spatial tracking and environmental understanding capabilities on which brain-computer interface integration can build. Its approach focuses on a seamless user experience through optimized hardware-software integration, with custom silicon such as the M-series chips positioned to handle low-latency processing of neural signals alongside AR rendering. Machine learning algorithms can interpret brain signals and translate them into intuitive AR interactions, enabling users to control virtual objects through thought patterns with minimal cognitive load.

Strengths: Exceptional hardware-software integration and user experience design. Weaknesses: Limited openness for third-party BCI hardware integration and high cost barriers.

Cognixion Corp.

Technical Solution: Cognixion has developed the ONE platform, a comprehensive brain-computer interface system specifically designed for augmented reality applications. Their technology combines non-invasive EEG sensors with advanced signal processing algorithms to enable direct neural control of AR interfaces. The system utilizes machine learning models trained on individual user patterns to achieve high accuracy in intention recognition, allowing users to navigate AR environments, select objects, and execute commands through thought alone. The platform includes real-time feedback mechanisms and adaptive learning capabilities to improve performance over time.

Strengths: Specialized focus on BCI-AR integration with proven commercial applications. Weaknesses: Limited to non-invasive methods which may have lower signal fidelity compared to invasive approaches.

Core Patents in Neural Signal Processing for AR

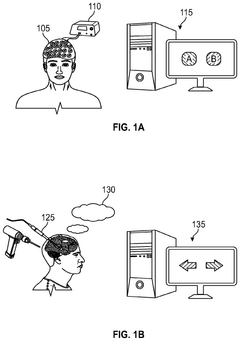

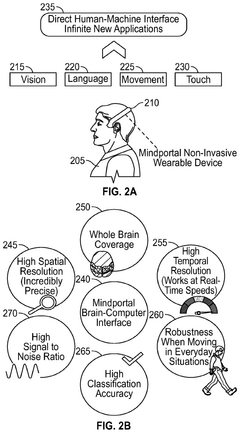

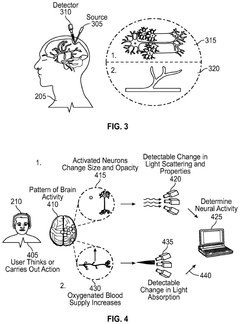

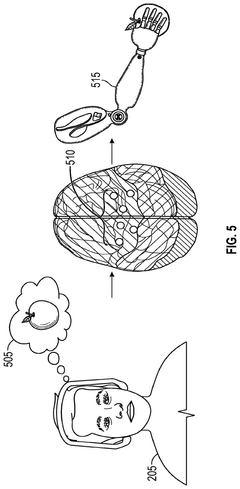

Systems and methods that involve BCI (brain computer interface), extended reality and/or eye-tracking devices, detect mind/brain activity, generate and/or process saliency maps, eye-tracking information and/or various control(s) or instructions, implement mind-based selection of UI elements and/or perform other features and functionality

Patent Pending | US20250004558A1

Innovation

- A non-invasive brain-computer interface system that acquires and decodes brain signals optically. Wearable optodes detect neuronal and haemodynamic changes, enabling high-resolution collection and decoding of neural activity associated with thoughts, including visual attention and intended actions, so that users can interact precisely with UI elements in mixed reality environments.
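Optical brain-signal acquisition of this kind typically builds on functional near-infrared spectroscopy (fNIRS), where haemodynamic changes are recovered from light attenuation using the modified Beer-Lambert law. The patent summary does not say which model it uses, so the following is the textbook relation rather than the patented method. For each wavelength λ, the change in optical density between times t₀ and t depends on changes in oxy- and deoxyhaemoglobin concentration, the extinction coefficients ε, the source-detector separation d, and the differential pathlength factor DPF; measuring two wavelengths gives a 2 × 2 system that can be inverted:

```latex
\Delta OD(\lambda) = -\log_{10}\frac{I(\lambda, t)}{I(\lambda, t_0)}
  = \bigl(\varepsilon_{\mathrm{HbO}}(\lambda)\,\Delta[\mathrm{HbO}]
        + \varepsilon_{\mathrm{HbR}}(\lambda)\,\Delta[\mathrm{HbR}]\bigr)\, d\,\mathrm{DPF}(\lambda)

\begin{pmatrix} \Delta[\mathrm{HbO}] \\ \Delta[\mathrm{HbR}] \end{pmatrix}
  = \frac{1}{d}
    \begin{pmatrix}
      \varepsilon_{\mathrm{HbO}}(\lambda_1) & \varepsilon_{\mathrm{HbR}}(\lambda_1) \\
      \varepsilon_{\mathrm{HbO}}(\lambda_2) & \varepsilon_{\mathrm{HbR}}(\lambda_2)
    \end{pmatrix}^{-1}
    \begin{pmatrix}
      \Delta OD(\lambda_1) / \mathrm{DPF}(\lambda_1) \\
      \Delta OD(\lambda_2) / \mathrm{DPF}(\lambda_2)
    \end{pmatrix}
```

The logarithm base must match the units of the tabulated extinction coefficients (decadic here); the matrix is invertible only when the two wavelengths weight HbO and HbR differently, which is why fNIRS systems choose wavelengths on either side of the haemoglobin isosbestic point.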

Multi-functional brain-computer interface apparatus using augmented reality

Patent Inactive | KR1020240055528A

Innovation

- A brain-computer interface device that uses both spontaneous and stimulation-based brain waves, with a processor that generates control signals from these waves to drive the augmented reality display output according to the user's intentions; it employs electrodes, an augmented reality display, and neural networks for classification.
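The summary names neural networks for classification without further detail. As a much smaller stand-in that shows the same train-then-classify principle, the sketch below fits a logistic-regression decoder by batch gradient descent on two hypothetical band-power features for two mental commands; a neural network would replace the single linear layer with several nonlinear ones. Function names, data, learning rate, and epoch count are all assumptions for illustration.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_logistic(features, labels, lr=0.5, epochs=500):
    """Fit weights and bias by batch gradient descent on the mean log-loss."""
    n_feat = len(features[0])
    w, b = [0.0] * n_feat, 0.0
    m = len(features)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_feat, 0.0
        for x, y in zip(features, labels):
            # Gradient of log-loss w.r.t. the linear score is (predicted - actual)
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        w = [wi - lr * g / m for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / m
    return w, b

def predict(w, b, x):
    """Return class 1 if the predicted probability is at least 0.5, else class 0."""
    return int(sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5)

# Hypothetical trials: two band-power features per trial, two mental commands (0 and 1)
X = [[1.0, 3.0], [1.2, 2.8], [0.9, 3.2], [3.0, 1.0], [2.8, 1.2], [3.1, 0.8]]
y = [0, 0, 0, 1, 1, 1]
w, b = train_logistic(X, y)
```

A linear model like this is often a reasonable baseline for band-power features; the added capacity of a neural network mainly pays off with richer inputs such as raw multi-channel time series.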

Neurotechnology Safety and Privacy Regulations

The integration of brain-computer interfaces with augmented reality systems presents unprecedented challenges in neurotechnology safety and privacy regulation. Current regulatory frameworks, primarily developed for traditional medical devices, struggle to address the unique risks posed by direct neural data acquisition and real-time AR processing. The FDA's existing Class II and Class III medical device classifications inadequately capture the complexity of BCIs that continuously monitor neural signals while simultaneously delivering augmented sensory information.

Privacy regulations face particular challenges when addressing neural data, arguably the most intimate form of personal information. The European Union's General Data Protection Regulation provides some foundation through its biometric data protections, but lacks specific provisions for neural signal processing. The California Consumer Privacy Act, although amended in 2024 to classify neural data as sensitive personal information, similarly falls short in addressing the continuous, high-resolution nature of brain data collection inherent in BCI-AR systems.

International regulatory bodies are beginning to recognize these gaps. The International Organization for Standardization has initiated working groups focused on neurotechnology standards, and IEEE standards work such as the P2857 project on privacy engineering is relevant to neural data. However, these efforts remain in early stages and lack harmonization across jurisdictions.

Key regulatory concerns center on informed consent mechanisms for neural data collection, data minimization principles in continuous monitoring scenarios, and cross-border data transfer restrictions for cloud-processed neural information. Deletion adds further complexity: patterns learned from a user's neural recordings can remain embedded in trained machine learning models even after the raw data are erased, making traditional deletion requirements technically difficult to satisfy.

Emerging regulatory approaches suggest a risk-based framework that considers both the invasiveness of neural monitoring and the sensitivity of augmented information delivery. This includes mandatory impact assessments for neural privacy, algorithmic transparency requirements for BCI signal processing, and user control mechanisms for neural data sharing. The regulatory landscape continues evolving as policymakers balance innovation promotion with fundamental privacy and safety protections in this transformative technology domain.

Ethical Framework for Neural-Augmented Reality Systems

The integration of brain-computer interfaces with augmented reality systems presents unprecedented ethical challenges that require comprehensive frameworks to address privacy, autonomy, and human dignity concerns. Neural-augmented reality systems operate at the intersection of cognitive enhancement and immersive technology, creating unique vulnerabilities in mental privacy and psychological manipulation that traditional ethical guidelines cannot adequately address.

Privacy protection in neural-augmented reality environments extends beyond conventional data security to encompass neural data sovereignty and cognitive liberty. The intimate nature of brain signals requires establishing strict protocols for neural data collection, storage, and processing, ensuring users maintain absolute control over their cognitive information. Consent mechanisms must evolve to address the dynamic nature of neural interfaces, implementing continuous consent protocols that account for changing mental states and long-term cognitive effects.

Autonomy preservation becomes critically important when external systems can directly influence cognitive processes and perceptual experiences. Ethical frameworks must establish clear boundaries between therapeutic enhancement and cognitive manipulation, ensuring users retain authentic decision-making capabilities. The potential for subliminal influence through augmented reality overlays combined with neural feedback creates risks of coercive persuasion that require robust safeguards.

Equity and accessibility considerations demand that neural-augmented reality benefits do not exacerbate existing social inequalities or create new forms of cognitive stratification. Frameworks must address the digital divide implications of neural enhancement technologies, ensuring fair distribution of cognitive augmentation capabilities across diverse populations and preventing the emergence of enhanced versus non-enhanced social classes.

Safety protocols must encompass both immediate physical risks and long-term neuroplasticity effects of prolonged neural-augmented reality exposure. Ethical guidelines should mandate comprehensive longitudinal studies to understand the psychological and neurological impacts of sustained brain-computer interface usage in augmented environments, establishing evidence-based usage limits and monitoring requirements.

Transparency and explainability requirements become essential when neural interfaces influence cognitive processes through augmented reality systems. Users must understand how their neural data influences system responses and how augmented content may affect their perceptions and decisions, ensuring informed participation in neural-augmented experiences while maintaining cognitive agency and self-determination.
