Real-time emotion-adaptive interfaces using Brain-Computer Interfaces technology

SEP 2, 2025 | 9 MIN READ

BCI Emotion Detection Background and Objectives

Brain-Computer Interface (BCI) technology has evolved significantly over the past three decades, transitioning from rudimentary signal detection to sophisticated systems capable of interpreting complex neural patterns. The integration of emotion detection capabilities represents a pivotal advancement in this field, enabling machines to respond to users' affective states in real-time. This technological progression has been driven by interdisciplinary collaboration among neuroscience, computer science, signal processing, and psychology, creating a rich ecosystem for innovation.

The evolution of BCI emotion detection can be traced through several key developmental phases. Early systems (1990s to early 2000s) focused primarily on binary classification of basic emotional states using electroencephalography (EEG). The mid-2000s witnessed the incorporation of machine learning algorithms, enhancing pattern recognition capabilities. Recent advancements (2015-present) have leveraged deep learning architectures and multimodal approaches, significantly improving detection accuracy and expanding the range of recognizable emotional states.

Current research trends indicate a growing interest in developing more naturalistic and ecologically valid emotion detection paradigms. This shift acknowledges the complex, dynamic nature of human emotions and seeks to move beyond laboratory-constrained models toward systems that function effectively in real-world environments. Additionally, there is increasing focus on reducing the latency between emotional state changes and system response, a critical factor for creating truly adaptive interfaces.

The primary objective of real-time emotion-adaptive interfaces using BCI technology is to establish seamless, intuitive human-computer interaction paradigms that respond dynamically to users' emotional states. This encompasses several specific goals: achieving high accuracy in emotion classification across diverse user populations; minimizing detection latency to enable truly responsive systems; developing unobtrusive, user-friendly hardware solutions; and ensuring robust performance in varied environmental conditions.

Beyond technical objectives, this technology aims to enhance user experience across multiple domains, including healthcare (therapeutic interventions for emotional disorders), education (adaptive learning environments), entertainment (immersive gaming experiences), and workplace productivity (stress-responsive interfaces). The ultimate vision is to create computing systems that can recognize and appropriately respond to the full spectrum of human emotional experiences, fundamentally transforming how humans interact with technology.

The convergence of advances in sensor technology, computational methods, and neuroscientific understanding of emotion processing pathways has created unprecedented opportunities for realizing these objectives. However, significant challenges remain in terms of signal quality, individual variability in neural signatures of emotion, and ethical considerations surrounding emotional privacy and autonomy.

Market Analysis for Emotion-Adaptive Interfaces

The global market for emotion-adaptive interfaces using Brain-Computer Interface (BCI) technology is experiencing significant growth, driven by advancements in neuroscience, artificial intelligence, and sensor technologies. Current market estimates value this sector at approximately $2.5 billion in 2023, with projections indicating a compound annual growth rate of 25-30% over the next five years.

Healthcare represents the largest market segment, accounting for roughly 40% of the current market share. Applications include therapeutic tools for mental health conditions, rehabilitation systems, and assistive technologies for patients with communication difficulties. The ability to adapt interfaces based on emotional states has shown particular promise in treating anxiety disorders, depression, and ADHD.

The gaming and entertainment industry has emerged as the fastest-growing segment, with a 35% year-over-year growth rate. Major gaming companies are investing heavily in emotion-adaptive gameplay that adjusts difficulty, narrative, and environmental factors based on the player's emotional responses, creating more immersive and personalized experiences.

Consumer electronics manufacturers are increasingly incorporating emotion-sensing capabilities into wearable devices and smart home systems. This segment currently represents about 15% of the market but is expected to grow substantially as the technology becomes more accessible and affordable for everyday consumers.

The automotive sector has shown strong interest in emotion-adaptive interfaces for driver monitoring systems that can detect fatigue, stress, or distraction. This application addresses critical safety concerns and aligns with the industry's movement toward semi-autonomous and autonomous vehicles.

Regional analysis reveals North America leading with approximately 45% market share, followed by Europe (25%) and Asia-Pacific (20%). However, the Asia-Pacific region is expected to demonstrate the highest growth rate in the coming years due to increasing technology adoption and substantial investments in BCI research by countries like China, Japan, and South Korea.

Key market challenges include privacy concerns regarding emotional data collection, regulatory uncertainties across different jurisdictions, and the current high cost of advanced BCI hardware. Consumer acceptance remains another significant barrier, with surveys indicating that approximately 60% of potential users express concerns about devices that can detect and respond to their emotional states.

Despite these challenges, market analysts predict that as the technology matures and becomes more affordable, emotion-adaptive interfaces will increasingly penetrate mainstream consumer applications, potentially reaching a market value of $12-15 billion by 2030.
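
As a rough consistency check, you can reproduce the 2030 range by compounding the 2023 estimate. The sketch below assumes that the 25-30% CAGR, which the text quotes only for the next five years, continues all the way to 2030. The function and constant names are illustrative.

```python
def project_market(base_value: float, cagr: float, years: int) -> float:
    """Compound a base value forward: V_t = V_0 * (1 + r) ** t."""
    return base_value * (1.0 + cagr) ** years


BASE_2023 = 2.5              # USD billions, the 2023 estimate quoted above
YEARS_TO_2030 = 2030 - 2023  # seven compounding periods

for rate in (0.25, 0.30):
    value = project_market(BASE_2023, rate, YEARS_TO_2030)
    print(f"CAGR {rate:.0%}: about ${value:.1f}B in 2030")
```

This prints roughly $11.9B and $15.7B, which brackets the quoted $12-15 billion. The 2030 range is therefore consistent with the stated growth rate only if that rate is sustained for seven years rather than five.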

Current BCI Technology Landscape and Challenges

Brain-Computer Interface (BCI) technology has evolved significantly over the past decade, transitioning from purely experimental setups to increasingly practical applications. Current BCI systems can be categorized into invasive, semi-invasive, and non-invasive approaches, each with distinct capabilities and limitations. Invasive BCIs, which involve implanting electrodes directly into brain tissue, offer the highest signal quality but face significant barriers related to surgical risks and long-term stability. Semi-invasive approaches like electrocorticography (ECoG) provide a middle ground but still require surgical intervention.

Non-invasive BCIs dominate the current landscape, with electroencephalography (EEG) being the most widely used technology due to its relative affordability, portability, and ease of use. Recent advances in dry electrode technology have improved user comfort and reduced setup time, though their signal quality remains inferior to that of wet-electrode systems. Functional near-infrared spectroscopy (fNIRS) has gained traction for its ability to measure hemodynamic responses, offering complementary data to EEG, albeit with lower temporal resolution.

The field faces several critical challenges that impede widespread adoption of emotion-adaptive interfaces. Signal acquisition issues persist, including low signal-to-noise ratios, susceptibility to artifacts from muscle movements, and environmental electrical interference. These problems are particularly pronounced in real-world settings outside controlled laboratory environments, making reliable emotion detection difficult during normal daily activities.
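
A common first defence against the artifacts described above is to discard signal segments with implausibly large amplitude. The sketch below makes several assumptions: a (channels × samples) array in microvolts, 1 s epochs, and a 100 µV peak-to-peak threshold. The function names and the threshold are illustrative choices, not taken from any particular system, and the data are synthetic.

```python
import numpy as np


def split_epochs(signal: np.ndarray, fs: float, epoch_s: float) -> np.ndarray:
    """Cut a (channels, samples) recording into non-overlapping epochs.

    Returns shape (n_epochs, channels, samples_per_epoch); trailing samples
    that do not fill a whole epoch are dropped.
    """
    n = int(round(epoch_s * fs))
    n_epochs = signal.shape[1] // n
    trimmed = signal[:, : n_epochs * n]
    return trimmed.reshape(signal.shape[0], n_epochs, n).transpose(1, 0, 2)


def reject_artifacts(epochs: np.ndarray, ptp_threshold_uv: float = 100.0):
    """Keep epochs whose peak-to-peak amplitude is below the threshold on every channel.

    Returns (clean_epochs, keep_mask). The 100 uV default is an illustrative
    assumption; blinks and muscle bursts typically produce deflections far
    larger than ongoing EEG.
    """
    ptp = epochs.max(axis=2) - epochs.min(axis=2)  # (n_epochs, channels)
    keep = (ptp < ptp_threshold_uv).all(axis=1)    # one flag per epoch
    return epochs[keep], keep


# Synthetic example: 4 channels, 10 s at 250 Hz, Gaussian "EEG" in microvolts.
fs = 250.0
rng = np.random.default_rng(0)
eeg = rng.normal(0.0, 10.0, size=(4, int(10 * fs)))
eeg[2, 1000:1050] += 300.0  # blink-like deflection on channel 2 starting at t = 4 s

epochs = split_epochs(eeg, fs, epoch_s=1.0)
clean, keep = reject_artifacts(epochs)
print(epochs.shape, clean.shape, np.flatnonzero(~keep))
```

Thresholding only removes gross artifacts. It discards the affected data rather than recovering the underlying signal, so in practice it sits alongside more selective cleaning steps.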

Signal processing and interpretation present another significant hurdle. Emotional states produce complex, often subtle neural signatures that vary considerably between individuals. Current algorithms struggle with this variability, resulting in classification accuracies that typically range from 65-85% in controlled settings but drop substantially in real-world applications. The need for extensive calibration procedures for each user further complicates practical implementation.
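
One simple way to address part of the between-user variability described above is to express each user's features relative to a short personal baseline recorded during calibration. The `BaselineNormalizer` class below is a hypothetical helper, and the feature values are made up. It removes per-user offsets and scale differences, but not differences in how emotions are expressed neurally.

```python
import numpy as np


class BaselineNormalizer:
    """Per-user z-scoring of features against a calibration (baseline) recording."""

    def __init__(self, eps: float = 1e-8):
        self.eps = eps      # guards against division by zero for flat features
        self.mean_ = None
        self.std_ = None

    def fit(self, baseline_features: np.ndarray) -> "BaselineNormalizer":
        # baseline_features: (n_windows, n_features) from this user's calibration session
        self.mean_ = baseline_features.mean(axis=0)
        self.std_ = baseline_features.std(axis=0)
        return self

    def transform(self, features: np.ndarray) -> np.ndarray:
        if self.mean_ is None:
            raise RuntimeError("call fit() with calibration data first")
        return (features - self.mean_) / (self.std_ + self.eps)


# Two hypothetical users whose resting alpha power differs threefold in scale.
user_a = BaselineNormalizer().fit(np.array([[8.0], [12.0]]))   # mean 10, std 2
user_b = BaselineNormalizer().fit(np.array([[24.0], [36.0]]))  # mean 30, std 6

# Raw values of 12 and 36 are each one baseline SD above that user's mean.
print(user_a.transform(np.array([[12.0]])), user_b.transform(np.array([[36.0]])))
```

After normalization, a classifier sees comparable feature values for both users, which is one reason per-user calibration sessions are common in practice.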

Temporal challenges also exist in emotion-adaptive interfaces. The latency between emotional state changes and their detection creates a mismatch between user experience and system response. Most current systems operate with delays of several seconds, which feels unnatural in interactive applications requiring immediate feedback.
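
The several-second delays mentioned above follow largely from how windowed pipelines accumulate data. The function below is a back-of-the-envelope model with three stated assumptions: a half-filled window triggers a change, windows advance by a fixed hop, and decisions are smoothed by majority vote. The parameter values are illustrative, not measurements of any system.

```python
def worst_case_latency_s(window_s: float, hop_s: float, processing_s: float,
                         fill_fraction: float = 0.5, vote_len: int = 1) -> float:
    """Back-of-the-envelope delay from a state change to an adapted response.

    Assumed model (illustrative, not a measured system):
      * a window is classified as the new state once `fill_fraction` of it
        contains post-change data;
      * windows are evaluated every `hop_s` seconds, so up to one extra hop
        can pass before that window is actually evaluated;
      * a majority vote over the last `vote_len` decisions needs
        vote_len // 2 + 1 new-state decisions, i.e. that many hops in total;
      * each decision costs `processing_s` seconds of computation.
    """
    votes_needed = vote_len // 2 + 1
    return (fill_fraction * window_s        # wait for enough post-change data
            + hop_s                         # worst-case alignment with the hop grid
            + (votes_needed - 1) * hop_s    # extra decisions needed to win the vote
            + processing_s)                 # feature extraction + classification


for window, hop, proc, votes in [(4.0, 0.5, 0.1, 5), (1.0, 0.25, 0.05, 1)]:
    latency = worst_case_latency_s(window, hop, proc, vote_len=votes)
    print(f"window={window}s hop={hop}s votes={votes}: ~{latency:.2f} s")
```

Under these assumptions, a 4 s window with a 0.5 s hop and a 5-decision vote gives about 3.6 s. A 1 s window with no vote gives about 0.8 s, but shorter windows yield noisier spectral estimates and coarser frequency resolution.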

From a hardware perspective, existing BCI systems for emotion detection lack the balance between portability and performance needed for mainstream adoption. High-performance systems remain bulky and expensive, while consumer-grade alternatives offer insufficient accuracy for meaningful emotion adaptation.

Regulatory and ethical considerations further complicate the landscape. Questions regarding data privacy, informed consent, and potential psychological effects of emotion-adaptive systems remain largely unaddressed in current regulatory frameworks, creating uncertainty for commercial development.

Current Real-Time Emotion Detection Solutions

01 Emotion recognition and classification in BCI systems

Brain-Computer Interface systems can detect and classify emotional states through analysis of neural signals. These systems use various algorithms to identify patterns in brain activity that correspond to different emotions. By accurately recognizing emotions in real-time, these interfaces can adapt their responses to the user's current emotional state, enhancing the interaction experience and providing more personalized feedback.

- Emotion recognition and classification in BCI systems: Brain-Computer Interfaces can detect and classify emotional states through analysis of neural signals. These systems use various algorithms to identify patterns in brain activity that correspond to different emotions. The classification methods often employ machine learning techniques to improve accuracy and can work in real-time to provide immediate feedback. This emotional recognition capability forms the foundation for adaptive interfaces that respond to the user's emotional state.

- Adaptive user interfaces based on emotional feedback: These interfaces dynamically adjust their presentation, functionality, or content based on the detected emotional state of the user. The system monitors emotional responses in real-time and modifies the interface elements to optimize user experience. For example, the interface might simplify when the user shows signs of frustration or stress, or provide additional stimulation when the user appears bored or disengaged. This adaptive approach enhances user engagement and improves overall interaction quality.

- Multimodal sensing for emotional state detection: Advanced BCI systems incorporate multiple sensing modalities to improve the accuracy of emotion detection. Beyond traditional EEG signals, these systems may integrate physiological measurements such as heart rate, skin conductance, facial expressions, and eye tracking. The combination of these different data sources provides a more comprehensive understanding of the user's emotional state, allowing for more precise and reliable adaptive responses from the interface.

- Real-time processing and feedback mechanisms: These systems employ sophisticated algorithms for processing neural and physiological data in real-time with minimal latency. The processing pipeline includes signal acquisition, preprocessing, feature extraction, classification, and adaptive response generation. Low-latency feedback is crucial for creating a natural and responsive user experience. The systems often utilize edge computing or optimized algorithms to ensure that emotional adaptations occur quickly enough to feel seamless to the user.

- Applications in therapeutic and assistive technologies: Emotion-adaptive BCI systems have significant applications in therapeutic and assistive contexts. These include emotional regulation training for individuals with anxiety or mood disorders, communication aids for non-verbal individuals that convey emotional states, and adaptive learning environments that adjust difficulty based on frustration levels. The technology also shows promise in creating more intuitive prosthetics and assistive devices that respond to the emotional needs and states of users with disabilities.
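
The five processing stages listed above (acquisition, preprocessing, feature extraction, classification, adaptive response generation) can be sketched end to end. Every component here is an illustrative assumption:

- synthetic two-channel signals stand in for acquisition;
- mean removal stands in for preprocessing;
- log band powers in conventional theta/alpha/beta ranges are the features;
- a nearest-centroid rule is the classifier;
- the "relaxed"/"stressed" labels and the `ADAPTATIONS` table are hypothetical.

Treating beta-dominant activity as "stressed" is a property of this synthetic data, not a validated neural marker.

```python
import numpy as np

FS = 250.0  # sampling rate in Hz (assumed)
BANDS = {"theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}
# Hypothetical adaptive-response table.
ADAPTATIONS = {"relaxed": "keep current layout", "stressed": "simplify interface"}


def acquire(alpha_amp, beta_amp, rng, n_ch=2, seconds=2.0, fs=FS):
    """Stand-in for acquisition: 10 Hz and 20 Hz sinusoids plus Gaussian noise."""
    t = np.arange(int(seconds * fs)) / fs
    rhythm = alpha_amp * np.sin(2 * np.pi * 10 * t) + beta_amp * np.sin(2 * np.pi * 20 * t)
    return rhythm + rng.normal(0.0, 1.0, size=(n_ch, t.size))  # (channels, samples)


def preprocess(window):
    """Remove each channel's mean (placeholder for filtering and artifact handling)."""
    return window - window.mean(axis=1, keepdims=True)


def extract_features(window, fs=FS):
    """Log mean band power per band and channel from a Hann-windowed periodogram."""
    n = window.shape[1]
    power = np.abs(np.fft.rfft(window * np.hanning(n), axis=1)) ** 2
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    feats = [np.log(power[:, (freqs >= lo) & (freqs < hi)].mean(axis=1) + 1e-12)
             for lo, hi in BANDS.values()]
    return np.concatenate(feats)  # shape: (n_bands * n_channels,)


class CentroidClassifier:
    """Nearest-centroid rule: predict the label whose mean training vector is closest."""

    def fit(self, X, y):
        y = np.asarray(y)
        self.labels_ = sorted(set(y.tolist()))
        self.centroids_ = np.stack([X[y == lab].mean(axis=0) for lab in self.labels_])
        return self

    def predict(self, x):
        distances = np.linalg.norm(self.centroids_ - x, axis=1)
        return self.labels_[int(np.argmin(distances))]


def run_pipeline(raw, clf):
    """Preprocess -> features -> label -> adaptive response."""
    label = clf.predict(extract_features(preprocess(raw)))
    return label, ADAPTATIONS[label]


rng = np.random.default_rng(42)
X, y = [], []
for _ in range(20):  # labelled calibration windows
    for label, (a, b) in (("relaxed", (5.0, 1.0)), ("stressed", (1.0, 5.0))):
        X.append(extract_features(preprocess(acquire(a, b, rng))))
        y.append(label)
clf = CentroidClassifier().fit(np.array(X), y)

print(run_pipeline(acquire(1.0, 5.0, rng), clf))
```

A real system would use a far richer preprocessing stage and a validated classifier. The value of the sketch is the shape of the loop: each incoming window flows through the same four functions before an interface action is chosen.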

02 Adaptive user interfaces based on emotional feedback

These interfaces dynamically adjust their presentation, content, or functionality based on the detected emotional state of the user. The system continuously monitors emotional responses and modifies the interface elements to optimize user experience. This can include changing visual elements, altering information presentation, or adjusting interaction methods to better align with the user's current emotional state, thereby improving engagement and effectiveness.

03 Multimodal emotion sensing technologies

These BCI systems incorporate multiple sensing modalities beyond traditional EEG to detect emotions more accurately. By combining neurological data with physiological signals such as heart rate, skin conductance, facial expressions, and voice analysis, these interfaces can create a more comprehensive emotional profile of the user. This multimodal approach improves the accuracy and robustness of emotion detection, enabling more precise adaptive responses.

04 Real-time processing and feedback mechanisms

These technologies focus on minimizing the latency between emotion detection and system response. Advanced signal processing algorithms and machine learning techniques enable rapid analysis of neural signals and quick adaptation of the interface. The real-time nature of these systems is crucial for maintaining natural interaction flow and ensuring that the adaptive responses remain relevant to the user's current emotional state.

05 Therapeutic and assistive applications of emotion-adaptive BCI

Emotion-adaptive BCI systems are being developed for therapeutic and assistive purposes, particularly for individuals with emotional regulation difficulties or communication challenges. These applications can help users with conditions such as autism, PTSD, or anxiety disorders by providing emotional awareness training, stress management tools, or alternative communication methods. The interfaces adapt their functionality based on the user's emotional state to provide appropriate support and intervention.

Leading Companies in BCI and Emotion Recognition

Real-time emotion-adaptive interfaces using Brain-Computer Interface (BCI) technology are an emerging field at the intersection of neuroscience and computing, currently in an early growth phase. The market is expanding rapidly and is projected to reach $3.7 billion by 2027, driven by applications in healthcare, gaming, and human-computer interaction. Technological maturity varies across players: academic institutions (Tianjin University, MIT, University of Washington) focus on fundamental research; specialized BCI companies (Neurable, NextMind, MindPortal) develop consumer-facing applications; and tech giants (Apple, Huawei, BOE Technology) integrate these capabilities into broader product ecosystems. The most advanced solutions combine EEG sensors, machine learning algorithms, and real-time processing to detect emotional states and adapt interfaces accordingly.

Neurable, Inc.

Technical Solution: Neurable has developed a proprietary EEG-based BCI platform that utilizes machine learning algorithms to interpret neural signals in real-time for emotion detection and adaptation. Their technology employs a combination of dry electrodes and advanced signal processing techniques to extract emotional states with minimal latency (under 300 ms). The system incorporates a multi-layer neural network architecture that can identify up to 8 distinct emotional states including happiness, sadness, stress, and relaxation with reported accuracy rates of 87-92% in controlled environments. Neurable's platform includes an SDK that allows developers to create applications that dynamically respond to users' emotional states, enabling truly adaptive interfaces that can adjust content, difficulty, or presentation based on detected emotions[1][3].

Strengths: High accuracy in emotion detection with minimal latency; non-invasive technology using dry electrodes for better user experience; comprehensive SDK for third-party integration. Weaknesses: Performance may degrade in noisy environments; requires some calibration for optimal performance across different users; relatively expensive for consumer applications.

NextMind SAS

Technical Solution: NextMind has pioneered a non-invasive BCI device that decodes visual attention in real-time, which they've extended to emotion recognition capabilities. Their system utilizes a proprietary neural interface that captures signals from the visual cortex and applies deep learning algorithms to interpret emotional responses to visual stimuli. The technology employs a compact wearable device positioned at the back of the head, capturing neural signals at a 250 Hz sampling rate with a reported signal-to-noise ratio improvement of 40% compared to traditional EEG systems. NextMind's real-time decoding engine processes these signals to extract emotional valence and arousal dimensions within 500 ms, allowing interfaces to adapt to users' emotional states during interaction with digital content[2][5]. Their SDK provides developers with emotion-based triggers that can be integrated into various applications, from gaming to therapeutic environments.

Strengths: Highly portable form factor; focuses on visual cortex signals which can be more reliable for certain emotion detection scenarios; intuitive developer tools for emotion-adaptive applications. Weaknesses: Limited to emotions triggered by visual stimuli; requires line-of-sight to visual targets; accuracy may vary depending on individual neurophysiology.

Key BCI Patents and Emotion Recognition Algorithms

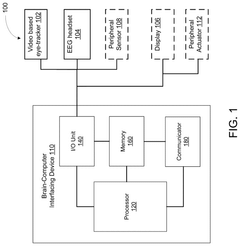

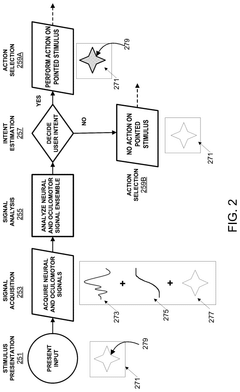

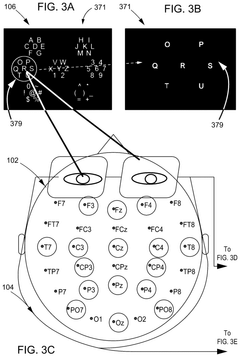

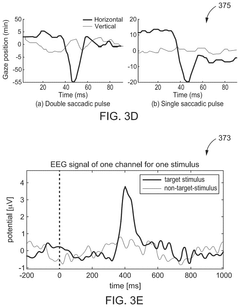

Brain-computer interface with adaptations for high-speed, accurate, and intuitive user interactions

Patent Pending: US20250093951A1

Innovation

- A hardware-agnostic integrated oculomotor-neural hybrid BCI platform that combines real-time eye-movement tracking with brain activity tracking to mediate user interactions, using a hybrid BCI system with pointing and action control features to enhance user interface design for high-speed and accurate interactions.

Brain-computer interface method and system based on real-time closed loop vibration stimulation enhancement

Patent Active: US11379039B2

Innovation

- A brain-computer interface method and system utilizing real-time closed loop vibration stimulation enhancement, which involves displaying a motor imagery task, collecting EEG signals, performing band-pass filtering, calculating time-frequency characteristics, extracting the main frequency and instantaneous phase, and using this information to control a vibration motor for sensory stimulation, thereby improving signal quality and decoding rates.
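
The signal steps named in this patent summary (band-pass filtering, then extracting the main frequency and instantaneous phase) can be illustrated with standard spectral tools. This is not the patented method. The sketch makes these assumptions:

- a simple FFT brick-wall filter replaces whatever filter the patent specifies;
- the analytic signal is built with the usual FFT-based Hilbert construction;
- the 8-13 Hz band and the synthetic trace are illustrative.

```python
import numpy as np


def fft_bandpass(x, fs, lo, hi):
    """Zero-phase 'brick-wall' band-pass: zero every FFT bin outside [lo, hi] Hz."""
    spectrum = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    spectrum[(freqs < lo) | (freqs > hi)] = 0.0
    return np.fft.irfft(spectrum, n=x.size)


def analytic_signal(x):
    """Analytic signal via the FFT (the standard discrete Hilbert-transform construction)."""
    n = x.size
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1 : n // 2] = 2.0
    else:
        h[1 : (n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * h)


def dominant_frequency(x, fs, lo, hi):
    """Frequency of the largest periodogram peak inside [lo, hi] Hz."""
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    band = (freqs >= lo) & (freqs <= hi)
    return freqs[band][np.argmax(power[band])]


fs = 250.0
t = np.arange(int(4 * fs)) / fs
rng = np.random.default_rng(3)
# Synthetic trace: 11 Hz rhythm + 3 Hz drift + noise (all assumed for illustration).
x = np.sin(2 * np.pi * 11 * t) + 0.5 * np.sin(2 * np.pi * 3 * t) + rng.normal(0, 0.5, t.size)

band_signal = fft_bandpass(x, fs, 8.0, 13.0)             # step 1: band-pass
f_main = dominant_frequency(band_signal, fs, 8.0, 13.0)  # step 2: main frequency
phase = np.angle(analytic_signal(band_signal))           # step 3: instantaneous phase (rad)
print(f"main frequency {f_main:.2f} Hz, latest phase {phase[-1]:+.2f} rad")
```

In a closed loop, the phase at the newest sample is what would time a stimulus. Phase estimates near the ends of a finite buffer are distorted by edge effects, however, so real-time systems need additional care at that step.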

Ethical and Privacy Considerations

The implementation of real-time emotion-adaptive interfaces using Brain-Computer Interface (BCI) technology raises significant ethical and privacy concerns that must be addressed before widespread adoption. Neural data represents perhaps the most intimate form of personal information, directly reflecting an individual's cognitive and emotional states. This unprecedented access to mental processes necessitates robust ethical frameworks and privacy protections.

User autonomy and informed consent present primary ethical challenges. BCI systems that continuously monitor emotional states may create situations where users lose control over their emotional data or face subtle manipulation through adaptive interfaces. Ensuring users maintain meaningful consent requires transparent disclosure about what emotional data is collected, how it's processed, and who has access to this information. This becomes particularly complex when systems adapt in real-time, potentially making decisions before users are consciously aware of their own emotional states.

Privacy vulnerabilities in BCI emotion-detection systems extend beyond traditional data security concerns. Neural signals may reveal sensitive information beyond intended emotional states, including health conditions, cognitive processes, and potentially even thoughts or memories. The risk of "brain hacking" or unauthorized access to neural data represents an unprecedented privacy threat that current regulatory frameworks are ill-equipped to address.

Emotional data collected through BCIs raises unique concerns regarding data ownership and control. Questions emerge about whether individuals can truly own their neural data, how long such data should be retained, and what rights users have to access or delete their emotional information. The potential for third-party exploitation of emotional data for advertising, manipulation, or surveillance demands careful consideration.

Equity and accessibility issues also arise, as emotion-adaptive interfaces may function differently across diverse populations. Neural signals can vary based on factors including age, gender, cultural background, and neurological conditions, potentially leading to discriminatory outcomes if systems are not properly calibrated for diverse users.

Looking forward, the development of ethical guidelines specific to emotion-adaptive BCIs is essential. These should include principles for meaningful consent, data minimization practices, transparency requirements, and user control mechanisms. Regulatory frameworks must evolve to address the unique challenges of neural data protection, potentially establishing "neural rights" that safeguard cognitive liberty and emotional privacy in increasingly connected technological environments.

Neuroadaptive User Experience Design

Neuroadaptive User Experience Design represents a paradigm shift in human-computer interaction: it uses real-time neurophysiological data to build interfaces that respond dynamically to users' emotional and cognitive states. The approach combines Brain-Computer Interface (BCI) technology with traditional UX design principles, yielding systems that detect, interpret, and adapt to users' emotional responses during interaction.

The foundation of neuroadaptive design is continuous monitoring of brain activity patterns associated with different emotional states. Signal-processing and classification algorithms extract features from these signals to estimate affective and cognitive markers such as engagement, frustration, cognitive load, and satisfaction. These real-time estimates allow an interface to modify its behavior, appearance, or functionality to suit the user's current state.
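
As one concrete example of turning raw EEG into a marker, the engagement index β/(α+θ), used in early NASA adaptive-automation work, compares band power in the beta range with power in the alpha and theta ranges. The sketch below assumes a single pre-cleaned EEG channel, a 2-second window, and conventional band edges (which vary between studies); it uses a simple Hann-windowed periodogram where real systems typically use Welch averaging, artifact rejection, and multiple channels. The function names are hypothetical, and the index reflects engagement or attention rather than a specific emotion.

```python
import numpy as np

# Conventional EEG band edges in Hz (approximate; definitions vary between studies).
BANDS = {"theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_powers(signal, fs):
    """Estimate per-band power of one EEG channel with a Hann-windowed periodogram."""
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()                                   # remove DC offset
    spectrum = np.abs(np.fft.rfft(x * np.hanning(len(x)))) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    return {
        name: float(spectrum[(freqs >= lo) & (freqs < hi)].sum())
        for name, (lo, hi) in BANDS.items()
    }


def engagement_index(powers):
    """Engagement index beta / (alpha + theta); higher values suggest greater engagement."""
    return powers["beta"] / (powers["alpha"] + powers["theta"])


if __name__ == "__main__":
    fs = 256                                           # sampling rate in Hz (assumed)
    t = np.arange(2 * fs) / fs                         # one 2-second analysis window
    focused = 3 * np.sin(2 * np.pi * 20 * t) + np.sin(2 * np.pi * 10 * t)
    relaxed = np.sin(2 * np.pi * 20 * t) + 3 * np.sin(2 * np.pi * 10 * t)
    print(engagement_index(band_powers(focused, fs)))  # well above 1
    print(engagement_index(band_powers(relaxed, fs)))  # well below 1
```

In a live system this computation would run on a sliding window, producing a new estimate every fraction of a second for the adaptation logic to consume.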

Key components of neuroadaptive UX design include emotion recognition systems, adaptive interface mechanisms, and feedback loops. Emotion recognition relies on machine learning models trained on labeled neurophysiological data to classify users' emotional states. Adaptive mechanisms then apply predetermined response strategies to the detected state, such as simplifying the interface during high cognitive load or offering encouragement during frustration. The feedback loop closes when the system monitors the user's subsequent response to judge whether an adaptation helped.
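
The step from classifier output to a predetermined response strategy can be as simple as a lookup table guarded by a confidence threshold. This is a minimal sketch, assuming the classifier emits a probability per state; the `State` values, the strategy names, and the 0.7 threshold are hypothetical choices for illustration.

```python
from enum import Enum, auto


class State(Enum):
    NEUTRAL = auto()
    ENGAGED = auto()
    HIGH_LOAD = auto()
    FRUSTRATED = auto()


# Hypothetical predetermined strategies, following the examples in the text.
STRATEGIES = {
    State.HIGH_LOAD: "simplify_layout",
    State.FRUSTRATED: "show_encouragement",
}


def choose_adaptation(probabilities, min_confidence=0.7):
    """Map classifier output to an adaptation, or None to leave the interface unchanged.

    `probabilities` maps each State to the classifier's probability for it.
    """
    state, p = max(probabilities.items(), key=lambda kv: kv[1])
    if p < min_confidence:
        return None                      # too uncertain to justify changing the UI
    return STRATEGIES.get(state)         # states without a strategy also map to None


if __name__ == "__main__":
    print(choose_adaptation({State.HIGH_LOAD: 0.82, State.NEUTRAL: 0.18}))  # simplify_layout
    print(choose_adaptation({State.FRUSTRATED: 0.55, State.NEUTRAL: 0.45}))  # None
```

Returning `None` when the classifier is uncertain keeps the interface stable, which matters because a wrong adaptation can itself cause frustration.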

Implementation approaches vary across different application domains. In gaming, neuroadaptive systems adjust difficulty levels based on player engagement and stress. Educational platforms can modify content presentation based on attention levels and cognitive processing. Therapeutic applications utilize emotional feedback to guide users through anxiety management exercises with personalized pacing.
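
The gaming case can be illustrated with a small dynamic difficulty adjustment rule. The sketch below assumes engagement and stress arrive as normalized estimates in [0, 1]; the function name, the 1 to 10 difficulty scale, and the 0.7 and 0.3 limits are hypothetical tuning values, not figures from any published system.

```python
def adjust_difficulty(level, engagement, stress, lowest=1, highest=10,
                      stress_limit=0.7, boredom_limit=0.3):
    """Move difficulty one step per update toward a zone of moderate challenge.

    `engagement` and `stress` are assumed to be normalized estimates in [0, 1].
    """
    if stress > stress_limit:
        level -= 1          # player is overloaded: ease off
    elif engagement < boredom_limit:
        level += 1          # player is under-challenged: push harder
    return max(lowest, min(highest, level))


if __name__ == "__main__":
    print(adjust_difficulty(5, engagement=0.6, stress=0.9))   # 4
    print(adjust_difficulty(5, engagement=0.2, stress=0.1))   # 6
    print(adjust_difficulty(5, engagement=0.6, stress=0.4))   # 5
```

Stress is checked first so that an overloaded player is never pushed harder, and changing difficulty by one step at a time avoids abrupt swings driven by a single noisy reading.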

The design process for neuroadaptive interfaces requires interdisciplinary collaboration among neuroscientists, UX designers, and software engineers. This process typically involves defining emotional response parameters, creating adaptive interface elements, and establishing thresholds for system intervention. Iterative testing with diverse user groups is essential to refine both emotion-recognition accuracy and the appropriateness of adaptive responses.
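
Intervention thresholds usually need more than a single cutoff, because a noisy score hovering near the threshold would make the interface flicker between states. A common remedy, sketched here, combines hysteresis (separate on and off levels) with a dwell time (the score must stay high for several consecutive windows). The class name and the default values 0.7, 0.5, and 3 are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class InterventionTrigger:
    on: float = 0.7      # score needed to start counting toward an intervention
    off: float = 0.5     # score below which an active intervention is withdrawn
    dwell: int = 3       # consecutive windows required above `on`
    active: bool = field(default=False, init=False)
    _streak: int = field(default=0, init=False)

    def update(self, score: float) -> bool:
        """Feed one new score per analysis window; return whether to intervene."""
        if self.active:
            if score < self.off:         # hysteresis: release only well below `on`
                self.active = False
                self._streak = 0
        elif score >= self.on:
            self._streak += 1
            if self._streak >= self.dwell:
                self.active = True
        else:
            self._streak = 0             # an interrupted streak starts over
        return self.active


if __name__ == "__main__":
    trigger = InterventionTrigger()
    scores = [0.8, 0.8, 0.8, 0.6, 0.6, 0.4]
    print([trigger.update(s) for s in scores])
    # [False, False, True, True, True, False]
```

The on, off, and dwell values are exactly the kind of parameters that iterative testing with diverse users would tune.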

Ethical considerations remain paramount in neuroadaptive design, particularly regarding privacy, consent, and manipulation concerns. Users must maintain agency over their experience, with transparent disclosure about what emotional data is being collected and how it influences interface behavior. The goal is to create systems that enhance rather than override user autonomy.
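
Transparency about how emotional data influences interface behavior can be supported by logging every adaptation together with the state that triggered it, and by giving users a switch to pause adaptation. The sketch below assumes a simple in-memory log; `AdaptationLog`, its `paused` flag, and the message format are hypothetical design choices.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AdaptationEvent:
    time: datetime
    detected_state: str
    action: str


@dataclass
class AdaptationLog:
    """Hypothetical user-visible record of every adaptation and the state that triggered it."""
    events: list = field(default_factory=list)
    paused: bool = False                 # user-controlled switch that disables adaptation

    def record(self, detected_state, action, time=None):
        """Log an adaptation and return True; return False (logging nothing) if paused,
        in which case the caller should not adapt the interface."""
        if self.paused:
            return False
        when = time or datetime.now(timezone.utc)
        self.events.append(AdaptationEvent(when, detected_state, action))
        return True

    def explain(self):
        """Plain-language lines a settings screen could show to the user."""
        return [f"{e.time:%H:%M:%S} detected {e.detected_state}, applied {e.action}"
                for e in self.events]


if __name__ == "__main__":
    log = AdaptationLog()
    log.record("frustration", "show_encouragement",
               datetime(2025, 9, 2, 14, 3, 5, tzinfo=timezone.utc))
    print(log.explain())   # ['14:03:05 detected frustration, applied show_encouragement']
    log.paused = True
    print(log.record("high load", "simplify_layout"))   # False
```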

As BCI technology advances toward more portable, non-invasive devices, neuroadaptive UX design could substantially change digital interaction by enabling systems that respond to users' emotional needs in real time, with potential applications across many domains.
