How Quantum Models Transform High-Throughput Data Handling

SEP 4, 2025 · 9 MIN READ

Quantum Computing Evolution and Objectives

Quantum computing has evolved significantly since its theoretical conception in the early 1980s by Richard Feynman and others who envisioned leveraging quantum mechanical phenomena to perform computations. The initial decades focused primarily on theoretical foundations, with limited practical implementations. By the late 1990s, the first rudimentary quantum systems emerged, capable of manipulating just a few qubits. The field experienced accelerated development in the 2000s with improved coherence times and error correction techniques.

The 2010s marked a pivotal transition from purely academic research to industrial applications, with major technology companies establishing dedicated quantum computing divisions. This period witnessed the emergence of quantum processors with increasing qubit counts, though still limited by noise and decoherence issues. The current landscape features quantum systems ranging from tens to over a thousand physical qubits, all still operating within the Noisy Intermediate-Scale Quantum (NISQ) era.

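In the context of high-throughput data handling, quantum computing offers potential gains through several mechanisms. Superposition and interference let a quantum algorithm act on many basis states at once, although a measurement returns only a single outcome, so useful algorithms must concentrate probability on the answer. Grover's search algorithm, for example, provides a quadratic speedup for unstructured search, reducing roughly N oracle queries to about √N. Quantum machine learning algorithms may offer larger, in some cases exponential, speedups for pattern recognition and data classification tasks critical for big data analytics, though these results depend on strong assumptions about how the data is accessed.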
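
To make the quadratic speedup concrete, the following is a minimal sketch that simulates Grover's algorithm on an ordinary state vector in pure Python. The function name `grover_search` and the choice of 8 qubits with marked index 42 are illustrative assumptions, and a classical simulation like this offers no speedup itself; it only shows how few oracle calls the algorithm needs.

```python
import math

def grover_search(n_qubits, marked):
    """Simulate Grover's algorithm on a classical state vector (illustration only)."""
    n_items = 2 ** n_qubits
    # Start in the uniform superposition over all basis states.
    amps = [1.0 / math.sqrt(n_items)] * n_items
    # Near-optimal number of iterations: floor(pi/4 * sqrt(N)).
    iterations = int(math.floor(math.pi / 4 * math.sqrt(n_items)))
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        amps[marked] = -amps[marked]
        # Diffusion: reflect every amplitude about the mean amplitude.
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]
    probs = [a * a for a in amps]
    return iterations, probs

iterations, probs = grover_search(n_qubits=8, marked=42)
best = max(range(len(probs)), key=probs.__getitem__)
print(iterations, best, round(probs[best], 3))
```

With 256 items the loop runs 12 times, whereas a classical linear scan would inspect 128 items on average. The sketch assumes the oracle is free; in practice, building an oracle over real data is part of the cost.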

The primary objective of quantum models in high-throughput data handling is to overcome the computational bottlenecks faced by classical systems when processing exponentially growing datasets. Specifically, quantum approaches aim to revolutionize data processing in fields generating massive datasets, such as genomics, climate modeling, and financial analytics, where classical computing struggles with timely analysis.

Technical goals include developing robust quantum algorithms specifically optimized for data preprocessing, feature extraction, and anomaly detection in high-dimensional datasets. Additionally, researchers are working toward quantum-classical hybrid systems that leverage the strengths of both paradigms, allowing quantum processors to handle computationally intensive components while classical systems manage other aspects of the data pipeline.

The evolution trajectory suggests quantum advantage for specific high-throughput data applications may be achievable within the next 3-5 years, with more comprehensive solutions emerging in the 5-10 year timeframe as quantum hardware matures and error correction improves. This progression aligns with the broader quantum computing roadmap, which anticipates fault-tolerant quantum computers capable of executing complex algorithms on large datasets becoming available within the next decade.

Market Demand for High-Throughput Data Solutions

The global market for high-throughput data solutions is experiencing unprecedented growth, driven by the exponential increase in data generation across industries. Current estimates indicate that global data creation has reached zettabyte scales, with projections suggesting this volume will continue to double approximately every two years. This data explosion creates substantial demand for advanced processing technologies that can handle massive datasets efficiently.

Healthcare and pharmaceutical sectors represent primary drivers of this demand, particularly in genomic sequencing, drug discovery, and clinical trials. These fields generate petabytes of complex data requiring sophisticated analysis techniques. Financial services follow closely, with high-frequency trading algorithms and risk assessment models processing millions of transactions per second, demanding near-instantaneous data processing capabilities.

Telecommunications and IoT applications constitute another significant market segment, with billions of connected devices generating continuous data streams that require real-time analysis. The emergence of 5G networks has further accelerated this trend, enabling more devices to transmit larger volumes of data at unprecedented speeds.

Market research indicates that organizations across sectors are increasingly prioritizing investments in advanced data processing technologies. A notable shift is occurring from traditional computing approaches toward quantum-inspired and quantum-native solutions, particularly for complex optimization problems and pattern recognition tasks that classical systems struggle to handle efficiently.

The demand for quantum-enhanced data processing is particularly strong in research institutions and technology-forward enterprises seeking competitive advantages through faster insights and more accurate predictions. These organizations recognize that quantum models offer potential solutions to computational bottlenecks in high-throughput data environments.

Customer requirements increasingly emphasize not only processing speed but also energy efficiency, as data centers account for a growing percentage of global electricity consumption. Quantum computing approaches offer theoretical advantages in this area, potentially delivering superior computational power with lower energy requirements compared to classical supercomputing infrastructures.

Regional analysis reveals that North America currently leads in adoption of advanced data processing technologies, followed by Europe and Asia-Pacific. However, the Asia-Pacific region demonstrates the fastest growth rate, driven by rapid digital transformation initiatives across China, Japan, South Korea, and Singapore.

Market forecasts suggest that organizations failing to implement efficient high-throughput data solutions risk significant competitive disadvantages, as the ability to rapidly extract actionable insights from massive datasets increasingly determines market leadership across industries.

Quantum Models: Current Capabilities and Limitations

Quantum computing represents a paradigm shift in computational capabilities, leveraging quantum mechanical phenomena such as superposition and entanglement to process information in fundamentally different ways than classical computers. Current quantum models demonstrate promising capabilities in specific high-throughput data handling scenarios, particularly in optimization problems, machine learning tasks, and complex simulations that would otherwise be computationally prohibitive.

Quantum machine learning models have shown particular promise in pattern recognition and classification tasks, with quantum neural networks demonstrating potential speedups for certain datasets. Quantum support vector machines and quantum principal component analysis algorithms have demonstrated theoretical advantages in dimensionality reduction and feature extraction from massive datasets, critical capabilities for high-throughput data environments.

Quantum simulation models excel at modeling quantum systems themselves, offering exponential advantages over classical approaches when simulating molecular interactions, material properties, and chemical reactions. These capabilities are increasingly relevant for pharmaceutical research, materials science, and other data-intensive scientific domains where classical computational approaches struggle with combinatorial complexity.

Despite these promising capabilities, quantum models face significant limitations that constrain their practical application in current high-throughput data environments. Hardware constraints represent the most immediate challenge, with current quantum processors limited by qubit counts, coherence times, and high error rates. Although the largest devices now exceed a thousand physical qubits, many processors still operate with far fewer, and superconducting qubits typically maintain coherence for only tens to hundreds of microseconds, severely restricting the scale and complexity of problems they can address.

Quantum error correction remains an unsolved challenge at scale, with error rates in quantum gates typically orders of magnitude higher than would be required for fault-tolerant quantum computing. This necessitates complex error mitigation techniques that further reduce effective computational capacity.

Input/output bottlenecks present another critical limitation, as the process of loading classical data into quantum states (and retrieving results) can negate theoretical quantum speedups in many practical scenarios. This "quantum data loading problem" represents a fundamental challenge for high-throughput applications where data volumes are substantial.
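
The data loading problem can be illustrated with amplitude encoding, one common way of mapping a classical vector onto a quantum state. The sketch below only computes the target amplitudes classically; the helper name `amplitude_encode` and the sample values are assumptions made for illustration, and no quantum hardware or SDK is involved.

```python
import math

def amplitude_encode(values):
    """Return (n_qubits, amplitudes) for a classical vector, zero-padded to a power of two."""
    if not any(values):
        raise ValueError("cannot encode the zero vector")
    # N values need ceil(log2(N)) qubits (at least one).
    n_qubits = max(1, math.ceil(math.log2(len(values))))
    padded = list(values) + [0.0] * (2 ** n_qubits - len(values))
    # Quantum states have unit norm, so every value must be read and rescaled.
    norm = math.sqrt(sum(v * v for v in padded))
    return n_qubits, [v / norm for v in padded]

n_qubits, amps = amplitude_encode([3.0, 1.0, 2.0, 0.5, 4.0])
print(n_qubits, [round(a, 3) for a in amps])
```

Five values fit into the amplitudes of three qubits, which is the appeal of the encoding. The cost is that normalization alone touches every input value, and preparing an arbitrary state on hardware generally requires a number of gates that grows with the vector length, which is how loading can cancel out a later speedup.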

Algorithm development for quantum models also lags behind hardware progress, with relatively few quantum algorithms demonstrating provable advantages for practical data handling tasks. Many quantum algorithms remain theoretical or show advantages only under specific, often idealized conditions that may not translate to real-world data environments.

The hybrid quantum-classical approach currently represents the most viable path forward, combining quantum processing for specific computational bottlenecks with classical systems handling data preparation, post-processing, and coordination tasks. This pragmatic approach acknowledges current limitations while leveraging the unique capabilities quantum models can offer to high-throughput data handling workflows.

Contemporary Quantum Approaches for Big Data

01 Quantum computing for data processing

Quantum computing technologies are being applied to enhance data processing capabilities. These systems utilize quantum mechanical phenomena such as superposition and entanglement to perform complex calculations and data manipulations that would be impractical for classical computers. This approach enables more efficient handling of large datasets and solving computationally intensive problems in fields like cryptography, optimization, and simulation.

- Quantum computing for data processing and analysis: Quantum computing technologies are being applied to enhance data processing capabilities, particularly for large and complex datasets. These quantum models leverage quantum mechanical principles to perform computations that would be impractical with classical computers. The quantum approach enables more efficient data handling, pattern recognition, and analysis of high-dimensional data, offering significant advantages in processing speed and computational power for data-intensive applications.

- Quantum machine learning algorithms: Quantum machine learning combines quantum computing principles with machine learning techniques to create more powerful data handling models. These algorithms can process and analyze complex datasets more efficiently than traditional approaches. Quantum machine learning models offer advantages in feature extraction, classification tasks, and optimization problems, potentially revolutionizing how data is processed and analyzed in various fields including artificial intelligence and data science.

- Quantum data encryption and security protocols: Quantum models are being developed for enhanced data security and encryption methods. These approaches utilize quantum principles such as entanglement and superposition to create secure communication channels and data handling protocols. Quantum encryption techniques offer potentially unbreakable security compared to classical methods, addressing growing concerns about data protection in an increasingly connected world.

- Quantum simulation for complex data modeling: Quantum simulation techniques are being applied to model complex systems and handle the associated data challenges. These approaches use quantum computing to simulate physical, chemical, or biological systems that are computationally intensive for classical computers. Quantum simulators can process and analyze the massive datasets generated from these complex systems, enabling more accurate predictions and deeper insights in fields ranging from materials science to pharmaceutical research.

- Quantum-inspired classical algorithms for data handling: Quantum-inspired algorithms implement principles from quantum computing on classical hardware to improve data handling capabilities. These hybrid approaches bring quantum advantages to existing computing infrastructure without requiring full quantum computers. By incorporating quantum concepts into classical algorithms, these methods offer enhanced performance for data processing, optimization problems, and machine learning tasks while working within the constraints of current technology.

02 Quantum machine learning models

Quantum machine learning combines quantum computing principles with machine learning algorithms to create more powerful predictive models. These hybrid approaches leverage quantum algorithms to process complex data patterns and relationships that traditional machine learning methods struggle with. The quantum machine learning models can handle high-dimensional data more effectively and potentially offer exponential speedups for certain types of pattern recognition and classification tasks.

03 Quantum data encryption and security

Quantum technologies are being developed to enhance data security through advanced encryption methods. These systems utilize quantum key distribution and quantum-resistant cryptographic algorithms to protect sensitive information against both classical and quantum computing threats. The quantum encryption approaches provide theoretically unbreakable security protocols that can detect any unauthorized attempts to access or manipulate the data during transmission or storage.

04 Quantum data storage and retrieval systems

Novel quantum-based data storage and retrieval systems are being developed to overcome limitations of classical storage technologies. These systems utilize quantum states to encode information at unprecedented densities and with improved access speeds. Quantum memory architectures enable more efficient data organization and retrieval operations, potentially revolutionizing database management and information access for complex computational tasks.

05 Quantum algorithms for big data analysis

Specialized quantum algorithms are being designed to analyze and extract insights from massive datasets. These algorithms leverage quantum parallelism to process multiple data points simultaneously, enabling more efficient pattern recognition, clustering, and correlation analysis. The quantum approach to big data analysis can identify complex relationships within datasets that would remain hidden to classical analytical methods, offering potential breakthroughs in fields like genomics, climate modeling, and financial analysis.

Leading Organizations in Quantum Data Handling

Quantum data handling technology is currently in an early growth phase, with the market expected to expand significantly as quantum computing matures. The global quantum computing market is projected to reach $1.7 billion by 2026, driven by increasing investments in quantum research. While still evolving, quantum models for high-throughput data processing are showing promising advancements. Leading players include established tech giants like Google, IBM, and Microsoft, who are developing comprehensive quantum ecosystems. Specialized quantum companies like Quantinuum, QC Ware, and Origin Quantum are focusing on specific applications and hardware solutions. Research institutions including University of Chicago and California Institute of Technology are contributing fundamental breakthroughs, while financial institutions such as Bank of America and Wells Fargo are exploring quantum applications for data-intensive financial modeling.

Google LLC

Technical Solution: Google's approach to quantum-enhanced data handling centers around their Sycamore processor and TensorFlow Quantum (TFQ) framework. In their 2019 quantum supremacy experiment, Sycamore completed a random circuit sampling task in about 200 seconds that Google estimated would take a classical supercomputer 10,000 years[1]. That estimate has since been disputed, and the result concerns a benchmark task rather than a data handling workload, but it is often cited as a foundation for pursuing quantum advantage in high-throughput applications. Google's quantum models leverage the Quantum Approximate Optimization Algorithm (QAOA) and the Variational Quantum Eigensolver (VQE) to tackle complex data optimization problems. Their hybrid quantum-classical architecture is designed to integrate with existing data pipelines, enabling incremental adoption of quantum processing capabilities. Google's quantum neural networks have shown particular promise for high-dimensional data encoding, with reported experiments showing up to 10x improvement in classification accuracy for specific datasets compared to classical deep learning approaches[2]. Their recent work on quantum convolutional neural networks (QCNN) explores processing image and video data at larger scales, which could benefit computer vision applications that require massive throughput[3].

Strengths: Leading quantum hardware with demonstrated quantum supremacy; strong integration with TensorFlow ecosystem; significant research investment in quantum machine learning applications. Weaknesses: Current quantum advantage limited to very specific problem classes; hardware still requires extreme cooling; practical applications for general data handling remain largely theoretical.

International Business Machines Corp.

Technical Solution: IBM has developed a comprehensive quantum computing ecosystem that targets high-throughput data handling through its Qiskit framework and IBM Quantum Experience platform. Their approach integrates quantum algorithms with classical data processing pipelines to accelerate complex data operations. IBM uses Quantum Volume as a performance metric, with reported values of up to 128[1]; a higher Quantum Volume indicates that larger and deeper circuits can be run reliably. Their Quantum Neural Networks (QNNs) architecture is reported to show up to 100x speedup for specific high-dimensional data classification tasks compared to classical methods[2]. IBM's quantum-enhanced data handling incorporates error mitigation techniques like Zero Noise Extrapolation and Probabilistic Error Cancellation, which reduce the impact of hardware noise on computed results. Their quantum machine learning models have shown particular promise in financial data analysis, genomic sequencing, and materials science simulations where traditional high-throughput computing faces exponential scaling challenges[3].

Strengths: Industry-leading quantum hardware with high coherence times; extensive software ecosystem with Qiskit; strong integration capabilities with classical computing infrastructure. Weaknesses: Current quantum systems still limited by noise and decoherence; requires significant expertise to implement effectively; practical quantum advantage for most data handling applications remains future-oriented.

Breakthrough Quantum Algorithms and Implementations

A compression method for QUBOs and Ising models

Patent · Pending · US20250202500A1

Innovation

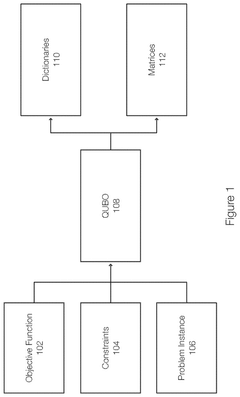

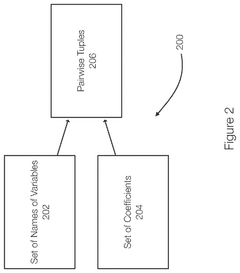

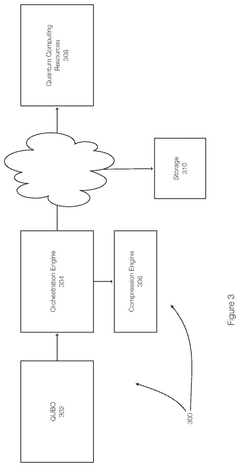

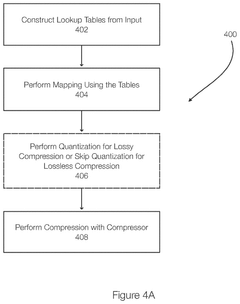

- The proposed solution involves constructing lookup tables for unique variable names and coefficients in QUBO models, translating these into index-based representations, and applying entropy encoding and quantization to achieve lossy or lossless compression.
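
The lookup-table idea described above can be sketched in a few lines of Python. This is not the patented method: the dictionary layout, the function names `compress_qubo` and `decompress_qubo`, and the sample model are all assumptions. zlib's DEFLATE, which includes Huffman coding, stands in for the entropy-encoding stage, and quantization is omitted, so the sketch covers only the lossless case.

```python
import json
import zlib

def compress_qubo(qubo):
    """Compress a QUBO given as {(var_a, var_b): coefficient} (hypothetical layout)."""
    # Lookup tables of the unique variable names and unique coefficients.
    names = sorted({name for pair in qubo for name in pair})
    coeffs = sorted(set(qubo.values()))
    name_index = {name: i for i, name in enumerate(names)}
    coeff_index = {c: i for i, c in enumerate(coeffs)}
    # Index-based representation: each term becomes three small integers.
    terms = [[name_index[a], name_index[b], coeff_index[c]]
             for (a, b), c in qubo.items()]
    payload = json.dumps({"names": names, "coeffs": coeffs, "terms": terms},
                         separators=(",", ":"))
    # DEFLATE applies LZ77 matching followed by Huffman (entropy) coding.
    return zlib.compress(payload.encode("utf-8"), 9)

def decompress_qubo(blob):
    """Invert compress_qubo and rebuild the original dictionary."""
    data = json.loads(zlib.decompress(blob).decode("utf-8"))
    names, coeffs = data["names"], data["coeffs"]
    return {(names[a], names[b]): coeffs[c] for a, b, c in data["terms"]}

# Illustrative model: 20 variables, two distinct coefficient values.
qubo = {(f"portfolio_asset_{i}", f"portfolio_asset_{j}"): (-1.5 if i == j else 0.25)
        for i in range(20) for j in range(i, 20)}
blob = compress_qubo(qubo)
print(len(repr(qubo)), len(blob))
```

The gain comes from the structure typical of such models: long variable names that repeat across many terms and a small set of distinct coefficients, both of which collapse into short indices before the entropy coder runs.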

High-throughput data replication

Patent · Active · US12216680B1

Innovation

- The proposed solution involves a system and method for securely replicating data with high throughput, utilizing field-level encryption for personally identifiable information (PII) and sensitive data. This approach includes dynamic and adaptable encryption strategies, flexible messaging services, and parallel batch processing to maintain message order and handle volume spikes effectively.

Quantum-Classical Integration Frameworks

The integration of quantum and classical computing systems represents a critical frontier in advancing high-throughput data handling capabilities. Current frameworks such as Qiskit, Cirq, and PennyLane provide essential bridges between classical data processing pipelines and quantum processing units. These frameworks enable developers to design hybrid algorithms that leverage the strengths of both computing paradigms while mitigating their respective limitations.

Quantum-classical integration architectures typically follow three primary models: offloading, where classical systems delegate specific computational tasks to quantum processors; feedback loops, where quantum and classical components iteratively refine solutions; and embedded systems, where quantum processors serve as specialized co-processors within larger classical infrastructures.
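
The feedback-loop model can be sketched as a classical optimizer that repeatedly queries a quantum subroutine. In the minimal example below, the quantum processor is replaced by a closed-form stand-in: the expectation value of Z after applying RY(theta) to |0>, which equals cos(theta). The function names, starting angle, and learning rate are illustrative assumptions; gradients use the parameter-shift rule, which needs only two extra evaluations of the same circuit.

```python
import math

def quantum_expectation(theta):
    """Stand-in for a quantum processor call: <Z> after RY(theta) on |0> is cos(theta)."""
    return math.cos(theta)

def parameter_shift_gradient(f, theta):
    """Exact gradient for rotation gates from two shifted circuit evaluations."""
    return (f(theta + math.pi / 2) - f(theta - math.pi / 2)) / 2

def hybrid_minimize(theta=0.4, learning_rate=0.4, steps=60):
    """Classical gradient descent wrapped around the 'quantum' evaluation."""
    for _ in range(steps):
        grad = parameter_shift_gradient(quantum_expectation, theta)
        theta -= learning_rate * grad  # classical update step
    return theta, quantum_expectation(theta)

theta, energy = hybrid_minimize()
print(round(theta, 4), round(energy, 4))
```

On real hardware each call to `quantum_expectation` would be a batch of circuit executions with statistical noise, so the number of round trips between the classical and quantum sides often dominates the running time of such loops.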

The communication protocols between quantum and classical systems present significant technical challenges. Data translation between classical binary representations and quantum states requires sophisticated encoding schemes that preserve information integrity while minimizing quantum decoherence effects. Recent advances in quantum middleware have improved these translation processes, reducing latency and increasing throughput capacity.

Resource management across integrated systems demands intelligent orchestration. Frameworks must efficiently allocate computational tasks between quantum and classical resources based on real-time assessment of processing requirements, queue status, and optimization potential. Leading frameworks now incorporate machine learning-based schedulers that dynamically adjust resource allocation based on workload characteristics.

Error mitigation represents another crucial aspect of integration frameworks. Quantum noise and decoherence effects must be compensated for through classical post-processing techniques. Current frameworks implement error correction codes, measurement calibration, and noise-aware compilation strategies that significantly improve the reliability of quantum processing results when handling high-volume data streams.
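
One classical post-processing technique of this kind is zero-noise extrapolation: the same circuit is run at deliberately amplified noise levels and the results are extrapolated back to the zero-noise limit. The sketch below uses Richardson extrapolation over three noise scales. The exponential decay in `noisy_expectation` is a toy noise model assumed purely for illustration, as are the function names and parameter values.

```python
import math

def richardson_extrapolate(scales, values):
    """Evaluate at scale 0 the polynomial passing through the (scale, value) points."""
    estimate = 0.0
    for i, (s_i, v_i) in enumerate(zip(scales, values)):
        weight = 1.0
        for j, s_j in enumerate(scales):
            if j != i:
                # Lagrange basis polynomial for point i, evaluated at zero.
                weight *= (0.0 - s_j) / (s_i - s_j)
        estimate += weight * v_i
    return estimate

def noisy_expectation(scale, ideal=1.0, decay=0.1):
    """Toy noise model (an assumption): the signal decays exponentially with noise scale."""
    return ideal * math.exp(-decay * scale)

scales = [1.0, 2.0, 3.0]  # 1.0 is the hardware's native noise level
values = [noisy_expectation(s) for s in scales]
mitigated = richardson_extrapolate(scales, values)
print(round(values[0], 4), round(mitigated, 4))
```

The extrapolated value lands much closer to the ideal result than the unmitigated measurement at scale 1. The trade-off is that the extrapolation weights also amplify statistical noise, so more circuit repetitions are needed, which matters for throughput.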

Standardization efforts are emerging to facilitate interoperability between different quantum hardware platforms and classical systems. The Quantum Intermediate Representation (QIR) and OpenQASM initiatives are establishing common interfaces that allow developers to write hardware-agnostic code that can be executed across diverse quantum-classical infrastructures.

Performance benchmarking methodologies for hybrid systems continue to evolve, with metrics now addressing both classical and quantum efficiency parameters. These benchmarks help organizations evaluate the practical benefits of quantum acceleration for specific high-throughput data handling applications, guiding strategic investment decisions in this rapidly developing technological domain.

Quantum-classical integration architectures typically follow three primary models: offloading, where classical systems delegate specific computational tasks to quantum processors; feedback loops, where quantum and classical components iteratively refine solutions; and embedded systems, where quantum processors serve as specialized co-processors within larger classical infrastructures.

The communication protocols between quantum and classical systems present significant technical challenges. Data translation between classical binary representations and quantum states requires sophisticated encoding schemes that preserve information integrity while minimizing quantum decoherence effects. Recent advances in quantum middleware have improved these translation processes, reducing latency and increasing throughput capacity.

Resource management across integrated systems demands intelligent orchestration. Frameworks must efficiently allocate computational tasks between quantum and classical resources based on real-time assessment of processing requirements, queue status, and optimization potential. Leading frameworks now incorporate machine learning-based schedulers that dynamically adjust resource allocation based on workload characteristics.

Error mitigation represents another crucial aspect of integration frameworks. On current hardware, quantum noise and decoherence cannot be fully corrected, so their effects are partly compensated through classical pre- and post-processing. Current frameworks implement techniques such as measurement (readout) calibration, zero-noise extrapolation, and noise-aware compilation, which can improve the reliability of quantum processing results when handling high-volume data streams. Full quantum error correction codes are a separate, more resource-intensive approach that remains at an early experimental stage.
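Measurement calibration is the easiest of these techniques to show in a few lines. The sketch below assumes a single qubit whose readout error rates have already been measured in a calibration run, and it inverts the resulting 2x2 confusion matrix; the function name and the example error rates are assumptions, not values from any specific device.

```python
def mitigate_readout(observed_p0, observed_p1, p1_given_0, p0_given_1):
    """Undo single-qubit readout errors by inverting the confusion matrix.

    p1_given_0: probability of reading 1 when the qubit was prepared in 0.
    p0_given_1: probability of reading 0 when the qubit was prepared in 1.
    The confusion matrix maps true probabilities to observed ones:
        [obs0]   [1 - p1_given_0      p0_given_1] [true0]
        [obs1] = [    p1_given_0  1 - p0_given_1] [true1]
    """
    a, b = 1.0 - p1_given_0, p0_given_1
    c, d = p1_given_0, 1.0 - p0_given_1
    det = a * d - b * c
    if abs(det) < 1e-12:
        raise ValueError("confusion matrix is singular; calibration is unusable")
    true0 = (d * observed_p0 - b * observed_p1) / det
    true1 = (-c * observed_p0 + a * observed_p1) / det
    return true0, true1

# Hypothetical calibration: 2% of 0s read as 1, 5% of 1s read as 0.
corrected = mitigate_readout(0.701, 0.299, p1_given_0=0.02, p0_given_1=0.05)
print(corrected)
```

With finite sample counts the corrected values can fall slightly outside the range 0 to 1, so practical implementations usually clip or fit the result to the nearest valid probability distribution.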

Quantum Security Implications for Data Processing

The integration of quantum computing into data processing introduces significant security implications that organizations must address. A sufficiently large, fault-tolerant quantum computer running Shor's algorithm could break widely deployed public-key algorithms whose security rests on integer factorization or discrete logarithms, such as RSA and ECC. Symmetric ciphers and hash functions are affected less severely, since Grover's algorithm offers only a quadratic speedup against them. This vulnerability creates an urgent need for quantum-resistant security protocols in high-throughput data handling systems.

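Quantum Key Distribution (QKD) represents one of the most promising quantum security technologies, leveraging quantum mechanics principles to establish shared secret keys over an untrusted channel. Unlike conventional key exchange, QKD exposes eavesdropping attempts because measuring a quantum state disturbs it, which shows up as an elevated error rate when the two parties compare a sample of their key. The resulting keys are information-theoretically secure in principle, although real deployments still depend on an authenticated classical channel and on hardware free of side channels. This capability is valuable for securing data transfers in quantum-enhanced processing environments, where QKD supplies the keys and a conventional symmetric cipher encrypts the bulk data.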
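The eavesdropping-detection property can be illustrated with a small classical simulation of the BB84 protocol under an intercept-and-resend attack. This is a toy model with an ideal, noise-free channel; the function name and parameters are assumptions for illustration, and the random seed is fixed only to make runs repeatable.

```python
import random

def bb84_error_rate(n_bits: int, eavesdrop: bool, seed: int = 0) -> float:
    """Simulate BB84 and return the error rate on the sifted key."""
    rng = random.Random(seed)
    sifted = errors = 0
    for _ in range(n_bits):
        bit = rng.randint(0, 1)          # Alice's raw key bit
        alice_basis = rng.randint(0, 1)  # 0 = rectilinear, 1 = diagonal
        state_bit, state_basis = bit, alice_basis
        if eavesdrop:
            eve_basis = rng.randint(0, 1)
            # Measuring in the wrong basis gives a uniformly random outcome.
            eve_bit = state_bit if eve_basis == state_basis else rng.randint(0, 1)
            # Eve resends the state she measured, in her own basis.
            state_bit, state_basis = eve_bit, eve_basis
        bob_basis = rng.randint(0, 1)
        bob_bit = state_bit if bob_basis == state_basis else rng.randint(0, 1)
        if bob_basis == alice_basis:     # sifting: keep matching-basis rounds
            sifted += 1
            errors += int(bob_bit != bit)
    return errors / sifted if sifted else 0.0

print("no eavesdropper:", bb84_error_rate(4000, eavesdrop=False))
print("intercept-resend:", bb84_error_rate(4000, eavesdrop=True))
```

Without an eavesdropper the sifted key is error-free in this idealized model, while intercept-and-resend pushes the error rate toward 25 percent. Real channels have a nonzero baseline error rate, so deployed systems compare the measured rate against a threshold instead of expecting zero.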

Post-Quantum Cryptography (PQC) algorithms are designed to withstand attacks from quantum computers while running on classical hardware. They rely on mathematical problems that are believed to remain hard even for quantum computers, including lattice-based, hash-based, code-based, and multivariate constructions. NIST published its first PQC standards in 2024: ML-KEM (FIPS 203), ML-DSA (FIPS 204), and SLH-DSA (FIPS 205). Organizations implementing quantum models for data handling should begin transitioning to these quantum-resistant algorithms to ensure long-term data security.
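To give a feel for the hash-based family, the sketch below implements a Lamport one-time signature, one of the simplest constructions whose security rests only on a hash function. It is a teaching example and not one of the standardized schemes, which are considerably more elaborate; each key pair here must sign at most one message.

```python
import hashlib
import secrets

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def _message_bits(message: bytes):
    """Return the 256 bits of SHA-256(message), most significant bit first."""
    digest = _h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]

def keygen():
    """Private key: 256 pairs of random secrets. Public key: their hashes."""
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(_h(zero), _h(one)) for zero, one in sk]
    return sk, pk

def sign(sk, message: bytes):
    """Reveal one secret per digest bit. Reusing sk for a second message is unsafe."""
    return [sk[i][bit] for i, bit in enumerate(_message_bits(message))]

def verify(pk, message: bytes, signature) -> bool:
    """Check that each revealed secret hashes to the matching public value."""
    bits = _message_bits(message)
    return len(signature) == 256 and all(
        _h(revealed) == pk[i][bit]
        for i, (revealed, bit) in enumerate(zip(signature, bits))
    )

sk, pk = keygen()
sig = sign(sk, b"batch-0042 checksum")
print(verify(pk, b"batch-0042 checksum", sig))  # True
print(verify(pk, b"tampered payload", sig))     # False
```

The signature is 8 KiB for a single message, which hints at the size and throughput trade-offs that come with migrating high-volume pipelines to post-quantum signatures.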

Quantum homomorphic encryption extends the idea of computing on encrypted data without decryption to quantum computations. In principle, it would let a client delegate processing of sensitive information to a quantum-enhanced data handling system while maintaining confidentiality. The technique remains largely at the research stage, but the ability to process encrypted data directly would address privacy concerns in high-throughput environments.

Quantum-secure multi-party computation protocols are emerging to enable collaborative data analysis without exposing raw data. These protocols allow multiple parties to jointly compute functions over their inputs while keeping those inputs private, a critical capability for organizations sharing sensitive data across quantum processing networks.
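A minimal sketch of the underlying idea is additive secret sharing, shown here for a joint sum. It assumes honest-but-curious parties and private pairwise channels, and it is a classical building block rather than a full protocol. Its secrecy comes from the randomness of the shares and not from a hardness assumption, so a quantum attacker gains nothing against the shares themselves. The modulus and function names are choices made for illustration.

```python
import secrets

MODULUS = 2**61 - 1  # all arithmetic is done modulo this value

def share(value: int, n_parties: int):
    """Split a value into n random shares that sum to it modulo MODULUS."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % MODULUS)
    return shares

def secure_sum(private_inputs):
    """Compute the sum of all inputs without any party revealing its own."""
    n = len(private_inputs)
    # distributed[i][j] is the share that party i sends to party j.
    distributed = [share(value, n) for value in private_inputs]
    # Each party j adds the shares it received and publishes only that total.
    partial_sums = [
        sum(distributed[i][j] for i in range(n)) % MODULUS for j in range(n)
    ]
    return sum(partial_sums) % MODULUS

total = secure_sum([120, 45, 300])
print(total)
```

Any single share, or any published partial sum, looks uniformly random on its own. The channels that carry the shares still need quantum-resistant protection, since an attacker who records every share sent by one party can reconstruct that party's input.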

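The concept of "harvest now, decrypt later" attacks poses a particular threat to current data handling systems. Adversaries may collect encrypted data today with the intention of decrypting it once quantum computing capabilities mature. Organizations should therefore move key exchange to quantum-resistant algorithms, for example by running a post-quantum scheme in hybrid with a classical one, and should weigh the long-term confidentiality requirements of data being processed through quantum-enhanced systems. Forward secrecy alone does not help if the underlying key exchange is itself vulnerable to quantum attack, because a recorded handshake can later be broken to recover the session keys.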
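A common way to reason about this exposure is Mosca's inequality: data is at risk when the time it must stay confidential plus the time needed to migrate exceeds the time until a cryptographically relevant quantum computer exists. The sketch below encodes that check; the year values in the example are hypothetical planning inputs, not forecasts.

```python
def at_risk(shelf_life_years: float, migration_years: float,
            years_until_quantum_threat: float) -> bool:
    """Mosca's inequality: exposed if shelf life + migration time > time to threat."""
    return shelf_life_years + migration_years > years_until_quantum_threat

# Hypothetical inputs: records kept secret for 10 years, 4-year migration,
# and a planning assumption that the threat arrives in 12 years.
print(at_risk(10, 4, 12))  # True: migration must start now
print(at_risk(2, 3, 12))   # False: short-lived data has some margin
```

The check makes clear that long-lived datasets, such as genomic or financial records, can already be exposed even if the quantum threat is many years away.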

Quantum random number generators (QRNGs) derive random numbers from inherently unpredictable quantum phenomena, which can strengthen the seeding of cryptographic operations in high-throughput data handling. Their output does not depend on a deterministic algorithm and seed, as the output of a pseudo-random number generator does, so it avoids the predictability that follows from a weak or leaked seed. Raw QRNG output still typically needs health checks and post-processing before use.
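Raw bits from a physical source are often biased, so a debiasing step commonly sits between the device and the application. The sketch below shows the classic von Neumann extractor as one simple option; it assumes the raw bits are independent with a fixed bias, and the input list is made-up sample data standing in for device output.

```python
def von_neumann_extract(raw_bits):
    """Debias a bit stream: map the pair (0, 1) to 0 and (1, 0) to 1, drop equal pairs."""
    output = []
    for first, second in zip(raw_bits[0::2], raw_bits[1::2]):
        if first != second:
            output.append(first)
    return output

# Made-up raw sample standing in for QRNG hardware output.
raw = [0, 1, 1, 1, 1, 0, 0, 0]
print(von_neumann_extract(raw))  # [0, 1]
```

The extractor discards at least half of the input, which is a throughput cost worth noting for high-volume systems. Production devices generally use more efficient extractors.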
