How to Measure Quantum State Changes with Optimal Methods

SEP 4, 2025 · 9 MIN READ

Quantum Measurement Background and Objectives

Quantum measurement theory has evolved significantly since the early days of quantum mechanics. The foundational work by pioneers such as Heisenberg, Bohr, and von Neumann established the probabilistic nature of quantum measurements and introduced the concept of wave function collapse. This evolution continued through the development of quantum information theory in the late 20th century, which brought new perspectives on measurement processes and their fundamental limitations.

The field has witnessed a paradigm shift from traditional projective measurements to more sophisticated techniques including Positive Operator-Valued Measures (POVMs), weak measurements, and quantum non-demolition measurements. These advancements have been driven by both theoretical insights and experimental necessities, particularly as quantum technologies advance toward practical applications in computing, communication, and sensing.

Current trends in quantum measurement theory focus on optimality criteria that balance information gain against quantum state disturbance. The Heisenberg uncertainty principle fundamentally limits the precision with which complementary observables can be simultaneously measured, creating an inherent trade-off that must be navigated in any measurement strategy. Recent developments in quantum metrology have pushed these boundaries, surpassing the standard quantum limit with entangled or squeezed probes and approaching Heisenberg-limited precision in specific contexts.
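
For reference, the trade-off and the two precision regimes named above are commonly written as follows. The symbols (observables $A$ and $B$, phase $\phi$, number of probes $N$) are notation assumed here for illustration and are not defined in the text.

```latex
% Robertson form of the uncertainty relation for observables A and B
\Delta A \, \Delta B \;\ge\; \tfrac{1}{2}\,\bigl|\langle [A, B] \rangle\bigr|

% Phase-estimation precision with N probes:
% standard quantum limit (independent probes) vs. Heisenberg limit (entangled probes)
\Delta\phi_{\mathrm{SQL}} \sim \frac{1}{\sqrt{N}},
\qquad
\Delta\phi_{\mathrm{HL}} \sim \frac{1}{N}
```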

The primary technical objectives in this field include developing measurement protocols that minimize quantum back-action while maximizing information extraction, creating adaptive measurement schemes that can respond to partial measurement results, and establishing robust error mitigation techniques for noisy quantum systems. These objectives are particularly crucial for quantum computing applications, where measurement fidelity directly impacts computational outcomes.

Another significant goal is the standardization of quantum measurement benchmarks across different physical implementations, from superconducting circuits to trapped ions and photonic systems. This standardization would facilitate meaningful comparisons between competing technologies and accelerate progress in the field.

The quantum measurement community also aims to bridge the gap between theoretical optimality proofs and practical implementation constraints. While mathematical frameworks like quantum Fisher information provide theoretical bounds on measurement precision, translating these insights into laboratory-viable protocols remains challenging. Addressing this theory-practice gap requires interdisciplinary collaboration between theorists, experimentalists, and engineers.
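
As a rough illustration of how quantum Fisher information bounds precision, the sketch below evaluates the pure-state formula F_Q = 4 Var(H) for a product state and a GHZ state. The generator H (a collective spin along z), the function names, and the repetition count `nu` are assumptions chosen for the example, not part of the original discussion.

```python
import numpy as np

def collective_z_eigenvalues(n_qubits):
    """Diagonal of H = (1/2) * sum_k sigma_z^(k) in the computational basis."""
    dim = 2 ** n_qubits
    eig = np.zeros(dim)
    for index in range(dim):
        ones = bin(index).count("1")
        zeros = n_qubits - ones
        eig[index] = 0.5 * (zeros - ones)
    return eig

def pure_state_qfi(state, h_diag):
    """QFI of a pure state for a diagonal generator: F_Q = 4 * Var(H)."""
    probs = np.abs(state) ** 2
    mean = np.sum(probs * h_diag)
    mean_sq = np.sum(probs * h_diag ** 2)
    return 4.0 * (mean_sq - mean ** 2)

n = 4
h_diag = collective_z_eigenvalues(n)

# Product state |+>^n: equal amplitude on every bitstring.
plus_state = np.ones(2 ** n) / np.sqrt(2 ** n)

# GHZ state: equal superposition of |00...0> and |11...1>.
ghz_state = np.zeros(2 ** n)
ghz_state[0] = ghz_state[-1] = 1 / np.sqrt(2)

qfi_product = pure_state_qfi(plus_state, h_diag)  # grows linearly with n
qfi_ghz = pure_state_qfi(ghz_state, h_diag)       # grows quadratically with n

# Quantum Cramer-Rao bound for nu repetitions: delta_theta >= 1 / sqrt(nu * F_Q)
nu = 1000
bound_product = 1 / np.sqrt(nu * qfi_product)
bound_ghz = 1 / np.sqrt(nu * qfi_ghz)
```

The bound itself says nothing about which measurement attains it, which is the theory-practice gap the paragraph describes.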

As quantum technologies transition from research laboratories to commercial applications, there is an increasing focus on developing measurement techniques that are not only optimal in theory but also robust against environmental noise, scalable to larger systems, and compatible with existing classical control infrastructure.

Market Applications for Quantum State Measurement

Quantum state measurement technologies are rapidly transitioning from laboratory curiosities to commercial applications across multiple industries. The financial sector has emerged as an early adopter, with quantum-secured communications offering unprecedented protection for financial transactions. Major banks including JPMorgan Chase and Goldman Sachs have established quantum research divisions specifically focused on implementing quantum cryptography solutions that leverage precise state measurement techniques to detect eavesdropping attempts.

In healthcare, quantum sensors utilizing state measurement principles are revolutionizing medical diagnostics through enhanced magnetic resonance imaging (MRI). These quantum-enhanced MRI systems can detect subtle changes in biological tissues with significantly higher resolution than conventional technologies, enabling earlier disease detection. Companies like Quantum Diamond Technologies are developing quantum sensors based on nitrogen-vacancy centers in diamond that can measure magnetic fields associated with neural activity and cardiac signals with unprecedented precision.

The telecommunications industry represents another substantial market, with quantum key distribution (QKD) networks being deployed globally. These systems rely on quantum state measurement to ensure secure communication channels. China's quantum backbone network spanning over 2,000 kilometers and the European Quantum Communication Infrastructure initiative demonstrate the scale of investment in this technology. Telecommunications giants including Toshiba, Nokia, and Huawei have commercialized QKD systems that depend on optimal quantum state measurement methods.

Computing and data centers constitute a growing application area where quantum state measurement enables error correction in quantum computing systems. As quantum computers scale up, the ability to accurately measure and correct quantum states becomes critical for maintaining computational integrity. Companies like IBM, Google, and Rigetti Computing are investing heavily in improving measurement fidelity to reduce error rates in their quantum processors.

The defense and aerospace sectors represent premium markets for quantum sensing applications. Quantum gravimeters that precisely measure gravitational fields can detect underground structures or resources, while quantum inertial sensors offer navigation capabilities that don't rely on GPS. Lockheed Martin, Northrop Grumman, and other defense contractors are developing quantum navigation systems that maintain positional accuracy even when satellite signals are unavailable.

Materials science and manufacturing benefit from quantum sensing through atomic-scale characterization capabilities. Quantum sensors can detect structural defects and chemical compositions with unprecedented precision, enabling quality control at the atomic level. This capability is particularly valuable for semiconductor manufacturing, where feature sizes continue to shrink toward atomic dimensions.

Current Challenges in Quantum Measurement Techniques

Despite significant advancements in quantum measurement techniques, the field faces several persistent challenges that impede optimal quantum state measurement. The fundamental obstacle is measurement back-action: the act of measurement disturbs the quantum system, causing wave function collapse and irreversibly altering the quantum state. This inherent limitation, rooted in the probabilistic nature of quantum mechanics, bounds how much information a measurement can extract without disturbing the state.
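
A minimal numerical sketch of this disturbance, assuming an ideal single-qubit projective measurement in the Z basis (the function name and the choice of the |+> input state are illustrative):

```python
import numpy as np

rng = np.random.default_rng(seed=0)

def projective_measure_z(state, rng):
    """Measure a single-qubit state vector in the Z basis.

    Returns (outcome, post_measurement_state).
    """
    probs = np.abs(state) ** 2           # Born rule
    outcome = rng.choice([0, 1], p=probs)
    post = np.zeros(2, dtype=complex)
    post[outcome] = 1.0                  # state collapses onto |outcome>
    return int(outcome), post

plus = np.array([1, 1], dtype=complex) / np.sqrt(2)

outcome, post = projective_measure_z(plus, rng)

# Overlap between the original superposition and the collapsed state.
overlap_with_original = np.abs(np.vdot(plus, post)) ** 2

# Measuring again reproduces the first outcome: the superposition is gone.
second_outcome, _ = projective_measure_z(post, rng)
```

Whichever outcome occurs, the post-measurement state overlaps the original |+> state by only one half, and a repeated measurement simply returns the first result.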

Quantum noise presents another significant challenge, manifesting as decoherence when quantum systems interact with their environment. This interaction causes quantum information leakage and state degradation, severely limiting measurement fidelity and quantum operation times. Coherence times vary widely by platform: superconducting qubits typically hold coherence for microseconds to around a millisecond, whereas trapped-ion qubits can remain coherent for seconds or longer.

Technical limitations in measurement apparatus create additional barriers. Quantum state detection often requires extraordinarily sensitive equipment; superconducting devices, for example, operate at millikelvin temperatures close to absolute zero and need careful shielding from electromagnetic interference. The precision engineering required for such equipment remains at the cutting edge of technological capability, with significant room for improvement in signal-to-noise ratios and detection efficiency.

The scalability challenge becomes increasingly prominent as quantum systems grow in complexity. While measuring single or few-qubit systems has become relatively routine in laboratory settings, extending these techniques to many-qubit systems introduces exponential complexity in both the measurement process and data analysis. This scalability issue represents one of the most significant roadblocks to practical quantum computing.

Calibration and error correction present ongoing difficulties. Quantum measurements are inherently probabilistic and error-prone, requiring sophisticated error correction protocols. However, implementing these protocols demands additional qubits and measurements, creating a resource overhead that compounds system complexity.

The speed-accuracy tradeoff remains unresolved in quantum measurement. Faster measurements typically introduce more noise and disturbance, while more accurate measurements require longer integration times, during which decoherence can degrade the quantum state. Finding the optimal balance between measurement speed and accuracy represents a critical challenge.

Finally, the interpretation of measurement results presents analytical challenges. Converting raw measurement data into meaningful quantum state information requires complex tomographic techniques and statistical analysis. As quantum systems scale, the computational resources required for this analysis grow exponentially, creating a bottleneck in the quantum measurement pipeline.
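
To make the exponential growth concrete, the sketch below counts the free parameters of an n-qubit density matrix and the number of Pauli-basis measurement settings used in standard full tomography. The function name is hypothetical.

```python
def tomography_cost(n_qubits):
    """Return (free real parameters, Pauli-basis measurement settings) for n qubits."""
    dim = 2 ** n_qubits
    free_parameters = dim ** 2 - 1      # a density matrix has 4^n - 1 real parameters
    pauli_settings = 3 ** n_qubits      # X, Y or Z chosen independently on each qubit
    return free_parameters, pauli_settings

for n in (1, 2, 5, 10, 20):
    params, settings = tomography_cost(n)
    print(f"{n:2d} qubits: {params} parameters, {settings} settings")
```

Both counts grow exponentially with the number of qubits, which is why full tomography is normally restricted to small systems.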

State-of-the-Art Quantum Measurement Solutions

01 Quantum state tomography techniques

Quantum state tomography is a method used to reconstruct the complete quantum state from a series of measurements. Optimal methods involve efficient measurement protocols that minimize the number of measurements required while maximizing accuracy. These techniques often employ mathematical optimization to determine the most informative measurement bases and statistical methods to reconstruct the quantum state from measurement outcomes.

- Quantum state tomography techniques: Optimal methods involve efficient algorithms for state reconstruction with minimal measurements, reducing computational complexity and improving accuracy. These techniques are crucial for quantum computing applications where precise state knowledge is required for error correction and algorithm verification.

- Adaptive measurement strategies: Adaptive measurement strategies dynamically adjust measurement parameters based on previous measurement outcomes. These methods optimize the information gain per measurement, reducing the total number of measurements needed to characterize a quantum system. By using feedback loops and machine learning algorithms, these approaches can efficiently converge on optimal measurement settings for specific quantum states.

- Quantum error mitigation techniques: Optimal quantum state measurement requires effective error mitigation strategies to account for noise and decoherence. These techniques include error detection protocols, noise-resilient measurement schemes, and post-processing methods that can extract accurate information despite imperfect measurement apparatus. By characterizing and compensating for systematic errors, these approaches improve measurement fidelity in practical quantum systems.

- Weak measurement and quantum non-demolition methods: Weak measurement techniques allow for minimal disturbance of quantum states during the measurement process. These approaches, including quantum non-demolition measurements, enable repeated or continuous monitoring of quantum systems while preserving quantum coherence. By carefully controlling the measurement strength, these methods balance information extraction with state preservation, which is crucial for quantum feedback control and continuous monitoring applications.

- Hardware-specific optimization for quantum measurements: Different quantum hardware platforms require tailored measurement strategies to achieve optimal results. These methods involve customizing measurement protocols based on the specific characteristics of the quantum system, such as superconducting qubits, trapped ions, or photonic systems. Hardware-specific optimization includes pulse sequence design, readout resonator tuning, and signal processing techniques that maximize measurement fidelity while minimizing measurement time.
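
As one concrete instance of the tomography techniques listed above, the sketch below performs single-qubit state tomography by linear inversion from simulated Pauli measurements. The "unknown" state, the shot count, and all function names are assumptions made for the example.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
PAULIS = (X, Y, Z)

def sample_expectation(rho, pauli, shots, rng):
    """Estimate <pauli> from `shots` simulated +/-1 outcomes."""
    p_plus = np.real(np.trace(rho @ (I2 + pauli) / 2))   # Born probability of +1
    p_plus = min(max(p_plus, 0.0), 1.0)                  # guard against rounding
    n_plus = rng.binomial(shots, p_plus)
    return (2 * n_plus - shots) / shots

def linear_inversion(expectations):
    """Rebuild rho = (I + <X> X + <Y> Y + <Z> Z) / 2."""
    rho = I2.copy()
    for value, pauli in zip(expectations, PAULIS):
        rho = rho + value * pauli
    return rho / 2

rng = np.random.default_rng(seed=1)

# Hypothetical "unknown" state used only to generate data: |+> with some mixing.
plus = np.array([[0.5, 0.5], [0.5, 0.5]], dtype=complex)
true_rho = 0.9 * plus + 0.1 * I2 / 2

estimates = [sample_expectation(true_rho, P, shots=20000, rng=rng) for P in PAULIS]
rho_est = linear_inversion(estimates)
max_error = np.max(np.abs(rho_est - true_rho))
```

Linear inversion is the simplest reconstruction rule; with finite statistics it can return a slightly unphysical matrix, which is one reason maximum-likelihood or Bayesian estimators are often preferred in practice.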

02 Adaptive measurement strategies

Adaptive measurement strategies dynamically adjust measurement settings based on previous measurement outcomes. These methods use feedback loops to optimize subsequent measurements, reducing the total number of measurements needed to characterize a quantum state. By focusing measurements on the most uncertain parameters of the quantum state, these approaches achieve higher accuracy with fewer resources compared to non-adaptive methods.

03 Quantum error mitigation in measurements

Techniques for mitigating errors in quantum state measurements focus on identifying and correcting noise sources that affect measurement accuracy. These methods include error characterization protocols, noise-resilient measurement designs, and post-processing algorithms that can extract accurate quantum state information even in the presence of measurement errors and decoherence effects.

04 Machine learning for quantum measurement optimization

Machine learning approaches can optimize quantum measurement protocols by identifying patterns in measurement data and suggesting optimal measurement strategies. Neural networks and other AI techniques can be trained to predict the most informative measurements to perform next, recognize quantum states from partial measurement data, and improve the efficiency of quantum state reconstruction algorithms.

05 Weak and continuous measurement techniques

Weak measurement and continuous monitoring approaches provide alternative methods for extracting quantum state information with minimal disturbance to the system. These techniques allow for the gradual extraction of information about quantum states while minimizing the collapse of the wavefunction. They are particularly useful for quantum feedback control systems and for monitoring the evolution of quantum systems over time.
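
The information-disturbance balance can be sketched with a variable-strength Z measurement described by two Kraus operators. The parametrization by a single `strength` value between 0 and 1 is an assumption made for illustration: 0 corresponds to no measurement and 1 to a projective one.

```python
import numpy as np

def weak_z_kraus(strength):
    """Kraus operators of a weak Z measurement; strength in [0, 1]."""
    a = np.sqrt((1 + strength) / 2)
    b = np.sqrt((1 - strength) / 2)
    m_plus = np.diag([a, b])
    m_minus = np.diag([b, a])
    return m_plus, m_minus

def measure_plus_state(strength):
    """Return (probability of '+', fidelity of the post-'+' state with |+>)."""
    plus = np.array([1.0, 1.0]) / np.sqrt(2)
    m_plus, m_minus = weak_z_kraus(strength)
    # Completeness: the two outcomes exhaust all possibilities.
    assert np.allclose(m_plus.T @ m_plus + m_minus.T @ m_minus, np.eye(2))
    unnormalized = m_plus @ plus
    prob = float(unnormalized @ unnormalized)
    post = unnormalized / np.sqrt(prob)
    fidelity = float((plus @ post) ** 2)
    return prob, fidelity

for strength in (0.0, 0.3, 0.7, 1.0):
    prob, fidelity = measure_plus_state(strength)
    print(f"strength={strength:.1f}  p(+)={prob:.2f}  fidelity with |+>={fidelity:.3f}")
```

As the strength increases, each outcome reveals more about the Z value while the post-measurement state drifts further from the original superposition.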

Leading Research Groups and Companies in Quantum Measurement

The quantum state measurement technology landscape is evolving rapidly, currently transitioning from early research to commercial application phases. The market is experiencing significant growth, projected to reach substantial scale as quantum computing advances. In terms of technical maturity, established players like IBM, Google, and NEC lead with robust quantum measurement frameworks, while specialized quantum companies such as Origin Quantum and Alpine Quantum Technologies are developing innovative measurement protocols. Academic institutions including Nanjing University, KAIST, and Shanghai Jiao Tong University contribute fundamental research advancements. The ecosystem shows a collaborative dynamic between technology corporations (Fujitsu, Tencent), research institutions, and emerging quantum-focused startups, with measurement techniques progressing from theoretical concepts to practical implementations in quantum computing systems.

Origin Quantum Computing Technology (Hefei) Co., Ltd.

Technical Solution: Origin Quantum has developed a distinctive approach to quantum state measurement focusing on superconducting quantum computing systems. Their methodology incorporates adaptive quantum state estimation techniques that dynamically adjust measurement bases to maximize information gain with minimal measurements. Origin's proprietary "QuBox" quantum computer implements dispersive readout techniques for superconducting qubits, allowing non-destructive state measurements with high fidelity. Their measurement protocol includes a novel error correction scheme specifically designed for Chinese quantum hardware architectures, addressing unique noise profiles in their systems. Origin Quantum has also pioneered quantum detector tomography methods that characterize and calibrate measurement devices independently of state preparation errors, improving overall measurement accuracy. Their quantum cloud platform integrates these measurement capabilities with classical post-processing algorithms that enhance the extraction of quantum state information from raw measurement data.

Strengths: Specialized expertise in superconducting quantum systems; strong integration between hardware and software measurement solutions; government backing providing substantial resources for research. Weaknesses: Limited international presence compared to Western competitors; their proprietary measurement techniques lack widespread validation outside China; relatively newer entrant to quantum computing ecosystem with less established measurement protocols.

Google LLC

Technical Solution: Google's approach to measuring quantum state changes builds on the Google Quantum AI division's quantum supremacy experiments. Their technique employs Quantum Process Tomography (QPT), which reconstructs the complete quantum process matrix by preparing various input states and measuring the corresponding outputs. Google also uses randomized benchmarking protocols that efficiently characterize gate fidelities without full tomography. On the Sycamore processor, cross-entropy benchmarking was used to verify quantum advantage claims, allowing characterization of complex quantum states on 53 qubits. Additionally, shadow tomography methods provide efficient approximations of quantum state properties using significantly fewer measurements than traditional approaches, easing the exponential scaling problem in quantum state measurement.

Strengths: Advanced hardware capabilities with Sycamore processor enabling complex state measurements; proprietary calibration techniques for high-fidelity measurements; integration with TensorFlow Quantum for classical-quantum hybrid analysis. Weaknesses: Their methods often require specialized hardware not widely accessible; some techniques remain proprietary limiting academic adoption; measurement fidelity decreases with increasing system size.

Key Innovations in Quantum State Detection

Standardized method of quantum state verification based on optimal strategy

Patent US20210374589A1 (Pending)

Innovation

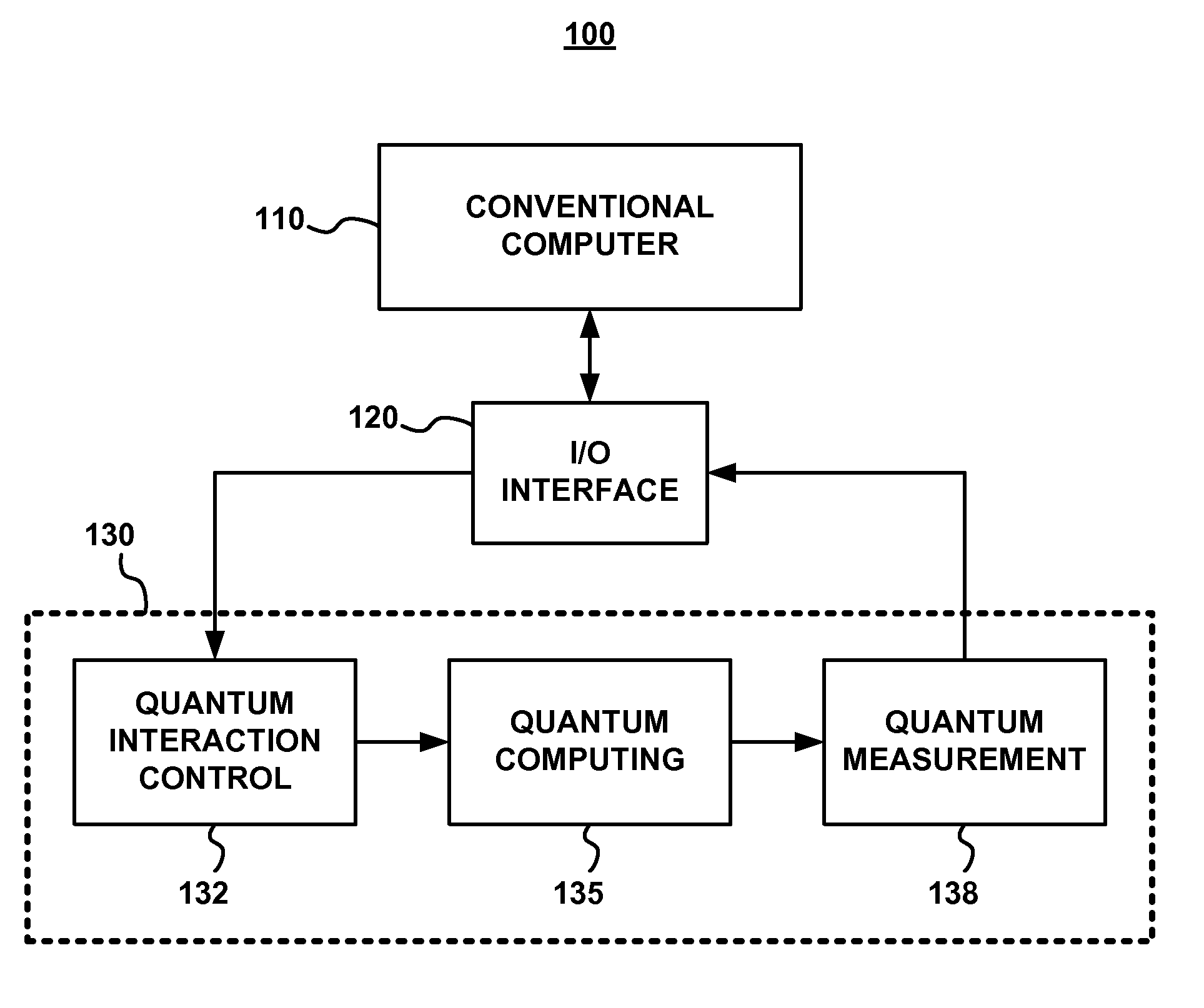

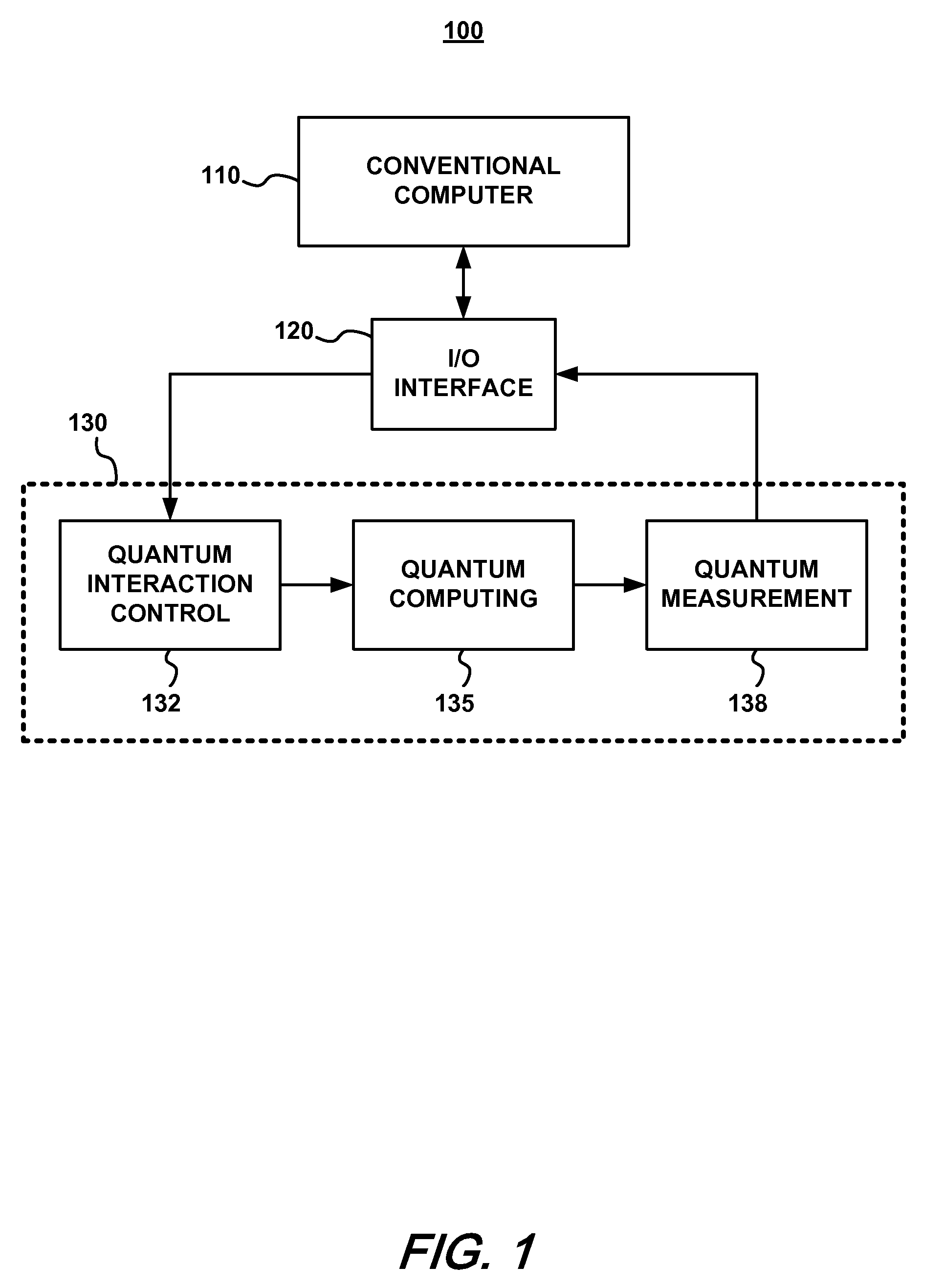

- A standardized quantum state verification procedure using an optimal verification strategy that adjusts quantum equipment components to generate target states, performs projective measurements with non-adaptive and adaptive methods, and analyzes measurement results to ensure reliability with fewer resources, allowing for efficient verification of quantum devices.

Estimating a quantum state of a quantum mechanical system

Patent US8315969B2 (Inactive)

Innovation

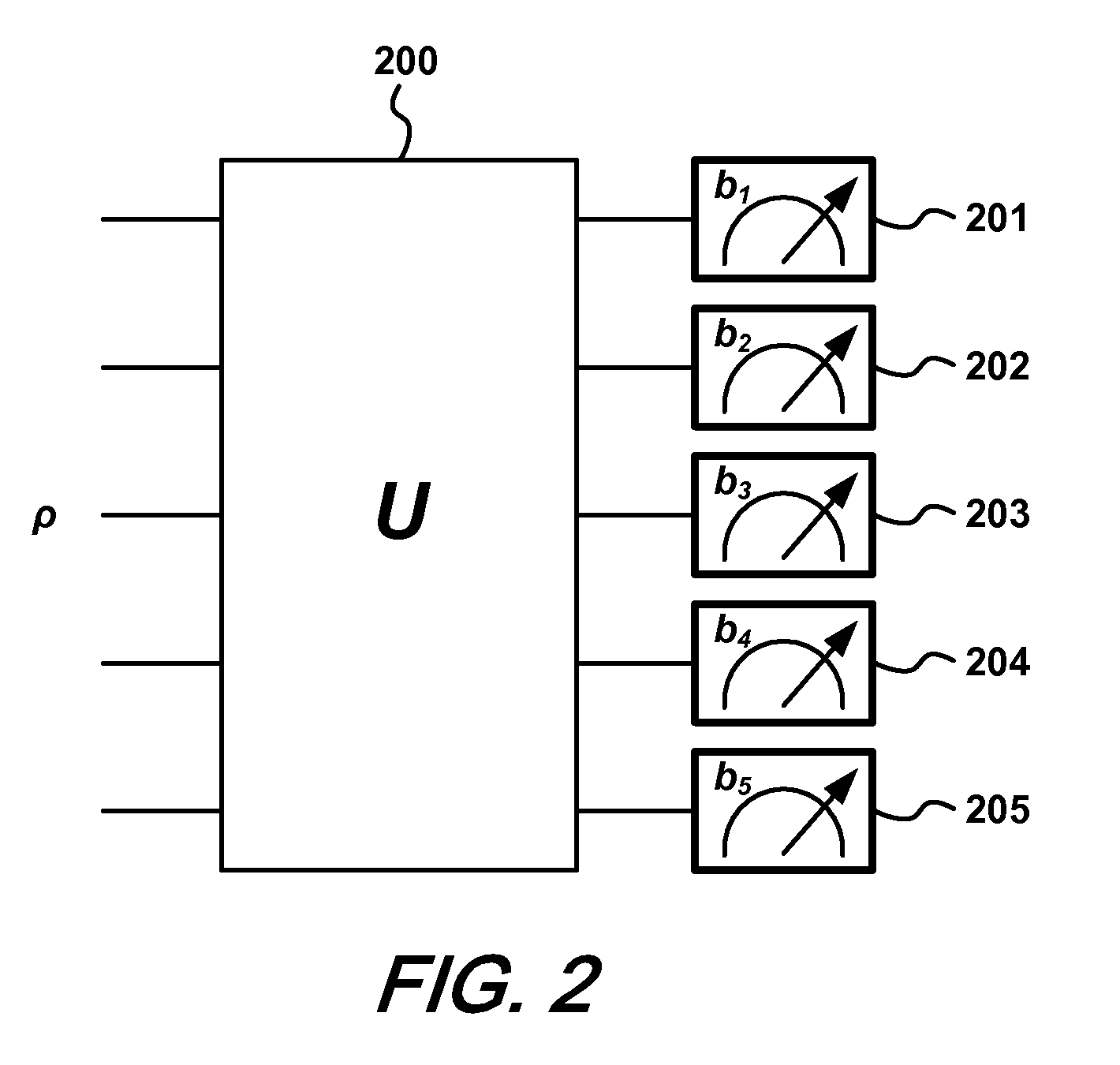

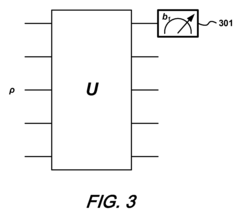

- A method using yes/no measurements of a single qubit to estimate the quantum state by applying a series of unitary operations and reconstructing the state with the expression $\rho = \frac{2}{d}\sum_{i=1}^{d^2-1}\left[p_i P_i + (1-p_i)(1-P_i)\right] - \frac{d^2-2}{d}\,I_d$, where $d$ is the system dimension, $P_i$ are measurement projectors, and $p_i$ are their probabilities, allowing for efficient characterization of the quantum state with minimal measurements.
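
The reconstruction expression can be sanity-checked numerically for a single qubit (d = 2). The sketch assumes the expression reads ρ = (2/d) Σ_i [p_i P_i + (1 − p_i)(1 − P_i)] − ((d² − 2)/d) I_d, and assumes the projectors are the "yes" outcomes of the three Pauli measurements; the patent's own choice of projectors and unitaries is not reproduced here.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
PAULIS = [
    np.array([[0, 1], [1, 0]], dtype=complex),
    np.array([[0, -1j], [1j, 0]], dtype=complex),
    np.array([[1, 0], [0, -1]], dtype=complex),
]

def reconstruct(probabilities, projectors, d):
    """rho = (2/d) * sum_i [p_i P_i + (1 - p_i)(1 - P_i)] - ((d^2 - 2)/d) * I."""
    identity = np.eye(d, dtype=complex)
    total = np.zeros((d, d), dtype=complex)
    for p, proj in zip(probabilities, projectors):
        total += p * proj + (1 - p) * (identity - proj)
    return (2 / d) * total - ((d ** 2 - 2) / d) * identity

# Assumed projectors for d = 2: the "yes" outcome of each Pauli measurement.
projectors = [(I2 + sigma) / 2 for sigma in PAULIS]

# Hypothetical test state with Bloch vector (0.3, -0.4, 0.5).
rho = (I2 + 0.3 * PAULIS[0] - 0.4 * PAULIS[1] + 0.5 * PAULIS[2]) / 2

# Ideal "yes" probabilities p_i = Tr(rho P_i); an experiment would estimate these.
probabilities = [np.real(np.trace(rho @ proj)) for proj in projectors]

rho_rebuilt = reconstruct(probabilities, projectors, d=2)
```

With exact probabilities the rebuilt matrix matches the input state, which supports this reading of the expression at least for d = 2.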

Quantum Error Mitigation Strategies

Quantum Error Mitigation Strategies represent a critical frontier in quantum computing, addressing the fundamental challenge of maintaining quantum state fidelity in the presence of noise. These strategies have evolved significantly from early error correction codes to more sophisticated approaches that balance computational overhead with error reduction efficacy.

The current landscape features several prominent methodologies, each with distinct advantages for measuring quantum state changes. Zero-noise extrapolation techniques systematically amplify noise during computation and extrapolate results back to the zero-noise limit, providing remarkably accurate state measurements without requiring additional qubits. This approach has demonstrated up to 10x improvement in measurement fidelity in recent IBM quantum processor implementations.
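
The extrapolation step can be sketched as a polynomial fit in the noise scale factor, evaluated at zero. The data values below are made up for illustration, and the function name is hypothetical; how the noise is actually amplified on hardware (for example by gate folding or pulse stretching) is outside this sketch.

```python
import numpy as np

def zero_noise_extrapolate(scale_factors, expectation_values, degree=1):
    """Fit a polynomial in the noise scale factor and evaluate it at zero."""
    coeffs = np.polyfit(scale_factors, expectation_values, deg=degree)
    return float(np.polyval(coeffs, 0.0))

# Hypothetical data: the same observable measured with the noise scaled 1x, 2x, 3x.
scale_factors = np.array([1.0, 2.0, 3.0])
measured = np.array([0.82, 0.66, 0.50])

mitigated = zero_noise_extrapolate(scale_factors, measured, degree=1)
```

The choice of fit model matters: a linear fit suits weak noise, while higher-degree or exponential models may fit better but amplify statistical error.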

Probabilistic error cancellation offers another powerful approach, where quantum noise is characterized and then inverted through a quasi-probability distribution of quantum operations. This method effectively transforms the noise channel into an identity operation, allowing for more precise quantum state measurements, though at the cost of increased sampling overhead that scales exponentially with circuit depth.
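
A small worked case, assuming the noise is a single-qubit depolarizing channel with known error probability `p`: the inverse map is a combination of Pauli operations with one negative weight, and the sum of absolute weights sets the sampling cost. The noise model, the test state, and the function names are assumptions for illustration.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)

def pauli_mixture(rho, w_identity, w_pauli):
    """Apply rho -> w_identity * rho + w_pauli * (X rho X + Y rho Y + Z rho Z)."""
    return w_identity * rho + w_pauli * sum(P @ rho @ P for P in (X, Y, Z))

def depolarize(rho, p):
    """Single-qubit depolarizing channel with error probability p."""
    return pauli_mixture(rho, 1 - p, p / 3)

def inverse_quasi_probabilities(p):
    """Weights (q_identity, q_pauli) of the inverse channel; q_pauli is negative."""
    shrink = 1 - 4 * p / 3               # factor by which the Bloch vector shrinks
    q_pauli = (1 - 1 / shrink) / 4
    q_identity = 1 - 3 * q_pauli
    return q_identity, q_pauli

p = 0.05
q_identity, q_pauli = inverse_quasi_probabilities(p)

rho = np.array([[0.8, 0.3], [0.3, 0.2]], dtype=complex)
recovered = pauli_mixture(depolarize(rho, p), q_identity, q_pauli)

# Sampling cost per noisy gate, and its growth over a circuit with L such gates.
gamma = abs(q_identity) + 3 * abs(q_pauli)
overhead = {L: gamma ** (2 * L) for L in (10, 50, 100)}
```

Because the weights are not all positive, the inverse cannot be applied as a physical channel; it is sampled with signs, and the variance grows roughly as `gamma ** (2 * L)`, which is the exponential overhead mentioned above.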

Symmetry verification techniques leverage known symmetries in quantum systems to detect and filter out measurement results that violate these symmetries. This approach has proven particularly effective for quantum chemistry applications, where conservation laws provide natural verification criteria for quantum state measurements.
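
A minimal post-selection sketch, assuming the conserved quantity is particle number and that it maps to the number of 1s in each measured bitstring (true for some fermion-to-qubit encodings, but an assumption here). The counts are invented for the example.

```python
def postselect_particle_number(counts, n_particles):
    """Keep only bitstrings whose number of 1s equals the conserved particle number."""
    kept = {bits: n for bits, n in counts.items() if bits.count("1") == n_particles}
    total_kept = sum(kept.values())
    total = sum(counts.values())
    acceptance = total_kept / total if total else 0.0
    return kept, acceptance

# Hypothetical raw counts from a 4-qubit, 2-electron simulation.
raw_counts = {"0011": 480, "0101": 310, "1100": 150, "0111": 35, "0001": 25}

verified, acceptance = postselect_particle_number(raw_counts, n_particles=2)
```

Discarded shots cost statistics, and errors that preserve the symmetry pass through undetected, so this filter is usually combined with other mitigation methods.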

Machine learning-based error mitigation represents the newest frontier, where neural networks are trained to recognize and compensate for systematic errors in quantum measurements. Recent experiments by Google's quantum AI team demonstrated that these approaches can reduce measurement errors by up to 30% compared to traditional calibration methods.

Dynamical decoupling sequences, adapted from nuclear magnetic resonance techniques, offer yet another strategy by applying precisely timed control pulses that effectively "undo" environmental interactions, preserving quantum state coherence during measurement processes. These sequences have proven particularly effective for extending measurement fidelity in nitrogen-vacancy center quantum sensors.
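
The simplest decoupling sequence, a Hahn echo, can be sketched with a toy noise model in which each experimental run sees a random but constant frequency detuning. The noise strength, evolution time, and ideal instantaneous pi pulse are all assumptions for the example.

```python
import numpy as np

rng = np.random.default_rng(seed=2)

def coherence(phases):
    """Magnitude of the ensemble-averaged phase factor (1 = fully coherent)."""
    return float(np.abs(np.mean(np.exp(1j * phases))))

# Assumed noise model: each run sees a random but constant detuning (rad/s).
detunings = rng.normal(loc=0.0, scale=2.0e3, size=10_000)
total_time = 1.0e-3  # seconds

# Free evolution: phase accumulates for the whole interval.
free_phases = detunings * total_time

# Hahn echo: a pi pulse at the midpoint flips the sign of later phase accumulation.
echo_phases = detunings * (total_time / 2) - detunings * (total_time / 2)

free_coherence = coherence(free_phases)   # decays because phases are spread out
echo_coherence = coherence(echo_phases)   # static detuning is refocused
```

Real environments fluctuate during the sequence, so refocusing is only partial; multi-pulse sequences extend the same idea to faster noise.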

The integration of multiple error mitigation strategies into comprehensive frameworks represents the current state-of-the-art approach. Quantum computing platforms from IBM, Google, and Rigetti now incorporate layered mitigation strategies that address different error sources simultaneously, enabling more reliable quantum state measurements even on noisy intermediate-scale quantum (NISQ) devices.

Standardization Efforts in Quantum Measurement Protocols

The quantum measurement landscape has witnessed significant efforts toward standardization in recent years, driven by the need for consistent protocols across different quantum computing platforms. The IEEE Quantum Computing Standards Working Group established in 2019 has been instrumental in developing P7131, a project on performance metrics and performance benchmarking for quantum computers, both of which depend directly on how measurements are performed and how their results are interpreted. This initiative aims to create a unified framework that can be applied across various quantum technologies, from superconducting qubits to trapped ions.

Similarly, the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC) address quantum computing through their joint committees: ISO/IEC JTC 1 set up a working group on quantum information technology (WG 14), and a dedicated joint technical committee on quantum technologies, ISO/IEC JTC 3, has since been established. Their ongoing work focuses on establishing terminology, metrics, and procedures, including those relevant to quantum state measurement, that can be universally adopted by both academic and industrial stakeholders.

The National Institute of Standards and Technology (NIST) contributes through its research programs in quantum measurement and metrology, including work relevant to quantum state tomography. Guidance of this kind supports reproducible measurement techniques and statistically rigorous analysis of the resulting data.

Consortium efforts like the Quantum Economic Development Consortium (QED-C) have established technical advisory committees specifically focused on measurement standards. Their Quantum Measurement Working Group has published white papers on best practices for characterizing quantum states and processes, emphasizing the importance of standardized error metrics and calibration procedures.

The European Telecommunications Standards Institute (ETSI) hosts an Industry Specification Group on Quantum Key Distribution (ISG QKD) alongside its working group on Quantum-Safe Cryptography. The QKD group's work includes standardization of measurement protocols relevant to quantum key distribution and quantum random number generation. Its specifications describe measurement and characterization procedures intended to support security and reliability in quantum communication systems.

Academic collaborations have also contributed significantly to standardization efforts. The Quantum Characterization, Verification, and Validation (QCVV) community has organized multiple workshops resulting in published consensus documents on measurement techniques like randomized benchmarking, gate set tomography, and cross-entropy benchmarking. These community-driven standards have been widely adopted in research publications, facilitating meaningful comparison of results across different research groups.

Industry leaders including IBM, Google, and Microsoft have contributed to open-source quantum software frameworks that implement common measurement protocols. Frameworks and languages such as Qiskit, Cirq, and Q# incorporate measurement functionality that tracks emerging standards, helping to establish de facto conventions for quantum measurement implementation and reporting.
