Hybrid DRAM–NVM Designs For Scalable In-Memory Computing Frameworks

SEP 2, 2025 · 9 MIN READ

Hybrid Memory Evolution and Research Objectives

The evolution of memory systems has been a critical factor in computing advancement, with traditional DRAM dominating for decades due to its balance of cost, density, and performance. However, as data-intensive applications proliferate, DRAM's limitations—particularly power consumption, density constraints, and scalability issues—have become increasingly apparent. This technological inflection point has catalyzed research into hybrid memory architectures that combine DRAM with emerging Non-Volatile Memory (NVM) technologies.

The hybrid DRAM-NVM paradigm represents a significant shift in memory system design, aiming to leverage the complementary strengths of both technologies. DRAM offers superior read/write speeds and endurance, while NVMs such as PCM, ReRAM, and STT-MRAM provide persistence, higher density, and lower static power consumption. The evolution of these hybrid systems has progressed from simple tiered arrangements to sophisticated heterogeneous architectures with intelligent data placement algorithms.

Recent advancements in in-memory computing frameworks have further accelerated interest in hybrid memory designs. These frameworks aim to minimize data movement between storage and processing units—a major bottleneck in modern computing systems. By performing computations directly within or near memory structures, these approaches can dramatically reduce energy consumption and latency for data-intensive workloads such as machine learning, graph processing, and database operations.

Our research objectives focus on developing scalable hybrid DRAM-NVM architectures specifically optimized for in-memory computing frameworks. We aim to address several critical challenges: optimizing data placement strategies between volatile and non-volatile tiers based on access patterns and computational requirements; designing efficient memory controllers capable of managing heterogeneous memory resources; developing programming models that abstract hardware complexity while enabling developers to leverage hybrid memory benefits; and creating runtime systems that dynamically adapt to changing workload characteristics.

Additionally, we seek to quantify the performance, energy efficiency, and cost benefits of hybrid memory systems across diverse application domains. This includes establishing benchmarking methodologies that accurately reflect real-world usage scenarios and developing analytical models to predict system behavior under various configurations. The ultimate goal is to establish design principles for next-generation memory hierarchies that can scale to meet the exponentially growing computational demands of emerging applications.

Through this research, we anticipate enabling new capabilities in data-intensive computing that were previously infeasible due to memory constraints, while simultaneously improving energy efficiency—a critical concern as computing continues to scale. The findings will inform both hardware architecture decisions and software optimization strategies for future computing platforms.

Market Analysis for In-Memory Computing Solutions

The in-memory computing (IMC) market is experiencing robust growth, driven by the increasing demand for real-time data processing and analytics across various industries. Current market valuations place the global IMC market at approximately 11.4 billion USD in 2023, with projections indicating a compound annual growth rate (CAGR) of 18.5% through 2028, potentially reaching 26.7 billion USD by the end of the forecast period.
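
The projection above is ordinary compound growth, so it can be sanity-checked in a few lines. The sketch below is illustrative only: the function names are hypothetical, and the inputs are the figures quoted in this section, not independently sourced data.

```python
def project_value(base: float, cagr: float, years: int) -> float:
    """Compound a base-year value forward at a constant annual growth rate."""
    return base * (1.0 + cagr) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1.0 / years) - 1.0


# Figures quoted in the text: 11.4 billion USD in 2023, 18.5% CAGR, five years to 2028.
projected_2028 = project_value(11.4, 0.185, 5)
rate_from_endpoints = implied_cagr(11.4, 26.7, 5)

print(f"projected 2028 value: {projected_2028:.1f} billion USD")
print(f"CAGR implied by the two endpoints: {rate_from_endpoints:.2%}")
```

Compounding 11.4 at 18.5% for five years gives about 26.6, so the quoted 26.7 billion USD is consistent with the stated rate up to rounding.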

The primary market drivers for hybrid DRAM-NVM solutions stem from the exponential growth in data volumes and the need for faster processing capabilities. Organizations across financial services, telecommunications, healthcare, and e-commerce sectors are increasingly adopting in-memory computing frameworks to gain competitive advantages through accelerated data processing and analytics.

Financial services represent the largest vertical market segment, accounting for roughly 28% of the total market share. This sector leverages in-memory computing for high-frequency trading, risk analysis, and fraud detection applications where millisecond advantages translate to significant financial gains. Healthcare follows at 19% market share, with applications in genomic sequencing, medical imaging, and patient data analytics.

Geographically, North America dominates the market with approximately 42% share, followed by Europe (27%) and Asia-Pacific (23%). The Asia-Pacific region, however, is expected to witness the highest growth rate over the next five years, primarily driven by rapid digitalization in China, India, and South Korea.

The hybrid DRAM-NVM solution segment specifically is growing at a faster rate than traditional in-memory computing solutions, with a CAGR of 22.3%. This accelerated growth reflects the increasing recognition of hybrid memory architectures as a viable solution to balance performance, cost, and persistence requirements.

Customer demand patterns indicate a strong preference for scalable solutions that can accommodate growing data volumes without proportional increases in costs. Enterprise surveys reveal that 76% of large organizations consider scalability as a critical factor in their in-memory computing investment decisions, while 68% prioritize total cost of ownership considerations.

The competitive landscape features both established technology giants and specialized startups. Major players include SAP, Oracle, and IBM in the enterprise software space, while hardware vendors like Intel, Samsung, and Micron are investing heavily in hybrid memory technologies. Specialized providers such as GridGain, GigaSpaces, and Hazelcast are gaining market share through focused in-memory computing frameworks.

Market challenges include concerns about data persistence, system complexity, and integration with existing infrastructure. Additionally, 63% of potential adopters cite cost as a significant barrier to implementation, highlighting the need for more cost-effective hybrid memory solutions.

DRAM-NVM Integration: Current Status and Technical Barriers

The integration of DRAM and Non-Volatile Memory (NVM) technologies represents a critical frontier in memory system design, particularly for in-memory computing frameworks. Currently, this integration faces several significant technical barriers that impede widespread adoption and optimal performance.

At the hardware level, the fundamental challenge lies in reconciling the disparate operational characteristics of DRAM and NVM technologies. DRAM offers superior read/write speeds and endurance but is volatile and draws relatively high idle power because its cells must be refreshed periodically. Conversely, NVM technologies such as PCM, ReRAM, and STT-MRAM provide non-volatility and higher density but exhibit slower write speeds and limited write endurance. These inherent differences complicate the design of unified memory controllers and address mapping schemes.

Interface standardization remains problematic, with no universally accepted protocol for hybrid memory systems. Current solutions often rely on proprietary interfaces or adaptations of existing standards like DDR4/5, which fail to optimize for the unique characteristics of NVM technologies. This lack of standardization increases development complexity and limits interoperability between components from different manufacturers.

Thermal management presents another substantial barrier. NVM write operations typically generate more heat than DRAM operations, creating thermal hotspots in hybrid designs. These temperature variations can affect both performance and reliability, particularly in dense computing environments where cooling is already challenging.

From a software perspective, current operating systems and memory management algorithms are predominantly optimized for homogeneous memory hierarchies. They lack sophisticated mechanisms to intelligently place data across heterogeneous memory types based on access patterns, persistence requirements, and performance characteristics. This results in suboptimal utilization of the hybrid memory resources.

Wear-leveling algorithms for hybrid systems remain relatively immature. While techniques exist for individual NVM technologies, coordinated wear management across heterogeneous memory types presents additional complexity. Current approaches often fail to balance performance optimization with endurance preservation effectively.

Security models for hybrid memory systems are also underdeveloped. The persistent nature of NVM introduces new attack vectors and data protection requirements that traditional DRAM-centric security models do not adequately address. Cold boot attacks, data remanence, and secure deletion present particular challenges in hybrid environments.

Cost considerations further complicate integration efforts. The manufacturing processes for DRAM and various NVM technologies differ significantly, making monolithic integration expensive. Current solutions typically employ multi-chip packages or separate memory channels, increasing system complexity and potentially introducing performance bottlenecks.

Current Hybrid DRAM-NVM Implementation Approaches

01 Memory architecture integration for hybrid DRAM-NVM systems

Hybrid memory systems that integrate DRAM and non-volatile memory (NVM) technologies can be designed with specialized architectures to optimize performance and scalability. These architectures typically involve tiered memory hierarchies where DRAM serves as a fast cache or buffer while NVM provides higher capacity storage. The integration requires careful consideration of memory controllers, address mapping, and data movement between different memory types to maintain performance while scaling to larger capacities.

- Memory architecture for hybrid DRAM-NVM systems: Hybrid memory systems that combine DRAM and non-volatile memory (NVM) technologies can be designed with specific architectures to improve scalability. These architectures typically involve tiered memory structures where DRAM serves as a fast cache or buffer while NVM provides larger capacity storage. The memory controllers in these systems are specially designed to manage data movement between the different memory types, optimizing for both performance and power efficiency while enabling greater overall memory capacity scaling.

- Data management techniques in hybrid memory systems: Effective data management is crucial for scalable hybrid DRAM-NVM designs. This includes intelligent data placement algorithms that determine which data should reside in DRAM versus NVM based on access patterns, temperature, or criticality. Migration policies control when and how data moves between memory tiers, while wear-leveling techniques ensure the longevity of NVM components. These management techniques enable hybrid memory systems to scale by optimizing the utilization of both memory types according to their strengths and limitations.

- Integration technologies for hybrid memory solutions: Physical integration technologies enable scalable hybrid DRAM-NVM designs through various packaging and interconnect approaches. These include 3D stacking of memory dies, through-silicon vias (TSVs), interposers for heterogeneous integration, and system-in-package solutions. Such integration technologies allow for higher memory density, reduced latency between different memory types, and improved power efficiency, all of which contribute to better scalability of hybrid memory systems.

- Power management for scalable hybrid memory: Power management techniques are essential for scaling hybrid DRAM-NVM systems, particularly in data centers and mobile applications. These techniques include dynamic power states for different memory regions, selective refresh operations for DRAM components, and power-aware data placement policies. By optimizing power consumption while maintaining performance, these approaches enable hybrid memory systems to scale more effectively within thermal and energy constraints.

- Security and reliability enhancements for hybrid memory: Security and reliability features are critical for scalable hybrid DRAM-NVM designs, especially as memory capacity increases. These include error correction codes tailored for different memory types, encryption mechanisms for sensitive data stored in non-volatile regions, and secure erase capabilities. Reliability techniques such as redundancy, checkpointing, and recovery mechanisms help maintain data integrity across the hybrid memory system, enabling these systems to scale while maintaining trustworthiness.
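
The data placement and migration policies described in the list above can be made concrete with a toy model. The sketch below is a minimal illustration, not any vendor's algorithm: the class name `TieredPlacer`, the page-granularity counters, and the threshold and capacity values are all assumptions chosen for readability.

```python
from collections import Counter


class TieredPlacer:
    """Toy hot/cold placement: pages start in NVM and move to DRAM once hot."""

    def __init__(self, dram_capacity: int, hot_threshold: int):
        self.dram_capacity = dram_capacity  # number of pages DRAM can hold (assumed)
        self.hot_threshold = hot_threshold  # accesses needed before promotion (assumed)
        self.hits = Counter()               # access count per page
        self.dram = set()                   # pages currently resident in DRAM

    def access(self, page: int) -> str:
        """Record one access and return the tier that served it."""
        served_from = "DRAM" if page in self.dram else "NVM"
        self.hits[page] += 1
        if served_from == "NVM" and self.hits[page] >= self.hot_threshold:
            self._promote(page)
        return served_from

    def _promote(self, page: int) -> None:
        if self.dram_capacity == 0:
            return
        if len(self.dram) >= self.dram_capacity:
            # DRAM is full: demote the coldest resident page, but only if
            # the candidate is strictly hotter than it.
            coldest = min(self.dram, key=lambda p: self.hits[p])
            if self.hits[coldest] >= self.hits[page]:
                return
            self.dram.remove(coldest)
        self.dram.add(page)

    def decay(self) -> None:
        """Halve all counters so that stale hotness fades over time."""
        for page in list(self.hits):
            self.hits[page] //= 2


placer = TieredPlacer(dram_capacity=2, hot_threshold=3)
trace = [1, 1, 1, 2, 2, 2, 3, 1, 1]
tiers = [placer.access(p) for p in trace]
print(tiers)
print(sorted(placer.dram))
```

In this trace, pages 1 and 2 cross the threshold and are promoted, so the final two accesses to page 1 are served from DRAM while the rarely used page 3 stays in NVM. A real policy would also weigh migration cost and NVM write endurance, which this sketch ignores.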

02 Power management techniques for scalable hybrid memory

Power efficiency is critical for scaling hybrid DRAM-NVM systems. Various techniques are employed to reduce power consumption while maintaining performance, including selective activation of memory regions, power-aware data placement, and dynamic voltage and frequency scaling. These approaches help manage the different power characteristics of DRAM (which consumes power for data retention) and NVM (which typically has higher write energy but lower standby power), enabling more scalable memory solutions for energy-constrained environments.

03 Data management and migration strategies in hybrid memory

Efficient data management between DRAM and NVM components is essential for scalable hybrid memory systems. This includes intelligent data placement algorithms that consider access patterns, data temperature (hot vs. cold data), and the performance characteristics of each memory type. Automated migration mechanisms move frequently accessed data to faster DRAM while keeping less frequently accessed data in NVM. These strategies optimize overall system performance while leveraging the capacity advantages of NVM technologies.

04 Memory controller designs for hybrid systems

Specialized memory controllers are developed to manage the complexity of hybrid DRAM-NVM systems. These controllers implement address translation, wear-leveling for NVM, and coordinated access scheduling across heterogeneous memory types. Advanced controller designs incorporate predictive algorithms to anticipate data needs and optimize data placement. As systems scale to incorporate more memory channels and higher capacities, these controllers become increasingly sophisticated to maintain performance while managing the different timing and reliability characteristics of DRAM and NVM.

05 Reliability and endurance enhancement for scalable hybrid memory

Ensuring reliability while scaling hybrid memory systems presents unique challenges due to the different failure modes and endurance characteristics of DRAM and NVM. Techniques such as error correction codes, wear-leveling algorithms, and redundancy schemes are implemented to extend the lifetime of NVM components. Additionally, intelligent refresh management for DRAM and data integrity verification methods help maintain system reliability as capacity scales. These approaches work together to provide robust memory systems that can scale while maintaining data integrity over extended operational periods.
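
To show how wear-leveling extends NVM lifetime, the sketch below implements a simple table-based scheme: a logical-to-physical remap table plus per-block write counters, with a swap whenever the block about to be written is much more worn than the least-worn block. It is a teaching example under stated assumptions, not a production algorithm; the class name `WearLeveler` and the `swap_gap` parameter are hypothetical.

```python
class WearLeveler:
    """Table-based wear-leveling sketch for a small NVM region."""

    def __init__(self, num_blocks: int, swap_gap: int):
        self.l2p = list(range(num_blocks))  # logical block -> physical block
        self.wear = [0] * num_blocks        # writes absorbed by each physical block
        self.data = [None] * num_blocks     # contents of each physical block
        self.swap_gap = swap_gap            # wear difference that triggers a swap (assumed)

    def write(self, logical: int, value) -> None:
        phys = self.l2p[logical]
        coldest = min(range(len(self.wear)), key=self.wear.__getitem__)
        if self.wear[phys] - self.wear[coldest] >= self.swap_gap:
            # Exchange the two mappings. The data that lived in the least-worn
            # block moves into the worn block, costing one extra write.
            other = self.l2p.index(coldest)  # O(n) lookup, acceptable for a sketch
            self.data[phys] = self.data[coldest]
            self.wear[phys] += 1
            self.l2p[logical], self.l2p[other] = coldest, phys
            phys = coldest
        self.data[phys] = value
        self.wear[phys] += 1

    def read(self, logical: int):
        return self.data[self.l2p[logical]]


leveler = WearLeveler(num_blocks=4, swap_gap=4)
leveler.write(1, "keep")
for i in range(100):
    leveler.write(0, i)  # hammer a single logical block

print(leveler.wear)
print(leveler.read(0), leveler.read(1))
```

Although every write targets logical block 0, the writes are spread across all four physical blocks, and the data in logical block 1 survives the remapping. The price is the extra migration write on each swap, which is the performance-versus-endurance trade-off in miniature.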

Leading Companies and Research Institutions in Hybrid Memory

The hybrid DRAM-NVM computing framework market is in a transitional growth phase, with an estimated market size of $5-7 billion and projected annual growth of 25-30%. This technology addresses memory-compute bottlenecks by combining DRAM's speed with NVM's persistence and density. The competitive landscape features established players like IBM, Intel, and Huawei driving commercial adoption, while academic institutions (Huazhong University, Shanghai Jiao Tong University) focus on fundamental research. Memory specialists Rambus, Netlist, and Avalanche Technology are developing specialized solutions, while system integrators like Inspur are creating enterprise-ready implementations. The technology is approaching commercial maturity with early deployments in data centers and high-performance computing environments.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed an innovative hybrid memory architecture called "Memory-Centric Computing Architecture" (MCCA) that integrates DRAM with various NVM technologies including 3D NAND and ReRAM. Their approach emphasizes a disaggregated memory pool design where memory resources can be dynamically allocated across computing nodes through their proprietary memory fabric. Huawei's architecture implements a multi-tiered memory hierarchy with intelligent data placement algorithms that automatically migrate data between DRAM and NVM based on access patterns, temperature, and application requirements. Their solution includes hardware-accelerated address translation mechanisms to minimize the overhead of managing the heterogeneous memory space. Huawei has also developed compiler-level optimizations and runtime systems that transparently manage data movement between memory tiers, allowing legacy applications to benefit from hybrid memory without code modifications. Their architecture supports both byte-addressable and block-addressable NVM technologies, providing flexibility for different workload characteristics.

Strengths: Comprehensive end-to-end solution from hardware to software stack; advanced memory fabric technology for disaggregated memory pools; strong integration with AI and big data frameworks. Weaknesses: Limited ecosystem support outside Huawei's own hardware platforms; proprietary interfaces may limit interoperability; relatively new technology compared to established players.

IBM Corp.

Technical Solution: IBM has pioneered Phase-Change Memory (PCM) technology for hybrid memory systems, focusing on creating computational storage architectures that bring processing closer to data. Their hybrid DRAM-NVM design incorporates PCM as a non-volatile tier with DRAM serving as a high-speed buffer. IBM's approach emphasizes in-memory computing frameworks that can dynamically migrate data between memory tiers based on access patterns and computational requirements. Their architecture includes specialized memory controllers that handle the asymmetric read/write characteristics of PCM while maintaining coherence with DRAM. IBM's memory-centric computing work leverages these hybrid systems to enable massively parallel processing for AI and big data workloads. The company has also created programming abstractions that hide the complexity of the heterogeneous memory hierarchy, allowing applications to benefit from NVM persistence without significant code modifications.

Strengths: Advanced research in phase-change memory with demonstrated scalability for enterprise workloads; strong integration with AI and analytics frameworks; robust memory management techniques for handling different memory characteristics. Weaknesses: Complex implementation requiring specialized hardware controllers; higher latency for certain write operations compared to pure DRAM solutions; thermal management challenges in dense deployments.

Key Patents and Innovations in Memory Integration

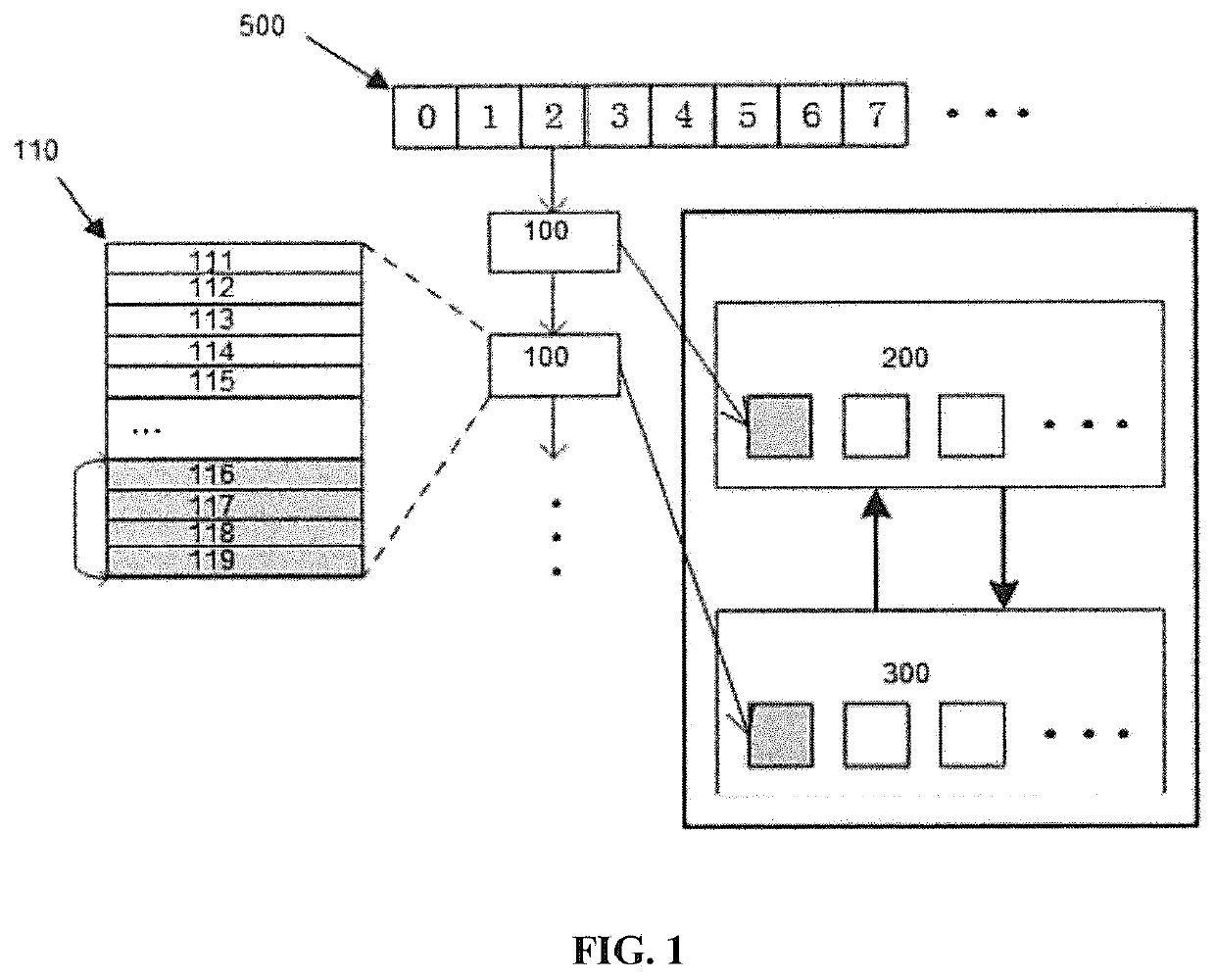

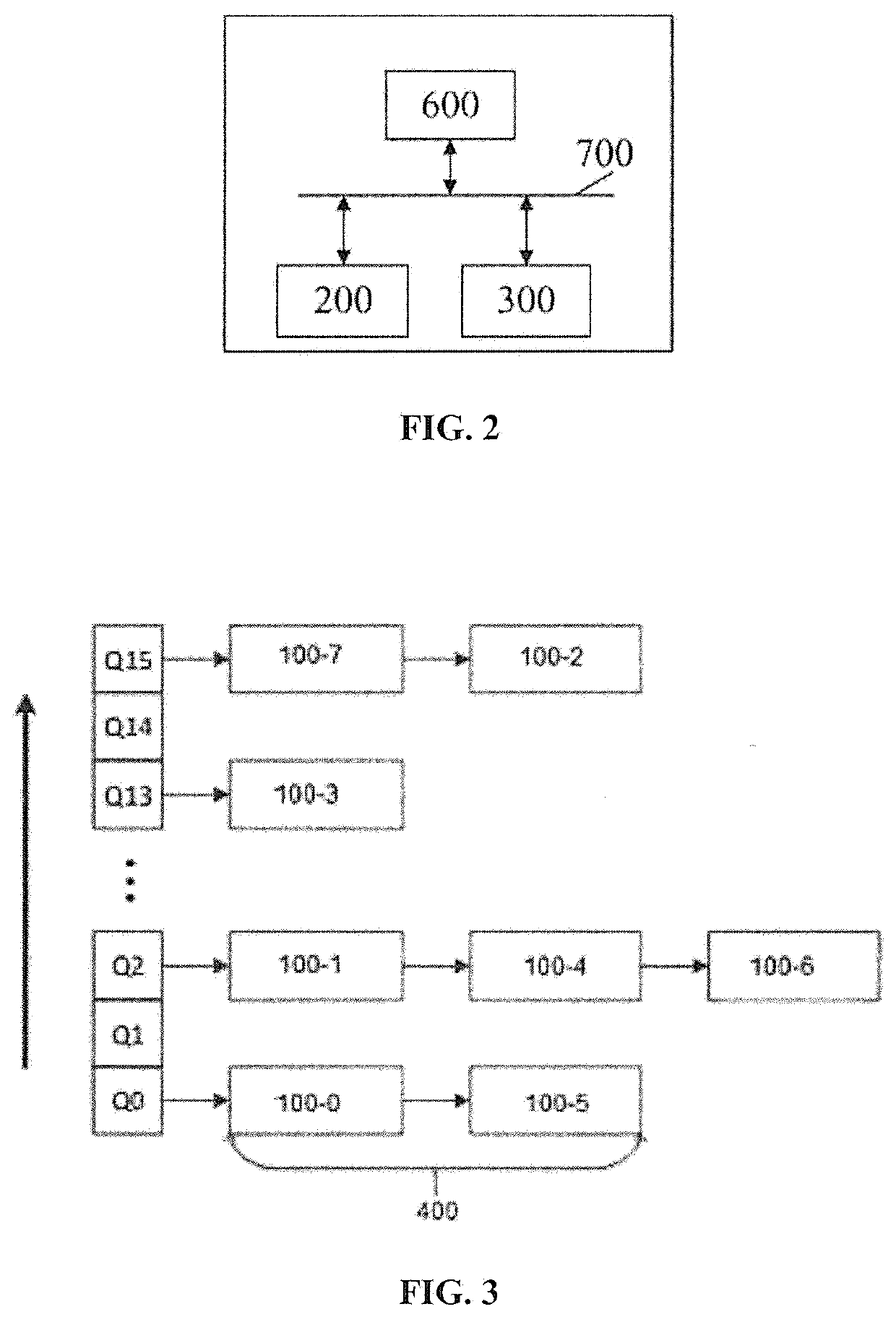

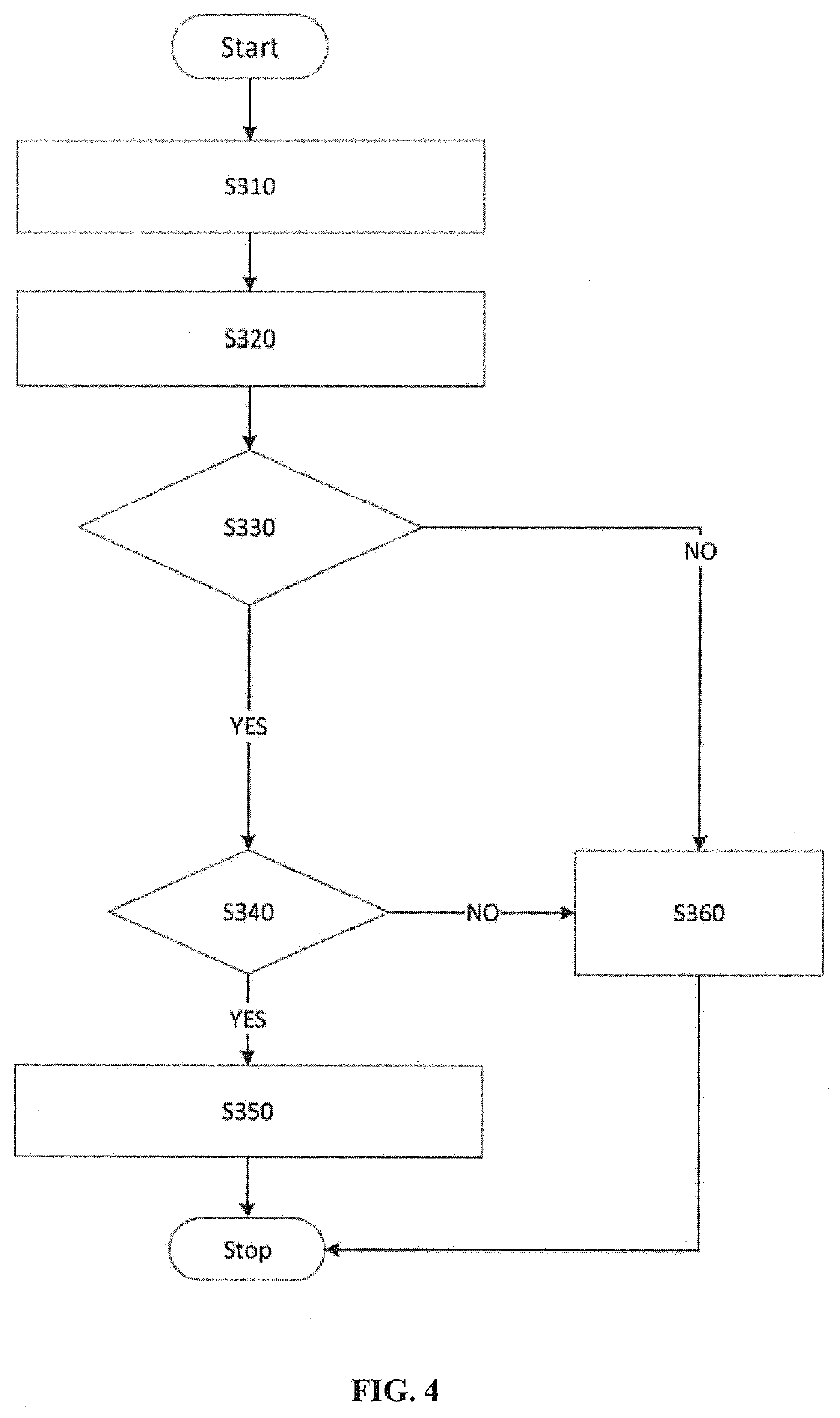

SCALABLE IN-MEMORY OBJECT STORAGE SYSTEM USING HYBRID MEMORY DEVICES

Patent · Active · US20200333981A1

Innovation

- A scalable storage system that monitors access hotness information of each memory object at the application level, dynamically adjusting storage destinations between DRAM and NVM domains using a slab-based allocation mechanism, with independent hotness thresholds for each slab class and a multi-queue strategy for efficient data management.
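
The sketch below illustrates two ideas named in this summary: per-slab-class hotness thresholds and multi-queue bucketing. It is not the patented method. The slab sizes, threshold values, and function names are hypothetical, and the multi-queue rule (level k holds objects with between 2^k and 2^(k+1) - 1 accesses) is one common formulation assumed here for illustration.

```python
import bisect

# Hypothetical slab classes (object size limits in bytes) and per-class thresholds.
SLAB_SIZES = [64, 256, 1024, 4096]
HOT_THRESHOLD = {64: 8, 256: 4, 1024: 2, 4096: 2}


def slab_class(size: int) -> int:
    """Return the smallest slab class that can hold an object of `size` bytes."""
    i = bisect.bisect_left(SLAB_SIZES, size)
    if i == len(SLAB_SIZES):
        raise ValueError("object larger than the largest slab class")
    return SLAB_SIZES[i]


def queue_level(hits: int, num_queues: int = 4) -> int:
    """Multi-queue level: floor(log2(hits)), capped at the top queue."""
    if hits <= 0:
        return 0
    return min(hits.bit_length() - 1, num_queues - 1)


def destination(size: int, hits: int) -> str:
    """Place an object in DRAM once it reaches its own slab class's threshold."""
    return "DRAM" if hits >= HOT_THRESHOLD[slab_class(size)] else "NVM"


print(destination(size=100, hits=4))  # 256-byte class, threshold 4
print(destination(size=50, hits=4))   # 64-byte class, threshold 8
print(queue_level(5))
```

With these assumed values, the same access count promotes a 100-byte object but not a 50-byte one, because each slab class is judged against its own threshold.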

Non-Volatile Dynamic Random Access Memory

Patent · Active · US20210118864A1

Innovation

- A stacked memory architecture that combines DRAM with multiple layers of non-volatile memory (NVM) using direct bonding interconnects, where the RAM layer is coupled to NVM layers, allowing for high-bandwidth, high-pincount interconnects and separate logic for data storage and management, enabling efficient data tagging and movement between short-term RAM storage and long-term NVM storage.

Energy Efficiency and Performance Metrics

Energy efficiency and performance metrics are critical evaluation parameters for hybrid DRAM-NVM architectures in in-memory computing frameworks. These metrics provide quantitative measures to assess the viability and effectiveness of different design approaches. The energy consumption in hybrid memory systems can be categorized into dynamic energy (consumed during read/write operations) and static energy (leakage power when memory is idle). NVM technologies generally offer superior static energy characteristics compared to DRAM, with power leakage reductions of up to 95% in some implementations.

Performance metrics for hybrid DRAM-NVM systems include latency, throughput, and bandwidth. Latency measures the time delay for memory operations, with DRAM typically offering read latencies of 10-50ns while NVMs range from 20ns to 1μs depending on the technology. Throughput quantifies the data processing rate, typically measured in operations per second (OPS) or FLOPS for computational tasks. Bandwidth represents the data transfer rate between memory and processing units, measured in GB/s.
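
A quick way to see how the two latency ranges combine is a weighted average over the fraction of accesses served from DRAM. The sketch below assumes a simple two-tier model with no queuing or migration overhead; the function name and the 90% hit ratio are illustrative assumptions, and the latencies are taken from the upper ends of the ranges quoted above.

```python
def effective_latency_ns(dram_hit_ratio: float, dram_ns: float, nvm_ns: float) -> float:
    """Average read latency when a fraction of accesses is served from DRAM."""
    if not 0.0 <= dram_hit_ratio <= 1.0:
        raise ValueError("dram_hit_ratio must be between 0 and 1")
    return dram_hit_ratio * dram_ns + (1.0 - dram_hit_ratio) * nvm_ns


# 50 ns DRAM and 1000 ns (1 microsecond) NVM, with 90% of reads hitting DRAM.
latency = effective_latency_ns(0.9, dram_ns=50.0, nvm_ns=1000.0)
print(f"{latency:.0f} ns")
```

Under these assumptions the average is about 145 ns: even with a 90% DRAM hit ratio, the slow tier accounts for roughly two thirds of the average latency, which is why placement quality matters so much.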

The energy-delay product (EDP) serves as a composite metric that balances energy efficiency with performance, providing a more holistic evaluation framework. For in-memory computing applications, specialized metrics have emerged, including computational density (operations per unit area) and energy per operation, which are particularly relevant for edge computing deployments where energy constraints are significant.
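
The energy-delay product is simply total energy multiplied by runtime, where total energy is the dynamic energy plus static power integrated over the run. The sketch below compares two configurations; every number in it is invented for illustration and is not a measurement of any real system, and the `Run` class is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class Run:
    dynamic_energy_j: float  # energy spent on reads and writes (joules)
    static_power_w: float    # leakage and refresh power while powered (watts)
    runtime_s: float         # wall-clock time of the workload (seconds)

    @property
    def energy_j(self) -> float:
        """Total energy = dynamic energy + static power x runtime."""
        return self.dynamic_energy_j + self.static_power_w * self.runtime_s

    @property
    def edp(self) -> float:
        """Energy-delay product in joule-seconds; lower is better."""
        return self.energy_j * self.runtime_s


# Hypothetical inputs: the hybrid run is slower and spends more dynamic energy
# (costlier NVM writes) but has much lower static power.
dram_only = Run(dynamic_energy_j=40.0, static_power_w=6.0, runtime_s=10.0)
hybrid = Run(dynamic_energy_j=50.0, static_power_w=1.0, runtime_s=11.0)

for name, run in (("DRAM only", dram_only), ("hybrid", hybrid)):
    print(f"{name}: energy = {run.energy_j} J, EDP = {run.edp} J*s")
```

With these made-up inputs the hybrid configuration has the lower EDP despite the longer runtime, because the saving in static energy outweighs both penalties. With a different workload mix the comparison could easily go the other way.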

Recent research indicates that hybrid architectures can achieve 40-60% energy savings compared to pure DRAM solutions while maintaining comparable performance levels. The energy efficiency advantage becomes more pronounced in data-intensive applications with irregular access patterns, where intelligent data placement strategies between DRAM and NVM tiers can minimize energy-expensive data movements.

Temperature sensitivity represents another important consideration, as operating temperatures affect both performance and reliability. DRAM typically operates optimally between 0-85°C, while some NVM technologies like PCM can function reliably at higher temperatures (up to 125°C), potentially reducing cooling requirements and associated energy costs in data center environments.

Endurance metrics track the write cycle limitations of memory cells before failure. While DRAM offers practically unlimited write cycles (>10^15), NVM technologies have more restricted endurance ranging from 10^4 to 10^12 cycles depending on the specific technology. This limitation necessitates wear-leveling algorithms and impacts the long-term energy efficiency calculations for hybrid memory systems.
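
Endurance figures translate into device lifetime once capacity and write rate are known. The sketch below uses a first-order estimate that assumes writes are spread evenly across the device; the `leveling_efficiency` parameter, the function name, and the example capacity and write rate are all assumptions made for illustration.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def lifetime_years(endurance_cycles: float, capacity_bytes: float,
                   write_bytes_per_s: float, leveling_efficiency: float = 1.0) -> float:
    """First-order lifetime estimate for an NVM device.

    leveling_efficiency is the fraction of ideal wear spreading achieved
    (1.0 = perfectly even wear, smaller values = hot spots wear out sooner).
    """
    if not 0.0 < leveling_efficiency <= 1.0:
        raise ValueError("leveling_efficiency must be in (0, 1]")
    total_writable_bytes = endurance_cycles * capacity_bytes * leveling_efficiency
    return total_writable_bytes / write_bytes_per_s / SECONDS_PER_YEAR


# Hypothetical device: 10^6 write cycles per cell, 256 GB capacity, 1 GB/s sustained writes.
ideal = lifetime_years(1e6, 256e9, 1e9)
skewed = lifetime_years(1e6, 256e9, 1e9, leveling_efficiency=0.1)
print(f"ideal wear-leveling: {ideal:.1f} years")
print(f"10% efficiency:      {skewed:.1f} years")
```

Under these assumptions the device lasts about 8 years with ideal wear-leveling and under a year at 10% efficiency, which shows why endurance and wear-leveling quality have to be considered together.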

Performance metrics for hybrid DRAM-NVM systems include latency, throughput, and bandwidth. Latency measures the time delay for memory operations, with DRAM typically offering read latencies of 10-50ns while NVMs range from 20ns to 1μs depending on the technology. Throughput quantifies the data processing rate, typically measured in operations per second (OPS) or FLOPS for computational tasks. Bandwidth represents the data transfer rate between memory and processing units, measured in GB/s.

The energy-delay product (EDP) serves as a composite metric that balances energy efficiency with performance, providing a more holistic evaluation framework. For in-memory computing applications, specialized metrics have emerged, including computational density (operations per unit area) and energy per operation, which are particularly relevant for edge computing deployments where energy constraints are significant.

Standardization Efforts for Hybrid Memory Interfaces

Standardization efforts for hybrid memory interfaces have become increasingly critical as the industry moves toward widespread adoption of heterogeneous memory systems. The Joint Electron Device Engineering Council (JEDEC) has been at the forefront, developing specifications that enable seamless integration between DRAM and emerging NVM technologies. Their JC-42 committee has established working groups specifically focused on hybrid memory standards, addressing challenges in signal integrity, power management, and command protocols across different memory types.

The Compute Express Link (CXL) Consortium represents another significant standardization effort, developing an open industry standard for high-speed, cache-coherent connections between CPUs and devices such as accelerators and memory expanders. CXL 2.0 introduced memory pooling and CXL 3.0 added memory sharing. Both capabilities are essential for hybrid DRAM-NVM architectures because they allow coherent memory access across heterogeneous memory resources.

The Open Memory Interface (OMI) specification, supported by OpenCAPI Consortium members, provides a standardized approach for connecting different memory technologies to processing units. This vendor-neutral standard facilitates the integration of DRAM and NVM in computing systems without requiring extensive hardware redesigns when adopting new memory technologies.

Gen-Z, a memory-semantic fabric protocol developed by the Gen-Z Consortium, specifies memory-centric data access across hybrid memory pools. Its specifications enable memory-semantic operations across DRAM and various NVM technologies, supporting composable infrastructure in which memory resources can be dynamically allocated based on workload requirements.

The Storage Networking Industry Association (SNIA) has developed the Persistent Memory Programming Model, which provides standardized software interfaces for applications to interact with hybrid memory systems. This model includes specifications for direct access (DAX) operations, atomic operations, and persistence guarantees across heterogeneous memory types.
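The load/store-plus-explicit-flush pattern behind this programming model can be approximated with an ordinary memory-mapped file. The sketch below is not the SNIA interface itself and does not use a DAX mount. It assumes a regular file and relies on Python's standard `mmap` module, whose `flush()` call asks the operating system to write the mapped range back to the file. The function names `persist_record` and `read_record` are hypothetical, and production persistent memory code would more typically use a C library such as PMDK.

```python
import mmap
import os
import tempfile


def persist_record(path, payload, size=4096):
    """Store payload at offset 0 of a memory-mapped file, then flush it."""
    if len(payload) > size:
        raise ValueError("payload does not fit in the mapped region")
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        os.ftruncate(fd, size)                   # size the backing file
        with mmap.mmap(fd, size) as region:
            region[:len(payload)] = payload      # byte-addressable store
            region.flush()                       # explicit persistence point
    finally:
        os.close(fd)


def read_record(path, length):
    """Read the first `length` bytes back through the normal file API."""
    with open(path, "rb") as handle:
        return handle.read(length)


# Usage: write a record through the mapping and read it back from the file.
with tempfile.TemporaryDirectory() as tmp:
    record_path = os.path.join(tmp, "region.bin")
    persist_record(record_path, b"checkpoint-0001")
    print(read_record(record_path, 15))
```

The key design point is that data is only considered durable after the explicit flush, which mirrors the persistence guarantees the model asks applications to reason about.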

Industry collaboration through the Open Compute Project (OCP) has yielded reference designs for server architectures incorporating hybrid memory subsystems. These designs establish de facto standards for physical integration, thermal management, and power delivery in systems utilizing both DRAM and NVM technologies.

The CCIX Consortium (Cache Coherent Interconnect for Accelerators) has developed specifications enabling coherent memory access across processors and accelerators with hybrid memory configurations, facilitating efficient data sharing between computational units and heterogeneous memory resources without redundant data copies.

Collectively, these standardization efforts address the technical challenges of hybrid memory integration and give the industry consistent frameworks for hardware design, software development, and system architecture. That consistency should in turn accelerate the adoption of hybrid DRAM-NVM solutions for in-memory computing applications.
