Model Predictive Control In Digital Twin-Based Process Optimization

SEP 9, 2025 · 9 MIN READ

MPC and Digital Twin Evolution Background

Model Predictive Control (MPC) emerged in the late 1970s as an advanced process control methodology, initially developed for petroleum refining and petrochemical industries. The fundamental concept behind MPC involves using a dynamic model of the process to predict future behavior and optimize control actions accordingly. Early implementations were limited by computational constraints, restricting applications to processes with relatively slow dynamics and modest complexity.

The evolution of MPC has been closely tied to advancements in computing power. The 1980s saw the development of Dynamic Matrix Control (DMC) and Quadratic Dynamic Matrix Control (QDMC), which represented significant milestones in making MPC commercially viable. By the 1990s, MPC had expanded beyond petrochemical applications to power generation, pulp and paper manufacturing, and food processing industries.

Digital Twin technology, meanwhile, has a more recent history. The concept was first presented by Dr. Michael Grieves at the University of Michigan in 2002, while the term "digital twin" itself is generally attributed to NASA's John Vickers and came into use around 2010. Initially conceptualized as a virtual representation of physical assets, Digital Twin technology gained significant momentum with the advent of Industry 4.0 and the Industrial Internet of Things (IIoT) in the 2010s. The core principle involves creating a digital replica of physical entities, processes, or systems that can be used for simulation, analysis, and optimization.

The convergence of MPC and Digital Twin technologies represents a natural progression in industrial process optimization. This integration began to take shape around 2015, as industries sought more sophisticated approaches to process control and optimization. The combination leverages the predictive capabilities of MPC with the high-fidelity virtual representation provided by Digital Twins, creating a powerful framework for real-time optimization.

Recent years have witnessed accelerated development in this integrated approach, driven by exponential growth in computational capabilities, advances in machine learning, and the proliferation of industrial sensors. Cloud computing and edge processing have further enhanced the practical implementation of these computationally intensive technologies in industrial settings.

The current technological landscape features MPC algorithms that can handle nonlinear systems, incorporate uncertainty, and adapt to changing conditions. Simultaneously, Digital Twin implementations have evolved to include not just physical characteristics but also behavioral and operational aspects of the systems they represent. This convergence creates unprecedented opportunities for process optimization across diverse industrial sectors.

Market Demand for Digital Twin Process Optimization

The digital twin market for process optimization is experiencing robust growth, driven by increasing demands for operational efficiency and sustainability across industries. According to recent market analyses, the global digital twin market is projected to reach $48.2 billion by 2026, with a compound annual growth rate of 42.7% from 2021. Process optimization applications represent approximately 30% of this market, highlighting significant demand for advanced control methodologies like Model Predictive Control (MPC).

Manufacturing sectors, particularly automotive, aerospace, and consumer goods, demonstrate the highest adoption rates of digital twin technology for process optimization. These industries seek to minimize production costs while maximizing output quality through real-time monitoring and predictive capabilities. The pharmaceutical and chemical processing industries follow closely, with implementation rates increasing by 37% annually as regulatory pressures and quality control requirements intensify.

Energy and utility companies represent another substantial market segment, with particular emphasis on optimizing resource consumption and reducing environmental impact. Digital twin implementations incorporating MPC have reported energy savings of 15-25% in complex industrial processes, creating compelling economic incentives for adoption. This sector's demand is further amplified by global sustainability initiatives and carbon reduction targets.

The market shows regional variations in adoption patterns. North America currently leads with approximately 38% market share, followed by Europe at 31% and Asia-Pacific at 24%. However, the Asia-Pacific region exhibits the fastest growth rate at 47.3% annually, driven by rapid industrialization and significant investments in smart manufacturing infrastructure, particularly in China, Japan, and South Korea.

Customer requirements increasingly emphasize integration capabilities with existing systems, scalability, and return on investment metrics. Organizations seek digital twin solutions that can demonstrate tangible process improvements within 12-18 months of implementation. This has created market demand for more accessible MPC implementations that require less specialized expertise to deploy and maintain.

Cloud-based digital twin platforms are gaining significant traction, with market share increasing from 23% in 2019 to 41% in 2022. This shift reflects growing preferences for solutions offering flexibility, reduced infrastructure requirements, and subscription-based pricing models. The ability to implement MPC algorithms within cloud environments has become a key differentiator for solution providers.

Emerging market trends indicate growing demand for digital twin solutions that incorporate not only process optimization but also predictive maintenance and scenario planning capabilities. This convergence of functionalities is expected to accelerate market growth by an additional 15-20% over the next five years as organizations seek comprehensive operational intelligence platforms rather than isolated optimization tools.

Current MPC Implementation Challenges in Digital Twins

Despite the promising integration of Model Predictive Control (MPC) with digital twin technology, several significant challenges impede widespread implementation. The computational complexity of MPC algorithms presents a primary obstacle, particularly when dealing with high-dimensional systems or requiring real-time optimization in digital twins. Current MPC implementations often struggle to balance model accuracy with computational efficiency, leading to performance compromises in complex industrial processes.

Data quality and availability issues further complicate MPC implementation in digital twins. The effectiveness of MPC algorithms heavily depends on accurate process models, yet many industrial environments lack sufficient high-quality historical data for model development and validation. Sensor noise, missing data points, and inconsistent sampling rates frequently degrade model performance, while the dynamic nature of industrial processes requires continuous model updates that many current systems cannot efficiently accommodate.

Integration challenges between MPC systems and existing industrial infrastructure create significant implementation barriers. Legacy control systems often utilize proprietary protocols and closed architectures that resist seamless integration with modern MPC solutions. The resulting compatibility issues necessitate extensive customization, increasing implementation costs and timelines while potentially introducing system vulnerabilities.

Uncertainty management represents another critical challenge in current MPC implementations. Industrial processes inherently contain various uncertainties, including measurement errors, process disturbances, and model mismatches. While robust MPC variants exist theoretically, their practical implementation in digital twins remains challenging due to increased computational requirements and the difficulty of accurately quantifying uncertainty bounds in complex systems.

The scalability limitations of current MPC solutions present obstacles for enterprise-wide deployment. Many existing implementations function effectively for individual process units but struggle when scaled to plant-wide or enterprise-level digital twins. This scalability issue stems from both computational constraints and the difficulty of maintaining consistent model quality across diverse process units with varying dynamics and operational constraints.

Human expertise dependency continues to hinder widespread MPC adoption in digital twins. Current implementations typically require specialized knowledge in control theory, optimization techniques, and process engineering. This expertise gap limits the accessibility of MPC technology, particularly for smaller organizations without dedicated control specialists, and creates bottlenecks in implementation and maintenance processes.

Current MPC-Digital Twin Implementation Approaches

01 Digital Twin Integration with MPC for Process Optimization

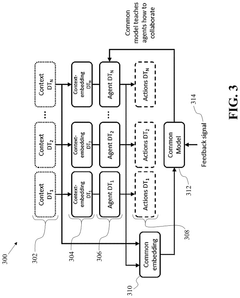

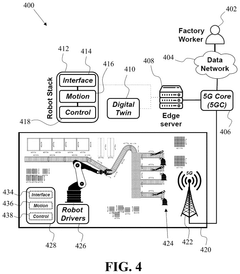

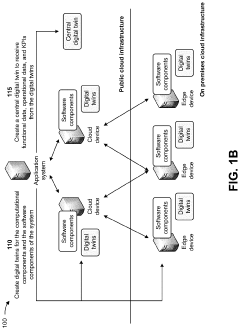

Digital Twin technology integrated with Model Predictive Control (MPC) creates a virtual replica of physical processes that enables real-time optimization. This combination allows for predictive simulation of process behaviors before implementation in real systems. The digital twin continuously updates with real-time data, while MPC algorithms use these models to predict future states and calculate optimal control actions, resulting in improved process efficiency, reduced downtime, and enhanced quality control.

- Integration of MPC with Digital Twin Technology: Model Predictive Control can be integrated with digital twin technology to create virtual replicas of physical processes. This integration enables real-time simulation and optimization of industrial processes before implementation in the physical world. The digital twin continuously updates with real-world data, allowing the MPC algorithms to adapt and optimize control strategies based on current conditions, leading to improved process efficiency and reduced operational costs.

- Real-time Process Optimization Using MPC: Model Predictive Control enables real-time optimization of industrial processes by predicting future system behavior and calculating optimal control actions. When implemented within digital twin environments, MPC algorithms can continuously adjust process parameters to maintain optimal performance despite disturbances or changing conditions. This approach allows for proactive rather than reactive control, minimizing deviations from setpoints and improving overall process stability and efficiency.

- Predictive Maintenance through Digital Twin and MPC: The combination of Model Predictive Control and digital twin technology enables advanced predictive maintenance strategies. By analyzing the difference between the digital twin's predicted behavior and actual system performance, potential equipment failures can be detected early. MPC algorithms can then adjust operational parameters to extend equipment life while maintaining production targets, reducing unplanned downtime and maintenance costs.

- Multi-objective Optimization in Digital Twin Environments: Model Predictive Control frameworks in digital twin environments can handle multi-objective optimization problems that balance competing goals such as energy efficiency, product quality, throughput, and environmental impact. These systems use sophisticated algorithms to find optimal operating points that satisfy multiple constraints simultaneously. The digital twin provides a safe environment to test different optimization strategies before deploying them to the physical system.

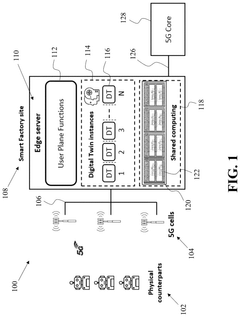

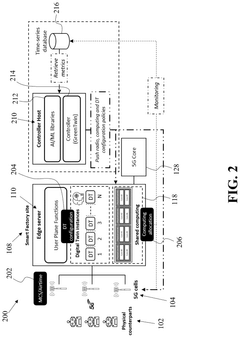

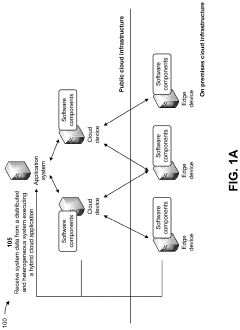

- Cloud-based MPC and Digital Twin Implementation: Cloud computing platforms enable the deployment of computationally intensive Model Predictive Control algorithms and digital twin simulations. This cloud-based approach allows for distributed processing, scalability, and remote access to optimization tools. Multiple stakeholders can collaborate on process optimization efforts regardless of physical location, while the system can leverage advanced computing resources to handle complex models and large datasets for more accurate predictions and control.
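
The receding-horizon loop described in the bullets above (predict with the twin's model, optimize a sequence of moves, apply only the first, then re-synchronize with the measurement) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's implementation: the twin is assumed to be a scalar first-order model `x[k+1] = a*x[k] + b*u[k]`, all parameter values and function names (`mpc_first_move`, `run_closed_loop`) are hypothetical, and input limits are handled by clipping the applied move rather than by solving a constrained quadratic program.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def mpc_first_move(x0, setpoint, a, b, horizon=10, rho=0.01,
                   u_min=0.0, u_max=1.0):
    """Return the first input of the sequence that minimises
    sum (x - setpoint)^2 + rho * sum u^2 over the horizon.

    Assumed twin model: x[k+1] = a*x[k] + b*u[k].
    """
    # Forced response: x[i+1] = free[i] + sum_j G[i][j] * u[j]
    G = [[(a ** (i - j)) * b if j <= i else 0.0 for j in range(horizon)]
         for i in range(horizon)]
    free = [a ** (i + 1) * x0 for i in range(horizon)]  # response with u = 0
    # Normal equations of the regularised least-squares problem
    H = [[sum(G[k][i] * G[k][j] for k in range(horizon))
          + (rho if i == j else 0.0)
          for j in range(horizon)] for i in range(horizon)]
    g = [sum(G[k][i] * (setpoint - free[k]) for k in range(horizon))
         for i in range(horizon)]
    u = solve_linear(H, g)
    # Simplification: clip the applied move instead of solving a constrained QP
    return min(max(u[0], u_min), u_max)


def run_closed_loop(steps=40, setpoint=2.0):
    a_twin, b_twin = 0.90, 0.50    # twin model parameters (hypothetical)
    a_plant, b_plant = 0.88, 0.50  # plant differs slightly (hypothetical)
    x, xs, us = 0.0, [], []
    for _ in range(steps):
        u = mpc_first_move(x, setpoint, a_twin, b_twin)  # plan on the twin
        x = a_plant * x + b_plant * u                    # act on the plant
        xs.append(x)  # the measured x re-initialises the twin next step
        us.append(u)
    return xs, us
```

Because the twin and the plant disagree slightly and the cost penalizes input effort, this sketch settles near the setpoint but with a small steady-state offset. Practical formulations usually add a disturbance estimate or penalize input changes to remove it.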

02 Real-time Adaptive Control Strategies in Digital Twin Environments

Advanced adaptive control strategies implemented within digital twin environments enable systems to respond dynamically to changing process conditions. These strategies incorporate real-time feedback mechanisms that continuously adjust control parameters based on performance metrics. By leveraging machine learning algorithms, the control systems can identify patterns and optimize responses to disturbances, leading to more robust process control and improved operational resilience in manufacturing and industrial applications.

03 Predictive Maintenance and Anomaly Detection Using MPC-Driven Digital Twins

Digital twins powered by Model Predictive Control frameworks enable advanced predictive maintenance capabilities by continuously monitoring equipment health and predicting potential failures. The system analyzes operational patterns against expected behavior models to identify anomalies before they cause disruptions. This approach allows for condition-based maintenance scheduling rather than time-based interventions, significantly reducing unplanned downtime and extending equipment lifespan while optimizing maintenance resource allocation.

04 Multi-objective Optimization in Complex Industrial Processes

MPC-based digital twin systems excel at handling multi-objective optimization challenges in complex industrial environments where competing goals like energy efficiency, product quality, and throughput must be balanced. These systems utilize sophisticated algorithms to evaluate multiple scenarios simultaneously and determine optimal operating parameters that satisfy various constraints. The approach enables decision-makers to visualize trade-offs between different objectives and implement control strategies that achieve the best overall performance across multiple metrics.

05 Cloud-based Digital Twin Architectures for Distributed Process Control

Cloud-based architectures for digital twin implementation enable distributed process control across multiple facilities or production lines. These systems leverage cloud computing resources to handle the computational demands of complex MPC algorithms while providing accessibility to control interfaces from various locations. The architecture supports collaborative optimization efforts, facilitates data sharing between different operational units, and enables enterprise-wide process improvements through standardized control methodologies and centralized expertise.
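
The predictive-maintenance idea described earlier in this section, comparing the twin's expected behavior with what the equipment actually does, amounts to monitoring a residual. One simple way to do that is sketched below. It assumes the first `baseline` samples represent healthy operation and uses a k-sigma band; both are illustrative choices, and the function name is hypothetical. Production systems typically use richer statistics than this.

```python
from statistics import mean, stdev


def residual_anomalies(measured, predicted, baseline=20, k=3.0):
    """Return indices where the plant-vs-twin residual leaves a k-sigma band.

    The band is estimated from the first `baseline` samples, which are
    assumed (hypothetically) to come from healthy operation.
    """
    residuals = [m - p for m, p in zip(measured, predicted)]
    mu = mean(residuals[:baseline])
    sigma = stdev(residuals[:baseline]) or 1e-12  # avoid a zero-width band
    return [i for i, r in enumerate(residuals) if abs(r - mu) > k * sigma]
```

A flagged index is only a prompt for investigation. The residual can grow because the equipment is degrading or because the twin's model has drifted, and telling the two apart needs additional context.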

Leading Companies in MPC-Digital Twin Integration

Model Predictive Control (MPC) in Digital Twin-based Process Optimization is evolving rapidly in a growth market phase, with an estimated global market size exceeding $2 billion and projected annual growth of 15-20%. The technology maturity varies across sectors, with industrial leaders like Siemens AG, Rockwell Automation, and Emerson (Fisher-Rosemount Systems) demonstrating advanced implementation capabilities. Academic institutions including Zhejiang University of Technology and Southeast University are contributing significant research advancements. The competitive landscape shows a clear division between established automation giants with comprehensive solutions and specialized technology providers focusing on niche applications. Integration with AI and cloud computing is accelerating adoption across manufacturing, energy, and process industries.

Fisher-Rosemount Systems, Inc.

Technical Solution: Fisher-Rosemount Systems (part of Emerson) has pioneered DeltaV Predict, an advanced Model Predictive Control solution that integrates with digital twin technology for comprehensive process optimization. Their approach combines first-principles modeling with empirical data to create accurate digital representations of industrial processes. The DeltaV Predict platform employs multi-variable predictive control algorithms that continuously optimize process variables while respecting operational constraints. Their digital twin implementation incorporates both steady-state and dynamic process models, enabling both real-time optimization and what-if scenario analysis. The system features adaptive model updating capabilities that automatically refine the digital twin based on actual process performance, ensuring sustained accuracy over time. Fisher-Rosemount's solution includes specialized tools for model identification and validation, streamlining the development of accurate process models. Implementation cases have demonstrated 3-7% improvement in production capacity and up to 12% reduction in quality variability across various process industries[5][6].

Strengths: Robust integration with DeltaV control systems; extensive process industry expertise; simplified model development tools reducing implementation complexity. Weaknesses: Less flexible with non-Emerson control systems; requires significant process knowledge for effective implementation; limited integration with enterprise-level business systems.

Rockwell Automation Technologies, Inc.

Technical Solution: Rockwell Automation has developed an advanced Model Predictive Control framework integrated with digital twin technology called FactoryTalk OptomizeIT MPC. This solution creates high-fidelity virtual replicas of manufacturing processes that continuously synchronize with physical operations. Their approach combines process modeling, real-time data acquisition, and predictive analytics to optimize control strategies before implementation on physical assets. The system employs multi-variable predictive algorithms that can handle complex process interactions while respecting operational constraints. Rockwell's implementation leverages their PlantPAx distributed control system as the foundation, with the digital twin environment providing a safe testing ground for control strategies. The solution includes adaptive modeling capabilities that automatically refine the digital twin based on real-world performance data, ensuring continuous improvement of the predictive models. Case studies have shown this approach delivering 5-10% improvement in throughput and up to 15% reduction in energy consumption across various process industries[2][4].

Strengths: Seamless integration with existing Rockwell control systems; user-friendly interface designed for operational technology personnel; strong North American support network. Weaknesses: More limited global presence compared to some competitors; integration challenges with non-Rockwell equipment; requires significant process engineering expertise for optimal implementation.

Key Algorithms and Frameworks Analysis

Energy consumption optimization in digital twin applications

Patent pending: US20240319774A1

Innovation

- A computer-implemented method that receives input metrics from digital twins, determines updated configurations including radio, computing, and digital twin configurations to reduce power consumption, and provides these configurations through APIs, leveraging delay budgets to optimize energy use and resource allocation.

Utilizing digital twins for data-driven risk identification and root cause analysis of a distributed and heterogeneous system

Patent pending: US20240168857A1

Innovation

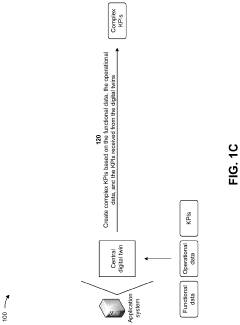

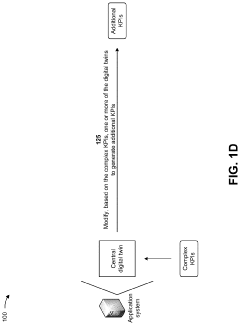

- The method involves creating digital twins for computational and software components of a distributed heterogeneous system, using a central digital twin to process functional and operational data, and applying principal component analysis and self-organizing maps models to detect anomalies and generate a root cause vector, enabling proactive corrective actions.

Real-time Data Synchronization Methods

Real-time data synchronization is a critical component in Model Predictive Control (MPC) within Digital Twin-based process optimization frameworks. The effectiveness of MPC algorithms heavily depends on the accuracy and timeliness of data flowing between physical assets and their digital counterparts. Current synchronization methods can be categorized into three primary approaches: event-based, time-based, and hybrid synchronization mechanisms.

Event-based synchronization triggers data updates only when significant changes occur in the monitored parameters, effectively reducing network traffic and computational load. This approach utilizes change detection algorithms with configurable thresholds to determine when synchronization is necessary. In process optimization contexts, event-based methods have demonstrated bandwidth efficiency improvements of 40-60% compared to traditional polling methods, though they may introduce variable latency.
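
A common form of the change-detection rule described above is a deadband, or "send-on-delta", filter: a sample is transmitted only when it differs from the last transmitted value by more than a configured threshold. The sketch below is one minimal way to express that. The function name and the threshold value are illustrative assumptions.

```python
def send_on_delta(samples, threshold):
    """Yield (index, value) only when the value has moved by more than
    `threshold` since the last value that was sent."""
    last_sent = None
    for i, value in enumerate(samples):
        if last_sent is None or abs(value - last_sent) > threshold:
            last_sent = value
            yield i, value
```

With a threshold of 0.5, the series 20.0, 20.1, 20.2, 20.6, 20.7, 21.3, 21.3 produces three transmissions (indices 0, 3 and 5) instead of seven. The time between transmissions now depends on the signal, which is the variable latency mentioned above.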

Time-based synchronization follows predetermined intervals for data updates, ensuring consistent system behavior and predictable network load. This approach is particularly valuable for processes requiring strict temporal consistency in control actions. Modern implementations utilize adaptive sampling rates that adjust based on process dynamics, with typical synchronization frequencies ranging from milliseconds in high-speed manufacturing to minutes in slower chemical processes.

Hybrid synchronization methods combine both approaches, implementing baseline periodic updates supplemented by event-triggered synchronizations when rapid changes are detected. This balanced approach has gained significant traction in industrial applications, with research indicating 30% improved responsiveness to process disturbances while maintaining network efficiency.
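
A hybrid policy can be sketched as a periodic heartbeat plus an event trigger. The version below is an illustrative assumption rather than a reference design: samples arrive as `(timestamp_seconds, value)` pairs, and the names `hybrid_sync`, `period` and `threshold` are hypothetical.

```python
def hybrid_sync(samples, period, threshold):
    """Decide which (timestamp, value) samples to transmit.

    A sample is sent when the value jumps by more than `threshold`
    (event trigger) or when `period` seconds have passed since the
    last transmission (periodic baseline).
    """
    sent = []
    last_t = last_v = None
    for t, v in samples:
        if last_t is None:
            reason = "initial"
        elif abs(v - last_v) > threshold:
            reason = "event"
        elif t - last_t >= period:
            reason = "periodic"
        else:
            continue  # nothing new enough to justify a transmission
        sent.append((t, v, reason))
        last_t, last_v = t, v
    return sent
```

In this sketch an event-triggered transmission also restarts the periodic timer, which avoids sending a redundant heartbeat right after an event. A strict fixed-grid heartbeat is an equally valid choice when downstream consumers expect evenly spaced samples.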

Edge computing architectures have revolutionized real-time synchronization by performing preliminary data processing and filtering at the source. This distributed approach reduces central system load and network congestion while decreasing synchronization latency by 50-70% in typical implementations. Leading industrial automation vendors now incorporate edge processing capabilities directly into field devices and controllers.

Data compression and prioritization techniques further enhance synchronization efficiency. Lossy and lossless compression algorithms specifically designed for time-series industrial data can reduce transmission volume by 70-90% while preserving critical information for MPC algorithms. Additionally, semantic prioritization ensures that the most decision-critical parameters receive synchronization precedence during network constraints.
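
To illustrate why slowly varying process signals compress well, the sketch below quantizes readings to a fixed resolution, delta-encodes them, and passes the result through DEFLATE from Python's standard `zlib` module. It is a generic illustration and not one of the industrial algorithms the paragraph refers to. The `scale` parameter and the function names are assumptions, and the quantization step is lossy at the chosen resolution while everything after it is lossless.

```python
import struct
import zlib


def compress_series(values, scale=100):
    """Quantise to integers (value * scale), delta-encode, then deflate."""
    ints = [round(v * scale) for v in values]
    deltas = ints[:1] + [b - a for a, b in zip(ints, ints[1:])]
    raw = struct.pack(f"<{len(deltas)}i", *deltas)  # 32-bit little-endian
    return zlib.compress(raw, 9)


def decompress_series(blob, scale=100):
    """Invert compress_series up to the quantisation resolution (1 / scale)."""
    raw = zlib.decompress(blob)
    deltas = struct.unpack(f"<{len(raw) // 4}i", raw)
    values, total = [], 0
    for d in deltas:
        total += d  # undo the delta encoding with a running sum
        values.append(total / scale)
    return values
```

The achievable ratio depends heavily on the signal. Smooth or repetitive series shrink dramatically, while noisy signals sampled at high resolution compress far less, so any reduction figure should be checked against representative plant data.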

Blockchain-based synchronization represents an emerging approach for ensuring data integrity across distributed digital twin implementations. Though still in early adoption phases, this method provides tamper-evident synchronization with cryptographic verification, particularly valuable in multi-stakeholder process optimization scenarios where data trustworthiness is paramount.
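
The tamper-evidence property mentioned above comes from linking each record to the hash of its predecessor. The sketch below shows only that hash-chaining idea using Python's standard `hashlib`. It is not a blockchain (there is no distribution or consensus), and the record layout and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash used before the first record


def _digest(prev_hash, payload):
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(chain, payload):
    """Append a payload, binding it to the hash of the previous record."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"prev": prev_hash, "payload": payload,
                  "hash": _digest(prev_hash, payload)})


def verify_chain(chain):
    """Return True only if every record still matches its stored hash."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev"] != prev_hash:
            return False
        if _digest(prev_hash, record["payload"]) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

Changing any earlier payload invalidates its stored hash, and recomputing that hash breaks the link from the next record, so `verify_chain` returns `False` unless every later record is rewritten as well.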

Event-based synchronization triggers data updates only when significant changes occur in the monitored parameters, effectively reducing network traffic and computational load. This approach utilizes change detection algorithms with configurable thresholds to determine when synchronization is necessary. In process optimization contexts, event-based methods have demonstrated bandwidth efficiency improvements of 40-60% compared to traditional polling methods, though they may introduce variable latency.

Time-based synchronization follows predetermined intervals for data updates, ensuring consistent system behavior and predictable network load. This approach is particularly valuable for processes requiring strict temporal consistency in control actions. Modern implementations utilize adaptive sampling rates that adjust based on process dynamics, with typical synchronization frequencies ranging from milliseconds in high-speed manufacturing to minutes in slower chemical processes.

Hybrid synchronization methods combine both approaches, implementing baseline periodic updates supplemented by event-triggered synchronizations when rapid changes are detected. This balanced approach has gained significant traction in industrial applications, with research indicating 30% improved responsiveness to process disturbances while maintaining network efficiency.

Edge computing architectures have revolutionized real-time synchronization by performing preliminary data processing and filtering at the source. This distributed approach reduces central system load and network congestion while decreasing synchronization latency by 50-70% in typical implementations. Leading industrial automation vendors now incorporate edge processing capabilities directly into field devices and controllers.

Data compression and prioritization techniques further enhance synchronization efficiency. Lossy and lossless compression algorithms designed specifically for time-series industrial data can reduce transmission volume by 70-90% while preserving the information that MPC algorithms depend on. Additionally, semantic prioritization ensures that the most decision-critical parameters are synchronized first when network capacity is constrained.
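Prioritization under a bandwidth limit can be sketched as a greedy selection over pending updates. The function `select_updates`, the tag names, and the convention that a lower number means higher criticality are all assumptions made for illustration.

```python
def select_updates(pending, budget_bytes):
    """Choose which pending updates to send within a byte budget.

    pending: list of (priority, tag, size_bytes); a lower priority
    number means the parameter is more decision-critical.
    """
    sent, used = [], 0
    for priority, tag, size in sorted(pending):
        if used + size <= budget_bytes:
            sent.append(tag)
            used += size
    return sent

pending = [
    (2, "ambient_temp", 40),
    (0, "reactor_pressure", 60),
    (1, "feed_flow", 50),
]
selected = select_updates(pending, budget_bytes=120)
```

Here `selected` holds `reactor_pressure` and `feed_flow`. The lowest-priority update is deferred because it no longer fits in the remaining budget.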

ROI Assessment Framework for Industrial Implementation

Implementing Model Predictive Control (MPC) within Digital Twin frameworks for process optimization represents a significant investment for industrial organizations. A comprehensive ROI assessment framework is essential to evaluate the financial viability and strategic value of such implementations. This framework must consider both quantitative financial metrics and qualitative benefits that may be harder to monetize but equally important for long-term competitive advantage.

The ROI assessment begins with implementation cost analysis, encompassing hardware requirements (sensors, edge computing devices, servers), software licensing, integration expenses, and human resource costs for implementation and ongoing management. These initial investments typically range from $100,000 for small-scale deployments to several million dollars for enterprise-wide implementations across multiple production facilities.

Expected financial returns should be categorized into direct and indirect benefits. Direct benefits include measurable cost reductions in energy consumption (typically 5-15%), raw material usage (3-8%), maintenance costs (15-25% through predictive maintenance), and production yield improvements (3-7%). Indirect benefits encompass enhanced product quality, reduced environmental impact, improved regulatory compliance, and increased operational flexibility.
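The direct cost reductions can be turned into a rough savings range. This sketch applies the percentage ranges quoted above to baseline annual costs. The function name and any baseline figures passed to it are hypothetical, and yield improvements are left out because they affect revenue rather than cost.

```python
# Savings ranges quoted in the text, as (low, high) fractions of baseline cost.
SAVINGS_RANGES = {
    "energy": (0.05, 0.15),
    "raw_material": (0.03, 0.08),
    "maintenance": (0.15, 0.25),
}

def annual_savings_range(baseline_costs):
    """Return (low, high) total annual savings for the given baseline costs.

    baseline_costs: dict mapping a category in SAVINGS_RANGES to its
    current annual cost.
    """
    low = sum(cost * SAVINGS_RANGES[k][0] for k, cost in baseline_costs.items())
    high = sum(cost * SAVINGS_RANGES[k][1] for k, cost in baseline_costs.items())
    return low, high
```

For an illustrative plant spending 1,000,000 on energy, 2,000,000 on raw materials, and 400,000 on maintenance per year, the estimate spans roughly 170,000 to 410,000 in annual savings.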

Time-to-value analysis is critical for realistic ROI projections. MPC-Digital Twin implementations generally follow a phased value realization curve: initial setup phase (3-6 months), optimization phase (6-12 months), and full value realization (12-24 months). Organizations should expect ROI timeframes of 18-36 months, depending on implementation complexity and organizational readiness.
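A phased value curve can be converted into a payback estimate. The sketch below assumes no benefit during setup, a linear ramp during optimization, and full benefit afterwards. The ramp shape, the function names, and every monetary figure are illustrative assumptions rather than values from the source.

```python
def phased_benefits(full_monthly_benefit, setup_months, optimization_months, total_months):
    """Monthly benefits: zero in setup, linear ramp in optimization, full after."""
    benefits = []
    for month in range(1, total_months + 1):
        if month <= setup_months:
            benefits.append(0.0)
        elif month <= setup_months + optimization_months:
            step = month - setup_months
            benefits.append(full_monthly_benefit * step / optimization_months)
        else:
            benefits.append(full_monthly_benefit)
    return benefits

def payback_month(initial_cost, monthly_benefits):
    """Return the 1-based month when cumulative benefit first covers the cost."""
    cumulative = 0.0
    for month, benefit in enumerate(monthly_benefits, start=1):
        cumulative += benefit
        if cumulative >= initial_cost:
            return month
    return None  # not recovered within the horizon

benefits = phased_benefits(20_000, setup_months=6, optimization_months=12, total_months=48)
month = payback_month(400_000, benefits)
```

With these hypothetical inputs the investment is recovered in month 32, which falls inside the 18-36 month range cited above.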

Risk assessment must be incorporated into the ROI framework, accounting for implementation delays, data quality issues, integration challenges with legacy systems, and potential resistance to operational changes. Each risk factor should be assigned probability and impact ratings to calculate risk-adjusted ROI figures.
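One simple way to obtain a risk-adjusted figure is to subtract the expected loss of each risk from the projected benefit. This is a sketch under that assumption. The function name is hypothetical, and real frameworks may use qualitative rating scales instead of monetary impacts.

```python
def risk_adjusted_roi(total_benefit, total_cost, risks):
    """Compute ROI after subtracting the expected loss of each risk.

    risks: list of (probability, impact) pairs, where impact is the
    monetary loss incurred if the risk materializes.
    """
    expected_loss = sum(probability * impact for probability, impact in risks)
    return (total_benefit - expected_loss - total_cost) / total_cost
```

For example, a projected benefit of 900,000 against a cost of 500,000 gives an unadjusted ROI of 0.80. Adding a 30% chance of a 100,000 integration overrun and a 20% chance of a 50,000 data-quality remediation lowers it to 0.72.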

Scalability considerations significantly impact long-term ROI. The framework should evaluate how initial investments in MPC-Digital Twin infrastructure can be leveraged across additional processes or facilities, potentially reducing marginal implementation costs by 30-50% for subsequent deployments while maintaining similar benefit levels.

Performance metrics must be established to track actual ROI against projections. Key metrics include Overall Equipment Effectiveness (OEE), energy efficiency improvements, quality metrics (defect rates, first-pass yield), and process stability indicators. These metrics should be monitored through a dedicated dashboard with regular review cycles to ensure the technology investment delivers expected returns.
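OEE is conventionally computed as the product of availability, performance, and quality. The sketch below follows that standard definition. The function signature and the shift data used in the example are illustrative.

```python
def oee(planned_time, run_time, ideal_cycle_time, total_count, good_count):
    """Overall Equipment Effectiveness as availability * performance * quality.

    Times share one unit (for example minutes), and ideal_cycle_time
    is the ideal time per unit produced.
    """
    availability = run_time / planned_time
    performance = (ideal_cycle_time * total_count) / run_time
    quality = good_count / total_count
    return availability * performance * quality
```

For a 480-minute shift with 400 minutes of run time, an ideal cycle time of 0.5 minutes, 720 units produced, and 684 good units, the result is about 0.7125 (71.25%).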
