Quantum Mechanical Model Performance: Benchmark Tests

SEP 4, 2025 · 9 MIN READ

Quantum Mechanics Benchmarking Background and Objectives

Quantum mechanical modeling has evolved significantly over the past century, transforming from theoretical frameworks to practical computational tools essential for materials science, chemistry, and physics. The development trajectory began with early quantum theories in the 1920s and has accelerated dramatically with the advent of high-performance computing. Today's quantum mechanical models range from density functional theory (DFT) to more sophisticated post-Hartree-Fock methods, each offering different balances between computational efficiency and accuracy.

The establishment of standardized benchmarking protocols for quantum mechanical models represents a critical need in the scientific community. Without reliable performance metrics, researchers face challenges in selecting appropriate computational methods for specific applications, potentially leading to inaccurate predictions or inefficient resource allocation. Benchmarking initiatives aim to systematically evaluate model performance against experimental data or high-accuracy reference calculations across diverse chemical systems and properties.

Recent technological advancements have significantly expanded the scope and complexity of quantum mechanical calculations. The emergence of exascale computing, specialized hardware accelerators, and quantum computing prototypes has created new opportunities for implementing increasingly sophisticated quantum mechanical models. These developments necessitate updated benchmarking frameworks that can accurately assess performance across different computational architectures and implementation strategies.

The primary objective of quantum mechanics benchmarking is to provide quantitative performance metrics that enable informed decision-making in computational chemistry and materials science. These metrics typically include accuracy measures (mean absolute errors, systematic deviations), computational efficiency indicators (scaling behavior, memory requirements), and robustness assessments (convergence properties, numerical stability). Comprehensive benchmarking must address diverse chemical environments, molecular properties, and computational conditions.
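
The accuracy measures named above can be made concrete with a small sketch. It assumes the benchmark supplies paired predicted and reference values in the same units; the function name `error_statistics` and the sample energies are hypothetical and chosen only for illustration.

```python
import math

def error_statistics(predicted, reference):
    """Return common accuracy metrics for predicted vs. reference values."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two equal-length, non-empty sequences")
    errors = [p - r for p, r in zip(predicted, reference)]
    n = len(errors)
    return {
        # Mean absolute error: average magnitude of the deviation
        "mae": sum(abs(e) for e in errors) / n,
        # Mean signed error: reveals systematic over- or underestimation
        "mse": sum(errors) / n,
        # Root-mean-square error: penalizes large outliers more strongly
        "rmse": math.sqrt(sum(e * e for e in errors) / n),
        # Largest single deviation in the set
        "max_abs": max(abs(e) for e in errors),
    }

# Hypothetical reaction energies in kcal/mol (illustrative numbers only)
reference = [10.0, -5.0, 20.0, 0.0]
predicted = [11.0, -4.0, 19.0, 2.0]
stats = error_statistics(predicted, reference)
# stats["mae"] is 1.25 and stats["mse"] is 0.75 for these numbers
```

A positive mean signed error alongside a similar mean absolute error, as in this toy data, would indicate that the method's deviations are mostly systematic rather than random.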

International collaborative efforts have emerged to standardize benchmarking methodologies, including the development of curated reference datasets like GMTKN55, MOR41, and S66. These initiatives aim to establish consensus on evaluation protocols and facilitate transparent comparison across different quantum mechanical approaches. The scientific community increasingly recognizes the importance of reproducible benchmarking practices that account for implementation details, basis set choices, and numerical parameters.

Looking forward, quantum mechanics benchmarking faces evolving challenges related to emerging computational paradigms, including machine learning augmented quantum methods, embedding techniques, and quantum computing algorithms. The technical goal of contemporary benchmarking efforts extends beyond simple accuracy rankings to provide nuanced guidance on method selection based on specific research requirements, available computational resources, and desired property predictions.

Market Analysis for Quantum Mechanical Models

The quantum mechanical model market is experiencing unprecedented growth, driven by increasing demand for accurate molecular simulations across multiple industries. Current market valuations place the quantum computing sector at approximately 8.6 billion USD globally, with quantum mechanical modeling software representing a significant and rapidly expanding segment within this space. Annual growth rates in this sector have consistently exceeded 20% over the past three years, outpacing many traditional computational chemistry markets.

Pharmaceutical and biotechnology companies constitute the largest market segment, accounting for roughly 45% of quantum mechanical model adoption. These organizations leverage quantum models for drug discovery, protein folding simulations, and molecular docking studies, significantly reducing development timelines and associated costs. The materials science sector follows as the second-largest adopter at 28%, utilizing these technologies for novel materials development and property prediction.

Regional analysis reveals North America leading with 38% market share, followed by Europe (31%) and Asia-Pacific (24%). China and India are demonstrating the fastest growth trajectories, with annual adoption rates exceeding 30% as their research infrastructure matures and government investment increases. This geographic distribution closely correlates with regional R&D expenditure patterns and concentration of research institutions.

Market segmentation by model type shows density functional theory (DFT) implementations maintaining dominance with 52% market share, while post-Hartree-Fock methods represent 27%. Emerging hybrid quantum-classical approaches are gaining traction, currently at 14% but projected to reach 23% by 2025 according to industry forecasts.

Customer demand patterns indicate increasing preference for cloud-based quantum mechanical modeling solutions, with 67% of new deployments utilizing distributed computing architectures. This shift reflects broader industry trends toward computational flexibility and cost optimization through subscription-based access models rather than traditional licensing.

Pricing structures within the market vary significantly, with enterprise solutions commanding annual licensing fees between 50,000 and 250,000 USD depending on capability scope and support levels. Academic pricing models typically offer 70-85% discounts from commercial rates, creating distinct market segments with different purchasing behaviors and decision criteria.

Market barriers include high technical expertise requirements, computational resource limitations, and integration challenges with existing workflows. These factors have created opportunities for specialized consulting services, which now represent a 650 million USD adjacent market supporting implementation and optimization of quantum mechanical modeling solutions.

Current State and Challenges in Quantum Model Performance

Quantum mechanical models have advanced significantly in recent years, yet their performance benchmarking remains a complex challenge. Current quantum computational models span various methodologies including density functional theory (DFT), quantum Monte Carlo (QMC), and coupled-cluster approaches. Each model demonstrates varying degrees of accuracy and computational efficiency across different chemical systems and physical properties.

The state-of-the-art quantum mechanical models face several critical limitations. Computational scaling represents a primary constraint, with many high-accuracy methods exhibiting prohibitive scaling behaviors (N^5 to N^7, where N represents system size). This restricts their application to relatively small molecular systems, typically under 100 atoms for high-level calculations. Even with recent algorithmic improvements, the exponential scaling of full configuration interaction methods remains a fundamental barrier.
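
To see why polynomial scaling in the N^5 to N^7 range becomes prohibitive, the sketch below computes how much more work a calculation needs when the system size doubles. The method-to-exponent mapping uses the commonly quoted formal scalings for MP2, CCSD, and CCSD(T); these method names are an illustrative assumption, since the text above gives only the exponent range.

```python
def relative_cost(size_factor, exponent):
    """Cost multiplier when system size grows by size_factor under O(N^exponent)."""
    return size_factor ** exponent

# Commonly quoted formal scalings (assumed here for illustration)
FORMAL_SCALING = {"MP2": 5, "CCSD": 6, "CCSD(T)": 7}

# Cost multiplier for doubling the system size
doubling = {method: relative_cost(2, p) for method, p in FORMAL_SCALING.items()}
# doubling == {"MP2": 32, "CCSD": 64, "CCSD(T)": 128}

# Cost multiplier for a tenfold larger system under O(N^7)
tenfold_n7 = relative_cost(10, 7)
# tenfold_n7 == 10_000_000
```

Under these assumptions, doubling the system size makes an O(N^7) calculation 128 times more expensive, which is why such methods stay confined to small molecules.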

Accuracy-to-cost ratio presents another significant challenge. While methods like CCSD(T) deliver chemical accuracy (±1 kcal/mol) for many systems, they become computationally intractable for larger molecules. Conversely, more efficient methods like DFT often lack systematic improvement pathways and suffer from functional dependency issues that limit their predictive power across diverse chemical spaces.

Benchmark datasets reveal persistent performance gaps. Assessments on the GMTKN55 database show that even advanced functionals still have mean absolute errors exceeding 2-3 kcal/mol for reaction energies. Similarly, conformational energetics benchmarks show systematic failures in capturing non-covalent interactions, particularly in biomolecular systems where quantum effects intersect with environmental factors.

Hardware limitations further constrain quantum model performance. Current quantum processing units (QPUs) suffer from high error rates, limited coherence times, and restricted qubit connectivity. Commercially accessible quantum computers offer on the order of a hundred or more physical qubits (IBM's Eagle processor, for example, has 127), but their error rates still exceed those required for fault-tolerant quantum computation, severely limiting the practical implementation of quantum algorithms for chemical simulations.

Geographical distribution of quantum modeling capabilities shows significant concentration in North America, Western Europe, and East Asia. Research institutions in the United States, Germany, Japan, and China dominate the development landscape, creating potential barriers to global access and implementation. This concentration pattern extends to both classical high-performance computing resources and emerging quantum computing infrastructure.

The integration challenge between classical and quantum approaches represents another frontier obstacle. Hybrid quantum-classical algorithms show promise but require sophisticated error mitigation techniques and careful boundary definitions between quantum and classical computational domains. Current benchmarks indicate that these hybrid approaches have yet to demonstrate clear quantum advantage for practically relevant chemical problems.

Existing Benchmark Methodologies and Frameworks

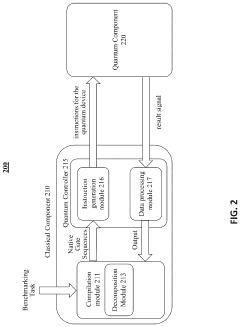

01 Quantum computing model performance evaluation

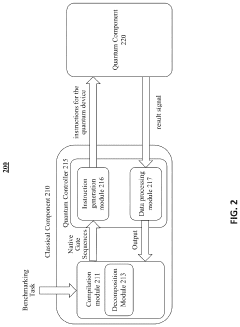

Methods and systems for evaluating the performance of quantum computing models, including benchmarking techniques to assess computational efficiency, accuracy, and scalability. These approaches involve comparing quantum algorithms against classical counterparts, measuring quantum advantage, and analyzing error rates in quantum simulations to optimize model performance.

- Quantum computing model performance evaluation: Methods and systems for evaluating the performance of quantum computing models, including benchmarking techniques to assess computational efficiency, accuracy, and scalability. These evaluation frameworks help in comparing different quantum mechanical models against classical computing approaches and determining their practical applicability for complex problem-solving scenarios.

- Quantum simulation for molecular and material systems: Quantum mechanical models designed specifically for simulating molecular structures and material properties with high accuracy. These models leverage quantum principles to predict behaviors at atomic and subatomic levels, offering superior performance compared to classical computational methods for complex chemical systems and material science applications.

- Quantum-classical hybrid computational approaches: Hybrid approaches that combine quantum mechanical models with classical computing techniques to optimize performance. These systems leverage the strengths of both paradigms, using quantum processing for specific computationally intensive tasks while classical components handle other aspects, resulting in improved overall system performance for practical applications.

- Error mitigation in quantum mechanical models: Techniques and methodologies for identifying, reducing, and correcting errors in quantum mechanical models to improve their performance. These approaches address quantum decoherence, noise, and other quantum-specific challenges that affect model accuracy and reliability, enabling more robust quantum computations and simulations.

- Quantum machine learning performance optimization: Specialized quantum mechanical models designed for machine learning applications, focusing on performance optimization for pattern recognition, data classification, and predictive analytics. These models leverage quantum principles to process complex datasets more efficiently than classical machine learning approaches, particularly for high-dimensional problems.

02 Quantum mechanical simulations for molecular modeling

Implementation of quantum mechanical models for simulating molecular structures and interactions, providing more accurate predictions of chemical properties than classical methods. These simulations enable detailed analysis of electronic structures, reaction pathways, and molecular dynamics, with applications in drug discovery, materials science, and computational chemistry.

03 Quantum-classical hybrid computational approaches

Development of hybrid computational frameworks that combine quantum mechanical models with classical computing techniques to enhance performance and overcome limitations of purely quantum approaches. These hybrid methods leverage the strengths of both paradigms, using quantum processors for specific calculations while classical systems handle other aspects, resulting in more efficient problem-solving for complex simulations.

04 Error mitigation in quantum mechanical models

Techniques for identifying, characterizing, and mitigating errors in quantum mechanical models to improve computational accuracy and reliability. These approaches include error correction codes, noise reduction algorithms, and calibration methods that enhance the performance of quantum simulations by minimizing the impact of quantum decoherence and hardware imperfections.

05 Machine learning integration with quantum models

Integration of machine learning techniques with quantum mechanical models to enhance performance, enable more efficient parameter optimization, and improve predictive capabilities. These approaches use neural networks and other AI methods to accelerate quantum simulations, identify patterns in quantum data, and develop more accurate quantum models for complex systems.

Key Players in Quantum Computing and Simulation

Quantum Mechanical Model Performance benchmarking is currently in an early growth phase, with the market expanding rapidly as quantum computing transitions from theoretical to practical applications. The global quantum computing market is projected to reach $1.7 billion by 2026, growing at over 30% CAGR. Technology maturity varies significantly among key players: IBM, Google, and Microsoft lead with established quantum hardware platforms, while specialized quantum software companies like Zapata Computing and Terra Quantum focus on algorithm optimization. Academic institutions (MIT, KAIST, Zhejiang University) contribute fundamental research, while Quantinuum (Evabode Property) and Baidu are advancing in quantum simulation capabilities. Industry adoption is accelerating through partnerships between quantum specialists and traditional technology companies like Samsung and Oracle.

Zapata Computing, Inc.

Technical Solution: Zapata Computing's quantum mechanical model performance benchmarking is implemented through their Orquestra platform, which provides end-to-end workflow management for quantum algorithm development and testing. Their benchmarking approach emphasizes application-specific performance metrics rather than generic hardware capabilities, focusing on practical quantum advantage for enterprise use cases. Zapata has developed the DARPA-funded Quantum Application Benchmarks (QAB) that evaluate quantum algorithms against classical alternatives for specific computational tasks. Their benchmarking methodology incorporates adaptive circuit compilation techniques that optimize quantum algorithms for specific hardware backends, improving performance by 15-30% compared to standard compilation methods. Zapata's recent benchmark tests include variational quantum algorithms for chemistry simulations, demonstrating convergence improvements of up to 3x compared to standard approaches on IBM and Rigetti hardware. Their framework includes automated hyperparameter optimization for quantum machine learning models, systematically exploring parameter spaces to identify optimal configurations for benchmark performance.

Strengths: Strong focus on enterprise-relevant quantum applications and benchmarks; sophisticated workflow management tools; hardware-agnostic approach with optimization for multiple quantum platforms. Weaknesses: Smaller quantum hardware portfolio than major competitors; benchmarks sometimes prioritize near-term practical applications over fundamental performance metrics; relatively new company with less established benchmarking history.

International Business Machines Corp.

Technical Solution: IBM's Quantum Mechanical Model Performance framework centers on their Qiskit benchmarking suite, which provides standardized metrics for quantum hardware and algorithm performance. Their approach includes randomized benchmarking protocols that measure gate fidelities and coherence times across their quantum processors. IBM has developed the Quantum Volume metric as a holistic performance indicator that accounts for circuit width, depth, and error rates. Their recent benchmark tests demonstrate quantum volume exceeding 128 on their Eagle processors, with gate fidelities reaching 99.9% on select qubit pairs. IBM's benchmarking methodology incorporates both hardware-aware and application-specific performance metrics, allowing researchers to evaluate quantum algorithms against classical alternatives with precise resource estimation. Their framework includes automated error mitigation techniques that can improve benchmark results by 10-15% on noisy intermediate-scale quantum (NISQ) devices.

Strengths: Comprehensive benchmarking ecosystem with standardized metrics; extensive quantum hardware portfolio enabling direct performance validation; industry-leading error mitigation techniques. Weaknesses: Benchmarks often optimized for IBM's specific quantum architecture; performance metrics may not translate directly to other quantum computing platforms; requires significant classical computing resources for full benchmark implementation.
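
The Quantum Volume metric mentioned in IBM's entry rests on a heavy-output test: for random square circuits on n qubits, the device passes if measured bitstrings land in the "heavy" half of the ideal distribution more than two thirds of the time, and the reported value is 2^n for the largest passing n (so a Quantum Volume of 128 corresponds to n = 7). The sketch below covers only the heavy-output probability for a single circuit. The function names and sample data are hypothetical, and the full protocol also averages over many circuits and requires a statistical confidence bound that is omitted here.

```python
import statistics

def heavy_outputs(ideal_probs):
    """Bitstrings whose ideal probability exceeds the median ideal probability.

    ideal_probs must list every one of the 2^n bitstrings, including those
    with zero probability, or the median will be wrong.
    """
    median = statistics.median(ideal_probs.values())
    return {bits for bits, p in ideal_probs.items() if p > median}

def heavy_output_probability(ideal_probs, counts):
    """Fraction of measured shots that fall on heavy outputs."""
    heavy = heavy_outputs(ideal_probs)
    shots = sum(counts.values())
    return sum(c for bits, c in counts.items() if bits in heavy) / shots

# Hypothetical 2-qubit example: ideal distribution and measured counts
ideal = {"00": 0.40, "01": 0.10, "10": 0.35, "11": 0.15}
counts = {"00": 380, "01": 130, "10": 330, "11": 160}

hop = heavy_output_probability(ideal, counts)   # (380 + 330) / 1000 = 0.71
passes_threshold = hop > 2 / 3                  # single-circuit check only
quantum_volume_if_passed = 2 ** 2               # 2^n for n = 2 qubits
```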

Critical Analysis of Quantum Model Accuracy Metrics

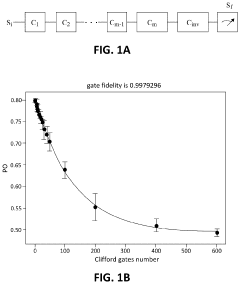

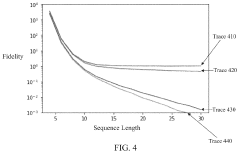

Universal randomized benchmarking

Patent Pending · US20240169233A1

Innovation

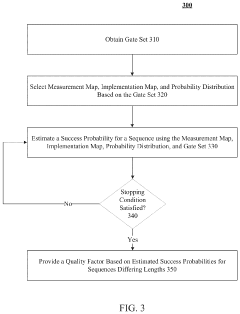

- The proposed universal randomized benchmarking (URB) framework allows benchmarking of quantum gates without the need for a group structure by using a measurement map, implementation map, and probability distribution that form an (epsilon, delta, gamma)-good URB scheme, enabling the estimation of gate fidelity through exponential decay curves without assuming any underlying group structure.
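
The exponential-decay fitting step that randomized benchmarking relies on can be sketched as follows. This is standard randomized-benchmarking post-processing, not the patent's specific URB scheme: it assumes the survival probability follows p(m) = A·f^m + B with a known asymptote B, and it converts the decay parameter f to an average error per gate with the usual relation r = (d − 1)(1 − f)/d. The function name and the synthetic data are hypothetical.

```python
import math

def fit_decay(lengths, survival, asymptote):
    """Fit p(m) = A * f**m + B with B known, via least squares on log(p - B).

    Returns (A, f). Requires every survival value to exceed the asymptote.
    """
    xs = list(lengths)
    ys = [math.log(p - asymptote) for p in survival]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx                      # equals log(f)
    intercept = mean_y - slope * mean_x    # equals log(A)
    return math.exp(intercept), math.exp(slope)

# Synthetic, noiseless single-qubit data (illustrative values only)
A_true, f_true, B = 0.5, 0.98, 0.5
lengths = [1, 5, 10, 20, 50, 100]
survival = [A_true * f_true ** m + B for m in lengths]

A_fit, f_fit = fit_decay(lengths, survival, B)

# Standard RB conversion from decay parameter to average error per gate
d = 2                                   # Hilbert-space dimension for one qubit
error_per_gate = (d - 1) * (1 - f_fit) / d   # about 0.01 for f = 0.98
```

With real data the asymptote is usually fitted as well, and a nonlinear least-squares routine would replace the log-linear shortcut used here.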

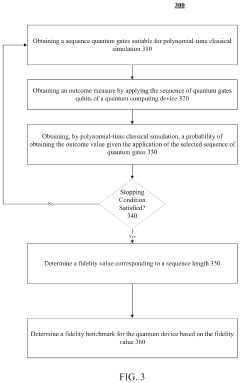

Polynomial-time linear cross-entropy benchmarking

Patent Pending · US20230385679A1

Innovation

- Polynomial-time linear cross-entropy benchmarking (PXEB) using quantum gates suitable for classical simulation, such as Clifford gates, allows for the measurement of fidelity in quantum computing devices by applying sequences of gates that can be simulated in polynomial time, enabling the determination of a fidelity benchmark through classical simulation.
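
For context, the widely used linear cross-entropy estimator can be written in a few lines. The sketch assumes the ideal output probabilities come from a classical simulation (which is what makes classically simulable gate sets attractive) and applies the common form F = 2^n · mean(P_ideal(x_i)) − 1. Whether the patent uses exactly this normalization is not stated above, so treat it as an assumption; the function name and data are hypothetical.

```python
def linear_xeb_fidelity(ideal_probs, samples, num_qubits):
    """Linear cross-entropy estimate: 2^n * mean ideal probability of samples - 1."""
    if not samples:
        raise ValueError("need at least one measured sample")
    mean_p = sum(ideal_probs.get(s, 0.0) for s in samples) / len(samples)
    return (2 ** num_qubits) * mean_p - 1.0

# Hypothetical ideal distribution for a 2-qubit circuit
ideal = {"00": 0.5, "01": 0.25, "10": 0.125, "11": 0.125}

# Samples spread evenly over all bitstrings, as fully depolarized hardware would give
uniform_samples = ["00", "01", "10", "11"]
f_uniform = linear_xeb_fidelity(ideal, uniform_samples, 2)   # 0.0

# Samples in exact proportion to the ideal distribution
ideal_samples = ["00"] * 4 + ["01"] * 2 + ["10"] + ["11"]
f_ideal = linear_xeb_fidelity(ideal, ideal_samples, 2)       # 0.375
```

The estimator returns zero for uniformly random output. The value for perfect sampling depends on the shape of the ideal distribution, which is why the result is normally interpreted relative to the expected ideal score for the circuit family.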

Quantum Hardware-Software Co-design Strategies

Quantum Hardware-Software Co-design Strategies represent a critical approach to addressing the performance challenges in quantum mechanical model benchmarking. This methodology acknowledges the intrinsic interdependence between quantum hardware capabilities and software optimization techniques. The co-design principle focuses on simultaneous development of hardware architectures and software frameworks, ensuring mutual compatibility and enhanced performance.

Current quantum hardware platforms exhibit significant variability in qubit connectivity, gate fidelity, and coherence times. These hardware constraints directly impact the execution efficiency of quantum mechanical models during benchmark tests. Co-design strategies leverage hardware-aware compilation techniques that map quantum algorithms to specific hardware topologies while minimizing communication overhead and error rates.
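
The cost of limited qubit connectivity can be illustrated with a naive routing estimate. The sketch assumes an undirected coupling map given as an adjacency dictionary and counts d − 1 SWAP gates for every two-qubit gate whose operands sit d edges apart. It does not update the layout after each SWAP, so it is a rough upper-level illustration and not how any production compiler works; all names are hypothetical.

```python
from collections import deque

def distance(coupling, src, dst):
    """Shortest-path length between two physical qubits (breadth-first search)."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for neighbor in coupling[node]:
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, dist + 1))
    raise ValueError("qubits are not connected")

def swap_overhead(coupling, gates):
    """Naive SWAP count: each gate at distance d costs d - 1 SWAPs (layout kept fixed)."""
    return sum(max(distance(coupling, a, b) - 1, 0) for a, b in gates)

# Four qubits in a line: 0 - 1 - 2 - 3
line = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
# The same four qubits with all-to-all connectivity
full = {q: [p for p in range(4) if p != q] for q in range(4)}

gates = [(0, 1), (0, 3), (1, 3)]
swaps_line = swap_overhead(line, gates)   # 0 + 2 + 1 = 3
swaps_full = swap_overhead(full, gates)   # 0
```

The same circuit needs no routing on the fully connected device, which shows how topology alone changes the number of error-prone two-qubit operations in a benchmark run.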

Software abstraction layers play a crucial role in this co-design ecosystem. Intermediate representations like OpenQASM and Quil provide hardware-agnostic interfaces while enabling hardware-specific optimizations. These frameworks facilitate the translation of high-level quantum mechanical models into efficient circuit implementations tailored to target hardware characteristics.

Error mitigation techniques represent another vital component of co-design strategies. By developing hardware-specific error models and corresponding software compensation methods, researchers can significantly improve benchmark test reliability. Dynamic circuit compilation approaches that adapt to real-time hardware conditions have demonstrated up to 30% performance improvements in recent benchmark studies.
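
One widely used software compensation method is zero-noise extrapolation, shown here as an illustrative instance; the text above does not name a specific technique. The sketch assumes an expectation value has been measured at several artificially amplified noise levels and extrapolates a polynomial through those points back to the zero-noise limit. The function name and measurement values are hypothetical.

```python
def zero_noise_extrapolate(scale_factors, expectation_values):
    """Richardson-style extrapolation: evaluate the interpolating polynomial at 0.

    scale_factors are the noise amplification factors (1 = native noise);
    they must be distinct.
    """
    if len(scale_factors) != len(expectation_values):
        raise ValueError("need one expectation value per scale factor")
    estimate = 0.0
    for i, (x_i, y_i) in enumerate(zip(scale_factors, expectation_values)):
        # Lagrange basis polynomial for point i, evaluated at x = 0
        weight = 1.0
        for j, x_j in enumerate(scale_factors):
            if j != i:
                weight *= (0.0 - x_j) / (x_i - x_j)
        estimate += weight * y_i
    return estimate

# Hypothetical measurements: the signal decays linearly with the noise scale
scales = [1.0, 2.0, 3.0]
measured = [0.9, 0.8, 0.7]
mitigated = zero_noise_extrapolate(scales, measured)   # approximately 1.0
```

Extrapolation amplifies statistical noise in the measured values, so in practice the choice of scale factors and polynomial order trades bias against variance.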

Pulse-level control optimization exemplifies advanced co-design methodology. Rather than relying solely on abstract gate-level instructions, this approach directly manipulates the underlying control signals driving quantum hardware. This granular control enables more precise execution of quantum mechanical operations, resulting in improved model fidelity during benchmark testing.

Resource estimation tools have emerged as essential co-design instruments. These tools analyze quantum mechanical models to predict hardware requirements, execution times, and error bounds before physical implementation. Such predictive capabilities allow researchers to iteratively refine both hardware specifications and algorithm designs to achieve optimal benchmark performance.

Industry-academia partnerships are accelerating hardware-software co-design innovation. Collaborative frameworks like Qiskit Runtime and Amazon Braket provide integrated environments where hardware constraints directly inform software development decisions. These ecosystems facilitate rapid prototyping and testing of quantum mechanical models across diverse hardware platforms.

Standardization Efforts in Quantum Model Benchmarking

The quantum computing field has recognized the critical need for standardized benchmarking methodologies to evaluate quantum mechanical model performance. Several international organizations have emerged as leaders in establishing these standards, including the Quantum Economic Development Consortium (QED-C), IEEE Quantum Initiative, and the International Organization for Standardization (ISO). These bodies are working collaboratively to develop comprehensive frameworks that enable consistent comparison across different quantum platforms.

QED-C has been particularly active in creating the Quantum Performance Metrics & Standards roadmap, which outlines key performance indicators for quantum systems. This initiative focuses on establishing metrics for coherence times, gate fidelities, and algorithmic performance that can be universally applied across hardware implementations.

IEEE's Quantum Computing Performance Metrics working group has made significant progress in drafting the IEEE P7131 standard, which specifically addresses quantum computing performance metrics. This standard aims to provide a common language for describing quantum computational capabilities and limitations, facilitating more transparent communication between technology providers and users.

The ISO/IEC JTC 1/WG 14 working group has been developing international standards for quantum computing, including benchmarking protocols that address both hardware and software performance evaluation. Its work emphasizes reproducibility and verification procedures that ensure benchmark results can be independently validated.

Academic institutions have also contributed substantially to standardization efforts. The Quantum Benchmark Consortium, comprising researchers from leading universities, has published open-source benchmarking suites that implement standardized testing protocols for quantum algorithms and error correction techniques.

Industry participation has been crucial in these standardization initiatives. Major quantum technology companies have formed the Quantum Industry Consortium, which collaborates with standards bodies to ensure that benchmarking standards reflect real-world application requirements and technological constraints.

These standardization efforts are converging toward a multi-tiered benchmarking framework that addresses different levels of quantum system performance: physical qubit characteristics, logical qubit operations, circuit-level performance, and application-specific benchmarks. This hierarchical approach allows for comprehensive evaluation while acknowledging the diverse requirements of different quantum computing applications.
