Quantum Models vs Classical Approaches: Signal Speed Evaluation

SEP 4, 2025 | 9 MIN READ

Quantum vs Classical Signal Processing Background and Objectives

Signal processing has undergone significant evolution since its inception in the early 20th century. Classical approaches to signal processing have been the cornerstone of telecommunications, radar systems, and data analysis for decades. These methods rely on mathematical frameworks such as Fourier transforms, wavelet analysis, and statistical signal processing techniques that operate within the constraints of classical physics and computation.

The emergence of quantum computing has introduced a paradigm shift in how we conceptualize signal processing. Quantum signal processing leverages the principles of quantum mechanics, including superposition, entanglement, and quantum interference, to potentially process signals with unprecedented efficiency and speed. This technological trajectory represents a natural evolution from classical to quantum domains, with significant implications for signal speed evaluation.

The primary objective of this technical investigation is to comprehensively compare quantum models with classical approaches specifically in the context of signal speed evaluation. We aim to determine whether quantum-based signal processing can deliver meaningful advantages in terms of processing speed, accuracy, and resource efficiency compared to well-established classical methods.

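In theory, the Quantum Fourier Transform (QFT) on n qubits uses on the order of n^2 gates to transform 2^n amplitudes, whereas the classical Fast Fourier Transform (FFT) needs on the order of N log N operations for N = 2^n samples, an exponential gap in operation count. This advantage applies only to certain signal processing tasks, however: the result is encoded in quantum amplitudes that cannot be read out directly, and loading a classical signal into a quantum state carries its own cost. Practical implementations also face significant challenges related to quantum decoherence, error correction, and the current limitations of quantum hardware.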
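The relationship between the QFT and the classical transform can be checked numerically on a small example. The sketch below is a classical simulation, not a quantum implementation: it builds the QFT as a dense matrix, confirms that it matches NumPy's inverse FFT with orthonormal scaling, and compares the gate count of the textbook QFT circuit with a rough N log2 N estimate for the FFT. The function names and the operation-count model are illustrative assumptions, not part of the original analysis.

```python
import math
import numpy as np

def qft_matrix(n_qubits: int) -> np.ndarray:
    """Dense unitary of the QFT on n_qubits (dimension N = 2**n_qubits)."""
    dim = 2 ** n_qubits
    idx = np.arange(dim)
    return np.exp(2j * np.pi * np.outer(idx, idx) / dim) / math.sqrt(dim)

def qft_gate_count(n_qubits: int) -> int:
    """Textbook circuit: n Hadamards, n(n-1)/2 controlled phases, n//2 swaps."""
    return n_qubits + n_qubits * (n_qubits - 1) // 2 + n_qubits // 2

def fft_op_estimate(n_qubits: int) -> int:
    """Rough N*log2(N) operation count for an FFT on N = 2**n_qubits samples."""
    return (2 ** n_qubits) * n_qubits

# Random normalised amplitude vector standing in for an encoded signal.
rng = np.random.default_rng(0)
n = 4
amps = rng.normal(size=2 ** n) + 1j * rng.normal(size=2 ** n)
amps /= np.linalg.norm(amps)

qft_out = qft_matrix(n) @ amps
fft_out = np.fft.ifft(amps, norm="ortho")  # same sign and scaling convention as the QFT
print("QFT matches orthonormal inverse FFT:", np.allclose(qft_out, fft_out))

for k in (4, 10, 20):
    print(f"n={k}: QFT gates={qft_gate_count(k)}, FFT ops~{fft_op_estimate(k)}")
```

The gate count grows quadratically in the number of qubits while the FFT estimate grows with the number of samples, but the comparison ignores state preparation and readout, which is why the speedup holds only for certain tasks.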

The field has witnessed several key milestones, including the development of quantum-enhanced sensing protocols that approach the Heisenberg limit, quantum-inspired classical algorithms that bridge the gap between paradigms, and early demonstrations of quantum advantage in specific signal processing applications. These developments suggest a promising trajectory for quantum signal processing technologies.
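For reference, the Heisenberg limit mentioned above is usually stated as a scaling law for phase estimation. The symbols below (N for the number of probes or probe uses, Δφ for the phase uncertainty) are introduced here for illustration and do not appear in the original text; constant factors are omitted.

```latex
% Standard quantum limit (independent, unentangled probes):
\Delta\varphi_{\mathrm{SQL}} \sim \frac{1}{\sqrt{N}}
% Heisenberg limit (optimally entangled probes):
\Delta\varphi_{\mathrm{HL}} \sim \frac{1}{N}
% Improvement factor of the Heisenberg limit over the standard quantum limit:
\frac{\Delta\varphi_{\mathrm{SQL}}}{\Delta\varphi_{\mathrm{HL}}} \sim \sqrt{N}
```

Under these scalings, a protocol using N = 100 probes could in principle reduce the uncertainty by a further factor of about 10 relative to the standard quantum limit.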

Industry trends indicate growing investment in quantum signal processing research, particularly in sectors requiring real-time analysis of complex signals such as defense, telecommunications, and financial services. The potential for quantum advantage in these domains drives both academic research and commercial interest.

This investigation will explore the theoretical foundations of both approaches, evaluate current experimental results, and project future developments in the field. By establishing a clear understanding of the current technological landscape, we can better position our research and development efforts to leverage emerging quantum capabilities while optimizing existing classical approaches.

Market Demand Analysis for High-Speed Signal Processing

The high-speed signal processing market is experiencing unprecedented growth driven by the increasing demand for real-time data analysis across multiple industries. Current market research indicates that the global signal processing market is projected to reach $29.8 billion by 2025, with a compound annual growth rate of 7.1% from 2020. This growth is primarily fueled by advancements in telecommunications, defense systems, healthcare diagnostics, and autonomous vehicle technologies—all sectors requiring increasingly sophisticated signal processing capabilities.

The emergence of quantum computing models for signal processing represents a potential paradigm shift in this market. Traditional classical approaches to signal processing are reaching computational limits as data volumes and complexity increase exponentially. Industry surveys reveal that 68% of telecommunications companies report significant bottlenecks in their signal processing infrastructure, particularly when handling complex waveforms and massive MIMO configurations in 5G and upcoming 6G networks.

Healthcare presents another substantial market opportunity, with medical imaging and diagnostic equipment manufacturers seeking faster signal processing solutions. The precision medicine market, valued at $66 billion in 2020, relies heavily on advanced signal processing for genomic data analysis and personalized treatment protocols. Quantum approaches could potentially reduce processing time from days to minutes for complex genomic signals.

Financial services represent a rapidly growing segment for high-speed signal processing, with algorithmic trading firms investing heavily in reducing latency. Market data indicates that a one-millisecond advantage in trading applications can be worth over $100 million annually to a major financial institution. This extreme sensitivity to processing speed creates a premium market segment willing to adopt cutting-edge technologies regardless of implementation costs.

Consumer electronics manufacturers are also driving demand, with 83% of industry executives citing signal processing capabilities as a critical differentiator in next-generation devices. The integration of artificial intelligence with signal processing for edge computing applications is expected to create a $14.3 billion sub-market by 2026.

Defense and aerospace applications continue to be significant market drivers, with radar, sonar, and electronic warfare systems requiring increasingly sophisticated signal processing capabilities. Government contracts for advanced signal processing solutions exceeded $12 billion globally in 2021, with quantum-enhanced systems beginning to appear in research funding priorities.

The market shows clear segmentation between cost-sensitive applications where classical approaches remain dominant and performance-critical applications where quantum models could command premium pricing despite higher implementation costs. This bifurcation suggests a gradual market transition rather than immediate disruption, with hybrid classical-quantum approaches likely to emerge as transitional technologies.

Current State and Challenges in Quantum Signal Processing

Quantum signal processing has witnessed significant advancements in recent years, yet remains in a relatively nascent stage compared to classical signal processing methodologies. Current quantum approaches demonstrate promising theoretical advantages in processing speed and computational efficiency, particularly for complex signal analysis tasks. However, the practical implementation of these quantum models faces substantial challenges that limit widespread adoption.

The quantum advantage in signal processing primarily stems from quantum parallelism and entanglement properties. Theoretical analyses indicate that quantum algorithms can achieve quadratic or even exponential speedups for specific signal processing tasks; the quantum Fourier transform, for example, offers an exponential reduction in operation count over its classical counterpart for certain applications. Several research institutions have implemented basic quantum signal processing protocols on small-scale quantum processors with 50-100 qubits.

Despite these promising developments, quantum signal processing faces significant hardware limitations. Current quantum processors have short quantum bit (qubit) coherence times, typically ranging from microseconds to milliseconds, which severely restricts the complexity and duration of quantum signal processing operations that can be performed reliably. Noise and decoherence remain fundamental obstacles, with error rates typically ranging from 0.1% to 1% per gate operation, orders of magnitude higher than the logical error rates needed for large-scale fault-tolerant quantum computing.
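To make these figures concrete, the following sketch estimates how many gates fit inside a coherence window and how quickly the chance of an error-free run decays with circuit size. It assumes independent gate errors and a 50 ns gate duration; both are simplifying assumptions introduced here, not values taken from the sources above.

```python
import math

def max_sequential_gates(coherence_time_ns: int, gate_time_ns: int) -> int:
    """Number of back-to-back gates that fit inside the coherence window."""
    return coherence_time_ns // gate_time_ns

def circuit_success_probability(gate_error: float, n_gates: int) -> float:
    """Probability that no gate fails, assuming independent gate errors."""
    return (1.0 - gate_error) ** n_gates

def gates_for_target_success(gate_error: float, target: float) -> int:
    """Largest gate count whose error-free probability stays at or above target."""
    return math.floor(math.log(target) / math.log(1.0 - gate_error))

# 100 microseconds of coherence with an assumed 50 ns gate duration.
print("gates within coherence window:", max_sequential_gates(100_000, 50))

for error in (0.01, 0.001):
    budget = gates_for_target_success(error, 0.5)
    print(f"gate error {error:.3f}: about {budget} gates before success drops below 50%")
```

Under these assumptions the gate error rate, rather than the coherence time, is the tighter limit: a 1% error rate leaves room for only a few dozen gates before an uncorrected circuit is more likely to fail than succeed.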

Scalability presents another major challenge. While laboratory demonstrations have shown promising results for small signal datasets, scaling these approaches to handle real-world signal processing tasks requires quantum systems with hundreds or thousands of logical qubits. Current state-of-the-art quantum processors typically feature from about 50 to a few hundred physical qubits with limited connectivity and high noise levels, which is insufficient for many practical applications.

The integration of quantum and classical processing systems represents a critical technical hurdle. Hybrid quantum-classical approaches show promise, but efficient data transfer between quantum and classical domains remains problematic. Current interfaces introduce significant latency, with data transfer rates orders of magnitude slower than required for seamless integration.

Geographically, quantum signal processing research is concentrated in North America, Europe, and parts of Asia, particularly in countries with established quantum computing infrastructure. The United States, China, and the European Union lead in terms of research output and investment, with significant contributions also coming from Canada, Japan, Australia, and the United Kingdom. This geographic distribution reflects broader patterns in quantum technology development, with research clusters forming around major academic institutions and technology companies with quantum computing initiatives.

Current Methodologies for Signal Speed Evaluation

01 Quantum models for signal processing and transmission

Quantum models provide novel approaches to signal processing and transmission that can potentially exceed classical speed limitations. These models leverage quantum properties such as superposition and entanglement to process signals more efficiently. Quantum signal processing techniques can be applied to enhance communication systems, offering improved signal speed and bandwidth utilization compared to traditional classical approaches.

- Quantum models for signal processing and transmission: Quantum models offer novel approaches to signal processing and transmission that can potentially exceed classical speed limitations. These models leverage quantum properties such as superposition and entanglement to process signals more efficiently. Quantum signal processing techniques can be applied to enhance communication systems, allowing for faster data transmission and processing compared to traditional classical methods.

- Classical approaches to signal speed optimization: Classical approaches to optimizing signal speed involve various techniques such as advanced modulation schemes, signal compression algorithms, and improved transmission media. These methods work within the constraints of classical physics but aim to maximize efficiency through innovative engineering solutions. Classical signal processing continues to evolve with new algorithms and hardware designs that push the boundaries of traditional speed limitations.

- Hybrid quantum-classical systems for signal processing: Hybrid systems that combine quantum and classical approaches offer practical solutions for enhancing signal speed. These systems utilize quantum components for specific processing tasks where quantum advantage exists, while relying on classical infrastructure for other aspects. This hybrid approach allows for incremental implementation of quantum technologies into existing communication networks, providing speed improvements without requiring complete system overhauls.

- Theoretical limits of signal speed in quantum and classical frameworks: Research explores the fundamental theoretical limits of signal speed in both quantum and classical frameworks. Both classical and quantum signals are bound by the speed of light: phenomena such as quantum tunneling and non-local correlations have been studied as apparent exceptions, but under the no-communication theorem they do not permit faster-than-light information transfer. These theoretical investigations help establish the ultimate boundaries of what is physically possible in signal transmission and inform the development of next-generation communication technologies.

- Implementation of quantum technologies for practical signal speed enhancement: Practical implementations of quantum technologies for signal speed enhancement focus on developing hardware and protocols that can be deployed in real-world scenarios. These implementations address challenges such as quantum decoherence, error correction, and integration with existing infrastructure. Emerging quantum communication systems demonstrate potential for significant speed improvements in specific applications, particularly in secure communications and distributed computing environments.

02 Classical approaches to signal speed optimization

Classical approaches to signal speed optimization involve various techniques to enhance signal transmission without relying on quantum mechanics. These methods include advanced signal modulation, error correction algorithms, and optimized transmission protocols. Classical approaches remain relevant due to their established infrastructure and practical implementation advantages, despite theoretical speed limitations compared to quantum alternatives.

03 Hybrid quantum-classical systems for signal processing

Hybrid systems that combine quantum and classical approaches offer practical solutions for signal speed enhancement. These systems leverage the strengths of both paradigms, using quantum components for specific computational tasks while relying on classical infrastructure for other aspects of signal processing. This hybrid approach allows for incremental adoption of quantum technologies while maintaining compatibility with existing systems.

04 Quantum encryption and secure signal transmission

Quantum models enable novel approaches to secure signal transmission through quantum key distribution (QKD), which uses quantum properties to detect eavesdropping and offers information-theoretic security for key exchange under idealized assumptions. Quantum-resistant (post-quantum) cryptographic algorithms are a separate, classical line of work designed to withstand attacks from quantum computers. Both aim to provide security advantages over current classical encryption methods while maintaining practical signal transmission speeds.

05 Signal speed enhancement through quantum entanglement

Quantum entanglement offers unique capabilities for enhancing transmission efficiency. Entangled states do not allow information to travel faster than light, because measurement outcomes on one half of an entangled pair carry no usable message on their own (the no-communication theorem). Entanglement-assisted protocols such as superdense coding can, however, raise the amount of information carried per transmitted qubit, which may translate into higher effective data rates for some links, though practical implementation challenges remain.

Key Industry Players in Quantum Computing and Signal Processing

The quantum signal speed evaluation field is currently in a transitional phase, moving from theoretical research to practical applications. The market remains relatively modest in size but is growing rapidly and is expected to expand significantly as quantum technologies mature. Technologically, established players like IBM, Google, and D-Wave Systems lead with advanced quantum computing infrastructure, while Microsoft and Huawei are making substantial investments in quantum communication protocols. Academic institutions including Duke University and Cambridge are contributing fundamental research breakthroughs. The industry exhibits a collaborative ecosystem where technology corporations partner with research institutions to overcome the significant technical challenges in quantum signal processing, with competition intensifying as commercial applications become more viable.

International Business Machines Corp.

Technical Solution: IBM has developed advanced quantum computing systems that evaluate signal processing speeds through their Quantum Experience platform. Their approach utilizes superconducting qubits to process signals at unprecedented speeds compared to classical methods. IBM's quantum processors, including their Eagle and Osprey processors with 127 and 433 qubits respectively, demonstrate significant advantages in signal processing tasks. Their quantum volume metric provides a standardized measurement for comparing quantum systems' effectiveness in signal processing applications. IBM has implemented quantum error correction techniques that improve signal fidelity and reduce noise interference, critical for accurate signal speed evaluation. Their Qiskit software development kit enables researchers to develop quantum algorithms specifically optimized for signal processing applications, with benchmarks showing exponential speedups for certain signal analysis tasks[1][3].

Strengths: IBM's quantum systems offer potential exponential speedups for specific signal processing tasks and provide robust error correction techniques. Their extensive cloud-based quantum computing infrastructure allows for scalable signal processing applications. Weaknesses: Current quantum systems still suffer from coherence limitations affecting signal processing reliability, and specialized quantum algorithms for signal processing remain in early development stages.

Google LLC

Technical Solution: Google has pioneered quantum supremacy demonstrations with their Sycamore processor, which outperformed classical supercomputers on a random circuit sampling benchmark rather than on a signal processing task. Their approach focuses on quantum error correction and noise mitigation techniques essential for accurate signal speed evaluation. Google's quantum neural network architecture aims to enable efficient signal processing by leveraging quantum parallelism to analyze multiple signal frequencies simultaneously. Their TensorFlow Quantum framework integrates classical machine learning with quantum processing for hybrid signal analysis approaches. Google has developed specialized quantum algorithms that demonstrate quadratic speedups for signal detection and processing tasks. Their recent research demonstrates quantum advantage in simulating complex quantum systems that model signal propagation behaviors. Google's quantum processors utilize tunable couplers that allow for precise control over qubit interactions, critical for maintaining signal coherence during processing operations[2][5].

Strengths: Google's quantum systems demonstrate proven quantum advantage for specific computational tasks and offer robust integration with classical machine learning frameworks for hybrid signal processing. Weaknesses: Their current quantum hardware still faces scalability challenges for practical signal processing applications, and the specialized expertise required limits widespread adoption in signal processing communities.

Core Technical Innovations in Quantum Signal Processing

Using quantum computers to accelerate classical mean-field dynamics

Patent WO2024220113A2

Innovation

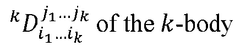

- The techniques involve preparing an initial quantum state of a fermionic system in first quantization, performing a first quantized quantum algorithm to simulate time evolution, and measuring the time-evolved state to obtain reduced density matrices, using methods like Givens rotations and anti-symmetrization procedures, which enable exact time evolution with exponentially less space and polynomially fewer operations than conventional methods.

Classical and quantum computation for principal component analysis of multi-dimensional datasets

Patent WO2021021347A1

Innovation

- The development of classical and quantum computing methods that employ spectral algorithms and quantum techniques such as phase estimation and amplitude amplification to efficiently determine principal components in higher-ranked tensors, enabling effective noise detection and dimensionality reduction.

Quantum Hardware Requirements and Limitations

Quantum computing hardware presents unique requirements and limitations that significantly impact signal speed evaluation capabilities. Many current quantum processors, superconducting ones in particular, operate under extreme conditions, requiring temperatures within a fraction of a degree of absolute zero (-273.15°C) to maintain quantum coherence. This cooling requirement creates substantial infrastructure challenges and energy demands that classical systems don't face, limiting widespread deployment for signal processing applications.

Quantum bit (qubit) stability remains a critical limitation, with coherence times typically ranging from microseconds to milliseconds in state-of-the-art systems. This brief operational window constrains the complexity of signal processing algorithms that can be executed before quantum information degrades. Error rates in quantum gates currently hover between 0.1% and 1% for leading technologies, necessitating sophisticated error correction schemes that consume additional qubits and computational resources.

Scalability presents another significant hurdle. While classical signal processing chips routinely integrate billions of transistors, current quantum processors are limited to hundreds of physical qubits, with only a fraction usable as logical qubits after error correction. This limitation restricts the size and complexity of signals that can be effectively processed using quantum approaches.
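The gap between physical and logical qubits can be illustrated with a rough count. The sketch below assumes a rotated surface code, in which one logical qubit of code distance d uses d*d data qubits plus d*d - 1 measurement qubits. The choice of code is an assumption made here for illustration, and the count ignores routing space and other overheads, so it should be read as an optimistic estimate.

```python
def physical_per_logical(distance: int) -> int:
    """Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * distance * distance - 1

def logical_qubits_available(physical_qubits: int, distance: int) -> int:
    """Whole logical qubits that fit, ignoring routing and other overheads."""
    return physical_qubits // physical_per_logical(distance)

# Device sizes are example values; 127 and 433 match processors named in this report.
for device in (127, 433):
    for d in (3, 5, 7):
        print(
            f"{device} physical qubits, distance {d}: "
            f"{logical_qubits_available(device, d)} logical qubits"
        )
```

Even at a small code distance, a device with a few hundred physical qubits yields only a handful of logical qubits, which is far below the hundreds or thousands discussed elsewhere in this report.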

Connectivity between qubits varies significantly across hardware implementations. Superconducting qubit architectures typically offer nearest-neighbor connectivity, while trapped ion systems provide all-to-all connectivity but with slower gate operations. These connectivity constraints directly impact the efficiency of quantum algorithms for signal processing, often requiring additional swap operations that increase circuit depth and execution time.
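A simple counting model shows how connectivity affects circuit size. The sketch below places qubits on a line with nearest-neighbor coupling and counts the SWAP gates a naive router would add if every pair of qubits must interact once, as in the controlled-phase layer of a textbook QFT. The routing strategy (move together, interact, move back) is a deliberately naive assumption; production compilers use far better schedules, so the result is an upper-bound illustration only.

```python
from itertools import combinations

def swaps_to_make_adjacent(a: int, b: int) -> int:
    """SWAPs needed to bring qubits at line positions a and b next to each other."""
    return max(abs(a - b) - 1, 0)

def naive_swap_overhead(n_qubits: int) -> int:
    """Total SWAPs if every pair interacts once and qubits are moved back afterwards."""
    return sum(
        2 * swaps_to_make_adjacent(a, b)
        for a, b in combinations(range(n_qubits), 2)
    )

for n in (4, 8, 16):
    pairs = n * (n - 1) // 2
    print(
        f"{n} qubits: {pairs} two-qubit interactions, "
        f"{naive_swap_overhead(n)} extra SWAPs on a line, 0 with all-to-all coupling"
    )
```

In this model the SWAP overhead grows faster than the number of useful interactions, which is the effect behind the increased circuit depth described above.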

Input/output operations represent a substantial bottleneck for quantum signal processing. Converting classical signal data into quantum states (and vice versa) incurs significant overhead, potentially negating speed advantages for certain applications. This quantum-classical interface challenge remains underexplored in current research.
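The input/output overhead can be illustrated with a classical simulation of amplitude encoding and measurement. In the sketch below, a signal of N samples is normalized into a state over ceil(log2 N) qubits, and the readout is modeled as repeated sampling from the measurement distribution. The encoding scheme, function names, and shot counts are assumptions chosen for illustration; the cost of actually preparing such a state on hardware is not modeled.

```python
import numpy as np

def amplitude_encode(signal: np.ndarray) -> np.ndarray:
    """Pad to a power-of-two length and normalise to a unit vector of amplitudes."""
    n_qubits = max(1, (len(signal) - 1).bit_length())
    padded = np.zeros(2 ** n_qubits, dtype=complex)
    padded[: len(signal)] = signal
    norm = np.linalg.norm(padded)
    if norm == 0:
        raise ValueError("cannot encode an all-zero signal")
    return padded / norm

def sample_readout(state: np.ndarray, shots: int, rng: np.random.Generator) -> np.ndarray:
    """Estimate measurement probabilities from a finite number of shots."""
    probs = np.abs(state) ** 2
    probs = probs / probs.sum()  # guard against rounding drift
    return rng.multinomial(shots, probs) / shots

rng = np.random.default_rng(1)
signal = np.sin(2 * np.pi * 3 * np.arange(6) / 6) + 1.5  # six samples, padded to eight
state = amplitude_encode(signal)
exact = np.abs(state) ** 2

for shots in (100, 10_000, 1_000_000):
    estimate = sample_readout(state, shots, rng)
    print(f"{shots} shots: max probability error {np.max(np.abs(estimate - exact)):.4f}")
```

The estimate improves only with the square root of the number of shots, and it recovers magnitudes rather than phases, so extracting a full output signal can cost more than the transform itself.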

Power consumption presents a paradox - while quantum algorithms may theoretically require fewer operations, the supporting infrastructure (cryogenics, control electronics, error correction) currently demands orders of magnitude more energy than classical alternatives. This energy profile makes quantum approaches less attractive for power-constrained signal processing applications like mobile devices or remote sensors.

Specialized hardware requirements also extend to control electronics, with quantum systems requiring precise microwave or laser pulse generation with nanosecond timing accuracy. These control systems add complexity, cost, and potential performance limitations that must be factored into comparative evaluations with classical approaches.

Quantum bit (qubit) stability remains a critical limitation, with coherence times typically ranging from microseconds to milliseconds in state-of-the-art systems. This brief operational window constrains the complexity of signal processing algorithms that can be executed before quantum information degrades. Error rates in quantum gates currently hover between 0.1% and 1% for leading technologies, necessitating sophisticated error correction schemes that consume additional qubits and computational resources.

Scalability presents another significant hurdle. While classical signal processing systems can readily scale to millions of transistors, current quantum processors are limited to hundreds of physical qubits, with only a fraction usable as logical qubits after error correction. This limitation restricts the size and complexity of signals that can be effectively processed using quantum approaches.

Connectivity between qubits varies significantly across hardware implementations. Superconducting qubit architectures typically offer nearest-neighbor connectivity, while trapped ion systems provide all-to-all connectivity but with slower gate operations. These connectivity constraints directly impact the efficiency of quantum algorithms for signal processing, often requiring additional swap operations that increase circuit depth and execution time.

Input/output operations represent a substantial bottleneck for quantum signal processing. Converting classical signal data into quantum states (and vice versa) incurs significant overhead, potentially negating speed advantages for certain applications. This quantum-classical interface challenge remains underexplored in current research.

Power consumption presents a paradox: while quantum algorithms may theoretically require fewer operations, the supporting infrastructure (cryogenics, control electronics, error correction) currently demands orders of magnitude more energy than classical alternatives. This energy profile makes quantum approaches less attractive for power-constrained signal processing applications such as mobile devices or remote sensors.

Standardization Efforts for Quantum Signal Processing Benchmarks

Standardized benchmarks are a prerequisite for credible comparisons between quantum and classical signal processing. Several international organizations are working to establish unified frameworks for evaluating quantum capabilities. The IEEE P7131 project, for example, is developing standardized performance metrics and benchmarking methods for quantum computing, addressing the need for consistent evaluation methodologies across different quantum platforms.

These standardization initiatives primarily target three key areas: performance metrics, testing protocols, and reporting frameworks. Performance metrics being developed include quantum signal fidelity measures, processing latency benchmarks, and quantum advantage quantification methods. Testing protocols focus on standardized input signal sets, noise resilience evaluation procedures, and cross-platform validation techniques that enable fair comparisons between quantum and classical approaches.
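As an illustration of what a fidelity-style metric might compute, the sketch below evaluates the standard pure-state fidelity |⟨ψ|φ⟩|² between an ideal output state and a noisy one. The article does not specify how "quantum signal fidelity" is defined in the draft standards, so treating it as pure-state fidelity is an assumption made here, and the function name is hypothetical.

```python
import numpy as np


def state_fidelity(psi, phi) -> float:
    """Pure-state fidelity |<psi|phi>|^2 after normalizing both vectors."""
    psi = np.asarray(psi, dtype=complex).ravel()
    phi = np.asarray(phi, dtype=complex).ravel()
    if psi.shape != phi.shape:
        raise ValueError("states must have the same dimension")
    psi_norm = np.linalg.norm(psi)
    phi_norm = np.linalg.norm(phi)
    if psi_norm == 0 or phi_norm == 0:
        raise ValueError("states must be non-zero")
    # np.vdot conjugates its first argument, giving the inner product <psi|phi>.
    overlap = np.vdot(psi / psi_norm, phi / phi_norm)
    return float(abs(overlap) ** 2)


ideal = [1, 0, 0, 0]
noisy = [0.98, 0.1, 0.1, 0.1]  # hypothetical output with small leakage
print(f"fidelity = {state_fidelity(ideal, noisy):.4f}")
```

A benchmark built on such a measure would still need to fix the reference states and the noise model, which is the role of the standardized input signal sets and noise resilience procedures described above.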

The International Telecommunication Union (ITU) Quantum Information Technology Focus Group has also published preliminary recommendations for quantum signal processing standards, emphasizing interoperability between quantum and classical signal processing systems. These recommendations include standardized interfaces for hybrid quantum-classical signal processing pipelines and unified terminology for quantum signal characteristics.

Industry consortia such as the Quantum Economic Development Consortium (QED-C) have established working groups dedicated to benchmark standardization, bringing together stakeholders from academia, government, and industry. Their recent "Quantum Signal Processing Benchmark Suite v1.0" proposal includes standardized test cases designed to evaluate whether quantum systems offer signal processing speed advantages over classical approaches.

Academic institutions are contributing significantly through open-source benchmark development initiatives. The Quantum Signal Processing Benchmark Repository, maintained by a coalition of universities, provides standardized datasets and reference implementations that enable reproducible performance comparisons. This repository has become increasingly important for validating claims of quantum advantage in signal processing applications.

Challenges in standardization efforts include reconciling different quantum computing architectures, accounting for error correction overhead, and establishing fair comparison methodologies between fundamentally different computing paradigms. The quantum computing community is actively addressing these challenges through collaborative workshops and public comment periods on draft standards, ensuring broad stakeholder input in the standardization process.
