Quantum Models vs. Simulations: Which To Choose For Scalability

SEP 4, 2025 · 9 MIN READ

Quantum Computing Evolution and Objectives

Quantum computing has evolved significantly since its theoretical conception in the early 1980s by Richard Feynman and others, who envisioned machines that could leverage quantum mechanical phenomena to perform computations. Initial development focused on theoretical frameworks, with the first quantum algorithms, such as Deutsch's algorithm, emerging in the mid-1980s. By the mid-1990s, Peter Shor's factoring algorithm and Lov Grover's search algorithm had demonstrated the potential quantum advantage over classical computing methods, catalyzing increased research interest.

The early 2000s marked the transition from purely theoretical work to experimental implementations, with rudimentary quantum bits (qubits) being realized in various physical systems including superconducting circuits, trapped ions, and photonic systems. The decade from 2010 to 2020 witnessed significant advancements in qubit coherence times, gate fidelities, and the scaling of quantum processors, culminating in Google's 2019 claim of quantum supremacy using a 53-qubit processor.

Currently, quantum computing exists in what is often termed the Noisy Intermediate-Scale Quantum (NISQ) era, characterized by systems with dozens to hundreds of qubits that lack full error correction. These systems face significant challenges in maintaining quantum coherence and minimizing gate errors, limiting their practical applications.

The primary objective in quantum computing development is achieving fault-tolerant quantum computation, which requires error rates below certain thresholds and sufficient qubit counts to implement error correction codes. This goal necessitates improvements in both hardware quality and algorithmic approaches to error mitigation.

When considering quantum models versus simulations for scalability, the technical objectives diverge. Quantum models aim to create abstract representations of quantum systems that can be efficiently implemented on available quantum hardware, balancing expressivity with the constraints of current technology. These models must account for noise characteristics, connectivity limitations, and available gate sets.

Quantum simulations, conversely, focus on using classical computing resources to emulate quantum systems, with objectives centered on maximizing the size and complexity of quantum circuits that can be reliably simulated. This approach serves both as a benchmarking tool for quantum hardware and as a development platform for quantum algorithms when hardware is unavailable or insufficient.
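To make the idea of classical emulation concrete, the following is a minimal sketch of a state-vector simulator written with NumPy. It is an illustration only, not the method of any particular vendor: the function names (`apply_single_qubit_gate`, `apply_cnot`, `bell_state_probabilities`) are hypothetical, and the qubit ordering (qubit 0 as the most significant bit) is an assumption made for this example.

```python
import numpy as np

# Hadamard gate as a 2x2 unitary matrix.
HADAMARD = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)


def apply_single_qubit_gate(state, gate, target, num_qubits):
    """Apply a 2x2 gate to qubit `target` of a 2**num_qubits state vector."""
    psi = state.reshape([2] * num_qubits)
    # Contract the gate's input index with the target qubit's axis.
    psi = np.tensordot(gate, psi, axes=([1], [target]))
    # tensordot puts the gate's output index first; move it back in place.
    psi = np.moveaxis(psi, 0, target)
    return psi.reshape(-1)


def apply_cnot(state, control, target, num_qubits):
    """Apply CNOT: flip `target` wherever `control` is in state 1."""
    psi = state.reshape([2] * num_qubits).copy()
    index = [slice(None)] * num_qubits
    index[control] = 1
    # Fixing the control axis removes it, shifting later axes down by one.
    target_axis = target if target < control else target - 1
    psi[tuple(index)] = np.flip(psi[tuple(index)], axis=target_axis).copy()
    return psi.reshape(-1)


def bell_state_probabilities():
    """Prepare (|00> + |11>)/sqrt(2) and return measurement probabilities."""
    n = 2
    state = np.zeros(2 ** n, dtype=complex)
    state[0] = 1.0  # start in |00>
    state = apply_single_qubit_gate(state, HADAMARD, 0, n)
    state = apply_cnot(state, 0, 1, n)
    return np.abs(state) ** 2


if __name__ == "__main__":
    print(bell_state_probabilities())
```

The state vector holds one complex amplitude per basis state, so its length is 2 to the power of the qubit count. That is why this style of simulation is convenient for small circuits and becomes impractical for large ones.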

The evolution trajectory suggests that hybrid approaches, combining quantum models running on actual quantum processors with classical simulations, will dominate the near-term landscape as researchers work toward quantum advantage in specific application domains.

Market Analysis for Quantum Computing Solutions

The quantum computing market is experiencing unprecedented growth, with projections indicating a market value reaching $1.7 billion by 2026, growing at a CAGR of approximately 30%. This expansion is driven by increasing investments from both private and public sectors, particularly in North America, Europe, and Asia-Pacific regions. The market landscape reveals a clear bifurcation between quantum models and quantum simulations, each addressing different customer needs and use cases.

Quantum models, which involve developing mathematical frameworks to represent quantum systems, are gaining traction in financial services, pharmaceuticals, and materials science. Financial institutions are particularly interested in quantum models for portfolio optimization and risk assessment, with major banks allocating significant R&D budgets to explore these applications. The pharmaceutical sector shows growing demand for quantum modeling solutions that can accelerate drug discovery processes, potentially reducing development timelines by 30-40%.

Quantum simulations, conversely, are seeing robust demand in academic research, defense, and advanced manufacturing sectors. These simulations, which replicate quantum behavior on classical systems, offer a more accessible entry point for organizations without access to quantum hardware. The defense sector has emerged as a significant customer, with government contracts for quantum simulation technologies increasing by 45% year-over-year.

Customer segmentation analysis reveals three primary market segments: early adopters (primarily research institutions and tech giants), pragmatic implementers (financial services and pharmaceutical companies), and exploratory users (manufacturing and logistics companies). Early adopters typically have higher tolerance for technological immaturity and are willing to invest in both models and simulations, while pragmatic implementers prefer more established quantum simulation approaches with demonstrable ROI.

Pricing models in the quantum computing market are evolving rapidly. Quantum simulation solutions typically follow SaaS or subscription-based models, with annual contracts ranging from $100,000 to $2 million depending on complexity and scale. Quantum modeling solutions, being more specialized, often involve custom pricing structures with significant professional services components.

Distribution channels are primarily direct sales for enterprise solutions, with cloud service providers emerging as important intermediaries offering quantum computing as a service. IBM Quantum, Amazon Braket, and Microsoft Azure Quantum have established themselves as leading platforms for delivering both quantum models and simulations to end users.

Customer feedback indicates that scalability remains a primary concern, with 67% of enterprise users citing it as a critical factor in their purchasing decisions. Organizations are increasingly seeking solutions that can scale with their growing computational needs while providing clear migration paths from classical to quantum paradigms.

Current Quantum Models and Simulation Limitations

Current quantum computing models and simulation approaches face significant limitations that hinder their scalability and practical application. Classical simulations of quantum systems become exponentially more complex as the number of qubits increases, with each additional qubit doubling the computational resources required. Even the most powerful supercomputers today can only simulate quantum systems with approximately 50-60 qubits before encountering insurmountable computational barriers.
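The doubling described above can be checked with simple arithmetic. The sketch below assumes a full state-vector simulation that stores one double-precision complex amplitude (16 bytes) per basis state; other simulation methods, such as tensor networks, have different costs. The function names are hypothetical.

```python
def statevector_bytes(num_qubits, bytes_per_amplitude=16):
    """Memory needed to store all 2**num_qubits complex amplitudes."""
    return (2 ** num_qubits) * bytes_per_amplitude


def format_bytes(num_bytes):
    """Render a byte count using binary units (KiB, MiB, ...)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(num_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.0f} {unit}"
        value /= 1024


if __name__ == "__main__":
    for n in (20, 30, 40, 50):
        print(n, "qubits:", format_bytes(statevector_bytes(n)))
```

Under these assumptions, 30 qubits need 16 GiB and 50 qubits need 16 PiB, which is why exact simulation stalls around the 50-qubit mark.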

Physical quantum computers, while theoretically more scalable, face their own set of challenges. Current quantum hardware suffers from high error rates due to quantum decoherence, where qubits lose their quantum properties through interaction with the environment. This necessitates complex error correction schemes that themselves require additional qubits, so that solving larger problems requires many more physical qubits than logical qubits. The overhead is a large multiplicative factor rather than an exponential one, but it remains far beyond what current devices provide.

Gate-based quantum computing models, such as those employed by IBM and Google, struggle with maintaining coherence times long enough to execute complex algorithms. While recent advances have extended coherence times to milliseconds in some systems, this remains insufficient for many practical applications that would require seconds or minutes of stable operation.

Quantum annealing approaches, championed by D-Wave Systems, offer better scalability in terms of qubit count but are limited to specific optimization problems and cannot perform universal quantum computation. Their connectivity constraints between qubits further limit the complexity of problems they can effectively address.

Hybrid quantum-classical approaches attempt to mitigate these limitations by delegating certain computational tasks to classical computers while leveraging quantum processors for specific subroutines. However, these approaches still face fundamental interface challenges and are constrained by the limitations of their quantum components.
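The division of labor in a hybrid loop can be sketched with a toy variational example. Here the function `energy` stands in for the quantum processor: it returns the expectation value of Z for the single-qubit state Ry(theta)|0>, computed classically for illustration. The classical side estimates the gradient with the parameter-shift rule and runs gradient descent. All names, the starting angle, and the learning rate are assumptions chosen for this sketch.

```python
import math


def energy(theta):
    """Stand-in for a quantum evaluation: <Z> for the state Ry(theta)|0>."""
    amp0 = math.cos(theta / 2)  # amplitude of |0>
    amp1 = math.sin(theta / 2)  # amplitude of |1>
    return amp0 * amp0 - amp1 * amp1


def parameter_shift_gradient(theta):
    """Gradient from two shifted evaluations, as used for rotation gates."""
    shift = math.pi / 2
    return 0.5 * (energy(theta + shift) - energy(theta - shift))


def minimize_energy(theta=0.3, learning_rate=0.4, steps=100):
    """Classical outer loop: plain gradient descent on the circuit angle."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


if __name__ == "__main__":
    best_theta, best_energy = minimize_energy()
    print(f"theta = {best_theta:.4f}, energy = {best_energy:.6f}")
```

On real hardware each call to `energy` would be a batch of circuit executions with sampling noise, which is one source of the interface overhead mentioned above.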

Simulation fidelity presents another critical limitation. Noise-resilient quantum simulations require sophisticated error mitigation techniques that add significant overhead to the computation process. Current error rates in the range of 0.1% to 1% per gate operation accumulate rapidly in deep circuits, rendering many practical applications infeasible without substantial error correction.
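A rough estimate shows how quickly these error rates accumulate. The sketch below uses a deliberately simple model, assumed for illustration: gate errors are independent, and any single error spoils the result, so the chance of an error-free run is (1 - p) raised to the number of gates. Real devices have correlated and gate-dependent noise, so this is only an order-of-magnitude guide. The function names are hypothetical.

```python
import math


def circuit_success_probability(gate_error, num_gates):
    """Probability that no gate fails, assuming independent errors."""
    return (1.0 - gate_error) ** num_gates


def max_gates_for_target(gate_error, target_success=0.5):
    """Largest gate count that keeps the success probability at or above target."""
    return math.floor(math.log(target_success) / math.log(1.0 - gate_error))


if __name__ == "__main__":
    for p in (0.001, 0.01):
        print(
            f"error {p:.3f}: 1000 gates succeed with probability "
            f"{circuit_success_probability(p, 1000):.4f}; "
            f"about {max_gates_for_target(p)} gates keep it above 50%"
        )
```

Under this model, a 1% error rate leaves room for only a few dozen gates before the success probability falls below one half, and a 0.1% rate allows several hundred.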

Resource requirements for both models and simulations scale poorly with problem size. Classical simulations demand exponentially increasing memory and processing power, while physical quantum systems require increasingly complex control systems, cryogenic infrastructure, and precision electronics as qubit counts increase.

The trade-off between model complexity and simulation accuracy remains a fundamental challenge. Simplified models that are computationally tractable often fail to capture critical quantum effects, while high-fidelity simulations quickly become computationally intractable as system size increases.

Comparative Analysis of Quantum Models and Simulations

01 Hardware architectures for scalable quantum simulations

Various hardware architectures have been developed to address the scalability challenges in quantum simulations. These include specialized quantum processors, integrated circuits, and hybrid quantum-classical systems designed to handle larger quantum models. These architectures implement techniques such as parallel processing, optimized memory management, and dedicated quantum simulation accelerators to improve computational efficiency and enable the simulation of more complex quantum systems.

- Quantum simulation architecture optimization: Optimization techniques for quantum simulation architectures focus on improving the scalability of quantum models. These approaches include specialized hardware designs, efficient circuit layouts, and resource allocation strategies that minimize computational overhead. By optimizing the architecture, quantum simulations can handle larger and more complex systems while maintaining performance and accuracy.

- Error mitigation in scalable quantum models: Error mitigation techniques are essential for maintaining the reliability of quantum models as they scale. These methods include error correction codes, noise-resilient algorithms, and fault-tolerant protocols that compensate for quantum decoherence and gate errors. Implementing these techniques allows quantum simulations to scale to larger problem sizes while preserving computational accuracy.

- Hybrid classical-quantum computational approaches: Hybrid approaches combine classical and quantum computing resources to enhance scalability. These methods distribute computational tasks between classical and quantum processors based on their respective strengths, using classical computers for pre-processing, coordination, and post-processing while leveraging quantum processors for specific quantum advantage tasks. This hybrid paradigm enables more efficient scaling of quantum simulations for practical applications.

- Distributed quantum computing frameworks: Distributed quantum computing frameworks address scalability by partitioning quantum simulations across multiple quantum processing units. These frameworks include protocols for quantum communication, resource sharing, and workload distribution that enable larger simulations than would be possible on a single quantum processor. Such approaches are particularly valuable for complex quantum models that exceed the capacity of individual quantum computers.

- Algorithm optimization for quantum scalability: Algorithm optimization techniques focus on redesigning quantum algorithms to improve their scalability characteristics. These include methods for reducing circuit depth, minimizing qubit requirements, and developing approximation techniques that maintain solution quality with fewer quantum resources. By optimizing algorithms specifically for scalability, quantum simulations can address larger problem instances even with limited quantum hardware resources.

02 Algorithmic approaches for quantum model scalability

Novel algorithms have been developed to enhance the scalability of quantum models and simulations. These include decomposition methods, approximation techniques, and optimization algorithms that reduce computational complexity. By implementing more efficient mathematical frameworks and computational methods, these algorithms enable quantum simulations to scale to larger systems while maintaining accuracy and performance, overcoming traditional limitations in quantum model size and complexity.

03 Error mitigation and noise reduction techniques

Addressing quantum noise and errors is crucial for scaling quantum simulations. Various techniques have been developed to mitigate errors and reduce noise in quantum systems, including error correction codes, noise-aware algorithms, and robust control methods. These approaches improve the stability and reliability of quantum simulations at scale, allowing for more accurate results when modeling complex quantum systems and enabling simulations to run for longer periods without degradation.

04 Resource optimization for large-scale quantum models

Efficient resource management is essential for scaling quantum simulations. Techniques include optimized qubit allocation, circuit compression, memory management strategies, and computational resource distribution. These methods minimize the hardware and computational resources required for quantum simulations, enabling more complex models to be executed within practical constraints. Resource optimization approaches also include techniques for distributing quantum computations across multiple processing units and managing the classical-quantum interface efficiently.

05 Hybrid and distributed quantum simulation frameworks

Hybrid approaches combining classical and quantum computing resources, along with distributed computing frameworks, have been developed to enhance quantum simulation scalability. These frameworks partition computational tasks between classical and quantum processors based on their respective strengths, and distribute quantum simulations across multiple systems. By leveraging cloud computing, parallel processing, and specialized middleware, these approaches enable larger and more complex quantum models to be simulated effectively, overcoming the limitations of standalone quantum systems.

Leading Organizations in Quantum Computing Research

The quantum computing landscape is evolving rapidly, with competition intensifying between quantum models and simulations for scalable solutions. Currently in the early commercialization phase, the market is projected to reach $1.3 billion by 2025, growing at 30% CAGR. Technology maturity varies significantly across players: IBM, Google, and Intel lead with hardware-based quantum computers, while D-Wave and Rigetti offer specialized quantum systems. Companies like PsiQuantum and IonQ are advancing photonic and trapped-ion approaches respectively. Simulation-focused players including HQS Quantum Simulations, QunaSys, and Classiq are developing software solutions that can run on classical hardware. Microsoft and Origin Quantum are pursuing hybrid approaches, balancing immediate practicality with future quantum advantage. The ecosystem is further enriched by academic institutions like Peking University and University of Chicago collaborating with industry partners.

International Business Machines Corp.

Technical Solution: IBM's approach to quantum scalability combines both quantum models and simulations through their Qiskit framework. Their quantum hardware focuses on superconducting qubits, with their Eagle processor featuring 127 qubits[1]. For simulation, IBM offers quantum circuit simulators; approximate or restricted-circuit methods (such as stabilizer or matrix product state simulation) can reach on the order of 100 qubits on classical hardware, whereas exact state-vector simulation is limited to far fewer. Their hybrid approach uses quantum-classical integration where quantum processors handle specialized calculations while classical systems manage the overall workflow. IBM's Quantum System Two architecture specifically addresses scalability by implementing a modular design that allows for the connection of multiple quantum processors through quantum communication links[2]. This architecture enables distributed quantum computing across multiple cryogenic systems, potentially overcoming single-processor size limitations. IBM also employs error mitigation techniques and is advancing toward error correction to improve the reliability of quantum computations as they scale to larger systems.

Strengths: IBM's modular architecture allows for incremental scaling beyond the limitations of single-processor systems. Their extensive classical computing infrastructure enables powerful hybrid approaches. Weaknesses: Their superconducting qubit technology requires extreme cooling, creating physical scaling challenges. Current error rates still limit the practical problem sizes that can be addressed with their quantum hardware.

Google LLC

Technical Solution: Google's approach to quantum scalability centers on their Sycamore quantum processor and TensorFlow Quantum framework. Their hardware strategy focuses on superconducting qubits, with their 53-qubit Sycamore processor used in the 2019 quantum supremacy claim, in which it performed a specific sampling calculation in 200 seconds that Google estimated would take the world's most powerful supercomputer approximately 10,000 years[3]. For simulations, Google has developed high-performance quantum circuit simulators that can emulate systems up to about 40 qubits on classical hardware. Their hybrid approach combines quantum hardware with classical optimization techniques through their Quantum Neural Network architecture. Google's scalability strategy includes developing improved qubit connectivity patterns and implementing error correction codes, particularly surface codes, which they have demonstrated experimentally[4]. They're also exploring quantum error mitigation techniques that can extend the computational reach of noisy intermediate-scale quantum (NISQ) devices without full error correction.

Strengths: Google has demonstrated industry-leading qubit performance and coherence times. Their quantum supremacy experiment validated their hardware approach for specific problems. Their deep expertise in classical computing enables powerful hybrid solutions. Weaknesses: Their current hardware still operates in the NISQ regime with significant error rates. The specific problems where quantum advantage can be demonstrated remain limited.

Key Quantum Algorithms and Implementation Frameworks

Simulating Quantum Systems with Quantum Computation

Patent (Pending): US20210406421A1

Innovation

- The use of density matrix embedding theory (DMET) in conjunction with variational quantum eigensolver (VQE) algorithms on multiple unentangled quantum processor units (QPUs) allows for the simulation of larger quantum systems by dividing the system into fragments, enabling parallel computation and overcoming the qubit dimensionality constraint.

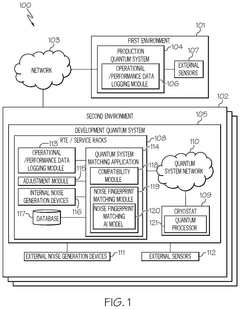

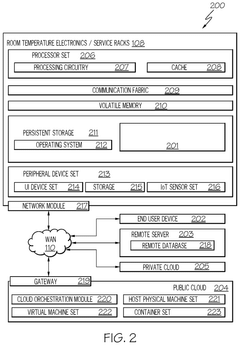

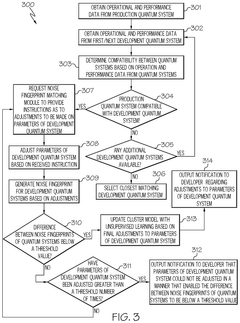

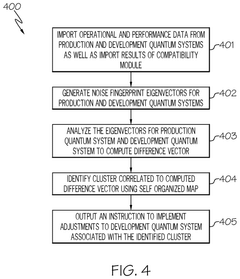

Optimizing development of a quantum circuit or a quantum model

Patent (Pending): US20250131300A1

Innovation

- The method involves obtaining operational and performance data from both development and production quantum systems, generating noise fingerprints for each, and adjusting the parameters of the development system until the difference between the two noise fingerprints is below a threshold value, thereby matching the noise environment of the production system.

Quantum Hardware-Software Integration Challenges

The integration of quantum hardware and software presents significant challenges that directly impact the scalability decision between quantum models and simulations. Current quantum hardware platforms, including superconducting qubits, trapped ions, photonic systems, and the still largely experimental topological qubits, each require specialized software interfaces that are often incompatible with one another. This fragmentation creates substantial barriers when attempting to scale quantum applications across different hardware architectures.

Software development kits (SDKs) from major quantum computing providers like IBM Qiskit, Google Cirq, and Amazon Braket attempt to address these integration challenges, but still require significant translation layers when moving between platforms. These translation processes introduce additional computational overhead and potential fidelity loss, particularly as quantum systems scale beyond 50-100 qubits.

Error correction mechanisms represent another critical integration challenge. As quantum models scale, they require increasingly sophisticated error mitigation techniques that must be tightly coupled with the underlying hardware. Current approaches like Quantum Error Correction (QEC) codes demand substantial qubit overhead, often estimated at 10-1000 or more physical qubits for each logical qubit depending on the code and the hardware error rate. This creates a significant hardware-software optimization problem that impacts scalability decisions.
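The physical-to-logical overhead can be illustrated with one widely used code. The sketch below assumes a rotated surface code, in which a single logical qubit at code distance d uses d² data qubits plus d² - 1 measurement qubits. The choice of code and the function names are assumptions made for this example, and the count ignores extra qubits for routing and magic-state distillation, so real requirements are higher.

```python
def surface_code_physical_qubits(distance):
    """Physical qubits for one logical qubit in a rotated surface code."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance should be an odd integer >= 3")
    data_qubits = distance ** 2
    measurement_qubits = distance ** 2 - 1
    return data_qubits + measurement_qubits


def total_physical_qubits(num_logical, distance):
    """Physical qubits for num_logical logical qubits at the same distance."""
    return num_logical * surface_code_physical_qubits(distance)


if __name__ == "__main__":
    for d in (3, 5, 11, 23):
        print(f"distance {d}: {surface_code_physical_qubits(d)} physical qubits")
```

Larger distances suppress logical errors more strongly but raise the qubit count quadratically, which is the trade-off behind the wide range of overhead estimates.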

The abstraction layers between quantum algorithms and physical implementation present further complications. High-level quantum programming languages must efficiently compile to hardware-specific instructions while preserving quantum advantages. Current compiler technologies struggle with optimizing circuits for specific hardware topologies and noise profiles, resulting in performance degradation as system size increases.

Real-time calibration and feedback systems represent an emerging integration challenge. Quantum hardware requires continuous calibration to maintain coherence and gate fidelity, necessitating sophisticated software systems that can dynamically adjust parameters based on hardware performance. These systems become considerably more complex as qubit counts increase, affecting the scalability comparison between models and simulations.

Resource management presents perhaps the most immediate practical challenge. Quantum processing units (QPUs) have limited connectivity, coherence times, and gate fidelities that must be carefully managed by scheduling and resource allocation software. As quantum models scale, the complexity of this resource management grows non-linearly, often making classical simulations more practical for intermediate-scale problems despite their theoretical limitations at larger scales.

Quantum Computing Standardization Efforts

The standardization of quantum computing technologies has become increasingly critical as the field advances toward practical applications. Several international bodies are actively working to establish common frameworks, protocols, and benchmarks that will enable interoperability and reliable performance comparisons between different quantum systems.

The IEEE Quantum Computing Standards Working Group has been instrumental in developing standards for quantum computing terminology, performance metrics, and algorithm implementations. Its P7130 and P7131 projects address quantum technology definitions and quantum computing performance metrics and benchmarking, respectively, providing essential guidelines for evaluating scalability in both quantum models and simulations.

The International Organization for Standardization (ISO), jointly with the International Electrotechnical Commission (IEC), has established the ISO/IEC JTC 1/WG 14 working group on quantum information technology. This group is developing standards for quantum terminology and performance metrics that will help organizations make informed decisions about which quantum approaches best suit their scalability requirements.

The Quantum Economic Development Consortium (QED-C) has been working on technical roadmaps and standards that address the practical aspects of quantum computing scalability. Their efforts include standardized testing methodologies that allow for objective comparisons between quantum models and simulation approaches.

In the academic sphere, the Quantum Open Source Foundation (QOSF) is promoting standardized interfaces and protocols for quantum software development. Their work facilitates the integration of quantum models and simulations into existing computational workflows, a critical factor when considering scalability across different computing paradigms.

The National Institute of Standards and Technology (NIST) supported the creation of QED-C, which brings together industry, academic, and government stakeholders to advance quantum computing technologies through standardized approaches. NIST's involvement includes work on benchmarks designed to evaluate the scalability potential of different quantum computing approaches.

For organizations evaluating quantum models versus simulations for scalability, these standardization efforts provide crucial frameworks for assessment. Emerging benchmarks such as quantum volume, circuit layer operations per second (CLOPS), and application-specific benchmarks offer objective metrics for comparing different approaches based on specific use case requirements.
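As an illustration of how one such metric is scored, the sketch below follows the commonly described quantum volume test: a square circuit of width n passes if its heavy-output probability exceeds 2/3 with statistical confidence, and log2 of the quantum volume is the largest passing width. The two-sigma binomial check is a simplification, the requirement that all smaller widths also pass is a conservative assumption, and the measurement data are made up for the example.

```python
import math

HEAVY_OUTPUT_THRESHOLD = 2 / 3


def passes(heavy_output_prob: float, n_circuits: int, z: float = 2.0) -> bool:
    """Simplified check: the mean must clear 2/3 by z standard errors."""
    sigma = math.sqrt(heavy_output_prob * (1 - heavy_output_prob) / n_circuits)
    return heavy_output_prob - z * sigma > HEAVY_OUTPUT_THRESHOLD


def log2_quantum_volume(results: dict) -> int:
    """Largest width n such that every tested width up to n passes.

    `results` maps circuit width to (heavy_output_prob, n_circuits).
    """
    best = 0
    for width in sorted(results):
        hop, n_circuits = results[width]
        if not passes(hop, n_circuits):
            break
        best = width
    return best


if __name__ == "__main__":
    # Hypothetical measurements, not from any real device.
    measured = {2: (0.80, 100), 3: (0.78, 100), 4: (0.70, 100), 5: (0.66, 100)}
    n = log2_quantum_volume(measured)
    print(f"log2(QV) = {n}, QV = {2**n}")
```

The same scoring applies whether the circuits were run on hardware or on a noisy simulator, which is what makes the metric usable for comparing the two.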

As quantum computing continues to evolve, these standardization initiatives will play an increasingly important role in guiding technology selection decisions, particularly regarding the scalability trade-offs between quantum models and simulation approaches.
