Develop Atomic Force Microscopy Image Analysis Algorithms — Methodology

SEP 19, 2025 | 10 MIN READ

AFM Technology Background and Objectives

Atomic Force Microscopy (AFM) emerged in the mid-1980s as an imaging technique capable of visualizing surfaces at the nanoscale. Since its invention by Gerd Binnig, Calvin Quate, and Christoph Gerber in 1986, AFM has evolved from a simple surface profiling tool into a multi-functional platform for nanoscale characterization. The technology operates by measuring forces between a sharp probe and the sample surface, enabling three-dimensional topographical imaging with sub-nanometer vertical resolution.

The evolution of AFM technology has been marked by significant improvements in probe design, feedback systems, and operational modes. Early systems were limited to contact mode imaging, while modern instruments offer numerous specialized modes including tapping mode, non-contact mode, and various spectroscopic capabilities. These advancements have expanded AFM applications across diverse scientific disciplines, from materials science to biological research.

Image analysis has become increasingly critical as AFM capabilities have expanded. Traditional AFM image processing relied on basic correction algorithms for tilt removal and noise reduction. However, the complexity of modern AFM data necessitates more sophisticated analytical approaches. Current image analysis challenges include accurate feature recognition, automated data processing, and quantitative characterization of complex surface properties.
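As an illustration of the tilt removal mentioned above, the following is a minimal sketch of first-order plane leveling. It assumes the height map is a full rectangular grid stored as a list of rows; the function name `level_plane` is hypothetical and not taken from any AFM package.

```python
def level_plane(height):
    """Subtract the least-squares plane z = a*x + b*y + c from a height map.

    `height` is a list of equal-length rows of floats. On a full rectangular
    grid the centred x and y coordinates are orthogonal, so the two slopes
    can be fitted independently of each other.
    """
    n_rows, n_cols = len(height), len(height[0])
    x_mean = (n_cols - 1) / 2.0
    y_mean = (n_rows - 1) / 2.0
    z_mean = sum(sum(row) for row in height) / (n_rows * n_cols)

    # Sums needed for the two independent slope estimates.
    sxz = sum((x - x_mean) * z for row in height for x, z in enumerate(row))
    syz = sum((y - y_mean) * z for y, row in enumerate(height) for z in row)
    sxx = n_rows * sum((x - x_mean) ** 2 for x in range(n_cols))
    syy = n_cols * sum((y - y_mean) ** 2 for y in range(n_rows))

    a = sxz / sxx if sxx else 0.0  # slope along the fast-scan (x) axis
    b = syz / syy if syy else 0.0  # slope along the slow-scan (y) axis

    return [
        [z - (a * (x - x_mean) + b * (y - y_mean) + z_mean)
         for x, z in enumerate(row)]
        for y, row in enumerate(height)
    ]
```

A plane fit only removes linear tilt. Scanner bow or line-to-line offsets would need higher-order or per-line fits, which this sketch does not attempt.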

The primary objective of developing advanced AFM image analysis algorithms is to extract meaningful quantitative information from complex topographical data with minimal human intervention. This includes automating routine processing tasks, enhancing image quality, and enabling statistical analysis of surface features across multiple scales. Additionally, these algorithms aim to standardize analysis protocols to improve reproducibility and facilitate comparison between different experimental conditions.

Machine learning and artificial intelligence represent promising frontiers in AFM image analysis. These approaches can potentially overcome limitations of traditional algorithmic methods by learning from large datasets to identify patterns and features that might otherwise be overlooked. The integration of deep learning techniques with AFM image processing could revolutionize how researchers interpret nanoscale surface information.

The technological trajectory suggests a convergence of AFM with complementary characterization techniques, creating multimodal analytical platforms. This integration necessitates development of algorithms capable of correlating and synthesizing data from multiple sources. Future AFM image analysis tools will likely emphasize real-time processing capabilities, enabling dynamic experiments and in-situ monitoring of surface phenomena at the nanoscale.

Achieving these objectives requires interdisciplinary collaboration between microscopy experts, computer scientists, and domain specialists from various application fields. The ultimate goal is to transform AFM from a primarily qualitative imaging tool to a quantitative analytical technique capable of providing statistically robust measurements of surface properties across multiple length scales.

Market Applications and Demand Analysis

The Atomic Force Microscopy (AFM) image analysis algorithms market is experiencing robust growth driven by expanding applications across multiple industries. The global AFM market, valued at approximately $570 million in 2022, is projected to reach $720 million by 2027, with image analysis software and algorithms representing a significant growth segment within this market.

In the semiconductor industry, demand for high-precision AFM image analysis has surged due to the continuous miniaturization of electronic components. As manufacturers push toward 3nm and smaller process nodes, conventional imaging techniques reach their physical limitations, creating substantial market pull for advanced AFM analysis algorithms capable of sub-nanometer resolution and defect detection.

The life sciences sector represents another major demand driver, with pharmaceutical companies and research institutions increasingly utilizing AFM for biomolecular imaging. The market requires algorithms specifically designed for analyzing soft biological samples, tracking dynamic processes, and correlating structural information with functional properties. This segment is growing at approximately 9% annually, outpacing the overall AFM market.

Materials science applications constitute a third significant market segment, with particular growth in nanomaterials characterization. Industries developing advanced composites, 2D materials, and functional surfaces require specialized algorithms for quantitative analysis of mechanical, electrical, and topographical properties at the nanoscale.

The industrial quality control sector demonstrates increasing adoption of AFM-based inspection systems, creating demand for automated, high-throughput image analysis algorithms. This trend is particularly evident in precision manufacturing industries where surface quality directly impacts product performance.

Market research indicates that end-users prioritize several key features in AFM image analysis solutions: automation capabilities to reduce operator dependency, integration with other analytical techniques, batch processing functionality, and advanced statistical analysis tools. Additionally, there is growing demand for machine learning and AI-enhanced algorithms that can identify patterns and anomalies beyond human visual perception.

Regional analysis shows North America leading the market with approximately 40% share, followed by Europe and Asia-Pacific. However, the Asia-Pacific region demonstrates the fastest growth rate, driven by expanding semiconductor manufacturing and research infrastructure in countries like China, South Korea, and Taiwan.

The market structure reveals a mix of established scientific instrument companies offering proprietary software solutions bundled with hardware, specialized software developers focusing exclusively on analysis algorithms, and open-source initiatives gaining traction in academic settings. This diverse ecosystem creates both competitive pressures and collaboration opportunities for new algorithm development.

Current AFM Image Analysis Challenges

Atomic Force Microscopy (AFM) image analysis faces several significant challenges that impede the full utilization of this powerful technique. One primary challenge is the inherent noise present in AFM images, which can originate from various sources including thermal fluctuations, electronic noise from instrumentation, and mechanical vibrations. These noise artifacts significantly reduce image quality and complicate accurate interpretation of nanoscale features.

Tip-sample interaction artifacts represent another major challenge, as they can introduce false topographical features or distort actual surface characteristics. The complex nature of these interactions, influenced by factors such as tip geometry, sample elasticity, and environmental conditions, makes it difficult to develop universal correction algorithms that work across different experimental setups.

Image drift poses a substantial problem, particularly during long-duration scans. Thermal expansion of components, piezoelectric creep, and environmental fluctuations can cause lateral shifts in the image, resulting in distorted representations of the sample surface. Current drift correction methods often require reference markers or complex post-processing techniques that may introduce additional artifacts.
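One simple way to estimate a lateral shift without reference markers is to compare two scans of the same area and search for the integer offset that makes them agree best. The sketch below does this by exhaustive search over small shifts, scoring each by the mean squared difference over the overlapping region. It is an illustration under simplifying assumptions (rigid, whole-pixel drift between two frames), and the names `estimate_shift` and `gaussian_bump` are hypothetical.

```python
import math


def gaussian_bump(n_rows, n_cols, cy, cx):
    """Synthetic test image: a single Gaussian feature centred at (cy, cx)."""
    return [
        [math.exp(-((y - cy) ** 2 + (x - cx) ** 2) / 2.0) for x in range(n_cols)]
        for y in range(n_rows)
    ]


def estimate_shift(reference, moved, max_shift=3):
    """Return (dy, dx) such that moved[y + dy][x + dx] best matches reference[y][x]."""
    n_rows, n_cols = len(reference), len(reference[0])
    best, best_score = (0, 0), float("inf")
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            total, count = 0.0, 0
            # Only pixels present in both images after shifting are compared.
            for y in range(max(0, -dy), min(n_rows, n_rows - dy)):
                for x in range(max(0, -dx), min(n_cols, n_cols - dx)):
                    diff = reference[y][x] - moved[y + dy][x + dx]
                    total += diff * diff
                    count += 1
            if count and total / count < best_score:
                best_score, best = total / count, (dy, dx)
    return best
```

Real drift is often non-uniform within a single frame, so a rigid shift between frames is only a first approximation. Sub-pixel or line-by-line corrections are outside the scope of this sketch.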

Resolution limitations remain a persistent challenge in AFM imaging. While AFM theoretically offers atomic resolution, practical factors such as tip radius, feedback loop parameters, and environmental conditions often limit the achievable resolution. Developing algorithms that can effectively enhance resolution without introducing artificial features requires sophisticated mathematical approaches.

Data heterogeneity presents significant obstacles for automated analysis. AFM can operate in multiple modes (contact, tapping, non-contact) and can measure various properties (topography, phase, friction), resulting in diverse data types that require specialized processing approaches. This heterogeneity complicates the development of standardized analysis workflows.

Quantitative analysis of AFM images faces challenges in accurate feature extraction and measurement. Parameters such as roughness, particle size, and surface morphology require robust algorithms that can account for instrumental artifacts while providing reproducible measurements. Current methods often show significant variations between different analysis software packages.
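Part of the variation between software packages comes from differing definitions and preprocessing, so it helps to state the roughness formulas explicitly. The sketch below computes the arithmetic mean height (Sa), root mean square height (Sq), and peak-to-valley height of a height map relative to its mean plane. It assumes the image has already been leveled, and the function name `areal_roughness` is hypothetical.

```python
import math


def areal_roughness(height):
    """Return Sa, Sq and peak-to-valley height for a leveled height map.

    `height` is a list of rows of floats, all in the same length unit.
    """
    values = [z for row in height for z in row]
    mean = sum(values) / len(values)
    deviations = [z - mean for z in values]
    sa = sum(abs(d) for d in deviations) / len(deviations)            # mean |z - mean|
    sq = math.sqrt(sum(d * d for d in deviations) / len(deviations))  # RMS deviation
    return {"Sa": sa, "Sq": sq, "peak_to_valley": max(values) - min(values)}
```

Because both parameters depend on the leveling step and on the scan size, reporting those alongside the values makes results easier to compare.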

The lack of standardized procedures for image processing represents a systemic challenge in the field. Unlike other microscopy techniques, AFM image analysis lacks widely accepted protocols, leading to inconsistencies in data interpretation across research groups. This hampers reproducibility and makes comparative studies difficult.

High computational demands of advanced processing techniques, particularly for large datasets or real-time applications, limit the widespread implementation of sophisticated analysis methods. Many cutting-edge algorithms require specialized hardware or extensive processing time, making them impractical for routine analysis in many research environments.

Leading AFM Algorithm Development Organizations

Atomic Force Microscopy (AFM) image analysis algorithms are currently in a growth phase, with the market expanding as applications multiply in nanotechnology, materials science, and biological research. The global AFM market is projected to keep growing as demand for high-resolution imaging rises across industries. Technologically, the field shows varying maturity levels, with established players such as Bruker Nano, Inc. leading innovation alongside newer entrants. Key competitors include Keysight Technologies, Leica Microsystems, and WITec GmbH, which are advancing proprietary algorithms for image processing and analysis. Academic institutions such as CSIC, Nankai University, and Beihang University contribute fundamental research, while industrial players such as Seagate, Sony, and Huawei develop application-specific implementations. The result is a competitive landscape that balances commercial solutions with open-source approaches.

Bruker Nano, Inc.

Technical Solution: Bruker Nano has developed advanced AFM image analysis algorithms through their proprietary NanoScope Analysis software suite. Their methodology incorporates multi-channel data processing that simultaneously analyzes topography, phase, and amplitude data to extract comprehensive surface characteristics. The company employs machine learning-based feature recognition algorithms that can automatically identify and categorize nanoscale structures based on morphological parameters. Their PeakForce QNM (Quantitative Nanomechanical Mapping) technology integrates real-time mechanical property mapping with topographical imaging, allowing for correlation between physical structures and their mechanical behaviors. Bruker's algorithms include adaptive noise filtering techniques that preserve edge details while removing scanning artifacts, and their 3D visualization tools incorporate advanced rendering algorithms for intuitive interpretation of complex surface topographies. Recent developments include automated tip-sample interaction modeling to correct for tip-induced artifacts in high-resolution imaging.

Strengths: Industry-leading integration of hardware and software solutions provides seamless workflow from data acquisition to analysis. Their algorithms are extensively validated across multiple application domains.

Weaknesses: Proprietary nature of their software ecosystem creates vendor lock-in, and the complex algorithms often require significant computational resources for processing large datasets.

Keysight Technologies, Inc.

Technical Solution: Keysight Technologies has developed a comprehensive AFM image analysis methodology centered around their PicoView and PicoImage software platforms. Their approach employs multi-resolution wavelet transform techniques for noise reduction while preserving critical edge information in AFM images. Keysight's algorithms feature adaptive plane-fitting routines that automatically compensate for sample tilt and scanner nonlinearities, crucial for accurate nanoscale measurements. Their methodology incorporates statistical pattern recognition for automated feature identification and classification based on morphological parameters. The company has pioneered frequency-domain filtering techniques specifically optimized for AFM data, allowing selective removal of periodic noise patterns while maintaining structural integrity. Keysight's analysis pipeline includes advanced segmentation algorithms that can distinguish between different material phases based on mechanical property mapping, enabling compositional analysis alongside topographical characterization. Their recent developments include implementing deep learning networks for automated defect detection in semiconductor and materials science applications.

Strengths: Exceptional signal processing capabilities particularly suited for electrical characterization modes of AFM. Their algorithms excel at correlating multiple data channels for comprehensive surface analysis.

Weaknesses: Their analysis tools have steeper learning curves compared to competitors, and some advanced algorithms require significant user expertise to properly configure and interpret results.

Key Algorithmic Innovations in AFM Analysis

Atomic force microscopy of scanning and image processing

Patent (Active): US20160025772A1

Innovation

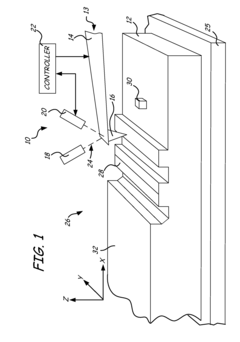

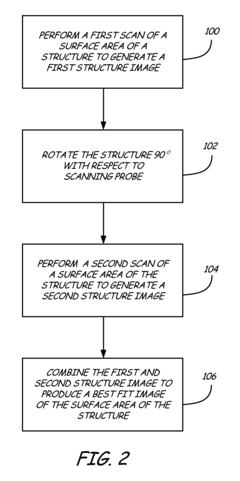

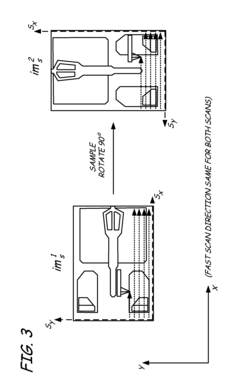

- Performing multiple AFM scans at different angles and data densities, with correction techniques to align and integrate images, ensuring higher resolution at areas of interest while maintaining contextual details and reducing artifacts.

Atomic force microscopy true shape measurement method

Patent (Inactive): US8296860B2

Innovation

- The method involves scanning a structure and a flat standard surface twice, each time rotated 90°, and combining the images to produce best fit images. These best fit images are then subtracted to eliminate thermal drift and Zrr errors, allowing for the generation of a true topographical image.

Data Validation and Accuracy Assessment

Data validation and accuracy assessment constitute critical components in the development of reliable Atomic Force Microscopy (AFM) image analysis algorithms. Establishing robust validation protocols ensures that algorithms produce consistent, reproducible, and scientifically sound results across diverse sample types and imaging conditions. The validation process typically begins with benchmark datasets containing known reference features or standards with well-characterized dimensions and properties, allowing for quantitative comparison between algorithm outputs and established ground truth.

Statistical methods play a fundamental role in accuracy assessment. Error metrics such as root mean square error (RMSE) and mean absolute error (MAE) quantify how closely algorithm results match reference values, while the standard deviation of repeated measurements characterizes precision. Additionally, correlation coefficients and Bland-Altman plots help reveal systematic biases or proportional errors in the algorithmic analysis.
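The metrics named above are short enough to write out directly. The following sketch compares algorithm outputs with reference values and returns RMSE, MAE, and the two Bland-Altman quantities: the mean difference (bias) and the 95% limits of agreement, taken here as bias ± 1.96 standard deviations of the differences. The function name `agreement_metrics` is hypothetical, and the example inputs in the usage note are made up for illustration.

```python
import math
import statistics


def agreement_metrics(measured, reference):
    """Compare paired measurements against reference values.

    Both arguments are equal-length sequences of floats (at least two pairs).
    """
    diffs = [m - r for m, r in zip(measured, reference)]
    rmse = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    mae = sum(abs(d) for d in diffs) / len(diffs)
    bias = statistics.mean(diffs)   # systematic offset
    spread = statistics.stdev(diffs)  # sample standard deviation of differences
    return {
        "rmse": rmse,
        "mae": mae,
        "bias": bias,
        "limits_of_agreement": (bias - 1.96 * spread, bias + 1.96 * spread),
    }
```

For instance, if every measured step height were 0.5 nm above its reference, RMSE, MAE, and bias would all equal 0.5 and the limits of agreement would collapse onto the bias, which points to a pure calibration offset rather than random error.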

Cross-validation techniques further strengthen the reliability assessment by partitioning available data into training and testing subsets. k-fold cross-validation, leave-one-out methods, and bootstrap resampling represent common approaches that help evaluate algorithm performance across different data distributions and prevent overfitting to specific sample characteristics.
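A minimal sketch of k-fold partitioning is shown below. It shuffles sample indices with a fixed seed and yields one train/test split per fold, so every sample is tested exactly once. The function name `k_fold_indices` is hypothetical.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_indices, test_indices) for each of k folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # reproducible shuffle
    folds = [indices[i::k] for i in range(k)]  # k near-equal, disjoint folds
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test
```

One caveat that may matter for AFM data: if several images come from the same sample or the same tip, splitting them across train and test folds can make performance look better than it is. Assigning folds per sample rather than per image is a common way to avoid that.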

Uncertainty quantification represents another essential aspect of AFM image analysis validation. This involves characterizing measurement uncertainties arising from instrument noise, environmental factors, tip-sample interactions, and algorithmic processing steps. Monte Carlo simulations and error propagation analyses can effectively model how these uncertainties propagate through the analysis pipeline and affect final measurements.
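To make the Monte Carlo idea concrete, the sketch below propagates height noise through a simple step-height measurement on a line profile. It assumes independent Gaussian noise of known standard deviation on every point, which is a simplification of real instrument noise. The function names and the default trial count are illustrative choices.

```python
import random
import statistics


def step_height(profile, split):
    """Step height: mean of the upper terrace minus mean of the lower terrace."""
    return statistics.mean(profile[split:]) - statistics.mean(profile[:split])


def monte_carlo_step_height(profile, split, noise_sigma, n_trials=2000, seed=0):
    """Return (mean, standard deviation) of the step height under added noise."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n_trials):
        # Perturb every point independently, then repeat the measurement.
        noisy = [z + rng.gauss(0.0, noise_sigma) for z in profile]
        samples.append(step_height(noisy, split))
    return statistics.mean(samples), statistics.stdev(samples)
```

For this simple measurement the result can be checked against analytic error propagation: with n points on each terrace, the expected standard deviation is noise_sigma × sqrt(2/n). Monte Carlo becomes more useful when the processing chain is too nonlinear for such a formula.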

Resolution assessment protocols determine the minimum feature size that can be reliably detected and measured by the algorithm. This typically involves analyzing test patterns with progressively smaller features until the detection limit is reached, often quantified using modulation transfer function (MTF) or Fourier ring correlation analyses.

Inter-laboratory comparison studies provide external validation by evaluating algorithm performance across different instruments, operators, and environmental conditions. Such studies help identify potential sources of variability and establish reproducibility limits for the developed algorithms. Participation in round-robin tests or collaborative measurement campaigns can significantly enhance confidence in algorithm robustness.

Finally, documentation of validation procedures and results in accordance with metrological standards ensures transparency and facilitates adoption of the algorithms by the broader scientific community. This includes detailed reporting of validation datasets, statistical methods, uncertainty budgets, and known limitations of the algorithms under specific conditions.

Integration with Machine Learning Frameworks

The integration of machine learning frameworks with Atomic Force Microscopy (AFM) image analysis represents a significant advancement in nanoscale imaging technology. Traditional AFM image processing relies heavily on deterministic algorithms that often struggle with complex surface topographies and noise patterns. Machine learning approaches offer powerful alternatives by leveraging data-driven models that can adapt to various imaging conditions and sample characteristics.

Current machine learning frameworks such as TensorFlow, PyTorch, and scikit-learn provide robust platforms for implementing advanced image analysis algorithms. These frameworks facilitate the development of convolutional neural networks (CNNs) that excel at feature extraction from AFM images, enabling automated identification of nanoscale structures with minimal human intervention. The integration process typically involves preprocessing AFM data to normalize scale and contrast, followed by feature extraction and classification or regression tasks depending on the specific analysis goals.
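The preprocessing step described above can be sketched without any framework. The example below clips a height map to a chosen percentile range, so that a few extreme pixels do not dominate the contrast, and rescales it to [0, 1] before it would be handed to a model. The percentile defaults and the name `normalize_contrast` are assumptions for illustration, not a recommended standard.

```python
def normalize_contrast(height, lower_pct=1.0, upper_pct=99.0):
    """Clip a height map to a percentile range and rescale it to [0, 1]."""
    values = sorted(z for row in height for z in row)

    def percentile(p):
        # Nearest-rank percentile on the sorted pixel values.
        return values[round(p / 100.0 * (len(values) - 1))]

    low, high = percentile(lower_pct), percentile(upper_pct)
    if high == low:  # flat image: nothing to stretch
        return [[0.0 for _ in row] for row in height]
    return [
        [min(1.0, max(0.0, (z - low) / (high - low))) for z in row]
        for row in height
    ]
```

Note that this rescaling discards absolute height information, so it suits classification or segmentation inputs better than regression targets that must stay in physical units.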

Transfer learning techniques have proven particularly valuable in the AFM domain, where labeled training data may be limited. Models pre-trained on large image datasets can be fine-tuned with smaller AFM-specific datasets, significantly reducing the computational resources and time required for model development. This approach has demonstrated success in identifying surface defects, characterizing material properties, and quantifying nanoscale phenomena across diverse sample types.

Real-time processing capabilities represent another crucial aspect of machine learning integration. Modern frameworks support model optimization techniques such as quantization and pruning, allowing complex algorithms to run efficiently on standard laboratory computing hardware. This enables researchers to obtain immediate feedback during AFM scanning sessions, potentially adjusting parameters on-the-fly to optimize image acquisition.

Interoperability between AFM instrument software and machine learning frameworks remains a technical challenge. Several middleware solutions have emerged to address this gap, including open-source libraries that provide standardized data formats and communication protocols. Commercial AFM manufacturers have also begun incorporating machine learning capabilities directly into their software suites, though these implementations often lack the flexibility of standalone frameworks.

The validation of machine learning results against established physical models represents a critical consideration in scientific applications. Hybrid approaches that combine physics-based constraints with data-driven learning have shown promise in maintaining scientific rigor while leveraging the pattern recognition capabilities of neural networks. These methods typically incorporate domain knowledge as regularization terms or architectural constraints within the learning framework.
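The idea of adding domain knowledge as a regularization term can be shown with a toy loss function. The sketch below combines a mean squared data term with a penalty on the discrete second derivative of a predicted line profile. The curvature penalty is only a stand-in for a real physical constraint, chosen because it is easy to compute. The function name and the default weight are hypothetical.

```python
def regularized_loss(predicted, target, weight=0.1):
    """Mean squared error plus a weighted curvature penalty on the prediction.

    `predicted` and `target` are equal-length 1D height profiles.
    """
    n = len(predicted)
    data_term = sum((p - t) ** 2 for p, t in zip(predicted, target)) / n
    # Discrete second derivative at every interior point.
    curvature = [
        predicted[i - 1] - 2.0 * predicted[i] + predicted[i + 1]
        for i in range(1, n - 1)
    ]
    penalty = sum(c * c for c in curvature) / len(curvature) if curvature else 0.0
    return data_term + weight * penalty
```

In a training framework the same structure would be written with differentiable tensor operations, and the penalty would be replaced by whatever constraint the physics of the measurement actually supports.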

Looking forward, edge computing implementations of machine learning frameworks may enable more sophisticated on-instrument analysis, reducing data transfer bottlenecks and enabling truly adaptive scanning methodologies. This convergence of nanoscale imaging hardware with advanced computational techniques points toward a new paradigm in materials characterization and nanoscience research.
