Hardware Fault Injection Testing For QEC Robustness Certification

SEP 2, 2025 · 9 MIN READ

QEC Hardware Fault Injection Background and Objectives

Quantum Error Correction (QEC) represents a critical advancement in quantum computing, enabling the protection of quantum information against decoherence and operational errors. As quantum systems scale toward practical applications, ensuring their fault tolerance becomes paramount. Hardware Fault Injection Testing has emerged as an essential methodology for validating QEC protocols under realistic error conditions.

The evolution of QEC techniques dates back to the mid-1990s with the development of the Shor code and stabilizer formalism. These theoretical foundations have gradually transitioned into experimental implementations across various quantum computing platforms. Recent years have witnessed significant progress in demonstrating small-scale error correction codes on superconducting qubits, trapped ions, and photonic systems, marking important milestones toward fault-tolerant quantum computation.

The primary objective of Hardware Fault Injection Testing for QEC is to systematically evaluate the robustness of error correction protocols against realistic hardware failures. Unlike simulated errors, hardware-induced faults provide authentic test conditions that reflect the actual operational environment of quantum processors. This approach aims to bridge the gap between theoretical error models and practical implementation challenges.

Current technological trends indicate a growing emphasis on characterizing and mitigating specific error channels relevant to different quantum computing architectures. The field is moving toward more sophisticated fault injection methodologies that can precisely target various components of the quantum processing stack, from physical qubits to control electronics and readout systems.

The technical goals of QEC robustness certification through hardware fault injection include: establishing standardized benchmarks for comparing different QEC implementations; developing comprehensive fault models that accurately represent hardware-level failures; creating adaptive testing frameworks capable of identifying critical failure points; and formulating quantitative metrics for certifying fault tolerance thresholds under realistic conditions.

Looking forward, the integration of machine learning techniques with hardware fault injection testing presents promising opportunities for optimizing QEC protocols. Additionally, the development of automated testing infrastructures will be crucial for scaling validation processes as quantum systems grow in complexity. The ultimate aim is to establish rigorous certification methodologies that can guarantee the reliability of error-corrected quantum systems for practical applications in scientific computing, cryptography, and optimization problems.

Market Demand for Robust Quantum Error Correction

The quantum computing market is experiencing significant growth, with projections indicating expansion from $866 million in 2023 to approximately $4.375 billion by 2028, representing a compound annual growth rate of 38.3%. Within this expanding ecosystem, Quantum Error Correction (QEC) has emerged as a critical technology, addressing the fundamental challenge of quantum decoherence and errors that currently limit practical quantum computing applications.

Industry stakeholders across multiple sectors are demonstrating increasing demand for robust QEC solutions. Financial institutions seeking quantum advantage for portfolio optimization and risk assessment require error rates below 10^-15 for meaningful computational advantage. Pharmaceutical companies investing in quantum computing for drug discovery need reliable quantum systems capable of maintaining coherence throughout complex molecular simulations, necessitating effective error correction mechanisms.

Government and defense sectors represent another significant market segment, with substantial investments in quantum technologies for cryptography and security applications. The US National Quantum Initiative Act allocated $1.2 billion for quantum research, with a significant portion directed toward error correction research. Similarly, China's announced $10 billion national quantum laboratory and the EU's Quantum Flagship program with €1 billion in funding both prioritize QEC development.

Hardware manufacturers and quantum service providers are increasingly focused on demonstrating QEC capabilities as a competitive differentiator. Companies like IBM, Google, and Rigetti prominently feature their error correction roadmaps in investor presentations, indicating the market value placed on robust QEC implementations.

Market research indicates that enterprise customers are willing to pay premium prices for quantum computing services with verified error correction capabilities. A recent survey of potential quantum computing customers found that 78% consider demonstrated QEC robustness to be "very important" or "critical" in their vendor selection process, highlighting the commercial importance of QEC certification.

The market specifically demands standardized certification methodologies for QEC robustness. Current quantum hardware providers use inconsistent metrics and testing protocols, creating market confusion and hindering adoption. Hardware fault injection testing represents a potential solution to this standardization challenge, offering a systematic approach to certifying QEC implementations across different quantum computing architectures.

As quantum computing transitions from research to commercial applications, investors and enterprise customers increasingly require evidence-based certification of QEC performance before committing significant resources. This market dynamic creates substantial demand for robust testing methodologies that can reliably evaluate and certify the error correction capabilities of quantum computing systems.

Current Challenges in Hardware Fault Injection Testing

Hardware fault injection testing for quantum error correction (QEC) systems faces several significant challenges that impede comprehensive robustness certification. The complexity of quantum systems presents a fundamental obstacle, as quantum states are inherently fragile and susceptible to environmental noise, making controlled fault injection particularly difficult without causing unintended perturbations.

Scale and coverage limitations represent another major challenge. Current quantum processors operate with limited qubit counts, restricting the scale at which fault injection can be performed. This creates difficulties in testing error correction codes that require larger qubit arrays to demonstrate meaningful fault tolerance. Additionally, achieving comprehensive coverage of all possible fault scenarios becomes exponentially more complex as system size increases.
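
To make the coverage problem concrete, the sketch below counts how many distinct fault sets exist when up to a given number of fault locations fail at once. It is a minimal illustration, assuming each location has a single fault type; the function name `fault_set_count` is hypothetical.

```python
from math import comb


def fault_set_count(locations: int, max_weight: int) -> int:
    """Count distinct fault sets with 1..max_weight simultaneous faults.

    Assumes one fault type per location; several fault types per
    location would make the count grow even faster.
    """
    if locations < 0 or max_weight < 0:
        raise ValueError("locations and max_weight must be non-negative")
    return sum(comb(locations, k) for k in range(1, max_weight + 1))


if __name__ == "__main__":
    # Full coverage (all weights) is 2**n - 1 and doubles with each location.
    for n in (10, 20, 40):
        print(n, fault_set_count(n, 2), fault_set_count(n, n))
```

Restricting a campaign to low-weight fault sets keeps the count polynomial in the number of locations, which is one reason exhaustive coverage is usually traded for targeted or sampled coverage.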

Reproducibility issues plague hardware fault injection testing in quantum systems. The probabilistic nature of quantum mechanics means that identical fault injection procedures may produce different outcomes across test runs. This variability complicates the certification process, as it becomes difficult to establish deterministic relationships between injected faults and system responses.
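
Because individual runs are probabilistic, results are usually reported as a failure rate with an uncertainty band rather than as a single outcome. The sketch below computes a Wilson score interval for a logical failure rate estimated from repeated shots. It is one common choice rather than a prescribed method, and the function name is hypothetical.

```python
from math import sqrt


def wilson_interval(failures: int, shots: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a failure probability.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if shots <= 0 or not 0 <= failures <= shots:
        raise ValueError("need shots > 0 and 0 <= failures <= shots")
    p = failures / shots
    denom = 1 + z * z / shots
    centre = (p + z * z / (2 * shots)) / denom
    half = z * sqrt(p * (1 - p) / shots + z * z / (4 * shots * shots)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


if __name__ == "__main__":
    low, high = wilson_interval(failures=12, shots=1000)
    print(f"logical failure rate in [{low:.4f}, {high:.4f}]")
```

Unlike the simple normal approximation, this interval stays informative when zero failures are observed, which is common when a code is working well.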

The lack of standardized methodologies further hampers progress in this field. Unlike classical computing, where fault injection techniques have matured over decades, quantum computing lacks established protocols for fault characterization, injection methods, and success metrics. This absence of standardization makes it challenging to compare results across different research groups and hardware platforms.

Simulation-hardware gaps present additional complications. While software simulations offer controlled environments for fault testing, they often fail to capture the full complexity of physical quantum systems. Conversely, hardware-based testing provides realistic conditions but with limited controllability and observability of internal quantum states.

Technical implementation challenges also exist at the hardware level. Precise control over fault injection timing, location, and magnitude requires sophisticated instrumentation that may interfere with the quantum system itself. The trade-off between fault precision and system disturbance remains a significant technical hurdle.

Finally, the interpretation of results poses analytical challenges. Distinguishing between the effects of intentionally injected faults versus background noise requires advanced statistical methods. Furthermore, translating test results into meaningful robustness metrics that can inform practical QEC implementations remains an open research question, complicating certification efforts.
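
One simple statistical baseline for separating injected faults from background noise is a two-proportion z-test. It compares the failure rate of runs with fault injection against a control set without it. The sketch below assumes independent shots and uses hypothetical names, and it is a starting point rather than a complete analysis.

```python
from math import erfc, sqrt


def injected_vs_baseline(fail_base: int, n_base: int,
                         fail_inj: int, n_inj: int) -> tuple[float, float]:
    """Return (z, one-sided p-value) for 'injected runs fail more often'."""
    if n_base <= 0 or n_inj <= 0:
        raise ValueError("both run counts must be positive")
    p_base = fail_base / n_base
    p_inj = fail_inj / n_inj
    pooled = (fail_base + fail_inj) / (n_base + n_inj)
    se = sqrt(pooled * (1 - pooled) * (1 / n_base + 1 / n_inj))
    if se == 0:
        return 0.0, 1.0  # no variation at all: nothing to distinguish
    z = (p_inj - p_base) / se
    p_value = 0.5 * erfc(z / sqrt(2))  # upper tail of the standard normal
    return z, p_value


if __name__ == "__main__":
    z, p = injected_vs_baseline(fail_base=10, n_base=1000, fail_inj=40, n_inj=1000)
    print(f"z = {z:.2f}, one-sided p = {p:.2e}")
```

A small p-value indicates that the extra failures are unlikely to come from background noise alone. Drifting hardware noise between the two run sets would violate the independence assumption, so interleaving control and injection runs is advisable.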

Current Hardware Fault Injection Techniques

01 Hardware fault injection techniques for robustness testing

Hardware fault injection involves deliberately introducing faults into hardware systems to evaluate their robustness and failure response. These techniques can simulate various hardware failures such as power fluctuations, signal interference, or component malfunctions to test how systems respond under adverse conditions. By systematically injecting faults, engineers can identify vulnerabilities, validate error detection mechanisms, and improve overall system resilience against hardware failures.

- Hardware-based fault injection techniques: Hardware-based fault injection involves introducing physical faults into hardware components to test system robustness. These techniques include voltage glitching, clock manipulation, and electromagnetic interference to simulate real-world hardware failures. By subjecting hardware to controlled fault conditions, developers can evaluate how systems respond to unexpected failures and improve their resilience against hardware malfunctions.

- Simulation-based fault testing methodologies: Simulation-based approaches allow for testing hardware robustness without physical intervention. These methodologies use software models to simulate various fault scenarios, enabling comprehensive testing of hardware designs before physical implementation. Simulation tools can inject faults at different abstraction levels, from gate-level to system-level, providing insights into potential vulnerabilities and failure modes in hardware designs.

- Automated fault injection frameworks: Automated frameworks streamline the process of fault injection testing by providing systematic approaches to test case generation, execution, and analysis. These frameworks can automatically identify critical test points, apply various fault models, and collect comprehensive test results. By automating the fault injection process, developers can achieve more thorough testing coverage while reducing the time and effort required for robustness evaluation.

- Security-focused fault injection testing: Security-focused fault injection specifically targets potential vulnerabilities that could be exploited by attackers. These techniques simulate fault-based attacks such as voltage glitching, clock manipulation, and electromagnetic pulse injection, and are often combined with side-channel techniques such as power and timing analysis, to evaluate the security robustness of hardware systems. By identifying security weaknesses through controlled fault injection, developers can implement countermeasures to protect against malicious exploitation of hardware vulnerabilities.

- Real-time fault detection and recovery mechanisms: Real-time fault detection and recovery mechanisms focus on identifying hardware faults during operation and implementing appropriate recovery strategies. These approaches include redundancy techniques, error detection codes, and self-healing mechanisms that can maintain system functionality despite hardware failures. Testing these mechanisms involves injecting faults during operation to evaluate how effectively the system can detect, isolate, and recover from hardware failures without compromising critical functions.
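
The automated-framework idea from the list above can be sketched as a small campaign runner that enumerates faults over targets, fault kinds, and QEC cycles, then tallies outcomes per kind. This is a minimal sketch under stated assumptions: the `Fault` fields, the label strings, and the `execute` callback (which stands in for whatever actually drives the hardware or simulator) are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from itertools import product
from typing import Callable, Iterable, Iterator


@dataclass(frozen=True)
class Fault:
    target: str  # hypothetical label, e.g. a qubit or control line
    kind: str    # hypothetical label, e.g. "bit_flip" or "readout_stuck"
    cycle: int   # QEC cycle at which the fault is injected


def enumerate_faults(targets: Iterable[str], kinds: Iterable[str],
                     cycles: int) -> Iterator[Fault]:
    """Yield every (target, kind, cycle) combination exactly once."""
    for target, kind, cycle in product(targets, kinds, range(cycles)):
        yield Fault(target, kind, cycle)


def run_campaign(faults: Iterable[Fault],
                 execute: Callable[[Fault], bool]) -> dict[str, float]:
    """Return, per fault kind, the fraction of runs where the logical state survived.

    `execute` is supplied by the caller and returns True on survival.
    """
    total: Counter = Counter()
    survived: Counter = Counter()
    for fault in faults:
        total[fault.kind] += 1
        if execute(fault):
            survived[fault.kind] += 1
    return {kind: survived[kind] / total[kind] for kind in total}


if __name__ == "__main__":
    # Stub backend for illustration only: pretend stuck readout always defeats the code.
    stub = lambda f: f.kind != "readout_stuck"
    faults = enumerate_faults(["q0", "q1"], ["bit_flip", "readout_stuck"], cycles=3)
    print(run_campaign(faults, stub))
```

Keeping fault enumeration separate from execution lets the same campaign definition run against a simulator first and against hardware later.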

02 Simulation-based fault injection testing methodologies

Simulation-based approaches allow for controlled and repeatable fault injection testing without risking damage to physical hardware. These methodologies use software models to emulate hardware behavior and introduce various fault scenarios. Advanced simulation frameworks can model complex systems and predict their responses to different types of faults, enabling comprehensive robustness testing during early development stages when hardware modifications are less costly.

03 Automated fault injection testing systems

Automated systems for fault injection testing streamline the process of evaluating hardware robustness by systematically introducing faults and analyzing responses without manual intervention. These systems can execute comprehensive test suites that cover numerous fault scenarios, collect performance metrics, and generate detailed reports on system behavior under fault conditions. Automation increases testing efficiency, improves coverage, and enables more thorough validation of hardware robustness.

04 Security validation through fault injection

Fault injection techniques are increasingly used to validate security mechanisms in hardware systems by attempting to bypass security controls through deliberately induced faults. This approach helps identify vulnerabilities that could be exploited in fault-based attacks such as power glitching, clock manipulation, or electromagnetic interference. By understanding how security features respond to hardware faults, designers can implement more robust protection mechanisms against physical attacks.

05 Real-time fault detection and recovery mechanisms

Hardware systems can be designed with built-in mechanisms to detect faults in real-time and initiate appropriate recovery procedures. These mechanisms include error detection circuits, watchdog timers, redundant components, and self-testing routines that continuously monitor system health. When faults are detected, the system can implement various recovery strategies such as component isolation, reconfiguration, or graceful degradation to maintain critical functionality despite hardware failures.

Leading Organizations in QEC Robustness Certification

Hardware Fault Injection Testing for QEC robustness certification is in an emerging growth phase, with the market expanding as quantum error correction becomes critical for practical quantum computing. The technology is approaching early maturity, with key players demonstrating varied capabilities. Huawei, NVIDIA, and Intel lead commercial development with robust testing frameworks, while academic institutions like Harbin Institute of Technology and Beihang University contribute foundational research. TSMC and SMIC provide essential semiconductor manufacturing expertise. Microsoft and Google focus on software-hardware integration approaches. The competitive landscape is characterized by collaboration between hardware manufacturers, research institutions, and software developers, with increasing investment as quantum computing approaches practical implementation.

NXP USA, Inc.

Technical Solution: NXP has developed specialized Hardware Fault Injection Testing platforms focused on practical QEC implementation in embedded systems. Their approach combines electromagnetic fault injection (EMFI) techniques with laser-based fault induction methods to comprehensively test quantum error correction implementations. NXP's testing framework includes custom hardware that can precisely target specific components of quantum control electronics to evaluate their resilience against environmental disturbances. Their methodology incorporates real-time monitoring systems that can detect and characterize fault propagation through quantum error correction circuits, providing valuable data for robustness certification. NXP has pioneered techniques for testing the interface between classical control electronics and quantum processing units, a critical vulnerability point in many quantum computing implementations. Their certification process includes standardized test suites that evaluate QEC performance against common fault models, including timing errors, voltage glitches, and electromagnetic interference patterns typically encountered in practical deployment scenarios.

Strengths: NXP's expertise in secure embedded systems provides practical insights into real-world fault scenarios that might affect quantum computing systems. Their hardware-based approach offers high-fidelity testing of actual physical error mechanisms. Weaknesses: Their solutions may be more focused on the classical control electronics rather than the quantum processing elements themselves, potentially missing quantum-specific error modes.

Microsoft Technology Licensing LLC

Technical Solution: Microsoft has developed the Q# Fault Injection Framework specifically designed for QEC robustness certification. Their approach integrates with their Quantum Development Kit to provide end-to-end testing capabilities for quantum error correction implementations. Microsoft's system employs topological quantum error correction models that can be systematically stressed through controlled fault injection at both the physical and logical qubit levels. Their testing methodology incorporates automated fault pattern generation based on theoretical error models derived from physical qubit implementations. Microsoft has pioneered techniques for evaluating the performance of surface code implementations under various noise conditions, including correlated errors that are particularly challenging for QEC systems. Their certification framework includes comprehensive metrics for quantifying error correction thresholds and logical error rates under different fault scenarios. Microsoft collaborates with quantum hardware providers to validate their fault injection models against experimental data from actual quantum processors, ensuring the relevance of their certification approach.

Strengths: Microsoft's strong theoretical foundation in topological quantum computing provides deep insights into error correction mechanisms. Their software-centric approach enables rapid iteration and testing of different QEC strategies. Weaknesses: Their testing framework may be optimized primarily for topological quantum computing approaches, potentially offering less support for other quantum computing paradigms.

Key Innovations in QEC Robustness Testing

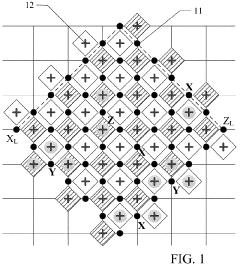

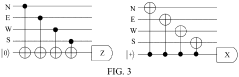

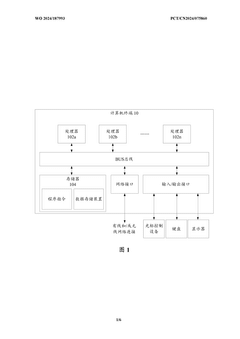

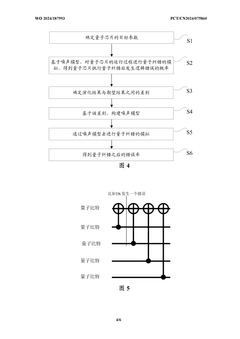

Quantum error correction decoding system and method, fault-tolerant quantum error correction system, and chip

Patent · Active · US11842250B2

Innovation

- A quantum error correction decoding system and method utilizing neural network decoders with multiply accumulate operations on unsigned fixed-point numbers, integrated into an error correction chip, to quickly decode error syndrome information and determine error locations and types in quantum circuits, thereby enabling real-time error correction.

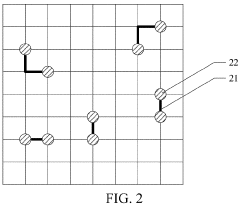

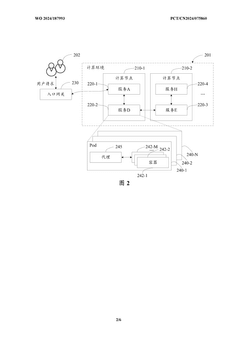

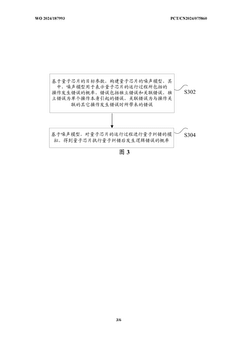

Quantum error correction processing method and apparatus, and computer device

Patent · WO2024187993A1

Innovation

- By constructing a noise model of the quantum chip to represent the probability of independent errors and correlated errors during the operation of the quantum chip, quantum error correction simulations are performed to improve the accuracy and effect of error correction.
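
As a generic illustration of a noise model with both independent and correlated errors (not the method claimed in the patent), the sketch below samples bit-flip patterns on a line of qubits. The parameters `p_independent` and `p_correlated`, and the nearest-neighbour pairing, are assumptions chosen for simplicity.

```python
import random


def sample_errors(n_qubits: int, p_independent: float, p_correlated: float,
                  rng: random.Random) -> list[int]:
    """Sample one bit-flip pattern (1 = flipped) on a line of qubits.

    Each qubit flips on its own with probability p_independent; each
    adjacent pair additionally flips together with probability p_correlated.
    """
    flips = [0] * n_qubits
    for q in range(n_qubits):
        if rng.random() < p_independent:
            flips[q] ^= 1
    for q in range(n_qubits - 1):
        if rng.random() < p_correlated:
            flips[q] ^= 1
            flips[q + 1] ^= 1
    return flips


if __name__ == "__main__":
    rng = random.Random(1234)  # fixed seed so a simulation run is repeatable
    for _ in range(3):
        print(sample_errors(7, p_independent=0.05, p_correlated=0.02, rng=rng))
```

Feeding such samples into a decoder under test shows how much correlated pair errors degrade performance relative to a purely independent model at the same average flip rate.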

Standardization Efforts for QEC Certification

The standardization of Quantum Error Correction (QEC) certification represents a critical frontier in quantum computing's maturation toward practical applications. Currently, several international bodies are working to establish comprehensive frameworks for validating QEC implementations through hardware fault injection testing. The IEEE Quantum Computing Working Group has initiated the P1913 standard specifically addressing "Software-Defined Quantum Communication," which includes protocols for certifying error correction capabilities under various fault conditions. Similarly, the International Organization for Standardization (ISO) and the IEC have formed the joint technical committee ISO/IEC JTC 3 (Quantum technologies) to develop quantum computing standards, with a dedicated workstream on error correction benchmarking methodologies.

These standardization efforts are converging around several key principles for QEC certification. First is the establishment of standardized fault models that accurately represent real-world quantum hardware failure modes, including decoherence, gate errors, and measurement inaccuracies. Second is the definition of minimum performance thresholds that QEC implementations must achieve under controlled fault injection to be considered robust. The National Institute of Standards and Technology (NIST) has proposed a preliminary framework requiring QEC systems to demonstrate logical error rates at least two orders of magnitude lower than physical error rates under specified fault conditions.
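
The threshold criterion described above (logical error rate at least two orders of magnitude below the physical error rate) reduces to a one-line comparison. The sketch below is illustrative only: the function name and the `orders` parameter are hypothetical, and a real certification check would also account for statistical uncertainty in both rates.

```python
def meets_suppression_target(p_physical: float, p_logical: float,
                             orders: float = 2.0) -> bool:
    """True if p_logical is at least `orders` orders of magnitude below p_physical."""
    if not 0 < p_physical <= 1 or not 0 <= p_logical <= 1:
        raise ValueError("error rates must be probabilities, with p_physical > 0")
    return p_logical * 10 ** orders <= p_physical


if __name__ == "__main__":
    print(meets_suppression_target(p_physical=1e-3, p_logical=5e-6))  # suppressed 200x
    print(meets_suppression_target(p_physical=1e-3, p_logical=5e-5))  # suppressed only 20x
```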

Industry consortia are also making significant contributions to standardization efforts. The Quantum Economic Development Consortium (QED-C) has established a technical advisory committee specifically focused on QEC certification standards, bringing together hardware manufacturers, software developers, and end users to ensure standards meet diverse stakeholder needs. Their recent publication, "Benchmarking Guidelines for Quantum Error Correction," proposes a tiered certification system ranging from Level 1 (basic error detection) to Level 5 (fault-tolerant quantum computation).

Academic institutions are collaborating with industry partners to develop open-source certification toolkits that implement these emerging standards. The Quantum Error Correction Testing and Certification Toolkit (QECTCT), developed through a multi-university initiative, provides standardized fault injection patterns and analysis tools compatible with major quantum computing platforms. This toolkit has been adopted by several national laboratories as part of their quantum technology evaluation processes.

Looking forward, the roadmap for QEC certification standardization includes the development of automated certification processes by 2025, international harmonization of standards by 2027, and the establishment of accredited certification authorities by 2030. These efforts recognize that as quantum computing transitions from research to commercial applications, standardized certification will be essential for building trust in quantum systems and enabling their deployment in critical applications.

These standardization efforts are converging around several key principles for QEC certification. First is the establishment of standardized fault models that accurately represent real-world quantum hardware failure modes, including decoherence, gate errors, and measurement inaccuracies. Second is the definition of minimum performance thresholds that QEC implementations must achieve under controlled fault injection to be considered robust. The National Institute of Standards and Technology (NIST) has proposed a preliminary framework requiring QEC systems to demonstrate logical error rates at least two orders of magnitude lower than physical error rates under specified fault conditions.

Industry consortia are also making significant contributions to standardization efforts. The Quantum Economic Development Consortium (QED-C) has established a technical advisory committee specifically focused on QEC certification standards, bringing together hardware manufacturers, software developers, and end users to ensure standards meet diverse stakeholder needs. Their recent publication, "Benchmarking Guidelines for Quantum Error Correction," proposes a tiered certification system ranging from Level 1 (basic error detection) to Level 5 (fault-tolerant quantum computation).

Academic institutions are collaborating with industry partners to develop open-source certification toolkits that implement these emerging standards. The Quantum Error Correction Testing and Certification Toolkit (QECTCT), developed through a multi-university initiative, provides standardized fault injection patterns and analysis tools compatible with major quantum computing platforms. This toolkit has been adopted by several national laboratories as part of their quantum technology evaluation processes.

Risk Assessment Framework for Quantum Systems

A comprehensive Risk Assessment Framework for Quantum Systems is a critical component in ensuring the reliability and security of quantum computing technologies. As quantum error correction (QEC) becomes increasingly vital for practical quantum computing, standardized methodologies for evaluating system resilience against hardware faults are essential.

A robust risk assessment framework must incorporate multiple layers of evaluation, beginning with the identification of potential fault vectors specific to quantum hardware. These include environmental disturbances (magnetic field fluctuations, thermal noise), manufacturing defects in qubits, control system failures, and intentional adversarial attacks. Each vector requires distinct testing protocols within the framework to ensure comprehensive coverage.

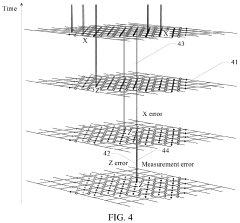

The framework should establish quantifiable metrics for risk evaluation, including fault tolerance thresholds, error propagation rates, and recovery success probabilities under various fault scenarios. These metrics must be calibrated against the specific quantum error correction codes being implemented, as different codes exhibit varying resilience to particular fault types. For instance, surface codes demonstrate strong resistance to local errors but may be vulnerable to correlated noise patterns.
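As one way to make a metric such as recovery success probability quantifiable, the sketch below estimates it from fault-injection trial counts and attaches a Wilson score confidence interval. The trial counts and the choice of a 95% interval (z = 1.96) are assumptions for illustration; the text above does not prescribe a particular statistical method.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives roughly 95%)."""
    if trials <= 0 or not (0 <= successes <= trials):
        raise ValueError("need trials > 0 and 0 <= successes <= trials")
    p_hat = successes / trials
    denom = 1.0 + z * z / trials
    centre = (p_hat + z * z / (2 * trials)) / denom
    half_width = z * math.sqrt(
        p_hat * (1.0 - p_hat) / trials + z * z / (4 * trials * trials)
    ) / denom
    # Clamp to [0, 1] to guard against rounding at the extremes.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


if __name__ == "__main__":
    # Hypothetical campaign: 1000 injected faults, 990 successful recoveries.
    low, high = wilson_interval(successes=990, trials=1000)
    print(f"recovery success probability: 0.990, 95% interval [{low:.4f}, {high:.4f}]")
```

Reporting the lower bound rather than the point estimate keeps a small test campaign from overstating resilience.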

Implementation of the framework necessitates a multi-tiered approach to testing. At the foundational level, simulation-based testing provides controlled environments for evaluating theoretical responses to injected faults. The intermediate tier involves controlled hardware fault injection in laboratory settings, where precise fault parameters can be manipulated. The advanced tier encompasses real-world operational testing under varying environmental conditions to validate performance in practical deployment scenarios.
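A minimal sketch of the simulation tier is shown below. It uses a classical 5-bit repetition code with majority-vote decoding as a simplified stand-in for a real QEC code (it is not a surface code simulation), and compares independent bit flips against a correlated burst fault. All function names and parameter values are hypothetical.

```python
import random


def majority_decode(bits: list[int]) -> int:
    """Decode a repetition codeword by majority vote."""
    return 1 if sum(bits) > len(bits) // 2 else 0


def inject_independent(n: int, p: float, rng: random.Random) -> list[int]:
    """Flip each of n physical bits independently with probability p."""
    return [1 if rng.random() < p else 0 for _ in range(n)]


def inject_burst(n: int, p_burst: float, width: int, rng: random.Random) -> list[int]:
    """With probability p_burst, flip `width` adjacent bits at a random offset."""
    flips = [0] * n
    if rng.random() < p_burst:
        start = rng.randrange(n - width + 1)
        for i in range(start, start + width):
            flips[i] = 1
    return flips


def logical_error_rate(inject, trials: int, rng: random.Random) -> float:
    """Fraction of trials in which an encoded logical 0 decodes to 1."""
    failures = 0
    for _ in range(trials):
        # The codeword is all zeros, so the received word equals the flip pattern.
        failures += majority_decode(inject(rng))
    return failures / trials


if __name__ == "__main__":
    rng = random.Random(1234)
    n, trials = 5, 20_000
    # Both fault models inject 0.25 flipped bits per trial on average.
    independent = logical_error_rate(lambda r: inject_independent(n, 0.05, r), trials, rng)
    burst = logical_error_rate(lambda r: inject_burst(n, 0.25 / 3, 3, r), trials, rng)
    print(f"independent flips: {independent:.4f}")
    print(f"correlated burst:  {burst:.4f}")
```

With the same average number of flipped bits, the burst model should yield a markedly higher logical error rate, because every burst of width 3 already defeats a distance-5 majority vote. This is the toy analogue of the observation that codes robust to local errors can be vulnerable to correlated noise.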

Certification standards within the framework must define clear thresholds for acceptable risk levels based on application domains. Quantum systems for financial applications may require stricter fault tolerance than those used for scientific simulation. These standards should align with emerging international guidelines for quantum computing reliability while acknowledging the rapidly evolving nature of the technology.

The framework must also incorporate temporal considerations, recognizing that quantum systems may exhibit drift in performance characteristics over time. Regular recertification protocols should be established, with frequency determined by system stability metrics and operational criticality. This dynamic approach ensures continued reliability as systems age or undergo modifications.
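One way such a recertification schedule could be parameterized is sketched below. The scaling rule, the policy defaults, and the three-level criticality scale are all hypothetical choices made for illustration; the text above states only that frequency should depend on stability metrics and operational criticality.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecertPolicy:
    """Hypothetical policy parameters, not drawn from any standard."""
    base_interval_days: float = 180.0  # interval for a stable, low-criticality system
    min_interval_days: float = 7.0     # never schedule more often than this
    drift_tolerance: float = 0.10      # relative drift per 30 days treated as nominal


def recert_interval_days(relative_drift_per_30d: float,
                         criticality: int,
                         policy: RecertPolicy = RecertPolicy()) -> float:
    """Shorten the interval as measured drift or operational criticality increases.

    criticality: 1 (low), 2 (medium), 3 (high).
    """
    if relative_drift_per_30d < 0:
        raise ValueError("drift must be non-negative")
    if criticality not in (1, 2, 3):
        raise ValueError("criticality must be 1, 2, or 3")
    # Drift within tolerance does not shorten the interval.
    drift_factor = max(1.0, relative_drift_per_30d / policy.drift_tolerance)
    interval = policy.base_interval_days / (criticality * drift_factor)
    return max(policy.min_interval_days, interval)


if __name__ == "__main__":
    print(recert_interval_days(relative_drift_per_30d=0.05, criticality=1))
    print(recert_interval_days(relative_drift_per_30d=0.20, criticality=2))
```

The drift input here stands for whatever stability metric a deployment tracks, for example the relative change in measured logical error rate between calibration runs.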

Finally, the framework should provide clear documentation requirements for risk assessment results, enabling transparent evaluation by stakeholders and regulatory bodies. This documentation creates an auditable trail of system resilience testing that supports broader adoption of quantum technologies in sensitive application domains.
