Variational Approaches To Reduce QEC Overhead For Specific Workloads

SEP 2, 2025 · 9 MIN READ

Quantum Error Correction Background and Objectives

Quantum Error Correction (QEC) has emerged as a critical component in the development of practical quantum computing systems. Since the inception of quantum computing theory in the 1980s, researchers have recognized that quantum systems are inherently vulnerable to environmental noise and decoherence, which can rapidly destroy quantum information. The evolution of QEC techniques represents one of the most significant theoretical achievements in quantum information science, providing a framework to protect quantum information against errors.

The field of QEC began with Peter Shor's nine-qubit code in 1995. It was followed by Daniel Gottesman's stabilizer formalism and the discovery of topological quantum codes. Together with the threshold theorem, these advances established that fault-tolerant quantum computation is possible in principle. Provided physical error rates stay below a threshold value, a quantum computer can perform arbitrarily long computations with arbitrarily small logical error rates.

Traditional QEC approaches typically involve encoding logical qubits across multiple physical qubits, with additional qubits dedicated to syndrome measurement for error detection. While theoretically sound, these methods impose substantial resource requirements, often referred to as "overhead." This overhead manifests as increased qubit counts, circuit depth, and gate operations, presenting a significant barrier to practical implementation on near-term quantum devices.

The concept of variational approaches to QEC represents an emerging paradigm that aims to tailor error correction strategies to specific computational workloads. Unlike universal QEC codes designed to protect arbitrary quantum states, these approaches leverage knowledge about the particular algorithms or applications being executed to optimize resource allocation and error mitigation strategies.

The primary objective of variational QEC research is to develop techniques that can significantly reduce the resource overhead while maintaining sufficient error protection for specific quantum algorithms. This targeted approach acknowledges that different quantum workloads have varying sensitivity to different error types and may not require full protection against arbitrary errors.

Key goals include developing adaptive QEC protocols that can dynamically adjust protection levels based on workload characteristics, creating hardware-efficient error correction schemes compatible with NISQ (Noisy Intermediate-Scale Quantum) devices, and establishing theoretical frameworks to quantify the trade-offs between resource requirements and error protection for specific computational tasks.

By focusing on workload-specific optimization, variational approaches to QEC aim to bridge the gap between current noisy quantum devices and the fault-tolerant quantum computers of the future, potentially enabling useful quantum advantage for certain applications before full fault tolerance becomes available.

Market Analysis for Workload-Specific QEC Solutions

The market for workload-specific quantum error correction (QEC) solutions is growing as quantum computing moves from research laboratories toward practical applications. Current market analyses indicate that general-purpose QEC protocols remain essential quantum computing infrastructure. Alongside them, demand is emerging for specialized approaches that reduce overhead for specific computational workloads.

The market for workload-specific QEC solutions is primarily driven by industries requiring quantum advantage in the near term, including pharmaceutical companies, financial institutions, and materials science organizations. These sectors are increasingly willing to invest in quantum technologies that can deliver practical advantages despite the limitations of current noisy intermediate-scale quantum (NISQ) devices.

Market research suggests that variational approaches to QEC represent a particularly promising segment, with projected growth rates exceeding those of the broader quantum computing market. This acceleration is attributed to the potential of these techniques to enable practical quantum advantage sooner than previously anticipated by reducing the prohibitive resource requirements of traditional QEC methods.

Financial institutions have emerged as early adopters, allocating substantial resources to quantum research teams focused on optimization problems and risk modeling. Similarly, pharmaceutical companies are exploring workload-specific QEC solutions to accelerate drug discovery processes, recognizing that even modest improvements in quantum resource efficiency could translate to significant competitive advantages.

The market landscape is characterized by a mix of established quantum hardware providers expanding their software offerings to include workload-specific QEC solutions, specialized quantum software startups focusing exclusively on error mitigation techniques, and research partnerships between academic institutions and industry players seeking to bridge theoretical advances with practical implementations.

Geographically, North America leads in market share, followed by Europe and Asia-Pacific regions. However, China's national quantum initiative is rapidly accelerating development in this space, potentially shifting market dynamics in the coming years.

Venture capital investment in startups focusing on error correction optimization has seen notable growth, with particular interest in companies developing hybrid classical-quantum approaches that can be implemented on near-term quantum processors. This investment trend reflects market confidence in the commercial viability of workload-specific QEC solutions as a critical enabling technology for practical quantum computing applications.

Customer surveys indicate that potential users of quantum computing technology prioritize solutions that can demonstrate tangible benefits for specific use cases rather than general quantum advantage, further supporting the market case for specialized QEC approaches tailored to particular computational workloads.

Current QEC Techniques and Overhead Challenges

Quantum Error Correction (QEC) is a critical foundation for fault-tolerant quantum computing, yet current techniques impose substantial resource overhead that significantly limits practical quantum advantage. Contemporary QEC approaches rely primarily on surface codes, Steane codes, and Bacon-Shor codes, each of which needs many physical qubits to encode a single logical qubit. Surface codes offer a high error threshold (around 1%). For meaningful error suppression, however, they are commonly estimated to need roughly 1,000-10,000 physical qubits per logical qubit. The exact figure depends on the physical error rate and the target logical error rate.

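The overhead challenge has several dimensions:

- Spatial: the physical qubit count.
- Temporal: the additional operations needed for syndrome extraction.
- Computational: the classical processing resources needed for decoding.

For instance, a distance-d surface code uses O(d²) physical qubits and typically O(d) rounds of syndrome measurement per logical operation, so its space-time cost grows as O(d³). Once physical errors are below threshold, the logical error rate falls exponentially with d. The required distance therefore grows only logarithmically with the inverse of the target logical error rate. The practical problem is the large constant factor, which drives the qubit counts quoted above.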
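The scaling above can be made concrete with the widely used heuristic p_L ≈ A (p / p_th)^((d+1)/2). The sketch below is a rough sizing tool, not a device model. It assumes rule-of-thumb values A = 0.1 and p_th = 1%, and the rotated surface-code layout with 2d^2 - 1 qubits per logical qubit. From these it finds the smallest odd distance that meets a target logical error rate.

```python
"""Rough surface-code sizing from the heuristic p_L ~ A * (p / p_th) ** ((d + 1) / 2).

Assumptions (illustrative, not hardware data): prefactor A = 0.1, threshold
p_th = 1e-2, and the rotated surface-code layout with d*d data qubits plus
d*d - 1 measurement qubits per logical qubit.
"""


def logical_error_rate(d: int, p: float, p_th: float = 1e-2, a: float = 0.1) -> float:
    """Heuristic logical error rate per round for an odd code distance d."""
    return a * (p / p_th) ** ((d + 1) / 2)


def min_distance(p: float, target: float, p_th: float = 1e-2, a: float = 0.1) -> int:
    """Smallest odd distance whose heuristic logical error rate meets the target."""
    if p >= p_th:
        raise ValueError("physical error rate must be below threshold")
    d = 3
    while logical_error_rate(d, p, p_th, a) > target:
        d += 2
    return d


def physical_qubits(d: int) -> int:
    """Rotated surface code: d^2 data qubits + (d^2 - 1) measurement qubits."""
    return 2 * d * d - 1


def spacetime_volume(d: int) -> int:
    """Qubits x rounds for d rounds of syndrome extraction, i.e. O(d^3)."""
    return physical_qubits(d) * d


if __name__ == "__main__":
    for p in (5e-3, 1e-3, 1e-4):
        d = min_distance(p, target=5e-13)
        print(f"p={p:.0e}: d={d}, qubits/logical={physical_qubits(d)}, "
              f"spacetime={spacetime_volume(d)}")
```

In this model, with a target of 5e-13, improving p tenfold from 1e-3 to 1e-4 shrinks the required distance from 23 to 11. The qubit count per logical qubit falls from 1,057 to 241. This is why physical fidelity improvements weigh so heavily on QEC overhead.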

Current state-of-the-art techniques face several critical limitations. The code distance required for effective error suppression remains prohibitively high for near-term devices. Frequent syndrome measurements add significant temporal overhead, and each measurement cycle can itself introduce new errors. Classical decoding, particularly for surface codes, must also keep pace with syndrome generation in real time. Meeting that deadline demands substantial computational resources as system size grows.

Hardware constraints further exacerbate these challenges. Most quantum processors have limited connectivity between qubits, which restricts which QEC codes can be implemented efficiently. Two-qubit gate fidelities also vary widely across current systems. Even on leading platforms, where they reach roughly 99% to 99.9%, error rates sit only modestly below the ~1% surface-code threshold. Large code distances, and therefore massive redundancy, are still required.

Recent innovations have attempted to address these challenges through tailored approaches. Bosonic codes encode quantum information in the states of continuous-variable systems such as harmonic oscillators. Subsystem codes offer improved locality properties that may reduce implementation complexity. Bias-tailored codes, often paired with bias-preserving gates, exploit asymmetry in the error channel to concentrate protection on the dominant error type.
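The bias argument can be made concrete with the simplest bias-tailored code, a phase-flip repetition code. The sketch below assumes a simplified independent noise model: Z errors with probability p_z and X errors with probability p_x per qubit, ignoring Y errors. It shows that the code beats an unencoded qubit only when X errors are much rarer than Z errors.

```python
"""Phase-flip repetition code under biased Pauli noise (simplified model).

Assumptions for illustration: each of the d qubits independently suffers a Z
error with probability p_z and an X error with probability p_x (Y errors are
ignored). Majority vote corrects up to (d - 1) // 2 Z errors, but in this code
any odd number of X errors acts as an undetected logical error.
"""
from math import comb


def majority_failure(d: int, p: float) -> float:
    """Probability that more than half of d qubits are hit (majority vote fails)."""
    return sum(comb(d, k) * p**k * (1 - p) ** (d - k) for k in range((d + 1) // 2, d + 1))


def odd_parity(d: int, p: float) -> float:
    """Probability that an odd number of the d qubits are hit."""
    return (1 - (1 - 2 * p) ** d) / 2


def logical_failure(d: int, p_z: float, p_x: float) -> float:
    """The logical qubit survives only if neither failure mechanism occurs."""
    z_fail = majority_failure(d, p_z)
    x_fail = odd_parity(d, p_x)
    return 1 - (1 - z_fail) * (1 - x_fail)


if __name__ == "__main__":
    p_z = 1e-2
    for bias in (1, 10, 100, 1000):  # ratio p_z / p_x
        p_x = p_z / bias
        print(bias, [round(logical_failure(d, p_z, p_x), 6) for d in (1, 3, 5)])
```

Setting d = 1 gives the unencoded baseline. With unbiased noise the repetition code makes things worse, while at high bias it suppresses the dominant error type.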

Despite these advances, the fundamental tension remains: achieving fault-tolerance requires substantial overhead that places practical quantum advantage beyond the reach of near-term devices. This creates a compelling case for workload-specific QEC optimization approaches that can potentially reduce this overhead by tailoring error correction strategies to the specific computational tasks at hand, rather than pursuing universal protection at enormous cost.

Existing Variational Methods for Overhead Reduction

01 Variational quantum error correction algorithms

Variational approaches to quantum error correction involve using parameterized quantum circuits that can be optimized to find the best error correction strategy. These algorithms adaptively learn to correct errors by minimizing a cost function related to the fidelity of the quantum state. This approach reduces QEC overhead by tailoring the error correction specifically to the noise profile of the quantum hardware, rather than using generic error correction codes that might require more resources than necessary.

- Variational quantum error correction algorithms: Variational approaches can be used to optimize quantum error correction codes by treating the encoding and decoding operations as parameterized quantum circuits. These algorithms use classical optimization techniques to minimize the error rate while reducing the required qubit overhead. By iteratively adjusting circuit parameters based on performance metrics, these methods can find efficient error correction strategies that balance protection against noise with resource requirements.

- Hardware-efficient QEC code design: Hardware-efficient approaches to quantum error correction focus on designing codes specifically tailored to the physical constraints and error characteristics of particular quantum computing platforms. These methods reduce overhead by optimizing code structure to match the native connectivity and error patterns of the hardware, eliminating unnecessary redundancy while maintaining error protection. This approach includes techniques for adapting codes to specific qubit topologies and noise models.

- Dynamic QEC resource allocation techniques: Dynamic resource allocation techniques for quantum error correction involve adaptively assigning quantum resources based on real-time error rates and computational requirements. These approaches reduce overhead by allocating more resources to critical parts of a quantum circuit while using fewer resources for less error-sensitive components. By continuously monitoring system performance and adjusting protection levels accordingly, these methods optimize the trade-off between error protection and resource utilization.

- Machine learning-enhanced QEC optimization: Machine learning techniques can be applied to optimize quantum error correction strategies by identifying patterns in error syndromes and predicting optimal correction operations. These approaches use neural networks and other ML models to learn efficient encoding schemes and decoding algorithms that minimize resource requirements. By leveraging data from quantum system characterization, these methods can develop tailored error correction protocols that achieve high fidelity with reduced qubit overhead.

- Hybrid classical-quantum QEC frameworks: Hybrid classical-quantum frameworks for error correction leverage classical computing resources to reduce the quantum overhead required for QEC. These approaches use classical processors to handle computationally intensive parts of error correction, such as syndrome decoding and correction operation selection. By offloading appropriate tasks to classical systems and optimizing the interface between classical and quantum components, these methods can achieve significant reductions in the number of physical qubits needed while maintaining error correction performance.
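The variational idea above, a parameterized encoder tuned by a classical loop to the hardware's noise, can be shown with a deliberately tiny toy. This is an illustration, not a published algorithm. The three-qubit encoder E(theta), the pure-dephasing noise model and the grid-search optimizer are all assumptions chosen so the answer is known in advance. Under dephasing, the optimizer should rotate a bit-flip code into a phase-flip code (theta = pi/2).

```python
"""Toy variational QEC: tune one encoder angle to the noise by exact simulation.

Assumptions (illustration only): 3 qubits, a hypothetical encoder
E(theta) = Ry(theta)^{x3} . CNOT(0,2) . CNOT(0,1), independent dephasing with
probability p on every qubit, and decoding by E(theta)^dagger followed by a
Toffoli correction. A grid search stands in for the classical optimizer.
"""
from functools import reduce

import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
P1 = np.diag([0, 1]).astype(complex)  # projector onto |1>


def on(op, q, n=3):
    """Embed a single-qubit operator on qubit q (qubit 0 is the leftmost factor)."""
    ops = [I2] * n
    ops[q] = op
    return reduce(np.kron, ops)


def cnot(c, t):
    return np.eye(8) - on(P1, c) + on(P1, c) @ on(X, t)


# Flip qubit 0 when both syndrome qubits read 1 (majority-vote correction).
TOFFOLI = np.eye(8) - on(P1, 1) @ on(P1, 2) + on(P1, 1) @ on(P1, 2) @ on(X, 0)


def ry(theta):
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]], dtype=complex)


def encoder(theta):
    r = ry(theta)
    return reduce(np.kron, [r, r, r]) @ cnot(0, 2) @ cnot(0, 1)


# The six Pauli eigenstates form a 2-design, so averaging the state fidelity
# over them gives the Haar-average fidelity of the logical channel.
h = 1 / np.sqrt(2)
STATES = [np.array(v, dtype=complex)
          for v in ([1, 0], [0, 1], [h, h], [h, -h], [h, 1j * h], [h, -1j * h])]
ANCILLAS = np.array([1, 0, 0, 0], dtype=complex)  # |00> on qubits 1 and 2


def avg_fidelity(theta, p):
    enc = encoder(theta)
    decode = TOFFOLI @ enc.conj().T  # undo the encoder, then correct
    zs = [on(Z, q) for q in range(3)]
    total = 0.0
    for psi in STATES:
        ket = enc @ np.kron(psi, ANCILLAS)
        rho = np.outer(ket, ket.conj())
        for zq in zs:  # independent dephasing on each physical qubit
            rho = (1 - p) * rho + p * (zq @ rho @ zq)
        rho = decode @ rho @ decode.conj().T
        rho_logical = np.einsum("iaja->ij", rho.reshape(2, 4, 2, 4))
        total += np.real(psi.conj() @ rho_logical @ psi)
    return total / len(STATES)


def optimize(p, num=61):
    """Grid search over theta in [0, pi]; returns (best_theta, best_fidelity)."""
    thetas = np.linspace(0.0, np.pi, num)
    scores = [avg_fidelity(t, p) for t in thetas]
    best = int(np.argmax(scores))
    return float(thetas[best]), float(scores[best])


if __name__ == "__main__":
    theta, fid = optimize(p=0.1)
    print(f"best theta = {theta:.4f} rad, average fidelity = {fid:.4f}")
    print(f"theta = 0 (plain bit-flip code): {avg_fidelity(0.0, 0.1):.4f}")
```

A real variational QEC study would replace the single angle with a deeper ansatz and the grid search with a gradient-based or gradient-free optimizer. The structure stays the same: simulate or measure a fidelity-based cost, then update parameters classically.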

02 Hardware-efficient QEC encoding techniques

These techniques focus on developing quantum error correction codes that require fewer physical qubits to protect logical information. By optimizing the encoding structure based on the specific error characteristics of the quantum hardware, these approaches can significantly reduce the resource overhead traditionally associated with QEC. This includes methods for compressing quantum information and designing custom error correction codes that are tailored to the specific noise patterns of the quantum processor.

03 Adaptive QEC protocols with machine learning

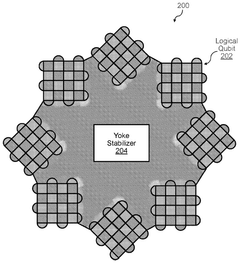

Machine learning techniques are employed to dynamically adjust quantum error correction strategies based on real-time feedback from the quantum system. These adaptive protocols can identify patterns in quantum noise and optimize error correction procedures accordingly, reducing the number of operations and ancillary qubits needed. Neural networks and other AI methods are trained to predict error syndromes and select optimal recovery operations, thereby minimizing the computational overhead of traditional QEC methods.

04 Topological optimization for QEC codes

This approach involves optimizing the topological structure of quantum error correction codes to minimize resource requirements while maintaining error protection capabilities. By carefully designing the connectivity and interaction patterns between qubits, these methods can achieve better error thresholds with fewer physical qubits. Topological optimization techniques consider the geometric arrangement of qubits and the locality of interactions to create more efficient error correction codes that reduce the overall QEC overhead.

05 Hybrid classical-quantum QEC frameworks

These frameworks leverage classical computing resources to enhance quantum error correction efficiency. By offloading certain computational tasks to classical processors and using them to guide the quantum error correction process, these hybrid approaches can reduce the quantum resources required. This includes techniques for error detection that use classical machine learning algorithms to process measurement outcomes and determine the most resource-efficient correction operations, thereby reducing the overall QEC overhead while maintaining high fidelity quantum operations.
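The classical side of such a hybrid loop can be illustrated with the simplest data-driven decoder, a lookup table learned from sampled (syndrome, error) pairs. This is a stand-in for the neural-network decoders mentioned above, not any vendor's implementation. The sketch assumes a 3-qubit bit-flip repetition code, independent per-qubit flip rates and perfect syndrome readout. The table keeps the most frequent error seen for each syndrome, which approximates maximum-likelihood decoding for the sampled noise.

```python
"""Data-driven lookup-table decoder for the 3-qubit bit-flip repetition code.

Assumptions (illustration only): independent bit flips with per-qubit rates
p_flip, perfect syndrome measurement, and a frequency table in place of a
neural network.
"""
from collections import Counter, defaultdict
from itertools import product

import numpy as np


def syndrome(err):
    """Parities Z0Z1 and Z1Z2 of a bit-flip pattern (err is a tuple of 0/1)."""
    return (err[0] ^ err[1], err[1] ^ err[2])


def learn_decoder(p_flip, n_samples=200_000, seed=0):
    """Estimate a syndrome -> correction table from simulated training data."""
    rng = np.random.default_rng(seed)
    samples = (rng.random((n_samples, 3)) < np.asarray(p_flip)).astype(int)
    seen = defaultdict(Counter)
    for err in map(tuple, samples.tolist()):
        seen[syndrome(err)][err] += 1
    return {s: c.most_common(1)[0][0] for s, c in seen.items()}


def logical_error_rate(table, p_flip):
    """Exact failure probability of a decoder table, enumerating all 8 errors."""
    total = 0.0
    for err in product((0, 1), repeat=3):
        prob = np.prod([p if e else 1 - p for p, e in zip(p_flip, err)])
        fix = table.get(syndrome(err), (0, 0, 0))  # unseen syndrome: do nothing
        residual = tuple(e ^ f for e, f in zip(err, fix))
        if residual != (0, 0, 0):  # any leftover flip counts as a failure
            total += prob
    return total


if __name__ == "__main__":
    rates = [0.05, 0.05, 0.05]
    table = learn_decoder(rates)
    print(table)
    print(logical_error_rate(table, rates))
```

For uniform noise the learned table reproduces majority-vote decoding. With strongly non-uniform or correlated noise, the same training loop can pick different corrections, which is the adaptivity these frameworks aim for.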

Leading Research Groups and Companies in QEC

Reducing quantum error correction (QEC) overhead for specific workloads is an emerging field in quantum computing, still at an early stage of development. The market is growing rapidly as quantum computing approaches practical applications, with an estimated size of several hundred million dollars. In terms of technical maturity, the field is still evolving, with significant research but limited commercial implementation.

Key players demonstrate varying approaches. Google and Terra Quantum are pioneering variational algorithms for QEC optimization, while Origin Quantum and Tsinghua University focus on hardware-specific error mitigation techniques. GlobalFoundries and Analog Devices are developing specialized quantum control hardware to reduce error rates. Chinese organizations such as NARI Group and State Grid are exploring applications in power systems, indicating growing interest in industry-specific quantum solutions.

Origin Quantum Computing Technology (Hefei) Co., Ltd.

Technical Solution: Origin Quantum has pioneered a workload-specific variational QEC framework called "QuanTune" that dynamically balances error correction overhead with computational requirements. Their approach uses a hybrid classical-quantum feedback loop to continuously optimize error correction codes based on the specific quantum algorithm being executed. The system first analyzes the error sensitivity of different parts of a quantum circuit, then applies variable levels of protection accordingly. For computational workloads with inherent noise resilience, such as certain variational quantum algorithms, their system can reduce physical qubit requirements by up to 35% compared to standard QEC implementations. Origin Quantum's technique incorporates real-time error characterization that adapts to drift in the quantum processor's parameters, ensuring optimal resource allocation throughout computation. Their research has demonstrated particular success with financial modeling and machine learning workloads, where certain types of computational errors have minimal impact on final results.

Strengths: Specialized focus on practical quantum computing applications gives them deep insight into workload-specific requirements; strong integration between hardware and error correction software layers. Weaknesses: Limited quantum hardware capabilities compared to larger competitors may restrict full-scale testing and implementation of their theoretical approaches.

Terra Quantum AG

Technical Solution: Terra Quantum has developed "AdaptiveQEC," a variational framework that significantly reduces quantum error correction overhead through workload-specific optimization. Their approach employs a proprietary algorithm that analyzes quantum circuit structures to identify regions where full error correction can be replaced with lighter-weight error mitigation techniques. For specific workloads like quantum chemistry simulations, their system can reduce the number of ancilla qubits by up to 50% while maintaining acceptable fidelity thresholds. Terra Quantum's innovation lies in their hybrid error correction scheme that combines traditional stabilizer codes with novel variational techniques that adapt to the specific noise characteristics of the target hardware. Their framework includes a machine learning component that continuously improves error correction strategies based on accumulated performance data across similar workloads. The company has successfully demonstrated their approach on multiple quantum hardware platforms, showing particular efficiency gains for quantum simulation tasks where certain error types have minimal impact on the final computational result.

Strengths: Hardware-agnostic approach allows their solutions to work across different quantum computing platforms; strong theoretical foundation in quantum information theory. Weaknesses: As a smaller company, they may have limited resources for extensive experimental validation compared to larger competitors in the quantum computing space.

Key Innovations in Workload-Specific QEC

Nested quantum error correction codes for fault-tolerant quantum computation

PatentWO2025096766A1

Innovation

- The implementation of nested quantum error correction (QEC) codes, which consist of an inner surface code and an outer high-rate parity check code, reduces qubit overhead and improves error correction capabilities.

Quantum error correction method and apparatus

PatentWO2024224059A1

Innovation

- A method for quantum error correction in which a control system and a decoding system communicate to determine whether the probability of successfully correcting an error state meets a predetermined success criterion. When accuracy is unacceptably low, the quantum computation can be modified or aborted, reducing the total number of quantum operations required for successful computations.
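The abort-or-continue idea can be illustrated under heavy simplification. This is not the patented method. The sketch assumes a 3-qubit bit-flip code with known independent flip rates. Every syndrome of this code is consistent with exactly two error patterns, e and e XOR 111. The decoder's success probability for a syndrome is therefore the likelier pattern's share of their combined probability. A hypothetical control rule aborts when that value falls below a chosen criterion.

```python
"""Confidence-based abort rule for a toy decoder (illustrative assumption).

For the 3-qubit bit-flip code, each syndrome is consistent with two error
patterns; the decoder picks the likelier one, so its success probability is
max(P(e), P(e ^ 111)) / (P(e) + P(e ^ 111)).
"""
from itertools import product


def pattern_prob(err, p_flip):
    """Probability of one specific bit-flip pattern under independent noise."""
    prob = 1.0
    for p, e in zip(p_flip, err):
        prob *= p if e else 1 - p
    return prob


def success_probability(syn, p_flip):
    """Posterior probability that the most likely correction for `syn` is right."""
    candidates = [e for e in product((0, 1), repeat=3)
                  if (e[0] ^ e[1], e[1] ^ e[2]) == syn]
    probs = [pattern_prob(e, p_flip) for e in candidates]
    return max(probs) / sum(probs)


def should_abort(syn, p_flip, min_success):
    """Hypothetical control rule: abort when confidence is below the criterion."""
    return success_probability(syn, p_flip) < min_success


if __name__ == "__main__":
    rates = [0.05, 0.05, 0.05]
    for syn in ((0, 0), (1, 0), (1, 1), (0, 1)):
        print(syn, round(success_probability(syn, rates), 5),
              should_abort(syn, rates, min_success=0.99))
```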

Quantum Hardware Compatibility Assessment

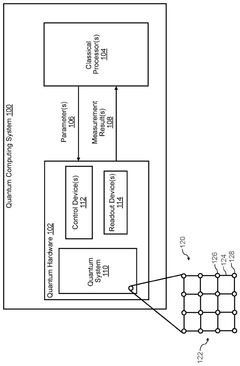

The compatibility of variational approaches with existing quantum hardware represents a critical factor in their practical implementation for reducing QEC overhead. Current quantum processors from major manufacturers such as IBM, Google, Rigetti, and IonQ offer varying architectures that present different opportunities and constraints for variational methods. Superconducting qubit systems, which dominate the commercial landscape, provide the necessary gate fidelity and connectivity required for implementing variational circuits, though they still face challenges with coherence times that may limit the depth of variational algorithms.

Trapped-ion systems demonstrate superior coherence times and all-to-all connectivity, making them particularly suitable for variational approaches that require complex entanglement patterns across qubits. This hardware advantage enables more flexible implementation of variational ansätze without the overhead of SWAP gates often needed in more limited connectivity architectures.
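The SWAP overhead mentioned above can be estimated with simple arithmetic. The sketch below assumes naive routing on a one-dimensional nearest-neighbour chain. One qubit is swapped next to its partner, the gate is applied, and the qubit is swapped back, with each SWAP compiled into three CNOTs. Real compilers route far more cleverly, so these figures are upper-bound illustrations rather than benchmarks.

```python
"""Two-qubit gate cost of an interaction list: linear chain vs all-to-all.

Assumptions for illustration: naive swap-in / swap-back routing on a 1-D
chain and 3 CNOTs per SWAP; all-to-all hardware needs no SWAPs.
"""


def cnot_cost(pairs, all_to_all):
    total = 0
    for a, b in pairs:
        gap = abs(a - b) - 1  # qubits standing between the pair on the chain
        swaps = 0 if all_to_all else 2 * gap  # move in, then move back
        total += 1 + 3 * swaps  # the gate itself plus SWAP decompositions
    return total


if __name__ == "__main__":
    ansatz = [(i, j) for i in range(5) for j in range(i + 1, 5)]  # all pairs
    print("chain:", cnot_cost(ansatz, all_to_all=False))
    print("all-to-all:", cnot_cost(ansatz, all_to_all=True))
```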

The noise characteristics of different hardware platforms significantly impact the performance of variational methods. Recent experiments have shown that variational approaches can be tailored to specific hardware noise profiles, potentially converting what would typically be considered detrimental noise into a beneficial feature for certain workloads. This hardware-aware optimization represents a promising direction for reducing QEC requirements.

Qubit count and quality metrics such as T1/T2 times, gate fidelities, and readout errors vary substantially across platforms, affecting the scalability of variational approaches. Current processors with tens to a few hundred qubits provide sufficient resources for proof-of-concept demonstrations. Scaling to practical advantage, however, will require continued hardware improvements or novel variational techniques designed specifically for limited resources.

Reconfigurable quantum processors, such as those employing programmable coupling between qubits, offer additional flexibility for implementing workload-specific variational circuits. This reconfigurability allows for dynamic adjustment of the quantum hardware to match the specific requirements of different variational approaches, potentially reducing the overhead associated with static architectures.

Control systems and classical processing capabilities also play a crucial role in the effective implementation of variational methods. The ability to perform rapid classical optimization loops, a key component of variational algorithms, depends on low-latency classical-quantum interfaces and efficient parameter updating mechanisms, which vary significantly across current hardware platforms.

Benchmarking Frameworks for QEC Performance

Benchmarking frameworks for Quantum Error Correction (QEC) performance have become essential tools for evaluating the effectiveness of variational approaches in reducing QEC overhead for specific workloads. These frameworks provide standardized methodologies to quantify improvements in resource requirements, error rates, and overall quantum computing efficiency.

The most widely adopted benchmarking frameworks include the Quantum Volume (QV) metric. QV is based on the largest square random circuit (equal width and depth) that a device can run while passing the heavy-output test, and it is reported as 2^n for the largest passing width n. When applied to QEC scenarios, QV helps assess how variational techniques affect the effective computational space available under error correction constraints. The Surface Code Distance Benchmark specifically evaluates how much variational approaches can reduce the required code distance while maintaining target logical error rates.
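A back-of-the-envelope version of the distance trade-off uses the commonly quoted surface-code scaling heuristic p_L ≈ A (p / p_th)^((d+1)/2). Here p is the physical error rate, p_th is the threshold, and d is the odd code distance. The constants A = 0.1 and p_th = 1e-2 in the sketch below are assumed ballpark values, not figures from a specific benchmark. The qubit count uses the rotated surface code layout of 2d^2 - 1 physical qubits per logical qubit.

```python
# Back-of-the-envelope surface-code sizing from the heuristic
# p_L ~ A * (p / p_th) ** ((d + 1) / 2). A and p_th are assumed ballpark values.


def logical_error_rate(p: float, d: int, p_th: float = 1e-2, prefactor: float = 0.1) -> float:
    """Heuristic logical error rate per round for physical error rate p and distance d."""
    return prefactor * (p / p_th) ** ((d + 1) / 2)


def required_distance(p: float, target: float, p_th: float = 1e-2,
                      prefactor: float = 0.1, d_max: int = 101) -> int:
    """Smallest odd distance d >= 3 whose heuristic logical error rate meets target."""
    if p >= p_th:
        raise ValueError("the heuristic only applies below threshold")
    for d in range(3, d_max + 1, 2):
        if logical_error_rate(p, d, p_th, prefactor) <= target:
            return d
    raise ValueError("target not reachable with d <= d_max")


def surface_code_qubits(d: int) -> int:
    """Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * d * d - 1


for p in (1e-3, 5e-4):  # e.g. before and after a hypothetical workload-specific tailoring
    d = required_distance(p, target=5e-12)
    print(f"p={p:g}: d={d}, physical qubits per logical qubit={surface_code_qubits(d)}")
```

Under these assumptions, halving the effective physical error rate from 1e-3 to 5e-4 lowers the required distance for a 5e-12 target from 21 to 15. That roughly halves the physical qubits per logical qubit (881 versus 449).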

Resource Estimation Frameworks represent another critical category, focusing on physical qubit counts, gate operations, and time requirements. These frameworks typically combine analytical cost models with Monte Carlo simulations of logical error rates to estimate the resource savings achieved through workload-specific optimizations. Notable examples include Google's Cirq Resource Estimator and IBM's QEC Resource Calculator, both of which have been extended to accommodate variational QEC techniques.
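The Monte Carlo side of resource estimation can be sketched with a distance-d repetition code under independent bit flips. The code samples error patterns, decodes them by majority vote, and compares the estimated logical error rate with the exact binomial expression. This minimal illustration assumes no measurement errors and no correlated noise, and it does not reproduce any named estimator. The physical_qubits_repetition helper is hypothetical and counts d data qubits plus d - 1 ancillas per logical qubit.

```python
import random
from math import comb


def mc_logical_error(d: int, p: float, trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate: sample iid bit flips on d data qubits and count
    how often majority-vote decoding returns the wrong logical value."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p for _ in range(d))
        if flips > d // 2:
            failures += 1
    return failures / trials


def analytic_logical_error(d: int, p: float) -> float:
    """Exact binomial probability that more than half the qubits flip."""
    return sum(comb(d, k) * p**k * (1 - p) ** (d - k) for k in range(d // 2 + 1, d + 1))


def physical_qubits_repetition(d: int, n_logical: int) -> int:
    """Hypothetical count: d data qubits plus d - 1 syndrome ancillas per logical qubit."""
    return n_logical * (2 * d - 1)


for d in (3, 5):
    est = mc_logical_error(d, p=0.05, trials=100_000, seed=1)
    print(f"d={d}: MC={est:.5f}, exact={analytic_logical_error(d, 0.05):.5f}, "
          f"qubits for 10 logical={physical_qubits_repetition(d, 10)}")
```

For this toy code the exact answer is available, so the Monte Carlo estimate can be checked directly. Sampling becomes the practical option for realistic codes and decoders that have no closed form.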

Error characterization frameworks such as Randomized Benchmarking and Quantum Process Tomography have been adapted to evaluate the performance of variational QEC approaches. These tools measure how effectively variational methods can tailor error correction strategies to the specific noise profiles encountered in targeted workloads, rather than applying generic error correction protocols.
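Randomized benchmarking data are usually summarized by fitting the average survival probability to F(m) = A p^m + B as a function of sequence length m. The decay parameter p is then converted into an error per Clifford, r = (1 - p)(2^n - 1)/2^n. The sketch below fixes B = 1/2, an assumption appropriate to an ideal single-qubit depolarizing model, so the fit reduces to a linear regression on log(F - B). The survival data are synthetic and are generated from the model itself.

```python
import numpy as np


def fit_rb(lengths, survival, b: float = 0.5) -> tuple[float, float]:
    """Fit F(m) = A * p**m + B with B held fixed, via a straight-line fit of
    log(F - B) against m. Returns (p, A). Requires every F > B, which noisy
    data close to the asymptote may violate."""
    m = np.asarray(lengths, dtype=float)
    y = np.log(np.asarray(survival, dtype=float) - b)
    slope, intercept = np.polyfit(m, y, 1)
    return float(np.exp(slope)), float(np.exp(intercept))


def error_per_clifford(p: float, n_qubits: int = 1) -> float:
    """Convert the decay parameter p into an average error per Clifford."""
    dim = 2**n_qubits
    return (1 - p) * (dim - 1) / dim


# Synthetic single-qubit survival data generated from the model (A = B = 0.5, p = 0.99).
lengths = [1, 5, 10, 20, 50, 100]
survival = [0.5 * 0.99**m + 0.5 for m in lengths]
p_fit, a_fit = fit_rb(lengths, survival)
print(f"p={p_fit:.4f}, A={a_fit:.4f}, r={error_per_clifford(p_fit):.5f}")
```

With real data, a nonlinear fit that also estimates B is more common. The fixed-B linearization is used here only to keep the sketch dependency-light.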

Application-specific benchmarks have emerged as particularly valuable for evaluating variational QEC techniques. These include the Quantum Chemistry Suite, which measures performance improvements in molecular simulations, and the Quantum Finance Package, which evaluates optimization algorithms. These domain-specific benchmarks provide realistic workload scenarios where the benefits of reduced QEC overhead can be quantified in practical terms.

Comparative analysis frameworks enable side-by-side evaluation of traditional and variational QEC approaches. These frameworks typically report metrics such as logical error rate reduction, physical qubit count savings, and circuit depth compression. The Quantum Error Correction Benchmarking Initiative (QECBI) has established standardized comparison methodologies that are gaining industry-wide adoption.
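The comparison metrics named above reduce to simple ratios. The sketch below computes them from two hypothetical runs. The QECRun fields, metric names, and numbers are illustrative assumptions, not a QECBI-defined schema.

```python
from dataclasses import dataclass


@dataclass
class QECRun:
    """Summary of one run; fields are illustrative, not a standard schema."""
    logical_error_rate: float
    physical_qubits: int
    circuit_depth: int


def compare(baseline: QECRun, variational: QECRun) -> dict[str, float]:
    """Side-by-side metrics of a variational run relative to a baseline run."""
    return {
        # Fractional reduction in logical error rate (0.5 means halved).
        "logical_error_reduction": 1 - variational.logical_error_rate / baseline.logical_error_rate,
        # Fraction of physical qubits saved.
        "qubit_savings": 1 - variational.physical_qubits / baseline.physical_qubits,
        # Depth ratio; values above 1 mean the variational circuit is shallower.
        "depth_compression": baseline.circuit_depth / variational.circuit_depth,
    }


# Hypothetical numbers chosen only to exercise the function.
baseline = QECRun(logical_error_rate=1e-6, physical_qubits=881, circuit_depth=400)
variational = QECRun(logical_error_rate=5e-7, physical_qubits=449, circuit_depth=320)
print(compare(baseline, variational))
```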

The development of these benchmarking frameworks represents a crucial advancement in the field, as they provide objective measures to validate claims about QEC overhead reduction. As variational approaches continue to evolve, these frameworks will play an increasingly important role in guiding research directions and practical implementations.
