Testing And Calibration Workflows For Error-Corrected Chips

SEP 2, 2025 · 9 MIN READ

Quantum Error Correction Background and Objectives

Quantum error correction (QEC) represents a critical frontier in quantum computing, addressing the fundamental challenge of quantum decoherence and gate errors. Since the inception of quantum computing theory in the 1980s, researchers have recognized that quantum systems are inherently fragile, with quantum states easily disturbed by environmental interactions. This vulnerability has evolved from a theoretical concern to the primary obstacle in scaling quantum computers beyond noisy intermediate-scale quantum (NISQ) devices.

The development of QEC has progressed through several significant phases. Initially, Peter Shor's 1995 nine-qubit code demonstrated the theoretical possibility of protecting quantum information. This was followed by the discovery of stabilizer codes and the surface code architecture, which is currently among the most promising approaches for practical error correction. The field has steadily advanced from theoretical constructs to experimental demonstrations on small systems, with recent milestones including Google's 2023 demonstration that a larger (distance-5) surface-code logical qubit achieved a lower logical error rate than a smaller (distance-3) one.

The primary objective of QEC research is to achieve fault-tolerant quantum computation, where logical operations can be performed on encoded quantum information with arbitrarily low error rates. This requires not only effective error correction codes but also precise calibration and testing methodologies to characterize and mitigate errors in physical quantum systems.

Testing and calibration workflows for error-corrected chips represent a crucial bridge between theoretical QEC schemes and their practical implementation. These workflows aim to systematically identify, characterize, and mitigate various error sources in quantum hardware, including decoherence, gate imperfections, crosstalk, and readout errors. The goal is to optimize the performance of physical qubits to meet the threshold requirements for effective error correction.
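The threshold requirement mentioned above can be made concrete with a widely used heuristic for surface codes, in which the logical error rate scales roughly as A * (p / p_th) ** ((d + 1) / 2) for physical error rate p, threshold p_th, and code distance d. The sketch below is illustrative only: the prefactor 0.1, the 1% threshold, and the function names are assumptions chosen for the example, not values taken from this report or from any specific device.

```python
def logical_error_rate(p_phys, distance, p_threshold=1e-2, prefactor=0.1):
    """Heuristic scaling p_L ~ A * (p / p_th) ** ((d + 1) / 2).

    The default threshold and prefactor are illustrative assumptions.
    """
    if distance < 1 or distance % 2 == 0:
        raise ValueError("distance must be a positive odd integer")
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) // 2)


def min_distance_for_target(p_phys, target, p_threshold=1e-2,
                            prefactor=0.1, max_distance=199):
    """Smallest odd distance whose heuristic logical error rate meets the target."""
    if p_phys >= p_threshold:
        # At or above threshold, increasing the distance does not help.
        return None
    for d in range(3, max_distance + 1, 2):
        if logical_error_rate(p_phys, d, p_threshold, prefactor) <= target:
            return d
    return None


# Compare the code distance needed at two physical error rates.
for p in (5e-3, 2e-3):
    print(p, min_distance_for_target(p, 1e-9))
```

Under this model, calibration work that lowers the physical error rate reduces the code distance, and therefore the qubit count, needed for a given logical error target.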

Current objectives in this domain include developing scalable calibration protocols that can efficiently handle increasing qubit counts, creating automated testing frameworks that can rapidly characterize quantum devices, and establishing standardized benchmarks for comparing error correction performance across different hardware platforms. Additionally, there is a growing focus on co-designing hardware and error correction codes to optimize overall system performance.

The technological trajectory suggests that successful implementation of error correction will likely proceed through several stages: demonstration of logical qubits with improved error rates, implementation of small fault-tolerant logical operations, and eventually scaling to fully error-corrected quantum processors capable of running quantum algorithms with practical advantage. This progression necessitates increasingly sophisticated testing and calibration methodologies tailored specifically to error-corrected architectures.

Market Analysis for Error-Corrected Quantum Processors

The quantum computing market is experiencing significant growth, with one projection putting the global market value at $1.3 billion by 2023 and a CAGR of 56.0% through 2029. Error-corrected quantum processors represent a critical advancement in this landscape, addressing the fundamental challenge of quantum decoherence that has limited practical applications.

The demand for error-corrected quantum processors is primarily driven by research institutions, government agencies, and large technology corporations investing in quantum computing infrastructure. Financial services, pharmaceuticals, materials science, and cryptography sectors show particular interest in error-corrected quantum systems due to their potential to solve complex computational problems beyond classical capabilities.

Current market analysis indicates that while fully error-corrected quantum processors remain predominantly in the research phase, there is substantial investment in developing testing and calibration workflows. Venture capital funding for quantum computing startups focusing on error correction technologies exceeded $1.7 billion in 2022, demonstrating strong market confidence in this technological direction.

The market segmentation for error-corrected quantum processors reveals distinct categories: research-grade systems for academic institutions, development platforms for corporate R&D departments, and emerging commercial solutions for specific computational problems. The testing and calibration workflows market represents approximately 15% of the overall quantum computing ecosystem, with specialized software tools and hardware diagnostics forming a rapidly growing subsector.

Geographic distribution of market demand shows North America leading with approximately 45% market share, followed by Europe (30%) and Asia-Pacific (20%). China's national quantum initiative and the European Quantum Flagship program are accelerating regional market development through substantial public funding.

Customer pain points identified through market research include the high technical expertise required for calibration, lengthy testing cycles impacting time-to-solution, and the lack of standardized benchmarking methodologies for error-corrected systems. These challenges represent significant market opportunities for companies developing automated testing solutions and user-friendly calibration interfaces.

The market for error-corrected quantum processors demonstrates low price elasticity in the current phase: customers are willing to pay premium prices for systems that demonstrate measurable improvements in error rates and coherence times. This suggests that effective testing and calibration workflows that can verify and optimize quantum error correction will command significant market value in the near term.

Current Testing Challenges and Technical Limitations

Testing error-corrected quantum chips presents unprecedented challenges that significantly exceed those encountered in classical computing. The quantum nature of these systems introduces a complex layer of testing requirements due to the inherent fragility of quantum states and the susceptibility to environmental noise. Current testing methodologies struggle to effectively characterize quantum error correction (QEC) capabilities while maintaining quantum coherence during the testing process itself.

One of the primary challenges is the development of comprehensive test vectors that can adequately probe the error correction mechanisms without introducing additional errors. Traditional testing approaches that work for classical error correction fail when applied to quantum systems due to the no-cloning theorem and measurement-induced state collapse. This fundamental limitation necessitates novel testing strategies that can verify error correction functionality without disrupting the very quantum properties being protected.
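The idea of checking error correction without reading out the protected state can be illustrated with a toy model of the three-qubit bit-flip repetition code. The sketch below is a classical simulation of bit-flip errors only, so it is a simplification rather than a quantum test procedure; the function names are hypothetical. It shows that the two parity checks locate a single flip while giving the same syndrome for both logical values.

```python
import random

# Syndrome (parity of bits 0,1; parity of bits 1,2) -> index of the flipped bit.
SYNDROME_TO_FLIP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def encode(bit):
    """Repeat the logical bit three times."""
    return [bit, bit, bit]


def measure_syndrome(codeword):
    """Parity checks only: they locate a flip but never expose the logical value."""
    return (codeword[0] ^ codeword[1], codeword[1] ^ codeword[2])


def correct(codeword):
    """Apply the single-bit correction indicated by the syndrome."""
    flip = SYNDROME_TO_FLIP[measure_syndrome(codeword)]
    fixed = list(codeword)
    if flip is not None:
        fixed[flip] ^= 1
    return fixed


def decode(codeword):
    """Majority vote over the three bits."""
    return int(sum(codeword) >= 2)


def logical_failure_rate(p_flip, trials=20000, seed=0):
    """Monte Carlo estimate of the failure rate after one correction round."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        bit = rng.randint(0, 1)
        noisy = [b ^ (rng.random() < p_flip) for b in encode(bit)]
        failures += decode(correct(noisy)) != bit
    return failures / trials
```

A test harness built this way can compare the estimated logical failure rate against the injected physical flip probability, which is the kind of check that does not depend on copying or directly measuring the encoded state.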

Calibration workflows face significant technical limitations related to the dynamic nature of quantum systems. Quantum chips require frequent recalibration due to drift in qubit parameters, crosstalk effects, and fluctuations in control systems. Current calibration procedures are often time-consuming, requiring hours or even days to complete, which significantly impacts the practical utility of these systems. The calibration process itself can introduce errors, creating a paradoxical situation where the act of preparing the system for error correction may degrade its performance.
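One way to shorten calibration cycles is to recalibrate only the qubits whose parameters have drifted out of tolerance, rather than the whole chip. The following is a minimal sketch under stated assumptions: the `QubitCalibration` record, the 0.1 MHz drift tolerance, and the 24-hour age limit are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class QubitCalibration:
    qubit: int
    calibrated_freq_ghz: float      # frequency recorded at the last calibration
    measured_freq_ghz: float        # frequency from the latest quick check
    hours_since_calibration: float


def needs_recalibration(cal, max_drift_mhz=0.1, max_age_hours=24.0):
    """Flag a qubit if it drifted too far or its calibration is too old."""
    drift_mhz = abs(cal.measured_freq_ghz - cal.calibrated_freq_ghz) * 1e3
    return drift_mhz > max_drift_mhz or cal.hours_since_calibration > max_age_hours


def recalibration_queue(calibrations, **limits):
    """Return ids of qubits needing recalibration, largest drift first."""
    stale = [c for c in calibrations if needs_recalibration(c, **limits)]
    stale.sort(key=lambda c: abs(c.measured_freq_ghz - c.calibrated_freq_ghz),
               reverse=True)
    return [c.qubit for c in stale]


records = [
    QubitCalibration(0, 5.0000, 5.00002, 2.0),   # small drift, recent
    QubitCalibration(1, 5.1000, 5.1005, 2.0),    # large drift
    QubitCalibration(2, 4.9000, 4.9000, 30.0),   # no drift, but stale
]
print(recalibration_queue(records))
```

Ordering the queue by drift size is a design choice for the example; a production scheduler would likely also weigh each qubit's role in the code and crosstalk with its neighbours.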

The scalability of testing protocols represents another major hurdle. As quantum processors grow in size and complexity, the number of potential error channels increases exponentially. Current testing methodologies do not scale efficiently with system size, creating bottlenecks in the development pipeline. This limitation is particularly problematic for error-corrected chips, which require more qubits and more complex interconnections than their non-error-corrected counterparts.
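The exponential growth can be quantified by counting Pauli errors: n qubits admit 4^n - 1 non-identity Pauli operators, of which C(n, w) * 3^w act on exactly w qubits. The short sketch below (function names are illustrative) shows why practical characterization usually restricts itself to low-weight errors.

```python
from math import comb


def total_pauli_errors(n_qubits):
    """Number of non-identity Pauli operators on n qubits."""
    return 4 ** n_qubits - 1


def pauli_errors_of_weight(n_qubits, weight):
    """Number of Pauli operators acting non-trivially on exactly `weight` qubits."""
    return comb(n_qubits, weight) * 3 ** weight


def pauli_errors_up_to_weight(n_qubits, max_weight):
    """Number of Pauli operators with weight between 1 and max_weight."""
    return sum(pauli_errors_of_weight(n_qubits, w)
               for w in range(1, max_weight + 1))


# Compare the full error space with the weight-1 and weight-2 subset.
for n in (5, 17, 49):
    print(n, total_pauli_errors(n), pauli_errors_up_to_weight(n, 2))
```

The restricted count grows only quadratically in the number of qubits, which is one reason scalable protocols target local error models instead of full process characterization.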

Diagnostic capabilities present additional challenges. When tests reveal performance issues, pinpointing the exact source of errors remains difficult. The interdependence of various subsystems in quantum processors means that errors can propagate in non-intuitive ways, making root cause analysis exceptionally challenging. Current diagnostic tools lack the resolution and specificity needed to efficiently troubleshoot complex error correction implementations.

The integration of testing into the manufacturing workflow presents practical limitations as well. Unlike classical chips, which can be fully tested post-fabrication, quantum chips require continuous testing throughout their operational lifetime. This necessitates built-in test capabilities that can function without disrupting normal operation, a feature that current architectures struggle to implement effectively.

Existing Testing Workflows for Error-Corrected Chips

01 Error detection and correction in semiconductor chips

Various methods and systems for detecting and correcting errors in semiconductor chips during testing. These include built-in self-test (BIST) circuits, error correction code (ECC) implementations, and redundancy techniques to identify and address manufacturing defects or operational failures. These approaches help improve chip reliability by automatically detecting errors and either correcting them or flagging the chip for further analysis.

- Error detection and correction in semiconductor chips: Various methods and systems for detecting and correcting errors in semiconductor chips during testing and operation. These include built-in self-test (BIST) circuits, error correction code (ECC) implementations, and redundancy techniques that identify and address defects or failures in memory cells and logic circuits. These error correction mechanisms improve chip reliability by automatically detecting faults and either correcting them or routing around damaged components.

- Automated testing workflows for semiconductor devices: Automated testing workflows that streamline the process of identifying and calibrating error-prone chips. These workflows include sequential testing procedures, parallel testing architectures, and adaptive testing methodologies that adjust based on initial test results. The automation reduces human intervention, increases throughput, and ensures consistent quality by applying standardized testing protocols across multiple devices simultaneously.

- Calibration techniques for error-corrected chips: Specialized calibration techniques that optimize the performance of error-corrected semiconductor chips. These include parameter adjustment methods, reference voltage calibration, timing calibration, and temperature compensation techniques. The calibration processes ensure that chips operate within specified tolerances even after error correction mechanisms have been applied, maintaining signal integrity and functional accuracy across varying operating conditions.

- Probe and contact systems for chip testing: Advanced probe and contact systems designed specifically for testing error-corrected chips. These include specialized probe cards, micro-electromechanical systems (MEMS) based contacts, and high-density interconnect solutions that enable precise electrical connections to test points on the chip. These systems ensure accurate signal delivery and measurement during testing and calibration workflows, even for chips with built-in error correction capabilities.

- Integration of testing and calibration in manufacturing processes: Methods for integrating testing and calibration workflows directly into semiconductor manufacturing processes. These approaches include in-line testing stations, real-time calibration adjustments, and feedback loops that modify manufacturing parameters based on test results. By embedding these workflows within the production line, manufacturers can identify and address potential errors earlier, reducing waste and improving overall yield of error-corrected chips.

02 Automated testing workflows for semiconductor devices

Automated testing workflows that streamline the process of testing semiconductor devices. These workflows include automated handling systems, test sequence optimization, parallel testing capabilities, and integration with manufacturing execution systems. By automating the testing process, manufacturers can increase throughput, reduce human error, and collect comprehensive data for quality control and process improvement.

03 Calibration techniques for error-corrected chips

Specialized calibration techniques designed for error-corrected semiconductor chips. These techniques include parameter adjustment, reference signal calibration, temperature compensation, and adaptive calibration algorithms. Proper calibration ensures that error correction mechanisms function optimally across various operating conditions, improving overall chip performance and reliability.

04 Probe and test equipment for chip validation

Specialized probe and test equipment used for validating error-corrected chips. This includes wafer probers, automated test equipment (ATE), specialized test fixtures, and probe cards designed for high-accuracy measurements. These tools enable precise electrical characterization of chips, allowing for accurate detection of defects and verification of error correction functionality.

05 Data analysis and reporting systems for chip testing

Advanced data analysis and reporting systems that process test results from error-corrected chips. These systems include statistical analysis tools, failure mode identification algorithms, yield management software, and visualization tools for test data. By effectively analyzing test data, manufacturers can identify patterns in chip failures, optimize error correction mechanisms, and improve overall manufacturing processes.

Leading Companies in Quantum Error Correction

The market for testing and calibration workflows for error-corrected chips is currently in a growth phase, with demand driven by quantum computing advancements and high-performance computing needs. The market is expanding as more industries adopt error-correction technologies to enhance chip reliability and performance. Leading players like IBM, GLOBALFOUNDRIES, and Samsung Electronics have established mature testing frameworks, while companies such as Synopsys and Xilinx are developing specialized tools for error detection and correction. Google and MIT are advancing research in quantum error correction, while semiconductor manufacturers including SK hynix and Infineon Technologies are integrating error-correction capabilities into their production processes. The competitive landscape shows a mix of established technology giants and specialized semiconductor firms working to address the growing complexity of chip testing and calibration requirements.

International Business Machines Corp.

Technical Solution: IBM has developed comprehensive testing and calibration workflows for error-corrected quantum chips through their Qiskit framework. Their approach integrates error mitigation techniques with dynamic circuit compilation to address both coherent and incoherent errors. IBM's workflow includes characterization protocols that identify error sources, followed by calibration procedures that optimize gate parameters to minimize these errors. Their system employs randomized benchmarking and quantum process tomography to validate error correction performance. IBM's recent advancements include the implementation of mid-circuit measurements for active error correction and the development of scalable calibration techniques that can handle increasing qubit counts while maintaining fidelity thresholds required for fault-tolerant quantum computing.
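Randomized benchmarking, mentioned above as a validation step, typically fits the measured survival probability to the decay model F(m) = A * p^m + B over sequence length m and converts the decay parameter p into an average error per Clifford, r = (d - 1) / d * (1 - p) with d = 2^n. The sketch below uses only the Python standard library and is not IBM's or Qiskit's implementation. It assumes the baseline B is fixed at 1/d, which turns the fit into a linear regression on log(F - B); the function names are hypothetical.

```python
import math


def fit_rb_decay(lengths, survival, n_qubits=1):
    """Fit F(m) = A * p**m + B with B fixed at 1 / 2**n; return (A, p)."""
    baseline = 1.0 / 2 ** n_qubits
    xs, ys = [], []
    for m, f in zip(lengths, survival):
        if f > baseline:  # the logarithm is only defined above the baseline
            xs.append(m)
            ys.append(math.log(f - baseline))
    if len(xs) < 2:
        raise ValueError("need at least two points above the baseline")
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return math.exp(intercept), math.exp(slope)


def error_per_clifford(p, n_qubits=1):
    """Average error per Clifford implied by the decay parameter p."""
    dim = 2 ** n_qubits
    return (dim - 1) / dim * (1.0 - p)


# Synthetic, noise-free data generated from the model itself (not measured data).
lengths = [1, 10, 50, 100, 200]
survival = [0.5 * 0.995 ** m + 0.5 for m in lengths]
amplitude, decay = fit_rb_decay(lengths, survival)
print(amplitude, decay, error_per_clifford(decay))
```

With real data the baseline is usually fitted as a free parameter using a nonlinear least-squares routine, since state preparation and measurement errors shift it away from 1/d.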

Strengths: Comprehensive end-to-end solution that integrates with their quantum hardware ecosystem; extensive experience with superconducting qubit technology; advanced automation capabilities for calibration workflows. Weaknesses: Solutions are primarily optimized for IBM's own quantum architecture; resource-intensive calibration procedures that may require significant classical computing resources.

Infineon Technologies AG

Technical Solution: Infineon has established robust testing and calibration workflows for error-corrected chips, particularly focusing on automotive and security applications where error resilience is critical. Their approach combines hardware-based built-in self-test (BIST) mechanisms with software-based error detection and correction algorithms. Infineon's workflow implements a multi-level testing strategy that addresses errors at various abstraction levels, from transistor-level defects to system-level functional errors. Their methodology incorporates adaptive voltage scaling techniques that dynamically adjust operating parameters to minimize error rates while optimizing power consumption. Infineon's recent advancements include the development of specialized test structures for monitoring aging effects and environmental stressors that contribute to error rates over time, enabling predictive maintenance and calibration scheduling to maintain error correction efficiency throughout the chip's operational lifetime.

Strengths: Exceptional expertise in automotive and security applications where error correction is mission-critical; integrated approach combining hardware and software solutions; strong focus on reliability under extreme conditions. Weaknesses: Solutions may be overly specialized for certain vertical markets; calibration workflows can be complex to implement in mixed-signal environments.

Key Technologies in Quantum Calibration Systems

Chip and testing method thereof

Patent (Active): US20210096180A1

Innovation

- Integration of encoding and decoding circuits directly within the chip for self-testing capabilities, eliminating the need for expensive automatic testing machines.

- Implementation of a testing method that can determine scan chain errors through analysis of scan output data, enabling more efficient troubleshooting of chip failures.

- Design approach that allows for distinguishing between digital logic defects and scan mode entry failures, which was difficult to identify in conventional testing methods.

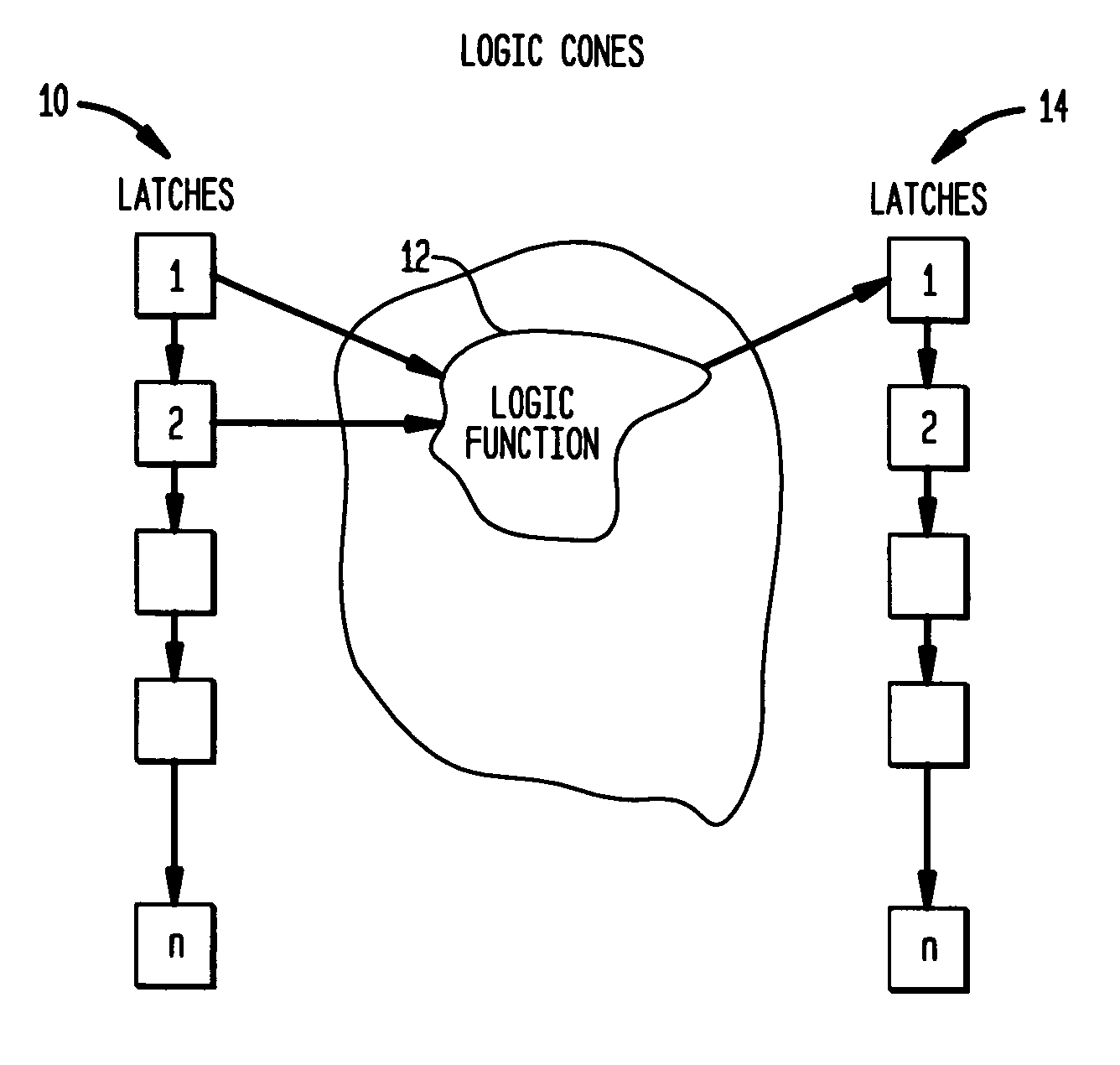

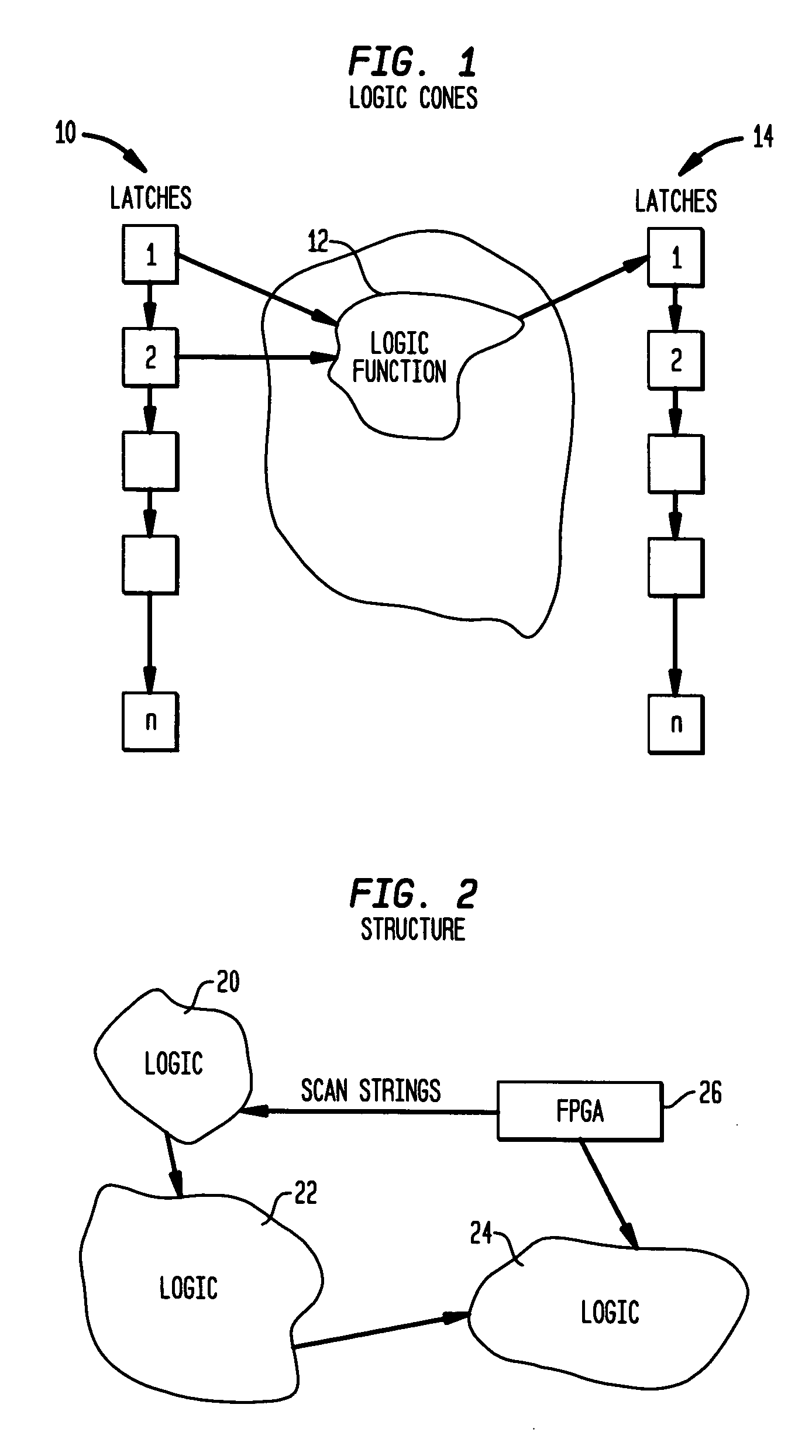

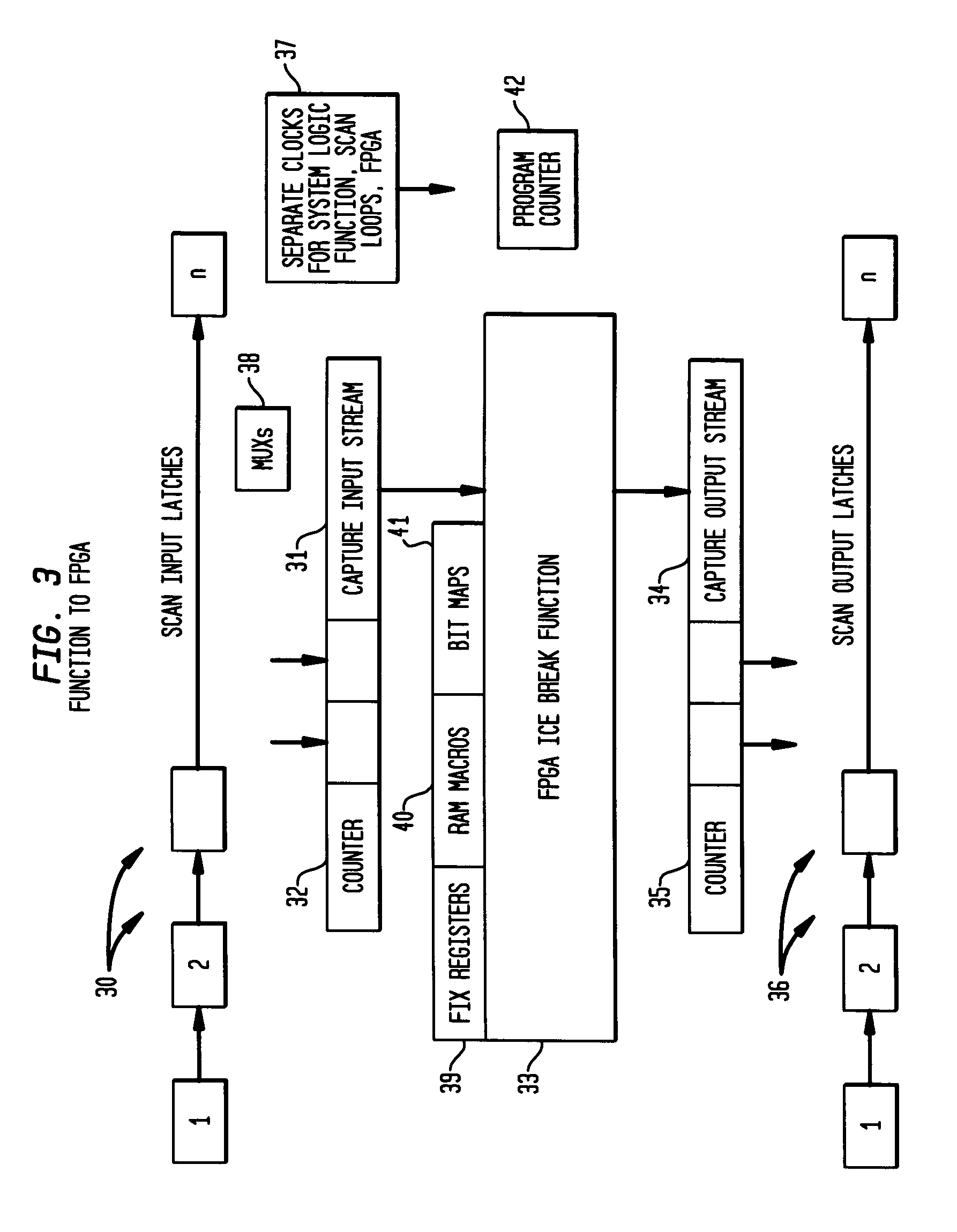

A system and method of providing error detection and correction capability in an integrated circuit using redundant logic cells of an embedded FPGA

Patent (Active): US20050278588A1

Innovation

- The implementation of embedded field programmable gate array (FPGA) structures within the IC allows for routing defective logic function inputs to FPGA macros for correction, enabling internal logic error correction without external scanning, and evaluating error recovery by injecting data errors through FPGA structures.

Standardization Efforts in Quantum Chip Testing

The quantum computing industry is witnessing significant efforts toward standardization in testing and calibration methodologies for error-corrected quantum chips. Organizations such as IEEE, ISO, and the Quantum Economic Development Consortium (QED-C) are leading initiatives to establish common frameworks for evaluating quantum processor performance and reliability.

IEEE's P7131 working group specifically focuses on quantum computing performance metrics and benchmarks, aiming to create standardized methods for assessing error rates, coherence times, and gate fidelities across different quantum computing platforms. This standardization effort is crucial for enabling meaningful comparisons between quantum processors from different manufacturers.

Similarly, the National Institute of Standards and Technology (NIST) has established the Quantum Economic Development Consortium, which includes working groups dedicated to developing testing protocols and calibration procedures for quantum chips. These protocols address various aspects of quantum chip performance, including error correction capabilities, qubit connectivity, and system stability under different operating conditions.

The European Telecommunications Standards Institute (ETSI) has formed the Quantum Computing Technical Committee, which is working on standardizing testing methodologies specifically for error-corrected quantum processors. Their focus includes standardized workflows for characterizing logical qubits and measuring the effectiveness of error correction codes in practical implementations.

Industry consortia like OpenSuperQ and the Quantum Industry Consortium (QuIC) are complementing these formal standardization bodies by developing open-source testing suites and calibration tools that implement emerging standards. These tools enable researchers and manufacturers to adopt standardized testing approaches early in the development cycle.

Key areas of standardization include test pattern generation for quantum circuits, statistical methods for error characterization, calibration sequence definitions, and reporting formats for quantum processor benchmarking results. The development of standard reference materials and calibration artifacts for quantum systems is also underway, similar to those used in conventional semiconductor testing.

Cross-platform validation methodologies are being established to ensure that error correction techniques can be fairly evaluated across different qubit technologies, including superconducting, ion trap, and photonic systems. These standardization efforts are essential for the maturation of quantum computing technology and will facilitate more reliable comparison of quantum processors as they advance toward fault-tolerant operation.

Scalability Considerations for Industrial Implementation

As error-corrected chip technologies advance from laboratory settings to industrial production environments, scalability becomes a critical factor determining commercial viability. The implementation of testing and calibration workflows must evolve to accommodate high-volume manufacturing while maintaining precision and reliability. Current industrial implementations face significant challenges when scaling from dozens to thousands or millions of chips.

Production throughput is the primary scalability concern, because testing and calibration often become the bottleneck in a manufacturing pipeline. Sequential testing becomes prohibitively slow at scale, which makes parallel testing architectures necessary. Data from industry leaders indicates that parallelization can improve throughput by 15 to 20 times, though this requires substantial capital investment in specialized equipment.

Test data management systems must scale proportionally with production volume, handling petabytes of calibration and error correction data. Cloud-based solutions have emerged as the preferred approach, offering elastic storage capabilities and distributed processing power. However, these systems must implement robust security protocols to protect proprietary calibration algorithms and chip-specific correction parameters.

Automation is another critical dimension of scalability. Manual intervention in calibration workflows is unsustainable at industrial scale, which is driving the development of self-calibrating systems and machine learning algorithms that adaptively optimize testing parameters. These automated systems reduce human error and lower per-unit testing costs, with recent implementations demonstrating cost reductions of 30-45% at scale.
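A minimal sketch of the self-calibration idea follows: a control parameter is re-tuned only when the measured error drifts above a threshold, using a golden-section search in place of a manual sweep. The `measure_error` callback, the threshold, and the search span are hypothetical; the sketch also assumes the error is unimodal in the parameter and that measurement noise is small relative to the tolerance, which real hardware may not satisfy.

```python
from typing import Callable


def recalibrate(measure_error: Callable[[float], float],
                lo: float, hi: float, tol: float = 1e-4) -> float:
    """Golden-section search for the parameter value minimizing measure_error on [lo, hi]."""
    inv_phi = (5 ** 0.5 - 1) / 2  # about 0.618
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    fc, fd = measure_error(c), measure_error(d)
    while b - a > tol:
        if fc < fd:
            # Minimum lies in [a, d]; reuse c as the new upper probe.
            b, d, fd = d, c, fc
            c = b - inv_phi * (b - a)
            fc = measure_error(c)
        else:
            # Minimum lies in [c, b]; reuse d as the new lower probe.
            a, c, fc = c, d, fd
            d = a + inv_phi * (b - a)
            fd = measure_error(d)
    return (a + b) / 2


def maintain(param: float, measure_error: Callable[[float], float],
             threshold: float, span: float) -> float:
    """Keep the current setting if its error is acceptable, otherwise re-tune around it."""
    if measure_error(param) <= threshold:
        return param
    return recalibrate(measure_error, param - span, param + span)
```

Checking the threshold first keeps the common case cheap, since a single measurement suffices when the device has not drifted. Each search iteration costs one new measurement, so the tolerance directly sets the calibration time per parameter.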

Supply chain integration presents additional scalability challenges, particularly regarding the sourcing of specialized testing equipment and calibration reference standards. Manufacturers must develop redundant supply networks to mitigate disruption risks, especially for components with limited supplier options. This often necessitates strategic partnerships with testing equipment vendors to ensure capacity alignment with production forecasts.

Workforce considerations cannot be overlooked when scaling testing operations. The technical complexity of error-corrected chip calibration requires specialized expertise, creating potential staffing bottlenecks. Leading manufacturers have addressed this through comprehensive training programs and the development of intuitive testing interfaces that reduce operator skill requirements while maintaining calibration quality.

Regulatory compliance adds another layer of complexity to scalability planning, particularly for chips destined for safety-critical applications. Testing workflows must maintain compliance documentation at scale, often requiring automated validation systems that can generate and archive certification evidence for each manufactured unit.
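One simple building block for such automated evidence archiving is a per-unit record that carries a cryptographic digest of its own contents, so later tampering or corruption can be detected. The sketch below uses only the Python standard library. The record layout, field names, and function names are hypothetical and do not correspond to any particular regulatory scheme.

```python
import hashlib
import json


def _canonical(payload: dict) -> bytes:
    """Serialize deterministically so the same payload always hashes the same way."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def make_evidence_record(unit_id: str, test_results: dict, recorded_at: str) -> dict:
    """Bundle one unit's test results with a SHA-256 digest of the payload."""
    payload = {
        "unit_id": unit_id,          # hypothetical field names
        "recorded_at": recorded_at,  # timestamp supplied by the caller
        "test_results": test_results,
    }
    return {"payload": payload, "sha256": hashlib.sha256(_canonical(payload)).hexdigest()}


def verify_evidence_record(record: dict) -> bool:
    """Recompute the digest and compare it with the stored one."""
    return hashlib.sha256(_canonical(record["payload"])).hexdigest() == record["sha256"]
```

A digest alone only detects changes; it does not prove who created the record. A production system would likely add digital signatures and write-once storage on top of this, depending on the applicable requirements.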
