In-Memory Computing Accelerators For Real-Time Video Analytics

SEP 2, 2025 · 9 MIN READ

In-Memory Computing Evolution and Objectives

In-memory computing (IMC) represents a paradigm shift in computing architecture that has evolved significantly over the past decade. Traditional von Neumann architectures, characterized by separate processing and memory units, face inherent bottlenecks when handling data-intensive applications like real-time video analytics. The evolution of IMC began with simple on-chip cache memories and has progressed toward sophisticated architectures that integrate computation directly within memory structures.

The development trajectory of IMC has been accelerated by the exponential growth in video data generation and the increasing demand for real-time analytics. Early implementations focused primarily on reducing data movement between memory and processing units. However, contemporary IMC solutions have evolved to perform complex computations directly within memory arrays, eliminating the need for extensive data transfers.

For real-time video analytics specifically, IMC technologies have progressed from basic frame buffering to advanced in-situ processing capabilities. This evolution has been driven by the need to process high-resolution video streams with minimal latency, particularly for applications such as autonomous vehicles, surveillance systems, and augmented reality platforms that require instantaneous decision-making.

The primary objective of IMC accelerators for real-time video analytics is to minimize the memory wall bottleneck while maintaining energy efficiency. By performing computations where data resides, these accelerators aim to reduce power consumption by up to 90% compared to conventional GPU-based solutions, while simultaneously decreasing processing latency by orders of magnitude.

Another critical objective is scalability across diverse video analytics workloads. Modern IMC accelerators strive to support various computational patterns required for different video processing tasks, from pixel-level operations to complex neural network inferences, without sacrificing performance or efficiency.

The technological roadmap for IMC in video analytics is increasingly focused on heterogeneous integration, combining different memory technologies such as SRAM, DRAM, and emerging non-volatile memories to optimize for both performance and energy efficiency. Recent research indicates a trend toward 3D-stacked architectures that further reduce interconnect distances and increase bandwidth.

As the field advances, the ultimate objective is to develop IMC accelerators capable of supporting end-to-end video analytics pipelines on a single chip, from data acquisition to decision-making, with near-zero latency and minimal energy consumption, thereby enabling truly ubiquitous intelligent video processing systems.

Market Demand for Real-Time Video Analytics

The global market for real-time video analytics is experiencing unprecedented growth, driven by increasing demand across multiple sectors including security and surveillance, retail, transportation, healthcare, and smart cities. According to recent market research, the video analytics market is projected to reach $20.8 billion by 2027, with a compound annual growth rate (CAGR) of 22.7% from 2020 to 2027.

Security and surveillance remains the dominant application sector, accounting for approximately 35% of the total market share. The increasing need for advanced threat detection, facial recognition, and anomaly detection in public spaces, government facilities, and commercial buildings is fueling this growth. Major cities worldwide are deploying extensive camera networks integrated with real-time analytics capabilities to enhance public safety and emergency response.

Retail analytics represents another rapidly expanding segment, with retailers using video analytics for customer behavior analysis, queue management, and inventory optimization. The ability to process and analyze video data in real time provides insight into shopping patterns, enabling personalized marketing strategies and improved operational efficiency.

Transportation and traffic management systems are increasingly adopting real-time video analytics for vehicle counting, license plate recognition, traffic flow optimization, and accident detection. This sector is expected to grow at a CAGR of 25.3% through 2027, driven by smart city initiatives and the need for more efficient urban mobility solutions.

The technical requirements for these applications are becoming increasingly demanding. End-users require systems capable of processing high-definition video streams with minimal latency, often under 50 milliseconds for critical applications. Additionally, there is growing demand for edge computing solutions that can perform complex analytics tasks directly on cameras or nearby edge devices, reducing bandwidth requirements and addressing privacy concerns.
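To make the 50-millisecond figure concrete, the following is a minimal sketch of an end-to-end latency budget check. The stage names and per-stage latencies are hypothetical values chosen for illustration, not measurements from any system described here.

```python
def frame_interval_ms(fps: float) -> float:
    """Time between consecutive frames, in milliseconds."""
    return 1000.0 / fps


def meets_budget(stage_latencies_ms: dict, budget_ms: float = 50.0) -> bool:
    """True if the summed per-stage latency fits within the budget."""
    return sum(stage_latencies_ms.values()) <= budget_ms


# Hypothetical per-stage latencies for one frame (assumed, not measured).
stages = {"capture": 8.0, "decode": 6.0, "inference": 22.0, "postprocess": 5.0}

total_ms = sum(stages.values())
print(f"end-to-end latency: {total_ms:.1f} ms")
print(f"within 50 ms budget: {meets_budget(stages)}")
print(f"frame interval at 30 fps: {frame_interval_ms(30):.1f} ms")
```

Note that the latency budget and the frame interval are separate constraints: a pipelined system can sustain 30 frames per second while each individual frame still takes longer than one frame interval to traverse all stages.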

Energy efficiency has emerged as a critical market requirement, particularly for battery-powered devices and large-scale deployments. Current solutions often consume between 5 and 15 watts per camera stream for advanced analytics, creating significant operational costs for large installations.
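A rough sketch of how the per-stream power figure scales to a large installation is shown below. The camera count and the electricity price are assumptions picked for illustration; only the 5 to 15 watt range comes from the text above.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_kwh(watts_per_stream: float, num_streams: int) -> float:
    """Energy used in one year of continuous operation, in kilowatt-hours."""
    return watts_per_stream * num_streams * HOURS_PER_YEAR / 1000.0


# Assumptions for illustration only.
NUM_CAMERAS = 1000          # hypothetical installation size
PRICE_PER_KWH = 0.15        # hypothetical electricity price, in currency units

for watts in (5, 15):
    kwh = annual_energy_kwh(watts, NUM_CAMERAS)
    cost = kwh * PRICE_PER_KWH
    print(f"{watts} W per stream: {kwh:,.0f} kWh per year, cost about {cost:,.0f}")
```

The estimate covers analytics power only and ignores cooling, networking, and storage, which would add to the total.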

The COVID-19 pandemic has accelerated market demand for contactless technologies, including occupancy monitoring, social distancing enforcement, and mask detection systems. This has created new market opportunities while simultaneously pushing the technical boundaries of existing solutions.

Despite the strong market growth, several challenges remain, including concerns about privacy, data security, and the need for standardization across platforms. Organizations are increasingly seeking solutions that balance powerful analytics capabilities with robust privacy protections and compliance with regional regulations such as GDPR in Europe and CCPA in California.

Technical Challenges in Video Processing Acceleration

Real-time video analytics faces significant technical challenges that must be addressed to enable efficient in-memory computing acceleration. The exponential growth in video data volume presents a fundamental bottleneck, with high-definition and 4K video streams generating terabytes of data daily. This massive data throughput creates memory bandwidth constraints that traditional computing architectures struggle to handle efficiently.
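The scale of the data volume can be illustrated with a back-of-the-envelope calculation for a single uncompressed stream. The resolution (3840x2160), pixel format (3 bytes per pixel, 8-bit RGB), and frame rate (30 fps) are assumptions; compressed streams are far smaller, but analytics pipelines typically operate on decoded frames.

```python
def raw_bytes_per_second(width: int, height: int, bytes_per_pixel: int, fps: int) -> int:
    """Uncompressed video data rate in bytes per second."""
    return width * height * bytes_per_pixel * fps


def raw_terabytes_per_day(width: int, height: int, bytes_per_pixel: int, fps: int) -> float:
    """Uncompressed video volume per day in decimal terabytes (1 TB = 1e12 bytes)."""
    seconds_per_day = 24 * 60 * 60
    return raw_bytes_per_second(width, height, bytes_per_pixel, fps) * seconds_per_day / 1e12


# Assumed stream parameters: 4K UHD, 8-bit RGB, 30 frames per second.
rate = raw_bytes_per_second(3840, 2160, 3, 30)
daily = raw_terabytes_per_day(3840, 2160, 3, 30)
print(f"raw data rate: {rate / 1e6:.1f} MB/s")
print(f"raw volume per day: {daily:.1f} TB")
```

Under these assumptions one decoded 4K stream already moves several hundred megabytes per second through memory, before any intermediate feature maps are counted.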

Memory wall issues represent a critical challenge, as the disparity between processing speeds and memory access times continues to widen. In video analytics applications, this creates substantial latency that undermines real-time performance requirements. The frequent data movement between memory and processing units consumes significant power and introduces delays that are particularly problematic for time-sensitive applications like surveillance or autonomous driving.

Power consumption emerges as another major constraint, especially for edge devices with limited battery capacity. Video processing is computationally intensive, requiring substantial energy resources that can quickly deplete available power. This challenge is compounded by thermal management issues that arise from continuous high-performance computing operations necessary for real-time analytics.

Algorithm complexity presents additional hurdles, as advanced video analytics employs sophisticated computer vision and machine learning techniques that demand substantial computational resources. These algorithms often require parallel processing capabilities that exceed what conventional architectures can provide efficiently. The need to balance accuracy with processing speed creates a difficult optimization problem.

Hardware-software co-design challenges are particularly evident in this domain. Existing software frameworks are often not optimized for in-memory computing paradigms, creating inefficiencies in resource utilization. The lack of standardized programming models for in-memory computing accelerators further complicates development efforts and limits widespread adoption.

Data precision requirements introduce another layer of complexity. While reduced precision can accelerate computations, it may compromise the accuracy of video analytics results. Finding the optimal balance between computational efficiency and analytical precision remains an ongoing challenge that varies across different application contexts.
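The precision trade-off can be sketched with symmetric 8-bit linear quantization, one common reduced-precision scheme. This is an illustrative example with made-up weight values, not the method of any specific accelerator; storing values in 8 bits instead of 32 cuts storage by 75%, at the cost of a bounded rounding error.

```python
def quantize_int8(values):
    """Symmetric linear quantization: map floats to integer codes in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from integer codes."""
    return [c * scale for c in codes]


# Hypothetical weight values for illustration.
weights = [0.82, -0.31, 0.05, -1.27, 0.64]

codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, worst-case rounding error: {max_error:.5f}")
```

The rounding error per value is at most half the scale step, so the acceptable bit width depends on how sensitive a given analytics task is to that error.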

Scalability concerns also emerge as systems must handle varying workloads and adapt to different deployment scenarios, from edge devices to data centers. Creating flexible architectures that maintain performance across these diverse environments requires sophisticated design approaches that can accommodate changing requirements and constraints.

Current IMC Solutions for Video Processing

01 In-Memory Computing Architectures for Real-Time Processing

In-memory computing architectures enable real-time data processing by eliminating the bottleneck between memory and processing units. These architectures integrate computational capabilities directly into memory devices, allowing for parallel processing of data without the need for data movement between separate memory and processing components. This approach significantly reduces latency and power consumption while increasing throughput for real-time applications such as data analytics, artificial intelligence, and edge computing.

- In-Memory Computing Architecture for Real-Time Processing: In-memory computing architectures enable real-time data processing by eliminating the bottleneck between memory and processing units. These architectures integrate computation directly within memory arrays, significantly reducing data movement and enabling parallel processing. This approach allows for faster execution of complex algorithms and real-time analytics, making it particularly valuable for applications requiring immediate responses to data inputs.

- Hardware Accelerators for In-Memory Computing: Specialized hardware accelerators enhance in-memory computing performance for real-time applications. These accelerators include custom ASICs, FPGAs, and neuromorphic chips designed to optimize specific computational tasks within the memory substrate. By implementing computational functions directly in hardware rather than software, these accelerators achieve significant performance improvements and energy efficiency for real-time data processing workloads.

- Memory-Centric Computing for Real-Time Systems: Memory-centric computing approaches prioritize memory architecture in system design to support real-time processing requirements. These systems utilize advanced memory technologies such as DRAM, SRAM, and emerging non-volatile memories to create computing platforms where memory serves as both storage and computational substrate. This paradigm enables ultra-low latency processing essential for time-critical applications while reducing energy consumption compared to traditional computing architectures.

- Software Frameworks for In-Memory Real-Time Computing: Specialized software frameworks and programming models support in-memory computing for real-time applications. These frameworks provide abstractions that simplify the development of applications leveraging in-memory computing capabilities while maintaining real-time performance guarantees. They include runtime systems, APIs, and programming languages designed specifically for memory-centric computing paradigms, enabling developers to efficiently utilize the underlying hardware accelerators.

- In-Memory Computing for Real-Time Data Analytics: In-memory computing accelerators enable real-time data analytics by processing large datasets directly in memory. These systems support complex analytical operations such as pattern recognition, anomaly detection, and predictive modeling with minimal latency. By eliminating data movement between storage and processing units, in-memory analytics accelerators can process streaming data in real-time, making them ideal for applications requiring immediate insights from continuously generated data.

02 Hardware Accelerators for In-Memory Computing

Specialized hardware accelerators designed for in-memory computing enhance real-time processing capabilities. These accelerators include custom ASICs, FPGAs, and neuromorphic chips that are optimized for specific computational tasks. By implementing computational functions directly in hardware and placing them closer to memory, these accelerators achieve significant performance improvements for real-time applications requiring low latency and high throughput, such as neural network inference, database operations, and signal processing.

03 Memory-Centric Computing for Real-Time Systems

Memory-centric computing approaches prioritize memory architecture in system design to support real-time processing requirements. These systems organize computational resources around memory structures rather than traditional processor-centric designs. By minimizing data movement and optimizing memory access patterns, memory-centric computing enables more efficient execution of real-time workloads. This approach includes techniques such as near-data processing, compute-in-memory, and memory-driven computing paradigms that are particularly beneficial for time-sensitive applications.

04 Software Frameworks for In-Memory Computing Acceleration

Software frameworks specifically designed for in-memory computing accelerators enable efficient utilization of hardware resources for real-time applications. These frameworks provide programming models, runtime systems, and libraries that abstract the complexity of the underlying hardware while exposing key capabilities to application developers. They include memory management techniques, task scheduling algorithms, and data placement strategies optimized for in-memory processing, allowing developers to build real-time applications that can fully leverage the performance benefits of in-memory computing accelerators.

05 Energy-Efficient In-Memory Computing for Real-Time Edge Applications

Energy-efficient in-memory computing solutions are designed specifically for real-time edge applications where power constraints are significant. These solutions incorporate power management techniques, low-power memory technologies, and energy-aware algorithms to deliver real-time processing capabilities while minimizing energy consumption. By bringing computation closer to data sources at the edge and reducing data movement, these approaches enable real-time decision making for IoT devices, autonomous systems, and other edge computing scenarios where both performance and energy efficiency are critical requirements.

Key Industry Players and Competitive Landscape

The market for in-memory computing accelerators for real-time video analytics is currently in a growth phase, expanding rapidly on rising demand for edge computing and AI-driven video processing. The global market is projected to grow further as video analytics becomes essential across security, retail, and smart city applications. Technology maturity varies: established players such as NVIDIA, Intel, and Qualcomm lead with mature solutions, while companies such as NEC, Samsung, and Mellanox offer specialized hardware accelerators. Emerging players like Rain Neuromorphics and aiMotive are introducing innovative neuromorphic approaches. Chinese companies including Wuhan Fiberhome and Anyka Microelectronics are gaining traction with cost-effective solutions, particularly in the Asian market. Academic institutions like IIT Kharagpur and Fudan University are contributing research that advances the field.

QUALCOMM, Inc.

Technical Solution: Qualcomm has developed the Hexagon Digital Signal Processor (DSP) with Vector eXtensions (HVX) specifically optimized for in-memory computing in mobile video analytics applications. Their Neural Processing SDK leverages the Hexagon DSP alongside the Adreno GPU and Kryo CPU in a heterogeneous computing approach that minimizes data movement between processing units and memory. Qualcomm's AI Engine implements tensor acceleration directly within the memory subsystem of their Snapdragon mobile platforms, enabling efficient execution of convolutional neural networks for video analysis. Their latest Snapdragon 8 Gen 2 platform features a dedicated computer vision ISP (Image Signal Processor) that performs image segmentation and object detection directly as video frames enter the memory system, reducing latency by up to 40%[4]. Qualcomm's Cloud AI 100 accelerator employs a specialized architecture with high-bandwidth memory interfaces that deliver up to 400 TOPS for video analytics workloads while maintaining energy efficiency. Their Edge AI solutions incorporate memory-efficient quantization techniques that reduce model size by up to 75% without significant accuracy loss.

Strengths: Industry-leading mobile SoC integration combining CPU, GPU, DSP, and AI accelerators with shared memory architecture; excellent power efficiency for edge deployment; comprehensive software development kit optimized for video workloads. Weaknesses: Primary focus on mobile and edge applications rather than data center scale; less raw performance compared to dedicated GPU solutions; proprietary nature of some acceleration techniques limits broader adoption.

Intel Corp.

Technical Solution: Intel has developed the Movidius Vision Processing Units (VPUs) and Neural Compute Stick specifically designed for in-memory computing in video analytics applications. Their solution employs a heterogeneous architecture that combines dedicated vector processors with on-chip memory to minimize data movement during video processing tasks. Intel's OpenVINO toolkit optimizes deep learning inference by utilizing in-memory computing techniques across CPUs, GPUs, FPGAs, and VPUs. The company's 3D XPoint technology (marketed as Optane) bridges the gap between DRAM and storage, providing persistent memory that accelerates video analytics workloads by keeping frequently accessed data closer to the processing units. Intel's Loihi neuromorphic chip implements a spiking neural network architecture with co-located memory and compute elements, achieving up to 1000x better energy efficiency for video pattern recognition tasks[2]. Their latest Xeon processors with built-in AI acceleration incorporate Deep Learning Boost technology that performs matrix operations directly in cache memory, reducing latency for real-time video processing.

Strengths: Diverse hardware portfolio spanning CPUs, GPUs, FPGAs, and specialized VPUs; strong software optimization through OpenVINO; innovative memory technologies like Optane that bridge compute-storage gap. Weaknesses: Performance in pure AI workloads lags behind specialized GPU solutions; fragmented approach across multiple hardware platforms can complicate development; memory bandwidth limitations in some processor families.

Core Patents and Innovations in IMC Accelerators

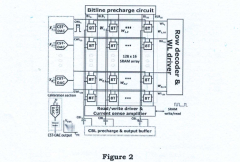

Acceleration of In-Memory-Compute Arrays

Patent · Active · US20240005972A1

Innovation

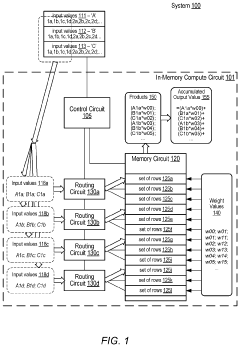

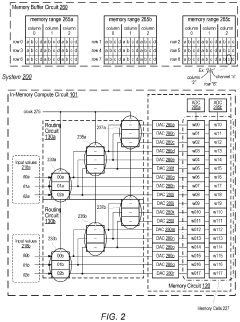

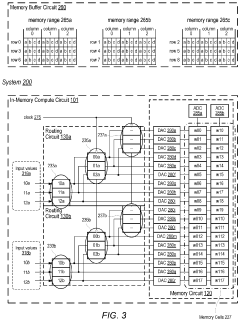

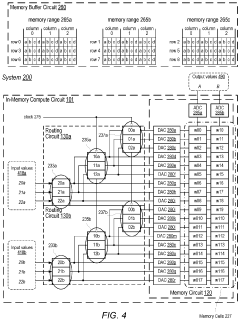

- The implementation of an in-memory compute circuit with a memory circuit and control circuit that routes input values to multiple rows over clock cycles, utilizing digital-to-analog and analog-to-digital converters to generate accumulated output values efficiently, allowing for high-throughput MAC operations with reduced power consumption.
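The general idea of routing inputs to groups of rows over successive clock cycles and digitizing each partial sum can be sketched behaviorally as follows. This is not the patented circuit: the function names, the row grouping, the ADC model, and all numeric values are assumptions made for illustration.

```python
def adc(value: float, full_scale: float, bits: int) -> float:
    """Model an ADC: clip to full scale, round to a signed code, rescale."""
    levels = (1 << (bits - 1)) - 1
    clipped = max(-full_scale, min(full_scale, value))
    code = round(clipped / full_scale * levels)
    return code * full_scale / levels


def imc_matvec(weights, inputs, rows_per_cycle, adc_bits, full_scale):
    """Multiply-accumulate over a weight array, a few rows per clock cycle.

    Each cycle drives one group of rows (the DAC step), sums the products
    along every column (the analog accumulation), digitizes the column sum
    (the ADC step), and adds it to a digital accumulator.
    """
    num_cols = len(weights[0])
    acc = [0.0] * num_cols
    for start in range(0, len(inputs), rows_per_cycle):
        rows = range(start, min(start + rows_per_cycle, len(inputs)))
        for col in range(num_cols):
            analog_sum = sum(inputs[r] * weights[r][col] for r in rows)
            acc[col] += adc(analog_sum, full_scale, adc_bits)
    return acc


# Hypothetical 4x2 weight array and input vector.
weights = [[1, -2], [3, 0], [-1, 2], [2, 1]]
inputs = [1, 2, 3, 4]

exact = [sum(inputs[r] * weights[r][c] for r in range(4)) for c in range(2)]
approx = imc_matvec(weights, inputs, rows_per_cycle=2, adc_bits=8, full_scale=16.0)
print("exact:", exact)
print("with ADC quantization:", [round(v, 3) for v in approx])
```

The gap between the two results comes entirely from ADC rounding, which is why ADC resolution and the number of rows activated per cycle are central design parameters in analog in-memory MAC arrays.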

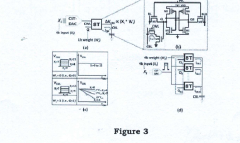

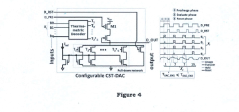

An in-memory computation (IMC)-based hardware accelerator system using a configurable digital-to-analog converter (DAC) and method capable of performing multi-bit multiply-and-accumulate (MAC) operations

Patent · Pending · IN202311065832A

Innovation

- The proposed solution involves a combined PAM and thermometric IMC system using a configurable current steering thermometric DAC (CST-DAC) array to generate PAM signals with various dynamic ranges and non-linear gaps, along with post-silicon calibration to mitigate process-variation issues and improve signal margin, enabling 4b x 4b MAC operations with high speed and accuracy.
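A thermometric DAC represents a value k by switching on k identical unit elements, so its output is the sum of the enabled unit currents. The sketch below models that idea for 4-bit inputs and a 4b x 4b multiply-and-accumulate. It does not model the patent's CST-DAC, its PAM levels, or its calibration; the function names and the uniform unit currents are assumptions.

```python
def thermometer_code(value: int, bits: int = 4):
    """Thermometer code of an unsigned integer: `value` ones followed by zeros."""
    num_units = (1 << bits) - 1
    if not 0 <= value <= num_units:
        raise ValueError("value out of range for the given bit width")
    return [1] * value + [0] * (num_units - value)


def dac_level(value: int, unit_currents) -> float:
    """Analog level produced by summing the currents of the enabled units."""
    code = thermometer_code(value, bits=4)
    return sum(bit * current for bit, current in zip(code, unit_currents))


def mac_4b(inputs, weights, unit_currents) -> float:
    """Accumulate DAC-converted 4-bit inputs multiplied by 4-bit weights."""
    return sum(dac_level(x, unit_currents) * w for x, w in zip(inputs, weights))


# Uniform unit currents (assumed): the DAC is then exactly linear.
uniform = [1.0] * 15

print(thermometer_code(3))
print(mac_4b([3, 15, 0, 7], [2, 1, 9, 4], uniform))
```

Passing a non-uniform `unit_currents` list is one simple way to experiment with unequal level spacing or with unit mismatch caused by process variation.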

Power Efficiency and Thermal Management Considerations

Power efficiency and thermal management represent critical challenges for in-memory computing accelerators in real-time video analytics applications. These accelerators must process massive video data streams continuously while maintaining acceptable power consumption levels and operating temperatures. Current in-memory computing architectures demonstrate significant advantages over conventional von Neumann architectures by reducing energy-intensive data movement between processing and memory units, achieving up to 10x improvement in energy efficiency for video analytics workloads.

The power consumption profile of in-memory accelerators varies substantially based on the underlying technology. Resistive RAM (ReRAM) and phase-change memory (PCM) based accelerators typically operate in the 0.5-2 W range for edge devices, while more powerful SRAM-based solutions for data centers may consume 15-30 W under full load. This power envelope must accommodate both computation and data movement costs, with the latter still representing 30-40% of total energy consumption even in optimized designs.

Thermal management becomes particularly challenging as these accelerators are increasingly deployed in compact edge devices with limited cooling capabilities. Passive cooling solutions often prove insufficient for sustained operation, necessitating active thermal management strategies. Dynamic frequency scaling and workload distribution techniques have emerged as effective approaches, allowing systems to modulate performance based on thermal conditions. Advanced implementations incorporate thermal sensors directly within memory arrays to enable fine-grained temperature monitoring and control.
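Dynamic frequency scaling driven by temperature can be sketched as a small controller with hysteresis: step down when the die is hot, step back up only once it has cooled well below the throttle point. The thresholds, frequency levels, and sensor readings below are hypothetical.

```python
def next_frequency(temp_c, current_mhz, levels_mhz,
                   throttle_at_c=85.0, recover_at_c=70.0):
    """Pick the next clock frequency from an ascending list of levels."""
    idx = levels_mhz.index(current_mhz)
    if temp_c >= throttle_at_c and idx > 0:
        return levels_mhz[idx - 1]      # too hot: step down one level
    if temp_c <= recover_at_c and idx < len(levels_mhz) - 1:
        return levels_mhz[idx + 1]      # cool enough: step up one level
    return current_mhz                  # inside the hysteresis band: hold


# Assumed frequency levels (MHz) and a made-up sequence of sensor readings.
levels = [200, 400, 600, 800]
readings_c = [65, 78, 88, 91, 80, 68]

freq = 800
trace = []
for temp in readings_c:
    freq = next_frequency(temp, freq, levels)
    trace.append(freq)

print(trace)
```

The gap between the two thresholds prevents the clock from oscillating when the temperature hovers near a single cutoff.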

Recent research demonstrates promising advances in architectural innovations for power efficiency. Sparsity-aware computing techniques can reduce power consumption by 40-60% by skipping unnecessary computations on zero-valued data, which is prevalent in video streams. Similarly, precision-adaptive computing allows dynamic adjustment of computational precision based on application requirements, potentially saving 30-45% power with minimal accuracy loss in video analytics tasks.
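The zero-skipping idea can be illustrated with a dot product that counts how many multiply-accumulate operations it actually performs. The activation and weight values are made up; in video workloads the zeros might come from, for example, frame differences in static regions.

```python
def sparse_dot(activations, weights):
    """Dot product that skips zero activations and counts the MACs performed."""
    total = 0
    macs_done = 0
    for a, w in zip(activations, weights):
        if a == 0:
            continue                    # zero contributes nothing: skip the MAC
        total += a * w
        macs_done += 1
    return total, macs_done


# Hypothetical sparse activations and dense weights.
activations = [0, 0, 3, 0, 1, 0, 0, 2]
weights = [5, 1, 2, 7, 4, 3, 6, 1]

result, macs = sparse_dot(activations, weights)
skipped = 1 - macs / len(activations)
print(f"result={result}, MACs performed={macs}, fraction skipped={skipped:.1%}")
```

The fraction of skipped operations is an upper bound on the compute savings; actual power savings are lower because zero detection and control logic also consume energy.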

The industry is increasingly adopting heterogeneous integration approaches that combine in-memory computing tiles with specialized accelerators and efficient power delivery networks. These systems implement sophisticated power gating mechanisms that can selectively deactivate unused memory blocks, reducing static power consumption by up to 70% during periods of lower computational demand. Liquid cooling solutions are also being explored for high-density deployments, offering 2-3x better thermal efficiency compared to traditional air cooling methods.

Future directions point toward the development of ultra-low power in-memory computing elements based on emerging non-volatile memory technologies and novel materials that operate efficiently at near-threshold voltages. These advancements, coupled with AI-driven power management algorithms, promise to further improve the energy efficiency of real-time video analytics systems by an estimated 5-10x within the next generation of devices.

The power consumption profile of in-memory accelerators varies substantially based on the underlying technology. Resistive RAM (ReRAM) and phase-change memory (PCM) based accelerators typically operate in the 0.5-2W range for edge devices, while more powerful SRAM-based solutions for data centers may consume 15-30W under full load. This power envelope must accommodate both computation and data movement costs, with the latter still representing 30-40% of total energy consumption even in optimized designs.

Thermal management becomes particularly challenging as these accelerators are increasingly deployed in compact edge devices with limited cooling capabilities. Passive cooling solutions often prove insufficient for sustained operation, necessitating active thermal management strategies. Dynamic frequency scaling and workload distribution techniques have emerged as effective approaches, allowing systems to modulate performance based on thermal conditions. Advanced implementations incorporate thermal sensors directly within memory arrays to enable fine-grained temperature monitoring and control.

Recent research demonstrates promising architectural innovations for power efficiency. Sparsity-aware computing techniques can reduce power consumption by 40-60% by skipping computations on zero-valued data, which is common in video workloads (for example, unchanged regions in inter-frame differences and zeroed activations after ReLU layers). Similarly, precision-adaptive computing adjusts computational precision dynamically to match application requirements, potentially saving 30-45% power with minimal accuracy loss in video analytics tasks.
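The zero-skipping idea can be shown in software with a matrix-vector product that counts the multiply-accumulate (MAC) operations it actually performs. This is only an illustration of the principle; real IMC hardware skips work at the level of array rows or bit lines, and the function name is hypothetical.

```python
def sparse_aware_matvec(weights, activations):
    """Compute weights @ activations, skipping zero activations.

    weights: list of rows, each a list of numbers.
    activations: list of numbers, one per weight column.
    Returns (result, macs_performed).
    """
    rows = len(weights)
    result = [0.0] * rows
    macs = 0
    for j, a in enumerate(activations):
        if a == 0:
            continue  # a zero input contributes nothing, so skip the whole column
        for i in range(rows):
            result[i] += weights[i][j] * a
            macs += 1
    return result, macs


# Example: two of three inputs are zero, so only one column is processed.
out, macs = sparse_aware_matvec([[1, 2, 3], [4, 5, 6]], [0, 2, 0])
```

A dense implementation would perform 6 MACs for this 2x3 case; the sparsity-aware version performs 2, and the saving grows with the fraction of zero inputs.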

Edge Deployment Strategies for IMC Video Solutions

Deploying IMC accelerators for video analytics at the edge means balancing computational power against tight resource constraints. Edge deployment enables real-time video processing directly at the data source, which minimizes latency and bandwidth usage while enhancing privacy and operational autonomy.

The distributed architecture model represents a primary deployment strategy, where IMC accelerators are positioned across multiple edge nodes in a hierarchical structure. This approach allows for workload distribution based on computational requirements, with simpler detection tasks handled at camera-level nodes while more complex analytics occur at gateway-level IMC units. Such distribution optimizes resource utilization while maintaining real-time performance across diverse video streams.
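A placement rule for this hierarchy might look like the sketch below. It assumes each task carries a rough compute estimate and that camera-level nodes have a fixed budget; the `Task` type, the giga-operations-per-frame unit, and the 2.0 budget are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    gops_per_frame: float  # estimated compute demand (hypothetical unit)


def place_task(task, camera_budget_gops=2.0):
    """Run light tasks on the camera node and send heavier ones to the gateway."""
    return "camera" if task.gops_per_frame <= camera_budget_gops else "gateway"


# Example: simple detection stays local, heavier analytics moves up a tier.
placements = {
    t.name: place_task(t)
    for t in (Task("motion_detection", 0.5), Task("person_reidentification", 12.0))
}
```

A production scheduler would also weigh link bandwidth and current node load, but a static budget is enough to show how the two tiers divide the work.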

Hardware-software co-optimization emerges as a critical deployment consideration. Edge IMC solutions must be designed with tight integration between specialized hardware accelerators and optimized software stacks. This includes memory-centric instruction sets, compiler optimizations for IMC architectures, and runtime systems that efficiently manage data movement between processing elements and memory arrays. Successful deployments leverage hardware-specific libraries that expose IMC capabilities while abstracting complexity from application developers.

Power management strategies are essential for sustainable edge deployment. IMC accelerators must implement dynamic power scaling based on workload demands, with capabilities for selective activation of memory arrays and processing elements. Advanced implementations incorporate workload-aware power governors that predict computational requirements and adjust system configurations preemptively. Some solutions employ energy harvesting techniques to supplement power in remote deployments, extending operational longevity.
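One way to picture a workload-aware power governor is as a predictor that sizes the number of active memory arrays ahead of demand. The sketch below uses an exponentially weighted moving average as the predictor, which is an assumption chosen for simplicity; the class name, parameters, and load units are hypothetical.

```python
import math


class PowerGovernor:
    """Predict load with an EWMA and choose how many arrays to keep powered."""

    def __init__(self, total_arrays, capacity_per_array, alpha=0.5):
        self.total_arrays = total_arrays
        self.capacity_per_array = capacity_per_array  # load one array can serve
        self.alpha = alpha  # weight given to the newest observation
        self.predicted = 0.0

    def update(self, observed_load):
        """Fold in a new load sample and return the number of arrays to enable."""
        self.predicted = (self.alpha * observed_load
                          + (1.0 - self.alpha) * self.predicted)
        needed = math.ceil(self.predicted / self.capacity_per_array)
        # Keep at least one array on so the node can react to new frames.
        return max(1, min(self.total_arrays, needed))


# Example: the governor ramps up gradually as sustained load is observed.
governor = PowerGovernor(total_arrays=8, capacity_per_array=10.0)
active = [governor.update(load) for load in (40.0, 40.0, 200.0)]
```

The smoothing factor trades responsiveness for stability: a higher `alpha` follows bursts more closely but toggles arrays more often.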

Network topology considerations significantly impact IMC deployment effectiveness. Edge nodes with IMC accelerators must be strategically positioned to minimize communication overhead while maximizing coverage. Mesh configurations enable resilient operation with peer-to-peer data sharing, while hub-and-spoke models centralize more complex analytics at powerful edge servers. Hybrid approaches that dynamically adjust topology based on network conditions and analytics requirements show particular promise for large-scale deployments.
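The communication trade-off between the two topologies can be made concrete by counting links. This sketch assumes a full mesh (every node connected to every other) and a single-hub star in which the hub is counted among the nodes; the function names are illustrative.

```python
def mesh_links(num_nodes):
    """Links in a full mesh: every pair of nodes is directly connected."""
    return num_nodes * (num_nodes - 1) // 2


def hub_and_spoke_links(num_nodes):
    """Links in a star: each non-hub node connects only to the hub."""
    return max(num_nodes - 1, 0)


# Example: link counts for a deployment of 5 and 50 edge nodes.
comparison = {n: (mesh_links(n), hub_and_spoke_links(n)) for n in (5, 50)}
```

Mesh link count grows quadratically with node count while hub-and-spoke grows linearly, which is one reason hybrid topologies become attractive at scale.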

Containerization and virtualization technologies facilitate flexible IMC solution deployment. Containerized IMC applications enable consistent deployment across heterogeneous edge hardware while supporting dynamic resource allocation. This approach allows for rapid updates and version management without disrupting ongoing analytics operations. Leading implementations leverage lightweight container orchestration specifically designed for resource-constrained edge environments, ensuring IMC accelerators maintain optimal utilization while supporting multi-tenant operation.
