Automated Dynamic Light Scattering for Enhanced Throughput

SEP 5, 2025 | 10 MIN READ

DLS Technology Background and Objectives

Dynamic Light Scattering (DLS) emerged in the 1960s as a powerful analytical technique for characterizing particles in suspension. Initially developed for fundamental research in colloidal science, DLS measures the Brownian motion of particles, expressed as a translational diffusion coefficient, and converts it to a hydrodynamic size through the Stokes-Einstein relationship. The technology has evolved from basic manual instruments requiring significant operator expertise to sophisticated automated systems capable of high-precision measurements across diverse applications.
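To make the Stokes-Einstein step concrete, the following minimal sketch converts a diffusion coefficient into a hydrodynamic diameter. The function name and the input values (a particle in water at 25 °C with an assumed diffusion coefficient) are illustrative assumptions, not figures from any instrument discussed here.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact SI value of the Boltzmann constant


def hydrodynamic_diameter_m(diffusion_m2_s, temperature_k, viscosity_pa_s):
    """Stokes-Einstein relationship: d_H = k_B * T / (3 * pi * eta * D)."""
    return BOLTZMANN_J_PER_K * temperature_k / (
        3.0 * math.pi * viscosity_pa_s * diffusion_m2_s
    )


# Illustrative inputs (assumed): a particle diffusing in water at 25 °C.
d_h = hydrodynamic_diameter_m(
    diffusion_m2_s=4.9e-12,  # assumed diffusion coefficient
    temperature_k=298.15,
    viscosity_pa_s=8.9e-4,   # approximate viscosity of water at 25 °C
)
print(f"hydrodynamic diameter: {d_h * 1e9:.1f} nm")
```

With these assumed inputs the result is close to 100 nm. Note that both the temperature and the viscosity appear in the formula, so both must be known for the medium actually being measured.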

Traditional DLS systems have been limited by their throughput capabilities, typically processing only one sample at a time with measurement cycles often taking 30 minutes or more. This constraint has become increasingly problematic as industries like pharmaceuticals, nanomaterials, and biotechnology require rapid analysis of multiple samples for quality control, formulation development, and research applications.

The evolution of DLS technology has been marked by several key advancements: improved laser sources transitioning from helium-neon to solid-state diode lasers; enhanced detection systems with greater sensitivity and dynamic range; and sophisticated correlation algorithms that enable more accurate particle size distribution analysis. Recent innovations have focused on miniaturization, automation, and integration with other analytical techniques.

Automated Dynamic Light Scattering represents the convergence of established DLS principles with modern automation technology, aiming to overcome the throughput limitations that have historically constrained the technique. The primary objective is to develop systems capable of analyzing multiple samples in parallel or in rapid sequence without compromising measurement quality or requiring constant operator intervention.

The technical goals for enhanced throughput DLS systems include: reducing measurement time per sample while maintaining statistical reliability; enabling unattended operation for extended periods; implementing intelligent sample scheduling and prioritization; developing robust self-cleaning mechanisms to prevent cross-contamination; and creating intuitive software interfaces that simplify data interpretation and quality assessment.

Beyond mere acceleration of measurements, modern automated DLS aims to integrate with laboratory information management systems (LIMS), incorporate machine learning for predictive maintenance and anomaly detection, and provide comprehensive data analytics capabilities. These features collectively support the transition toward smart manufacturing and high-throughput research environments where rapid, reliable particle characterization is essential.

The ultimate vision for Automated Dynamic Light Scattering technology is to transform a traditionally specialized analytical technique into a routine, accessible tool that can be deployed across multiple industries and research settings, enabling real-time quality control, accelerated formulation development, and deeper insights into particle behavior in complex systems.

Market Analysis for High-Throughput DLS Systems

The global market for high-throughput Dynamic Light Scattering (DLS) systems is experiencing robust growth, driven primarily by increasing demand in pharmaceutical research, biotechnology, and materials science sectors. Current market valuation stands at approximately 300 million USD, with projections indicating a compound annual growth rate of 7.8% over the next five years, potentially reaching 450 million USD by 2028.
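As a quick sanity check on the quoted figures, the compound-growth arithmetic can be reproduced in a few lines. This is only a sketch of the standard formula FV = PV * (1 + r)^n applied to the numbers stated above; the function name is an assumption.

```python
def project_value(present_value, annual_growth_rate, years):
    """Compound annual growth: FV = PV * (1 + r) ** n."""
    return present_value * (1.0 + annual_growth_rate) ** years


# Figures taken from the paragraph above: 300 million USD, 7.8% CAGR, five years.
projected_musd = project_value(300.0, 0.078, 5)
print(f"projected market size: {projected_musd:.0f} million USD")
```

Compounding 7.8% for five years gives roughly 437 million USD, the same order as the approximately 450 million USD projection quoted above.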

Pharmaceutical and biotechnology companies represent the largest market segment, accounting for nearly 60% of the total market share. This dominance stems from the critical role DLS plays in drug development processes, particularly in characterizing protein formulations, nanoparticle-based drug delivery systems, and assessing colloidal stability. The rising prevalence of biopharmaceuticals has significantly amplified demand for high-throughput analytical technologies.

Academic research institutions constitute the second-largest market segment at 25%, followed by material science applications at 10%. The remaining market share is distributed among food science, environmental monitoring, and other industrial applications. Geographically, North America leads with 40% market share, followed by Europe (30%), Asia-Pacific (25%), and rest of the world (5%).

Key market drivers include increasing R&D investments in biopharmaceuticals, growing adoption of nanotechnology across industries, and rising demand for quality control in manufacturing processes. The trend toward miniaturization and automation in laboratory equipment further propels market expansion for automated DLS systems.

Customer demand patterns reveal a strong preference for systems offering higher sample throughput, reduced sample volume requirements, and improved data analysis capabilities. End users increasingly seek integrated solutions that combine DLS with complementary techniques such as Raman spectroscopy or size exclusion chromatography.

Price sensitivity varies significantly by market segment. While large pharmaceutical companies prioritize performance and reliability over cost, academic institutions and smaller biotechnology firms demonstrate greater price sensitivity, creating demand for more affordable, entry-level high-throughput systems.

The competitive landscape features established analytical instrument manufacturers alongside specialized DLS technology providers. Recent market consolidation through mergers and acquisitions indicates the strategic importance industry leaders place on expanding their automated analytical capabilities.

Emerging markets, particularly in Asia-Pacific regions, present substantial growth opportunities due to expanding pharmaceutical manufacturing capabilities, increasing research activities, and growing government investments in scientific infrastructure. These regions are expected to show the highest growth rates, potentially reshaping the global market distribution over the next decade.

Current Challenges in Automated DLS Implementation

Despite the significant advancements in Dynamic Light Scattering (DLS) technology, several critical challenges persist in the implementation of fully automated DLS systems for high-throughput applications. These challenges span hardware limitations, software complexities, and methodological constraints that collectively impede the widespread adoption of automated DLS platforms.

Sample preparation remains a significant bottleneck in automated DLS workflows. The technique requires samples free from dust and aggregates, as these contaminants can dramatically skew measurement results. Current automated sample preparation systems struggle to consistently achieve the required cleanliness levels without human intervention, particularly when handling diverse sample types with varying viscosities, concentrations, and buffer compositions.

Data interpretation presents another substantial challenge. While DLS instruments can generate vast amounts of raw data, the automated analysis of this data requires sophisticated algorithms capable of distinguishing between meaningful signals and artifacts. Current automated systems often lack the contextual understanding that experienced human operators possess, leading to potential misinterpretation of complex multimodal distributions or samples with unusual scattering properties.

Temperature control and stability represent critical technical hurdles. DLS measurements are highly sensitive to temperature fluctuations because temperature enters the size calculation both directly and through the solvent viscosity, so an error in the assumed temperature changes the reported particle size. Maintaining precise temperature control across multiple samples in high-throughput settings is technically demanding, with even minor variations potentially compromising data quality and reproducibility.
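The size of this effect can be estimated with a short calculation. The sketch below assumes an aqueous sample, uses a common empirical fit for the viscosity of water (an approximation), and asks how far the reported diameter drifts when the sample is 1 K warmer than the instrument assumes. All function names and inputs are illustrative assumptions.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def water_viscosity_pa_s(temperature_k):
    """Empirical Vogel-type fit for liquid water (approximate)."""
    return 2.414e-5 * 10 ** (247.8 / (temperature_k - 140.0))


def stokes_einstein_diameter_m(diffusion_m2_s, temperature_k):
    """Diameter computed with the viscosity of water at the given temperature."""
    eta = water_viscosity_pa_s(temperature_k)
    return K_B * temperature_k / (3 * math.pi * eta * diffusion_m2_s)


def size_error_fraction(true_temperature_k, assumed_temperature_k, true_diameter_m=100e-9):
    """Relative error in reported size when the assumed temperature is wrong."""
    # Diffusion coefficient the particle actually has at the true temperature.
    eta_true = water_viscosity_pa_s(true_temperature_k)
    diffusion = K_B * true_temperature_k / (3 * math.pi * eta_true * true_diameter_m)
    # Diameter the software reports when it assumes a different temperature.
    reported = stokes_einstein_diameter_m(diffusion, assumed_temperature_k)
    return reported / true_diameter_m - 1.0


error = size_error_fraction(true_temperature_k=299.15, assumed_temperature_k=298.15)
print(f"relative size error for a 1 K offset: {error * 100:.1f} %")
```

Under these assumptions a 1 K offset shifts the reported size by between 2% and 3%, mostly through the viscosity term. This illustrates why tight per-sample temperature control matters in a multi-sample instrument.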

Integration with existing laboratory workflows and information management systems poses significant implementation challenges. Many automated DLS systems operate as standalone units with proprietary software, creating data silos that hinder seamless incorporation into broader laboratory automation ecosystems. The lack of standardized data formats and communication protocols further complicates this integration.

Validation and quality control mechanisms for automated measurements remain underdeveloped. Unlike manual DLS operations where operators can visually inspect correlation functions and apply expert judgment, automated systems require robust, algorithm-driven quality assessment protocols. Current solutions often struggle to reliably flag problematic measurements without generating excessive false positives or negatives.
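One way to picture algorithm-driven quality assessment is a small set of rule-based checks on the measured correlogram. The sketch below is a minimal illustration, not any vendor's method: the function name `assess_correlogram`, the flag names, and every threshold are assumptions chosen for the example.

```python
import math


def assess_correlogram(g2_minus_1, min_intercept=0.2, max_intercept=1.05, max_baseline=0.02):
    """Return QC flags for one correlogram given as g2(tau) - 1, ordered by lag.

    Thresholds are hypothetical and would need tuning per instrument.
    """
    flags = []
    intercept = g2_minus_1[0]
    # Average the last 10% of channels as an estimate of the long-lag baseline.
    tail = g2_minus_1[-max(1, len(g2_minus_1) // 10):]
    baseline = sum(tail) / len(tail)
    if intercept < min_intercept:
        flags.append("low_intercept")        # weak signal or poor coherence
    if intercept > max_intercept:
        flags.append("intercept_above_one")  # often a sign of intensity spikes
    if abs(baseline) > max_baseline:
        flags.append("elevated_baseline")    # slow decay, e.g. dust or aggregates
    return flags


# Synthetic correlograms used only to exercise the checks.
clean = [0.9 * math.exp(-i / 5) for i in range(100)]
dusty = [v + 0.05 for v in clean]
weak = [0.1 * math.exp(-i / 5) for i in range(100)]

for name, data in [("clean", clean), ("dusty", dusty), ("weak", weak)]:
    print(name, assess_correlogram(data))
```

Fixed thresholds like these are exactly where false positives and false negatives arise, which is why such rules are usually treated as a first filter rather than a final verdict.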

Cost considerations also present barriers to widespread adoption. High-quality DLS instrumentation with automation capabilities requires substantial capital investment, while the complexity of these systems necessitates specialized maintenance and calibration. For many laboratories, particularly in academic or small industrial settings, these financial barriers limit access to automated DLS technology despite its potential throughput benefits.

Current Automation Solutions for DLS Measurements

01 High-throughput dynamic light scattering systems

Advanced systems designed to increase the throughput of dynamic light scattering measurements. These systems typically incorporate multiple sample holders, automated sample handling, and parallel processing capabilities to analyze numerous samples in a shorter time frame. Such high-throughput systems enable rapid characterization of particle size distributions and molecular interactions, making them valuable for research and quality control applications where large numbers of samples need to be analyzed efficiently.

- High-throughput dynamic light scattering systems: Advanced systems designed to increase the throughput of dynamic light scattering measurements. These systems typically incorporate multiple sample holders, automated sample handling, and parallel processing capabilities to analyze numerous samples in a shorter time frame. Such high-throughput systems enable rapid characterization of particle size distributions and molecular interactions, making them valuable for research and quality control applications in pharmaceuticals, nanomaterials, and biological samples.

- Data processing techniques for DLS throughput enhancement: Specialized algorithms and data processing methods that improve the throughput and accuracy of dynamic light scattering measurements. These techniques include advanced correlation analysis, noise reduction algorithms, and statistical methods for interpreting scattered light data. By optimizing data processing, these approaches enable faster analysis times, improved signal-to-noise ratios, and the ability to extract meaningful information from complex samples, ultimately increasing the overall throughput of DLS measurements.

- Optical configurations for enhanced DLS throughput: Innovative optical arrangements and components designed to improve the efficiency and throughput of dynamic light scattering instruments. These configurations may include specialized light sources, detectors, fiber optics, and optical filters that optimize the collection and analysis of scattered light. By enhancing the optical pathway, these designs increase signal quality, reduce measurement time, and allow for more efficient characterization of samples, thereby improving overall throughput.

- Microfluidic and lab-on-chip DLS systems: Integration of dynamic light scattering technology with microfluidic platforms and lab-on-chip devices to achieve higher throughput and reduced sample volumes. These miniaturized systems incorporate microchannels, flow cells, and integrated optics to perform DLS measurements on small sample volumes in a continuous or high-throughput manner. The approach enables rapid screening of multiple samples, automation of measurement processes, and integration with other analytical techniques, significantly increasing overall throughput.

- Multi-angle and multi-detector DLS configurations: Systems that utilize multiple detection angles or multiple detectors to simultaneously collect scattered light data from samples. By capturing light scattering information at various angles or using multiple detectors, these configurations enable more comprehensive characterization of samples in a single measurement. This approach reduces the need for repeated measurements, increases data quality, and allows for more efficient analysis of complex samples with polydisperse particle distributions, thereby enhancing the overall throughput of DLS measurements.
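As a minimal sketch of the correlation analysis named in the list above, the following example fits a single-exponential decay to a correlogram and converts the decay rate to a size. It assumes a monodisperse sample, so that g2(τ) − 1 = β·exp(−2Γτ) with Γ = D·q². Commercial software typically uses higher-order cumulant or distribution fits. The wavelength, refractive index, detection angle, and synthetic data are illustrative assumptions.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def scattering_vector_per_m(wavelength_m, refractive_index, angle_deg):
    """Magnitude of the scattering vector: q = (4*pi*n/lambda) * sin(theta/2)."""
    return (4 * math.pi * refractive_index / wavelength_m) * math.sin(
        math.radians(angle_deg) / 2
    )


def decay_rate_per_s(lags_s, g2_minus_1):
    """Fit ln(g2 - 1) = ln(beta) - 2*Gamma*tau by least squares; return Gamma."""
    ys = [math.log(v) for v in g2_minus_1]
    n = len(lags_s)
    mean_x = sum(lags_s) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(lags_s, ys))
    sxx = sum((x - mean_x) ** 2 for x in lags_s)
    return -(sxy / sxx) / 2.0


# Synthetic, noise-free correlogram (all values are illustrative assumptions).
q = scattering_vector_per_m(wavelength_m=633e-9, refractive_index=1.33, angle_deg=173.0)
true_gamma = 4.9e-12 * q ** 2            # Gamma = D * q^2 for an assumed D
lags = [i * 1e-5 for i in range(1, 50)]  # lag times from 10 to 490 microseconds
g2m1 = [0.9 * math.exp(-2 * true_gamma * t) for t in lags]

gamma = decay_rate_per_s(lags, g2m1)
diffusion = gamma / q ** 2
diameter_m = K_B * 298.15 / (3 * math.pi * 8.9e-4 * diffusion)  # Stokes-Einstein
print(f"Gamma = {gamma:.0f} 1/s, diameter = {diameter_m * 1e9:.1f} nm")
```

Because the fit is a closed-form linear regression, it runs in negligible time per sample. Real data adds noise, and the log transform then requires excluding channels near the baseline.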

02 Data processing techniques for DLS throughput enhancement

Specialized algorithms and data processing methods that improve the throughput and accuracy of dynamic light scattering measurements. These techniques include advanced signal processing, correlation analysis, and statistical methods to extract meaningful information from scattered light data more efficiently. By optimizing data processing, these approaches reduce measurement time while maintaining or improving the quality of results, thereby increasing overall system throughput without requiring hardware modifications.

03 Optical configurations for improved DLS throughput

Novel optical arrangements and components that enhance the efficiency of dynamic light scattering measurements. These configurations may include specialized light sources, detectors, fiber optics, and optical filters designed to maximize signal quality while minimizing measurement time. By optimizing the optical path and detection system, these innovations allow for faster acquisition of high-quality scattering data, thereby increasing the throughput of DLS measurements.

04 Microfluidic and lab-on-chip DLS systems

Integration of dynamic light scattering technology with microfluidic platforms and lab-on-chip devices to achieve higher throughput. These miniaturized systems utilize small sample volumes and can perform multiple measurements in parallel channels. The combination of microfluidics with DLS enables rapid, automated analysis of numerous samples with minimal manual intervention, making these systems particularly valuable for high-throughput screening applications in pharmaceutical development and materials science.

05 Real-time monitoring and automation for DLS throughput

Systems that incorporate real-time monitoring capabilities and automation to enhance the throughput of dynamic light scattering measurements. These innovations include automated sample preparation, robotic handling, continuous flow analysis, and feedback control mechanisms that optimize measurement parameters on the fly. By reducing manual operations and enabling continuous processing, these approaches significantly increase the number of samples that can be analyzed in a given time period while maintaining measurement quality and reliability.

Leading Companies in DLS Instrumentation

Automated Dynamic Light Scattering (DLS) for Enhanced Throughput is currently in a growth phase, with increasing market adoption driven by demand for high-throughput nanoparticle characterization. The global market is expanding steadily, estimated at approximately $300-400 million with projected annual growth of 8-10%. Technologically, the field shows varying maturity levels across players. Established instrumentation companies like Wyatt Technology and Otsuka Electronics lead with advanced commercial solutions, while research institutions such as South China Normal University and KAIST are developing next-generation capabilities. Major corporations including Samsung Electronics, Philips, and Fujitsu are integrating DLS into broader analytical platforms. The competitive landscape features specialized instrument manufacturers competing with diversified technology conglomerates, with innovation focused on automation, miniaturization, and integration with AI-driven analytics to enhance throughput and data quality.

Otsuka Electronics Co., Ltd.

Technical Solution: Otsuka Electronics has developed the ELSZ-2000 series, an automated dynamic light scattering system designed specifically for high-throughput applications. Their technology incorporates a unique optical design with a 10mW semiconductor laser operating at 660nm wavelength and utilizes a proprietary multi-tau digital correlator for enhanced sensitivity. The system features an automated dilution module that can prepare samples at multiple concentrations without user intervention, significantly increasing throughput while ensuring measurement accuracy. Otsuka's technology includes temperature-controlled sample chambers (0-90°C) with precision of ±0.1°C and automated cleaning cycles between measurements to prevent cross-contamination. Their software platform provides batch measurement capabilities with automated data processing algorithms that can analyze particle size distributions, zeta potential, and molecular weight simultaneously, reducing total analysis time by approximately 65% compared to conventional methods.

Strengths: Excellent automation of sample preparation including dilution steps; comprehensive measurement capabilities (size, zeta potential, molecular weight); robust contamination prevention systems; user-friendly interface requiring minimal training. Weaknesses: More limited temperature range than some competitors; slightly lower sensitivity for very small particles (<10nm); fewer customization options for specialized applications.

Q.SyS, Inc.

Technical Solution: Q.SyS has developed the AutoDLS platform, a fully automated dynamic light scattering system designed for pharmaceutical and biotechnology applications requiring high throughput. Their technology utilizes a unique optical configuration with dual detection angles (90° and 173°) to optimize measurements across different sample types and concentrations. The system incorporates robotic sample handling with capacity for up to 192 samples and features proprietary "intelligent scheduling" algorithms that optimize measurement sequences based on sample properties. Q.SyS's technology includes automated filtration modules to remove dust and contaminants prior to measurement, significantly improving data quality. Their software platform employs machine learning algorithms to identify and correct for artifacts in scattering data, reducing failed measurements by approximately 40% compared to conventional systems. The AutoDLS system also features integrated stability testing protocols with automated time-course measurements at controlled temperature conditions.

Strengths: Highest sample capacity in the industry; dual-angle detection for versatility across sample types; advanced machine learning for data quality improvement; specialized pharmaceutical validation protocols. Weaknesses: Relatively new to market with less established track record; higher maintenance requirements due to complex robotics; limited compatibility with third-party data analysis tools.

Key Innovations in High-Throughput DLS Methods

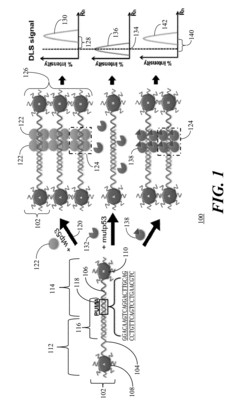

Dynamic Light Scattering Nanoplatform For High-Throughput Drug Screening

Patent (Inactive): US20180172678A1

Innovation

- A method using plasmonic metal nanoparticle probes with specific response elements, combined with dynamic light scattering (DLS) to measure particle size distribution, allowing for sensitive and label-free detection of sequence-specific transcription factor-DNA interactions in multi-well plates, enabling fast and high-throughput drug and disease screening.

Configuring and controlling autosampler

Patent: WO2025137538A2

Innovation

- The implementation of a special-purpose computer system and autosampler that dynamically display results from electrophoretic light scattering measurements, allowing for contemporaneous performance of sample collection, injection, and washing operations, thereby streamlining the automation workflow.

Data Management Strategies for High-Volume DLS Applications

The exponential growth of Dynamic Light Scattering (DLS) applications in high-throughput environments necessitates robust data management strategies. As automated DLS systems generate unprecedented volumes of measurement data, organizations face significant challenges in storage, processing, and analysis workflows. Implementing scalable database architectures becomes essential, with relational databases proving effective for structured DLS data while NoSQL solutions offer advantages for handling raw scattering patterns and unstructured metadata.
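For the structured side of this picture, a relational layout might look like the sketch below, which uses Python's built-in `sqlite3` module with an in-memory database. The table names, column names, and sample values are hypothetical, chosen only to illustrate the idea of separating sample records from per-measurement results.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE sample (
        sample_id   TEXT PRIMARY KEY,
        description TEXT
    );
    CREATE TABLE measurement (
        measurement_id INTEGER PRIMARY KEY,
        sample_id      TEXT NOT NULL REFERENCES sample(sample_id),
        measured_at    TEXT NOT NULL,   -- ISO 8601 timestamp
        temperature_c  REAL,
        z_average_nm   REAL,
        pdi            REAL
    );
    """
)

# Hypothetical records used only to exercise the schema.
conn.execute("INSERT INTO sample VALUES (?, ?)", ("S-001", "illustrative formulation"))
conn.executemany(
    "INSERT INTO measurement (sample_id, measured_at, temperature_c, z_average_nm, pdi) "
    "VALUES (?, ?, ?, ?, ?)",
    [
        ("S-001", "2025-01-01T10:00:00", 25.0, 101.2, 0.05),
        ("S-001", "2025-01-01T10:05:00", 25.0, 99.8, 0.06),
    ],
)

# Per-sample summary: number of replicates and mean size.
rows = conn.execute(
    "SELECT sample_id, COUNT(*), AVG(z_average_nm) FROM measurement GROUP BY sample_id"
).fetchall()
print(rows)
```

Raw correlograms or intensity traces would typically live outside such tables, for example as files or documents referenced by `measurement_id`, which is where the NoSQL or object-storage options mentioned above come in.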

Cloud-based storage solutions have emerged as a preferred approach for many research institutions and pharmaceutical companies utilizing automated DLS systems. These platforms provide virtually unlimited storage capacity with built-in redundancy and disaster recovery capabilities. Additionally, they enable seamless collaboration between distributed research teams working with the same DLS datasets, a critical advantage in multi-site research environments.

Data compression techniques specifically optimized for DLS data characteristics can significantly reduce storage requirements without compromising analytical integrity. Lossy compression may be applied to raw scattering intensity data where appropriate, while lossless compression remains essential for derived particle size distributions and critical metadata. Implementing automated data lifecycle management policies further optimizes storage utilization by archiving or purging older DLS measurements based on predefined retention criteria.
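The lossless case can be sketched with the standard library alone. The example below compresses a JSON-serialized size distribution with `zlib` and checks that it round-trips exactly. The record layout and values are hypothetical, and general-purpose compression is used here as a stand-in for any DLS-specific scheme.

```python
import json
import zlib

# Hypothetical derived result: a sparse, 200-bin intensity-weighted distribution.
record = {
    "sample_id": "S-001",
    "bin_edges_nm": [round(1.0 + 0.5 * i, 1) for i in range(201)],
    "intensity_percent": [0.0] * 90 + [5.0] * 20 + [0.0] * 90,
}

raw = json.dumps(record).encode("utf-8")
packed = zlib.compress(raw, level=9)
restored = json.loads(zlib.decompress(packed).decode("utf-8"))

# Lossless: the restored record must be identical to the original.
assert restored == record
print(f"{len(raw)} bytes -> {len(packed)} bytes")
```

The round-trip assertion is the important part: for derived distributions and metadata, any scheme that cannot pass this check is not lossless.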

Integration of automated data validation protocols represents another crucial component of effective DLS data management. These systems can flag measurements with potential quality issues such as dust contamination, sample aggregation, or instrument calibration drift. Machine learning algorithms trained on historical DLS data can enhance these validation processes by identifying subtle anomalies that might escape traditional rule-based detection methods.

Standardized data formats and metadata schemas are increasingly important as organizations seek to maximize the value of their DLS data assets. Adoption of formats like JCAMP-DX or custom XML schemas with comprehensive metadata documentation facilitates data exchange between different analysis platforms and enables more effective data mining across historical measurements. This standardization also supports regulatory compliance requirements in regulated industries where DLS measurements inform critical quality decisions.

Real-time data processing pipelines represent the frontier of high-volume DLS data management. These systems process incoming measurement data immediately upon acquisition, extracting key parameters and updating centralized dashboards or triggering automated workflows based on predefined criteria. Such capabilities are particularly valuable in manufacturing environments where DLS measurements directly inform process control decisions or quality release determinations.
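A minimal sketch of such a pipeline stage is shown below: results are consumed as they arrive, checked against predefined criteria, and either accepted or routed to an alert callback. The `Result` fields, the function name, and the acceptance limits are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Result:
    sample_id: str
    z_average_nm: float
    pdi: float


def process_stream(
    results: Iterable[Result],
    on_alert: Callable[[Result], None],
    max_pdi: float = 0.2,
    size_window_nm: Tuple[float, float] = (80.0, 120.0),
) -> List[Result]:
    """Accept in-spec results; hand out-of-spec results to the alert callback."""
    low, high = size_window_nm
    accepted = []
    for result in results:  # works with lists, generators, or queue readers
        if result.pdi > max_pdi or not (low <= result.z_average_nm <= high):
            on_alert(result)
        else:
            accepted.append(result)
    return accepted


# Hypothetical incoming results.
incoming = [
    Result("S-001", 101.0, 0.05),
    Result("S-002", 145.0, 0.08),  # outside the size window
    Result("S-003", 98.0, 0.35),   # too polydisperse
]
alerts: List[Result] = []
accepted = process_stream(incoming, on_alert=alerts.append)
print([r.sample_id for r in accepted], [r.sample_id for r in alerts])
```

In a production setting the callback would more plausibly post to a dashboard or trigger a workflow. A plain list is used here so the example stays self-contained.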

Cloud-based storage solutions have emerged as a preferred approach for many research institutions and pharmaceutical companies utilizing automated DLS systems. These platforms provide virtually unlimited storage capacity with built-in redundancy and disaster recovery capabilities. Additionally, they enable seamless collaboration between distributed research teams working with the same DLS datasets, a critical advantage in multi-site research environments.

Data compression techniques specifically optimized for DLS data characteristics can significantly reduce storage requirements without compromising analytical integrity. Lossy compression may be applied to raw scattering intensity data where appropriate, while lossless compression remains essential for derived particle size distributions and critical metadata. Implementing automated data lifecycle management policies further optimizes storage utilization by archiving or purging older DLS measurements based on predefined retention criteria.

Integration of automated data validation protocols represents another crucial component of effective DLS data management. These systems can flag measurements with potential quality issues such as dust contamination, sample aggregation, or instrument calibration drift. Machine learning algorithms trained on historical DLS data can enhance these validation processes by identifying subtle anomalies that might escape traditional rule-based detection methods.
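One simple rule-based check of the kind described above is to look for count-rate spikes, which often accompany dust passing through the beam. The sketch below uses a median and median-absolute-deviation (MAD) outlier test; the threshold of 5 and the use of per-sub-run count rates in kcps are assumptions, not values taken from any instrument specification.

```python
from statistics import median


def flag_dust_spikes(count_rates_kcps, threshold=5.0):
    """Return indices of sub-runs whose count rate is an upward robust outlier."""
    med = median(count_rates_kcps)
    mad = median([abs(x - med) for x in count_rates_kcps])
    if mad == 0:
        # Trace is flat apart from isolated values: flag anything above the median.
        return [i for i, x in enumerate(count_rates_kcps) if x > med]
    # 1.4826 scales the MAD to match a standard deviation for normal data.
    scale = 1.4826 * mad
    return [i for i, x in enumerate(count_rates_kcps) if (x - med) / scale > threshold]


trace = [250, 252, 248, 251, 249, 900, 250, 253]
spikes = flag_dust_spikes(trace)
```

A median-based test is used because a single large spike would inflate an ordinary mean and standard deviation and could mask itself.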

Quality Control and Validation Protocols for Automated DLS

Quality control and validation protocols are essential components of any automated Dynamic Light Scattering (DLS) system designed for enhanced throughput. These protocols ensure the reliability, accuracy, and reproducibility of particle size measurements across multiple samples and over extended operational periods.

Effective quality control for automated DLS begins with system calibration checks using certified reference materials. These standards, typically monodisperse polystyrene latex beads with precisely known diameters, should be measured at regular intervals to verify instrument performance. In high-throughput environments, automated calibration routines scheduled during non-peak hours preserve operational efficiency while maintaining measurement integrity.
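A scheduled reference check of this kind can be reduced to a comparison of repeat measurements against the certified diameter. The sketch below is illustrative only: the 2% bias and 2% repeatability limits are placeholder assumptions, and the actual limits should come from the reference material certificate and the laboratory's own procedure.

```python
from statistics import mean, stdev


def verify_reference(measured_nm, certified_nm, tolerance_pct=2.0, max_rsd_pct=2.0):
    """Compare repeat measurements of a reference material with its certified size."""
    avg = mean(measured_nm)
    bias_pct = 100.0 * (avg - certified_nm) / certified_nm  # signed deviation
    rsd_pct = 100.0 * stdev(measured_nm) / avg  # repeatability
    return {
        "mean_nm": avg,
        "bias_pct": bias_pct,
        "rsd_pct": rsd_pct,
        "passed": abs(bias_pct) <= tolerance_pct and rsd_pct <= max_rsd_pct,
    }


check = verify_reference([100.8, 101.2, 100.5, 101.0, 100.9], certified_nm=100.0)
```

Reporting the signed bias rather than only a pass or fail result makes slow drift visible across successive checks.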

Data validation algorithms represent another critical aspect of quality assurance in automated DLS systems. These algorithms should automatically flag measurements that fall outside predetermined acceptance criteria, such as those with unusually high polydispersity indices, low count rates, or significant deviations from expected size distributions. Machine learning approaches can enhance these validation processes by identifying subtle patterns indicative of measurement anomalies that might otherwise go undetected.
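The acceptance criteria named above (polydispersity index, count rate, and deviation from the expected size) translate directly into a rule-based check. This is a minimal sketch; the default limits are hypothetical and would need to be set per product or method.

```python
def validate_measurement(
    z_average_nm,
    pdi,
    count_rate_kcps,
    expected_nm,
    max_pdi=0.3,
    min_count_rate_kcps=50.0,
    max_size_deviation_pct=15.0,
):
    """Return a list of flags; an empty list means all criteria were met."""
    flags = []
    if pdi > max_pdi:
        flags.append("high_pdi")
    if count_rate_kcps < min_count_rate_kcps:
        flags.append("low_count_rate")
    deviation_pct = 100.0 * abs(z_average_nm - expected_nm) / expected_nm
    if deviation_pct > max_size_deviation_pct:
        flags.append("size_deviation")
    return flags


ok_flags = validate_measurement(102.0, 0.08, 320.0, expected_nm=100.0)
bad_flags = validate_measurement(160.0, 0.45, 20.0, expected_nm=100.0)
```

Returning named flags instead of a single boolean lets downstream systems route each failure type differently, for example repeating a low count rate run but rejecting a sample with high polydispersity.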

Sample-specific validation protocols must account for the diverse nature of materials analyzed in high-throughput environments. Parameters such as concentration ranges, viscosity variations, and potential interactions between sample components and measurement cells should be systematically evaluated. Automated dilution series can be incorporated to identify concentration-dependent effects that might compromise measurement accuracy.
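One way to evaluate a dilution series is to fit a straight line to apparent size against concentration and examine how much the size changes across the tested range. The sketch below assumes a linear trend and a 5% change limit, both of which are simplifications chosen for illustration.

```python
def concentration_trend(conc_mg_ml, size_nm):
    """Least-squares line through (concentration, apparent size) points.

    Returns (slope, intercept); the intercept estimates the size
    extrapolated to zero concentration.
    """
    n = len(conc_mg_ml)
    mean_x = sum(conc_mg_ml) / n
    mean_y = sum(size_nm) / n
    sxx = sum((x - mean_x) ** 2 for x in conc_mg_ml)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc_mg_ml, size_nm))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def is_concentration_dependent(conc_mg_ml, size_nm, max_change_pct=5.0):
    """Flag the series if the fitted size changes too much over the range."""
    slope, intercept = concentration_trend(conc_mg_ml, size_nm)
    span = max(conc_mg_ml) - min(conc_mg_ml)
    change_pct = 100.0 * abs(slope * span) / intercept
    return change_pct > max_change_pct


conc = [0.5, 1.0, 2.0, 4.0]
slope, intercept = concentration_trend(conc, [100.0, 101.0, 103.0, 107.0])
dependent = is_concentration_dependent(conc, [100.0, 101.0, 103.0, 107.0])
```

A flagged series suggests that the working concentration should be lowered, or that results should be reported as extrapolated values rather than single-concentration readings.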

Documentation and traceability form the backbone of robust quality control systems. Automated DLS platforms should maintain comprehensive audit trails that record all system parameters, calibration histories, and measurement conditions. This documentation is particularly valuable in regulated industries where conformance with standards such as ISO 22412 (which superseded ISO 13321) or ASTM E2490 is required.
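An audit trail is only useful if later edits can be detected. One common technique, shown here as an assumption rather than something the standards above prescribe, is to chain entries together with a cryptographic hash so that changing any past entry invalidates the chain. The entry fields are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(trail, event, details):
    """Append an entry that records the hash of the entry before it."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    body = {"event": event, "details": details, "prev_hash": prev_hash}
    trail.append({**body, "hash": _digest(body)})


def verify_trail(trail):
    """Return True only if every entry is intact and correctly linked."""
    prev_hash = GENESIS_HASH
    for entry in trail:
        body = {key: entry[key] for key in ("event", "details", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


trail = []
append_entry(trail, "calibration_check", {"reference_nm": 100.0, "measured_nm": 100.9})
append_entry(trail, "measurement", {"sample_id": "S-001", "temperature_c": 25.0})
intact = verify_trail(trail)
```

In practice the trail would also carry timestamps and operator identities, and would be written to append-only storage; those details are omitted to keep the sketch short.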

Inter-laboratory comparison studies provide an external validation mechanism for automated DLS protocols. Participation in such studies allows organizations to benchmark their measurement capabilities against peer institutions and identify potential systematic biases in their automated workflows. Results from these comparisons should inform continuous improvement initiatives for quality control protocols.

Preventive maintenance schedules must be integrated into validation protocols to ensure long-term system reliability. Automated self-diagnostic routines that assess laser stability, detector response, and temperature control precision can preemptively identify hardware issues before they impact measurement quality. These diagnostics should be performed at frequencies determined by system usage patterns and environmental conditions.
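A self-diagnostic routine of this kind can be as simple as summarizing logged laser power and cell temperature against limits. The sketch below assumes the instrument exposes such logs; the 1% power variation and 0.1 °C temperature limits are placeholders, not manufacturer specifications.

```python
from statistics import mean, pstdev


def run_diagnostics(
    laser_power_mw,
    cell_temp_c,
    setpoint_c,
    max_power_rsd_pct=1.0,
    max_temp_error_c=0.1,
):
    """Return a list of detected issues; an empty list means no issue was found."""
    issues = []

    # Laser stability: relative standard deviation of logged output power.
    power_rsd_pct = 100.0 * pstdev(laser_power_mw) / mean(laser_power_mw)
    if power_rsd_pct > max_power_rsd_pct:
        issues.append("laser_instability")

    # Temperature control: worst-case deviation from the setpoint.
    temp_error_c = max(abs(t - setpoint_c) for t in cell_temp_c)
    if temp_error_c > max_temp_error_c:
        issues.append("temperature_control")

    return issues


healthy = run_diagnostics([4.00, 4.01, 3.99, 4.00], [25.00, 25.02, 24.99], setpoint_c=25.0)
faulty = run_diagnostics([4.0, 3.6, 4.3, 3.8], [25.0, 25.4], setpoint_c=25.0)
```

Temperature is checked against the setpoint rather than against its own mean because size results depend on the solvent viscosity at the actual temperature.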
