DDR5 Usage in Scalable Network Computing Projects

SEP 17, 2025 · 9 MIN READ

DDR5 Evolution and Implementation Goals

DDR5 memory technology represents a significant evolution in the DRAM landscape, building upon previous generations with substantial improvements in bandwidth, capacity, and power efficiency. The development of DDR5 began in 2016, with JEDEC finalizing the standard in 2020, marking a critical milestone in memory technology advancement. This fifth generation of Double Data Rate Synchronous Dynamic Random-Access Memory delivers up to twice the bandwidth and density of its predecessor DDR4, while operating at lower voltages.

In the context of scalable network computing projects, DDR5 implementation aims to address several critical objectives. Primary among these is meeting the exponentially growing memory bandwidth demands of modern network infrastructure, which processes increasingly massive data volumes across distributed systems. With data rates starting at 4800 MT/s and a roadmap extending to 8400 MT/s, DDR5 provides the foundation needed for next-generation network computing architectures.

Another key implementation goal involves enhancing memory capacity to support the complex workloads characteristic of network computing environments. DDR5's higher density modules, potentially scaling up to 256GB per DIMM, enable more efficient handling of in-memory databases, real-time analytics, and packet processing tasks that form the backbone of network operations.

Power efficiency represents a third critical objective, as large-scale network computing deployments face significant energy consumption challenges. DDR5's reduced operating voltage of 1.1V (compared to DDR4's 1.2V), combined with improved power management features like on-module voltage regulation, addresses these concerns while supporting sustainability initiatives in data center operations.

Reliability improvements constitute another implementation goal, with DDR5 introducing on-die ECC to correct bit errors inside the DRAM and decision feedback equalization (DFE) to preserve signal integrity at higher data rates. These features are particularly valuable in network computing environments where data integrity is paramount and system downtime carries substantial operational costs.
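To make the single-error-correction idea behind on-die ECC concrete, here is a minimal sketch using a toy Hamming(7,4) code. This is an illustration of the principle only: DDR5 on-die ECC is generally described as protecting much wider words (128 data bits with 8 check bits), and the function names below are hypothetical.

```python
# Toy single-error-correcting (SEC) code: Hamming(7,4).
# Illustrative only; real on-die ECC protects far wider data words.

def hamming74_encode(data_bits):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit codeword."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # parity over codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over codeword positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over codeword positions 4, 5, 6, 7
    # Codeword layout, positions 1..7: p1 p2 d1 p3 d2 d3 d4
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword):
    """Correct at most one flipped bit and return the 4 data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The syndrome is the 1-based position of the flipped bit (0 = no error).
    syndrome = s1 + 2 * s2 + 4 * s3
    if syndrome:
        c[syndrome - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


word = [1, 0, 1, 1]
stored = hamming74_encode(word)
stored[5] ^= 1  # simulate a single-bit upset in the memory array
recovered = hamming74_decode(stored)
print(recovered == word)
```

A code of this kind corrects one flipped bit per word; a double-bit error would be miscorrected, which is one reason system-level ECC remains relevant alongside on-die ECC.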

Finally, DDR5 implementation in scalable network computing aims to establish a future-proof memory architecture that can evolve alongside emerging technologies like AI acceleration, edge computing, and 5G/6G infrastructure. The standard's design accommodates these forward-looking requirements, although DDR5 modules are not interchangeable with DDR4, so protecting existing investments depends on planned platform migration rather than drop-in upgrades.

The technology evolution trajectory suggests DDR5 will remain the dominant memory standard for enterprise network computing through at least 2027, with adoption accelerating as costs decrease and ecosystem support matures. This positions DDR5 as a foundational technology for the next generation of scalable network computing projects.

Market Demand Analysis for High-Performance Network Computing

The network computing market is experiencing unprecedented growth driven by the convergence of several technological trends. Data center traffic is projected to reach 20.6 zettabytes annually by 2025, representing a compound annual growth rate of approximately 25% from 2020. This explosive growth is fueled by the increasing adoption of cloud services, edge computing, artificial intelligence, and the Internet of Things (IoT), all of which demand higher bandwidth and lower latency network infrastructure.

High-performance network computing systems are becoming essential for organizations across various sectors. Financial institutions require ultra-low latency networks for high-frequency trading, where microseconds can translate to millions in profit or loss. Healthcare organizations need robust networks to handle the growing volume of medical imaging data and telemedicine applications. Media and entertainment companies require high-bandwidth networks for content delivery and real-time streaming services.

The deployment of 5G networks is creating additional demand for high-performance network computing. With data rates up to 10 Gbps and latency as low as 1 millisecond, 5G networks require sophisticated computing infrastructure to process and route traffic efficiently. This is driving investments in network function virtualization (NFV) and software-defined networking (SDN) technologies, which in turn increases the need for memory-intensive computing systems.

Memory bandwidth has emerged as a critical bottleneck in network computing performance. Traditional DDR4 memory, with its maximum data rate of 3200 MT/s, is increasingly insufficient for modern network applications that process massive data streams in real-time. This limitation is particularly evident in packet processing, deep packet inspection, and network analytics applications where memory access patterns are often random and unpredictable.
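A rough back-of-the-envelope sketch shows why packet buffering stresses memory bandwidth. It assumes every payload byte is written to memory once on receive and read once on transmit (the `touches_per_byte` parameter, an assumption for illustration), and it compares the result with the theoretical peak of a single 64-bit DDR4-3200 channel. The line rates other than 10 Gbps are illustrative choices, not figures from this report.

```python
def packet_buffer_bandwidth_gbs(line_rate_gbps, touches_per_byte=2.0):
    """Memory bandwidth (GB/s) needed to buffer traffic at a given line rate.

    touches_per_byte is an assumed number of memory accesses per payload
    byte: one write on receive plus one read on transmit gives 2.0.
    """
    return line_rate_gbps / 8.0 * touches_per_byte


# Theoretical peak of one 64-bit DDR4-3200 channel: 3200 MT/s * 8 bytes.
DDR4_3200_CHANNEL_GBS = 3200e6 * 8 / 1e9

for rate in (10, 100, 400):
    need = packet_buffer_bandwidth_gbs(rate)
    share = need / DDR4_3200_CHANNEL_GBS
    print(f"{rate} Gbps -> {need:.1f} GB/s "
          f"({share:.2f} DDR4-3200 channels at theoretical peak)")
```

Real workloads touch memory more often than this (descriptor rings, flow tables, inspection state) and rarely achieve peak DRAM bandwidth with random access patterns, so the estimate is a lower bound.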

DDR5 memory, with its significantly higher data rates (4800 to 6400 MT/s in early generations, with a roadmap to 8400 MT/s), improved channel efficiency, and better power management, presents a compelling solution to these challenges. Market research indicates that the DDR5 memory market is expected to grow at a CAGR of 32% from 2021 to 2026, with network infrastructure representing one of the fastest-growing segments.

The demand for DDR5 in network computing is further amplified by the increasing complexity of network security requirements. As cyber threats become more sophisticated, network security appliances need to perform deeper and more complex packet inspection, requiring both higher computational power and memory bandwidth. DDR5's enhanced capabilities directly address these requirements, making it an essential component for next-generation security appliances.

DDR5 Technical Landscape and Integration Challenges

DDR5 memory technology represents a significant advancement in the evolution of DRAM, offering substantial improvements over its predecessor DDR4. In the context of scalable network computing projects, DDR5 introduces critical enhancements including higher bandwidth, increased capacity, and improved power efficiency. The current technical landscape shows DDR5 modules operating at base speeds of 4800 MT/s, with roadmaps extending to 8400 MT/s and beyond. Relative to DDR4's typical 3200 MT/s, that is a 1.5x increase in data rate at base speed and more than 2.6x at the top of the current roadmap.
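The data rates quoted here translate into theoretical peak bandwidth as transfers per second multiplied by bus width. The sketch below assumes a 64-bit data bus per DIMM (ECC bits excluded) and uses a hypothetical helper name; sustained bandwidth in practice is lower than these peak figures.

```python
def peak_bandwidth_gbs(data_rate_mts, bus_width_bits=64):
    """Theoretical peak bandwidth in GB/s for one DIMM's data bus."""
    return data_rate_mts * 1e6 * (bus_width_bits / 8) / 1e9


BASELINE_GBS = peak_bandwidth_gbs(3200)  # DDR4-3200 reference point

for name, rate in [("DDR4-3200", 3200), ("DDR5-4800", 4800),
                   ("DDR5-6400", 6400), ("DDR5-8400", 8400)]:
    bw = peak_bandwidth_gbs(rate)
    print(f"{name}: {bw:.1f} GB/s peak, {bw / BASELINE_GBS:.2f}x DDR4-3200")
```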

The architecture of DDR5 incorporates several fundamental changes that benefit network computing applications. Each DIMM is split into two independent 32-bit subchannels (40-bit with ECC) in place of DDR4's single 64-bit channel, which doubles the number of independently addressable channels without adding DIMM slots. This design particularly benefits high-throughput network applications that process many data streams concurrently.
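One way to see why the split into subchannels works is to compare bytes delivered per burst. The sketch below uses the commonly cited parameters (DDR4: one 64-bit channel with burst length 8; DDR5: two 32-bit subchannels with burst length 16); the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChannelConfig:
    name: str
    channels_per_dimm: int
    data_bits_per_channel: int  # data bits only, ECC bits excluded
    burst_length: int

    @property
    def bytes_per_burst(self):
        """Bytes delivered by one burst on one (sub)channel."""
        return self.data_bits_per_channel * self.burst_length // 8


DDR4 = ChannelConfig("DDR4", channels_per_dimm=1,
                     data_bits_per_channel=64, burst_length=8)
DDR5 = ChannelConfig("DDR5", channels_per_dimm=2,
                     data_bits_per_channel=32, burst_length=16)

for cfg in (DDR4, DDR5):
    print(f"{cfg.name}: {cfg.channels_per_dimm} channel(s) per DIMM, "
          f"{cfg.bytes_per_burst} bytes per burst")
```

Both configurations deliver 64 bytes per burst, the size of a typical CPU cache line, but a DDR5 DIMM can service two such accesses independently, which suits workloads with many small concurrent requests such as packet processing.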

Power management represents another significant advancement in DDR5, with operating voltage reduced from 1.2V to 1.1V. The integration of Power Management ICs (PMICs) directly onto the memory modules shifts voltage regulation away from the motherboard, enabling more precise power delivery and contributing to overall system efficiency—a critical factor in large-scale network deployments where power consumption significantly impacts operational costs.

Despite these advantages, integrating DDR5 into scalable network computing environments presents several technical challenges. Thermal management becomes increasingly complex as higher operating frequencies generate more heat, requiring advanced cooling solutions for high-density server environments. Signal integrity issues also emerge at higher speeds, necessitating more sophisticated PCB designs and potentially increasing manufacturing complexity and costs.

Compatibility concerns represent another integration hurdle, as DDR5 adoption requires comprehensive platform updates including new processors, chipsets, and motherboards designed specifically for the technology. This creates significant transition costs for organizations with established network infrastructure based on previous memory technologies.

The cost premium of DDR5 modules—currently 30-40% higher than equivalent DDR4 modules—presents an additional barrier to widespread adoption in cost-sensitive network computing projects. This premium is expected to decrease as manufacturing volumes increase, but remains a consideration in current deployment planning.

Firmware and software optimization challenges also exist, as memory controllers and operating systems require updates to fully leverage DDR5's architectural advantages. Network applications must be optimized to benefit from the increased bandwidth and modified channel architecture to justify the transition costs.

Current DDR5 Implementation Strategies for Scalable Networks

01 DDR5 memory architecture and design

DDR5 memory introduces advanced architectural improvements over previous generations, featuring higher data rates, improved power efficiency, and enhanced signal integrity. These designs include optimized channel architecture, improved command/address bus configurations, and specialized circuit designs that enable higher bandwidth while maintaining reliability. The architecture supports higher density memory modules with improved thermal management capabilities.

- DDR5 memory power management systems: Power management innovations in DDR5 memory include on-module voltage regulation, dynamic voltage and frequency scaling, and advanced power states. These technologies enable more efficient operation, reduced power consumption, and better thermal performance. The power management systems allow for independent control of different memory channels and ranks, optimizing energy usage based on workload demands while maintaining performance requirements.

- DDR5 memory interface and signal integrity solutions: DDR5 memory interfaces incorporate advanced signal integrity solutions to support higher data rates, including improved equalization techniques, enhanced termination schemes, and optimized routing methodologies. These interfaces feature decision feedback equalization, adaptive training algorithms, and enhanced clocking architectures to maintain signal quality at increased speeds. The designs address challenges related to crosstalk, jitter, and signal reflection at higher frequencies.

- DDR5 memory module cooling and thermal management: Thermal management solutions for DDR5 memory modules include advanced heat spreader designs, integrated thermal sensors, and specialized cooling mechanisms. These innovations help dissipate the increased heat generated by higher-speed operations and denser memory configurations. The cooling systems may incorporate novel materials, airflow optimization structures, and thermal interface materials to maintain optimal operating temperatures and prevent thermal throttling.

- DDR5 memory testing and validation methods: Testing and validation methodologies for DDR5 memory include advanced error detection and correction mechanisms, comprehensive stress testing procedures, and specialized diagnostic tools. These methods ensure memory reliability at higher speeds and help identify potential issues related to signal integrity, power delivery, and thermal performance. The validation processes incorporate machine learning algorithms for predictive failure analysis and automated test pattern generation to thoroughly evaluate memory performance under various operating conditions.
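The decision feedback equalization named in the interface item above can be illustrated with a one-tap toy model. The sketch assumes a channel in which each received sample carries 60% of the previous symbol as inter-symbol interference (an arbitrary illustrative value) and that the equalizer tap matches the channel exactly; real receivers use several taps whose values are found through training.

```python
def transmit(symbols, isi=0.6):
    """Toy channel: each sample is the symbol plus a fraction of the previous one."""
    received, prev = [], 0.0
    for s in symbols:
        received.append(s + isi * prev)
        prev = s
    return received


def dfe_detect(received, tap=0.6):
    """One-tap DFE: cancel the previous decision's echo, then slice to +1/-1."""
    decisions, equalized, prev_decision = [], [], 0.0
    for r in received:
        e = r - tap * prev_decision  # remove estimated inter-symbol interference
        d = 1.0 if e >= 0 else -1.0
        equalized.append(e)
        decisions.append(d)
        prev_decision = d
    return decisions, equalized


def worst_case_margin(slicer_inputs):
    """Smallest distance from the decision threshold (0), a proxy for eye opening."""
    return min(abs(v) for v in slicer_inputs)


symbols = [1.0, -1.0, -1.0, 1.0, 1.0, 1.0, -1.0, 1.0]
received = transmit(symbols)
decisions, equalized = dfe_detect(received)

print("margin without DFE:", round(worst_case_margin(received), 3))
print("margin with DFE:   ", round(worst_case_margin(equalized), 3))
print("decisions correct: ", decisions == symbols)
```

In this noiseless model the worst-case margin at the slicer grows from about 0.4 to about 1.0 once the feedback tap is applied, which is the sense in which DFE reopens the data eye at higher speeds.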

02 DDR5 power management solutions

Power management innovations in DDR5 memory include on-module voltage regulation, dynamic voltage scaling, and advanced power states. These technologies help reduce overall power consumption while supporting higher operating frequencies. The implementations include integrated power management integrated circuits (PMICs), intelligent power distribution networks, and adaptive voltage control mechanisms that optimize energy efficiency during various operational modes.

03 DDR5 memory controller implementations

Specialized memory controllers for DDR5 incorporate advanced features such as improved command scheduling, enhanced error correction capabilities, and optimized refresh management. These controllers are designed to handle the increased data rates and more complex timing parameters of DDR5 memory. They include innovations in interface design, buffering mechanisms, and protocol handling to maximize memory throughput and minimize latency.

04 DDR5 memory module physical designs

Physical design innovations for DDR5 memory modules include new form factors, enhanced cooling solutions, and improved signal routing techniques. These designs address the challenges of higher operating frequencies and increased power density. Features include optimized PCB layouts, advanced thermal management structures, and improved connector designs that maintain signal integrity while supporting higher data transfer rates.

05 DDR5 testing and validation methodologies

Specialized testing and validation methodologies for DDR5 memory include advanced signal integrity analysis, enhanced stress testing procedures, and comprehensive compatibility verification. These approaches ensure reliable operation at higher frequencies and under various environmental conditions. The methodologies incorporate automated testing systems, sophisticated measurement techniques, and simulation models that validate performance across a wide range of operating parameters.

Key DDR5 Manufacturers and Network Computing Vendors

The DDR5 memory technology in scalable network computing is currently in a growth phase, with the market expected to expand significantly as data centers upgrade infrastructure. The global market size for DDR5 in network computing is projected to reach several billion dollars by 2025, driven by increasing bandwidth demands and computational requirements. Technologically, DDR5 is maturing rapidly with key players demonstrating varying levels of implementation maturity. Micron, Samsung, and SK Hynix lead in memory manufacturing, while Intel, AMD, and NVIDIA are advancing platform integration. Huawei, IBM, and Qualcomm are developing specialized network computing applications. Server manufacturers like xFusion and Inspur are incorporating DDR5 into their product roadmaps, indicating broad industry adoption despite remaining challenges in power management and thermal performance.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed a comprehensive DDR5 implementation for their scalable network computing platforms, particularly within their CloudEngine data center switches and NetEngine routers. Their DDR5 solution delivers up to 6400 MT/s with proprietary Smart Memory Controller technology that dynamically allocates bandwidth based on network traffic patterns. Huawei's implementation features specialized buffer management optimized for network packet processing with reduced latency paths for critical network functions. Their DDR5 modules incorporate advanced power management with intelligent voltage regulation that can reduce memory subsystem power consumption by up to 25% compared to previous generations. Huawei has also developed proprietary Memory Channel Network (MCN) technology that creates direct memory-to-memory communication channels between network processing units, significantly reducing latency for distributed network computing workloads[1][7].

Strengths: Tightly integrated with Huawei's network equipment ecosystem; excellent power efficiency; innovative MCN technology for distributed processing. Weaknesses: Limited compatibility with non-Huawei platforms; potential supply chain concerns in some markets; premium pricing compared to commodity memory solutions.

Micron Technology, Inc.

Technical Solution: Micron has developed advanced DDR5 memory solutions specifically optimized for scalable network computing environments. Their DDR5 modules feature data rates up to 6400 MT/s, twice the DDR4-3200 data rate and reported as a 1.85x improvement in effective performance over DDR4, at a lower operating voltage (1.1V compared to DDR4's 1.2V). Micron's network-optimized DDR5 incorporates Decision Feedback Equalization (DFE) and specialized on-die termination to maintain signal integrity across high-speed network infrastructure. Their Server DIMM portfolio includes capacities up to 256GB per module with built-in Error Correction Code (ECC) and enhanced Reliability, Availability, and Serviceability (RAS) features critical for network computing applications. Micron has also implemented advanced thermal management solutions to address the increased power density of DDR5 in network equipment racks[1][3].

Strengths: Industry-leading power efficiency with 1.1V operation; superior signal integrity at high speeds; comprehensive RAS features for network reliability. Weaknesses: Higher initial implementation costs compared to DDR4 solutions; requires significant platform redesign for optimal performance; thermal management challenges in dense network deployments.

Critical DDR5 Innovations for Network Computing Applications

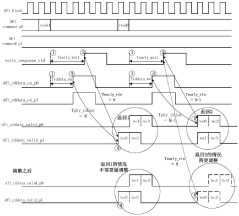

Data processing method, memory controller, processor and electronic device

Patent · Active · CN112631966B

Innovation

- The memory controller adjusts the phase of the returned original read data so that the timing from sending the early response signal to receiving the first data of the original read data is fixed each time, allowing the early response function and the read cyclic redundancy check (CRC) function to be used simultaneously.

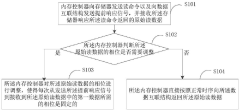

Mainboard, memory system and data transmission method

Patent · WO2023208039A1

Innovation

- Design a motherboard that includes a DDR slot, a data buffer, and a registered clock driver. Through bus protocol adaptation, commands issued on the CPU side under a second DDR protocol are converted into the corresponding commands of the first DDR protocol used by the DDR slot, connecting the DDR slot to the CPU slot. The data buffer and registered clock driver adapt and decouple the two bus protocols, preserving flexibility in the choice of DDR memory while allowing the CPU side to use a newer bus protocol version.

Power Efficiency and Thermal Management Considerations

DDR5 memory technology introduces significant advancements in power efficiency that are crucial for scalable network computing environments. The new voltage regulation architecture shifts from motherboard-based to DIMM-integrated power management, operating at a reduced 1.1V compared to DDR4's 1.2V. This architectural change delivers approximately 10-15% power savings while enabling more precise voltage control directly at the memory module level.
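A first-order estimate of the voltage contribution can be derived from the usual switching-power relation P ≈ C · V² · f. The sketch below holds capacitance and frequency constant, which is an idealization: DDR5 also runs at higher data rates and adds PMIC conversion losses, so measured savings differ from this figure.

```python
def dynamic_power_ratio(v_new, v_old):
    """Ratio of switching power at two supply voltages.

    Uses P ~ C * V^2 * f with capacitance C and frequency f held constant,
    which is an idealization rather than a measured DRAM power model.
    """
    return (v_new / v_old) ** 2


ratio = dynamic_power_ratio(1.1, 1.2)
print(f"Relative dynamic power: {ratio:.3f} "
      f"(about {100 * (1 - ratio):.0f}% lower)")
```

Under these assumptions the drop from 1.2V to 1.1V alone accounts for roughly a 16% reduction in switching power, the same order of magnitude as the 10-15% savings cited above.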

The improved power efficiency of DDR5 directly addresses thermal challenges in high-density server environments where network computing infrastructure typically operates. With Decision Feedback Equalization (DFE) and improved signal integrity, DDR5 maintains reliable performance at lower power states, reducing overall heat generation in data center racks. This translates to approximately 30W less heat output per server under full memory load compared to equivalent DDR4 configurations.

DDR5's enhanced thermal management capabilities include more sophisticated temperature sensors with greater accuracy and faster response times. These sensors enable dynamic frequency scaling based on thermal conditions, allowing memory controllers to proactively adjust performance parameters before thermal throttling becomes necessary. On-die ECC corrects single-bit errors inside the DRAM before data leaves the chip, which can reduce how often system-level error handling is invoked, although it does not replace channel-level ECC.
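A sensor-driven policy of this kind is often built around hysteresis, so that the memory does not oscillate between modes when the temperature hovers near a limit. The sketch below is a minimal illustration; the 85°C and 80°C thresholds and the function names are assumptions for the example, not values taken from the DDR5 specification or any vendor's firmware.

```python
def next_throttle_state(temp_c, throttled, high_c=85.0, low_c=80.0):
    """Return True if memory should run throttled after this sensor sample.

    high_c and low_c are hypothetical thresholds: throttle at or above
    high_c, release at or below low_c, otherwise keep the current state.
    """
    if temp_c >= high_c:
        return True
    if temp_c <= low_c:
        return False
    return throttled  # inside the hysteresis band: no change


def simulate(samples):
    """Apply the policy to a sequence of temperature samples."""
    state, history = False, []
    for t in samples:
        state = next_throttle_state(t, state)
        history.append(state)
    return history


readings = [70, 84, 86, 83, 81, 79, 84]
print(simulate(readings))
```

The gap between the two thresholds is the design choice that matters: a wider band trades slower recovery of full performance for fewer mode switches.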

For network computing applications specifically, DDR5's power efficiency manifests in improved performance-per-watt metrics. Tests in network packet processing workloads show up to 36% better throughput-per-watt when compared to DDR4 systems with equivalent capacity. This efficiency gain becomes particularly valuable in edge computing deployments where power constraints often limit computational capacity.

The thermal design considerations for DDR5 in network infrastructure must account for its higher operating frequencies. While more power efficient per operation, the increased data rates can concentrate thermal output. Advanced cooling solutions including directed airflow designs and thermally optimized DIMM layouts have emerged as best practices for DDR5 deployment in network equipment.

Refresh-related features in DDR5, such as same-bank refresh and fine-granularity refresh control (alongside the Refresh Management, or RFM, commands), provide additional thermal benefits by limiting refresh activity to the banks that need it and leaving other banks available for access. This capability is especially valuable for network computing workloads with variable memory access patterns, where certain memory regions may remain static while others experience high activity.

The long-term operational cost benefits of DDR5's improved power efficiency extend beyond direct electricity savings to include reduced cooling infrastructure requirements and potential for higher rack densities in network computing facilities. Calculations indicate a potential 8-12% reduction in total cooling costs for large-scale deployments, representing significant operational expenditure savings over infrastructure lifecycles.

Interoperability Standards and Ecosystem Development

The interoperability landscape for DDR5 in scalable network computing environments has evolved significantly, with industry stakeholders collaborating to establish comprehensive standards. The JEDEC Solid State Technology Association has taken the lead in developing the DDR5 specification, ensuring baseline compatibility across hardware implementations. These standards address critical aspects including signal integrity, power management protocols, and command structures that enable seamless integration across multi-vendor environments.

Beyond basic memory specifications, specialized interoperability frameworks have emerged specifically for network computing applications. The Open Compute Project (OCP) has developed reference architectures that incorporate DDR5 memory subsystems optimized for data center workloads, while the Gen-Z Consortium, whose specifications and assets have since been transferred to the CXL Consortium, established protocols for memory-semantic communications that can leverage DDR5's enhanced capabilities.

Ecosystem development around DDR5 for network computing has accelerated through collaborative industry initiatives. Memory manufacturers, server OEMs, and network equipment providers have formed technical working groups to address integration challenges unique to high-throughput, low-latency network applications. These collaborations have resulted in validation methodologies and compliance test suites that verify interoperability across the supply chain.

The DDR5 ecosystem has expanded to include specialized software tools that optimize memory utilization in network computing environments. Memory controllers with adaptive training algorithms can fine-tune timing parameters based on workload characteristics, while diagnostic utilities provide detailed visibility into memory subsystem performance. These software components complement hardware standards to create a cohesive ecosystem.

Certification programs have emerged as a critical component of the DDR5 interoperability landscape. Independent testing laboratories now offer validation services that verify compliance with both JEDEC standards and application-specific requirements for network computing. These certification processes have accelerated adoption by reducing integration risks and providing assurance of compatibility across diverse deployment scenarios.

Looking forward, the DDR5 ecosystem continues to evolve with emerging standards for disaggregated memory architectures. Initiatives like Compute Express Link (CXL) are developing protocols that enable memory pooling and sharing across compute nodes, leveraging DDR5's improved reliability features. These developments point toward a future where memory resources can be dynamically allocated across network computing infrastructure, optimizing utilization and enhancing scalability.
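The idea of dynamically allocating pooled memory across compute nodes can be sketched with a toy bookkeeping model. This is not the CXL protocol or any real API; the `MemoryPool` class, the node names, and the capacities are all hypothetical and only illustrate how a shared pool might grant, refuse, and reclaim capacity.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class MemoryPool:
    """Toy model of a shared memory pool (illustrative only, not a CXL API)."""

    capacity_gb: int
    allocations: Dict[str, int] = field(default_factory=dict)

    @property
    def free_gb(self) -> int:
        """Capacity not currently assigned to any node."""
        return self.capacity_gb - sum(self.allocations.values())

    def allocate(self, node: str, size_gb: int) -> bool:
        """Grant size_gb to node if the pool has room; return True on success."""
        if size_gb <= 0 or size_gb > self.free_gb:
            return False
        self.allocations[node] = self.allocations.get(node, 0) + size_gb
        return True

    def release(self, node: str) -> int:
        """Return all of a node's memory to the pool; return the amount freed."""
        return self.allocations.pop(node, 0)


pool = MemoryPool(capacity_gb=1024)
pool.allocate("node-a", 512)
pool.allocate("node-b", 384)
rejected = not pool.allocate("node-c", 256)  # only 128 GB free at this point
freed = pool.release("node-a")               # node-a returns its 512 GB
accepted = pool.allocate("node-c", 256)      # the request now fits
print(rejected, freed, accepted, pool.free_gb)
```

The point of the sketch is the utilization argument: capacity released by one node becomes immediately available to another, instead of sitting idle in a fixed per-server allotment.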

