How Spike-Timing-Dependent Plasticity (STDP) enables on-chip learning.

SEP 2, 2025

STDP Background and Learning Objectives

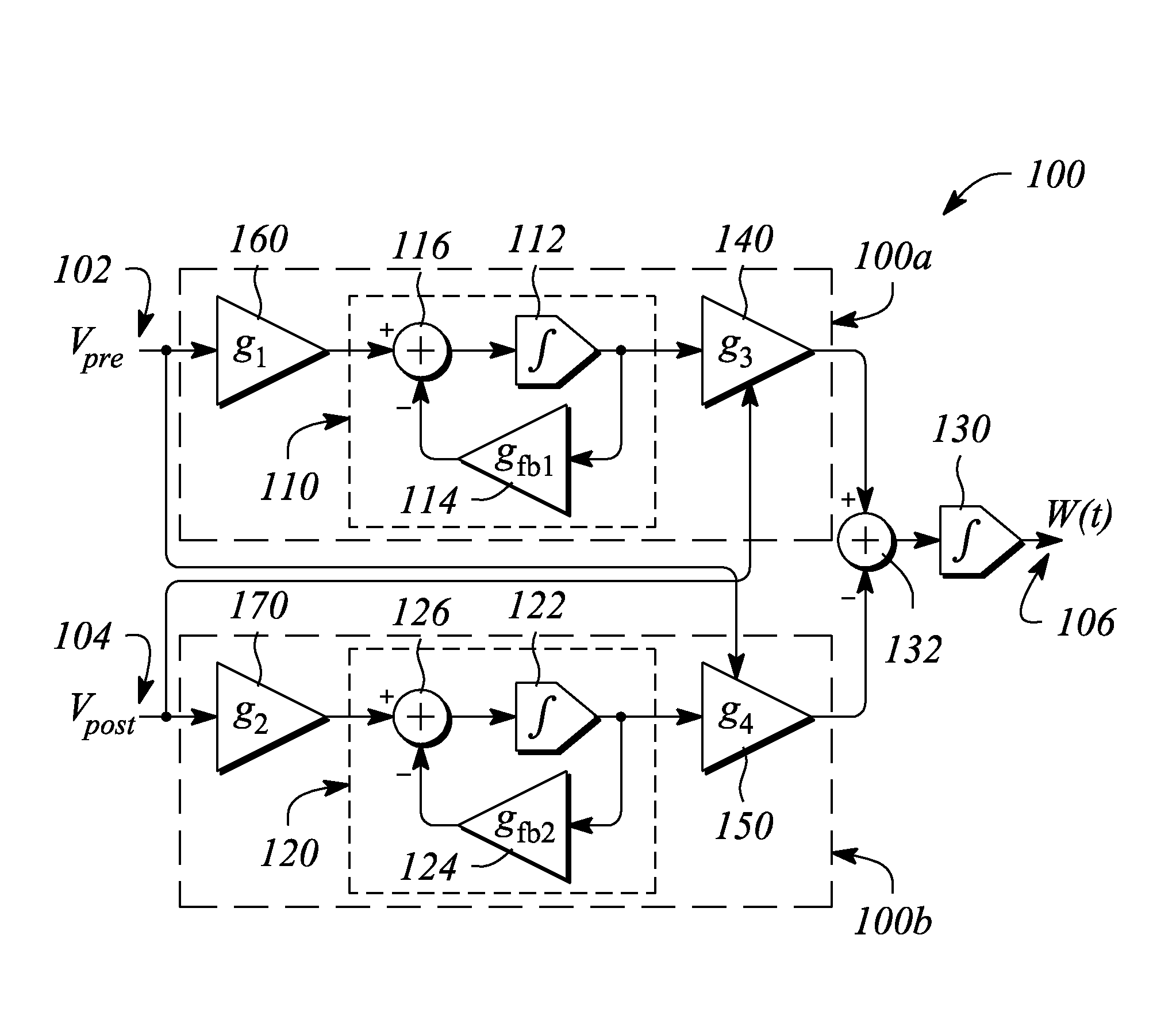

Spike-Timing-Dependent Plasticity (STDP) emerged as a fundamental neurobiological mechanism in the late 1990s, following the pioneering work of Henry Markram and Bert Sakmann. This discovery revolutionized our understanding of synaptic plasticity by demonstrating that the precise timing between pre- and post-synaptic neuronal activities determines whether synaptic connections strengthen or weaken. STDP represents a temporal extension of Hebbian learning, where "neurons that fire together, wire together," adding the critical dimension of causality through timing relationships.
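The timing dependence described above is often modeled with a pair-based exponential window. The following is a minimal sketch under that assumption; the amplitudes and time constants are hypothetical values chosen for illustration and do not come from this article.

```python
import math

# Hypothetical parameters, for illustration only.
A_PLUS = 0.01     # maximum potentiation per spike pair
A_MINUS = 0.012   # maximum depression per spike pair
TAU_PLUS = 20.0   # potentiation time constant, in ms
TAU_MINUS = 20.0  # depression time constant, in ms


def stdp_delta_w(t_pre: float, t_post: float) -> float:
    """Weight change for a single pre/post spike pair (times in ms)."""
    dt = t_post - t_pre
    if dt > 0:
        # Pre fired before post: causal pairing, strengthen the synapse.
        return A_PLUS * math.exp(-dt / TAU_PLUS)
    if dt < 0:
        # Post fired before pre: anti-causal pairing, weaken the synapse.
        return -A_MINUS * math.exp(dt / TAU_MINUS)
    return 0.0  # Simultaneous spikes: no change in this sketch.
```

In this model the sign of the update encodes causality and its magnitude shrinks as the two spikes move further apart in time.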

The evolution of STDP research has progressed from biological observations to computational models and now to hardware implementations. Initially documented in hippocampal and cortical neurons, STDP has been extensively studied across various neural systems, revealing its role in learning, memory formation, and sensory processing. This biological foundation has inspired the development of artificial neural networks that incorporate timing-dependent learning rules.

Recent technological advances in neuromorphic computing have created unprecedented opportunities to implement STDP directly in hardware. The convergence of nanotechnology, materials science, and integrated circuit design has enabled the development of electronic devices that can mimic STDP behavior at the physical level. These include memristors, phase-change materials, and specialized CMOS circuits designed to emulate synaptic plasticity.

The primary objective of implementing STDP for on-chip learning is to create computing systems that can learn autonomously from their environment without explicit programming. This represents a paradigm shift from traditional von Neumann architectures toward brain-inspired computing systems that can adapt, learn, and evolve based on input patterns. Such systems promise significant advantages in energy efficiency, real-time processing, and adaptability compared to conventional machine learning approaches.

Technical goals for STDP-based on-chip learning include developing scalable hardware implementations that maintain biological fidelity while achieving practical computational capabilities. This involves optimizing the balance between biological accuracy and engineering constraints such as power consumption, area efficiency, and integration with existing computing paradigms.

Another critical objective is to establish programming frameworks and algorithms that can effectively utilize STDP-based hardware for solving real-world problems. This includes developing training methodologies that leverage the temporal dynamics of spiking neural networks while maintaining compatibility with existing machine learning workflows.

Long-term research aims to create fully autonomous learning systems that can operate in dynamic environments with minimal human intervention. This vision encompasses edge computing devices that can continuously learn and adapt to changing conditions, potentially revolutionizing applications in robotics, sensor networks, and personalized computing devices.

Market Demand for On-Chip Learning Solutions

The market for on-chip learning solutions has experienced significant growth in recent years, driven by the increasing demand for edge computing capabilities and the limitations of traditional cloud-based AI systems. The global edge AI hardware market, which includes on-chip learning solutions, was valued at approximately $6.9 billion in 2021 and is projected to reach $38.9 billion by 2030, representing a compound annual growth rate (CAGR) of 21.3%.
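As a quick sanity check on the growth figure, the compound annual growth rate implied by the two market values can be recomputed. This is a small sketch that assumes nine compounding years between 2021 and 2030; the function name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Values in billions of USD, taken from the paragraph above.
implied = cagr(6.9, 38.9, 2030 - 2021)
print(f"Implied CAGR: {implied:.1%}")
```

The result comes out at roughly 21%, in line with the quoted rate up to rounding and the choice of base year.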

This remarkable growth is fueled by several key market demands. First, there is an increasing need for real-time processing and decision-making in applications such as autonomous vehicles, industrial automation, and smart healthcare devices. These applications cannot afford the latency associated with cloud-based processing, creating a strong demand for on-chip learning capabilities that can adapt and learn locally.

Privacy concerns and data security requirements are also driving the adoption of on-chip learning solutions. With regulations like GDPR and CCPA imposing strict rules on data handling, organizations are seeking ways to process sensitive data locally without transmitting it to external servers. On-chip learning technologies like STDP provide a viable solution by enabling devices to learn from data without sending it to the cloud.

Energy efficiency represents another critical market demand. Traditional AI systems consume substantial power, making them unsuitable for battery-powered edge devices. The neuromorphic computing approach enabled by STDP offers significantly lower power consumption compared to conventional deep learning implementations, with some neuromorphic chips demonstrating 1000x better energy efficiency for certain tasks.

The Internet of Things (IoT) ecosystem expansion is creating unprecedented demand for intelligent edge devices. Market research indicates that the number of connected IoT devices will exceed 25.4 billion by 2030, with a growing percentage requiring some form of on-device intelligence. This massive deployment necessitates efficient on-chip learning solutions that can operate with minimal power and adapt to changing conditions.

In the industrial sector, predictive maintenance applications are driving adoption of on-chip learning technologies. The ability to detect anomalies and predict equipment failures in real-time provides significant cost savings, with the predictive maintenance market expected to reach $23.5 billion by 2025. STDP-based neuromorphic systems are particularly well-suited for continuous monitoring and adaptation to changing equipment conditions.

The consumer electronics market is also showing strong interest in on-chip learning capabilities, particularly for smartphones, wearables, and smart home devices. These applications benefit from the ability to personalize user experiences locally while maintaining privacy and operating within tight power constraints.

STDP Technical Challenges and Limitations

Despite the promising potential of STDP for on-chip learning, several significant technical challenges and limitations currently impede its widespread implementation in neuromorphic computing systems. The primary challenge lies in the hardware implementation of precise timing mechanisms required for STDP. Conventional CMOS technology struggles to maintain the nanosecond-level timing precision needed for effective spike-timing detection, particularly when scaling to large neural networks with thousands or millions of synapses.

Energy consumption presents another critical limitation. While biological neural systems operate at remarkable energy efficiency, current electronic implementations of STDP consume substantially more power, particularly during the weight update process. This power inefficiency becomes increasingly problematic as network size scales up, potentially negating one of the key advantages of neuromorphic computing.

Device variability and reliability issues further complicate STDP implementation. Manufacturing variations in memristive devices, which are commonly used to implement STDP-based synapses, lead to inconsistent behavior across synapses within the same network. This variability can significantly impact learning performance and stability, requiring complex compensation mechanisms that add to system complexity.
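The effect of device-to-device variation can be illustrated with a toy simulation in which every synapse receives the same nominal updates but applies them with its own gain. This is a sketch under simplifying assumptions: the Gaussian gain model, the 20% spread, and all parameter names are hypothetical and not drawn from any specific device.

```python
import random
import statistics


def simulate_spread(n_synapses=1000, n_updates=50, nominal_step=0.01,
                    sigma=0.2, seed=0):
    """Apply identical potentiation events to devices with mismatched gains."""
    rng = random.Random(seed)
    # Each device gets a fixed gain; sigma is an assumed device-to-device spread.
    gains = [max(0.0, rng.gauss(1.0, sigma)) for _ in range(n_synapses)]
    weights = [0.0] * n_synapses
    for _ in range(n_updates):
        # Weights are clipped at 1.0 to mimic a bounded conductance range.
        weights = [min(1.0, w + g * nominal_step)
                   for w, g in zip(weights, gains)]
    return statistics.mean(weights), statistics.pstdev(weights)


ideal_mean, ideal_std = simulate_spread(sigma=0.0)  # identical devices
real_mean, real_std = simulate_spread(sigma=0.2)    # mismatched devices
print(f"ideal spread: {ideal_std:.4f}, mismatched spread: {real_std:.4f}")
```

With identical devices all weights end at the same value, while mismatched gains spread the learned weights even though every synapse saw the same spike history.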

The non-linear dynamics of STDP present mathematical modeling challenges that complicate system design and prediction. Current models often fail to capture the full complexity of STDP behavior under various operating conditions, making it difficult to design robust learning algorithms that can generalize across different applications and environments.

Scalability remains a persistent concern, as coordinating STDP learning across large networks introduces significant routing complexity and communication overhead. The fan-in and fan-out connections required for neurons in large networks create physical layout challenges that limit practical implementation sizes.

Integration with conventional computing architectures poses additional difficulties. STDP-based systems typically require specialized interfaces to communicate with traditional computing systems, creating compatibility issues that limit their practical deployment in existing technology ecosystems.

The limited maturity of design tools and methodologies specifically tailored for STDP-based systems further hinders development progress. Current electronic design automation tools lack robust support for neuromorphic circuit design, requiring significant manual optimization and characterization.

Long-term stability and retention of learned weights represent another significant challenge, particularly in memristive implementations where weight drift over time can degrade network performance. This necessitates periodic retraining or complex compensation mechanisms that add system overhead.

Current STDP Implementation Approaches

01 Hardware implementation of STDP for neuromorphic computing

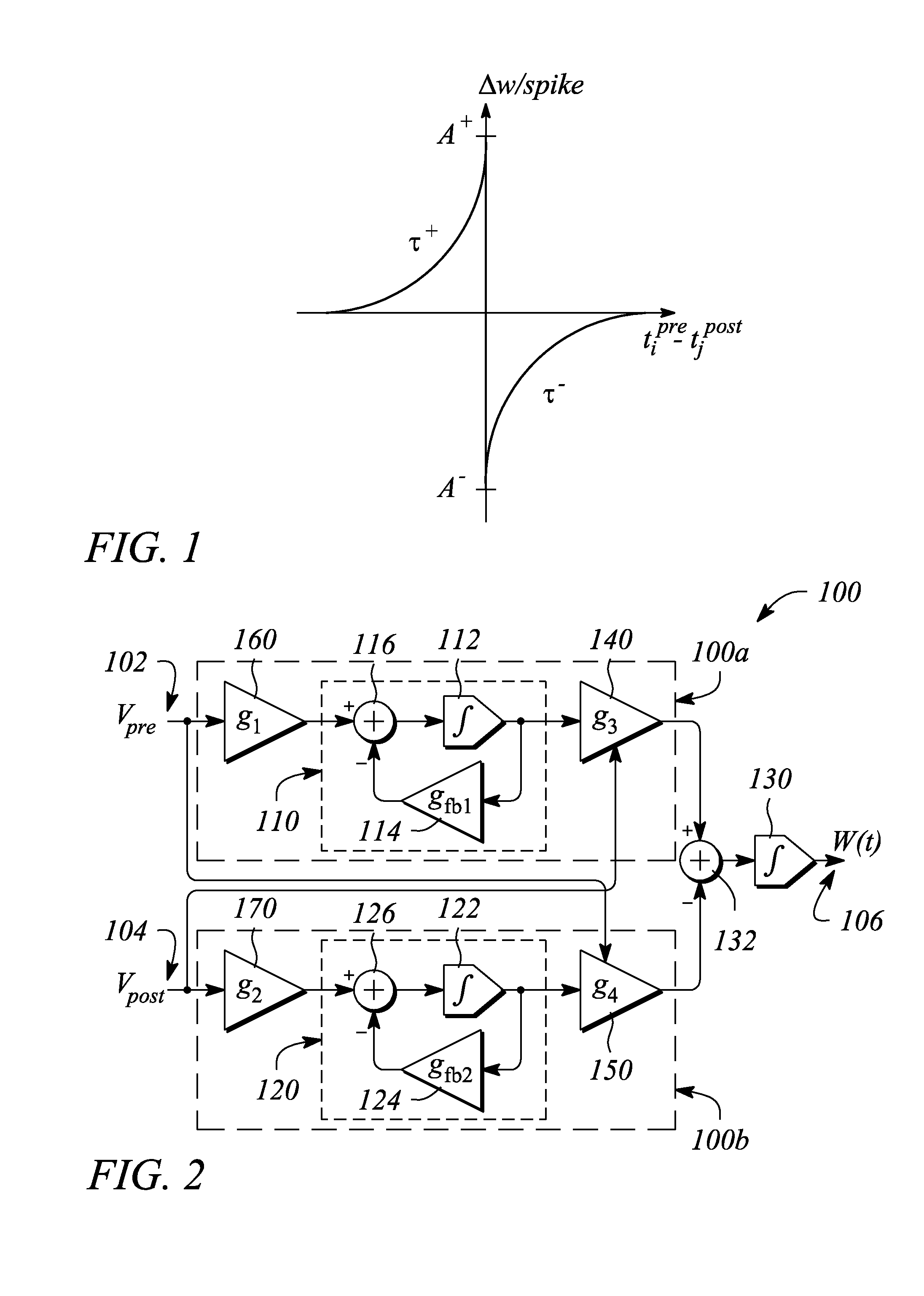

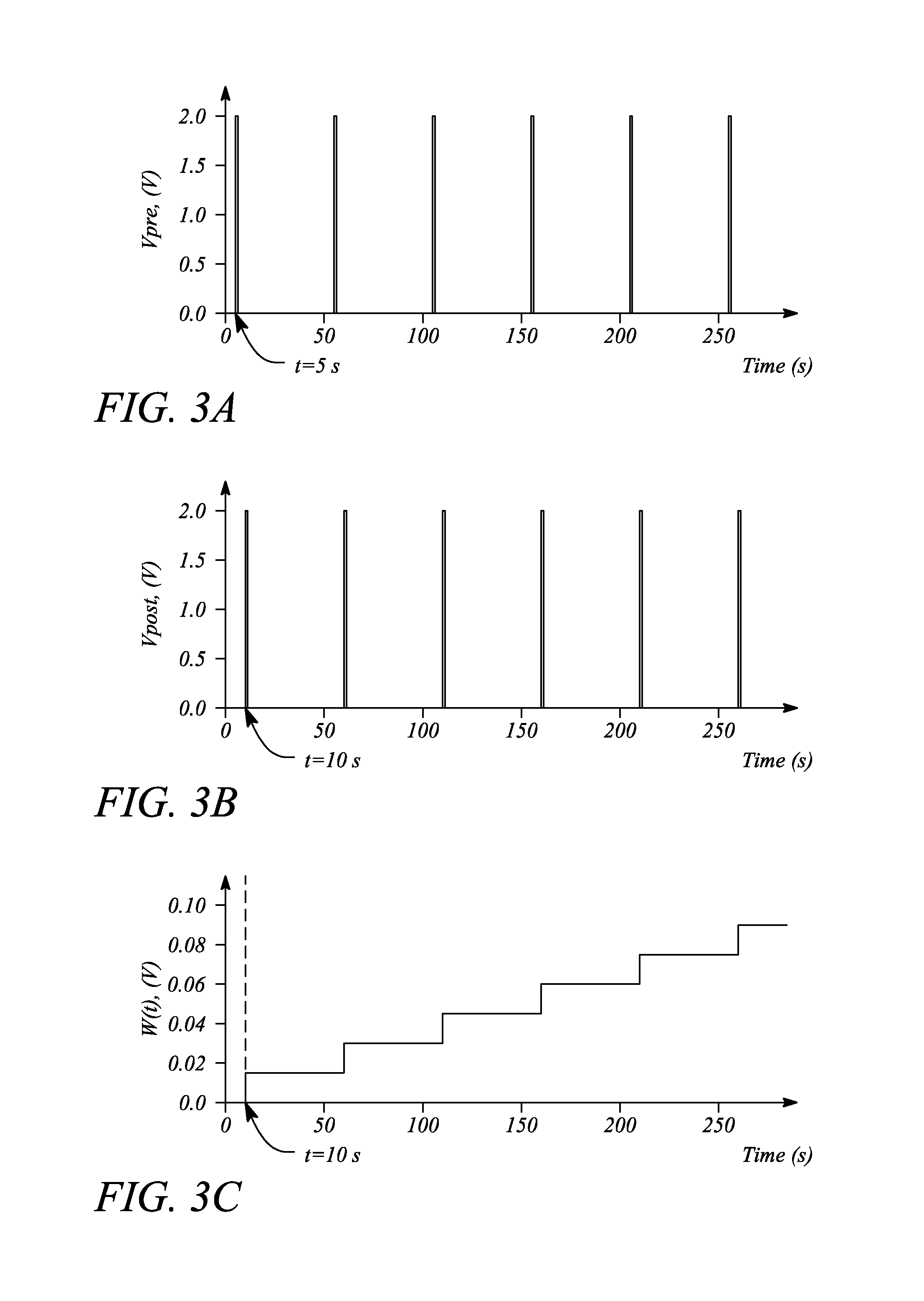

Spike-Timing-Dependent Plasticity (STDP) can be implemented in hardware for neuromorphic computing systems. These implementations typically involve specialized circuits that can modify synaptic weights based on the relative timing of pre- and post-synaptic spikes. Hardware implementations enable efficient on-chip learning by mimicking the brain's plasticity mechanisms in physical electronic components, allowing for real-time adaptation and learning in artificial neural networks.

- Hardware implementation of STDP for neuromorphic computing: Specialized circuits modify synaptic weights based on the relative timing of pre- and post-synaptic spikes, enabling efficient on-chip learning and real-time adaptation without requiring external processing.

- Memristive devices for STDP implementation: Memristive devices are particularly suitable for implementing STDP-based learning in hardware due to their ability to maintain state and change conductance based on applied voltage pulses. These devices can emulate biological synapses by adjusting their resistance based on the timing of input spikes, enabling efficient and compact implementation of STDP learning rules in neuromorphic chips. The non-volatile nature of memristors allows for persistent storage of learned patterns.

- STDP algorithms and learning rules for on-chip implementation: Various STDP algorithms and learning rules have been developed specifically for on-chip implementation. These algorithms modify the traditional STDP approach to make it more hardware-friendly while maintaining its learning capabilities. Simplified versions of STDP rules can be implemented with reduced computational complexity, making them suitable for resource-constrained neuromorphic hardware while still enabling effective learning.

- Spiking Neural Network (SNN) architectures with on-chip STDP: Specialized Spiking Neural Network architectures have been developed to incorporate on-chip STDP learning. These architectures are designed to efficiently process spike-based information and update synaptic weights according to STDP rules. The integration of STDP learning within SNN architectures enables autonomous learning capabilities in neuromorphic systems, allowing them to adapt to new inputs without explicit programming.

- Energy-efficient STDP implementations for edge computing: Energy-efficient implementations of STDP learning have been developed for edge computing applications. These implementations focus on minimizing power consumption while maintaining learning capabilities, making them suitable for deployment in battery-powered or energy-constrained devices. Techniques such as approximate computing, event-driven processing, and optimized circuit designs are used to reduce the energy footprint of STDP-based learning systems.
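The list above mentions simplified, hardware-friendly STDP rules. One widely used simplification keeps a decaying trace per neuron instead of storing individual spike times. The sketch below assumes that trace-based formulation for a single synapse; the class name and all parameter values are hypothetical.

```python
import math


class TraceSTDPSynapse:
    """One synapse updated online from pre- and post-synaptic traces."""

    def __init__(self, w=0.5, a_plus=0.01, a_minus=0.012, tau=20.0,
                 w_min=0.0, w_max=1.0):
        self.w = w
        self.a_plus, self.a_minus, self.tau = a_plus, a_minus, tau
        self.w_min, self.w_max = w_min, w_max
        self.pre_trace = 0.0   # decaying memory of recent pre spikes
        self.post_trace = 0.0  # decaying memory of recent post spikes

    def step(self, pre_spike: bool, post_spike: bool, dt: float = 1.0) -> float:
        """Advance by dt (ms), apply any spikes, and return the new weight."""
        decay = math.exp(-dt / self.tau)
        self.pre_trace *= decay
        self.post_trace *= decay
        dw = 0.0
        if pre_spike:
            # Pre spike arriving after recent post activity: depress.
            dw -= self.a_minus * self.post_trace
        if post_spike:
            # Post spike arriving after recent pre activity: potentiate.
            dw += self.a_plus * self.pre_trace
        # Traces are bumped only after the update so that simultaneous
        # spikes do not pair with themselves.
        if pre_spike:
            self.pre_trace += 1.0
        if post_spike:
            self.post_trace += 1.0
        self.w = min(self.w_max, max(self.w_min, self.w + dw))
        return self.w
```

Each synapse then needs only two state variables and a few multiply-add operations per time step, which is one reason this style of rule tends to map well onto constrained hardware.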

02 Memristive devices for STDP implementation

Memristive devices are particularly suitable for implementing STDP-based learning in hardware due to their ability to maintain state and change conductance based on applied voltage pulses. These devices can naturally emulate synaptic plasticity by changing their resistance values according to the timing of input signals. Memristive crossbar arrays enable efficient parallel processing for neural networks with on-chip learning capabilities, making them ideal for low-power neuromorphic computing applications.

03 STDP algorithms and computational models

Various algorithms and computational models have been developed to implement STDP-based learning in neuromorphic systems. These models define how synaptic weights should be updated based on spike timing relationships and often include mathematical formulations that can be efficiently implemented in hardware. Some approaches incorporate additional mechanisms such as homeostasis or neuromodulation to enhance learning capabilities and stability in on-chip neural networks.

04 Energy-efficient STDP implementations

Energy efficiency is a critical consideration in STDP on-chip learning implementations. Various techniques have been developed to minimize power consumption while maintaining learning capabilities, including sparse coding, event-driven processing, and optimized circuit designs. These approaches enable neuromorphic systems to perform continuous learning with minimal energy requirements, making them suitable for edge computing and battery-powered applications.

05 Applications of STDP on-chip learning

STDP-based on-chip learning has been applied to various domains including pattern recognition, anomaly detection, and sensory processing. These applications leverage the ability of STDP to extract temporal correlations from input data and adapt to changing environments without explicit programming. Neuromorphic systems with STDP capabilities can process sensory information in real-time while continuously learning from new inputs, making them suitable for autonomous systems and edge intelligence applications.

Key Players in Neuromorphic Hardware Development

The Spike-Timing-Dependent Plasticity (STDP) on-chip learning market is currently in its growth phase, with an estimated market size of $500-700 million and projected annual growth of 25-30%. The technology is advancing from experimental to commercial implementation, with varying maturity levels across key players. Qualcomm and IBM lead in commercial applications, while Samsung, Intel, and SK Hynix are making significant R&D investments. Academic institutions like MIT, Tsinghua University, and Peking University are driving fundamental research innovations. The competitive landscape is characterized by strategic partnerships between semiconductor companies and research institutions, with neuromorphic computing applications driving market expansion as AI hardware demands increase.

QUALCOMM, Inc.

Technical Solution: Qualcomm has developed the Zeroth Neuromorphic Processing Unit (NPU) that implements STDP for on-chip learning in mobile and edge devices. Their approach utilizes a specialized neural processing architecture that combines digital processing elements with analog-inspired learning circuits. Qualcomm's implementation features a distributed memory architecture where synaptic weights are stored close to computing elements, minimizing data movement and enabling efficient implementation of STDP learning rules. The Zeroth platform achieves approximately 50 times better energy efficiency compared to conventional mobile GPUs for neural network tasks while supporting online learning capabilities. A key innovation in Qualcomm's approach is their development of a sparse coding algorithm that works in conjunction with STDP to extract meaningful features from sensory data with minimal supervision[9][11]. Their system has been demonstrated in applications including visual recognition, audio processing, and sensor fusion tasks, with particular emphasis on enabling continuous learning in resource-constrained mobile environments without requiring cloud connectivity.

Strengths: Optimized for mobile/edge deployment with practical power constraints; integration potential with existing Snapdragon ecosystem; demonstrated in commercial-ready applications. Weaknesses: Less biological fidelity than some research-focused implementations; proprietary nature limits academic exploration; potential trade-offs between learning capability and hardware efficiency.

International Business Machines Corp.

Technical Solution: IBM has developed the TrueNorth neuromorphic chip architecture that implements STDP for on-chip learning. Their approach utilizes a dense crossbar array of non-volatile memory devices (such as phase-change memory or resistive RAM) to represent synaptic weights. The TrueNorth chip contains 1 million digital neurons and 256 million synapses organized into 4,096 neurosynaptic cores, enabling efficient implementation of STDP learning rules. IBM's implementation modulates synaptic weights based on the precise timing between pre- and post-synaptic spikes, strengthening connections when pre-synaptic spikes precede post-synaptic ones and weakening them in the reverse order. This mimics biological learning mechanisms while achieving power consumption of approximately 70 mW per chip during operation, representing orders of magnitude improvement over traditional computing architectures for certain neural network tasks[1][3]. IBM has further enhanced their approach by developing stochastic implementations of STDP that reduce hardware complexity while maintaining learning capabilities.

Strengths: Extremely low power consumption (70 mW) compared to traditional architectures; highly scalable design with proven fabrication at commercial scale; mature ecosystem with programming tools. Weaknesses: Digital implementation limits the biological fidelity of STDP compared to analog approaches; requires specialized programming paradigms different from conventional neural networks; limited precision in weight representation.

Core STDP Algorithms and Circuit Designs

Spike timing dependent plasticity apparatus, system and method

Patent (Active): US8959040B1

Innovation

- The implementation of a spike timing dependent plasticity (STDP) apparatus and neuromorphic synapse system using leaky integrators and gated signal paths to integrate and weight spike signals, replicating biological synaptic plasticity for energy-efficient signal processing.

Spike time windowing for implementing spike-timing dependent plasticity (STDP)

Patent (Inactive): US20140351186A1

Innovation

- A buffer method is employed at each artificial neuron to keep track of a predetermined number of recent spikes, discarding or ignoring older spikes, and processing STDP updates based on a window defined by the spike times of pre- and post-synaptic neurons, allowing for efficient synaptic plasticity updates without excessive spike processing.
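The general idea of keeping only a bounded number of recent spikes per neuron and pairing those that fall within a time window can be sketched as follows. This is a loose illustration of the concept rather than the patented method; the class, function, and parameter names are hypothetical.

```python
from collections import deque


class SpikeBuffer:
    """Keeps only the most recent `capacity` spike times of one neuron."""

    def __init__(self, capacity: int = 4):
        # A deque with maxlen silently discards the oldest entry when full.
        self.times = deque(maxlen=capacity)

    def record(self, t: float) -> None:
        self.times.append(t)


def pairs_in_window(pre: SpikeBuffer, post: SpikeBuffer, window: float):
    """Return (t_pre, t_post) pairs whose timing difference fits the window."""
    return [(t_pre, t_post)
            for t_pre in pre.times
            for t_post in post.times
            if abs(t_post - t_pre) <= window]


pre, post = SpikeBuffer(capacity=2), SpikeBuffer(capacity=2)
for t in (1.0, 10.0, 20.0):   # the spike at 1.0 is dropped from the buffer
    pre.record(t)
for t in (12.0, 50.0):
    post.record(t)
print(pairs_in_window(pre, post, window=5.0))
```

Bounding the buffer caps the number of spike pairs considered per update, so the cost of plasticity processing stays fixed regardless of firing rate.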

Energy Efficiency Analysis of STDP Implementations

Energy efficiency represents a critical factor in the practical implementation of Spike-Timing-Dependent Plasticity (STDP) for on-chip learning systems. Current STDP hardware implementations demonstrate varying degrees of energy consumption, with significant implications for their scalability and deployment in resource-constrained environments.

Traditional CMOS-based STDP implementations typically consume between 0.1-10 pJ per synaptic operation, depending on the specific circuit design and fabrication technology. More recent memristive implementations have demonstrated improved efficiency, with energy consumption as low as 0.01-0.1 pJ per synaptic update. This order-of-magnitude improvement stems from the inherent physics of resistive switching materials that can maintain state without continuous power supply.
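To put these per-operation figures in perspective, they can be converted into an average power budget for a given network. The sketch below assumes a hypothetical workload of one million synapses, each updated ten times per second; only the pJ ranges come from the paragraph above.

```python
PICOJOULE = 1e-12  # joules


def synaptic_power_watts(n_synapses: int, updates_per_synapse_hz: float,
                         energy_per_op_pj: float) -> float:
    """Average power spent on synaptic operations alone."""
    return n_synapses * updates_per_synapse_hz * energy_per_op_pj * PICOJOULE


N_SYNAPSES = 1_000_000  # assumed network size
UPDATE_RATE_HZ = 10.0   # assumed updates per synapse per second

for label, low_pj, high_pj in [("CMOS", 0.1, 10.0), ("memristive", 0.01, 0.1)]:
    low_w = synaptic_power_watts(N_SYNAPSES, UPDATE_RATE_HZ, low_pj)
    high_w = synaptic_power_watts(N_SYNAPSES, UPDATE_RATE_HZ, high_pj)
    print(f"{label}: {low_w * 1e6:.2f} to {high_w * 1e6:.2f} microwatts")
```

Under these assumptions the synaptic operations alone stay in the microwatt range for both technologies, which suggests that peripheral circuitry and communication can easily dominate the total budget.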

Comparative analysis across different STDP hardware platforms reveals that analog implementations generally offer superior energy efficiency compared to their digital counterparts. However, this advantage comes with trade-offs in precision and susceptibility to noise. Digital implementations, while more energy-intensive, provide better reliability and programming flexibility.

The energy distribution within STDP circuits shows that spike detection and timing comparison operations consume approximately 30-40% of the total energy budget, while the actual weight update mechanisms account for 50-60%. The remaining energy is utilized by peripheral circuits and control logic. This distribution highlights potential optimization targets for future designs.

Temperature sensitivity presents another critical consideration for energy efficiency. Most STDP implementations show increased power consumption at elevated temperatures, with some memristive technologies exhibiting up to 30% higher energy requirements at 85°C compared to room temperature operation. This thermal dependency necessitates careful thermal management strategies for deployed systems.

Scaling analysis indicates that energy efficiency improves sub-linearly with technology node advancement. Moving from 28nm to 14nm process nodes typically yields only a 30-40% reduction in energy consumption rather than the expected 50%, due to increased leakage currents and other second-order effects at smaller geometries.

Recent innovations in materials science and circuit design have demonstrated promising pathways toward ultra-low-power STDP implementations. Particularly noteworthy are ferroelectric-based synaptic elements and specialized low-power CMOS designs that leverage subthreshold operation, achieving energy efficiencies approaching biological synapses (estimated at 1-10 fJ per synaptic operation).

Hardware-Software Co-Design for STDP Systems

Implementing Spike-Timing-Dependent Plasticity (STDP) for on-chip learning requires tight integration between the hardware architecture and the software frameworks that drive it. Hardware-software co-design treats the two domains together rather than as separate concerns, so that decisions in one are made with the constraints of the other in view. This joint approach is essential for maximizing the efficiency and performance of neuromorphic systems that implement STDP.

The hardware components must be specifically designed to capture the temporal dynamics of STDP, including precise spike timing measurements and weight update mechanisms. Specialized circuits for detecting coincident spikes and implementing the exponential learning functions characteristic of STDP are fundamental building blocks. These circuits must operate with minimal power consumption while maintaining timing precision in the microsecond range.
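The exponential learning function mentioned above is commonly modeled as a pair-based rule with separate potentiation and depression windows. The following is a minimal software sketch of that rule, not a description of any particular circuit; the amplitudes, time constants, and weight bounds are illustrative assumptions.

```python
import math

# Illustrative parameters (assumptions, not taken from any specific chip).
A_PLUS = 0.01      # potentiation amplitude
A_MINUS = 0.012    # depression amplitude
TAU_PLUS = 20e-3   # potentiation time constant, seconds
TAU_MINUS = 20e-3  # depression time constant, seconds


def stdp_delta_w(t_pre: float, t_post: float) -> float:
    """Weight change for one pre/post spike pair (times in seconds)."""
    dt = t_post - t_pre
    if dt > 0:
        # Pre before post: causal pairing, so potentiate.
        return A_PLUS * math.exp(-dt / TAU_PLUS)
    if dt < 0:
        # Post before pre: anti-causal pairing, so depress.
        return -A_MINUS * math.exp(dt / TAU_MINUS)
    return 0.0


def apply_update(w: float, t_pre: float, t_post: float,
                 w_min: float = 0.0, w_max: float = 1.0) -> float:
    """Apply the pair update and clip to the representable weight range."""
    return min(w_max, max(w_min, w + stdp_delta_w(t_pre, t_post)))
```

The magnitude of the change falls off exponentially with the spike-time difference, which is why timing errors in the measurement circuit translate directly into errors in the weight update.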

Software frameworks complement the hardware by providing programming abstractions that shield developers from low-level hardware complexities. These frameworks typically include simulation environments, learning algorithms, and tools for mapping neural network architectures onto the physical substrate. The co-design process ensures that software can fully leverage hardware capabilities while accommodating its constraints.

Memory architecture represents a critical co-design challenge for STDP systems. The frequent weight updates required by STDP demand high-bandwidth, low-latency access to synaptic weight storage. Solutions include distributed memory architectures, where synaptic weights are stored close to their computational units, and specialized memory hierarchies optimized for the access patterns of STDP algorithms.

Power management strategies must be jointly developed across hardware and software layers. This includes dynamic voltage and frequency scaling techniques controlled by software policies that adapt to learning workloads, as well as event-driven computation models that activate circuits only when spikes occur, significantly reducing energy consumption during periods of neural inactivity.
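The event-driven model can be illustrated in software with a trace-based STDP synapse whose state is touched only when a spike arrives. This is a hedged sketch under assumed parameters: the class name `EventDrivenSynapse`, its fields, and the shared time constant are hypothetical, and real hardware would realize the decay in analog or fixed-point form.

```python
import math
from dataclasses import dataclass


@dataclass
class EventDrivenSynapse:
    """Hypothetical synapse model that does work only when a spike arrives."""
    w: float = 0.5
    a_plus: float = 0.01
    a_minus: float = 0.012
    tau: float = 20e-3      # shared trace time constant, seconds (assumption)
    pre_trace: float = 0.0
    post_trace: float = 0.0
    last_t: float = 0.0
    updates: int = 0        # counts how often the synapse was activated

    def _decay_to(self, t: float) -> None:
        # Lazily decay both traces over the silent interval since the last event.
        factor = math.exp(-(t - self.last_t) / self.tau)
        self.pre_trace *= factor
        self.post_trace *= factor
        self.last_t = t

    def on_pre_spike(self, t: float) -> None:
        self._decay_to(t)
        # A pre spike following recent post activity depresses the weight.
        self.w = max(0.0, self.w - self.a_minus * self.post_trace)
        self.pre_trace += 1.0
        self.updates += 1

    def on_post_spike(self, t: float) -> None:
        self._decay_to(t)
        # A post spike following recent pre activity potentiates the weight.
        self.w = min(1.0, self.w + self.a_plus * self.pre_trace)
        self.post_trace += 1.0
        self.updates += 1


syn = EventDrivenSynapse()
syn.on_pre_spike(0.010)
syn.on_post_spike(0.015)   # causal pair, 5 ms apart
syn.on_pre_spike(1.000)    # the long silent gap triggers no intermediate work
```

Because the traces are decayed lazily from the elapsed time, the silent interval between 15 ms and 1 s costs nothing here; the number of updates scales with spike count rather than with simulated time.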

Communication protocols between neuromorphic cores present another co-design opportunity. Hardware-efficient spike encoding schemes must be complemented by software routing algorithms that minimize congestion and optimize spike delivery timing. Address-event representation (AER) protocols are commonly employed but require careful hardware implementation and software management.
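As a rough illustration of how an address-event scheme pairs a compact hardware encoding with software-managed routing, the sketch below packs a spike's source address into a single word and looks up its destinations in a table. The field widths, the `SpikeEvent` type, and the `ROUTES` table are hypothetical; actual chips define their own event formats and routing fabrics.

```python
from typing import List, NamedTuple

# Hypothetical field widths; real chips define their own event formats.
CORE_BITS = 6
NEURON_BITS = 10
CORE_MASK = (1 << CORE_BITS) - 1
NEURON_MASK = (1 << NEURON_BITS) - 1


class SpikeEvent(NamedTuple):
    core: int
    neuron: int


def encode(event: SpikeEvent) -> int:
    """Pack a spike's source address into one 16-bit word."""
    if not (0 <= event.core <= CORE_MASK and 0 <= event.neuron <= NEURON_MASK):
        raise ValueError("address out of range for the assumed field widths")
    return (event.core << NEURON_BITS) | event.neuron


def decode(word: int) -> SpikeEvent:
    """Recover the source address from a packed word."""
    return SpikeEvent((word >> NEURON_BITS) & CORE_MASK, word & NEURON_MASK)


# Software-managed routing table (assumption): source core -> destination cores.
ROUTES = {0: [1, 2], 1: [2]}


def route(word: int) -> List[int]:
    """Look up which cores should receive this event."""
    return ROUTES.get(decode(word).core, [])


word = encode(SpikeEvent(core=1, neuron=5))
destinations = route(word)
```

In this sketch the event carries only an address, and spike time is implied by when the word arrives, so routing congestion and delivery latency directly affect the timing that STDP sees.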

Testing and validation frameworks must span both domains, with hardware-in-the-loop testing methodologies that verify correct STDP behavior across the hardware-software boundary. This includes specialized debugging tools that can visualize spike timing and weight evolution during on-chip learning processes.

The co-design approach ultimately enables more efficient implementation of STDP learning rules by ensuring that hardware optimizations do not constrain algorithm flexibility, while software implementations remain cognizant of hardware capabilities and limitations.
