Unsupervised Learning in Neuromorphic Systems for Pattern Recognition.

SEP 2, 2025 | 9 MIN READ

Neuromorphic Computing Evolution and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. The evolution of this field can be traced back to the 1980s when Carver Mead first introduced the concept of using analog VLSI systems to mimic neurobiological architectures. This pioneering work laid the foundation for hardware implementations that could emulate the brain's parallel processing capabilities and energy efficiency.

Over the past four decades, neuromorphic computing has progressed through several distinct phases. The initial phase focused primarily on developing basic circuit elements that could replicate neural behaviors. The second phase, spanning the 1990s to early 2000s, saw the emergence of more sophisticated neural models and small-scale neuromorphic systems. The current phase, beginning around 2010, has witnessed significant advancements in large-scale neuromorphic architectures, driven by breakthroughs in materials science, integrated circuit design, and neuroscience.

Key technological milestones include the development of silicon neurons, spike-based communication protocols, and plastic synapses capable of learning. Projects such as IBM's TrueNorth, Intel's Loihi, and the SpiNNaker platform have demonstrated the feasibility of building neuromorphic systems with millions of neurons and billions of synapses, approaching the complexity required for meaningful cognitive tasks.

The primary objectives of neuromorphic computing for pattern recognition applications center around achieving brain-like efficiency, adaptability, and robustness. Current von Neumann architectures face fundamental limitations in processing the massive datasets required for modern pattern recognition tasks, particularly in terms of energy consumption and speed. Neuromorphic systems aim to overcome these limitations by implementing massively parallel, event-driven computation with co-located memory and processing.

Specifically for unsupervised learning in pattern recognition, neuromorphic systems target the ability to extract meaningful features and identify patterns without explicit labeling, similar to how biological systems learn from their environment. This capability is crucial for applications in dynamic environments where pre-labeled data is scarce or impractical to obtain.

The field is now trending toward hybrid systems that combine traditional computing elements with neuromorphic components, enabling more flexible deployment across various application domains. Additionally, there is growing interest in incorporating more biologically realistic learning mechanisms, such as spike-timing-dependent plasticity (STDP) and homeostatic plasticity, to enhance the self-organizing capabilities of these systems for pattern recognition tasks.

Market Analysis for Unsupervised Pattern Recognition Systems

The market for unsupervised pattern recognition systems built on neuromorphic computing is growing quickly, driven by demand for efficient data processing across multiple industries. Current market projections put neuromorphic computing hardware at approximately 8 billion USD by 2028, with pattern recognition applications accounting for nearly 30% of that figure.

Healthcare represents one of the most promising sectors, where unsupervised neuromorphic systems are being deployed for medical image analysis, patient monitoring, and early disease detection. These applications benefit from the ability to identify anomalies without extensive labeled training data, addressing a critical bottleneck in healthcare AI implementation.

Manufacturing and industrial automation form another substantial market segment, where real-time anomaly detection in production lines can significantly reduce defects and maintenance costs. Companies implementing these systems report efficiency improvements of 15-25% in quality control processes, with corresponding reductions in false positive rates compared to traditional computer vision systems.

The automotive and transportation sectors are rapidly adopting neuromorphic pattern recognition for advanced driver assistance systems (ADAS) and autonomous vehicles. The ability to process visual information with lower power consumption makes these systems particularly valuable for edge computing applications where energy efficiency is paramount.

Security and surveillance applications represent a growing market segment, with neuromorphic systems enabling more effective crowd monitoring, unusual behavior detection, and threat identification without requiring extensive labeled examples of every possible scenario. This capability addresses privacy concerns while maintaining security effectiveness.

Consumer electronics manufacturers are increasingly incorporating neuromorphic pattern recognition capabilities into smartphones, wearables, and smart home devices. These implementations focus on personalization features, gesture recognition, and contextual awareness that can operate efficiently on device without cloud connectivity.

Market adoption faces several challenges, including integration complexities with existing systems, lack of standardized development frameworks, and limited understanding of neuromorphic computing principles among potential enterprise adopters. These barriers are gradually being addressed through improved development tools and educational initiatives from major technology providers.

Regional analysis shows North America leading in research and development investment, while Asia-Pacific demonstrates the fastest adoption growth rate, particularly in manufacturing applications. European markets show strong interest in neuromorphic solutions for privacy-preserving AI applications, aligning with regional regulatory frameworks.

Current Challenges in Neuromorphic Unsupervised Learning

Despite significant advancements in neuromorphic computing for pattern recognition, several critical challenges persist in the realm of unsupervised learning implementations. The fundamental obstacle remains the biological plausibility gap, where current algorithms struggle to faithfully replicate the complex, self-organizing learning mechanisms observed in biological neural systems while maintaining computational efficiency. This creates a persistent tension between biological accuracy and practical implementation.

Energy efficiency presents another significant hurdle. While neuromorphic hardware inherently offers power advantages over traditional computing architectures, unsupervised learning algorithms often require extensive training iterations and complex weight update mechanisms that can diminish these efficiency gains. The development of truly low-power unsupervised learning systems that maintain high recognition accuracy remains elusive.

Scalability issues further complicate progress in this field. Many current unsupervised learning approaches demonstrate promising results on simple datasets but fail to scale effectively to complex, real-world pattern recognition tasks. This limitation stems from both algorithmic constraints and hardware implementation challenges, particularly in managing the increased connectivity requirements of larger networks.

Temporal dynamics integration represents a particularly challenging frontier. Biological neural networks excel at processing temporal patterns through complex mechanisms like spike-timing-dependent plasticity (STDP), but implementing these dynamics efficiently in hardware while preserving their computational capabilities has proven difficult. Current implementations often sacrifice temporal processing capabilities for implementation simplicity.

The hardware-algorithm co-design challenge remains particularly acute. Unsupervised learning algorithms developed for conventional computing platforms often translate poorly to neuromorphic hardware, requiring significant modifications that can compromise their effectiveness. Conversely, algorithms optimized for specific neuromorphic architectures may lack generalizability across different hardware implementations.

Noise sensitivity and robustness issues further complicate practical applications. Neuromorphic systems must operate in noisy environments with variable inputs, yet many current unsupervised learning approaches demonstrate brittleness when confronted with data variations, noise, or previously unseen patterns. This limitation severely restricts their applicability in real-world pattern recognition scenarios.

Finally, evaluation metrics and benchmarking standards for neuromorphic unsupervised learning remain underdeveloped. Unlike supervised learning with clear accuracy metrics, assessing the quality of unsupervised feature learning lacks standardized approaches, making it difficult to compare different solutions objectively and track progress in the field.

Existing Unsupervised Learning Algorithms for Neuromorphic Systems

01 Spiking Neural Networks for Pattern Recognition

Neuromorphic systems implementing spiking neural networks (SNNs) can effectively perform pattern recognition tasks through unsupervised learning. These systems mimic the brain's neural architecture by using spike-timing-dependent plasticity (STDP) to adjust synaptic weights. This bio-inspired approach enables efficient processing of temporal patterns and can adapt to new input patterns without explicit supervision, making it suitable for real-time pattern recognition applications.

- Spike-based neuromorphic computing for pattern recognition: Neuromorphic systems that utilize spike-based neural networks for pattern recognition tasks without supervised training. These systems mimic the brain's neural processing by using spiking neurons that communicate through discrete events rather than continuous signals. The spike timing and frequency encode information, enabling efficient unsupervised learning for pattern recognition with reduced power consumption compared to traditional computing approaches.

- Self-organizing neuromorphic architectures: Neuromorphic systems that implement self-organizing principles for unsupervised pattern recognition. These architectures can autonomously adapt their structure and connectivity based on input data patterns without explicit training signals. They utilize mechanisms such as competitive learning, Hebbian plasticity, and homeostatic regulation to form representations of recurring patterns in the input data, enabling efficient clustering and classification of complex data.

- Hardware implementations of unsupervised neuromorphic systems: Specialized hardware designs for implementing unsupervised learning in neuromorphic systems. These include memristor-based circuits, analog VLSI implementations, and dedicated neuromorphic chips that enable efficient on-chip learning. The hardware architectures are optimized for parallel processing of neural computations with low power consumption, making them suitable for edge computing applications requiring real-time pattern recognition.

- Hybrid learning approaches in neuromorphic systems: Neuromorphic systems that combine unsupervised learning with other learning paradigms for enhanced pattern recognition. These hybrid approaches may integrate unsupervised pre-training with supervised fine-tuning, reinforcement learning, or transfer learning techniques. The combination leverages the strengths of different learning methods to improve pattern recognition accuracy while maintaining the efficiency and adaptability of neuromorphic computing.

- Application-specific neuromorphic pattern recognition: Neuromorphic systems with unsupervised learning tailored for specific pattern recognition applications. These include systems designed for computer vision, audio processing, sensor data analysis, and anomaly detection. The architectures are optimized for the particular characteristics of the application domain, with specialized preprocessing, feature extraction, and pattern encoding mechanisms that enhance the performance of unsupervised learning for the target application.
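The STDP weight adjustment described at the start of this subsection can be illustrated with a pair-based rule. This is a minimal sketch, not the rule used by any particular chip: the function names `stdp_dw` and `apply_stdp`, the exponential window, and every constant (amplitudes, time constants, weight bounds) are assumptions chosen for illustration.

```python
import numpy as np

def stdp_dw(dt, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Weight change for spike pairs separated by dt = t_post - t_pre (ms).

    The amplitudes and time constants are illustrative assumptions.
    """
    dt = np.asarray(dt, dtype=float)
    potentiation = a_plus * np.exp(-np.abs(dt) / tau_plus)    # pre fires before post
    depression = -a_minus * np.exp(-np.abs(dt) / tau_minus)   # post fires before pre
    return np.where(dt > 0, potentiation, np.where(dt < 0, depression, 0.0))

def apply_stdp(w, pre_times, post_times, w_min=0.0, w_max=1.0):
    """Sum the updates over all pre/post spike pairs and clip the weight."""
    post = np.asarray(post_times, dtype=float)
    pre = np.asarray(pre_times, dtype=float)
    dts = np.subtract.outer(post, pre)   # dts[i, j] = post[i] - pre[j]
    return float(np.clip(w + stdp_dw(dts).sum(), w_min, w_max))

# Pre spikes that lead post spikes strengthen the synapse; the reverse weakens it.
w_causal = apply_stdp(0.5, pre_times=[10, 30], post_times=[12, 32])
w_anticausal = apply_stdp(0.5, pre_times=[12, 32], post_times=[10, 30])
print(w_causal, w_anticausal)
```

Starting from the same weight of 0.5, the causal ordering ends above 0.5 and the anti-causal ordering ends below it. Hardware implementations usually replace the all-pairs sum with per-neuron spike traces, which this sketch does not attempt.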

02 Hardware Implementations of Neuromorphic Systems

Specialized hardware architectures are designed to implement neuromorphic systems for unsupervised learning and pattern recognition. These include memristor-based circuits, FPGA implementations, and custom ASIC designs that enable parallel processing and low power consumption. Such hardware implementations overcome the limitations of traditional von Neumann architectures by integrating memory and processing, allowing for more efficient execution of unsupervised learning algorithms for pattern recognition tasks.

03 Self-Organizing Maps and Competitive Learning

Self-organizing maps (SOMs) and competitive learning mechanisms are key components in neuromorphic systems for unsupervised pattern recognition. These approaches allow the system to automatically organize input data into meaningful clusters without labeled training examples. The competitive learning process enables neurons to specialize in recognizing specific patterns through lateral inhibition and neighborhood functions, creating topologically ordered representations of input data that preserve similarity relationships.

04 Online Learning and Adaptation Mechanisms

Neuromorphic systems with online learning capabilities can continuously adapt to new patterns in real-time without requiring system retraining. These systems incorporate plasticity mechanisms that allow for dynamic adjustment of neural parameters based on incoming data streams. This continuous adaptation enables the system to recognize evolving patterns in changing environments, making them suitable for applications requiring lifelong learning and adaptation to non-stationary data distributions.

05 Feature Extraction and Dimensionality Reduction

Neuromorphic systems employ unsupervised feature extraction and dimensionality reduction techniques to identify relevant patterns in high-dimensional data. These methods automatically discover latent representations that capture essential characteristics of the input data without human intervention. By reducing the dimensionality of the input space while preserving important information, these systems can efficiently recognize patterns in complex data such as images, audio, and sensor readings with minimal computational resources.
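One way to picture unsupervised dimensionality reduction with a local learning rule is Oja's rule, a Hebbian update whose weight vector tends toward the first principal component of zero-mean input. The source does not name this rule; it is swapped in here as an illustrative stand-in, and the function name `oja_first_component`, the learning rate, and the synthetic data are all assumptions.

```python
import numpy as np

def oja_first_component(x, lr=0.01, epochs=20, seed=0):
    """Learn one weight vector with Oja's rule: dw = lr * y * (x - y * w)."""
    rng = np.random.default_rng(seed)
    x = x - x.mean(axis=0)                 # the rule assumes zero-mean inputs
    w = rng.normal(size=x.shape[1])
    w /= np.linalg.norm(w)
    for _ in range(epochs):
        for sample in x[rng.permutation(len(x))]:
            y = w @ sample                     # post-synaptic activity
            w += lr * y * (sample - y * w)     # Hebbian growth with built-in decay
    return w

# Synthetic 2-D data whose variance lies mostly along the (1, 1) direction.
rng = np.random.default_rng(1)
latent = rng.normal(size=500)
data = np.column_stack([latent, latent]) + 0.1 * rng.normal(size=(500, 2))

w = oja_first_component(data)
features = (data - data.mean(axis=0)) @ w   # 2-D inputs reduced to one feature
print(w, features.shape)
```

The update uses only the neuron's own input, output, and weight, which is the kind of locality that maps well onto neuromorphic hardware. The learned vector should align (up to sign) with the dominant direction of the data.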

Leading Organizations in Neuromorphic Computing Research

Unsupervised learning in neuromorphic systems for pattern recognition is currently in a growth phase, with the market expanding rapidly as AI applications proliferate. The global market size is projected to reach significant scale by 2030, driven by increasing demand for energy-efficient computing solutions. Technologically, the field shows varying maturity levels across players. IBM leads with established neuromorphic architectures, while Google, NVIDIA, and DeepMind focus on algorithmic innovations. Research institutions like CNRS and CEA contribute fundamental breakthroughs, with universities (Fudan, Wuhan) expanding academic foundations. Asian tech giants (Samsung, Tencent) are investing heavily in hardware implementations. The competitive landscape reveals a healthy ecosystem of established tech corporations, specialized AI firms, and academic institutions collaborating to advance this emerging technology.

International Business Machines Corp.

Technical Solution: IBM has developed TrueNorth, a neuromorphic chip architecture specifically designed for unsupervised learning in pattern recognition tasks. The system employs a non-von Neumann computing approach with a million digital neurons and 256 million synapses organized into 4,096 neurosynaptic cores. IBM's neuromorphic system implements spike-timing-dependent plasticity (STDP) learning algorithms that enable unsupervised feature extraction and pattern recognition. The architecture allows for parallel processing of sensory data with extremely low power consumption (around 70mW) while achieving high performance in visual pattern recognition tasks. IBM has demonstrated this technology in applications ranging from real-time video analytics to acoustic pattern recognition, showing classification accuracy comparable to traditional deep learning approaches but with significantly reduced energy requirements. Recent advancements include the integration of resistive memory technologies to enable on-chip learning capabilities, further enhancing the system's ability to adapt to new patterns without explicit training.

Strengths: Extremely low power consumption (70mW) compared to GPU implementations; highly scalable architecture; real-time processing capabilities. Weaknesses: Limited software ecosystem compared to traditional deep learning frameworks; requires specialized programming approaches; challenges in implementing complex learning algorithms beyond STDP.

DeepMind Technologies Ltd.

Technical Solution: DeepMind has pioneered neuromorphic computing approaches for unsupervised learning through their Differentiable Neural Computer (DNC) architecture. This system combines neural networks with external memory components that mimic aspects of biological neural systems while maintaining end-to-end differentiability. For pattern recognition tasks, DeepMind has developed specialized unsupervised learning algorithms that leverage spike-based computation and local learning rules inspired by neuroscience. Their approach implements a form of contrastive Hebbian learning that allows the system to discover underlying patterns in unlabeled data without explicit supervision. The architecture incorporates attention mechanisms that enable the system to focus on relevant features during pattern recognition tasks, similar to biological visual processing. DeepMind has demonstrated this technology on complex pattern recognition benchmarks, achieving state-of-the-art results in unsupervised image classification and temporal pattern detection while maintaining biological plausibility in the learning mechanisms employed.

Strengths: Sophisticated integration of memory and neural processing; strong theoretical foundation in neuroscience principles; demonstrated success on complex benchmarks. Weaknesses: Higher computational requirements than pure neuromorphic hardware implementations; complex architecture may limit deployment in resource-constrained environments; requires significant expertise to implement and optimize.

Key Innovations in Spike-Based Pattern Recognition

Neuromorphic architecture for unsupervised pattern detection and feature learning

Patent (Active): US10650307B2

Innovation

- The proposed solution involves a spiking neural network architecture with multiple neuronal modules, each employing different learning mechanisms, and an arbitration mechanism to selectively modify behavior, enabling enhanced synaptic learning rules that prevent unwanted depression of common patterns and extract unique features.

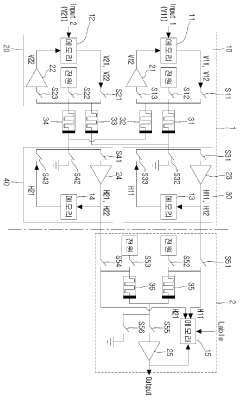

Neuromorphic system operating method therefor

Patent (Active): KR1020160005500A

Innovation

- A neuromorphic system incorporating unsupervised and supervised learning hardware, utilizing memristors and neurons to perform parallel computation, simulating neural networks for rapid learning and classification.

Energy Efficiency Considerations in Neuromorphic Implementation

Energy efficiency represents a critical consideration in neuromorphic computing systems designed for unsupervised learning and pattern recognition tasks. Traditional von Neumann architectures face significant energy constraints when implementing neural network operations due to the constant shuttling of data between memory and processing units. Neuromorphic systems address this fundamental limitation through co-located memory and computation, mimicking the brain's energy-efficient information processing paradigm.

Current neuromorphic implementations demonstrate remarkable energy efficiency advantages, with some systems achieving pattern recognition tasks at energy consumption levels 100-1000 times lower than conventional computing architectures. This efficiency stems from several key design principles: event-driven processing, sparse activation patterns, and analog computation. Event-driven processing ensures that energy is consumed only when information changes, eliminating the power drain of continuous clock-based operations.
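The effect of event-driven processing with sparse activity can be approximated by counting synaptic operations. The sketch below is only a proxy: it counts operations rather than measuring energy, and the function name `count_synaptic_ops`, the 2% firing probability, the network size, and the fan-out are hypothetical values picked for illustration.

```python
import numpy as np

def count_synaptic_ops(spikes, fan_out):
    """Compare synaptic operations for clocked and event-driven updates.

    spikes: boolean array of shape (timesteps, neurons).
    fan_out: number of outgoing synapses per neuron.
    """
    timesteps, neurons = spikes.shape
    clocked = timesteps * neurons * fan_out        # every synapse on every step
    event_driven = int(spikes.sum()) * fan_out     # only synapses of neurons that fired
    return clocked, event_driven

rng = np.random.default_rng(0)
spikes = rng.random((1000, 256)) < 0.02   # assumed 2% firing probability per step
clocked, event_driven = count_synaptic_ops(spikes, fan_out=128)
ratio = clocked / event_driven
print(f"clocked: {clocked}, event-driven: {event_driven}, ratio: {ratio:.1f}x")
```

Under these assumptions the operation count scales with the number of spikes, so the saving is roughly the inverse of the firing probability. Real energy figures also depend on routing, leakage, and memory access costs that this count ignores.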

The choice of hardware substrate significantly impacts energy consumption profiles. Analog implementations using memristive devices can perform multiply-accumulate operations at energy levels below 1 pJ per operation, compared to digital CMOS implementations requiring 10-100 pJ for equivalent computations. However, analog approaches face challenges with device variability and precision limitations that must be balanced against their energy benefits.
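To put the per-operation figures above in context, the arithmetic for a single layer can be worked through directly. The layer size (784 inputs, 400 neurons) is a hypothetical example, the helper `mac_energy_joules` is introduced only for this sketch, and 1 pJ is used as the upper bound of the "below 1 pJ" analog figure.

```python
PJ = 1e-12  # joules per picojoule

def mac_energy_joules(num_macs, pj_per_mac):
    """Total energy for num_macs multiply-accumulate operations."""
    return num_macs * pj_per_mac * PJ

num_macs = 784 * 400   # hypothetical fully connected layer, one pass

analog = mac_energy_joules(num_macs, 1.0)          # upper bound for the analog case
digital_low = mac_energy_joules(num_macs, 10.0)    # lower end of the digital range
digital_high = mac_energy_joules(num_macs, 100.0)  # upper end of the digital range

for name, joules in [("analog", analog), ("digital low", digital_low), ("digital high", digital_high)]:
    print(f"{name}: {joules * 1e6:.3f} microjoules")
```

For this layer the totals come to about 0.31 microjoules for the analog case against roughly 3.1 to 31 microjoules for the digital range. The calculation covers only the multiply-accumulate operations and leaves out data conversion, control, and communication overheads.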

Spike-based communication protocols further enhance energy efficiency by encoding information in the timing and frequency of discrete events rather than continuous signal values. This approach reduces data movement and leverages the inherent sparsity of natural data patterns. Research indicates that optimized spike encoding schemes can reduce energy requirements by up to 80% compared to rate-based encoding methods.
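The difference between frequency-based and timing-based encoding can be sketched by counting the spikes each scheme emits for the same input. This is an illustrative comparison, not a reproduction of the 80% figure: the function names, the Poisson-style rate code, the time-to-first-spike latency code, and the assumption that inputs lie in [0, 1] are all choices made for this example.

```python
import numpy as np

def rate_encode(values, timesteps, rng):
    """Rate code: on each step, a neuron spikes with probability equal to its value."""
    values = np.asarray(values, dtype=float)
    return rng.random((timesteps, values.size)) < values

def latency_encode(values, timesteps):
    """Time-to-first-spike code: larger values spike earlier, at most once."""
    values = np.asarray(values, dtype=float)
    spikes = np.zeros((timesteps, values.size), dtype=bool)
    active = values > 0                      # zero-valued inputs stay silent
    times = np.round((1.0 - values[active]) * (timesteps - 1)).astype(int)
    spikes[times, np.flatnonzero(active)] = True
    return spikes

rng = np.random.default_rng(0)
values = rng.random(100)                     # assumed inputs in [0, 1)
rate = rate_encode(values, 100, rng)
latency = latency_encode(values, 100)
print("rate-coded spikes:", int(rate.sum()))
print("latency-coded spikes:", int(latency.sum()))
```

The latency code emits at most one spike per input, while the rate code emits a number of spikes proportional to the value and the window length. Spike count is only a rough proxy for communication energy, and timing-based codes trade that saving for greater sensitivity to timing jitter.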

Power management strategies specifically designed for neuromorphic systems present additional opportunities for efficiency gains. Dynamic voltage and frequency scaling techniques adapted to the asynchronous nature of neuromorphic computation can yield 30-50% energy savings during pattern recognition tasks with varying computational demands. Sleep modes for inactive neural circuits further reduce static power consumption during operation.

The scaling properties of neuromorphic architectures present promising energy efficiency trends. As system size increases, the energy per operation tends to decrease due to amortized overhead costs and more efficient utilization of shared resources. This favorable scaling characteristic makes neuromorphic approaches particularly attractive for large-scale pattern recognition applications where energy constraints would otherwise be prohibitive.

Hardware-Software Co-Design Approaches

The integration of hardware and software components in neuromorphic systems is critical for achieving efficient unsupervised learning in pattern recognition applications. Hardware-software co-design methodologies enable developers to optimize the hardware and the algorithms that run on it together, rather than one after the other, resulting in systems that more effectively mimic biological neural processes while maintaining energy efficiency and performance.

Current co-design approaches typically begin with algorithm-hardware mapping strategies, where unsupervised learning algorithms such as Spike-Timing-Dependent Plasticity (STDP) are specifically tailored to leverage the unique characteristics of neuromorphic hardware. This bidirectional optimization process ensures that learning algorithms can exploit the parallel processing capabilities and analog computation features of neuromorphic chips while accommodating hardware constraints.
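
As a reference point for what is being mapped to hardware, the following is a minimal sketch of the common pair-based form of STDP: a synapse is strengthened when the presynaptic spike precedes the postsynaptic spike and weakened otherwise, with an exponential dependence on the time difference. The amplitudes, time constants, weight bounds, and function names are assumed values for illustration; hardware implementations typically quantize or approximate this rule.

```python
import math


def stdp_delta(dt_ms, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Weight change for one spike pair, where dt_ms = t_post - t_pre."""
    if dt_ms > 0:
        # Pre fired before post: potentiation, decaying with the delay.
        return a_plus * math.exp(-dt_ms / tau_plus)
    if dt_ms < 0:
        # Post fired before pre: depression.
        return -a_minus * math.exp(dt_ms / tau_minus)
    return 0.0


def apply_stdp(weight, pre_spikes, post_spikes, w_min=0.0, w_max=1.0):
    """Sum the contributions of all spike pairs, then clip to the weight range."""
    for t_pre in pre_spikes:
        for t_post in post_spikes:
            weight += stdp_delta(t_post - t_pre)
    return min(w_max, max(w_min, weight))


# Spike times in milliseconds (illustrative values).
strengthened = apply_stdp(0.5, pre_spikes=[10.0], post_spikes=[15.0])
weakened = apply_stdp(0.5, pre_spikes=[15.0], post_spikes=[10.0])
print(strengthened > 0.5, weakened < 0.5)  # True True
```

The clipping step stands in for the bounded conductance range of a physical synapse, which is one of the hardware constraints the learning rule has to accommodate.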

Simulation frameworks play a crucial role in the co-design process, with tools like NEST, Brian, and Nengo providing environments where software implementations can be tested before hardware deployment. These frameworks allow researchers to evaluate the performance of unsupervised learning algorithms on virtual neuromorphic architectures, facilitating rapid prototyping and refinement of both hardware specifications and software implementations.

Memory hierarchy optimization represents another significant aspect of co-design approaches. Neuromorphic systems implementing unsupervised learning require efficient data movement between processing elements and memory components. Co-design strategies often involve developing custom memory architectures that support sparse, event-driven computation patterns typical in spiking neural networks, thereby reducing energy consumption associated with data transfer operations.
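
The benefit of a memory layout built around sparse, event-driven access can be sketched in software with an adjacency list indexed by the spiking neuron, so that a spike reads only its own outgoing synapses. The class `SparseSynapses` and the tiny four-neuron network are hypothetical and only illustrate the access pattern, not any particular chip's memory organization.

```python
from collections import defaultdict


class SparseSynapses:
    """Synapses stored per source neuron, so a spike reads only its fan-out."""

    def __init__(self):
        self.fan_out = defaultdict(list)  # pre -> [(post, weight), ...]

    def connect(self, pre, post, weight):
        self.fan_out[pre].append((post, weight))

    def deliver(self, spiking_neurons, potentials):
        """Add weights to target potentials; return the number of synapse reads."""
        reads = 0
        for pre in spiking_neurons:
            # .get avoids creating empty entries for neurons without synapses.
            for post, weight in self.fan_out.get(pre, ()):
                potentials[post] += weight
                reads += 1
        return reads


# Hypothetical 4-neuron network with three synapses.
synapses = SparseSynapses()
synapses.connect(0, 1, 0.5)
synapses.connect(0, 2, 0.25)
synapses.connect(3, 1, 0.5)

potentials = [0.0, 0.0, 0.0, 0.0]
reads = synapses.deliver([0], potentials)
print(reads, potentials)  # 2 [0.0, 0.5, 0.25, 0.0]
```

A dense 4x4 weight matrix would need at least a full row read for the same spike, and the gap widens as networks grow and connectivity stays sparse.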

Programming models specifically designed for neuromorphic hardware are an evolving area within co-design methodologies. Languages and frameworks such as PyNN, Intel's NxSDK for Loihi, and the SpiNNaker software tools provide abstractions that let developers express unsupervised learning algorithms in ways that map efficiently to neuromorphic hardware while preserving programmer productivity and algorithm flexibility.

Runtime adaptation mechanisms represent the most advanced co-design approaches, where hardware parameters and software algorithms dynamically adjust during operation based on input patterns and system performance. These adaptive systems can modify synaptic plasticity rules, neuron parameters, or network topologies in response to changing pattern recognition requirements, enabling more robust unsupervised learning in real-world applications.
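
A simple instance of adjusting neuron parameters at runtime is homeostatic threshold adaptation, where a neuron that fires more often than a target rate has its threshold raised and one that fires too rarely has it lowered. The sketch below is a generic proportional rule; the function name, gain, and floor value are assumptions for illustration rather than the mechanism of any specific platform.

```python
def adapt_threshold(threshold, observed_rate, target_rate,
                    gain=0.1, min_threshold=0.1):
    """Nudge the firing threshold toward the value that yields the target rate.

    Rates are in spikes per time step. The threshold never drops below
    min_threshold, so the neuron cannot become trivially excitable.
    """
    new_threshold = threshold + gain * (observed_rate - target_rate)
    return max(min_threshold, new_threshold)


# An overactive neuron becomes harder to fire, a silent one easier.
raised = adapt_threshold(1.0, observed_rate=0.3, target_rate=0.1)
lowered = adapt_threshold(1.0, observed_rate=0.0, target_rate=0.1)
print(raised > 1.0, lowered < 1.0)  # True True
```

In unsupervised settings this kind of rule is often paired with a plasticity rule such as STDP so that no single neuron comes to dominate the response to every input pattern.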

The future of hardware-software co-design for neuromorphic systems lies in automated design space exploration tools that can simultaneously optimize hardware configurations and software implementations. These tools will likely incorporate machine learning techniques to navigate the complex trade-offs between energy efficiency, recognition accuracy, and computational throughput in unsupervised learning systems.
