Software Frameworks for Programming Neuromorphic Hardware.

SEP 2, 2025 · 9 MIN READ

Neuromorphic Computing Evolution and Objectives

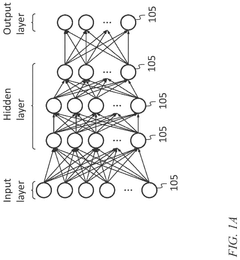

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. The evolution of this field began in the late 1980s with Carver Mead's pioneering work at Caltech, where he first proposed using analog VLSI circuits to mimic neurobiological architectures. This marked the inception of hardware systems designed to process information in ways similar to the human brain.

Throughout the 1990s and early 2000s, neuromorphic computing remained largely in academic research domains, with limited practical applications due to technological constraints. The field gained significant momentum around 2010 with the emergence of more sophisticated CMOS technologies and the increasing limitations of traditional von Neumann architectures in handling complex AI workloads.

The development trajectory accelerated dramatically with major research initiatives such as the European Human Brain Project, DARPA's SyNAPSE program, and IBM's TrueNorth chip in 2014, which featured one million neurons and 256 million synapses. These milestones demonstrated the feasibility of large-scale neuromorphic systems and catalyzed broader industry interest.

The primary objective of neuromorphic computing is to create computing systems that emulate the brain's efficiency in processing information, particularly for tasks involving pattern recognition, sensory processing, and adaptive learning. Unlike conventional computing architectures that separate memory and processing units, neuromorphic systems integrate these functions, potentially offering orders of magnitude improvements in energy efficiency for certain computational tasks.

Current technical goals in the field include developing hardware that can support spike-based neural networks with high energy efficiency, creating scalable architectures that maintain performance as system size increases, and designing systems capable of online learning and adaptation. These objectives align with the broader aim of enabling more sophisticated edge AI applications where power constraints are significant.

Software frameworks for programming neuromorphic hardware represent a critical bridge between these novel computing architectures and practical applications. The evolution of these frameworks has been shaped by the need to abstract the complexities of neuromorphic hardware while preserving its unique computational advantages. Early programming approaches relied heavily on hardware-specific languages, limiting accessibility and adoption.

The field now aims to develop more standardized, user-friendly programming interfaces that can support a variety of neuromorphic hardware platforms while maintaining compatibility with existing machine learning ecosystems. This includes creating tools that can efficiently map conventional deep learning models to spiking neural network implementations, as well as frameworks that enable direct programming of spike-based computation.
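As a rough illustration of what mapping a conventional model onto a spiking one involves, the sketch below turns a single normalized activation into a spike train whose firing rate approximates it. This is one simple deterministic rate-coding scheme chosen for illustration, not the method of any particular conversion tool; the function names `rate_encode` and `decode_rate` are hypothetical.

```python
def rate_encode(activation, num_steps):
    """Encode an activation in [0, 1] as a spike train of length num_steps.

    Assumption: a simple integrate-and-fire accumulator with threshold 1.0,
    so the fraction of steps with a spike approximates the activation.
    """
    if not 0.0 <= activation <= 1.0:
        raise ValueError("activation must be normalized to [0, 1]")
    spikes = []
    membrane = 0.0
    for _ in range(num_steps):
        membrane += activation          # integrate the constant input
        if membrane >= 1.0:             # fire and subtract the threshold
            spikes.append(1)
            membrane -= 1.0
        else:
            spikes.append(0)
    return spikes


def decode_rate(spikes):
    """Recover the approximate activation as the mean firing rate."""
    return sum(spikes) / len(spikes)


if __name__ == "__main__":
    train = rate_encode(0.25, 8)
    print(train, decode_rate(train))
```

Longer spike trains give a closer approximation of the original activation, which is one reason converted networks tend to trade latency for accuracy.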

Market Analysis for Brain-Inspired Computing Solutions

The brain-inspired computing market is experiencing significant growth, driven by the increasing demand for efficient processing of complex AI workloads. Current market valuations place neuromorphic computing at approximately $2.5 billion, with projections indicating a compound annual growth rate of 20-25% over the next five years. This growth trajectory is supported by substantial investments from both private and public sectors, with government initiatives like the EU's Human Brain Project and DARPA's SyNAPSE program allocating hundreds of millions in funding.

Market segmentation reveals diverse application areas for neuromorphic hardware and their programming frameworks. The largest current segment is research and academia, accounting for roughly 40% of the market. However, commercial applications are rapidly expanding, particularly in edge computing devices, autonomous systems, and data centers seeking energy-efficient AI processing solutions.

Healthcare represents a particularly promising vertical, with applications in medical imaging analysis, brain-computer interfaces, and drug discovery. The financial sector is also adopting neuromorphic solutions for real-time fraud detection and algorithmic trading. Additionally, the automotive industry is increasingly integrating these technologies for advanced driver assistance systems and autonomous driving capabilities.

Regional analysis shows North America leading with approximately 45% market share, followed by Europe (25%) and Asia-Pacific (20%). China's aggressive investments in neuromorphic technologies are expected to significantly alter this distribution within the next decade.

Key market drivers include the exponential growth in data volumes requiring real-time processing, increasing power consumption concerns in traditional computing architectures, and the push toward edge AI deployment. The inherent energy efficiency of neuromorphic systems—consuming up to 1000 times less power than conventional processors for certain workloads—presents a compelling value proposition.

Market barriers include the limited ecosystem of software frameworks for programming neuromorphic hardware, creating significant adoption challenges. The lack of standardization across different neuromorphic architectures necessitates specialized programming approaches, increasing development costs and limiting portability. Additionally, the shortage of developers proficient in both neuroscience principles and computer programming creates a talent gap that constrains market growth.

Customer demand analysis indicates strong interest in unified programming interfaces that abstract hardware complexities, comprehensive simulation environments for algorithm testing, and robust debugging tools. Organizations are increasingly seeking frameworks that enable seamless migration of existing AI workloads to neuromorphic platforms without requiring complete redevelopment.

Current Frameworks and Technical Barriers

The current landscape of software frameworks for neuromorphic hardware is characterized by a diverse ecosystem of tools that bridge the gap between traditional programming paradigms and the unique computational models of neuromorphic systems. Leading frameworks include IBM's Corelet Programming Environment for the TrueNorth Neurosynaptic System, which provides a comprehensive development environment for its neuromorphic chips, and Intel's open-source Lava framework for its Loihi platform, which offers Python-based neural network modeling capabilities. Nengo, developed by Applied Brain Research, can also target Loihi through the Nengo-Loihi backend.

The SpiNNaker software stack is another significant framework, designed specifically for the SpiNNaker neuromorphic architecture and enabling large-scale spiking neural network simulations. Additionally, PyNN has emerged as a simulator-independent Python API for describing spiking neural network models, facilitating code portability across different simulators and neuromorphic platforms.

Despite these advancements, several technical barriers persist in the neuromorphic software ecosystem. The most significant challenge is the lack of standardization across different hardware platforms. Each neuromorphic system typically requires its own programming approach, creating fragmentation in the development landscape and hindering code portability. This necessitates substantial rewriting and optimization when migrating applications between platforms.
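One way to picture what a common programming approach would buy is a single hardware-independent network description that several platform adapters can execute. The sketch below is a toy illustration under that assumption: `NeuronSpec`, `Backend`, `FloatSimulator`, and `FixedPointDevice` are hypothetical names and do not correspond to the API of PyNN, Lava, or any other real framework.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class NeuronSpec:
    """Hardware-independent description of one leaky integrate-and-fire neuron."""
    threshold: float  # membrane potential at which the neuron fires
    leak: float       # fraction of the potential retained each time step


class Backend(ABC):
    """Hypothetical common interface that every platform adapter implements."""

    @abstractmethod
    def run(self, spec, inputs):
        """Return a list of 0/1 spikes, one per input sample."""


class FloatSimulator(Backend):
    """Stand-in for a software simulator using floating-point state."""

    def run(self, spec, inputs):
        v, spikes = 0.0, []
        for x in inputs:
            v = spec.leak * v + x
            fired = v >= spec.threshold
            spikes.append(int(fired))
            if fired:
                v = 0.0
        return spikes


class FixedPointDevice(Backend):
    """Stand-in for hardware that only supports integer arithmetic."""

    def __init__(self, fractional_bits=8):
        self.one = 1 << fractional_bits  # fixed-point representation of 1.0

    def _to_fixed(self, value):
        return round(value * self.one)

    def run(self, spec, inputs):
        threshold = self._to_fixed(spec.threshold)
        leak = self._to_fixed(spec.leak)
        v, spikes = 0, []
        for x in inputs:
            v = (leak * v) // self.one + self._to_fixed(x)
            fired = v >= threshold
            spikes.append(int(fired))
            if fired:
                v = 0
        return spikes


def run_on_all(spec, inputs, backends):
    """Execute the same description on every backend, keyed by class name."""
    return {type(b).__name__: b.run(spec, inputs) for b in backends}


if __name__ == "__main__":
    spec = NeuronSpec(threshold=1.0, leak=0.5)
    print(run_on_all(spec, [0.75] * 4, [FloatSimulator(), FixedPointDevice()]))
```

With a threshold of 1.0, a leak of 0.5, and a constant input of 0.75, both stand-in backends produce the spike train `[0, 1, 0, 1]`. On real platforms, differences in numeric precision and timing mean results can diverge, which is part of why portability is hard.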

The abstraction gap presents another major obstacle. Current frameworks struggle to provide high-level abstractions that effectively hide hardware complexities while maintaining performance benefits. Developers must often choose between accessibility and efficiency, with no ideal middle ground that offers both.

Performance optimization remains challenging due to the unique characteristics of neuromorphic hardware. Traditional optimization techniques developed for von Neumann architectures are often ineffective, requiring specialized approaches for spike-based computation, event-driven processing, and distributed memory architectures.
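To make the contrast with clock-driven execution concrete, the sketch below computes the same synaptic input currents twice: once by visiting every synapse, and once by visiting only the synapses of neurons that actually spiked. It is a simplified software model with hypothetical function names, not a description of how any specific chip schedules work.

```python
def dense_update(weights, activity):
    """Clock-driven style: every synapse is visited on every time step.

    weights[pre][post] is the synaptic weight; activity[pre] is 0 or 1.
    Returns the input current per postsynaptic neuron and the operation count.
    """
    currents = [0.0] * len(weights[0])
    ops = 0
    for pre, row in enumerate(weights):
        for post, w in enumerate(row):
            currents[post] += w * activity[pre]
            ops += 1
    return currents, ops


def event_driven_update(weights, spike_events):
    """Event-driven style: only synapses of neurons that spiked are visited.

    spike_events is a list of presynaptic neuron indices that fired.
    """
    currents = [0.0] * len(weights[0])
    ops = 0
    for pre in spike_events:
        for post, w in enumerate(weights[pre]):
            currents[post] += w
            ops += 1
    return currents, ops


if __name__ == "__main__":
    weights = [[0.5, 0.0], [0.25, 1.0], [0.0, 0.5], [1.0, 1.0]]
    print(dense_update(weights, [0, 1, 0, 0]))   # all 8 synapses touched
    print(event_driven_update(weights, [1]))     # only 2 synapses touched
```

Both calls yield the same currents, but the work done by the event-driven version scales with the number of spikes rather than the number of synapses, so sparse activity is what makes it pay off.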

Debugging and profiling tools for neuromorphic systems lag significantly behind those available for conventional computing platforms. The event-driven nature of these systems makes traditional debugging approaches inadequate, while the lack of standardized profiling metrics complicates performance analysis and optimization.

Integration with mainstream machine learning frameworks represents another barrier. While frameworks like TensorFlow and PyTorch dominate the AI landscape, their integration with neuromorphic systems remains limited, creating a significant adoption hurdle for machine learning practitioners interested in neuromorphic computing.

The talent gap further exacerbates these challenges, with relatively few developers possessing expertise in both traditional software development and neuromorphic computing principles. This shortage of skilled professionals slows innovation and adoption across the industry.

Contemporary Programming Approaches for Neural Hardware

01 Neural Network Simulation Frameworks

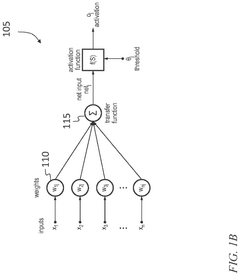

Software frameworks designed specifically for simulating neural networks on neuromorphic hardware. These frameworks provide tools for modeling, training, and deploying spiking neural networks that can efficiently run on specialized neuromorphic processors. They typically include libraries for implementing various neuron models, synaptic plasticity rules, and network architectures optimized for brain-inspired computing paradigms.

- Neural Network Simulation Frameworks: Software frameworks designed specifically for simulating neural networks on neuromorphic hardware. These frameworks provide tools for modeling, training, and deploying neural networks that can efficiently run on specialized neuromorphic processors. They typically include libraries and APIs that abstract the complexities of the underlying hardware architecture, allowing developers to focus on the neural network design rather than hardware-specific implementations.

- Hardware Abstraction Layers for Neuromorphic Computing: Software layers that provide a standardized interface between neuromorphic hardware and higher-level applications. These abstraction layers hide the complexities and differences between various neuromorphic hardware implementations, allowing software developers to write code that can run on different neuromorphic platforms without modification. They typically include device drivers, runtime environments, and APIs that manage resource allocation, scheduling, and communication with the neuromorphic hardware.

- Programming Models for Spiking Neural Networks: Specialized programming models and languages designed for implementing spiking neural networks on neuromorphic hardware. These frameworks provide constructs for defining neuron models, synaptic connections, and learning rules that are compatible with the event-driven, asynchronous nature of neuromorphic computing. They often include compilers that translate high-level neural network descriptions into efficient code that can run on specific neuromorphic hardware platforms.

- Development and Debugging Tools for Neuromorphic Systems: Integrated development environments and debugging tools specifically designed for neuromorphic software development. These tools provide capabilities for visualizing neural network activity, profiling performance, identifying bottlenecks, and debugging neuromorphic applications. They often include simulators that allow developers to test their neuromorphic software on conventional hardware before deploying to actual neuromorphic systems.

- Runtime Systems for Neuromorphic Hardware: Software systems that manage the execution of neuromorphic applications at runtime. These frameworks handle tasks such as resource allocation, scheduling, power management, and fault tolerance for neuromorphic hardware. They typically provide mechanisms for dynamic reconfiguration of neural networks, real-time monitoring of system performance, and optimization of resource utilization based on application requirements and hardware constraints.
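The neuron models that the frameworks in the list above let developers define can be as simple as a leaky integrate-and-fire (LIF) unit. The following is a minimal discrete-time sketch; the parameter names and default values are illustrative assumptions rather than those of any particular framework.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, v_reset=0.0):
    """Simulate one discrete-time leaky integrate-and-fire neuron.

    Each step the membrane potential decays by the factor `leak`, the input
    is added, and a spike is emitted (with a reset) if the threshold is reached.
    Returns a list of 0/1 spikes, one per input sample.
    """
    v = v_reset
    spikes = []
    for current in input_current:
        v = leak * v + current      # leak, then integrate the input
        if v >= threshold:          # fire and reset
            spikes.append(1)
            v = v_reset
        else:
            spikes.append(0)
    return spikes


if __name__ == "__main__":
    # A constant input of 0.6 with leak 0.5 crosses the threshold every third step.
    print(simulate_lif([0.6] * 6, leak=0.5))
    # A weaker input of 0.4 settles below the threshold and never fires.
    print(simulate_lif([0.4] * 6, leak=0.5))
```

The neuron produces output only when its accumulated input crosses the threshold, which is the behavior that event-driven hardware exploits.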

02 Hardware Abstraction Layers for Neuromorphic Systems

Software interfaces that abstract the complexities of neuromorphic hardware, allowing developers to write code without detailed knowledge of the underlying hardware architecture. These abstraction layers provide standardized APIs that enable software to interact with different neuromorphic platforms while maintaining compatibility. They facilitate portability across various neuromorphic hardware implementations and simplify the development process.

03 Programming Models for Event-Based Computing

Specialized programming models designed for event-driven, asynchronous computation paradigms used in neuromorphic systems. These frameworks support the development of applications that process information in a temporal, spike-based manner similar to biological neural systems. They provide constructs for handling event streams, managing timing dependencies, and implementing sparse, event-triggered computations that match the operational principles of neuromorphic hardware.

04 Optimization and Compilation Tools for Neuromorphic Deployment

Software tools that optimize neural network models for efficient execution on neuromorphic hardware. These frameworks include compilers that transform standard neural network descriptions into optimized representations suitable for neuromorphic architectures. They perform operations such as quantization, pruning, and mapping of network topologies to physical neuromorphic circuits while preserving functional equivalence and maximizing performance.

05 Development Environments and Debugging Tools

Integrated development environments and debugging tools specifically designed for neuromorphic software development. These frameworks provide visualization capabilities, performance analysis tools, and debugging interfaces tailored to the unique characteristics of neuromorphic computation. They help developers understand the behavior of their applications on neuromorphic hardware, identify bottlenecks, and optimize performance through specialized profiling and monitoring features.
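Of the compilation steps named under optimization and compilation tools, quantization is the easiest to show in isolation. The sketch below applies symmetric uniform quantization to a list of weights, assuming the target hardware stores synaptic weights as signed integers of a fixed bit width. The function names are hypothetical, and real toolchains typically add calibration and per-layer handling that is omitted here.

```python
def quantize_weights(weights, num_bits=8):
    """Map float weights to signed integers using one shared scale factor.

    Returns (quantized_weights, scale) so that weight ~= quantized * scale.
    """
    if num_bits < 2:
        raise ValueError("num_bits must be at least 2 for signed values")
    q_max = 2 ** (num_bits - 1) - 1              # e.g. 127 for 8 bits
    max_abs = max(abs(w) for w in weights)
    if max_abs == 0.0:
        return [0] * len(weights), 1.0           # all-zero weights need no scaling
    scale = max_abs / q_max
    quantized = [max(-q_max, min(q_max, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Reconstruct approximate float weights from their integer form."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    q, scale = quantize_weights([-1.0, 0.25, 0.0, 1.0], num_bits=8)
    print(q, scale)
    print(dequantize(q, scale))
```

The reconstruction error per weight is bounded by half the scale factor, so fewer bits mean a coarser grid and a larger potential accuracy loss.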

Leading Organizations in Neuromorphic Ecosystem

The neuromorphic hardware programming frameworks market is in an early growth phase, characterized by increasing adoption as AI applications expand. The market is projected to grow significantly as energy-efficient computing demands rise, though still relatively small compared to traditional computing markets. Technologically, the field shows varied maturity levels across players. Industry leaders like IBM and Intel have established comprehensive frameworks (TrueNorth, Loihi), while Samsung and Siemens are advancing commercial applications. Academic institutions (Tsinghua University, UESTC) contribute fundamental research, and specialized companies like Syntiant and Polyn focus on edge AI implementations. Government entities, particularly in the US and China, provide substantial funding, creating a competitive landscape balancing commercial deployment with ongoing research innovation.

International Business Machines Corp.

Technical Solution: IBM has developed the TrueNorth Neurosynaptic System, a comprehensive software framework for programming neuromorphic hardware. The framework includes a neural network description language, a compiler, and a runtime environment specifically designed for their TrueNorth neuromorphic chip. IBM's approach focuses on event-driven, parallel, scalable neural networks that mimic biological neural systems. Their programming model abstracts the underlying hardware complexity through Corelets, reusable software components that encapsulate networks of neurosynaptic cores. The Corelet Programming Environment provides tools for composition, testing, and deployment of applications. PyNN, a Python-based common programming interface for neuromorphic computing, is supported by other platforms such as SpiNNaker (Spiking Neural Network Architecture), which was developed at the University of Manchester rather than by IBM[1][3]. IBM's frameworks emphasize energy efficiency, with TrueNorth consuming only about 70 mW while simulating 1 million neurons and 256 million synapses.

Strengths: Mature ecosystem with comprehensive tools for development, testing, and deployment; strong integration with traditional computing systems; extensive research backing and industry partnerships. Weaknesses: Proprietary nature of some components limits broader adoption; complexity of programming model requires specialized knowledge; hardware-specific optimizations may limit portability across different neuromorphic platforms.

Intel Corp.

Technical Solution: Intel has developed Loihi, a neuromorphic research chip, accompanied by a comprehensive software framework called Lava. Lava is an open-source software framework designed to provide a common programming interface across neuromorphic hardware platforms. It enables developers to build applications for neuromorphic computing systems using Python-based APIs that abstract the complexities of the underlying hardware. The framework supports both rate-based and spiking neural networks, with a focus on event-driven computation. The Nengo-Loihi backend, developed by Applied Brain Research, provides a higher-level neural modeling interface that compiles to Loihi's architecture[2]. Intel's neuromorphic programming approach emphasizes the temporal dynamics of spiking neural networks, allowing developers to leverage the energy efficiency benefits of event-based computation. The framework supports online learning and adaptation, enabling systems that can continuously learn from their environment without explicit training phases.

Strengths: Open-source approach fosters community development and broader adoption; comprehensive Python-based APIs lower the barrier to entry; strong support for both research and commercial applications. Weaknesses: Still evolving with ongoing changes to APIs and functionality; optimization for Intel hardware may limit performance on other platforms; requires understanding of spiking neural network principles for effective use.

Key Innovations in Neuromorphic Programming Models

High-density neuromorphic computing element

Patent · Active · US12333422B2

Innovation

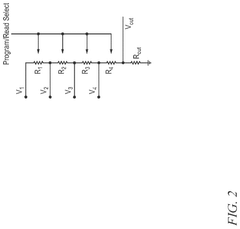

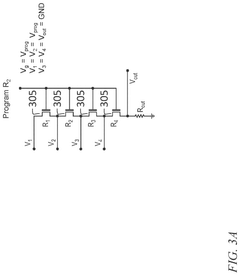

- A neuromorphic device is developed for analog computation of a linear combination of input signals, featuring a vertical stack of flash-like cells with a common control gate and individually contacted source-drain regions, enabling non-volatile programming and fast evaluation.

Neuromorphic computer

Patent · Active · US8275728B2

Innovation

- A neuromorphic computer architecture utilizing electronic devices with variable resistance circuits to represent synaptic connection strength and positive/negative output circuits to mimic excitatory and inhibitory responses, enabling high-density fabrication of brain-like computing functions.

Standardization Efforts in Neuromorphic Programming

The standardization of neuromorphic programming interfaces represents a critical evolution in the field, addressing the fragmentation that has historically limited broader adoption. Early neuromorphic systems operated with proprietary programming models, creating isolated ecosystems that hindered knowledge transfer and application development. Recognizing this challenge, several significant standardization initiatives have emerged in recent years to establish common frameworks and interfaces.

The Neuro-inspired Computational Elements (NICE) workshop series, initiated in 2013, has been instrumental in bringing together researchers and industry professionals to discuss standardization needs. The PyNN (Python Neural Networks) interface, which predates the workshop series and was first described in 2009, has emerged as one of the most successful standardization efforts to date. PyNN provides a common API for specifying spiking neural network models across software simulators and neuromorphic hardware platforms, including SpiNNaker and BrainScaleS.

Another notable initiative is the Intel Neuromorphic Research Community (INRC), through which Intel gives researchers access to its Loihi neuromorphic chip. Intel's NxSDK software development kit, distributed to INRC members, represents an important step toward creating accessible programming tools for neuromorphic hardware.

The European Human Brain Project has also contributed significantly to standardization efforts through the development of the Neuromorphic Computing Platform. This platform aims to provide unified access to different neuromorphic computing systems through standardized interfaces, enabling researchers to focus on algorithms rather than hardware-specific implementations.

Industry consortia are increasingly recognizing the importance of standardization. The Khronos Group, known for OpenGL and Vulkan standards, has recently shown interest in neuromorphic computing standards. Similarly, the IEEE has established working groups focused on neuromorphic computing standards, particularly through the IEEE Rebooting Computing initiative.

Open-source communities have also emerged as powerful forces for de facto standardization. Projects like Nengo, developed by Applied Brain Research, provide cross-platform neural simulation environments that work across various neuromorphic hardware systems. Similarly, the SNN Toolbox offers conversion tools between different neural network frameworks and neuromorphic platforms.

Despite these advances, significant challenges remain in standardization efforts. The rapid evolution of neuromorphic hardware architectures makes it difficult to establish lasting standards. Additionally, the tension between hardware-specific optimizations and general-purpose interfaces continues to present trade-offs between performance and portability that the community must navigate as the field matures.

Energy Efficiency Considerations for Neural Computing

Energy efficiency has emerged as a critical consideration in neuromorphic computing, driven by the exponential growth in computational demands and the inherent limitations of traditional von Neumann architectures. Neuromorphic hardware, designed to mimic the brain's neural structure, offers significant energy advantages compared to conventional computing systems, with potential energy savings of 2-3 orders of magnitude for specific neural network workloads.

The fundamental energy efficiency advantage of neuromorphic systems stems from their event-driven computation model. Unlike traditional clocked processors, which draw significant power even when little useful work is being done, neuromorphic hardware performs computation only when spikes or events arrive, substantially reducing dynamic power consumption during periods of sparse activity. This approach aligns with the brain's remarkable efficiency: it operates on approximately 20 watts while performing complex cognitive tasks.
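To make the event-driven argument concrete, the following minimal sketch counts synaptic updates for the same input under a clock-driven and an event-driven schedule. The network size, the 5% spike probability, and the function names are assumptions for illustration; the operation count is only a proxy for energy, not a hardware measurement.

```python
import random


def make_spikes(n_inputs, n_steps, rate, seed=0):
    """Random binary raster: spikes[t][i] is 1 if input i fires at step t."""
    rng = random.Random(seed)
    return [[1 if rng.random() < rate else 0 for _ in range(n_inputs)]
            for _ in range(n_steps)]


def clock_driven(spikes, weights):
    """Visit every synapse on every step, whether or not its input spiked."""
    n_out = len(weights[0])
    acc, ops = [0.0] * n_out, 0
    for step in spikes:
        for i, s in enumerate(step):
            for j in range(n_out):
                acc[j] += weights[i][j] * s
                ops += 1
    return acc, ops


def event_driven(spikes, weights):
    """Visit a synapse only when its presynaptic input actually spiked."""
    n_out = len(weights[0])
    acc, ops = [0.0] * n_out, 0
    for step in spikes:
        for i, s in enumerate(step):
            if not s:
                continue  # silent input: no synaptic work is scheduled
            for j in range(n_out):
                acc[j] += weights[i][j]
                ops += 1
    return acc, ops


rng = random.Random(1)
n_in, n_out, n_steps = 50, 10, 200
weights = [[rng.uniform(-1, 1) for _ in range(n_out)] for _ in range(n_in)]
spikes = make_spikes(n_in, n_steps, rate=0.05)  # assumed sparse activity

dense_acc, dense_ops = clock_driven(spikes, weights)
event_acc, event_ops = event_driven(spikes, weights)
print(f"clock-driven updates: {dense_ops}, event-driven updates: {event_ops}")
```

Both schedules accumulate the same input for each output neuron; the event-driven version simply skips the work for silent inputs, so its cost scales with spike count rather than with network size times simulation length.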

Current neuromorphic hardware implementations demonstrate varying degrees of energy efficiency. Intel's Loihi chip achieves approximately 23 picojoules per synaptic operation, while IBM's TrueNorth operates at around 26 picojoules per synaptic event. These figures represent significant improvements over GPU implementations of neural networks, which typically require hundreds of picojoules per operation.
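A quick back-of-the-envelope calculation shows what these per-operation figures imply at the system level. The sketch below assumes a hypothetical workload of one billion synaptic operations per second and uses 300 picojoules as a placeholder for the "hundreds of picojoules" GPU figure; neither number comes from a measurement.

```python
PJ_TO_J = 1e-12  # joules per picojoule

# Per-operation energies in picojoules. The first two are the figures quoted
# above; the GPU value is an assumed placeholder within "hundreds of picojoules".
ENERGY_PJ = {"Loihi": 23.0, "TrueNorth": 26.0, "GPU (assumed)": 300.0}


def average_power_watts(ops_per_second, pj_per_op):
    """Average power drawn by synaptic operations alone."""
    return ops_per_second * pj_per_op * PJ_TO_J


def energy_joules(total_ops, pj_per_op):
    """Total energy for a fixed number of synaptic operations."""
    return total_ops * pj_per_op * PJ_TO_J


ops_per_second = 1e9  # hypothetical workload
for name, pj in ENERGY_PJ.items():
    milliwatts = average_power_watts(ops_per_second, pj) * 1e3
    print(f"{name}: {milliwatts:.1f} mW")
```

At this assumed rate, 23 picojoules per operation corresponds to roughly 23 milliwatts for synaptic operations alone. Real chips also spend energy on neuron updates, routing, and leakage, so this is a lower bound on total power rather than a full estimate.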

Software frameworks for neuromorphic hardware must explicitly address energy optimization. Frameworks like Nengo, PyNN, and Brian expose model parameters, such as firing rates and connectivity density, that developers can tune to balance computational accuracy with power consumption. Techniques such as sparse coding, approximate computing, and precision scaling can then be applied to minimize energy usage while maintaining acceptable performance levels.
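Two of the techniques named above, precision scaling and sparsification, can be sketched without any framework. The code below is not the API of Nengo, PyNN, or Brian; the function names, the random weights, and the pruning threshold are assumptions chosen to show the accuracy side of the trade-off.

```python
import random


def quantize(weights, bits):
    """Symmetric uniform quantization to signed `bits`-bit levels and back."""
    if bits < 2:
        raise ValueError("bits must be at least 2 for a signed representation")
    levels = 2 ** (bits - 1) - 1
    scale = max(abs(w) for w in weights) / levels
    if scale == 0:
        return list(weights)  # all-zero weights need no quantization
    return [round(w / scale) * scale for w in weights]


def prune(weights, threshold):
    """Zero out weights whose magnitude is below `threshold`."""
    return [w if abs(w) >= threshold else 0.0 for w in weights]


def mean_abs_error(a, b):
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


rng = random.Random(0)
weights = [rng.uniform(-1.0, 1.0) for _ in range(1000)]

for bits in (8, 4, 2):
    err = mean_abs_error(weights, quantize(weights, bits))
    print(f"{bits}-bit weights: mean abs error {err:.4f}")

sparse = prune(weights, threshold=0.1)
kept = sum(w != 0.0 for w in sparse)
print(f"synapses kept after pruning: {kept} of {len(weights)}")
```

Fewer bits per weight and fewer non-zero synapses both reduce storage and data movement, at the cost of the approximation error printed above. How much error a given application tolerates is something the developer has to measure on the actual task.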

Memory access patterns represent another critical energy consideration in neural computing. The energy cost of data movement often exceeds that of computation itself, making data locality crucial. Advanced software frameworks implement memory-centric programming models that minimize data transfer between processing elements and memory, significantly reducing energy consumption.
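The effect of data locality can be illustrated with a simple cost model. The per-event energies below are placeholders picked only to reflect that remote memory access is far more expensive than local access or arithmetic; they are not measurements of any particular chip, and the function and class names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EnergyCosts:
    """Per-event energy in picojoules. All defaults are illustrative placeholders."""
    compute_pj: float = 1.0
    local_access_pj: float = 5.0
    remote_access_pj: float = 500.0


def layer_energy_pj(n_inputs, n_outputs, n_steps, weights_local, costs):
    """Return (compute, movement) energy for n_steps dense passes over a layer."""
    synapses = n_inputs * n_outputs
    compute = synapses * n_steps * costs.compute_pj
    if weights_local:
        # Fetch each weight once from remote memory, then reuse it locally.
        movement = (synapses * costs.remote_access_pj
                    + synapses * n_steps * costs.local_access_pj)
    else:
        # Re-fetch every weight from remote memory on every step.
        movement = synapses * n_steps * costs.remote_access_pj
    return compute, movement


costs = EnergyCosts()
compute, move_local = layer_energy_pj(100, 100, 1000, True, costs)
_, move_remote = layer_energy_pj(100, 100, 1000, False, costs)

print(f"compute:               {compute / 1e6:.1f} microjoules")
print(f"movement (local):      {move_local / 1e6:.1f} microjoules")
print(f"movement (re-fetched): {move_remote / 1e6:.1f} microjoules")
```

Under these assumed costs, data movement dominates compute in both cases, and keeping weights next to the processing element cuts the movement term by roughly two orders of magnitude. The exact ratio depends entirely on the placeholder values, so the model should be read as a way to reason about locality, not as a prediction.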

Looking forward, the integration of emerging non-volatile memory technologies with neuromorphic architectures promises further energy efficiency gains. Software frameworks are evolving to support these hybrid systems, enabling fine-grained power management across heterogeneous computing elements. Additionally, automated energy profiling tools embedded within these frameworks allow developers to identify and optimize energy hotspots in their neural computing applications.

