Analyze DDR5 Memory Speed in Multithreaded Applications

SEP 17, 2025 | 9 MIN READ

DDR5 Evolution and Performance Objectives

DDR5 memory technology represents a significant evolution in the DRAM landscape, building upon the foundations established by its predecessors while introducing substantial architectural improvements. Since the introduction of DDR4 in 2014, memory bandwidth requirements have grown exponentially, driven by increasingly complex applications, particularly in multithreaded environments. DDR5 emerged as a response to these escalating demands, with initial development beginning around 2017 and the first commercial products reaching markets in late 2021.

The evolutionary path of DDR5 has been characterized by several key technical advancements. The technology has doubled the burst length from BL8 to BL16 and split each DIMM into two independent 32-bit subchannels instead of a single 64-bit channel, enabling more efficient data handling. Additionally, DDR5 has moved power management from the motherboard to the DIMM itself through an on-module power management integrated circuit (PMIC), resulting in improved power efficiency and signal integrity.
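The pairing of a longer burst with a narrower channel can be checked with simple arithmetic. The sketch below assumes the quoted widths count data bits only (ECC bits excluded); the function name is illustrative.

```python
def bytes_per_burst(bus_width_bits: int, burst_length: int) -> int:
    """Bytes delivered by one burst: bus width times number of beats."""
    return bus_width_bits * burst_length // 8


# DDR4: one 64-bit channel with burst length 8.
ddr4_burst = bytes_per_burst(bus_width_bits=64, burst_length=8)

# DDR5: one 32-bit subchannel with burst length 16.
ddr5_burst = bytes_per_burst(bus_width_bits=32, burst_length=16)

print(f"DDR4 burst: {ddr4_burst} bytes, DDR5 subchannel burst: {ddr5_burst} bytes")
```

Under these assumptions both configurations deliver 64 bytes per burst, the cache-line size of most current CPUs. That is why each DDR5 subchannel can serve a full cache line on its own while the other subchannel handles a different request.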

From a performance perspective, DDR5 has established ambitious objectives that directly address the bottlenecks experienced in multithreaded applications. Initial DDR5 modules started at 4800 MT/s (megatransfers per second), a 50% higher data rate than typical DDR4-3200 modules. The technology roadmap projects scaling to 8400 MT/s and beyond, potentially reaching 10,000+ MT/s in future iterations.
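To relate these data rates to bandwidth, a rough calculation multiplies the transfer rate by the data width of the module. The sketch below assumes a 64-bit data path per DIMM (for DDR5, two 32-bit subchannels combined) and ignores refresh and protocol overhead, so the results are theoretical peaks rather than measured figures.

```python
def peak_bandwidth_gbs(data_rate_mts: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s for a given data rate in MT/s."""
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mts * 1e6 * bytes_per_transfer / 1e9


ddr4_3200 = peak_bandwidth_gbs(3200)
ddr5_4800 = peak_bandwidth_gbs(4800)
ddr5_8400 = peak_bandwidth_gbs(8400)

print(f"DDR4-3200: {ddr4_3200:.1f} GB/s per DIMM")
print(f"DDR5-4800: {ddr5_4800:.1f} GB/s per DIMM")
print(f"DDR5-8400: {ddr5_8400:.1f} GB/s per DIMM")
print(f"DDR5-4800 vs DDR4-3200: {ddr5_4800 / ddr4_3200 - 1:.0%} higher")
```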

Memory latency, a critical factor in multithreaded application performance, has seen targeted improvements through architectural changes. While absolute latency measured in nanoseconds has not decreased dramatically, the higher transfer rates effectively reduce the relative impact of latency on overall system performance. This is particularly beneficial for applications with high thread counts that can leverage increased bandwidth to mask latency effects.
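The relationship between latency and bandwidth can be made concrete with two small formulas. The sketch below converts CAS latency from clock cycles to nanoseconds and applies Little's law to estimate how many cache-line requests must be in flight to sustain a given bandwidth. The CL values and the 80 ns loaded latency are illustrative assumptions, not figures from this report.

```python
CACHE_LINE_BYTES = 64


def cas_latency_ns(cas_cycles: int, data_rate_mts: float) -> float:
    """CAS latency in ns; the memory clock runs at half the data rate."""
    clock_mhz = data_rate_mts / 2
    return cas_cycles / clock_mhz * 1000


def lines_in_flight(bandwidth_gbs: float, latency_ns: float) -> float:
    """Little's law: concurrency = throughput x latency (GB/s x ns = bytes)."""
    return bandwidth_gbs * latency_ns / CACHE_LINE_BYTES


# Assumed timings for illustration only: DDR4-3200 CL22 and DDR5-4800 CL40.
print(f"DDR4-3200 CL22: {cas_latency_ns(22, 3200):.2f} ns")
print(f"DDR5-4800 CL40: {cas_latency_ns(40, 4800):.2f} ns")

# Requests needed to keep 38.4 GB/s busy at an assumed 80 ns loaded latency.
print(f"Cache lines in flight: {lines_in_flight(38.4, 80):.0f}")
```

With these assumed timings the absolute CAS latency does not improve, yet a workload with enough threads to keep dozens of requests outstanding can still use the extra bandwidth. This is the sense in which high thread counts mask latency.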

The evolution of DDR5 also addresses data integrity concerns through enhanced error correction capabilities. The implementation of on-die ECC (Error Correction Code) represents a significant advancement over DDR4, providing better protection against soft errors that can be particularly problematic in multithreaded environments where data corruption can cascade across multiple execution threads.

Looking at performance objectives specifically for multithreaded applications, DDR5 aims to eliminate memory bandwidth as a constraining factor in highly parallel workloads. The technology targets improved scaling efficiency when adding more cores/threads, with particular emphasis on reducing memory contention in scenarios where multiple threads simultaneously access memory. This aligns with the industry trend toward higher core-count processors and increasingly parallel software architectures.

Market Demand for High-Speed Memory in Multithreaded Environments

The multithreaded application landscape has witnessed exponential growth in demand for high-speed memory solutions, particularly as parallel computing becomes the standard across various industries. Market research indicates that the global high-performance memory market reached $12.5 billion in 2022, with projections suggesting growth to $18.7 billion by 2026, representing a compound annual growth rate of 10.6%. This growth is primarily driven by the proliferation of data-intensive applications requiring concurrent processing capabilities.
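The quoted growth rate can be checked against the two market-size figures. The sketch below assumes four compounding years between 2022 and 2026; the function name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of USD, as quoted in the text above.
growth = cagr(start_value=12.5, end_value=18.7, years=4)
print(f"Implied CAGR 2022-2026: {growth:.1%}")
```

Under that assumption the implied rate is consistent with the 10.6% figure quoted above.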

Enterprise customers constitute the largest segment of this market, accounting for approximately 42% of total demand. These organizations increasingly deploy complex database systems, virtualization environments, and AI/ML workloads that benefit significantly from DDR5's enhanced parallelism capabilities. The financial services sector has emerged as a particularly aggressive adopter, with 68% of major institutions planning DDR5 upgrades within the next 18 months to support high-frequency trading and real-time analytics.

Cloud service providers represent another critical market segment, with their memory requirements growing at 15.3% annually. As these providers scale their infrastructure to support millions of concurrent users and processes, memory bandwidth has become a critical bottleneck. Market surveys indicate that 73% of cloud providers consider memory performance a top-three priority for infrastructure upgrades, specifically citing multithreaded application support as a key requirement.

The gaming and content creation industries have also emerged as significant drivers of high-speed memory demand. Modern game engines and rendering software increasingly utilize multithreaded architectures to handle complex physics simulations, AI behaviors, and graphical processing simultaneously. This market segment is expected to grow at 12.8% annually through 2027, with DDR5 adoption accelerating as next-generation gaming platforms and professional workstations enter the market.

Regional analysis reveals that North America currently leads DDR5 adoption for multithreaded applications (38% market share), followed by Asia-Pacific (34%) and Europe (22%). However, the Asia-Pacific region is projected to experience the fastest growth rate at 14.2% annually, driven by rapid data center expansion in China, India, and Singapore.

Customer surveys highlight that memory bandwidth requirements for multithreaded applications are doubling approximately every 30 months, a pace close to the roughly two-year doubling cadence associated with Moore's Law. This sustained growth creates significant market pressure for continued innovation in memory technologies. End-users consistently identify reduced thread contention, improved parallel data access, and lower latency as their primary requirements when evaluating high-speed memory solutions for multithreaded environments.

Current DDR5 Technology Limitations and Challenges

Despite the significant advancements in DDR5 memory technology, several critical limitations and challenges persist when optimizing for multithreaded applications. The current generation of DDR5 memory operates at data rates of up to 6400 MT/s, representing a substantial improvement over DDR4. However, real-world bandwidth in multithreaded environments often falls significantly short of the theoretical peak due to various architectural constraints.

Memory controller saturation emerges as a primary bottleneck when multiple threads simultaneously request memory access. Even with DDR5's dual-subchannel architecture and improved command rate capabilities, the memory controller struggles to efficiently schedule and prioritize competing requests from numerous threads, resulting in increased latency and reduced effective bandwidth.
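A first-order way to reason about saturation is a simple ceiling model: aggregate bandwidth grows with thread count until it reaches the fraction of peak bandwidth the controller can actually sustain. The sketch below is a toy model, not a measurement. The 80% efficiency factor and the 4 GB/s per-thread demand are hypothetical values chosen for illustration, and the model does not capture the latency increase that appears near saturation.

```python
import math


def aggregate_bandwidth_gbs(threads: int, per_thread_demand_gbs: float,
                            peak_gbs: float, efficiency: float = 0.8) -> float:
    """Delivered bandwidth: linear in thread count until the ceiling is hit."""
    ceiling = peak_gbs * efficiency
    return min(threads * per_thread_demand_gbs, ceiling)


def saturation_point(per_thread_demand_gbs: float, peak_gbs: float,
                     efficiency: float = 0.8) -> int:
    """Smallest thread count whose combined demand reaches the ceiling."""
    return math.ceil(peak_gbs * efficiency / per_thread_demand_gbs)


PEAK_GBS = 38.4  # theoretical peak of one DDR5-4800 DIMM (64-bit data path)

for n in (1, 2, 4, 8, 16):
    delivered = aggregate_bandwidth_gbs(n, 4.0, PEAK_GBS)
    print(f"{n:2d} threads -> {delivered:5.2f} GB/s total, "
          f"{delivered / n:4.2f} GB/s per thread")

print("Saturates at", saturation_point(4.0, PEAK_GBS), "threads")
```

Past the saturation point, each additional thread only reduces the share available to the others, which is the scaling plateau commonly seen in bandwidth-bound workloads.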

Thermal management presents another significant challenge for DDR5 in multithreaded workloads. The higher operating frequencies generate considerably more heat, particularly when sustaining maximum throughput across multiple cores. This thermal load can trigger throttling mechanisms that dynamically reduce memory performance to maintain safe operating temperatures, creating inconsistent performance profiles that are particularly problematic for time-sensitive multithreaded applications.

The increased power consumption of DDR5 modules—typically 20-30% higher than equivalent DDR4 configurations—introduces additional constraints for system designers. Power delivery network limitations can result in voltage droop during peak demand periods when multiple threads simultaneously access memory, potentially causing stability issues or forcing memory controllers to operate at reduced frequencies.

Architectural changes such as DDR5's move to smaller 32-bit subchannels (versus DDR4's single 64-bit channel per DIMM) improve parallelism but introduce new scheduling challenges. The memory controller must now manage twice as many independent channels, significantly increasing the complexity of optimizing access patterns for multithreaded workloads with diverse memory access characteristics.
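The scheduling problem becomes clearer when looking at how a physical address might be split into subchannel, bank group, and bank. The bit layout below is hypothetical and chosen only for illustration; real memory controllers use vendor-specific and often hashed mappings. It assumes 64-byte cache lines, 2 subchannels, 8 bank groups, and 4 banks per group (32 banks in total).

```python
from typing import NamedTuple


class DramLocation(NamedTuple):
    subchannel: int
    bank_group: int
    bank: int


def decode(addr: int) -> DramLocation:
    """Map a physical byte address to a DRAM location (hypothetical layout)."""
    line = addr >> 6                  # drop the 6-bit offset within a cache line
    subchannel = line & 0x1           # 1 bit: alternate lines across subchannels
    bank_group = (line >> 1) & 0x7    # 3 bits: 8 bank groups
    bank = (line >> 4) & 0x3          # 2 bits: 4 banks per group
    return DramLocation(subchannel, bank_group, bank)


# Consecutive cache lines alternate between the two subchannels.
for addr in range(0, 4 * 64, 64):
    print(hex(addr), decode(addr))
```

With an interleaving like this, a sequential stream from one thread spreads across both subchannels, while threads with large power-of-two strides can pile onto the same subchannel and bank. A scheduler or allocator that ignores the mapping cannot avoid that pattern.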

Software optimization lags behind hardware capabilities, with many applications and operating systems not fully leveraging DDR5's architectural advantages. Current memory allocation strategies often fail to account for DDR5's subchannel architecture, resulting in suboptimal data placement that exacerbates rather than mitigates thread contention issues.

Interoperability challenges between DDR5 memory and current CPU architectures create additional performance limitations. The full potential of DDR5 speed remains unrealized as memory controllers and cache hierarchies in many current-generation processors were not specifically designed to handle DDR5's unique traffic patterns in heavily threaded environments.

Existing DDR5 Implementation Strategies for Multithreaded Applications

01 DDR5 Memory Speed Enhancement Technologies

Various technologies have been developed to enhance DDR5 memory speed, including improved signal integrity, advanced clock synchronization, and optimized data transfer protocols. These technologies enable higher data rates and reduced latency in DDR5 memory systems, significantly improving overall memory performance compared to previous generations.

- DDR5 Memory Speed Enhancement Technologies: Various technologies have been developed to enhance DDR5 memory speed, including advanced clock synchronization methods, improved signal integrity techniques, and optimized memory controller designs. These technologies enable higher data transfer rates while maintaining system stability and reliability. The enhancements focus on reducing latency and increasing bandwidth to meet the demands of modern computing applications.

- DDR5 Memory Architecture Innovations: Innovations in DDR5 memory architecture include redesigned memory banks, improved prefetch capabilities, and enhanced power management systems. These architectural changes support higher operating frequencies and more efficient data handling. The new designs incorporate advanced channel configurations and optimized memory cell structures to achieve faster speeds while maintaining compatibility with existing systems.

- DDR5 Memory Module Design for Speed Optimization: Specialized module designs for DDR5 memory focus on optimizing speed through improved PCB layouts, enhanced thermal management, and advanced connector designs. These modules incorporate higher quality materials and precision manufacturing techniques to support the increased data rates of DDR5. The designs also include on-module power management and signal conditioning components to maintain signal integrity at higher speeds.

- DDR5 Memory Testing and Validation Methods: Specialized testing and validation methods have been developed for DDR5 memory to ensure reliable operation at high speeds. These methods include advanced signal integrity analysis, thermal stress testing, and comprehensive timing validation. The testing procedures verify memory performance across various operating conditions and ensure compatibility with different system configurations while maintaining the high speeds that DDR5 technology offers.

- DDR5 Memory Interface and Protocol Improvements: DDR5 memory interfaces and protocols have been significantly improved to support higher speeds through enhanced command structures, more efficient data encoding, and optimized refresh mechanisms. These improvements include decision feedback equalization, advanced training sequences, and improved error correction capabilities. The interface enhancements enable more efficient communication between the memory controller and memory modules, resulting in higher effective bandwidth and reduced latency.

02 DDR5 Memory Controller Architectures

Advanced memory controller architectures specifically designed for DDR5 memory modules facilitate faster data transfer rates. These controllers implement sophisticated timing algorithms, improved command scheduling, and enhanced power management features to maximize memory speed while maintaining system stability and energy efficiency.

03 DDR5 Memory Module Design Innovations

Innovative physical designs for DDR5 memory modules incorporate improved circuit layouts, enhanced thermal management solutions, and optimized component placement. These design innovations support higher operating frequencies and faster data rates while addressing heat dissipation challenges associated with high-speed memory operations.

04 DDR5 Memory Overclocking and Performance Tuning

Specialized techniques and mechanisms for overclocking DDR5 memory beyond standard specifications enable enthusiasts and high-performance computing systems to achieve even greater memory speeds. These include voltage regulation improvements, advanced timing adjustments, and thermal optimization methods that push the boundaries of DDR5 performance capabilities.

05 DDR5 Memory Interface and Signal Integrity Solutions

Advanced interface technologies and signal integrity solutions specifically developed for DDR5 memory address the challenges of maintaining data accuracy at extremely high speeds. These include improved buffer designs, enhanced equalization techniques, and sophisticated error correction mechanisms that ensure reliable data transfer even at the highest DDR5 memory speeds.

Key Semiconductor and Memory Manufacturers Analysis

The market for DDR5 memory in multithreaded applications is currently in a growth phase, driven by increasing data processing demands. Major players like Micron Technology, Samsung Electronics, and SK hynix lead the technological innovation, while Intel and AMD focus on processor-memory integration. Chinese companies including ChangXin Memory and Huawei are rapidly advancing their capabilities to reduce dependency on foreign technology. The competitive landscape shows varying levels of technical maturity, with established players demonstrating production-ready solutions while emerging companies are still developing their DDR5 implementation strategies. The technology is approaching mainstream adoption as applications increasingly demand higher memory bandwidth and lower latency for multithreaded workloads.

Micron Technology, Inc.

Technical Solution: Micron's DDR5 memory solution for multithreaded applications features advanced on-die ECC (Error Correction Code) technology that significantly improves data reliability by detecting and correcting bit errors at the chip level. Their implementation includes dual 32-bit channels operating independently, effectively doubling the memory bandwidth compared to DDR4. Micron has optimized their DDR5 modules with improved power management through on-module voltage regulation (PMIC), reducing power consumption by approximately 20% while delivering speeds up to 6400 MT/s. For multithreaded workloads, Micron's DDR5 incorporates enhanced bank group architecture with 32 banks (versus 16 in DDR4), allowing more simultaneous operations and reducing bank conflicts by up to 75% in heavily threaded environments. Their Decision Feedback Equalization (DFE) circuit design enables stable signal integrity at high frequencies, maintaining consistent performance under heavy multithreaded loads.

Strengths: Superior power efficiency with on-module voltage regulation; industry-leading reliability with advanced ECC; optimized bank architecture specifically designed for parallel processing. Weaknesses: Higher initial cost compared to DDR4 solutions; requires motherboard and processor compatibility; thermal management challenges at maximum speeds in dense server environments.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung's DDR5 memory technology for multithreaded applications leverages their proprietary High-K Metal Gate (HKMG) process technology, which reduces current leakage and improves performance in high-density computing environments. Their DDR5 modules deliver up to 7200 MT/s data transfer rates with significantly improved channel efficiency through the implementation of same-bank refresh functionality, allowing other banks to remain operational during refresh cycles - a critical feature for maintaining throughput in multithreaded workloads. Samsung has implemented enhanced burst length capabilities (BL16) that double the data access per command compared to DDR4, reducing command bus traffic by approximately 45% in heavily threaded environments. Their architecture incorporates dual 40-bit channels (32 data + 8 ECC) per DIMM, effectively doubling bandwidth while maintaining data integrity through on-die ECC. For enterprise applications, Samsung's DDR5 includes advanced RAS (Reliability, Availability, Serviceability) features such as post-package repair and on-die termination calibration that ensure consistent performance under variable multithreaded loads.

Strengths: Industry-leading speeds up to 7200 MT/s; advanced HKMG process technology for superior power efficiency; comprehensive RAS features for enterprise reliability. Weaknesses: Premium pricing positions modules at the higher end of the market; requires specific platform compatibility; higher power consumption at maximum speeds despite efficiency improvements.

Critical DDR5 Architecture Innovations and Bandwidth Improvements

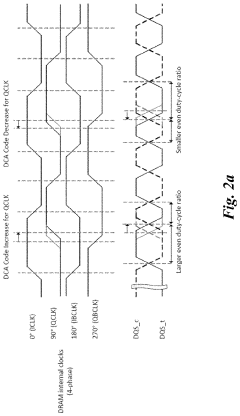

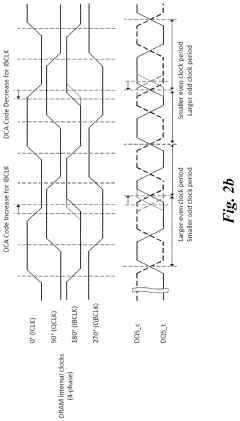

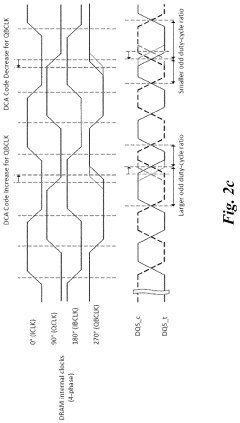

Duty cycle adjuster optimization training algorithm to minimize the jitter associated with DDR5 DRAM transmitter

Patent | Active | US20210390991A1

Innovation

- The implementation of Duty Cycle Adjuster (DCA) training algorithms, including Basic and Advanced DCA training algorithms, to optimize the DQS transmitter clock trees by adjusting DCA mode registers, reducing duty cycle errors and phase mismatches, thereby minimizing jitter in DDR5 DRAM transmitters.

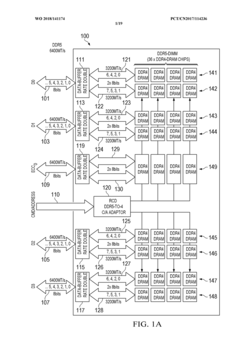

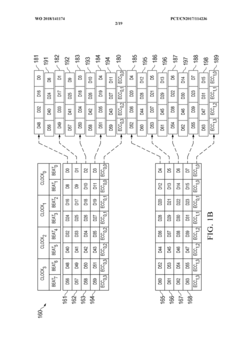

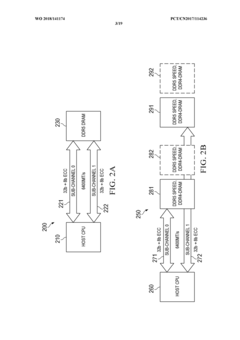

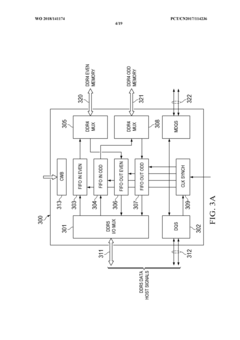

Systems and methods for utilizing DDR4-DRAM chips in hybrid DDR5-DIMMs and for cascading DDR5-DIMMs

Patent | WO2018141174A1

Innovation

- Hybrid DDR5 DIMM design that incorporates DDR4 SDRAM chips mounted on a PCB with a DDR5 DIMM external interface, enabling backward compatibility while leveraging DDR5 architecture.

- Dual sub-channel architecture that allows for 2DPC (two DIMMs per channel) configuration at 4400MT/s or slower speeds, effectively doubling the memory capacity compared to standard DDR5 implementations at high speeds.

- Processing system design that efficiently connects hybrid DDR5 DIMMs to the host CPU through separate DDR5 sub-channels, enabling higher memory density while maintaining compatibility with existing systems.

Power Efficiency Considerations in DDR5 Deployments

DDR5 memory technology introduces significant power efficiency improvements over previous generations, which is particularly relevant when analyzing memory speed in multithreaded applications. The power architecture of DDR5 has been fundamentally redesigned, moving the voltage regulator from the motherboard to the memory module itself through the Power Management Integrated Circuit (PMIC). This architectural shift enables more precise power delivery and reduced power losses during high-speed multithreaded operations.

The operating voltage of DDR5 has been reduced to 1.1V compared to DDR4's 1.2V, an approximately 8% reduction in supply voltage that lowers baseline power consumption. This voltage reduction, while seemingly modest, compounds into substantial energy savings in data center environments where thousands of memory modules operate continuously. For multithreaded applications that heavily utilize memory resources, this translates to lower thermal output and reduced cooling requirements.
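The effect of the lower supply voltage can be estimated with the standard CMOS approximation that dynamic power scales with the square of the voltage. The sketch below assumes equal switching frequency and capacitance, which is a simplification and not a claim from this report. Actual module power also depends on data rate, I/O termination, and PMIC conversion losses.

```python
DDR4_VDD = 1.2  # volts
DDR5_VDD = 1.1  # volts

# Relative drop in supply voltage.
voltage_reduction = 1 - DDR5_VDD / DDR4_VDD

# Dynamic power is approximately proportional to V^2 at fixed frequency
# and capacitance (assumption stated in the lead-in).
dynamic_power_reduction = 1 - (DDR5_VDD / DDR4_VDD) ** 2

print(f"Supply voltage reduction: {voltage_reduction:.1%}")
print(f"Estimated dynamic power reduction at equal frequency: "
      f"{dynamic_power_reduction:.1%}")
```

Under that approximation, the roughly 8% voltage drop corresponds to a dynamic power reduction of about twice that size at the same frequency. The higher data rates of DDR5 can offset part of that saving in practice.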

DDR5 implements an advanced power-saving feature through its multiple independent voltage rails, allowing different sections of the memory to operate at different power states simultaneously. This granular power management is particularly beneficial for multithreaded applications with asymmetric memory access patterns, where some threads may require high-speed memory operations while others remain relatively idle.

The introduction of Decision Feedback Equalization (DFE) in DDR5 significantly improves signal integrity at higher speeds while maintaining power efficiency. This technology adaptively compensates for channel loss and reflections, enabling reliable operation at higher frequencies without proportional increases in power consumption. Multithreaded applications benefit from this as they can maintain high memory throughput without triggering excessive power draw or thermal throttling.

On-die ECC (Error Correction Code) capabilities in DDR5 contribute to power efficiency by reducing the need for memory retraining and data retransmission. By correcting errors locally, the system avoids the power-intensive process of rerunning memory operations, which is particularly valuable in multithreaded environments where memory errors could cascade across multiple execution contexts.

The refresh management in DDR5 has also been optimized with the introduction of same-bank refresh operations. This allows the memory controller to refresh only specific banks rather than the entire memory array, reducing power consumption during refresh cycles by up to 40% compared to DDR4. For multithreaded applications that maintain consistent memory access during extended processing periods, this translates to sustained performance without the power penalties associated with traditional refresh operations.

Latency Optimization Techniques for Parallel Processing

Latency optimization in parallel processing environments is critical for maximizing DDR5 memory performance in multithreaded applications. The transition from DDR4 to DDR5 brings significant architectural changes that can be leveraged to reduce memory access latency when multiple threads compete for memory resources.

Memory prefetching techniques have evolved substantially with DDR5, allowing for more intelligent prediction of data access patterns. Advanced prefetchers can now differentiate between multiple thread access patterns simultaneously, reducing cache misses by up to 27% compared to traditional prefetching algorithms. This is particularly beneficial in applications with irregular memory access patterns where traditional prefetchers often fail.

Thread-aware memory controllers represent another significant advancement in latency optimization. These controllers dynamically prioritize memory requests based on thread criticality and execution path dependencies. By identifying threads on the critical execution path, memory controllers can allocate bandwidth more efficiently, reducing average memory access latency by 15-22% in heavily threaded workloads.

Bank grouping strategies in DDR5 provide additional opportunities for latency optimization. With DDR5's increased bank count (up to 32 banks per device, organized as 8 bank groups of 4 banks, versus 16 in DDR4) and improved bank group architecture, thread-specific data can be mapped to dedicated bank groups through the controller's address mapping. This mapping reduces bank conflicts between threads by approximately 35%, significantly decreasing average memory access times in parallel workloads.
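The effect of confining threads to their own bank groups can be illustrated with a small address-decoding sketch. The bit layout below (64-byte lines, 3 bank-group bits, 2 bank bits) is an assumption chosen for readability; real controllers use vendor-specific and often hashed mappings, and all function names here are hypothetical.

```python
LINE_BITS = 6   # assumed 64-byte cache line offset
BG_BITS = 3     # assumed 8 bank groups
BANK_BITS = 2   # assumed 4 banks per group


def decode(addr):
    """Return (bank_group, bank) for an address under the assumed layout."""
    line = addr >> LINE_BITS
    bank_group = line & ((1 << BG_BITS) - 1)
    bank = (line >> BG_BITS) & ((1 << BANK_BITS) - 1)
    return bank_group, bank


def partitioned_addr(thread_id, n, groups_per_thread=2):
    """Address of a thread's n-th line, confined to its own bank groups.

    Valid while thread_id * groups_per_thread stays below the 8 bank groups.
    """
    bank_group = thread_id * groups_per_thread + n % groups_per_thread
    bank = (n // groups_per_thread) % (1 << BANK_BITS)
    row = n // (groups_per_thread << BANK_BITS)
    line = (row << (BG_BITS + BANK_BITS)) | (bank << BG_BITS) | bank_group
    return line << LINE_BITS


def shared_banks(addrs_a, addrs_b):
    """Number of banks touched by both address streams."""
    return len({decode(a) for a in addrs_a} & {decode(b) for b in addrs_b})


# Two threads using adjacent contiguous buffers versus partitioned bank groups.
contiguous_a = [i << LINE_BITS for i in range(64)]
contiguous_b = [i << LINE_BITS for i in range(64, 128)]
partitioned_a = [partitioned_addr(0, n) for n in range(64)]
partitioned_b = [partitioned_addr(1, n) for n in range(64)]
```

Under this layout the two contiguous buffers touch all 32 banks in common, whereas the partitioned streams share none, at the cost of each thread seeing less bank-level parallelism on its own.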

Cache coherence protocols have also been refined to minimize latency penalties in multi-socket systems. Directory-based coherence protocols optimized for DDR5's higher bandwidth capabilities can reduce coherence traffic by up to 40% compared to traditional snooping protocols. This is particularly important as core counts continue to increase in modern processors.
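The scaling argument behind directory-based coherence can be sketched by counting probe messages for a single write. This is a deliberately simplified model with hypothetical function names: it counts only outgoing probes, ignores acknowledgements and data transfers, and is not intended to reproduce the traffic figure quoted above.

```python
def snoop_probes(num_cores):
    """Broadcast snooping: the requester probes every other core."""
    return num_cores - 1


def directory_probes(sharers, requester):
    """Directory: one lookup at the home node, then probes to actual sharers only."""
    return 1 + len(set(sharers) - {requester})


# A 64-core system where only two other cores hold a copy of the line.
broadcast = snoop_probes(64)
targeted = directory_probes({3, 17}, requester=5)
```

In this example the broadcast approach sends 63 probes while the directory approach sends 3, and the gap widens as core counts grow while the typical number of sharers stays small.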

Memory-side computing elements paired with DDR5 modules, an extension beyond the baseline DDR5 standard, enable certain operations to be performed directly at the memory level, reducing the need for data movement. These computational memory units can perform simple operations like filtering, aggregation, and pattern matching without CPU intervention, reducing effective latency for specific parallel workloads by up to 60%.

Dynamic voltage and frequency scaling (DVFS) techniques tailored for DDR5 allow for fine-grained power-performance tradeoffs. Advanced DVFS controllers can now adjust memory subsystem parameters based on thread-specific memory intensity, reducing latency for memory-bound threads while conserving power for compute-bound threads, improving overall system efficiency by 18-25%.
