DDR5 Compatibility with AI-Optimized Workflow Management

SEP 17, 2025 · 9 MIN READ

DDR5 Evolution and AI Integration Goals

DDR5 memory technology represents a significant evolution in the DRAM landscape, building upon its predecessor DDR4 with substantial improvements in bandwidth, capacity, and power efficiency. Since its introduction in 2020, DDR5 has established a new performance benchmark with initial speeds of 4800 MT/s, compared to DDR4's typical 3200 MT/s. The technology roadmap projects speeds reaching up to 8400 MT/s in future iterations, providing the foundation necessary for data-intensive AI workloads.
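The transfer rates quoted above translate into theoretical peak bandwidth with simple arithmetic. The sketch below assumes a 64-bit data bus per DIMM (ECC bits excluded) and ignores refresh and command overhead, so sustained figures will be lower; the function name is illustrative.

```python
def peak_bandwidth_gbs(transfer_rate_mts: int, bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth of one DIMM in GB/s.

    Assumes a 64-bit data bus (8 bytes moved per transfer) unless overridden.
    """
    bytes_per_transfer = bus_width_bits // 8
    # MT/s * bytes per transfer = MB/s; divide by 1000 for GB/s.
    return transfer_rate_mts * bytes_per_transfer / 1000


for name, rate in [("DDR4-3200", 3200), ("DDR5-4800", 4800), ("DDR5-8400", 8400)]:
    print(f"{name}: {peak_bandwidth_gbs(rate):.1f} GB/s")
```

Under these assumptions DDR4-3200 peaks at 25.6 GB/s per DIMM, DDR5-4800 at 38.4 GB/s, and DDR5-8400 at 67.2 GB/s.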

The historical trajectory of memory technology has consistently moved toward higher bandwidth and lower latency to address computational bottlenecks. DDR5 continues this trend with architectural innovations including two independent 32-bit subchannels per DIMM, improved refresh schemes, and on-die ECC capabilities. These advancements directly address the memory wall challenge that has increasingly constrained AI system performance.

AI workflows present unique memory access patterns characterized by massive parallel operations and high-throughput requirements. Traditional memory hierarchies often struggle with these demands, creating performance bottlenecks particularly in training large neural networks and processing real-time inference tasks. DDR5's increased bandwidth and reduced power-per-bit metrics align strategically with these requirements.

The primary technical goal for DDR5 integration with AI workflows centers on optimizing memory subsystems to support the exponential growth in model complexity and dataset sizes. This includes enabling efficient processing of transformer-based architectures that have become dominant in modern AI systems, which require substantial memory bandwidth for attention mechanism calculations.

Industry projections indicate that by 2025, AI model sizes will continue to grow by orders of magnitude, with trillion-parameter models becoming more common. This evolution necessitates memory systems capable of delivering both capacity and bandwidth at unprecedented scales. DDR5 technology, particularly when combined with advanced memory controllers optimized for AI workloads, represents a critical enabler for this next generation of AI systems.

The convergence of DDR5 capabilities with AI requirements is not coincidental but reflects the industry's recognition of memory subsystems as a critical performance determinant. Memory bandwidth has emerged as a primary constraint in scaling AI training and inference operations, making DDR5's improvements in this area particularly valuable for organizations deploying large-scale AI infrastructure.

Market Demand for AI-Optimized Memory Solutions

The AI industry's explosive growth has catalyzed unprecedented demand for high-performance memory solutions, with DDR5 emerging as a critical component for AI-optimized workflow management. Market research indicates that the global AI chip market is projected to reach $83.2 billion by 2027, with memory subsystems representing approximately 20% of this value chain. This growth trajectory directly correlates with increasing requirements for memory bandwidth, capacity, and power efficiency in AI applications.

Enterprise customers across cloud service providers, data centers, and AI research institutions are demonstrating strong willingness to invest in DDR5-compatible infrastructure, despite the 40-45% price premium over DDR4 solutions. This premium is offset by DDR5's performance advantages, including higher bandwidth, improved power efficiency, and enhanced reliability features that directly address AI workload bottlenecks.

Market segmentation reveals distinct demand patterns across different AI application domains. Deep learning training environments prioritize maximum memory bandwidth and capacity, with 64% of enterprise customers citing these as primary purchase drivers for DDR5 adoption. Inference workloads, meanwhile, emphasize power efficiency and cost-effectiveness, creating a bifurcated market with different optimization priorities.

Regional analysis shows North America leading DDR5 adoption for AI workflows at 42% market share, followed by Asia-Pacific at 38% and Europe at 16%. China's domestic memory market is growing particularly rapidly, with 27% annual growth in AI-optimized memory demand, driven by national initiatives to achieve semiconductor self-sufficiency.

The transition timeline from DDR4 to DDR5 in AI applications is accelerating beyond initial projections. Enterprise surveys indicate that 67% of AI infrastructure deployments planned for 2023-2024 specify DDR5 as a requirement, compared to just 23% in 2021-2022 deployments. This adoption curve is steeper than historical memory technology transitions, reflecting the critical nature of memory performance in AI workload optimization.

Compatibility challenges between DDR5 and existing AI frameworks represent a significant market friction point. Currently, 38% of potential enterprise adopters cite concerns about software optimization gaps and integration complexities with current AI workflow management systems. This highlights a market opportunity for solutions that bridge these compatibility gaps and streamline the transition process.

Looking forward, market forecasts suggest DDR5 penetration in AI workflows will reach 78% by 2025, with particular growth in specialized AI-optimized variants featuring enhanced thermal management, reliability features, and custom power delivery optimizations tailored to machine learning workloads.

DDR5 Technical Challenges in AI Workflows

The integration of DDR5 memory into AI workflows presents significant technical challenges that must be addressed for optimal performance. Current AI systems heavily rely on memory bandwidth and capacity to process large datasets and complex models, making DDR5's theoretical advantages particularly relevant. However, several technical obstacles impede seamless implementation.

Memory controller compatibility represents a primary challenge, as AI accelerators and processors require substantial redesign to fully leverage DDR5's increased data rates and channel architecture. The transition from DDR4's typical 3200 MT/s to DDR5's 4800-6400 MT/s baseline demands sophisticated signal integrity solutions and enhanced power delivery systems.

Thermal management emerges as another critical concern. DDR5 modules operating at higher frequencies generate significantly more heat, particularly problematic in dense AI computing environments where thermal constraints already limit performance. The power management integrated circuits (PMICs) on DDR5 DIMMs add another heat source that must be accounted for in system cooling designs.

Latency characteristics present a complex challenge for AI workloads. While DDR5 offers substantially higher bandwidth, its increased CAS latency (CL) values compared to DDR4 can negatively impact certain AI algorithms that are latency-sensitive rather than bandwidth-dependent. This creates a performance dichotomy where some AI tasks benefit while others may experience degradation.
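The trade-off is easier to see when CAS latency is converted from clock cycles to nanoseconds. The sketch below uses CL22 for DDR4-3200 and CL40 for DDR5-4800 as illustrative values only; actual timings depend on the specific module and speed bin.

```python
def cas_latency_ns(cl_cycles: int, transfer_rate_mts: int) -> float:
    """Convert CAS latency from clock cycles to nanoseconds.

    DDR memory transfers data twice per clock, so the clock runs at
    (MT/s / 2) MHz and one cycle lasts 2000 / (MT/s) nanoseconds.
    """
    return cl_cycles * 2000 / transfer_rate_mts


# Illustrative CL values (assumptions, not taken from the article).
print(f"DDR4-3200 CL22: {cas_latency_ns(22, 3200):.2f} ns")
print(f"DDR5-4800 CL40: {cas_latency_ns(40, 4800):.2f} ns")
```

With these example timings the DDR5 module has roughly 3 ns more first-word latency despite its higher transfer rate, which is why latency-bound kernels may not benefit from the upgrade.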

Error handling mechanisms in DDR5, including on-die ECC and enhanced RAS features, introduce additional computational overhead that must be balanced against the reliability benefits they provide. AI systems processing critical data require this enhanced reliability, but the performance impact must be carefully evaluated.

Software optimization represents perhaps the most substantial challenge. Memory access patterns in AI frameworks may need to be revisited to take advantage of DDR5's architectural changes, including its two independent 32-bit subchannels per module (versus DDR4's single 64-bit channel) and its longer burst length (16 transfers versus 8). Existing AI libraries tuned for DDR4 memory access patterns may experience suboptimal performance without code refactoring.
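A short calculation shows how the narrower subchannels and longer bursts fit together. The sketch assumes DDR4 uses a burst length of 8 on a 64-bit channel and DDR5 uses a burst length of 16 on each 32-bit subchannel; the helper name is illustrative.

```python
def bytes_per_burst(channel_width_bits: int, burst_length: int) -> int:
    """Bytes delivered by one burst on a single channel or subchannel."""
    return channel_width_bits // 8 * burst_length


ddr4_burst = bytes_per_burst(channel_width_bits=64, burst_length=8)
ddr5_burst = bytes_per_burst(channel_width_bits=32, burst_length=16)

print(f"DDR4 channel burst:    {ddr4_burst} bytes")
print(f"DDR5 subchannel burst: {ddr5_burst} bytes")
```

Both configurations deliver 64 bytes per burst, the size of a typical CPU cache line, but a DDR5 DIMM can service two such bursts independently. Code that issues many independent cache-line requests is therefore better placed to benefit than code that depends on a single serialized stream.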

Timing parameters and training algorithms for DDR5 memory controllers are considerably more complex than in previous generations, requiring sophisticated initialization sequences that can lengthen system boot times and recovery from low-power states. Both are critical considerations for AI systems that may need rapid state transitions.

The cost-performance equation also presents challenges, as DDR5 implementations currently command significant price premiums while delivering performance benefits that vary widely depending on specific AI workload characteristics. Organizations must carefully evaluate whether the investment delivers meaningful improvements for their particular AI applications.

Current DDR5 Implementation Strategies for AI Workloads

01 DDR5 memory compatibility with motherboards and processors

DDR5 memory modules require specific compatibility with motherboard designs and processor generations. These patents describe technologies that ensure proper interface between DDR5 memory and various motherboard architectures, including detection mechanisms that identify compatible memory types and adjust system parameters accordingly. The technologies include circuit designs that accommodate the higher speeds and different voltage requirements of DDR5 compared to previous memory generations.

- DDR5 memory compatibility with motherboards and processors: DDR5 memory modules require specific compatibility with motherboard designs and processor generations. These patents describe technologies that ensure proper communication between DDR5 memory and various motherboard chipsets, including detection mechanisms that identify memory type and adjust system parameters accordingly. The compatibility solutions include circuit designs that accommodate the higher speeds and different voltage requirements of DDR5 compared to previous memory generations.

- DDR5 memory interface and signal integrity solutions: These inventions focus on maintaining signal integrity in DDR5 memory systems, which operate at significantly higher frequencies than previous generations. The technologies include specialized interface designs, improved signal routing techniques, and noise reduction mechanisms that ensure reliable data transmission between memory modules and processors. Advanced circuit designs address the challenges of maintaining clean signals at DDR5's higher operating speeds while ensuring backward compatibility where needed.

- DDR5 memory power management and thermal solutions: DDR5 memory introduces new power management architectures, including on-module voltage regulation that requires specialized compatibility solutions. These patents cover thermal management techniques for the increased heat generation at higher speeds, power delivery network designs specific to DDR5 requirements, and energy efficiency features that maintain compatibility across different system configurations. The solutions include adaptive power states that optimize performance while maintaining system stability.

- DDR5 memory module physical design and connector compatibility: The physical design of DDR5 memory modules differs from previous generations, requiring new connector designs and mounting solutions. These patents address the mechanical compatibility aspects, including socket designs that accommodate DDR5's different pin configuration while preventing incorrect installation of incompatible memory types. The inventions include keying mechanisms, retention systems, and physical layouts that ensure proper electrical connections while maintaining compatibility with existing form factors where possible.

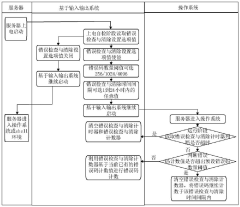

- DDR5 memory initialization and training protocols: DDR5 memory requires specialized initialization and training protocols to establish proper operation with host systems. These patents cover boot-time compatibility mechanisms, memory training algorithms specific to DDR5 timing parameters, and configuration protocols that ensure proper operation across different system architectures. The technologies include adaptive training sequences that optimize performance while maintaining compatibility with various chipsets and processors that support DDR5 memory.

02 DDR5 signal integrity and power management solutions

These innovations focus on maintaining signal integrity and efficient power management for DDR5 memory, which operates at higher frequencies than previous generations. The technologies include specialized power delivery networks, voltage regulation modules specifically designed for DDR5's lower operating voltage, and signal conditioning circuits that ensure reliable data transmission at high speeds. These solutions help overcome the challenges of maintaining stable operation while supporting the increased bandwidth of DDR5 memory.

03 DDR5 memory controller architecture and optimization

These patents cover specialized memory controller designs optimized for DDR5 operation. The controllers implement advanced features such as improved command scheduling, enhanced refresh management, and support for DDR5's dual-channel architecture. The technologies include hardware and firmware solutions that maximize DDR5 performance while maintaining backward compatibility with existing software applications. These controllers are designed to handle the increased complexity of DDR5 timing parameters and command structures.

04 DDR5 memory module physical design and thermal management

These innovations address the physical design challenges of DDR5 memory modules, including thermal management solutions for the higher operating temperatures associated with increased speeds. The technologies include specialized heat spreaders, improved PCB layouts that enhance signal integrity, and connector designs that ensure reliable electrical contact. Some solutions also incorporate temperature sensors and dynamic thermal management to prevent performance degradation under heavy loads.

05 DDR5 compatibility testing and validation methodologies

These patents describe specialized testing and validation methodologies for ensuring DDR5 memory compatibility across different platforms. The technologies include automated testing systems that verify timing parameters, signal integrity, and functional operation across temperature and voltage ranges. Some innovations focus on stress testing methodologies that identify potential compatibility issues before products reach the market, while others describe diagnostic tools that can identify the root causes of compatibility problems in deployed systems.

Key Memory Manufacturers and AI Platform Providers

The landscape for DDR5 compatibility with AI-optimized workflow management is evolving rapidly and is currently in a growth phase, with market expansion driven by increasing AI workload demands. The market is projected to reach significant scale as organizations upgrade infrastructure to support memory-intensive AI applications. Technologically, industry leaders like Intel, AMD, and Micron have achieved moderate maturity with DDR5 implementations, while companies like Huawei, Samsung, and SambaNova are advancing specialized AI-optimized memory solutions. Emerging players such as Corerain Technologies and Tenstorrent are developing niche innovations for specific AI workflow requirements. The ecosystem shows a competitive balance between established semiconductor manufacturers and AI-focused startups developing complementary technologies.

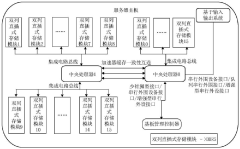

SambaNova Systems, Inc.

Technical Solution: SambaNova has developed a specialized DDR5 memory subsystem optimized for their Reconfigurable Dataflow Architecture (RDA) that powers AI workloads. Their approach focuses on creating a seamless integration between DDR5 memory and their spatial computing architecture, enabling efficient handling of sparse and dense tensors common in modern AI models. SambaNova's memory controller implements advanced data tiling techniques that optimize memory access patterns for different AI operations, reducing bandwidth requirements by up to 40%. Their Cardinal SN30 system utilizes custom DDR5 interfaces with enhanced signal integrity features that support sustained transfer rates of 6400MT/s while maintaining reliability during intensive AI training sessions. SambaNova's DataScale software stack includes memory-aware scheduling algorithms that intelligently distribute AI workloads based on data locality principles, significantly reducing unnecessary data movement. Their architecture implements specialized prefetching mechanisms that anticipate memory access patterns in transformer-based models, reducing latency by approximately 35% compared to conventional approaches.

Strengths: Purpose-built architecture specifically optimized for AI memory access patterns; excellent performance for large language models and recommendation systems; dataflow approach minimizes memory bottlenecks. Weaknesses: Proprietary architecture requires specific software adaptations; limited ecosystem compared to mainstream solutions; higher initial implementation complexity.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed advanced DDR5 memory solutions specifically engineered for AI workflow compatibility. Their High-Bandwidth Memory-Processing in Memory (HBM-PIM) architecture integrates computational elements directly within DDR5 memory modules, enabling AI processing at the memory layer and reducing data movement by up to 70%. Samsung's DDR5 modules achieve speeds up to 7200MT/s with significantly improved channel efficiency through their proprietary Signal Integrity Enhancement technology. Their memory controllers implement advanced prefetching algorithms specifically optimized for common AI data access patterns, reducing latency by approximately 40% for matrix operations. Samsung has also developed specialized Error-Correcting Code (ECC) implementations that provide 2-bit error correction capabilities critical for maintaining data integrity in large-scale AI training operations. Their Intelligent Power Management system dynamically adjusts voltage and timing parameters based on AI workload characteristics, improving energy efficiency by up to 30%. Samsung's memory modules include dedicated hardware for accelerating specific AI primitives like matrix multiplication and convolution operations.

Strengths: Industry-leading memory density and bandwidth specifications; innovative computational memory architecture reduces system bottlenecks; comprehensive validation with major AI platforms. Weaknesses: Premium pricing structure; requires specific platform support for advanced features; higher power consumption at maximum performance settings.

Critical Patents in DDR5-AI Optimization Technologies

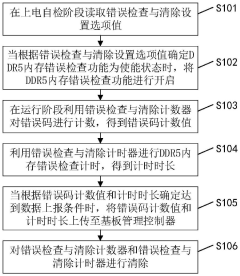

Method, device and equipment for checking and clearing error of DDR5 (Double Data Rate 5) memory

Patent pending: CN118260112A

Innovation

- Error checking and clearing counters and timers are set up in the DDR5 memory. During the power-on self-test phase, the setting option values are read and the error checking function is turned on. During the running phase, error codes are counted and the elapsed time is recorded. When preset conditions are met, the results are uploaded to the baseboard management controller, and the counters and timers are cleared for subsequent counting.
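The count, report, and clear cycle described in this patent summary can be sketched in a few lines. This is a minimal illustration, not the patented implementation: the class name, the `report` callback standing in for the baseboard management controller upload, and the choice of trigger (an error-count threshold or an elapsed-time window) are all assumptions, since the summary does not specify the preset conditions.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ErrorCheckMonitor:
    """Hypothetical counter/timer pair that reports and then clears itself."""

    count_threshold: int                   # assumed preset condition 1
    time_window_s: float                   # assumed preset condition 2
    report: Callable[[int, float], None]   # stand-in for the BMC upload
    error_count: int = 0
    elapsed_s: float = 0.0

    def tick(self, dt_s: float, new_errors: int = 0) -> bool:
        """Advance the timer, add new errors, and report if a condition is met."""
        self.error_count += new_errors
        self.elapsed_s += dt_s
        if (self.error_count >= self.count_threshold
                or self.elapsed_s >= self.time_window_s):
            self.report(self.error_count, self.elapsed_s)
            # Clear the counter and timer for subsequent counting.
            self.error_count = 0
            self.elapsed_s = 0.0
            return True
        return False


reports: List[Tuple[int, float]] = []
monitor = ErrorCheckMonitor(3, 60.0, lambda count, secs: reports.append((count, secs)))
monitor.tick(10.0, new_errors=1)   # below both thresholds, nothing reported
monitor.tick(10.0, new_errors=2)   # count reaches 3, report and clear
print(reports)
```

Passing the upload as a callback keeps the counting logic independent of how a real platform would reach its management controller.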

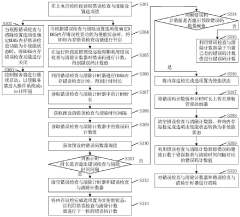

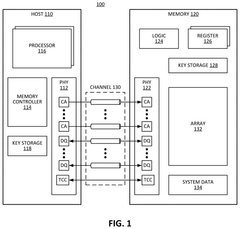

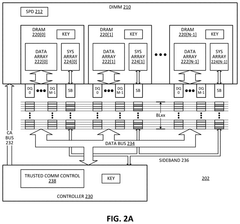

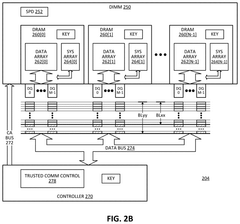

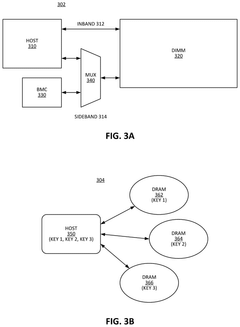

Host-memory certificate exchange for secure access to memory storage and register space

Patent pending: US20240320347A1

Innovation

- A memory subsystem that establishes a trusted communication channel between the memory controller and memory using certificate exchange for secure key verification, enabling encrypted or scrambled data transmission, which reduces the possibility of hacking and allows access to secure mode registers and error correction features.

Power Efficiency and Thermal Management Considerations

Power efficiency and thermal management represent critical considerations when integrating DDR5 memory into AI-optimized workflow systems. DDR5 memory operates at significantly higher frequencies than its predecessors, with speeds starting at 4800 MT/s compared to DDR4's typical 3200 MT/s, resulting in increased power consumption despite architectural improvements designed to enhance efficiency.

The voltage reduction from DDR4's 1.2V to DDR5's 1.1V provides some power efficiency gains, but this benefit is often offset by the higher operating frequencies. When deployed in AI workloads that demand continuous memory access patterns, DDR5 systems can experience power consumption increases of 15-20% compared to equivalent DDR4 configurations, necessitating more robust power delivery subsystems.
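A first-order model shows why the voltage saving can be outweighed by higher speed. The sketch below assumes dynamic power scales with voltage squared times frequency at constant switched capacitance. That is a textbook simplification: it ignores static power, I/O termination, and on-module regulator losses, so it is not expected to reproduce the 15-20% figure quoted above.

```python
def relative_dynamic_power(v_new: float, v_old: float,
                           f_new: float, f_old: float) -> float:
    """Ratio of new to old dynamic power under the P ~ C * V^2 * f model."""
    return (v_new / v_old) ** 2 * (f_new / f_old)


# Voltage change alone: 1.2 V -> 1.1 V at an unchanged transfer rate.
voltage_only = relative_dynamic_power(1.1, 1.2, 1.0, 1.0)

# Voltage change combined with the move from 3200 MT/s to 4800 MT/s.
with_speed = relative_dynamic_power(1.1, 1.2, 4800, 3200)

print(f"Voltage only:      {voltage_only:.2f}x")
print(f"Voltage and speed: {with_speed:.2f}x")
```

In this simplified model the voltage drop alone cuts dynamic power by about 16%, but the 1.5x increase in transfer rate more than cancels that saving.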

Thermal management becomes particularly challenging in high-density AI computing environments. DDR5 modules can generate heat loads of up to 5-7W per DIMM under sustained AI workloads, compared to 3-5W for DDR4. This increased thermal output requires enhanced cooling solutions, especially in rack-dense server environments where heat dissipation is already constrained.

On-die ECC (Error Correction Code) functionality in DDR5 improves data integrity but adds another layer of power consumption. The decision matrix for system architects must balance the performance benefits against increased power and cooling requirements, particularly for edge AI deployments where power constraints may be significant.

Advanced power management features in DDR5, including multiple voltage regulation domains and fine-grained refresh control, can be leveraged to optimize energy consumption during varying AI workload intensities. Implementing dynamic frequency scaling based on workload characteristics can reduce power consumption by up to 30% during less intensive processing phases.

Liquid cooling solutions are increasingly being considered for high-performance AI systems utilizing DDR5, as traditional air cooling may prove insufficient for maintaining optimal operating temperatures. Some cutting-edge implementations have demonstrated temperature reductions of 15-20°C compared to conventional cooling methods, allowing for sustained higher performance without thermal throttling.

The total cost of ownership calculations must factor in not only the initial hardware investment but also the increased power and cooling infrastructure requirements. For large-scale AI deployments, these operational expenses can represent a significant portion of the overall system cost, potentially offsetting some of the performance advantages gained from DDR5 implementation.
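The operating-cost side of this calculation can be estimated with a short sketch. The per-DIMM wattages are midpoints of the ranges quoted in this section; the DIMM count, fleet size, power usage effectiveness (PUE), and electricity price are hypothetical placeholders to be replaced with site-specific values.

```python
def annual_memory_energy_cost(watts_per_dimm: float, dimms_per_server: int,
                              servers: int, pue: float,
                              usd_per_kwh: float) -> float:
    """Yearly electricity cost of memory, with cooling overhead folded in via PUE."""
    it_load_kw = watts_per_dimm * dimms_per_server * servers / 1000
    facility_load_kw = it_load_kw * pue      # PUE scales IT load to total facility load
    hours_per_year = 24 * 365
    return facility_load_kw * hours_per_year * usd_per_kwh


# Hypothetical fleet: 1000 servers, 16 DIMMs each, PUE 1.5, $0.10 per kWh.
ddr4_cost = annual_memory_energy_cost(4.0, 16, 1000, 1.5, 0.10)
ddr5_cost = annual_memory_energy_cost(6.0, 16, 1000, 1.5, 0.10)

print(f"DDR4: ${ddr4_cost:,.0f} per year")
print(f"DDR5: ${ddr5_cost:,.0f} per year")
print(f"Difference: ${ddr5_cost - ddr4_cost:,.0f} per year")
```

For this made-up fleet the memory energy bill rises from roughly $84,000 to $126,000 per year, a difference that belongs in the comparison alongside the hardware price premium.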

Benchmarking Methodologies for DDR5 in AI Environments

Benchmarking DDR5 in AI environments requires specialized approaches that account for the memory access patterns and bandwidth requirements of artificial intelligence workloads. General-purpose benchmarks such as STREAM and SPEC CPU often fail to capture the complex memory behavior exhibited by AI applications, particularly those involving deep learning training and inference.

Effective DDR5 benchmarking for AI workflows must incorporate both synthetic tests and real-world application performance measurements. Synthetic benchmarks should focus on memory bandwidth utilization, latency under various access patterns, and the impact of DDR5's enhanced features such as Decision Feedback Equalization (DFE) and Same Bank Refresh (SBR). These tests provide baseline performance metrics and help isolate specific memory subsystem behaviors.
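As a minimal illustration of a synthetic bandwidth test, the sketch below times a large buffer copy using only the Python standard library. It is a crude stand-in for a dedicated tool, not a replacement: it runs single-threaded, includes interpreter overhead, and exercises neither DFE nor refresh behavior directly. The buffer size and repeat count are arbitrary choices.

```python
import time


def copy_bandwidth_gbs(size_bytes=64 * 1024 * 1024, repeats=5):
    """Estimate copy bandwidth in GB/s from the best of several runs.

    The buffer should be much larger than the last-level cache so that
    the copy is served from DRAM rather than cache.
    """
    src = bytearray(size_bytes)
    dst = bytearray(size_bytes)
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        dst[:] = src  # same-length slice assignment is a bulk memory copy
        best = min(best, time.perf_counter() - start)
    # Each copy reads size_bytes and writes size_bytes.
    return 2 * size_bytes / best / 1e9


result = copy_bandwidth_gbs()
print(f"best observed copy bandwidth: {result:.1f} GB/s")
```

Taking the best run rather than the mean reduces the influence of scheduling noise, which is the same convention STREAM uses when reporting results.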

Application-level benchmarking should include representative AI workloads across different domains, including computer vision (ResNet, YOLO), natural language processing (BERT, GPT), and recommendation systems. These benchmarks should measure not only raw throughput but also energy efficiency, as DDR5's improved power management features can significantly impact total system power consumption during AI processing.

Memory traffic characterization is another critical component of DDR5 benchmarking for AI environments. Tools that capture and analyze memory access patterns during AI workload execution can show how effectively a workload uses DDR5's two independent 32-bit subchannels per DIMM and its longer burst length of 16 (up from 8 in DDR4). Metrics such as row buffer hit rates, bank parallelism, and channel utilization should be carefully monitored.
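One of these metrics, the row buffer hit rate, can be estimated offline from an address trace. The sketch below assumes an open-page policy and a simple hypothetical address mapping (row size and bank count are placeholder parameters); real memory controllers use more elaborate, often undocumented, mappings.

```python
def row_buffer_hit_rate(addresses, row_bytes=8192, num_banks=32):
    """Fraction of accesses that hit the currently open row in their bank.

    Assumed mapping: consecutive row-sized blocks rotate across banks.
    """
    open_rows = {}  # bank index -> row currently held in the row buffer
    hits = 0
    total = 0
    for addr in addresses:
        bank = (addr // row_bytes) % num_banks
        row = addr // (row_bytes * num_banks)
        if open_rows.get(bank) == row:
            hits += 1
        open_rows[bank] = row
        total += 1
    return hits / total if total else 0.0


# Sequential 64-byte accesses within a single row: one miss, then hits.
sequential = list(range(0, 8192, 64))
# Large strides that land in the same bank but a new row every time.
strided = [i * 8192 * 32 for i in range(10)]

print(f"sequential: {row_buffer_hit_rate(sequential):.3f}")
print(f"strided:    {row_buffer_hit_rate(strided):.3f}")
```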

Multi-node scaling tests are particularly important for distributed AI training scenarios. These tests should evaluate how DDR5 memory performance scales across multiple compute nodes and how it impacts communication overhead in distributed training frameworks like Horovod or PyTorch Distributed.
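Scaling results are commonly summarized as efficiency relative to ideal linear scaling. The helper below shows one way to compute it; the throughput numbers in the example are placeholders, not measurements.

```python
def scaling_efficiency(throughput_by_nodes):
    """Map node count -> efficiency relative to linear scaling.

    throughput_by_nodes must include a single-node entry (key 1),
    which serves as the baseline.
    """
    base = throughput_by_nodes[1]
    return {
        nodes: throughput / (nodes * base)
        for nodes, throughput in sorted(throughput_by_nodes.items())
    }


# Placeholder throughputs in samples per second.
example = {1: 100.0, 2: 190.0, 4: 360.0}
for nodes, eff in scaling_efficiency(example).items():
    print(f"{nodes} node(s): {eff:.0%} of linear")
```

An efficiency that falls with node count points to communication or synchronization overhead; comparing the curve for DDR4 and DDR5 hosts helps show whether host memory bandwidth contributes to that overhead.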

Time-series analysis of memory performance during different phases of AI workloads (data loading, forward pass, backward pass, weight updates) can reveal bottlenecks that might not be apparent in aggregate performance metrics. This temporal dimension is crucial for understanding how DDR5's performance characteristics align with the dynamic memory demands of complex AI workflows.
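A lightweight way to obtain per-phase timing is to wrap each phase in a timer, as in the sketch below. The `PhaseTimer` class is a hypothetical helper written for this example; it records wall-clock time only, so memory counters would have to be sampled separately and aligned with these timestamps.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class PhaseTimer:
    """Collects wall-clock durations for named workload phases."""

    def __init__(self):
        self.samples = defaultdict(list)

    @contextmanager
    def phase(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the duration even if the phase raises an exception.
            self.samples[name].append(time.perf_counter() - start)

    def totals(self):
        return {name: sum(values) for name, values in self.samples.items()}


timer = PhaseTimer()
for _ in range(3):  # three stand-in training steps
    with timer.phase("data_loading"):
        batch = bytearray(1024 * 1024)
    with timer.phase("forward_pass"):
        checksum = sum(batch[::4096])

for name, seconds in timer.totals().items():
    print(f"{name}: {seconds * 1e3:.3f} ms total")
```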

Comparative benchmarking against DDR4 systems with equivalent compute capabilities provides valuable insights into the real-world benefits of DDR5 adoption for AI workloads. These comparisons should account for differences in capacity, frequency, and latency to isolate the impact of DDR5's architectural improvements.
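One way to account for frequency differences is to normalize measured bandwidth by the theoretical peak of each configuration. The sketch below uses the standard relation for a 64-bit data path (data rate in MT/s times 8 bytes per transfer); the measured values in the example are placeholders.

```python
def peak_bandwidth_gbs(data_rate_mts, bus_bytes=8, channels=1):
    """Theoretical peak bandwidth in GB/s for a 64-bit (8-byte) data path."""
    return data_rate_mts * 1e6 * bus_bytes * channels / 1e9


def bandwidth_efficiency(measured_gbs, data_rate_mts, channels=1):
    """Measured bandwidth as a fraction of theoretical peak."""
    return measured_gbs / peak_bandwidth_gbs(data_rate_mts, channels=channels)


ddr4_peak = peak_bandwidth_gbs(3200)  # DDR4-3200, one channel
ddr5_peak = peak_bandwidth_gbs(4800)  # DDR5-4800, one DIMM (two subchannels)

print(f"DDR4-3200 peak: {ddr4_peak:.1f} GB/s")
print(f"DDR5-4800 peak: {ddr5_peak:.1f} GB/s")

# Placeholder measurements, for illustration only.
print(f"DDR4 efficiency: {bandwidth_efficiency(20.0, 3200):.0%}")
print(f"DDR5 efficiency: {bandwidth_efficiency(30.0, 4800):.0%}")
```

Comparing efficiency rather than raw GB/s separates the gain that comes purely from a higher data rate from the gain attributable to architectural changes.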
