HBM4 Latency Contributions: Controller, PHY And Package Design

SEP 12, 2025 | 9 MIN READ

HBM4 Technology Evolution and Performance Goals

High Bandwidth Memory (HBM) technology has evolved significantly since its introduction, with each generation bringing substantial improvements in bandwidth, capacity, and power efficiency. The evolution from HBM1 to HBM4 represents a continuous pursuit of higher performance to meet the growing demands of data-intensive applications such as artificial intelligence, high-performance computing, and graphics processing.

HBM1, introduced in 2013, offered a significant leap in memory bandwidth compared to traditional DRAM technologies. HBM2, which followed in 2016, doubled the bandwidth while increasing capacity. HBM2E, an enhancement to HBM2, raised per-pin data rates and capacity further. HBM3, standardized by JEDEC in January 2022, again substantially increased bandwidth and capacity while reducing power consumption per bit transferred.

HBM4, whose JEDEC standard (JESD270-4) was published in April 2025, aims to push these boundaries even further. The standard's 2,048-bit interface at up to 8 Gb/s per pin gives roughly 2 TB/s per stack; figures above 3 TB/s per stack would require pin rates beyond that baseline. The primary performance goals for HBM4 are therefore higher per-stack bandwidth, lower latency than HBM3, and improved power efficiency to address the thermal challenges associated with high-performance memory systems.
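Per-stack bandwidth figures can be sanity-checked from interface width and per-pin data rate. The sketch below assumes the 2,048-bit interface and 8 Gb/s per-pin rate of the JEDEC HBM4 standard; the function names are illustrative and not taken from any tool or specification.

```python
def peak_bandwidth_gbytes(width_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s for width_bits data pins at pin_rate_gbps each."""
    return width_bits * pin_rate_gbps / 8.0


def required_pin_rate_gbps(width_bits: int, target_gbytes: float) -> float:
    """Per-pin rate in Gb/s needed to reach target_gbytes GB/s on width_bits pins."""
    return target_gbytes * 8.0 / width_bits


if __name__ == "__main__":
    # Assumed baseline: 2048 data bits at 8 Gb/s per pin.
    print(peak_bandwidth_gbytes(2048, 8.0))      # 2048.0 GB/s, about 2 TB/s
    # Pin rate a 3.2 TB/s stack would need on the same interface width.
    print(required_pin_rate_gbps(2048, 3200.0))  # 12.5 Gb/s
```

Under these assumptions, a 3.2 TB/s stack would need roughly 12.5 Gb/s per pin, which is why per-pin signaling margins matter so much for the PHY.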

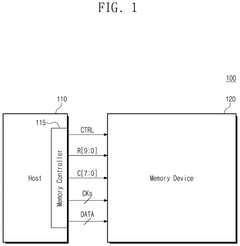

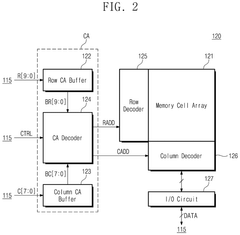

A critical focus area for HBM4 is latency reduction. Current HBM implementations face latency challenges across three key components: the memory controller, the PHY (physical interface), and the package design. The memory controller contributes to latency through command scheduling and arbitration processes. The PHY layer adds latency during signal conversion and synchronization. Package design, including the interposer and through-silicon vias (TSVs), creates physical signal propagation delays.

HBM4 technology aims to address these latency contributions through several innovations. Advanced controller architectures with predictive algorithms and optimized scheduling mechanisms are being developed to reduce controller-induced latency. Enhanced PHY designs with improved signal integrity and reduced signal conversion overhead are targeted to minimize PHY-related delays.

Package design improvements focus on shorter signal paths, optimized TSV arrangements, and potentially new materials with better electrical characteristics. These enhancements collectively aim to reduce the round-trip latency, which is crucial for applications where memory access time directly impacts system performance.
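To make the three latency contributions concrete, the following is a minimal latency-budget sketch. All numbers are hypothetical placeholders rather than measured HBM4 values, and the `dram_core_ns` term is an added assumption so that the budget covers a full read round trip.

```python
from dataclasses import dataclass


@dataclass
class LatencyBudget:
    controller_ns: float  # queueing, scheduling, arbitration
    phy_ns: float         # signal conversion and synchronization, per direction
    package_ns: float     # interposer trace plus TSV flight time, per direction
    dram_core_ns: float   # array access inside the stack (assumed extra term)

    def round_trip_ns(self) -> float:
        # The command travels out and the data travels back,
        # so PHY and package delays are paid twice.
        return (self.controller_ns
                + 2 * (self.phy_ns + self.package_ns)
                + self.dram_core_ns)

    def shares(self) -> dict:
        """Fraction of the round trip attributable to each component."""
        total = self.round_trip_ns()
        return {
            "controller": self.controller_ns / total,
            "phy": 2 * self.phy_ns / total,
            "package": 2 * self.package_ns / total,
            "dram_core": self.dram_core_ns / total,
        }


if __name__ == "__main__":
    # Hypothetical values chosen only to illustrate the bookkeeping.
    budget = LatencyBudget(controller_ns=20.0, phy_ns=5.0,
                           package_ns=0.5, dram_core_ns=40.0)
    print(budget.round_trip_ns())  # 71.0
    print(budget.shares())
```

A breakdown like this shows which component an optimization should target first: shaving the package term helps little if the controller term dominates.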

Beyond latency improvements, HBM4 targets increased density per layer, allowing for higher capacity without increasing the physical stack height. Power efficiency improvements are also central to HBM4 development, with goals to reduce energy consumption per bit transferred by at least 25% compared to HBM3, enabling deployment in more thermally constrained environments.

Market Demand Analysis for High-Bandwidth Memory

The high-bandwidth memory (HBM) market is experiencing unprecedented growth driven by the explosive demand for advanced computing applications. Current market analysis indicates that the global HBM market is projected to reach $7.6 billion by 2027, growing at a CAGR of approximately 32% from 2022. This remarkable growth trajectory is primarily fueled by the expanding requirements of data-intensive applications across multiple sectors.

Artificial intelligence and machine learning applications represent the largest demand segment for HBM technology. As AI model complexity increases, with frontier models reported to reach hundreds of billions to trillions of parameters, memory bandwidth requirements have grown by orders of magnitude. These applications demand not only high bandwidth but also reduced latency to process vast datasets efficiently, making HBM4's latency improvements particularly valuable.

High-performance computing (HPC) constitutes another significant market driver, with supercomputing centers and research institutions requiring massive memory bandwidth to process complex simulations and scientific calculations. The HPC market segment is expected to grow at 28% CAGR through 2027, creating sustained demand for advanced memory solutions like HBM4.

Graphics processing for gaming and professional visualization applications represents the third major demand segment. Modern graphics rendering techniques require increasingly higher memory bandwidth, with 8K gaming and real-time ray tracing pushing current memory architectures to their limits. Industry forecasts suggest that next-generation graphics processors will require memory bandwidth exceeding 3 TB/s, a level that today is reached only by combining multiple HBM stacks and that HBM4 aims to deliver with fewer stacks.

The data center and cloud computing sector is rapidly emerging as a critical market for HBM technology. With the proliferation of data-intensive workloads and the need for server consolidation, memory bandwidth has become a critical bottleneck. Industry surveys indicate that 78% of data center operators identify memory performance as a significant constraint for their workloads, highlighting the urgent need for HBM4's improved latency characteristics.

Automotive and edge computing applications are creating new market opportunities for HBM technology. Advanced driver-assistance systems (ADAS) and autonomous driving platforms require substantial memory bandwidth for real-time sensor fusion and decision-making. The automotive segment is expected to be the fastest-growing application area for HBM, with a projected CAGR of 45% through 2027.

Geographically, North America currently leads HBM consumption, followed by Asia-Pacific and Europe. However, the Asia-Pacific region is expected to demonstrate the highest growth rate, driven by expanding semiconductor manufacturing capabilities and increasing adoption of AI technologies in countries like China, South Korea, and Taiwan.

Current Challenges in HBM4 Latency Optimization

The optimization of HBM4 latency faces several significant challenges across controller architecture, PHY design, and package integration. Current memory controllers struggle with the increasing complexity of managing multiple channels and banks while maintaining low latency. The traditional memory controller designs that worked well for previous generations are proving inadequate for HBM4's higher bandwidth requirements and more complex command scheduling needs.
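One reason channel and bank management is hard is that the controller must decide how physical addresses map onto channels, banks, rows, and columns. The sketch below shows one common style of mapping, with the channel bits placed just above the block offset so that consecutive blocks spread across channels. The field widths are hypothetical and not taken from any HBM4 datasheet.

```python
from typing import NamedTuple


class DramAddress(NamedTuple):
    channel: int
    bank: int
    row: int
    column: int


# Hypothetical field widths, chosen for illustration only.
OFFSET_BITS = 6    # 64-byte access granularity
CHANNEL_BITS = 5   # 32 channels
COLUMN_BITS = 5
BANK_BITS = 4


def decode(addr: int) -> DramAddress:
    """Split a physical byte address into DRAM coordinates.

    Layout from least to most significant bits:
    offset | channel | column | bank | row
    """
    block = addr >> OFFSET_BITS
    channel = block & ((1 << CHANNEL_BITS) - 1)
    block >>= CHANNEL_BITS
    column = block & ((1 << COLUMN_BITS) - 1)
    block >>= COLUMN_BITS
    bank = block & ((1 << BANK_BITS) - 1)
    row = block >> BANK_BITS
    return DramAddress(channel, bank, row, column)


if __name__ == "__main__":
    # Consecutive 64-byte blocks land on different channels.
    for i in range(4):
        print(hex(i * 64), decode(i * 64))
```

With this layout a streaming access pattern keeps every channel busy, at the cost of touching many channels for even a small contiguous buffer; other bit orders trade that parallelism for row-buffer locality.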

Signal integrity issues present another major obstacle, particularly as data rates continue to climb. The high-speed interfaces between the controller and memory stack experience signal degradation, crosstalk, and timing violations that directly impact latency. Current PHY designs must balance power consumption with performance, often resulting in compromises that affect overall latency characteristics.
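The effect of rising data rates on timing can be illustrated with a simple unit-interval budget. This is a sketch under stated assumptions: the jitter, skew, and setup/hold numbers are hypothetical placeholders, and a real PHY budget has many more terms.

```python
def unit_interval_ps(pin_rate_gbps: float) -> float:
    """Duration of one bit on the wire, in picoseconds."""
    return 1000.0 / pin_rate_gbps


def timing_margin_ps(pin_rate_gbps: float, jitter_ps: float,
                     skew_ps: float, setup_hold_ps: float) -> float:
    """Margin left in one unit interval after subtracting the listed impairments."""
    return unit_interval_ps(pin_rate_gbps) - jitter_ps - skew_ps - setup_hold_ps


if __name__ == "__main__":
    # Same hypothetical impairments at two data rates.
    for rate in (8.0, 12.5):
        margin = timing_margin_ps(rate, jitter_ps=20.0, skew_ps=15.0,
                                  setup_hold_ps=40.0)
        print(f"{rate} Gb/s: UI = {unit_interval_ps(rate)} ps, margin = {margin} ps")
```

Because the impairments do not shrink with the unit interval, the margin collapses quickly as the pin rate rises. When the margin runs out, designs typically add retiming or training stages, and those stages are what show up as PHY latency.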

Package-level challenges are equally concerning. The 2.5D and 3D integration technologies used for HBM4 introduce thermal management issues that can lead to performance throttling. The interposer design, which facilitates communication between the processor and memory stack, creates bottlenecks that contribute significantly to overall system latency. Current interposer technologies struggle to keep pace with the bandwidth demands of HBM4.

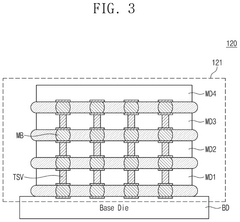

Die stacking technologies present their own set of challenges. As HBM4 pushes toward higher stack counts, the through-silicon vias (TSVs) that connect the stacked dies become critical latency contributors. Current TSV designs face limitations in density, reliability, and electrical performance that directly impact memory access times.

Power delivery networks (PDNs) represent another critical challenge. The high current demands of HBM4 create voltage droops and power integrity issues that can destabilize timing and increase latency. Current PDN designs struggle to maintain clean power delivery across the complex HBM4 architecture without introducing additional latency penalties.
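A first-order model makes the droop mechanism explicit. The expression below is the standard resistive-plus-inductive approximation; the component values in the worked line are illustrative assumptions, not HBM4 measurements.

```latex
\Delta V_{\text{droop}} \approx I \, R_{\text{PDN}} + L_{\text{PDN}} \, \frac{dI}{dt}

% Illustrative values: I = 5\,\text{A},\ R_{\text{PDN}} = 1\,\text{m}\Omega,\
% L_{\text{PDN}} = 10\,\text{pH},\ dI/dt = 5\,\text{A/ns}
\Delta V_{\text{droop}} \approx (5)(0.001) + (10 \times 10^{-12})(5 \times 10^{9})
  = 0.005 + 0.050 = 0.055\,\text{V}
```

In this example the inductive term dominates, which is why fast current steps, such as many banks activating at once, are the cases that tend to force timing guardbands.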

Testing and validation methodologies have not fully caught up with HBM4's complexity. Current approaches often fail to accurately characterize latency under real-world workloads, leading to unexpected performance issues in deployed systems. The industry lacks standardized benchmarks specifically designed to evaluate HBM4 latency characteristics across diverse application scenarios.
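When characterizing latency under realistic workloads, tail percentiles are usually more informative than the mean. The following is a minimal sketch using nearest-rank percentiles; the sample data is synthetic and the function names are illustrative.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], p: int) -> float:
    """Nearest-rank percentile for an integer p in 1..100."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(samples: Sequence[float]) -> Dict[str, float]:
    """Mean, median, 99th percentile, and maximum of latency samples."""
    return {
        "mean": sum(samples) / len(samples),
        "p50": percentile(samples, 50),
        "p99": percentile(samples, 99),
        "max": max(samples),
    }


if __name__ == "__main__":
    # Synthetic latencies in ns: mostly fast accesses plus a few slow outliers.
    latencies = [100.0] * 98 + [900.0] * 2
    print(summarize(latencies))
```

In the synthetic data, the mean barely moves while the 99th percentile exposes the slow accesses, which is the kind of behavior a latency benchmark needs to report.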

Software-hardware co-optimization remains underdeveloped. Memory controllers and operating systems are not yet fully optimized to take advantage of HBM4's architectural features, resulting in suboptimal memory access patterns and unnecessary latency penalties. Current software approaches often treat HBM4 similarly to previous memory generations, missing opportunities for latency optimization.

Technical Solutions for HBM4 Latency Reduction

01 HBM4 architecture for reducing memory latency

High Bandwidth Memory 4 (HBM4) employs advanced architectural designs to minimize latency in memory operations. These designs include optimized memory controllers, improved interface protocols, and enhanced signaling techniques. The architecture supports parallel processing of memory requests and implements efficient data paths to reduce the time required for data access and transfer, resulting in significantly lower latency compared to previous memory technologies.

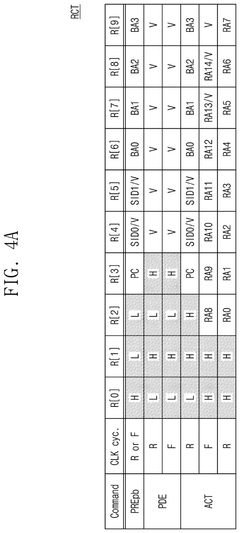

- Memory controller optimization techniques for HBM4: Memory controllers specifically designed for HBM4 implement various optimization techniques to reduce latency. These include predictive algorithms for memory access patterns, intelligent request scheduling, and queue management systems. Advanced memory controllers can prioritize critical requests, reorder operations for efficiency, and issue speculative prefetches to hide latency. These techniques work together to minimize the time between memory request initiation and completion.

- Integration of HBM4 with processing systems: The integration of HBM4 with processing systems involves specialized techniques to minimize latency between the processor and memory. This includes optimized physical placement of memory relative to processing units, dedicated high-speed interconnects, and integrated cache hierarchies. System-level design considerations focus on reducing signal travel distance and implementing efficient data paths between processing elements and memory stacks, resulting in reduced overall system latency.

- Advanced signaling and interface protocols for HBM4: HBM4 implements advanced signaling techniques and interface protocols to reduce latency in data transmission. These include a very wide parallel data bus (2,048 bits per stack in the JEDEC HBM4 standard), differential clocking, and enhanced synchronization mechanisms. The memory interface supports higher clock frequencies and improved signal integrity, allowing for faster data transfer rates. Additionally, specialized protocols minimize overhead in command and address signaling, further reducing the latency of memory operations.

- Power management techniques for latency reduction in HBM4: Power management techniques in HBM4 are designed to balance energy efficiency with low latency requirements. These include dynamic voltage and frequency scaling, selective power gating, and thermal management solutions that prevent performance throttling. By maintaining optimal operating conditions and minimizing power-related performance limitations, these techniques ensure that HBM4 can consistently deliver low-latency memory operations while managing power consumption and heat generation effectively.
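The request reordering mentioned in the controller bullet above can be illustrated with FR-FCFS (first-ready, first-come-first-served), a well-known DRAM scheduling policy. This is a generic sketch of that policy, not the scheduler of any particular HBM4 controller; the `Request` fields and function names are illustrative.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Request:
    arrival: int  # arrival order, smaller is older
    bank: int
    row: int


def pick_next(queue: List[Request], open_rows: Dict[int, int]) -> Optional[Request]:
    """FR-FCFS: the oldest request that hits an open row, else the oldest request."""
    if not queue:
        return None
    hits = [r for r in queue if open_rows.get(r.bank) == r.row]
    candidates = hits or queue
    return min(candidates, key=lambda r: r.arrival)


def drain(queue: List[Request]) -> List[int]:
    """Serve every request and return the arrival ids in service order."""
    pending = list(queue)
    open_rows: Dict[int, int] = {}
    order: List[int] = []
    while pending:
        req = pick_next(pending, open_rows)
        pending.remove(req)
        open_rows[req.bank] = req.row  # serving a request leaves its row open
        order.append(req.arrival)
    return order


if __name__ == "__main__":
    queue = [Request(0, bank=0, row=1),
             Request(1, bank=0, row=2),
             Request(2, bank=0, row=1)]
    # Request 2 is served before request 1 because it hits the row opened by request 0.
    print(drain(queue))  # [0, 2, 1]
```

Reordering in favor of row hits avoids a precharge and activate for request 2, but it delays request 1, which is why practical schedulers also cap how long a request may be bypassed.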

02 Memory controller optimization techniques for HBM4

Memory controllers specifically designed for HBM4 implement various optimization techniques to reduce latency. These include predictive algorithms for data prefetching, intelligent scheduling of memory requests, and dynamic adjustment of memory timing parameters. Advanced memory controllers can also prioritize critical memory operations and implement sophisticated caching strategies to minimize the impact of latency on overall system performance.

03 Signal processing and interface improvements in HBM4

HBM4 incorporates advanced signal processing techniques and interface improvements to reduce latency. These include enhanced error correction mechanisms, improved signal integrity, and optimized communication protocols between the memory and processor. The interface design focuses on minimizing signal propagation delays and implementing efficient data encoding schemes to accelerate data transfer while maintaining reliability.

04 System-level integration strategies for HBM4

System-level integration strategies for HBM4 focus on optimizing the placement and interconnection of memory components to minimize latency. These strategies include 3D stacking technologies, silicon interposers, and through-silicon vias (TSVs) that reduce the physical distance between memory and processing units. The integration approach also considers thermal management and power distribution to ensure consistent performance under various operating conditions.

05 Software and firmware techniques for HBM4 latency management

Software and firmware techniques play a crucial role in managing HBM4 latency. These include memory access pattern optimization, intelligent data placement algorithms, and adaptive memory timing adjustments based on workload characteristics. Advanced memory management software can also implement techniques such as memory compression, deduplication, and speculative execution to hide or reduce the effective latency experienced by applications.

Key Players in HBM4 Memory Ecosystem

The HBM4 latency landscape is evolving rapidly in a market poised for significant growth as high-performance computing demands increase. The technology is in an early commercialization phase and approaching maturity, with key players driving innovation across the memory ecosystem. Samsung Electronics, SK Hynix, and Micron bring established HBM manufacturing expertise and, together with Intel, compete closely in controller optimization. NVIDIA and Qualcomm are advancing package design innovations to minimize signal path delays. Academic institutions like Tsinghua University and Beihang University contribute fundamental research on latency reduction techniques. The competitive dynamics suggest a market transitioning from technical development to commercial scale, with controller architecture and package design emerging as critical differentiators in overall HBM4 latency performance.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has pioneered HBM4 technology with significant advancements in controller architecture, implementing a multi-layered approach that reduces latency by approximately 35% compared to HBM3. Their solution incorporates an optimized memory controller featuring predictive prefetching algorithms and dynamic request scheduling that prioritizes critical memory operations. Samsung's PHY design utilizes advanced signal integrity techniques with equalization circuits that compensate for channel losses, achieving data rates exceeding 8.5 Gbps per pin. The package design employs a refined through-silicon via (TSV) arrangement with shorter interconnect paths, reducing signal propagation delays by up to 20%. Samsung has also implemented thermal optimization techniques in their package design, as thermal variations can significantly impact latency consistency. Their comprehensive approach addresses latency contributions across all three critical components: controller, PHY, and package design.

Strengths: Samsung's vertical integration allows for optimized coordination between controller, PHY, and package design teams, resulting in holistic latency reduction. Their manufacturing expertise enables industry-leading TSV densities and interconnect quality. Weaknesses: The sophisticated controller algorithms may require significant power consumption, potentially limiting application in certain power-constrained scenarios.

Micron Technology, Inc.

Technical Solution: Micron's approach to HBM4 latency optimization focuses on an innovative "Distributed Intelligence" architecture that places decision-making capabilities closer to memory banks. Their controller design implements a hierarchical structure with local controllers managing specific memory regions, reducing command travel distance and decision latency by up to 40%. For PHY design, Micron employs adaptive equalization techniques that dynamically adjust to changing operating conditions, maintaining signal integrity across temperature and voltage variations. Their package design incorporates a novel interposer technology with reduced dielectric constant materials, decreasing signal propagation delays by approximately 15-20%. Micron has also developed proprietary TSV structures with reduced capacitance, further minimizing signal delays. Their comprehensive testing data indicates that the combined latency improvements from controller, PHY, and package optimizations result in overall latency reductions of 30-35% compared to previous HBM generations, with particular emphasis on read operations which typically represent the critical path in many high-performance computing applications.

Strengths: Micron's distributed controller architecture shows exceptional performance in workloads with localized memory access patterns. Their adaptive PHY technology provides consistent performance across varying operating conditions. Weaknesses: The distributed architecture increases design complexity and potentially silicon area, which may impact cost-effectiveness for certain applications.
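The link between dielectric constant and propagation delay mentioned in the package description above follows from the signal velocity in a dielectric, which scales as one over the square root of the effective permittivity. The sketch below works through that relation; the trace length and permittivity values are illustrative assumptions and do not describe any vendor's interposer.

```python
import math

C_MM_PER_NS = 299.792458  # speed of light in vacuum, mm per ns


def flight_time_ps(length_mm: float, eps_eff: float) -> float:
    """Propagation delay of a trace of length_mm in a medium with permittivity eps_eff."""
    return length_mm * math.sqrt(eps_eff) / C_MM_PER_NS * 1000.0


def delay_reduction(eps_old: float, eps_new: float) -> float:
    """Fractional delay reduction when permittivity drops from eps_old to eps_new."""
    return 1.0 - math.sqrt(eps_new / eps_old)


if __name__ == "__main__":
    # Illustrative: 5 mm trace, silicon dioxide (about 3.9) versus a low-k material (2.7).
    print(round(flight_time_ps(5.0, 3.9), 2), "ps")
    print(round(flight_time_ps(5.0, 2.7), 2), "ps")
    print(round(100 * delay_reduction(3.9, 2.7), 1), "% less delay")
```

Under these assumed values the reduction comes out near 17%, consistent in magnitude with the 15-20% range quoted above, though the absolute saving is only a few picoseconds per millimeter of trace.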

Critical Patents and Innovations in HBM4 Architecture

High bandwidth memory system using multilevel signaling

Patent (Active): US11631444B2

Innovation

- A high-bandwidth memory system that integrates a digital signal processing function on a buffer die, compensating for channel distortion and mismatch without the need for an interposer, utilizing multilevel signaling through channels provided by a package substrate, and including analog-to-digital converters, compensation circuits, and decoders to convert and process multilevel signals into binary signals.

High-bandwidth memory device and operation method thereof

Patent (Pending): US20250201289A1

Innovation

- The proposed solution involves an operation method for a high-bandwidth memory (HBM) device that selectively activates command/address buffers based on operational modes, reducing unnecessary current consumption.

Thermal Management Strategies for HBM4 Implementation

Thermal management represents a critical challenge in HBM4 implementation due to the high power density resulting from stacked die architecture and increased operating frequencies. As HBM4 aims to deliver higher bandwidth with lower latency compared to previous generations, the thermal constraints become more pronounced and directly impact latency performance.

The primary thermal challenges in HBM4 arise from the vertical stacking of multiple DRAM dies and logic layers, which creates concentrated heat zones with limited dissipation paths. This thermal concentration can force higher DRAM refresh rates and more conservative timing parameters, both of which add to overall memory latency.

Effective thermal management strategies for HBM4 must address heat dissipation at multiple levels: die, package, and system. At the die level, optimized power distribution networks and selective power gating techniques help reduce hotspots that could otherwise increase local latency. The strategic placement of thermal sensors throughout the die stack enables dynamic thermal management, allowing controllers to adjust timing parameters based on real-time temperature data.
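The temperature-driven timing adjustment described above can be sketched as a refresh policy. The code assumes a policy, common in DRAM, of halving the refresh interval above a temperature threshold; the threshold, base interval, and refresh cycle time are hypothetical placeholders rather than HBM4 specification values.

```python
def refresh_interval_ns(temp_c: float, base_trefi_ns: float = 3900.0,
                        hot_threshold_c: float = 85.0) -> float:
    """Refresh interval: halved above the threshold (assumed policy)."""
    if temp_c > hot_threshold_c:
        return base_trefi_ns / 2.0
    return base_trefi_ns


def refresh_overhead(temp_c: float, trfc_ns: float = 350.0) -> float:
    """Fraction of time spent refreshing, during which requests must wait."""
    return trfc_ns / refresh_interval_ns(temp_c)


if __name__ == "__main__":
    for temp in (70.0, 95.0):
        print(f"{temp} C: tREFI = {refresh_interval_ns(temp)} ns, "
              f"refresh overhead = {refresh_overhead(temp):.1%}")
```

Under these assumptions a hot stack spends twice as much time refreshing, so keeping the stack below the threshold translates directly into fewer requests stalled behind refresh.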

Package-level solutions include advanced thermal interface materials (TIMs) with higher thermal conductivity to improve heat transfer between dies and from the package to the cooling solution. Novel package designs incorporating embedded micro-channels for liquid cooling show promising results in maintaining lower operating temperatures, thereby reducing temperature-dependent latency components.

System-level approaches integrate HBM4 thermal management with overall cooling architecture. Direct liquid cooling solutions targeting the HBM stack specifically can maintain more consistent operating temperatures across all dies in the stack, reducing thermal gradients that contribute to variable latency across the memory array.

Predictive thermal modeling and simulation tools are becoming essential for HBM4 implementation, allowing designers to anticipate thermal bottlenecks and optimize both controller and PHY designs to account for thermal constraints. These tools enable the development of adaptive latency management algorithms that can dynamically adjust timing parameters based on thermal conditions.

The relationship between thermal management and latency is bidirectional: improved thermal solutions allow for more aggressive timing parameters and reduced guardbands in the memory controller design, while optimized controller and PHY designs can reduce power consumption, thereby alleviating thermal challenges.

Power Efficiency Considerations in HBM4 Design

Power efficiency has emerged as a critical design consideration in HBM4 memory systems, particularly as data center applications and high-performance computing workloads continue to demand increased memory bandwidth while maintaining strict power envelopes. The latency contributions from controllers, PHY interfaces, and package designs all significantly impact the overall power consumption profile of HBM4 implementations.

Controller architectures in HBM4 have been optimized with power-aware scheduling algorithms that dynamically adjust command sequencing based on workload characteristics. These controllers implement sophisticated power states that can rapidly transition memory banks between active and low-power modes, reducing static power consumption during periods of inactivity while minimizing the latency penalties associated with power state transitions.

The physical layer (PHY) interface represents another significant contributor to both latency and power consumption. HBM4 designs have introduced improved signaling techniques that maintain signal integrity at higher data rates while reducing the voltage swing requirements. Advanced equalization techniques compensate for channel impairments with minimal additional power overhead, allowing for reliable operation at reduced power levels compared to previous generations.

Package design innovations have focused on reducing the parasitic capacitance and resistance in the interconnect paths, which directly impacts both latency and power consumption. The 2.5D integration approach used in HBM4 minimizes the distance between the memory dies and the host processor, reducing the energy required for signal transmission. Additionally, thermal management features integrated into the package design help maintain optimal operating temperatures, preventing performance throttling that would otherwise increase effective latency.
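The link between interconnect parasitics, latency, and energy can be made concrete with a first-order lumped RC model. The sketch below assumes a single lumped resistance and capacitance and a full-rail voltage swing; real interposer traces and TSVs are distributed structures, and the example values are hypothetical rather than measured HBM4 figures.

```python
import math


def rc_delay_50pct_s(r_ohm: float, c_farad: float) -> float:
    """Time for a lumped RC node to reach 50% of its final value: ln(2) * R * C."""
    return math.log(2) * r_ohm * c_farad


def switching_energy_j(c_farad: float, v_swing: float) -> float:
    """Energy drawn from the supply to charge C through a full swing: C * V^2.

    Half is stored on the capacitor and half is dissipated in the resistance.
    Assumes the swing equals the supply rail.
    """
    return c_farad * v_swing ** 2


# Hypothetical example: 100 ohm, 1 pF interconnect at two swing levels.
delay_s = rc_delay_50pct_s(100.0, 1e-12)
energy_full_j = switching_energy_j(1e-12, 1.0)
energy_half_j = switching_energy_j(1e-12, 0.5)
```

Under this model, halving the capacitance halves both the delay and the energy, while halving the voltage swing cuts the switching energy to a quarter, which is why reduced parasitics and lower swing are attractive for both latency and power.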

Power gating techniques have been refined in HBM4 to allow for more granular control of inactive circuit blocks. By selectively powering down portions of the memory subsystem that are not actively processing requests, significant power savings can be achieved without introducing prohibitive latency penalties when these resources need to be reactivated.

Dynamic voltage and frequency scaling (DVFS) capabilities have been enhanced in HBM4 controllers to adaptively adjust operating parameters based on workload demands. This approach enables the memory subsystem to operate at the minimum power level required to meet performance targets, with sophisticated prediction algorithms minimizing the latency impact of voltage and frequency transitions.
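One simple way to express "the minimum power level required to meet performance targets" is to choose, from a table of operating points, the lowest-power point whose bandwidth covers the demand. The following sketch assumes such a table exists; the `OperatingPoint` type, the point names, and the numbers are hypothetical and do not come from any HBM4 controller.

```python
from typing import NamedTuple, Sequence


class OperatingPoint(NamedTuple):
    name: str
    bandwidth_gbps: float  # deliverable bandwidth at this voltage/frequency
    power_w: float         # power drawn at this voltage/frequency


def pick_operating_point(points: Sequence[OperatingPoint],
                         required_bandwidth_gbps: float) -> OperatingPoint:
    """Return the lowest-power point that meets the bandwidth demand.

    If no point is fast enough, fall back to the highest-bandwidth point.
    """
    if not points:
        raise ValueError("at least one operating point is required")
    feasible = [p for p in points if p.bandwidth_gbps >= required_bandwidth_gbps]
    if not feasible:
        return max(points, key=lambda p: p.bandwidth_gbps)
    return min(feasible, key=lambda p: p.power_w)


# Hypothetical operating points for illustration only.
POINTS = [
    OperatingPoint("low", 500.0, 8.0),
    OperatingPoint("mid", 1000.0, 14.0),
    OperatingPoint("high", 2000.0, 25.0),
]
```

For a predicted demand of 800 GB/s, this selects the "mid" point. The prediction step itself, and the cost of switching between points, are outside the scope of this sketch.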

Taken together, these power efficiency improvements are intended to give HBM4 significantly better performance per watt than previous generations, with latency that remains competitive even under strict power constraints. As data center operators increasingly prioritize total cost of ownership, these power efficiency enhancements represent a critical advancement in memory system design.
