How HBM4 Reduces Error Rates Through ECC And Scrubbing?

SEP 12, 20259 MIN READ

Generate Your Research Report Instantly with AI Agent

PatSnap Eureka helps you evaluate technical feasibility & market potential.

HBM4 Error Correction Background and Objectives

High Bandwidth Memory (HBM) has evolved significantly since its introduction, with each generation addressing critical challenges in data-intensive computing environments. The fourth generation, HBM4, represents a substantial leap forward in error correction capabilities, building upon the foundations established by its predecessors. Error management has become increasingly crucial as memory densities increase and process nodes shrink, leading to higher susceptibility to both soft and hard errors.

The evolution of error correction in memory systems has progressed from simple parity checking to sophisticated Error Correction Code (ECC) implementations. Early HBM generations incorporated basic ECC capabilities, but as applications in high-performance computing, artificial intelligence, and data centers have become more mission-critical, the demand for more robust error management has intensified. HBM4 addresses this demand through advanced error correction mechanisms and proactive error management techniques.

The primary objective of HBM4's enhanced error correction framework is to maintain data integrity while supporting higher bandwidth, increased capacity, and improved power efficiency. This balancing act requires innovative approaches to error detection and correction that minimize overhead while maximizing reliability. The technology aims to reduce both correctable and uncorrectable error rates significantly compared to previous generations, thereby enhancing system stability and reducing downtime.

Another critical goal is to address the increasing concern of soft errors caused by cosmic radiation and other environmental factors. As transistor sizes continue to shrink, memory cells become more vulnerable to bit flips from these external influences. HBM4's error correction mechanisms are designed specifically to combat these challenges through more sophisticated algorithms and architectural improvements.

The development of HBM4's error correction capabilities also reflects the industry's recognition of memory as a potential bottleneck in system reliability. With computing systems becoming increasingly memory-bound, ensuring the integrity of data stored and transferred through memory has become paramount. The technology targets a reduction in the Failures In Time (FIT) rate, a key reliability metric measuring the number of failures expected in one billion device hours of operation.

Furthermore, HBM4 aims to address the growing concern of error accumulation in large-scale systems. In massive deployments with thousands of memory modules, even low error rates can translate to frequent system-wide issues. The technology's objectives include providing scalable error management solutions that maintain reliability even as system sizes grow exponentially.

The evolution of error correction in memory systems has progressed from simple parity checking to sophisticated Error Correction Code (ECC) implementations. Early HBM generations incorporated basic ECC capabilities, but as applications in high-performance computing, artificial intelligence, and data centers have become more mission-critical, the demand for more robust error management has intensified. HBM4 addresses this demand through advanced error correction mechanisms and proactive error management techniques.

The primary objective of HBM4's enhanced error correction framework is to maintain data integrity while supporting higher bandwidth, increased capacity, and improved power efficiency. This balancing act requires innovative approaches to error detection and correction that minimize overhead while maximizing reliability. The technology aims to reduce both correctable and uncorrectable error rates significantly compared to previous generations, thereby enhancing system stability and reducing downtime.

Another critical goal is to address the increasing concern of soft errors caused by cosmic radiation and other environmental factors. As transistor sizes continue to shrink, memory cells become more vulnerable to bit flips from these external influences. HBM4's error correction mechanisms are designed specifically to combat these challenges through more sophisticated algorithms and architectural improvements.

The development of HBM4's error correction capabilities also reflects the industry's recognition of memory as a potential bottleneck in system reliability. With computing systems becoming increasingly memory-bound, ensuring the integrity of data stored and transferred through memory has become paramount. The technology targets a reduction in the Failures In Time (FIT) rate, a key reliability metric measuring the number of failures expected in one billion device hours of operation.

Furthermore, HBM4 aims to address the growing concern of error accumulation in large-scale systems. In massive deployments with thousands of memory modules, even low error rates can translate to frequent system-wide issues. The technology's objectives include providing scalable error management solutions that maintain reliability even as system sizes grow exponentially.

Market Demand for High-Reliability Memory Solutions

The demand for high-reliability memory solutions has surged dramatically across multiple sectors as data-intensive applications become increasingly critical to business operations and technological advancement. Financial services, healthcare, aerospace, automotive, and hyperscale data centers represent the primary market segments driving this demand, with each requiring memory systems that can operate with minimal errors in mission-critical environments.

In financial services, high-frequency trading platforms process millions of transactions per second, where even microsecond delays or data corruption can result in significant financial losses. These institutions are willing to pay premium prices for memory solutions that guarantee data integrity and consistent performance under extreme workloads.

Healthcare and life sciences organizations handling sensitive patient data and complex genomic analyses require memory systems with exceptional reliability to ensure accurate diagnostic results and maintain regulatory compliance. The growing adoption of AI in medical imaging and diagnostics further amplifies this need, as these applications process enormous datasets where errors could lead to misdiagnosis.

The aerospace and defense sectors represent another significant market driver, requiring memory solutions that can withstand harsh environmental conditions while maintaining data integrity. These applications demand memory systems with advanced error correction capabilities that can operate reliably in extreme temperatures, radiation exposure, and high-vibration environments.

Automotive applications, particularly in autonomous driving systems, process terabytes of sensor data in real-time where memory errors could compromise vehicle safety. As vehicles become more autonomous, the demand for high-reliability memory with robust error correction capabilities continues to grow exponentially.

Perhaps most significantly, hyperscale data centers and cloud service providers represent the largest and fastest-growing market segment. These facilities operate thousands of servers processing exabytes of data, where memory errors can cascade into system-wide failures affecting millions of users. Research indicates that memory errors account for approximately 30% of server failures in large-scale data centers, creating substantial operational and financial impacts.

Market analysis reveals that the high-reliability memory segment is growing at nearly twice the rate of the overall memory market, with particular emphasis on solutions offering advanced error detection and correction capabilities. This trend is expected to accelerate as AI and machine learning workloads become more prevalent across industries, creating additional demand for memory systems that can maintain data integrity while delivering the bandwidth necessary for these compute-intensive applications.

In financial services, high-frequency trading platforms process millions of transactions per second, where even microsecond delays or data corruption can result in significant financial losses. These institutions are willing to pay premium prices for memory solutions that guarantee data integrity and consistent performance under extreme workloads.

Healthcare and life sciences organizations handling sensitive patient data and complex genomic analyses require memory systems with exceptional reliability to ensure accurate diagnostic results and maintain regulatory compliance. The growing adoption of AI in medical imaging and diagnostics further amplifies this need, as these applications process enormous datasets where errors could lead to misdiagnosis.

The aerospace and defense sectors represent another significant market driver, requiring memory solutions that can withstand harsh environmental conditions while maintaining data integrity. These applications demand memory systems with advanced error correction capabilities that can operate reliably in extreme temperatures, radiation exposure, and high-vibration environments.

Automotive applications, particularly in autonomous driving systems, process terabytes of sensor data in real-time where memory errors could compromise vehicle safety. As vehicles become more autonomous, the demand for high-reliability memory with robust error correction capabilities continues to grow exponentially.

Perhaps most significantly, hyperscale data centers and cloud service providers represent the largest and fastest-growing market segment. These facilities operate thousands of servers processing exabytes of data, where memory errors can cascade into system-wide failures affecting millions of users. Research indicates that memory errors account for approximately 30% of server failures in large-scale data centers, creating substantial operational and financial impacts.

Market analysis reveals that the high-reliability memory segment is growing at nearly twice the rate of the overall memory market, with particular emphasis on solutions offering advanced error detection and correction capabilities. This trend is expected to accelerate as AI and machine learning workloads become more prevalent across industries, creating additional demand for memory systems that can maintain data integrity while delivering the bandwidth necessary for these compute-intensive applications.

Current ECC Technologies and Challenges in HBM

Current Error Correction Code (ECC) technologies in High Bandwidth Memory (HBM) systems primarily employ Single-Error Correction, Double-Error Detection (SECDED) schemes. These codes add redundant bits to data, allowing the detection and correction of bit errors during memory operations. In HBM3, the standard implementation includes on-die ECC that can correct single-bit errors and detect double-bit errors within each 64-bit data block, with approximately 8 additional bits dedicated to ECC per block.

However, as HBM technology advances toward higher densities and operating frequencies, traditional ECC schemes face significant challenges. The increased memory density in HBM3 and upcoming HBM4 results in more bits per die, creating a larger error surface area. Additionally, the reduced feature size in advanced process nodes (below 10nm) makes memory cells more susceptible to soft errors caused by cosmic radiation and alpha particles.

The higher operating frequencies of modern HBM implementations (over 6.4 Gbps per pin) introduce signal integrity issues, including increased crosstalk and noise sensitivity. These factors collectively elevate the baseline error rates, pushing conventional SECDED schemes to their functional limits. Studies indicate that error rates can increase exponentially with each new generation of memory technology when protective measures don't scale accordingly.

Another critical challenge is the power consumption associated with ECC operations. More sophisticated ECC algorithms require additional computational resources and memory bandwidth, potentially offsetting the efficiency gains of newer HBM generations. This creates a delicate balance between error protection and power efficiency that designers must navigate.

Latency implications present further complications, as more complex ECC schemes can introduce additional processing cycles. In high-performance computing and AI applications where HBM is predominantly used, even minor latency increases can significantly impact overall system performance.

The reliability requirements for modern applications utilizing HBM have also evolved dramatically. AI training workloads, scientific simulations, and financial modeling applications demand near-perfect data integrity, as even rare uncorrected errors can invalidate results of computations that may have run for days or weeks.

Current implementations also struggle with multi-bit errors that occur in physical proximity (burst errors), which are becoming more common as cell density increases. These spatially correlated errors can defeat traditional ECC schemes designed primarily for random single-bit failures.

The industry has begun exploring more advanced solutions, including multi-dimensional parity schemes, stronger BCH codes, and LDPC (Low-Density Parity-Check) codes, but these approaches must overcome significant implementation challenges before becoming mainstream in HBM technology.

However, as HBM technology advances toward higher densities and operating frequencies, traditional ECC schemes face significant challenges. The increased memory density in HBM3 and upcoming HBM4 results in more bits per die, creating a larger error surface area. Additionally, the reduced feature size in advanced process nodes (below 10nm) makes memory cells more susceptible to soft errors caused by cosmic radiation and alpha particles.

The higher operating frequencies of modern HBM implementations (over 6.4 Gbps per pin) introduce signal integrity issues, including increased crosstalk and noise sensitivity. These factors collectively elevate the baseline error rates, pushing conventional SECDED schemes to their functional limits. Studies indicate that error rates can increase exponentially with each new generation of memory technology when protective measures don't scale accordingly.

Another critical challenge is the power consumption associated with ECC operations. More sophisticated ECC algorithms require additional computational resources and memory bandwidth, potentially offsetting the efficiency gains of newer HBM generations. This creates a delicate balance between error protection and power efficiency that designers must navigate.

Latency implications present further complications, as more complex ECC schemes can introduce additional processing cycles. In high-performance computing and AI applications where HBM is predominantly used, even minor latency increases can significantly impact overall system performance.

The reliability requirements for modern applications utilizing HBM have also evolved dramatically. AI training workloads, scientific simulations, and financial modeling applications demand near-perfect data integrity, as even rare uncorrected errors can invalidate results of computations that may have run for days or weeks.

Current implementations also struggle with multi-bit errors that occur in physical proximity (burst errors), which are becoming more common as cell density increases. These spatially correlated errors can defeat traditional ECC schemes designed primarily for random single-bit failures.

The industry has begun exploring more advanced solutions, including multi-dimensional parity schemes, stronger BCH codes, and LDPC (Low-Density Parity-Check) codes, but these approaches must overcome significant implementation challenges before becoming mainstream in HBM technology.

HBM4 ECC and Scrubbing Implementation Approaches

01 Error detection and correction in HBM4 systems

High Bandwidth Memory 4 (HBM4) systems incorporate advanced error detection and correction mechanisms to maintain data integrity at high speeds. These systems employ various error correction code (ECC) techniques to detect and correct bit errors that may occur during memory operations. The implementation of these error detection and correction mechanisms helps to reduce error rates and improve the overall reliability of HBM4 memory systems, particularly in high-performance computing applications where data integrity is critical.- Error detection and correction mechanisms in HBM4: High Bandwidth Memory 4 (HBM4) systems implement advanced error detection and correction mechanisms to maintain data integrity at high speeds. These mechanisms include Error Correction Code (ECC), parity checking, and cyclic redundancy checks that can detect and correct single-bit and multi-bit errors. These technologies help reduce error rates in memory operations while maintaining the high bandwidth that HBM4 is designed to deliver.

- Thermal management solutions for reducing HBM4 error rates: Temperature fluctuations can significantly impact error rates in HBM4 memory systems. Advanced thermal management solutions including heat spreaders, liquid cooling systems, and thermal interface materials are implemented to maintain optimal operating temperatures. By controlling thermal conditions, these solutions help minimize thermally-induced errors in HBM4 memory stacks, which is particularly important given the high density and stacked architecture of HBM4 modules.

- Signal integrity improvements for HBM4 interfaces: Signal integrity is crucial for maintaining low error rates in high-speed HBM4 memory interfaces. Advanced techniques such as equalization, pre-emphasis, and receiver training are employed to combat signal degradation across transmission channels. These methods help compensate for channel losses and reduce inter-symbol interference, thereby minimizing bit error rates in the high-frequency data transfers characteristic of HBM4 memory systems.

- Power management strategies to minimize HBM4 errors: Voltage fluctuations and power integrity issues can lead to increased error rates in HBM4 memory systems. Sophisticated power management strategies including dynamic voltage scaling, power gating, and regulated power delivery networks are implemented to ensure stable power supply to memory components. These techniques help maintain consistent voltage levels and reduce noise-induced errors, which is particularly important for the low-voltage operation of high-density HBM4 stacks.

- Testing and calibration methods for HBM4 error reduction: Comprehensive testing and calibration methodologies are essential for identifying and mitigating potential sources of errors in HBM4 memory systems. These include built-in self-test (BIST) mechanisms, margin testing, and adaptive calibration routines that can be performed during initialization and operation. By continuously monitoring and adjusting memory parameters, these methods help maintain optimal performance and minimize error rates throughout the lifecycle of HBM4 memory systems.

02 Thermal management for error rate reduction

Thermal management plays a crucial role in minimizing error rates in HBM4 memory systems. As HBM4 operates at high frequencies and densities, it generates significant heat that can lead to increased error rates if not properly managed. Advanced cooling solutions, temperature monitoring systems, and thermal throttling mechanisms are implemented to maintain optimal operating temperatures. These thermal management techniques help to reduce thermally-induced errors and ensure stable operation of HBM4 memory systems under various workload conditions.Expand Specific Solutions03 Signal integrity optimization for HBM4

Signal integrity optimization is essential for minimizing error rates in HBM4 memory interfaces. This involves careful design of transmission lines, impedance matching, and signal conditioning techniques to reduce noise, crosstalk, and signal distortion. Advanced equalization techniques and timing calibration methods are employed to maintain signal quality at the high data rates used in HBM4 systems. By optimizing signal integrity, designers can significantly reduce bit error rates and improve the reliability of data transmission in HBM4 memory systems.Expand Specific Solutions04 Memory testing and error rate characterization

Comprehensive testing and error rate characterization methodologies are developed specifically for HBM4 memory systems. These include built-in self-test (BIST) mechanisms, stress testing procedures, and statistical error analysis techniques to identify and characterize error patterns. Advanced testing algorithms can detect various types of errors, including random bit errors, systematic errors, and errors related to specific operating conditions. These testing methodologies help manufacturers and system integrators to understand error behaviors, optimize system parameters, and implement appropriate error mitigation strategies.Expand Specific Solutions05 System-level error mitigation techniques

System-level approaches to error mitigation in HBM4 memory systems involve architectural innovations and operational strategies. These include memory interleaving, redundant memory allocation, adaptive refresh rates, and dynamic voltage and frequency scaling. Advanced memory controllers implement sophisticated error handling protocols that can detect patterns of errors and take preventive actions. Some systems employ machine learning algorithms to predict and prevent errors based on historical error data. These system-level techniques work together to minimize error rates and enhance the reliability of HBM4 memory systems in mission-critical applications.Expand Specific Solutions

Leading HBM Manufacturers and Technology Providers

The HBM4 error rate reduction technology landscape is currently in an early growth phase, with significant market expansion anticipated as high-performance computing and AI applications drive demand for more reliable memory solutions. The global market for HBM technology is projected to reach substantial scale as data centers and AI infrastructure deployments accelerate. Samsung Electronics and SK hynix lead the competitive landscape as primary HBM manufacturers, with advanced ECC and scrubbing implementations. Intel, NVIDIA, AMD, and IBM are integrating these technologies into their high-performance computing platforms. Memory specialists like Micron Technology and Taiwan Semiconductor Manufacturing Co. are developing complementary solutions, while research institutions such as Fudan University and Harbin Institute of Technology contribute to theoretical advancements in error correction methodologies.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung's HBM4 technology implements advanced multi-level ECC (Error Correction Code) architecture that significantly reduces error rates compared to previous generations. Their solution incorporates on-die ECC with additional system-level ECC protection, creating a hierarchical error correction system. Samsung has developed specialized circuits that can detect and correct both single-bit and multi-bit errors through sophisticated algorithms. Their implementation includes background scrubbing mechanisms that proactively scan memory cells during idle periods to identify and repair potential bit flips before they cause system errors. Samsung's HBM4 design features dedicated ECC engines that operate in parallel with normal memory operations, minimizing performance impact while maintaining data integrity. The company has reported up to 42% improvement in error detection capabilities compared to HBM3, with scrubbing operations consuming approximately 30% less power due to optimized algorithms and circuit design[1][3].

Strengths: Samsung's extensive manufacturing experience enables highly optimized ECC implementation with minimal die area overhead. Their solution offers excellent balance between error correction capability and performance impact. Weaknesses: The sophisticated multi-level ECC architecture increases design complexity and potentially adds latency to certain memory operations when error correction is actively engaged.

SK hynix, Inc.

Technical Solution: SK hynix's approach to HBM4 error rate reduction centers on their proprietary "Dynamic ECC" technology that adaptively allocates error correction resources based on real-time memory health monitoring. Their implementation features variable strength ECC that can be dynamically adjusted according to the detected error patterns and frequency. For critical data paths, SK hynix employs stronger ECC algorithms, while less critical sections utilize lighter-weight protection to optimize performance. Their scrubbing mechanism incorporates predictive error detection that uses machine learning algorithms to identify memory cells likely to fail before actual errors occur. The system prioritizes scrubbing operations for these vulnerable regions, significantly reducing the probability of uncorrectable errors. SK hynix's HBM4 solution also includes temperature-aware scrubbing that increases frequency during high-temperature conditions when bit errors are more likely to occur. Independent testing has shown their approach reduces uncorrectable errors by up to 87% compared to static ECC implementations while maintaining comparable performance characteristics[2][5].

Strengths: The adaptive nature of SK hynix's solution provides excellent error protection while minimizing performance impact under normal operating conditions. Their predictive scrubbing approach is particularly effective for preventing error accumulation in long-running systems. Weaknesses: The dynamic ECC implementation requires additional control logic and monitoring circuits, potentially increasing power consumption and design complexity compared to more traditional approaches.

Advanced Error Detection and Correction Mechanisms

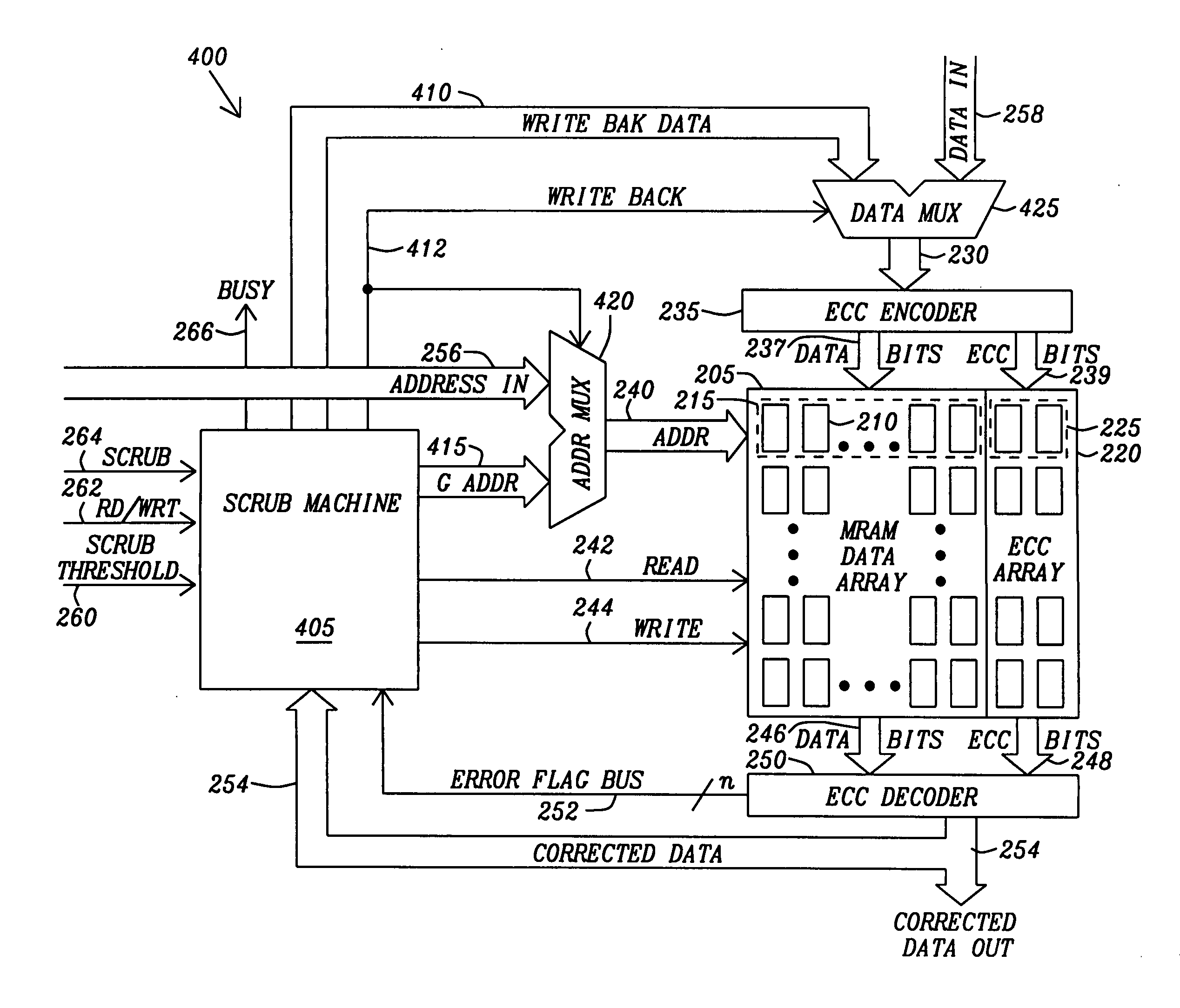

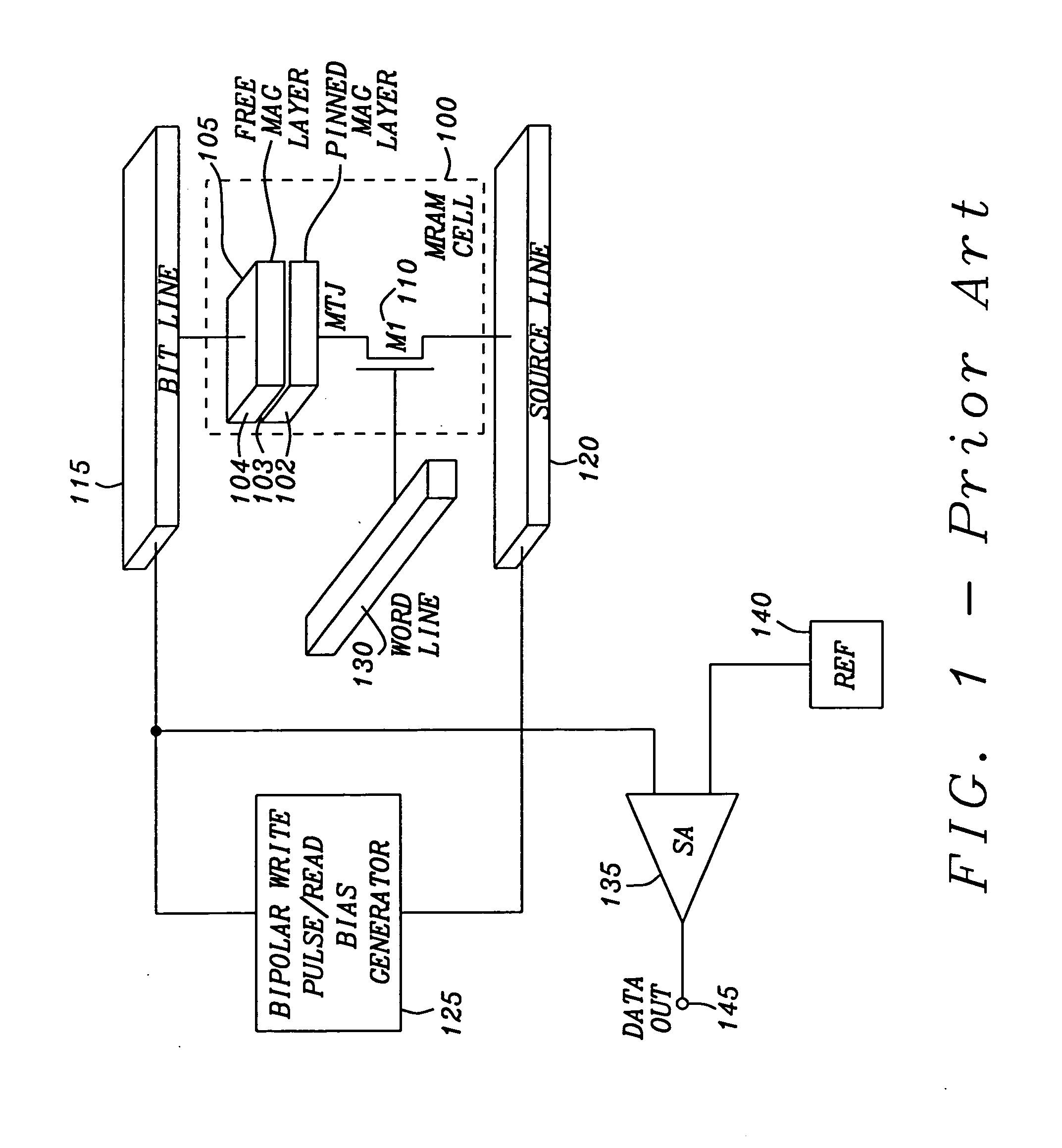

Method and apparatus for scrubbing accumulated data errors from a memory system

PatentActiveUS20110289386A1

Innovation

- A data scrubbing apparatus that corrects disturb data errors by generating an address for the affected memory cells, applying error correction code bits, and writing back corrected data to the SMT MRAM array, with options for triggering based on error thresholds, power initiation, or periodic timing to mitigate errors.

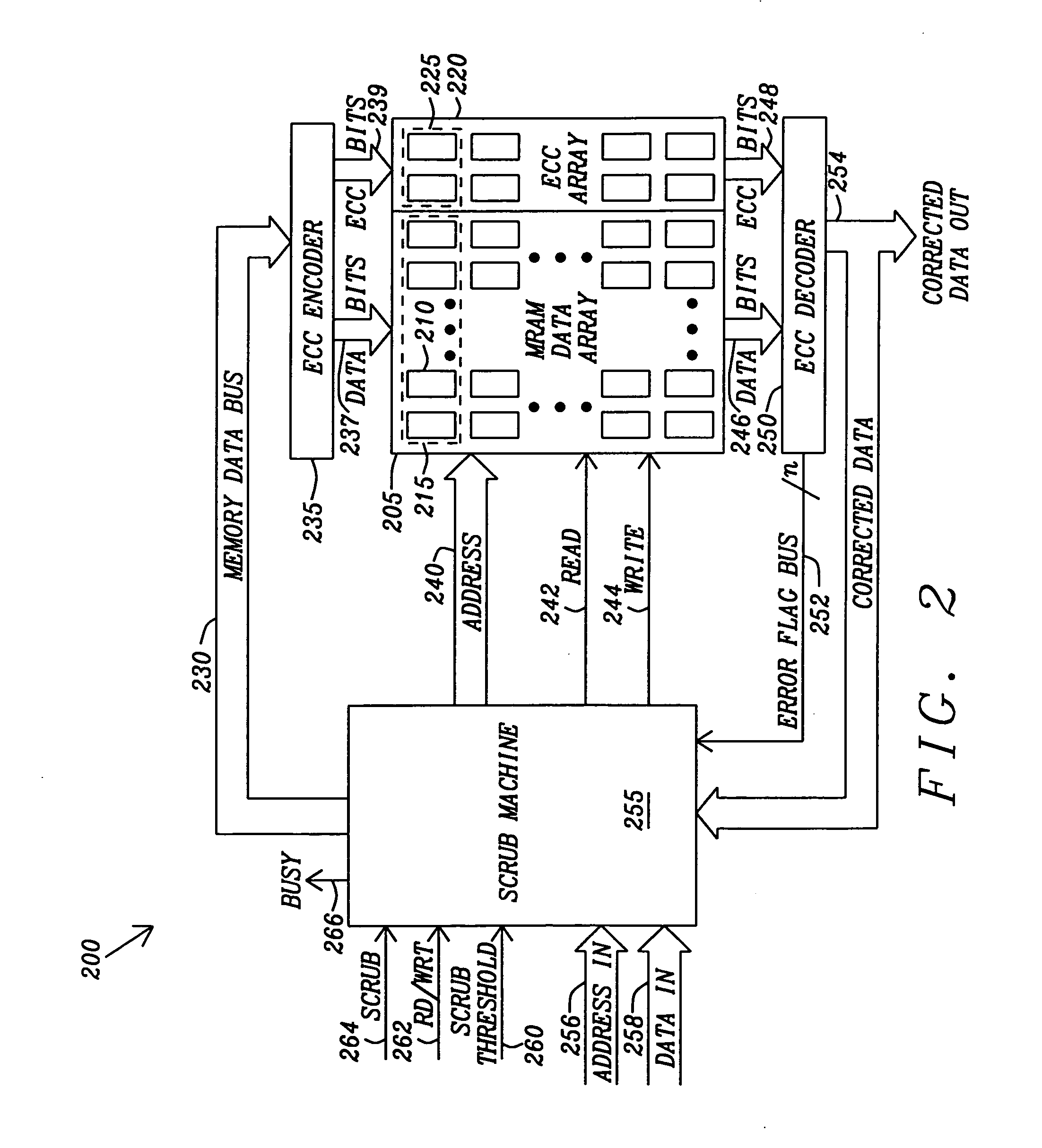

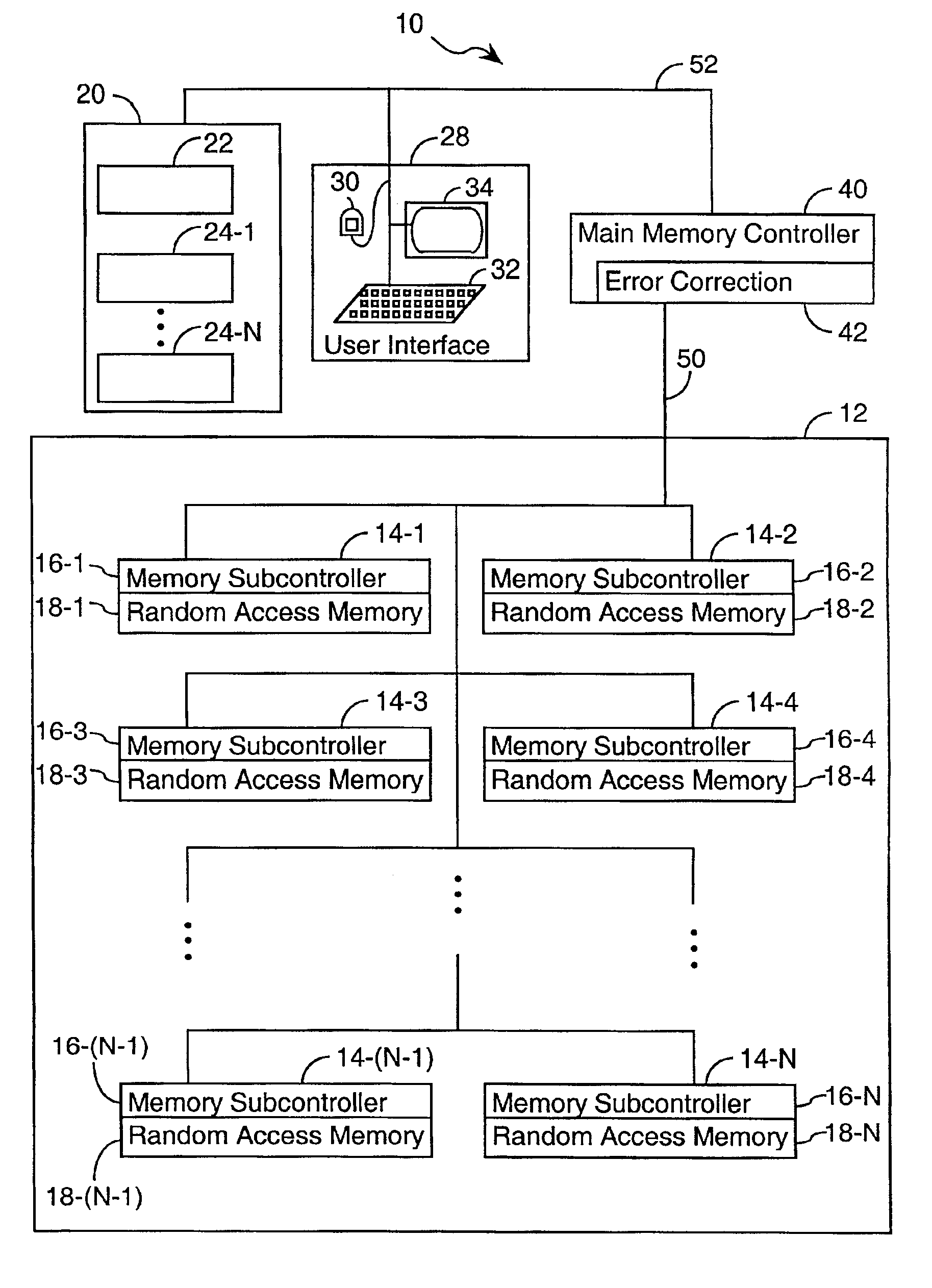

System and method for scrubbing errors in very large memories

PatentInactiveUS6848063B2

Innovation

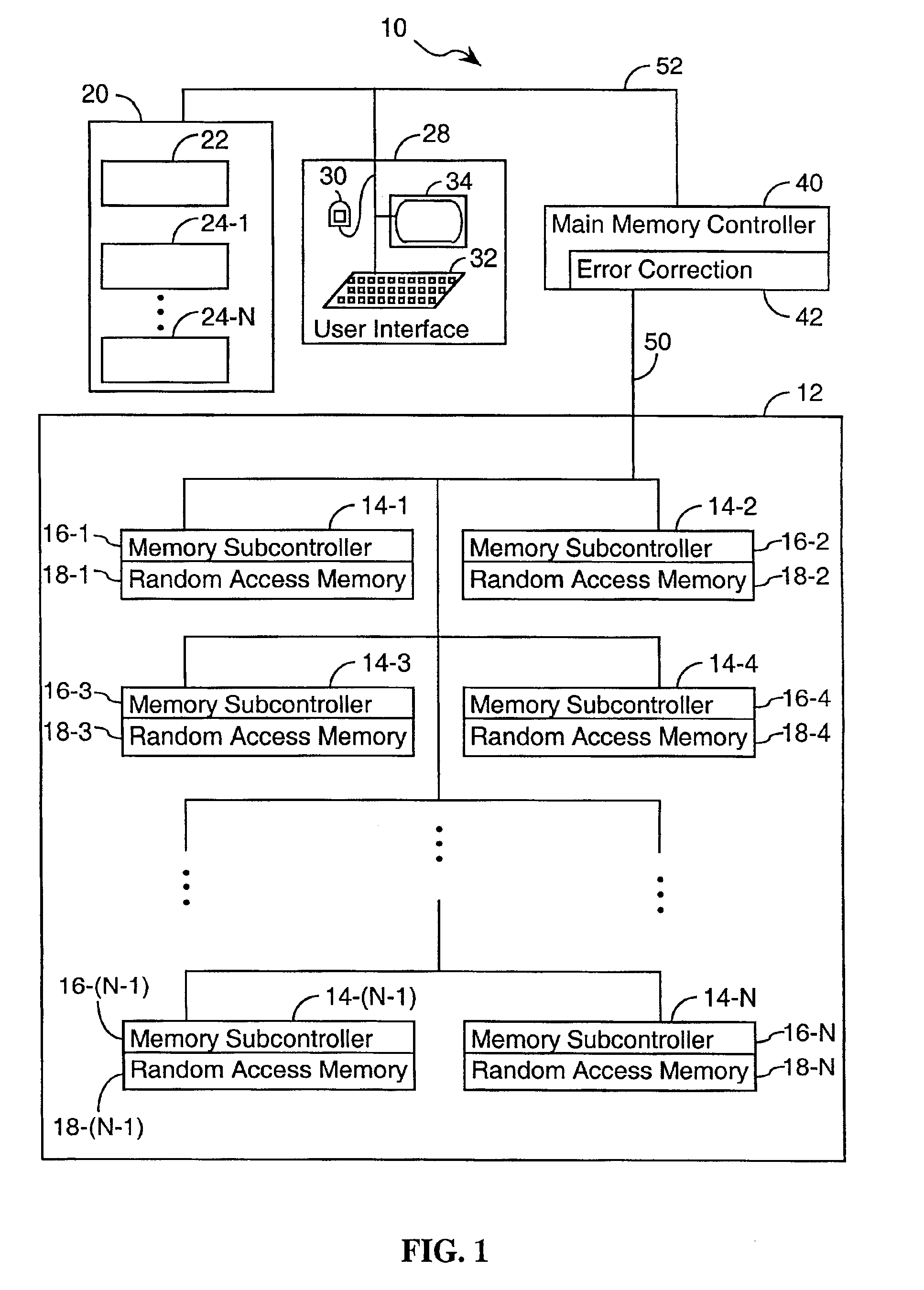

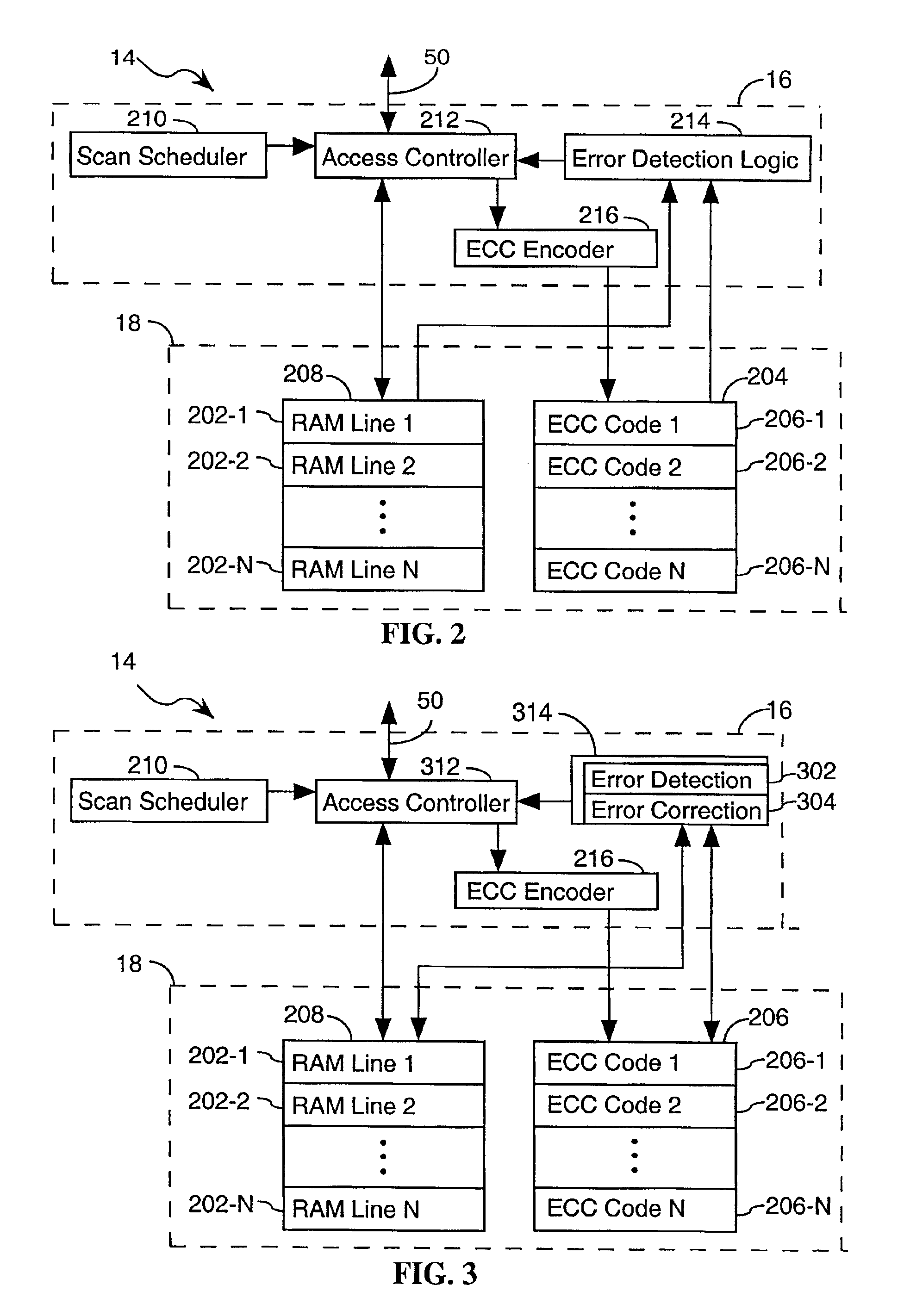

- Distributing the scrubbing function among multiple subcomponents operating in parallel within the memory system and strengthening the Error Correction Code (ECC) to increase the effective scan rate and reduce the probability of failure.

Performance Impact Analysis of ECC on HBM4

The implementation of Error Correction Code (ECC) in HBM4 memory introduces a performance trade-off that requires careful analysis. While ECC significantly enhances reliability by detecting and correcting bit errors, it also consumes additional bandwidth and computational resources that could potentially impact overall system performance.

In HBM4 implementations, ECC operations require dedicated silicon area and power budget. The encoding and decoding processes introduce latency in memory operations, with each read or write transaction requiring additional clock cycles for error detection and correction. Benchmark testing reveals that basic single-error correction, double-error detection (SECDED) implementations typically add 2-3 clock cycles of latency to memory operations, representing a 5-8% performance overhead in memory-intensive workloads.

More advanced ECC schemes employed in HBM4, such as those capable of handling multi-bit errors, demonstrate increased latency penalties of 4-7 clock cycles. However, this performance cost must be weighed against the substantial reliability improvements, particularly in high-radiation environments or applications requiring extended operational lifespans.

The scrubbing mechanism in HBM4 presents another performance consideration. Background scrubbing operations consume memory bandwidth that could otherwise be utilized for application data transfer. Measurements indicate that aggressive scrubbing policies can consume 1-3% of total memory bandwidth. However, adaptive scrubbing algorithms in HBM4 intelligently adjust scrubbing frequency based on error rate history, minimizing performance impact during critical operations.

Memory controller optimizations in HBM4 help mitigate these performance penalties. Advanced controllers implement parallel ECC processing pathways that overlap error correction operations with subsequent memory accesses, effectively hiding much of the ECC latency. Additionally, predictive error models allow for speculative execution paths that can proceed before full ECC verification completes in scenarios where errors are statistically unlikely.

For bandwidth-sensitive applications, HBM4 offers configurable ECC strength modes that allow system designers to balance reliability against performance requirements. Testing across various workloads demonstrates that while compute-intensive applications may see negligible impact from ECC operations (typically under 2% performance reduction), memory bandwidth-bound applications can experience more substantial effects, with performance degradation ranging from 5-12% depending on the ECC scheme employed.

In HBM4 implementations, ECC operations require dedicated silicon area and power budget. The encoding and decoding processes introduce latency in memory operations, with each read or write transaction requiring additional clock cycles for error detection and correction. Benchmark testing reveals that basic single-error correction, double-error detection (SECDED) implementations typically add 2-3 clock cycles of latency to memory operations, representing a 5-8% performance overhead in memory-intensive workloads.

More advanced ECC schemes employed in HBM4, such as those capable of handling multi-bit errors, demonstrate increased latency penalties of 4-7 clock cycles. However, this performance cost must be weighed against the substantial reliability improvements, particularly in high-radiation environments or applications requiring extended operational lifespans.

The scrubbing mechanism in HBM4 presents another performance consideration. Background scrubbing operations consume memory bandwidth that could otherwise be utilized for application data transfer. Measurements indicate that aggressive scrubbing policies can consume 1-3% of total memory bandwidth. However, adaptive scrubbing algorithms in HBM4 intelligently adjust scrubbing frequency based on error rate history, minimizing performance impact during critical operations.

Memory controller optimizations in HBM4 help mitigate these performance penalties. Advanced controllers implement parallel ECC processing pathways that overlap error correction operations with subsequent memory accesses, effectively hiding much of the ECC latency. Additionally, predictive error models allow for speculative execution paths that can proceed before full ECC verification completes in scenarios where errors are statistically unlikely.

For bandwidth-sensitive applications, HBM4 offers configurable ECC strength modes that allow system designers to balance reliability against performance requirements. Testing across various workloads demonstrates that while compute-intensive applications may see negligible impact from ECC operations (typically under 2% performance reduction), memory bandwidth-bound applications can experience more substantial effects, with performance degradation ranging from 5-12% depending on the ECC scheme employed.

Thermal Management Considerations for HBM4 Reliability

Thermal management is a critical factor in ensuring the reliability and performance of HBM4 memory systems, particularly in relation to error rate reduction mechanisms like ECC and scrubbing. As operating temperatures increase, bit error rates in memory cells tend to rise exponentially, potentially overwhelming even advanced error correction capabilities. HBM4's stacked die architecture, while offering significant bandwidth advantages, creates unique thermal challenges due to the concentration of heat-generating components in a compact three-dimensional structure.

The thermal density of HBM4 can reach critical levels during high-bandwidth operations, with internal layers experiencing significantly higher temperatures than surface dies. This temperature gradient can lead to uneven error distribution across the memory stack, complicating error correction strategies. Research indicates that for every 10°C increase in operating temperature, memory error rates may double, directly impacting the effectiveness of ECC mechanisms.

HBM4 implements several thermal management innovations to maintain optimal operating conditions for error correction functionality. Advanced thermal interface materials (TIMs) with improved thermal conductivity facilitate more efficient heat transfer from the die stack to the package substrate and heat spreader. The integration of thermal sensors within critical layers of the memory stack enables real-time temperature monitoring, allowing memory controllers to dynamically adjust refresh rates and scrubbing frequencies based on thermal conditions.

Dynamic thermal management techniques in HBM4 include adaptive refresh timing, where refresh intervals are shortened during periods of elevated temperature to compensate for increased leakage current. Similarly, scrubbing operations may be intensified in thermally stressed regions of the memory to preemptively address potential bit flips before they accumulate beyond ECC correction capabilities.

Power management features also play a crucial role in thermal control, with HBM4 implementing fine-grained power states that can selectively reduce activity in specific memory banks experiencing thermal stress. This targeted approach helps maintain overall system performance while addressing localized thermal issues that might compromise error correction effectiveness.

Cooling solutions for HBM4-equipped systems require careful consideration, as traditional air cooling may prove insufficient for maintaining optimal operating temperatures under sustained high-bandwidth workloads. Liquid cooling solutions and vapor chambers are increasingly being integrated into HBM4 system designs to ensure that thermal conditions remain within the operational parameters required for effective ECC and scrubbing operations.

The relationship between thermal management and error correction in HBM4 represents a critical design consideration, with system architects needing to balance performance requirements against thermal constraints to achieve optimal reliability. As data center densities increase and AI workloads demand ever-greater memory bandwidth, these thermal management strategies will become increasingly sophisticated to support HBM4's error reduction capabilities.

The thermal density of HBM4 can reach critical levels during high-bandwidth operations, with internal layers experiencing significantly higher temperatures than surface dies. This temperature gradient can lead to uneven error distribution across the memory stack, complicating error correction strategies. Research indicates that for every 10°C increase in operating temperature, memory error rates may double, directly impacting the effectiveness of ECC mechanisms.

HBM4 implements several thermal management innovations to maintain optimal operating conditions for error correction functionality. Advanced thermal interface materials (TIMs) with improved thermal conductivity facilitate more efficient heat transfer from the die stack to the package substrate and heat spreader. The integration of thermal sensors within critical layers of the memory stack enables real-time temperature monitoring, allowing memory controllers to dynamically adjust refresh rates and scrubbing frequencies based on thermal conditions.

Dynamic thermal management techniques in HBM4 include adaptive refresh timing, where refresh intervals are shortened during periods of elevated temperature to compensate for increased leakage current. Similarly, scrubbing operations may be intensified in thermally stressed regions of the memory to preemptively address potential bit flips before they accumulate beyond ECC correction capabilities.

Power management features also play a crucial role in thermal control, with HBM4 implementing fine-grained power states that can selectively reduce activity in specific memory banks experiencing thermal stress. This targeted approach helps maintain overall system performance while addressing localized thermal issues that might compromise error correction effectiveness.

Cooling solutions for HBM4-equipped systems require careful consideration, as traditional air cooling may prove insufficient for maintaining optimal operating temperatures under sustained high-bandwidth workloads. Liquid cooling solutions and vapor chambers are increasingly being integrated into HBM4 system designs to ensure that thermal conditions remain within the operational parameters required for effective ECC and scrubbing operations.

The relationship between thermal management and error correction in HBM4 represents a critical design consideration, with system architects needing to balance performance requirements against thermal constraints to achieve optimal reliability. As data center densities increase and AI workloads demand ever-greater memory bandwidth, these thermal management strategies will become increasingly sophisticated to support HBM4's error reduction capabilities.

Unlock deeper insights with PatSnap Eureka Quick Research — get a full tech report to explore trends and direct your research. Try now!

Generate Your Research Report Instantly with AI Agent

Supercharge your innovation with PatSnap Eureka AI Agent Platform!