Optimize RRAM for Cloud Computing: Speed and Scalability

SEP 10, 2025 · 9 MIN READ

RRAM Technology Evolution and Objectives

Resistive Random-Access Memory (RRAM) has emerged as a promising non-volatile memory technology over the past two decades, evolving from theoretical concepts to practical implementations. The technology leverages the resistance switching phenomenon in certain dielectric materials, allowing for data storage through changes in resistance states. Initially developed as a potential replacement for flash memory, RRAM has gradually expanded its application scope to address the growing computational demands of modern data centers.

The evolution of RRAM technology can be traced through several distinct phases. In the early 2000s, researchers primarily focused on understanding the fundamental mechanisms of resistive switching in various material systems. By the 2010s, significant progress was made in improving the reliability and endurance of RRAM cells, with major semiconductor companies beginning to invest in research and development efforts. Recent years have witnessed substantial advancements in scaling capabilities, switching speed, and integration with conventional CMOS processes.

Current technological trends indicate a shift toward optimizing RRAM specifically for cloud computing applications, where data processing speed and storage density are paramount. The increasing adoption of artificial intelligence and big data analytics in cloud environments has created unprecedented demands for memory systems that can efficiently handle massive datasets while minimizing energy consumption. RRAM's inherent characteristics, including non-volatility, high density, and low power operation, position it as a potentially transformative technology in this domain.

The primary objectives for RRAM optimization in cloud computing contexts are multifaceted. First, enhancing switching speed to reduce latency in data-intensive operations is critical for real-time applications. Current RRAM technologies typically exhibit switching times in the nanosecond range, but further improvements to sub-nanosecond speeds would significantly benefit cloud computing workloads.

Second, improving scalability to accommodate the exponential growth in data volume represents another key objective. This involves not only increasing storage density through advanced fabrication techniques but also developing innovative array architectures that maximize parallelism and throughput.

Third, ensuring compatibility with existing cloud infrastructure while providing a clear path for integration presents a substantial engineering challenge. This requires standardization efforts and the development of interface protocols that allow seamless adoption of RRAM technology within current cloud computing frameworks.

Finally, optimizing energy efficiency remains a crucial goal, as power consumption represents a significant operational cost for cloud service providers. RRAM's inherent low-power characteristics offer promising advantages in this regard, but further refinements are necessary to fully realize its potential in large-scale deployments.

Cloud Computing Market Demands for Memory Solutions

The cloud computing industry is experiencing unprecedented growth, with global market value projected to reach $832.1 billion by 2025, growing at a CAGR of 17.5%. This explosive expansion has created significant demands for advanced memory solutions that can support the massive data processing requirements of modern cloud infrastructures. Traditional memory technologies such as DRAM and NAND flash are increasingly struggling to meet these evolving needs, creating a substantial market opportunity for emerging technologies like RRAM.

Cloud service providers require memory solutions that deliver exceptional performance metrics, particularly in terms of data access speed, power efficiency, and density. Current benchmarks indicate that memory access latency represents a critical bottleneck in cloud computing applications, with data suggesting that reducing memory latency by 30% could improve overall application performance by up to 15% in data-intensive workloads.
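The 30%/15% pairing is consistent with a simple Amdahl's-law-style model. The minimal sketch below makes two assumptions of its own, not the source's: a fixed fraction of runtime is spent stalled on memory, and only that stall time scales with latency. The function names are hypothetical.

```python
def runtime_reduction(stall_fraction: float, latency_reduction: float) -> float:
    """Fraction of total runtime saved when memory latency drops.

    Assumes (hypothetical model) that only the memory-stall share of runtime
    scales with latency and that compute time is unchanged.
    """
    if not (0.0 <= stall_fraction <= 1.0 and 0.0 <= latency_reduction <= 1.0):
        raise ValueError("fractions must lie in [0, 1]")
    return stall_fraction * latency_reduction


def speedup(stall_fraction: float, latency_reduction: float) -> float:
    """Overall speedup implied by the runtime reduction above."""
    return 1.0 / (1.0 - runtime_reduction(stall_fraction, latency_reduction))


# A workload stalled on memory half of the time, with 30% lower latency:
print(round(runtime_reduction(0.5, 0.3), 3))  # 0.15
print(round(speedup(0.5, 0.3), 3))            # 1.176
```

Under this model, a 15% gain implies that memory stalls account for roughly half of runtime. Workloads with a smaller stall fraction would see proportionally less benefit from the same latency reduction.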

The hyperscale data center segment, which represents 45% of cloud infrastructure spending, has particularly stringent requirements for memory solutions. These facilities process exabytes of data daily and require memory technologies that can support massive parallel operations while maintaining low power consumption profiles. Market research indicates that data centers currently consume approximately 3% of global electricity, with memory systems accounting for 25-40% of server power usage.

Edge computing represents another rapidly growing segment within the cloud ecosystem, with market projections indicating 37% annual growth through 2025. This distributed computing model demands memory solutions with different priorities: low power consumption, thermal efficiency, and resilience to environmental factors. RRAM's non-volatile nature and potential for low-power operation position it favorably for these applications.

AI and machine learning workloads are driving particularly intense memory demands within cloud environments. These applications require high-bandwidth, low-latency memory access for processing massive neural network models. Current projections indicate that AI-specific cloud services will grow at 45% annually, creating substantial demand for specialized memory solutions that can accelerate these workloads.

Cost considerations remain paramount for cloud providers, with memory representing 20-30% of server hardware costs. Any memory technology seeking widespread adoption must demonstrate compelling economics alongside technical advantages. RRAM's potential for high density and simplified manufacturing processes could provide significant cost advantages at scale if production challenges can be overcome.

RRAM Current Status and Technical Barriers

Resistive Random-Access Memory (RRAM) has emerged as a promising non-volatile memory technology with potential applications in cloud computing environments. Current RRAM devices demonstrate write speeds of 10-100 nanoseconds and read speeds of 1-10 nanoseconds. These speeds are significantly faster than traditional flash memory but still lag behind the DRAM and SRAM technologies that high-performance cloud computing applications depend on.

The scalability of RRAM has shown considerable progress, with devices successfully fabricated at sub-20nm nodes. Industry leaders like Intel, Samsung, and Micron have demonstrated working prototypes at 16nm, while research institutions have reported functional devices at nodes as small as 10nm. This scaling capability positions RRAM favorably for high-density storage applications in cloud data centers.
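A back-of-the-envelope density estimate helps put these node figures in perspective. This minimal sketch assumes an idealized cross-point cell footprint of 4F² per bit per layer, where F is the feature size. It ignores peripheral circuitry, selector overhead, and array efficiency, so it gives an upper bound. Both the 4F² idealization and the function name are illustrative assumptions.

```python
def crosspoint_density_gbit_per_mm2(feature_nm: float, layers: int = 1,
                                    bits_per_cell: int = 1) -> float:
    """Ideal areal density of a cross-point RRAM array in Gbit/mm^2.

    Assumes each cell occupies 4F^2 (F = feature size) per layer, with no
    peripheral-circuit or array-efficiency overhead (a hypothetical upper bound).
    """
    if feature_nm <= 0 or layers < 1 or bits_per_cell < 1:
        raise ValueError("invalid geometry")
    cell_area_nm2 = 4.0 * feature_nm ** 2
    nm2_per_mm2 = 1e12  # 1 mm = 1e6 nm
    bits = nm2_per_mm2 / cell_area_nm2 * layers * bits_per_cell
    return bits / 1e9


for f in (20, 16, 10):
    print(f, round(crosspoint_density_gbit_per_mm2(f), 3))
# prints: 20 0.625 / 16 0.977 / 10 2.5
```

Stacking layers multiplies the ideal figure linearly. This is why 3D stacking and multi-bit cells matter as much as node shrinks for cloud-scale capacity.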

Despite these advancements, RRAM faces several critical technical barriers that limit its widespread adoption in cloud computing environments. The most significant challenge is variability in switching behavior, which appears as inconsistent resistance states across repeated write-erase cycles. This variability worsens as device dimensions shrink, creating reliability concerns for the large-scale deployments that cloud infrastructure requires.

Endurance limitations represent another major obstacle, with current RRAM devices typically achieving 10^6 to 10^9 write cycles before failure. While this exceeds flash memory capabilities, it falls short of the requirements for write-intensive cloud applications that demand endurance levels comparable to DRAM (>10^15 cycles).
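The gap between 10^9 cycles and DRAM-class endurance becomes clearer when translated into time. This minimal sketch assumes a constant write rate spread evenly over a number of cells, which models ideal wear leveling. The function name and example rates are hypothetical.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def lifetime_years(endurance_cycles: float, writes_per_second: float,
                   cells_sharing_writes: int = 1) -> float:
    """Years until the most-written cell reaches its endurance limit.

    Assumes a constant aggregate write rate spread perfectly evenly over
    `cells_sharing_writes` cells (ideal wear leveling).
    """
    if writes_per_second <= 0 or cells_sharing_writes < 1:
        raise ValueError("rate and cell count must be positive")
    per_cell_rate = writes_per_second / cells_sharing_writes
    return endurance_cycles / per_cell_rate / SECONDS_PER_YEAR


# A hot location rewritten one million times per second:
print(lifetime_years(1e9, 1e6))                                  # ~3.2e-05 years (about 17 minutes)
print(lifetime_years(1e9, 1e6, cells_sharing_writes=1_000_000))  # ~31.7 years
```

The comparison shows why write-intensive cloud workloads need either much higher endurance or aggressive wear leveling across many cells.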

Power consumption during switching operations remains problematic, particularly for the RESET process, which typically requires high current densities. This energy inefficiency becomes increasingly critical in large-scale cloud deployments, where power consumption directly affects operational costs and sustainability metrics.
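The energy of a single switching event follows from E = V·I·t, which is why RESET current matters. Here is a worked example with illustrative values assumed for this sketch, not measurements from the source: a 1.5 V RESET pulse drawing 100 µA for 10 ns gives

```latex
E_{\text{RESET}} = V \, I \, t_{\text{pulse}}
  = 1.5\,\text{V} \times 100\,\mu\text{A} \times 10\,\text{ns}
  = 1.5 \times 10^{-12}\,\text{J} = 1.5\,\text{pJ}
```

Halving either the RESET current or the pulse width halves the energy per operation. Across billions of cells, these per-event costs add up at data center scale.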

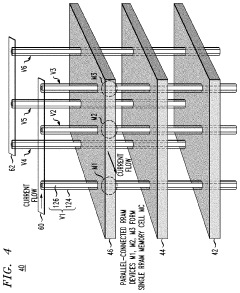

The sneak path current issue in crossbar architectures presents a significant barrier to achieving the high-density arrays necessary for cloud storage applications. Although selector devices and architectural solutions have been proposed, they often introduce additional complexity and manufacturing challenges.
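The severity of sneak paths can be estimated with a commonly used worst-case model for an N×N passive crossbar with floating unselected lines. In this model, the selected cell is in its high-resistance state (HRS) while every unselected cell is in its low-resistance state (LRS). The sneak path is then approximately (N-1) row cells, (N-1)² interior cells, and (N-1) column cells in series. This minimal sketch ignores line resistance. The resistance values are illustrative assumptions. The `selector_factor` knob is a crude, hypothetical stand-in for a selector's nonlinearity, and it simply multiplies each unselected cell's effective resistance.

```python
def sneak_resistance(n: int, r_lrs: float, selector_factor: float = 1.0) -> float:
    """Equivalent resistance of the worst-case sneak path in an n x n crossbar.

    Three groups in series: (n-1) parallel cells on the selected row,
    (n-1)^2 parallel cells in the rest of the array, and (n-1) parallel
    cells on the selected column, all assumed to be in the LRS.
    """
    if n < 2:
        raise ValueError("need at least a 2 x 2 array")
    r = r_lrs * selector_factor
    return r / (n - 1) + r / (n - 1) ** 2 + r / (n - 1)


def read_margin(n: int, r_lrs: float, r_hrs: float, selector_factor: float = 1.0) -> float:
    """Normalized read margin (I_on - I_off) / I_on with sneak currents included."""
    r_sneak = sneak_resistance(n, r_lrs, selector_factor)

    def parallel(a: float, b: float) -> float:
        return a * b / (a + b)

    i_on = 1.0 / parallel(r_lrs, r_sneak)   # selected cell in LRS, unit read voltage
    i_off = 1.0 / parallel(r_hrs, r_sneak)  # selected cell in HRS
    return (i_on - i_off) / i_on


for n in (8, 64, 512):
    print(n,
          round(read_margin(n, 10e3, 1e6), 3),                        # no selector
          round(read_margin(n, 10e3, 1e6, selector_factor=1e3), 3))   # with selector
```

Under these assumptions, the margin for a 512×512 array falls well below 1% without a selector. This is why selectors are hard to avoid in dense arrays despite the extra complexity they add.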

Integration with CMOS technology presents compatibility challenges, particularly regarding thermal budgets and process contamination. Current fabrication processes for RRAM often require temperatures or materials that can compromise the integrity of underlying CMOS circuits, complicating 3D integration efforts that would be valuable for cloud computing hardware.

Data retention at elevated temperatures typical in data center environments (often exceeding 40°C) remains problematic for some RRAM compositions, with accelerated degradation observed under these conditions. This threatens the long-term reliability required for mission-critical cloud storage applications.

Current RRAM Optimization Approaches for Cloud Computing

01 RRAM switching speed and performance optimization

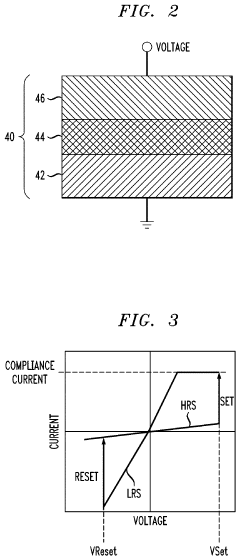

RRAM devices can achieve high-speed operation through optimized material selection and device structure. The switching speed of RRAM is primarily determined by the ion migration process within the resistive switching layer. By engineering the oxide layer thickness and composition, switching speeds in the nanosecond range can be achieved. Various techniques such as doping the switching material, controlling the oxygen vacancy concentration, and optimizing the electrode materials can significantly enhance the switching speed while maintaining reliability.

- RRAM switching speed and performance optimization: RRAM devices can achieve high switching speeds through optimized material selection and device structure. By engineering the resistive switching layer and electrode materials, switching speeds in the nanosecond range can be achieved. Various techniques such as doping, interface engineering, and pulse width optimization can further enhance the switching performance, making RRAM suitable for high-speed memory applications. The fast switching capability combined with low power consumption positions RRAM as a competitive technology for next-generation memory systems.

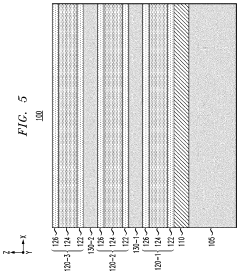

- Scalability and miniaturization of RRAM cells: RRAM technology demonstrates excellent scalability potential, allowing for device dimensions to be reduced to nanometer scale. The simple two-terminal structure of RRAM cells facilitates high-density integration and 3D stacking capabilities. Advanced fabrication techniques enable the creation of ultra-small memory cells with minimal feature sizes, supporting continued scaling according to Moore's Law. This scalability advantage makes RRAM particularly suitable for high-density storage applications and integration with advanced CMOS technology nodes.

- Novel materials and structures for enhanced RRAM performance: Innovative materials and device structures are being developed to enhance RRAM performance metrics. These include metal oxides, chalcogenides, and two-dimensional materials that exhibit superior resistive switching properties. Multi-layer structures and engineered interfaces can improve retention, endurance, and reliability while maintaining fast switching speeds. The incorporation of novel materials such as hafnium oxide, tantalum oxide, and various transition metal oxides enables tunable resistance states and improved operational stability across a wide temperature range.

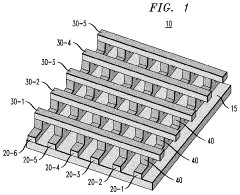

- 3D integration and crossbar architectures for RRAM: Three-dimensional integration and crossbar architectures significantly enhance RRAM density and performance. These approaches allow for vertical stacking of memory cells, dramatically increasing storage capacity per unit area. Crossbar structures enable simple addressing schemes and reduce peripheral circuitry overhead. Advanced fabrication techniques support the creation of multi-layer RRAM arrays with minimal interference between layers. These architectural innovations address scaling challenges while maintaining high performance, making RRAM suitable for ultra-high-density storage applications.

- Circuit design and integration techniques for RRAM systems: Specialized circuit designs and integration techniques are crucial for optimizing RRAM system performance. These include sense amplifiers tailored for RRAM's resistance characteristics, write drivers capable of delivering precise programming pulses, and addressing schemes that minimize sneak path currents in crossbar arrays. Advanced error correction codes and wear-leveling algorithms extend device lifetime and reliability. Integration with CMOS logic enables hybrid computing architectures that leverage RRAM's unique properties for both memory and computing functions, supporting emerging applications in neuromorphic computing and in-memory processing.
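The wear-leveling algorithms mentioned above can be illustrated with Start-Gap, a low-overhead scheme originally proposed by Qureshi et al. for phase-change memory. The source does not say which algorithm RRAM controllers use, so treat this as an illustrative stand-in. N logical lines are mapped onto N+1 physical lines, and every `psi` writes, the spare "gap" line moves by one position. Over time this rotates the whole address space across the physical array. The class and parameter names are hypothetical.

```python
class StartGap:
    """Start-Gap wear leveling over n logical lines and n + 1 physical lines."""

    def __init__(self, n: int, psi: int = 100):
        if n < 1 or psi < 1:
            raise ValueError("n and psi must be positive")
        self.n, self.psi = n, psi
        self.start, self.gap = 0, n      # the gap begins at the extra physical line
        self.phys = [None] * (n + 1)     # physical line contents
        self.wear = [0] * (n + 1)        # writes absorbed by each physical line
        self.writes_since_move = 0

    def _map(self, logical: int) -> int:
        """Translate a logical line address to its current physical line."""
        pa = (logical + self.start) % self.n
        return pa + 1 if pa >= self.gap else pa

    def _move_gap(self) -> None:
        """Shift the gap down by one line, copying its neighbour into it."""
        if self.gap == 0:
            # Wrap around: the top line moves into slot 0 and start advances.
            src, dst = self.n, 0
            self.gap = self.n
            self.start = (self.start + 1) % self.n
        else:
            src, dst = self.gap - 1, self.gap
            self.gap -= 1
        self.phys[dst] = self.phys[src]
        self.wear[dst] += 1              # the copy is also a write

    def write(self, logical: int, value) -> None:
        pa = self._map(logical)
        self.phys[pa] = value
        self.wear[pa] += 1
        self.writes_since_move += 1
        if self.writes_since_move == self.psi:
            self.writes_since_move = 0
            self._move_gap()

    def read(self, logical: int):
        return self.phys[self._map(logical)]


# Hammer a single logical line. Without leveling, one physical line would
# absorb all 10,000 writes; with Start-Gap, the load spreads over all n + 1 lines.
wl = StartGap(n=8, psi=4)
for i in range(10_000):
    wl.write(0, i)
print(wl.read(0), max(wl.wear))
```

The design trades one spare line and a periodic copy (one extra write every `psi` writes) for a simple algebraic mapping that needs no lookup table.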

02 Scalability and miniaturization of RRAM cells

RRAM technology demonstrates excellent scalability potential, allowing for high-density memory arrays. The simple two-terminal structure of RRAM cells enables scaling down to nanometer dimensions without significant performance degradation. Cross-point architectures and 3D stacking techniques further enhance the integration density. As cell size decreases, careful engineering of the conductive filament formation process becomes critical to maintain consistent switching behavior and prevent variability issues at smaller nodes.

03 Novel materials and structures for enhanced RRAM performance

Advanced material systems and innovative device structures can significantly improve RRAM speed and scalability. Utilizing transition metal oxides, perovskites, and two-dimensional materials as switching layers offers unique advantages for high-speed operation. Multi-layer stacks with engineered interfaces can provide better control over the resistive switching mechanism. Incorporating selector devices with RRAM cells helps mitigate sneak path issues in high-density arrays while maintaining fast switching characteristics.

04 RRAM array architecture and integration techniques

Specialized array architectures and integration methods are crucial for maximizing RRAM speed and density. Cross-point arrays with optimized word line and bit line configurations reduce parasitic resistance and capacitance, enabling faster read/write operations. 3D vertical stacking techniques dramatically increase storage density while maintaining accessibility and performance. Advanced sensing circuits and programming schemes help overcome variability issues in scaled RRAM arrays, ensuring reliable high-speed operation across all memory cells.

05 Hybrid and emerging RRAM technologies

Hybrid memory systems and emerging RRAM variants offer pathways to overcome traditional speed and scaling limitations. Combining RRAM with CMOS logic enables in-memory computing architectures that reduce data movement bottlenecks. Neuromorphic implementations leverage RRAM's analog switching characteristics for efficient AI acceleration. Advanced selector-memory combinations, such as 1S1R (one-selector one-resistor) structures, enable ultra-high density arrays while maintaining fast access times. These emerging approaches position RRAM as a versatile technology for both conventional and non-von Neumann computing paradigms.

Leading RRAM and Cloud Computing Industry Players

The RRAM (Resistive Random Access Memory) market for cloud computing is in a growth phase, with increasing demand for faster, more scalable memory solutions. The global market is expanding rapidly as cloud infrastructure providers seek to overcome traditional memory bottlenecks. Technologically, RRAM is advancing toward commercial maturity with key players driving innovation. Intel leads with comprehensive memory architecture solutions, while Qualcomm focuses on mobile-cloud integration. Companies like SanDisk Technologies and Renesas Electronics are developing specialized RRAM implementations for data centers. Oracle and Red Hat are integrating RRAM capabilities into their cloud platforms, while Chinese players including Tianyi Cloud and Inspur are rapidly advancing in this space. Academic institutions like Zhejiang University and Huazhong University are contributing fundamental research to overcome current density and endurance limitations.

Intel Corp.

Technical Solution: Intel developed Optane DC Persistent Memory, based on 3D XPoint, specifically for data center applications. 3D XPoint is a resistance-based non-volatile memory, but it is widely reported to use a phase-change material rather than the filamentary oxide switching of conventional RRAM. Intel's solution integrates the persistent memory modules directly into the memory subsystem through standard DIMM slots, creating a new tier between DRAM and storage that delivers up to 512GB per module. The modules can operate either as memory-mapped persistent storage (App Direct mode) or as a volatile extension of system memory (Memory Mode), enabling significant performance improvements for cloud computing workloads. Optane delivers approximately 4-15x better read latency than NAND SSDs while maintaining data persistence. Intel has also developed specific optimizations for cloud database applications, showing up to 2.5x more database operations per second and 2x faster data recovery times compared to conventional storage solutions.

Strengths: Industry-leading memory controller integration, established ecosystem support, and proven deployment in major cloud providers. Intel's vertical integration from silicon to software stack provides comprehensive optimization. Weaknesses: Higher cost per GB than NAND solutions, a requirement for specific hardware support, and power consumption that remains higher than ideal for maximum data center density. In addition, Intel announced in 2022 that it was winding down its Optane business, which limits the technology's long-term availability.
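In App Direct mode, applications typically reach persistent memory by memory-mapping files on a DAX-capable file system and flushing their stores explicitly. This minimal sketch shows that programming pattern using Python's standard `mmap` module. It assumes an ordinary temporary file standing in for a PMem-backed one. On real persistent memory, production code would normally use a library such as PMDK's libpmem, which flushes CPU caches directly rather than relying on `msync`. The `RECORD_SIZE` value and the helper names are hypothetical.

```python
import mmap
import os
import tempfile

RECORD_SIZE = 4096  # hypothetical size of the mapped persistent region


def persist_record(path: str, payload: bytes) -> None:
    """Store payload through a memory mapping and flush it to the backing file."""
    if len(payload) > RECORD_SIZE:
        raise ValueError("payload larger than mapped region")
    with open(path, "r+b") as f:
        with mmap.mmap(f.fileno(), RECORD_SIZE) as region:
            region[: len(payload)] = payload  # ordinary stores into mapped memory
            region.flush()                    # msync on POSIX: write dirty pages back


def load_record(path: str, length: int) -> bytes:
    """Read the first `length` bytes back through a read-only mapping."""
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), RECORD_SIZE, access=mmap.ACCESS_READ) as region:
            return bytes(region[:length])


fd, path = tempfile.mkstemp()
os.ftruncate(fd, RECORD_SIZE)  # the mapping needs a file at least RECORD_SIZE long
os.close(fd)
persist_record(path, b"txn-log-entry-42")
print(load_record(path, 16))   # b'txn-log-entry-42'
os.remove(path)
```

The appeal of this model for cloud databases is that persistence uses load/store access rather than block I/O. The durability guarantee, however, depends on the flush primitive the platform provides.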

Oracle International Corp.

Technical Solution: Oracle has built a persistent-memory acceleration tier into its Exadata cloud computing platform. In Exadata X8M and X9M, this tier uses Intel Optane persistent memory rather than oxide RRAM. Oracle's approach integrates persistent memory as a cache tier between DRAM and flash storage, creating a hybrid memory hierarchy optimized for database and analytics workloads. The implementation uses custom memory controllers that place hot data in the persistent tier while keeping cooler data in lower-cost storage tiers. The solution achieves approximately 10x lower latency for random reads than flash storage while providing persistence guarantees for critical transaction logs. Oracle has demonstrated the technology delivering up to 3x higher transaction throughput for cloud database workloads compared to conventional architectures. The implementation includes specialized circuitry for atomic write operations, significantly improving consistency and durability for cloud applications. Oracle has also developed software-defined memory management that dynamically allocates persistent memory resources based on workload characteristics, optimizing utilization across multi-tenant cloud environments.

Strengths: Tight integration with database and enterprise applications, comprehensive software optimization stack, and proven performance in production cloud environments. Their solution offers excellent reliability metrics for mission-critical workloads. Weaknesses: Proprietary architecture limiting broader ecosystem adoption, higher acquisition costs compared to commodity solutions, and optimization primarily focused on Oracle's own software stack.
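Hot/cold data placement of the kind described for Oracle's tier can be illustrated with a generic policy. This minimal sketch assumes a simple least-recently-used (LRU) policy for a small, fast persistent tier in front of a larger, slower store. Oracle's actual placement logic is not described in the source, and the class and method names here are hypothetical.

```python
from collections import OrderedDict


class TieredStore:
    """Two-tier key/value store: a small fast tier (LRU) over a large slow tier."""

    def __init__(self, fast_capacity: int):
        if fast_capacity < 1:
            raise ValueError("fast tier needs at least one slot")
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # stands in for the persistent-memory tier
        self.slow = {}             # stands in for flash or disk
        self.hits = self.misses = 0

    def put(self, key, value) -> None:
        self.slow[key] = value     # write-through: the slow tier stays authoritative
        self._promote(key, value)

    def get(self, key):
        if key in self.fast:
            self.hits += 1
            self.fast.move_to_end(key)   # mark as most recently used
            return self.fast[key]
        self.misses += 1
        value = self.slow[key]           # raises KeyError if never stored
        self._promote(key, value)
        return value

    def _promote(self, key, value) -> None:
        self.fast[key] = value
        self.fast.move_to_end(key)
        if len(self.fast) > self.fast_capacity:
            self.fast.popitem(last=False)  # evict the least recently used key


store = TieredStore(fast_capacity=2)
for k in ("a", "b", "c"):
    store.put(k, k.upper())
for k in ("c", "b", "a"):
    store.get(k)
print(store.hits, store.misses)  # 2 1
```

Write-through keeps the design simple because losing the fast tier never loses data. A write-back variant would defer slow-tier writes and rely on the fast tier's persistence instead, which is where non-volatile memory becomes attractive.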

Key RRAM Speed and Scalability Patents Analysis

Resistive random access memory device and methods of fabrication

Patent · Active · US20200106013A1

Innovation

- The implementation of a multi-layer stack for RRAM devices, including a switching layer, an oxygen exchange layer, and a sidewall oxide, formed through specific fabrication processes such as plasma oxidation, to enhance filament formation and stability, thereby improving device endurance and retention.

Resistive random-access memory array with reduced switching resistance variability

Patent · Inactive · US10957742B2

Innovation

- The fabrication of RRAM memory cells with multiple parallel-connected resistive memory devices, where each cell comprises a group of RRAM devices sharing a common horizontal electrode layer, effectively averaging the switching resistances to minimize variability and noise.
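The averaging effect claimed for parallel-connected devices follows from basic statistics. A cell's conductance is the sum of its devices' conductances, so for independent devices the relative spread shrinks roughly as 1/√n. This minimal Monte Carlo sketch assumes independent, log-normally distributed device resistances. The distribution, its parameters, and the function name are illustrative assumptions, not taken from the patent.

```python
import math
import random
import statistics


def cell_resistance_cv(n_parallel: int, trials: int = 5000, median_ohms: float = 10e3,
                       sigma: float = 0.3, seed: int = 0) -> float:
    """Coefficient of variation of a cell built from n parallel RRAM devices.

    Each device resistance is drawn from a log-normal distribution (assumed);
    parallel devices combine as R_cell = 1 / sum(1 / R_i).
    """
    rng = random.Random(seed)
    mu = math.log(median_ohms)  # the median of a log-normal is exp(mu)
    samples = []
    for _ in range(trials):
        conductance = sum(1.0 / rng.lognormvariate(mu, sigma) for _ in range(n_parallel))
        samples.append(1.0 / conductance)
    return statistics.stdev(samples) / statistics.mean(samples)


for n in (1, 2, 4, 8):
    print(n, round(cell_resistance_cv(n), 3))
```

The cost is more devices per stored bit, so the approach trades some density for uniformity.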

Energy Efficiency Considerations for RRAM in Data Centers

Energy efficiency has emerged as a critical factor in the adoption of RRAM technology within data center environments. As cloud computing workloads continue to grow exponentially, the power consumption of memory systems represents an increasingly significant portion of overall data center energy usage. RRAM offers compelling advantages in this domain, with studies indicating potential energy savings of 60-85% compared to conventional DRAM solutions when optimized for specific workloads.

The fundamental energy efficiency benefits of RRAM stem from its non-volatile nature, eliminating the need for constant refresh operations that plague traditional DRAM. This characteristic alone can reduce idle power consumption by up to 40% in large-scale deployments. Additionally, RRAM's ability to maintain stored data without power input creates opportunities for intelligent power management strategies, where memory banks can be selectively powered down during periods of low utilization without data loss.

Recent advancements in RRAM cell design have further enhanced energy efficiency metrics. Multi-level cell (MLC) configurations enable higher storage density per unit area, effectively reducing the energy cost per bit stored. Research from leading semiconductor manufacturers demonstrates that optimized MLC RRAM can achieve energy consumption as low as 0.1-0.5 pJ per bit operation, representing a significant improvement over both DRAM and NAND flash alternatives.
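The per-bit energy argument for MLC is simple arithmetic: a cell storing log2(L) bits spreads one operation's energy over more bits. This minimal sketch uses an illustrative 0.5 pJ per pulse. The `verify_iterations` parameter is an added assumption, reflecting that MLC programming often uses iterative program-and-verify pulses, which raise per-operation energy and can erode the gain.

```python
import math


def energy_per_bit_pj(energy_per_pulse_pj: float, levels: int,
                      verify_iterations: int = 1) -> float:
    """Energy per stored bit for a cell with `levels` resistance states.

    Assumes each program-and-verify iteration costs one pulse's energy
    (a simplification) and that the cell stores log2(levels) bits.
    """
    if levels < 2 or verify_iterations < 1 or energy_per_pulse_pj < 0:
        raise ValueError("invalid parameters")
    bits_per_cell = math.log2(levels)
    return energy_per_pulse_pj * verify_iterations / bits_per_cell


print(energy_per_bit_pj(0.5, 2))                       # 0.5  (single-level cell)
print(energy_per_bit_pj(0.5, 4))                       # 0.25 (2 bits per cell)
print(energy_per_bit_pj(0.5, 4, verify_iterations=3))  # 0.75 (verify overhead dominates)
```

Whether MLC actually saves energy therefore depends on how many verify iterations a given device needs to place each level reliably.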

Thermal management considerations also favor RRAM implementation in data centers. The technology generates substantially less heat during operation compared to conventional memory solutions, reducing cooling requirements that typically account for 30-40% of data center energy expenditure. Thermal modeling studies suggest that large-scale RRAM deployment could potentially reduce cooling-related energy costs by 15-25% in typical data center environments.
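Combining the two ranges quoted above gives the implied effect on total facility energy. Let s_cool be cooling's share of facility energy and r_cool the fractional reduction in cooling energy. Assuming the two apply independently (a simplification), the facility-level saving is their product:

```latex
\Delta E_{\text{facility}} \approx s_{\text{cool}} \, r_{\text{cool}}, \qquad
0.30 \times 0.15 = 0.045, \qquad
0.40 \times 0.25 = 0.10
```

On this basis, the cooling figures correspond to roughly 4.5% to 10% of total data center energy, before counting any savings in the memory subsystem itself.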

Integration challenges remain a consideration for energy-efficient RRAM deployment. Current hybrid architectures that combine RRAM with traditional memory technologies require sophisticated power management systems to fully realize efficiency gains. The energy overhead of these control systems must be carefully balanced against the savings provided by the RRAM components themselves. Industry consortiums are actively developing standardized approaches to address these integration challenges.

Looking forward, the energy efficiency advantages of RRAM are expected to become even more pronounced as data centers transition toward edge computing models. The distributed nature of edge infrastructure places greater emphasis on energy-efficient memory solutions that can operate effectively under variable power conditions. RRAM's combination of non-volatility, low active power, and minimal standby consumption positions it as an ideal candidate for these emerging deployment scenarios.

The fundamental energy efficiency benefits of RRAM stem from its non-volatile nature, eliminating the need for constant refresh operations that plague traditional DRAM. This characteristic alone can reduce idle power consumption by up to 40% in large-scale deployments. Additionally, RRAM's ability to maintain stored data without power input creates opportunities for intelligent power management strategies, where memory banks can be selectively powered down during periods of low utilization without data loss.

Recent advancements in RRAM cell design have further enhanced energy efficiency metrics. Multi-level cell (MLC) configurations enable higher storage density per unit area, effectively reducing the energy cost per bit stored. Research from leading semiconductor manufacturers demonstrates that optimized MLC RRAM can achieve energy consumption as low as 0.1-0.5 pJ per bit operation, representing a significant improvement over both DRAM and NAND flash alternatives.

Thermal management considerations also favor RRAM implementation in data centers. The technology generates substantially less heat during operation compared to conventional memory solutions, reducing cooling requirements that typically account for 30-40% of data center energy expenditure. Thermal modeling studies suggest that large-scale RRAM deployment could potentially reduce cooling-related energy costs by 15-25% in typical data center environments.

Integration Challenges with Existing Cloud Infrastructure

Integrating RRAM technology into existing cloud infrastructure presents significant challenges that must be addressed to fully realize its potential for speed and scalability improvements. Current cloud architectures are predominantly built around traditional memory hierarchies utilizing DRAM and flash storage, with hardware and software stacks optimized for these technologies. The introduction of RRAM requires substantial modifications to both hardware interfaces and system software layers.

At the hardware level, existing memory controllers and bus architectures are not natively compatible with RRAM's unique electrical characteristics and timing requirements. Cloud servers typically employ standardized DDR interfaces for memory access, while RRAM may require specialized signaling, voltage levels, and access protocols. This incompatibility necessitates either the development of translation layers or complete redesign of memory subsystems, both of which represent significant engineering investments for cloud providers.
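
One job a translation layer might take on is hiding RRAM's slower writes from a host that expects DDR-like fixed timing. The sketch below assumes a hypothetical `WriteBufferTranslationLayer` that absorbs writes in a small buffer and commits them to the array later. It models only the timing mismatch, not signaling or voltage translation, and uses Python dictionaries as stand-ins for hardware.

```python
from collections import OrderedDict
from typing import Dict, Optional


class WriteBufferTranslationLayer:
    """Hypothetical shim: acknowledges host writes at buffer speed and commits
    them to the (slower) RRAM array later. Illustrative only."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be >= 1")
        self.capacity = capacity
        self.buffer: "OrderedDict[int, bytes]" = OrderedDict()  # pending writes, oldest first
        self.rram: Dict[int, bytes] = {}  # stand-in for the RRAM array
        self.stalls = 0  # host writes that had to wait for an RRAM commit

    def write(self, addr: int, data: bytes) -> None:
        if addr in self.buffer:
            # Coalesce: the newer value replaces the pending one.
            self.buffer[addr] = data
            self.buffer.move_to_end(addr)
            return
        if len(self.buffer) >= self.capacity:
            # Buffer full: the host stalls while the oldest entry is committed.
            self.stalls += 1
            self._commit_oldest()
        self.buffer[addr] = data

    def read(self, addr: int) -> Optional[bytes]:
        # Pending writes are newer than the array contents, so check them first.
        if addr in self.buffer:
            return self.buffer[addr]
        return self.rram.get(addr)

    def drain(self) -> None:
        # Background flush, e.g. during idle bus cycles.
        while self.buffer:
            self._commit_oldest()

    def _commit_oldest(self) -> None:
        addr, data = self.buffer.popitem(last=False)
        self.rram[addr] = data


layer = WriteBufferTranslationLayer(capacity=2)
for addr, data in [(0, b"a"), (1, b"b"), (2, b"c"), (1, b"B")]:
    layer.write(addr, data)
layer.drain()
print(layer.read(1), layer.stalls)  # b'B' 1
```

The buffer size trades stall frequency against the buffer's own area and power, which ties back to the engineering investment the paragraph above describes.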

System software presents another integration hurdle. Operating systems, hypervisors, and virtualization platforms that power cloud environments contain deeply embedded assumptions about memory performance characteristics, particularly regarding latency, endurance, and power consumption profiles. RRAM's asymmetric read/write performance and different failure modes require modifications to memory management algorithms, caching policies, and resource allocation strategies to prevent performance degradation.
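
As an illustration of how asymmetric read/write costs could change a placement policy, the sketch below ranks pages by the extra latency they would incur in RRAM and assigns the limited DRAM capacity to the pages with the highest penalty. The latency constants and function names are hypothetical assumptions, not measurements of any device.

```python
from typing import Dict, List, Tuple

# Hypothetical latencies in nanoseconds; RRAM writes are assumed much slower than reads.
DRAM_READ_NS, DRAM_WRITE_NS = 80.0, 80.0
RRAM_READ_NS, RRAM_WRITE_NS = 100.0, 400.0


def rram_penalty_ns(reads: int, writes: int) -> float:
    """Extra time a page would cost if it lived in RRAM instead of DRAM."""
    return reads * (RRAM_READ_NS - DRAM_READ_NS) + writes * (RRAM_WRITE_NS - DRAM_WRITE_NS)


def place_pages(access_counts: Dict[str, Tuple[int, int]], dram_pages: int) -> Dict[str, str]:
    """Greedy placement: pages that would suffer most in RRAM get the scarce DRAM slots.

    access_counts maps page name -> (reads, writes) observed over some window.
    """
    ranked: List[str] = sorted(
        access_counts,
        key=lambda page: rram_penalty_ns(*access_counts[page]),
        reverse=True,
    )
    return {page: ("DRAM" if i < dram_pages else "RRAM") for i, page in enumerate(ranked)}


counts = {"log_buffer": (100, 900), "model_weights": (5000, 0), "index": (2000, 50)}
print(place_pages(counts, dram_pages=1))
# {'log_buffer': 'DRAM', 'model_weights': 'RRAM', 'index': 'RRAM'}
```

The write-heavy `log_buffer` wins the DRAM slot over `model_weights` despite far fewer total accesses, because the assumed write penalty dominates. A real policy would also account for endurance, which this sketch ignores.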

Data center infrastructure also poses challenges for RRAM adoption. Power distribution systems and thermal management solutions in existing facilities are designed around the energy profiles of conventional memory technologies. While RRAM offers potential energy efficiency benefits, its integration may require reconfiguration of power delivery networks and cooling systems to accommodate different operational parameters.

Security and reliability frameworks represent another integration concern. Cloud providers have established robust error correction, data integrity, and encryption mechanisms tailored to current memory technologies. RRAM's unique error patterns and vulnerability profiles necessitate reevaluation and potential redesign of these critical protection systems to maintain the high reliability standards expected in cloud environments.

Migration strategies present perhaps the most pressing practical challenge. Cloud providers cannot afford significant service disruptions, which makes wholesale replacement of memory systems impractical. Instead, they need hybrid approaches that introduce RRAM gradually alongside existing technologies, backed by orchestration systems that manage data placement across heterogeneous memory resources while maintaining the performance guarantees offered to customers.
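
A gradual migration can be bounded per epoch so that background data movement does not crowd out tenant traffic. The sketch below, with a hypothetical `migration_schedule` helper, splits pages slated for RRAM into fixed-size batches and leaves pinned pages (for example, those backing latency-critical services) in place. It illustrates scheduling under those assumptions and is not a complete orchestration system.

```python
from typing import Iterable, List


def migration_schedule(pages: Iterable[str], per_epoch_budget: int,
                       pinned: Iterable[str] = ()) -> List[List[str]]:
    """Split a DRAM-to-RRAM migration into batches of at most per_epoch_budget pages.

    Capping moves per epoch bounds the bandwidth the migration takes from
    customer workloads; pinned pages are never scheduled for migration.
    """
    if per_epoch_budget < 1:
        raise ValueError("per_epoch_budget must be >= 1")
    pinned_set = set(pinned)
    candidates = [p for p in pages if p not in pinned_set]
    return [candidates[i:i + per_epoch_budget]
            for i in range(0, len(candidates), per_epoch_budget)]


plan = migration_schedule(["a", "b", "c", "d", "e"], per_epoch_budget=2, pinned={"c"})
print(plan)  # [['a', 'b'], ['d', 'e']]
```

Each inner list is one epoch's worth of moves; a smaller budget lengthens the migration but reduces its impact on running services.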
