Optimizing Phased Array for Telecommunication Infrastructure

SEP 22, 2025 · 10 MIN READ

Phased Array Technology Evolution and Objectives

Phased array technology has evolved significantly since its inception in the early 20th century, with early development driven largely by military radar applications during World War II. The fundamental principle of electronically steering radio waves without mechanical movement has remained consistent, while implementation technologies have transformed dramatically. Early systems utilized vacuum tubes and rudimentary phase shifters, limiting their practical applications due to size, cost, and power requirements.

The 1960s marked a pivotal transition with the introduction of solid-state components, enabling more compact and reliable phased array systems. By the 1980s, advancements in integrated circuits and digital signal processing facilitated more sophisticated beam forming capabilities, expanding potential applications beyond military use into commercial telecommunications.

The past two decades have witnessed revolutionary progress in phased array technology, driven by semiconductor miniaturization, MMIC (Monolithic Microwave Integrated Circuit) development, and advanced manufacturing techniques. These innovations have dramatically reduced costs while improving performance metrics such as power efficiency, bandwidth, and beam steering accuracy.

Current phased array systems for telecommunications infrastructure leverage multiple technological domains, including RF engineering, digital signal processing, advanced materials, and artificial intelligence for adaptive beam management. The integration of these technologies has enabled the deployment of massive MIMO systems essential for 5G networks, supporting higher data rates and improved spectral efficiency.

The primary objectives for optimizing phased array technology in telecommunications infrastructure center on several key dimensions. Cost reduction remains paramount, as widespread deployment requires economically viable solutions. Size and weight minimization is critical for practical installation in diverse environments. Power efficiency improvements are essential for operational sustainability and reduced operating expenses.

Performance enhancement objectives include wider bandwidth capabilities to support increasing data demands, improved beam steering precision for better spatial multiplexing, and reduced latency in beam adaptation. Additionally, environmental resilience to ensure reliable operation across varying conditions represents a significant technical goal.

Looking forward, the technology roadmap aims to achieve fully integrated, software-defined phased array systems capable of dynamic spectrum utilization across multiple frequency bands, including millimeter wave. The ultimate objective is to develop intelligent, self-optimizing arrays that can autonomously adapt to changing network conditions, user demands, and environmental factors, thereby maximizing spectral efficiency and network capacity while minimizing energy consumption.

Telecommunication Market Demand Analysis

The global telecommunication infrastructure market is experiencing unprecedented growth, driven by the increasing demand for high-speed connectivity, the proliferation of IoT devices, and the ongoing deployment of 5G networks. The market for phased array technology within this sector is projected to reach $73.4 billion by 2028, growing at a CAGR of 17.2% from 2023. This growth is primarily fueled by the telecommunications industry's need for more efficient, reliable, and high-performance antenna systems that can support the exponential increase in data traffic.
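The growth figures above can be sanity-checked with compound-growth arithmetic. The sketch below backs out the 2023 market size implied by the quoted 2028 value and CAGR; the function names are illustrative, and a constant annual growth rate over five years is assumed.

```python
def implied_base(final_value: float, cagr: float, years: int) -> float:
    """Back out the starting value implied by a compound annual growth rate."""
    return final_value / (1.0 + cagr) ** years


def project(base_value: float, cagr: float, years: int) -> float:
    """Project a value forward at a constant compound annual growth rate."""
    return base_value * (1.0 + cagr) ** years


if __name__ == "__main__":
    # Figures quoted in the text: $73.4B in 2028, 17.2% CAGR from 2023 (5 years).
    base_2023 = implied_base(73.4, 0.172, 5)
    print(f"Implied 2023 market size: ${base_2023:.1f}B")
```

Under these assumptions the quoted projection implies a 2023 market of about $33 billion, meaning the market would slightly more than double over the five-year period.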

Consumer demand for faster and more reliable mobile connectivity continues to surge, with global mobile data traffic expected to increase fourfold between 2022 and 2027. This escalating demand necessitates advanced antenna solutions like optimized phased arrays that can provide higher throughput, lower latency, and better coverage. The ability of phased arrays to electronically steer beams without mechanical movement makes them particularly valuable for modern telecommunication networks that must dynamically adapt to changing user distributions and traffic patterns.

Enterprise and industrial sectors represent another significant market segment for phased array technology. With the rise of Industry 4.0, smart manufacturing, and automated logistics, businesses require robust communication infrastructure that can support mission-critical applications. Phased array systems offer the reliability and performance needed for these demanding environments, driving adoption across various industrial verticals.

The rural and remote connectivity market presents substantial growth opportunities for phased array technology. Approximately 37% of the global population still lacks reliable internet access, creating a significant addressable market. Optimized phased arrays can help overcome the challenges of providing connectivity to these underserved areas by enabling more efficient use of spectrum resources and extending the range of base stations.

Emerging applications such as satellite internet constellations, including Starlink, OneWeb, and Project Kuiper, are creating new demand vectors for advanced phased array technology. These low Earth orbit (LEO) satellite networks require sophisticated ground terminals with electronically steerable antennas to maintain connections with satellites in motion, representing a rapidly growing market segment estimated to reach $18.7 billion by 2026.

Government initiatives worldwide to bridge the digital divide and enhance national communication infrastructure are providing additional market momentum. Many countries have allocated significant funding for telecommunications infrastructure development, with the United States alone committing over $65 billion through the Infrastructure Investment and Jobs Act specifically for broadband expansion, creating substantial opportunities for advanced antenna technologies.

Current Phased Array Implementation Challenges

Despite significant advancements in phased array technology for telecommunications infrastructure, several critical challenges persist that impede optimal implementation and performance. The miniaturization of phased array systems remains problematic, particularly when balancing size reduction with maintaining high performance characteristics. Current designs struggle to achieve the necessary power efficiency while maintaining compact form factors required for modern telecommunications deployments, especially in dense urban environments.

Thermal management presents another significant hurdle, as the concentrated power in smaller arrays generates substantial heat that can degrade performance and reduce component lifespan. Existing cooling solutions often add bulk and complexity to systems that ideally should be streamlined and maintenance-free. This challenge becomes particularly acute in outdoor installations where environmental conditions vary widely and passive cooling may be insufficient.

Cost factors continue to limit widespread adoption, with high-quality phase shifters, amplifiers, and control circuitry representing substantial portions of system budgets. The precision manufacturing required for millimeter-wave components operating at frequencies used in 5G and beyond drives costs higher, creating barriers to deployment scale necessary for comprehensive network coverage.

Calibration and synchronization across large arrays present ongoing technical difficulties. As the number of elements increases to support higher gain and more precise beamforming, maintaining phase coherence becomes substantially more difficult, because each added element contributes its own phase and amplitude errors that must be measured and corrected. Current calibration techniques often require significant downtime or specialized equipment, reducing operational efficiency.
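To illustrate why phase coherence matters, the following sketch estimates how much boresight gain an array loses when each element carries a residual phase error. It assumes independent, zero-mean Gaussian phase errors and equal amplitudes, which is a common textbook simplification rather than a model of any particular product; all function names are hypothetical.

```python
import cmath
import math
import random


def expected_gain_loss_db(sigma_rad: float, n_elements: int) -> float:
    """Closed-form expected power loss for i.i.d. Gaussian phase errors."""
    coherent = math.exp(-sigma_rad ** 2)
    # Coherent part plus a small incoherent floor that shrinks with array size.
    relative_power = coherent + (1.0 - coherent) / n_elements
    return -10.0 * math.log10(relative_power)


def simulated_gain_loss_db(sigma_rad: float, n_elements: int,
                           trials: int = 2000, seed: int = 0) -> float:
    """Monte Carlo estimate of the same loss, used to cross-check the formula."""
    rng = random.Random(seed)
    total_power = 0.0
    for _ in range(trials):
        field = sum(cmath.exp(1j * rng.gauss(0.0, sigma_rad))
                    for _ in range(n_elements))
        total_power += abs(field) ** 2
    mean_relative_power = total_power / trials / n_elements ** 2
    return -10.0 * math.log10(mean_relative_power)


if __name__ == "__main__":
    for sigma_deg in (5.0, 10.0, 20.0):
        sigma = math.radians(sigma_deg)
        print(sigma_deg,
              round(expected_gain_loss_db(sigma, 64), 3),
              round(simulated_gain_loss_db(sigma, 64), 3))
```

In this model the loss depends mainly on the RMS phase error rather than on array size, which is why calibration accuracy per element is the quantity to control as arrays grow.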

Interference management capabilities in existing phased array systems frequently fall short of requirements in congested spectrum environments. The ability to dynamically adapt to changing interference patterns while maintaining desired signal quality remains limited by processing capabilities and algorithm sophistication in current implementations.
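One simple form of interference adaptation is null steering: adjusting the element weights so the array response vanishes toward a known interferer while the main beam stays on the desired user. The sketch below does this for a uniform linear array by projecting the interferer direction out of the target steering vector. It assumes a single interferer with a known angle and ideal isotropic elements; production systems typically estimate these quantities from received data, and the function names here are illustrative.

```python
import cmath
import math


def steering_vector(n: int, spacing_wl: float, angle_deg: float) -> list[complex]:
    """Steering vector of an n-element uniform linear array (spacing in wavelengths)."""
    psi = 2.0 * math.pi * spacing_wl * math.sin(math.radians(angle_deg))
    return [cmath.exp(1j * psi * k) for k in range(n)]


def inner(a: list[complex], b: list[complex]) -> complex:
    """Hermitian inner product a^H b."""
    return sum(x.conjugate() * y for x, y in zip(a, b))


def null_steered_weights(n: int, spacing_wl: float,
                         target_deg: float, interferer_deg: float) -> list[complex]:
    """Target steering vector with its component along the interferer removed."""
    a_t = steering_vector(n, spacing_wl, target_deg)
    a_i = steering_vector(n, spacing_wl, interferer_deg)
    coeff = inner(a_i, a_t) / inner(a_i, a_i)
    return [t - coeff * i for t, i in zip(a_t, a_i)]


def response_db(weights: list[complex], spacing_wl: float, angle_deg: float) -> float:
    """Array response toward angle_deg, normalized to an ideal n-element array."""
    a = steering_vector(len(weights), spacing_wl, angle_deg)
    magnitude = abs(inner(weights, a)) / len(weights)
    return 20.0 * math.log10(max(magnitude, 1e-12))


if __name__ == "__main__":
    w = null_steered_weights(16, 0.5, target_deg=0.0, interferer_deg=20.0)
    print("toward target (dB):    ", round(response_db(w, 0.5, 0.0), 2))
    print("toward interferer (dB):", round(response_db(w, 0.5, 20.0), 2))
```

The projection costs a small amount of gain toward the target in exchange for a deep null; the closer the interferer sits to the main beam, the larger that penalty becomes.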

Power consumption continues to be a critical constraint, particularly for remote or battery-powered installations. The trade-off between power efficiency and performance creates design compromises that limit the practical application of advanced beamforming techniques in energy-constrained scenarios.

Manufacturing repeatability presents challenges in scaling production while maintaining consistent performance across units. Slight variations in component characteristics can lead to significant differences in array performance, requiring sophisticated quality control processes that add to production costs and complexity.

Software control systems for managing complex phased arrays often lack the necessary flexibility and user-friendly interfaces required for efficient network operation. The integration of phased array systems with existing network management infrastructure frequently requires custom solutions that increase implementation complexity and maintenance requirements.

Current Optimization Approaches for Telecommunication

01 Beamforming and beam steering optimization techniques

Various methods for optimizing beamforming and beam steering in phased array systems to improve directivity and signal quality. These techniques include adaptive algorithms that dynamically adjust phase and amplitude of array elements to maximize gain in desired directions while minimizing interference. Advanced optimization approaches incorporate machine learning and neural networks to predict optimal beam patterns based on environmental conditions and system requirements.

- Beamforming and Beam Steering Optimization: Optimization techniques for phased array systems focus on improving beamforming and beam steering capabilities. These methods involve adjusting phase shifters and amplitude controls to optimize radiation patterns, minimize sidelobes, and enhance directivity. Advanced algorithms are employed to calculate optimal phase and amplitude distributions across array elements, enabling precise beam control and improved signal quality in various applications including radar and communications systems.

- Antenna Element Configuration and Layout Optimization: This approach focuses on optimizing the physical arrangement and configuration of antenna elements within phased arrays. Techniques include determining optimal spacing between elements, array geometry (linear, planar, circular, etc.), and element positioning to minimize mutual coupling effects. These optimizations aim to improve array performance metrics such as gain, bandwidth, and coverage while reducing interference and maximizing efficiency in limited physical spaces.

- Computational Methods and Algorithms for Array Optimization: Advanced computational techniques are employed to optimize phased array performance. These include genetic algorithms, machine learning approaches, neural networks, and other computational intelligence methods that can efficiently search large solution spaces. These algorithms help solve complex multi-objective optimization problems in phased array design, balancing tradeoffs between power consumption, heat generation, cost, and performance parameters while meeting specific application requirements.

- Power Management and Efficiency Optimization: Techniques focused on optimizing power consumption and thermal management in phased array systems. This includes adaptive power allocation strategies, efficient amplifier designs, and power distribution networks that minimize losses. These approaches help extend battery life in portable systems, reduce cooling requirements, and improve overall system efficiency while maintaining required performance levels for target applications such as radar, communications, and sensing.

- Calibration and Error Compensation Techniques: Methods for improving phased array performance through advanced calibration and error compensation. These techniques address manufacturing variations, component aging, environmental effects, and other factors that can degrade array performance. Real-time monitoring systems, self-calibration procedures, and adaptive compensation algorithms help maintain optimal performance over time by adjusting for phase and amplitude errors, mutual coupling effects, and other system imperfections.
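The phase adjustment at the heart of these techniques can be sketched for the simplest case, a uniform linear array. The example below computes the progressive phase shift that points the main beam at a chosen angle and then evaluates the resulting array factor. It assumes ideal isotropic elements with equal amplitudes and no mutual coupling; the function names are hypothetical and not taken from any vendor toolchain.

```python
import cmath
import math


def steering_phases_deg(n_elements: int, spacing_wl: float,
                        steer_deg: float) -> list[float]:
    """Phase-shifter settings (degrees, wrapped modulo 360) for a uniform linear array.

    spacing_wl is the element spacing in wavelengths; steer_deg is measured
    from broadside.
    """
    step = -360.0 * spacing_wl * math.sin(math.radians(steer_deg))
    return [(k * step) % 360.0 for k in range(n_elements)]


def array_factor_db(phases_deg: list[float], spacing_wl: float,
                    look_deg: float) -> float:
    """Normalized array factor (dB) toward look_deg for the given phase settings."""
    n = len(phases_deg)
    psi = 2.0 * math.pi * spacing_wl * math.sin(math.radians(look_deg))
    total = sum(
        cmath.exp(1j * (k * psi + math.radians(p)))
        for k, p in enumerate(phases_deg)
    )
    return 20.0 * math.log10(max(abs(total) / n, 1e-12))


if __name__ == "__main__":
    phases = steering_phases_deg(8, 0.5, steer_deg=30.0)
    for angle in range(-90, 91, 15):
        print(angle, round(array_factor_db(phases, 0.5, angle), 1))
```

Amplitude tapering, sidelobe control, and the learning-based methods mentioned above all build on this basic relationship between element phase and beam direction.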

02 Element configuration and layout optimization

Optimization of phased array element configurations, spacing, and geometric arrangements to enhance array performance. This includes determining optimal element positions to reduce sidelobes, minimize mutual coupling effects, and improve overall radiation efficiency. Techniques involve computational methods for analyzing and optimizing array geometries based on specific application requirements and frequency ranges.

03 Power efficiency and thermal management optimization

Methods for optimizing power consumption and thermal performance in phased array systems. These approaches include efficient power distribution networks, advanced cooling techniques, and power management algorithms that balance performance requirements with energy constraints. Optimization strategies focus on reducing heat generation while maintaining signal integrity and extending operational lifetime of array components.

04 Signal processing and calibration techniques

Advanced signal processing methods and calibration techniques for improving phased array performance. These include digital beamforming algorithms, phase and amplitude calibration procedures, and error compensation mechanisms to account for component variations and environmental factors. Optimization approaches focus on enhancing signal-to-noise ratio, reducing phase errors, and improving overall system accuracy.

05 Adaptive and reconfigurable array architectures

Design and optimization of adaptive and reconfigurable phased array architectures that can dynamically adjust their characteristics based on operational requirements. These systems incorporate programmable components, modular designs, and software-defined functionality to adapt to changing conditions and requirements. Optimization methods focus on maximizing flexibility while maintaining performance across multiple operational modes and frequency bands.

Leading Companies in Phased Array Technology

The phased array telecommunication infrastructure market is currently in a growth phase, characterized by increasing adoption across 5G networks and satellite communications. The market is expanding rapidly with projections exceeding $10 billion by 2025, driven by demand for high-speed connectivity and beamforming capabilities. Technologically, the field shows varying maturity levels with established players like Huawei, Ericsson, and Qualcomm leading commercial deployments, while research institutions such as California Institute of Technology and University of Electronic Science & Technology of China drive innovation. Companies including Raytheon, Samsung, and ViaSat have developed specialized applications, while emerging players like Chengdu Tianrui Xingtong Technology are focusing on millimeter wave solutions. The competitive landscape features telecommunications giants competing with specialized defense contractors and semiconductor manufacturers in this strategically important technology domain.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed advanced Massive MIMO phased array systems for 5G telecommunication infrastructure that utilize innovative beamforming algorithms and calibration techniques. Their solution incorporates active antenna units (AAUs) with up to 192 antenna elements, enabling precise beam steering and formation. Huawei's MetaAAU technology employs extremely large antenna arrays (ELAA) with innovative array architecture and signal processing algorithms that improve coverage by 3 dB while reducing power consumption by 30%. Their phased array systems utilize proprietary algorithms for channel estimation and precoding, which optimize spectral efficiency and minimize interference in dense urban environments. Additionally, Huawei has implemented digital twin technology for real-time monitoring and optimization of phased array performance across network deployments.

Strengths: Industry-leading integration of hardware and software solutions, superior energy efficiency, and extensive commercial deployment experience. Weaknesses: Potential geopolitical challenges affecting global market access and component supply chain vulnerabilities in certain markets.

Raytheon Co.

Technical Solution: Raytheon has pioneered advanced phased array technology for telecommunications infrastructure through their GaN-based active electronically scanned arrays (AESA). Their solution incorporates gallium nitride (GaN) semiconductor technology that enables higher power density, wider bandwidth, and improved thermal management compared to traditional materials. Raytheon's phased arrays feature sophisticated digital beamforming capabilities that allow for dynamic beam steering and multiple simultaneous beams, critical for modern telecommunication networks. Their systems employ proprietary calibration techniques that maintain phase coherence across thousands of elements, ensuring optimal performance in varying environmental conditions. Additionally, Raytheon has developed specialized cooling systems that address the thermal challenges of high-power phased arrays, extending operational lifetime and reliability in telecommunications infrastructure applications.

Strengths: Exceptional expertise in military-grade phased array technology that transfers well to commercial telecommunications, superior reliability in harsh environments, and advanced manufacturing capabilities. Weaknesses: Higher cost structure compared to purely commercial competitors and potentially longer development cycles due to rigorous testing protocols.

Key Patents in Phased Array Optimization

Phased array antenna having sub-arrays

Patent: WO2017121222A1

Innovation

- Tiled rectangular sub-arrays design that reduces periodicity of phase centers, enabling improved beam steering capabilities while maintaining cost efficiency.

- Common phase shifter connection across multiple sub-arrays, simplifying the control architecture while maintaining steering performance.

- Variable amplitude weighting across sub-arrays to approximate column weighting, providing enhanced beam forming capabilities.

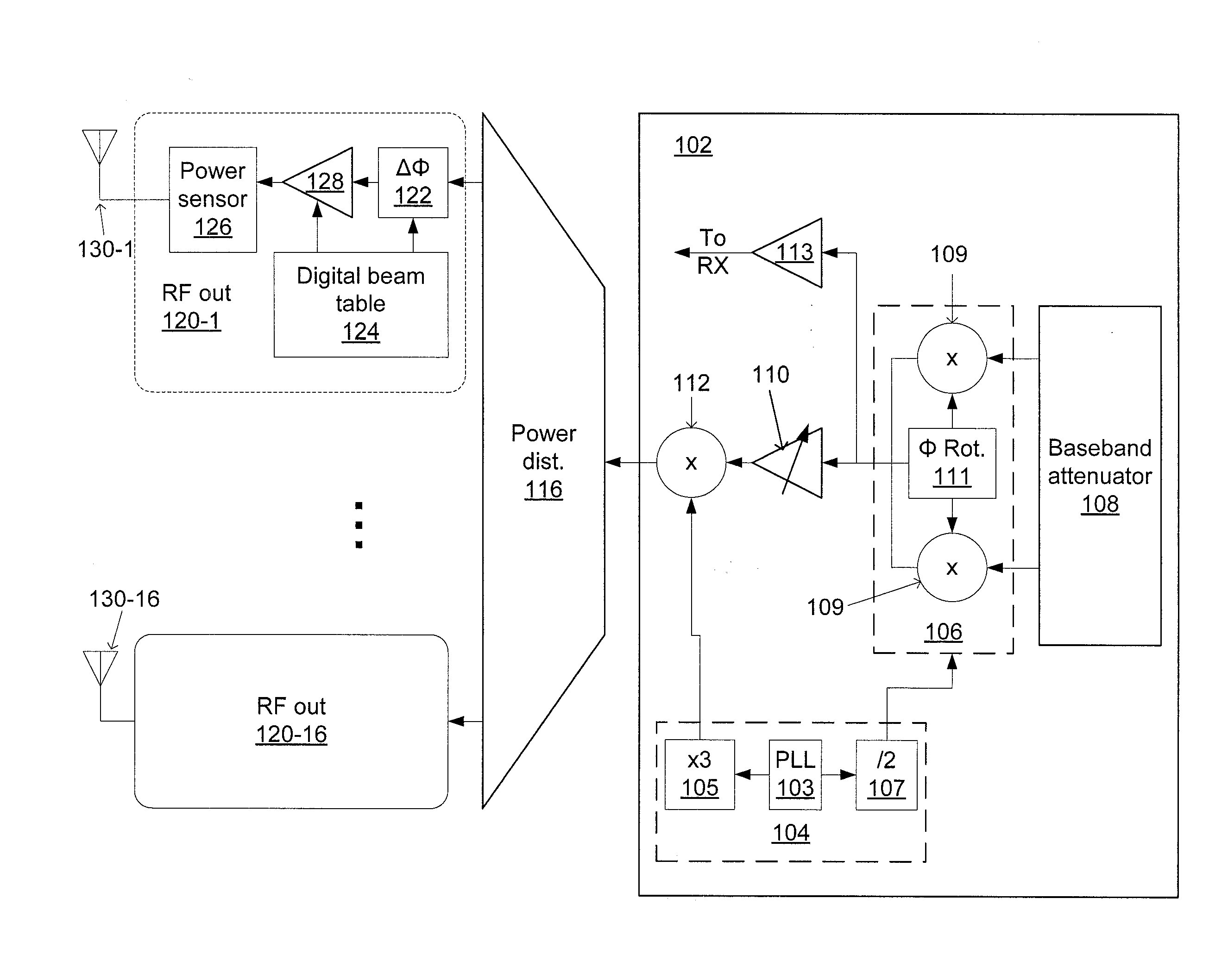

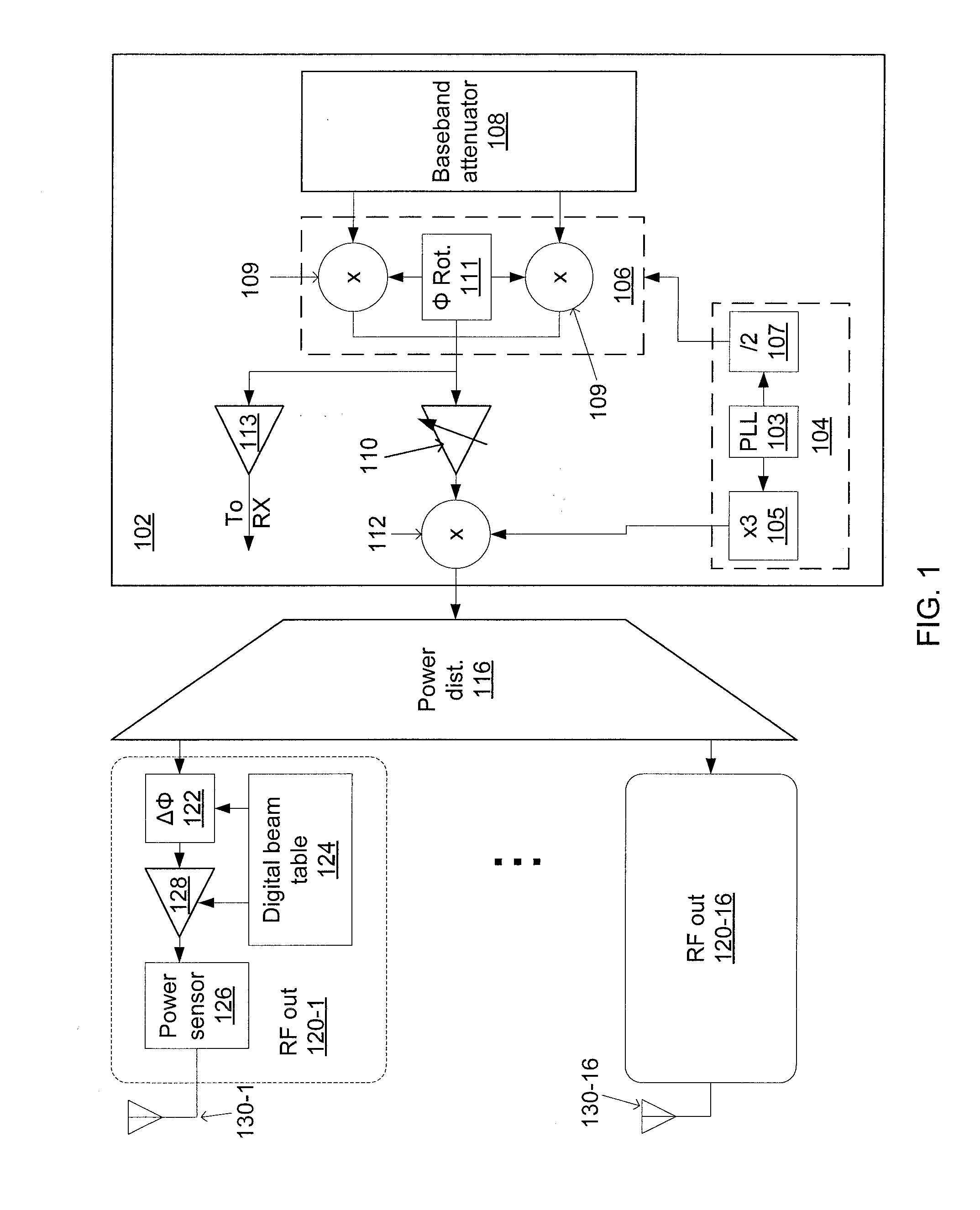

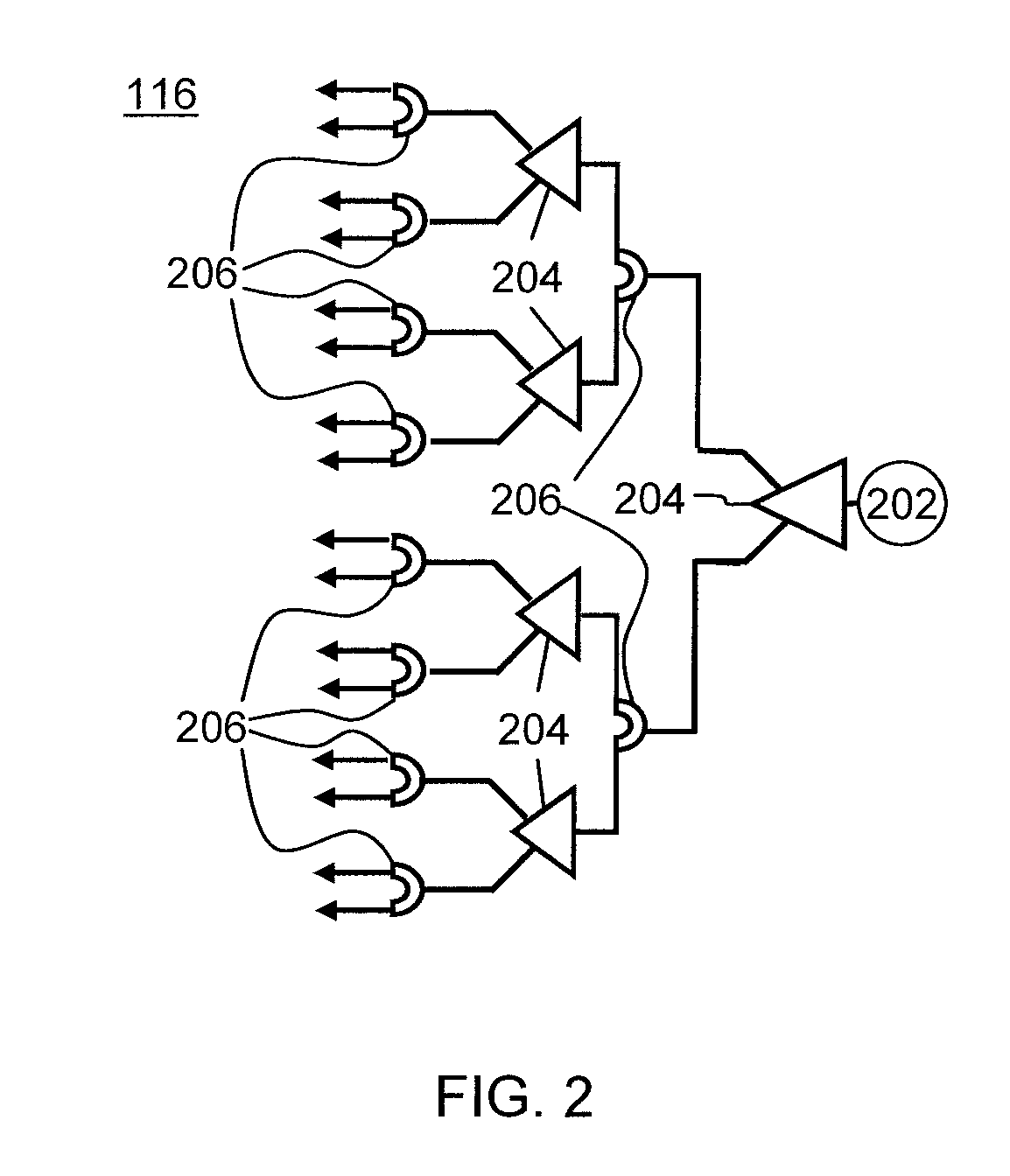

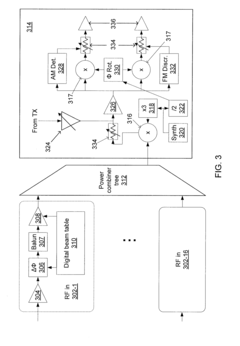

Phased-array transceiver for millimeter-wave frequencies

Patent: US20110063169A1 (Active)

Innovation

- A phased-array transmitter and receiver that combine active and passive phase-shifting and power-combining elements with a power distribution network and power monitoring systems. The design enables beam steering and compensation for deviations in power output, and is implemented as an integrated chip on a silicon substrate.

Spectrum Allocation and Regulatory Considerations

Spectrum allocation for phased array systems in telecommunications represents a critical regulatory framework that directly impacts deployment strategies and operational capabilities. The International Telecommunication Union (ITU) and national regulatory bodies like the Federal Communications Commission (FCC) in the United States and the European Conference of Postal and Telecommunications Administrations (CEPT) in Europe establish the guidelines governing spectrum usage. These frameworks determine which frequency bands are available for phased array applications in 5G and beyond, with significant variations across different regions.

The millimeter wave (mmWave) bands, particularly 24-29 GHz, 37-40 GHz, and 64-71 GHz, have been prioritized for 5G deployment utilizing phased array technology. However, regulatory approaches to these bands differ substantially between jurisdictions. For instance, the United States has adopted a more flexible approach to mmWave allocation, while European regulators have implemented more stringent power limitations and interference protection measures.
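The bands listed above translate directly into physical array dimensions, because element spacing scales with wavelength. The sketch below computes the free-space wavelength at the upper edge of each band and the largest element spacing that keeps grating lobes out of the visible region for a given maximum scan angle. The ±60° scan range is an assumption chosen for illustration, and the function names are hypothetical.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def wavelength_mm(freq_ghz: float) -> float:
    """Free-space wavelength in millimeters."""
    return SPEED_OF_LIGHT_M_S / (freq_ghz * 1e9) * 1e3


def max_spacing_mm(freq_ghz: float, max_scan_deg: float) -> float:
    """Upper bound on element spacing that avoids grating lobes up to max_scan_deg.

    Uses the standard condition d < wavelength / (1 + |sin(theta_max)|).
    """
    return wavelength_mm(freq_ghz) / (1.0 + abs(math.sin(math.radians(max_scan_deg))))


if __name__ == "__main__":
    # Band edges (GHz) as quoted in the text; the upper edge is the worst case.
    bands = [(24.0, 29.0), (37.0, 40.0), (64.0, 71.0)]
    for low, high in bands:
        print(f"{low:g}-{high:g} GHz: wavelength {wavelength_mm(high):.2f} mm, "
              f"max spacing for 60 deg scan {max_spacing_mm(high, 60.0):.2f} mm")
```

Spacing of a few millimeters is what makes dense mmWave arrays physically compact, and it is also why manufacturing tolerances become tight at these frequencies.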

Regulatory considerations extend beyond mere frequency allocation to encompass radiation exposure limits, interference management protocols, and international coordination requirements. Phased array systems must comply with specific power spectral density (PSD) limitations and electromagnetic field (EMF) exposure guidelines, which can vary by up to 30% between different regulatory domains.

Cross-border coordination presents additional complexity, particularly for telecommunications infrastructure deployed in border regions. The ITU Radio Regulations provide the framework for international coordination, but bilateral agreements often supplement these with more specific provisions for phased array deployments. These agreements typically address issues such as maximum allowable field strength at borders and coordination zones extending 15-50 kilometers from international boundaries.

Dynamic spectrum access (DSA) and spectrum sharing frameworks are emerging as important regulatory innovations that could significantly enhance phased array optimization. These approaches allow for more efficient spectrum utilization through technologies like cognitive radio and automated frequency coordination. The Citizens Broadband Radio Service (CBRS) in the United States represents an early implementation of this approach, utilizing a three-tiered access model that could serve as a template for future phased array spectrum allocation.
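The three-tiered model can be pictured as a priority ordering among users requesting the same channel. The sketch below is a deliberately simplified illustration of that ordering, using the CBRS tier names (Incumbent Access, Priority Access, General Authorized Access). The `Request` type and `resolve` function are hypothetical; a real Spectrum Access System also accounts for geography, interference protection, and licensing rules that are not modeled here.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """CBRS access tiers; a lower value means higher priority."""
    INCUMBENT = 0
    PRIORITY_ACCESS = 1
    GENERAL_AUTHORIZED = 2


@dataclass(frozen=True)
class Request:
    user: str
    tier: Tier
    channel: int


def resolve(requests: list[Request]) -> dict[int, Request]:
    """Grant each channel to the highest-priority requester (first come wins ties)."""
    grants: dict[int, Request] = {}
    for req in requests:
        current = grants.get(req.channel)
        if current is None or req.tier < current.tier:
            grants[req.channel] = req
    return grants


if __name__ == "__main__":
    demo = [
        Request("small-cell-a", Tier.GENERAL_AUTHORIZED, channel=1),
        Request("operator-b", Tier.PRIORITY_ACCESS, channel=1),
        Request("small-cell-c", Tier.GENERAL_AUTHORIZED, channel=2),
    ]
    for channel, winner in sorted(resolve(demo).items()):
        print(channel, winner.user, winner.tier.name)
```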

Regulatory timelines for spectrum auctions and licensing procedures directly impact deployment schedules for phased array systems. The typical timeline from spectrum identification to commercial deployment spans 3-7 years, creating significant planning challenges for telecommunications infrastructure developers. Forward-looking regulatory roadmaps that provide visibility into future spectrum availability are therefore essential for optimizing phased array implementation strategies.

Energy Efficiency and Environmental Impact

The energy consumption of phased array systems in telecommunication infrastructure is a significant operational cost and environmental concern. Current phased array systems for 5G and emerging 6G networks typically consume between 200 and 500 watts per antenna array, with larger massive MIMO configurations potentially requiring several kilowatts. This demand raises operating expenses and adds to the carbon footprint of the wider information and communications technology (ICT) sector, which is commonly estimated to account for approximately 2-3% of global greenhouse gas emissions.
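As a rough sense of scale, the per-array power figures above can be converted into annual energy. The sketch below assumes continuous operation at a constant draw, which overstates consumption for arrays that idle at night; the `duty` parameter and the electricity price argument are hypothetical knobs added for illustration.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years

def annual_energy_kwh(power_w, duty=1.0):
    """Energy drawn in one year by a load of power_w watts.

    duty is the fraction of time the load runs at that power.
    """
    return power_w * duty * HOURS_PER_YEAR / 1000.0

def annual_cost(power_w, price_per_kwh, duty=1.0):
    """Yearly energy cost in whatever currency price_per_kwh uses."""
    return annual_energy_kwh(power_w, duty) * price_per_kwh

if __name__ == "__main__":
    for watts in (200, 500):
        print(f"{watts} W -> {annual_energy_kwh(watts):.0f} kWh/year")
```

Under these assumptions a single array draws between 1,752 and 4,380 kWh per year, before cooling and backhaul are counted.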

Recent advancements in semiconductor technology have enabled more energy-efficient phased array designs. Power amplifiers based on gallium nitride (GaN) and silicon germanium (SiGe) have demonstrated 30-40% better efficiency than traditional silicon-based components. These materials allow for higher power density and better thermal management, reducing overall energy requirements while maintaining performance standards.

Adaptive power management techniques represent another promising approach to energy optimization. Dynamic element activation strategies, which selectively power only the necessary elements of the array based on traffic demands and coverage requirements, have shown potential energy savings of up to 45% during non-peak hours. This approach maintains service quality while significantly reducing unnecessary power consumption.
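A minimal sketch of dynamic element activation follows. It assumes a deliberately simple power model (a fixed overhead plus a constant draw per active element) and a linear mapping from traffic load to active element count. All parameter values and function names are hypothetical, and the resulting savings figure is illustrative rather than a reproduction of the 45% cited above.

```python
import math

def active_elements(total, load, min_active=16):
    """Number of elements to power for a load fraction in [0, 1].

    A floor of min_active keeps a usable beam even at very low load.
    """
    wanted = math.ceil(total * load)
    return max(min_active, min(total, wanted))

def array_power_w(active, per_element_w=1.0, overhead_w=144.0):
    """Assumed power model: fixed overhead plus per-element draw."""
    return overhead_w + active * per_element_w

def savings_fraction(total, load, **power_kwargs):
    """Fractional power saved versus running every element."""
    full = array_power_w(total, **power_kwargs)
    reduced = array_power_w(active_elements(total, load), **power_kwargs)
    return 1.0 - reduced / full

def array_gain_change_db(active, total):
    """Ideal array gain change when only `active` of `total` elements radiate."""
    return 10 * math.log10(active / total)

if __name__ == "__main__":
    total, load = 256, 0.25
    n = active_elements(total, load)
    print(f"active elements: {n}")
    print(f"power saved: {savings_fraction(total, load):.0%}")
    print(f"array gain change: {array_gain_change_db(n, total):.1f} dB")
```

The last function makes the trade-off explicit: powering a quarter of the elements costs about 6 dB of ideal array gain, so element shutdown suits periods when coverage margin is available, such as low-traffic hours.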

The environmental impact of phased array systems extends beyond operational energy use to include manufacturing and end-of-life considerations. The production of specialized semiconductor components involves energy-intensive processes and potentially hazardous materials. Life cycle assessments indicate that manufacturing accounts for approximately 15-20% of a phased array system's total carbon footprint, highlighting the importance of sustainable production methods and material selection.

Thermal management innovations are proving critical for both energy efficiency and environmental sustainability. Passive cooling designs that reduce or eliminate the need for energy-intensive active cooling systems can decrease overall power consumption by 10-15%. Additionally, advanced thermal interface materials and optimized heat sink designs are extending component lifespans, reducing electronic waste generation.

Renewable energy integration presents a compelling opportunity for telecommunication infrastructure. Field trials combining phased array systems with on-site solar generation have demonstrated the potential to offset 30-60% of energy requirements, depending on geographical location. This approach not only reduces operational costs but also enhances network resilience during grid outages, providing critical communication capabilities during emergencies.
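The dependence on location can be illustrated with a simple daily energy balance. The sketch below assumes a constant site load and estimates solar yield as panel rating times peak sun hours times a derating factor. The 1.5 kW panel size, the 0.8 derate, and the peak sun hour values are hypothetical inputs chosen for illustration, not data from the field trials mentioned above.

```python
def solar_offset_fraction(load_w, panel_kw, peak_sun_hours, derate=0.8):
    """Fraction of daily site energy covered by on-site solar (capped at 1).

    load_w:         constant site load in watts
    panel_kw:       rated panel capacity in kilowatts
    peak_sun_hours: equivalent full-sun hours per day at the site
    derate:         lumped losses (inverter, wiring, soiling, temperature)
    """
    daily_load_kwh = load_w * 24 / 1000.0
    daily_solar_kwh = panel_kw * peak_sun_hours * derate
    return min(1.0, daily_solar_kwh / daily_load_kwh)

if __name__ == "__main__":
    for hours in (3, 4, 6):
        share = solar_offset_fraction(load_w=500, panel_kw=1.5, peak_sun_hours=hours)
        print(f"{hours} peak sun hours -> {share:.0%} of daily energy")
```

The balance ignores storage, so it describes energy offset over a day rather than the ability to ride through a grid outage, which additionally depends on battery capacity.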

Looking forward, the industry is moving toward holistic energy optimization approaches that consider the entire network architecture. Software-defined networking techniques that dynamically allocate resources based on real-time demands show promise for reducing system-wide energy consumption by an additional 20-25%, representing the next frontier in sustainable telecommunication infrastructure development.
