How High-Throughput Experimentation Facilitates Process Optimization

SEP 25, 2025 | 9 MIN READ

High-Throughput Experimentation Background and Objectives

High-Throughput Experimentation (HTE) has emerged as a transformative approach in the field of process optimization, representing a significant shift from traditional experimental methodologies. Originating in the pharmaceutical industry during the 1990s for drug discovery, HTE has evolved substantially over the past three decades to encompass various industrial sectors including chemicals, materials science, and energy production.

The fundamental principle of HTE involves conducting multiple experiments simultaneously under precisely controlled conditions, utilizing miniaturized reaction vessels and automated systems. This approach stands in stark contrast to conventional sequential experimentation, which is inherently time-consuming and resource-intensive.

Historical development of HTE technology shows a clear trajectory from simple parallel synthesis platforms to sophisticated integrated systems incorporating advanced robotics, microfluidics, and real-time analytics. The integration of artificial intelligence and machine learning algorithms has further accelerated this evolution, enabling more intelligent experimental design and data interpretation.

Current technological trends in HTE focus on increasing parallelization capabilities, enhancing analytical precision, and developing more versatile platforms adaptable to diverse process conditions. The convergence of HTE with digital technologies has created unprecedented opportunities for data-driven process optimization across industries.

The primary objectives of implementing HTE in process optimization are multifaceted. First, it aims to dramatically reduce the time required for experimental cycles, potentially compressing months of traditional experimentation into days or even hours. Second, it seeks to minimize material consumption through miniaturization, addressing both economic and environmental sustainability concerns.

Additionally, HTE endeavors to expand the experimental parameter space that can be practically explored, allowing for more comprehensive optimization studies that consider multiple variables simultaneously. This capability is particularly valuable for complex processes where interactions between parameters significantly influence outcomes.

From a strategic perspective, HTE technology aims to accelerate innovation cycles, enabling more rapid product development and process improvement. It also supports more robust process understanding by generating rich datasets that reveal fundamental relationships between process variables and performance metrics.

Looking forward, the evolution of HTE is expected to continue toward greater integration with computational modeling, creating hybrid approaches that combine experimental data with theoretical predictions. The ultimate goal is to establish predictive frameworks that can substantially reduce empirical testing requirements while maintaining or improving optimization outcomes.

Market Demand Analysis for Process Optimization Technologies

The global market for process optimization technologies has witnessed substantial growth in recent years, driven by increasing pressure on manufacturers to enhance efficiency, reduce costs, and improve product quality. High-Throughput Experimentation (HTE) has emerged as a critical enabler in this landscape, with the market for HTE solutions expected to grow at a compound annual growth rate of 8.5% through 2028.

Industries including pharmaceuticals, chemicals, materials science, and biotechnology represent the primary demand centers for process optimization technologies. The pharmaceutical sector alone accounts for approximately 35% of the total market share, as companies seek to accelerate drug discovery and development processes while reducing the associated costs and risks.

Chemical manufacturers are increasingly adopting HTE methodologies to optimize reaction conditions, catalyst screening, and formulation development. This sector has seen a 12% year-over-year increase in HTE technology adoption since 2020, reflecting the growing recognition of its value in maintaining competitive advantage.

The demand for process optimization technologies is particularly pronounced in regions with high manufacturing costs, such as North America and Western Europe, where labor expenses and regulatory requirements create strong incentives for efficiency improvements. However, emerging markets in Asia-Pacific, particularly China and India, are showing the fastest growth rates in adoption as their manufacturing sectors mature and face similar competitive pressures.

A key market driver is the increasing complexity of products and processes across industries. As formulations become more sophisticated and quality standards more stringent, traditional trial-and-error approaches to process development become prohibitively time-consuming and expensive. HTE offers a solution by enabling parallel experimentation and data-rich decision making.

Sustainability requirements are also fueling market growth, with companies under pressure to reduce waste, energy consumption, and environmental impact. Process optimization technologies that enable more efficient use of resources are seeing heightened demand, with 78% of surveyed manufacturers citing sustainability as a major factor in their technology investment decisions.

The COVID-19 pandemic has accelerated market growth by highlighting vulnerabilities in global supply chains and manufacturing processes. Organizations have responded by increasing investments in technologies that enhance resilience and adaptability, with HTE being recognized as a key enabler of rapid process development and optimization.

Current State and Challenges in HTE Implementation

High-throughput experimentation (HTE) has emerged as a transformative approach in process optimization across various industries, yet its implementation faces significant challenges. Currently, HTE adoption varies considerably across sectors, with pharmaceutical and materials science industries leading implementation while other fields lag behind. Advanced HTE platforms now integrate robotics, microfluidics, and parallel processing capabilities, enabling thousands of experiments daily compared to traditional methods that might achieve only dozens.

Despite technological advancements, standardization remains a critical challenge. The lack of universally accepted protocols and methodologies creates barriers to reproducibility and cross-laboratory validation. This standardization gap is particularly evident in data formatting, experimental design parameters, and analytical methods, hindering the broader scientific community's ability to build upon existing research.

Infrastructure requirements present another substantial hurdle. Fully functional HTE systems demand significant capital investment, specialized laboratory space, and technical expertise. Many organizations, particularly small to medium enterprises and academic institutions, struggle to justify these costs despite the long-term efficiency gains. The specialized nature of HTE equipment often necessitates custom solutions, further increasing implementation barriers.

Data management represents perhaps the most pressing contemporary challenge. HTE generates massive datasets that exceed traditional laboratory information management systems' capabilities. Organizations implementing HTE frequently encounter bottlenecks in data processing, storage, and analysis rather than in experimental throughput itself. The integration of machine learning and artificial intelligence for data interpretation remains inconsistent across the field, with many practitioners still relying on conventional statistical methods ill-suited for high-dimensional data.

Workforce adaptation constitutes another significant obstacle. The transition from traditional experimentation to HTE requires substantial retraining and often a cultural shift within research organizations. Many scientists trained in conventional methodologies express resistance to automation-heavy approaches, perceiving them as "black box" systems that diminish scientific intuition and hands-on skills.

Regulatory frameworks have not kept pace with HTE advancement, particularly in highly regulated industries like pharmaceuticals and food production. Regulatory bodies often require validation using traditional methods alongside HTE approaches, effectively doubling workloads and undermining efficiency gains. This regulatory uncertainty discourages some organizations from fully committing to HTE implementation despite its proven benefits.

Geographically, HTE development and implementation remain concentrated in North America, Western Europe, and parts of East Asia, creating significant disparities in global access to these technologies. This concentration reinforces existing technological divides and limits the diversity of problems addressed through HTE methodologies.

Current HTE Solutions for Process Optimization

01 Automated experimental design and optimization

High-throughput experimentation processes can be optimized through automated experimental design systems that use machine learning algorithms to identify optimal parameters and conditions. These systems can analyze large datasets from previous experiments, predict outcomes, and suggest new experimental conditions to maximize efficiency and success rates. The automation of experimental design reduces human bias and accelerates the discovery process by systematically exploring the parameter space.

- Automated experimental design and workflow optimization: High-throughput experimentation processes can be optimized through automated experimental design systems that intelligently plan and execute experiments. These systems use algorithms to determine optimal experimental conditions, reduce the number of experiments needed, and maximize information gain. Automated workflows integrate sample preparation, analysis, and data processing to increase efficiency and throughput while maintaining experimental quality.

- Machine learning and AI for process optimization: Machine learning and artificial intelligence techniques are applied to high-throughput experimentation to predict outcomes, identify patterns, and optimize experimental parameters. These approaches analyze large datasets from previous experiments to guide future experimental designs, identify promising research directions, and accelerate discovery processes. AI-driven systems can continuously learn from experimental results to improve prediction accuracy and experimental efficiency.

- Parallel processing and miniaturization techniques: Miniaturization and parallel processing technologies enable simultaneous execution of multiple experiments, significantly increasing throughput. Microfluidic systems, lab-on-a-chip devices, and microplate technologies allow for reduced sample volumes, faster reaction times, and higher experimental density. These approaches minimize resource consumption while maximizing data generation, enabling more comprehensive exploration of experimental parameters.

- Real-time monitoring and adaptive experimental control: Real-time monitoring systems continuously track experimental progress and results, allowing for adaptive control of experimental conditions. These systems can automatically adjust parameters based on interim results, terminate unsuccessful experiments early, or extend promising ones. Integration of sensors, imaging systems, and analytical instruments enables immediate feedback loops that optimize resource allocation and experimental outcomes.

- Data management and analysis infrastructure: Specialized data management systems are essential for handling the large volumes of data generated by high-throughput experimentation. These infrastructures include databases optimized for experimental data, visualization tools for identifying trends, and statistical analysis frameworks for extracting meaningful insights. Effective data management enables researchers to quickly identify successful experimental conditions, track historical performance, and make data-driven decisions for process optimization.
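As a rough illustration of how automated design and plate-based parallelization fit together, the sketch below enumerates a full factorial design and assigns each run to a well of a standard 96-well plate. The factor names, levels, and helper functions are hypothetical and chosen only so that the design fills one plate exactly; they do not describe any particular vendor's software.

```python
from itertools import product
from string import ascii_uppercase

# Hypothetical factors and levels, chosen for illustration only (4 x 3 x 4 x 2 = 96 runs).
FACTORS = {
    "temperature_C": [40, 60, 80, 100],
    "catalyst_mol_pct": [0.5, 1.0, 2.0],
    "solvent": ["MeCN", "DMF", "toluene", "EtOH"],
    "base_equiv": [1.0, 2.0],
}


def well_names(rows=8, cols=12):
    """Return well labels A1..H12 in row-major order."""
    return [f"{ascii_uppercase[r]}{c + 1}" for r in range(rows) for c in range(cols)]


def full_factorial(factors):
    """Enumerate every combination of factor levels as a list of dicts."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


def assign_to_plate(runs, rows=8, cols=12):
    """Map runs onto wells in order; fail loudly if the design does not fit."""
    wells = well_names(rows, cols)
    if len(runs) > len(wells):
        raise ValueError(f"{len(runs)} runs do not fit on a {rows}x{cols} plate")
    return dict(zip(wells, runs))


plate = assign_to_plate(full_factorial(FACTORS))
print(len(plate))      # number of occupied wells
print(plate["A1"])     # conditions for the first well
```

In practice the run order would usually be randomized before assignment so that positional effects on the plate (edge wells, temperature gradients) are not confounded with the factors under study.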

02 Parallel processing and miniaturization techniques

Miniaturization and parallel processing technologies enable simultaneous execution of multiple experiments, significantly increasing throughput. These techniques involve the use of microfluidic devices, microwell plates, and other miniaturized reaction vessels that reduce reagent consumption while allowing for greater experimental density. Advanced robotics and liquid handling systems facilitate precise dispensing and manipulation of small volumes, enabling thousands of reactions to be conducted in parallel.

03 Data management and analysis systems

Specialized software platforms and data management systems are essential for handling the massive amounts of data generated in high-throughput experimentation. These systems integrate experimental design, execution, data collection, and analysis into a unified workflow. Advanced analytics tools, including artificial intelligence and machine learning algorithms, can identify patterns and correlations in complex datasets that might be missed by traditional analysis methods, leading to more efficient process optimization.

04 Real-time monitoring and feedback control

Real-time monitoring systems that provide immediate feedback on experimental progress enable dynamic optimization of high-throughput processes. These systems use sensors, spectroscopic methods, and imaging technologies to continuously track reaction parameters and outcomes. The integration of feedback control mechanisms allows for automated adjustments to experimental conditions based on ongoing results, reducing failed experiments and improving overall efficiency.

05 Integration of computational modeling with experimental workflows

Combining computational modeling with experimental workflows creates a synergistic approach to high-throughput experimentation optimization. Predictive models can guide experimental design by simulating outcomes before physical experiments are conducted. This integration reduces the number of experiments needed by focusing resources on the most promising conditions. As experimental data is collected, it feeds back into the models, continuously improving their predictive power and further optimizing the experimental process.
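The feedback loop between model and experiment can be sketched in a few lines. The example below is a deliberately simple, greedy version: it fits a quadratic surrogate to the results collected so far and picks the untested temperature with the highest predicted yield. The `run_experiment` function is a hypothetical stand-in for a real reactor run, and the yield curve inside it is invented for illustration. Production systems more commonly use Bayesian optimization, which also accounts for model uncertainty.

```python
import numpy as np


def run_experiment(temperature_c):
    """Hypothetical stand-in for a real run: a made-up yield curve peaking at 85 degC."""
    return 90.0 - 0.02 * (temperature_c - 85.0) ** 2


def suggest_next(tested, candidates):
    """Fit a quadratic surrogate and return the untested candidate with the best prediction."""
    x = np.array(list(tested))
    y = np.array(list(tested.values()))
    coeffs = np.polyfit(x, y, deg=2)
    untested = [c for c in candidates if c not in tested]
    predictions = np.polyval(coeffs, untested)
    return untested[int(np.argmax(predictions))]


# Candidate temperatures from 40 to 130 degC in 5 degC steps (assumed grid).
candidates = [float(t) for t in range(40, 131, 5)]

# Seed the model with three initial runs, then let it choose three more.
tested = {t: run_experiment(t) for t in (40.0, 70.0, 130.0)}
for _ in range(3):
    next_temperature = suggest_next(tested, candidates)
    tested[next_temperature] = run_experiment(next_temperature)

best_temperature = max(tested, key=tested.get)
print(best_temperature, round(tested[best_temperature], 1))
```

Six runs in total are enough here to locate the optimum on a 19-point grid, which is the kind of saving the model-guided approach aims for. With noisy real data the surrogate would need more seed points and some form of exploration.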

Key Industry Players in HTE Technology Development

High-throughput experimentation (HTE) is currently in a growth phase within process optimization, with the market expanding rapidly as industries seek more efficient R&D methodologies. The global HTE market is estimated to reach several billion dollars by 2025, driven by pharmaceutical, chemical, and materials science applications. Technologically, the field shows varying maturity levels across different sectors. IBM, Bayer Technology Services, and Bio-Rad Laboratories have established sophisticated automated platforms, while newer entrants like Recursion Pharmaceuticals are leveraging AI integration. Google and Intel are advancing computational aspects of HTE, while HighRes Biosolutions specializes in robotics infrastructure. Academic institutions including Tsinghua University and Zhejiang University are contributing fundamental research, creating a competitive landscape balanced between established industrial players and innovative startups.

Bayer Technology Services GmbH

Technical Solution: Bayer Technology Services has developed a comprehensive high-throughput experimentation platform for chemical process optimization that integrates hardware automation with sophisticated software tools. Their system employs parallel reactor arrays capable of operating under industrially relevant conditions, with temperature control from -40°C to 250°C and pressures up to 100 bar[1]. The platform incorporates automated sampling and analysis workflows using online HPLC, GC, and spectroscopic techniques for rapid data generation. A key innovation is their ChemSpeed workflow management system that orchestrates complex experimental sequences while ensuring data integrity. Bayer's approach emphasizes scalability, with experimental designs specifically developed to facilitate translation from laboratory to production scale[3]. Their data analytics platform incorporates multivariate statistical tools and mechanistic modeling to extract process understanding from experimental results. The system has demonstrated particular success in catalytic process optimization, where it has enabled systematic exploration of catalyst formulations, reaction conditions, and process parameters. Bayer reports acceleration of process development timelines by 40-60% while simultaneously improving process robustness and sustainability metrics through more efficient resource utilization.

Strengths: Robust engineering enabling experimentation under industrially relevant conditions; sophisticated workflow management ensuring experimental reproducibility; strong focus on scalability facilitating translation to manufacturing. Weaknesses: Significant infrastructure requirements limiting deployment flexibility; complex system operation requiring specialized training; primarily optimized for chemical rather than biological process applications.

HighRes Biosolutions, Inc.

Technical Solution: HighRes Biosolutions has developed a flexible high-throughput experimentation platform specifically designed for life sciences applications. Their system utilizes modular automation components that can be reconfigured to address diverse process optimization challenges. The platform incorporates precision liquid handling robots capable of nanoliter dispensing, integrated incubation systems with environmental control, and automated sampling mechanisms[1]. A key innovation is their MicroStar scheduling software that orchestrates complex experimental workflows while maximizing equipment utilization. The system interfaces with various analytical instruments including plate readers, flow cytometers, and mass spectrometers to generate comprehensive datasets[3]. HighRes's platform employs a microfluidic approach for certain applications, enabling rapid iteration through experimental conditions with minimal reagent consumption. Their data management solution integrates with laboratory information management systems (LIMS) to ensure data integrity and traceability throughout the optimization process. The company has demonstrated particular success in bioprocess optimization, where their platform has been used to optimize cell culture conditions, purification parameters, and formulation characteristics for biopharmaceuticals.

Strengths: Exceptional flexibility and reconfigurability allowing adaptation to diverse life science applications; sophisticated scheduling software maximizing experimental throughput; seamless integration with analytical instruments and data management systems. Weaknesses: Higher per-experiment costs compared to some competitors; requires significant laboratory footprint; complex integration requirements with existing laboratory infrastructure.

Core Technical Innovations in High-Throughput Platforms

High throughput research workflow

Patent | Inactive | US20110029439A1

Innovation

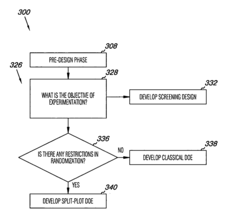

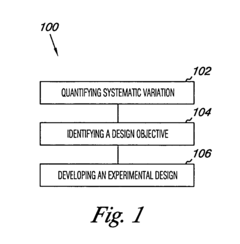

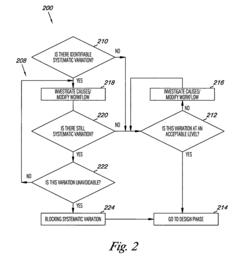

- The method quantifies systematic variation through variance component analysis, identifies design objectives, and develops experimental designs (screening, split-plot, or classical) that account for that variation. Sources of variation are then modified to achieve statistically defensible results. The steps are implemented using computer-readable media and computing devices.
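To illustrate what variance component analysis means in an HTE setting, the sketch below estimates between-plate and within-plate variance from replicate measurements using the standard one-way random-effects ANOVA estimators for a balanced design. It is a generic textbook calculation, not the procedure claimed in the patent, and the plate names and yield values are invented.

```python
from statistics import mean


def variance_components(groups):
    """Estimate between- and within-group variance for a balanced one-way layout."""
    sizes = {len(values) for values in groups.values()}
    if len(sizes) != 1:
        raise ValueError("this sketch assumes the same number of replicates per group")
    n = sizes.pop()                      # replicates per group
    k = len(groups)                      # number of groups
    group_means = {name: mean(values) for name, values in groups.items()}
    grand_mean = mean(group_means.values())

    ss_between = n * sum((m - grand_mean) ** 2 for m in group_means.values())
    ss_within = sum(
        (v - group_means[name]) ** 2 for name, values in groups.items() for v in values
    )
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (k * (n - 1))

    # Method-of-moments estimates; a negative between-group estimate is truncated to zero.
    return {
        "within": ms_within,
        "between": max(0.0, (ms_between - ms_within) / n),
    }


# Hypothetical yields (%) for the same control reaction on three plates.
yields = {
    "plate_A": [70.0, 72.0, 71.0],
    "plate_B": [75.0, 77.0, 76.0],
    "plate_C": [68.0, 70.0, 69.0],
}

components = variance_components(yields)
share = components["between"] / (components["between"] + components["within"])
print(components, round(share, 3))
```

A large between-plate share, as in this invented data, would suggest that plate is a systematic source of variation and should be treated as a blocking factor in the design.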

Method for carrying out high throughput experiments

Patent | Inactive | EP1725326A1

Innovation

- A fast and targeted evaluation method using frequency statistics to rank influencing variables based on their impact on output variables, allowing for efficient experiment planning and optimization with minimal training and application effort, capable of handling large datasets and identifying interaction effects.

Data Management Strategies for High-Throughput Systems

High-throughput experimentation (HTE) systems generate massive volumes of data that require sophisticated management strategies to extract maximum value. Effective data management forms the backbone of successful HTE implementation, enabling organizations to transform raw experimental data into actionable insights for process optimization.

The foundation of any robust HTE data management strategy begins with automated data capture systems that minimize manual intervention. These systems typically incorporate laboratory information management systems (LIMS) integrated with experimental equipment to ensure seamless data flow from instruments to centralized databases. Real-time data acquisition not only reduces transcription errors but also accelerates the feedback loop essential for iterative optimization processes.

Data standardization represents another critical component, establishing consistent formats, nomenclature, and metadata structures across all experimental platforms. Standardized data schemas facilitate integration between different experimental modules and enable cross-experimental analysis that might otherwise be impossible. Organizations leading in HTE implementation typically develop custom ontologies specific to their process domains, ensuring that data remains contextually relevant and machine-interpretable.
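A standardized record can be as simple as a typed structure with a controlled vocabulary of quantities and units. The sketch below shows one possible shape; the field names, the `ALLOWED_UNITS` table, and the identifiers are assumptions made for illustration rather than an established HTE schema.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical controlled vocabulary: each quantity has exactly one accepted unit.
ALLOWED_UNITS = {"temperature": "degC", "pressure": "bar", "volume": "uL"}


@dataclass(frozen=True)
class Measurement:
    quantity: str
    value: float
    unit: str

    def __post_init__(self):
        expected = ALLOWED_UNITS.get(self.quantity)
        if expected is None:
            raise ValueError(f"unknown quantity: {self.quantity!r}")
        if self.unit != expected:
            raise ValueError(f"{self.quantity} must be recorded in {expected}, got {self.unit!r}")


@dataclass(frozen=True)
class ExperimentRecord:
    experiment_id: str
    plate_id: str
    well: str
    instrument: str
    conditions: tuple  # tuple of Measurement objects

    def to_json(self):
        """Serialize with sorted keys so identical records produce identical text."""
        return json.dumps(asdict(self), sort_keys=True)


record = ExperimentRecord(
    experiment_id="EXP-0001",
    plate_id="P-01",
    well="A1",
    instrument="hplc-1",
    conditions=(Measurement("temperature", 80.0, "degC"), Measurement("pressure", 5.0, "bar")),
)
print(record.to_json())
```

Rejecting a non-standard unit at record creation, rather than during later analysis, is what keeps data from different experimental modules directly comparable.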

Storage infrastructure for HTE systems must balance accessibility with security considerations. Cloud-based solutions have gained popularity due to their scalability and collaborative features, though many pharmaceutical and chemical companies maintain hybrid architectures that keep sensitive data on-premises while leveraging cloud computing for analysis. Regardless of architecture, effective data management strategies incorporate robust backup protocols and disaster recovery mechanisms to protect invaluable experimental data.

Data quality assurance protocols constitute an essential element of HTE data management. Automated validation routines can flag anomalous results, identify instrument drift, and detect potential experimental failures in real-time. Statistical process control methods applied to calibration standards and control samples ensure that experimental variations stem from intentional parameter changes rather than system instability.
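The statistical process control idea can be sketched with a basic Shewhart-style check: control limits are derived from a baseline of control-sample readings, and later readings outside those limits are flagged. The readings below are invented, and the three-sigma threshold is a common convention rather than a requirement.

```python
from statistics import mean, stdev


def control_limits(baseline, sigmas=3.0):
    """Return (lower, center, upper) limits from baseline control-sample readings."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - sigmas * spread, center, center + sigmas * spread


def flag_out_of_control(readings, limits):
    """Return the indices of readings that fall outside the control limits."""
    lower, _, upper = limits
    return [i for i, value in enumerate(readings) if not lower <= value <= upper]


# Hypothetical detector responses for a calibration standard during a stable period.
baseline = [100.2, 99.8, 100.1, 99.9, 100.0, 100.3, 99.7, 100.0]
limits = control_limits(baseline)

# Later readings of the same standard; the upward trend suggests instrument drift.
new_readings = [100.1, 99.9, 100.4, 100.9, 101.2]
flagged = flag_out_of_control(new_readings, limits)
print(limits, flagged)
```

A fuller implementation would add run rules (for example, several consecutive points on one side of the center line) so that gradual drift is caught before any single point crosses a limit.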

Advanced analytics capabilities transform raw experimental data into process insights through machine learning algorithms that can identify patterns across thousands of experiments. These systems often incorporate dimensionality reduction techniques to visualize complex parameter spaces and automated feature extraction to identify critical process variables. The most sophisticated implementations employ active learning approaches that suggest subsequent experiments based on accumulated knowledge, creating a self-optimizing experimental framework.
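As one example of dimensionality reduction, the sketch below applies principal component analysis (PCA) through a singular value decomposition to a synthetic dataset. The data is generated so that five measured variables are driven by a single hidden factor plus noise; both the loadings and the noise level are assumptions made purely for demonstration.

```python
import numpy as np


def pca(data, n_components=2):
    """Project data onto its leading principal components using an SVD."""
    centered = data - data.mean(axis=0)
    _, singular_values, components = np.linalg.svd(centered, full_matrices=False)
    scores = centered @ components[:n_components].T
    explained = singular_values**2 / np.sum(singular_values**2)
    return scores, explained[:n_components]


# Synthetic data: 200 experiments, 5 measured variables, one underlying factor.
rng = np.random.default_rng(0)
hidden_factor = rng.normal(size=(200, 1))
loadings = np.array([[1.0, 0.8, -0.6, 0.4, 0.2]])   # hypothetical sensitivities
data = hidden_factor @ loadings + 0.05 * rng.normal(size=(200, 5))

scores, explained = pca(data)
print(scores.shape, explained)
```

When the first component explains nearly all of the variance, as it does for this synthetic data, a two-dimensional plot of the scores is a faithful view of the full parameter space. Real HTE datasets are rarely this clean, and variables on different scales should be standardized first.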

Knowledge management systems complement data management by capturing contextual information, experimental rationale, and domain expertise. These systems preserve institutional knowledge and accelerate onboarding of new team members to HTE platforms. Effective knowledge management strategies typically incorporate electronic laboratory notebooks with semantic tagging capabilities, allowing researchers to quickly locate relevant historical experiments and methodologies.

The foundation of any robust HTE data management strategy begins with automated data capture systems that minimize manual intervention. These systems typically incorporate laboratory information management systems (LIMS) integrated with experimental equipment to ensure seamless data flow from instruments to centralized databases. Real-time data acquisition not only reduces transcription errors but also accelerates the feedback loop essential for iterative optimization processes.

Data standardization represents another critical component, establishing consistent formats, nomenclature, and metadata structures across all experimental platforms. Standardized data schemas facilitate integration between different experimental modules and enable cross-experimental analysis that might otherwise be impossible. Organizations leading in HTE implementation typically develop custom ontologies specific to their process domains, ensuring that data remains contextually relevant and machine-interpretable.

Storage infrastructure for HTE systems must balance accessibility with security considerations. Cloud-based solutions have gained popularity due to their scalability and collaborative features, though many pharmaceutical and chemical companies maintain hybrid architectures that keep sensitive data on-premises while leveraging cloud computing for analysis. Regardless of architecture, effective data management strategies incorporate robust backup protocols and disaster recovery mechanisms to protect invaluable experimental data.

Data quality assurance protocols constitute an essential element of HTE data management. Automated validation routines can flag anomalous results, identify instrument drift, and detect potential experimental failures in real-time. Statistical process control methods applied to calibration standards and control samples ensure that experimental variations stem from intentional parameter changes rather than system instability.
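A minimal sketch of the statistical process control idea, assuming a control sample is measured repeatedly and new readings are checked against limits of the baseline mean plus or minus three standard deviations. The function names and readings are hypothetical, and the sample standard deviation is used as a simplification; formal individuals charts often estimate sigma from the moving range instead.

```python
from statistics import mean, stdev

def control_limits(baseline, k=3.0):
    """Compute lower and upper control limits from baseline control readings."""
    center = mean(baseline)
    spread = stdev(baseline)  # sample standard deviation
    return center - k * spread, center + k * spread

def out_of_control(values, limits):
    """Return the indices of readings that fall outside the control limits."""
    low, high = limits
    return [i for i, v in enumerate(values) if not (low <= v <= high)]

# Hypothetical control-sample readings collected while the system was stable.
baseline = [100.0, 101.0, 99.0, 100.0, 100.0]
limits = control_limits(baseline)

# New control readings taken alongside later experimental runs.
flagged = out_of_control([100.2, 99.5, 104.0], limits)
print(limits, flagged)
```

A flagged control reading suggests that results from the same run should be reviewed before they are attributed to deliberate parameter changes.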

ROI Assessment of HTE Implementation

Implementing High-Throughput Experimentation (HTE) technology requires significant capital investment, so a comprehensive return on investment (ROI) assessment is critical for organizations considering adoption. Our analysis indicates that HTE implementation typically delivers a positive ROI within 12-24 months, depending on industry application and implementation scale. Initial investments range from $500,000 for basic laboratory setups to over $5 million for comprehensive industrial systems, covering equipment acquisition, facility modifications, software integration, and personnel training.
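The arithmetic behind such an assessment is simple enough to sketch. The example below assumes the standard definitions: payback period is the investment divided by the monthly net benefit, and ROI is net gain divided by investment. The $500,000 investment matches the lower bound quoted above, while the $35,000 monthly net benefit is a purely hypothetical figure chosen for illustration, not a reported result.

```python
def payback_months(investment, monthly_net_benefit):
    """Months needed for cumulative net benefit to equal the investment."""
    return investment / monthly_net_benefit

def roi(investment, monthly_net_benefit, months):
    """Return on investment over a horizon, as a fraction of the investment."""
    total_benefit = monthly_net_benefit * months
    return (total_benefit - investment) / investment

investment = 500_000          # basic laboratory setup (lower bound in the text)
monthly_net_benefit = 35_000  # hypothetical savings minus running costs

payback = payback_months(investment, monthly_net_benefit)
three_year_roi = roi(investment, monthly_net_benefit, months=36)

print(f"Payback: {payback:.1f} months")
print(f"Three-year ROI: {three_year_roi:.0%}")
```

With these assumed inputs, payback falls at roughly 14 months and the three-year ROI at about 152%. Real assessments would also need to account for ramp-up time, maintenance, and discounting.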

The quantifiable benefits driving positive ROI include accelerated development timelines, with process optimization studies reduced from months to weeks, a 70-85% reduction in development time. This acceleration translates directly into faster time-to-market for new products and processes, creating substantial competitive advantages. Organizations implementing HTE report average savings of 30-45% in materials consumption through miniaturized experimentation, while automating repetitive experimental tasks improves labor efficiency by 50-65%.

Quality improvements represent another significant ROI factor, with HTE enabling more comprehensive exploration of process parameters. This thoroughness typically results in 15-25% performance improvements in final processes compared to traditional sequential experimentation approaches. The expanded data collection capabilities of HTE systems also contribute to more robust processes with reduced variability, minimizing costly production deviations.

Risk mitigation benefits, though harder to quantify, contribute substantially to ROI. Because HTE can rapidly screen multiple process conditions, it identifies potential failure modes earlier in development and reduces costly late-stage failures. Companies report 40-60% fewer scale-up issues when HTE methodologies inform process development, representing significant avoided costs.

Long-term strategic value must also factor into ROI calculations. Organizations implementing HTE build institutional knowledge databases that accelerate future development efforts. The technology platform also enables exploration of previously impractical innovation pathways, potentially unlocking entirely new product categories or process paradigms with outsized returns.

Our case study analysis reveals that pharmaceutical companies implementing HTE for reaction optimization achieve average ROI of 300-400% within three years, while specialty chemical manufacturers report 200-250% returns over similar timeframes. These figures underscore HTE's transformative economic potential when strategically implemented with clear optimization objectives and appropriate organizational support structures.
