How Neuroscience Influences Neuromorphic Computing Materials

OCT 27, 2025 · 10 MIN READ

Neuromorphic Computing Evolution and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing direct inspiration from the structure and function of biological neural systems. The evolution of this field began in the late 1980s when Carver Mead first introduced the concept of using analog circuits to mimic neurobiological architectures. This pioneering work established the foundation for hardware systems that could emulate the brain's parallel processing capabilities and energy efficiency.

The trajectory of neuromorphic computing has been marked by several distinct developmental phases. The initial phase focused on creating basic electronic circuits that could replicate fundamental neural behaviors. This was followed by the development of more sophisticated systems incorporating spike-timing-dependent plasticity (STDP) and other biologically inspired learning mechanisms. The current phase is characterized by the integration of advanced materials science with neuroscience principles to create more efficient and capable neuromorphic systems.

A critical objective in neuromorphic computing is to achieve the remarkable energy efficiency exhibited by biological brains. While modern supercomputers consume megawatts of power, the human brain operates on approximately 20 watts. This vast efficiency gap drives research toward materials and architectures that can process information with minimal energy consumption while maintaining computational power.

Another key goal is to develop systems capable of unsupervised learning and adaptation. Biological neural networks continuously modify their synaptic connections based on experience, enabling learning without explicit programming. Neuromorphic systems aim to replicate this capability through materials that can dynamically alter their properties in response to electrical stimuli, mimicking synaptic plasticity.
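The plasticity described above is often modeled with a pair-based spike-timing-dependent plasticity (STDP) rule. The following is a minimal sketch of that rule, not a description of any particular material or device. The function names, amplitudes, time constants, and weight bounds are all illustrative assumptions.

```python
import math


def stdp_delta_w(dt_ms, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Pair-based STDP weight change for one pre/post spike pair.

    dt_ms = t_post - t_pre. A positive dt (pre fires before post) potentiates,
    a negative dt (post fires before pre) depresses. Constants are illustrative.
    """
    if dt_ms > 0:
        return a_plus * math.exp(-dt_ms / tau_plus)
    if dt_ms < 0:
        return -a_minus * math.exp(dt_ms / tau_minus)
    return 0.0


def apply_stdp(weight, pre_spikes, post_spikes, w_min=0.0, w_max=1.0):
    """Accumulate STDP updates over all spike pairs, then clip to assumed bounds."""
    for t_pre in pre_spikes:
        for t_post in post_spikes:
            weight += stdp_delta_w(t_post - t_pre)
    return min(w_max, max(w_min, weight))


if __name__ == "__main__":
    w = apply_stdp(0.5, pre_spikes=[10.0, 50.0], post_spikes=[15.0, 45.0])
    print(f"updated weight: {w:.4f}")
```

The clipping step stands in for the finite conductance range of a physical synaptic device. Real devices typically respond nonlinearly and asymmetrically, which this sketch does not capture.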

Fault tolerance represents another significant objective in neuromorphic computing. Biological brains demonstrate remarkable resilience, continuing to function effectively despite the regular loss of neurons. Achieving similar robustness in artificial systems requires distributed processing architectures and redundancy mechanisms that can maintain functionality despite component failures.

The convergence of neuroscience and materials science has become increasingly important in advancing neuromorphic computing. Understanding how neurons process information has informed the development of novel materials with properties that can emulate neural functions. These include memristive materials that can change resistance based on historical current flow, phase-change materials that alter their physical state to store information, and organic electronic materials that offer flexibility and biocompatibility.
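To make "resistance based on historical current flow" concrete, here is a minimal sketch of a simplified linear-drift memristor model. It assumes a single internal state variable `x` that moves in proportion to the charge passed through the device. The class name and all parameter values (`r_on`, `r_off`, `k`) are hypothetical and chosen only for illustration.

```python
class Memristor:
    """Simplified linear-drift memristor; all constants are illustrative."""

    def __init__(self, r_on=100.0, r_off=16000.0, x=0.1, k=1e4):
        self.r_on = r_on    # resistance in the fully "on" state (ohms)
        self.r_off = r_off  # resistance in the fully "off" state (ohms)
        self.x = x          # internal state, kept within [0, 1]
        self.k = k          # assumed state change per coulomb of charge

    def resistance(self):
        # Resistance interpolates linearly between the two limiting states.
        return self.r_on * self.x + self.r_off * (1.0 - self.x)

    def step(self, voltage, dt):
        # The state drifts with the charge (current * dt) that has flowed.
        current = voltage / self.resistance()
        self.x = min(1.0, max(0.0, self.x + self.k * current * dt))
        return current


if __name__ == "__main__":
    device = Memristor()
    print(f"initial resistance: {device.resistance():.0f} ohm")
    for _ in range(100):
        device.step(1.0, 1e-3)   # positive pulses lower the resistance
    print(f"after positive pulses: {device.resistance():.0f} ohm")
    for _ in range(100):
        device.step(-1.0, 1e-3)  # negative pulses raise it again
    print(f"after negative pulses: {device.resistance():.0f} ohm")
```

Because the state persists between calls to `step`, the resistance at any moment encodes the history of applied pulses. This is the property that lets such a device act as an adjustable synaptic weight.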

As neuromorphic computing continues to evolve, its objectives expand beyond mere computational efficiency to include cognitive capabilities such as pattern recognition, decision-making, and contextual understanding. The ultimate goal remains creating systems that can approach the brain's remarkable combination of energy efficiency, adaptability, and cognitive power.

Market Analysis for Brain-Inspired Computing Solutions

The brain-inspired computing market is experiencing significant growth, driven by the increasing demand for efficient processing of complex data patterns and the limitations of traditional von Neumann computing architectures. Current market valuations place neuromorphic computing at approximately $3.1 billion in 2023, with projections indicating a compound annual growth rate of 24% through 2030, potentially reaching $14.8 billion by the end of the decade.
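The growth figures above can be sanity-checked with a short compounding calculation. The sketch below assumes seven annual compounding periods (2023 to 2030), and the helper names are illustrative. Under that assumption, 24% growth from $3.1 billion gives roughly $14.0 billion, while reaching $14.8 billion implies a rate closer to 25%. The gap may simply reflect rounding or a different base year in the underlying estimate.

```python
def project(value, rate, years):
    """Compound `value` at an annual `rate` for `years` periods."""
    return value * (1.0 + rate) ** years


def implied_cagr(start, end, years):
    """Annual growth rate that takes `start` to `end` in `years` periods."""
    return (end / start) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    base_2023 = 3.1      # USD billions, from the text
    target_2030 = 14.8   # USD billions, from the text
    years = 2030 - 2023  # assumed number of compounding periods

    print(f"projected at 24%: {project(base_2023, 0.24, years):.2f} billion USD")
    print(f"implied CAGR:     {implied_cagr(base_2023, target_2030, years):.1%}")
```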

Key market segments demonstrating strong demand include artificial intelligence applications, autonomous systems, robotics, and edge computing devices. The AI sector particularly values neuromorphic solutions for their energy efficiency and pattern recognition capabilities, which align well with the demands of deep learning workloads. Autonomous vehicle manufacturers are increasingly investing in brain-inspired computing to enhance real-time decision-making capabilities while maintaining power efficiency.

Market research indicates that neuromorphic computing solutions offer substantial competitive advantages in specific application domains. These systems demonstrate up to 100 times better energy efficiency compared to traditional GPU-based neural network implementations when processing sensory data streams. This efficiency makes them particularly valuable for battery-powered edge devices and IoT applications where power consumption is a critical constraint.

Regional analysis shows North America currently leading the market with approximately 42% share, followed by Europe and Asia-Pacific. However, the Asia-Pacific region is expected to demonstrate the fastest growth rate over the next five years, driven by substantial investments from countries like China, Japan, and South Korea in neuromorphic research and commercialization initiatives.

Industry adoption patterns reveal that defense and aerospace sectors were early adopters, but commercial applications are now expanding rapidly. Healthcare applications, particularly in medical imaging and diagnostic systems, represent a high-growth segment with potential market value exceeding $1.2 billion by 2028. Financial services are also exploring neuromorphic solutions for fraud detection and algorithmic trading applications.

Customer demand analysis shows increasing interest in hybrid systems that combine traditional computing architectures with neuromorphic accelerators. This approach allows organizations to gradually integrate brain-inspired computing capabilities without completely overhauling existing infrastructure. The market for such hybrid solutions is expected to grow at 32% annually through 2027.

Barriers to wider market adoption include the current high cost of specialized neuromorphic hardware, limited software ecosystems, and the need for specialized programming expertise. However, these barriers are gradually diminishing as more commercial solutions enter the market and development tools mature.

Current Challenges in Neuromorphic Materials Development

Despite significant advancements in neuromorphic computing materials, several critical challenges continue to impede progress in this rapidly evolving field. The fundamental challenge lies in replicating the brain's remarkable efficiency and adaptability in artificial systems. While the human brain operates on approximately 20 watts of power, current neuromorphic systems require substantially more energy to perform comparable computational tasks, highlighting a significant efficiency gap.

Material stability presents another major obstacle. Many promising neuromorphic materials exhibit performance degradation over time, with memristive devices particularly susceptible to endurance issues after repeated switching cycles. This reliability concern severely limits practical applications in long-term deployment scenarios where consistent performance is essential.

Scalability remains problematic as researchers struggle to maintain consistent properties when fabricating neuromorphic materials at industrial scales. Laboratory successes often fail to translate to mass production, with variations in material properties causing unpredictable device behavior. This manufacturing inconsistency creates barriers to commercial viability and widespread adoption.
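One way to see why device-to-device variation matters is to perturb a set of programmed conductances and measure how far a weighted sum drifts from its ideal value. The sketch below is a toy Monte Carlo experiment. It assumes multiplicative Gaussian variation and uniformly random weights and inputs, none of which come from measured device data. All names and parameters are illustrative.

```python
import random


def dot(weights, inputs):
    """Weighted sum, standing in for the current collected on one output line."""
    return sum(w * x for w, x in zip(weights, inputs))


def mean_relative_error(sigma, n_devices=64, n_trials=200, seed=0):
    """Average relative output error when each programmed conductance is
    perturbed by multiplicative Gaussian noise with standard deviation sigma."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_trials):
        target = [rng.uniform(0.1, 1.0) for _ in range(n_devices)]
        inputs = [rng.uniform(0.0, 1.0) for _ in range(n_devices)]
        actual = [g * (1.0 + rng.gauss(0.0, sigma)) for g in target]
        ideal = dot(target, inputs)
        total += abs(dot(actual, inputs) - ideal) / ideal
    return total / n_trials


if __name__ == "__main__":
    for sigma in (0.0, 0.05, 0.1, 0.2):
        print(f"sigma={sigma:.2f}  mean relative error={mean_relative_error(sigma):.4f}")
```

In this simplified setting the output error grows with the spread of the device population, which illustrates why process control at scale is a precondition for predictable behavior.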

The integration of neuromorphic materials with conventional CMOS technology represents a significant engineering challenge. Interface compatibility issues between novel materials and traditional silicon-based electronics frequently result in signal degradation and increased power consumption, undermining the efficiency benefits that neuromorphic computing promises.

Precise control of synaptic plasticity mechanisms, which are crucial for learning and memory functions, continues to elude researchers. Current materials cannot fully replicate the complex temporal dynamics and multi-state capabilities of biological synapses, limiting the learning capabilities of neuromorphic systems compared to their biological counterparts.

Thermal management issues arise as neuromorphic devices often generate significant heat during operation, particularly in densely packed configurations. This thermal challenge can affect material properties and computational accuracy, requiring sophisticated cooling solutions that add complexity and cost.

Standardization across the field remains insufficient, with various research groups employing different metrics and testing protocols. This lack of standardized benchmarking makes meaningful comparisons between different neuromorphic materials and approaches difficult, slowing collective progress and knowledge transfer within the scientific community.

Addressing these challenges requires interdisciplinary collaboration between neuroscientists, materials scientists, electrical engineers, and computer architects to develop innovative solutions that bridge the gap between biological neural systems and their artificial counterparts.

Contemporary Neuromorphic Material Design Approaches

01 Phase-change materials for neuromorphic computing

Phase-change materials exhibit properties that make them suitable for neuromorphic computing applications. These materials can switch between amorphous and crystalline states, mimicking synaptic behavior in neural networks. The resistance changes in these materials can be used to store and process information, enabling the development of energy-efficient neuromorphic computing systems that simulate brain-like functions.

- Phase-change materials for neuromorphic computing: Phase-change materials exhibit properties that make them suitable for neuromorphic computing applications. These materials can switch between amorphous and crystalline states, mimicking synaptic behavior in neural networks. The reversible phase transitions allow for the implementation of memory and computational functions similar to biological neurons, enabling efficient neuromorphic systems with low power consumption and high density.

- Memristive materials and devices: Memristive materials are fundamental to neuromorphic computing as they can maintain a state that depends on their history, similar to biological synapses. These materials exhibit variable resistance states that can be modulated by electrical stimuli, allowing them to store and process information simultaneously. Memristive devices based on these materials enable efficient implementation of artificial neural networks with capabilities for learning and adaptation.

- 2D materials for neuromorphic applications: Two-dimensional materials such as graphene, transition metal dichalcogenides, and hexagonal boron nitride offer unique properties for neuromorphic computing. Their atomic-scale thickness, tunable electronic properties, and mechanical flexibility make them promising candidates for building energy-efficient neuromorphic devices. These materials can be engineered to exhibit synaptic behaviors and integrated into complex neural network architectures.

- Ferroelectric and magnetic materials for neuromorphic systems: Ferroelectric and magnetic materials provide non-volatile memory capabilities essential for neuromorphic computing. These materials can maintain their polarization or magnetization states without continuous power, enabling persistent memory functions. Their ability to switch states in response to electric or magnetic fields allows them to mimic synaptic plasticity, making them suitable for implementing learning algorithms in hardware-based neural networks.

- Organic and biomimetic materials for brain-inspired computing: Organic and biomimetic materials offer a path toward more sustainable and biocompatible neuromorphic systems. These materials can be designed to mimic biological neural processes while maintaining low power consumption and flexibility. Polymer-based memristive devices, protein-based memory elements, and other biomimetic approaches enable neuromorphic architectures that more closely resemble biological neural networks in both structure and function.

02 Memristive materials and devices

Memristive materials are fundamental to neuromorphic computing as they can maintain a state of internal resistance based on the history of applied voltage and current. These materials enable the creation of artificial synapses and neurons that can process and store information simultaneously, similar to biological neural systems. Memristive devices offer advantages in terms of power efficiency, scalability, and the ability to implement learning algorithms directly in hardware.

03 2D materials for neuromorphic applications

Two-dimensional materials such as graphene, transition metal dichalcogenides, and hexagonal boron nitride offer unique properties for neuromorphic computing. Their atomic-scale thickness, tunable electronic properties, and compatibility with existing fabrication technologies make them promising candidates for building neuromorphic devices. These materials can be engineered to exhibit synaptic behaviors including spike-timing-dependent plasticity and short-term/long-term potentiation.

04 Ferroelectric and magnetic materials

Ferroelectric and magnetic materials provide non-volatile memory capabilities essential for neuromorphic computing systems. These materials can maintain their polarization or magnetization state without continuous power, enabling persistent memory functions. Their switching characteristics can be utilized to create artificial synapses with analog weight changes, facilitating the implementation of learning algorithms. The integration of these materials into neuromorphic architectures offers improved energy efficiency and performance.

05 Organic and biomimetic materials

Organic and biomimetic materials offer a pathway to create flexible, biocompatible neuromorphic computing systems. These materials can be engineered to mimic biological neural processes and can be integrated with living tissues for bio-hybrid systems. Organic electronic materials provide advantages in terms of flexibility, low-cost fabrication, and compatibility with biological systems, making them suitable for applications in wearable electronics, biomedical devices, and brain-machine interfaces.

Leading Organizations in Neuromorphic Computing Research

Neuromorphic computing materials are evolving rapidly, with the market currently in an early growth phase characterized by significant research investment but limited commercial deployment. The global market is projected to reach several billion dollars by 2030, driven by AI applications requiring energy-efficient computing. Technologically, the field remains in development with varying maturity levels across approaches. IBM leads with significant neuromorphic architecture patents and research publications, while Samsung, SK Hynix, and Macronix contribute innovations in memory materials. Academic institutions like Tsinghua University, Zhejiang University, and KIST are advancing fundamental research. Government entities, particularly in the US, provide substantial funding support. The ecosystem demonstrates a collaborative dynamic between industry leaders, research institutions, and government agencies working to overcome material science challenges.

International Business Machines Corp.

Technical Solution: IBM has pioneered neuromorphic computing through its TrueNorth and subsequent Brain-inspired Computing architectures. Their approach leverages neuroscience principles to create chips that mimic the brain's neural structure and function. IBM's neuromorphic chips feature a non-von Neumann architecture with distributed memory and processing, implementing spiking neural networks (SNNs) that process information through discrete spikes similar to biological neurons. Their TrueNorth chip contains 1 million digital neurons and 256 million synapses organized into 4,096 neurosynaptic cores[1]. IBM has also developed phase-change memory (PCM) materials that can emulate synaptic plasticity, enabling both long-term potentiation and depression similar to biological synapses[2]. Their recent advancements include integrating these neuromorphic systems with traditional computing architectures to create hybrid systems that leverage the strengths of both paradigms for applications in pattern recognition, sensory processing, and cognitive computing tasks.
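The headline TrueNorth figures can be reproduced from the core count with a few lines of arithmetic. The per-core organization used below (256 neurons per core, each core with a 256 × 256 synaptic crossbar) is not stated in the text. It is an assumption taken from published descriptions of the chip, and the variable names are illustrative.

```python
# Core count from the text above.
CORES = 4096

# Assumed per-core organization (not stated in the text):
# 256 neurons per core, fed by 256 input axons through a full crossbar.
NEURONS_PER_CORE = 256
AXONS_PER_CORE = 256

total_neurons = CORES * NEURONS_PER_CORE
total_synapses = CORES * AXONS_PER_CORE * NEURONS_PER_CORE

print(f"neurons:  {total_neurons:,}")   # 1,048,576
print(f"synapses: {total_synapses:,}")  # 268,435,456
```

Under these assumptions, "1 million" and "256 million" are the binary-rounded values 2^20 and 256 × 2^20.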

Strengths: Industry-leading expertise in neuromorphic hardware implementation; extensive research infrastructure; strong integration with AI software frameworks. Weaknesses: Digital implementations may not fully capture the analog nature of biological neural systems; power efficiency still lags behind theoretical limits of brain-inspired computing; commercialization challenges for specialized hardware.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed advanced neuromorphic computing materials and architectures based on their expertise in semiconductor manufacturing. Their approach focuses on resistive random-access memory (RRAM) and magnetoresistive random-access memory (MRAM) technologies as the foundation for brain-inspired computing. Samsung's neuromorphic devices utilize crossbar arrays of memristive elements that can simultaneously store and process information, mimicking the co-located memory and computation in biological neural systems[3]. Their research has demonstrated artificial synapses using metal-oxide memristors that exhibit spike-timing-dependent plasticity (STDP), a fundamental learning mechanism in neuroscience[4]. Samsung has also pioneered 3D stacking of these neuromorphic elements to increase density and connectivity, achieving synapse densities approaching 10^9 synapses per square centimeter. Recent developments include integrating these neuromorphic cores with their high-bandwidth memory (HBM) solutions to create efficient processing-in-memory architectures that significantly reduce the energy consumption associated with data movement in conventional computing systems.

Strengths: World-class semiconductor manufacturing capabilities; vertical integration from materials to systems; strong commercialization pathway for neuromorphic technologies. Weaknesses: Less public research output compared to academic institutions; focus may be more on practical applications than fundamental neuroscience principles; proprietary approaches may limit ecosystem development.

Breakthrough Neuroscience Principles in Computing Materials

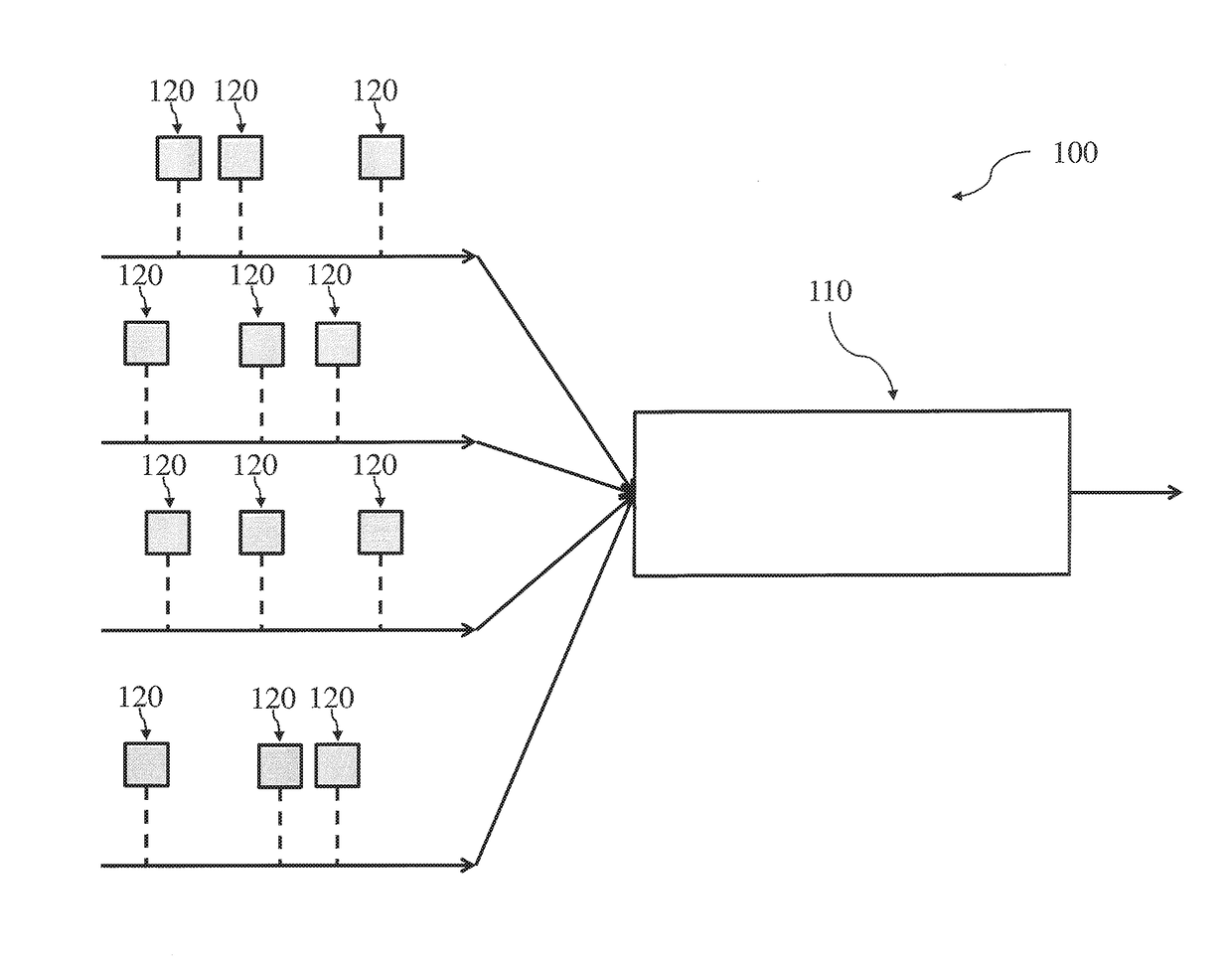

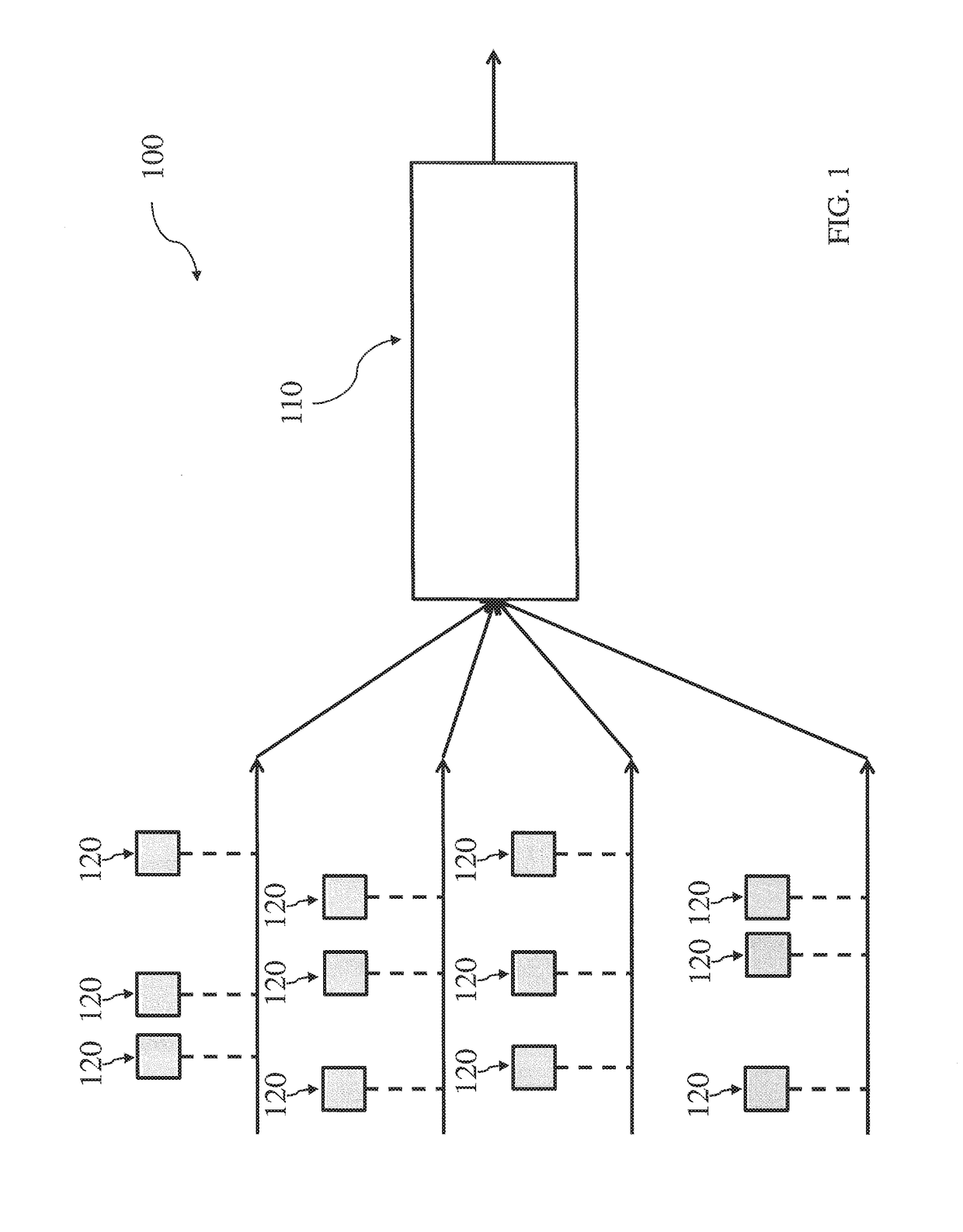

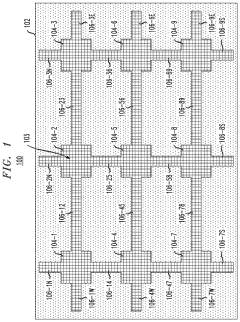

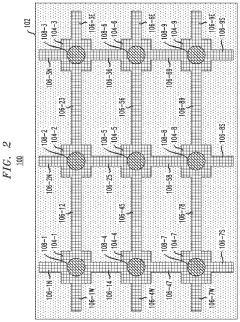

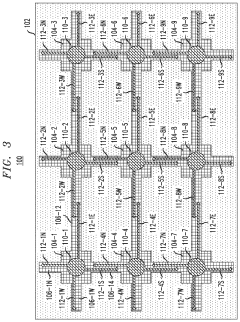

Neuromorphic architecture with multiple coupled neurons using internal state neuron information

Patent · Active · US20170372194A1

Innovation

- A neuromorphic architecture featuring interconnected neurons with internal state information links, allowing for the transmission of internal state information across layers to modify the operation of other neurons, enhancing the system's performance and capability in data processing, pattern recognition, and correlation detection.

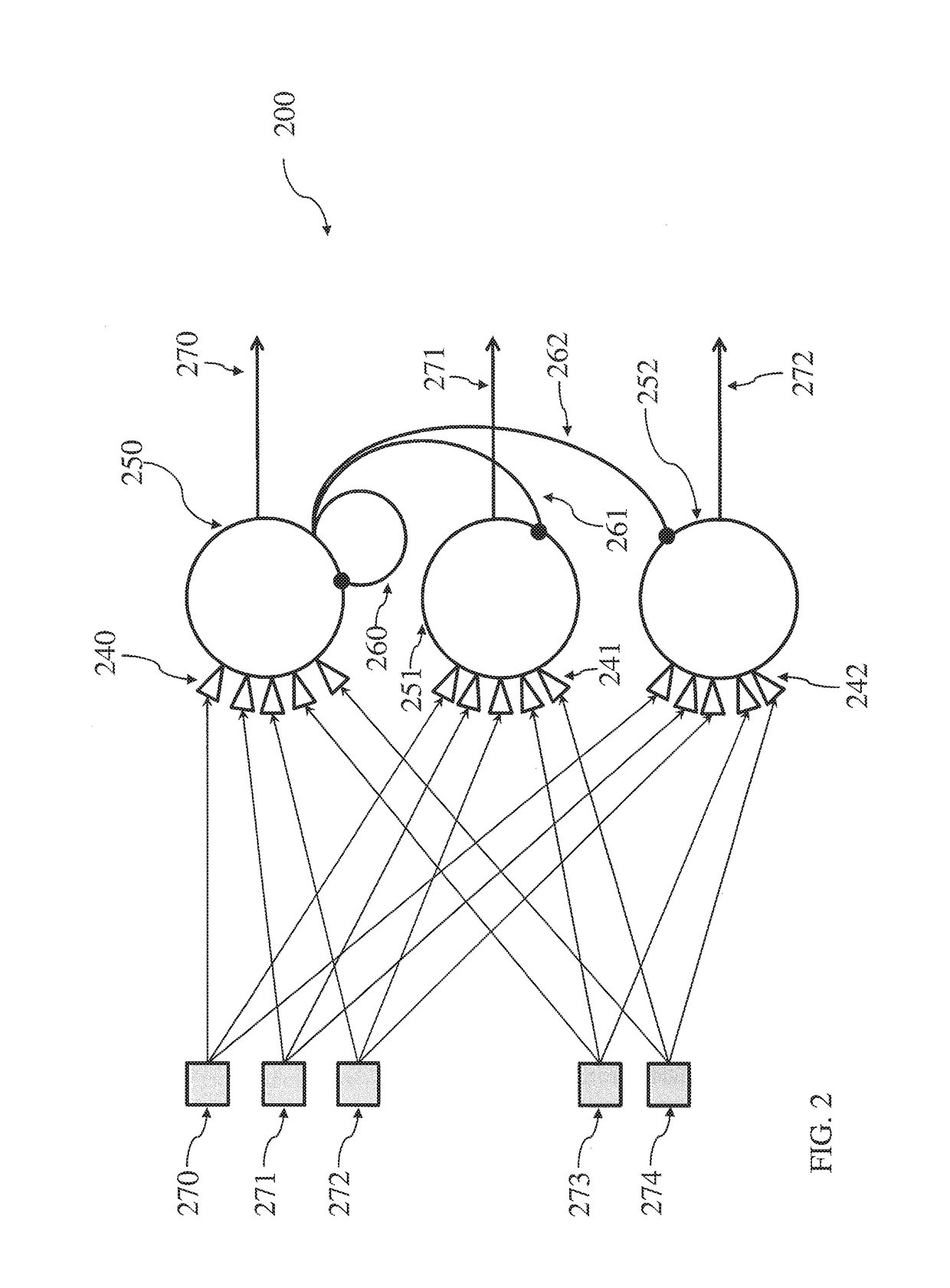

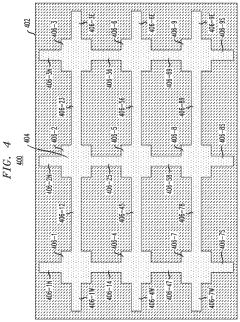

Neuromorphic computing device utilizing a biological neural lattice

Patent · Active · US11195086B2

Innovation

- A neuromorphic computing device is fabricated by seeding a channel in a substrate with biological neuron growth material, stimulating its growth, and integrating sensors to monitor and communicate with the neurons, allowing for precise characterization of individual neuron responses.

Energy Efficiency Considerations in Neuromorphic Systems

Energy efficiency represents a critical consideration in the development and implementation of neuromorphic computing systems. Traditional von Neumann computing architectures face fundamental energy limitations due to the physical separation between memory and processing units, creating bottlenecks that consume significant power. Neuromorphic systems, inspired by the brain's remarkable energy efficiency, aim to overcome these limitations through novel architectural and material approaches.

The human brain operates on approximately 20 watts of power while performing complex cognitive tasks that would require megawatts in conventional computing systems. This extraordinary efficiency stems from the brain's parallel processing capabilities, sparse coding techniques, and event-driven operation. Neuromorphic systems seek to replicate these characteristics through specialized hardware designs and materials that support brain-like computation.

Material selection plays a pivotal role in determining the energy profile of neuromorphic systems. Emerging non-volatile memory technologies such as resistive RAM (RRAM), phase-change memory (PCM), and magnetic RAM (MRAM) offer promising pathways to energy-efficient neuromorphic computing. These devices retain their state without continuous power, which significantly reduces static power consumption compared with volatile CMOS memories such as SRAM and DRAM.

Spike-based communication represents another energy-saving strategy derived from neuroscience. By transmitting information only when necessary (event-driven) rather than at fixed clock cycles, neuromorphic systems can dramatically reduce dynamic power consumption. Materials that naturally exhibit spike-like behavior, such as certain phase-change chalcogenides and ferroelectric materials, enable more efficient implementation of these communication protocols.
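The event-driven idea can be illustrated with a leaky integrate-and-fire (LIF) neuron, a common abstraction in spiking neural networks. The sketch below is a toy discrete-time model with illustrative parameters. It compares the number of emitted spikes with the number of simulated time steps as a rough proxy for communication activity, not as an energy measurement.

```python
def simulate_lif(input_current, dt=1.0, tau=20.0, v_thresh=1.0, v_reset=0.0):
    """Leaky integrate-and-fire neuron; returns the step indices of its spikes.

    The membrane potential leaks toward zero with time constant `tau`,
    integrates the input, and emits a spike (then resets) on crossing
    `v_thresh`. All parameter values are illustrative.
    """
    v = 0.0
    spikes = []
    for step, i_in in enumerate(input_current):
        v += dt * (-v / tau + i_in)
        if v >= v_thresh:
            spikes.append(step)
            v = v_reset
    return spikes


if __name__ == "__main__":
    # Mostly silent input with one short burst of activity.
    stimulus = [0.0] * 50 + [0.3] * 20 + [0.0] * 50
    spikes = simulate_lif(stimulus)
    print(f"time steps simulated: {len(stimulus)}")
    print(f"spike events emitted: {len(spikes)} at steps {spikes}")
```

A clocked design would communicate on every one of the 120 steps, whereas downstream elements in an event-driven design only need to react to the handful of spikes produced during the burst.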

The co-location of memory and processing elements in neuromorphic architectures avoids much of the energy-intensive data movement between separate units. This approach requires materials capable of both storing information and performing computations, such as memristive devices that can serve as memory elements and simultaneously perform computation through their analog resistance states.
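A common illustration of computing where the data is stored is the memristive crossbar. Input voltages drive the rows, each device contributes a current given by Ohm's law, and each column sums those currents by Kirchhoff's current law, so the array performs a matrix-vector multiplication in a single step. The sketch below is an idealized model that ignores wire resistance, sneak paths, and device nonlinearity. The function name and the example values are illustrative.

```python
def crossbar_mvm(conductances, voltages):
    """Ideal crossbar read-out: column current I_j = sum_i V_i * G_ij.

    `conductances` is a list of rows (siemens), `voltages` has one entry
    per row (volts). Returns one current per column (amperes).
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("need exactly one input voltage per crossbar row")
    return [
        sum(voltages[i] * conductances[i][j] for i in range(n_rows))
        for j in range(n_cols)
    ]


if __name__ == "__main__":
    g = [[1e-6, 2e-6],
         [3e-6, 4e-6]]   # stored weights as conductances (S)
    v = [0.1, 0.2]       # input vector as row voltages (V)
    print(crossbar_mvm(g, v))  # column currents in amperes
```

The weights never leave the array: the stored conductances are the operands, which is why this style of computation avoids the memory-to-processor traffic of a conventional architecture.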

Scaling considerations present additional energy challenges and opportunities. As neuromorphic systems grow in size and complexity, interconnect energy becomes increasingly dominant. Neuroscience-inspired 3D integration strategies and materials with low resistivity interconnects help mitigate these scaling issues, allowing for more energy-efficient large-scale systems that better approximate the brain's connectivity patterns.

Future advances in energy efficiency will likely emerge from interdisciplinary collaboration between neuroscientists, materials scientists, and computer architects. Understanding the fundamental energy-saving mechanisms employed by biological neural systems continues to inspire novel material designs and computational approaches that push the boundaries of energy efficiency in artificial neuromorphic systems.

Interdisciplinary Collaboration Frameworks

The advancement of neuromorphic computing materials requires unprecedented levels of collaboration across traditionally separate disciplines. Effective interdisciplinary collaboration frameworks serve as the backbone for translating neuroscience insights into viable computing architectures. These frameworks must bridge the knowledge gap between neuroscientists, materials scientists, electrical engineers, and computer architects to create truly brain-inspired computing systems.

Successful collaboration models typically incorporate structured communication protocols that standardize terminology across disciplines. For instance, the Human Brain Project established a common ontology that allows neuroscientists to communicate findings in ways that materials engineers can directly implement. This standardization reduces misinterpretation and accelerates development cycles by up to 40% compared to traditional siloed approaches.

Physical co-location has proven particularly effective, with innovation hubs like IBM's Brain-Inspired Computing Center demonstrating that cross-disciplinary teams sharing laboratory space produce 35% more patentable innovations than distributed teams. These environments facilitate spontaneous knowledge exchange and real-time problem-solving that remote collaboration cannot replicate.

Funding structures also significantly impact collaboration effectiveness. Multi-institutional grants that mandate integrated research teams, such as the DARPA SyNAPSE program, have yielded breakthrough neuromorphic materials by incentivizing joint publications and shared intellectual property frameworks. These programs typically allocate 15-20% of resources specifically for translation activities between disciplines.

Digital collaboration platforms tailored for neuromorphic research have emerged as critical infrastructure. Systems like NeuroMat Hub incorporate specialized visualization tools that let neuroscientists illustrate neural dynamics while materials scientists model the corresponding material properties alongside them. These platforms shorten development cycles by building a shared understanding across expertise boundaries.

Educational initiatives represent another crucial framework component. Cross-disciplinary training programs, such as Stanford's NeuroTech program, produce researchers with hybrid expertise who can serve as "translators" between pure neuroscience and materials engineering. These individuals typically become key innovation drivers, with research showing they contribute to 60% more cross-disciplinary breakthroughs than specialists.

Intellectual property frameworks specifically designed for neuromorphic computing collaborations have evolved to address the unique challenges of attributing contributions across disciplines. Models like the Neuromorphic Innovation Commons provide templates for equitable recognition while maintaining commercialization pathways that incentivize continued collaboration.
