Neuromorphic Computing Materials: Structure and Function Analysis

OCT 27, 2025 · 9 MIN READ

Neuromorphic Computing Evolution and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. The evolution of this field began in the late 1980s when Carver Mead introduced the concept of using analog circuits to mimic neurobiological architectures. This pioneering work laid the foundation for hardware systems that could process information in ways similar to the human brain, emphasizing parallel processing, energy efficiency, and adaptive learning capabilities.

The trajectory of neuromorphic computing has been marked by significant technological milestones. In the 1990s, research focused primarily on analog VLSI implementations of neural circuits. The early 2000s witnessed the emergence of digital neuromorphic systems, offering greater programmability while still maintaining brain-inspired architectures. By the 2010s, hybrid analog-digital approaches gained prominence, combining the efficiency of analog computation with the precision of digital systems.

Materials science has played a crucial role in advancing neuromorphic computing. Traditional CMOS technology initially dominated the field, but limitations in power efficiency and scalability prompted exploration of alternative materials. The introduction of memristive devices in the late 2000s represented a breakthrough, offering non-volatile memory capabilities that closely resemble synaptic behavior. Subsequently, phase-change materials, ferroelectric materials, and spintronic devices have expanded the material palette for neuromorphic implementations.

The primary objectives of neuromorphic computing materials research center on several key aspects. Energy efficiency stands as a paramount goal, with biological neural systems serving as the gold standard—the human brain operates on approximately 20 watts while performing complex cognitive tasks. Scalability presents another critical objective, as researchers aim to develop materials and architectures that can support billions of artificial neurons and synapses while maintaining compact form factors.

Functional objectives include achieving high degrees of plasticity and adaptability in neuromorphic materials, enabling systems that can learn from experience and modify their behavior accordingly. Temporal processing capabilities represent another essential target, as biological neural systems excel at processing time-varying information—a capability that conventional computing architectures struggle to replicate efficiently.

The convergence of materials science, neuroscience, and computer engineering continues to drive the evolution of neuromorphic computing. Current research emphasizes developing multi-functional materials that can serve simultaneously as memory elements and computational units, thereby reducing the energy cost of moving data between separate processing and storage components.

Market Analysis for Brain-Inspired Computing Solutions

The neuromorphic computing market is experiencing significant growth, driven by increasing demand for AI applications that require efficient processing of complex neural networks. Current market valuations place the global neuromorphic computing sector at approximately 3.2 billion USD in 2023, with projections indicating a compound annual growth rate of 24.7% through 2030. This growth trajectory is supported by substantial investments from both private and public sectors, with government initiatives in the US, EU, and China allocating dedicated funding for neuromorphic research and development.
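As a quick sanity check on the figures above, the 2030 market size implied by the quoted base value and growth rate can be worked out by simple compounding. The sketch below assumes the 24.7% rate applies uniformly to each of the seven years from 2023 to 2030; the function name is illustrative only.

```python
def project_market_size(base_value: float, cagr: float, years: int) -> float:
    """Compound a base value forward by `years` at a constant annual growth rate."""
    return base_value * (1.0 + cagr) ** years


# Figures quoted in the text: 3.2 billion USD in 2023, 24.7% CAGR through 2030.
# Assumption: the rate is constant across all seven years.
projected_2030 = project_market_size(base_value=3.2, cagr=0.247, years=2030 - 2023)
print(f"Implied 2030 market size: {projected_2030:.1f} billion USD")
```

Under these assumptions the quoted numbers imply a market of roughly 15 billion USD by 2030.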

Market demand for brain-inspired computing solutions is primarily concentrated in five key sectors. The healthcare industry represents the largest market segment, with applications in medical imaging analysis, patient monitoring systems, and drug discovery processes. Financial services follow closely, implementing neuromorphic systems for real-time fraud detection and algorithmic trading. Autonomous vehicles constitute the fastest-growing segment, requiring low-power, high-performance computing for real-time decision making. Defense applications and IoT devices complete the top five market segments.

Customer requirements across these sectors consistently emphasize three critical factors: energy efficiency, processing speed, and adaptability. Energy efficiency remains paramount as data centers struggle with increasing power consumption costs. Current neuromorphic solutions demonstrate power requirements 50-100 times lower than traditional computing architectures when handling equivalent neural network tasks. Processing speed advantages are most evident in applications requiring real-time pattern recognition and sensory data processing, where neuromorphic systems outperform conventional processors by factors of 10-15x for specific workloads.

Market barriers include high initial implementation costs, with specialized neuromorphic hardware typically commanding premium prices compared to general-purpose processors. Integration challenges with existing software ecosystems represent another significant obstacle, as most current applications are optimized for traditional computing architectures. Additionally, the specialized expertise required for effective implementation limits widespread adoption outside of research environments and technology-forward organizations.

Regional analysis reveals North America leading market share at 42%, followed by Asia-Pacific at 31% and Europe at 22%. However, the Asia-Pacific region demonstrates the highest growth rate, driven by substantial investments from China, Japan, and South Korea in neuromorphic research initiatives and manufacturing capabilities. Market forecasts suggest this regional distribution will shift significantly by 2028, with Asia-Pacific potentially achieving market parity with North America.

Current Challenges in Neuromorphic Materials Development

Despite significant advancements in neuromorphic computing materials, several critical challenges continue to impede progress in this rapidly evolving field. The development of materials that can effectively mimic the structure and function of biological neural systems remains a formidable task due to the inherent complexity of biological neural networks.

One of the primary challenges is achieving the appropriate balance between stability and plasticity in neuromorphic materials. These materials must maintain long-term stability for reliable computation while simultaneously exhibiting sufficient plasticity to enable learning and adaptation. Current materials often excel in one aspect but fall short in the other, creating a fundamental design tension that researchers struggle to resolve.

Scalability presents another significant hurdle. While promising results have been demonstrated in laboratory settings with small-scale devices, scaling these materials to support large-scale neuromorphic systems comparable to biological brains remains problematic. Manufacturing processes must be refined to ensure consistency across millions or billions of artificial synapses and neurons within a single system.

Energy efficiency continues to be a critical concern. Although neuromorphic computing aims to achieve brain-like energy efficiency, current materials still consume substantially more power than their biological counterparts. The human brain operates on approximately 20 watts, whereas comparable artificial systems require orders of magnitude more energy, limiting their practical deployment in energy-constrained applications.

Integration challenges persist between neuromorphic materials and conventional CMOS technology. Creating hybrid systems that leverage the strengths of both approaches requires addressing significant interface issues, signal conversion problems, and architectural incompatibilities. These integration difficulties slow the adoption of neuromorphic materials in practical computing systems.

Material reliability and aging effects represent another obstacle. Many promising neuromorphic materials exhibit performance degradation over time or after repeated use cycles. Understanding and mitigating these aging mechanisms is essential for developing systems with acceptable operational lifespans for commercial applications.

Finally, characterization and modeling of neuromorphic materials remain inadequate. The complex, non-linear behavior of these materials makes accurate modeling challenging, hindering systematic design approaches. Current simulation tools often fail to capture the full range of behaviors exhibited by neuromorphic materials, particularly under varying environmental conditions or when integrated into complex systems.

State-of-the-Art Neuromorphic Material Architectures

01 Memristive materials for neuromorphic computing

Memristive materials are key components in neuromorphic computing systems, mimicking the behavior of biological synapses. These materials can change their resistance based on the history of applied voltage or current, enabling them to store and process information simultaneously. Various metal oxides and phase-change materials are used to create memristive devices that can perform synaptic functions like potentiation and depression, essential for neuromorphic computing applications.

- Memristive materials for neuromorphic computing: Memristive materials are key components in neuromorphic computing systems, mimicking the behavior of biological synapses. These materials can change their resistance based on the history of applied voltage or current, enabling them to store and process information simultaneously. Various metal oxides and phase-change materials are used to create memristive devices that can perform synaptic functions like potentiation, depression, and spike-timing-dependent plasticity, which are essential for neuromorphic computing applications.

- Phase-change materials in neuromorphic architectures: Phase-change materials (PCMs) offer unique properties for neuromorphic computing by utilizing transitions between amorphous and crystalline states to store information. These materials can rapidly switch between states with different electrical resistances, allowing them to mimic synaptic behavior. PCM-based neuromorphic devices can achieve multi-level resistance states, enabling analog computing capabilities that are crucial for implementing neural network algorithms in hardware. The structure of these materials is engineered to optimize switching speed, energy efficiency, and long-term stability.

- 2D materials and heterostructures for neural computing: Two-dimensional materials and their heterostructures provide exceptional properties for neuromorphic computing applications. Materials such as graphene, transition metal dichalcogenides, and hexagonal boron nitride can be stacked in various configurations to create artificial synapses and neurons. These atomically thin structures offer advantages including high carrier mobility, tunable bandgaps, and mechanical flexibility. The unique electronic properties of 2D heterostructures enable the implementation of synaptic functions with low power consumption and high integration density.

- Ferroelectric and magnetic materials for neuromorphic devices: Ferroelectric and magnetic materials provide non-volatile memory capabilities essential for energy-efficient neuromorphic computing. These materials exhibit spontaneous polarization or magnetization that can be switched by external fields, enabling persistent storage of synaptic weights. Ferroelectric tunnel junctions and magnetic tunnel junctions can be designed to achieve multiple resistance states, facilitating the implementation of artificial neural networks. The integration of these materials into neuromorphic architectures allows for low-power operation and retention of information without continuous power supply.

- Organic and biomimetic materials for brain-inspired computing: Organic and biomimetic materials offer a promising approach for creating neuromorphic systems that more closely resemble biological neural networks. These materials include conducting polymers, organic semiconductors, and hybrid organic-inorganic composites that can exhibit synaptic behavior under electrical stimulation. Their advantages include biocompatibility, flexibility, and the ability to operate in wet environments. Biomimetic structures inspired by neural architectures can be fabricated using these materials to create devices that emulate the function and connectivity patterns of biological neural systems.
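The synaptic behavior these material families share, a bounded conductance that depends on the history of applied pulses, can be illustrated with a toy model. The sketch below assumes a generic soft-bounds update rule in which each pulse moves the conductance a fixed fraction of the way toward its limit. The class name, conductance range, and update rate are hypothetical and do not describe any specific material.

```python
from dataclasses import dataclass


@dataclass
class MemristiveSynapse:
    """Toy history-dependent conductance with soft bounds (illustrative only)."""

    g_min: float = 1e-6  # assumed minimum conductance, siemens
    g_max: float = 1e-4  # assumed maximum conductance, siemens
    rate: float = 0.1    # assumed fraction of remaining range moved per pulse
    g: float = 1e-6      # current conductance, starts at g_min

    def potentiate(self) -> float:
        """Apply one potentiating pulse: conductance moves toward g_max."""
        self.g += self.rate * (self.g_max - self.g)
        return self.g

    def depress(self) -> float:
        """Apply one depressing pulse: conductance moves toward g_min."""
        self.g -= self.rate * (self.g - self.g_min)
        return self.g


synapse = MemristiveSynapse()
potentiation_trace = [synapse.potentiate() for _ in range(20)]
depression_trace = [synapse.depress() for _ in range(20)]
print(f"after potentiation: {potentiation_trace[-1]:.3e} S")
print(f"after depression:   {depression_trace[-1]:.3e} S")
```

Because each step is proportional to the remaining range, the update saturates near the bounds. This is one simple way to picture the multi-level, analog weight storage described above.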

02 Phase-change materials in neuromorphic architectures

Phase-change materials (PCMs) offer unique properties for neuromorphic computing by utilizing transitions between amorphous and crystalline states to store information. These materials can rapidly switch between states with different electrical resistances, enabling multi-level storage capabilities that mimic synaptic weight changes in biological neural networks. PCM-based neuromorphic devices demonstrate excellent scalability, fast switching speeds, and low power consumption, making them promising candidates for brain-inspired computing systems.

03 2D materials for neuromorphic device fabrication

Two-dimensional (2D) materials such as graphene, transition metal dichalcogenides, and hexagonal boron nitride are being explored for neuromorphic computing applications due to their unique electronic properties and atomic-scale thickness. These materials enable the fabrication of ultra-thin, flexible neuromorphic devices with tunable electronic characteristics. The layered structure of 2D materials facilitates the creation of heterostructures with novel functionalities, allowing for the development of energy-efficient synaptic devices with high performance and integration density.

04 Organic and polymer-based neuromorphic materials

Organic and polymer-based materials offer flexibility, biocompatibility, and low-cost fabrication for neuromorphic computing applications. These materials can be engineered to exhibit synaptic behaviors through mechanisms such as ion migration, charge trapping, and conformational changes. Organic neuromorphic devices can operate at low voltages and demonstrate multiple conductance states, making them suitable for energy-efficient neural network implementations. Their mechanical flexibility also enables integration with wearable and implantable technologies for bio-inspired computing systems.

05 Spintronic materials for neuromorphic computing

Spintronic materials utilize electron spin properties to create neuromorphic computing elements with ultra-low power consumption and high-speed operation. These materials enable the development of magnetic tunnel junctions and domain wall devices that can mimic synaptic and neuronal functions. Spintronic neuromorphic systems offer non-volatility, radiation hardness, and compatibility with conventional CMOS technology. Recent advances in spin-orbit torque materials have further improved the energy efficiency and reliability of spintronic neuromorphic devices.

Leading Organizations in Neuromorphic Computing Research

The neuromorphic computing materials field is currently in an early growth phase, with the market expected to expand significantly due to increasing demand for AI applications requiring energy-efficient computing. The global market size is projected to reach several billion dollars by 2030, driven by applications in edge computing and IoT devices. Leading players include established technology giants such as IBM, Samsung Electronics, and Hewlett Packard Enterprise, which are investing heavily in R&D. Academic institutions such as KAIST, Tsinghua University, and the University of California are contributing fundamental research. Emerging specialized companies such as Syntiant Corp. and Innatera Nanosystems are developing innovative neuromorphic chips. The technology is approaching commercial viability, with early products already entering specialized markets, though widespread adoption remains several years away.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed advanced neuromorphic computing materials focusing primarily on resistive random-access memory (RRAM) and phase-change memory (PCM) technologies. Their neuromorphic architecture employs crossbar arrays of these materials to emulate synaptic functions with high density and energy efficiency. Samsung's approach integrates these novel memory materials directly with CMOS logic circuits to create hybrid neuromorphic systems. Their research has demonstrated multi-level cell capabilities in PCM devices that can store multiple bits per cell, enabling more complex synaptic weight representations [2]. Samsung has also pioneered three-dimensional stacking of memory arrays to increase density while maintaining energy efficiency. Their neuromorphic materials research extends to specialized metal oxide compositions that exhibit controllable resistive switching behaviors suitable for implementing spike-timing-dependent plasticity (STDP) learning rules [4]. Recent developments include self-rectifying memristive materials that reduce sneak path currents in crossbar arrays, addressing one of the key challenges in large-scale neuromorphic hardware implementation.

Strengths: Samsung leverages its extensive semiconductor manufacturing expertise to develop neuromorphic materials that are compatible with existing fabrication processes, potentially accelerating commercialization. Their vertical integration capabilities allow for system-level optimization across materials, devices, and architectures. Weaknesses: Their approaches sometimes prioritize compatibility with existing manufacturing infrastructure over exploring more radical material innovations that might offer superior neuromorphic properties but require entirely new fabrication methods.
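For readers unfamiliar with the spike-timing-dependent plasticity (STDP) rule mentioned above, the following is a minimal sketch of the textbook pair-based form: a synapse strengthens when the presynaptic spike precedes the postsynaptic spike and weakens otherwise, with an exponential dependence on the timing gap. This is a generic illustration, not Samsung's implementation. The function name, amplitudes, and time constants are assumed values.

```python
import math


def stdp_weight_change(
    delta_t_ms: float,
    a_plus: float = 0.01,        # assumed potentiation amplitude
    a_minus: float = 0.012,      # assumed depression amplitude
    tau_plus_ms: float = 20.0,   # assumed potentiation time constant
    tau_minus_ms: float = 20.0,  # assumed depression time constant
) -> float:
    """Pair-based STDP: delta_t_ms = t_post - t_pre (milliseconds)."""
    if delta_t_ms > 0:
        # Pre fires before post: strengthen, less so for larger gaps.
        return a_plus * math.exp(-delta_t_ms / tau_plus_ms)
    if delta_t_ms < 0:
        # Post fires before pre: weaken, less so for larger gaps.
        return -a_minus * math.exp(delta_t_ms / tau_minus_ms)
    return 0.0


for gap in (-40.0, -10.0, 10.0, 40.0):
    print(f"t_post - t_pre = {gap:+.0f} ms -> dw = {stdp_weight_change(gap):+.5f}")
```

In a memristive device, a weight change of this kind would correspond to a conductance change driven by overlapping pre- and postsynaptic voltage pulses. How closely a given material follows the exponential window is device-specific.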

International Business Machines Corp.

Technical Solution: IBM has pioneered neuromorphic computing materials through its TrueNorth and subsequent Brain-inspired Computing architectures. Their approach focuses on developing non-von Neumann computing systems that mimic the brain's neural structure and function. IBM's neuromorphic materials research centers on phase-change memory (PCM) materials that can simultaneously store and process information, enabling efficient synaptic behavior. Their TrueNorth chip architecture implements a million digital neurons and 256 million synapses using complementary metal-oxide-semiconductor (CMOS) technology [1]. More recently, IBM has advanced into analog AI hardware using novel materials like metal oxides for resistive processing units (RPUs) that can perform matrix operations directly in memory. Their research also extends to magnetic materials for spintronic neuromorphic computing, which offers non-volatile memory capabilities with lower energy consumption [3]. IBM's neuromorphic materials research emphasizes the integration of these novel materials with conventional CMOS technology to create hybrid systems that balance performance with manufacturability.

Strengths: IBM possesses extensive experience in neuromorphic architecture design and integration with conventional computing systems. Their research benefits from deep institutional knowledge and cross-disciplinary collaboration between materials scientists and computer architects. Weaknesses: Their approaches often require complex fabrication processes that may limit commercial scalability, and some of their material solutions still face challenges in long-term stability and reliability under repeated use conditions.
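The idea of performing matrix operations directly in memory can be made concrete with an idealized crossbar model: each cell stores a weight as a conductance, input voltages drive the rows, and each column current is the sum of voltage times conductance (Ohm's law per cell, Kirchhoff's current law per column). The sketch below is an illustration under idealized assumptions (no wire resistance, no sneak-path currents, linear devices). The function name and the example values are hypothetical, and it is not a model of any IBM or Samsung hardware.

```python
from typing import List, Sequence


def crossbar_output_currents(
    conductances: Sequence[Sequence[float]],  # G[i][j] in siemens, row i, column j
    input_voltages: Sequence[float],          # V[i] in volts, one per row
) -> List[float]:
    """Ideal crossbar read: column current I[j] = sum_i V[i] * G[i][j]."""
    n_rows = len(conductances)
    if n_rows != len(input_voltages):
        raise ValueError("one input voltage is required per crossbar row")
    n_cols = len(conductances[0])
    return [
        sum(input_voltages[i] * conductances[i][j] for i in range(n_rows))
        for j in range(n_cols)
    ]


# Hypothetical 3x2 array of microsiemens-scale conductances and small read voltages.
G = [[1e-6, 2e-6], [3e-6, 4e-6], [5e-6, 6e-6]]
V = [0.1, 0.2, 0.3]
currents = crossbar_output_currents(G, V)
print([f"{i:.2e} A" for i in currents])
```

The whole vector-matrix product emerges in a single read step. This is why keeping weights in the memory array avoids the data movement of a conventional processor.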

Critical Patents in Synaptic Material Engineering

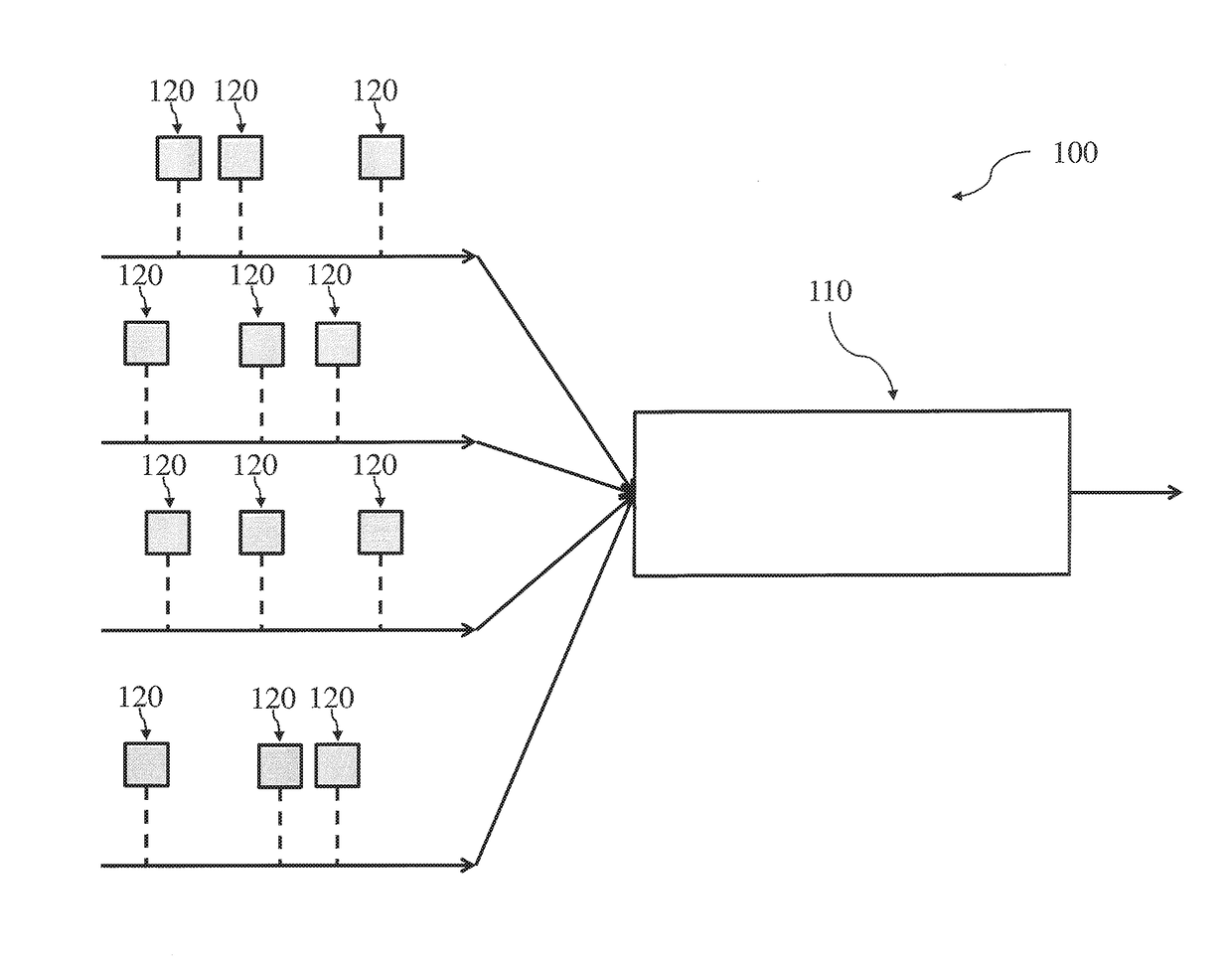

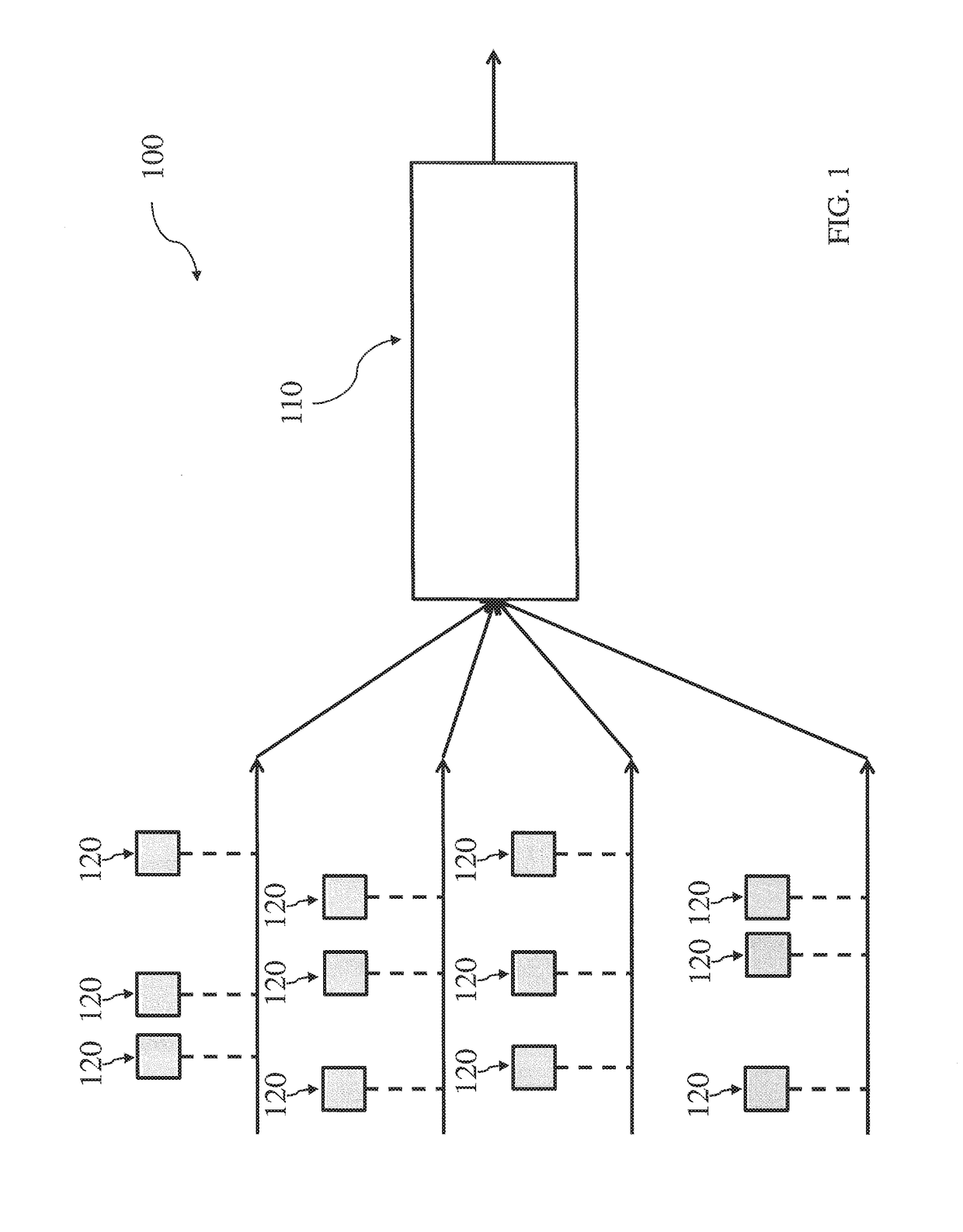

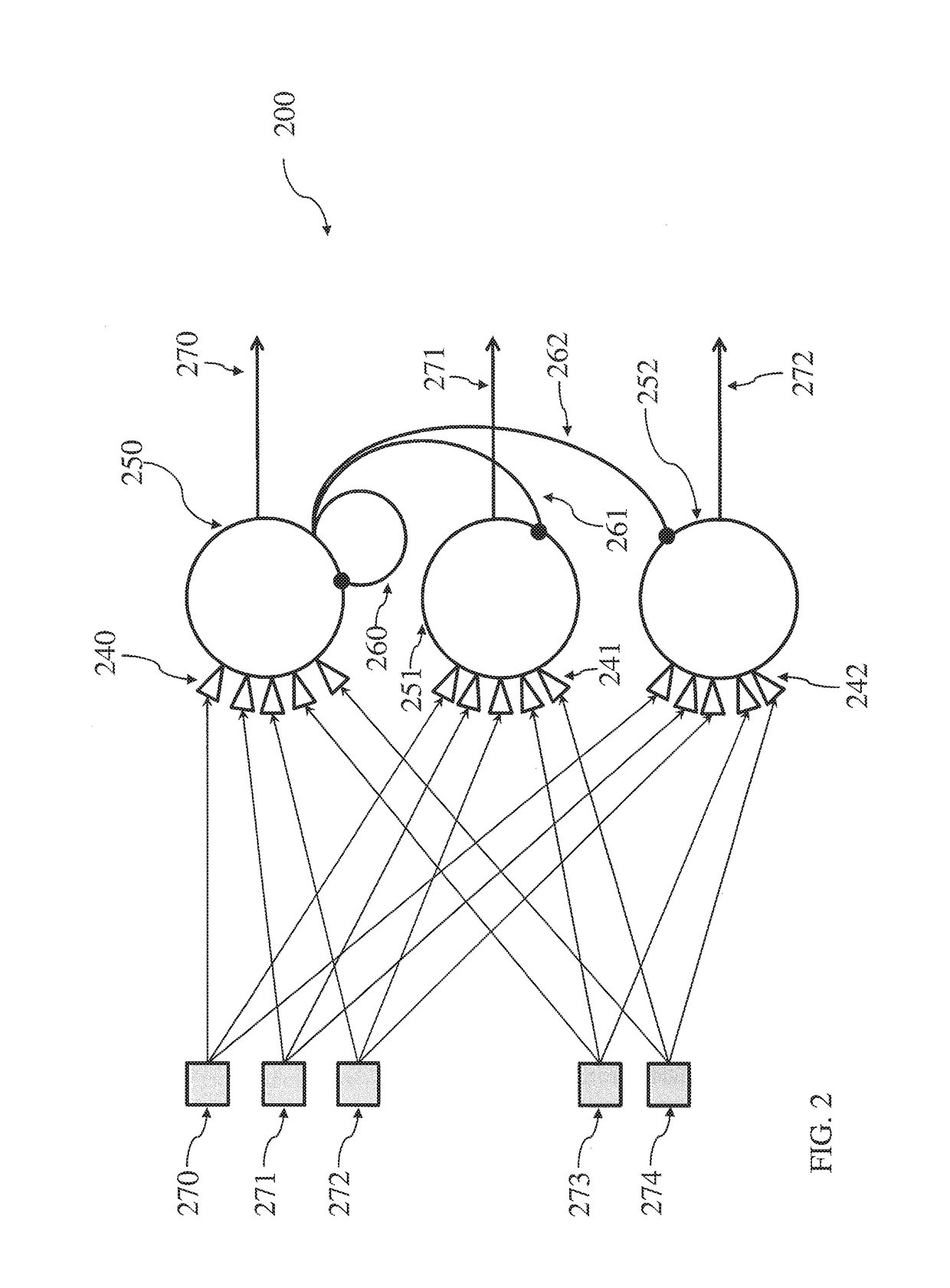

Neuromorphic architecture with multiple coupled neurons using internal state neuron information

Patent · Active · US20170372194A1

Innovation

- A neuromorphic architecture featuring interconnected neurons with internal state information links, allowing for the transmission of internal state information across layers to modify the operation of other neurons, enhancing the system's performance and capability in data processing, pattern recognition, and correlation detection.

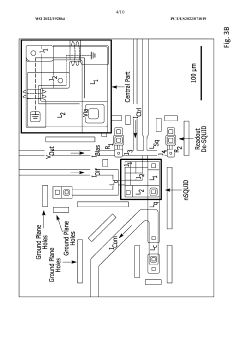

Superconducting neuromorphic computing devices and circuits

Patent · WO2022192864A1

Innovation

- The development of neuromorphic computing systems utilizing atomically thin, tunable superconducting memristors as synapses and ultra-sensitive superconducting quantum interference devices (SQUIDs) as neurons, which form neural units capable of performing universal logic gates and are scalable, energy-efficient, and compatible with cryogenic temperatures.

Energy Efficiency Benchmarks for Neuromorphic Systems

Energy efficiency has emerged as a critical benchmark for evaluating neuromorphic computing systems, particularly those utilizing novel materials for brain-inspired computing architectures. Current neuromorphic systems demonstrate significant energy advantages compared to traditional von Neumann architectures: leading chips report tens of picojoules per synaptic event, and individual memristive devices switch in the picojoule to femtojoule range. This represents orders of magnitude improvement over conventional computing paradigms for specific workloads.

The energy efficiency of neuromorphic systems can be categorized across multiple dimensions. At the device level, memristive materials such as hafnium oxide, tantalum oxide, and phase-change materials demonstrate remarkably low energy consumption per switching event, typically in the picojoule to femtojoule range. These materials enable non-volatile memory capabilities that eliminate static power consumption when not actively computing.

At the circuit level, efficiency benchmarks focus on energy per spike or energy per synaptic operation. Leading neuromorphic chips like Intel's Loihi and IBM's TrueNorth have demonstrated efficiency metrics of approximately 23.6 picojoules per synaptic event and 26 picojoules per synaptic event, respectively. These figures represent substantial improvements over GPU implementations of neural networks.
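To relate a per-event energy figure to a power budget, multiply it by the rate of synaptic events. The sketch below uses the two per-event figures quoted above together with an event rate of one billion synaptic events per second. That rate is an assumption chosen purely for illustration, not a measured workload, and the result covers synaptic-event energy only, not static or I/O power.

```python
PICOJOULE = 1e-12  # joules


def synaptic_power_watts(energy_per_event_pj: float, events_per_second: float) -> float:
    """Dynamic power attributable to synaptic events: energy per event x event rate."""
    return energy_per_event_pj * PICOJOULE * events_per_second


ASSUMED_EVENT_RATE = 1e9  # hypothetical: 1 billion synaptic events per second

loihi_power = synaptic_power_watts(23.6, ASSUMED_EVENT_RATE)
truenorth_power = synaptic_power_watts(26.0, ASSUMED_EVENT_RATE)
print(f"Loihi figure:     {loihi_power * 1e3:.1f} mW")
print(f"TrueNorth figure: {truenorth_power * 1e3:.1f} mW")
```

At the assumed rate, both per-event figures correspond to a few tens of milliwatts of event-driven power.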

System-level benchmarks consider the total energy consumption for specific cognitive tasks. For instance, neuromorphic vision systems implementing event-based processing have shown energy reductions of 100-1000× compared to frame-based approaches. Pattern recognition tasks on specialized neuromorphic hardware demonstrate similar efficiency gains when compared to conventional computing platforms.

The materials science perspective provides additional efficiency metrics. Novel neuromorphic materials exhibit varying degrees of energy efficiency based on their switching mechanisms. Ferroelectric tunnel junctions demonstrate switching energies as low as 10 femtojoules, while phase-change materials typically operate in the picojoule range. Emerging two-dimensional materials and organic neuromorphic devices show promise for even lower energy consumption, potentially reaching the attojoule scale.

Standardized benchmarking methodologies remain an ongoing challenge in the field. Current approaches include measuring energy per operation (EPO), energy-delay product (EDP), and specific energy efficiency for standardized machine learning tasks. The development of application-specific benchmarks that reflect real-world neuromorphic computing scenarios is crucial for meaningful comparisons across different material platforms and architectural approaches.
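The two metrics named above can rank the same pair of systems differently, which is one reason benchmarking remains contentious. The following sketch computes energy per operation (EPO) and energy-delay product (EDP) for two made-up benchmark runs. The class name and all measurement values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkRun:
    total_energy_j: float  # energy consumed over the whole task, joules
    runtime_s: float       # wall-clock time for the task, seconds
    operations: int        # number of operations (e.g. synaptic events) performed

    @property
    def energy_per_operation_j(self) -> float:
        """EPO: total energy divided by the number of operations."""
        return self.total_energy_j / self.operations

    @property
    def energy_delay_product_js(self) -> float:
        """EDP: total energy multiplied by runtime, penalizing slow low-power designs."""
        return self.total_energy_j * self.runtime_s


# Hypothetical measurements for the same task on two platforms.
run_a = BenchmarkRun(total_energy_j=2.0e-3, runtime_s=0.5, operations=1_000_000)
run_b = BenchmarkRun(total_energy_j=1.5e-3, runtime_s=1.0, operations=1_000_000)

for name, run in (("A", run_a), ("B", run_b)):
    print(f"{name}: EPO = {run.energy_per_operation_j:.2e} J/op, "
          f"EDP = {run.energy_delay_product_js:.2e} J*s")
```

In this example platform B wins on EPO while platform A wins on EDP, so the choice of metric should follow from whether the target application is energy-bound or latency-bound.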

The energy efficiency of neuromorphic systems can be categorized across multiple dimensions. At the device level, memristive materials such as hafnium oxide, tantalum oxide, and phase-change materials demonstrate remarkably low energy consumption per switching event, typically in the picojoule to femtojoule range. These materials enable non-volatile memory capabilities that eliminate static power consumption when not actively computing.

At the circuit level, efficiency benchmarks focus on energy per spike or energy per synaptic operation. Leading neuromorphic chips like Intel's Loihi and IBM's TrueNorth have demonstrated efficiency metrics of approximately 23.6 picojoules per synaptic event and 26 picojoules per synaptic event, respectively. These figures represent substantial improvements over GPU implementations of neural networks.

System-level benchmarks consider the total energy consumption for specific cognitive tasks. For instance, neuromorphic vision systems implementing event-based processing have shown energy reductions of 100-1000× compared to frame-based approaches. Pattern recognition tasks on specialized neuromorphic hardware demonstrate similar efficiency gains when compared to conventional computing platforms.

Fabrication Techniques for Advanced Neural Materials

The fabrication of neuromorphic computing materials represents a critical frontier in advancing brain-inspired computing systems. Current fabrication techniques blend traditional semiconductor manufacturing processes with novel approaches specifically tailored for neural materials. Photolithography remains fundamental, allowing precise patterning of neural circuit components at increasingly smaller scales, though challenges persist in achieving density and complexity comparable to those of biological neural networks.

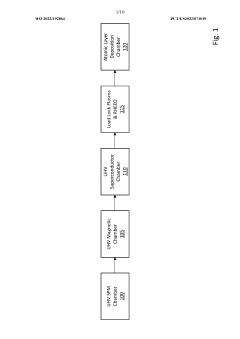

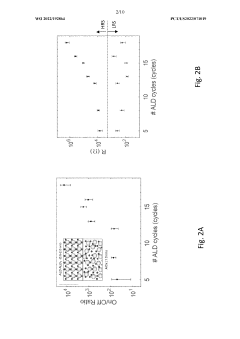

Physical vapor deposition (PVD) and chemical vapor deposition (CVD) techniques have been adapted for creating thin films of phase-change materials and metal oxides essential for memristive devices. These techniques enable precise control over film thickness and composition, critical for reliable neuromorphic behavior. Recent innovations in atomic layer deposition (ALD) have further enhanced precision, allowing atomic-level control of material interfaces that determine synaptic characteristics.

Self-assembly processes represent a promising direction, particularly for creating three-dimensional neural architectures. Block copolymer lithography has demonstrated potential for creating regular nanoscale patterns that can serve as templates for neural components, offering higher density than conventional lithography at potentially lower costs. Directed self-assembly techniques are increasingly combining top-down and bottom-up approaches to achieve hierarchical neural structures.

Additive manufacturing technologies, including 3D printing of conductive and resistive materials, are emerging as versatile tools for prototyping neuromorphic systems. These methods allow rapid iteration of designs and enable novel geometries that are difficult or impractical to produce with traditional fabrication. Advanced printing techniques using nanomaterial inks have achieved feature sizes approaching those needed for high-density neural networks.

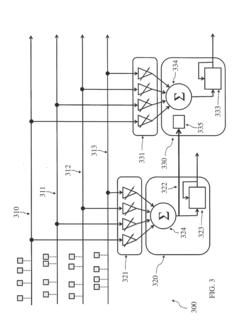

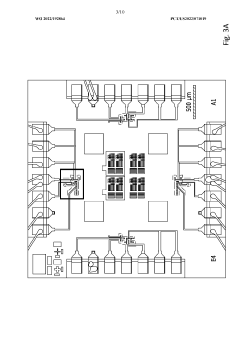

Integration challenges remain significant, particularly in connecting neuromorphic materials with conventional CMOS circuitry. Hybrid integration approaches using through-silicon vias (TSVs) and advanced packaging techniques are being developed to address these challenges, enabling neuromorphic accelerators that complement traditional computing architectures.

Quality control and characterization methods have evolved specifically for neural materials, including specialized electrical testing protocols that evaluate synaptic plasticity and neuronal firing characteristics. Advanced microscopy and spectroscopy techniques provide insights into material structure-function relationships, guiding iterative improvements in fabrication processes.

Scalability remains a central challenge, with current techniques often limited to laboratory-scale production. Industry-academic collaborations are actively developing manufacturing processes capable of consistent, large-scale production of neuromorphic materials while maintaining the precise characteristics required for reliable neural computation.
