Comparison of Electrode Kinetics in Neuromorphic vs Traditional Computing

Oct 27, 2025 · 9 min read

Neuromorphic Computing Evolution and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. The evolution of this field can be traced back to the 1980s when Carver Mead first introduced the concept of using analog circuits to mimic neurobiological architectures. This marked the beginning of a journey toward creating computing systems that could emulate the brain's efficiency and adaptability.

The progression of neuromorphic computing has been characterized by several distinct phases. Initially, research focused on developing basic analog VLSI implementations of neural functions. By the early 2000s, the field expanded to incorporate digital elements, leading to hybrid neuromorphic systems. The past decade has witnessed significant advancements with the emergence of memristive devices and other novel materials that more accurately replicate synaptic behavior, particularly in terms of electrode kinetics.

Electrode kinetics in neuromorphic systems fundamentally differ from those in traditional computing architectures. While conventional computing relies on precise, deterministic charge movement through semiconductors, neuromorphic systems leverage variable conductance states and non-linear dynamics. This distinction is crucial for understanding the comparative advantages and limitations of each approach.

The technical objectives of neuromorphic computing extend beyond raw performance metrics. Primary goals include achieving energy efficiency comparable to biological systems: the human brain runs on approximately 20 watts. Additionally, researchers aim to develop systems capable of unsupervised learning, adaptation to environmental changes, and fault tolerance, all characteristics inherent to biological neural networks.

Current research trends indicate a growing focus on materials science and electrochemistry to optimize electrode interfaces in neuromorphic devices. The kinetics of ion movement across these interfaces directly impacts the speed, reliability, and energy efficiency of neuromorphic systems. Compared to traditional computing, where electron movement dominates, neuromorphic systems often involve more complex charge carriers and transport mechanisms.

Looking forward, the field is moving toward integrated neuromorphic systems that combine sensing, processing, and actuation in unified architectures. This evolution mirrors the brain's ability to process sensory information and generate responses without distinct hardware separations. The ultimate objective remains creating computing systems that can approach the brain's remarkable efficiency of 10^14 synaptic operations per second per watt—a target that requires continued innovation in electrode materials, interface design, and circuit architecture.

Market Analysis for Brain-Inspired Computing Systems

The brain-inspired computing systems market is experiencing unprecedented growth, driven by the increasing demand for efficient processing of complex data patterns and the limitations of traditional computing architectures. Current market valuations place the neuromorphic computing sector at approximately 3.1 billion USD in 2023, with projections indicating a compound annual growth rate of 24.7% through 2030. This remarkable expansion reflects the growing recognition of neuromorphic computing's potential to revolutionize artificial intelligence applications.
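As a quick arithmetic check on what the quoted growth rate implies, the sketch below compounds the 2023 valuation forward. It assumes the 24.7% rate applies uniformly over seven annual periods from 2023 to 2030, which the source does not state explicitly; the function name is illustrative.

```python
def project_market_size(base_value: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return base_value * (1.0 + cagr) ** years


# Figures quoted in the text: 3.1 billion USD in 2023, 24.7% CAGR through 2030.
# Assumption: seven full compounding periods (2023 -> 2030).
base_2023_busd = 3.1
projected_2030_busd = project_market_size(base_2023_busd, 0.247, 2030 - 2023)
print(f"Implied 2030 market size: {projected_2030_busd:.1f} billion USD")
```

Under those assumptions the implied 2030 figure falls between 14 and 15 billion USD.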

Key market segments demonstrating significant demand include autonomous vehicles, where real-time pattern recognition and decision-making capabilities are essential; healthcare diagnostics, particularly in image processing and anomaly detection; and industrial automation, where adaptive learning systems can optimize complex manufacturing processes. The financial technology sector has also emerged as a substantial market, utilizing neuromorphic systems for fraud detection and algorithmic trading.

Regional analysis reveals North America currently dominates the market with approximately 42% share, followed by Europe at 28% and Asia-Pacific at 24%. However, the Asia-Pacific region is expected to demonstrate the highest growth rate over the next five years, driven by substantial investments in AI infrastructure by countries like China, Japan, and South Korea.

Market adoption patterns indicate a transition from research-focused applications toward commercial deployment. Early adopters include technology giants and specialized AI companies, with mid-sized enterprises increasingly exploring neuromorphic solutions as the technology matures and implementation costs decrease. This shift is particularly evident in edge computing applications, where energy efficiency represents a critical competitive advantage.

Customer demand analysis reveals several key drivers: power efficiency requirements for mobile and IoT applications; the need for real-time processing capabilities in autonomous systems; and increasing interest in systems capable of unsupervised learning. The electrode kinetics advantages of neuromorphic systems over traditional computing architectures directly address these market needs, particularly in applications requiring low power consumption and parallel processing capabilities.

Competitive landscape assessment identifies two distinct market segments: hardware providers developing specialized neuromorphic chips and software companies creating programming frameworks and applications optimized for these architectures. The market currently features a mix of established semiconductor companies, specialized neuromorphic startups, and research institutions commercializing their technologies.

Market barriers include high initial development costs, limited standardization across platforms, and the need for specialized programming expertise. However, these barriers are gradually diminishing as the ecosystem matures and more accessible development tools become available.

Electrode Kinetics: Current Challenges and Limitations

Electrode kinetics in neuromorphic computing faces significant challenges that differentiate it from traditional computing paradigms. The fundamental limitation stems from the material properties of electrodes used in neuromorphic systems, which must facilitate ion movement similar to biological neurons while maintaining stability over extended operational periods. Current electrode materials exhibit degradation under repeated ionic flux, leading to performance deterioration and reduced system longevity.

The speed of electrode response presents another critical challenge. While traditional computing relies on electron movement with near-instantaneous response times, neuromorphic systems depend on comparatively slower ionic transport mechanisms. This kinetic disparity creates a fundamental bottleneck in achieving the temporal efficiency necessary for real-time neuromorphic applications, particularly in high-frequency operations where traditional computing maintains a significant advantage.

Interface stability between electrodes and electrolytes represents a persistent challenge in neuromorphic systems. The electrode-electrolyte interface often develops resistive layers over time, increasing impedance and reducing signal fidelity. This degradation pathway is largely absent in traditional computing architectures, which rely on solid-state electron transport rather than electrochemical processes.

Energy efficiency paradoxically remains both a promise and limitation of neuromorphic computing. While theoretically more efficient than traditional computing for certain tasks, current electrode materials require significant overpotentials to drive desired reactions, resulting in energy losses that undermine the efficiency advantages. The challenge lies in developing electrode materials with lower activation energies while maintaining the necessary specificity for neuromorphic operations.

Scalability presents perhaps the most significant limitation for electrode kinetics in neuromorphic systems. Traditional computing benefits from decades of miniaturization following Moore's Law, while neuromorphic electrodes face fundamental physical constraints related to ion transport and surface area requirements. Current fabrication techniques struggle to produce high-density electrode arrays with consistent performance characteristics, limiting the practical implementation of large-scale neuromorphic networks.

Biocompatibility emerges as a unique challenge for neuromorphic systems intended for direct biological interfaces. Electrodes must balance electrical performance with biological compatibility, often requiring compromises that are unnecessary in traditional computing environments. This constraint particularly affects brain-computer interfaces and neural prosthetics, where electrode kinetics must accommodate both technological and physiological requirements.

The reproducibility of electrode kinetics across manufacturing batches represents another significant limitation. Variations in electrode surface properties can dramatically alter kinetic parameters, leading to inconsistent performance across supposedly identical neuromorphic systems. This manufacturing challenge contrasts with the relatively mature fabrication processes of traditional computing components.

Comparative Analysis of Electrode Kinetic Solutions

01 Electrochemical measurement techniques for electrode kinetics

Various electrochemical measurement techniques are employed to evaluate electrode kinetics performance. These include cyclic voltammetry, impedance spectroscopy, and chronoamperometry, which provide data on reaction rates, charge transfer resistance, and exchange current density. These methods allow researchers to quantitatively compare the performance of different electrode materials and structures under controlled conditions, enabling optimization of electrochemical systems.

- Electrochemical measurement techniques for electrode kinetics: Cyclic voltammetry, impedance spectroscopy, and chronoamperometry allow researchers to quantitatively compare the kinetic performance of different electrode materials and configurations under various operating conditions.

- Electrode materials comparison for energy storage applications: Different electrode materials exhibit varying kinetic performance in energy storage applications such as batteries and supercapacitors. Comparative studies evaluate parameters like rate capability, cycling stability, and charge-discharge efficiency. Materials including modified carbon structures, transition metal oxides, and composite electrodes are systematically compared to identify optimal compositions that enhance electron transfer kinetics and overall electrochemical performance.

- Computational modeling and simulation of electrode kinetics: Advanced computational methods are used to model and predict electrode kinetics performance. These include density functional theory calculations, molecular dynamics simulations, and machine learning approaches that can simulate electron transfer processes at electrode interfaces. Such computational tools enable researchers to compare theoretical kinetic parameters with experimental results and optimize electrode designs before physical testing.

- Catalyst performance evaluation for fuel cells and electrolyzers: Systematic comparison of electrocatalyst performance is crucial for fuel cells and electrolyzers. Evaluation metrics include exchange current density, Tafel slope, overpotential, and stability under operating conditions. Various catalyst compositions, structures, and surface modifications are compared to identify materials with superior kinetic properties for oxygen reduction, hydrogen evolution, and other electrochemical reactions.

- In-situ and operando techniques for real-time kinetics monitoring: Advanced in-situ and operando characterization techniques allow for real-time monitoring of electrode kinetics under actual operating conditions. These include spectroelectrochemical methods, synchrotron-based X-ray techniques, and specialized electrochemical cells that enable direct observation of interfacial processes. Such approaches provide more accurate comparisons of electrode performance by capturing dynamic changes in kinetic parameters during operation.
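The kinetic parameters named in the list above (exchange current density, Tafel slope, overpotential) are commonly related through the Butler-Volmer equation. The following is a minimal sketch of that relationship, assuming a one-electron transfer, a symmetric transfer coefficient of 0.5, and room temperature. The two exchange current densities are hypothetical values chosen for illustration and do not come from the source.

```python
import math

F = 96485.332  # Faraday constant, C/mol
R = 8.314462   # molar gas constant, J/(mol*K)


def butler_volmer(eta, j0, alpha=0.5, n=1, temp=298.15):
    """Net current density (same units as j0) at overpotential eta in volts."""
    f = n * F / (R * temp)
    return j0 * (math.exp(alpha * f * eta) - math.exp(-(1.0 - alpha) * f * eta))


def tafel_slope(alpha=0.5, n=1, temp=298.15):
    """Anodic Tafel slope in volts per decade of current density."""
    return math.log(10) * R * temp / (alpha * n * F)


def tafel_overpotential(j_target, j0, alpha=0.5, n=1, temp=298.15):
    """Overpotential needed to reach j_target, using the high-overpotential
    (Tafel) approximation, which neglects the reverse reaction."""
    return tafel_slope(alpha, n, temp) * math.log10(j_target / j0)


# Hypothetical electrodes: a fast one and a sluggish one (A/cm^2).
for label, j0 in (("fast", 1e-3), ("slow", 1e-6)):
    eta = tafel_overpotential(1e-2, j0)
    print(f"{label}: j0={j0:g} A/cm^2 needs about {eta * 1000:.0f} mV for 10 mA/cm^2")
```

The comparison illustrates why exchange current density is a useful ranking metric: under these assumptions, each tenfold drop in it adds roughly one Tafel slope of overpotential at a fixed target current.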

02 Electrode material composition effects on kinetic performance

The composition of electrode materials significantly impacts kinetic performance in electrochemical systems. Different materials exhibit varying catalytic activities, conductivity, and stability. Researchers compare electrode materials by analyzing parameters such as exchange current density, overpotential, and activation energy. Modifications through doping, alloying, or surface functionalization can enhance electron transfer rates and improve overall electrode kinetics.

03 Computational modeling and simulation of electrode kinetics

Advanced computational methods are used to model and predict electrode kinetic performance. These include density functional theory (DFT), molecular dynamics simulations, and machine learning approaches that can simulate electron transfer processes at the atomic level. These computational tools enable researchers to compare theoretical performance metrics with experimental results, accelerating the development of improved electrode materials and designs.

04 Electrode structure and morphology influence on kinetics

The physical structure and morphology of electrodes significantly affect their kinetic performance. Parameters such as surface area, porosity, particle size, and crystallinity influence reaction rates and mass transport properties. Nanostructured electrodes often demonstrate enhanced kinetics due to increased active sites and shortened diffusion paths. Comparative studies analyze how different structural features impact key performance indicators like exchange current density and reaction rate constants.

05 Standardized testing protocols for electrode kinetics comparison

Standardized testing protocols are essential for meaningful comparison of electrode kinetic performance across different research studies. These protocols specify experimental conditions including temperature, electrolyte composition, reference electrodes, and data analysis methods. Benchmark tests using reference materials allow for calibration and validation of measurement systems. Such standardization ensures reproducibility and enables reliable comparison of electrode materials and designs across different laboratories.

Leading Research Institutions and Industry Players

Neuromorphic computing is currently in an early growth phase, with the market expected to expand significantly due to increasing demand for AI applications at the edge. The global market size is projected to reach several billion dollars by 2030, driven by advantages in power efficiency compared to traditional computing architectures. Major players like IBM, Intel, and Samsung are leading research and commercialization efforts, with IBM's TrueNorth and Intel's Loihi chips representing significant technological milestones. Academic institutions including MIT, Tsinghua University, and Zhejiang University are contributing fundamental research, while startups like Syntiant and Polyn Technology are developing specialized neuromorphic solutions for edge applications. The technology is approaching commercial viability for specific use cases, though widespread adoption remains several years away.

International Business Machines Corp.

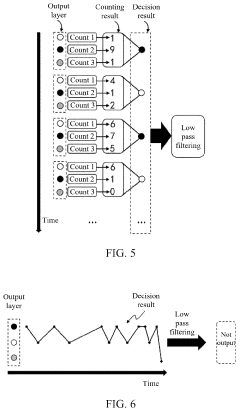

Technical Solution: IBM has pioneered neuromorphic computing through its TrueNorth chip and subsequent architectures, which handle charge transport and state storage differently from traditional computing. These non-von Neumann designs use specialized neural cores containing electronic synapses that mimic biological neural networks. TrueNorth itself is a digital CMOS design, while IBM's analog neuromorphic work employs phase-change memory (PCM) and resistive RAM technologies to create analog computing elements that process information through physical changes at the electrodes rather than through digital logic gates[1]. In these analog devices, state is stored as resistance changes in nanoscale materials, driven by phase transitions in PCM and by ion migration in resistive RAM, enabling massively parallel computation with lower power requirements. IBM's TrueNorth chip integrates about one million neurons and 256 million synapses while drawing on the order of 70 milliwatts, orders of magnitude less power than traditional computing approaches need for comparable neural workloads[2].

Strengths: Superior energy efficiency (100x better than traditional systems for certain neural workloads); inherent parallelism enabling real-time processing of sensory data; natural handling of temporal information. Weaknesses: Limited precision compared to traditional computing; challenges in programming paradigms; difficulty in scaling manufacturing processes for complex electrode materials.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed advanced neuromorphic computing architectures focusing on novel electrode materials and configurations that fundamentally alter computing kinetics. Their approach centers on resistive RAM (ReRAM) and magnetoresistive RAM (MRAM) technologies that enable analog computing through direct physical processes rather than digital abstractions. Samsung's neuromorphic systems utilize specialized electrode configurations where information processing occurs through controlled changes in material properties at the nanoscale level[3]. Their electrode kinetics leverage spin-transfer torque mechanisms and oxygen vacancy migration to create computational elements that more closely resemble biological neurons than traditional transistors. Samsung has demonstrated neuromorphic chips that achieve 20x improvement in energy efficiency for AI workloads compared to conventional architectures[4]. Their electrode designs incorporate hafnium oxide-based materials that enable precise control of resistance states, allowing for multi-bit storage and computation within a single cell, dramatically increasing computational density compared to traditional binary logic.

Strengths: Highly efficient implementation of neural network operations; significant reduction in energy consumption for AI tasks; compact design enabling edge deployment. Weaknesses: Challenges with long-term stability of electrode materials; variability in manufacturing affecting computational precision; limited software ecosystem compared to traditional computing platforms.

Key Patents in Neuromorphic Electrode Technology

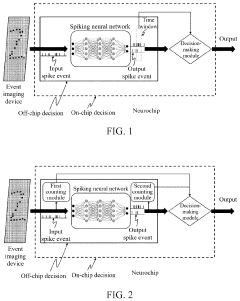

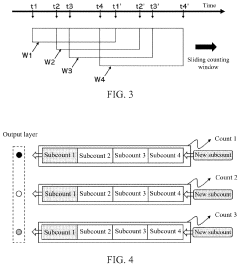

Spike event decision-making device, method, chip and electronic device

Patent pending: US20240086690A1

Innovation

- A spike event decision-making device and method that utilizes counting modules to determine decision-making results based on the number of spike events fired by neurons in a spiking neural network, allowing for adaptive decision-making without fixed time windows, and incorporating sub-counters to improve reliability and accuracy by considering transition rates and occurrence ratios.
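To make the counting idea concrete, here is a deliberately simplified sketch of a count-based decision that is not tied to a fixed time window. It is an illustration only, not the patented method: the threshold, the lead margin, and the function name are assumptions, and the sub-counters, transition rates, and occurrence ratios mentioned above are omitted.

```python
from collections import Counter
from typing import Iterable, Optional


def decide_by_spike_count(spike_events: Iterable[int],
                          count_threshold: int = 5,
                          margin: int = 2) -> Optional[int]:
    """Return the id of the first output neuron whose spike count reaches
    count_threshold while leading every other neuron by at least margin.
    Returns None if the stream ends before any neuron qualifies."""
    counts: Counter = Counter()
    for neuron_id in spike_events:
        counts[neuron_id] += 1
        # Only the neuron that just fired can newly satisfy the condition.
        leader = counts[neuron_id]
        runner_up = max((c for n, c in counts.items() if n != neuron_id), default=0)
        if leader >= count_threshold and leader - runner_up >= margin:
            return neuron_id
    return None


# Each entry is the id of the output neuron that fired, in arrival order.
events = [0, 1, 0, 0, 2, 0, 1, 0, 0]
print(decide_by_spike_count(events))
```

Because the decision fires as soon as the counts are conclusive, strongly driven inputs resolve after few events while ambiguous inputs simply take longer.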

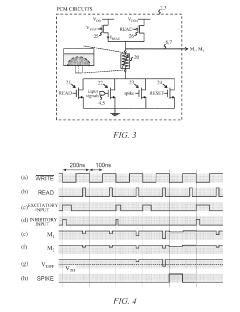

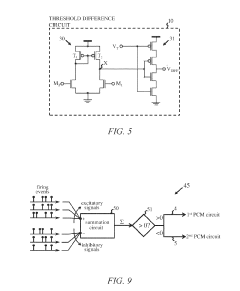

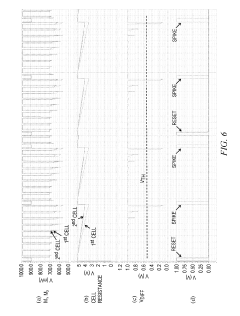

Artificial neuron apparatus

Patent active: US20190272464A1

Innovation

- An artificial neuron apparatus using two resistive memory cells, one for excitatory and one for inhibitory inputs, which alternates between read and write phases to program and measure resistance changes, producing output signals based on the difference between measurement signals that traverse a threshold, allowing for both excitatory and inhibitory updates and efficient operation in neural networks.
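The read/write alternation described above can be pictured with a toy model. The sketch below is an assumption-laden illustration rather than the patented circuit: it treats each resistive cell as a bounded conductance that grows by a fixed step per programming pulse, and it fires when the difference between the two read-out values reaches a threshold. The class name and all parameter values are hypothetical.

```python
class TwoCellNeuron:
    """Toy neuron with one excitatory and one inhibitory resistive cell."""

    def __init__(self, threshold=1.0, step=0.25, g_min=0.0, g_max=4.0):
        self.threshold = threshold
        self.step = step
        self.g_min = g_min
        self.g_max = g_max
        self.g_exc = g_min  # conductance of the excitatory cell
        self.g_inh = g_min  # conductance of the inhibitory cell

    def write(self, excitatory: bool) -> None:
        """Write phase: apply one programming pulse to the selected cell."""
        if excitatory:
            self.g_exc = min(self.g_exc + self.step, self.g_max)
        else:
            self.g_inh = min(self.g_inh + self.step, self.g_max)

    def read(self) -> bool:
        """Read phase: compare the two measurements, fire and reset on crossing."""
        if self.g_exc - self.g_inh >= self.threshold:
            self.g_exc = self.g_inh = self.g_min  # reset both cells after a spike
            return True
        return False


def run(neuron: TwoCellNeuron, inputs) -> list:
    """Alternate write and read phases for a sequence of inputs
    (True means an excitatory pulse, False an inhibitory one)."""
    outputs = []
    for is_excitatory in inputs:
        neuron.write(is_excitatory)
        outputs.append(neuron.read())
    return outputs


spikes = run(TwoCellNeuron(), [True, True, False, True, True, True])
print(spikes)
```

With these illustrative values, the single inhibitory pulse delays the output spike by one step compared with a purely excitatory input train.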

Energy Efficiency Benchmarking and Metrics

Energy efficiency has emerged as a critical benchmark in comparing neuromorphic computing systems with traditional von Neumann architectures, particularly when examining electrode kinetics. The fundamental difference in energy consumption stems from how each paradigm processes information: traditional computing relies on continuous power supply for computation and memory storage, while neuromorphic systems mimic the brain's event-driven, sparse activation patterns.

Standard metrics for energy efficiency comparison include joules per operation (J/Op), which measures the energy consumed per computational operation. Neuromorphic systems typically demonstrate 10-100x improvements in this metric because their spike-based processing activates circuits only when necessary. Electrode kinetics contribute to this efficiency where devices can switch state across low energy barriers, although, as noted in the challenges above, current electrode materials often still require significant overpotentials.

Power density (W/cm²) represents another crucial metric, especially relevant when considering thermal management constraints. Traditional computing systems often operate at 50-100 W/cm², necessitating sophisticated cooling solutions, whereas neuromorphic hardware leveraging optimized electrode materials can function effectively at 1-5 W/cm², dramatically reducing cooling requirements.

Energy-delay product (EDP) provides a comprehensive efficiency measure by combining energy consumption with computational speed. This metric reveals that while traditional systems may excel in raw speed for certain operations, neuromorphic systems achieve better overall efficiency when considering the energy-time tradeoff, particularly for pattern recognition and sensory processing tasks where electrode response characteristics are paramount.

Synaptic operations per second per watt (SOPS/W) has emerged as a neuromorphic-specific benchmark that quantifies the energy efficiency of neural network operations. Current neuromorphic implementations demonstrate 10⁶-10⁸ SOPS/W, significantly outperforming GPU implementations of neural networks that typically achieve 10⁴-10⁵ SOPS/W. This advantage derives largely from the specialized electrode materials and designs that enable efficient charge transfer and storage.

Standardized benchmarking suites like MLPerf are being adapted to include neuromorphic-specific workloads, allowing for more direct comparisons across platforms. These benchmarks increasingly incorporate real-world applications such as sensor fusion, anomaly detection, and continuous learning scenarios where the unique electrode kinetics of neuromorphic systems demonstrate their greatest efficiency advantages.
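The four metrics discussed in this section can all be derived from the same handful of measurements. The sketch below shows one way to compute them. The class name and the sample numbers are hypothetical and are not measurements from any system named in this report.

```python
from dataclasses import dataclass


@dataclass
class RunMeasurement:
    energy_j: float    # total energy consumed during the run, joules
    runtime_s: float   # duration of the run, seconds
    operations: float  # operations (or synaptic events) completed
    area_cm2: float    # active chip area, cm^2

    @property
    def average_power_w(self) -> float:
        return self.energy_j / self.runtime_s

    @property
    def joules_per_op(self) -> float:
        return self.energy_j / self.operations

    @property
    def power_density_w_per_cm2(self) -> float:
        return self.average_power_w / self.area_cm2

    @property
    def energy_delay_product(self) -> float:
        return self.energy_j * self.runtime_s

    @property
    def sops_per_watt(self) -> float:
        # (operations per second) / watts, which reduces to operations per joule.
        return (self.operations / self.runtime_s) / self.average_power_w


# Hypothetical run: 2 J over 4 s, 1e8 synaptic operations, 0.5 cm^2 of silicon.
m = RunMeasurement(energy_j=2.0, runtime_s=4.0, operations=1e8, area_cm2=0.5)
print(f"J/Op: {m.joules_per_op:.2e}")
print(f"Power density: {m.power_density_w_per_cm2:.2f} W/cm^2")
print(f"EDP: {m.energy_delay_product:.2f} J*s")
print(f"SOPS/W: {m.sops_per_watt:.2e}")
```

Note that SOPS/W is the reciprocal of J/Op when both count the same operations, so the two metrics carry the same information; EDP and power density add the time and area dimensions respectively.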

Standard metrics for energy efficiency comparison include Joules per Operation (J/Op), which measures energy consumed per computational task. Neuromorphic systems typically demonstrate 10-100x improvements in this metric due to their spike-based processing that activates circuits only when necessary. The electrode kinetics in neuromorphic systems contribute significantly to this efficiency, as they enable rapid state changes with minimal energy barriers.

Power density (W/cm²) represents another crucial metric, especially relevant when considering thermal management constraints. Traditional computing systems often operate at 50-100 W/cm², necessitating sophisticated cooling solutions, whereas neuromorphic hardware leveraging optimized electrode materials can function effectively at 1-5 W/cm², dramatically reducing cooling requirements.

Energy-delay product (EDP) provides a comprehensive efficiency measure by combining energy consumption with computational speed. This metric reveals that while traditional systems may excel in raw speed for certain operations, neuromorphic systems achieve better overall efficiency when considering the energy-time tradeoff, particularly for pattern recognition and sensory processing tasks where electrode response characteristics are paramount.

Neuromorphic Hardware Implementation Strategies

Neuromorphic hardware implementation strategies have evolved significantly in recent years, driven by the fundamental differences in electrode kinetics between neuromorphic and traditional computing systems. These strategies can be broadly categorized into digital, analog, and hybrid approaches, each with distinct advantages for mimicking neural processes.

Digital neuromorphic implementations use conventional CMOS technology to simulate neural behavior through discrete time steps and binary representations. IBM's TrueNorth and Intel's Loihi are prominent examples of this approach, employing digital circuits to model neurons and synapses. While these implementations benefit from manufacturing maturity and design flexibility, they typically consume more power and occupy more silicon area than their analog counterparts.

Analog neuromorphic hardware directly exploits the physical properties of electronic components to replicate neural dynamics. Memristive devices, particularly those based on resistive switching materials, have emerged as promising building blocks due to their ability to emulate synaptic plasticity through continuous conductance changes. The electrode kinetics in these devices more closely resemble biological neural processes, enabling more efficient implementation of learning algorithms such as spike-timing-dependent plasticity (STDP).
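To make the STDP rule concrete, here is a minimal sketch of the common pair-based form, in which the weight change decays exponentially with the time between a presynaptic and a postsynaptic spike. The amplitudes, time constants, and normalized conductance bounds are illustrative assumptions, not values measured on any device, and the function names are hypothetical.

```python
import math


def stdp_delta(dt_ms: float,
               a_plus: float = 0.01,
               a_minus: float = 0.012,
               tau_plus_ms: float = 20.0,
               tau_minus_ms: float = 20.0) -> float:
    """Pair-based STDP weight change for dt_ms = t_post - t_pre.

    Pre-before-post (dt_ms > 0) potentiates; post-before-pre depresses.
    All default parameters are assumed values for illustration.
    """
    if dt_ms > 0:
        return a_plus * math.exp(-dt_ms / tau_plus_ms)
    if dt_ms < 0:
        return -a_minus * math.exp(dt_ms / tau_minus_ms)
    return 0.0


def update_conductance(g: float, dt_ms: float,
                       g_min: float = 0.0, g_max: float = 1.0) -> float:
    """Apply the STDP change and clip to the device's conductance window."""
    return min(g_max, max(g_min, g + stdp_delta(dt_ms)))
```

The clipping step stands in for the bounded conductance range of a physical memristive device; real devices also show nonlinear and asymmetric updates, which this sketch deliberately ignores.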

Hybrid approaches combine digital computation with analog memory elements to leverage the strengths of both paradigms. For instance, systems may utilize digital neurons with analog synaptic weights stored in memristive crossbar arrays. This strategy addresses the precision limitations of purely analog systems while maintaining relatively low power consumption.
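The hybrid scheme can be sketched as an analog multiply-accumulate followed by a digital decision. In a crossbar, each column current is the sum of input voltages weighted by the conductances in that column (Ohm's law plus Kirchhoff's current law). The quantization step below models the limited number of distinguishable conductance states; the level count, the normalized conductance range, and all function names are assumptions made for illustration.

```python
def quantize(g: float, levels: int,
             g_min: float = 0.0, g_max: float = 1.0) -> float:
    """Snap a conductance to the nearest of `levels` evenly spaced states."""
    step = (g_max - g_min) / (levels - 1)
    clipped = min(g_max, max(g_min, g))
    return g_min + round((clipped - g_min) / step) * step


def crossbar_currents(voltages: list[float],
                      conductances: list[list[float]]) -> list[float]:
    """Analog part: column currents I_j = sum_i V_i * G[i][j]."""
    n_cols = len(conductances[0])
    return [sum(v * row[j] for v, row in zip(voltages, conductances))
            for j in range(n_cols)]


def digital_threshold(currents: list[float], threshold: float) -> list[int]:
    """Digital part: each neuron emits 1 if its input current reaches threshold."""
    return [1 if i >= threshold else 0 for i in currents]


# Example: two input rows driving two output columns.
weights = [[0.5, 0.25], [1.0, 0.0]]
spikes = digital_threshold(crossbar_currents([1.0, 1.0], weights), threshold=1.0)
```

Keeping the threshold comparison in the digital domain means small analog errors in the column current only matter near the decision boundary, which is one reason this split tolerates imprecise synaptic weights.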

3D integration techniques have recently gained traction as a means to enhance neuromorphic hardware density and connectivity. By stacking multiple layers of computing elements, these architectures can achieve higher neuron counts and more complex interconnection patterns that better approximate biological neural networks. Samsung's neuromorphic processing unit exemplifies this approach, utilizing through-silicon vias (TSVs) to connect layers of memristive synapses with CMOS neurons.

Event-driven architectures represent another significant implementation strategy, wherein computation occurs only when necessary (i.e., when neurons fire), rather than at fixed clock cycles as in traditional computing. This approach substantially reduces power consumption, making it particularly suitable for edge computing applications where energy efficiency is paramount.
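The saving from event-driven operation can be illustrated with a single leaky integrate-and-fire neuron that updates its state only when an input spike arrives, applying the leak for the elapsed interval in one step. This is a simplified sketch; the synaptic weight, time constant, threshold, and function names are assumed for illustration and do not describe any specific chip.

```python
import math


def run_event_driven(spike_times_ms: list[float],
                     weight: float = 0.6,
                     tau_ms: float = 10.0,
                     threshold: float = 1.0) -> tuple[list[float], int]:
    """Process sorted input spike times; return (output spike times, update count)."""
    v = 0.0        # membrane potential
    last_t = 0.0   # time of the previous update
    out: list[float] = []
    updates = 0
    for t in spike_times_ms:
        # Apply the exponential leak lazily for the whole idle interval.
        v *= math.exp(-(t - last_t) / tau_ms)
        v += weight
        last_t = t
        updates += 1
        if v >= threshold:
            out.append(t)
            v = 0.0  # reset after firing
    return out, updates


def clocked_update_count(duration_ms: float, dt_ms: float = 1.0) -> int:
    """Updates a fixed-step simulation would perform over the same window."""
    return int(duration_ms / dt_ms)


# Three input spikes in a 100 ms window: 3 updates instead of 100.
out_spikes, n_updates = run_event_driven([1.0, 2.0, 50.0])
```

With sparse input the update count scales with the number of spikes rather than with elapsed time, which is the source of the power advantage described above.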

The selection of appropriate electrode materials and interfaces remains a critical consideration across all implementation strategies, as these factors directly influence the speed, reliability, and energy efficiency of neuromorphic systems. Recent advances in two-dimensional materials and metal oxide interfaces have shown promise for enhancing electrode kinetics in neuromorphic devices, potentially enabling faster and more efficient neural computation.
