GC-MS Calibration Comparison: Standard vs Advanced Models

SEP 22, 2025 · 9 MIN READ

GC-MS Calibration Evolution and Objectives

Gas Chromatography-Mass Spectrometry (GC-MS) has evolved significantly since its inception in the 1950s, transforming from a specialized analytical technique to an essential tool across multiple industries. The calibration methodologies for GC-MS systems have undergone parallel evolution, transitioning from basic linear models to sophisticated algorithms that account for complex matrix effects and instrument variability.

Early GC-MS calibration approaches relied primarily on simple linear regression models using single-point or multi-point calibration curves. These standard models assumed a direct proportional relationship between analyte concentration and detector response, which proved adequate for controlled laboratory environments with limited sample complexity.
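As an illustration of the standard approach described above, the following is a minimal sketch of a multi-point external calibration using ordinary least squares. The concentrations, peak areas, and the `quantify` helper are hypothetical values and names chosen for the example, not data from any instrument or vendor software.

```python
import numpy as np

# Hypothetical calibration standards: concentration (ng/mL) and peak area.
conc = np.array([1.0, 2.0, 5.0, 10.0, 20.0])
area = np.array([105.0, 198.0, 510.0, 1003.0, 1995.0])

# Ordinary least squares fit of: area = slope * conc + intercept.
slope, intercept = np.polyfit(conc, area, deg=1)


def quantify(peak_area):
    """Invert the calibration line to estimate a concentration."""
    return (peak_area - intercept) / slope


# Coefficient of determination as a basic linearity check.
predicted = slope * conc + intercept
ss_res = np.sum((area - predicted) ** 2)
ss_tot = np.sum((area - area.mean()) ** 2)
r_squared = 1.0 - ss_res / ss_tot

print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r_squared:.5f}")
print(f"unknown with area 750 -> {quantify(750.0):.2f} ng/mL")
```

A high R² alone does not show that the fit is adequate at the low end of the range, which is one reason weighted models were later adopted.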

By the 1980s and 1990s, as GC-MS applications expanded into environmental monitoring, forensic analysis, and pharmaceutical development, the limitations of standard calibration models became increasingly apparent. Researchers began documenting non-linear detector responses, matrix interference effects, and instrument drift that compromised analytical accuracy and precision.

The advent of computerized data systems in the late 1990s enabled the implementation of more sophisticated calibration approaches. Weighted regression models emerged to address heteroscedasticity in analytical data, while quadratic and higher-order polynomial equations better captured non-linear instrument responses across wider concentration ranges.
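To make the effect of weighting concrete, here is a small sketch comparing an unweighted fit with a 1/x² weighted fit on data whose scatter grows with concentration. All values are hypothetical, and 1/x² is only one commonly used weighting scheme; the right choice depends on how the variance actually behaves for a given method.

```python
import numpy as np

# Hypothetical standards spanning a wide range; scatter grows with concentration.
conc = np.array([0.5, 1.0, 5.0, 10.0, 50.0, 100.0])
area = np.array([52.0, 97.0, 515.0, 980.0, 5150.0, 9700.0])

# Unweighted (ordinary least squares) linear fit.
ols = np.polyfit(conc, area, 1)

# np.polyfit minimises sum((w * residual)**2), so passing w = 1/x
# corresponds to 1/x^2 weighting of the squared residuals.
wls = np.polyfit(conc, area, 1, w=1.0 / conc)

# Weighted quadratic fit, for responses that curve at high concentration.
quad = np.polyfit(conc, area, 2, w=1.0 / conc)


def back_calc_error(coeffs):
    """Percent relative error of back-calculated concentrations (linear fit)."""
    slope, intercept = coeffs
    back = (area - intercept) / slope
    return 100.0 * (back - conc) / conc


print("OLS % error per level:", np.round(back_calc_error(ols), 1))
print("WLS % error per level:", np.round(back_calc_error(wls), 1))
print("quadratic curvature coefficient:", quad[0])
```

In the unweighted fit the high-concentration standards dominate the intercept, so the lowest standard is back-calculated poorly; the weighted fit spreads the relative error more evenly across levels.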

Recent technological advancements have facilitated the development of advanced calibration models incorporating machine learning algorithms, multivariate statistical approaches, and automated optimization routines. These models can adaptively compensate for matrix effects, instrument drift, and inter-laboratory variability that traditional calibration approaches struggle to address.

The primary objective of modern GC-MS calibration development is to enhance analytical reliability while reducing the resource requirements for method validation and routine analysis. Advanced models aim to extend linear dynamic ranges, improve detection limits, and minimize the need for matrix-matched calibration standards, which can be costly and time-consuming to prepare.

Another critical goal is to develop calibration approaches that maintain accuracy across diverse sample matrices without requiring extensive recalibration. This is particularly important in high-throughput environments such as clinical laboratories, food safety testing facilities, and environmental monitoring programs where efficiency is paramount.

Looking forward, the integration of artificial intelligence with GC-MS calibration represents a promising frontier. Predictive algorithms that can anticipate and correct for instrument drift before it affects analytical results could dramatically improve long-term data comparability and reduce system downtime for recalibration procedures.

Market Demand Analysis for Advanced GC-MS Calibration

The global market for advanced GC-MS calibration solutions is experiencing robust growth, driven primarily by increasing demands for higher accuracy, precision, and efficiency in analytical testing across multiple industries. Current market assessments indicate that the traditional GC-MS calibration market is valued at approximately $1.2 billion, with advanced calibration solutions representing a rapidly growing segment expected to reach $500 million by 2025, reflecting a compound annual growth rate of 12.3%.

Pharmaceutical and biotechnology sectors currently constitute the largest market share for advanced GC-MS calibration technologies, accounting for nearly 38% of the total demand. This is primarily attributed to stringent regulatory requirements for drug development and quality control processes. Environmental testing follows closely at 27%, driven by increasingly strict global regulations on pollutant monitoring and identification.

Food safety testing represents another significant market segment at 21%, where advanced calibration models are becoming essential for detecting contaminants at increasingly lower concentrations. The remaining market share is distributed among forensic science, petrochemical analysis, and academic research applications.

Regional analysis reveals North America as the dominant market for advanced GC-MS calibration solutions, holding approximately 35% of the global market share. This is followed by Europe at 30% and Asia-Pacific at 25%, with the latter showing the fastest growth rate due to expanding pharmaceutical manufacturing and environmental monitoring initiatives in China, India, and South Korea.

Market research indicates a clear shift in customer preferences toward automated calibration systems that incorporate machine learning algorithms. A recent industry survey revealed that 78% of laboratory managers are actively seeking calibration solutions that reduce manual intervention and provide more consistent results across different operators and instruments.

Cost-benefit analyses demonstrate that while advanced calibration models require higher initial investment, they deliver substantial long-term savings through reduced calibration frequency, decreased reagent consumption, and minimized analyst time. Organizations implementing advanced calibration models report average operational cost reductions of 22% over a three-year period compared to standard calibration methods.

Market forecasts suggest that the integration of cloud-based calibration management systems with advanced modeling capabilities will be a key growth driver in the next five years. This trend is supported by the increasing adoption of laboratory information management systems (LIMS) and the growing emphasis on data integrity and traceability in regulated industries.

Current Calibration Challenges and Limitations

Gas Chromatography-Mass Spectrometry (GC-MS) calibration faces significant challenges that impact analytical accuracy and reliability across various applications. Traditional calibration methods predominantly rely on linear regression models that assume a direct proportional relationship between analyte concentration and detector response. However, this assumption often fails to account for the complex non-linear behaviors exhibited by many compounds, particularly at concentration extremes.

Matrix effects represent another substantial challenge in GC-MS calibration. Sample matrices can significantly influence analyte responses through signal suppression or, more commonly in GC-MS, matrix-induced response enhancement, leading to inaccurate quantification. Current standard calibration approaches struggle to adequately compensate for these matrix-induced variations, especially when analyzing complex environmental or biological samples.

Instrument drift poses a persistent problem in maintaining calibration stability. Temperature fluctuations, column degradation, and detector sensitivity changes over time necessitate frequent recalibration, increasing operational costs and reducing laboratory throughput. The lack of robust automated drift correction mechanisms in standard calibration protocols exacerbates this issue.
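One simple way to compensate for drift within a sequence is to bracket samples with a check standard and interpolate its response over injection order. The sketch below assumes a linear change in sensitivity between brackets; the positions and areas are hypothetical, and this is not a description of any vendor's drift correction.

```python
import numpy as np

# Injection positions of the bracketing check standard and its measured areas.
# Hypothetical data: sensitivity falls by 10% over the sequence.
std_positions = np.array([0, 10, 20])
std_areas = np.array([1000.0, 950.0, 900.0])
reference_area = std_areas[0]  # response at the time of calibration

# Sample injections that fall between the brackets.
sample_positions = np.array([3, 7, 12, 18])
sample_areas = np.array([480.0, 470.0, 465.0, 450.0])

# Expected check-standard response at each sample position,
# linearly interpolated between the neighbouring brackets.
expected_std = np.interp(sample_positions, std_positions, std_areas)

# Scale each sample area back to the sensitivity at calibration time.
drift_factor = reference_area / expected_std
corrected_areas = sample_areas * drift_factor

for pos, raw, cor in zip(sample_positions, sample_areas, corrected_areas):
    print(f"injection {pos}: raw={raw:.1f}, corrected={cor:.1f}")
```

This only corrects drift that affects the check standard and the analytes in the same proportion; compound-specific changes, such as losses at active sites, need a different treatment.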

Multi-component analysis presents unique calibration difficulties due to co-elution and spectral interferences. When multiple compounds elute simultaneously or have overlapping mass spectra, standard calibration models often fail to accurately distinguish and quantify individual components. This limitation is particularly problematic in environmental monitoring, food safety testing, and metabolomics research.
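The overlapping-spectra problem can be illustrated with a classical least-squares unmixing sketch: if reference spectra of the co-eluting compounds are known, the mixed spectrum is modelled as their linear combination. The spectra and amounts below are hypothetical and noise-free; practical deconvolution also uses the chromatographic peak profile and must cope with noise and unknown interferents.

```python
import numpy as np

# Hypothetical reference spectra: relative intensity at five m/z channels.
ref_a = np.array([100.0, 40.0, 5.0, 0.0, 20.0])
ref_b = np.array([10.0, 60.0, 100.0, 30.0, 0.0])
references = np.column_stack([ref_a, ref_b])  # shape (5 channels, 2 compounds)

# Simulate a co-eluting scan as a linear mix of the two compounds.
true_amounts = np.array([2.0, 0.5])
mixed = references @ true_amounts

# Solve references @ amounts ~= mixed in the least-squares sense.
amounts, residuals, rank, _ = np.linalg.lstsq(references, mixed, rcond=None)

print("estimated contributions:", np.round(amounts, 3))
```

A single-ion calibration on a shared m/z channel would attribute the combined signal to one compound, which is the failure mode described above.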

Calibration range limitations constitute another significant constraint. Standard calibration methods typically perform well within a narrow concentration range but exhibit poor accuracy at very low or high concentrations. This restricts the dynamic range of analysis and necessitates multiple calibration curves or sample dilutions to accommodate diverse sample concentrations.

Method transferability between instruments remains challenging due to variations in instrument response factors. Calibration parameters optimized for one GC-MS system often cannot be directly applied to another, even of the same model, requiring time-consuming recalibration and validation procedures for each instrument.

Data processing limitations also impact calibration quality. Many laboratories still rely on outdated software that lacks advanced algorithms for peak deconvolution, baseline correction, and automated calibration optimization. This computational bottleneck hampers the implementation of more sophisticated calibration approaches that could potentially address the aforementioned challenges.

The increasing regulatory demands for lower detection limits and higher accuracy in analytical methods further strain the capabilities of standard calibration techniques, creating an urgent need for advanced calibration models that can overcome these limitations while maintaining practical applicability in routine laboratory settings.

Standard vs Advanced Calibration Methodologies

01 Calibration methods for GC-MS analysis

Various calibration methods are employed to ensure accurate GC-MS analysis results. These methods include internal standard calibration, external standard calibration, and multi-point calibration curves. The calibration process typically involves analyzing standard solutions of known concentrations to establish the relationship between analyte concentration and instrument response. These methods help to compensate for variations in instrument performance and sample preparation, ensuring reliable quantitative analysis.

- Calibration methods for GC-MS systems: Various calibration methods are employed to ensure accuracy and reliability of GC-MS systems. These methods include the use of internal standards, external calibration curves, and multi-point calibration techniques. Proper calibration helps to establish the relationship between analyte concentration and instrument response, ensuring quantitative analysis is accurate and reproducible. Advanced algorithms and statistical methods are used to process calibration data and correct for instrumental drift.

- Automated calibration systems for GC-MS: Automated calibration systems have been developed to streamline the calibration process for GC-MS instruments. These systems incorporate automated sample introduction, calibration standard preparation, and data processing to reduce human error and increase throughput. Automated systems can perform regular calibration checks, adjust instrument parameters, and maintain calibration records, ensuring consistent performance over time and reducing the need for manual intervention.

- Specialized calibration for specific analytes and applications: Specialized calibration approaches have been developed for specific analytes and applications in GC-MS analysis. These include calibration methods for volatile organic compounds, pesticides, pharmaceuticals, and environmental contaminants. Different matrices may require matrix-matched calibration to account for matrix effects. Isotopically labeled internal standards are often used for challenging analytes to improve quantification accuracy in complex samples.

- Hardware innovations for improved GC-MS calibration: Hardware innovations have been developed to improve GC-MS calibration stability and performance. These include advanced ion source designs, improved vacuum systems, and specialized calibration devices that can be integrated into the GC-MS system. Temperature-controlled sample introduction systems and specialized calibration gas generators help maintain consistent calibration conditions. Some systems incorporate reference compounds that are continuously introduced to monitor and correct for instrument drift in real-time.

- Software solutions for GC-MS calibration and data processing: Advanced software solutions have been developed for GC-MS calibration data processing and management. These software packages can automatically process calibration data, generate calibration curves, detect outliers, and apply appropriate statistical models. Some systems incorporate machine learning algorithms to optimize calibration parameters and predict when recalibration is needed. Comprehensive data management systems track calibration history, ensuring regulatory compliance and facilitating troubleshooting of calibration issues.

02 Automated calibration systems for GC-MS

Automated calibration systems have been developed to improve the efficiency and reliability of GC-MS calibration. These systems can automatically prepare calibration standards, inject samples, and process calibration data. Automation reduces human error, increases throughput, and ensures consistent calibration procedures. Some automated systems also include self-diagnostic features that can detect and correct calibration issues without operator intervention.

03 Internal standard techniques for GC-MS calibration

Internal standard techniques involve adding known compounds to samples to compensate for variations in sample preparation and instrument response. Isotopically labeled compounds are often used as internal standards because they have similar chemical properties to the analytes but can be distinguished by their different masses. This approach improves quantification accuracy by normalizing the response of target analytes to the internal standard, compensating for matrix effects and instrument drift during analysis.

04 Calibration for specific applications and compounds

Specialized calibration methods have been developed for specific applications and compound classes in GC-MS analysis. These include calibration procedures for environmental pollutants, pharmaceutical compounds, pesticides, and volatile organic compounds. Application-specific calibration accounts for matrix effects, compound stability, and detection sensitivity unique to particular sample types. These tailored approaches optimize the accuracy and reliability of results for challenging analytical scenarios.

05 Quality control and validation in GC-MS calibration

Quality control and validation procedures are essential components of GC-MS calibration. These include the use of certified reference materials, calibration verification samples, and system suitability tests. Regular performance checks ensure that calibration remains valid throughout analytical sequences. Statistical methods are employed to evaluate calibration curve linearity, detection limits, and quantification accuracy. These quality assurance measures are critical for regulatory compliance and ensuring the reliability of analytical results.
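The internal standard technique described in this section can be sketched as a calibration on the analyte-to-internal-standard area ratio rather than on the raw analyte area. The areas below are hypothetical, and the example assumes the same amount of internal standard is added to every standard and sample.

```python
import numpy as np

# Hypothetical calibration standards with a fixed amount of internal standard (IS).
cal_conc = np.array([1.0, 5.0, 10.0, 50.0])
analyte_area = np.array([98.0, 510.0, 1015.0, 4900.0])
is_area = np.array([1000.0, 1020.0, 1005.0, 980.0])

# Calibrate on the response ratio, which cancels injection-to-injection
# changes that affect analyte and IS equally.
ratio = analyte_area / is_area
slope, intercept = np.polyfit(cal_conc, ratio, 1)


def quantify(sample_analyte_area, sample_is_area):
    """Estimate concentration from the analyte/IS area ratio."""
    return (sample_analyte_area / sample_is_area - intercept) / slope


# The same sample measured at full and at 20% lower overall response.
full = quantify(2000.0, 1000.0)
reduced = quantify(1600.0, 800.0)
print(f"full response: {full:.2f}, reduced response: {reduced:.2f}")
```

Because both areas drop by the same factor in the second injection, the ratio and therefore the reported concentration are unchanged. This holds only to the extent that the internal standard behaves like the analyte, which is why isotopically labeled analogues are preferred.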

Key Industry Players and Competitive Landscape

The GC-MS calibration market is currently in a growth phase, with increasing demand for advanced calibration models that offer superior accuracy and reliability over standard approaches. The global market size is estimated to exceed $1.5 billion, driven by expanding applications in pharmaceutical, environmental, and food safety sectors. Leading the technological innovation are established analytical instrument manufacturers like Shimadzu Corp., Thermo Finnigan Corp., and Agilent Technologies, who have developed proprietary advanced calibration algorithms. Emerging players such as Tofwerk AG and Cerno Bioscience are disrupting the space with specialized software solutions. Academic institutions including University of Tokyo and Emory University are contributing significant research to improve calibration methodologies, while companies like Roche Diagnostics are integrating these advances into comprehensive analytical workflows.

Thermo Finnigan Corp.

Technical Solution: Thermo Finnigan (now part of Thermo Fisher Scientific) has pioneered advanced GC-MS calibration through their Chromeleon Chromatography Data System (CDS). Their calibration approach incorporates multi-level bracketing calibration that automatically compensates for instrument drift during long analytical sequences. Their SmartTune technology provides automated mass calibration and optimization routines that maintain consistent mass accuracy without manual tuning. Thermo's advanced calibration models implement weighted regression algorithms that optimize quantitation across wide dynamic ranges, particularly beneficial for trace analysis. Their calibration technology includes intelligent peak integration algorithms that adapt to changing chromatographic conditions, ensuring consistent quantitation even with matrix interferences or minor retention time shifts.

Strengths: Exceptional mass accuracy maintenance over extended periods, sophisticated weighted regression models for wide dynamic range calibration, and automated system performance verification. Weaknesses: Complex calibration models may require significant computational resources and specialized expertise for optimal implementation.

Waters Technology Corp.

Technical Solution: Waters has developed the Quanpedia calibration library system that standardizes calibration methods across laboratories while allowing customization for specific applications. Their advanced calibration models incorporate automatic response factor tracking that identifies and compensates for detector sensitivity changes over time. Waters' TargetLynx software implements intelligent calibration curve fitting that automatically selects optimal regression models based on analyte behavior. Their calibration technology features multi-component internal standardization that compensates for matrix effects across complex sample types. Waters has also pioneered calibration transfer protocols that enable method transfer between different instrument configurations while maintaining quantitative accuracy, addressing a critical challenge in multi-laboratory studies.

Strengths: Excellent calibration library management for standardization across laboratories, sophisticated matrix effect compensation, and robust calibration transfer capabilities between instruments. Weaknesses: Some advanced calibration features require subscription to their informatics platforms and may have steep learning curves for new users.

Critical Patents and Innovations in GC-MS Calibration

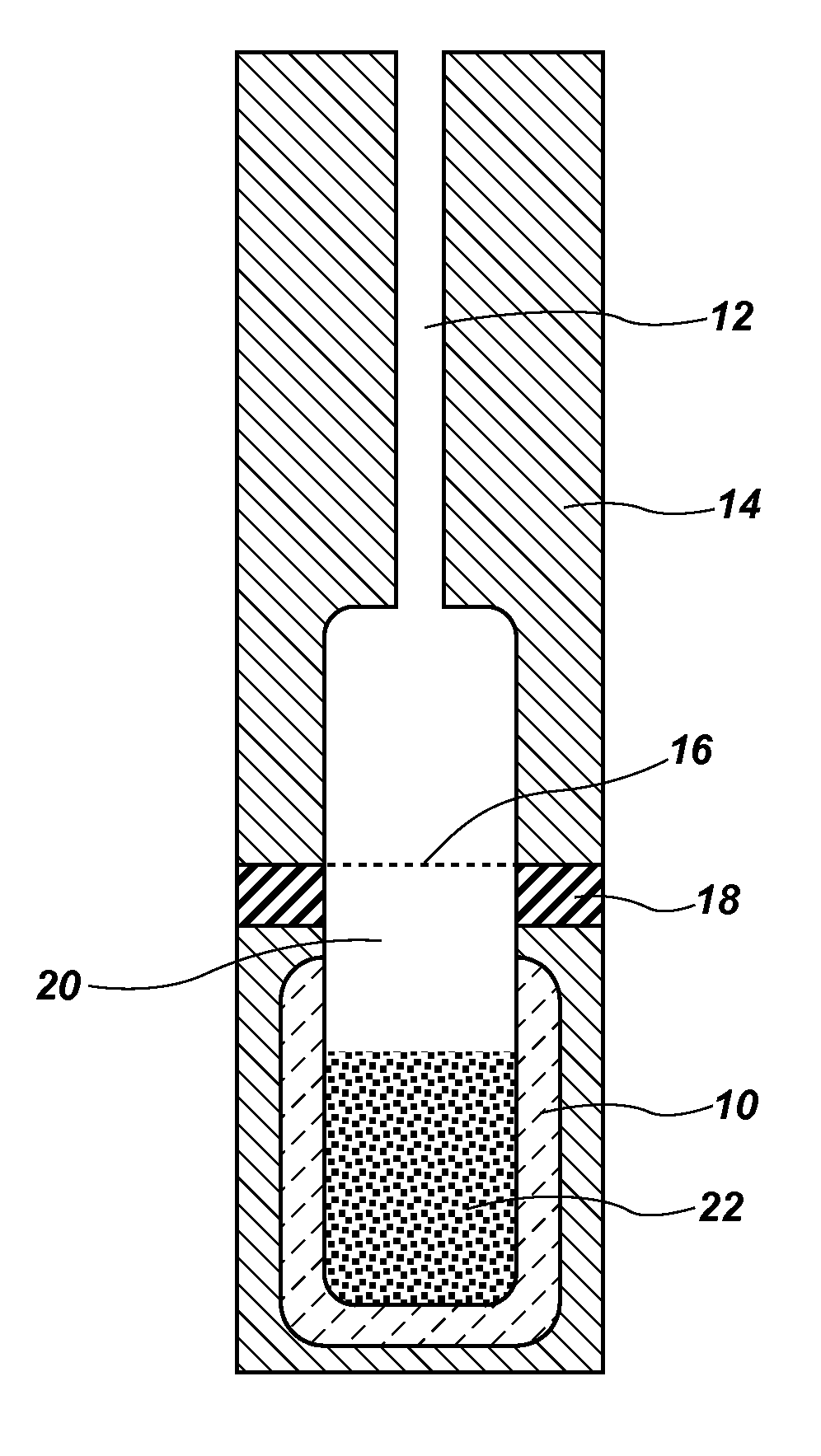

Simple equilibrium distribution sampling device for GC-ms calibration

Patent · Active · US20120227461A1

Innovation

- A system using granular PDMS particles in a calibration vial to create a thermodynamic equilibrium between analytes and headspace vapor, allowing for simultaneous calibration of GC and MS with volatile and semi-volatile organic compounds, providing a solvent-less, stable, and easy-to-use method.

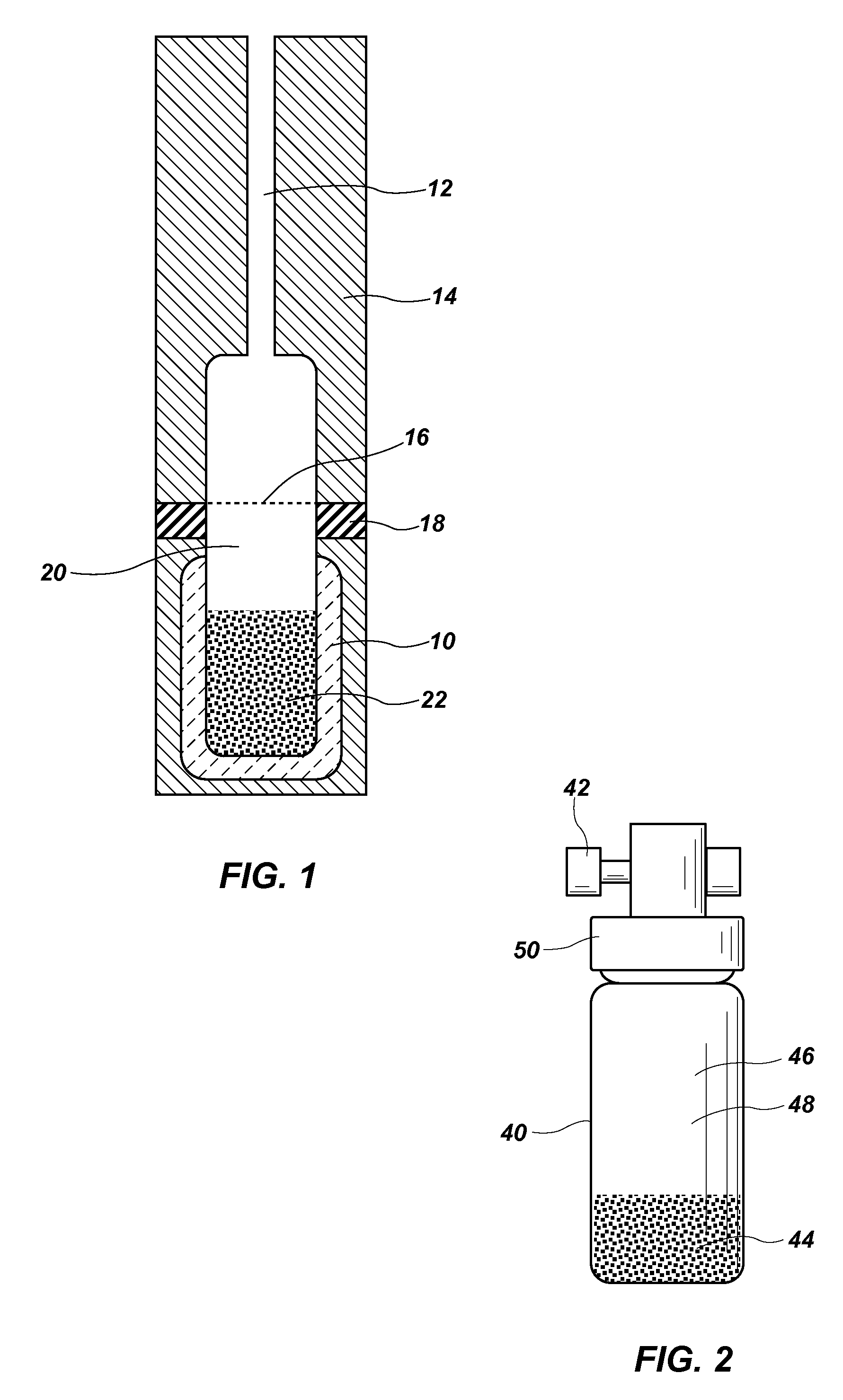

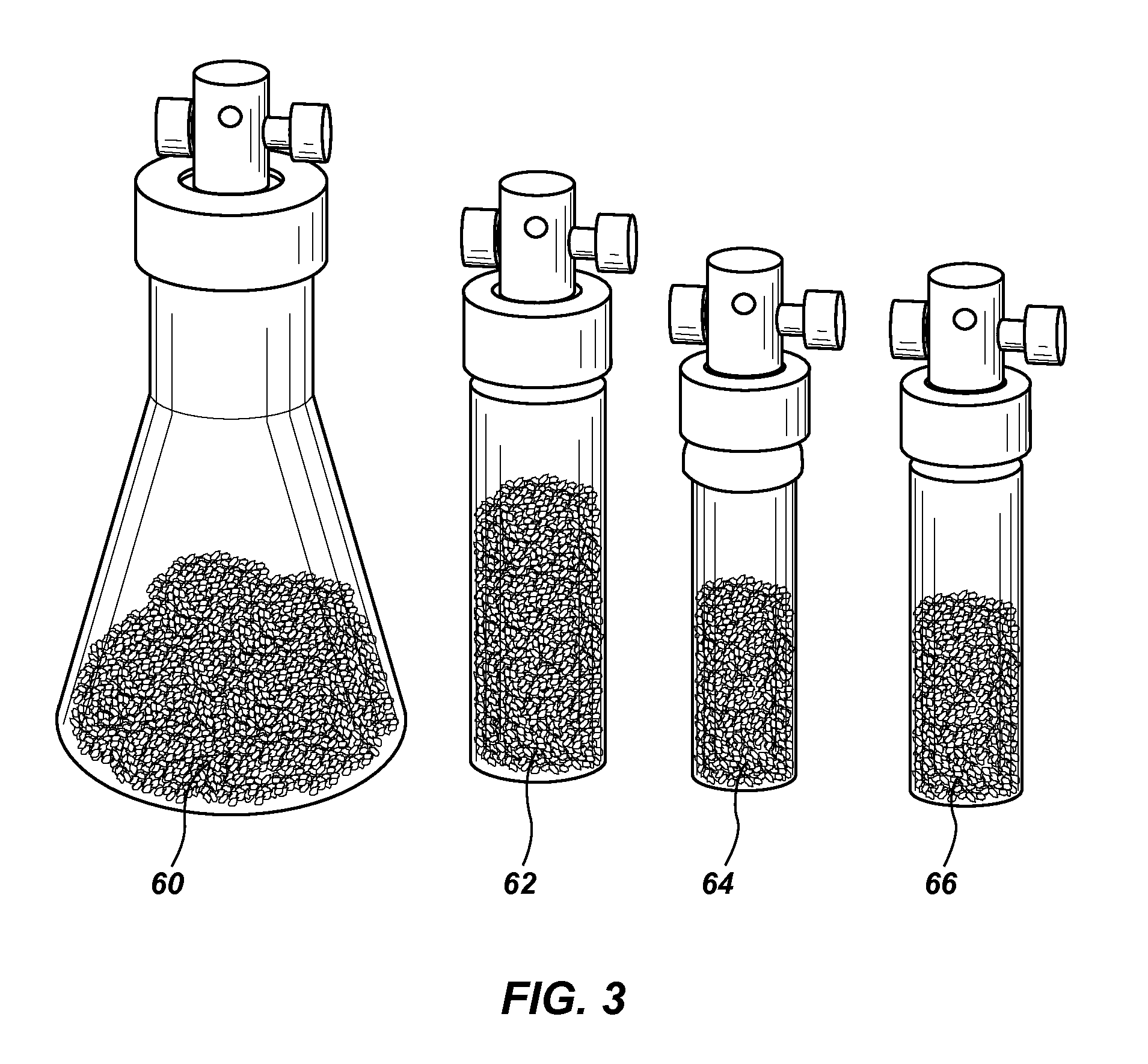

Standard Analyte Generator

Patent · Active · US20160258910A1

Innovation

- A reusable standard analyte generator vial is developed by spiking pure standards into a silicone diffusion pump fluid or polyacrylonitrile solution mixed with adsorbent particles, creating a stable and portable sorbent matrix that allows for repeatable and automated extractions using SPME or needle trap devices, enabling multiple QC extractions from a single vial.

Regulatory Compliance and Quality Assurance

Regulatory compliance and quality assurance represent critical components in the implementation of GC-MS calibration methodologies across various industries. The analytical precision offered by gas chromatography-mass spectrometry demands rigorous adherence to established regulatory frameworks to ensure data integrity and reliability. Organizations such as the FDA, EPA, and ISO have established comprehensive guidelines that dictate calibration procedures, validation protocols, and quality control measures for GC-MS systems.

Standard calibration models typically align with basic regulatory requirements, offering straightforward compliance pathways through established protocols. These models generally follow linear regression approaches that satisfy minimum regulatory thresholds in pharmaceutical, environmental, and food safety applications. However, they may fall short when addressing complex matrices or when higher precision is mandated by evolving regulatory standards.

Advanced calibration models present both opportunities and challenges from a compliance perspective. While these sophisticated approaches—including weighted regression, quadratic models, and machine learning algorithms—can deliver superior analytical performance, they often necessitate more extensive validation documentation. Regulatory bodies increasingly recognize the enhanced accuracy of advanced models but require thorough method validation and uncertainty estimation to support their implementation.

Quality assurance frameworks for GC-MS calibration must incorporate comprehensive system suitability tests, regular performance verification, and robust data management systems. The transition from standard to advanced calibration models requires careful consideration of quality metrics, including limits of detection, quantification, precision parameters, and measurement uncertainty. Organizations must develop detailed standard operating procedures that address calibration frequency, acceptance criteria, and corrective actions when deviations occur.
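As an example of how two of these quality metrics are commonly estimated, the sketch below applies the calibration-curve convention LOD = 3.3σ/S and LOQ = 10σ/S, where S is the slope and σ is taken here as the residual standard deviation of the regression. The data are hypothetical, and other accepted choices of σ (for example, the standard deviation of blank responses) will give different values.

```python
import numpy as np

# Hypothetical low-level calibration standards.
conc = np.array([0.5, 1.0, 2.0, 5.0, 10.0])
area = np.array([51.0, 103.0, 197.0, 508.0, 995.0])

slope, intercept = np.polyfit(conc, area, 1)

# Residual standard deviation of the regression (n - 2 degrees of freedom).
residuals = area - (slope * conc + intercept)
sigma = np.sqrt(np.sum(residuals ** 2) / (len(conc) - 2))

# Calibration-curve estimates of detection and quantification limits.
lod = 3.3 * sigma / slope
loq = 10.0 * sigma / slope

print(f"sigma={sigma:.2f}, LOD={lod:.3f}, LOQ={loq:.3f} (concentration units)")
```

Estimates obtained this way are normally confirmed experimentally by analyzing standards near the calculated limits.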

Documentation requirements differ significantly between standard and advanced calibration approaches. Advanced models demand more extensive records of algorithm selection justification, validation across concentration ranges, and demonstration of superiority over conventional methods. Electronic data integrity becomes particularly crucial when implementing sophisticated calibration models, with regulatory bodies emphasizing audit trails, data security, and traceability of calibration decisions.

Industry-specific considerations further complicate the regulatory landscape. Pharmaceutical applications must adhere to GMP and ICH guidelines, environmental testing follows EPA methodologies, and food safety analysis requires compliance with specific regional frameworks. The selection between standard and advanced calibration models must therefore balance analytical performance requirements against the regulatory burden associated with implementing more complex mathematical approaches.

Cost-Benefit Analysis of Calibration Models

When evaluating the implementation of advanced calibration models versus standard approaches in GC-MS systems, financial considerations play a crucial role in decision-making processes. Standard calibration models typically require lower initial investment, with costs primarily associated with basic software packages and minimal training requirements. These models generally utilize linear regression techniques that come bundled with most GC-MS systems, representing a cost-effective solution for routine analyses.

Advanced calibration models, conversely, demand significantly higher upfront expenditure. This includes specialized software licenses ranging from $5,000 to $25,000 depending on capabilities, potential hardware upgrades to support complex computational requirements, and comprehensive staff training programs that may cost between $2,000 and $10,000 per analyst. Organizations must also factor in productivity decreases during transition periods, typically lasting 2-4 weeks.

The return on investment timeline differs substantially between these approaches. Standard models offer immediate operational capability with minimal financial risk, while advanced models typically require 6-18 months to achieve positive ROI, depending on laboratory throughput and application complexity. However, long-term financial benefits of advanced models become evident through multiple pathways.

Advanced calibration models demonstrate superior quantitative accuracy, reducing costly analytical errors by 15-30% compared to standard approaches. This translates to fewer repeated analyses and decreased reagent consumption. Studies indicate that laboratories implementing advanced models experience an approximately 20% reduction in calibration frequency requirements, generating substantial savings in reference standards and analyst time.

For high-throughput environments processing over 1,000 samples monthly, advanced models can reduce operational costs by $15,000-$30,000 annually through improved efficiency and reduced maintenance requirements. Additionally, these models may help extend instrument lifetime by optimizing performance parameters and reducing unnecessary stress on system components.
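The cost and savings ranges quoted in this section can be combined into a simple payback estimate. The sketch below is a back-of-the-envelope calculation, not a financial model. It assumes that savings accrue evenly over the year, and it leaves out hardware upgrades and the productivity loss during the transition period. The number of analysts in each scenario is a hypothetical input.

```python
def upfront_cost(license_cost, training_per_analyst, analysts):
    """Software license plus training for the given number of analysts."""
    return license_cost + training_per_analyst * analysts


def payback_months(upfront, annual_savings):
    """Months until cumulative savings equal the upfront cost."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    return upfront / (annual_savings / 12.0)


# Scenarios built from the ranges in this section; analyst counts are assumed.
scenarios = {
    "low cost, high savings": (upfront_cost(5_000, 2_000, 1), 30_000),
    "midpoint": (upfront_cost(15_000, 6_000, 2), 22_500),
    "high cost, low savings": (upfront_cost(25_000, 10_000, 2), 15_000),
}

for name, (cost, savings) in scenarios.items():
    print(f"{name}: ${cost:,} upfront, {payback_months(cost, savings):.1f} months to payback")
```

The spread between the scenarios is wide, so a laboratory would need its own cost and throughput figures before relying on any single payback estimate.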

Risk assessment reveals standard models present lower financial exposure but higher potential for analytical errors, while advanced models carry implementation risks but provide greater analytical reliability. The optimal selection ultimately depends on laboratory-specific factors including sample throughput, analytical complexity, regulatory requirements, and available resources. Small facilities with limited budgets and basic analytical needs may find standard models sufficient, while research institutions and high-volume testing laboratories typically achieve superior cost-benefit outcomes with advanced calibration approaches.
