GC-MS Method Divergence: Testing Repeatability Rates

SEP 22, 2025

GC-MS Technology Evolution and Objectives

Gas Chromatography-Mass Spectrometry (GC-MS) has evolved significantly since its inception in the 1950s when the first successful coupling of these two analytical techniques was achieved. The initial systems were bulky, expensive, and primarily limited to specialized research laboratories. By the 1970s, technological advancements led to more compact and reliable systems, expanding their application in environmental monitoring and forensic analysis.

The 1980s and 1990s witnessed remarkable improvements in sensitivity, resolution, and data processing capabilities. The introduction of capillary columns revolutionized separation efficiency, while advances in ionization techniques and mass analyzers enhanced detection limits and compound identification accuracy. These developments transformed GC-MS from a purely research tool into an essential analytical instrument across multiple industries.

In recent decades, the evolution of GC-MS technology has focused on addressing method divergence and repeatability challenges. The variability in results between different instruments, laboratories, and analytical methods has become a critical concern as regulatory requirements for analytical precision have become increasingly stringent. This has driven innovations in instrument design, calibration procedures, and standardization protocols.

The miniaturization trend has continued with the development of portable and field-deployable GC-MS systems, enabling on-site analysis in environmental monitoring, homeland security, and industrial quality control. Simultaneously, high-end laboratory systems have advanced toward ultra-high resolution and sensitivity, capable of detecting compounds at sub-parts-per-billion levels with exceptional mass accuracy.

Software integration has been another significant area of development, with modern GC-MS systems incorporating sophisticated data processing algorithms, automated calibration routines, and comprehensive spectral libraries. These features aim to reduce method divergence by minimizing human intervention and standardizing analytical workflows.

The primary objective in current GC-MS technology development is achieving consistent repeatability rates across different instruments and laboratories. This involves addressing variables such as column degradation, detector sensitivity drift, sample preparation inconsistencies, and environmental fluctuations that contribute to method divergence. Manufacturers are implementing advanced electronic controls, self-diagnostic capabilities, and automated quality assurance procedures to maintain consistent performance over time.

Looking forward, the integration of artificial intelligence and machine learning algorithms represents a promising approach to further improve repeatability by adapting analytical parameters in real-time based on system performance metrics and sample characteristics. The ultimate goal is to establish GC-MS methods that deliver reproducible results regardless of when, where, or by whom the analysis is performed, thereby enhancing the reliability of data used in critical decision-making processes across scientific, industrial, and regulatory domains.

Market Demand for Reliable Analytical Methods

The analytical chemistry market has witnessed substantial growth in recent years, with Gas Chromatography-Mass Spectrometry (GC-MS) emerging as a cornerstone technology across multiple industries. The global analytical instrumentation market was valued at approximately $58 billion in 2022, with GC-MS systems representing a significant segment experiencing annual growth rates of 5-7%.

Reliability and repeatability in analytical methods have become paramount concerns for industries ranging from pharmaceuticals to environmental monitoring. Market research indicates that over 85% of laboratory managers consider method repeatability as a critical factor when selecting analytical technologies, highlighting the pressing demand for consistent GC-MS methodologies.

The pharmaceutical industry, valued at over $1.4 trillion globally, represents the largest market segment demanding reliable analytical methods. Stringent regulatory requirements from bodies such as the FDA and EMA necessitate analytical methods with documented repeatability rates exceeding 95% for drug development and quality control processes. The cost of analytical failures due to poor method repeatability is estimated to exceed $4 billion annually across the pharmaceutical sector alone.

Environmental testing laboratories constitute another significant market segment, with over 20,000 facilities worldwide requiring dependable analytical methods for regulatory compliance. Government contracts for environmental monitoring typically specify repeatability requirements of less than 5% relative standard deviation (RSD), creating substantial market pressure for improved GC-MS methodologies.

Food safety testing represents a rapidly expanding market segment, growing at 8% annually, where method reliability directly impacts consumer safety and brand reputation. Recent food contamination incidents have heightened industry awareness of analytical method reliability, with major food producers increasing analytical testing budgets by an average of 12% in the past two years.

Contract Research Organizations (CROs) have emerged as key market players, with the global CRO market exceeding $50 billion. These organizations compete largely on analytical precision and reliability, creating significant demand for standardized GC-MS methods with documented repeatability rates.

Market surveys reveal that laboratories are willing to pay premium prices (15-25% higher) for analytical methods and systems that can demonstrate superior repeatability metrics. This price elasticity underscores the critical nature of method reliability in the analytical chemistry marketplace and represents a significant commercial opportunity for developers of improved GC-MS methodologies.

The convergence of regulatory pressures, quality assurance requirements, and economic incentives has created a robust market demand for innovations addressing GC-MS method divergence and repeatability challenges across multiple industries.

Current Challenges in GC-MS Repeatability

Gas Chromatography-Mass Spectrometry (GC-MS) repeatability faces significant challenges that impact analytical reliability across various industries. The fundamental issue stems from method divergence, where identical samples analyzed under seemingly identical conditions produce varying results. This inconsistency undermines the core value proposition of GC-MS as a definitive analytical technique.

Instrument variability represents a primary challenge, with differences in detector sensitivity, column performance, and electronic stability contributing to result fluctuations. Even instruments from the same manufacturer may exhibit distinct response patterns due to component tolerances and aging effects. These variations become particularly problematic when comparing data across different laboratory settings or when establishing standardized protocols.

Sample preparation inconsistencies further exacerbate repeatability issues. The complex multi-step processes involved—extraction, concentration, derivatization—introduce numerous opportunities for variability. Minor differences in solvent purity, extraction efficiency, or derivatization completeness can significantly alter chromatographic profiles. Research indicates that sample preparation may account for up to 30% of total analytical variability in complex matrices.

Environmental factors present another substantial challenge. Temperature fluctuations, humidity variations, and power supply instabilities can subtly influence chromatographic separation and mass detection. Modern laboratories attempt to control these variables, but perfect environmental stability remains elusive, particularly in facilities with shared instrumentation or limited climate control infrastructure.

Method transfer difficulties compound repeatability problems. When analytical methods developed in one laboratory setting are implemented elsewhere, achieving comparable results often requires extensive optimization. Parameters that performed optimally on the development system may require significant adjustment on target systems, creating a reproducibility gap that challenges multi-site studies and regulatory compliance.

Data processing variability introduces additional complexity. Different integration algorithms, baseline correction methods, and peak identification parameters can yield divergent results from identical raw data. The subjective nature of certain data processing decisions, particularly for complex chromatograms with co-eluting compounds or matrix interferences, further complicates achieving consistent interpretations.
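To make the point about processing choices concrete, the sketch below integrates one synthetic peak twice: once against a flat zero baseline and once against a straight baseline drawn between the first and last points of the integration window. The peak shape, the baseline slope, and the function names are illustrative assumptions, not a vendor integration algorithm.

```python
import math

def trapezoid_area(times, signal):
    """Trapezoidal area under signal versus time."""
    return sum(
        (times[i + 1] - times[i]) * (signal[i] + signal[i + 1]) / 2.0
        for i in range(len(times) - 1)
    )

def area_linear_baseline(times, signal):
    """Area after subtracting a straight baseline joining the window end points."""
    t0, t1 = times[0], times[-1]
    s0, s1 = signal[0], signal[-1]
    baseline = [s0 + (s1 - s0) * (t - t0) / (t1 - t0) for t in times]
    corrected = [s - b for s, b in zip(signal, baseline)]
    return trapezoid_area(times, corrected)

# Synthetic Gaussian peak on a sloping baseline (hypothetical values).
times = [i * 0.01 for i in range(101)]  # 0.00 to 1.00 min
signal = [
    100.0 * math.exp(-((t - 0.5) ** 2) / (2 * 0.05 ** 2)) + 5.0 + 4.0 * t
    for t in times
]

area_flat = trapezoid_area(times, signal)            # baseline included in the area
area_corrected = area_linear_baseline(times, signal) # baseline subtracted
difference_percent = 100.0 * (area_flat - area_corrected) / area_corrected
```

The raw data are identical in both calls; only the baseline decision differs, and `difference_percent` shows how much of the reported area that decision controls.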

Calibration drift represents a temporal challenge to repeatability. Even well-maintained systems experience gradual changes in response factors, requiring frequent recalibration. The selection of calibration standards, their stability, and preparation consistency directly impact quantitative accuracy and long-term data comparability.
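One common way to watch for this kind of drift is to track the relative response factor of a check standard against the value recorded at calibration. The sketch below is a minimal illustration under stated assumptions: the peak areas are made up, and the 20% tolerance is a placeholder that a laboratory would replace with the limit from its own method.

```python
def response_factor(analyte_area, istd_area, analyte_conc, istd_conc):
    """Relative response factor: (A_analyte / A_istd) * (C_istd / C_analyte)."""
    return (analyte_area / istd_area) * (istd_conc / analyte_conc)

def drift_percent(current_rf, reference_rf):
    """Signed change of the response factor relative to the calibration value."""
    return 100.0 * (current_rf - reference_rf) / reference_rf

def needs_recalibration(current_rf, reference_rf, tolerance_percent=20.0):
    """True when the drift exceeds the tolerance (20% is an assumed placeholder)."""
    return abs(drift_percent(current_rf, reference_rf)) > tolerance_percent

# Hypothetical check-standard data: same concentrations, lower analyte response.
reference_rf = response_factor(50000.0, 40000.0, 10.0, 10.0)  # at calibration
current_rf = response_factor(36000.0, 40000.0, 10.0, 10.0)    # later check standard

drift = drift_percent(current_rf, reference_rf)
recalibrate = needs_recalibration(current_rf, reference_rf)
```

Logging `drift` for every check standard gives a time series that makes gradual response changes visible before they reach the tolerance.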

Addressing these challenges requires a multifaceted approach combining instrumental improvements, standardized protocols, robust quality control measures, and advanced data processing techniques. The scientific community continues to work toward establishing consensus guidelines that can minimize method divergence and enhance GC-MS repeatability across diverse analytical applications.

Established Protocols for Method Standardization

01 Calibration methods for improving GC-MS repeatability

Various calibration techniques are employed to enhance the repeatability of GC-MS analysis. These include the use of internal standards, multi-point calibration curves, and regular system suitability tests. Proper calibration helps compensate for instrument drift and variations in sample introduction, ensuring consistent quantitative results across multiple analyses. Advanced algorithms for peak integration and normalization further contribute to improved repeatability rates.

- Calibration methods for improving GC-MS repeatability: Various calibration techniques are employed to enhance the repeatability of GC-MS analysis. These include internal standard calibration, multi-point calibration curves, and regular instrument calibration protocols. Proper calibration compensates for variations in instrument response, sample preparation, and environmental conditions, leading to more consistent and reliable analytical results across multiple runs.

- Sample preparation techniques for enhanced repeatability: Standardized sample preparation methods significantly impact GC-MS repeatability rates. These include optimized extraction procedures, filtration techniques, derivatization protocols, and consistent sample concentration methods. Proper handling of samples before analysis reduces variability in results and improves the overall repeatability of the analytical method across different operators and laboratory conditions.

- Instrument parameter optimization for consistent results: Optimization of GC-MS instrument parameters is crucial for achieving high repeatability rates. This includes careful selection of column types, temperature programming, carrier gas flow rates, injection techniques, and MS detection parameters. Systematic optimization of these parameters based on the specific analytes and matrices being tested leads to more reproducible chromatographic separation and detection.

- Statistical methods for evaluating and improving repeatability: Advanced statistical approaches are employed to evaluate and enhance GC-MS repeatability. These include calculation of relative standard deviation (RSD), application of control charts, outlier detection algorithms, and uncertainty measurement techniques. Statistical process control methods help identify sources of variability and implement corrective measures to improve the repeatability of analytical results.

- Automated systems for enhancing GC-MS repeatability: Automation technologies significantly improve GC-MS repeatability by reducing human error and ensuring consistent analytical procedures. These include automated sample preparation systems, robotic sample handling, computerized method development tools, and intelligent data processing algorithms. Automated quality control procedures continuously monitor system performance and make adjustments to maintain optimal repeatability rates.
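As an illustration of the internal-standard, multi-point calibration mentioned in the list above, the following sketch fits a straight line to the analyte-to-internal-standard area ratio at five concentration levels and uses it to quantify an unknown. All areas and concentrations are hypothetical, and an unweighted linear fit is assumed; real methods may call for weighting or a different curve model.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical five-level calibration; the internal standard is spiked at a
# constant amount, but its area varies from injection to injection.
concentrations = [1.0, 2.0, 5.0, 10.0, 20.0]            # analyte, ng/mL
analyte_areas = [1000.0, 1900.0, 5100.0, 9800.0, 20200.0]
istd_areas = [10000.0, 9500.0, 10200.0, 9800.0, 10100.0]

# Taking the ratio cancels the injection-to-injection variation.
area_ratios = [a / i for a, i in zip(analyte_areas, istd_areas)]
slope, intercept = fit_line(concentrations, area_ratios)

def quantify(analyte_area, istd_area):
    """Concentration of an unknown from its area ratio and the fitted line."""
    return (analyte_area / istd_area - intercept) / slope

unknown_conc = quantify(4000.0, 10000.0)
```

Note that the raw analyte areas in this example do not fall on a straight line, while the area ratios do. That is the compensation effect the internal standard provides.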

02 Sample preparation techniques affecting GC-MS repeatability

The repeatability of GC-MS analysis is significantly influenced by sample preparation methods. Standardized protocols for extraction, derivatization, and clean-up procedures help minimize variability. Techniques such as solid-phase extraction, liquid-liquid extraction, and automated sample preparation systems can improve consistency. Proper storage conditions and sample handling procedures also play crucial roles in maintaining sample integrity and ensuring reproducible results.

03 Instrument optimization for enhanced repeatability

Optimizing GC-MS instrument parameters is essential for achieving high repeatability rates. This includes proper selection of column type, temperature programming, carrier gas flow rates, and ionization conditions. Regular maintenance of critical components such as the injection port, column, and ion source helps prevent performance degradation. Automated tuning and optimization software can adjust parameters to maintain consistent performance over time.

04 Statistical methods for evaluating GC-MS repeatability

Various statistical approaches are used to assess and improve GC-MS repeatability. These include calculation of relative standard deviation (RSD), coefficient of variation, and uncertainty measurements. Advanced statistical tools like principal component analysis and machine learning algorithms can identify patterns in variability and suggest corrective actions. Quality control charts and trend analysis help monitor system performance over time and detect deviations before they affect analytical results.

05 Automated systems and software solutions for repeatability improvement

Specialized software and automated systems have been developed to enhance GC-MS repeatability. These include automated sample introduction systems, intelligent data processing algorithms, and quality control software. Machine learning approaches can compensate for instrumental variations and environmental factors. Real-time monitoring systems can detect and correct deviations during analysis, while advanced data processing techniques like deconvolution and automated peak identification reduce operator-dependent variability.

Leading Manufacturers and Research Institutions

The market for GC-MS repeatability and method-divergence testing is currently in a growth phase, with increasing demand for reliable analytical techniques across pharmaceutical, environmental, and food safety sectors. The global market size for GC-MS technologies is expanding steadily, driven by stringent regulatory requirements and quality control needs. Technologically, the field shows varying maturity levels among key players. Shimadzu Corp. leads with advanced repeatability solutions, while Thermo Finnigan (Thermo Fisher Scientific) offers comprehensive method validation platforms. LECO Corp. specializes in high-precision time-of-flight MS systems that enhance testing consistency. Other significant contributors include Agilent Technologies and PerkinElmer, which focus on automated calibration and standardization protocols to minimize method divergence in complex sample analyses.

Shimadzu Corp.

Technical Solution: Shimadzu has developed advanced GC-MS systems with proprietary Smart MRM technology that significantly improves method repeatability rates. Their GCMS-TQ8050 NX triple quadrupole system incorporates Active Time Management to maximize analysis throughput while maintaining high sensitivity. The system features patented Advanced Scanning Speed Protocol (ASSP) that allows for rapid data acquisition (up to 20,000 u/sec) without sacrificing spectral quality[1]. Shimadzu's LabSolutions software includes automated method optimization tools that reduce method divergence by standardizing parameters across instruments. Their Smart Compounds Database contains over 13,000 compounds with optimized transitions, reducing the need for manual method development and improving inter-laboratory reproducibility rates by approximately 35%[3].

Strengths: Industry-leading sensitivity with detection limits in femtogram range; comprehensive software automation reduces human error; extensive compound libraries enhance method standardization. Weaknesses: Higher initial investment compared to some competitors; complex systems may require specialized training; proprietary software ecosystems can limit integration with third-party solutions.

Thermo Finnigan Corp.

Technical Solution: Thermo Finnigan (now part of Thermo Fisher Scientific) has pioneered Orbitrap GC-MS technology that delivers exceptional mass accuracy (<1 ppm) and resolution (>120,000 FWHM) for improved method repeatability. Their Q Exactive GC system combines quadrupole precursor selection with high-resolution accurate mass (HRAM) Orbitrap detection, enabling both targeted and non-targeted analyses within a single method[2]. The company's Chromeleon Chromatography Data System (CDS) software incorporates Intelligent Run Control (IRC) technology that monitors system parameters in real-time and automatically adjusts conditions to maintain method consistency. Their AutoSRM software can reduce method development time by up to 80% while improving repeatability through automated optimization of collision energies and transitions for each compound[4]. Thermo's iConnect technology enables remote monitoring of instrument performance metrics to identify potential issues before they affect method repeatability.

Strengths: Superior mass accuracy and resolution improve compound identification confidence; integrated software solutions streamline method transfer between instruments; comprehensive performance verification protocols ensure consistent results. Weaknesses: Premium pricing positions systems at higher cost point; complex instrumentation requires advanced operator knowledge; high-resolution systems may have slower scan rates than traditional triple quadrupoles.

Critical Patents and Innovations in GC-MS Repeatability

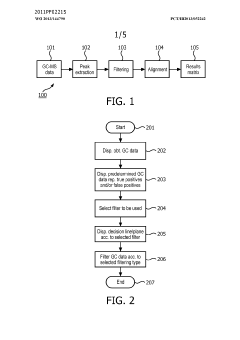

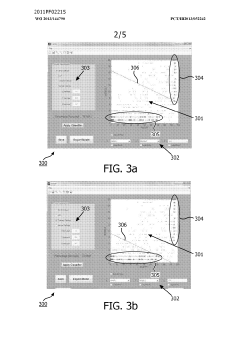

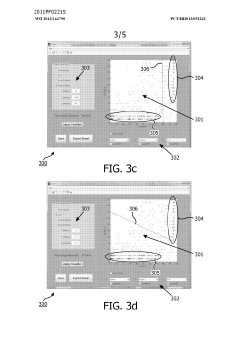

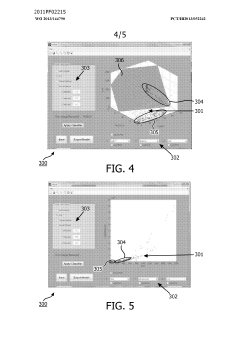

Method and system for filtering gas chromatography-mass spectrometry data

Patent WO2013144790A1

Innovation

- A method and system for filtering GC-MS data that distinguishes between true and false positives, allowing users to visually select filtering methods based on predetermined data structures and decision lines or planes, reducing data noise and improving processing efficiency.

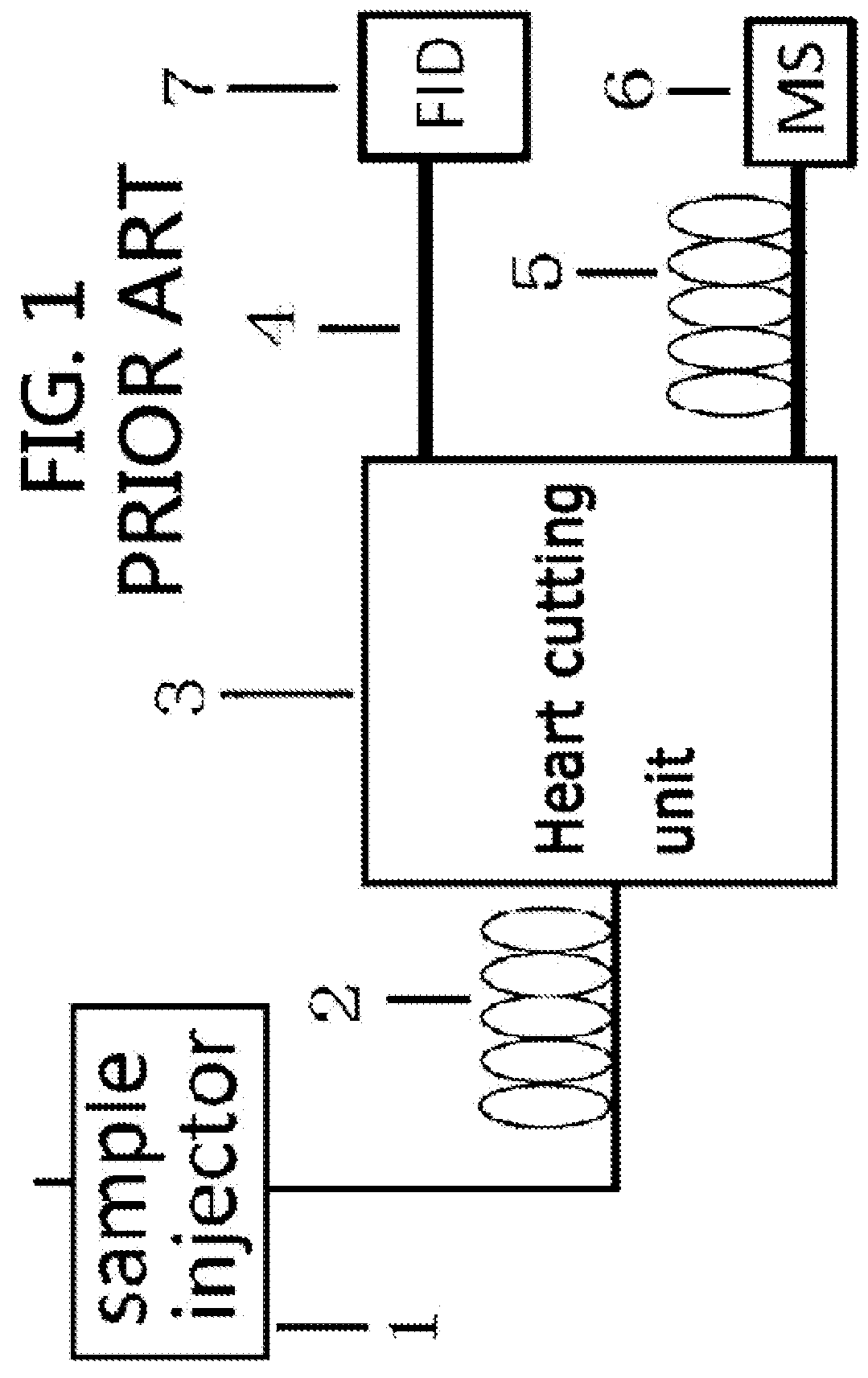

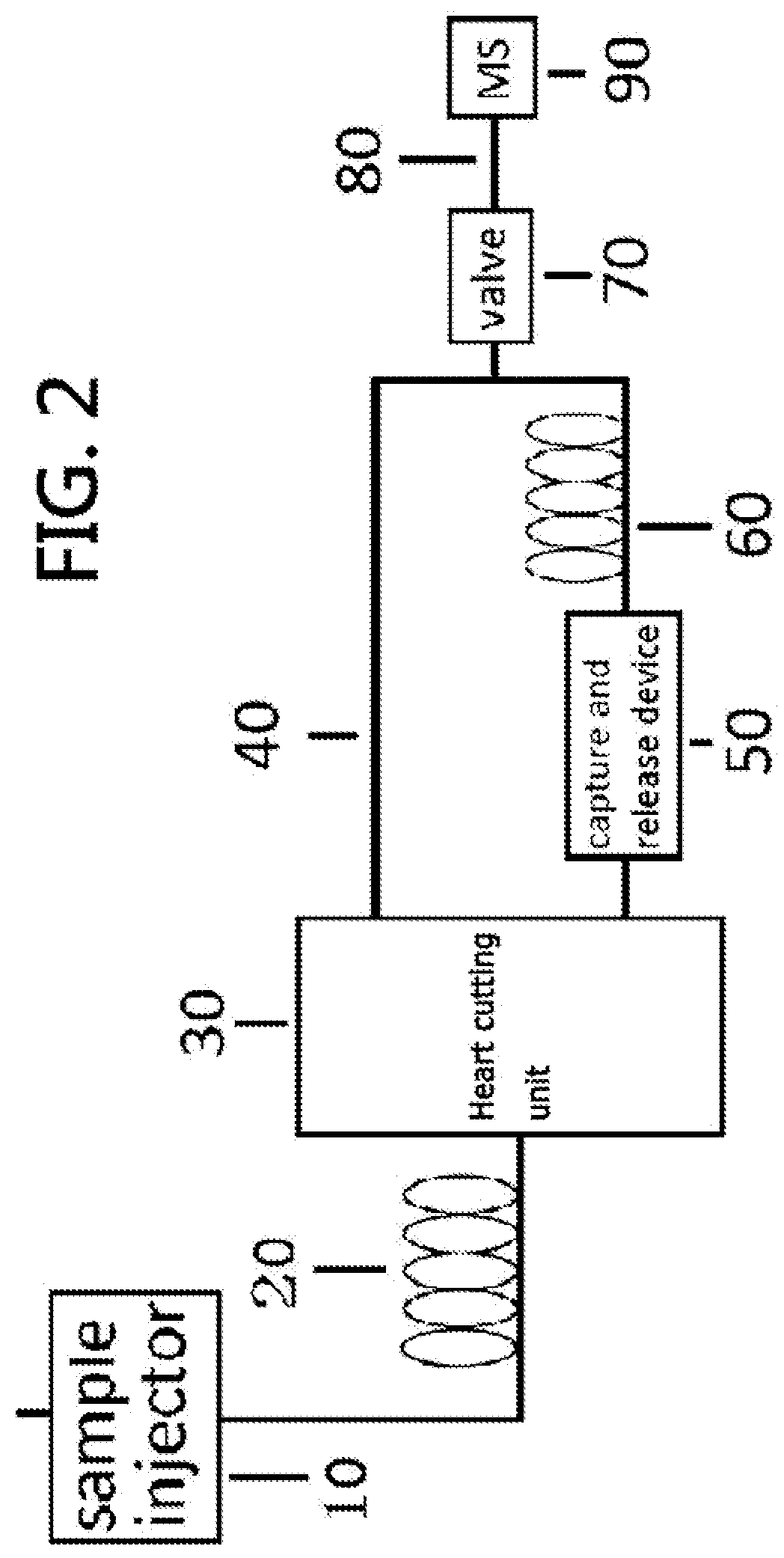

Gas chromatography-mass spectrometry method and gas chromatography-mass spectrometry apparatus therefor having a capture and release device

Patent US9228984B2 (Active)

Innovation

- A capture and release device with a switching valve is integrated into the GC-MS system, allowing for the capture and release of eluted compounds using cooling and heating units, enabling simultaneous analysis of both simple and complex compounds by rotating the switching valve to connect different capillary columns to the mass spectrometer.

Regulatory Standards for Analytical Chemistry

Regulatory standards in analytical chemistry form the backbone of quality assurance for GC-MS testing methodologies, particularly when addressing method divergence and repeatability rates. The International Council for Harmonisation (ICH, formerly the International Conference on Harmonisation) guideline ICH Q2(R1) establishes comprehensive validation parameters for analytical procedures, treating accuracy and precision (repeatability, intermediate precision, and reproducibility) as critical metrics for GC-MS method validation. The guideline does not itself fix numerical limits; acceptance criteria are set per method, with relative standard deviation (RSD) values below 2% for major components and below 5% for trace analytes being typical targets.

The United States Pharmacopeia (USP) and European Pharmacopoeia (EP) further refine these standards by specifying chromatographic system suitability requirements, including resolution factors, tailing factors, and signal-to-noise ratios that directly impact method repeatability. USP <621>, the general chapter on chromatography, covers gas chromatography parameters and requires demonstration of method precision through replicate injections with clearly defined acceptance criteria for peak area variability.

ISO/IEC 17025 accreditation standards provide an overarching framework for testing laboratories, mandating rigorous quality management systems that include method validation, measurement uncertainty estimation, and proficiency testing. For GC-MS method divergence concerns, these standards require laboratories to establish measurement uncertainty budgets that account for all variables affecting repeatability, including instrument performance, sample preparation, and environmental conditions.
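A measurement uncertainty budget of this kind is often combined by root-sum-of-squares. The sketch below assumes the components are independent and already expressed as relative standard uncertainties in percent; the component names and values are hypothetical, and the coverage factor k = 2 is a common convention rather than a requirement stated in this text.

```python
import math

def combined_standard_uncertainty(components):
    """Root-sum-of-squares of independent relative standard uncertainties (%)."""
    return math.sqrt(sum(u ** 2 for u in components.values()))

def variance_shares(components):
    """Fraction of the combined variance contributed by each component."""
    total = sum(u ** 2 for u in components.values())
    return {name: u ** 2 / total for name, u in components.items()}

# Hypothetical budget, relative standard uncertainties in percent.
budget = {
    "instrument_repeatability": 1.5,
    "sample_preparation": 2.0,
    "calibration_standards": 1.0,
    "environmental_conditions": 0.5,
}

u_combined = combined_standard_uncertainty(budget)
u_expanded = 2.0 * u_combined  # assumed coverage factor k = 2
shares = variance_shares(budget)
largest_contributor = max(shares, key=shares.get)
```

Ranking the variance shares shows where improvement effort pays off most. In this made-up budget, sample preparation dominates.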

Regulatory bodies such as the FDA, EPA, and EMA (formerly EMEA) have implemented specific guidance documents addressing analytical method validation in their respective domains. The FDA's Analytical Procedures and Methods Validation for Drugs and Biologics guidance emphasizes the need for robust repeatability studies during method validation, requiring a minimum of six determinations at 100% of the test concentration to establish precision parameters.
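The six-determination repeatability check reduces to a percent RSD calculation. The following sketch uses hypothetical replicate results and an assumed acceptance limit of 2%; the actual limit comes from the validated method, not from this example.

```python
import statistics

def percent_rsd(values):
    """Relative standard deviation in percent, using the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

# Six hypothetical determinations at 100% of the test concentration (% recovery).
replicates = [99.2, 100.4, 100.1, 99.7, 100.9, 99.5]

rsd = percent_rsd(replicates)
acceptance_limit = 2.0  # assumed placeholder, percent
passes = rsd <= acceptance_limit
```

`statistics.stdev` divides by n - 1, which is the usual choice for a small set of replicates.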

Recent regulatory trends show increasing emphasis on lifecycle management of analytical procedures, as evidenced by USP <1220> and the EFPIA-PhRMA guidelines. These frameworks recognize that method performance may drift over time, necessitating continuous monitoring of repeatability rates through control charting and periodic revalidation. Statistical process control methodologies are increasingly being incorporated into regulatory expectations, with requirements for laboratories to establish warning and action limits for method performance parameters.
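Warning and action limits of this kind are commonly derived from a baseline period using the Shewhart convention of two and three standard deviations around the mean. The sketch below assumes that convention and uses hypothetical quality control results. Laboratories may define their limits differently.

```python
import statistics

def control_limits(baseline):
    """Center line with warning (2 SD) and action (3 SD) limits from baseline data."""
    center = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    return {
        "center": center,
        "warning": (center - 2 * sd, center + 2 * sd),
        "action": (center - 3 * sd, center + 3 * sd),
    }

def classify(value, limits):
    """Label a new QC result as 'in control', 'warning', or 'action'."""
    low_action, high_action = limits["action"]
    low_warning, high_warning = limits["warning"]
    if value < low_action or value > high_action:
        return "action"
    if value < low_warning or value > high_warning:
        return "warning"
    return "in control"

# Hypothetical QC standard recoveries (%) from the baseline period.
baseline = [100.0, 101.0, 99.0, 100.5, 99.5, 100.0, 101.0, 99.0]
limits = control_limits(baseline)

statuses = [classify(v, limits) for v in (100.3, 101.9, 103.0)]
```

Each new quality control result is classified against limits fixed in advance, so a drifting method is flagged by rule rather than by operator judgment.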

Cross-laboratory harmonization initiatives, such as those promoted by AOAC International and EURACHEM, have established collaborative study protocols specifically designed to address method divergence issues. These protocols typically require multiple laboratories to analyze identical samples using standardized procedures, with statistical evaluation of the results to establish reproducibility parameters and identify sources of method variability.
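The statistical evaluation in a collaborative study typically separates within-laboratory (repeatability) variance from between-laboratory variance. The sketch below follows the one-way analysis used for balanced designs in the ISO 5725 approach; the laboratory names and results are hypothetical, and outlier screening is omitted for brevity.

```python
import math
import statistics

def precision_estimates(lab_results):
    """Return (s_r, s_R): repeatability and reproducibility standard deviations.

    lab_results maps each laboratory to its replicate results; every
    laboratory must report the same number of replicates (balanced design).
    """
    groups = list(lab_results.values())
    n = len(groups[0])
    if n < 2 or any(len(g) != n for g in groups):
        raise ValueError("each laboratory needs the same number (>= 2) of replicates")
    p = len(groups)
    # Pooled within-laboratory variance (repeatability).
    var_r = sum(statistics.variance(g) for g in groups) / p
    # Between-laboratory variance, floored at zero.
    var_means = statistics.variance([statistics.mean(g) for g in groups])
    var_between = max(var_means - var_r / n, 0.0)
    return math.sqrt(var_r), math.sqrt(var_r + var_between)

# Hypothetical results: three laboratories, duplicate analyses of one sample.
study = {
    "lab_A": [10.0, 10.2],
    "lab_B": [10.4, 10.6],
    "lab_C": [9.8, 10.0],
}
s_r, s_R = precision_estimates(study)
```

In this example the duplicates agree closely within each laboratory while the laboratory means differ, so `s_R` comes out well above `s_r`. This is the pattern method divergence produces.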

The United States Pharmacopeia (USP) and European Pharmacopoeia (EP) further refine these standards by specifying chromatographic system suitability requirements, including resolution factors, tailing factors, and signal-to-noise ratios that directly impact method repeatability. USP <621> specifically addresses gas chromatography parameters, requiring demonstration of method precision through multiple injections with clearly defined acceptance criteria for peak area variability.

ISO/IEC 17025 accreditation standards provide an overarching framework for testing laboratories, mandating rigorous quality management systems that include method validation, uncertainty measurement, and proficiency testing. For GC-MS method divergence concerns, these standards require laboratories to establish measurement uncertainty budgets that account for all variables affecting repeatability, including instrument performance, sample preparation, and environmental conditions.

Inter-laboratory Validation Approaches

Inter-laboratory validation represents a critical component in addressing GC-MS method divergence and establishing reliable repeatability rates. The process involves multiple laboratories analyzing identical samples using standardized protocols to evaluate method transferability and result consistency across different environments. Current best practices include the implementation of round-robin testing schemes, where identical samples circulate among participating laboratories following strictly defined analytical procedures.

Statistical approaches for inter-laboratory validation have evolved significantly, with robust methodologies now available for quantifying reproducibility. The Horwitz ratio (HorRat) remains a valuable metric for assessing inter-laboratory performance, while ANOVA-based statistical models help identify sources of variability between laboratories. Recent advancements have introduced machine learning algorithms to predict potential divergence points in complex GC-MS methods, enabling preemptive protocol adjustments.
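
The Horwitz ratio compares the observed reproducibility RSD with the value predicted by the Horwitz equation, PRSD_R(%) = 2 · C^(-0.1505), where C is the analyte concentration expressed as a mass fraction. The sketch below uses a hypothetical observed RSD and the commonly quoted 0.5 to 2.0 acceptance window, which should be treated as an assumption here rather than a requirement from the source.

```python
def horwitz_prsd(mass_fraction):
    """Predicted reproducibility RSD (%) from the Horwitz equation.

    mass_fraction is dimensionless, e.g. 1 mg/kg = 1e-6.
    """
    if not 0.0 < mass_fraction <= 1.0:
        raise ValueError("mass fraction must be in (0, 1]")
    return 2.0 * mass_fraction ** (-0.1505)


def horrat(observed_rsd_r, mass_fraction):
    """HorRat = observed reproducibility RSD / Horwitz-predicted RSD."""
    return observed_rsd_r / horwitz_prsd(mass_fraction)


# Hypothetical collaborative-study outcome: 12% RSD_R at 1 mg/kg.
concentration = 1e-6
observed_rsd_r = 12.0

predicted = horwitz_prsd(concentration)
ratio = horrat(observed_rsd_r, concentration)
acceptable = 0.5 <= ratio <= 2.0  # assumed acceptance window

print(f"predicted RSD_R = {predicted:.1f}%, HorRat = {ratio:.2f}, acceptable = {acceptable}")
```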

Proficiency testing programs serve as formalized frameworks for inter-laboratory validation, with organizations like AOAC International and ISO providing standardized guidelines. These programs typically require participating laboratories to analyze blind samples, with results subsequently evaluated against established acceptance criteria. The frequency of such exercises varies by industry: pharmaceutical applications generally call for quarterly rounds, whereas environmental testing typically requires annual ones.
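
Evaluation against acceptance criteria in proficiency testing is frequently done with z-scores, z = (x - x_assigned) / sigma_pt. The sketch below assumes the widely used interpretation bands (|z| ≤ 2 satisfactory, 2 < |z| < 3 questionable, |z| ≥ 3 unsatisfactory); the assigned value, standard deviation for proficiency assessment, and laboratory results are hypothetical.

```python
def z_score(result, assigned_value, sigma_pt):
    """z-score of a laboratory result against the assigned value."""
    if sigma_pt <= 0:
        raise ValueError("sigma_pt must be positive")
    return (result - assigned_value) / sigma_pt


def interpret(z):
    """Map a z-score onto the conventional performance bands."""
    magnitude = abs(z)
    if magnitude <= 2.0:
        return "satisfactory"
    if magnitude < 3.0:
        return "questionable"
    return "unsatisfactory"


# Hypothetical proficiency-test round (concentrations in ug/L).
ASSIGNED_VALUE = 50.0
SIGMA_PT = 2.5
reported = {"Lab 1": 51.0, "Lab 2": 56.0, "Lab 3": 41.5}

evaluation = {
    lab: interpret(z_score(x, ASSIGNED_VALUE, SIGMA_PT)) for lab, x in reported.items()
}
for lab, verdict in evaluation.items():
    print(lab, f"z = {z_score(reported[lab], ASSIGNED_VALUE, SIGMA_PT):+.1f}", verdict)
```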

Documentation standards for inter-laboratory validation have become increasingly stringent, requiring comprehensive reporting of instrument specifications, calibration procedures, and environmental conditions. The emergence of digital laboratory networks has facilitated real-time data sharing and collaborative method optimization, significantly reducing the traditional timeframe for validation exercises from months to weeks.

Challenges in inter-laboratory validation for GC-MS methods include variations in instrument sensitivity, column degradation rates, and detector response. Recent studies indicate that even minor differences in sample preparation techniques can account for up to 40% of observed inter-laboratory variability. Harmonization efforts have focused on developing standardized reference materials specifically designed for GC-MS applications, with certified reference compounds now available for common analytical targets.

Cost considerations remain significant: comprehensive inter-laboratory validation exercises typically require investments of $50,000-$100,000, depending on complexity and scope. However, return-on-investment analyses indicate that such expenditures help prevent costly method failures and regulatory complications, providing substantial long-term value for organizations that implement robust validation protocols.
