How to Design Energy-Efficient Spiking Networks for Always-On Keyword Spotting

AUG 20, 2025 | 9 MIN READ

Spiking Networks for KWS: Background and Objectives

Spiking Neural Networks (SNNs) have emerged as a promising paradigm for energy-efficient computing, drawing inspiration from the biological mechanisms of the human brain. In the context of Always-On Keyword Spotting (KWS), SNNs offer a unique approach to address the challenges of continuous, low-power audio processing. The evolution of this technology can be traced back to the early research on artificial neural networks, with a significant shift towards biologically plausible models in recent years.

The primary objective of implementing SNNs for KWS is to develop systems capable of continuously monitoring audio streams for specific keywords while consuming minimal energy. This aligns with the growing demand for edge computing solutions in IoT devices, smart home appliances, and wearable technology. The goal is to achieve high accuracy in keyword detection while maintaining ultra-low power consumption, enabling devices to operate for extended periods without frequent recharging.

Historically, conventional deep learning approaches have dominated the field of speech recognition and keyword spotting. However, these methods often require substantial computational resources and energy, making them less suitable for always-on applications. The shift towards SNNs represents a paradigm change, focusing on event-driven computation and sparse activations to significantly reduce power consumption.

The development of SNNs for KWS is driven by several key technological trends. First, the advancement in neuromorphic hardware has provided a platform for efficient implementation of spiking networks. Second, the increasing need for privacy-preserving on-device processing has pushed for local, energy-efficient solutions. Lastly, the growing market for smart devices with voice control capabilities has created a demand for always-on, low-power audio processing systems.

Current research in this field aims to address several critical challenges. These include developing efficient training algorithms for SNNs, optimizing network architectures for keyword spotting tasks, and bridging the gap between SNN performance and traditional deep learning models. Additionally, there is a focus on creating robust systems capable of operating in noisy environments and adapting to different user accents and languages.

The expected outcomes of this technological pursuit include the development of highly efficient KWS systems that can be integrated into a wide range of devices with minimal impact on battery life. This would enable more widespread adoption of voice-controlled interfaces in everyday objects, potentially transforming how we interact with technology in our daily lives. Furthermore, advancements in this area are likely to contribute to the broader field of neuromorphic computing, potentially leading to breakthroughs in other areas of artificial intelligence and machine learning.

Market Analysis for Always-On KWS Solutions

The market for Always-On Keyword Spotting (KWS) solutions has experienced significant growth in recent years, driven by the increasing demand for voice-activated devices and smart home technologies. This trend is expected to continue as consumers seek more convenient and hands-free ways to interact with their devices.

The global market for voice recognition technologies, which includes KWS solutions, is projected to expand rapidly. Key factors contributing to this growth include the rising adoption of smart speakers, voice-controlled IoT devices, and voice-enabled virtual assistants in smartphones and other consumer electronics.

In the consumer electronics sector, Always-On KWS solutions are becoming increasingly prevalent in smart speakers, smartphones, smart TVs, and wearable devices. These technologies enable devices to continuously listen for specific wake words or commands, providing a seamless user experience without the need for physical interaction.

The automotive industry is another significant market for Always-On KWS solutions. Voice-activated infotainment systems and in-car assistants are becoming standard features in modern vehicles, enhancing driver safety and convenience. This trend is expected to accelerate with the development of autonomous vehicles, where voice control will play a crucial role in human-machine interaction.

Enterprise and industrial applications are also driving demand for Always-On KWS solutions. Voice-controlled systems are being integrated into smart office environments, manufacturing facilities, and healthcare settings to improve productivity and streamline operations.

However, the market faces challenges related to power consumption and privacy concerns. Energy-efficient solutions are crucial for battery-powered devices and always-on applications. Consumers and regulators are increasingly concerned about the privacy implications of devices that are constantly listening, necessitating robust security measures and transparent data handling practices.

The competitive landscape is characterized by a mix of established tech giants and innovative startups. Major players are investing heavily in research and development to improve the accuracy and efficiency of their KWS solutions and to reduce their energy consumption. Emerging companies are focusing on niche applications and novel approaches to differentiate themselves in the market.

As the technology matures, we can expect to see further integration of Always-On KWS solutions into everyday devices and environments. The development of more energy-efficient and privacy-conscious solutions will be critical in addressing current market challenges and unlocking new opportunities across various industries.

Current Challenges in Energy-Efficient SNN Design

The design of energy-efficient Spiking Neural Networks (SNNs) for always-on keyword spotting faces several significant challenges. One of the primary obstacles is the inherent trade-off between energy efficiency and performance. Because SNNs aim to mimic the energy efficiency of biological neural networks, they must keep keyword detection accuracy high while minimizing power consumption.

A major challenge lies in the optimization of network architecture. Traditional deep learning models often rely on complex, computationally intensive structures that are not suitable for low-power, always-on devices. Designing an SNN topology that balances the number of neurons, layers, and connections while maintaining high accuracy is a complex task that requires careful consideration of the specific keyword spotting requirements.

Another significant hurdle is the development of efficient spike encoding and decoding mechanisms. Converting input audio signals into spike trains and then interpreting the output spikes for keyword detection must be done with minimal energy expenditure. This process needs to be both accurate and computationally lightweight to ensure real-time performance on resource-constrained devices.
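
As a minimal sketch of one possible encoding scheme, assume a delta (threshold-crossing) encoder: it emits an ON or OFF spike whenever the signal has moved at least one fixed step away from the last encoded level, so a steady input produces no spikes at all. The names `delta_encode` and `delta_decode` and the `threshold` parameter are illustrative assumptions, not something the article specifies.

```python
import numpy as np

def delta_encode(signal, threshold):
    """Return (on_spikes, off_spikes) boolean arrays for a 1-D signal."""
    signal = np.asarray(signal, dtype=float)
    on = np.zeros(signal.shape, dtype=bool)
    off = np.zeros(signal.shape, dtype=bool)
    level = signal[0]  # reference level tracked by the encoder
    for t in range(1, len(signal)):
        diff = signal[t] - level
        if diff >= threshold:        # signal rose by at least one step
            on[t] = True
            level += threshold
        elif diff <= -threshold:     # signal fell by at least one step
            off[t] = True
            level -= threshold
    return on, off

def delta_decode(on, off, threshold, start=0.0):
    """Rebuild a staircase approximation of the signal from the spikes."""
    steps = threshold * (on.astype(float) - off.astype(float))
    return start + np.cumsum(steps)
```

A larger `threshold` yields fewer spikes, and therefore less downstream work, at the cost of a coarser reconstruction.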

The choice of learning algorithms for SNNs presents another challenge. While backpropagation has been highly successful in traditional neural networks, its direct application to SNNs is not straightforward due to the discrete nature of spike events. Developing energy-efficient learning algorithms that can effectively train SNNs for keyword spotting tasks remains an active area of research.
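
One common workaround for the non-differentiable spike is a surrogate gradient: the forward pass keeps the hard threshold, while the backward pass substitutes a smooth stand-in for its derivative. The sketch below is a single-layer, single-time-step illustration under assumed names (`spike`, `surrogate_grad`, `grad_step`) and an assumed fast-sigmoid surrogate with `slope=5.0`; it is not a full training loop.

```python
import numpy as np

def spike(v, v_th=1.0):
    """Forward pass: hard threshold on the membrane potential."""
    return (v >= v_th).astype(float)

def surrogate_grad(v, v_th=1.0, slope=5.0):
    """Backward pass: derivative of a fast sigmoid, used in place of the
    step function's derivative (which is zero almost everywhere)."""
    return 1.0 / (1.0 + slope * np.abs(v - v_th)) ** 2

def grad_step(w, x, target, v_th=1.0, lr=0.1):
    """One gradient step for a single spiking layer with squared-error loss."""
    v = w @ x                                   # membrane potential (no leak, one step)
    s = spike(v, v_th)                          # binary output spikes
    dl_ds = s - target                          # gradient of 0.5 * ||s - target||^2
    dl_dv = dl_ds * surrogate_grad(v, v_th)     # surrogate replaces ds/dv
    return w - lr * np.outer(dl_dv, x)
```

Because the surrogate is nonzero away from the threshold, a neuron that failed to fire still receives a gradient that pushes its weights toward firing.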

Hardware implementation of SNNs for always-on keyword spotting poses additional challenges. Designing specialized neuromorphic hardware that can efficiently process spike-based computations while consuming minimal power is crucial. This involves optimizing memory access patterns, reducing data movement, and implementing energy-efficient spike generation and propagation mechanisms.

The dynamic nature of speech and the variability in acoustic environments further complicate the design of energy-efficient SNNs for keyword spotting. The network must be robust enough to handle different speakers, accents, and background noise conditions while maintaining low power consumption. This requires careful consideration of feature extraction techniques and network adaptability.

Lastly, quantization and pruning introduce their own trade-off. Reducing the precision of weights and activations, or removing weights altogether, can greatly improve energy efficiency, but it may also degrade detection accuracy. Finding the right balance between model size, computational complexity, and performance is a critical challenge in designing energy-efficient SNNs for always-on keyword spotting applications.
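
To make the quantization and pruning trade-off concrete, here is a minimal sketch assuming uniform symmetric weight quantization and global magnitude pruning. Both function names and the chosen bit width and sparsity are illustrative assumptions; the article does not prescribe a particular scheme.

```python
import numpy as np

def quantize_symmetric(w, bits):
    """Quantize weights to signed integers in [-qmax, qmax]; returns (q, scale)."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = np.max(np.abs(w))
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = np.clip(np.round(w / scale), -qmax, qmax).astype(np.int32)
    return q, scale

def prune_by_magnitude(w, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude.

    Weights tied with the cutoff value are removed as well.
    """
    k = int(sparsity * w.size)
    if k == 0:
        return w.copy()
    cutoff = np.sort(np.abs(w), axis=None)[k - 1]
    return np.where(np.abs(w) <= cutoff, 0.0, w)

w = np.array([[0.5, -1.0], [0.1, 0.25]])
q, scale = quantize_symmetric(w, bits=4)
max_error = np.max(np.abs(q * scale - w))   # bounded by half a quantization step
pruned = prune_by_magnitude(w, sparsity=0.5)
```

Comparing `max_error` and task accuracy across several bit widths and sparsity levels is one way to locate the point where further compression starts to cost accuracy.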

Existing Energy-Efficient SNN Solutions for KWS

01 Energy-efficient spiking neural network architectures

Developing specialized hardware architectures for spiking neural networks that optimize energy consumption. These designs focus on reducing power usage while maintaining computational efficiency, often through novel circuit designs or neuromorphic computing principles.

02 Sparse coding and event-driven processing

Implementing sparse coding techniques and event-driven processing in spiking networks to reduce computational overhead and energy consumption. This approach leverages the temporal nature of spike-based information processing to minimize unnecessary computations, allowing more efficient information encoding and lower power requirements.

03 Adaptive learning algorithms for energy efficiency

Developing adaptive learning algorithms that optimize the energy efficiency of spiking networks during training and inference. These algorithms dynamically adjust network parameters to minimize energy consumption while maintaining performance.

04 Low-power synaptic devices and memristors

Incorporating low-power synaptic devices and memristors into spiking neural network hardware to reduce energy consumption. These components mimic biological synapses and can store and process information with minimal energy use. Related low-power neuromorphic implementations rely on advanced semiconductor technologies and circuit design techniques to mimic biological neural systems closely.

05 Optimized spike encoding and compression techniques

Developing efficient spike encoding and compression techniques to reduce data transfer and storage requirements in spiking networks. These methods aim to minimize the energy consumed in communication between neurons and in memory access operations, for example by optimizing spike timing and reducing the number of spikes needed for computation.
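
The saving from sparse, event-driven processing can be illustrated with a small sketch. It assumes a fully connected layer with a hypothetical weight matrix `w` of shape (outputs, inputs) and binary input spikes; the function names are illustrative. The event-driven update touches only the weight columns of inputs that actually spiked, yet produces the same result as the dense product.

```python
import numpy as np

def dense_update(w, spikes_t):
    """Process every input at this time step, spiking or not."""
    return w @ spikes_t.astype(w.dtype)

def event_driven_update(w, spikes_t):
    """Accumulate only the weight columns of inputs that spiked."""
    active = np.flatnonzero(spikes_t)
    return w[:, active].sum(axis=1)

def synaptic_op_counts(spike_raster, n_out):
    """Return (dense_ops, event_ops) for a (n_steps, n_in) binary raster."""
    n_steps, n_in = spike_raster.shape
    dense = n_steps * n_in * n_out
    event = int(np.count_nonzero(spike_raster)) * n_out
    return dense, event
```

With a raster in which only a few percent of entries are spikes, `event_ops` shrinks by roughly the same factor relative to `dense_ops`, which is the intuition behind the energy argument. Real hardware adds overheads that this count ignores.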

Key Players in SNN and KWS Technology

The development of energy-efficient spiking networks for always-on keyword spotting is in its early stages, with significant potential for growth. The market is expanding as demand for low-power, always-on devices increases across various sectors. While the technology is still evolving, several key players are making strides in this field. Companies like Intel, Samsung, and Qualcomm are leveraging their expertise in semiconductor technology to develop efficient neuromorphic chips. Specialized firms such as Innatera Nanosystems and BrainChip are focusing on neuromorphic computing solutions. Academic institutions like Zhejiang University and the University of Electronic Science & Technology of China are contributing valuable research. The competition is intensifying as more organizations recognize the potential of this technology for edge computing and IoT applications.

Intel Corp.

Technical Solution: Intel has developed energy-efficient spiking neural networks for always-on keyword spotting using their Loihi neuromorphic chip. The Loihi chip implements a spiking neural network architecture that mimics the brain's neural structure and function, allowing for efficient processing of temporal information. For keyword spotting, Intel's approach utilizes a time-to-first-spike encoding scheme, where the timing of spikes carries important information[1]. This method reduces the number of spikes needed for computation, significantly lowering energy consumption. Intel's implementation achieves state-of-the-art accuracy while consuming only a fraction of the energy compared to traditional deep learning approaches[2]. The system can be trained using supervised learning algorithms adapted for spiking networks, such as surrogate gradient descent[3].

Strengths: Extremely low power consumption, real-time processing capability, and scalability. Weaknesses: Requires specialized hardware and different programming paradigms compared to traditional neural networks.
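
To illustrate the time-to-first-spike idea mentioned in the profile above, here is a minimal sketch assuming a simple linear latency code in which stronger inputs spike earlier and each input spikes at most once. This is not Intel's implementation; `ttfs_encode`, `to_spike_raster`, and `t_max` are hypothetical names chosen for the example.

```python
import numpy as np

def ttfs_encode(x, t_max=20):
    """Map intensities in [0, 1] to first-spike times in [0, t_max].

    Stronger inputs fire earlier; an input of 0 never fires (time = -1).
    """
    x = np.clip(np.asarray(x, dtype=float), 0.0, 1.0)
    times = np.round((1.0 - x) * t_max).astype(int)
    times[x == 0.0] = -1
    return times

def to_spike_raster(times, t_max=20):
    """Expand spike times into a (t_max + 1, n_inputs) binary raster."""
    raster = np.zeros((t_max + 1, len(times)), dtype=np.uint8)
    fired = times >= 0
    raster[times[fired], np.nonzero(fired)[0]] = 1
    return raster

times = ttfs_encode([1.0, 0.5, 0.0])
raster = to_spike_raster(times)
```

Because every input contributes at most one spike, the total spike count is bounded by the number of inputs, which is where the energy saving of this coding style comes from.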

Innatera Nanosystems BV

Technical Solution: Innatera has developed a neuromorphic processor specifically designed for always-on edge AI applications, including keyword spotting. Their approach uses spiking neural networks (SNNs) implemented in analog/mixed-signal circuits, which closely mimic the brain's neural dynamics. For keyword spotting, Innatera's solution employs a compact SNN architecture that processes audio input streams in real-time, using spike-based feature extraction and classification[4]. The system utilizes temporal coding, where information is encoded in the precise timing of spikes, allowing for efficient processing of audio signals. Innatera's neuromorphic chip achieves ultra-low power consumption by leveraging the event-driven nature of SNNs and the energy efficiency of analog computation[5]. The company claims their solution can reduce power consumption by up to 100x compared to traditional digital implementations.

Strengths: Extremely low power consumption, real-time processing, and compact form factor. Weaknesses: Limited flexibility compared to software-based solutions and potential challenges in scaling to more complex tasks.

Core Innovations in SNN Design for KWS

Dual State Circuit for Energy Efficient Hardware Implementation of Spiking Neural Networks

Patent Pending US20230153585A1

Innovation

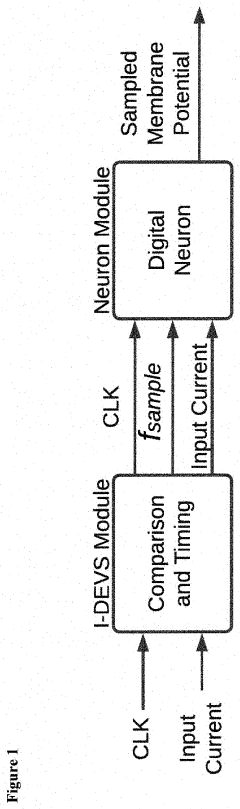

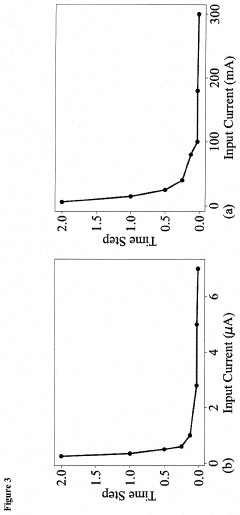

- The Input-Dependent Variable Sampling (I-DEVS) method modulates the sampling frequency of artificial neurons based on their input signals. By adjusting the frequency in inverse proportion to the input current, it reduces unnecessary switching activity, which lowers power consumption and hardware resource usage without affecting the neuron's behavior.

Methods and apparatus for spiking neural computation

Patent WO2013119861A1

Innovation

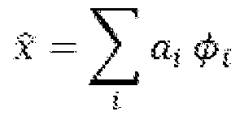

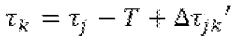

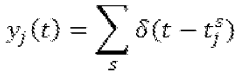

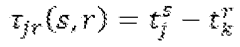

- The spiking neural network uses anti-leaky integrate-and-fire neurons with no synaptic weights, and information is coded in the relative timing of spikes. This allows efficient computation of linear systems and transformations with arbitrary precision using a single neuron, as well as conversion between self-referential and non-self-referential timing forms.

Hardware Implementations for SNN-based KWS

Hardware implementations for SNN-based Keyword Spotting (KWS) systems are crucial for achieving energy-efficient, always-on operation. These implementations typically leverage the event-driven nature of spiking neural networks (SNNs) to reduce power consumption and computational complexity.

One common approach is the use of neuromorphic hardware, which mimics the structure and function of biological neural networks. These specialized chips, such as IBM's TrueNorth and Intel's Loihi, are designed to efficiently process spike-based information. They often incorporate parallel processing units that can handle multiple neuron updates simultaneously, significantly reducing energy consumption compared to traditional von Neumann architectures.
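
To show what a "neuron update" involves, the sketch below advances a whole population of leaky integrate-and-fire (LIF) neurons in one vectorized step, loosely mirroring how parallel units update many neurons at once. It is a generic discrete-time LIF model under assumed parameters (`decay`, `v_th`, reset to zero), not the neuron model of any particular chip.

```python
import numpy as np

def lif_step(v, input_current, decay=0.9, v_th=1.0):
    """Advance all neurons by one time step; returns (new_v, spikes)."""
    v = decay * v + input_current      # leaky integration of the input
    spikes = v >= v_th                 # neurons crossing the threshold fire
    v = np.where(spikes, 0.0, v)       # reset the neurons that fired
    return v, spikes

v = np.zeros(3)
v, s1 = lif_step(v, np.array([0.5, 1.2, 0.0]))
v, s2 = lif_step(v, np.array([0.6, 0.0, 0.0]))
```

Neurons that receive no input and sit below threshold only decay, so an implementation can skip most of their work between events.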

Field-Programmable Gate Arrays (FPGAs) offer another viable solution for SNN-based KWS hardware implementation. FPGAs provide flexibility in design and can be optimized for specific SNN architectures. They allow for parallel processing of neuron updates and synaptic operations, which is particularly beneficial for SNNs. FPGA implementations have demonstrated significant improvements in energy efficiency and processing speed for KWS tasks.

Application-Specific Integrated Circuits (ASICs) represent a more specialized hardware solution for SNN-based KWS. While less flexible than FPGAs, ASICs can be tailored to specific SNN architectures and KWS requirements, offering superior energy efficiency and performance. However, the development of ASICs is more time-consuming and costly, making them suitable for large-scale production scenarios.

Mixed-signal designs, combining analog and digital circuits, have also shown promise in SNN hardware implementations. These designs leverage the inherent analog nature of biological neurons to reduce power consumption in certain computations while maintaining the precision and flexibility of digital processing for other operations.

Recent advancements in hardware design have focused on optimizing memory access patterns and reducing data movement, which are significant contributors to energy consumption in neural network computations. Techniques such as in-memory computing and near-memory processing have been explored to minimize data transfer between processing units and memory, further enhancing energy efficiency.

To address the always-on nature of KWS systems, hardware implementations often incorporate low-power wake-up circuits. These circuits continuously monitor incoming audio signals with minimal energy consumption, activating the full SNN-based KWS system only when potential keywords are detected. This approach significantly reduces overall power consumption during idle periods.
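
A software analogue of such a wake-up stage is a frame-energy gate. The sketch below assumes a simple mean-square energy threshold with a short hangover window; the names `frame_energy` and `wake_mask` and the default values are illustrative assumptions, and real wake-up circuits are usually analog or fixed-function hardware.

```python
import numpy as np

def frame_energy(audio, frame_len):
    """Mean squared amplitude of consecutive non-overlapping frames."""
    audio = np.asarray(audio, dtype=float)
    n_frames = len(audio) // frame_len
    frames = audio[: n_frames * frame_len].reshape(n_frames, frame_len)
    return np.mean(frames ** 2, axis=1)

def wake_mask(audio, frame_len=160, threshold=1e-3, hangover=2):
    """True for frames on which the full KWS network should run.

    `hangover` keeps the network awake for a few frames after activity
    so that word endings are not cut off.
    """
    active = frame_energy(audio, frame_len) > threshold
    mask = active.copy()
    for i in np.nonzero(active)[0]:
        mask[i : i + 1 + hangover] = True
    return mask

audio = np.zeros(160 * 6)
audio[160:320] = 0.5                 # one loud frame in otherwise silent input
mask = wake_mask(audio)
duty_cycle = mask.mean()             # fraction of frames that wake the network
```

The resulting `duty_cycle` is the quantity that determines how much of the time the more expensive network is powered.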

Power Consumption Benchmarks and Standards

Power consumption benchmarks and standards play a crucial role in evaluating and comparing the energy efficiency of spiking networks for always-on keyword spotting systems. These benchmarks provide a standardized framework for measuring and reporting power consumption, enabling developers and researchers to assess the performance of their designs against industry norms and competitors' solutions.

One widely used reference for low-power keyword spotting is the Google Speech Commands dataset, whose first version consists of about 65,000 one-second audio clips of 30 short words. The dataset defines no power metric itself, but it serves as common ground: researchers often report their models' accuracy and power consumption on it, which allows comparisons between different approaches.

The MLPerf Tiny benchmark, developed by the MLCommons consortium, includes a keyword spotting task aimed at always-on, microcontroller-class applications. It provides a standardized methodology for measuring the inference performance and energy efficiency of machine learning models, which can also serve as a reference point for implementations based on spiking neural networks. Results are reported as latency and, optionally, energy per inference, subject to an accuracy target.

In terms of power consumption standards, the International Electrotechnical Commission (IEC) has established guidelines for measuring and reporting the energy efficiency of electronic devices. While not specific to keyword spotting systems, these standards provide a foundation for consistent power measurements across different hardware platforms and can be adapted for spiking network implementations.

The IEEE 1801 Unified Power Format (UPF) standard is another important reference for power-aware design of integrated circuits. Although primarily focused on digital design, its principles can be applied to the development of energy-efficient spiking networks for keyword spotting. UPF provides a standardized way to specify power intent and constraints, which is valuable for optimizing the power consumption of hardware implementations.

For always-on keyword spotting applications, the power consumption of the entire system, including the microphone, analog-to-digital converter, and processing unit, must be considered. For wireless devices, the ETSI EN 300 328 standard, which governs wideband data transmission equipment operating in the 2.4 GHz ISM band, indirectly affects the system power budget by limiting RF output power and medium utilization.

When designing energy-efficient spiking networks for always-on keyword spotting, it is essential to target power consumption levels that are competitive with state-of-the-art solutions. Current benchmarks indicate that high-performance keyword spotting systems can operate with power consumption in the range of 1-10 mW, with some ultra-low-power implementations achieving sub-mW operation. These figures serve as important reference points for evaluating the energy efficiency of new spiking network designs.
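
To relate these power figures to battery life, the arithmetic can be sketched as follows. The battery capacity, voltage, idle power, and duty cycle used here are illustrative assumptions rather than measured values, and the calculation ignores conversion losses and self-discharge.

```python
def average_power_mw(p_idle_mw, p_active_mw, duty_cycle):
    """Average power of a system that is active for a fraction `duty_cycle` of the time."""
    return (1.0 - duty_cycle) * p_idle_mw + duty_cycle * p_active_mw

def battery_life_hours(capacity_mah, voltage_v, power_mw):
    """Ideal runtime in hours: stored energy (mWh) divided by average power (mW)."""
    energy_mwh = capacity_mah * voltage_v
    return energy_mwh / power_mw

# Assumed example: 200 mAh cell at 3.0 V, i.e. 600 mWh of stored energy.
always_on_10mw = battery_life_hours(200, 3.0, 10.0)   # 60 hours
always_on_1mw = battery_life_hours(200, 3.0, 1.0)     # 600 hours
# Assumed 0.1 mW wake-up stage with a 10 mW detector active 5% of the time.
gated = average_power_mw(0.1, 10.0, 0.05)             # 0.595 mW
gated_life = battery_life_hours(200, 3.0, gated)
```

Under these assumptions, moving from 10 mW to 1 mW extends the ideal runtime tenfold, and gating the detector behind a low-power stage brings the average below 1 mW.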
