Neuromorphic Signal Processing in IoT: Energy Use Testing

SEP 8, 2025 · 9 MIN READ

Neuromorphic Computing Evolution and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. The evolution of this field began in the late 1980s with Carver Mead's pioneering work at Caltech, where he first proposed using analog VLSI systems to mimic neurobiological architectures. This marked the birth of neuromorphic engineering as a distinct discipline combining neuroscience, physics, mathematics, computer science, and electrical engineering.

Throughout the 1990s and early 2000s, research remained largely academic, with limited practical applications due to technological constraints. The field gained significant momentum around 2010 with the emergence of more sophisticated CMOS technologies and the increasing limitations of traditional von Neumann architectures, particularly regarding energy efficiency and processing speed for certain computational tasks.

The development trajectory accelerated with major research initiatives such as the European Human Brain Project and DARPA's SyNAPSE program, the latter of which produced IBM's TrueNorth, alongside industry-led designs such as Intel's Loihi. These chips demonstrated high energy efficiency for neural network computations, operating at milliwatt power levels while performing complex pattern recognition tasks.

In the context of IoT applications, neuromorphic computing evolution has been driven by the critical need for edge processing capabilities that minimize power consumption. Traditional cloud-based processing models become increasingly untenable as IoT device deployments scale into the billions, creating bandwidth bottlenecks and raising privacy concerns.

The primary objectives of neuromorphic signal processing in IoT environments center on achieving ultra-low power consumption while maintaining computational effectiveness. Current research aims to develop neuromorphic systems capable of processing sensory data (visual, auditory, and other modalities) directly at the edge with minimal energy expenditure, ideally in the microwatt to milliwatt range.

Another key objective is enabling continuous learning and adaptation in resource-constrained environments. Unlike traditional computing paradigms requiring separate training and inference phases, neuromorphic systems aim to learn continuously from incoming data streams, adapting to changing conditions without requiring cloud connectivity or significant power increases.

The field is now moving toward creating specialized neuromorphic processors optimized specifically for IoT signal processing tasks, with particular emphasis on developing standardized benchmarking methodologies for energy use testing. These efforts seek to establish reliable metrics for comparing different neuromorphic approaches in terms of energy efficiency, processing capability, and adaptability to various IoT deployment scenarios.

IoT Market Demand for Energy-Efficient Signal Processing

The Internet of Things (IoT) market is experiencing unprecedented growth, with global deployments expected to reach 41.6 billion connected devices by 2025. This explosive expansion is driving significant demand for energy-efficient signal processing solutions that can support the massive data processing requirements while operating within strict power constraints. Market research indicates that energy consumption has become a critical factor influencing IoT adoption decisions across multiple sectors.

Industrial IoT applications represent the largest market segment demanding energy-efficient neuromorphic signal processing, with manufacturing companies seeking solutions that can reduce operational costs while extending the lifespan of deployed sensors. Current industrial IoT deployments typically consume 30-50% of their total energy budget on signal processing tasks alone, creating substantial opportunities for neuromorphic computing approaches that mimic the brain's energy-efficient processing methods.

Consumer IoT devices constitute another rapidly growing market segment, with smart home products, wearables, and personal health monitoring systems requiring extended battery life to meet consumer expectations. Market surveys reveal that 78% of consumers consider battery life a decisive factor when purchasing IoT devices, highlighting the commercial importance of energy-efficient signal processing technologies.

The healthcare sector presents particularly compelling use cases for energy-efficient neuromorphic processing. Remote patient monitoring systems require continuous signal processing of vital signs while operating on limited power sources. The market for these devices is projected to grow at a CAGR of 14.2% through 2027, driven by the aging global population and the shift toward home-based care models.

Edge computing trends are further amplifying market demand for energy-efficient signal processing. As organizations increasingly process data closer to its source rather than in centralized cloud environments, the energy constraints of edge devices become more pronounced. Market analysis shows that 73% of IoT data will be processed at the edge by 2025, necessitating signal processing solutions that can operate effectively within tight energy budgets.

Regulatory pressures are also shaping market demand, with several regions implementing energy efficiency standards for electronic devices. The European Union's Ecodesign Directive and similar initiatives worldwide are creating market incentives for manufacturers to adopt more energy-efficient signal processing technologies in their IoT product lines.

Venture capital investments in energy-efficient IoT technologies have seen a 35% year-over-year increase, reflecting market recognition of the critical importance of power optimization in the IoT ecosystem. This investment trend is particularly focused on neuromorphic computing approaches that promise orders-of-magnitude improvements in energy efficiency compared to conventional digital signal processing methods.

Current Neuromorphic Technologies and Energy Challenges

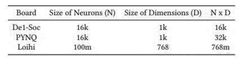

The current landscape of neuromorphic computing technologies presents a diverse array of architectures and implementations, each with distinct approaches to mimicking brain-like processing capabilities. Leading hardware platforms include IBM's TrueNorth, Intel's Loihi, and BrainChip's Akida, which represent different design philosophies in neuromorphic engineering. These systems utilize spiking neural networks (SNNs) that fundamentally differ from traditional computing paradigms by processing information through discrete events or "spikes" rather than continuous signals.

Despite significant advancements, neuromorphic technologies face substantial energy challenges when deployed in IoT environments. Current implementations struggle to balance computational capabilities with power constraints, particularly in edge devices where battery life is critical. For instance, TrueNorth draws about 70 mW for one million neurons, which is efficient for its scale but still exceeds the power budget of many IoT applications that require sub-milliwatt operation.

The energy profile of neuromorphic systems varies significantly across different operational modes. During active processing, spike generation and transmission consume considerable power, while static power consumption during idle states remains a persistent challenge. Memory access operations, particularly in systems utilizing off-chip memory, create significant energy bottlenecks that undermine the theoretical efficiency advantages of neuromorphic approaches.
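The interplay between active and idle power can be made concrete with a time-averaged power estimate. The sketch below is only an illustration: the function name and the 5 mW active and 50 µW idle figures are hypothetical and do not describe any specific chip.

```python
def average_power_w(active_power_w, idle_power_w, duty_cycle):
    """Time-averaged power for a device that is active `duty_cycle` of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_power_w + (1.0 - duty_cycle) * idle_power_w


# Hypothetical figures: 5 mW while processing spikes, 50 uW of idle (static) power.
ACTIVE_W = 5e-3
IDLE_W = 50e-6

for duty in (0.001, 0.01, 0.1, 1.0):
    avg = average_power_w(ACTIVE_W, IDLE_W, duty)
    # Fraction of the average power that is spent while idle.
    idle_share = (1.0 - duty) * IDLE_W / avg
    print(f"duty={duty:>5}: avg={avg * 1e6:8.1f} uW, idle share={idle_share:5.1%}")
```

At low duty cycles the idle term dominates the average, which is why static power matters so much for event-triggered IoT workloads.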

Material limitations present another critical challenge. Current CMOS-based implementations face fundamental physical constraints in achieving the energy efficiency of biological neural systems. Emerging materials such as memristors, phase-change memory, and spintronic devices offer promising alternatives but remain in early development stages with unresolved issues in reliability, scalability, and manufacturing consistency.

Signal processing applications in IoT contexts introduce additional complexities. Real-time processing requirements for sensor data streams demand responsive yet energy-efficient computation. Current neuromorphic solutions struggle to maintain consistent performance across varying workloads while preserving their energy advantages. The trade-off between processing accuracy and energy consumption becomes particularly acute in applications like audio processing, image recognition, and anomaly detection at the edge.

Testing methodologies for energy consumption in neuromorphic systems lack standardization, complicating comparative analysis across different technologies. Existing benchmarks often fail to capture the unique operational characteristics of spike-based processing, leading to inconsistent evaluation metrics. This challenge is compounded by the diversity of IoT application requirements, which range from continuous monitoring scenarios to event-triggered processing with vastly different energy profiles.
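One common building block for such testing is converting a sampled power trace into energy per inference. The following is a minimal sketch, assuming evenly or unevenly spaced power samples from a generic measurement setup; the function names and sample values are hypothetical and not taken from any published benchmark.

```python
def energy_joules(timestamps_s, power_w):
    """Integrate a sampled power trace with the trapezoidal rule."""
    if len(timestamps_s) != len(power_w):
        raise ValueError("timestamps and power samples must have equal length")
    if len(timestamps_s) < 2:
        raise ValueError("need at least two samples")
    total = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        if dt <= 0:
            raise ValueError("timestamps must be strictly increasing")
        total += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return total


def energy_per_inference_j(timestamps_s, power_w, n_inferences, idle_power_w=0.0):
    """Average energy per inference, optionally net of an idle baseline."""
    if n_inferences <= 0:
        raise ValueError("n_inferences must be positive")
    duration = timestamps_s[-1] - timestamps_s[0]
    net = energy_joules(timestamps_s, power_w) - idle_power_w * duration
    return net / n_inferences


# Hypothetical 4 ms trace sampled at 1 kHz, covering two inferences.
t = [0.000, 0.001, 0.002, 0.003, 0.004]
p = [1e-3, 3e-3, 3e-3, 1e-3, 1e-3]
print(energy_per_inference_j(t, p, n_inferences=2))
print(energy_per_inference_j(t, p, n_inferences=2, idle_power_w=1e-3))
```

Reporting both the gross and the baseline-subtracted figure makes it clearer how much of the measured energy is attributable to spike processing rather than to idle consumption.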

Existing Energy-Efficient Signal Processing Solutions

01 Energy-efficient neuromorphic computing architectures

Neuromorphic computing architectures are designed to mimic the brain's neural networks, offering significant energy efficiency advantages over traditional computing systems for signal processing tasks. These architectures utilize specialized hardware implementations that reduce power consumption while maintaining computational capabilities. By optimizing the design of neural networks and implementing them in hardware specifically tailored for neuromorphic computing, these systems can achieve substantial energy savings compared to conventional processors when performing complex signal processing operations.

- Low-power neuromorphic computing architectures: Neuromorphic computing architectures designed specifically for low power consumption utilize specialized hardware implementations that mimic neural networks while minimizing energy use. These architectures employ techniques such as spike-based processing, event-driven computation, and optimized circuit designs to achieve significant energy efficiency compared to traditional computing approaches. By processing information only when necessary and using parallel processing structures, these systems can perform complex signal processing tasks with minimal power requirements.

- Energy-efficient spiking neural networks: Spiking neural networks (SNNs) offer an energy-efficient approach to signal processing by transmitting information through discrete spikes rather than continuous values. This sparse communication reduces power consumption as computation occurs only when necessary. Implementation of SNNs in neuromorphic hardware enables efficient processing of temporal data and pattern recognition while maintaining low energy usage. These networks can be optimized through various encoding schemes and learning algorithms to further reduce energy requirements while maintaining high performance in signal processing tasks.

- Memristor-based neuromorphic signal processing: Memristor technology enables highly efficient neuromorphic signal processing by combining memory and processing functions in the same device. These non-volatile memory elements can store synaptic weights while performing computations, eliminating the energy-intensive data transfer between separate memory and processing units. Memristor-based neuromorphic systems achieve significant power savings through in-memory computing, reduced leakage current, and analog signal processing capabilities. This approach is particularly effective for edge computing applications where energy constraints are critical.

- Neuromorphic hardware for edge computing: Specialized neuromorphic hardware designed for edge computing applications focuses on minimizing energy consumption while enabling real-time signal processing at the network edge. These systems incorporate power-efficient design principles such as asynchronous circuits, approximate computing, and dynamic power management to extend battery life in resource-constrained environments. By processing data locally rather than transmitting to cloud servers, neuromorphic edge devices significantly reduce the overall energy footprint of IoT and mobile applications while maintaining low latency for time-sensitive tasks.

- Energy optimization techniques for neuromorphic systems: Various optimization techniques can be applied to neuromorphic systems to further reduce energy consumption during signal processing tasks. These include quantization of neural network parameters, pruning of unnecessary connections, implementation of sparse activation functions, and dynamic voltage scaling. Advanced algorithms that adapt power usage based on computational demands enable these systems to maintain an optimal balance between performance and energy efficiency. Additionally, novel training methods specifically designed for neuromorphic hardware can produce networks that require minimal energy while maintaining high accuracy.
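The sparse, spike-based communication described in the list above can be illustrated with a discrete-time leaky integrate-and-fire (LIF) neuron, a standard textbook model. This is a minimal sketch with hypothetical parameter values, not the neuron model of any particular chip.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, v_reset=0.0):
    """Discrete-time leaky integrate-and-fire neuron.

    Each step the membrane potential decays by `leak`, adds the input,
    and emits a spike (1) followed by a reset when it reaches `threshold`.
    """
    v = 0.0
    spikes = []
    for i_t in input_current:
        v = leak * v + i_t
        if v >= threshold:
            spikes.append(1)
            v = v_reset
        else:
            spikes.append(0)
    return spikes


# Hypothetical input: silence, a burst of constant drive, then silence again.
stimulus = [0.0] * 5 + [0.4] * 10 + [0.0] * 5
spikes = simulate_lif(stimulus)
print(spikes)
print(f"{sum(spikes)} spikes over {len(spikes)} time steps")
```

The neuron produces output only while it is driven, so downstream work scales with the number of spikes rather than with the number of time steps.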

02 Spike-based signal processing techniques

Spike-based signal processing leverages the event-driven nature of neuromorphic systems to reduce energy consumption. Rather than continuous processing, these techniques operate on discrete neural spikes, activating computational resources only when necessary. This approach significantly reduces power requirements by eliminating unnecessary computations during periods of inactivity. The sparse temporal coding inherent in spike-based processing allows for efficient representation of signals while maintaining information integrity, making it particularly suitable for applications with strict energy constraints.

03 Low-power neuromorphic hardware implementations

Specialized hardware implementations for neuromorphic signal processing focus on minimizing energy consumption through innovative circuit designs and materials. These implementations include analog, digital, and mixed-signal approaches that optimize power efficiency while maintaining computational capabilities. Technologies such as memristive devices, low-power CMOS circuits, and specialized neuromorphic chips enable significant reductions in energy use compared to traditional signal processing hardware. These hardware solutions are particularly valuable for edge computing applications where power constraints are critical.

04 Adaptive power management in neuromorphic systems

Adaptive power management techniques in neuromorphic systems dynamically adjust energy consumption based on processing requirements. These systems can scale their power usage according to the complexity of the signal processing task, activating only the necessary neural components. By implementing intelligent power gating, voltage scaling, and activity-dependent resource allocation, these systems optimize energy efficiency while maintaining performance. This approach is particularly effective for applications with varying computational demands, allowing the system to conserve energy during periods of lower processing requirements.

05 Application-specific neuromorphic signal processing

Application-specific neuromorphic signal processing systems are tailored to particular use cases, optimizing energy efficiency for specific tasks. These specialized implementations focus on the unique requirements of applications such as image processing, audio analysis, sensor data interpretation, and pattern recognition. By eliminating unnecessary computational elements and optimizing the architecture for specific signal types, these systems achieve significant energy savings compared to general-purpose processors. The customization allows for precise balancing of performance and power consumption based on application requirements.
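To illustrate the event-driven idea behind the spike-based techniques in item 02, the sketch below encodes a sampled sensor signal with a send-on-delta scheme, one common way to turn a continuous signal into sparse events. The function name, the threshold, and the sample values are hypothetical.

```python
def delta_encode(samples, delta):
    """Send-on-delta encoder.

    Emits (sample_index, +1 or -1) each time the signal has moved by
    `delta` from the last reference level; a flat signal emits nothing.
    """
    if delta <= 0:
        raise ValueError("delta must be positive")
    if not samples:
        return []
    events = []
    reference = samples[0]
    for idx, value in enumerate(samples[1:], start=1):
        while value - reference >= delta:
            reference += delta
            events.append((idx, +1))
        while reference - value >= delta:
            reference -= delta
            events.append((idx, -1))
    return events


# Hypothetical sensor trace: flat, a step up, flat, a step down, flat.
signal = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 1.0, 0.0, 0.0, 0.0]
events = delta_encode(signal, delta=0.5)
print(events)
print(f"{len(events)} events for {len(signal)} samples")
```

Downstream processing is triggered only by the emitted events, so a signal that changes rarely generates little work regardless of the sampling rate.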

Leading Companies in Neuromorphic Computing for IoT

Neuromorphic signal processing for IoT is in an early growth stage, with the market expanding rapidly due to increasing demand for energy-efficient edge computing solutions. The technology is maturing, with key players demonstrating significant advancements. Companies like IBM, Polyn Technology, and Syntiant are leading with commercial neuromorphic chips, while Wiliot and Skaichips focus on ultra-low-power IoT implementations. Academic-industry partnerships involving Tsinghua University, Fudan University, and CNRS are accelerating innovation. State Grid Corporation of China and its subsidiaries are exploring applications in smart grid infrastructure, indicating growing industrial adoption. The market is characterized by diverse approaches, from analog neuromorphic designs to digital implementations, with energy efficiency as the primary competitive advantage.

International Business Machines Corp.

Technical Solution: IBM has developed neuromorphic computing solutions specifically targeting IoT energy efficiency challenges. Their TrueNorth neuromorphic chip architecture implements spiking neural networks that mimic brain functions, consuming only about 70 mW while delivering 46 billion synaptic operations per second per watt[1]. For IoT signal processing, IBM has created specialized hardware accelerators that integrate with their neuromorphic systems to process sensor data at the edge with minimal energy consumption. Their approach includes event-driven processing where computations occur only when needed rather than continuously, significantly reducing power requirements. IBM's testing methodology involves comprehensive energy profiling across various IoT workloads, measuring both static and dynamic power consumption under different operational conditions[3]. They've demonstrated up to 100x energy efficiency improvements compared to conventional von Neumann architectures for specific IoT signal processing tasks.

Strengths: Exceptional energy efficiency, with up to 100x improvement over traditional architectures on specific tasks; mature development ecosystem with programming tools that simplify deployment; extensive testing frameworks for energy optimization. Weaknesses: Higher initial implementation complexity compared to conventional solutions; requires specialized knowledge for optimal programming; limited compatibility with legacy IoT systems requiring additional adaptation layers.

Polyn Technology Ltd.

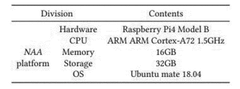

Technical Solution: Polyn Technology has pioneered Neuromorphic Analog Signal Processing (NASP) technology specifically designed for ultra-low-power IoT applications. Their solution combines analog neuromorphic circuits with digital interfaces to create a highly efficient signal processing platform. Polyn's NASP chips perform always-on sensor signal processing while consuming orders of magnitude less power than conventional digital solutions, typically in the microwatt range for continuous operation[2]. Their architecture implements neuromorphic computing principles directly in analog hardware, eliminating the need for analog-to-digital conversion in the signal processing chain, which significantly reduces energy consumption. Polyn's testing methodology includes comprehensive power profiling across various IoT sensor types (accelerometers, microphones, biomedical sensors) and operational scenarios. Their neuromorphic processors can extract meaningful features from raw sensor data while consuming as little as 100 μW, enabling battery-powered IoT devices to operate for years without replacement[4]. The company has developed specialized testing frameworks that measure energy consumption across different workloads and environmental conditions.

Strengths: Ultra-low power consumption enabling years of battery life for IoT devices; direct analog processing eliminates energy-intensive ADC operations; compact form factor suitable for space-constrained IoT applications. Weaknesses: Limited to specific types of signal processing tasks; requires careful analog design considerations; less flexibility than fully programmable digital solutions for complex algorithmic changes.
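The link between microwatt-level power draw and multi-year battery life can be checked with simple arithmetic. The sketch below is a rough estimate only: the 1000 mAh, 3 V cell is a hypothetical example, and the calculation ignores self-discharge as well as the power drawn by sensors and radios.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def battery_life_years(capacity_mah, voltage_v, avg_power_w, usable_fraction=1.0):
    """Idealized battery life: stored energy divided by average power draw."""
    if avg_power_w <= 0:
        raise ValueError("avg_power_w must be positive")
    # mAh -> Ah -> coulombs, times volts gives joules.
    energy_j = capacity_mah * 1e-3 * 3600 * voltage_v * usable_fraction
    return energy_j / avg_power_w / SECONDS_PER_YEAR


# Hypothetical 1000 mAh, 3 V cell powering a processor that averages 100 uW.
print(f"{battery_life_years(1000, 3.0, 100e-6):.2f} years")
# The same cell with a 1 mW average draw, for comparison.
print(f"{battery_life_years(1000, 3.0, 1e-3):.2f} years")
```

Under these idealized assumptions, a tenfold reduction in average power translates directly into a tenfold longer battery life, which is why the difference between microwatt and milliwatt operation is decisive for always-on sensing.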

Key Patents in Neuromorphic Signal Processing

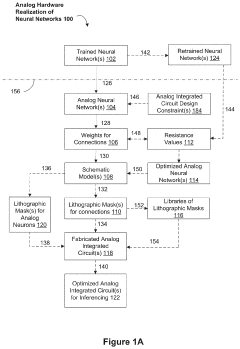

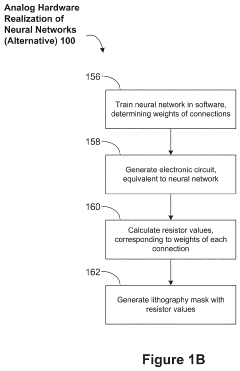

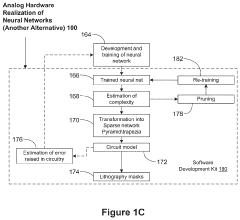

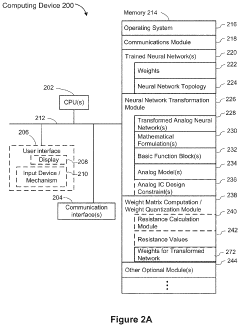

Neuromorphic Analog Signal Processor for Predictive Maintenance of Machines

Patent (Pending): US20230081715A1

Innovation

- Analog neuromorphic circuits that model trained neural networks, using operational amplifiers and resistors to create hardware implementations that are more power-efficient, scalable, and less sensitive to noise and temperature changes, allowing for mass production and reduced manufacturing costs.
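As a rough illustration of how resistors and an operational amplifier can realize a weighted sum, the sketch below uses the textbook ideal inverting summing amplifier, where each weight magnitude equals the ratio of the feedback resistor to the input resistor. This is not the patented circuit; the function names and component values are hypothetical, and only non-negative weights are handled, since signed weights need extra circuitry.

```python
def input_resistors(weights, feedback_ohms):
    """Map non-negative weights to input resistors using R_i = R_f / w_i.

    A weight of zero means "no connection" and is returned as None.
    """
    resistors = []
    for w in weights:
        if w < 0:
            raise ValueError("negative weights are outside this simplified sketch")
        resistors.append(None if w == 0 else feedback_ohms / w)
    return resistors


def summing_amp_output(voltages, resistors, feedback_ohms):
    """Ideal inverting summing amplifier: V_out = -R_f * sum(V_i / R_i)."""
    return -feedback_ohms * sum(
        v / r for v, r in zip(voltages, resistors) if r is not None
    )


# Hypothetical neuron with three inputs and a 100 kOhm feedback resistor.
weights = [0.5, 2.0, 0.25]
r_f = 100e3
r_in = input_resistors(weights, r_f)
print(r_in)
print(summing_amp_output([0.1, 0.2, 0.4], r_in, r_f))
```

Because the weights are fixed by resistor values, the weighted sum is computed by the circuit itself with no clocked arithmetic, which is the source of the power savings such designs aim for.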

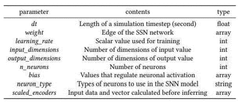

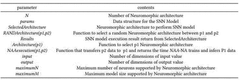

Neuromorphic architecture dynamic selection method for modeling on basis of SNN model parameter, and recording medium and device for performing same

Patent: WO2022102912A1

Innovation

- A method for dynamically selecting a neuromorphic architecture based on SNN model parameters, using the Neuromorphic Architecture Abstraction (NAA) model, which extracts information from NPZ files, selects suitable architectures, calculates execution times, and chooses the architecture with the shortest learning and inference time, enabling efficient and appropriate model execution.
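The selection step described above can be sketched as filtering candidate architectures by whether they can host the model and then picking the one with the lowest estimated combined learning and inference time. The classes, fields, and linear cost model below are hypothetical and do not reproduce the patent's NAA model or its NPZ parsing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Architecture:
    name: str
    max_neurons: int
    max_synapses: int
    seconds_per_update: float  # hypothetical cost of one learning update
    seconds_per_event: float   # hypothetical cost of one inference event


@dataclass(frozen=True)
class SnnModel:
    neurons: int
    synapses: int
    training_updates: int
    inference_events: int


def estimated_time_s(arch, model):
    """Estimated learning time plus inference time under a linear cost model."""
    return (model.training_updates * arch.seconds_per_update
            + model.inference_events * arch.seconds_per_event)


def select_architecture(architectures, model):
    """Return the feasible architecture with the shortest estimated total time."""
    feasible = [a for a in architectures
                if a.max_neurons >= model.neurons
                and a.max_synapses >= model.synapses]
    if not feasible:
        raise ValueError("no architecture can host this model")
    return min(feasible, key=lambda a: estimated_time_s(a, model))


# Hypothetical candidates and model parameters.
candidates = [
    Architecture("small-fast", 1_000, 10_000, 1e-9, 1e-9),
    Architecture("large-slow", 1_000_000, 100_000_000, 5e-8, 2e-8),
    Architecture("large-balanced", 1_000_000, 100_000_000, 2e-8, 1e-8),
]
model = SnnModel(neurons=50_000, synapses=2_000_000,
                 training_updates=10_000_000, inference_events=1_000_000)
print(select_architecture(candidates, model).name)
```

In this example the fastest candidate is excluded because the model does not fit on it, so the choice falls to the quickest of the remaining architectures.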

Standardization Efforts for Energy Benchmarking

The standardization of energy benchmarking methodologies for neuromorphic signal processing in IoT applications represents a critical advancement toward enabling fair comparisons across different implementations. Currently, several international organizations are actively developing frameworks to establish consistent metrics and testing procedures for evaluating energy efficiency in neuromorphic computing systems.

The IEEE Neuromorphic Computing Standards Working Group has been pioneering efforts to create a unified approach to energy benchmarking through their P2971 standard initiative. This framework aims to establish standardized test conditions, measurement methodologies, and reporting formats specifically tailored for neuromorphic hardware deployed in IoT environments. The standard addresses various operational scenarios, including continuous monitoring, event-driven processing, and adaptive learning phases.

In parallel, the International Electrotechnical Commission (IEC) has formed a technical committee focused on low-power neuromorphic technologies, with a dedicated task force examining energy consumption patterns in edge computing applications. Their proposed testing protocol incorporates both synthetic benchmarks and real-world IoT workloads to provide comprehensive energy profiles across different operational conditions.

Industry consortia are also making significant contributions to standardization efforts. The Neuromorphic Computing Industry Alliance (NCIA) has published a white paper outlining recommended practices for energy benchmarking, emphasizing the importance of measuring not only peak power consumption but also energy efficiency during idle states and transition periods. Their approach incorporates metrics such as energy per inference, energy per learning cycle, and standby power requirements.
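The three metrics named above (energy per inference, energy per learning cycle, and standby power) can be derived from per-phase measurements. The following is a minimal sketch of such a report; the record layout, phase names, and sample values are hypothetical and are not the alliance's own definitions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PhaseMeasurement:
    phase: str        # "idle", "inference", or "learning"
    energy_j: float   # energy consumed during this measurement window
    duration_s: float
    count: int = 0    # inferences or learning cycles completed in the window


def summarize(measurements):
    """Aggregate per-phase windows into benchmark-style energy metrics."""
    totals = {}
    for m in measurements:
        energy, duration, count = totals.get(m.phase, (0.0, 0.0, 0))
        totals[m.phase] = (energy + m.energy_j,
                           duration + m.duration_s,
                           count + m.count)

    report = {}
    if "idle" in totals and totals["idle"][1] > 0:
        report["standby_power_w"] = totals["idle"][0] / totals["idle"][1]
    if "inference" in totals and totals["inference"][2] > 0:
        report["energy_per_inference_j"] = (
            totals["inference"][0] / totals["inference"][2])
    if "learning" in totals and totals["learning"][2] > 0:
        report["energy_per_learning_cycle_j"] = (
            totals["learning"][0] / totals["learning"][2])
    return report


# Hypothetical measurement windows from one test run.
run = [
    PhaseMeasurement("idle", energy_j=1e-3, duration_s=10.0),
    PhaseMeasurement("inference", energy_j=2e-3, duration_s=1.0, count=100),
    PhaseMeasurement("learning", energy_j=5e-3, duration_s=2.0, count=10),
]
print(summarize(run))
```

Keeping idle, inference, and learning windows separate avoids folding standby consumption into the per-inference figure, which is one of the ways published results become hard to compare.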

Academic institutions have established collaborative initiatives like the Green Neuromorphic Computing Benchmark Suite, which provides standardized datasets and reference implementations specifically designed to stress-test energy consumption characteristics of neuromorphic systems under IoT-relevant workloads. This suite has been adopted by several research groups and commercial entities as a de facto standard for preliminary evaluations.

Regulatory bodies, particularly in regions with strong emphasis on energy efficiency such as the European Union, have begun incorporating neuromorphic computing considerations into their energy labeling directives for IoT devices. These regulations are driving the need for standardized testing methodologies that can be consistently applied across different device categories and use cases.

Despite these advances, challenges remain in harmonizing the various approaches into a globally accepted framework. Differences in testing methodologies, reference workloads, and measurement granularity continue to complicate direct comparisons between published results from different organizations.

Edge AI Implementation Strategies

Implementing Edge AI for neuromorphic signal processing in IoT environments requires strategic approaches that balance computational efficiency with energy constraints. The deployment of neuromorphic computing at the edge represents a paradigm shift from traditional cloud-based processing models, offering significant advantages in latency reduction and privacy enhancement.

Edge AI implementation for neuromorphic signal processing can follow several architectural patterns. The fully decentralized approach runs all neuromorphic processing on the edge devices themselves, which minimizes response latency but limits the computational capacity available to each task. Hybrid architectures split processing between edge devices and local gateways, offering flexibility for varying computational demands. Hierarchical implementations create multi-tier processing networks in which neuromorphic computations are assigned to a tier according to their complexity and energy requirements.

Device-specific optimization represents a critical success factor for neuromorphic edge deployment. This involves tailoring neural network architectures to match the specific constraints of target hardware platforms, including memory limitations, processing capabilities, and energy profiles. Techniques such as network pruning, quantization, and architecture-specific compilation can significantly reduce energy consumption while maintaining acceptable inference accuracy.
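As a rough illustration of two of the techniques named above, the sketch below applies magnitude pruning and symmetric 8-bit quantization to a flat list of weights. It is a simplified example under stated assumptions (per-tensor scale, plain Python lists, hypothetical function names), not the compilation flow of any particular neuromorphic toolchain.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights (0 <= sparsity <= 1)."""
    k = int(len(weights) * sparsity)
    # Indices of the k weights with the smallest absolute value.
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map quantized integers back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.5, -0.05, 0.25, 0.01, -1.0, 0.1]
pruned = prune_by_magnitude(weights, sparsity=0.5)
q, scale = quantize_int8(pruned)
print(pruned, q, scale)
```

Pruned weights become exact zeros, which event-driven hardware can skip entirely, while quantization shrinks the memory footprint of the weights that remain. The accuracy cost of both steps has to be checked against the target workload.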

Energy-aware scheduling algorithms provide another strategic dimension for implementation. These algorithms dynamically adjust processing priorities based on real-time energy availability, sensor data importance, and application requirements. Adaptive duty cycling can temporarily deactivate neuromorphic processing during periods of low activity or energy scarcity, while maintaining essential monitoring functions.
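The scheduling idea described above can be sketched in a few lines. The example below assumes a normalized energy level and activity rate in the range 0 to 1 and a simple greedy policy; the names `choose_duty_cycle` and `schedule`, the 5% minimum duty cycle, and the task tuples are all hypothetical choices made for illustration.

```python
def choose_duty_cycle(energy_fraction, activity_rate, min_duty=0.05, max_duty=1.0):
    """Return the fraction of time the neuromorphic core should stay active.

    The duty cycle shrinks when stored energy or sensor activity is low,
    but never drops below min_duty so essential monitoring continues.
    """
    energy_fraction = min(1.0, max(0.0, energy_fraction))
    activity_rate = min(1.0, max(0.0, activity_rate))
    duty = max_duty * energy_fraction * activity_rate
    return max(min_duty, min(max_duty, duty))


def schedule(tasks, budget_j):
    """Greedily pick the most important tasks that fit in the energy budget.

    tasks: iterable of (name, importance, cost_joules) tuples.
    """
    chosen = []
    for name, importance, cost_j in sorted(tasks, key=lambda t: -t[1]):
        if cost_j <= budget_j:
            chosen.append(name)
            budget_j -= cost_j
    return chosen


tasks = [("vibration", 0.9, 3.0), ("temperature", 0.2, 1.0), ("audio", 0.6, 4.0)]
print(choose_duty_cycle(0.5, 0.5))
print(schedule(tasks, budget_j=5.0))
```

A greedy policy is easy to reason about on a constrained device, though it is not guaranteed to make the best use of the budget; a deployment with tighter requirements might weigh importance per joule instead.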

Hardware acceleration represents a key enablement strategy, with specialized neuromorphic chips offering orders-of-magnitude improvements in energy efficiency compared to general-purpose processors. Technologies such as Spiking Neural Networks (SNNs) implemented on dedicated hardware can process sensor data with minimal energy expenditure, making them ideal for battery-powered IoT deployments.
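To show why spiking models suit sparse sensor data, here is a minimal software sketch of a single leaky integrate-and-fire (LIF) neuron. The discrete-time update, the parameter values, and the function name `lif_spikes` are assumptions chosen for illustration and do not reflect the neuron model of any specific chip.

```python
def lif_spikes(inputs, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate one leaky integrate-and-fire neuron in discrete time.

    At each step the membrane potential decays by the leak factor, the
    input is added, and a spike (1) is emitted when the threshold is
    reached, after which the potential is reset.
    """
    v = 0.0
    spikes = []
    for x in inputs:
        v = leak * v + x
        if v >= threshold:
            spikes.append(1)
            v = reset
        else:
            spikes.append(0)
    return spikes


print(lif_spikes([0.6, 0.6, 0.0, 0.0, 1.2]))  # -> [0, 1, 0, 0, 1]
```

The neuron produces output only when accumulated input crosses the threshold, so quiet input yields no spikes and, on event-driven hardware, little downstream work.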

Federated learning approaches enable edge devices to collaboratively improve neuromorphic models without centralizing sensitive data. This strategy involves training local models on device-specific data, sharing only model updates rather than raw data, and aggregating these updates to improve the global model. This approach addresses both privacy concerns and bandwidth limitations while enabling continuous improvement of neuromorphic processing capabilities.
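The aggregation step described above can be sketched as a sample-weighted average of client parameters, in the style of federated averaging. This is a simplified illustration that assumes each device reports a flat parameter list and its local sample count; `federated_average` is a hypothetical helper, not part of any named framework.

```python
def federated_average(updates):
    """Combine client parameters into a global model.

    updates: list of (weights, n_samples) pairs, where weights is a flat
    list of floats and n_samples is the size of that client's local data.
    Returns the sample-weighted mean of the client weights.
    """
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(updates[0][0])
    if any(len(w) != dim for w, _ in updates):
        raise ValueError("all clients must report the same number of weights")
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


# Two hypothetical devices: one trained on 1 sample, one on 3.
clients = [([1.0, 2.0], 1), ([3.0, 4.0], 3)]
print(federated_average(clients))  # -> [2.5, 3.5]
```

Only the parameter lists and sample counts leave each device, which is what keeps raw sensor data local and the uplink traffic small.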

Implementation roadmaps typically begin with proof-of-concept deployments focused on single-function neuromorphic processing, gradually expanding to multi-modal sensing and more complex decision-making capabilities as the technology matures and energy efficiency improves.
