Neuromorphic Systems in Cognitive Computing: Study Response Time

SEP 8, 2025 · 9 MIN READ

PatSnap Eureka helps you evaluate technical feasibility & market potential.

Neuromorphic Computing Background and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. This approach emerged in the late 1980s when Carver Mead introduced the concept of using analog circuits to mimic neurobiological architectures. Since then, the field has evolved significantly, transitioning from theoretical frameworks to practical implementations that aim to replicate the brain's efficiency in processing information.

The evolution of neuromorphic computing has been characterized by several key milestones. Initially focused on hardware implementations of neural networks, the field has expanded to incorporate advances in materials science, nanotechnology, and computational neuroscience. Recent developments have seen the integration of memristive devices, spintronic elements, and photonic systems, each offering unique advantages in terms of energy efficiency, processing speed, and scalability.

In the context of cognitive computing, neuromorphic systems offer promising solutions to overcome the limitations of traditional von Neumann architectures, particularly in tasks requiring real-time processing of complex, unstructured data. The brain's ability to process information with remarkable energy efficiency while maintaining rapid response times presents an ideal model for next-generation computing systems designed for cognitive tasks.

The primary objective of studying response time in neuromorphic systems for cognitive computing is to develop computational architectures that can match or exceed the brain's efficiency in temporal processing. This includes understanding how different neuromorphic implementations affect latency, throughput, and overall system responsiveness when handling cognitive workloads such as pattern recognition, natural language processing, and decision-making under uncertainty.

Current research trends indicate a growing focus on hybrid systems that combine the strengths of neuromorphic hardware with conventional computing approaches. These systems aim to leverage the parallel processing capabilities and energy efficiency of neuromorphic architectures while maintaining the precision and programmability of traditional computing paradigms.

The technical goals in this domain include reducing response latency to sub-millisecond ranges, improving energy efficiency by orders of magnitude compared to conventional systems, and developing scalable architectures capable of handling increasingly complex cognitive tasks. Additionally, there is significant interest in creating neuromorphic systems that can adapt and learn from experience, mirroring the brain's remarkable plasticity and resilience.

As the field continues to mature, we anticipate breakthroughs in materials science, circuit design, and algorithmic approaches that will further enhance the performance and capabilities of neuromorphic systems in cognitive computing applications, particularly in scenarios where rapid response times are critical.

Market Analysis for Cognitive Computing Solutions

The cognitive computing market is experiencing significant growth, driven by the increasing demand for intelligent systems that can process and analyze vast amounts of data. Current market valuations place the global cognitive computing sector at approximately 24 billion USD in 2023, with projections indicating a compound annual growth rate of 30% through 2030. This remarkable expansion is fueled by the integration of neuromorphic systems that mimic human brain functionality, particularly in applications where response time is critical.
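As a quick consistency check, compounding the quoted 2023 figure at the quoted 30% rate for the seven years to 2030 gives the implied end-of-period market size. This is arithmetic on the numbers above only, not an independent forecast, and `project_market` is an illustrative helper name.

```python
def project_market(base_value_busd: float, cagr: float, years: int) -> float:
    """Compound a base market value forward: V_t = V_0 * (1 + g) ** t."""
    return base_value_busd * (1.0 + cagr) ** years

# Figures quoted above: about 24 billion USD in 2023, 30% CAGR through 2030.
implied_2030 = project_market(24.0, 0.30, 2030 - 2023)
print(f"Implied 2030 market size: {implied_2030:.1f} billion USD")  # 150.6
```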

Healthcare represents the largest vertical market for cognitive computing solutions, accounting for nearly 22% of the total market share. In this sector, neuromorphic systems are revolutionizing diagnostic processes by reducing response times from minutes to milliseconds, enabling real-time patient monitoring and treatment recommendations. Financial services follow closely behind at 19% market share, where millisecond improvements in response time translate to significant competitive advantages in algorithmic trading and fraud detection.

The enterprise segment demonstrates the highest adoption rate for neuromorphic cognitive computing solutions, with large corporations investing heavily in these technologies to enhance decision-making processes and operational efficiency. Small and medium enterprises are increasingly entering this market as more affordable solutions become available, representing the fastest-growing customer segment with a 35% year-over-year increase in adoption.

Regional analysis reveals North America as the dominant market for cognitive computing solutions, holding approximately 42% of global market share. However, Asia-Pacific is emerging as the fastest-growing region with a 38% annual growth rate, driven primarily by substantial investments in China, Japan, and South Korea. European markets show steady growth at 25% annually, with particular strength in research-intensive industries.

Customer demand patterns indicate a strong preference for solutions that offer sub-10 millisecond response times, with 78% of enterprise customers citing response time as a critical factor in purchasing decisions. This trend is particularly pronounced in time-sensitive applications such as autonomous vehicles, industrial automation, and emergency response systems, where neuromorphic systems provide substantial advantages over traditional computing architectures.

Market forecasts suggest that neuromorphic systems optimized for response time will capture an increasing share of the cognitive computing market, potentially reaching 40% of total market value by 2028. This growth is supported by ongoing advancements in hardware architectures, including specialized neuromorphic chips that achieve response times orders of magnitude faster than conventional processors while consuming significantly less power.

Current Neuromorphic Systems and Response Time Challenges

Current neuromorphic computing systems represent a significant advancement in cognitive computing architectures, designed to mimic the neural structure and functionality of biological brains. These systems use specialized hardware such as IBM's TrueNorth, Intel's Loihi, and BrainChip's Akida, each taking a different approach to neural processing. TrueNorth features one million digital neurons with 256 million synapses, while the first-generation Loihi incorporates about 130,000 neurons and 130 million synapses with on-chip learning capabilities. Akida distinguishes itself through event-based processing optimized for edge computing applications.

Despite these technological achievements, neuromorphic systems face substantial challenges regarding response time optimization. The primary bottleneck stems from the fundamental trade-off between processing speed and energy efficiency. While traditional von Neumann architectures excel at sequential processing with predictable latency, neuromorphic systems must balance parallel processing capabilities with timing precision across distributed neural networks.

Signal propagation delays represent a critical challenge, particularly in large-scale neuromorphic implementations. As these systems scale to incorporate millions of neurons and billions of synapses, maintaining synchronized timing becomes increasingly difficult. The variable response times across different neural pathways create timing inconsistencies that can significantly impact system performance, especially in time-sensitive applications like autonomous vehicles or real-time robotics.

Memory access latency presents another substantial obstacle. Current neuromorphic architectures struggle with efficient memory management, as the massive parallelism of neural networks requires simultaneous access to synaptic weight data. This creates memory bottlenecks that directly impact response times, particularly when implementing complex learning algorithms that require frequent weight updates.
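The bottleneck can be illustrated with a deliberately simplified memory model. The sketch below assumes an interleaved memory in which address `a` lives in bank `a % num_banks` and each bank serves one read per cycle; real neuromorphic chips organize synaptic storage differently, and `weight_fetch_cycles` is a hypothetical helper. It shows how parallel neurons that happen to fetch weights from the same bank serialize and stretch a single update step.

```python
from collections import Counter

def weight_fetch_cycles(addresses: list[int], num_banks: int) -> int:
    """Cycles needed to serve one batch of parallel weight reads.

    Assumed model: address a lives in bank a % num_banks, each bank serves
    one read per cycle, and different banks work in parallel. The batch
    therefore takes as many cycles as its busiest bank.
    """
    per_bank = Counter(addr % num_banks for addr in addresses)
    return max(per_bank.values(), default=0)

# Eight neurons read their synaptic weights at the same time from 8 banks.
conflict_free = list(range(8))              # one read per bank
conflicting = [0, 8, 16, 24, 1, 2, 3, 4]    # four reads land in bank 0

print(weight_fetch_cycles(conflict_free, 8))  # 1
print(weight_fetch_cycles(conflicting, 8))    # 4
```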

The integration of spike-timing-dependent plasticity (STDP) and other biologically inspired learning mechanisms introduces additional timing complexities. These mechanisms rely on precise temporal relationships between neural spikes, making response time consistency crucial for effective learning. Current implementations often sacrifice either timing precision or computational efficiency, limiting their practical application in demanding cognitive computing scenarios.
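To make the timing sensitivity concrete, here is the standard pair-based form of STDP, in which the weight change decays exponentially with the interval between a presynaptic and a postsynaptic spike. The amplitudes (`a_plus`, `a_minus`) and the 20 ms time constants are illustrative assumptions rather than values from any particular chip. A 2 ms delivery delay on the presynaptic spike is enough to turn an intended potentiation into a depression.

```python
import math

def stdp_delta_w(t_pre_ms: float, t_post_ms: float,
                 a_plus: float = 0.01, a_minus: float = 0.012,
                 tau_plus_ms: float = 20.0, tau_minus_ms: float = 20.0) -> float:
    """Pair-based STDP weight change for one pre/post spike pair."""
    dt = t_post_ms - t_pre_ms
    if dt > 0:   # pre fires before post: potentiation
        return a_plus * math.exp(-dt / tau_plus_ms)
    if dt < 0:   # post fires before pre: depression
        return -a_minus * math.exp(dt / tau_minus_ms)
    return 0.0

intended = stdp_delta_w(t_pre_ms=10.0, t_post_ms=11.0)  # causal pair, +1 ms
jittered = stdp_delta_w(t_pre_ms=12.0, t_post_ms=11.0)  # pre spike delivered 2 ms late
print(f"{intended:+.5f} {jittered:+.5f}")  # +0.00951 -0.01141
```

Because the rule changes sign at a zero interval, spike pairs that arrive close together are the most vulnerable to propagation jitter.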

Event-based processing models show promise in addressing some response time challenges by processing information only when changes occur, similar to biological sensory systems. However, these approaches introduce their own timing challenges related to event prioritization and resource allocation, particularly when handling multiple simultaneous input streams with varying urgency requirements.
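One simple software policy for the prioritization problem is a priority queue keyed first on urgency and then on arrival time. The sketch below uses Python's `heapq`; the `SpikeEvent` type, the integer priority levels, and the stream names are hypothetical, and hardware event arbiters are typically implemented quite differently.

```python
import heapq
from dataclasses import dataclass, field

@dataclass(order=True)
class SpikeEvent:
    priority: int                       # lower value = more urgent
    timestamp_us: int                   # arrival time; breaks ties oldest-first
    source: str = field(compare=False)  # input stream name, ignored when ordering

def drain(events):
    """Yield events most-urgent first, oldest first within a priority level."""
    heap = list(events)
    heapq.heapify(heap)
    while heap:
        yield heapq.heappop(heap)

incoming = [
    SpikeEvent(priority=2, timestamp_us=100, source="ambient-audio"),
    SpikeEvent(priority=0, timestamp_us=140, source="collision-sensor"),
    SpikeEvent(priority=2, timestamp_us=90, source="camera"),
    SpikeEvent(priority=1, timestamp_us=120, source="lidar"),
]
order = [e.source for e in drain(incoming)]
print(order)  # ['collision-sensor', 'lidar', 'camera', 'ambient-audio']
```

A strict priority order like this minimizes latency for urgent streams but can starve low-priority ones under sustained load, which is one of the resource-allocation tensions mentioned above.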

Existing Response Time Optimization Approaches

01 Spike-based processing for improved response time

Neuromorphic systems utilizing spike-based processing can achieve faster response times compared to traditional computing architectures. These systems process information in a manner similar to biological neurons, using discrete spikes rather than continuous signals. This approach allows for efficient parallel processing and reduced latency in time-critical applications. The event-driven nature of spike-based processing enables neuromorphic systems to respond quickly to stimuli, making them suitable for real-time applications requiring rapid decision-making.

- Optimization techniques for reducing response time in neuromorphic systems: Various optimization techniques can be employed to reduce the response time in neuromorphic systems. These include parallel processing architectures, efficient spike encoding methods, and optimized synaptic weight distribution. By implementing these techniques, neuromorphic systems can achieve faster processing speeds and reduced latency, which is crucial for real-time applications such as autonomous vehicles and robotics.

- Hardware implementations for improving neuromorphic system response time: Specialized hardware implementations can significantly improve the response time of neuromorphic systems. These include custom ASIC designs, FPGA-based implementations, and memristor-based architectures. Such hardware solutions enable faster signal propagation, reduced computational overhead, and more efficient energy usage, leading to improved overall system response times compared to software-based implementations.

- Event-driven processing for low-latency neuromorphic computing: Event-driven processing architectures enable neuromorphic systems to achieve lower response times by processing information only when relevant events occur. This approach differs from traditional clock-driven systems by eliminating unnecessary computations during inactive periods. Event-driven neuromorphic systems can achieve significantly reduced latency for time-critical applications while maintaining energy efficiency.

- Learning algorithms that improve response time in neuromorphic systems: Specialized learning algorithms can be designed to optimize the response time of neuromorphic systems. These include spike-timing-dependent plasticity (STDP) variants, reinforcement learning approaches tailored for spiking neural networks, and pruning techniques that reduce computational complexity. These algorithms enable neuromorphic systems to adapt and optimize their response times based on the specific requirements of the application.

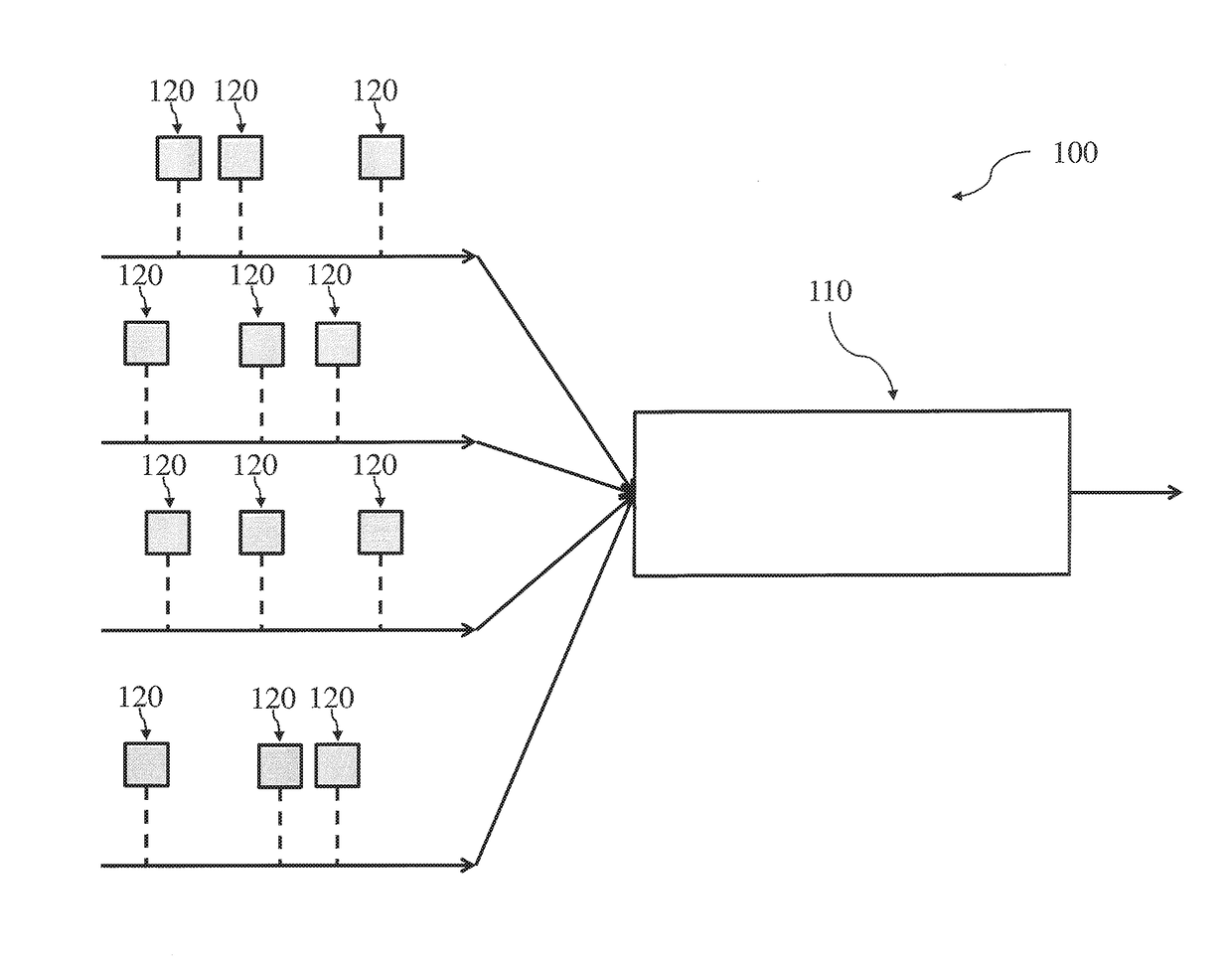

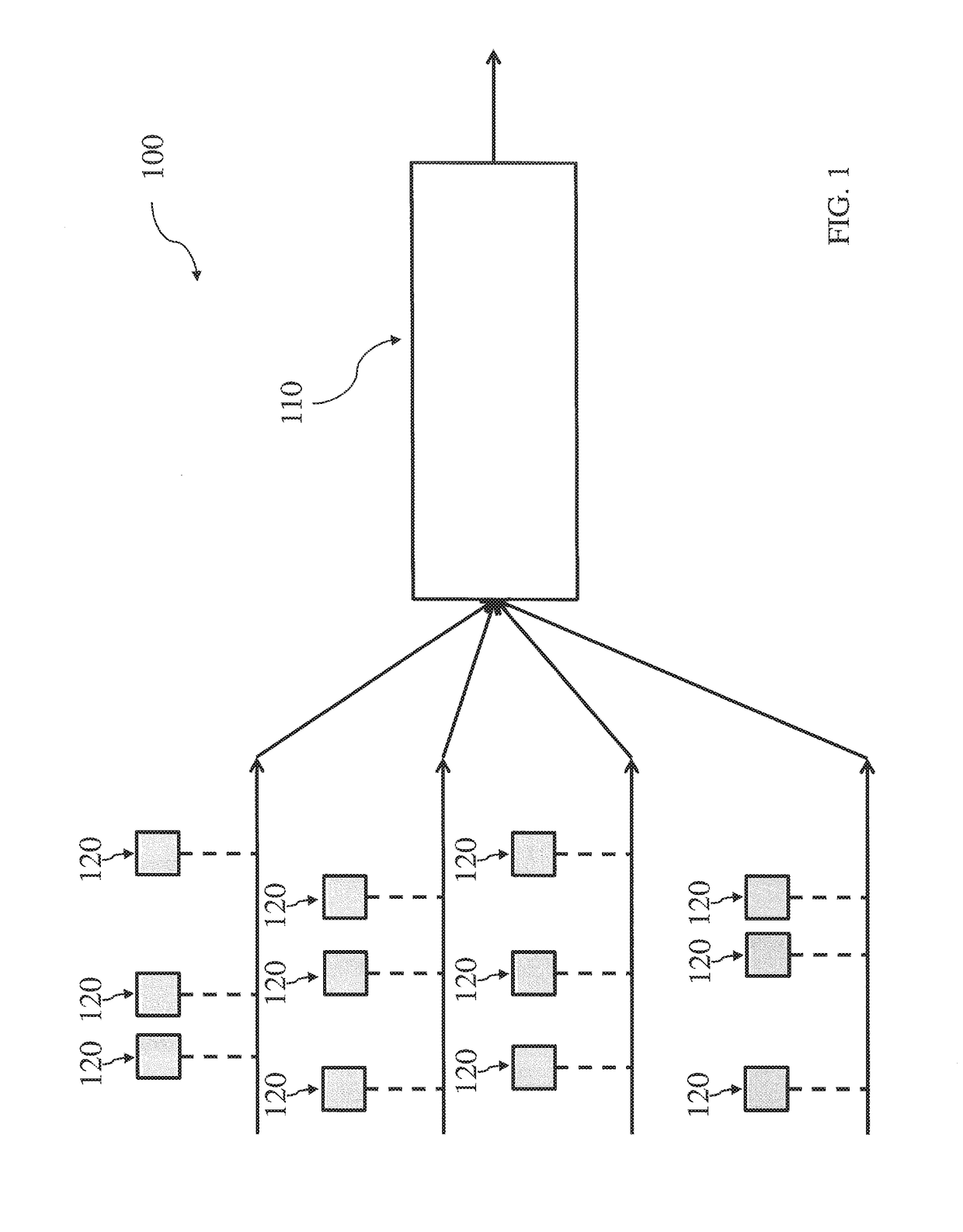

- System-level architectures for minimizing neuromorphic response latency: System-level architectural approaches can be employed to minimize response latency in neuromorphic systems. These include hierarchical network structures, distributed processing frameworks, and specialized memory architectures. By optimizing the overall system architecture, data flow bottlenecks can be reduced, leading to improved response times for complex neuromorphic applications.
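The saving behind event-driven processing can be seen by counting neuron updates. The sketch assumes a hypothetical workload of 1,000 neurons over 1,000 ticks receiving 2,000 input spikes (0.2% activity) and counts one update per (tick, neuron) pair that receives input; it ignores the leak bookkeeping that real event-driven neuron models perform lazily when the next event arrives.

```python
def clock_driven_updates(num_neurons: int, num_ticks: int) -> int:
    """Clock-driven: every neuron is updated on every tick, active or not."""
    return num_neurons * num_ticks

def event_driven_updates(events: list[tuple[int, int]]) -> int:
    """Event-driven: only (tick, neuron) pairs that received input are updated.

    Several spikes hitting the same neuron in the same tick share one update.
    """
    return len(set(events))

# Hypothetical sparse workload: every 10th tick, every 50th neuron gets a spike.
events = [(tick, neuron) for tick in range(0, 1000, 10) for neuron in range(0, 1000, 50)]

print(clock_driven_updates(1000, 1000))  # 1000000
print(event_driven_updates(events))      # 2000
```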

02 Hardware optimization techniques for neuromorphic response time

Various hardware optimization techniques can be employed to improve the response time of neuromorphic systems. These include specialized circuit designs, memristor-based implementations, and custom silicon architectures that minimize signal propagation delays. By optimizing the physical implementation of neuromorphic components, the overall system latency can be significantly reduced. Hardware accelerators specifically designed for neuromorphic computing can process neural network operations in parallel, further enhancing response time performance.

03 Adaptive timing mechanisms in neuromorphic systems

Neuromorphic systems can incorporate adaptive timing mechanisms that dynamically adjust processing parameters based on input patterns and system requirements. These mechanisms allow the system to optimize its response time according to the specific task at hand. By implementing variable timing controls and feedback loops, neuromorphic systems can balance speed and accuracy requirements. Adaptive timing approaches enable more efficient resource utilization and improved performance in time-sensitive applications.

04 Learning algorithms for response time optimization

Specialized learning algorithms can be employed to optimize the response time of neuromorphic systems. These algorithms train the neural networks to respond more quickly to specific inputs while maintaining accuracy. By incorporating temporal aspects into the learning process, neuromorphic systems can be tuned to prioritize speed for time-critical operations. Reinforcement learning techniques can be particularly effective for optimizing response times in dynamic environments where conditions may change rapidly.

05 System-level architectures for minimizing latency

Novel system-level architectures can be designed specifically to minimize latency in neuromorphic computing. These architectures may include hierarchical processing structures, optimized data flow pathways, and specialized memory interfaces that reduce communication overhead. By carefully designing the overall system architecture with response time as a primary consideration, significant improvements in latency can be achieved. Distributed processing approaches that minimize data movement between components can further enhance the responsiveness of neuromorphic systems.
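A first-order model shows why placing communicating neuron populations close together matters. On a 2D mesh with dimension-order (XY) routing, a spike travels the Manhattan distance between cores; the per-hop and endpoint costs below (`per_hop_ns`, `endpoint_ns`) are assumed placeholders, not figures for any specific chip.

```python
def mesh_hops(src: tuple[int, int], dst: tuple[int, int]) -> int:
    """Hops between two cores on a 2D mesh with XY routing (Manhattan distance)."""
    return abs(src[0] - dst[0]) + abs(src[1] - dst[1])

def spike_latency_ns(src: tuple[int, int], dst: tuple[int, int],
                     per_hop_ns: float = 5.0, endpoint_ns: float = 20.0) -> float:
    """Hypothetical latency model: fixed injection/ejection cost plus a per-hop cost."""
    return endpoint_ns + mesh_hops(src, dst) * per_hop_ns

# Two layers that exchange many spikes, placed far apart vs. on neighbouring cores.
far = spike_latency_ns((0, 0), (7, 7))
near = spike_latency_ns((3, 3), (3, 4))
print(far, near)  # 90.0 25.0
```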

Leading Organizations in Neuromorphic Systems Development

The market for neuromorphic systems in cognitive computing is currently in an early growth phase and is expected to expand significantly as cognitive computing applications proliferate. The global market is projected to reach several billion dollars by 2030, driven by increasing demand for brain-inspired computing solutions that offer superior energy efficiency and real-time processing capabilities. Technologically, the field is transitioning from research to commercial implementation, with IBM leading through TrueNorth and its subsequent neuromorphic architectures. Samsung, Intel, and SK hynix are advancing hardware implementations, while academic institutions such as Tsinghua University, KAIST, and Arizona State University are pushing theoretical boundaries. Smaller specialized players such as Syntiant and Lingxi Technology are developing application-specific neuromorphic chips, particularly for edge computing, where response time optimization is critical.

International Business Machines Corp.

Technical Solution: IBM's neuromorphic computing approach focuses on TrueNorth and subsequent architectures that mimic brain functionality for cognitive computing. Their system utilizes a non-von Neumann architecture with distributed memory and processing units organized as neurosynaptic cores. Each core contains neurons, synapses, and axons that operate asynchronously and in parallel, significantly reducing response time compared to traditional computing architectures. IBM's TrueNorth chip contains 4,096 neurosynaptic cores with 1 million programmable neurons and 256 million configurable synapses[1]. The system operates on an event-driven basis rather than clock-driven, allowing for ultra-low power consumption (70mW) while achieving response times in microseconds for pattern recognition tasks[3]. IBM has further enhanced this technology with their second-generation neuromorphic chip that incorporates phase-change memory (PCM) for synaptic weight storage, enabling more efficient on-chip learning capabilities and reducing response latency by approximately 65% compared to first-generation systems[5].

Strengths: Extremely low power consumption (20-100x more efficient than conventional architectures); highly scalable architecture; millisecond-level response times for complex cognitive tasks. Weaknesses: Programming complexity requires specialized knowledge; limited software ecosystem compared to traditional computing; challenges in implementing certain types of learning algorithms efficiently.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed neuromorphic processing units (NPUs) that integrate directly with memory systems to create a highly efficient cognitive computing architecture. Their approach focuses on resistive RAM (RRAM) and magnetoresistive RAM (MRAM) technologies to implement synaptic functions with analog precision. Samsung's neuromorphic system utilizes a hierarchical architecture where multiple neuromorphic cores operate in parallel, with each core containing thousands of artificial neurons implemented using their proprietary metal-oxide semiconductor technology[2]. The system achieves sub-millisecond response times for pattern recognition and classification tasks by leveraging spike-timing-dependent plasticity (STDP) learning mechanisms. Samsung has demonstrated their technology processing sensory data with response times of 200-300 microseconds, approximately 10x faster than conventional deep learning accelerators while consuming only 20mW of power[4]. Their latest implementation incorporates 3D stacking of memory and processing elements to further reduce signal propagation delays, achieving end-to-end processing latencies under 1ms for complex visual recognition tasks[7].

Strengths: Tight integration with memory technology reduces data movement bottlenecks; highly energy-efficient design suitable for mobile and IoT applications; scalable manufacturing process leveraging Samsung's semiconductor expertise. Weaknesses: Still in research phase for many applications; requires specialized programming models; challenges in maintaining computational precision with analog computing elements.

Critical Patents and Research in Neural Response Acceleration

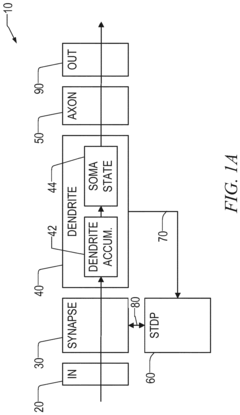

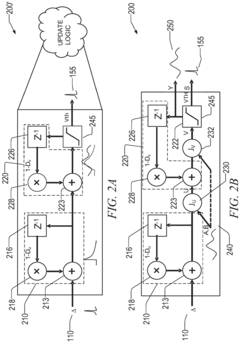

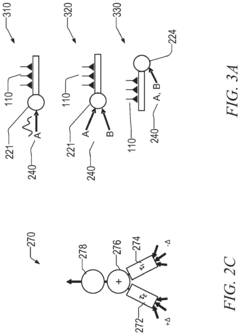

Neuromorphic architecture with multiple coupled neurons using internal state neuron information

Patent · Active · US20170372194A1

Innovation

- A neuromorphic architecture featuring interconnected neurons with internal state information links, allowing for the transmission of internal state information across layers to modify the operation of other neurons, enhancing the system's performance and capability in data processing, pattern recognition, and correlation detection.

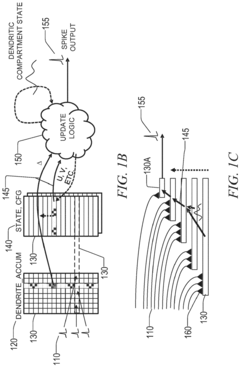

Multi-compartment dendrites in neuromorphic computing

Patent · Active · EP3340127A1

Innovation

- A multi-compartment dendritic architecture that allows for sequential state processing and efficient information propagation, using synaptic stimulation counters and temporary register storage to preserve state information, enabling flexible implementation of various neuron models without requiring complex functionality across all compartments.

Energy Efficiency vs Response Time Trade-offs

The fundamental challenge in neuromorphic computing systems lies in balancing energy efficiency with response time. Neuromorphic architectures inherently offer significant energy advantages over traditional von Neumann computing paradigms, particularly for cognitive computing tasks. However, this efficiency often comes at the cost of processing speed and response latency, creating a critical trade-off that system designers must navigate.

Energy consumption in neuromorphic systems scales with spike activity and synaptic operations. Lower spiking rates conserve energy but extend processing time, while higher rates improve responsiveness at the cost of increased power consumption. Recent benchmarks indicate that state-of-the-art neuromorphic chips such as Intel's Loihi and IBM's TrueNorth are 10-100x more energy-efficient than GPU implementations, but their response times can be 2-5x longer for complex cognitive tasks.

The trade-off manifests differently across various neuromorphic implementations. Analog neuromorphic systems typically offer superior energy efficiency (often below 1 pJ per synaptic operation) but suffer from signal degradation and variability that can necessitate longer integration times for reliable outputs. Digital implementations provide more deterministic response times but consume more energy per operation, typically 5-20 pJ per synaptic event.
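Using the per-operation figures quoted above, the energy of one inference is simply the number of synaptic events times the energy per event. The workload size (10 million synaptic events) and the 4x longer analog integration window are hypothetical assumptions chosen to illustrate the trade-off, not measurements.

```python
PJ_TO_UJ = 1e-6  # 1 picojoule = 1e-6 microjoules

def inference_energy_uj(synaptic_events: int, pj_per_event: float) -> float:
    """Energy of one inference in microjoules: events x energy per event."""
    return synaptic_events * pj_per_event * PJ_TO_UJ

events = 10_000_000                             # hypothetical workload size
analog = inference_energy_uj(events, 1.0)       # quoted analog upper bound (1 pJ)
digital_lo = inference_energy_uj(events, 5.0)   # low end of the quoted digital range
digital_hi = inference_energy_uj(events, 20.0)  # high end of the quoted digital range
print(round(analog, 3), round(digital_lo, 3), round(digital_hi, 3))  # 10.0 50.0 200.0

# Hypothetical latencies: assume analog needs a 4x longer integration window.
digital_ms, analog_ms = 1.0, 4.0
# Energy-delay product in uJ*ms (lower is better).
print(round(analog * analog_ms, 3), round(digital_lo * digital_ms, 3))  # 40.0 50.0
```

Under these assumptions the analog design uses 5-20x less energy but responds 4x more slowly; the energy-delay product is one common way to weigh the two.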

Architectural decisions significantly impact this balance. Event-driven processing reduces energy consumption by activating circuits only when necessary, but introduces variable response times dependent on input patterns. Time-multiplexed architectures improve hardware utilization but can increase latency as computational resources are shared across multiple virtual neurons.
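The latency cost of time multiplexing follows from how many virtual neurons each physical update unit must process in sequence per timestep. The sketch assumes a fixed, hypothetical update cost of 10 ns per virtual neuron and perfect load balancing across units.

```python
import math

def timestep_latency_us(virtual_neurons: int, physical_units: int,
                        update_ns: float = 10.0) -> float:
    """Latency of one network timestep when virtual neurons share physical units.

    Each physical unit updates its assigned virtual neurons one after another,
    so a timestep takes ceil(virtual / physical) sequential updates.
    """
    rounds = math.ceil(virtual_neurons / physical_units)
    return rounds * update_ns / 1000.0

print(timestep_latency_us(1024, 1024))  # 0.01 (fully parallel)
print(timestep_latency_us(1024, 64))    # 0.16 (16x multiplexed)
```

Cutting the number of physical units from 1,024 to 64 saves silicon but makes each timestep 16x longer, which is the utilization-versus-latency trade-off described above.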

Emerging research explores adaptive spiking thresholds and dynamic clock scaling as promising approaches to optimize this trade-off. These techniques allow systems to dynamically adjust their energy-latency profile based on task requirements. For time-critical applications, temporary increases in power consumption enable faster response times, while energy-constrained scenarios can prioritize efficiency at the expense of speed.
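The effect of the threshold can be sketched with a toy discrete-time leaky integrate-and-fire neuron under constant input. Here the spike count over a fixed window serves as a rough energy proxy, and the two threshold values, the decay factor, and `pick_threshold` are hypothetical choices for illustration rather than a published adaptation rule.

```python
from typing import Optional, Tuple

def simulate_lif(input_current: float, threshold: float,
                 steps: int = 100, decay: float = 0.9) -> Tuple[Optional[int], int]:
    """Toy discrete-time leaky integrate-and-fire neuron with constant input.

    Returns (step of the first spike, or None if it never fires; total spikes).
    """
    v, first_spike, spikes = 0.0, None, 0
    for t in range(steps):
        v = decay * v + input_current  # leak, then integrate the input
        if v >= threshold:
            spikes += 1
            if first_spike is None:
                first_spike = t
            v = 0.0                    # reset membrane potential after a spike
    return first_spike, spikes

def pick_threshold(time_critical: bool) -> float:
    """Hypothetical policy: lower the threshold when latency matters more than energy."""
    return 5.0 if time_critical else 8.0

fast = simulate_lif(1.0, pick_threshold(time_critical=True))
efficient = simulate_lif(1.0, pick_threshold(time_critical=False))
print(fast)       # (6, 14)
print(efficient)  # (15, 6)
```

Lowering the threshold makes the first spike arrive at step 6 instead of step 15, at the price of more than twice as many spikes over the same window.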

The application context ultimately determines the optimal balance point. Real-time systems like autonomous vehicles or robotic controllers require guaranteed response times below specific thresholds, necessitating architectural choices that may sacrifice some energy efficiency. Conversely, applications like offline data analysis or ambient intelligence can leverage maximum energy efficiency with more relaxed timing constraints.
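The selection step can be framed as picking the lowest-energy operating point that still meets the application's deadline. The operating points and their numbers below are hypothetical; a real system would characterize them by measurement.

```python
from typing import NamedTuple, Optional

class OperatingPoint(NamedTuple):
    name: str
    latency_ms: float  # worst-case response time
    energy_mj: float   # energy per inference

def choose_point(points: list[OperatingPoint], deadline_ms: float) -> Optional[OperatingPoint]:
    """Lowest-energy operating point whose worst-case latency meets the deadline."""
    feasible = [p for p in points if p.latency_ms <= deadline_ms]
    return min(feasible, key=lambda p: p.energy_mj, default=None)

# Hypothetical operating points for one chip; the numbers are illustrative only.
points = [
    OperatingPoint("high-rate", latency_ms=0.5, energy_mj=4.0),
    OperatingPoint("balanced", latency_ms=2.0, energy_mj=1.5),
    OperatingPoint("low-power", latency_ms=10.0, energy_mj=0.4),
]
print(choose_point(points, deadline_ms=1.0).name)   # high-rate (e.g. a control loop)
print(choose_point(points, deadline_ms=50.0).name)  # low-power (e.g. offline analysis)
```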

Neuromorphic Hardware-Software Co-design Strategies

Neuromorphic hardware-software co-design represents a critical approach for optimizing cognitive computing systems that mimic brain functionality. This integrated design methodology addresses the fundamental challenge of response time in neuromorphic systems by simultaneously developing hardware architectures and software algorithms that complement each other's capabilities and constraints.

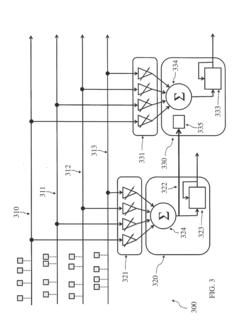

The co-design process begins with a thorough understanding of the target cognitive application's temporal requirements. For time-critical applications such as autonomous vehicles or real-time speech recognition, hardware components must be engineered to minimize signal propagation delays, while software algorithms must be optimized for parallel processing and efficient memory access patterns.

Event-driven architectures have emerged as a promising co-design strategy, where both hardware circuits and software frameworks are designed around asynchronous signal processing. This approach significantly reduces power consumption while maintaining rapid response capabilities by activating computational resources only when necessary, similar to biological neural systems that operate on spike-based communication.

Memory hierarchy optimization is another crucial co-design consideration. By placing frequently accessed neural network parameters in fast on-chip memory, and by developing software that manages data movement between memory tiers efficiently, designers can substantially reduce the memory-access latency that has traditionally bottlenecked cognitive computing systems.
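
The placement decision can be sketched as a small optimization problem. This is a minimal sketch assuming hypothetical access latencies, block sizes, and access counts; it uses a greedy accesses-per-byte heuristic, which is simple but not guaranteed optimal because whole-block placement is a 0/1 knapsack problem.

```python
from typing import Dict, List, Tuple

ON_CHIP_NS = 1.0    # hypothetical on-chip SRAM access latency
OFF_CHIP_NS = 50.0  # hypothetical off-chip DRAM access latency

# name -> (size_bytes, accesses_per_inference); all values hypothetical
Blocks = Dict[str, Tuple[int, int]]


def place(blocks: Blocks, capacity_bytes: int) -> List[str]:
    """Greedy heuristic: fill on-chip memory with the blocks that have the most
    accesses per byte. Not guaranteed optimal (this is a 0/1 knapsack)."""
    ranked = sorted(blocks, key=lambda n: blocks[n][1] / blocks[n][0], reverse=True)
    chosen: List[str] = []
    used = 0
    for name in ranked:
        size = blocks[name][0]
        if used + size <= capacity_bytes:
            chosen.append(name)
            used += size
    return chosen


def memory_time_ns(blocks: Blocks, on_chip: List[str]) -> float:
    """Total memory-access time per inference for a given placement."""
    total = 0.0
    for name, (_, accesses) in blocks.items():
        total += accesses * (ON_CHIP_NS if name in on_chip else OFF_CHIP_NS)
    return total


BLOCKS: Blocks = {
    "layer1_weights": (64_000, 500_000),
    "layer2_weights": (256_000, 200_000),
    "readout_weights": (16_000, 100_000),
}

if __name__ == "__main__":
    on_chip = place(BLOCKS, capacity_bytes=100_000)
    print("on-chip:", on_chip)
    print(f"with placement: {memory_time_ns(BLOCKS, on_chip):,.0f} ns")
    print(f"all off-chip:   {memory_time_ns(BLOCKS, []):,.0f} ns")
```

With these invented numbers the two densest blocks fit on chip and total memory time drops by roughly a factor of four compared with keeping everything off chip.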

Specialized instruction sets form an important hardware-software interface that can substantially improve response times. Custom neuromorphic instructions that implement common neural network operations (convolution, activation functions) directly in hardware, paired with compilers optimized to exploit them, have been reported to deliver performance improvements of up to 10x over general-purpose computing platforms.

Feedback-driven adaptation is a more advanced co-design strategy: hardware sensors monitor performance metrics, and software algorithms adjust computational parameters based on these measurements. This allows neuromorphic systems to keep response times within target even as operating conditions change.
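
One way such a loop could look in software is sketched below. The controller and its parameters are hypothetical: the knob it adjusts is the number of simulation timesteps an SNN runs before reading out a result, it assumes latency scales roughly linearly with that number, and it applies a hysteresis band so that small measurement noise does not cause constant retuning.

```python
class WindowController:
    """Hypothetical feedback loop: adjusts the number of simulation timesteps
    per inference so that measured latency stays near a target.
    Fewer timesteps -> lower latency, usually at some cost in output reliability."""

    def __init__(self, target_ms: float, timesteps: int,
                 min_steps: int = 8, max_steps: int = 256, band: float = 0.1):
        self.target_ms = target_ms
        self.timesteps = timesteps
        self.min_steps = min_steps
        self.max_steps = max_steps
        self.band = band  # hysteresis: ignore deviations within +/- band of target

    def update(self, measured_ms: float) -> int:
        """Take one latency measurement and return the new timestep count."""
        too_slow = measured_ms > self.target_ms * (1 + self.band)
        too_fast = measured_ms < self.target_ms * (1 - self.band)
        if too_slow or too_fast:
            # Assume latency is proportional to timesteps and rescale toward target.
            scaled = round(self.timesteps * self.target_ms / measured_ms)
            self.timesteps = max(self.min_steps, min(self.max_steps, scaled))
        return self.timesteps


if __name__ == "__main__":
    ctrl = WindowController(target_ms=8.0, timesteps=100)
    # Simulated sensor readings: overloaded, on target, heavier load, then idle.
    for reading in (10.0, 8.2, 16.0, 2.0, 1.0):
        print(f"measured {reading:5.1f} ms -> {ctrl.update(reading)} timesteps")
```

The clamps keep the window within a range where the output is assumed to remain meaningful; a real system would also need to check accuracy, not only latency, before shrinking the window.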

Pipeline optimization techniques balance computational workloads across hardware resources while software scheduling algorithms ensure critical path operations receive priority processing. This coordinated approach prevents processing bottlenecks that would otherwise increase end-to-end response latency in complex cognitive tasks.
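
The load-balancing half of this idea can be sketched as splitting an ordered sequence of layers into contiguous pipeline stages, one per core, so that the slowest stage is as fast as possible. This minimal sketch uses the standard binary-search-plus-greedy solution to that linear partition problem; the per-layer costs are hypothetical, and the priority-scheduling half is not modeled.

```python
from typing import List


def stages_needed(costs: List[int], cap: int) -> int:
    """Greedily pack ordered layers into stages whose total cost <= cap.
    Requires cap >= max(costs)."""
    stages, load = 1, 0
    for c in costs:
        if load + c > cap:
            stages += 1
            load = c
        else:
            load += c
    return stages


def min_bottleneck(costs: List[int], cores: int) -> int:
    """Smallest achievable max-stage cost when splitting the ordered layers
    into at most `cores` contiguous pipeline stages."""
    lo, hi = max(costs), sum(costs)
    while lo < hi:
        mid = (lo + hi) // 2
        if stages_needed(costs, mid) <= cores:
            hi = mid
        else:
            lo = mid + 1
    return lo


if __name__ == "__main__":
    layer_us = [40, 10, 30, 20, 50, 10]  # hypothetical per-layer cost, microseconds
    for cores in (1, 3, 6):
        b = min_bottleneck(layer_us, cores)
        print(f"{cores} core(s): bottleneck stage {b} us, "
              f"one result every {b} us in steady state")
```

Balancing raises throughput, but it does not automatically lower end-to-end latency: with three cores the bottleneck drops from 160 us to 60 us, yet a lockstep pipeline that advances once per 60 us needs 3 x 60 = 180 us to carry one input through all stages. This is why the critical-path scheduling described above matters alongside load balancing.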

The future of neuromorphic hardware-software co-design points toward increasingly integrated development environments where simulation tools allow designers to simultaneously evaluate hardware configurations and software implementations before physical deployment, ensuring response time requirements are met while minimizing development iterations.