Optimizing Neuromorphic Computing for Sensor Networks

SEP 8, 2025 · 10 MIN READ

Neuromorphic Computing Evolution and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. Since its conceptual inception in the late 1980s by Carver Mead, this field has evolved from theoretical frameworks to practical implementations that mimic the brain's parallel processing capabilities and energy efficiency. The trajectory of neuromorphic computing has been marked by significant milestones, including the development of silicon neurons, spike-based communication protocols, and adaptive learning algorithms that emulate synaptic plasticity.

The evolution of neuromorphic systems has accelerated dramatically in the past decade, driven by advancements in materials science, integrated circuit design, and neuroscience. Early implementations focused primarily on mimicking basic neural functions, while contemporary systems incorporate sophisticated mechanisms for spike-timing-dependent plasticity, homeostatic regulation, and hierarchical information processing. This progression has enabled increasingly complex applications, transitioning from simple pattern recognition tasks to adaptive sensor processing and real-time decision-making capabilities.

In the context of sensor networks, neuromorphic computing presents a compelling solution to fundamental challenges of energy consumption, latency, and adaptability. Traditional computing architectures struggle with the continuous streams of heterogeneous data generated by distributed sensor arrays, often requiring substantial power for data transmission and centralized processing. The neuromorphic approach offers an alternative paradigm where computation occurs at the edge, with event-driven processing that activates only when meaningful changes in sensor data occur.
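The event-driven idea described above can be illustrated with a send-on-delta encoder, which emits an event only when a reading has moved far enough from the last reported level. This is a minimal sketch rather than any vendor's method; the function name `send_on_delta`, the threshold value, and the sample readings are all assumptions chosen for illustration.

```python
from typing import Iterable, Iterator, Tuple


def send_on_delta(samples: Iterable[float], threshold: float) -> Iterator[Tuple[int, int]]:
    """Yield (sample_index, polarity) when the signal moves by at least `threshold`.

    polarity is +1 for an increase and -1 for a decrease, measured against the
    last value that triggered an event (the reference level).
    """
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    iterator = iter(samples)
    try:
        reference = next(iterator)  # first sample sets the reference, emits nothing
    except StopIteration:
        return
    for index, value in enumerate(iterator, start=1):
        delta = value - reference
        if abs(delta) >= threshold:
            yield index, (1 if delta > 0 else -1)
            reference = value  # re-arm around the new level


# Hypothetical temperature readings: small jitter produces no events.
readings = [20.0, 20.1, 20.05, 20.6, 20.7, 20.65, 19.9, 19.95]
events = list(send_on_delta(readings, threshold=0.5))
print(events)  # [(3, 1), (6, -1)]
```

Eight samples collapse to two events here, which is the property that lets downstream computation and radio traffic stay idle while the signal is quiet. The threshold trades sensitivity against event rate and would need tuning per sensor.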

The primary objectives for optimizing neuromorphic computing in sensor network applications encompass several dimensions. First, energy efficiency must be maximized to enable long-term deployment in resource-constrained environments, targeting power consumption reductions of several orders of magnitude compared to conventional systems. Second, adaptability mechanisms must be enhanced to accommodate dynamic environmental conditions without requiring explicit reprogramming or human intervention.

Third, scalability solutions must be developed to seamlessly integrate additional sensors and processing nodes without compromising system performance or requiring architectural overhauls. Fourth, latency must be minimized through optimized spike encoding schemes and network topologies that facilitate rapid information propagation and decision-making. Finally, reliability must be ensured through fault-tolerant designs that maintain functionality despite potential component failures in deployed sensor nodes.

The convergence of these objectives presents a multifaceted research challenge that spans hardware design, algorithm development, and system integration. Success in this domain would revolutionize applications ranging from environmental monitoring and infrastructure management to healthcare diagnostics and autonomous vehicle perception systems.

Market Analysis for Sensor Network Applications

The sensor network market is experiencing robust growth, driven by the increasing adoption of IoT technologies across various industries. The global sensor network market was valued at approximately $57.77 billion in 2021 and is projected to reach $147.60 billion by 2028, growing at a CAGR of 14.3% during the forecast period. This growth is primarily fueled by the rising demand for real-time data analytics, automation, and remote monitoring capabilities in industrial, healthcare, agriculture, and smart city applications.
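As a quick consistency check on the figures quoted above, the compound annual growth rate implied by the two endpoint valuations over the seven years from 2021 to 2028 can be worked out directly. The symbols $V_{2021}$ and $V_{2028}$ are introduced here only for this calculation.

$$
\text{CAGR} = \left(\frac{V_{2028}}{V_{2021}}\right)^{1/7} - 1 = \left(\frac{147.60}{57.77}\right)^{1/7} - 1 \approx 0.143
$$

The result of about 14.3% matches the stated rate, so the three numbers are mutually consistent.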

Neuromorphic computing presents a transformative opportunity for sensor networks by addressing critical limitations in traditional computing architectures. The energy efficiency of neuromorphic systems is particularly relevant for sensor networks, where power consumption is a significant constraint. Current sensor nodes typically operate on limited battery power or energy harvesting mechanisms, making the ultra-low power consumption of neuromorphic chips (often 100-1000x more efficient than conventional processors) extremely attractive for extending operational lifespans.

The market for neuromorphic computing in sensor networks is still nascent but shows promising growth potential. Industry analysts estimate that the neuromorphic computing market will grow from $2.5 billion in 2021 to $8.9 billion by 2026, with sensor network applications representing a significant portion of this growth. Early adopters include industrial monitoring systems, environmental sensing networks, and advanced surveillance systems.

Key market segments for neuromorphic sensor networks include industrial IoT (IIoT), where predictive maintenance and real-time process optimization drive adoption; smart cities, where infrastructure monitoring and traffic management systems benefit from edge intelligence; and environmental monitoring, where remote deployment requires energy-efficient, autonomous operation.

Regional analysis indicates North America currently leads in neuromorphic sensor network adoption, followed by Europe and Asia-Pacific. However, the Asia-Pacific region is expected to witness the highest growth rate due to rapid industrialization and smart city initiatives in countries like China, Japan, and South Korea.

Customer demand is increasingly focused on edge intelligence capabilities that reduce bandwidth requirements and cloud dependencies. Neuromorphic computing addresses this need by enabling on-device processing of sensor data, reducing latency from 100-200 ms in cloud-based systems to under 10 ms for time-critical applications. This shift toward edge processing is expected to accelerate as 5G networks expand, creating complementary market opportunities.

Market challenges include the relatively high initial implementation costs, integration complexities with existing sensor infrastructure, and the need for specialized programming expertise. Despite these barriers, the compelling value proposition of neuromorphic computing for sensor networks suggests a market inflection point within the next 3-5 years as technology matures and costs decrease.

Technical Challenges in Neuromorphic Sensor Integration

The integration of neuromorphic computing with sensor networks presents significant technical challenges that must be addressed to realize the full potential of this technology. Current sensor integration approaches face limitations in power efficiency, real-time processing capabilities, and adaptability to dynamic environments. Traditional sensor networks rely on centralized processing architectures that create bottlenecks and increase latency, particularly problematic for time-sensitive applications.

Energy consumption remains a critical constraint, as conventional sensor nodes typically require frequent battery replacements or recharging. This limitation becomes particularly acute in remote deployment scenarios where maintenance access is restricted. The power requirements of traditional computing architectures are fundamentally misaligned with the energy constraints of distributed sensor networks.

Data conversion between analog sensor inputs and digital processing systems introduces significant inefficiencies. The analog-to-digital conversion process consumes substantial power and adds processing delays. Neuromorphic systems with analog or mixed-signal front ends can process analog signals directly and so offer a potential solution, but they face integration challenges with existing sensor technologies that were not designed with neuromorphic principles in mind.

Scalability presents another major hurdle. As sensor networks grow in size and complexity, the integration challenges multiply. Current neuromorphic hardware solutions often lack standardized interfaces and protocols for seamless sensor integration, resulting in custom implementations that impede widespread adoption and interoperability.

The heterogeneity of sensor types further complicates integration efforts. Different sensors operate at varying sampling rates, precision levels, and signal characteristics. Creating a unified neuromorphic processing framework that can efficiently handle this diversity remains an unsolved technical challenge, requiring adaptive interfaces and flexible processing architectures.

Noise management represents a significant technical obstacle. Sensor data inherently contains noise, and in spike-based processing, noise near an encoding threshold can be converted into spurious spikes that degrade downstream performance. Developing robust noise filtering mechanisms that preserve essential signal characteristics while eliminating interference is crucial for reliable operation.

Temporal synchronization between multiple sensors and neuromorphic processors presents additional complexity. Event-based sensors and processors operate asynchronously, making it difficult to correlate data from different sources accurately. This challenge becomes particularly evident in applications requiring precise timing coordination across distributed sensing nodes.
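One common way to approach the correlation problem described above is to timestamp every event against a shared clock and then merge the per-sensor streams into a single time-ordered stream. The sketch below assumes the clocks are already synchronized, which is the hard part in practice; the `Event` type, the sensor names, and the 50 µs coincidence window are hypothetical.

```python
import heapq
from typing import Iterable, Iterator, List, NamedTuple, Tuple


class Event(NamedTuple):
    timestamp_us: int  # microseconds on a shared clock (assumed already synchronized)
    sensor_id: str
    polarity: int


def merge_streams(*streams: Iterable[Event]) -> Iterator[Event]:
    """Merge per-sensor streams, each already sorted by time, into one ordered stream."""
    return heapq.merge(*streams, key=lambda e: e.timestamp_us)


def coincidences(events: Iterable[Event], window_us: int) -> List[Tuple[Event, Event]]:
    """Pair consecutive events from different sensors that fall within `window_us`."""
    pairs = []
    previous = None
    for event in events:
        if (previous is not None
                and event.sensor_id != previous.sensor_id
                and event.timestamp_us - previous.timestamp_us <= window_us):
            pairs.append((previous, event))
        previous = event
    return pairs


camera = [Event(100, "cam", 1), Event(900, "cam", -1)]
microphone = [Event(130, "mic", 1), Event(2000, "mic", 1)]
merged = list(merge_streams(camera, microphone))
pairs = coincidences(merged, window_us=50)
print([e.timestamp_us for e in merged])  # [100, 130, 900, 2000]
print(len(pairs))                        # 1
```

`heapq.merge` is lazy, so it can run over unbounded streams without buffering them. Any residual clock skew between nodes effectively widens or narrows the coincidence window, so the window has to be chosen with that skew in mind.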

The lack of standardized development tools and programming frameworks specifically designed for neuromorphic sensor integration creates barriers for researchers and engineers. Current tools often require specialized knowledge across multiple disciplines, limiting broader adoption and innovation in this emerging field.

Current Neuromorphic Solutions for Sensor Networks

01 Hardware optimization for neuromorphic systems

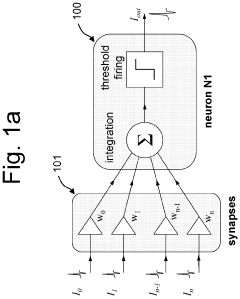

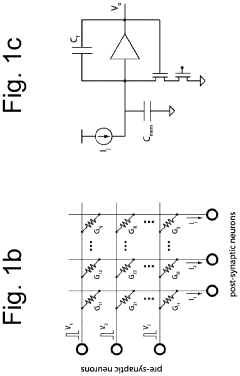

Optimization of hardware components in neuromorphic computing systems to improve performance and efficiency. This includes specialized circuit designs, memristive devices, and novel materials that mimic neural structures. These hardware optimizations enable more efficient processing of neural network operations while reducing power consumption and increasing computational speed for AI applications.

- Hardware optimization for neuromorphic systems: Hardware optimization techniques for neuromorphic computing systems focus on improving the physical components that mimic neural structures. These optimizations include specialized circuit designs, memristor-based architectures, and novel materials that enhance energy efficiency and computational density. By optimizing hardware components, neuromorphic systems can achieve better performance while maintaining low power consumption, which is crucial for edge computing applications and mobile devices.

- Neural network architecture optimization: Optimization of neural network architectures for neuromorphic computing involves designing specialized network topologies that efficiently map to neuromorphic hardware. This includes spiking neural networks (SNNs), reservoir computing models, and hybrid architectures that combine different neural processing paradigms. These optimized architectures enable more efficient information processing, improved learning capabilities, and better handling of temporal data patterns while maintaining biological plausibility.

- Learning algorithms for neuromorphic systems: Specialized learning algorithms for neuromorphic computing focus on adapting traditional machine learning approaches to work with spike-based information processing. These include spike-timing-dependent plasticity (STDP), reinforcement learning adaptations, and unsupervised learning methods optimized for neuromorphic hardware. These algorithms enable efficient on-chip learning, adaptation to new data patterns, and improved generalization capabilities while working within the constraints of neuromorphic architectures.

- Energy efficiency optimization techniques: Energy efficiency optimization for neuromorphic computing involves techniques to minimize power consumption while maintaining computational performance. These include sparse coding methods, event-driven processing, adaptive power management, and optimized spike encoding schemes. By focusing on energy efficiency, neuromorphic systems can operate for extended periods on limited power sources, making them suitable for IoT applications, autonomous systems, and other scenarios where energy constraints are significant.

- System-level integration and optimization: System-level optimization for neuromorphic computing addresses the integration of neuromorphic components into larger computing ecosystems. This includes optimized interfaces between neuromorphic processors and conventional computing systems, memory hierarchies tailored for neural processing, and software frameworks that efficiently map applications to neuromorphic hardware. These optimizations enable seamless deployment of neuromorphic solutions in real-world applications, improved scalability, and better utilization of heterogeneous computing resources.
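To make the spike-timing-dependent plasticity (STDP) item in the list above concrete, here is a minimal sketch of the textbook pair-based rule: a synapse is strengthened when the presynaptic spike precedes the postsynaptic one and weakened in the opposite order, with an exponential dependence on the time gap. The amplitudes, time constants, all-to-all pairing, and hard weight bounds are illustrative assumptions, not parameters of any specific chip.

```python
import math
from typing import Iterable


def stdp_delta_w(dt_ms: float, a_plus: float = 0.01, a_minus: float = 0.012,
                 tau_plus_ms: float = 20.0, tau_minus_ms: float = 20.0) -> float:
    """Weight change for one pre/post spike pair, where dt_ms = t_post - t_pre."""
    if dt_ms > 0:   # pre fired before post: potentiate
        return a_plus * math.exp(-dt_ms / tau_plus_ms)
    if dt_ms < 0:   # post fired before pre: depress
        return -a_minus * math.exp(dt_ms / tau_minus_ms)
    return 0.0


def apply_stdp(weight: float, pre_times_ms: Iterable[float], post_times_ms: Iterable[float],
               w_min: float = 0.0, w_max: float = 1.0) -> float:
    """Sum the update over all pre/post pairs, then clip to [w_min, w_max]."""
    post_times = list(post_times_ms)
    for t_pre in pre_times_ms:
        for t_post in post_times:
            weight += stdp_delta_w(t_post - t_pre)
    return min(w_max, max(w_min, weight))


# Pre spike at 10 ms, post spike at 15 ms: causal pairing, so the weight grows.
w = apply_stdp(0.5, pre_times_ms=[10.0], post_times_ms=[15.0])
print(w > 0.5)  # True
```

Because the update depends only on spike times local to the synapse, rules of this shape are a natural fit for on-chip learning, although hardware implementations typically use trace-based approximations rather than explicit pair enumeration.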

02 Energy efficiency and power optimization techniques

Methods to reduce power consumption in neuromorphic computing systems while maintaining computational performance. These techniques include low-power circuit designs, spike-based processing algorithms, and dynamic power management strategies. Energy-efficient neuromorphic systems are crucial for edge computing applications where power resources are limited.

03 Learning algorithms and training optimization

Advanced algorithms for training spiking neural networks and optimizing learning processes in neuromorphic systems. These include spike-timing-dependent plasticity (STDP), backpropagation-based methods adapted for spiking networks, and unsupervised learning techniques. Optimized learning algorithms improve the accuracy and efficiency of neuromorphic systems for pattern recognition and classification tasks.

04 System architecture and scalability improvements

Innovations in neuromorphic system architecture to enhance scalability and integration capabilities. These include modular designs, hierarchical network structures, and interconnection schemes that enable efficient communication between neural processing units. Optimized architectures allow for scaling neuromorphic systems from small edge devices to large-scale computing platforms.

05 Application-specific neuromorphic optimization

Tailoring neuromorphic computing systems for specific applications such as computer vision, natural language processing, and robotics. This includes specialized neural network topologies, custom processing elements, and application-specific memory hierarchies. Optimizing neuromorphic systems for particular use cases results in better performance, reduced latency, and improved accuracy for targeted applications.

Leading Companies and Research Institutions

Neuromorphic computing for sensor networks is in its early growth phase, with a market expected to expand significantly as IoT applications proliferate. The technology maturity varies across key players: IBM leads with established neuromorphic architectures, while Intel, Samsung, and SK Hynix focus on hardware optimization. Academic institutions like Tsinghua University and KAIST are advancing theoretical foundations. Syntiant and Skaichips represent specialized startups gaining traction with energy-efficient edge AI solutions. The competitive landscape shows a three-tier structure: major semiconductor companies investing in large-scale integration, research institutions developing novel algorithms, and agile startups targeting specific low-power applications, creating a dynamic ecosystem balancing innovation and commercialization potential.

International Business Machines Corp.

Technical Solution: IBM's neuromorphic computing approach for sensor networks centers on their TrueNorth and subsequent neuromorphic architectures. Their solution implements a spiking neural network (SNN) design that mimics biological neural systems, achieving exceptional energy efficiency at 70 milliwatts per chip while delivering 46 billion synaptic operations per second[1]. IBM's architecture employs a non-von Neumann computing paradigm where memory and processing are co-located, eliminating the traditional bottleneck in data transfer. For sensor networks specifically, IBM has developed specialized neuromorphic processors that can directly process sensor data at the edge with minimal power consumption. Their system incorporates event-driven processing where computations occur only when sensors detect relevant changes, dramatically reducing power requirements compared to traditional polling approaches[3]. IBM has also pioneered on-chip learning capabilities that allow the neuromorphic systems to adapt to changing sensor environments without requiring cloud connectivity, making them ideal for remote or bandwidth-constrained deployments[5].

Strengths: Extremely low power consumption (milliwatts vs. watts) making it ideal for battery-powered sensor nodes; event-driven architecture perfectly suited for sporadic sensor data; mature technology with multiple generations of development.

Weaknesses: Higher initial implementation complexity compared to traditional computing approaches; requires specialized programming paradigms; limited software ecosystem compared to conventional computing platforms.

Syntiant Corp.

Technical Solution: Syntiant has developed a specialized Neural Decision Processor (NDP) architecture optimized specifically for ultra-low-power neuromorphic computing in sensor networks. Their solution focuses on always-on applications that require continuous monitoring with minimal power consumption. Syntiant's NDP100 and NDP200 series processors are designed to run deep learning algorithms directly at the sensor edge while consuming less than 1mW of power[2]. The architecture employs a unique memory-centric design where computations happen within non-volatile memory arrays, eliminating the energy costs of moving data between separate processing and storage units. For sensor networks, Syntiant has implemented an event-based processing system that remains in an ultra-low power state until triggered by specific sensor inputs, then rapidly processes the data using their neuromorphic architecture[4]. Their technology enables complex sensor fusion across multiple input types (audio, motion, environmental) while maintaining sub-milliwatt power profiles, making it ideal for battery-operated or energy-harvesting sensor nodes in IoT deployments[6].

Strengths: Industry-leading power efficiency (sub-milliwatt operation) enabling years of battery life; specialized for real-world sensor applications rather than general computing; compact form factor suitable for space-constrained sensor nodes.

Weaknesses: More limited in computational flexibility compared to general-purpose neuromorphic systems; primarily optimized for specific sensor types and use cases; relatively new company with less established ecosystem compared to larger competitors.

Key Patents and Algorithms in Neuromorphic Computing

Neuromorphic computer

Patent · Active · US8275728B2

Innovation

- A neuromorphic computer architecture utilizing electronic devices with variable resistance circuits to represent synaptic connection strength and positive/negative output circuits to mimic excitatory and inhibitory responses, enabling high-density fabrication of brain-like computing functions.

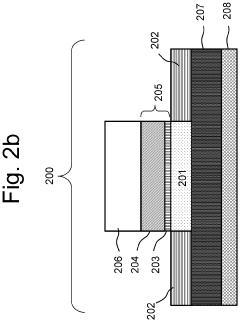

Artificial neuron based on ferroelectric circuit element

Patent · Active · US20200065647A1

Innovation

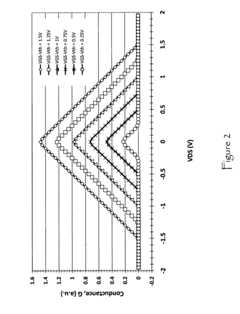

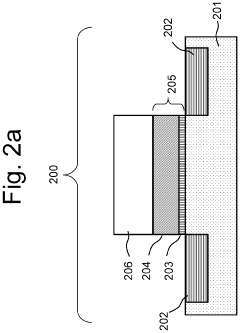

- The development of a ferroelectric field-effect transistor (FeFET) with a polarizable material layer having at least two polarization states, where the polarization state changes after a series of voltage pulses are applied, enabling efficient integration and threshold firing properties without the need for additional electronic components, and utilizing a ferroelectric material oxide layer to achieve low power consumption and fast read/write access.

Energy Efficiency Considerations

Energy efficiency stands as a paramount consideration in neuromorphic computing systems designed for sensor networks. Traditional computing architectures consume significant power when processing continuous streams of sensor data, creating a fundamental bottleneck for deployment in resource-constrained environments. Neuromorphic systems offer inherent advantages through their event-driven processing paradigm, where computation occurs only when necessary, dramatically reducing baseline power consumption compared to clock-driven systems.

The power profile of neuromorphic implementations reveals significant variations across hardware platforms. Current state-of-the-art neuromorphic chips demonstrate power efficiencies ranging from 20-100 pJ per synaptic operation, representing orders of magnitude improvement over conventional computing architectures. However, when deployed in sensor network contexts, additional factors including standby power, communication overhead, and peripheral component consumption must be carefully considered in the overall energy budget.
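The point about the overall energy budget can be made concrete with a small back-of-the-envelope model that adds compute, standby, and communication power for a single node. This is a rough sketch under stated assumptions: only the 20-100 pJ per synaptic operation range comes from the text above, while the workload, standby power, radio cost, and battery capacity are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class NodeEnergyModel:
    energy_per_synop_j: float      # 20e-12 to 100e-12 J is the range quoted in the text
    synops_per_second: float       # assumed average workload
    standby_power_w: float         # assumed always-on leakage and sensor bias
    radio_energy_per_msg_j: float  # assumed cost of one transmitted message
    msgs_per_second: float         # assumed average reporting rate

    def average_power_w(self) -> float:
        compute = self.energy_per_synop_j * self.synops_per_second
        radio = self.radio_energy_per_msg_j * self.msgs_per_second
        return compute + self.standby_power_w + radio

    def lifetime_days(self, battery_j: float) -> float:
        """Ideal lifetime; ignores self-discharge and converter losses."""
        return battery_j / self.average_power_w() / 86_400


node = NodeEnergyModel(
    energy_per_synop_j=50e-12,
    synops_per_second=1e6,
    standby_power_w=20e-6,
    radio_energy_per_msg_j=100e-6,
    msgs_per_second=0.1,
)
battery_j = 1.0 * 3600 * 3.0  # hypothetical 1000 mAh cell at 3 V
print(f"{node.average_power_w() * 1e6:.0f} uW")
print(f"{node.lifetime_days(battery_j):.0f} days")
```

With these placeholder numbers, standby and radio together account for a sizeable share of the total, which illustrates why a low energy-per-operation figure alone does not determine node lifetime.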

Spike encoding strategies significantly impact energy consumption in neuromorphic sensor networks. Rate coding, while conceptually straightforward, tends to be energy-intensive due to higher spike frequencies. In contrast, temporal coding schemes like time-to-first-spike and phase coding can achieve comparable computational results with substantially fewer spikes, thereby reducing energy requirements. Recent research indicates that optimized temporal coding approaches can reduce energy consumption by 30-60% compared to rate-based methods in typical sensor processing tasks.
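The difference in spike counts between the two families of codes can be seen in a toy comparison. The sketch below encodes the same normalized inputs with a simple rate code and with time-to-first-spike (TTFS) coding, using spike count as a crude proxy for energy. The window length, maximum rate, and input values are assumptions, and the resulting ratio is not meant to reproduce the 30-60% figure cited above.

```python
from typing import List, Optional


def rate_code(values: List[float], window_ms: int = 100, max_rate_hz: float = 200.0) -> List[int]:
    """Spike count per input, proportional to the normalized value in [0, 1]."""
    max_spikes = max_rate_hz * window_ms / 1000.0
    return [round(v * max_spikes) for v in values]


def ttfs_code(values: List[float], window_ms: int = 100) -> List[Optional[float]]:
    """Latency in ms of the single spike per input; larger values fire earlier.

    An input of 0 or below stays silent and is reported as None.
    """
    return [None if v <= 0 else (1.0 - v) * window_ms for v in values]


values = [0.9, 0.5, 0.1, 0.0]
rate_spikes = sum(rate_code(values))
ttfs_spikes = sum(1 for latency in ttfs_code(values) if latency is not None)
print(rate_spikes, ttfs_spikes)  # 30 3
```

TTFS emits at most one spike per input per window, so its spike count is bounded by the number of inputs, whereas the rate code's count scales with signal amplitude and window length. The trade-off is that TTFS places tighter demands on timing precision.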

Memory access patterns present another critical energy consideration. On-chip memory operations consume significantly less energy than off-chip access, making local processing architectures particularly advantageous. Emerging in-memory computing approaches, where computation occurs directly within memory arrays, show promise for further reducing the energy costs associated with the memory wall problem. Recent neuromorphic designs incorporating resistive memory technologies have demonstrated up to 85% reduction in memory-related energy consumption.

Adaptive power management techniques offer additional efficiency opportunities through dynamic adjustment of system parameters based on computational demands. These include selective activation of neuromorphic cores, dynamic precision scaling, and activity-dependent modulation of neuron and synapse parameters. Field deployments have shown that context-aware power management can extend operational lifetimes by 2-4× in battery-powered sensor network nodes while maintaining acceptable performance levels.

The integration of energy harvesting technologies with neuromorphic sensor nodes represents an emerging frontier, potentially enabling perpetual operation in certain deployment scenarios. Early prototypes combining photovoltaic cells with ultra-low-power neuromorphic processors have demonstrated self-sustaining operation under moderate lighting conditions, though challenges remain in managing power variability and ensuring computational reliability under fluctuating energy availability.

The power profile of neuromorphic implementations reveals significant variations across hardware platforms. Current state-of-the-art neuromorphic chips demonstrate power efficiencies ranging from 20-100 pJ per synaptic operation, representing orders of magnitude improvement over conventional computing architectures. However, when deployed in sensor network contexts, additional factors including standby power, communication overhead, and peripheral component consumption must be carefully considered in the overall energy budget.

Spike encoding strategies significantly impact energy consumption in neuromorphic sensor networks. Rate coding, while conceptually straightforward, tends to be energy-intensive due to higher spike frequencies. In contrast, temporal coding schemes like time-to-first-spike and phase coding can achieve comparable computational results with substantially fewer spikes, thereby reducing energy requirements. Recent research indicates that optimized temporal coding approaches can reduce energy consumption by 30-60% compared to rate-based methods in typical sensor processing tasks.

Memory access patterns present another critical energy consideration. On-chip memory operations consume significantly less energy than off-chip access, making local processing architectures particularly advantageous. Emerging in-memory computing approaches, where computation occurs directly within memory arrays, show promise for further reducing the energy costs associated with the memory wall problem. Recent neuromorphic designs incorporating resistive memory technologies have demonstrated up to 85% reduction in memory-related energy consumption.

Scalability and Deployment Strategies

Scaling neuromorphic computing systems across sensor networks requires deployment strategies that keep both computation and energy use manageable as node counts grow. System complexity increases rapidly with network size, necessitating architectures that balance computational efficiency against energy constraints. Current scalability solutions typically employ hierarchical architectures in which neuromorphic processors are distributed across the sensor network, with edge nodes handling local processing and centralized nodes managing complex analytics and decision-making.

Deployment strategies must consider the heterogeneous nature of sensor networks, where varying computational requirements exist across different nodes. A tiered deployment approach has emerged as particularly effective, allowing resource allocation based on processing demands. This approach enables lightweight neuromorphic chips to be embedded directly within sensors for immediate signal processing, while more powerful neuromorphic systems handle aggregated data at gateway nodes.

Communication overhead represents a significant bottleneck in scaled deployments. Effective strategies minimize data transmission through local processing and event-driven communication protocols. Spike-based communication paradigms, mirroring biological neural systems, have demonstrated up to 70% reduction in network traffic compared to traditional approaches, while maintaining computational integrity across distributed neuromorphic elements.
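
A common event-driven scheme is send-on-delta, where a node transmits only when its reading has moved by at least a threshold since the last transmitted value. The sketch below compares the resulting message count with periodic reporting of every sample. The function name `send_on_delta`, the sample values, and the 0.5 threshold are assumptions for the example; this is a generic technique shown for illustration, not the specific protocol behind the figure quoted above.

```python
def send_on_delta(samples, threshold):
    """Return (index, value) pairs for the samples that would be transmitted.

    A sample is sent if it is the first one, or if it differs from the last
    transmitted value by at least `threshold`.
    """
    events = []
    last_sent = None
    for i, x in enumerate(samples):
        if last_sent is None or abs(x - last_sent) >= threshold:
            events.append((i, x))
            last_sent = x
    return events


if __name__ == "__main__":
    # Hypothetical temperature trace in degrees Celsius.
    samples = [20.0, 20.1, 20.1, 20.2, 21.0, 21.1, 21.0, 19.5]
    events = send_on_delta(samples, threshold=0.5)
    print(f"periodic: {len(samples)} messages, event-driven: {len(events)}")
    print(events)
```

The receiver can reconstruct the signal to within the threshold by holding the last received value, so the threshold directly trades traffic against accuracy.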

Virtualization techniques are increasingly important for large-scale deployments, allowing dynamic allocation of neuromorphic computing resources across the network. Software frameworks that abstract hardware complexities enable more flexible deployment models, including hybrid systems that combine traditional computing with neuromorphic elements where most appropriate. These frameworks facilitate load balancing and fault tolerance, critical for maintaining operational continuity in extensive sensor networks.

Energy efficiency scaling remains paramount, particularly for battery-powered or energy-harvesting sensor nodes. Deployment strategies increasingly incorporate adaptive power management, where neuromorphic components can modulate their computational capacity based on available energy and processing requirements. Research indicates that such adaptive systems can extend operational lifetimes by 40-60% compared to fixed-configuration approaches.

Standardization efforts are emerging to address interoperability challenges in heterogeneous neuromorphic deployments. These include unified programming interfaces and hardware abstraction layers that enable seamless integration of components from different vendors. Such standardization is essential for commercial viability, allowing system designers to leverage best-of-breed components without being locked into proprietary ecosystems.
