Quantify Energy Efficiency in Neuromorphic Computing Systems

SEP 5, 2025 · 9 MIN READ

Neuromorphic Computing Energy Efficiency Background and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. This field has evolved significantly since its conceptual inception in the late 1980s by Carver Mead, progressing from theoretical frameworks to practical implementations that aim to replicate the brain's remarkable energy efficiency. Traditional von Neumann architectures face fundamental limitations in energy efficiency due to the separation of memory and processing units, creating bottlenecks that consume significant power during data transfer operations.

The evolution of neuromorphic computing has been driven by the exponential growth in data processing requirements across various domains, including artificial intelligence, autonomous systems, and edge computing. These applications demand computational solutions that can process complex, unstructured data in real time while operating within strict power constraints. Neuromorphic systems address these challenges by integrating memory and processing elements, enabling parallel computation and event-driven processing that significantly reduces energy consumption.

Recent technological advancements in materials science, semiconductor fabrication, and neural network algorithms have accelerated the development of neuromorphic hardware. Notable milestones include the introduction of memristive devices, spintronic components, and photonic neural networks, each offering unique advantages in terms of energy efficiency, processing speed, and integration density. These innovations have collectively pushed the boundaries of what is possible in low-power, high-performance computing.

The primary objective in quantifying energy efficiency in neuromorphic systems is to establish standardized metrics and methodologies that accurately reflect their computational capabilities relative to power consumption. Unlike traditional computing systems where FLOPS/watt serves as a common benchmark, neuromorphic architectures require more nuanced evaluation frameworks that account for their unique operational characteristics, such as spike-based processing and temporal dynamics.

Additionally, this research aims to identify the fundamental physical and architectural limits of energy efficiency in neuromorphic computing, providing a roadmap for future innovations. By understanding these theoretical boundaries, researchers can develop targeted strategies to approach the energy efficiency of biological neural systems, which are commonly estimated to operate at roughly 10^14 operations per second per watt, orders of magnitude beyond current electronic implementations.

The ultimate goal is to enable neuromorphic systems that can perform complex cognitive tasks with energy requirements comparable to the human brain (approximately 20 watts), facilitating the deployment of advanced AI capabilities in energy-constrained environments such as mobile devices, remote sensors, and autonomous vehicles. This would represent a transformative advancement in computing technology with far-reaching implications across multiple industries and scientific disciplines.
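As a rough sanity check on these figures, the sketch below converts an efficiency quoted in operations per second per watt into energy per operation (1 W = 1 J/s, so the ratio is simply operations per joule) and multiplies it by a power budget to get total throughput. It uses only the two order-of-magnitude estimates from the text; the constant and function names are illustrative.

```python
# Order-of-magnitude arithmetic on the brain-efficiency estimates quoted above.
BRAIN_OPS_PER_SECOND_PER_WATT = 1e14  # rough estimate from the text
BRAIN_POWER_WATTS = 20.0              # rough estimate from the text


def energy_per_operation_joules(ops_per_second_per_watt: float) -> float:
    """(ops/s)/W equals ops/J because 1 W = 1 J/s; the reciprocal is J/op."""
    return 1.0 / ops_per_second_per_watt


def total_throughput_ops_per_second(ops_per_second_per_watt: float, power_watts: float) -> float:
    """Operations per second sustained at a given efficiency and power budget."""
    return ops_per_second_per_watt * power_watts


e_op = energy_per_operation_joules(BRAIN_OPS_PER_SECOND_PER_WATT)
throughput = total_throughput_ops_per_second(BRAIN_OPS_PER_SECOND_PER_WATT, BRAIN_POWER_WATTS)
print(f"energy per operation: {e_op * 1e15:.1f} fJ")
print(f"implied throughput:   {throughput:.1e} ops/s")
```

With the quoted estimates, this works out to about 10 fJ per operation and roughly 2 × 10^15 operations per second at 20 W.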

Market Analysis for Energy-Efficient Neuromorphic Systems

The neuromorphic computing market is experiencing significant growth, driven by increasing demand for energy-efficient computing solutions across various industries. Current market valuations place the global neuromorphic computing sector at approximately 3.2 billion USD in 2023, with projections indicating a compound annual growth rate (CAGR) of 24.7% through 2030. This remarkable growth trajectory is primarily fueled by applications in edge computing, autonomous systems, and artificial intelligence, where energy efficiency represents a critical competitive advantage.
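The projection follows the standard compound-growth formula, value after n years = base × (1 + CAGR)^n. The sketch below simply applies the figures quoted above; it is arithmetic on those numbers, not an independent forecast.

```python
def project_market_size(base_value: float, cagr: float, years: int) -> float:
    """Compound growth: value after `years` = base * (1 + cagr) ** years."""
    return base_value * (1.0 + cagr) ** years


# Inputs quoted in the text: ~3.2 billion USD in 2023, 24.7% CAGR through 2030.
BASE_YEAR, BASE_VALUE_BUSD, CAGR = 2023, 3.2, 0.247

for year in range(BASE_YEAR, 2031):
    size = project_market_size(BASE_VALUE_BUSD, CAGR, year - BASE_YEAR)
    print(f"{year}: {size:5.1f} billion USD")
```

Compounding 24.7% for seven years multiplies the base by about 4.7, so the quoted figures imply a 2030 market of roughly 15 billion USD.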

The demand for energy-efficient neuromorphic systems is particularly strong in sectors requiring real-time processing of sensory data, including automotive, healthcare, consumer electronics, and industrial automation. In the automotive industry, neuromorphic chips are increasingly being integrated into advanced driver-assistance systems (ADAS) and autonomous vehicles, where they offer substantial energy savings compared to traditional computing architectures while processing complex visual and sensor data streams.

Healthcare applications represent another significant market segment, with neuromorphic systems being deployed in medical imaging, patient monitoring, and diagnostic tools. The inherent energy efficiency of these systems makes them ideal for portable and wearable medical devices where battery life is a critical concern. Market research indicates that healthcare applications of neuromorphic computing could reach 1.8 billion USD by 2028.

Consumer electronics manufacturers are also exploring neuromorphic solutions for next-generation smartphones, smart home devices, and wearables. The ability to perform complex AI tasks with minimal power consumption addresses a fundamental challenge in mobile computing, potentially extending battery life while enabling more sophisticated on-device intelligence. Industry analysts predict that by 2025, over 15% of premium smartphones will incorporate some form of neuromorphic processing elements.

From a geographical perspective, North America currently leads the market with approximately 42% share, followed by Europe (28%) and Asia-Pacific (24%). However, the Asia-Pacific region is expected to demonstrate the fastest growth rate over the next five years, driven by substantial investments in semiconductor manufacturing and AI research in countries like China, South Korea, and Japan.

The enterprise segment currently dominates market revenue, accounting for nearly 65% of total market value. However, the consumer segment is anticipated to grow at a faster rate as neuromorphic technologies become more accessible and integrated into everyday devices. This shift is expected to create new market opportunities for both established semiconductor companies and specialized neuromorphic hardware startups.

Current State and Challenges in Neuromorphic Energy Quantification

Neuromorphic computing systems have made significant strides in recent years, yet quantifying their energy efficiency remains a complex challenge. Current methodologies for energy measurement in these brain-inspired architectures vary widely across research institutions and industry players, creating inconsistencies in reported performance metrics. The lack of standardized benchmarking protocols specifically designed for neuromorphic hardware has hindered meaningful comparisons between different implementations and technologies.

At present, most energy quantification approaches focus on static power consumption measurements that fail to capture the dynamic nature of neuromorphic operations. Traditional metrics like FLOPS/Watt or operations/Joule, while useful for conventional computing systems, do not adequately represent the event-driven, asynchronous processing paradigms that characterize neuromorphic architectures. This fundamental mismatch creates significant challenges when attempting to evaluate and compare energy efficiency across different neuromorphic platforms.
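One way to see why a single operations-per-joule figure can mislead is a first-order energy model with a fixed static (leakage and idle) power plus a per-event dynamic cost. The sketch below is a hypothetical model; the 20 mW static power and 25 pJ per synaptic event are illustrative assumptions, not measurements of any chip.

```python
from dataclasses import dataclass


@dataclass
class EnergyModel:
    """Hypothetical first-order model: constant static power plus a fixed
    dynamic energy cost for every synaptic event processed."""
    static_power_w: float      # assumed leakage + idle power, watts
    energy_per_event_j: float  # assumed dynamic energy per synaptic event, joules

    def total_energy(self, events: int, duration_s: float) -> float:
        return self.static_power_w * duration_s + events * self.energy_per_event_j

    def events_per_joule(self, events: int, duration_s: float) -> float:
        return events / self.total_energy(events, duration_s)


model = EnergyModel(static_power_w=0.020, energy_per_event_j=25e-12)
duration_s = 1.0
for rate in (1e6, 1e8, 1e10):  # synaptic events per second (input activity)
    events = int(rate * duration_s)
    print(f"{rate:.0e} events/s -> {model.events_per_joule(events, duration_s):.2e} events/J")
```

Under these assumed parameters, the same hardware reports events per joule that differ by almost three orders of magnitude between sparse and dense input activity, approaching the 1/(25 pJ) = 4 × 10^10 events/J ceiling only when dynamic energy dominates.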

Geographically, neuromorphic computing research exhibits distinct regional characteristics. North American institutions primarily focus on digital neuromorphic implementations with emphasis on scalability, while European research centers tend to prioritize analog and mixed-signal approaches that optimize for biological fidelity. Asian research groups, particularly in China and Japan, have made notable advances in memristor-based implementations that promise exceptional energy efficiency but present unique measurement challenges.

A major technical constraint in accurate energy quantification is the difficulty in isolating computational energy costs from peripheral operations such as memory access and data movement. Current neuromorphic systems often integrate these functions in novel ways that defy traditional power profiling methods. Additionally, the event-driven nature of spike-based computation creates highly variable power profiles that depend on input data characteristics, making standardized measurement protocols difficult to establish.
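When an external power trace is available, a common first step is to integrate the sampled power over the run and subtract an idle baseline measured on the same setup. The sketch below does this with the trapezoidal rule on a synthetic trace; the sample values and idle level are made up for illustration. Baseline subtraction only separates workload energy from idle energy. It does not by itself isolate computation from memory access or data movement, which is the difficulty described above.

```python
def trapezoid_energy(times_s, power_w):
    """Integrate a sampled power trace (W) over time (s) with the trapezoidal rule -> J."""
    if len(times_s) != len(power_w) or len(times_s) < 2:
        raise ValueError("need at least two matched (time, power) samples")
    energy_j = 0.0
    for i in range(1, len(times_s)):
        dt = times_s[i] - times_s[i - 1]
        energy_j += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return energy_j


def workload_energy(times_s, power_w, idle_power_w):
    """Total energy minus the idle baseline over the same time window."""
    baseline_j = idle_power_w * (times_s[-1] - times_s[0])
    return trapezoid_energy(times_s, power_w) - baseline_j


# Synthetic 1 kHz trace: 50 mW idle floor with two activity bursts (made-up values).
times = [i * 1e-3 for i in range(11)]
power = [0.05, 0.05, 0.30, 0.30, 0.05, 0.05, 0.05, 0.20, 0.05, 0.05, 0.05]
print(f"workload energy: {workload_energy(times, power, idle_power_w=0.05) * 1e3:.3f} mJ")
```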

The miniaturization of neuromorphic components presents another significant challenge, as conventional power measurement tools lack the temporal and spatial resolution needed to accurately capture energy consumption at the scale of individual synaptic operations. This limitation has forced researchers to rely on simulation-based estimates that may not fully reflect real-world performance.
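Simulation-based estimates typically count events in a model of the network and multiply each count by a per-event energy cost. The sketch below runs a small discrete-time leaky integrate-and-fire (LIF) layer with random input spikes and applies that bookkeeping. The layer sizes, firing rate, leak, threshold, and the 20 pJ per synaptic event and 50 pJ per output spike are hypothetical values, and static power is ignored.

```python
import random


def simulate_lif_layer(n_inputs, n_neurons, steps, input_rate, weight,
                       threshold=1.0, leak=0.9, seed=0):
    """Discrete-time leaky integrate-and-fire layer, fully connected to its inputs.
    Returns (input_spikes, synaptic_events, output_spikes)."""
    rng = random.Random(seed)
    # Per-neuron weight (hypothetical), shared across that neuron's inputs for brevity.
    weights = [weight * rng.uniform(0.5, 1.5) for _ in range(n_neurons)]
    v = [0.0] * n_neurons
    input_spikes = synaptic_events = output_spikes = 0
    for _ in range(steps):
        active = sum(1 for _ in range(n_inputs) if rng.random() < input_rate)
        input_spikes += active
        synaptic_events += active * n_neurons  # each input spike reaches every neuron
        for j in range(n_neurons):
            v[j] = leak * v[j] + weights[j] * active
            if v[j] >= threshold:
                output_spikes += 1
                v[j] = 0.0  # reset after firing
    return input_spikes, synaptic_events, output_spikes


def estimate_energy(synaptic_events, output_spikes, e_syn_j, e_spike_j):
    """Event-count energy estimate; static power is deliberately ignored."""
    return synaptic_events * e_syn_j + output_spikes * e_spike_j


inp, syn, out = simulate_lif_layer(n_inputs=100, n_neurons=10, steps=1000,
                                   input_rate=0.02, weight=0.1)
energy = estimate_energy(syn, out, e_syn_j=20e-12, e_spike_j=50e-12)
print(f"{inp} input spikes, {syn} synaptic events, {out} output spikes -> {energy * 1e9:.1f} nJ")
```

Because the estimate is only as good as the assumed per-event costs, it inherits the gap to real-world performance noted above.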

Industry adoption is further constrained by the absence of reliable energy efficiency models that can predict scaling behavior as neuromorphic systems grow from laboratory demonstrations to commercial applications. The interdisciplinary nature of the field—spanning computer architecture, materials science, neuroscience, and electrical engineering—has resulted in fragmented approaches to energy quantification that lack cohesion and comparability.

Existing Energy Efficiency Quantification Methodologies

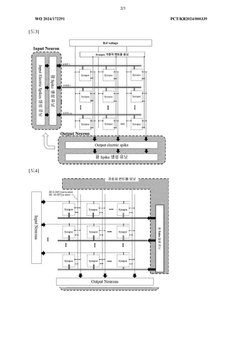

01 Low-power neuromorphic hardware architectures

Specialized hardware architectures designed specifically for neuromorphic computing can significantly reduce energy consumption compared to traditional computing systems. These architectures often implement brain-inspired designs that minimize data movement and optimize parallel processing. By closely mimicking neural structures, these systems can achieve high computational efficiency while maintaining low power requirements, making them suitable for edge computing applications where energy constraints are critical.

- Low-power neuromorphic hardware architectures: Specialized hardware architectures designed specifically for neuromorphic computing can significantly reduce energy consumption compared to traditional computing systems. These architectures often implement brain-inspired designs that minimize data movement and optimize parallel processing. By closely mimicking neural structures, these systems can achieve higher energy efficiency while maintaining computational performance for AI and machine learning tasks.

- Memristor-based computing for energy efficiency: Memristors are non-volatile memory devices that can be used to implement synaptic functions in neuromorphic systems. These devices enable in-memory computing, reducing the energy costs associated with data transfer between memory and processing units. Memristor-based neuromorphic systems can perform computations with significantly lower power consumption by integrating memory and processing functions, making them ideal for edge computing applications where energy constraints are critical.

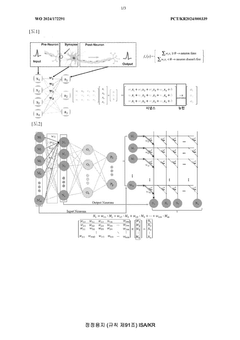

- Spike-based processing techniques: Spike-based or event-driven processing techniques mimic the brain's communication method, where neurons transmit information only when necessary through discrete spikes. This approach significantly reduces energy consumption by minimizing continuous data processing and focusing computational resources only when needed. Implementing sparse activation patterns and temporal coding in neuromorphic systems allows for efficient information processing with minimal energy expenditure.

- Optimized neural network architectures: Specialized neural network architectures designed specifically for neuromorphic hardware can dramatically improve energy efficiency. These optimized architectures include pruned networks, quantized weights, and sparse connectivity patterns that reduce computational requirements while maintaining accuracy. By implementing these architectural innovations, neuromorphic systems can achieve significant power savings compared to traditional deep learning implementations.

- Dynamic power management techniques: Advanced power management techniques that dynamically adjust system parameters based on computational demands can significantly enhance energy efficiency in neuromorphic systems. These techniques include adaptive voltage scaling, clock gating, power gating, and selective activation of neural circuits. By intelligently managing power consumption according to workload requirements, these systems can optimize energy usage while maintaining performance for various applications.
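The in-memory computing principle behind the memristor bullet can be illustrated with an idealized crossbar: each input is applied as a row voltage, each device passes a current proportional to its conductance (Ohm's law), and each column wire sums those currents (Kirchhoff's current law), so the column currents form a matrix-vector product without moving the stored weights. The sketch assumes ideal linear devices, ignores wire resistance, noise, and readout converters, and maps signed weights onto a differential pair of conductances, which is one common scheme among several. The conductance range is an illustrative assumption.

```python
def weights_to_conductances(weights, g_min, g_max):
    """Map signed weights in [-1, 1] onto a differential pair (G+, G-) so that
    G+ - G- = (g_max - g_min) * w. weights[i][j] connects input row i to output column j."""
    span = g_max - g_min
    g_pos = [[g_min + span * max(w, 0.0) for w in row] for row in weights]
    g_neg = [[g_min + span * max(-w, 0.0) for w in row] for row in weights]
    return g_pos, g_neg


def column_currents(voltages, conductances):
    """Ideal crossbar: device (i, j) passes G_ij * V_i (Ohm's law) and each
    column wire sums its device currents (Kirchhoff's current law)."""
    n_cols = len(conductances[0])
    return [sum(v * conductances[i][j] for i, v in enumerate(voltages)) for j in range(n_cols)]


def analog_matvec(voltages, weights, g_min=1e-6, g_max=1e-4):
    """Matrix-vector product read out as differential column currents, rescaled to weight units."""
    g_pos, g_neg = weights_to_conductances(weights, g_min, g_max)
    i_pos = column_currents(voltages, g_pos)
    i_neg = column_currents(voltages, g_neg)
    span = g_max - g_min
    return [(p - n) / span for p, n in zip(i_pos, i_neg)]


weights = [[0.5, -0.25],
           [1.0, 0.0]]
inputs_v = [0.2, 0.1]  # row voltages encoding the input vector
print(analog_matvec(inputs_v, weights))  # approximately [0.2, -0.05]
```

The g_min offset appears on both halves of the differential pair and cancels in the subtraction, which is why the recovered values depend only on the weights.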

02 Memristor-based neural networks

Memristor technology enables highly efficient neuromorphic computing by combining memory and processing functions in the same device, significantly reducing energy consumption associated with data transfer. These non-volatile memory elements can maintain their state without power, further enhancing energy efficiency. Memristor-based neural networks can implement synaptic weights directly in hardware, allowing for efficient implementation of learning algorithms while consuming minimal power during both training and inference operations.

03 Spike-based processing techniques

Spike-based processing mimics the brain's communication method, where information is encoded in discrete events (spikes) rather than continuous signals. This approach significantly reduces energy consumption as computation occurs only when necessary. By implementing sparse activation patterns and event-driven processing, these systems can achieve substantial power savings compared to traditional computing paradigms. Spike-timing-dependent plasticity (STDP) and other biologically-inspired learning mechanisms further enhance the energy efficiency of these systems.

04 Analog computing for neural networks

Analog computing approaches for neuromorphic systems leverage the physics of electronic components to perform computations with significantly lower energy requirements than digital alternatives. By processing information in the analog domain, these systems reduce repeated analog-to-digital conversion and data movement and can perform many operations simultaneously, although converters at the periphery of the analog array typically remain a significant energy cost. This approach enables efficient implementation of neural network operations such as matrix multiplication and activation functions, with reported energy-efficiency gains of up to several orders of magnitude for suitable workloads.

05 Optimized training algorithms for energy efficiency

Specialized training algorithms designed specifically for neuromorphic hardware can substantially reduce the energy requirements of these systems. These algorithms often incorporate techniques such as quantization, pruning, and sparse activation to minimize computational demands. By optimizing the training process to account for hardware constraints and energy considerations, these approaches enable the development of neural networks that maintain high accuracy while significantly reducing power consumption during both training and inference phases.
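Two of the techniques named above, pruning and quantization, can be shown in a few lines: magnitude pruning zeroes the smallest weights, and symmetric uniform quantization maps the rest to low-bit integers plus one scale factor. This is a minimal post-training sketch on a flat weight list; in practice these steps are usually combined with retraining or fine-tuning to recover accuracy, and the example weights are arbitrary.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero the smallest-magnitude fraction `sparsity` of weights.
    Ties at the threshold are also zeroed, so slightly more may be pruned."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    threshold = sorted(abs(w) for w in weights)[k - 1]
    return [0.0 if abs(w) <= threshold else w for w in weights]


def quantize_symmetric(weights, bits):
    """Uniform symmetric quantization to signed `bits`-bit integers.
    Returns (integer codes, scale); dequantize with code * scale."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(w) for w in weights) or 1.0  # avoid a zero scale
    scale = max_abs / qmax
    return [round(w / scale) for w in weights], scale


weights = [0.9, -0.05, 0.4, -0.8, 0.01, 0.3]
pruned = prune_by_magnitude(weights, sparsity=0.5)
codes, scale = quantize_symmetric(pruned, bits=8)
zeros = sum(1 for c in codes if c == 0)
print(pruned)
print(codes, f"scale={scale:.5f}", f"sparsity={zeros / len(codes):.0%}")
```

Zeroed weights are what event-driven or sparsity-aware hardware can skip, while the integer codes shrink storage and arithmetic width.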

Key Industry Players in Neuromorphic Computing

The market for energy-efficient neuromorphic computing systems is in its early growth stage, characterized by significant research investments but limited commercial deployment. The market is projected to expand rapidly as energy constraints in AI applications become critical, with estimates suggesting a compound annual growth rate of 25-30% over the next five years. Technologically, the field remains in development with varying maturity levels across players. IBM leads with its TrueNorth and subsequent architectures, while Intel's Loihi platform demonstrates promising results. Huawei, Samsung, and SK Hynix are making substantial investments in hardware implementations. Academic-industry partnerships involving Tsinghua University, University of California, and KAIST are accelerating innovation. Specialized players like Syntiant and Cambricon are focusing on edge applications where energy efficiency provides competitive advantage.

International Business Machines Corp.

Technical Solution: IBM's neuromorphic computing approach focuses on the TrueNorth architecture, which implements one million programmable spiking neurons and 256 million configurable synapses. IBM reports energy efficiency in synaptic operations per second per watt (SOPS/W): about 46 billion SOPS/W (46 GSOPS/W) for a typical recurrent network running in biological real time at roughly 70 mW, and approximately 400 billion SOPS/W under more favorable operating conditions [1]. Work under the DARPA SyNAPSE program, in which IBM participated, has demonstrated neuromorphic chips reported to be up to 10,000 times more energy efficient than conventional von Neumann architectures for certain cognitive applications [2]. IBM has also implemented power management techniques, including clock gating, power gating, and dynamic voltage scaling, optimized for spiking neural networks, allowing fine-grained control of energy consumption based on computational load.
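A SOPS/W figure converts directly into energy per synaptic operation, because 1 W = 1 J/s and the ratio reduces to operations per joule. The helper names below are illustrative; the 400 billion SOPS/W input is the figure quoted in the paragraph, and the 1 GSOPS workload is a hypothetical example.

```python
def picojoules_per_op(ops_per_second_per_watt: float) -> float:
    """(ops/s)/W = ops/J, so energy per operation in pJ is 1e12 / (ops/J)."""
    return 1e12 / ops_per_second_per_watt


def power_watts(ops_per_second: float, ops_per_second_per_watt: float) -> float:
    """Power needed to sustain a throughput at a given efficiency."""
    return ops_per_second / ops_per_second_per_watt


TRUENORTH_SOPS_PER_WATT = 400e9  # figure quoted in the paragraph above
print(f"{picojoules_per_op(TRUENORTH_SOPS_PER_WATT):.2f} pJ per synaptic operation")
print(f"{power_watts(1e9, TRUENORTH_SOPS_PER_WATT) * 1e3:.2f} mW for a hypothetical 1 GSOPS load")
```

At 400 billion SOPS/W each synaptic operation costs about 2.5 pJ, and the hypothetical 10^9 synaptic operations per second would draw about 2.5 mW.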

Strengths: Industry-leading energy efficiency metrics with proven hardware implementation; comprehensive power management techniques specifically designed for neuromorphic architectures; mature development ecosystem. Weaknesses: Specialized programming model requires significant adaptation of existing algorithms; limited commercial deployment beyond research applications; higher initial implementation costs compared to conventional computing approaches.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei's Ascend AI computing platform, built on its Da Vinci architecture, incorporates energy efficiency quantification. The system employs a hybrid approach combining traditional deep learning accelerators with neuromorphic principles. Huawei's solution achieves energy efficiency through their Neural Processing Unit (NPU) that implements sparse event-driven computation, reducing unnecessary calculations by up to 70% compared to dense matrix operations [3]. Their energy quantification framework measures performance in terms of inferences per joule (IPJ), with reported figures of over 7,000 IPJ for vision tasks. Huawei has implemented adaptive precision techniques that dynamically adjust computational precision based on task requirements, further reducing energy consumption by 30-50% [4]. Their systems also incorporate hardware-level power monitoring with microsecond granularity to provide real-time energy consumption data for optimization.
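Inferences per joule is the reciprocal of energy per inference, which makes reported figures easy to translate into per-inference costs or energy budgets. The sketch below uses the 7,000 IPJ figure from the text; the 1 J budget and treating a 30% saving as a straight reduction in energy per inference are assumptions for illustration, since the text does not state the baseline for that saving.

```python
def microjoules_per_inference(inferences_per_joule: float) -> float:
    """Energy per inference is the reciprocal of inferences per joule."""
    return 1e6 / inferences_per_joule


def inferences_within_budget(budget_j: float, inferences_per_joule: float,
                             energy_saving: float = 0.0) -> float:
    """Inferences that fit in an energy budget if energy per inference is cut by
    `energy_saving` (0.3 means 30%). Assumes the saving applies uniformly."""
    if not 0.0 <= energy_saving < 1.0:
        raise ValueError("energy_saving must be in [0, 1)")
    return budget_j * inferences_per_joule / (1.0 - energy_saving)


IPJ = 7000.0  # vision-task figure quoted in the text
print(f"{microjoules_per_inference(IPJ):.1f} uJ per inference")
print(f"{inferences_within_budget(1.0, IPJ):.0f} inferences per joule (baseline)")
print(f"{inferences_within_budget(1.0, IPJ, energy_saving=0.3):.0f} with a 30% saving")
```

7,000 IPJ corresponds to roughly 143 µJ per inference; if energy per inference fell by 30%, a 1 J budget would cover about 10,000 inferences instead of 7,000.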

Strengths: Seamless integration with existing AI frameworks and hardware ecosystems; comprehensive energy monitoring capabilities; adaptive precision techniques that balance accuracy and energy consumption. Weaknesses: Less specialized for pure neuromorphic applications compared to dedicated neuromorphic hardware; higher static power consumption than pure event-driven architectures; proprietary ecosystem with potential geopolitical limitations.

Core Technologies for Neuromorphic Power Optimization

Neuromorphic system comprising waveguide extending into array

Patent WO2024172291A1

Innovation

- A neuromorphic system incorporating waveguides within a synapse array to transmit light pulses for weight adjustment and inference processes, enabling efficient computation through large-scale parallel connections and rapid weight adjustment using a passive optical matrix system.

Neuromorphic optical computing architecture system and apparatus

Patent (pending) US20240428063A1

Innovation

- A neuromorphic optical computing architecture system employing an attention-aware optical neural network with spectral and spatial sparse optical convolution layers, utilizing a multi-spectral laser and optical attention modules for adaptive resource allocation, allowing only active neurons to process signals, thereby reducing redundancy and enhancing efficiency.

Standardization Efforts for Neuromorphic Energy Benchmarking

The standardization of energy efficiency metrics for neuromorphic computing systems has become increasingly critical as these bio-inspired architectures gain traction in commercial applications. Currently, several international organizations are spearheading efforts to establish uniform benchmarking methodologies that accurately capture the unique energy characteristics of neuromorphic hardware.

The IEEE Neuromorphic Computing Standards Working Group, established in 2019, has been developing the P2971 standard specifically focused on energy efficiency measurement protocols for neuromorphic systems. This initiative aims to create a framework that accounts for the event-driven nature of these systems, which traditional computing metrics fail to properly evaluate.

Similarly, the International Neuromorphic Systems Engineering Association (INSEA) has proposed the Neuromorphic Energy Efficiency Index (NEEI), which normalizes energy consumption against computational throughput while considering the sparse activation patterns typical in neuromorphic architectures. This metric has gained significant adoption among research institutions but remains in refinement stages for industry-wide acceptance.

The European Telecommunications Standards Institute (ETSI) has also contributed through its Industry Specification Group on Neuromorphic Computing (ISG NMC), which released a technical specification in 2022 outlining methodologies for measuring energy consumption in spike-based computing systems. Their approach emphasizes real-world workloads rather than synthetic benchmarks.

Collaborative efforts between academia and industry have resulted in the Neuromorphic Energy Benchmark Suite (NEBS), a collection of standardized tasks and datasets specifically designed to stress different aspects of neuromorphic hardware while providing consistent energy measurements. NEBS includes both inference and learning scenarios across various application domains.

The Green Neuromorphic Alliance, a consortium of 27 technology companies and research institutions, has established a certification program that validates energy efficiency claims based on standardized testing protocols. This initiative aims to prevent misleading marketing claims and provide consumers with reliable comparison metrics when evaluating neuromorphic solutions.

Despite these advances, challenges remain in standardization efforts, particularly regarding the integration of emerging neuromorphic materials and architectures that may require fundamentally different energy assessment approaches. Cross-platform compatibility of benchmarks and accounting for the energy implications of different learning algorithms continue to be active areas of development in the standardization community.

The IEEE Neuromorphic Computing Standards Working Group, established in 2019, has been developing the P2971 standard specifically focused on energy efficiency measurement protocols for neuromorphic systems. This initiative aims to create a framework that accounts for the event-driven nature of these systems, which traditional computing metrics fail to properly evaluate.

Similarly, the International Neuromorphic Systems Engineering Association (INSEA) has proposed the Neuromorphic Energy Efficiency Index (NEEI), which normalizes energy consumption against computational throughput while considering the sparse activation patterns typical in neuromorphic architectures. This metric has gained significant adoption among research institutions but remains in refinement stages for industry-wide acceptance.

The European Telecommunications Standards Institute (ETSI) has also contributed through its Industry Specification Group on Neuromorphic Computing (ISG NMC), which released a technical specification in 2022 outlining methodologies for measuring energy consumption in spike-based computing systems. Their approach emphasizes real-world workloads rather than synthetic benchmarks.

Collaborative efforts between academia and industry have resulted in the Neuromorphic Energy Benchmark Suite (NEBS), a collection of standardized tasks and datasets specifically designed to stress different aspects of neuromorphic hardware while providing consistent energy measurements. NEBS includes both inference and learning scenarios across various application domains.

The Green Neuromorphic Alliance, a consortium of 27 technology companies and research institutions, has established a certification program that validates energy efficiency claims based on standardized testing protocols. This initiative aims to prevent misleading marketing claims and provide consumers with reliable comparison metrics when evaluating neuromorphic solutions.


Environmental Impact of Neuromorphic Computing Systems

The environmental impact of neuromorphic computing systems represents a critical dimension in evaluating their overall sustainability and long-term viability. Traditional computing architectures have historically contributed significantly to global energy consumption and electronic waste, making environmental considerations increasingly important in technological advancement.

Neuromorphic computing systems offer promising environmental benefits through their inherently energy-efficient design principles. By mimicking the brain's neural structure, these systems can potentially reduce power consumption by orders of magnitude compared to conventional von Neumann architectures. Reported comparisons indicate that neuromorphic chips such as Intel's Loihi and IBM's TrueNorth achieve 1000x to 10000x improvements in energy efficiency on certain workloads, although such figures depend strongly on the task and on the baseline hardware chosen. Where these gains hold, deployment at scale could translate into substantial reductions in carbon footprint.
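A short worked calculation shows what an efficiency ratio means in absolute emissions, and why "for certain workloads" matters. All inputs below are placeholders rather than measurements of Loihi or TrueNorth: per-inference energies, inference volume, grid carbon intensity, and the share of energy that is accelerated. The second function is a standard Amdahl-style bound applied to energy.

```python
def annual_operational_kgco2(energy_per_inference_j: float, inferences_per_year: float,
                             grid_kgco2_per_kwh: float) -> float:
    """Operational CO2: joules converted to kWh (1 kWh = 3.6e6 J) times grid intensity."""
    kwh = energy_per_inference_j * inferences_per_year / 3.6e6
    return kwh * grid_kgco2_per_kwh


def system_level_gain(accelerated_fraction: float, component_gain: float) -> float:
    """Amdahl-style overall energy gain when only part of the energy is reduced.

    accelerated_fraction: share of baseline energy spent on the part that improves.
    component_gain: efficiency factor on that part (e.g. 1000 for "1000x").
    """
    if not 0.0 <= accelerated_fraction <= 1.0 or component_gain <= 0:
        raise ValueError("invalid fraction or gain")
    return 1.0 / ((1.0 - accelerated_fraction) + accelerated_fraction / component_gain)


# Placeholder inputs: 1 J vs 1 mJ per inference, 1e10 inferences/year, 0.4 kgCO2/kWh.
baseline_kg = annual_operational_kgco2(1.0, 1e10, 0.4)        # about 1111 kg
neuromorphic_kg = annual_operational_kgco2(1e-3, 1e10, 0.4)   # about 1.1 kg
overall = system_level_gain(0.9, 1000.0)                      # about 9.9
```

With these placeholders, a 1000x gain confined to the part of the workload that accounts for 90% of baseline energy yields an overall reduction of only about 9.9x. Host processors, I/O, and other unaccelerated components bound system-level savings in the same way.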

The manufacturing processes for neuromorphic hardware present both challenges and opportunities from an environmental perspective. While specialized materials and fabrication techniques may initially require resource-intensive processes, the extended operational lifespan and reduced energy demands throughout the product lifecycle potentially offset these initial environmental costs. Life cycle assessments (LCAs) conducted on prototype neuromorphic systems suggest favorable environmental profiles compared to traditional computing hardware.
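The claim that lower operating energy offsets higher manufacturing impact is a break-even calculation: extra embodied carbon divided by annual operational savings, compared against the service life. The sketch below uses hypothetical inputs only, because the text reports no specific LCA figures.

```python
def carbon_payback_years(extra_embodied_kgco2: float, baseline_annual_kgco2: float,
                         new_annual_kgco2: float) -> float:
    """Years of operation until operational savings repay the extra manufacturing carbon."""
    annual_savings = baseline_annual_kgco2 - new_annual_kgco2
    if annual_savings <= 0:
        return float("inf")  # the new hardware never repays its extra embodied carbon
    return extra_embodied_kgco2 / annual_savings


def net_lifetime_savings_kgco2(extra_embodied_kgco2: float, baseline_annual_kgco2: float,
                               new_annual_kgco2: float, lifespan_years: float) -> float:
    """Operational savings over the service life minus the extra embodied carbon."""
    annual_savings = baseline_annual_kgco2 - new_annual_kgco2
    return annual_savings * lifespan_years - extra_embodied_kgco2


# Hypothetical: 50 kg extra embodied CO2, operation drops from 30 to 5 kg CO2/year.
payback = carbon_payback_years(50.0, 30.0, 5.0)              # 2.0 years
net = net_lifetime_savings_kgco2(50.0, 30.0, 5.0, 5.0)       # 75.0 kg over 5 years
```

The offset holds only if the payback period is shorter than the actual service life. For this reason, lifespan assumptions carry as much weight in an LCA as the energy figures do.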

Electronic waste reduction represents another significant environmental advantage of neuromorphic systems. Their potential for longer operational lifespans, combined with more efficient computing capabilities, could reduce the frequency of hardware replacement cycles. Additionally, the adaptive nature of neuromorphic architectures may allow for more effective hardware reuse across different applications, further minimizing electronic waste generation.
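The effect of longer lifespans on electronic waste comes down to replacement counts. Over a fixed service horizon, the number of devices needed is the horizon divided by the lifespan, rounded up. The sketch below shows this arithmetic with hypothetical lifespans and device mass. It counts every unit used as eventual waste and ignores reuse and recycling.

```python
import math


def units_needed(horizon_years: float, lifespan_years: float) -> int:
    """Devices needed to cover a service horizon, replacing each one at end of life."""
    if lifespan_years <= 0:
        raise ValueError("lifespan must be positive")
    # round() absorbs tiny floating-point noise in the ratio before rounding up.
    return math.ceil(round(horizon_years / lifespan_years, 9))


def ewaste_kg(horizon_years: float, lifespan_years: float, device_mass_kg: float) -> float:
    """Discarded device mass over the horizon, assuming every unit used becomes waste."""
    return units_needed(horizon_years, lifespan_years) * device_mass_kg


# Hypothetical 12-year horizon with a 0.5 kg device.
short_life = ewaste_kg(12, 3, 0.5)  # 4 units -> 2.0 kg
long_life = ewaste_kg(12, 6, 0.5)   # 2 units -> 1.0 kg
```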

Carbon emissions associated with neuromorphic computing extend beyond direct energy consumption to include embodied carbon from manufacturing and distribution. Preliminary carbon accounting models suggest that the transition to neuromorphic computing could reduce ICT-related emissions by 15-30% in specific application domains, particularly in edge computing scenarios where energy constraints are most pronounced.
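Because embodied carbon is included, the relevant comparison is lifecycle emissions (embodied plus operational over the service life) rather than energy alone. The sketch below shows the calculation. All numbers are hypothetical and are not the models behind the 15-30% figure.

```python
def lifecycle_kgco2(embodied_kgco2: float, annual_kwh: float,
                    grid_kgco2_per_kwh: float, lifespan_years: float) -> float:
    """Embodied (manufacturing, distribution) plus operational CO2 over the service life."""
    operational = annual_kwh * grid_kgco2_per_kwh * lifespan_years
    return embodied_kgco2 + operational


def percent_reduction(baseline: float, alternative: float) -> float:
    """Relative reduction of `alternative` versus `baseline`, in percent."""
    return 100.0 * (baseline - alternative) / baseline


# Hypothetical devices: the alternative uses 90% less energy but has higher embodied carbon.
baseline_total = lifecycle_kgco2(100.0, 200.0, 0.4, 5)     # 100 + 400 = 500 kg
alternative_total = lifecycle_kgco2(150.0, 20.0, 0.4, 5)   # 150 + 40 = 190 kg
lifecycle_cut = percent_reduction(baseline_total, alternative_total)  # 62.0
```

In this example a 90% cut in energy becomes a 62% cut in lifecycle emissions, because embodied carbon makes up a larger share of the new total. This is why embodied carbon has to be counted alongside operational energy.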

Water usage in cooling systems is a frequently overlooked environmental factor in computing infrastructure. Because neuromorphic systems generate less heat, they could reduce cooling requirements and, with them, the water consumed by data center cooling. This matters more as water scarcity affects more regions globally.
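The Green Grid's Water Usage Effectiveness (WUE) metric divides annual site water use by IT equipment energy, in liters per kWh. For a given facility, cooling water therefore scales with IT energy. The sketch below estimates water saved from a fractional cut in IT energy. It holds WUE fixed, which is an assumption, since real WUE varies with climate and load. The inputs are hypothetical.

```python
def cooling_water_liters(it_energy_kwh: float, wue_l_per_kwh: float) -> float:
    """Site water use implied by WUE: liters per kWh of IT equipment energy."""
    return it_energy_kwh * wue_l_per_kwh


def water_saved_liters(baseline_it_kwh: float, it_energy_reduction: float,
                       wue_l_per_kwh: float) -> float:
    """Water saved when IT energy drops by a fraction, assuming WUE stays constant."""
    if not 0.0 <= it_energy_reduction <= 1.0:
        raise ValueError("it_energy_reduction must be in [0, 1]")
    reduced_it_kwh = baseline_it_kwh * (1.0 - it_energy_reduction)
    before = cooling_water_liters(baseline_it_kwh, wue_l_per_kwh)
    after = cooling_water_liters(reduced_it_kwh, wue_l_per_kwh)
    return before - after


# Hypothetical site: 1,000,000 kWh/year of IT energy, WUE of 1.8 L/kWh, 50% IT energy cut.
saved = water_saved_liters(1_000_000, 0.5, 1.8)  # about 900,000 liters/year
```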

The scalability of environmental benefits presents perhaps the most compelling case for neuromorphic computing adoption. As these systems move from research prototypes to widespread deployment, their cumulative environmental impact could substantially contribute to meeting global sustainability targets in the technology sector, particularly as AI and edge computing applications continue their exponential growth trajectory.
