Simultaneous Localization And Mapping Algorithms For Next-Generation Robotics

SEP 5, 2025, 9 MIN READ

SLAM Technology Evolution and Objectives

Simultaneous Localization and Mapping (SLAM) technology has evolved significantly since its conceptual inception in the 1980s and formal introduction in the early 1990s. Its trajectory has been shaped by advances in computing power, sensor technology, and algorithms. Early SLAM algorithms were primarily filter-based, most notably the Extended Kalman Filter (EKF), whose update cost grows quadratically with the number of landmarks and therefore scales poorly to large environments. Particle filters and, later, graph-based optimization methods were significant milestones in addressing this limitation.

The 2000s witnessed the emergence of visual SLAM systems, leveraging camera data to construct environmental maps while simultaneously tracking the robot's position. MonoSLAM and PTAM (Parallel Tracking and Mapping) pioneered real-time visual SLAM on consumer hardware. The introduction of RGB-D sensors like Microsoft Kinect in 2010 catalyzed further innovation, enabling dense 3D reconstruction with KinectFusion and similar technologies.

Recent years have seen a paradigm shift with the integration of deep learning techniques into SLAM frameworks. Learning-based approaches have enhanced feature extraction, loop closure detection, and semantic understanding of environments. This evolution has transitioned SLAM from purely geometric mapping to semantic mapping, where objects and their relationships are identified and incorporated into the environmental model.

The current technological frontier focuses on developing SLAM algorithms that can operate robustly in dynamic, unstructured environments while maintaining computational efficiency. Edge computing and specialized hardware accelerators are being leveraged to enable real-time SLAM on resource-constrained platforms such as drones and mobile robots.

Next-generation SLAM development has three primary objectives. The first is robust performance across diverse and challenging environments, including low-texture surfaces, reflective materials, and varying lighting conditions. The second is greater long-term autonomy through improved map maintenance and adaptation to environmental changes over time. The third is lower computational cost with equal or better accuracy, which enables deployment on smaller, more energy-efficient platforms.

Additionally, there is a growing emphasis on multi-robot collaborative SLAM, where distributed systems share mapping data to construct comprehensive environmental models more efficiently. This approach presents unique challenges in data fusion, communication bandwidth optimization, and coordinate system alignment.
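The coordinate system alignment challenge can be illustrated with a minimal sketch. It assumes 2D maps and that the pose of robot B's map origin in robot A's frame is already known; in a real system that transform has to be estimated, for example from landmarks both robots have observed. The function name and the numbers are hypothetical.

```python
import math

def to_shared_frame(points, dx, dy, theta):
    """Map 2D points from robot B's frame into robot A's frame.

    (dx, dy, theta) is the assumed pose of B's map origin expressed in A's frame.
    """
    c, s = math.cos(theta), math.sin(theta)
    # Rotate each point by theta, then translate by (dx, dy).
    return [(c * x - s * y + dx, s * x + c * y + dy) for x, y in points]

# Hypothetical landmarks observed by robot B, in B's own map frame.
landmarks_b = [(1.0, 0.0), (0.0, 2.0)]

# Assumed offset: B's origin sits at (5, 3) in A's frame, rotated by 90 degrees.
merged = to_shared_frame(landmarks_b, dx=5.0, dy=3.0, theta=math.pi / 2)
```

Once every robot's landmarks are expressed in one frame, they can be merged into a single map; any error in the assumed transform shows up directly as misaligned structure.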

The ultimate goal is to develop SLAM systems that serve as reliable spatial perception frameworks for autonomous robots across applications ranging from household service robots to industrial automation, autonomous vehicles, and search-and-rescue operations. These systems must deliver centimeter-level accuracy while processing sensor data in real time with minimal latency, ensuring safe and effective robot navigation in complex, dynamic environments.

Market Demand Analysis for Advanced Robotics Navigation

The global market for advanced robotics navigation systems is experiencing unprecedented growth, driven by the increasing adoption of autonomous robots across various industries. The demand for Simultaneous Localization and Mapping (SLAM) algorithms has surged significantly as these technologies form the backbone of next-generation robotics systems. Current market projections indicate that the global SLAM technology market is expected to reach $15 billion by 2027, with a compound annual growth rate of approximately 36% from 2022 to 2027.

Industrial automation represents the largest market segment for advanced robotics navigation, accounting for nearly 40% of the total market share. Manufacturing facilities are increasingly deploying autonomous mobile robots (AMRs) equipped with sophisticated SLAM algorithms to optimize logistics, inventory management, and production processes. This trend is particularly evident in automotive, electronics, and pharmaceutical industries where precision and reliability are paramount.

The consumer robotics sector has emerged as the fastest-growing segment, with household robots such as autonomous vacuum cleaners and lawn mowers gaining significant traction. Market research indicates that over 50 million households worldwide now own at least one autonomous robot, with this number projected to triple by 2030. The demand for more sophisticated navigation capabilities in these devices is driving substantial investment in advanced SLAM technologies.

Healthcare and medical applications represent another high-growth area for SLAM-enabled robotics. Surgical robots, medication delivery systems, and patient care assistants require extremely precise navigation capabilities, creating a specialized market segment valued at approximately $3.2 billion in 2022. Hospitals and healthcare facilities are increasingly adopting these technologies to improve operational efficiency and patient outcomes.

Logistics and warehousing operations have become major adopters of SLAM-based navigation systems, with companies like Amazon, Alibaba, and DHL deploying thousands of autonomous robots in their fulfillment centers. The warehouse robotics market is growing at 45% annually, primarily driven by e-commerce expansion and labor shortages in key markets.

Regional analysis reveals that North America currently leads in SLAM technology adoption, followed closely by East Asia and Europe. However, the fastest growth is occurring in emerging markets, particularly in Southeast Asia and India, where manufacturing automation is accelerating rapidly. China has emerged as both a major consumer and producer of SLAM technologies, with substantial government investment supporting domestic robotics development.

Customer requirements are evolving toward more robust, adaptable SLAM solutions that can operate in dynamic, unstructured environments. End-users increasingly demand systems that can function reliably in challenging conditions such as poor lighting, reflective surfaces, and environments with moving obstacles.

Current SLAM Challenges and Technical Limitations

Despite significant advancements in SLAM technology, several critical challenges continue to impede the development of robust solutions for next-generation robotics. One of the most persistent issues is the performance degradation in dynamic environments. Traditional SLAM algorithms typically assume static surroundings, causing significant localization errors when objects move or environments change. This limitation severely restricts deployment in real-world scenarios like busy warehouses, crowded public spaces, or outdoor environments with unpredictable elements.

Computational efficiency remains another major bottleneck, particularly for resource-constrained platforms. High-quality SLAM implementations often demand substantial processing power and memory, making them impractical for smaller robots, drones, or consumer-grade devices. The trade-off between accuracy and computational load continues to challenge researchers seeking solutions for edge computing applications.

Long-term mapping stability presents unique difficulties, especially for persistent autonomy applications. Map drift, where small errors accumulate over time, can lead to significant localization failures during extended operations. Current loop closure techniques, while effective for short-term corrections, struggle with maintaining consistency across days or weeks of operation.
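Drift and its correction by loop closure can be sketched in one dimension. The example assumes a robot that drives out and back to its starting point, equally weighted odometry constraints, and a loop closure treated as exact; under those assumptions the least-squares solution simply spreads the accumulated residual evenly over all steps. The function names and the bias value are illustrative, not taken from any particular SLAM system.

```python
def dead_reckon(increments):
    """Integrate odometry increments into a list of poses, starting at 0."""
    poses = [0.0]
    for u in increments:
        poses.append(poses[-1] + u)
    return poses

def close_loop(increments, loop_offset=0.0):
    """Adjust increments so they sum to loop_offset (the loop-closure constraint).

    With equal weights, the least-squares correction is the same for every step.
    """
    residual = sum(increments) - loop_offset
    correction = residual / len(increments)
    return [u - correction for u in increments]

# Hypothetical trip: 4 steps out and 4 steps back, 1 m each.
true_steps = [1.0] * 4 + [-1.0] * 4
# Assumed odometry bias: every step over-reads by 2 cm.
biased = [u + 0.02 for u in true_steps]

drifted = dead_reckon(biased)                # ends about 0.16 m from the start
corrected = dead_reckon(close_loop(biased))  # ends back at the start
```

Real pose graphs are multi-dimensional and weight each constraint by its uncertainty, so they need a nonlinear solver, but the principle of redistributing accumulated error once a revisited place is recognized is the same.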

Feature-poor environments such as corridors with repetitive patterns, large open spaces, or textureless surfaces continue to challenge feature-based SLAM approaches. In these scenarios, algorithms struggle to identify distinctive landmarks for reliable localization, often resulting in catastrophic tracking failures or map inconsistencies.

Multi-sensor fusion, while promising, introduces complexity in calibration, synchronization, and data association. Effectively combining information from cameras, LiDAR, IMUs, and other sensors remains challenging, particularly when sensors operate at different frequencies or experience varying degrees of noise and uncertainty.
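One small piece of the synchronization problem is resampling a fast sensor at the timestamps of a slower one. The sketch below assumes a scalar stream, sorted timestamps, and linear interpolation between neighboring samples; the sample values are hypothetical.

```python
from bisect import bisect_left

def interpolate_at(timestamps, values, t):
    """Linearly interpolate a scalar sensor stream at time t.

    timestamps must be sorted in ascending order and t must lie within their range.
    """
    if not timestamps[0] <= t <= timestamps[-1]:
        raise ValueError("t is outside the sampled range")
    i = bisect_left(timestamps, t)
    if timestamps[i] == t:
        return values[i]  # exact hit, no interpolation needed
    t0, t1 = timestamps[i - 1], timestamps[i]
    w = (t - t0) / (t1 - t0)
    return (1 - w) * values[i - 1] + w * values[i]

# Hypothetical IMU samples (time in ms, one gyro axis) and a camera frame at 15 ms.
imu_t = [0.0, 10.0, 20.0]
imu_gyro = [0.0, 1.0, 3.0]
gyro_at_frame = interpolate_at(imu_t, imu_gyro, 15.0)  # 2.0
```

Orientation data would need interpolation on the rotation group rather than per component, and clock offsets between sensors must be calibrated first; this sketch covers neither.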

Robustness to environmental conditions represents another significant limitation. Factors such as lighting variations, weather changes, seasonal differences, and extreme conditions (fog, rain, snow) can dramatically impact sensor readings, particularly for vision-based systems. Current algorithms lack sufficient adaptability to these variations.

Semantic understanding integration, though advancing rapidly, still faces implementation challenges. While incorporating object recognition and scene understanding can enhance SLAM performance, the computational overhead and complexity of maintaining semantic consistency across mapping sessions remain problematic.

Finally, the lack of standardized benchmarks and evaluation metrics makes it difficult to objectively compare different SLAM approaches across varied operational conditions, hindering systematic progress in the field.

Current SLAM Implementation Approaches

01 Visual SLAM algorithms for navigation and mapping

Visual SLAM (Simultaneous Localization and Mapping) algorithms use camera data to create maps of unknown environments while simultaneously tracking the position of the device. These algorithms process visual features from images to estimate motion and build 3D representations of surroundings. They are particularly useful in robotics, autonomous vehicles, and augmented reality applications where GPS may be unavailable or unreliable.

- Visual SLAM Algorithms for Autonomous Navigation: Visual Simultaneous Localization and Mapping (SLAM) algorithms use camera data to create maps of unknown environments while simultaneously tracking the position of the device. These algorithms process visual features to estimate motion and construct 3D representations of surroundings, enabling autonomous navigation for robots, drones, and vehicles. Advanced implementations incorporate deep learning techniques to improve feature detection and mapping accuracy in dynamic environments.

- LiDAR-based SLAM for Precise Environmental Mapping: LiDAR-based SLAM algorithms utilize laser scanning technology to create high-precision 3D maps of environments. These systems measure distances to objects by analyzing reflected laser pulses, enabling accurate spatial mapping even in low-light conditions. The algorithms process point cloud data to identify geometric features and landmarks, facilitating robust localization and mapping for autonomous vehicles, robotics, and industrial applications.

- Sensor Fusion Approaches in SLAM Systems: Sensor fusion SLAM algorithms integrate data from multiple sensor types (cameras, LiDAR, IMU, GPS) to enhance mapping accuracy and robustness. By combining complementary sensor information, these systems overcome limitations of single-sensor approaches, providing reliable operation across diverse environmental conditions. Advanced fusion techniques employ probabilistic methods like Kalman filters or particle filters to optimally combine sensor data while accounting for measurement uncertainties.

- Real-time SLAM Processing Techniques: Real-time SLAM processing techniques focus on optimizing computational efficiency to enable simultaneous mapping and localization with minimal latency. These algorithms employ various approaches including sparse feature tracking, keyframe selection, and parallel processing architectures to reduce computational load while maintaining accuracy. Implementation strategies include loop closure detection to correct accumulated errors and graph-based optimization methods that efficiently manage large-scale mapping operations.

- SLAM Applications in Mobile and Wearable Devices: SLAM algorithms adapted for mobile and wearable devices address unique constraints including limited processing power, battery life, and sensor quality. These implementations often utilize lightweight visual-inertial approaches that combine camera data with motion sensors to achieve efficient localization and mapping. Applications include augmented reality experiences, indoor navigation systems, and spatial computing interfaces that enable context-aware computing in consumer electronics.
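The probabilistic fusion mentioned in the sensor fusion item above can be reduced to its simplest case: two independent Gaussian estimates of the same scalar quantity, combined with a Kalman measurement update. The sensor values and variances below are hypothetical, and a full SLAM filter would apply the same idea to multi-dimensional states.

```python
def fuse(mean_a, var_a, mean_b, var_b):
    """Fuse two independent Gaussian estimates of the same quantity.

    This is the scalar Kalman measurement update: estimate A is the prior,
    estimate B is the new measurement.
    """
    gain = var_a / (var_a + var_b)  # trust B more when A is uncertain
    mean = mean_a + gain * (mean_b - mean_a)
    var = (1.0 - gain) * var_a      # never larger than either input variance
    return mean, var

# Hypothetical range to a wall: camera depth says 2.10 m (variance 0.04),
# LiDAR says 2.00 m (variance 0.01).
mean, var = fuse(2.10, 0.04, 2.00, 0.01)
```

The fused estimate lands closer to the more certain sensor (here about 2.02 m) and has a lower variance than either input, which is the practical benefit of combining complementary sensors.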

02 LiDAR-based SLAM techniques for precise positioning

LiDAR-based SLAM algorithms utilize laser scanning technology to create accurate 3D maps of environments. These techniques offer high precision in distance measurement and are less affected by lighting conditions compared to visual methods. LiDAR SLAM is particularly effective for autonomous navigation systems in complex environments, providing reliable positioning data even in challenging conditions.

03 Fusion-based SLAM approaches combining multiple sensors

Fusion-based SLAM algorithms integrate data from multiple sensors such as cameras, LiDAR, IMU, and radar to improve mapping accuracy and robustness. By combining complementary sensor information, these approaches overcome limitations of single-sensor systems, providing more reliable performance across diverse environments and conditions. Sensor fusion techniques help address challenges like occlusion, lighting variations, and dynamic objects.

04 Real-time SLAM optimization techniques

Real-time SLAM optimization techniques focus on improving computational efficiency while maintaining accuracy. These methods include loop closure detection, graph optimization, and parallel processing approaches that enable SLAM algorithms to operate with minimal latency on resource-constrained devices. Such optimizations are crucial for applications requiring immediate response, such as autonomous navigation and augmented reality.

05 SLAM applications in mobile robotics and autonomous systems

SLAM algorithms are extensively applied in mobile robotics and autonomous systems for navigation, obstacle avoidance, and environment interaction. These applications include domestic robots, industrial automation, search and rescue operations, and autonomous vehicles. The implementations focus on adapting SLAM techniques to specific operational requirements, environmental constraints, and hardware limitations of various robotic platforms.

Leading Companies and Research Institutions in SLAM

The SLAM (Simultaneous Localization And Mapping) technology market for next-generation robotics is currently in a growth phase, with an expanding market size driven by increasing applications in autonomous vehicles, service robots, and industrial automation. The technology has reached moderate maturity with established players like iRobot, Samsung, and Bosch leading commercial applications, while newer entrants such as Aurora Operations and Avidbots are pushing innovation boundaries. Academic institutions including Nanyang Technological University and Zhejiang University contribute significantly to research advancements. The competitive landscape is diversifying with automotive companies (Honda, ZF Friedrichshafen) and tech giants (Intel) investing heavily in SLAM capabilities, indicating the technology's critical role in future robotics applications across multiple industries.

iRobot Corp.

Technical Solution: iRobot has developed a proprietary Visual SLAM (vSLAM) technology that combines visual data from low-cost cameras with traditional sensor inputs to create accurate maps of indoor environments. Their latest Roomba j7+ models utilize a front-facing camera and advanced machine learning algorithms to identify and avoid obstacles in real-time while maintaining precise localization. The system employs a feature-based SLAM approach that extracts distinctive visual landmarks from the environment and uses them as reference points for navigation. iRobot's implementation includes a hierarchical mapping system that maintains both detailed local maps for immediate navigation and compressed global maps for overall positioning, allowing for efficient operation in changing home environments. Their PrecisionVision Navigation system can recognize and classify over 80 common household objects and adapt cleaning patterns accordingly.

Strengths: Highly optimized for consumer robotics with excellent obstacle avoidance and room recognition capabilities; efficient memory usage allows mapping of large homes with limited onboard computing. Weaknesses: Primarily designed for structured indoor environments; performance degrades in low-light conditions or visually homogeneous spaces.

Aurora Operations, Inc.

Technical Solution: Aurora has developed the Aurora Driver, a self-driving system whose localization and mapping stack is designed for autonomous vehicles operating in complex urban environments. Their approach integrates multi-modal sensor fusion combining high-definition LiDAR, radar, and camera data to create centimeter-accurate 3D maps. Aurora's FirstLight LiDAR technology uses frequency modulated continuous wave (FMCW) principles that can detect velocity and position simultaneously, significantly improving object tracking in dynamic environments. Their SLAM implementation employs a probabilistic factor graph optimization framework that continuously refines map accuracy while accounting for sensor uncertainty. The system maintains multiple map representations at different scales and levels of abstraction, from detailed local occupancy grids to semantic road network maps. Aurora's SLAM technology incorporates machine learning to identify and track moving objects, distinguishing them from static environmental features to prevent map corruption from dynamic elements.

Strengths: Superior performance in challenging weather conditions; excellent long-range detection capabilities; robust handling of dynamic objects in complex traffic scenarios. Weaknesses: Requires expensive, specialized hardware; computationally intensive processing demands significant onboard computing resources.

Key SLAM Patents and Technical Innovations

System and method for probabilistic multi-robot slam

Patent WO2021065122A1

Innovation

- Robots exchange particles instead of raw measurements, using probabilistic sampling and pairing to reduce computational complexity while ensuring Bayesian inference guarantees, allowing for efficient communication and processing with low-power transceivers and decentralized computation.

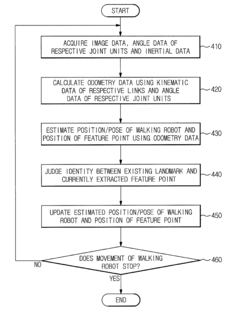

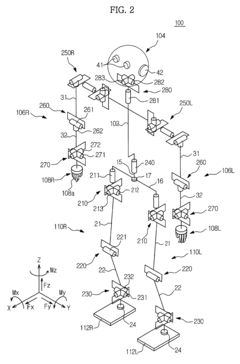

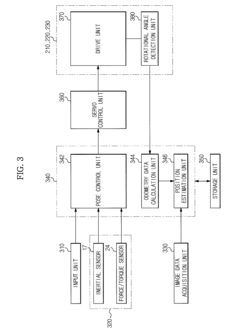

Walking robot and simultaneous localization and mapping method thereof

Patent US8873831B2 (Active)

Innovation

- Odometry data, derived from kinematic and rotational angle data, is integrated with image-based SLAM to improve the accuracy and convergence of localization, and inertial data is fused with the odometry data to make position estimation and mapping more precise.

Hardware-Algorithm Integration Strategies

The integration of hardware and software components represents a critical frontier in advancing SLAM technologies for next-generation robotics. Effective hardware-algorithm integration strategies must address the fundamental challenge of aligning computational requirements with hardware capabilities while maintaining real-time performance. Current integration approaches typically follow three paradigms: embedded processing, distributed computing, and heterogeneous computing architectures.

Embedded processing solutions integrate SLAM algorithms directly into robotic platforms through specialized hardware such as FPGAs, ASICs, and embedded GPU or VPU modules. These implementations achieve significant power efficiency improvements, with recent developments demonstrating up to 75% reduction in power consumption compared to general-purpose computing solutions. The NVIDIA Jetson series and Intel's Movidius VPUs exemplify this approach, offering optimized hardware acceleration for visual SLAM operations while maintaining the compact form factors essential for mobile robotics.

Distributed computing frameworks spread SLAM workloads across multiple processing units, enabling more complex environmental mapping while maintaining real-time performance. Cloud-robotics approaches offload computationally intensive operations such as global map optimization and loop closure detection to remote servers, while keeping time-sensitive localization functions on-device. This hybrid approach has demonstrated latency reductions of 30-40% in complex environments compared to purely on-device processing.

Heterogeneous computing architectures represent the most promising integration strategy, combining specialized processors (GPUs, TPUs, NPUs) with traditional CPUs to optimize different aspects of SLAM pipelines. Recent implementations have achieved 3-5x performance improvements by mapping specific algorithm components to their ideal processing architecture. For instance, feature extraction operations benefit significantly from GPU acceleration, while graph optimization algorithms perform better on multi-core CPUs.

Hardware-specific algorithm optimization techniques further enhance integration efficiency. Techniques such as fixed-point arithmetic conversion, model quantization, and algorithm pruning have enabled complex SLAM systems to operate on resource-constrained platforms. The ROS 2 framework has emerged as a standard middleware solution facilitating hardware-algorithm integration through hardware abstraction layers and standardized interfaces.
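Fixed-point arithmetic conversion can be illustrated with a small sketch. It assumes a signed format with 8 fractional bits; the helper names and the bit width are illustrative choices, and production code would also handle saturation and pick the width per variable.

```python
def to_fixed(x, frac_bits=8):
    """Quantize a float to a signed fixed-point integer with frac_bits fractional bits."""
    return round(x * (1 << frac_bits))

def from_fixed(q, frac_bits=8):
    """Convert a fixed-point integer back to a float."""
    return q / (1 << frac_bits)

def fixed_mul(a, b, frac_bits=8):
    """Multiply two fixed-point values and rescale the product back to frac_bits.

    The right shift rounds toward negative infinity, a common hardware behavior.
    """
    return (a * b) >> frac_bits

a = to_fixed(1.5)                        # 384
b = to_fixed(2.25)                       # 576
product = from_fixed(fixed_mul(a, b))    # 3.375, exact for these inputs

# Values that are not multiples of 1/256 pick up a small quantization error.
error = abs(from_fixed(to_fixed(0.1)) - 0.1)
```

All arithmetic after the initial conversion uses integers only, which is what makes this representation attractive on FPGAs and microcontrollers without a floating-point unit; the cost is the bounded quantization error shown in the last line.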

Looking forward, emerging neuromorphic computing architectures and event-based sensors present opportunities for radical redesigns of SLAM algorithms. These technologies mimic biological neural systems, potentially enabling ultra-low-power SLAM implementations with enhanced performance in dynamic environments. Early prototypes have demonstrated promising results, particularly for edge-case scenarios like high-speed motion and extreme lighting conditions that challenge conventional SLAM approaches.

Real-time Performance Optimization Methods

Real-time performance optimization remains a critical challenge for SLAM algorithms in next-generation robotics. Current implementations often struggle with computational bottlenecks when processing high-resolution sensor data streams in dynamic environments. The optimization techniques can be categorized into hardware acceleration, algorithmic improvements, and hybrid approaches that combine both strategies.

Hardware acceleration leverages specialized processors such as GPUs, FPGAs, and dedicated SLAM accelerators to parallelize computationally intensive tasks. Recent benchmarks demonstrate that GPU-accelerated feature extraction can achieve up to a 5x speedup over CPU-only implementations. NVIDIA's Jetson platforms, which are designed specifically for edge AI applications, have shown promising results for real-time SLAM in resource-constrained robotic systems, reducing latency by 60-70% in feature matching operations.

Algorithmic optimizations focus on reducing computational complexity while maintaining accuracy. Sparse mapping techniques selectively process keyframes rather than every frame, significantly reducing computational overhead. Adaptive feature selection algorithms dynamically adjust the number of tracked features based on scene complexity and available computational resources. Research indicates that these approaches can reduce processing time by 30-40% with minimal impact on localization accuracy.
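
The two ideas in this paragraph can be sketched in a few lines. The example below is a minimal illustration under stated assumptions: the keyframe test uses motion and tracking-quality thresholds, and the feature budget is scaled by how the last frame's processing time compares with a target. The function names, thresholds, and the use of frame time as a proxy for available compute are all hypothetical choices for this sketch.

```python
import math


def is_keyframe(translation_m: float, rotation_rad: float, tracked_ratio: float,
                max_translation_m: float = 0.2,
                max_rotation_rad: float = math.radians(10.0),
                min_tracked_ratio: float = 0.6) -> bool:
    """Promote a frame to keyframe when the camera has moved enough since the
    last keyframe, or when too few map features are still being tracked."""
    return (translation_m > max_translation_m
            or rotation_rad > max_rotation_rad
            or tracked_ratio < min_tracked_ratio)


def adapt_feature_budget(current_budget: int, last_frame_ms: float,
                         target_ms: float = 33.0,
                         min_features: int = 200,
                         max_features: int = 2000) -> int:
    """Scale the number of tracked features toward a per-frame time target."""
    scale = target_ms / max(last_frame_ms, 1e-3)
    # Limit the change per step so the budget does not oscillate.
    scale = min(max(scale, 0.5), 1.5)
    new_budget = int(current_budget * scale)
    return min(max(new_budget, min_features), max_features)
```

For instance, a frame that took 66 ms against a 33 ms target halves a 1000-feature budget to 500, while frames that fail the keyframe test are tracked but never passed on to the mapping stage.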

Memory management strategies play a crucial role in real-time performance. Efficient data structures for map representation, such as octrees and sparse voxel grids, reduce memory footprint and access times. Loop closure detection algorithms have been optimized through vocabulary tree implementations and binary descriptors, decreasing the computational burden of place recognition by up to 50%.
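
To make the data structures concrete, here is a minimal sketch of a sparse voxel grid backed by a hash map, together with the Hamming distance used to compare binary descriptors. The class and function names are assumptions for illustration; production systems typically use optimized native implementations.

```python
import math
from collections import defaultdict


class SparseVoxelGrid:
    """Stores only voxels that have received at least one point."""

    def __init__(self, voxel_size: float):
        self.voxel_size = voxel_size
        self.counts = defaultdict(int)   # (ix, iy, iz) -> number of points

    def key(self, x: float, y: float, z: float):
        s = self.voxel_size
        return (math.floor(x / s), math.floor(y / s), math.floor(z / s))

    def insert(self, x: float, y: float, z: float) -> None:
        self.counts[self.key(x, y, z)] += 1

    def is_occupied(self, x: float, y: float, z: float, min_hits: int = 1) -> bool:
        # .get() avoids creating an empty entry for unseen voxels.
        return self.counts.get(self.key(x, y, z), 0) >= min_hits


def hamming_distance(a: bytes, b: bytes) -> int:
    """Number of differing bits between two equal-length binary descriptors."""
    if len(a) != len(b):
        raise ValueError("descriptors must have the same length")
    return bin(int.from_bytes(a, "big") ^ int.from_bytes(b, "big")).count("1")
```

Memory use grows with the number of occupied voxels rather than with the volume of the mapped space, and descriptor comparison reduces to an XOR followed by a bit count, which is why binary descriptors are cheap to match.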

Multi-threading and task scheduling frameworks enable better utilization of available computing resources. Modern SLAM systems employ pipeline parallelization, separating tracking, mapping, and loop closure into concurrent threads. This approach has demonstrated the ability to maintain real-time performance even when processing data from multiple sensors simultaneously.
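
The pipeline structure described above can be sketched with three threads connected by queues. This is a structural illustration only: the stage bodies are placeholders, the function name run_pipeline and the fixed keyframe interval are assumptions, and in standard CPython the threads show the decoupling of stages rather than true CPU parallelism.

```python
import queue
import threading


def run_pipeline(frames, keyframe_every: int = 3):
    """Run tracking, mapping, and loop closure as concurrent stages."""
    keyframe_queue = queue.Queue()   # tracking -> mapping
    loop_queue = queue.Queue()       # mapping -> loop closure
    stop = object()                  # sentinel marking the end of the stream
    results = {"tracked": [], "mapped": [], "loop_checked": []}

    def tracking():
        for i, frame in enumerate(frames):
            results["tracked"].append(frame)      # placeholder for pose estimation
            if i % keyframe_every == 0:
                keyframe_queue.put(frame)         # hand keyframes to mapping
        keyframe_queue.put(stop)

    def mapping():
        while True:
            kf = keyframe_queue.get()
            if kf is stop:
                loop_queue.put(stop)
                break
            results["mapped"].append(kf)          # placeholder for map update
            loop_queue.put(kf)

    def loop_closure():
        while True:
            kf = loop_queue.get()
            if kf is stop:
                break
            results["loop_checked"].append(kf)    # placeholder for place recognition

    threads = [threading.Thread(target=stage)
               for stage in (tracking, mapping, loop_closure)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

Because tracking only enqueues keyframes and never waits for mapping or loop closure to finish, a slow back-end stage does not stall per-frame pose estimation.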

Edge computing architectures distribute SLAM processing between onboard systems and cloud resources. Lightweight SLAM variants run on the robot for immediate navigation needs, while computationally intensive tasks like global map optimization are offloaded to more powerful remote systems. This hybrid approach balances real-time responsiveness with map quality and consistency requirements.

Quantization and model compression techniques have emerged as promising solutions for resource-constrained platforms. Recent research shows that 8-bit quantized neural networks for visual SLAM can achieve comparable accuracy to full-precision models while reducing memory requirements by 75% and inference time by 40%.
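
The 75% memory figure is what one would expect from storing weights as 8-bit integers instead of 32-bit floats. The sketch below shows symmetric per-tensor int8 quantization using only the standard library; the function names and the single shared scale factor are assumptions for illustration, and real deployments would normally rely on a framework's quantization tooling.

```python
from array import array


def quantize_int8(weights):
    """Map float weights to int8 values in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights) or 1.0   # avoid a zero scale
    scale = max_abs / 127.0
    q = array("b", (max(-127, min(127, round(w / scale))) for w in weights))
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from int8 values."""
    return [v * scale for v in q]


def memory_saving(num_weights: int) -> float:
    """Fraction of storage saved by int8 relative to 32-bit floats."""
    full_bytes = array("f").itemsize * num_weights
    quant_bytes = array("b").itemsize * num_weights
    return 1.0 - quant_bytes / full_bytes
```

Each reconstructed weight differs from the original by at most half a quantization step (scale / 2), which is the source of the small accuracy loss that quantized models have to tolerate.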
