Simultaneous Localization And Mapping For Autonomous Drones

SEP 5, 2025 | 9 MIN READ

SLAM Technology Background and Objectives

Simultaneous Localization and Mapping (SLAM) technology has evolved significantly over the past three decades, transforming from theoretical concepts in robotics to practical applications across multiple domains. The fundamental challenge SLAM addresses is enabling autonomous systems to construct or update maps of unknown environments while simultaneously tracking their location within these environments—a classic "chicken and egg" problem in robotics.

The development of SLAM can be traced back to the early 1990s when probabilistic approaches began to formalize the problem. Early implementations relied heavily on extended Kalman filters and particle filters, which provided mathematical frameworks for handling uncertainty in sensor measurements and motion. These approaches, while groundbreaking, were computationally intensive and often limited to small-scale environments.

The mid-2000s witnessed a paradigm shift with the introduction of graph-based SLAM methods, which represented the problem as optimization over a graph of spatial constraints. This period also saw the integration of visual information through visual SLAM (vSLAM) techniques, expanding beyond traditional laser-based approaches and enabling more robust environmental understanding.

For autonomous drones specifically, SLAM technology faces unique challenges due to the three-dimensional nature of aerial navigation, limited payload capacity affecting sensor selection, and the dynamic environments in which drones typically operate. Traditional SLAM algorithms designed for ground robots required significant adaptation to accommodate these constraints.

Recent advancements have focused on real-time performance, with lightweight algorithms optimized for the computational constraints of drone platforms. The integration of multiple sensor modalities—including visual cameras, depth sensors, inertial measurement units (IMUs), and occasionally lightweight LiDAR—has become standard practice to enhance robustness across varying environmental conditions.

The primary technical objectives for drone-based SLAM systems include achieving accurate localization with centimeter-level precision, generating detailed environmental maps for navigation and obstacle avoidance, maintaining performance in GPS-denied environments, and operating within the strict power and computational constraints inherent to aerial platforms.

Looking forward, the field is moving toward semantic SLAM systems that not only map geometric structures but also understand the semantic meaning of environmental elements. This evolution aims to enable higher-level reasoning about environments, facilitating more complex autonomous behaviors and human-drone interactions.

The convergence of SLAM with deep learning approaches represents another significant trend, with neural networks increasingly employed for feature extraction, loop closure detection, and scene understanding. These hybrid approaches promise to address longstanding challenges in dynamic environment mapping and operation under varying lighting conditions.

Market Demand Analysis for Autonomous Drone Navigation

The global market for autonomous drone navigation systems, particularly those utilizing Simultaneous Localization And Mapping (SLAM) technology, has experienced significant growth in recent years. This expansion is driven by increasing applications across multiple sectors including agriculture, construction, security, delivery services, and entertainment. Industry analysts project the autonomous drone market to reach $23.7 billion by 2027, with SLAM-enabled navigation systems representing a crucial component of this growth trajectory.

Commercial sectors demonstrate particularly strong demand signals. In construction and infrastructure inspection, autonomous drones equipped with advanced SLAM capabilities reduce inspection times by up to 85% while improving safety outcomes. The agriculture sector has embraced precision farming applications, with autonomous drones providing critical data for crop monitoring, resulting in yield improvements averaging 15-20% for early adopters.

Security and surveillance applications represent another significant market segment, with government and private security firms increasingly deploying autonomous drone systems for perimeter monitoring and emergency response. The logistics and delivery sector has emerged as a high-potential growth area, with major e-commerce companies investing heavily in autonomous drone delivery infrastructure to reduce last-mile delivery costs.

Consumer demand for autonomous drones has similarly accelerated, particularly in the prosumer photography and videography segments. The ability of SLAM-equipped drones to navigate complex environments while maintaining stable flight paths has created new creative possibilities for content creators.

Market research indicates several key demand drivers for SLAM technology in autonomous drones. First, there is increasing pressure for solutions that operate effectively in GPS-denied environments, such as indoor spaces, urban canyons, and remote areas. Second, customers across sectors demand systems with lower computational requirements to extend flight times while maintaining navigation accuracy. Third, there is growing interest in multi-sensor fusion approaches that combine visual, LiDAR, and inertial data for more robust performance across varied environmental conditions.

Regional analysis reveals differentiated market needs. North American and European markets prioritize high-precision navigation for specialized industrial applications, while emerging Asian markets demonstrate stronger demand for cost-effective solutions that can be deployed at scale. The Middle Eastern market shows particular interest in autonomous drone systems capable of operating in extreme environmental conditions.

Industry surveys indicate that end-users are willing to pay premium prices for SLAM solutions that demonstrate consistent performance, with reliability and accuracy ranking as the most important purchasing factors across all market segments.

Current SLAM Challenges in Aerial Environments

Despite significant advancements in SLAM technology for ground-based autonomous systems, aerial environments present unique challenges that continue to impede the widespread deployment of fully autonomous drones. The dynamic nature of aerial navigation introduces motion complexities absent in ground vehicles, including six degrees of freedom movement and susceptibility to wind disturbances, which can cause rapid changes in position and orientation that are difficult for current SLAM algorithms to process in real-time.

Scale and feature variability represent another substantial challenge. Drones operating at different altitudes encounter dramatic changes in environmental scale and feature appearance. A building that appears as a distinct landmark from one altitude may become an expansive, feature-rich landscape at lower altitudes, requiring adaptive feature detection and matching algorithms that can maintain consistency across these scale transitions.
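The altitude-dependent scale change described above can be made concrete with the pinhole ground-sampling-distance relation. The following is a minimal sketch, assuming a downward-facing camera over flat ground; the function names and the example lens and pixel values are illustrative assumptions, not figures from any particular drone.

```python
def ground_sampling_distance(altitude_m, focal_length_mm, pixel_pitch_um):
    """Ground distance (metres) covered by one pixel for a downward-facing pinhole camera."""
    if min(altitude_m, focal_length_mm, pixel_pitch_um) <= 0:
        raise ValueError("altitude, focal length and pixel pitch must be positive")
    # Similar triangles: ground size / altitude = pixel size / focal length.
    return altitude_m * (pixel_pitch_um * 1e-6) / (focal_length_mm * 1e-3)


def apparent_width_px(object_width_m, altitude_m, focal_length_mm, pixel_pitch_um):
    """Approximate width in pixels of a ground object seen from the given altitude."""
    gsd = ground_sampling_distance(altitude_m, focal_length_mm, pixel_pitch_um)
    return object_width_m / gsd


if __name__ == "__main__":
    # Hypothetical camera: 8 mm lens, 2.4 micrometre pixels; 20 m wide rooftop.
    for altitude in (100.0, 10.0):
        width = apparent_width_px(20.0, altitude, 8.0, 2.4)
        print(f"altitude {altitude:5.1f} m: rooftop spans about {width:.0f} px")
```

Because apparent size scales inversely with altitude, a tenfold descent makes the same structure ten times larger in the image, which is why feature detectors tuned for one altitude may fail to re-match the same landmark at another.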

Resource constraints significantly limit SLAM capabilities on aerial platforms. The stringent weight limitations of drones necessitate lightweight computing hardware, restricting the computational power available for complex SLAM operations. Additionally, power consumption concerns further constrain processing capabilities, as energy-intensive computations directly impact flight duration and operational range.

Environmental diversity compounds these challenges. Drones must operate across varied settings—from structured urban environments with abundant visual features to featureless open spaces like fields or water bodies where traditional feature-based SLAM approaches struggle. This diversity demands robust algorithms capable of adapting to changing environmental conditions without manual reconfiguration.

Sensor limitations present further obstacles. Visual sensors suffer from motion blur during rapid movements and struggle in low-light conditions. LiDAR systems, while providing precise depth information, add weight and power demands that smaller drones cannot accommodate. Sensor fusion approaches that combine multiple data sources show promise but increase system complexity and computational requirements.
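As a rough illustration of why fusing sensors helps, the sketch below combines independent estimates of the same quantity by inverse-variance weighting, the simplest static form of the fusion that Kalman-style filters perform. The function name and the example variances are assumptions chosen for illustration.

```python
def fuse_estimates(estimates):
    """Fuse independent (value, variance) estimates by inverse-variance weighting.

    Returns the fused value and its variance, which is never larger than
    the smallest input variance.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(variance <= 0 for _, variance in estimates):
        raise ValueError("variances must be positive")
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    return fused_value, 1.0 / total_weight


if __name__ == "__main__":
    # Hypothetical altitude readings in metres: a noisy visual estimate
    # and a more precise range-sensor estimate.
    visual = (10.4, 0.04)
    ranging = (10.0, 0.01)
    value, variance = fuse_estimates([visual, ranging])
    print(f"fused altitude {value:.2f} m, variance {variance:.4f}")
```

The fused estimate leans toward the more precise sensor while still using the noisier one, at the cost of needing a trustworthy variance for every input, which is part of the added system complexity the paragraph above mentions.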

Dynamic environments with moving objects introduce additional complexity, as SLAM algorithms must distinguish between static environmental features and temporary dynamic elements to avoid mapping errors. This challenge becomes particularly acute in populated areas where human and vehicle movements are common.

GPS-denied environments such as indoor spaces, urban canyons, or areas with electromagnetic interference eliminate the possibility of using global positioning as a reference, forcing complete reliance on onboard SLAM capabilities. In these scenarios, error accumulation becomes a critical concern, as small inaccuracies compound over time without external correction mechanisms.
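The compounding of small errors can be sketched with a toy dead-reckoning model: a constant accelerometer bias, integrated twice with no external correction. This is a simplified illustration under assumed values (the bias magnitude and time step are hypothetical), not a model of any specific inertial sensor.

```python
def position_error_from_accel_bias(bias_mps2, duration_s, dt=0.01):
    """Position error (metres) after integrating a constant accelerometer bias twice."""
    velocity_error = 0.0
    position_error = 0.0
    steps = int(round(duration_s / dt))
    for _ in range(steps):
        velocity_error += bias_mps2 * dt       # first integration: bias -> velocity error
        position_error += velocity_error * dt  # second integration: velocity -> position error
    return position_error


if __name__ == "__main__":
    # Hypothetical uncorrected bias of 0.05 m/s^2.
    for seconds in (10, 60):
        error = position_error_from_accel_bias(0.05, seconds)
        print(f"after {seconds:3d} s: about {error:.1f} m of drift")
```

With these assumed numbers the error is roughly 2.5 m after 10 s and roughly 90 m after 60 s: six times the flight time gives about thirty-six times the drift, since the error grows with the square of elapsed time.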

Current SLAM Solutions for Autonomous Drones

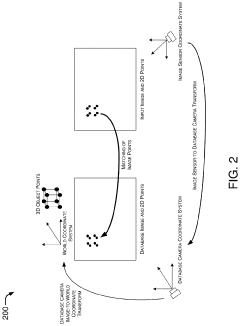

01 Visual SLAM techniques for environment mapping

Visual SLAM techniques use camera data to simultaneously build maps of unknown environments while tracking the position of the device within that environment. These systems process visual features from images to create 3D representations of surroundings, enabling applications in augmented reality, robotics, and autonomous navigation. Advanced algorithms extract distinctive visual landmarks from camera feeds to establish spatial relationships and construct coherent environmental models.

- Visual SLAM techniques for environment mapping: Visual SLAM techniques use camera data to simultaneously build maps of unknown environments while tracking the position of the device within that environment. These systems process visual features from images to create 3D representations of surroundings, enabling applications in augmented reality, robotics, and autonomous navigation. Advanced algorithms extract distinctive points from camera frames and track them across sequential images to estimate motion and construct environmental maps.

- Sensor fusion approaches for improved SLAM accuracy: Combining data from multiple sensors enhances SLAM system performance and reliability. These approaches integrate information from cameras, LiDAR, IMUs, GPS, and other sensors to overcome limitations of single-sensor systems. Sensor fusion techniques help address challenges like poor lighting conditions, featureless environments, or rapid movements by leveraging complementary sensor strengths. This results in more robust localization and mapping capabilities across diverse operational scenarios.

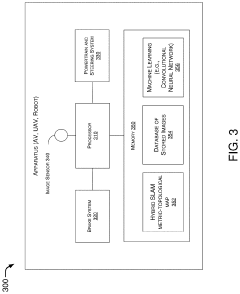

- Machine learning and AI-enhanced SLAM systems: Machine learning and artificial intelligence techniques are being incorporated into SLAM systems to improve performance and adaptability. These approaches use neural networks and other AI methods to enhance feature detection, object recognition, and scene understanding. ML-enhanced SLAM systems can better handle dynamic environments, recognize previously visited locations, and make predictions about spatial relationships. This integration enables more intelligent mapping and navigation capabilities in complex real-world settings.

- SLAM for autonomous vehicles and mobile robotics: SLAM technologies specifically designed for autonomous vehicles and mobile robots address unique challenges related to dynamic environments and real-time operation requirements. These systems must handle varying speeds, changing lighting conditions, and moving objects while maintaining precise localization. Specialized algorithms optimize computational efficiency for mobile platforms with limited resources, enabling reliable navigation in urban environments, indoor spaces, and off-road scenarios.

- Loop closure and global optimization techniques: Loop closure and global optimization are critical components of SLAM systems that correct accumulated errors when revisiting previously mapped areas. These techniques identify when a device returns to a known location and adjust the entire map to maintain consistency. Advanced algorithms compare current observations with stored map features to detect loops and apply mathematical optimization to distribute errors across the trajectory. This process significantly improves long-term mapping accuracy and enables the creation of large-scale consistent environmental representations.

02 Sensor fusion approaches for improved SLAM accuracy

Combining data from multiple sensors enhances SLAM system performance and reliability. These approaches integrate information from cameras, LiDAR, IMUs, GPS, and other sensors to overcome limitations of single-sensor systems. Sensor fusion techniques compensate for individual sensor weaknesses, providing more robust localization in challenging environments such as low-light conditions or featureless spaces, while improving mapping accuracy and reducing drift errors.

03 Machine learning and AI-enhanced SLAM systems

Machine learning and artificial intelligence techniques are being incorporated into SLAM systems to improve feature detection, object recognition, and scene understanding. These approaches enable more intelligent mapping by recognizing and classifying objects within environments, predicting movement patterns, and adapting to changing conditions. Deep learning models help SLAM systems better interpret complex environments and make more accurate localization decisions in real-time applications.

04 Real-time SLAM for autonomous vehicles and robotics

Real-time SLAM implementations focus on processing environmental data with minimal latency for autonomous navigation applications. These systems prioritize computational efficiency while maintaining sufficient accuracy for safe operation of vehicles and robots. Optimized algorithms balance processing requirements with performance needs, enabling autonomous systems to navigate dynamic environments while continuously updating their position and environmental understanding.

05 Loop closure and global optimization techniques

Loop closure algorithms detect when a system returns to a previously mapped location, allowing for correction of accumulated errors in SLAM systems. These techniques identify matching features between current and past observations to recognize revisited areas. Global optimization methods then adjust the entire map and trajectory to maintain consistency, reducing drift and improving overall mapping accuracy. These approaches are essential for creating coherent maps of large environments during extended operation.
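How a loop closure redistributes accumulated error can be sketched with a one-dimensional pose graph. The example below is a toy least-squares solver using Gauss-Seidel sweeps, with pose 0 held fixed; the function name, edge format, and drifted odometry values are assumptions for illustration, and production systems use dedicated nonlinear solvers over full 3D poses.

```python
def optimize_pose_graph(num_poses, edges, sweeps=500):
    """Least-squares 1D pose graph optimisation by Gauss-Seidel sweeps.

    edges: list of (i, j, measured_offset, weight), each asserting
    x[j] - x[i] ~= measured_offset. Pose 0 is held fixed at 0.0.
    """
    x = [0.0] * num_poses
    for _ in range(sweeps):
        for k in range(1, num_poses):
            numerator = 0.0
            denominator = 0.0
            for i, j, offset, weight in edges:
                if j == k:    # edge arriving at k predicts x[k] = x[i] + offset
                    numerator += weight * (x[i] + offset)
                    denominator += weight
                elif i == k:  # edge leaving k predicts x[k] = x[j] - offset
                    numerator += weight * (x[j] - offset)
                    denominator += weight
            if denominator > 0:
                x[k] = numerator / denominator
    return x


if __name__ == "__main__":
    # Hypothetical flight: two steps out, two steps back, each truly 1.0 m,
    # but odometry over-reads forward motion and under-reads the return.
    odometry = [(0, 1, 1.1, 1.0), (1, 2, 1.1, 1.0), (2, 3, -0.9, 1.0), (3, 4, -0.9, 1.0)]
    loop_closure = [(0, 4, 0.0, 1.0)]  # pose 4 is recognised as the start location
    print("odometry only:    ", optimize_pose_graph(5, odometry))
    print("with loop closure:", optimize_pose_graph(5, odometry + loop_closure))
```

With odometry alone the final pose ends about 0.4 m from the start; adding the single loop-closure edge spreads that discrepancy evenly across the trajectory rather than correcting only the last pose.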

Key Industry Players in Drone SLAM Development

SLAM technology for autonomous drones is currently in a growth phase, with increasing adoption across commercial and research sectors, and the market is expanding as drone applications diversify across industries. In terms of technical maturity, academic institutions lead fundamental research, with National University of Defense Technology, Nanyang Technological University, and Zhejiang University pioneering algorithmic innovations. Commercial players show varying levels of implementation maturity: Aurora Operations and Intel are advancing practical applications, while specialized companies like Arbe Robotics and Terabee focus on sensor integration. Traditional automotive companies (Ford, Continental, Bosch) are leveraging their autonomous vehicle expertise to enter this space, creating a competitive landscape in which collaboration between academia and industry is accelerating technological advancement.

Nanyang Technological University

Technical Solution: Nanyang Technological University (NTU) has developed a pioneering SLAM system for autonomous drones called "Lightweight Visual-Inertial SLAM" (LVI-SLAM). This system uniquely combines event-based cameras with traditional vision sensors and IMUs to achieve high-speed mapping and localization even in challenging lighting conditions[7]. Event cameras detect pixel-level brightness changes rather than capturing full frames, allowing for microsecond-level temporal resolution and exceptional performance in high-dynamic-range environments. NTU's approach implements a tightly-coupled optimization framework that jointly estimates drone trajectory and environmental structure while maintaining computational efficiency. Their system incorporates novel drift correction algorithms that leverage both visual and inertial data to maintain accuracy during extended flights[8]. NTU researchers have also developed specialized mapping techniques that prioritize structural features relevant to navigation while filtering out noise and transient elements. The system includes adaptive parameter tuning that optimizes performance based on flight conditions, environmental characteristics, and available computational resources, making it suitable for various drone platforms from small consumer models to larger industrial applications.

Strengths: Exceptional performance in challenging lighting conditions through event-based vision; highly efficient computational implementation suitable for resource-constrained platforms; robust drift correction for long-duration missions; adaptable to various drone sizes and capabilities.

Weaknesses: Event cameras are still relatively specialized and expensive components; integration complexity with existing drone platforms; may require additional calibration compared to more conventional approaches; limited commercial deployment experience compared to industry solutions.
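The event-camera principle mentioned above (reporting brightness changes instead of full frames) is commonly described by an idealized per-pixel model: an event fires whenever log intensity moves by more than a contrast threshold since the last event. The sketch below implements that generic model for a single pixel; it is not NTU's implementation, and the function name, threshold, and sample values are assumptions.

```python
import math


def generate_events(intensities, timestamps, threshold=0.2):
    """Idealised single-pixel event model.

    Returns a list of (timestamp, polarity) tuples, with polarity +1 for a
    brightness increase and -1 for a decrease.
    """
    if len(intensities) != len(timestamps) or not intensities:
        raise ValueError("need equally sized, non-empty inputs")
    if any(value <= 0 for value in intensities):
        raise ValueError("intensities must be positive")
    events = []
    reference = math.log(intensities[0])  # log intensity at the last event
    for t, value in zip(timestamps[1:], intensities[1:]):
        delta = math.log(value) - reference
        while abs(delta) >= threshold:
            polarity = 1 if delta > 0 else -1
            events.append((t, polarity))
            reference += polarity * threshold
            delta = math.log(value) - reference
    return events


if __name__ == "__main__":
    # Hypothetical pixel readings: steady, brighter, steady, then darker.
    samples = [100.0, 100.0, 165.0, 165.0, 90.0]
    times_us = [0, 1, 2, 3, 4]
    print(generate_events(samples, times_us))
```

A static scene produces no events at all, which is where the data-rate and dynamic-range advantages come from; the trade-off is that downstream SLAM components must work with sparse, asynchronous events rather than conventional frames.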

Zhejiang University

Technical Solution: Zhejiang University has developed an innovative SLAM system for autonomous drones called "FastSLAM-D" (Fast Simultaneous Localization and Mapping for Drones). Their approach focuses on computational efficiency while maintaining high accuracy, making it particularly suitable for small to medium-sized drones with limited processing capabilities. The system employs a novel sparse point cloud representation that significantly reduces memory requirements while preserving critical structural information[9]. Zhejiang's implementation utilizes a hierarchical mapping framework that maintains different resolution maps for various navigation tasks - high-detail local maps for obstacle avoidance and compressed global maps for path planning. Their research has produced specialized algorithms for feature extraction that work effectively in both indoor and outdoor environments, with robust performance across varying lighting conditions and textures[10]. The university has also developed techniques for collaborative SLAM, where multiple drones share mapping data to build more comprehensive environmental models faster than single-drone approaches. Their system incorporates machine learning for loop closure detection, significantly improving long-term mapping consistency and reducing position drift during extended autonomous flights.

Strengths: Exceptional computational efficiency suitable for small drones; innovative sparse representation reduces memory requirements while maintaining accuracy; effective in both indoor and outdoor environments; collaborative capabilities enable multi-drone mapping operations.

Weaknesses: May sacrifice some mapping detail compared to more resource-intensive approaches; limited commercial deployment outside research contexts; potential challenges in extremely feature-poor environments; collaborative features require specialized communication protocols between drones.
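One generic way to obtain a sparser point cloud, and to keep maps at more than one resolution, is voxel-grid downsampling. The sketch below is a stand-in technique chosen for illustration and is not the representation described in the cited work; the function name and voxel sizes are assumptions.

```python
import math
from collections import defaultdict


def voxel_downsample(points, voxel_size):
    """Replace all points falling in the same cubic voxel with their centroid."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    buckets = defaultdict(list)
    for x, y, z in points:
        key = (math.floor(x / voxel_size),
               math.floor(y / voxel_size),
               math.floor(z / voxel_size))
        buckets[key].append((x, y, z))
    # One centroid per occupied voxel.
    return [
        tuple(sum(axis) / len(members) for axis in zip(*members))
        for members in buckets.values()
    ]


if __name__ == "__main__":
    # Hypothetical cloud: a dense cluster plus one distant point.
    cloud = [(0.10, 0.10, 0.10), (0.20, 0.20, 0.20), (0.30, 0.10, 0.20), (1.50, 0.00, 0.00)]
    local_map = voxel_downsample(cloud, 0.15)   # finer grid for obstacle avoidance
    global_map = voxel_downsample(cloud, 1.0)   # coarser grid for path planning
    print(len(cloud), len(local_map), len(global_map))
```

Running the same function at two voxel sizes mirrors the idea of a detailed local map alongside a compressed global one: memory use shrinks as the voxel grows, at the cost of geometric detail.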

Core SLAM Algorithms and Sensor Fusion Techniques

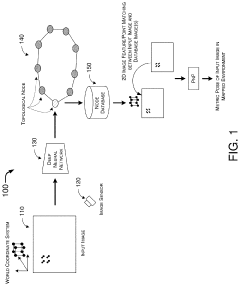

Hybrid Metric-Topological Camera-Based Localization

Patent (Active): US20210082145A1

Innovation

- A hybrid SLAM approach using stereo vision or depth sensors for initial mapping and a single camera or image sensor for localization, employing deep learning-based image classification and geometric PnP techniques to create a hybrid metric-topological map that is continuously updated, reducing memory requirements and costs by using a single camera instead of LIDAR.
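The geometric PnP (Perspective-n-Point) step mentioned above estimates a camera pose from known 3D map points and their 2D image observations; iterative solvers do this by searching for the pose with the smallest reprojection error. The sketch below shows only that error computation under a pinhole model, as a generic illustration rather than the patented method. The function names, intrinsics, and points are assumptions.

```python
import math


def project(point_world, rotation, translation, fx, fy, cx, cy):
    """Project a 3D world point to pixel coordinates with a pinhole camera model."""
    # Camera-frame coordinates: p_cam = R * p_world + t
    p_cam = [
        sum(rotation[r][c] * point_world[c] for c in range(3)) + translation[r]
        for r in range(3)
    ]
    if p_cam[2] <= 0:
        raise ValueError("point is behind the camera")
    return (fx * p_cam[0] / p_cam[2] + cx, fy * p_cam[1] / p_cam[2] + cy)


def mean_reprojection_error(points_world, pixels, rotation, translation, fx, fy, cx, cy):
    """Mean pixel distance between observed pixels and map points projected with a candidate pose."""
    if not points_world or len(points_world) != len(pixels):
        raise ValueError("need matching, non-empty point and pixel lists")
    total = 0.0
    for point, (u_obs, v_obs) in zip(points_world, pixels):
        u, v = project(point, rotation, translation, fx, fy, cx, cy)
        total += math.hypot(u - u_obs, v - v_obs)
    return total / len(points_world)


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    intrinsics = dict(fx=500.0, fy=500.0, cx=320.0, cy=240.0)  # hypothetical camera
    landmarks = [(0.0, 0.0, 5.0), (1.0, 0.0, 5.0), (0.0, 1.0, 4.0)]
    observed = [project(p, identity, [0.0, 0.0, 0.0], **intrinsics) for p in landmarks]
    for shift in (0.0, 0.1):
        error = mean_reprojection_error(landmarks, observed, identity, [shift, 0.0, 0.0], **intrinsics)
        print(f"candidate x-offset {shift:.1f} m: mean error {error:.2f} px")
```

A correct pose hypothesis drives this error toward zero, while even a 10 cm offset yields an error of several pixels, which is the signal a PnP solver exploits.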

Simultaneous Localization and Mapping

Patent (Inactive): US20220214696A1

Innovation

- A method utilizing statically mounted Time of Flight (ToF) sensors to detect objects and execute a wall-following algorithm, allowing the robot to circumnavigate objects, aggregate measurements, and perform scan matching to determine the robot's position relative to the object, without relying on traditional laser scanners.
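A wall-following behaviour driven by fixed range sensors can be sketched as a simple proportional controller. This is a generic illustration and not the patented algorithm; it assumes one side-facing and one front-facing range reading, a wall on the robot's right, and positive turn rates meaning a left turn. All names, gains, and distances are hypothetical.

```python
def wall_follow_command(side_distance_m, front_distance_m,
                        target_distance_m=0.5, gain=1.5,
                        cruise_speed=0.3, front_stop_m=0.4, max_turn=1.0):
    """Return (forward_speed, turn_rate) for following a wall on the right-hand side."""
    if front_distance_m < front_stop_m:
        # Obstacle ahead: stop advancing and rotate left, away from the wall.
        return 0.0, max_turn
    # Positive error means the robot has drifted too far from the wall.
    error = side_distance_m - target_distance_m
    # Steer right (negative) when too far, left (positive) when too close.
    turn_rate = max(-max_turn, min(max_turn, -gain * error))
    return cruise_speed, turn_rate


if __name__ == "__main__":
    for side, front in ((0.5, 2.0), (0.8, 2.0), (0.3, 2.0), (0.5, 0.2)):
        print(side, front, wall_follow_command(side, front))
```

Range readings gathered while circling an object in this way are what a scan-matching step would then align against earlier measurements to estimate position; that step is not shown here.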

Regulatory Framework for Autonomous Drone Operations

The regulatory landscape for autonomous drone operations incorporating SLAM technology is evolving rapidly across global jurisdictions. In the United States, the Federal Aviation Administration (FAA) has established Part 107 regulations for commercial drone operations, with specific waivers required for beyond visual line of sight (BVLOS) operations that typically leverage SLAM capabilities. The European Union Aviation Safety Agency (EASA) has implemented a risk-based regulatory framework categorizing drone operations into "open," "specific," and "certified" categories, with SLAM-enabled autonomous flights generally falling under the latter two categories requiring operational risk assessments.

Regulatory challenges specifically related to SLAM technology include certification standards for sensor reliability, computational accuracy requirements, and fail-safe mechanisms. Current regulations in most jurisdictions mandate redundant positioning systems beyond SLAM, typically requiring GNSS as backup. Privacy regulations intersect with SLAM implementation, as environmental mapping involves data collection that may include private property or individuals, triggering GDPR compliance requirements in Europe and similar privacy frameworks elsewhere.

Airspace integration presents another regulatory dimension, with UTM (Unmanned Traffic Management) systems being developed globally to accommodate autonomous drone operations. The International Civil Aviation Organization (ICAO) is working to standardize these frameworks across member states, though implementation timelines vary significantly by region.

Safety certification for SLAM algorithms represents a particular regulatory challenge, as traditional aviation certification methodologies are poorly suited to machine learning components often incorporated in modern SLAM implementations. Several jurisdictions are developing "sandboxing" approaches, allowing limited deployment of novel autonomous technologies in controlled environments while regulatory frameworks mature.

Industry stakeholders including DJI, Intel, and Amazon are actively participating in regulatory working groups to shape technical standards for autonomous navigation. The IEEE and ISO have established technical committees developing standards specifically addressing SLAM implementation requirements, with ISO/IEC TR 23902 providing initial guidance on verification methodologies for environmental sensing systems.

Looking forward, regulatory harmonization efforts are underway through initiatives like the Joint Authorities for Rulemaking on Unmanned Systems (JARUS), which aims to create technical, safety, and operational requirements for autonomous drone certification that can be adopted globally, potentially streamlining the path to market for SLAM-enabled drone systems across international markets.

Regulatory challenges specifically related to SLAM technology include certification standards for sensor reliability, computational accuracy requirements, and fail-safe mechanisms. Current regulations in most jurisdictions mandate redundant positioning systems beyond SLAM, typically requiring GNSS as backup. Privacy regulations intersect with SLAM implementation, as environmental mapping involves data collection that may include private property or individuals, triggering GDPR compliance requirements in Europe and similar privacy frameworks elsewhere.

Airspace integration presents another regulatory dimension, with UTM (Unmanned Traffic Management) systems being developed globally to accommodate autonomous drone operations. The International Civil Aviation Organization (ICAO) is working to standardize these frameworks across member states, though implementation timelines vary significantly by region.

Real-time Processing Constraints in Aerial SLAM

Real-time processing represents one of the most significant challenges in aerial SLAM implementation for autonomous drones. Unlike ground-based robots, drones operate with severe computational constraints due to payload limitations, power consumption concerns, and the need for rapid decision-making during flight. These constraints become particularly acute when processing the high-dimensional data streams required for effective SLAM operations.

The computational burden of aerial SLAM stems from multiple simultaneous processes: feature extraction from visual or LiDAR data, point cloud registration, loop closure detection, and map optimization. For autonomous drones, these calculations must be completed within strict time budgets, typically under 100 ms per frame, to maintain stable flight control. Exceeding these budgets can lead to navigation errors, drift accumulation, or even catastrophic failures in dynamic environments.
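The per-frame budget can be made concrete with a small scheduling sketch. It is illustrative only: the stage costs are hypothetical placeholders rather than measurements, and treating loop closure and map optimization as deferrable is an assumed policy, not something a particular SLAM system is known to do.

```python
from dataclasses import dataclass

FRAME_BUDGET_MS = 100.0  # per-frame budget quoted in the text


@dataclass
class Stage:
    name: str
    cost_ms: float   # hypothetical cost estimate for this stage
    required: bool   # required stages always run; optional ones may be deferred


def plan_frame(stages, budget_ms=FRAME_BUDGET_MS):
    """Decide which stages run this frame without exceeding the budget.

    Returns (stages_to_run, deferred_stages, planned_total_ms).
    """
    run, deferred = [], []
    # Required stages are always charged against the budget first.
    total = sum(s.cost_ms for s in stages if s.required)
    for s in stages:
        if s.required:
            run.append(s.name)
        elif total + s.cost_ms <= budget_ms:
            run.append(s.name)
            total += s.cost_ms
        else:
            deferred.append(s.name)  # postponed to a later frame
    return run, deferred, total


# Assumed, illustrative costs for the four processes named above.
STAGES = [
    Stage("feature_extraction", 35.0, required=True),
    Stage("point_cloud_registration", 40.0, required=True),
    Stage("loop_closure_detection", 20.0, required=False),
    Stage("map_optimization", 30.0, required=False),
]

run, deferred, total_ms = plan_frame(STAGES)
print(run, deferred, total_ms)
```

With these assumed costs, the planner keeps the frame at 95 ms by postponing map optimization. A real system would measure stage times at runtime instead of relying on fixed estimates.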

Power consumption presents another critical constraint. Processing-intensive SLAM algorithms can rapidly drain battery resources, directly impacting flight duration. This creates a fundamental tension between SLAM accuracy and operational endurance that must be carefully balanced in real-world applications.
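A rough, idealized calculation shows how compute power eats into endurance. The battery capacity and power figures below are hypothetical, and the model ignores battery discharge curves, payload weight, and wind; it only divides stored energy by total power draw.

```python
def flight_time_min(battery_wh, propulsion_w, compute_w):
    """Idealized endurance in minutes: stored energy / total power draw."""
    return 60.0 * battery_wh / (propulsion_w + compute_w)


# Hypothetical figures chosen only for illustration.
BATTERY_WH = 80.0
PROPULSION_W = 150.0

light = flight_time_min(BATTERY_WH, PROPULSION_W, compute_w=10.0)
heavy = flight_time_min(BATTERY_WH, PROPULSION_W, compute_w=30.0)
print(f"light SLAM load: {light:.1f} min, heavy SLAM load: {heavy:.1f} min")
```

Under these assumptions, raising the compute draw from 10 W to 30 W shortens the idealized flight time from 30 minutes to roughly 26.7 minutes.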

Hardware limitations further complicate the situation. While embedded computing platforms like NVIDIA Jetson or Intel NUC offer significant processing capabilities, they still deliver only a fraction of the computational power available to larger ground-based systems. This necessitates algorithm optimization specifically for resource-constrained environments.

Recent innovations have addressed these constraints through several approaches. Edge computing architectures distribute processing loads between onboard and offboard systems, though this introduces communication latency concerns. Algorithm optimizations including sparse feature selection, keyframe-based processing, and incremental map updates have reduced computational requirements without significantly compromising accuracy.
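Keyframe-based processing can be sketched as a simple motion-threshold rule: a frame becomes a keyframe only when the drone has moved or turned enough since the last one. The pose format `(x, y, z, yaw)`, the function name, and both thresholds are assumptions made for this example; production systems often use additional criteria such as feature overlap with the previous keyframe.

```python
import math


def select_keyframes(poses, min_translation_m=0.5, min_yaw_rad=math.radians(15)):
    """Return indices of poses promoted to keyframes.

    poses: list of (x, y, z, yaw) tuples; yaw in radians.
    A pose becomes a keyframe when it differs from the last keyframe
    by at least min_translation_m or at least min_yaw_rad.
    """
    if not poses:
        return []
    keyframes = [0]  # the first frame always seeds the map
    for i in range(1, len(poses)):
        kx, ky, kz, kyaw = poses[keyframes[-1]]
        x, y, z, yaw = poses[i]
        dist = math.dist((x, y, z), (kx, ky, kz))
        # Wrap the yaw difference into [-pi, pi] before comparing.
        dyaw = abs(math.atan2(math.sin(yaw - kyaw), math.cos(yaw - kyaw)))
        if dist >= min_translation_m or dyaw >= min_yaw_rad:
            keyframes.append(i)
    return keyframes


# Hypothetical trajectory: slow forward motion, then a turn.
poses = [
    (0.0, 0.0, 1.0, 0.0),
    (0.1, 0.0, 1.0, 0.0),
    (0.3, 0.0, 1.0, 0.0),
    (0.6, 0.0, 1.0, 0.0),
    (0.7, 0.0, 1.0, math.radians(20)),
    (0.8, 0.0, 1.0, math.radians(22)),
]
print(select_keyframes(poses))
```

On this trajectory only three of the six frames are promoted, so the expensive mapping and optimization steps would run on half the input while tracking continues on every frame.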

Hardware acceleration through dedicated vision processing units (VPUs) and field-programmable gate arrays (FPGAs) has emerged as another promising direction. These specialized computing architectures can perform specific SLAM operations with greater efficiency than general-purpose processors, though at the cost of implementation complexity.

The real-time processing challenge ultimately drives a fundamental research question: how to balance accuracy, completeness, and computational efficiency in aerial SLAM systems. This balance varies significantly based on application requirements, from infrastructure inspection needing high precision to search-and-rescue operations prioritizing rapid exploration and obstacle avoidance.
