Simultaneous Localization And Mapping For Disaster Response Robotics

SEP 5, 2025 · 9 MIN READ

SLAM Technology Background and Objectives

Simultaneous Localization and Mapping (SLAM) technology has evolved significantly since its conceptual introduction in the 1980s, with substantial advancements occurring in the past two decades. The fundamental principle of SLAM involves enabling autonomous systems to construct or update maps of unknown environments while simultaneously tracking their position within these environments. This dual capability represents a critical technological foundation for autonomous robotics, particularly in disaster response scenarios where pre-existing maps may be unavailable or rendered obsolete by structural damage.

The evolution of SLAM technology has been characterized by several distinct phases, beginning with filter-based approaches such as Extended Kalman Filters (EKF) and particle filters. These early implementations faced significant computational constraints and struggled with large-scale environments. The subsequent development of graph-based optimization methods marked a pivotal advancement, enabling more accurate and computationally efficient mapping solutions. Recent years have witnessed the integration of deep learning techniques, which have substantially enhanced feature extraction and environmental understanding capabilities.
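The filter-based family mentioned above can be illustrated with a scalar Kalman filter, the linear special case that the EKF generalizes by linearizing nonlinear motion and measurement models. The following is a minimal sketch only: the function names, the one-dimensional state, and all numeric values are assumptions chosen for illustration, not taken from any particular SLAM system.

```python
def kf_predict(mean, var, motion, motion_var):
    """Prediction step: shift the estimate by the odometry; uncertainty grows."""
    return mean + motion, var + motion_var


def kf_update(mean, var, measurement, meas_var):
    """Correction step: blend prediction and measurement by relative certainty."""
    gain = var / (var + meas_var)  # Kalman gain, between 0 and 1
    new_mean = mean + gain * (measurement - mean)
    new_var = (1.0 - gain) * var   # uncertainty shrinks after a measurement
    return new_mean, new_var


# Hypothetical numbers: position in metres, variances in square metres.
mean, var = 0.0, 1.0
mean, var = kf_predict(mean, var, motion=1.0, motion_var=0.5)
mean, var = kf_update(mean, var, measurement=1.2, meas_var=0.5)
print(f"position estimate: {mean:.3f} m, variance: {var:.3f} m^2")
```

A full EKF-SLAM state also carries every landmark position, so the covariance matrix grows quadratically with the number of landmarks. That growth is one plausible reading of the scaling difficulty described above.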

In disaster response contexts, SLAM technology faces unique challenges that differentiate it from applications in controlled environments. Post-disaster scenarios typically present dynamic, unstable, and visually degraded environments characterized by smoke, dust, debris, and structural instability. These conditions severely compromise traditional sensing modalities and demand robust, multi-sensor fusion approaches that can maintain operational effectiveness despite sensor degradation or failure.

The primary technological objectives for disaster response SLAM systems include achieving real-time performance under computational constraints, maintaining robustness in visually challenging and dynamically changing environments, and developing systems capable of operating with minimal prior information. Additionally, there is a critical need for SLAM solutions that can function effectively in GPS-denied environments such as collapsed buildings, underground structures, and areas with electromagnetic interference.

Future technological trajectories point toward the development of collaborative SLAM systems that enable multiple robots to construct shared environmental representations, enhancing both mapping efficiency and accuracy. There is also significant research momentum toward semantic SLAM approaches that incorporate object recognition and scene understanding, providing contextually enriched maps that can identify critical elements such as victims, hazardous materials, and structural vulnerabilities. These advanced capabilities aim to transform SLAM from a purely navigational tool into an integrated system for situational awareness in disaster response operations.

The convergence of SLAM with other emerging technologies, including edge computing, 5G connectivity, and augmented reality interfaces, presents promising opportunities for enhancing the operational effectiveness of disaster response robotics. These technological synergies may ultimately enable more rapid, safe, and effective disaster response operations, potentially saving lives and reducing the risks faced by human responders.

Market Analysis for Disaster Response Robotics

The global market for disaster response robotics is experiencing significant growth, driven by increasing frequency and severity of natural and man-made disasters worldwide. The market was valued at approximately $1.2 billion in 2022 and is projected to reach $3.7 billion by 2028, representing a compound annual growth rate (CAGR) of 20.4%. This growth trajectory is supported by rising government investments in disaster management technologies across developed and developing nations.
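As a quick arithmetic check on the figures above, the compound annual growth rate implied by the two endpoint values can be computed directly. This sketch assumes six compounding periods (2022 to 2028); the function name is illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Endpoint values from the text, in billions of US dollars.
implied = cagr(1.2, 3.7, 2028 - 2022)

# Forward check: grow the 2022 value at the stated 20.4% for six years.
projected_2028 = 1.2 * (1 + 0.204) ** 6

print(f"implied CAGR: {implied:.1%}")
print(f"2028 value at 20.4%: ${projected_2028:.2f}B")
```

The implied rate comes out at roughly 20.6%, and compounding at the stated 20.4% lands slightly below $3.7 billion. The small gap is consistent with the endpoint values having been rounded, although the source does not say so.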

North America currently dominates the market with about 38% share, followed by Europe (27%) and Asia-Pacific (24%). The Asia-Pacific region is expected to witness the fastest growth due to increasing disaster incidents and growing technology adoption in countries like Japan, China, and India. Japan alone has allocated over $300 million for disaster response robotics research and deployment in its latest five-year plan.

The market segmentation reveals distinct categories based on robot functionality. SLAM-enabled ground robots constitute approximately 45% of the market, followed by aerial drones (30%), underwater robots (15%), and hybrid systems (10%). Within these segments, autonomous systems with advanced SLAM capabilities command premium pricing, typically 30-40% higher than their manually operated counterparts.

Key customer segments include government disaster management agencies (52%), military and defense organizations (23%), emergency response teams (15%), and private sector entities including insurance companies and critical infrastructure operators (10%). The purchasing cycle typically involves lengthy evaluation periods, with average procurement timelines ranging from 8-14 months.

Market drivers include increasing disaster frequencies due to climate change, growing recognition of robotics' effectiveness in dangerous environments, and technological advancements in SLAM algorithms that enhance robot autonomy and effectiveness. The COVID-19 pandemic has accelerated market growth by highlighting the value of unmanned systems in emergency situations.

Barriers to market expansion include high initial investment costs, technical limitations in extreme environments, regulatory hurdles regarding autonomous system deployment, and interoperability challenges with existing emergency response equipment. Additionally, training requirements for effective operation represent a significant hidden cost that many organizations underestimate during procurement.

The competitive landscape features both established defense contractors and agile robotics startups. Recent market consolidation has occurred through strategic acquisitions, with major defense companies acquiring specialized robotics firms to enhance their disaster response portfolios.

SLAM Technical Challenges in Disaster Environments

SLAM systems in disaster environments face unique and severe challenges that significantly differ from those in controlled settings. The unpredictable and dynamic nature of disaster sites creates fundamental difficulties for traditional SLAM algorithms, which typically assume relatively stable environments with distinguishable features.

The primary challenge stems from environmental instability. Disaster zones often contain unstable structures, shifting debris, and changing topography due to aftershocks, continuing collapses, or rescue operations. These changes invalidate previously mapped areas and create discrepancies between stored maps and current reality, causing localization failures and mapping inconsistencies.
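One common way to keep a map responsive to such changes is a log-odds occupancy grid with clamped cell values, so that a cell that was confidently occupied can still be revised once debris shifts. The sketch below illustrates that idea for a single cell; the increments and clamping bounds are hypothetical values, not parameters of any specific system discussed here.

```python
import math

L_OCC = 0.85               # log-odds added when a beam ends in the cell (hypothetical)
L_FREE = -0.4              # log-odds added when a beam passes through (hypothetical)
L_MIN, L_MAX = -2.0, 3.5   # clamping bounds (hypothetical)


def update_cell(log_odds, hit):
    """Apply one observation and clamp so the cell never becomes immovable."""
    log_odds += L_OCC if hit else L_FREE
    return max(L_MIN, min(L_MAX, log_odds))


def probability(log_odds):
    """Convert log-odds back to an occupancy probability."""
    return 1.0 - 1.0 / (1.0 + math.exp(log_odds))


cell = 0.0                          # unknown: probability 0.5
for _ in range(20):                 # rubble is observed in the cell repeatedly
    cell = update_cell(cell, hit=True)
p_before = probability(cell)

for _ in range(15):                 # the rubble shifts; beams now pass through
    cell = update_cell(cell, hit=False)
p_after = probability(cell)

print(f"occupied before: {p_before:.2f}, after the change: {p_after:.2f}")
```

Without the clamp, twenty hits would push the log-odds to 17, and more than forty free-space observations would be needed before the cell even returned to the unknown state. The bounds trade some noise immunity for faster adaptation.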

Visual degradation presents another significant obstacle. Disaster environments frequently suffer from poor lighting conditions, dust clouds, smoke, and other visual obstructions that severely impact camera-based SLAM systems. These conditions reduce feature visibility and tracking reliability, leading to increased drift and potential system failure.

Sensor reliability is compromised in extreme conditions. High temperatures, water exposure, and physical impacts can damage or degrade sensor performance. Multi-sensor fusion approaches become critical but introduce additional computational complexity and calibration challenges when sensors operate under stress or partial failure.
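A minimal sketch of fusion under partial failure, assuming each sensor reports a range estimate together with a variance: inverse-variance weighting combines the healthy sensors and skips any that have dropped out. The sensor names and numbers are hypothetical, and a real system would also need fault detection to decide when a sensor counts as failed.

```python
def fuse(readings):
    """Fuse (value, variance) pairs; None marks a sensor that has failed."""
    valid = [r for r in readings if r is not None]
    if not valid:
        raise ValueError("no healthy sensors to fuse")
    weights = [1.0 / variance for _, variance in valid]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, valid)) / total
    return value, 1.0 / total  # fused variance is never larger than the best input


# Hypothetical range-to-wall estimates in metres: (value, variance).
lidar, camera, radar = (4.00, 0.01), (4.30, 0.09), (4.10, 0.04)

value_all, var_all = fuse([lidar, camera, radar])
value_degraded, var_degraded = fuse([lidar, None, radar])  # camera blinded by smoke

print(f"all sensors:  {value_all:.3f} m (variance {var_all:.4f})")
print(f"camera lost:  {value_degraded:.3f} m (variance {var_degraded:.4f})")
```

Losing a sensor does not stop the estimate; it only widens the uncertainty, which is the graceful-degradation behavior the paragraph above calls for.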

The geometric complexity of disaster sites further complicates SLAM operations. Irregular surfaces, complex 3D structures from collapsed buildings, and non-Manhattan geometry environments make feature extraction and loop closure detection particularly difficult. Standard geometric assumptions that many SLAM algorithms rely on become invalid in these chaotic environments.

Communication constraints between robots and base stations limit real-time data transmission capabilities. This necessitates more autonomous operation with limited computational resources onboard the robot, creating a difficult balance between algorithmic sophistication and practical deployability.

Motion estimation becomes exceptionally challenging due to uneven terrain, causing unpredictable robot movements that are difficult to model accurately. Wheel slippage, unexpected drops, and irregular motion patterns introduce significant odometry errors that propagate through the SLAM system.
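The way a small slip compounds can be seen in a dead-reckoning sketch for a differential-drive robot. The wheel base, step length, and 2% slip on the left wheel are hypothetical values. The encoders are assumed to report the commanded wheel travel, while the ground-truth pose uses the distance actually covered.

```python
import math

WHEEL_BASE = 0.4  # metres between the wheels (hypothetical)


def integrate(pose, d_left, d_right):
    """Advance an (x, y, heading) pose by one pair of wheel displacements."""
    x, y, theta = pose
    d_center = 0.5 * (d_left + d_right)
    d_theta = (d_right - d_left) / WHEEL_BASE
    x += d_center * math.cos(theta + 0.5 * d_theta)
    y += d_center * math.sin(theta + 0.5 * d_theta)
    return x, y, theta + d_theta


def drive(steps, slip_left):
    """Return (true_pose, estimated_pose) after driving 'straight'."""
    true_pose = est_pose = (0.0, 0.0, 0.0)
    step = 0.05  # metres of wheel travel reported by each encoder per step
    for _ in range(steps):
        est_pose = integrate(est_pose, step, step)
        # The left wheel slips, so it covers less ground than its encoder reports.
        true_pose = integrate(true_pose, step * (1.0 - slip_left), step)
    return true_pose, est_pose


true_pose, est_pose = drive(200, slip_left=0.02)
error = math.hypot(true_pose[0] - est_pose[0], true_pose[1] - est_pose[1])
print(f"position error after 10 m of reported travel: {error:.2f} m")
```

In this toy setup a 2% slip on one wheel yields a position error of more than two metres over ten metres of reported travel, because the unobserved heading change bends the whole trajectory. This is why odometry is typically treated as a short-term prior to be corrected by exteroceptive sensing rather than as a standalone position source.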

Real-time performance requirements add another layer of complexity. Disaster response operations demand immediate mapping and localization results to guide rescue efforts effectively, yet the computational load increases dramatically in complex environments, creating tension between accuracy and speed.

These technical challenges necessitate specialized SLAM approaches for disaster robotics that prioritize robustness over precision, incorporate uncertainty handling mechanisms, and adapt to rapidly changing conditions while maintaining operational reliability under extreme stress.

Current SLAM Solutions for Extreme Environments

01 Visual SLAM techniques

Visual SLAM systems use cameras to capture visual data for simultaneous localization and mapping. These systems process image features to create maps of the environment while determining the position of the device. Visual SLAM techniques can include monocular, stereo, or RGB-D camera implementations, with algorithms that detect and track distinctive visual features across frames to estimate motion and build environmental maps.

- Visual SLAM techniques for navigation and mapping: Visual SLAM techniques use camera data to simultaneously build maps of unknown environments while tracking the position of the device within that environment. These systems process visual features from images to create 3D representations of surroundings and determine device location in real-time. Advanced implementations may incorporate deep learning for feature extraction and matching, enabling more robust performance in challenging lighting conditions or dynamic environments.

- Sensor fusion approaches for enhanced SLAM accuracy: Sensor fusion combines data from multiple sensors such as cameras, LiDAR, IMU, and GPS to improve SLAM performance. By integrating complementary sensor information, these systems can overcome limitations of individual sensors, providing more accurate localization and mapping in diverse environments. This approach enables robust operation in challenging conditions like low light, featureless areas, or rapid movement scenarios where single-sensor solutions might fail.

- SLAM for augmented and virtual reality applications: SLAM technology enables immersive AR/VR experiences by accurately tracking device position and mapping physical environments. This allows virtual objects to be placed and anchored convincingly in the real world, maintaining proper perspective and occlusion relationships. These implementations often prioritize low latency and computational efficiency to provide seamless user experiences on mobile or wearable devices with limited processing capabilities.

- Machine learning approaches to SLAM optimization: Machine learning techniques are increasingly applied to improve SLAM systems, particularly for loop closure detection, feature extraction, and trajectory optimization. Neural networks can be trained to recognize previously visited locations, predict motion patterns, or identify stable environmental features. These approaches can enhance system robustness in challenging environments and enable more efficient processing of sensor data, reducing computational requirements while maintaining accuracy.

- SLAM for autonomous vehicle navigation: SLAM systems designed specifically for autonomous vehicles incorporate specialized algorithms to handle high-speed movement, large-scale environments, and dynamic obstacles. These implementations often prioritize real-time performance, safety-critical reliability, and integration with path planning systems. They may employ dedicated hardware accelerators to process sensor data from multiple high-resolution sensors simultaneously, enabling precise localization even in complex urban environments.

02 SLAM for autonomous vehicles and robotics

SLAM technology is crucial for autonomous vehicles and robots to navigate unknown environments. These systems combine sensor data from cameras, LiDAR, and other sensors to create real-time maps while determining the vehicle's position within that map. The technology enables path planning, obstacle avoidance, and autonomous navigation in dynamic environments without relying on pre-existing maps or external positioning systems.

03 Machine learning and AI-enhanced SLAM

Modern SLAM systems increasingly incorporate machine learning and artificial intelligence to improve mapping accuracy and efficiency. Neural networks can be trained to recognize objects, predict motion, and enhance feature detection in challenging environments. These AI-enhanced approaches can better handle dynamic objects, changing lighting conditions, and complex scenes that traditional geometric SLAM methods struggle with.

04 Sensor fusion for robust SLAM

Sensor fusion techniques combine data from multiple sensors to create more robust SLAM systems. By integrating information from cameras, LiDAR, IMUs, GPS, and other sensors, these systems can overcome the limitations of individual sensors. Fusion approaches help maintain accurate localization and mapping in challenging conditions such as low light, featureless environments, or when certain sensors are temporarily unavailable or unreliable.

05 AR/VR applications of SLAM

SLAM technology is fundamental to augmented and virtual reality applications, enabling digital content to be accurately placed in the physical world. These systems track the user's position and orientation while mapping the surrounding environment in real-time. AR/VR SLAM implementations often focus on computational efficiency to run on mobile devices, with techniques optimized for indoor environments and close-range interactions with digital objects overlaid on the physical world.

Key Industry Players in Disaster Robotics

The SLAM technology for disaster response robotics is currently in a growth phase, with an expanding market driven by increasing natural disasters and emergency response needs. The market is projected to reach significant scale as governments and organizations invest in advanced robotic solutions for hazardous environments. Technologically, the field shows varying maturity levels across players. Industry leaders like Samsung Electronics, Intel, and Mitsubishi Electric demonstrate advanced capabilities through integrated hardware-software solutions, while specialized robotics companies such as Aurora Operations and iRobot offer purpose-built disaster response platforms. Academic institutions including Nanyang Technological University and Zhejiang University contribute cutting-edge research, particularly in challenging environments. The ecosystem is further enriched by emerging players like Terabee developing specialized sensors and Arbe Robotics advancing radar technologies for improved mapping in disaster scenarios.

Aurora Operations, Inc.

Technical Solution: Aurora has adapted their autonomous vehicle SLAM technology to create specialized solutions for disaster response robotics. Their approach leverages high-definition mapping techniques originally developed for self-driving cars to create precise environmental models in disaster scenarios. Aurora's disaster response SLAM system employs their proprietary "Aurora Driver" technology modified for off-road and unstable environments. The system utilizes a multi-modal sensor suite including FirstLight FMCW LiDAR, imaging radar, and high-resolution cameras to create detailed 3D maps even in challenging visibility conditions. Their implementation includes specialized algorithms for detecting and tracking moving objects in disaster environments, allowing robots to navigate safely around rescue workers and victims. Aurora has developed a unique "predictive mapping" capability that can estimate the structure of partially collapsed buildings based on visible portions, aiding in search and rescue planning. The system features robust localization that maintains accuracy even when GPS signals are unavailable, using visual landmarks and inertial measurement to maintain position awareness in complex indoor environments.

Strengths: Exceptional mapping precision and detail; superior performance in dynamic environments with moving objects; excellent handling of large-scale mapping for complex disaster sites. Weaknesses: Higher computational requirements than some alternatives; more complex integration process; relatively new adaptation to disaster-specific scenarios compared to some competitors.

Robert Bosch GmbH

Technical Solution: Bosch has developed a comprehensive SLAM solution for disaster response robotics that builds on their extensive sensor technology expertise. Their system integrates multiple sensing modalities including radar, LiDAR, and visual sensors to create reliable mapping in challenging disaster environments. Bosch's disaster response SLAM technology features their proprietary "RobustSLAM" algorithm that maintains localization accuracy even when portions of the environment change during mapping operations - a common occurrence in disaster scenarios with unstable structures. Their implementation includes specialized hardware accelerators that enable real-time 3D mapping with semantic labeling to identify hazards, victims, and safe paths automatically. The system incorporates Bosch's industrial-grade sensors that are designed to operate in extreme temperatures, dust, smoke, and moisture conditions typical in disaster zones. Additionally, Bosch has developed a hierarchical mapping approach that creates both detailed local maps for immediate navigation and broader strategic maps for coordinating multiple response units.

Strengths: Exceptional sensor quality and reliability; robust performance in extreme environmental conditions; excellent integration with existing emergency response systems. Weaknesses: Higher initial cost compared to some alternatives; complex deployment requiring technical expertise; somewhat heavier hardware components affecting mobility in certain scenarios.

Core SLAM Algorithms and Sensor Fusion Techniques

Simultaneous localization and mapping for a mobile robot

Patent (Active): US9400501B2

Innovation

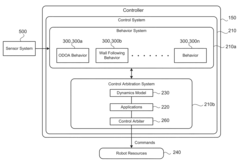

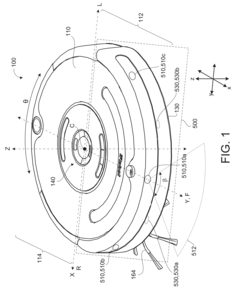

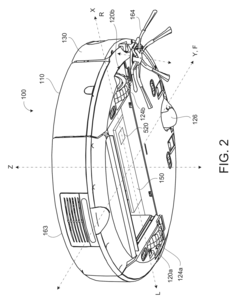

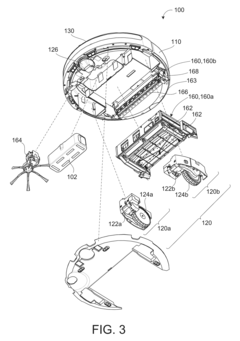

- The method involves initializing a particle model on a controller, synchronizing sensor data with robot pose changes, accumulating data, and updating particles based on localization quality, using odometry and inertial measurements, and applying a range sensor model to correct occupancy probabilities, while resampling particles to maintain localization accuracy.
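The predict, weight, and resample cycle named in this summary can be sketched with a generic particle filter. This is not the patented method: it is a deliberately simplified one-dimensional illustration in which a robot moves along a corridor toward a wall at a known position. It omits the occupancy map, the inertial input, and the localization-quality logic entirely. All names and noise values are assumptions.

```python
import math
import random

WALL = 10.0  # known wall position in metres (hypothetical 1D corridor)


def predict(particles, odom, noise_std, rng):
    """Move every particle by the odometry reading plus sampled motion noise."""
    return [p + odom + rng.gauss(0.0, noise_std) for p in particles]


def weight(particles, measured_range, sensor_std):
    """Gaussian range-sensor model: likelihood of the measurement per particle."""
    w = [math.exp(-0.5 * ((WALL - p - measured_range) / sensor_std) ** 2)
         for p in particles]
    total = sum(w)
    if total == 0.0:  # every likelihood underflowed; fall back to uniform weights
        return [1.0 / len(particles)] * len(particles)
    return [wi / total for wi in w]


def resample(particles, weights, rng):
    """Systematic (low-variance) resampling."""
    n = len(particles)
    step = 1.0 / n
    u = rng.uniform(0.0, step)
    out, cumulative, i = [], weights[0], 0
    for _ in range(n):
        while u > cumulative and i < n - 1:
            i += 1
            cumulative += weights[i]
        out.append(particles[i])
        u += step
    return out


def localize(steps=20, n=500, seed=0):
    """Return (true position, particle-mean estimate) after a short run."""
    rng = random.Random(seed)
    particles = [rng.uniform(0.0, WALL) for _ in range(n)]  # unknown start
    true_x = 2.0
    for _ in range(steps):
        true_x += 0.2
        particles = predict(particles, 0.2, 0.05, rng)
        z = WALL - true_x + rng.gauss(0.0, 0.1)  # simulated range reading
        particles = resample(particles, weight(particles, z, 0.1), rng)
    return true_x, sum(particles) / n


true_x, estimate = localize()
print(f"true position {true_x:.2f} m, estimated {estimate:.2f} m")
```

In a full particle-filter SLAM system each particle would also carry its own map hypothesis. That is where the occupancy-probability correction mentioned in the summary would apply.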

Simultaneous Localization and Mapping

Patent (Inactive): US20220214696A1

Innovation

- A method utilizing statically mounted Time of Flight (ToF) sensors to detect objects and execute a wall-following algorithm, allowing the robot to circumnavigate objects, aggregate measurements, and perform scan matching to determine the robot's position relative to the object, without relying on traditional laser scanners.
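The scan-matching step can be illustrated with the closed-form 2D rigid alignment of two point sets. This sketch is not the method claimed in the patent. It assumes that point i in the current scan corresponds to point i in the reference scan, whereas practical scan matchers such as ICP must estimate those correspondences iteratively. Function and variable names are hypothetical.

```python
import math


def align_scans(reference, current):
    """Return (theta, tx, ty) such that rotating `current` by theta and
    translating by (tx, ty) best matches `reference` in the least-squares sense."""
    n = len(reference)
    rcx = sum(p[0] for p in reference) / n
    rcy = sum(p[1] for p in reference) / n
    ccx = sum(p[0] for p in current) / n
    ccy = sum(p[1] for p in current) / n
    s_dot = s_cross = 0.0
    for (rx, ry), (cx, cy) in zip(reference, current):
        ax, ay = cx - ccx, cy - ccy      # centred current point
        bx, by = rx - rcx, ry - rcy      # centred reference point
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    theta = math.atan2(s_cross, s_dot)   # optimal rotation angle
    tx = rcx - (ccx * math.cos(theta) - ccy * math.sin(theta))
    ty = rcy - (ccx * math.sin(theta) + ccy * math.cos(theta))
    return theta, tx, ty


# Hypothetical aggregated range points around an object, in the robot frame.
current = [(0.0, 0.0), (1.0, 0.0), (1.0, 2.0), (0.0, 3.0)]

# Build a reference scan by applying a known pose change (0.3 rad, +1 m, -2 m).
c, s = math.cos(0.3), math.sin(0.3)
reference = [(x * c - y * s + 1.0, x * s + y * c - 2.0) for x, y in current]

theta, tx, ty = align_scans(reference, current)
print(f"recovered rotation {theta:.3f} rad, translation ({tx:.3f}, {ty:.3f}) m")
```

The recovered transform is the robot's pose change relative to the object. With sparse, statically mounted range sensors, accumulating measurements while circling the object is one plausible way to collect enough points for the alignment to be well constrained.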

Safety Standards and Certifications for Rescue Robotics

The integration of SLAM technology in disaster response robotics necessitates adherence to rigorous safety standards and certifications to ensure operational reliability in hazardous environments. Currently, the International Organization for Standardization (ISO) has established ISO 13482:2014 for personal care robots, which provides a foundation for safety requirements applicable to rescue robotics. Additionally, the American National Standards Institute (ANSI) and the Robotics Industries Association (RIA) have developed ANSI/RIA R15.06-2012, focusing on industrial robot safety that can be adapted for disaster response applications.

For SLAM-enabled rescue robots specifically, IEC 61508 (Functional Safety of Electrical/Electronic/Programmable Electronic Safety-related Systems) provides critical guidelines for ensuring the reliability of sensor systems and algorithmic decision-making processes. These standards emphasize fail-safe mechanisms that prevent catastrophic failures during localization errors or mapping inaccuracies in disaster zones.

Certification processes for SLAM-based rescue robots typically involve rigorous testing in simulated disaster environments. The National Institute of Standards and Technology (NIST) has developed standardized test methods for response robots, including specific evaluations for navigation and mapping capabilities in degraded visual and communication environments. These tests assess a robot's ability to maintain accurate localization while traversing unstable terrain or navigating through smoke-filled environments.

The European Union's CE marking requirements also apply to rescue robotics, with particular emphasis on electromagnetic compatibility (EMC) standards to ensure SLAM sensors function properly despite electromagnetic interference often present in disaster scenarios. The EU Machinery Directive 2006/42/EC provides additional safety requirements relevant to autonomous navigation systems.

Emerging standards work relevant to SLAM in safety-critical applications includes the activities of ISO/TC 299, the ISO technical committee for robotics, which is working on standards for service robots in extreme environments. Additionally, UL 3100 for Automated Mobile Platforms addresses safety considerations for autonomous navigation systems, including those utilizing SLAM algorithms.

For international deployment of rescue robots, compliance with ITU radio regulations is essential, particularly for robots using wireless communication for real-time SLAM data processing or remote operation. The International Electrotechnical Commission's IEC 60529 standard for Ingress Protection (IP) ratings is also crucial for ensuring SLAM sensors and processing hardware can withstand exposure to dust, water, and debris commonly encountered in disaster environments.
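For readers less familiar with IP ratings, the two digits of an IEC 60529 code encode protection against solids and against water respectively. The decoder below is an illustrative sketch with abbreviated descriptions. It handles only the basic two-character form (for example IP67 or IPX4) and ignores the optional additional and supplementary letters. The function name is hypothetical.

```python
SOLIDS = {
    "0": "no protection",
    "1": "solid objects >= 50 mm",
    "2": "solid objects >= 12.5 mm",
    "3": "solid objects >= 2.5 mm",
    "4": "solid objects >= 1 mm",
    "5": "dust-protected",
    "6": "dust-tight",
    "X": "not rated",
}
LIQUIDS = {
    "0": "no protection",
    "1": "vertically dripping water",
    "2": "dripping water, enclosure tilted up to 15 degrees",
    "3": "spraying water",
    "4": "splashing water",
    "5": "water jets",
    "6": "powerful water jets",
    "7": "temporary immersion",
    "8": "continuous immersion",
    "9": "high-pressure, high-temperature water jets",
    "X": "not rated",
}


def decode_ip(code):
    """Return (solids protection, water protection) for a code such as 'IP67'."""
    code = code.strip().upper()
    if len(code) != 4 or not code.startswith("IP"):
        raise ValueError(f"unsupported IP code: {code!r}")
    solid, liquid = code[2], code[3]
    if solid not in SOLIDS or liquid not in LIQUIDS:
        raise ValueError(f"unknown digit in IP code: {code!r}")
    return SOLIDS[solid], LIQUIDS[liquid]


print(decode_ip("IP67"))
```

A sensor housing rated IP67, for instance, is dust-tight and tolerates temporary immersion, which matters for robots working in wet rubble.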

For SLAM-enabled rescue robots specifically, IEC 61508 (Functional Safety of Electrical/Electronic/Programmable Electronic Safety-related Systems) provides critical guidelines for ensuring the reliability of sensor systems and algorithmic decision-making processes. These standards emphasize fail-safe mechanisms that prevent catastrophic failures during localization errors or mapping inaccuracies in disaster zones.

Certification processes for SLAM-based rescue robots typically involve rigorous testing in simulated disaster environments. The National Institute of Standards and Technology (NIST) has developed standardized test methods for response robots, including specific evaluations for navigation and mapping capabilities in degraded visual and communication environments. These tests assess a robot's ability to maintain accurate localization while traversing unstable terrain or navigating through smoke-filled environments.

The European Union's CE marking requirements also apply to rescue robotics, with particular emphasis on electromagnetic compatibility (EMC) standards to ensure SLAM sensors function properly despite electromagnetic interference often present in disaster scenarios. The EU Machinery Directive 2006/42/EC provides additional safety requirements relevant to autonomous navigation systems.

Emerging standards specifically addressing SLAM technology in safety-critical applications include the development of ISO/TC 299 for robotics, which is working on standards for service robots in extreme environments. Additionally, UL 3100 for Automated Mobile Platforms addresses safety considerations for autonomous navigation systems, including those utilizing SLAM algorithms.

Human-Robot Collaboration in Disaster Scenarios

Human-robot collaboration in disaster scenarios combines the cognitive capabilities of humans with the physical resilience of robots. This collaboration framework enables more effective search and rescue operations by leveraging the complementary strengths of both agents. Humans provide strategic oversight, complex decision-making, and contextual understanding, while robots equipped with SLAM technology can navigate hazardous environments, collect data, and perform physical tasks without risking human lives.

The collaborative model typically operates through three primary interaction modes: supervisory control, where humans remotely direct robot activities; shared autonomy, where control responsibilities are dynamically allocated based on situational demands; and team-based operations, where multiple humans and robots work in coordinated groups. These interaction paradigms are supported by intuitive interfaces that facilitate real-time communication and control, including augmented reality displays, haptic feedback systems, and natural language processing.
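Shared autonomy can be illustrated with a simple linear blend of the operator's command and the robot's own command, weighted by how much authority the robot is given at that moment. This is a hedged sketch of one common blending scheme, not the method of any specific system: the function name `blend_command` and the use of a single scalar `robot_confidence` in [0, 1] are assumptions made for the example.

```python
def blend_command(human_cmd, robot_cmd, robot_confidence):
    """Blend (linear, angular) velocity commands from operator and robot.

    robot_confidence is a hypothetical scalar in [0, 1]: 0 gives the
    operator full control, 1 gives the autonomy stack full control.
    """
    # Clamp so out-of-range confidence values cannot amplify either command.
    alpha = min(1.0, max(0.0, robot_confidence))
    return tuple(alpha * r + (1.0 - alpha) * h
                 for h, r in zip(human_cmd, robot_cmd))


# Operator asks to drive forward, the planner asks to turn;
# with low robot confidence the operator's intent dominates.
command = blend_command((1.0, 0.0), (0.0, 1.0), robot_confidence=0.25)
```

In practice the weight might be derived from localization quality, obstacle proximity, or link latency, so that control shifts toward the robot when the operator's view is degraded and back toward the operator when the robot's estimate is poor.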

Effective human-robot collaboration requires communication protocols that remain reliable when links are intermittent or bandwidth-limited. Mesh networking, delay-tolerant networking, and multi-modal communication systems have emerged as solutions to maintain operational continuity when traditional communication infrastructure is compromised. These systems enable robots to transmit SLAM-generated maps and environmental data to human operators while receiving updated mission parameters and control inputs.
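The store-and-forward idea behind delay-tolerant operation can be sketched as a bounded queue that holds map updates while the link is down and drains them in order once it returns. This is an illustration of the concept only, not an implementation of a standardized delay-tolerant networking protocol; the class name `StoreAndForwardQueue` and the `send` callback, which is assumed to return `True` on successful delivery, are hypothetical.

```python
from collections import deque


class StoreAndForwardQueue:
    """Buffer map updates while the link is down; send in order when it is up."""

    def __init__(self, max_items=100):
        # Bounded buffer: when full, the oldest update is dropped first.
        self._pending = deque(maxlen=max_items)

    def enqueue(self, update):
        self._pending.append(update)

    def flush(self, send):
        """Send pending updates in order; stop at the first failure.

        `send` is a caller-supplied function returning True on success.
        Returns the number of updates delivered.
        """
        sent = 0
        while self._pending:
            if not send(self._pending[0]):
                break  # link still down: keep this and later updates
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._pending)
```

Dropping the oldest update when the buffer fills is a design choice that favors recent map data; a system that needs a complete history would instead persist updates to storage or compress them before buffering.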

Trust calibration represents another crucial aspect of this collaboration. Disaster responders must develop appropriate levels of trust in robotic systems, avoiding both over-reliance and under-utilization. This calibration process is facilitated through transparent operation, where robots communicate their confidence levels, limitations, and decision-making processes to human teammates.

Training methodologies for disaster response teams increasingly incorporate virtual reality simulations and mixed-reality exercises that prepare human operators to work effectively with robotic partners. These training programs focus on developing shared mental models, establishing common operational procedures, and practicing collaborative problem-solving in simulated disaster environments before deployment to actual emergency situations.

The integration of SLAM-enabled robots with human teams has been reported to improve search coverage, victim location rates, and responder safety across disaster scenarios including building collapses, wildfire zones, and flood-affected areas. As these collaborative systems evolve, they are expected to improve disaster response through more efficient resource allocation, reduced response times, and enhanced situational awareness.
