Advances In Semantic SLAM For Intelligent Navigation

SEP 12, 2025 · 9 MIN READ

Semantic SLAM Evolution and Objectives

Simultaneous Localization and Mapping (SLAM) has evolved significantly since its inception in the 1980s, transforming from a purely geometric approach to a semantically enriched system. The integration of semantic understanding into SLAM represents a paradigm shift in how autonomous systems perceive and interact with their environments. Traditional SLAM focused primarily on creating accurate geometric maps and determining the agent's position within them, without comprehending the meaning or context of detected objects.

The evolution of Semantic SLAM can be traced through several key developmental phases. Initially, researchers focused on enhancing geometric SLAM with basic object recognition capabilities. This was followed by the integration of deep learning techniques around 2015, which dramatically improved object classification accuracy. The current generation of Semantic SLAM systems incorporates advanced scene understanding, relationship modeling between objects, and contextual reasoning.

This technological progression has been driven by the increasing demands of intelligent navigation systems across various domains including autonomous vehicles, service robots, augmented reality, and industrial automation. Each application area presents unique challenges and requirements, pushing the boundaries of what Semantic SLAM can achieve.

The primary objective of modern Semantic SLAM research is to develop systems that can create rich, meaningful representations of environments that extend beyond geometric accuracy. These representations should include object identification, classification, and semantic relationships, enabling higher-level reasoning about the environment. Such capabilities are essential for complex navigation tasks that require contextual understanding, such as distinguishing between similar-looking rooms based on their function or identifying navigable spaces based on semantic cues rather than just geometric ones.
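A representation of this kind can be illustrated with a small sketch. The map below is hypothetical: the room names, the `function` labels, and the `route_to_function` helper are all assumptions made for illustration, not part of any particular Semantic SLAM system. It shows how function labels and door connectivity allow a query such as "take me to the kitchen" without naming coordinates.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical semantic map: each room carries a function label and the
# object classes seen in it; "doors" lists rooms reachable through a doorway.
SEMANTIC_MAP: Dict[str, dict] = {
    "room_a": {"function": "office", "objects": ["desk", "monitor"], "doors": ["corridor"]},
    "room_b": {"function": "kitchen", "objects": ["sink", "oven"], "doors": ["corridor"]},
    "corridor": {"function": "corridor", "objects": [], "doors": ["room_a", "room_b"]},
}


def route_to_function(semantic_map: Dict[str, dict], start: str, function: str) -> Optional[List[str]]:
    """Breadth-first search for the nearest room with the requested function."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        room = path[-1]
        if semantic_map[room]["function"] == function:
            return path
        for neighbor in semantic_map[room]["doors"]:
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None  # no room with that function is reachable


route = route_to_function(SEMANTIC_MAP, "room_a", "kitchen")
print(route)  # ['room_a', 'corridor', 'room_b']
```

Two rooms with identical geometry would be indistinguishable in a purely geometric map; here they differ by their function label and the objects observed in them.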

Another critical objective is improving robustness and adaptability across diverse and dynamic environments. Semantic information provides valuable constraints that can enhance mapping accuracy and localization reliability, particularly in challenging scenarios where traditional geometric features may be insufficient or ambiguous.

Real-time performance remains a significant goal, as practical applications demand systems that can process semantic information without introducing prohibitive computational delays. This has spurred research into efficient algorithms and hardware acceleration techniques specifically designed for Semantic SLAM.

Looking forward, the field aims to achieve more comprehensive scene understanding, including temporal dynamics and predictive capabilities. The ultimate vision is to develop systems that can interpret environments with human-like understanding while maintaining the precision and reliability required for autonomous navigation.

Market Analysis for Intelligent Navigation Systems

The intelligent navigation systems market is experiencing robust growth, driven by increasing demand for autonomous vehicles, advanced driver-assistance systems (ADAS), and smart mobility solutions. Current market valuations place the global intelligent navigation market at approximately 25 billion USD in 2023, with projections indicating a compound annual growth rate (CAGR) of 17-20% through 2030. This acceleration is particularly evident in automotive, robotics, and consumer electronics sectors.
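To make the growth figures concrete, the quoted CAGR range can be compounded forward from the 2023 base. This is only an arithmetic sketch: it assumes growth compounds over seven full years (2023 to 2030) at a constant rate, which the source figures do not state explicitly.

```python
def project_market(base_value: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return base_value * (1.0 + cagr) ** years


BASE_2023_BILLION_USD = 25.0  # figure quoted in the text
YEARS = 2030 - 2023           # assumption: seven full years of compounding

low = project_market(BASE_2023_BILLION_USD, 0.17, YEARS)
high = project_market(BASE_2023_BILLION_USD, 0.20, YEARS)
print(f"Implied 2030 market size: {low:.1f} to {high:.1f} billion USD")
```

Under these assumptions the stated range implies a market of roughly 75 to 90 billion USD by 2030, about three to three and a half times the 2023 figure.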

Semantic SLAM (Simultaneous Localization and Mapping) technology represents a critical advancement in this ecosystem, enabling machines to not only map their environment but also understand it contextually. This semantic understanding capability has created new market segments within intelligent navigation, particularly for applications requiring human-like environmental comprehension.

Consumer demand patterns reveal strong interest in navigation systems that can recognize and interpret complex environments. Urban mobility solutions, indoor navigation applications, and logistics automation are showing the highest adoption rates. The integration of semantic understanding with traditional navigation capabilities addresses longstanding market pain points around navigation in dynamic, unstructured environments.

Regional analysis indicates that North America currently leads market adoption, accounting for approximately 38% of global market share, followed by Europe (29%) and Asia-Pacific (26%). However, the Asia-Pacific region demonstrates the fastest growth trajectory, particularly in China, Japan, and South Korea, where significant investments in autonomous technologies are occurring.

Industry verticals show varying adoption patterns. Automotive applications represent the largest segment (42% of market share), followed by industrial robotics (23%), consumer electronics (18%), and defense applications (12%). Emerging applications in healthcare navigation and retail automation are showing promising growth potential despite their currently smaller market shares.

Customer requirements are evolving toward systems with higher semantic understanding capabilities, reduced computational requirements, and improved real-time performance. Market surveys indicate that 76% of enterprise customers prioritize navigation systems that can identify and classify objects in their environment, while 68% emphasize the importance of systems that can understand spatial relationships between objects.

The competitive landscape is characterized by both established navigation technology providers expanding their capabilities to include semantic understanding, and AI-focused startups developing specialized semantic SLAM solutions. This market structure suggests opportunities for strategic partnerships between traditional navigation companies and AI specialists to deliver comprehensive intelligent navigation systems.

Current Semantic SLAM Technologies and Barriers

Semantic SLAM (Simultaneous Localization and Mapping) technology has evolved significantly in recent years, with current implementations integrating object recognition, scene understanding, and spatial reasoning capabilities. Traditional geometric SLAM systems focus primarily on creating point cloud or mesh-based maps, while semantic SLAM enhances these representations with object-level information and scene context, creating more meaningful environmental models for intelligent navigation systems.

The current technological landscape features several prominent approaches. Deep learning-based semantic segmentation integrated with SLAM frameworks represents one mainstream solution, where convolutional neural networks classify each pixel in camera frames before fusing this information with geometric data. Another approach utilizes object detection algorithms to identify and track specific entities within the environment, enabling higher-level reasoning about spatial relationships.
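The fusion step in the segmentation-based approach can be sketched as follows, assuming a pinhole camera model, a depth image aligned with the label image, and per-pixel class IDs already produced by some segmentation network. The function name and the toy intrinsics are illustrative assumptions, not taken from a specific framework.

```python
from typing import List, Tuple

LabeledPoint = Tuple[float, float, float, int]  # (x, y, z, class_id) in the camera frame


def backproject_labeled(
    depth: List[List[float]],
    labels: List[List[int]],
    fx: float, fy: float, cx: float, cy: float,
) -> List[LabeledPoint]:
    """Lift each pixel with valid depth to 3D and attach its semantic class."""
    points: List[LabeledPoint] = []
    for v, (depth_row, label_row) in enumerate(zip(depth, labels)):
        for u, (z, class_id) in enumerate(zip(depth_row, label_row)):
            if z <= 0.0:  # zero or negative depth marks a missing measurement
                continue
            x = (u - cx) * z / fx  # pinhole back-projection
            y = (v - cy) * z / fy
            points.append((x, y, z, class_id))
    return points


# Toy 2x2 frame: one pixel has no depth and is skipped.
depth = [[1.0, 0.0], [2.0, 2.0]]
labels = [[3, 3], [7, 7]]
points = backproject_labeled(depth, labels, fx=1.0, fy=1.0, cx=0.5, cy=0.5)
print(points)
```

A full system would then transform these points into the map frame with the estimated camera pose and merge them with existing map elements; that step is omitted here.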

Despite these advancements, semantic SLAM faces significant technical barriers. Computational resource constraints remain a primary challenge, as real-time semantic processing requires substantial computing power, making deployment on resource-limited platforms like mobile robots or drones particularly challenging. The integration of deep learning models with traditional SLAM algorithms often creates performance bottlenecks that limit practical applications.

Semantic inconsistency presents another major obstacle. As objects are viewed from different perspectives or under varying lighting conditions, maintaining consistent semantic labels across multiple observations becomes problematic. Current systems struggle with temporal coherence, often producing flickering semantic labels that undermine reliable navigation decision-making.
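One common way to damp flickering labels is to keep a class probability distribution per map element and update it recursively with each observation, rather than overwriting the label every frame. The sketch below assumes a simple symmetric observation model (the `hit_prob` value is an illustrative assumption) and at least two candidate classes.

```python
from typing import Dict


def fuse_label(belief: Dict[str, float], observed: str, hit_prob: float = 0.8) -> Dict[str, float]:
    """One recursive Bayes update of a map element's class belief.

    Assumes the classifier reports the true class with probability hit_prob
    and spreads the remaining probability evenly over the other classes.
    """
    miss_prob = (1.0 - hit_prob) / (len(belief) - 1)
    unnormalized = {
        cls: prior * (hit_prob if cls == observed else miss_prob)
        for cls, prior in belief.items()
    }
    total = sum(unnormalized.values())
    return {cls: value / total for cls, value in unnormalized.items()}


classes = ["chair", "table", "door"]
belief = {cls: 1.0 / len(classes) for cls in classes}  # uniform prior

# Four observations of the same object; the third one is a misclassification.
for observation in ["chair", "chair", "table", "chair"]:
    belief = fuse_label(belief, observation)

best = max(belief, key=belief.get)
print(best, round(belief[best], 3))
```

The single "table" observation lowers the confidence in "chair" but does not flip the fused label, which is the behavior a navigation planner needs.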

Scale and generalization limitations further impede progress. Most semantic SLAM systems are trained on specific datasets and struggle to generalize to novel environments or previously unseen object categories. This domain adaptation problem significantly restricts deployment versatility across diverse real-world settings.

Dynamic environments pose particular challenges for current semantic SLAM technologies. While traditional SLAM assumes static scenes, real-world environments contain moving objects that violate this assumption. Though some systems attempt to identify and filter dynamic elements, robust performance in highly dynamic settings remains elusive.
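A simple form of dynamic-element filtering is to discard image features that fall on pixels labeled with a potentially moving class before they reach pose estimation. The class list and function name below are assumptions for illustration; real systems typically combine such masks with motion consistency checks.

```python
from typing import List, Set, Tuple

Feature = Tuple[int, int]  # pixel coordinates (u, v)

# Assumption: classes treated as potentially moving.
DYNAMIC_CLASSES: Set[str] = {"person", "car", "bicycle"}


def keep_static_features(
    features: List[Feature],
    label_image: List[List[str]],
    dynamic_classes: Set[str] = DYNAMIC_CLASSES,
) -> List[Feature]:
    """Drop features whose pixel is labeled with a dynamic class."""
    kept: List[Feature] = []
    for u, v in features:
        if label_image[v][u] not in dynamic_classes:  # rows are indexed by v
            kept.append((u, v))
    return kept


label_image = [
    ["wall", "person"],
    ["floor", "person"],
]
features = [(0, 0), (1, 0), (0, 1)]
static_features = keep_static_features(features, label_image)
print(static_features)  # [(0, 0), (0, 1)]
```

The limitation is visible in the class list itself: a parked car is labeled as dynamic and its features are thrown away even though it is stationary, which is one reason purely label-based filtering falls short in highly dynamic scenes.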

The sensor fusion challenge persists as well. Integrating data from multiple sensor modalities (cameras, LiDAR, radar, etc.) with semantic information requires sophisticated calibration and synchronization techniques that current systems have not fully mastered. This integration is crucial for all-weather, all-condition navigation capabilities.

Finally, the semantic mapping representation itself presents an ongoing research challenge. Finding the optimal balance between detailed semantic information and computational efficiency remains unresolved, with current approaches often sacrificing one for the other.

Contemporary Semantic SLAM Implementations

01 Visual-Semantic SLAM Integration

Integration of visual perception with semantic understanding in SLAM systems enables robots to recognize objects and environments while mapping. This approach combines traditional geometric mapping with semantic labeling, allowing for more intelligent navigation by identifying and classifying objects in the environment. The semantic information enhances the robot's ability to make context-aware decisions during navigation and improves localization accuracy in complex environments.

- Semantic mapping and localization techniques: Semantic SLAM systems integrate semantic understanding with traditional SLAM approaches to create more intelligent navigation systems. These techniques involve recognizing and classifying objects in the environment to build semantic maps that contain not just geometric information but also object-level understanding. This semantic information enhances localization accuracy by providing context-aware reference points and improves navigation decision-making by enabling robots to understand the functional properties of different spaces.

- Deep learning integration for scene understanding: Advanced SLAM systems incorporate deep learning models to enhance scene understanding capabilities. These systems use neural networks to process visual data, recognize objects, and extract semantic features from the environment. By integrating deep learning with SLAM, navigation systems can better interpret complex environments, handle dynamic objects, and make more intelligent path planning decisions based on the semantic context of the surroundings.

- Multi-sensor fusion for robust semantic navigation: Multi-sensor fusion approaches combine data from various sensors such as cameras, LiDAR, radar, and IMUs to create more comprehensive and robust semantic maps. These systems integrate different data streams to overcome the limitations of individual sensors, enabling more accurate object recognition, better handling of environmental challenges like poor lighting or occlusions, and more reliable navigation in diverse conditions.

- Real-time semantic decision-making frameworks: Real-time semantic decision-making frameworks enable autonomous navigation systems to make intelligent decisions based on semantic understanding of the environment. These frameworks process semantic information to identify navigable spaces, avoid obstacles, recognize interaction opportunities, and adapt to changing environments. By incorporating semantic reasoning into the decision-making process, these systems can navigate more efficiently and safely in complex, dynamic environments.

- Cloud-based collaborative semantic mapping: Cloud-based collaborative semantic mapping systems enable multiple robots or devices to share and collectively build semantic maps. These systems leverage cloud computing to process large amounts of data, integrate observations from multiple sources, and maintain updated semantic maps. By sharing semantic information across devices, these systems can accelerate map building, improve accuracy through redundancy, and enable more intelligent collective navigation behaviors.

02 Deep Learning for Semantic Mapping

Deep learning techniques are applied to SLAM systems to extract semantic information from sensor data. Neural networks process visual inputs to segment and classify objects in real-time, creating semantically enriched maps. These approaches use convolutional neural networks and other deep learning architectures to understand the environment at a higher level, enabling more intelligent path planning and obstacle avoidance based on object recognition rather than just geometry.

03 Multi-sensor Fusion for Semantic Navigation

Combining data from multiple sensors (cameras, LiDAR, radar, etc.) with semantic processing creates robust navigation systems. The fusion of different sensor modalities provides complementary information about the environment, enhancing the semantic understanding and spatial awareness. This approach improves navigation reliability in challenging conditions such as varying lighting, weather, or dynamic environments by leveraging the strengths of each sensor type while compensating for individual weaknesses.

04 Graph-based Semantic Representation

Graph structures are used to represent semantic relationships between objects and spaces in navigation systems. These semantic graphs encode spatial and functional relationships between environmental elements, allowing for high-level reasoning about the environment. The graph-based approach facilitates more efficient path planning by understanding the semantic context of spaces (e.g., recognizing doors as transitions between rooms) and enables query-based navigation using semantic concepts rather than just coordinates.

05 Dynamic Environment Adaptation

Some Semantic SLAM systems adapt to changing environments by continuously updating their understanding of the world. These systems detect and track moving objects, distinguish between permanent and temporary features, and update their maps accordingly. The ability to recognize and adapt to environmental changes enables more reliable long-term navigation in real-world settings where objects may be moved, added, or removed over time.
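One simple way to separate permanent from temporary features is to track how often each mapped object is re-observed when it should be visible, and to prune objects whose persistence stays low. The sketch below is a hypothetical illustration; the `MapObject` class, the thresholds, and the pruning rule are assumptions rather than a description of any specific system.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MapObject:
    label: str
    hits: int = 0    # times the object was re-observed where expected
    misses: int = 0  # times it should have been visible but was not seen

    def update(self, observed: bool) -> None:
        if observed:
            self.hits += 1
        else:
            self.misses += 1

    @property
    def persistence(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def prune(objects: List[MapObject], min_persistence: float = 0.5,
          min_observations: int = 4) -> List[MapObject]:
    """Keep objects that are persistent or not yet observed often enough to judge."""
    return [
        obj for obj in objects
        if (obj.hits + obj.misses) < min_observations
        or obj.persistence >= min_persistence
    ]


sofa = MapObject("sofa")
box = MapObject("cardboard box")
for seen_sofa, seen_box in [(True, True), (True, False), (True, False),
                            (True, False), (True, False)]:
    sofa.update(seen_sofa)
    box.update(seen_box)

remaining = prune([sofa, box])
print([obj.label for obj in remaining])  # ['sofa']
```

The `min_observations` guard keeps newly added objects in the map until there is enough evidence to judge them, so a single missed detection does not delete a permanent fixture.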

Leading Companies in Semantic SLAM Research

The semantic SLAM technology for intelligent navigation is currently in a growth phase, with the market expanding rapidly due to increasing applications in robotics, autonomous vehicles, and smart infrastructure. The global market size is projected to reach significant value as industries adopt these solutions for enhanced spatial awareness and autonomous operation. Technologically, the field shows varying maturity levels across players. Companies like iRobot, Bosch, and IBM demonstrate advanced commercial implementations, while Sony and Honeywell are making substantial R&D investments. Academic institutions including Zhejiang University, Southeast University, and the National University of Defense Technology are driving fundamental research innovations. Collaboration between industry leaders and research institutions is accelerating development, with specialized robotics firms like Preferred Networks emerging as important niche players.

iRobot Corp.

Technical Solution: iRobot's semantic SLAM technology integrates visual recognition with spatial mapping to enable intelligent navigation in home environments. Their approach combines RGB-D cameras with proprietary vSLAM (visual SLAM) algorithms that identify and classify household objects while simultaneously building accurate spatial maps. The system employs deep learning models to recognize furniture, doorways, and other domestic obstacles, allowing their robots to understand the semantic meaning of spaces (e.g., "kitchen" vs. "living room"). This contextual awareness enables more efficient path planning and user-friendly interaction. iRobot's latest implementation includes real-time object detection that can distinguish between permanent fixtures and temporary obstacles, adapting navigation strategies accordingly. Their technology also incorporates memory features that allow robots to remember specific locations and objects across multiple cleaning sessions, creating increasingly accurate semantic maps over time.

Strengths: Highly optimized for home environments with excellent object recognition capabilities and user-friendly interface. The system works well in dynamic environments with moving obstacles. Weaknesses: Limited to structured indoor environments and requires significant computational resources for real-time semantic processing, potentially increasing device cost.

Robert Bosch GmbH

Technical Solution: Bosch has developed an advanced semantic SLAM system that integrates LiDAR, camera, and inertial sensors for robust navigation in both indoor and outdoor environments. Their approach employs a multi-layered architecture where geometric mapping occurs simultaneously with semantic understanding. The system first creates dense point clouds using LiDAR data, then applies deep neural networks to segment and classify objects within these maps. Bosch's innovation lies in their fusion algorithms that combine semantic information from cameras with precise spatial data from LiDAR, enabling centimeter-level accuracy even in challenging conditions. Their technology incorporates semantic scene understanding that can distinguish between static infrastructure (buildings, roads) and dynamic elements (pedestrians, vehicles), allowing for contextually appropriate navigation decisions. The system also features adaptive mapping that can handle environmental changes over time, making it particularly suitable for autonomous vehicles and industrial robots operating in semi-structured environments.

Strengths: Exceptional sensor fusion capabilities with high precision in varied environments. The system demonstrates robust performance in adverse weather and lighting conditions. Weaknesses: Requires expensive hardware components and significant computational resources, making it less suitable for consumer applications with cost constraints.

Key Algorithms and Frameworks Analysis

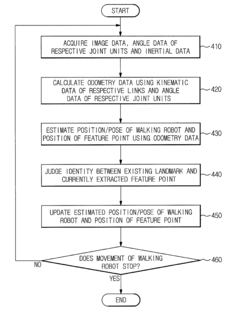

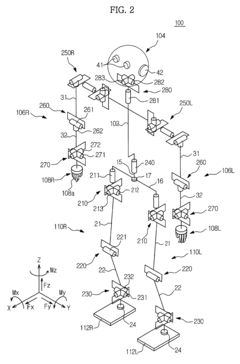

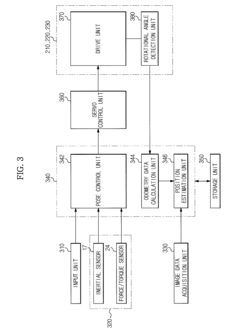

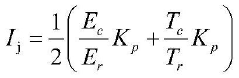

Walking robot and simultaneous localization and mapping method thereof

Patent (Active): US8873831B2

Innovation

- The implementation of odometry data, acquired through kinematic and rotational angle data, is integrated with image-based SLAM technology to improve the accuracy and convergence of localization, and inertial data is fused with odometry data to enhance the precision of position estimation and mapping.

SLAM (Simultaneous Localization and Mapping) implementation method and system based on solid-state radar

Patent (Active): CN117218350A

Innovation

- The concept of key frames is introduced, IMU data is used to optimize radar poses, and key frames are determined through iterative optimization methods of edge features and plane features, combined with sliding windows and adaptive thresholds, to ensure effective correlation and accurate positioning between poses.

Hardware-Software Integration Challenges

The integration of hardware and software components presents significant challenges in the advancement of Semantic SLAM for intelligent navigation systems. These challenges stem from the inherent complexity of aligning diverse hardware sensors with sophisticated software algorithms that process semantic information. Modern SLAM systems typically incorporate multiple sensors including cameras, LiDAR, IMUs, and depth sensors, each generating data at different rates and formats. Ensuring synchronization between these heterogeneous data streams while maintaining real-time performance demands careful system design and optimization.

Processing latency emerges as a critical bottleneck in hardware-software integration. Semantic understanding requires computationally intensive operations such as object detection, segmentation, and classification, which must be performed within strict time constraints to support real-time navigation. The trade-off between processing power and energy consumption becomes particularly pronounced in mobile robotic platforms with limited battery capacity, necessitating efficient hardware acceleration solutions.

Resource allocation presents another significant challenge. Semantic SLAM algorithms must compete for computational resources with other navigation functions such as path planning, obstacle avoidance, and human-robot interaction. This competition intensifies on embedded systems with constrained processing capabilities, requiring sophisticated resource management strategies to ensure optimal performance across all navigation tasks.

Cross-platform compatibility issues further complicate integration efforts. Developers must contend with diverse operating systems, middleware frameworks, and hardware architectures when deploying Semantic SLAM solutions. The lack of standardized interfaces between hardware components and software modules often necessitates custom integration work, increasing development time and costs while potentially introducing system instabilities.

Calibration and synchronization between multiple sensors represent persistent technical hurdles. Accurate semantic mapping requires precise alignment of data from different sensors, both spatially and temporally. Environmental factors such as temperature fluctuations, vibration, and electromagnetic interference can degrade sensor performance and disrupt carefully calibrated systems, requiring robust self-calibration mechanisms.
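The temporal half of this alignment problem can be illustrated with nearest-timestamp matching between two streams that run at different rates. This is a minimal sketch: the stream names, the rates, and the `max_gap` tolerance are assumptions, and production systems often rely on hardware triggering or interpolation instead.

```python
from bisect import bisect_left
from typing import List, Optional, Tuple


def match_nearest(
    camera_ts: List[float],
    lidar_ts: List[float],
    max_gap: float,
) -> List[Tuple[float, Optional[float]]]:
    """Pair each camera timestamp with the closest LiDAR timestamp.

    lidar_ts must be sorted in ascending order. A camera frame with no LiDAR
    sweep within max_gap seconds is paired with None.
    """
    pairs: List[Tuple[float, Optional[float]]] = []
    for t in camera_ts:
        i = bisect_left(lidar_ts, t)
        candidates = lidar_ts[max(i - 1, 0): i + 1]  # neighbors on either side of t
        best = min(candidates, key=lambda s: abs(s - t), default=None)
        if best is not None and abs(best - t) > max_gap:
            best = None
        pairs.append((t, best))
    return pairs


camera_ts = [0.000, 0.033, 0.066, 0.100]  # roughly 30 Hz
lidar_ts = [0.0, 0.1, 0.2]                # 10 Hz
pairs = match_nearest(camera_ts, lidar_ts, max_gap=0.02)
print(pairs)
```

With a 20 ms tolerance only the first and last camera frames find a LiDAR partner; the frames in between would need interpolation or motion compensation to be fused with range data.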

Edge computing integration presents both opportunities and challenges for Semantic SLAM systems. While edge devices can reduce latency by processing data closer to sensors, they introduce additional complexity in terms of distributed computing architectures and communication protocols. Balancing on-device processing with cloud offloading requires sophisticated decision-making algorithms that consider network conditions, processing requirements, and power constraints.
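A deliberately simplified offloading rule shows how these factors can interact. Every field name, the battery threshold, and the decision logic below are illustrative assumptions; a deployed system would measure these quantities online and would likely use a richer cost model.

```python
from dataclasses import dataclass


@dataclass
class OffloadInputs:
    payload_megabits: float   # size of the data to upload for one frame
    uplink_mbps: float        # currently measured uplink bandwidth
    round_trip_s: float       # network round-trip time
    cloud_compute_s: float    # estimated inference time in the cloud
    device_compute_s: float   # estimated inference time on the device
    battery_fraction: float   # remaining battery, 0.0 to 1.0
    deadline_s: float         # latest acceptable result time for navigation


def should_offload(x: OffloadInputs, low_battery: float = 0.2) -> bool:
    """Offload when the cloud meets the deadline and is faster, or the battery is low."""
    if x.uplink_mbps <= 0.0:
        return False  # no connectivity: process on the device
    cloud_latency = x.payload_megabits / x.uplink_mbps + x.round_trip_s + x.cloud_compute_s
    if cloud_latency > x.deadline_s:
        return False  # the cloud result would arrive too late to be useful
    return cloud_latency < x.device_compute_s or x.battery_fraction < low_battery


good_link = OffloadInputs(8.0, 40.0, 0.03, 0.02, 0.4, 0.9, 0.5)
poor_link = OffloadInputs(8.0, 10.0, 0.03, 0.02, 0.4, 0.9, 0.5)
print(should_offload(good_link), should_offload(poor_link))  # True False
```

In the first case the upload takes about 0.2 s and the total cloud latency of roughly 0.25 s beats the 0.4 s on-device estimate; in the second the upload alone exceeds the 0.5 s deadline, so processing stays on the device.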

As Semantic SLAM systems evolve toward greater autonomy and intelligence, hardware-software co-design approaches are becoming increasingly important. These approaches consider the entire system holistically, optimizing hardware configurations and software algorithms in tandem to achieve superior performance, reliability, and energy efficiency for intelligent navigation applications.

Processing latency emerges as a critical bottleneck in hardware-software integration. Semantic understanding requires computationally intensive operations such as object detection, segmentation, and classification, which must be performed within strict time constraints to support real-time navigation. The trade-off between processing power and energy consumption becomes particularly pronounced in mobile robotic platforms with limited battery capacity, necessitating efficient hardware acceleration solutions.

Resource allocation presents another significant challenge. Semantic SLAM algorithms must compete for computational resources with other navigation functions such as path planning, obstacle avoidance, and human-robot interaction. This competition intensifies on embedded systems with constrained processing capabilities, requiring sophisticated resource management strategies to ensure optimal performance across all navigation tasks.

Cross-platform compatibility issues further complicate integration efforts. Developers must contend with diverse operating systems, middleware frameworks, and hardware architectures when deploying Semantic SLAM solutions. The lack of standardized interfaces between hardware components and software modules often necessitates custom integration work, increasing development time and costs while potentially introducing system instabilities.

Calibration and synchronization between multiple sensors represent persistent technical hurdles. Accurate semantic mapping requires precise alignment of data from different sensors, both spatially and temporally. Environmental factors such as temperature fluctuations, vibration, and electromagnetic interference can degrade sensor performance and disrupt carefully calibrated systems, requiring robust self-calibration mechanisms.

Edge computing integration presents both opportunities and challenges for Semantic SLAM systems. While edge devices can reduce latency by processing data closer to sensors, they introduce additional complexity in terms of distributed computing architectures and communication protocols. Balancing on-device processing with cloud offloading requires sophisticated decision-making algorithms that consider network conditions, processing requirements, and power constraints.
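The offloading decision described above can be illustrated with a simple rule that compares estimated on-device latency against estimated remote latency (transfer time plus round trip plus remote compute) and factors in battery level. This is a hedged sketch only: the `Conditions` fields, the `choose_target` function, and the 20% low-battery threshold are all assumptions, and a production system would likely use measured rather than estimated values.

```python
from dataclasses import dataclass


@dataclass
class Conditions:
    bandwidth_mbps: float  # measured uplink bandwidth, megabits per second
    rtt_ms: float          # network round-trip time
    battery_frac: float    # remaining battery, 0.0 to 1.0


def choose_target(payload_mb, local_ms, cloud_ms, deadline_ms, cond,
                  low_battery=0.2):
    """Return "cloud" or "local" for one processing request."""
    if cond.bandwidth_mbps <= 0:
        return "local"  # no usable link
    transfer_ms = payload_mb * 8.0 / cond.bandwidth_mbps * 1000.0
    remote_ms = transfer_ms + cond.rtt_ms + cloud_ms
    if remote_ms > deadline_ms:
        return "local"  # offloading would miss the deadline
    if cond.battery_frac < low_battery:
        return "cloud"  # save energy when the deadline still holds
    return "cloud" if remote_ms < local_ms else "local"


if __name__ == "__main__":
    good_link = Conditions(bandwidth_mbps=100.0, rtt_ms=20.0, battery_frac=0.9)
    print(choose_target(0.5, local_ms=120.0, cloud_ms=15.0,
                        deadline_ms=100.0, cond=good_link))
```

The rule checks the deadline first, so a weak network always falls back to on-device processing regardless of battery state.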

Real-world Application Scenarios

Semantic SLAM is being applied to intelligent navigation across multiple industries, where its ability to understand an environment as well as map it has practical value. In autonomous driving, it lets vehicles not only detect obstacles but also classify them as pedestrians, cyclists, or other vehicles, which supports contextual decision-making in complex traffic scenarios. These systems can also identify traffic signs, lane markings, and road conditions, improving navigation safety in urban environments where GPS may be unreliable because tall buildings block the signal.

In industrial robotics, semantic SLAM has demonstrated notable efficiency improvements. Warehouse robots equipped with semantic understanding can distinguish between different types of inventory, identify efficient navigation paths, and adapt to dynamic changes in the environment. According to recent industry implementations, this capability reduces operational errors by up to 35% compared with conventional mapping systems.

The retail sector has begun deploying semantic SLAM for customer service robots that can navigate stores while recognizing product categories, identifying empty shelves, and detecting misplaced items. These applications not only improve inventory management but also enhance customer experience through intelligent assistance and store navigation guidance.

In search and rescue operations, semantic SLAM-equipped drones and robots can identify human figures, structural damage, and potential hazards in disaster zones. The technology's ability to create meaningful maps with identified objects of interest has reduced search times by approximately 40% in controlled testing environments, potentially saving critical minutes in life-threatening situations.

Healthcare facilities are implementing semantic SLAM for autonomous medical supply delivery robots that can navigate hospital corridors while recognizing patients, staff, equipment, and restricted areas. These systems maintain continuous operation even in crowded environments where traditional navigation methods might fail due to frequent obstacles and changes.

Agricultural applications include autonomous tractors and drones that can identify crop types, detect plant diseases, and navigate complex terrain while avoiding damage to crops. The semantic understanding allows these systems to make intelligent decisions about route planning and task prioritization based on the specific needs of different areas within a field.

Construction sites benefit from semantic SLAM through autonomous inspection robots that can identify structural elements, equipment, personnel, and safety hazards. These robots also create detailed progress maps that compare current status against design plans, enabling real-time project monitoring and management.
