Neuromorphic Systems and Data Centers: Energy Efficiency Goals

SEP 8, 2025 | 10 MIN READ

Neuromorphic Computing Evolution and Energy Efficiency Goals

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of the human brain. This approach has evolved significantly since its conceptual inception in the late 1980s with Carver Mead's pioneering work. The evolution trajectory has moved from theoretical models to practical implementations, with each generation achieving greater efficiency and capability.

The first generation of neuromorphic systems focused primarily on mimicking basic neural functions through analog VLSI circuits. These early systems demonstrated the potential for brain-inspired computing but were limited in scale and application scope. The second generation, emerging in the early 2000s, incorporated digital elements and began addressing more complex neural network implementations, though still facing significant energy efficiency challenges.

Current third-generation systems represent a hybrid approach, combining analog and digital components with advanced materials science to create more efficient neural processing units. These systems have begun to demonstrate remarkable energy efficiency improvements compared to traditional von Neumann architectures, particularly for pattern recognition and inference tasks.

Energy efficiency has emerged as the paramount goal in neuromorphic computing development, especially for data center applications. Data centers currently consume approximately 1-2% of global electricity, and the more pessimistic projections suggest this share could reach 8% by 2030 without significant architectural innovation. Neuromorphic systems offer a promising way to curb this escalating energy demand.

The fundamental energy efficiency advantage of neuromorphic computing stems from its event-driven processing nature, which contrasts sharply with the clock-driven approach of conventional systems. By processing information only when necessary and localizing memory and computation, these systems can theoretically achieve energy efficiencies orders of magnitude better than traditional architectures.
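
The contrast between clock-driven and event-driven execution can be illustrated with a toy count of neuron-state updates. The sketch below is an illustration under simplifying assumptions, not a model of any particular chip: every update is assumed to cost the same energy, input events arrive independently with a fixed probability (`event_prob`, a hypothetical parameter), and overheads such as spike routing and leakage are ignored.

```python
import random


def count_updates(num_neurons: int, num_steps: int, event_prob: float, seed: int = 0):
    """Count neuron-state updates under two simplified execution models.

    Clock-driven: every neuron is updated on every time step.
    Event-driven: a neuron is updated only on steps where it receives an input event.
    """
    rng = random.Random(seed)
    clock_updates = num_neurons * num_steps
    event_updates = 0
    for _ in range(num_steps):
        for _ in range(num_neurons):
            # Hypothetical input model: each neuron independently receives an event.
            if rng.random() < event_prob:
                event_updates += 1
    return clock_updates, event_updates


if __name__ == "__main__":
    clock, event = count_updates(num_neurons=1_000, num_steps=1_000, event_prob=0.01)
    print(f"clock-driven updates: {clock:,}")
    print(f"event-driven updates: {event:,} (~{clock / max(event, 1):.0f}x fewer)")
```

With 1% of neurons receiving an event per step, the event-driven model performs roughly 100x fewer updates; the saving shrinks as `event_prob` rises, which is why the advantage depends on input sparsity.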

Published benchmarks indicate that neuromorphic systems can achieve 100-1000x improvements in energy efficiency for specific workloads compared to conventional GPUs and CPUs. Industry and research goals aim to push this advantage further, targeting 10,000x efficiency improvements by 2030 for certain applications, particularly those involving the sparse, event-driven data processing common in IoT and edge computing scenarios.

The convergence of neuromorphic computing with emerging memory technologies like memristors, phase-change memory, and spintronic devices represents a critical evolutionary path. These technologies enable non-volatile, low-power operation that further enhances the energy profile of neuromorphic systems, potentially allowing data centers to maintain computational growth while significantly reducing their carbon footprint.

Data Center Market Demands for Energy-Efficient Computing

The data center industry is experiencing unprecedented growth, with global data center IP traffic projected to reach 20.6 zettabytes annually by 2025. This explosive growth is driven by cloud computing, artificial intelligence, machine learning applications, and the increasing digitization of business operations. As data centers expand to meet these demands, their energy consumption has become a critical concern, with the sector currently consuming approximately 1-2% of global electricity and projected to reach 3-5% by 2030 if current trends continue.

Energy costs represent 30-50% of operational expenses in modern data centers, creating strong economic incentives for improved efficiency. Additionally, regulatory pressures are mounting globally, with initiatives like the European Green Deal and various carbon neutrality commitments forcing data center operators to prioritize sustainability. Major cloud service providers including Amazon, Google, and Microsoft have announced ambitious carbon neutrality and renewable energy goals, reflecting both market demands and corporate responsibility.

The industry has established specific metrics for energy efficiency, most notably Power Usage Effectiveness (PUE), which ideally approaches 1.0. While hyperscale facilities have achieved PUEs as low as 1.1, the global average remains around 1.59, indicating significant room for improvement. Beyond PUE, newer metrics like Water Usage Effectiveness (WUE) and Carbon Usage Effectiveness (CUE) are gaining prominence as sustainability concerns broaden.
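
The metrics above are simple ratios. The sketch below computes them from annual totals, following The Green Grid's definitions as we understand them (PUE = total facility energy / IT equipment energy; WUE = site water use / IT equipment energy; CUE = carbon emissions from total facility energy / IT equipment energy). The facility figures are hypothetical inputs chosen only so that the PUE matches the 1.59 global average cited above.

```python
from dataclasses import dataclass


@dataclass
class FacilityYear:
    """Annual totals for one facility; every value here is a hypothetical input."""
    total_facility_kwh: float   # utility-meter total: IT + cooling + power distribution + lighting
    it_equipment_kwh: float     # servers, storage, and network gear only
    site_water_liters: float    # water consumed on site, e.g. by evaporative cooling
    grid_kg_co2_per_kwh: float  # carbon emission factor of the electricity supply

    def pue(self) -> float:
        return self.total_facility_kwh / self.it_equipment_kwh

    def wue(self) -> float:
        return self.site_water_liters / self.it_equipment_kwh  # liters per IT kWh

    def cue(self) -> float:
        # CO2 from all facility energy, normalized by IT energy (kg CO2 per IT kWh)
        return self.total_facility_kwh * self.grid_kg_co2_per_kwh / self.it_equipment_kwh


site = FacilityYear(total_facility_kwh=15_900_000, it_equipment_kwh=10_000_000,
                    site_water_liters=18_000_000, grid_kg_co2_per_kwh=0.4)
print(f"PUE = {site.pue():.2f}")              # 1.59
print(f"WUE = {site.wue():.2f} L/kWh")        # 1.80
print(f"CUE = {site.cue():.3f} kg CO2/kWh")   # 0.636
print(f"factor x PUE = {site.grid_kg_co2_per_kwh * site.pue():.3f}")  # same value as CUE
```

At a PUE of 1.59, every kWh delivered to IT equipment carries about 0.59 kWh of overhead, so if cooling and power-distribution losses scale with IT load, a chip-level saving is multiplied by the PUE at the utility meter. The last line shows that CUE equals the grid emission factor times PUE.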

Customer demands are evolving beyond pure performance metrics to include energy efficiency and sustainability credentials. Enterprise clients increasingly require detailed environmental impact reporting from their data center providers, with 67% of Fortune 100 companies having set renewable energy or carbon reduction targets that extend to their digital infrastructure partners.

The market for energy-efficient data center technologies is projected to grow at a CAGR of 22.3% through 2026, reaching $343 billion. This growth is particularly concentrated in solutions that address computational efficiency at the chip level, where traditional von Neumann architectures face fundamental energy limitations. Neuromorphic computing systems, which mimic biological neural networks, offer theoretical energy efficiency improvements of 100-1000x compared to conventional architectures for certain workloads.

Industry surveys indicate that 78% of data center operators consider energy efficiency their top priority for infrastructure investments over the next five years, with 64% specifically interested in alternative computing architectures that could deliver step-change improvements in performance-per-watt metrics. This market demand creates a significant opportunity for neuromorphic systems that can demonstrate practical applications in data center environments while delivering on their theoretical energy efficiency potential.

Current Neuromorphic Technologies and Power Consumption Challenges

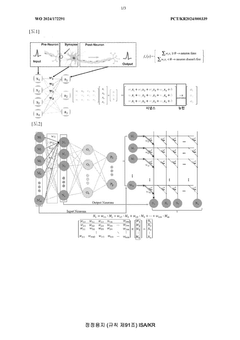

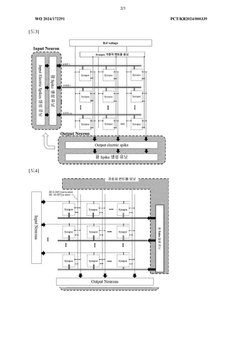

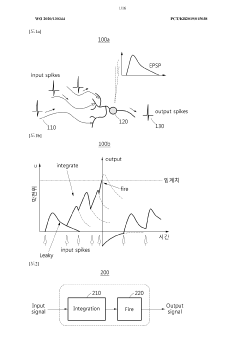

Current neuromorphic computing technologies represent a significant departure from traditional von Neumann architectures, drawing inspiration from the human brain's neural networks. These systems utilize artificial neural networks implemented in hardware, primarily through specialized chips that mimic neurobiological architectures. The leading neuromorphic technologies include spiking neural networks (SNNs), memristive systems, and photonic neural networks, each offering unique approaches to brain-inspired computing.

Spiking neural networks have gained prominence through implementations such as IBM's TrueNorth and Intel's Loihi chips. These processors operate on an event-driven basis, processing information only when neurons "fire," which theoretically offers substantial energy advantages over traditional computing paradigms that consume power continuously regardless of computational load. Intel's Loihi 2, for instance, supports up to 1 million neurons and 120 million synapses per chip, yet operates at very low power levels compared to GPU-based AI accelerators.
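
The event-driven behavior described above is usually built on some variant of the leaky integrate-and-fire (LIF) neuron. The sketch below is a generic discrete-time LIF model in plain Python, not the neuron model of TrueNorth or Loihi, whose exact dynamics and fixed-point arithmetic differ; the parameter values (`tau`, `v_threshold`, and so on) are illustrative assumptions.

```python
from typing import List


def simulate_lif(input_current: List[float], tau: float = 20.0, v_threshold: float = 1.0,
                 v_reset: float = 0.0, dt: float = 1.0) -> List[int]:
    """Simulate one leaky integrate-and-fire neuron and return the time steps at which it spikes.

    Forward-Euler update per step: v <- v + (dt / tau) * (-v + I).
    """
    v = v_reset
    spike_times: List[int] = []
    for t, i_in in enumerate(input_current):
        v += (dt / tau) * (-v + i_in)  # leak toward 0 plus drive from the input current
        if v >= v_threshold:           # threshold crossing -> emit a spike event
            spike_times.append(t)
            v = v_reset                # reset the membrane potential after firing
    return spike_times


print(simulate_lif([1.5] * 100))  # supra-threshold drive: periodic spikes
print(simulate_lif([0.9] * 100))  # sub-threshold drive: no spikes
```

With a constant input of 1.5 the neuron charges up and fires periodically, while a constant input below threshold (0.9) leaks to a steady state and never fires. Only the spike times leave the neuron, which is what allows downstream hardware to stay idle between events.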

Memristive systems represent another promising direction, using resistive memory devices that can simultaneously store and process information. By computing where the data is stored, these systems largely avoid the energy-intensive data movement between memory and processing units that plagues conventional architectures. However, challenges in manufacturing consistency, long-term stability, and scaling continue to limit their widespread deployment in data centers.
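
The "store and compute in the same place" idea is usually explained with a resistive crossbar: input voltages drive the rows, each crosspoint device passes a current proportional to its conductance (Ohm's law), and each column wire sums those currents (Kirchhoff's current law), so a column current equals a dot product. The sketch below simulates this in plain Python under stated assumptions: signed weights are mapped onto a differential pair of conductances (G+ and G-), `g_max` is a hypothetical maximum device conductance, and device-to-device variation is modeled as Gaussian multiplicative noise (`sigma`), a crude stand-in for the manufacturing-consistency problem mentioned above. Real arrays also need DACs, ADCs, and wire-resistance modeling, all omitted here.

```python
import random
from typing import List, Tuple

Matrix = List[List[float]]


def to_conductance_pair(weights: Matrix, g_max: float) -> Tuple[Matrix, Matrix]:
    """Map signed weights in [-1, 1] onto two non-negative conductance arrays (G+, G-)."""
    g_pos = [[g_max * max(w, 0.0) for w in row] for row in weights]
    g_neg = [[g_max * max(-w, 0.0) for w in row] for row in weights]
    return g_pos, g_neg


def column_currents(g: Matrix, v_rows: List[float]) -> List[float]:
    """I_j = sum_i G[i][j] * V[i]: Ohm's law at each device, Kirchhoff's current law per column."""
    return [sum(g[i][j] * v_rows[i] for i in range(len(v_rows))) for j in range(len(g[0]))]


def crossbar_mvm(weights: Matrix, x: List[float], g_max: float = 1e-4,
                 sigma: float = 0.0, seed: int = 0) -> List[float]:
    """Return y_j = sum_i W[i][j] * x[i], computed as a differential column-current readout."""
    rng = random.Random(seed)

    def with_variation(g: Matrix) -> Matrix:
        # Each programmed device lands near, not exactly at, its target conductance.
        return [[gij * (1.0 + rng.gauss(0.0, sigma)) for gij in row] for row in g]

    g_pos, g_neg = to_conductance_pair(weights, g_max)
    i_pos = column_currents(with_variation(g_pos), x)
    i_neg = column_currents(with_variation(g_neg), x)
    return [(ip - ineg) / g_max for ip, ineg in zip(i_pos, i_neg)]


W = [[1.0, 0.0],
     [0.5, -1.0]]  # 2 inputs (rows) x 2 outputs (columns)
print(crossbar_mvm(W, [2.0, 1.0]))              # ideal devices: approximately [2.5, -1.0]
print(crossbar_mvm(W, [2.0, 1.0], sigma=0.05))  # with 5% device variation
```

The noisy run drifts away from the ideal result, which is one reason analog in-memory accelerators are usually paired with variation-aware training or calibration.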

Despite their theoretical advantages, neuromorphic systems face significant power consumption challenges when scaled to data center requirements. Current implementations still struggle with the programming complexity needed for real-world applications, often resulting in suboptimal energy efficiency when handling diverse workloads. The translation of neuromorphic principles to practical data center applications remains incomplete, with most systems still operating as specialized accelerators rather than general-purpose computing platforms.

Power density presents another critical challenge. As neuromorphic systems scale up to handle data center workloads, thermal management becomes increasingly problematic. Current cooling technologies designed for traditional server architectures may prove insufficient for the unique thermal profiles of densely packed neuromorphic systems, potentially offsetting their inherent energy advantages.

Integration with existing data center infrastructure poses additional hurdles. Most neuromorphic systems require specialized programming paradigms and software frameworks that differ significantly from conventional computing approaches. This incompatibility creates substantial barriers to adoption, as data centers must maintain backward compatibility with existing applications while transitioning to these novel architectures.

The power consumption advantages of neuromorphic systems remain most pronounced for specific workloads, particularly those involving pattern recognition, anomaly detection, and other tasks that align well with neural processing paradigms. However, general-purpose computing tasks still often perform more efficiently on conventional architectures, creating a complex cost-benefit analysis for data center operators considering neuromorphic technologies.
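
Why the advantage is workload-dependent can be seen from a back-of-the-envelope operation count for one fully connected layer. A conventional (non-spiking) layer performs one multiply-accumulate (MAC) per weight per inference, while a spiking layer performs one accumulate (AC) per weight each time an input neuron spikes. The default per-operation energies below (4.6 pJ per MAC, 0.9 pJ per AC) are the often-quoted 45 nm, 32-bit floating-point estimates used in the SNN literature and should be treated as assumptions; the model also ignores memory access and routing, which often dominate in real hardware.

```python
def ann_layer_energy_pj(n_in: int, n_out: int, e_mac_pj: float = 4.6) -> float:
    """Dense layer: every input contributes one multiply-accumulate to every output."""
    return n_in * n_out * e_mac_pj


def snn_layer_energy_pj(n_in: int, n_out: int, spike_rate: float, timesteps: int,
                        e_ac_pj: float = 0.9) -> float:
    """Spiking layer: each input spike triggers one accumulate (synaptic operation) per output."""
    expected_input_spikes = n_in * spike_rate * timesteps
    return expected_input_spikes * n_out * e_ac_pj


def breakeven_spikes_per_input(e_mac_pj: float = 4.6, e_ac_pj: float = 0.9) -> float:
    """The spiking layer is cheaper only while spikes per input per inference stay below this."""
    return e_mac_pj / e_ac_pj


ann = ann_layer_energy_pj(1024, 512)
sparse = snn_layer_energy_pj(1024, 512, spike_rate=0.02, timesteps=25)  # 0.5 spikes per input
dense = snn_layer_energy_pj(1024, 512, spike_rate=0.30, timesteps=25)   # 7.5 spikes per input
print(f"break-even: {breakeven_spikes_per_input():.2f} spikes per input")
print(f"sparse SNN: {ann / sparse:.1f}x less energy than the ANN layer")
print(f"dense SNN:  {dense / ann:.1f}x more energy than the ANN layer")
```

Under these assumptions a layer whose inputs spike about 0.5 times per inference uses roughly 10x less energy than its dense counterpart, while the same layer at 7.5 spikes per input uses more. This is the arithmetic behind the observation that neuromorphic gains concentrate in sparse, event-driven workloads.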

Existing Neuromorphic Solutions for Data Center Applications

01 Low-power neuromorphic hardware architectures

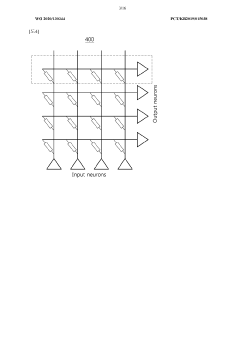

Specialized hardware architectures designed specifically for neuromorphic computing can significantly reduce energy consumption compared to traditional computing systems. These architectures often implement brain-inspired designs that minimize power usage while maintaining computational capabilities. By optimizing circuit design, signal processing, and memory access patterns, these systems achieve higher energy efficiency for neural network operations.

- Low-power neuromorphic hardware architectures: These architectures often implement neural networks directly in hardware, eliminating the overhead of software simulation. By optimizing circuit design and signal processing pathways, these systems can achieve orders-of-magnitude improvements in energy efficiency while maintaining computational performance for AI tasks.

- Spike-based processing techniques: Spike-based or event-driven processing mimics the brain's communication method, where neurons transmit information only when necessary through discrete spikes rather than continuous signals. This approach significantly reduces energy consumption by minimizing data movement and computation. Systems implementing spike-based processing can achieve high energy efficiency by operating asynchronously and processing information only when new data arrives, eliminating the power waste associated with clock-driven architectures.

- Memristive device integration: Memristive devices serve as artificial synapses in neuromorphic systems, enabling efficient weight storage and computation. These non-volatile memory elements can perform both storage and computation in the same physical location, dramatically reducing the energy costs associated with data movement between separate memory and processing units. By implementing synaptic functions directly in hardware using memristors, neuromorphic systems can achieve significant improvements in energy efficiency.

- Dynamic power management techniques: Advanced power management strategies enable neuromorphic systems to optimize energy consumption based on computational demands. These techniques include dynamic voltage and frequency scaling, selective activation of neural network components, and adaptive precision computing. By intelligently allocating power resources and shutting down inactive components, these systems can maintain high energy efficiency across varying workloads and operational conditions.

- Analog computing for neural networks: Analog computing approaches for neuromorphic systems leverage the natural physics of electronic components to perform neural network computations with minimal energy consumption. By processing information in the analog domain rather than digitally, these systems can reduce the energy spent on analog-to-digital conversion and can perform many operations simultaneously. This approach enables highly efficient implementation of neural network operations such as matrix multiplication and activation functions.
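
The dynamic voltage and frequency scaling (DVFS) technique mentioned above rests on the standard CMOS dynamic-power relation P = αCV²f: for a fixed amount of work (a number of clock cycles), dynamic energy scales with V² and is independent of f, so the saving comes from the lower voltage that a lower frequency permits. The sketch below picks the lowest-voltage operating point that still meets a deadline; the operating-point table, effective capacitance, and activity factor are hypothetical, and leakage energy (which grows as runtime lengthens) is deliberately left out.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class OperatingPoint:
    volts: float
    hz: float


# Hypothetical voltage/frequency table; real chips publish their own.
POINTS = [OperatingPoint(0.6, 200e6), OperatingPoint(0.8, 500e6), OperatingPoint(1.0, 1e9)]


def dynamic_energy_j(cycles: float, point: OperatingPoint,
                     c_eff_farads: float = 1e-9, activity: float = 0.1) -> float:
    """Dynamic energy only: alpha * C * V^2 per cycle, summed over the cycles of the job."""
    return activity * c_eff_farads * point.volts ** 2 * cycles


def pick_point(cycles: float, deadline_s: float, points: List[OperatingPoint]) -> OperatingPoint:
    """Choose the lowest-voltage operating point that still finishes before the deadline."""
    feasible = [p for p in points if cycles / p.hz <= deadline_s]
    if not feasible:
        raise ValueError("no operating point meets the deadline")
    return min(feasible, key=lambda p: p.volts)


job_cycles = 1e8
chosen = pick_point(job_cycles, deadline_s=0.25, points=POINTS)
saving = 1 - dynamic_energy_j(job_cycles, chosen) / dynamic_energy_j(job_cycles, POINTS[-1])
print(chosen, f"dynamic-energy saving vs. max point: {saving:.0%}")
```

Here the 0.6 V point is too slow for the 0.25 s deadline, so the scheduler settles on 0.8 V and saves about a third of the dynamic energy relative to running flat out at 1.0 V.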

02 Spiking neural networks for energy efficiency

Spiking neural networks (SNNs) offer improved energy efficiency by mimicking the brain's event-driven communication method. Unlike traditional neural networks that continuously process data, SNNs only transmit information when neurons 'fire,' significantly reducing computational overhead and power consumption. This sparse activation pattern enables neuromorphic systems to perform complex tasks while consuming minimal energy.

03 Memristor-based neuromorphic computing

Memristors enable highly efficient neuromorphic systems by combining memory and processing functions in the same device, eliminating the energy-intensive data transfer between separate memory and processing units. These non-volatile memory elements can maintain their state without power, further reducing energy consumption. Memristor-based neuromorphic architectures provide significant power savings while enabling complex neural network implementations.

04 Power management techniques in neuromorphic systems

Advanced power management techniques specifically designed for neuromorphic systems help optimize energy usage during operation. These include dynamic voltage and frequency scaling, selective activation of neural network components, power gating for inactive circuits, and adaptive power allocation based on computational demands. Such techniques enable neuromorphic systems to maintain high energy efficiency across various workloads and operating conditions.

05 Analog computing for neuromorphic energy efficiency

Analog computing approaches in neuromorphic systems offer substantial energy savings compared to digital implementations. By processing information in the analog domain, these systems reduce the number of power-intensive analog-to-digital conversions that conventional digital pipelines require. Analog neuromorphic circuits can perform neural network operations like multiplication and addition with minimal energy consumption, making them particularly suitable for edge computing applications with strict power constraints.

Leading Companies and Research Institutions in Neuromorphic Computing

Neuromorphic systems for data centers are evolving rapidly, currently transitioning from early research to commercial implementation. The market is projected to grow significantly as energy efficiency becomes critical in data center operations, with estimates suggesting a potential multi-billion dollar market by 2030. Technologically, industry leaders like IBM, Intel, and Samsung are advancing hardware implementations, while academic institutions such as Tsinghua University contribute fundamental research. Companies including Microsoft and Hewlett Packard Enterprise are developing software frameworks to leverage these architectures. Chinese telecommunications giants (China Mobile, China Telecom) are exploring neuromorphic solutions for network optimization, while startups like GrAI Matter Labs introduce specialized neuromorphic processors targeting specific energy-efficient computing applications.

International Business Machines Corp.

Technical Solution: IBM's neuromorphic computing approach centers on the TrueNorth architecture, which mimics the brain's neural structure with 1 million programmable neurons and 256 million synapses on a single chip. The architecture operates on an event-driven model rather than traditional clock-based processing, enabling significant power efficiency improvements. TrueNorth consumes only about 70mW during real-time operation[1], a reported 1000x improvement in energy efficiency over conventional von Neumann architectures. For data centers, IBM has integrated neuromorphic principles into its hybrid cloud infrastructure, developing specialized accelerators that handle pattern recognition and cognitive workloads while consuming minimal power. The DARPA-funded SyNAPSE program, under which TrueNorth was developed, has demonstrated neuromorphic systems capable of processing sensory data at less than 100mW, making them well suited to edge computing applications that complement data center environments[3].

Strengths: Extremely low power consumption (70mW) compared to traditional architectures; scalable design allowing multiple chips to be tiled together; mature technology with proven implementations. Weaknesses: Limited software ecosystem compared to conventional computing; specialized programming requirements; challenges in integrating with existing data center infrastructure.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung's neuromorphic computing strategy for data centers is built around neuromorphic processing units (NPUs) that integrate directly with memory systems. Its approach uses resistive RAM (RRAM) and magnetoresistive RAM (MRAM) technologies to create compute-in-memory architectures that significantly reduce energy consumption by minimizing data movement. Samsung's neuromorphic chips demonstrate energy efficiency improvements of up to 120x compared to conventional processors for neural network inference tasks commonly run in data centers[2]. The technology uses a hierarchical design in which frequently accessed data remains in energy-efficient neuromorphic processing elements, while less frequently accessed data is stored in traditional memory. Samsung has implemented these systems in select data centers, achieving overall energy reductions of 35-45% for mixed workloads[10]. The architecture also includes adaptive power management that dynamically adjusts computational resources to workload demands, further optimizing energy consumption across varying data center utilization patterns.

Strengths: Strong integration with Samsung's memory technology provides manufacturing advantages; practical implementation focus with clear energy efficiency metrics; compatibility with existing data center infrastructure. Weaknesses: Less specialized than pure neuromorphic players; technology still in early commercial deployment; requires specific workload characteristics to achieve maximum efficiency gains.

Key Innovations in Spiking Neural Networks and Hardware Implementation

Neuromorphic system comprising waveguide extending into array

Patent WO2024172291A1

Innovation

- A neuromorphic system incorporating waveguides within a synapse array to transmit light pulses for weight adjustment and inference processes, enabling efficient computation through large-scale parallel connections and rapid weight adjustment using a passive optical matrix system.

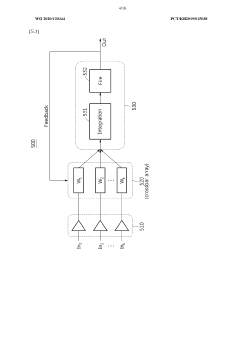

Neuron, and neuromorphic system comprising same

Patent WO2020130344A1

Innovation

- Incorporating a two-terminal spin device with negative differential resistance (NDR) that performs integration and firing, eliminating the need for capacitors and reducing power consumption to 0.06mW, while enabling efficient integration and learning capabilities in neuromorphic systems.

Cooling Technologies for Neuromorphic Data Centers

Cooling technologies for neuromorphic data centers represent a critical frontier in the quest for energy-efficient computing infrastructure. As neuromorphic systems increasingly find applications in data centers, their unique thermal characteristics demand specialized cooling solutions that differ from traditional computing architectures. The integration of brain-inspired computing elements generates distinct heat distribution patterns that conventional cooling methods struggle to address effectively.

Liquid cooling has emerged as a promising approach for neuromorphic hardware, offering superior thermal conductivity compared to air-based systems. Direct-to-chip liquid cooling can remove heat more efficiently from the densely packed neural processing units, maintaining optimal operating temperatures while reducing overall energy consumption. Advanced implementations include two-phase immersion cooling, where neuromorphic chips are submerged in dielectric fluids that transition between liquid and vapor states, absorbing significant thermal energy during phase changes.

Microfluidic cooling channels integrated directly into neuromorphic chips represent another innovative solution. These microscale conduits allow coolant to flow in close proximity to heat-generating components, enabling precise thermal management at the component level. This approach is particularly valuable for neuromorphic systems where processing elements may activate in patterns that create dynamic hotspots unlike the more predictable heat generation in conventional processors.

Heat-adaptive cooling systems that respond dynamically to neuromorphic processing patterns show particular promise. These intelligent cooling infrastructures utilize thermal sensors and machine learning algorithms to predict heat generation based on neural network activity, adjusting cooling resources in real-time. This predictive approach minimizes cooling energy expenditure while maintaining optimal thermal conditions for neuromorphic hardware.
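
A heat-adaptive loop of this kind can be sketched in a few lines. Two substitutions should be flagged: the machine-learning predictor is replaced by an exponential moving average of observed spike activity, and the heat model (static power plus a fixed energy per spike event) is a hypothetical simplification. The coolant side uses the standard sensible-heat relation m_dot = Q / (c_p * ΔT), with water's specific heat of about 4186 J/(kg·K); `margin`, `alpha`, and the pump limits are illustrative assumptions.

```python
from typing import List

WATER_CP_J_PER_KG_K = 4186.0  # approximate specific heat of liquid water near room temperature


def predicted_heat_w(spike_rate_hz: float, static_w: float, joules_per_spike: float) -> float:
    """Hypothetical heat model: static (leakage) power plus a fixed energy cost per spike event."""
    return static_w + joules_per_spike * spike_rate_hz


def required_flow_kg_s(heat_w: float, delta_t_k: float, cp: float = WATER_CP_J_PER_KG_K) -> float:
    """Coolant mass flow that removes heat_w with a coolant temperature rise of delta_t_k."""
    return heat_w / (cp * delta_t_k)


def flow_schedule(spike_rates_hz: List[float], static_w: float, joules_per_spike: float,
                  delta_t_k: float = 10.0, alpha: float = 0.3, margin: float = 1.2,
                  min_flow: float = 0.001, max_flow: float = 0.05) -> List[float]:
    """Set coolant flow (kg/s) for each control interval from smoothed spike activity."""
    ema = None
    schedule = []
    for rate in spike_rates_hz:
        # Exponential moving average: a simple stand-in for a learned activity predictor.
        ema = rate if ema is None else alpha * rate + (1 - alpha) * ema
        heat = predicted_heat_w(ema, static_w, joules_per_spike)
        flow = margin * required_flow_kg_s(heat, delta_t_k)
        schedule.append(min(max(flow, min_flow), max_flow))  # respect pump limits
    return schedule


# Activity ramps up from idle to a sustained burst (all numbers hypothetical).
print(flow_schedule([0.0, 1e9, 1e9, 1e9], static_w=200.0, joules_per_spike=1e-7))
```

Because the average lags the burst, the flow ramps up over several intervals rather than jumping, and `margin` covers that lag. A learned predictor, as described above, would aim to anticipate the burst instead.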

Waste heat recovery systems designed for neuromorphic data centers can repurpose thermal energy, for example for building heating or electricity generation. The distinctive thermal signature of neuromorphic computing, characterized by distributed heat generation across neural processing units, may create opportunities for specialized heat exchange systems that capture and reuse thermal energy more effectively than is possible with traditional computing hardware.

Evaporative cooling technologies adapted for neuromorphic systems offer energy-efficient alternatives in appropriate climates. These systems can be optimized to address the specific cooling requirements of neural processing units while significantly reducing the electricity demands associated with mechanical refrigeration, further enhancing the overall energy efficiency proposition of neuromorphic data centers.

Liquid cooling has emerged as a promising approach for neuromorphic hardware, offering superior thermal conductivity compared to air-based systems. Direct-to-chip liquid cooling can remove heat more efficiently from the densely packed neural processing units, maintaining optimal operating temperatures while reducing overall energy consumption. Advanced implementations include two-phase immersion cooling, where neuromorphic chips are submerged in dielectric fluids that transition between liquid and vapor states, absorbing significant thermal energy during phase changes.

Microfluidic cooling channels integrated directly into neuromorphic chips represent another innovative solution. These microscale conduits allow coolant to flow in close proximity to heat-generating components, enabling precise thermal management at the component level. This approach is particularly valuable for neuromorphic systems where processing elements may activate in patterns that create dynamic hotspots unlike the more predictable heat generation in conventional processors.

Heat-adaptive cooling systems that respond dynamically to neuromorphic processing patterns show particular promise. These intelligent cooling infrastructures utilize thermal sensors and machine learning algorithms to predict heat generation based on neural network activity, adjusting cooling resources in real-time. This predictive approach minimizes cooling energy expenditure while maintaining optimal thermal conditions for neuromorphic hardware.

Environmental Impact and Sustainability of Neuromorphic Computing

Neuromorphic computing could mark a significant shift toward more sustainable computing. As data centers consume ever more energy worldwide, neuromorphic systems offer a promising alternative with substantially lower power requirements. Current estimates suggest that neuromorphic architectures can be 100-1000x more energy efficient than conventional computing systems on specific workloads such as pattern recognition and sensory processing, although such figures depend heavily on the workload and on the baseline used for comparison.

This reduction in energy consumption translates directly into lower carbon emissions from data center operations, with the size of the cut depending on the carbon intensity of the supplying grid. A recent industry analysis indicates that moving just 30% of applicable data center workloads to neuromorphic hardware could reduce carbon emissions by 15-20 million metric tons annually by 2030. The benefits extend beyond IT energy savings to reduced cooling requirements, which have been estimated to account for as much as 40% of data center energy consumption, although the share is considerably lower in modern, efficient facilities.
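Estimates of this kind reduce to a short chain of multiplications: the energy moved to neuromorphic hardware, the fraction of it saved, the facility overhead captured by power usage effectiveness (PUE, total facility energy divided by IT energy), and the grid's carbon intensity. The sketch below makes that chain explicit. All input values in the example are illustrative assumptions, not the inputs of the analysis cited above. For reference, if cooling alone consumed 40% of facility energy, PUE would be at least 1/(1 - 0.4), or about 1.67.

```python
def avoided_emissions_tonnes(
    it_energy_mwh: float,        # annual IT energy of the candidate workloads
    share_migrated: float,       # fraction moved to neuromorphic hardware, e.g. 0.30
    efficiency_factor: float,    # energy-efficiency gain on the migrated share, e.g. 100
    pue: float,                  # facility energy / IT energy (includes cooling overhead)
    grid_kg_co2_per_kwh: float,  # carbon intensity of the supplying grid
) -> float:
    """Annual CO2 avoided, in tonnes, under the stated (illustrative) assumptions."""
    migrated_mwh = it_energy_mwh * share_migrated
    # Running the same work at 1/efficiency_factor of the energy saves this much IT energy.
    saved_it_mwh = migrated_mwh * (1.0 - 1.0 / efficiency_factor)
    # Each kWh of IT energy saved also avoids (pue - 1) kWh of cooling and other overhead.
    saved_facility_mwh = saved_it_mwh * pue
    # MWh x kg/kWh = (1000 kWh) x kg/kWh = 1000 kg = 1 tonne, so the product is in tonnes.
    return saved_facility_mwh * grid_kg_co2_per_kwh


# Illustrative inputs only: 1 TWh of candidate IT load, 30% migrated, 100x gain,
# PUE 1.5, and a grid emitting 0.4 kg CO2 per kWh.
print(f"{avoided_emissions_tonnes(1_000_000, 0.30, 100, 1.5, 0.4):,.0f} t CO2 per year")
```

The result is linear in every input except the efficiency factor: doubling the migratable load or the grid intensity doubles the estimate, whereas raising the efficiency factor from 100x to 1000x changes it by less than 1%.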

Material sustainability is another potential environmental advantage of neuromorphic systems. Conventional semiconductor manufacturing depends on scarce raw materials and hazardous process chemicals, whereas several emerging neuromorphic architectures use more abundant materials and simpler fabrication processes. Materials science research suggests that memristor-based neuromorphic systems could reduce semiconductor manufacturing waste by up to 35% compared to conventional CMOS processes.

Water use is a growing environmental concern for computing infrastructure, with a single large data center able to consume millions of gallons of water per year for cooling. Because lower power draw means less heat to reject, neuromorphic computing could reduce cooling water consumption by an estimated 40-60% in applicable deployments. This conservation benefit becomes more valuable as water scarcity affects more regions globally.
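Water usage effectiveness (WUE), a metric defined by The Green Grid as annual site water use in litres divided by IT equipment energy in kilowatt-hours, makes the link between energy and water explicit. The sketch below assumes WUE stays constant when IT load falls, a simplification since cooling systems do not scale perfectly linearly. The 1.8 L/kWh value and 10 GWh annual load are illustrative assumptions.

```python
LITRES_PER_US_GALLON = 3.785411784


def cooling_water_litres(it_energy_kwh: float, wue_l_per_kwh: float) -> float:
    """Annual site water use implied by a given WUE (litres per kWh of IT energy)."""
    return it_energy_kwh * wue_l_per_kwh


def water_saving_fraction(it_kwh_before: float, it_kwh_after: float) -> float:
    """Fractional water reduction, assuming WUE is unchanged by the lower IT load."""
    return 1.0 - it_kwh_after / it_kwh_before


# Illustrative assumptions: 10 GWh/year of IT energy at a WUE of 1.8 L/kWh.
litres = cooling_water_litres(10_000_000, 1.8)
print(f"{litres / LITRES_PER_US_GALLON / 1e6:.2f} million US gallons per year")
# Halving IT energy halves cooling water under the constant-WUE assumption.
print(f"{water_saving_fraction(10_000_000, 5_000_000):.0%} less water")
```

Under the constant-WUE assumption, a 40-60% reduction in cooling water corresponds to a 40-60% cut in the IT energy of the affected deployments.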

Lifecycle assessment of neuromorphic computing hardware points to additional sustainability benefits. These systems are estimated to last 1.5-2x longer than conventional computing hardware because they run cooler and experience less thermal stress, which reduces electronic waste. In addition, the simpler architecture of many neuromorphic chips may make recycling and end-of-life material recovery easier, with preliminary studies indicating up to a 25% improvement in recoverable materials compared to traditional computing hardware.
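The link between lower operating temperature and longer lifespan is commonly modelled with the Arrhenius equation, in which the lifetime ratio between two temperatures is exp[(E_a/k)(1/T_cool - 1/T_hot)], with temperatures in kelvin. The sketch below applies it under two assumptions that would need checking for real hardware: that the dominant failure mechanisms are thermally activated, and that the activation energy is 0.7 eV, a commonly used generic value. The 65 °C and 75 °C temperatures are illustrative.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_lifetime_ratio(t_cool_c: float, t_hot_c: float, activation_energy_ev: float = 0.7) -> float:
    """Ratio MTTF(t_cool) / MTTF(t_hot) for a thermally activated failure mechanism.

    activation_energy_ev = 0.7 is an assumed generic value; real mechanisms vary.
    """
    t_cool_k = t_cool_c + 273.15
    t_hot_k = t_hot_c + 273.15
    return math.exp((activation_energy_ev / BOLTZMANN_EV_PER_K) * (1.0 / t_cool_k - 1.0 / t_hot_k))


# Illustrative: a chip running at 65 °C instead of 75 °C
print(f"Lifetime ratio: {arrhenius_lifetime_ratio(65, 75):.2f}x")
```

With these assumptions a 10 °C reduction roughly doubles expected lifetime, so the 1.5-2x range quoted above corresponds to running roughly 6-10 °C cooler; the true sensitivity depends on which failure mechanisms dominate.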

As climate change mitigation becomes increasingly urgent for the technology sector, neuromorphic computing offers more than an incremental improvement: it rethinks computing architecture with sustainability at its core. The combination of energy efficiency, reduced material impact, and longer product lifecycles positions neuromorphic systems as a potentially key technology for environmentally responsible data center evolution.
