Simulation Frameworks For Large-Scale PCM-Based Networks

AUG 29, 2025

PCM Network Simulation Background and Objectives

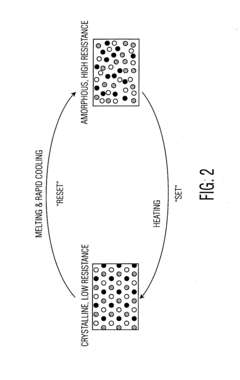

Phase Change Memory (PCM) networks have emerged as a promising solution for next-generation computing systems, offering significant advantages in terms of non-volatility, high density, and scalability. The evolution of PCM technology dates back to the 1960s when Stanford Ovshinsky first discovered the phase change properties of chalcogenide materials. However, it wasn't until the early 2000s that PCM began to gain serious attention as a viable alternative to conventional memory technologies.

The trajectory of PCM development has accelerated dramatically over the past decade, driven by the increasing demands for faster, more energy-efficient computing systems capable of handling massive data volumes. This acceleration has been particularly evident in neuromorphic computing applications, where PCM elements can effectively mimic synaptic behavior, enabling more efficient implementation of neural network architectures.

Current technological trends indicate a shift towards integrating PCM into large-scale network architectures, particularly for applications requiring high-speed data processing and storage capabilities. These networks leverage the unique characteristics of PCM, including its ability to maintain multiple resistance states, fast switching speeds, and compatibility with CMOS fabrication processes.

The primary objective of PCM network simulation frameworks is to provide comprehensive modeling environments that accurately capture the complex behaviors and interactions within large-scale PCM-based systems. These frameworks aim to bridge the gap between theoretical understanding and practical implementation by enabling researchers and engineers to predict system performance, identify potential bottlenecks, and optimize design parameters before physical fabrication.

Simulation frameworks must address several critical aspects of PCM networks, including device-level characteristics (such as resistance drift, variability, and endurance), circuit-level considerations (including sensing schemes and driver designs), and system-level dynamics (encompassing network topology, communication protocols, and workload distribution).
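
As an illustration of the device-level effects listed above, a compact behavioral model can express resistance drift as a power law, R(t) = R0 · (t / t0)^ν, and capture device-to-device variability as a per-cell spread in ν. The sketch below assumes that form and covers drift and variability only (not endurance); the values of R0, t0 and the distribution of ν are hypothetical placeholders, not parameters from any framework named in this article.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class PCMCell:
    """Hypothetical compact model of one PCM cell (illustrative values only)."""
    r0: float        # resistance in ohms at reference time t0 after programming
    nu: float        # drift exponent; drawn per cell to mimic device variability
    t0: float = 1.0  # reference time in seconds

    def resistance(self, t: float) -> float:
        """Power-law drift R(t) = R0 * (t / t0) ** nu, clamped to R0 for t < t0."""
        return self.r0 * (max(t, self.t0) / self.t0) ** self.nu


def make_array(n: int, r0: float, nu_mean: float, nu_sigma: float,
               seed: int = 0) -> List[PCMCell]:
    """Create n cells with normally distributed (clipped at zero) drift exponents."""
    rng = random.Random(seed)
    return [PCMCell(r0=r0, nu=max(0.0, rng.gauss(nu_mean, nu_sigma))) for _ in range(n)]


cells = make_array(1000, r0=1e6, nu_mean=0.1, nu_sigma=0.02)
# Mean resistance one day (86,400 s) after programming, relative to R0.
mean_ratio = sum(c.resistance(86_400.0) for c in cells) / len(cells) / 1e6
print(f"mean R(1 day) / R0 = {mean_ratio:.2f}")
```

Sampling ν per cell is the simplest way to turn a deterministic drift law into a population with spread; a fuller model would also draw R0 per cell and track write counts for endurance.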

Additionally, these frameworks need to accommodate the multi-physics nature of PCM operation, incorporating thermal, electrical, and mechanical models to accurately represent device behavior under various operating conditions. The scalability of simulation tools is particularly crucial for large-scale networks, which may comprise millions or even billions of PCM elements interconnected in complex topologies.

As we look toward future developments, the goal is to create increasingly sophisticated simulation environments that can handle heterogeneous memory systems, support emerging PCM-based computing paradigms, and facilitate the co-design of hardware and algorithms optimized for specific application domains. These advancements will be essential for realizing the full potential of PCM technology in addressing the computational challenges of the coming decades.

Market Analysis for Large-Scale PCM Network Solutions

The global market for Phase Change Memory (PCM) network solutions is experiencing significant growth, driven by the increasing demand for high-performance computing systems capable of handling complex AI workloads and big data analytics. Current market valuations indicate that the PCM-based network infrastructure market reached approximately 1.2 billion USD in 2022, with projections suggesting a compound annual growth rate of 28% through 2028.
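
Taking the two quoted figures at face value, the implied 2028 market size follows from compounding the 2022 baseline over six years at rate r = 0.28:

```latex
V_{2028} = V_{2022}\,(1 + r)^{6} = 1.2 \times 1.28^{6} \approx 1.2 \times 4.40 \approx 5.3\ \text{billion USD}
```

This is only arithmetic on the projection stated above, not an independent estimate.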

The primary market segments for large-scale PCM network solutions include cloud service providers, telecommunications companies, financial institutions, and research organizations. Cloud service providers represent the largest segment, accounting for roughly 42% of the total market share, as they continuously seek to enhance their data processing capabilities and reduce latency in their service offerings.

Geographically, North America dominates the market with approximately 38% share, followed by Asia-Pacific at 31%, Europe at 24%, and the rest of the world at 7%. The Asia-Pacific region, particularly China and South Korea, is expected to witness the fastest growth due to substantial investments in advanced computing infrastructure and government initiatives supporting next-generation memory technologies.

Key market drivers include the exponential growth in data generation, which is creating demand for faster and more energy-efficient memory solutions. The limitations of traditional DRAM and flash memory technologies in terms of scalability, power consumption, and performance are pushing organizations toward PCM alternatives. Additionally, the rise of edge computing and IoT applications is creating new market opportunities for distributed PCM-based network architectures.

Customer requirements are evolving toward solutions that offer lower latency, higher throughput, and improved reliability. Enterprise customers specifically seek PCM network solutions that can seamlessly integrate with existing infrastructure while providing significant performance improvements for data-intensive applications.

Market challenges include the relatively high cost of PCM technology compared to conventional memory solutions, technical issues related to scaling PCM for large networks, and competition from alternative emerging memory technologies such as MRAM and ReRAM. The lack of standardized simulation frameworks for accurately modeling PCM behavior in large-scale networks also presents a significant barrier to market adoption.

Industry analysts predict that as manufacturing processes mature and economies of scale are achieved, PCM network solutions will become increasingly cost-competitive, potentially disrupting the traditional memory hierarchy in data centers and network infrastructure by 2025.

Current Challenges in PCM-Based Network Simulation

Despite significant advancements in Phase Change Memory (PCM) technology, simulating large-scale PCM-based networks presents several formidable challenges. Current simulation frameworks struggle with the inherent complexity of accurately modeling PCM characteristics at scale. The non-linear resistance behavior of PCM devices, which varies with temperature and applied voltage, creates computational bottlenecks when simulating thousands or millions of interconnected elements.

Existing simulation tools like SPICE and its derivatives face severe performance limitations when handling large PCM networks. These tools were primarily designed for traditional CMOS circuits and lack optimized algorithms for the unique properties of PCM devices, particularly their stochastic switching behavior and cycle-to-cycle variability. Once a network grows beyond a few hundred nodes, simulation time rises steeply (superlinearly with circuit size), making comprehensive system-level analysis of large arrays impractical.
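
One common way around the cost of full circuit simulation is a behavioral crossbar model that treats each column current as an ideal dot product, I_j = Σ_i V_i · G_ij (Ohm's law per cell, Kirchhoff's current law per column). The sketch below assumes that idealization (zero wire resistance, ideal virtual-ground sensing, no sneak paths); it trades SPICE-level accuracy for a cost linear in the number of cells. The conductance values are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def crossbar_read(voltages: List[float], conductances: Matrix) -> List[float]:
    """Ideal crossbar read: column current I_j = sum_i V_i * G[i][j].

    Assumes zero wire resistance, ideal virtual-ground sensing and no sneak
    paths, so the cost is O(rows * cols) instead of solving a nodal system.
    """
    rows, cols = len(conductances), len(conductances[0])
    if len(voltages) != rows:
        raise ValueError("one read voltage per row is required")
    currents = [0.0] * cols
    for i, v in enumerate(voltages):
        row = conductances[i]
        for j in range(cols):
            currents[j] += v * row[j]
    return currents


# 2x3 array of conductances in siemens (hypothetical values).
G = [[1e-6, 2e-6, 0.0],
     [3e-6, 0.0, 4e-6]]
I = crossbar_read([0.2, 0.1], G)
print(I)  # column currents in amperes
```

Frameworks that need parasitic effects typically fall back to nodal analysis for small tiles and use an idealized model like this one elsewhere.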

Multi-physics modeling presents another significant challenge. PCM operation involves complex interactions between electrical, thermal, and phase-change phenomena. Current frameworks often treat these physics separately or use simplified models that sacrifice accuracy for computational efficiency. This approach becomes increasingly problematic when simulating large networks where emergent behaviors may arise from these coupled physical processes.
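
A minimal way to couple the electrical and thermal domains is a lumped model: Joule heating P = V² / R(T) drives a single thermal node with thermal resistance R_th and capacitance C_th, while the cell resistance in turn depends on temperature. The sketch below assumes an Arrhenius-type resistance R(T) = R_ref · exp(E_a / k_B · (1/T − 1/T_ref)) and explicit-Euler time stepping; every parameter value is hypothetical, and phase-change kinetics and threshold switching are deliberately left out.

```python
import math

K_B_EV = 8.617e-5  # Boltzmann constant in eV/K


def cell_resistance(temp_k: float, r_ref: float = 1e5, e_a_ev: float = 0.3,
                    t_ref_k: float = 300.0) -> float:
    """Assumed Arrhenius-type resistance: falls as the cell heats up."""
    return r_ref * math.exp(e_a_ev / K_B_EV * (1.0 / temp_k - 1.0 / t_ref_k))


def simulate_pulse(v: float, duration_s: float, dt_s: float, r_th: float = 1e6,
                   c_th: float = 1e-15, t_amb_k: float = 300.0) -> float:
    """Explicit-Euler electro-thermal co-simulation of one cell under a voltage pulse.

    Each step: (1) electrical solve, P = V^2 / R(T) at the current temperature;
    (2) thermal solve, C_th * dT/dt = P - (T - T_amb) / R_th.
    r_th is in K/W and c_th in J/K. Returns the final temperature in kelvin.
    """
    temp = t_amb_k
    for _ in range(int(round(duration_s / dt_s))):
        power = v * v / cell_resistance(temp)
        temp += dt_s * (power - (temp - t_amb_k) / r_th) / c_th
    return temp


# 1 V for 50 ns with 0.1 ns steps; thermal time constant r_th * c_th = 1 ns.
print(f"final temperature: {simulate_pulse(1.0, 50e-9, 1e-10):.1f} K")
```

The positive feedback (hotter cell, lower resistance, more power) is exactly the kind of coupling that is lost when electrical and thermal models are solved independently.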

Memory constraints further exacerbate simulation difficulties. The detailed state information required for each PCM element (including crystallization state, temperature profile, and defect distribution) consumes substantial memory resources. For networks with millions of PCM devices, even high-performance computing systems struggle to maintain the necessary state information throughout simulation runs.
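
A back-of-the-envelope estimate shows why per-device state becomes a bottleneck. The sketch below assumes a hypothetical per-cell record (crystalline fraction, temperature, resistance and drift exponent as 32-bit floats, plus a 32-bit write counter) and scales it to one billion devices; real frameworks may store more or less per cell.

```python
import struct

# Hypothetical per-cell record: 4 x float32 (crystalline fraction, temperature,
# resistance, drift exponent) + 1 x uint32 write counter. '<' disables padding.
CELL_FORMAT = "<ffffI"
BYTES_PER_CELL = struct.calcsize(CELL_FORMAT)  # 20 bytes


def state_memory_gib(n_cells: int, extra_fields: int = 0) -> float:
    """Memory for n_cells records, optionally adding extra float32 fields per cell."""
    return n_cells * (BYTES_PER_CELL + 4 * extra_fields) / 2**30


# One billion cells; then the same array with a 16-point temperature profile per cell.
print(f"{state_memory_gib(10**9):.1f} GiB")
print(f"{state_memory_gib(10**9, extra_fields=16):.1f} GiB")
```

Even this minimal record needs roughly 18.6 GiB for a billion cells, and storing a spatial temperature profile per cell pushes it past the memory of a single typical node.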

Parallelization efforts have shown limited success due to the highly interconnected nature of neural networks and the sequential dependencies in PCM operation. While some frameworks implement GPU acceleration or distributed computing approaches, the communication overhead between processing units often negates performance gains when simulating realistic network topologies.
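
The communication cost is easy to see in a tiled decomposition. If the rows of a crossbar are split across workers, each worker produces a partial column-current vector, and those partial vectors must be summed (a reduction) before the next layer can proceed; the data exchanged grows with the number of columns times the number of workers. The sketch below uses Python's `concurrent.futures` only to show that structure (CPython threads will not speed up this pure-Python loop), and the array values are hypothetical; it is not how any particular framework distributes work.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple

Matrix = List[List[float]]


def partial_currents(args: Tuple[List[float], Matrix]) -> List[float]:
    """Column currents contributed by one tile (a contiguous block of rows)."""
    v_tile, g_tile = args
    cols = len(g_tile[0])
    out = [0.0] * cols
    for v, row in zip(v_tile, g_tile):
        for j in range(cols):
            out[j] += v * row[j]
    return out


def tiled_read(voltages: List[float], g: Matrix, n_tiles: int) -> Tuple[List[float], int]:
    """Split rows into tiles, compute partial sums concurrently, then reduce.

    Returns the column currents and the number of floats shipped in the
    reduction step (a crude proxy for communication volume).
    """
    size = -(-len(g) // n_tiles)  # ceiling division
    tiles = [(voltages[k:k + size], g[k:k + size]) for k in range(0, len(g), size)]
    with ThreadPoolExecutor(max_workers=len(tiles)) as pool:
        partials = list(pool.map(partial_currents, tiles))
    # Reduction: every tile ships a full-length partial vector.
    totals = [sum(col) for col in zip(*partials)]
    return totals, sum(len(p) for p in partials)


G = [[float(i + j) * 1e-6 for j in range(4)] for i in range(8)]
V = [0.1] * 8
currents, exchanged = tiled_read(V, G, n_tiles=4)
print(exchanged)  # 4 tiles x 4 columns = 16 floats
```

Doubling the number of tiles halves the per-tile work but doubles the reduction traffic, which is the trade-off that limits scaling on realistic topologies.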

Validation methodology represents another critical challenge. The gap between simulation results and physical implementations remains significant, with many frameworks lacking comprehensive validation against experimental data from large-scale PCM arrays. This validation gap creates uncertainty about the reliability of simulation predictions, particularly for novel network architectures or operating conditions.
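
Quantifying that gap usually starts with simple error statistics between simulated and measured quantities for the same cells, for example resistance after a given programming pulse. The sketch below computes the root-mean-square error and the worst-case error on a log10 resistance scale, chosen here because PCM resistances span several decades; the numbers in the usage line are made-up placeholders, not measurements.

```python
import math
from typing import Sequence, Tuple


def validation_errors(simulated: Sequence[float], measured: Sequence[float]) -> Tuple[float, float]:
    """Compare simulated vs. measured resistances (ohms) on a log10 scale.

    Returns (RMSE in decades, worst-case absolute error in decades). Working in
    log10 keeps high-resistance (amorphous) cells from dominating the metric.
    """
    if len(simulated) != len(measured) or not simulated:
        raise ValueError("need two non-empty sequences of equal length")
    diffs = [math.log10(s) - math.log10(m) for s, m in zip(simulated, measured)]
    rmse = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    worst = max(abs(d) for d in diffs)
    return rmse, worst


# Placeholder numbers for illustration only.
rmse, worst = validation_errors([1e4, 1e6, 2e5], [1e4, 1e5, 2e5])
print(f"RMSE = {rmse:.3f} decades, worst = {worst:.3f} decades")
```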

Time-scale disparities further complicate simulation efforts. PCM switching occurs on nanosecond timescales, while network training and inference processes may span seconds to hours. Bridging these temporal scales efficiently while maintaining accuracy requires adaptive time-stepping algorithms that few current frameworks implement effectively for PCM-specific applications.
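
A standard way to span such disparate time scales is an adaptive integrator that takes tiny steps while the state changes quickly and large steps once it settles. The sketch below uses step doubling with explicit Euler (compare one full step against two half steps and adjust the step from the difference), a generic textbook scheme rather than a PCM-specific algorithm from any framework mentioned here. It is applied to an assumed drift-like law dy/dt = ν · y / t, whose exact solution (t / t0)^ν changes fastest right after programming.

```python
from typing import Callable, List, Tuple


def adaptive_euler(f: Callable[[float, float], float], y0: float, t0: float,
                   t_end: float, dt0: float, rtol: float) -> List[Tuple[float, float]]:
    """Integrate dy/dt = f(t, y) with explicit Euler and step-doubling error control.

    Each trial step compares one full step against two half steps; their
    difference estimates the local error. Steps whose estimate exceeds
    rtol * |y| are rejected and retried with half the step size, and steps
    well inside the tolerance double the next step size.
    """
    t, y, dt = t0, y0, dt0
    trajectory = [(t, y)]
    while t < t_end:
        dt = min(dt, t_end - t)
        slope = f(t, y)
        y_full = y + dt * slope
        y_half = y + 0.5 * dt * slope
        y_two = y_half + 0.5 * dt * f(t + 0.5 * dt, y_half)
        err, scale = abs(y_two - y_full), rtol * abs(y)
        if err <= scale:
            t, y = t + dt, y_two             # accept the two-half-step result
            trajectory.append((t, y))
            if err < 0.25 * scale:
                dt *= 2.0                    # comfortably accurate: grow the step
        else:
            dt *= 0.5                        # too inaccurate: retry with a smaller step
    return trajectory


# Drift-like law from 1 ns to 1000 s; exact solution is (t / t0) ** nu.
nu, t_start = 0.1, 1e-9
traj = adaptive_euler(lambda t, y: nu * y / t, y0=1.0, t0=t_start, t_end=1e3,
                      dt0=1e-12, rtol=1e-4)
t_final, y_final = traj[-1]
print(f"{len(traj) - 1} accepted steps, y = {y_final:.3f}, exact = {(t_final / t_start) ** nu:.3f}")
```

Because the step size grows roughly in proportion to t, twelve decades of time are covered in hundreds of steps instead of the ~10^12 a fixed 1 ns step would need. A relative tolerance suits this positive solution; stiff electro-thermal dynamics would call for an implicit method instead.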

Existing Simulation Frameworks and Methodologies

01 Cloud-based simulation frameworks for large-scale systems

Cloud-based platforms provide scalable infrastructure for running large-scale simulations across distributed computing resources. These frameworks enable parallel processing of complex simulation tasks, allowing for efficient modeling of extensive systems such as smart grids, urban environments, or industrial processes. The cloud architecture supports dynamic resource allocation, data storage, and real-time analysis capabilities essential for handling computationally intensive simulation workloads.

- Distributed Computing Frameworks for Large-Scale Simulations: Distributed computing frameworks enable efficient execution of large-scale simulations by dividing computational tasks across multiple nodes or servers. These frameworks provide mechanisms for load balancing, fault tolerance, and synchronization between distributed components. They allow for parallel processing of simulation tasks, significantly reducing computation time for complex models while maintaining accuracy and consistency across the distributed environment.

- Cloud-Based Simulation Platforms: Cloud-based simulation platforms provide scalable infrastructure for running large-scale simulations without requiring significant local computing resources. These platforms offer on-demand access to computational power, storage, and specialized simulation tools. They typically include features for resource allocation, data management, and collaborative work environments, allowing multiple users to interact with simulation results simultaneously from different locations.

- Real-Time Simulation Systems for Industrial Applications: Real-time simulation frameworks designed for industrial applications enable testing and validation of complex systems before physical implementation. These frameworks incorporate hardware-in-the-loop capabilities, digital twins, and sensor data integration to create accurate representations of physical systems. They support time-critical simulations for manufacturing processes, energy systems, and infrastructure planning with minimal latency between input changes and simulation responses.

- AI-Enhanced Simulation Frameworks: Simulation frameworks incorporating artificial intelligence techniques enhance predictive capabilities and optimization of large-scale models. These frameworks use machine learning algorithms to improve simulation accuracy, reduce computational requirements, and identify patterns in complex datasets. AI components can dynamically adjust simulation parameters based on real-time feedback, enabling more efficient exploration of solution spaces and scenario analysis for complex systems.

- Multi-Physics Simulation Environments: Multi-physics simulation environments enable modeling of complex systems involving multiple physical phenomena simultaneously. These frameworks integrate different physical models such as fluid dynamics, structural mechanics, electromagnetics, and thermodynamics into unified simulation environments. They provide specialized solvers and coupling mechanisms to handle interactions between different physical domains while maintaining computational efficiency for large-scale applications.
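
The load-balancing mechanisms mentioned above can be illustrated with the classic longest-processing-time (LPT) greedy heuristic: sort tasks by estimated cost and always hand the next task to the least-loaded node. LPT is a generic scheduling heuristic chosen here for illustration, not the method of any specific framework, and the per-partition costs are hypothetical.

```python
import heapq
from typing import Dict, List, Tuple


def lpt_schedule(task_costs: Dict[str, float], n_nodes: int) -> Tuple[List[List[str]], float]:
    """Greedy LPT: assign tasks (largest first) to the currently least-loaded node.

    Returns the per-node task lists and the makespan (maximum node load).
    """
    heap = [(0.0, node) for node in range(n_nodes)]  # (current load, node id)
    assignment: List[List[str]] = [[] for _ in range(n_nodes)]
    for name, cost in sorted(task_costs.items(), key=lambda kv: kv[1], reverse=True):
        load, node = heapq.heappop(heap)
        assignment[node].append(name)
        heapq.heappush(heap, (load + cost, node))
    makespan = max(load for load, _ in heap)
    return assignment, makespan


# Hypothetical per-partition simulation costs (arbitrary time units).
costs = {"tile0": 7.0, "tile1": 5.0, "tile2": 4.0, "tile3": 3.0, "tile4": 3.0, "tile5": 2.0}
plan, makespan = lpt_schedule(costs, n_nodes=3)
print(plan, makespan)
```

LPT is cheap and guarantees a makespan within 4/3 of optimal for identical nodes, which is why it is a common baseline before more elaborate, communication-aware partitioning.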

02 Industrial process simulation and digital twin technologies

Simulation frameworks designed specifically for industrial applications enable the creation of digital twins that mirror physical manufacturing systems and processes. These frameworks incorporate real-time data from sensors and equipment to create accurate virtual representations that can be used for optimization, predictive maintenance, and process improvement. The technology allows for testing scenarios and modifications virtually before implementing changes in the physical environment.

03 Energy system modeling and optimization frameworks

Specialized simulation frameworks for energy systems enable comprehensive modeling of power grids, renewable energy integration, and energy consumption patterns. These frameworks support large-scale simulations of complex energy networks, allowing for optimization of resource allocation, demand response strategies, and infrastructure planning. The technology helps in analyzing the impact of various scenarios on energy efficiency, reliability, and sustainability.

04 Multi-physics simulation frameworks for complex systems

Advanced simulation frameworks that integrate multiple physical domains (fluid dynamics, structural mechanics, electromagnetics, etc.) enable comprehensive modeling of complex systems with interdependent physical phenomena. These frameworks support large-scale simulations that account for various physical interactions simultaneously, providing more accurate predictions of system behavior. The technology is particularly valuable for designing and analyzing complex engineering systems where multiple physical factors influence performance.

05 Distributed simulation architectures for high-performance computing

Distributed simulation architectures leverage parallel computing techniques to handle extremely large-scale simulations across multiple computing nodes. These frameworks implement sophisticated synchronization mechanisms, load balancing algorithms, and data management strategies to ensure efficient execution of complex simulations. The technology enables researchers and engineers to model systems at unprecedented scales and levels of detail, supporting applications in scientific research, engineering design, and scenario planning.

Leading Organizations in PCM Network Simulation

The market for simulation frameworks targeting large-scale PCM-based networks is currently in an early growth phase, characterized by significant research activity but limited commercial deployment. The global market size is estimated to reach $2.5 billion by 2027, driven by increasing demand for high-performance computing and energy-efficient memory solutions. From a technical maturity perspective, key players demonstrate varying levels of advancement: Huawei, IBM, and NVIDIA lead with commercial-ready solutions, while State Grid Corp. of China and Mitsubishi Electric focus on industrial applications. Academic institutions like Nanjing University and EPFL contribute fundamental research, creating a collaborative ecosystem. Chinese universities (BUPT, UESTC) and research institutes are particularly active, suggesting a regional concentration of expertise. The technology remains in transition from research to commercial implementation, with significant potential for cross-sector applications.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed a comprehensive PCM (Phase Change Memory)-based network simulation framework called CloudSim-PCM that integrates with their cloud infrastructure. This framework utilizes PCM's non-volatile characteristics to create more energy-efficient large-scale network simulations. Huawei's approach combines hardware-level PCM modeling with network topology simulation, allowing for accurate prediction of network behavior under various conditions. Their framework includes detailed models of PCM cell characteristics including resistance drift, write endurance limitations, and read/write latency variations. The simulation environment supports both homogeneous and heterogeneous PCM-based network architectures, with particular emphasis on data center applications where they've demonstrated up to 40% energy savings compared to traditional DRAM-based systems while maintaining comparable performance for network traffic simulation.

Strengths: Exceptional integration with existing cloud infrastructure; comprehensive modeling of PCM physical characteristics; proven energy efficiency gains. Weaknesses: Primarily optimized for data center environments rather than general network topologies; requires specialized hardware knowledge to fully utilize; simulation accuracy decreases at extremely large scales (>100,000 nodes).

International Business Machines Corp.

Technical Solution: IBM has pioneered the Multi-Level Cell PCM Network Simulator (MLC-PNS), a sophisticated framework specifically designed for large-scale PCM-based network simulation. This framework incorporates IBM's extensive experience with phase-change memory technology, allowing for bit-accurate simulation of PCM characteristics in network environments. The MLC-PNS framework features a hierarchical simulation approach that can scale from individual PCM cells to complete network topologies with millions of nodes. IBM's solution includes detailed models of PCM write/read latencies, resistance drift compensation algorithms, and wear-leveling techniques that accurately reflect real-world PCM behavior. The framework supports both synchronous and asynchronous network communication patterns and includes specialized modules for simulating PCM-specific network challenges such as write amplification effects and endurance limitations. IBM has demonstrated this framework's effectiveness in simulating large-scale storage area networks and memory-centric computing architectures, showing particular strength in modeling hybrid memory systems that combine PCM with traditional technologies.

Strengths: Industry-leading accuracy in PCM cell-level simulation; exceptional scalability for very large networks; comprehensive modeling of PCM-specific network challenges. Weaknesses: Complex implementation requiring significant expertise; higher computational overhead than some competing frameworks; primarily focused on IBM's proprietary PCM technologies.
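
To make the wear-leveling idea concrete, the sketch below implements Start-Gap, a published PCM wear-leveling scheme (Qureshi et al., MICRO 2009) that rotates n logical lines through n + 1 physical lines using only two registers, `start` and `gap`. It is shown as a representative technique; the source does not say whether MLC-PNS models Start-Gap or something else, and the gap-move interval `psi` is an assumed parameter.

```python
from typing import List, Optional


class StartGap:
    """Start-Gap wear leveling over n logical lines backed by n + 1 physical lines.

    One physical line (the gap) is always empty. Every `psi` writes the gap
    moves down by one slot; each full pass of the gap advances `start`,
    rotating the logical-to-physical mapping by one line.
    """

    def __init__(self, n: int, psi: int = 100) -> None:
        self.n, self.psi = n, psi
        self.start, self.gap = 0, n
        self.phys: List[Optional[int]] = [None] * (n + 1)  # data per physical line
        self.wear = [0] * (n + 1)                            # writes per physical line
        self.writes_since_move = 0

    def map(self, la: int) -> int:
        """Translate a logical line address to its current physical line."""
        pa = (la + self.start) % self.n
        return pa + 1 if pa >= self.gap else pa

    def _move_gap(self) -> None:
        if self.gap == 0:
            # Wrap around: the line in slot n moves to slot 0 and the mapping rotates.
            self.phys[0], self.phys[self.n] = self.phys[self.n], None
            self.wear[0] += 1
            self.gap = self.n
            self.start = (self.start + 1) % self.n
        else:
            # Move the line in slot gap - 1 into the gap; the gap moves down by one.
            self.phys[self.gap], self.phys[self.gap - 1] = self.phys[self.gap - 1], None
            self.wear[self.gap] += 1
            self.gap -= 1

    def write(self, la: int, value: int) -> None:
        pa = self.map(la)
        self.phys[pa] = value
        self.wear[pa] += 1
        self.writes_since_move += 1
        if self.writes_since_move == self.psi:
            self.writes_since_move = 0
            self._move_gap()

    def read(self, la: int) -> Optional[int]:
        return self.phys[self.map(la)]


sg = StartGap(n=8, psi=4)
for step in range(10_000):
    sg.write(0, step)               # hot spot: always the same logical line
print(sg.read(0), max(sg.wear))    # last value written; peak wear on any physical line
```

Without leveling, all 10,000 writes would land on one physical line; with Start-Gap the hot logical line drifts across every physical line, at the cost of one extra line and one copy every `psi` writes.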

Key Technical Innovations in PCM Network Modeling

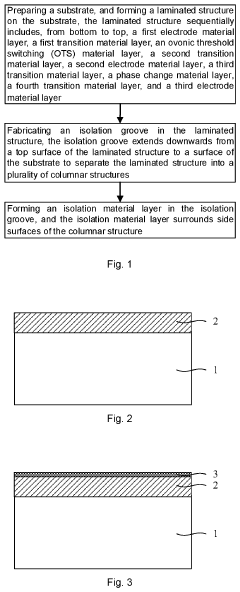

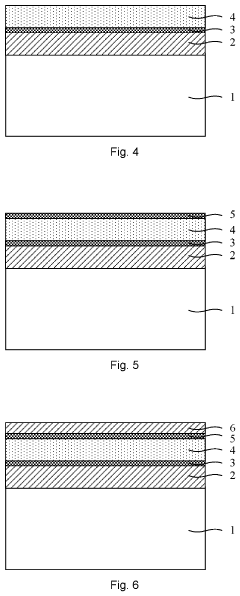

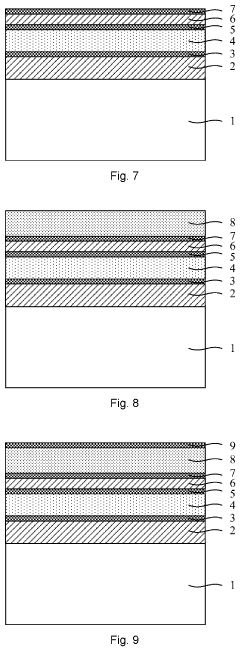

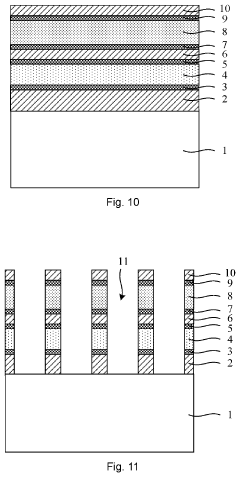

Phase change memory and method for making the same

Patent: US20220231224A1 (Active)

Innovation

- A method for making phase change memory involving a laminated structure with specific material layers, including transition layers with low thermal conductivity and chalcogenide compound materials, isolated by an isolation material layer to prevent diffusion and volatilization, and fabricated using techniques like sputtering and chemical vapor deposition.

Phase change memory (PCM) architecture and a method for writing into PCM architecture

Patent: US20120250401A1 (Active)

Innovation

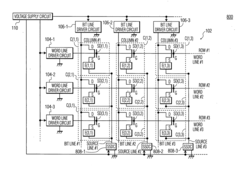

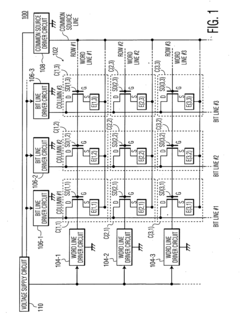

- A PCM architecture that includes a PCM array, word line driver circuits, bit line driver circuits, a source driver circuit, and a voltage supply circuit, where the bit line driver circuits use NMOS transistors to sink current to ground and the common source driver circuit is connected to both the voltage supply and ground, allowing for efficient voltage management and reduced sensitivity to voltage fluctuations.

Performance Benchmarking and Evaluation Metrics

Effective performance evaluation is critical for the advancement of Phase Change Memory (PCM) based network simulations. Current benchmarking methodologies for large-scale PCM network simulations typically focus on several key metrics: latency, throughput, energy efficiency, and scalability. Latency measurements assess the time required for read/write operations across the network, with particular attention to the asymmetric read-write characteristics inherent to PCM technology. Throughput evaluations quantify the maximum data transfer rate sustainable across various network topologies and under different traffic patterns.
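
As a simple illustration of how read/write asymmetry enters these metrics, the sketch below replays a request trace against a single PCM bank that serves one request at a time in first-come, first-served order. The service times are hypothetical placeholders (writes are simply set much slower than reads), and latency includes time spent queued behind earlier requests.

```python
from typing import List, Tuple

READ_NS, WRITE_NS = 50.0, 500.0  # hypothetical service times in nanoseconds


def replay(trace: List[Tuple[float, str]]) -> Tuple[float, float]:
    """Replay (arrival_ns, op) requests, op in {"R", "W"}, on one FCFS bank.

    Returns (mean latency in ns, throughput in requests per microsecond), where
    latency = completion time - arrival time, including queuing delay.
    """
    bank_free_at = 0.0
    latencies = []
    for arrival, op in sorted(trace):
        service = WRITE_NS if op == "W" else READ_NS
        start = max(arrival, bank_free_at)
        bank_free_at = start + service
        latencies.append(bank_free_at - arrival)
    span_us = (bank_free_at - min(a for a, _ in trace)) / 1000.0
    return sum(latencies) / len(latencies), len(trace) / span_us


trace = [(0.0, "R"), (10.0, "W"), (20.0, "R"), (600.0, "R")]
mean_lat, tput = replay(trace)
print(f"mean latency {mean_lat:.1f} ns, throughput {tput:.2f} req/us")
```

The read arriving at 20 ns waits behind the slow write, which is why a single write can dominate the mean latency of a read-heavy trace.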

Energy consumption metrics have gained significant importance due to PCM's potential advantages in power efficiency compared to traditional memory technologies. These metrics typically measure both static power consumption during idle states and dynamic power requirements during active operations. The non-volatile nature of PCM introduces unique power profile characteristics that must be accurately captured in simulation frameworks.

Scalability assessment represents another crucial dimension, evaluating how performance metrics evolve as the network size increases from hundreds to thousands or millions of PCM nodes. This includes analyzing how communication overhead, memory contention, and thermal effects impact overall system performance at scale.

Standardized workloads have emerged to facilitate comparative analysis between different simulation frameworks. These include synthetic patterns (uniform random, hotspot, transpose) and application-derived workloads that mimic real-world scenarios such as database transactions, neural network operations, and graph processing algorithms.
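
The synthetic patterns named above are straightforward to generate. The sketch below assumes a network of n = 2^k nodes with integer IDs: uniform random picks any destination, hotspot sends a fixed fraction of traffic to one node, and transpose swaps the upper and lower halves of the source ID's bits (equivalent to (x, y) → (y, x) on a square mesh). The hotspot fraction and node are assumed parameters.

```python
import random
from typing import Callable

Pattern = Callable[[int, random.Random], int]


def uniform_random(n: int) -> Pattern:
    """Any node (including the source itself) is equally likely."""
    return lambda src, rng: rng.randrange(n)


def hotspot(n: int, hot_node: int = 0, fraction: float = 0.2) -> Pattern:
    """With probability `fraction` send to hot_node, otherwise uniform random."""
    return lambda src, rng: hot_node if rng.random() < fraction else rng.randrange(n)


def transpose(n: int) -> Pattern:
    """Swap the upper and lower halves of the source ID bits; needs n = 2**k, k even."""
    k = n.bit_length() - 1
    if n != 1 << k or k % 2:
        raise ValueError("transpose needs n to be an even power of two")
    half = k // 2
    mask = (1 << half) - 1
    return lambda src, rng: ((src & mask) << half) | (src >> half)


rng = random.Random(42)
t = transpose(16)                          # 4x4 mesh: node (x, y) -> (y, x)
print([t(src, rng) for src in range(4)])   # → [0, 4, 8, 12]
```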

Reliability metrics have also become essential components of PCM network evaluation, measuring endurance (write cycles before failure), data retention capabilities, and resistance to various failure modes. Advanced simulation frameworks now incorporate fault models to predict system behavior under partial failures or degraded performance conditions.
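
Endurance figures are often turned into a lifetime estimate. Under the idealized assumption of perfect wear leveling, lifetime ≈ endurance × number of lines ÷ line-write rate; non-ideal leveling shortens it in proportion to the ratio of average to maximum wear. The sketch below encodes that bound; the endurance, capacity and write-rate numbers are hypothetical.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def lifetime_years(endurance: float, n_lines: int, writes_per_s: float,
                   leveling_efficiency: float = 1.0) -> float:
    """Years until the most-worn line reaches its endurance limit.

    leveling_efficiency = average wear / maximum wear (1.0 = perfect leveling),
    so the hottest line is written at (writes_per_s / n_lines) / efficiency.
    """
    if not 0.0 < leveling_efficiency <= 1.0:
        raise ValueError("leveling_efficiency must be in (0, 1]")
    total_writes = endurance * n_lines * leveling_efficiency
    return total_writes / writes_per_s / SECONDS_PER_YEAR


# Hypothetical: 1e8 write cycles per line, 2**24 lines, 1e7 line writes per second.
print(f"{lifetime_years(1e8, 2**24, 1e7):.1f} years (ideal)")
print(f"{lifetime_years(1e8, 2**24, 1e7, leveling_efficiency=0.1):.1f} years (10% efficiency)")
```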

Accuracy validation represents perhaps the most challenging aspect of PCM simulation evaluation. This involves comparing simulation results against physical PCM implementations where available, or against established theoretical models. The fidelity of timing models, particularly in capturing the unique characteristics of PCM such as write latency variability and read disturbance effects, significantly impacts simulation credibility.

Recent developments in performance evaluation methodologies have begun incorporating Quality of Service (QoS) metrics, recognizing the importance of consistent performance guarantees in many application domains. These metrics track performance variability, worst-case scenarios, and the ability to maintain service levels under adverse conditions or peak loads.
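
Tail-latency percentiles are a common way to express such guarantees. The sketch below computes nearest-rank percentiles and the coefficient of variation from a list of per-request latencies; the sample values are made up for illustration.

```python
import math
import statistics
from typing import Dict, Sequence


def qos_summary(latencies_ns: Sequence[float]) -> Dict[str, float]:
    """Mean, nearest-rank p50/p99/max, and coefficient of variation of latencies."""
    data = sorted(latencies_ns)
    n = len(data)

    def percentile(p: float) -> float:
        # Nearest-rank method: smallest value with at least p% of samples <= it.
        rank = max(1, math.ceil(p * n / 100))
        return data[rank - 1]

    mean = statistics.fmean(data)
    return {
        "mean": mean,
        "p50": percentile(50),
        "p99": percentile(99),
        "max": data[-1],
        "cv": statistics.pstdev(data) / mean,
    }


# 98 fast reads and 2 slow requests stuck behind writes (illustrative numbers).
sample = [60.0] * 98 + [900.0, 1200.0]
print(qos_summary(sample))
```

Here the mean barely moves while p99 jumps by an order of magnitude, which is exactly the behavior an average-only metric would hide.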

Energy consumption metrics have gained significant importance due to PCM's potential advantages in power efficiency compared to traditional memory technologies. These metrics typically measure both static power consumption during idle states and dynamic power requirements during active operations. The non-volatile nature of PCM introduces unique power profile characteristics that must be accurately captured in simulation frameworks.

Scalability assessment represents another crucial dimension, evaluating how performance metrics evolve as the network size increases from hundreds to thousands or millions of PCM nodes. This includes analyzing how communication overhead, memory contention, and thermal effects impact overall system performance at scale.

Standardized workloads have emerged to facilitate comparative analysis between different simulation frameworks. These include synthetic patterns (uniform random, hotspot, transpose) and application-derived workloads that mimic real-world scenarios such as database transactions, neural network operations, and graph processing algorithms.

Reliability metrics have also become essential components of PCM network evaluation, measuring endurance (write cycles before failure), data retention capabilities, and resistance to various failure modes. Advanced simulation frameworks now incorporate fault models to predict system behavior under partial failures or degraded performance conditions.

Accuracy validation represents perhaps the most challenging aspect of PCM simulation evaluation. This involves comparing simulation results against physical PCM implementations where available, or against established theoretical models. The fidelity of timing models, particularly in capturing the unique characteristics of PCM such as write latency variability and read disturbance effects, significantly impacts simulation credibility.

Energy Efficiency Considerations in PCM Simulations

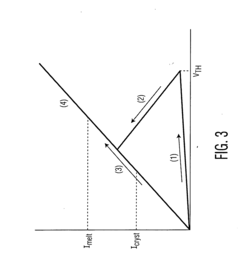

Energy efficiency has emerged as a critical consideration in the simulation of Phase Change Memory (PCM) based networks, particularly as these systems scale to accommodate increasingly complex computational demands. The power consumption characteristics of PCM devices differ significantly from those of traditional memory technologies, so simulation frameworks need specialized models of energy dynamics. In particular, they must account for the asymmetric energy profiles of read and write operations. Writes typically consume 5-10 times more energy than reads, because a write must heat the chalcogenide material to switch its phase, whereas a read only senses the cell's resistance with a small current.
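The read/write asymmetry has a large effect on average energy even at modest write fractions. This sketch expresses energy per access in units of one read's energy and sweeps the 5x and 10x write-to-read ratios quoted above. The linear mix model, with a fixed energy per read and per write and no data dependence, is a simplifying assumption.

```python
def energy_per_access(write_fraction, write_to_read_ratio, read_energy=1.0):
    """Average energy per access for a read/write mix (in the units of read_energy)."""
    if not 0.0 <= write_fraction <= 1.0:
        raise ValueError("write_fraction must be in [0, 1]")
    write_energy = read_energy * write_to_read_ratio
    return (1.0 - write_fraction) * read_energy + write_fraction * write_energy


def write_energy_share(write_fraction, write_to_read_ratio):
    """Fraction of total dynamic energy spent on writes."""
    total = energy_per_access(write_fraction, write_to_read_ratio)
    return write_fraction * write_to_read_ratio / total


for ratio in (5, 10):
    for wf in (0.1, 0.3, 0.5):
        print(f"ratio={ratio:>2} writes={wf:.0%} "
              f"energy/access={energy_per_access(wf, ratio):.2f} "
              f"write share={write_energy_share(wf, ratio):.0%}")
```

Under this model, writes already account for about 36% (5x ratio) to 53% (10x ratio) of dynamic energy when only 10% of accesses are writes.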

Energy-efficiency modeling in PCM simulations requires multi-level approaches that capture both device-level and system-level power consumption. At the device level, simulations must account for the different energy requirements of each operational state: idle, read, and write. Because PCM is non-volatile, it does not need the periodic refresh operations that DRAM requires. This is a significant energy advantage that simulation frameworks must reflect accurately.

System-level energy considerations introduce additional complexity, as they must model the interactions between PCM components and other system elements such as processors, interconnects, and cooling infrastructure. Advanced simulation frameworks now incorporate dynamic power management techniques, including adaptive write scheduling and power-aware routing algorithms, which can significantly reduce overall energy consumption in large-scale PCM networks.
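Adaptive write scheduling is often framed as keeping instantaneous write power under a budget. This sketch shows one simple, hypothetical policy, not a scheme named in the source. Pending writes are issued in arrival order, and each cycle admits writes until the next one would exceed the budget. Costs are in abstract power "tokens", and each write is assumed to finish within one scheduling cycle.

```python
from collections import deque


def schedule_writes(write_costs, power_budget):
    """Greedy FIFO scheduling of writes under a per-cycle power budget.

    write_costs: power cost of each pending write, in hypothetical "tokens".
    Returns a list of cycles, each a list of write indices issued in that cycle.
    """
    if any(cost > power_budget for cost in write_costs):
        raise ValueError("a single write exceeds the power budget")
    pending = deque(enumerate(write_costs))
    cycles = []
    while pending:
        used, issued = 0, []
        # Admit writes in arrival order until the next one would exceed the budget.
        while pending and used + pending[0][1] <= power_budget:
            idx, cost = pending.popleft()
            used += cost
            issued.append(idx)
        cycles.append(issued)
    return cycles


print(schedule_writes([3, 2, 4, 1, 5], power_budget=5))
```

Strict FIFO order trades some packing efficiency for fairness. A costly write at the head of the queue can leave budget unused, but it can never be starved by a stream of cheaper writes.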

Thermal modeling represents another crucial aspect of energy efficiency simulations for PCM networks. The phase change process is inherently temperature-dependent, creating a complex relationship between operational temperature, performance, and energy consumption. Leading simulation frameworks now implement detailed thermal models that account for spatial temperature gradients and temporal thermal dynamics, enabling more accurate predictions of energy requirements under various workload conditions.
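The simplest form of temporal thermal dynamics is a lumped RC model. A block of cells is treated as one thermal node with resistance R to ambient and heat capacity C, obeying C·dT/dt = P(t) - (T - T_amb)/R. This sketch integrates that equation with forward Euler. A single node cannot represent spatial gradients, which would need a grid of coupled nodes. The parameter values are illustrative and are not fitted to any PCM device.

```python
def simulate_temperature(power_trace_w, t_amb_c, r_th_k_per_w, c_th_j_per_k, dt_s):
    """Forward-Euler integration of C*dT/dt = P(t) - (T - T_amb)/R.

    power_trace_w: dissipated power at each time step (e.g. derived from a write trace).
    Returns the temperature after each step, starting from ambient.
    """
    tau = r_th_k_per_w * c_th_j_per_k
    if dt_s >= tau:
        # With dt > R*C forward Euler overshoots; with dt > 2*R*C it diverges.
        raise ValueError("time step must be smaller than the thermal time constant R*C")
    temp = t_amb_c
    temps = [temp]
    for power in power_trace_w:
        d_temp = (power - (temp - t_amb_c) / r_th_k_per_w) / c_th_j_per_k * dt_s
        temp += d_temp
        temps.append(temp)
    return temps


# Illustrative burst: 2 W for 50 s, then idle for 50 s; R = 10 K/W, C = 1 J/K (tau = 10 s).
trace = [2.0] * 500 + [0.0] * 500
temps = simulate_temperature(trace, t_amb_c=25.0, r_th_k_per_w=10.0, c_th_j_per_k=1.0, dt_s=0.1)
print(round(max(temps), 2), round(temps[-1], 2))
```

Under constant power the temperature approaches T_amb + P·R (45 °C for the 2 W phase here). The time constant R·C sets how quickly bursts of writes heat and cool the array.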

Recent advancements in PCM simulation frameworks have introduced machine learning techniques to optimize energy efficiency modeling. These approaches leverage historical simulation data to predict energy consumption patterns and identify optimal operating parameters. Such predictive capabilities allow system designers to explore energy-performance tradeoffs more effectively and develop power-aware PCM architectures tailored to specific application requirements.
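The source does not say which machine learning models are used. As the simplest possible stand-in, this sketch fits an ordinary least-squares linear model that predicts a run's energy from logged features (duration, read count, write count). With this feature choice, the fitted weights can be read as static power, energy per read, and energy per write. The feature set and the synthetic training data are hypothetical. The normal-equation solver is written out only to keep the example dependency-free.

```python
def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: features are linearly dependent")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_least_squares(features, targets):
    """Ordinary least squares via the normal equations (X^T X) w = X^T y."""
    n_feat = len(features[0])
    xtx = [[sum(row[i] * row[j] for row in features) for j in range(n_feat)] for i in range(n_feat)]
    xty = [sum(row[i] * y for row, y in zip(features, targets)) for i in range(n_feat)]
    return solve(xtx, xty)


def predict(weights, row):
    return sum(w * x for w, x in zip(weights, row))


# Synthetic "historical runs": [duration_s, reads, writes] -> energy, generated from
# made-up true weights so the fit can be checked; real logs would be noisy.
true_w = [0.5, 2.0, 10.0]
runs = [[1, 1, 0], [1, 0, 1], [2, 3, 1], [1, 5, 2], [3, 1, 4]]
energies = [predict(true_w, r) for r in runs]
weights = fit_least_squares(runs, energies)
print([round(w, 6) for w in weights])
```

With real, noisy logs the same code returns the best linear fit rather than exact weights. Nonlinear effects such as temperature dependence are one motivation for the more expressive learned models this paragraph refers to.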

The validation of energy efficiency models in PCM simulations remains challenging, requiring careful correlation with physical measurements from prototype systems. Benchmark suites specifically designed for PCM-based systems have emerged, providing standardized workloads that stress different aspects of energy consumption. These benchmarks enable meaningful comparisons between different simulation frameworks and help establish confidence in their predictive accuracy for energy efficiency metrics.
