SLAM For Intelligent Surveillance Drones

SEP 12, 2025 · 9 MIN READ

SLAM Technology Evolution and Surveillance Drone Objectives

Simultaneous Localization and Mapping (SLAM) technology has evolved significantly since its inception in the 1980s, transitioning from theoretical concepts to practical applications across various domains. The evolution of SLAM has been characterized by increasing accuracy, computational efficiency, and adaptability to dynamic environments. Early SLAM algorithms relied heavily on extended Kalman filters and particle filters, which were computationally intensive and limited in scalability. The introduction of graph-based optimization methods in the 2000s marked a significant advancement, enabling more efficient processing of large-scale environments.
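
To make the contrast with filtering concrete, here is a minimal sketch of graph-based optimization on a toy one-dimensional pose graph. It assumes linear 1D poses, unit-weight constraints, and hypothetical odometry and loop-closure values. Real systems optimize 6-DoF poses with nonlinear solvers (Gauss-Newton or Levenberg-Marquardt over sparse matrices); the dense Gaussian elimination here only keeps the example self-contained.

```python
def solve_linear_system(a, b):
    """Solve a x = b with Gaussian elimination and partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def optimize_pose_graph(num_poses, edges):
    """Least-squares 1D pose graph: each edge (i, j, z) states x_j - x_i ~ z.

    Pose 0 is anchored at 0 to remove the gauge freedom, so we solve for
    poses 1..num_poses-1 via the normal equations H x = b.
    """
    n = num_poses - 1
    h = [[0.0] * n for _ in range(n)]
    b = [0.0] * n
    for i, j, z in edges:
        # Residual r = x_j - x_i - z; its Jacobian is +1 for x_j, -1 for x_i.
        jac = {}
        if j > 0:
            jac[j - 1] = 1.0
        if i > 0:
            jac[i - 1] = -1.0
        for p, jp in jac.items():
            b[p] += jp * z
            for q, jq in jac.items():
                h[p][q] += jp * jq
    return [0.0] + solve_linear_system(h, b)


# Three odometry steps of 1.0 m plus a loop closure saying pose 3 is 2.7 m from pose 0.
poses = optimize_pose_graph(4, [(0, 1, 1.0), (1, 2, 1.0), (2, 3, 1.0), (0, 3, 2.7)])
```

The loop closure exposes 0.3 m of accumulated drift, and the least-squares solution spreads it evenly over the four constraints, giving poses of about 0.925, 1.85 and 2.775 m. A filter would have to carry a dense covariance over all landmarks to achieve a similar correction, which is the scalability problem the graph formulation avoids.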

The integration of visual sensors with traditional range-finding technologies led to the development of Visual SLAM systems, which leverage camera data to construct detailed environmental maps while simultaneously tracking the device's position. This evolution continued with the emergence of RGB-D SLAM, utilizing depth information alongside visual data to enhance mapping precision. Recent advancements include semantic SLAM, which incorporates object recognition capabilities to create more meaningful environmental representations, and deep learning-based approaches that improve robustness in challenging conditions.
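
RGB-D SLAM relies on per-pixel depth to lift image points into 3D. The sketch below shows the standard pinhole back-projection that such systems build on; the intrinsics in the usage line are hypothetical values, and lens distortion is ignored for simplicity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PinholeIntrinsics:
    fx: float  # focal length in pixels along x
    fy: float  # focal length in pixels along y
    cx: float  # principal point x (pixels)
    cy: float  # principal point y (pixels)


def back_project(u, v, depth_m, k):
    """Lift pixel (u, v) with metric depth into a 3D point in the camera frame."""
    if depth_m <= 0:
        return None  # invalid or missing depth reading
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def depth_image_to_points(depth, k):
    """Convert a row-major depth image (list of rows, meters) into a point list."""
    points = []
    for v, row in enumerate(depth):
        for u, d in enumerate(row):
            p = back_project(u, v, d, k)
            if p is not None:
                points.append(p)
    return points


# Hypothetical 640x480 camera with a 500 px focal length.
cam = PinholeIntrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
point = back_project(420, 240, 2.0, cam)  # a pixel 100 px right of center, 2 m away
```

Fusing these points with the RGB values at the same pixels is what lets RGB-D systems build dense, colored maps instead of the sparse feature maps of monocular Visual SLAM.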

For surveillance drones specifically, SLAM technology enables autonomous navigation in GPS-denied environments, precise tracking of moving targets, and comprehensive environmental awareness. These capabilities are crucial for applications ranging from border security and critical infrastructure protection to disaster response and urban monitoring. The technical objectives for SLAM in surveillance drones include achieving real-time processing with limited onboard computational resources, maintaining accuracy during high-speed flight, and adapting to rapidly changing environments.

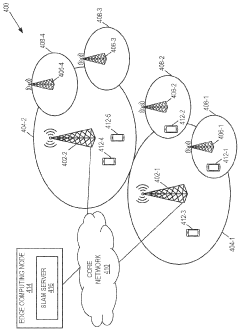

Current research focuses on developing lightweight SLAM algorithms optimized for drone hardware constraints while maintaining high performance. Multi-sensor fusion approaches combine visual, inertial, and sometimes LiDAR data to enhance robustness across varying lighting conditions and environmental complexities. Edge computing integration is being explored to distribute computational loads between the drone and ground stations, enabling more sophisticated SLAM implementations on resource-constrained platforms.
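
The core idea of visual-inertial fusion can be illustrated with a scalar Kalman filter: the IMU drives a fast prediction whose uncertainty grows, and a slower visual position fix corrects it, weighted by the relative variances. This is a one-dimensional sketch with hypothetical noise values; practical visual-inertial systems use an extended Kalman filter or sliding-window optimization over the full 3D pose, velocity and sensor biases.

```python
from dataclasses import dataclass


@dataclass
class KalmanState1D:
    x: float  # position estimate (m)
    p: float  # estimate variance (m^2)


def predict(state, velocity, dt, process_var):
    """Propagate with an inertially derived velocity; uncertainty grows."""
    return KalmanState1D(state.x + velocity * dt, state.p + process_var)


def update(state, measurement, meas_var):
    """Correct with a visual position fix; the gain weights each source by its variance."""
    gain = state.p / (state.p + meas_var)
    x = state.x + gain * (measurement - state.x)
    p = (1.0 - gain) * state.p
    return KalmanState1D(x, p)


s = predict(KalmanState1D(0.0, 1.0), velocity=1.0, dt=1.0, process_var=1.0)
s = update(s, measurement=1.6, meas_var=2.0)
```

With equal prediction and measurement variances (both 2.0 after the prediction step) the gain is 0.5, so the fused estimate lands halfway between the inertial prediction (1.0 m) and the visual fix (1.6 m), at about 1.3 m, and the variance halves to 1.0.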

The trajectory of SLAM technology for surveillance drones is moving toward greater autonomy, with objectives including persistent surveillance capabilities, collaborative mapping among drone swarms, and advanced anomaly detection through environmental understanding. Energy efficiency remains a critical goal, as power consumption directly impacts flight duration and operational range. Additionally, there is growing emphasis on developing SLAM systems that can function effectively in adverse weather conditions and environments with limited distinguishing features, expanding the operational envelope of surveillance drones.

Market Analysis for Intelligent Surveillance Drone Solutions

The intelligent surveillance drone market is experiencing unprecedented growth, driven by increasing security concerns across various sectors including government, commercial, and residential. The global market for surveillance drones was valued at approximately $4.5 billion in 2022 and is projected to reach $12.3 billion by 2028, representing a compound annual growth rate (CAGR) of 18.2%. This remarkable expansion reflects the growing adoption of advanced surveillance technologies and the integration of SLAM (Simultaneous Localization and Mapping) capabilities.
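
The growth figures above are internally consistent, which can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1, applied over the six years from 2022 to 2028:

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market values in billions of US dollars, taken from the paragraph above.
rate = cagr(4.5, 12.3, 2028 - 2022)
print(f"{rate:.1%}")
```

The result is about 18.24%, which rounds to the stated 18.2%.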

North America currently dominates the market with a 38% share, followed by Europe (27%) and Asia-Pacific (24%). However, the Asia-Pacific region is expected to witness the fastest growth rate of 22.1% during the forecast period, primarily due to increasing security investments in countries like China, India, and Japan.

The demand for intelligent surveillance drones is particularly strong in the government and defense sectors, accounting for approximately 45% of the total market. These institutions require sophisticated surveillance capabilities for border security, counter-terrorism operations, and disaster management. Commercial applications represent 35% of the market, with significant adoption in critical infrastructure protection, industrial site monitoring, and event security.

Key market drivers include the decreasing cost of drone technology, advancements in artificial intelligence and computer vision, and the growing need for autonomous surveillance solutions that reduce human intervention. The integration of SLAM technology specifically has created a premium segment within the market, with SLAM-enabled surveillance drones commanding price premiums of 30-40% compared to conventional alternatives.

Customer requirements are increasingly focused on real-time data processing, extended flight times, all-weather operation capabilities, and seamless integration with existing security infrastructure. End-users are willing to pay premium prices for solutions that offer enhanced autonomy, obstacle avoidance, and the ability to operate in GPS-denied environments – all capabilities enabled by advanced SLAM implementations.

Market challenges include regulatory restrictions on drone operations in many countries, privacy concerns, and the technical limitations of current battery technology. Additionally, the high computational requirements of SLAM algorithms present challenges for miniaturization and power efficiency in drone platforms.

Emerging trends include the development of swarm-based surveillance systems, edge computing integration for faster data processing, and hybrid drones with extended operational capabilities. The market is also witnessing increased demand for drone-as-a-service business models, which reduce capital expenditure requirements for end-users while providing access to the latest technology.

SLAM Implementation Challenges in Aerial Surveillance

Implementing SLAM (Simultaneous Localization and Mapping) technology in aerial surveillance drones presents several significant challenges that must be addressed for effective deployment. The dynamic nature of drone flight introduces complex motion patterns that traditional SLAM algorithms struggle to process accurately. A drone moves with six degrees of freedom (three translational and three rotational), whereas many ground robots can be modeled with three (planar position and heading), so the state to be estimated is larger and the computational load correspondingly heavier, requiring specialized algorithmic approaches.

Environmental factors pose substantial obstacles for aerial SLAM systems. Varying lighting conditions, from bright sunlight to low-light scenarios, affect sensor reliability and feature detection accuracy. Weather elements such as rain, fog, and wind create additional complications by introducing noise to sensor readings and destabilizing drone positioning, which can compromise mapping precision.

Hardware limitations represent another critical challenge. The payload constraints of surveillance drones restrict the deployment of heavy, high-performance computing systems necessary for real-time SLAM processing. Power consumption must be carefully balanced against operational flight time, creating a significant design trade-off between computational capability and mission duration.

Sensor integration complexity increases with aerial platforms. The fusion of data from multiple sensors—including visual cameras, LiDAR, IMUs, and GPS—requires sophisticated calibration and synchronization. Sensor drift and vibration-induced noise further complicate accurate data interpretation, necessitating robust filtering algorithms.
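
Synchronization in practice often means resampling the high-rate IMU stream at each camera timestamp. The sketch below linearly interpolates a single hypothetical IMU channel and applies a known, constant camera-to-IMU clock offset; real systems estimate that offset during calibration or online, and interpolate rotations on SO(3) rather than component-wise.

```python
from bisect import bisect_left


def interpolate_imu(imu_samples, t):
    """Linearly interpolate an IMU reading at timestamp t.

    imu_samples: list of (timestamp_s, value) sorted by timestamp.
    Returns None if t falls outside the buffered IMU window.
    """
    times = [s[0] for s in imu_samples]
    i = bisect_left(times, t)
    if i < len(times) and times[i] == t:
        return imu_samples[i][1]  # exact sample available
    if i == 0 or i == len(times):
        return None  # cannot interpolate outside the sample range
    (t0, v0), (t1, v1) = imu_samples[i - 1], imu_samples[i]
    alpha = (t - t0) / (t1 - t0)
    return v0 + alpha * (v1 - v0)


def align_camera_to_imu(camera_stamps, imu_samples, time_offset=0.0):
    """Pair each camera frame with an IMU value, correcting a fixed clock offset."""
    return [(tc, interpolate_imu(imu_samples, tc + time_offset)) for tc in camera_stamps]


# Hypothetical 100 Hz IMU channel and two camera frames with a 5 ms clock offset.
imu = [(0.0, 0.0), (0.01, 1.0), (0.02, 2.0)]
pairs = align_camera_to_imu([0.0, 0.01], imu, time_offset=0.005)
```

Returning `None` outside the buffered window, rather than extrapolating, keeps a stale or missing IMU packet from silently injecting a wrong motion prior into the SLAM front end.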

Real-time processing demands present perhaps the most pressing challenge. Surveillance applications require immediate environmental understanding and decision-making capabilities. The computational burden of processing high-resolution visual or point cloud data while simultaneously performing localization and mapping functions strains onboard systems, often leading to latency issues that compromise operational effectiveness.

Scale and perspective variations introduce unique difficulties for feature detection and matching algorithms. As drones change altitude, the scale of observed features changes dramatically, requiring adaptive approaches to maintain tracking consistency. Similarly, perspective distortions from aerial viewpoints complicate the correspondence problem in visual SLAM implementations.
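
The scale effect follows directly from the pinhole model: an object of size S at range Z spans about f * S / Z pixels, so doubling altitude halves every feature's footprint. A common countermeasure is an image pyramid. The sketch below assumes a nadir-pointing camera, a hypothetical 1000 px focal length, and a pyramid scale step of 1.2 (a typical choice for ORB-style extractors, used here only as an assumption).

```python
import math


def apparent_size_px(object_size_m, distance_m, focal_length_px):
    """Pinhole projection: image extent in pixels of an object at a given range."""
    return focal_length_px * object_size_m / distance_m


def ground_sampling_distance_m(altitude_m, focal_length_px):
    """Ground distance covered by one pixel for a nadir-pointing camera."""
    return altitude_m / focal_length_px


def pyramid_levels_needed(scale_ratio, scale_factor=1.2):
    """Smallest level count such that scale_factor ** (levels - 1) >= scale_ratio."""
    return math.ceil(math.log(scale_ratio) / math.log(scale_factor)) + 1


size_low = apparent_size_px(1.0, 50.0, 1000.0)    # 1 m object seen from 50 m
size_high = apparent_size_px(1.0, 100.0, 1000.0)  # same object from 100 m
levels = pyramid_levels_needed(size_low / size_high)
```

Climbing from 50 m to 100 m shrinks a 1 m feature from 20 px to 10 px, and with a 1.2 scale step a pyramid needs 5 levels (1.2^4 is about 2.07) to keep matching that feature across the full 2x range.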

Dynamic environment handling represents a significant hurdle for surveillance applications. Traditional SLAM assumes relatively static environments, but surveillance scenarios often involve tracking moving objects within changing scenes. Distinguishing between drone movement and object movement requires sophisticated filtering and segmentation techniques that add computational overhead.
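
One way to separate drone motion from object motion is to estimate the dominant image motion and flag features that disagree with it. The sketch below uses a median-flow consistency check, a deliberately simple stand-in for the RANSAC-based geometric verification or semantic segmentation that production systems typically use. It assumes most tracked features lie on the static background and that ego-motion produces roughly uniform image translation (for example, a distant, downward-looking view); the 3 px threshold is hypothetical.

```python
from statistics import median


def flag_moving_features(prev_pts, curr_pts, threshold_px=3.0):
    """Flag correspondences whose motion disagrees with the dominant image motion.

    prev_pts, curr_pts: matched (x, y) pixel positions in consecutive frames.
    Returns one boolean per correspondence (True = likely independently moving).
    """
    flows = [(c[0] - p[0], c[1] - p[1]) for p, c in zip(prev_pts, curr_pts)]
    # The median is robust to a minority of independently moving points,
    # so it approximates the flow induced by the drone's own motion.
    dx = median(f[0] for f in flows)
    dy = median(f[1] for f in flows)
    return [((fx - dx) ** 2 + (fy - dy) ** 2) ** 0.5 > threshold_px for fx, fy in flows]


prev = [(0, 0), (10, 0), (20, 0), (30, 0), (40, 0)]
curr = [(5, 0), (15, 0), (25, 0), (35, 0), (40, 10)]  # last point moves on its own
moving = flag_moving_features(prev, curr)
```

Flagged features would be excluded from pose estimation and mapping, and could be handed to the target-tracking module instead, which is exactly where surveillance workloads differ from static-scene SLAM.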

Privacy and security concerns also present implementation challenges, as aerial surveillance systems must comply with increasingly stringent regulations regarding data collection, storage, and transmission, particularly in civilian applications.

Current SLAM Solutions for Surveillance Drones

01 Visual SLAM techniques for autonomous navigation

Visual SLAM techniques use camera data to simultaneously map an environment and locate a device within it. These systems process visual features to create 3D maps while tracking the camera's position in real time. Advanced implementations incorporate deep learning for improved feature detection and matching, enabling more robust performance in challenging environments such as low-light conditions or scenes with repetitive patterns.

- LiDAR-based SLAM for precise environmental mapping: LiDAR sensors provide accurate distance measurements that enhance SLAM performance by creating detailed point clouds of the surrounding environment. These systems excel at generating precise 3D maps with depth information, making them particularly valuable for autonomous vehicles and robots operating in complex environments. LiDAR-based SLAM algorithms can detect and track objects while simultaneously updating the environmental map and determining the device's position with high accuracy.

- Fusion of multiple sensors for robust SLAM systems: Multi-sensor fusion approaches combine data from various sensors such as cameras, LiDAR, IMUs, and radar to overcome the limitations of single-sensor SLAM systems. By integrating complementary sensor data, these systems achieve greater robustness across diverse environmental conditions. Sensor fusion techniques typically employ probabilistic methods like Kalman filters or particle filters to optimally combine measurements while accounting for sensor uncertainties and environmental noise.

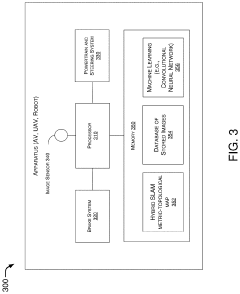

- AI and machine learning enhancements for SLAM: Machine learning and AI techniques are increasingly integrated into SLAM systems to improve performance in challenging scenarios. Deep neural networks can be trained to recognize places despite appearance changes, enhance feature extraction, and improve loop closure detection. These AI-enhanced systems can better handle dynamic environments by distinguishing between static and moving objects, leading to more accurate and reliable mapping and localization results even in crowded or changing environments.

- Edge computing and optimization for real-time SLAM: Optimized SLAM algorithms designed for edge computing enable real-time performance on devices with limited computational resources. These implementations use techniques such as sparse mapping, keyframe selection, and efficient data structures to reduce memory and processing requirements. Hardware acceleration through specialized processors or FPGAs further enhances performance, making SLAM technology viable for mobile robots, drones, and AR/VR headsets that require low latency and power efficiency.
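
Keyframe selection, one of the techniques listed above, keeps computation bounded by passing only informative frames to mapping and optimization. A minimal sketch as a threshold policy follows; every threshold value is hypothetical, and real systems tune these per platform and often add checks on map-point quality.

```python
from dataclasses import dataclass


@dataclass
class KeyframePolicy:
    min_translation_m: float = 0.5   # hypothetical baseline threshold
    min_rotation_deg: float = 15.0   # hypothetical heading-change threshold
    min_tracked_ratio: float = 0.6   # fraction of keyframe features still tracked
    max_interval_s: float = 1.0      # force a keyframe after this long


def should_insert_keyframe(policy, translation_m, rotation_deg, tracked,
                           keyframe_features, elapsed_s):
    """Return True if the current frame should become a new keyframe.

    All motion quantities are measured relative to the last keyframe.
    """
    tracked_ratio = tracked / keyframe_features if keyframe_features else 0.0
    return (
        translation_m >= policy.min_translation_m      # enough parallax for triangulation
        or abs(rotation_deg) >= policy.min_rotation_deg
        or tracked_ratio < policy.min_tracked_ratio    # view content has changed
        or elapsed_s >= policy.max_interval_s
    )


policy = KeyframePolicy()
```

Frames that fail every test are still tracked against the map, but they never enter the optimization back end, which is where most of the memory and compute savings come from.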

02 SLAM for augmented and virtual reality applications

SLAM technology enables precise spatial mapping for AR/VR applications, allowing virtual objects to interact realistically with the physical environment. These systems track the user's position and orientation while building a detailed environmental model, enabling seamless integration between virtual and physical worlds. The technology supports features like occlusion, where virtual objects can be hidden behind real-world objects, enhancing immersion and user experience.

03 LiDAR and sensor fusion approaches for SLAM

LiDAR-based SLAM systems use laser scanning to create precise 3D point clouds of environments, offering advantages in accuracy and range compared to purely visual approaches. Sensor fusion techniques combine data from multiple sources such as cameras, LiDAR, IMU, and GPS to enhance robustness and accuracy. These approaches are particularly valuable in challenging environments where a single sensor type might fail, such as in varying lighting conditions or featureless areas.

04 SLAM optimization and computational efficiency

Advanced optimization techniques improve SLAM performance on resource-constrained devices by reducing computational demands while maintaining accuracy. These approaches include sparse mapping, keyframe selection, and efficient loop closure detection. Real-time processing is achieved through parallel computing architectures and algorithmic improvements that balance accuracy with speed, enabling deployment on mobile devices and embedded systems with limited processing power.

05 SLAM for robotic applications and autonomous systems

SLAM enables robots and autonomous systems to navigate unknown environments without external positioning infrastructure. These implementations focus on reliability in dynamic environments where objects may move or change position. Specialized algorithms handle path planning, obstacle avoidance, and multi-robot coordination, allowing for applications in warehouse automation, delivery robots, and domestic service robots. The systems can adapt to environmental changes and learn from experience to improve mapping and navigation over time.
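
Path planning typically consumes the map that SLAM produces. The sketch below runs A* with a Manhattan-distance heuristic over a small 2D occupancy grid, assumed here to be derived from a SLAM map (0 = free, 1 = occupied). It is illustrative only: aerial platforms usually plan in 3D and must also respect flight dynamics and safety margins.

```python
import heapq


def plan_path(grid, start, goal):
    """A* over a 4-connected occupancy grid (0 = free, 1 = occupied).

    Returns the list of (row, col) cells from start to goal, or None.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance is admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]
    came_from = {start: None}
    cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:  # walk parents back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > cost[cell]:
            continue  # stale heap entry superseded by a cheaper route
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = g + 1
                if new_cost < cost.get(nxt, float("inf")):
                    cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None


grid = [
    [0, 0, 0],
    [1, 1, 0],  # a wall the planner must route around
    [0, 0, 0],
]
route = plan_path(grid, (0, 0), (2, 0))
```

Because the map is updated continuously, planners in SLAM-driven systems are usually re-run whenever newly observed obstacles invalidate the current route.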

Leading Companies in Drone SLAM Technology Ecosystem

The market for SLAM in intelligent surveillance drones is currently in a growth phase, with increasing adoption across security and monitoring applications, and it is expanding rapidly as drone surveillance becomes mainstream in both commercial and governmental sectors. Technologically, the field is approaching maturity: academic institutions including Northwestern Polytechnical University, Beijing Institute of Technology, and Zhejiang University lead research and have established strong foundations in SLAM algorithms. Commercial players such as UISEE Technologies, Sony, and Preferred Robotics are advancing practical implementations, while specialized drone companies such as Xi'an Inno Aviation are developing industry-specific solutions. The integration of AI with SLAM technology is the current competitive frontier, with cross-industry collaborations accelerating development.

UISEE Technologies (Beijing) Co., Ltd.

Technical Solution: UISEE has developed "DroneVision," an industrial-grade SLAM solution specifically engineered for surveillance drones operating in challenging environments. Their system integrates visual, inertial, and occasionally LiDAR data through a sophisticated sensor fusion framework that maintains localization accuracy even during aggressive maneuvers or GPS-denied conditions. The company's proprietary mapping algorithms create multi-resolution environmental representations that balance detail with computational efficiency. UISEE's solution includes advanced trajectory planning capabilities that optimize drone movement for both surveillance coverage and SLAM performance. Their system has been commercially deployed for security applications, featuring encrypted data transmission and processing to ensure surveillance information security. The technology includes specialized modules for night-time operation using infrared imaging and can function effectively in adverse weather conditions.

Strengths: Proven commercial deployment in real-world security applications; robust performance in GPS-denied environments; advanced security features for sensitive surveillance operations. Weaknesses: Higher cost compared to academic solutions; may require specialized hardware configurations for optimal performance.

Sony Group Corp.

Technical Solution: Sony has leveraged its expertise in imaging technology to develop "AirSense," a sophisticated SLAM system for surveillance drones. Their solution utilizes high-quality camera sensors combined with proprietary image processing algorithms to extract rich visual features even in challenging lighting conditions. Sony's implementation employs a hierarchical mapping approach that maintains multiple map representations at different scales, enabling both precise localization and efficient global path planning. The system incorporates advanced optical flow techniques for motion estimation that can function reliably even during rapid drone movements. Sony has also integrated their SLAM technology with automated object detection and tracking capabilities, allowing surveillance drones to follow subjects of interest while maintaining spatial awareness. Their solution includes specialized hardware accelerators for real-time processing of visual data, achieving high performance while minimizing power consumption.

Strengths: Superior image processing capabilities leveraging Sony's sensor expertise; efficient hardware acceleration for real-time performance; seamless integration with object tracking for advanced surveillance. Weaknesses: Potential dependency on Sony's proprietary hardware ecosystem; may be more expensive than other solutions due to premium components.

Key Patents and Research in Aerial SLAM Systems

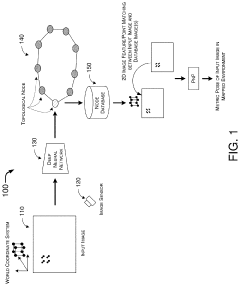

Hybrid Metric-Topological Camera-Based Localization

Patent | Active | US20210082145A1

Innovation

- A hybrid SLAM approach using stereo vision or depth sensors for initial mapping and a single camera or image sensor for localization, employing deep learning-based image classification and geometric PnP techniques to create a hybrid metric-topological map that is continuously updated, reducing memory requirements and costs by using a single camera instead of LIDAR.

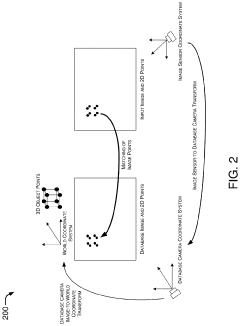

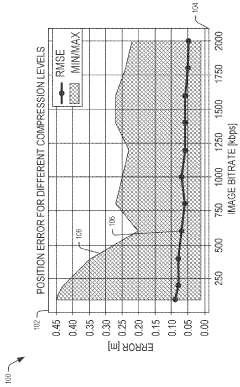

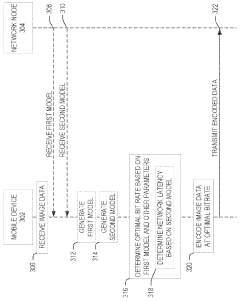

Bitrate adaptation for edge-assisted localization given network availability for mobile devices

Patent | WO2024136880A1

Innovation

- A dynamic bitrate adaptation method for edge-assisted SLAM, where a model determines an optimal image data bitrate based on network latency, map presence, and environment bitrate, allowing for compression and transmission at an improved bitrate to enhance localization performance.
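
The general idea of latency-aware bitrate selection can be illustrated with a simple rule: pick the highest bitrate whose per-frame delay still fits a latency budget. This is a hypothetical heuristic for illustration, not the model claimed in WO2024136880A1; the bitrate ladder, the 50% budget relaxation when no map exists, and the use of half the round-trip time as one-way latency are all assumptions.

```python
def choose_bitrate_kbps(ladder_kbps, frame_rate_hz, bandwidth_kbps, rtt_ms,
                        budget_ms, map_available):
    """Pick the highest bitrate whose per-frame delay fits the latency budget.

    Hypothetical heuristic: when no prior map covers the area, the budget is
    relaxed by 50% to favour image quality for map building.
    """
    budget = budget_ms * (1.0 if map_available else 1.5)
    best = min(ladder_kbps)  # fall back to the lowest rung
    for rate in sorted(ladder_kbps):
        if rate > bandwidth_kbps:
            break  # sustained rate would exceed link capacity
        frame_kbits = rate / frame_rate_hz                 # size of one encoded frame
        tx_ms = 1000.0 * frame_kbits / bandwidth_kbps      # serialization delay
        if rtt_ms / 2.0 + tx_ms <= budget:                 # assume one-way latency = RTT / 2
            best = rate
    return best


ladder = [500, 1000, 2000, 4000]
mapped = choose_bitrate_kbps(ladder, 10, 3000, 40, 60, map_available=True)
unmapped = choose_bitrate_kbps(ladder, 10, 3000, 40, 60, map_available=False)
```

On a 3 Mbit/s link with 40 ms round-trip time and a 60 ms budget, this rule selects 1000 kbit/s when a map already exists and 2000 kbit/s when the area is unmapped, trading latency for image quality only when the extra detail helps build the map.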

Security and Privacy Implications of SLAM-enabled Surveillance

The integration of SLAM technology in surveillance drones raises significant security and privacy concerns that must be addressed comprehensively. As these systems create detailed 3D maps of environments and track movements within them, they inherently collect vast amounts of potentially sensitive data about individuals, properties, and activities without explicit consent.

From a privacy perspective, SLAM-enabled surveillance drones can capture high-resolution spatial data that may reveal personal information beyond what traditional surveillance systems collect. The persistent nature of these systems allows for continuous monitoring and tracking of individuals across extended periods, creating detailed behavior profiles. This capability introduces risks of function creep, where systems deployed for legitimate security purposes gradually expand to more invasive monitoring applications.

Data security presents another critical challenge. The rich environmental data collected by SLAM systems requires robust protection against unauthorized access. Breaches could expose sensitive information about facility layouts, security vulnerabilities, or personal activities. Additionally, the transmission of this data between drones and base stations creates potential interception points that malicious actors might exploit.

Regulatory frameworks worldwide are struggling to keep pace with these technological developments. Current privacy laws often fail to adequately address the unique capabilities of SLAM-enabled surveillance, creating legal gray areas that complicate deployment and governance. Organizations implementing these systems face uncertain compliance requirements and potential liability issues.

The autonomous decision-making capabilities of advanced SLAM systems introduce additional concerns. As these systems increasingly interpret the environments they map, questions arise about accountability for misidentifications or privacy violations resulting from algorithmic decisions rather than human oversight.

Potential mitigation strategies include implementing privacy-by-design principles in SLAM system development, such as automatic blurring of faces or private areas, data minimization techniques, and strict retention policies. Technical safeguards like end-to-end encryption for data transmission and storage, access controls, and regular security audits are essential components of responsible deployment.
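
Automatic masking can be applied onboard before imagery is stored or transmitted. The sketch below mosaics (pixelates) a rectangular region of a grayscale image represented as nested lists; the bounding box is assumed to come from a face or privacy-zone detector, which is not shown. Mosaicking coarsens fine detail, but production systems often prefer solid masking, since pixelation at large block sizes is safer than at small ones.

```python
def pixelate_region(image, top, left, height, width, block=8):
    """Return a copy of a grayscale image with one rectangular region mosaicked.

    image: list of rows of ints. Each block x block tile inside the region is
    replaced by its rounded mean; pixels outside the region are unchanged.
    """
    out = [row[:] for row in image]  # never modify the source frame in place
    bottom = min(top + height, len(image))
    right = min(left + width, len(image[0]))
    for r0 in range(top, bottom, block):
        for c0 in range(left, right, block):
            r1, c1 = min(r0 + block, bottom), min(c0 + block, right)
            tile = [image[r][c] for r in range(r0, r1) for c in range(c0, c1)]
            mean = round(sum(tile) / len(tile))
            for r in range(r0, r1):
                for c in range(c0, c1):
                    out[r][c] = mean
    return out


frame = [
    [0, 4, 9],
    [8, 12, 9],
    [9, 9, 9],
]
masked = pixelate_region(frame, top=0, left=0, height=2, width=2, block=2)
```

Applying the mask before recording, rather than at playback, is what makes this a data-minimization measure: the unmasked pixels never leave the drone.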

Transparent governance frameworks that clearly define appropriate use cases, establish oversight mechanisms, and provide avenues for public input can help balance security benefits with privacy protections. As this technology continues to evolve, ongoing ethical assessment and regulatory development will be crucial to ensure SLAM-enabled surveillance serves public safety without undermining fundamental privacy rights.

Energy Efficiency and Flight Time Optimization

Energy efficiency remains a critical challenge for surveillance drones that run SLAM. Current intelligent surveillance drones typically achieve flight times of 20-30 minutes, which significantly limits extended surveillance missions. Propulsion typically accounts for most of the power budget, and the onboard processing units required for real-time SLAM, together with the sensor arrays, add both a continuous electrical load and payload mass that the propulsion system must carry.
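A first-order endurance estimate is usable battery energy divided by average electrical power. The sketch below applies that relation to a hypothetical platform (100 Wh pack, 80% usable capacity, 200 W propulsion, 30 W SLAM computer, 10 W sensors; all numbers are assumptions, not measurements) and reports each subsystem's share of the budget. It ignores voltage sag, temperature, and the coupling between payload mass and propulsion power.

```python
def flight_time_min(battery_wh: float, loads_w: dict, usable_fraction: float = 0.8) -> float:
    """Endurance in minutes = usable battery energy / total average electrical power."""
    return 60.0 * battery_wh * usable_fraction / sum(loads_w.values())


def power_shares(loads_w: dict) -> dict:
    """Fraction of the total power budget drawn by each subsystem."""
    total = sum(loads_w.values())
    return {name: w / total for name, w in loads_w.items()}


# Hypothetical platform: 100 Wh pack, average loads in watts.
loads = {"propulsion": 200.0, "slam_compute": 30.0, "sensors": 10.0}
print(f"{flight_time_min(100.0, loads):.1f} min")  # 20.0 min
for name, share in power_shares(loads).items():
    print(f"{name}: {share:.1%}")
```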

Battery technology presents both limitations and opportunities. Lithium-polymer batteries dominate the market because of their favorable energy density (approximately 150-200 Wh/kg), while research into solid-state batteries suggests that energy-density improvements of 2-3 times may be achievable within the next five years. Meanwhile, hydrogen fuel cells are emerging as viable alternatives for extended missions, offering energy densities of up to 700 Wh/kg, though at the cost of increased system complexity.
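To see what these energy densities mean for endurance, the sketch below holds a hypothetical 0.6 kg energy-storage budget and a 240 W average draw constant and converts each density quoted above into stored energy and approximate flight time. Applying the same 80% usable fraction to every technology is a simplifying assumption, and the 700 Wh/kg fuel-cell figure is taken as given even though the effective value depends on how much tank and stack mass is counted.

```python
# Energy densities quoted in the text; the fuel-cell figure is an upper bound.
ENERGY_DENSITY_WH_PER_KG = {
    "Li-Po (low)": 150.0,
    "Li-Po (high)": 200.0,
    "Hydrogen fuel cell (upper bound)": 700.0,
}


def stored_energy_wh(mass_kg: float, density_wh_per_kg: float) -> float:
    """Energy carried by a storage system of the given mass."""
    return mass_kg * density_wh_per_kg


def endurance_min(energy_wh: float, avg_power_w: float, usable_fraction: float = 0.8) -> float:
    """Flight time in minutes for a constant average electrical draw."""
    return 60.0 * energy_wh * usable_fraction / avg_power_w


# Hypothetical 0.6 kg storage budget and 240 W average draw, held constant.
for name, density in ENERGY_DENSITY_WH_PER_KG.items():
    energy = stored_energy_wh(0.6, density)
    print(f"{name}: {energy:.0f} Wh, ~{endurance_min(energy, 240.0):.0f} min")
```

Because the mass budget is fixed, the propulsion load stays roughly constant and endurance scales directly with energy density.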

Computational efficiency is another important avenue for extending flight time. Embedded edge-computing hardware optimized for vision and AI workloads such as SLAM has been reported to reduce power consumption by 30-45% compared with general-purpose processors. Notable platforms include NVIDIA's Jetson series and Intel's Movidius VPUs, which balance computational capability against power draw.
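How much a compute-power reduction lengthens flight depends on compute's share of the total draw, since endurance scales with the inverse of average power. The sketch below turns the 30-45% range quoted above into a flight-time gain for a hypothetical 240 W platform with a 30 W SLAM computer (assumed numbers). It ignores any mass savings from lighter hardware, which would add a further, separate benefit.

```python
def endurance_gain(total_w: float, compute_w: float, compute_reduction: float) -> float:
    """Fractional flight-time gain when only the compute load shrinks.

    Endurance is proportional to 1 / average power, so cutting compute power by
    `compute_reduction` (0..1) changes flight time by total / (total - saved) - 1.
    """
    if not 0.0 <= compute_reduction <= 1.0:
        raise ValueError("compute_reduction must be between 0 and 1")
    saved_w = compute_w * compute_reduction
    return total_w / (total_w - saved_w) - 1.0


# Hypothetical 240 W platform with a 30 W SLAM computer, at both ends of the 30-45% range.
for reduction in (0.30, 0.45):
    gain = endurance_gain(240.0, 30.0, reduction)
    print(f"{reduction:.0%} less compute power -> {gain:.1%} longer flight")
```

On platforms where compute draws a larger fraction of the budget, such as small airframes with heavy perception stacks, the same percentage reduction yields a proportionally larger gain.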

Dynamic power management strategies are particularly promising: adaptive algorithms modulate computational resources according to mission requirements. Field tests indicate that context-aware processing, which reduces SLAM precision in low-complexity environments while maintaining high fidelity in detail-rich scenes, can extend flight times by 15-25% without significant performance degradation.
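Context-aware processing can be sketched as a small scheduler that selects a SLAM fidelity level from a scene-complexity score. The version below is a minimal sketch assuming the score is already normalized to [0, 1] (for example, tracked features divided by tracker capacity); it uses two thresholds (hysteresis) so the mode does not flap when complexity hovers near a single cut-off, and it forces full fidelity whenever tracking is reported as degraded. `SlamMode`, `MODE_SETTINGS`, `AdaptiveSlamScheduler`, and all threshold and setting values are hypothetical.

```python
from enum import Enum


class SlamMode(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical per-mode settings; real values depend on the SLAM pipeline and hardware.
MODE_SETTINGS = {
    SlamMode.LOW: {"frame_rate_hz": 10, "max_features": 500},
    SlamMode.HIGH: {"frame_rate_hz": 30, "max_features": 2000},
}


class AdaptiveSlamScheduler:
    """Pick a SLAM fidelity level from a scene-complexity score, with hysteresis."""

    def __init__(self, up_threshold: float = 0.6, down_threshold: float = 0.4):
        if not down_threshold < up_threshold:
            raise ValueError("down_threshold must be below up_threshold")
        self.up = up_threshold
        self.down = down_threshold
        self.mode = SlamMode.HIGH  # start at full fidelity until the scene is assessed

    def update(self, complexity: float, tracking_ok: bool = True) -> SlamMode:
        """complexity is assumed to be normalized to [0, 1]."""
        if not tracking_ok:
            self.mode = SlamMode.HIGH  # never save power while localization is degraded
        elif self.mode is SlamMode.HIGH and complexity < self.down:
            self.mode = SlamMode.LOW
        elif self.mode is SlamMode.LOW and complexity > self.up:
            self.mode = SlamMode.HIGH
        return self.mode


scheduler = AdaptiveSlamScheduler()
modes = [scheduler.update(c) for c in (0.8, 0.5, 0.3, 0.5, 0.7)]
print([m.value for m in modes])  # ['high', 'high', 'low', 'low', 'high']
print(MODE_SETTINGS[modes[2]])   # {'frame_rate_hz': 10, 'max_features': 500}
```

Note that the score of 0.5 leaves the mode unchanged in both directions; only crossing the lower or upper threshold triggers a switch.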

Aerodynamic optimization also contributes substantially to energy efficiency. Recent innovations in drone frame design have reduced drag coefficients by up to 18%, while advanced propeller designs have improved propulsion efficiency by 7-12%. Biomimetic approaches, particularly those inspired by bird wing structures, have demonstrated promising results in wind tunnel testing, with energy savings of 8-15% under various flight conditions.
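The drag and propeller figures combine through two standard relations: parasite drag power is $P = \tfrac{1}{2}\rho v^3 C_d A$, and electrical input is that aerodynamic power divided by propulsive efficiency. The sketch below applies an 18% lower $C_d$ and a 10% relative efficiency gain (0.50 to 0.55) to a hypothetical airframe at 10 m/s; the frontal area, $C_d$, and efficiency values are assumptions, and "7-12%" is interpreted here as a relative rather than percentage-point improvement. It covers only parasite drag in forward flight, not the induced power needed to generate lift.

```python
RHO_SEA_LEVEL = 1.225  # kg/m^3, standard sea-level air density


def parasite_power_w(v_m_s: float, cd: float, area_m2: float,
                     rho: float = RHO_SEA_LEVEL) -> float:
    """Power to overcome parasite drag: P = 0.5 * rho * v^3 * Cd * A (watts)."""
    return 0.5 * rho * v_m_s ** 3 * cd * area_m2


def electrical_power_w(aero_power_w: float, propulsive_efficiency: float) -> float:
    """Electrical input needed to deliver a given aerodynamic power."""
    return aero_power_w / propulsive_efficiency


# Hypothetical airframe in 10 m/s forward flight.
before = electrical_power_w(parasite_power_w(10.0, cd=1.0, area_m2=0.05), 0.50)
# 18% lower Cd and a 10% relative gain in propulsive efficiency (0.50 -> 0.55).
after = electrical_power_w(parasite_power_w(10.0, cd=0.82, area_m2=0.05), 0.55)
print(f"drag-related electrical power: {before:.1f} W -> {after:.1f} W")
```

A relative efficiency gain of x cuts electrical power by 1 - 1/(1 + x), so the quoted 7-12% corresponds to roughly 6.5-10.7% less power for the same aerodynamic work.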

Multi-modal energy harvesting is an emerging frontier, with prototype systems incorporating flexible solar panels (conversion efficiency ~22%), piezoelectric elements that generate power from vibration, and thermal gradient harvesters. Although these remain supplementary rather than primary power sources, together they can extend mission durations by 10-30%, depending on environmental conditions.
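The solar contribution can be estimated as irradiance times panel area times conversion efficiency, and the resulting flight-time extension follows from how much of the average load it offsets. The sketch below assumes 0.1 m² of panels at the ~22% efficiency quoted above, full sun of 1000 W/m², and a 240 W average load; all except the efficiency are hypothetical, and the added panel mass (which raises propulsion power) is ignored, so the result is optimistic.

```python
def solar_power_w(irradiance_w_m2: float, panel_area_m2: float, efficiency: float) -> float:
    """Electrical power from a panel: irradiance * area * conversion efficiency."""
    return irradiance_w_m2 * panel_area_m2 * efficiency


def endurance_extension(avg_load_w: float, harvested_w: float) -> float:
    """Fractional flight-time gain when harvesting offsets part of the average load."""
    if harvested_w >= avg_load_w:
        raise ValueError("harvest covers the whole load; endurance is no longer battery-limited")
    return avg_load_w / (avg_load_w - harvested_w) - 1.0


# Hypothetical: 0.1 m^2 of 22%-efficient panels in 1000 W/m^2 full sun, 240 W load.
harvest = solar_power_w(1000.0, 0.1, 0.22)
gain = endurance_extension(240.0, harvest)
print(f"harvest {harvest:.1f} W -> about {gain:.0%} longer flight")  # harvest 22.0 W -> about 10% longer flight
```

Lower sun angles, cloud cover, or panel orientation away from the sun reduce the harvest proportionally, which is one reason the reported benefit varies with environmental conditions.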
