High-Performance Computing For Large-Scale Finite Element Simulations

AUG 28, 2025 · 9 MIN READ

HPC Evolution and Simulation Goals

High-Performance Computing (HPC) has evolved dramatically over the past decades, transforming from specialized supercomputers to distributed computing environments that leverage parallel processing capabilities. The journey began with vector processors in the 1970s, progressed through massively parallel systems in the 1990s, and has now entered an era of heterogeneous computing architectures that combine CPUs, GPUs, and specialized accelerators. This evolution has been driven by the increasing complexity of scientific and engineering problems that demand unprecedented computational power.

Finite Element Analysis (FEA), as a numerical method for solving complex engineering problems, has been a primary beneficiary of these HPC advancements. The technique divides complex structures into smaller, manageable elements, creating systems with millions or even billions of degrees of freedom that require substantial computational resources. Early simulations were limited to simplified models with coarse meshes, but modern HPC systems enable high-fidelity simulations with fine-grained meshes that capture intricate physical phenomena with remarkable accuracy.

The primary technical goal in this domain is to achieve scalable performance for large-scale FEA simulations that can efficiently utilize thousands of computing nodes while maintaining numerical stability and accuracy. This involves developing algorithms that minimize communication overhead, balance computational loads across heterogeneous resources, and exploit data locality to overcome memory bandwidth limitations. Additionally, there is a growing emphasis on reducing the time-to-solution for complex simulations, enabling engineers to perform more design iterations within constrained development cycles.

Another critical objective is enhancing the fidelity of simulations by incorporating multi-physics capabilities that can simultaneously model structural mechanics, fluid dynamics, heat transfer, and electromagnetic phenomena. This holistic approach provides more realistic predictions of system behavior but introduces significant computational challenges due to the coupling between different physical domains operating at various time and length scales.

Energy efficiency has emerged as an increasingly important goal, with researchers seeking to optimize algorithms and hardware configurations to maximize computational output per watt of power consumed. This focus on "green HPC" is driven by both environmental concerns and the practical limitations of power delivery and cooling systems in large computing facilities.

Looking forward, the field aims to leverage emerging technologies such as quantum computing and neuromorphic architectures to overcome current computational barriers, potentially enabling simulations of unprecedented scale and complexity that could revolutionize product development across industries ranging from aerospace and automotive to biomedical engineering and materials science.

Market Demand for Large-Scale FEM Solutions

The market for large-scale Finite Element Method (FEM) solutions is experiencing robust growth, driven primarily by increasing complexity in engineering design and analysis across multiple industries. According to recent market research, the global FEM software market reached approximately $6.3 billion in 2022 and is projected to grow at a compound annual growth rate of 9.8% through 2028.

Automotive and aerospace industries represent the largest market segments, collectively accounting for over 45% of the total market share. These sectors demand high-performance computing solutions for FEM to simulate complex scenarios such as crash tests, aerodynamic performance, and structural integrity under various conditions. The ability to run these simulations accurately and efficiently directly translates to reduced physical prototyping costs, which can save manufacturers millions in development expenses.

Energy sector applications, particularly in renewable energy infrastructure development, have emerged as the fastest-growing segment with 14.2% annual growth. Wind turbine design optimization, offshore platform structural analysis, and nuclear containment simulations all require increasingly sophisticated FEM capabilities to meet stringent safety and efficiency standards.

Healthcare and biomedical engineering applications represent an emerging market with significant potential, currently growing at 12.7% annually. Applications include implant design optimization, tissue engineering, and surgical planning simulations. These applications often involve complex multi-physics problems that combine structural mechanics with fluid dynamics or electromagnetic interactions.

From an end-user perspective, there is a clear shift in market demand from traditional licensed software packages toward cloud-based high-performance computing solutions for FEM. This transition is driven by the need for scalable computing resources that can handle increasingly complex simulations without requiring massive capital investments in computing infrastructure.

Regional analysis indicates North America leads the market with 38% share, followed by Europe (29%) and Asia-Pacific (24%). However, the Asia-Pacific region shows the highest growth rate at 11.3%, driven by rapid industrialization in China and India, and increasing adoption of advanced engineering simulation technologies.

Key customer requirements identified through market surveys include: reduced simulation time for complex models (cited by 87% of respondents), improved parallel processing capabilities (82%), better integration with CAD systems (76%), enhanced visualization of results (71%), and more intuitive user interfaces (68%). These requirements highlight the critical need for continued advancement in high-performance computing solutions specifically optimized for large-scale FEM applications.

Current HPC Challenges in FEM Implementation

Despite significant advancements in High-Performance Computing (HPC) for Finite Element Method (FEM) simulations, several critical challenges persist that limit the full potential of large-scale implementations. The exponential growth in problem size and complexity has outpaced hardware improvements, creating a computational bottleneck. Modern FEM applications in fields such as aerospace engineering, biomedical modeling, and climate science often require simulations with billions of degrees of freedom, pushing current HPC architectures to their limits.

Memory bandwidth constraints represent one of the most significant barriers. As FEM models grow in size, the memory requirements increase dramatically, often exceeding available system memory. This leads to excessive data movement between memory hierarchies, creating performance bottlenecks that significantly reduce computational efficiency. Even with high-performance interconnects, the latency associated with data transfer can account for up to 70% of total simulation time in large-scale problems.
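
The bandwidth limit described above can be illustrated with the roofline model, which caps attainable performance at the smaller of peak compute and memory bandwidth times arithmetic intensity. The sketch below is a minimal illustration, not a measurement: the node figures (`PEAK`, `BW`) are hypothetical, and the byte count for a sparse matrix-vector product is a simplifying assumption that ignores vector and row-pointer traffic.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gbs: float, flops_per_byte: float) -> float:
    """Roofline bound: performance is capped by compute or by memory traffic."""
    return min(peak_gflops, bandwidth_gbs * flops_per_byte)

# Hypothetical node (assumed values): 3000 GFLOP/s peak, 200 GB/s memory bandwidth.
PEAK, BW = 3000.0, 200.0

# Double-precision CSR sparse matrix-vector product, per stored nonzero:
# 2 flops (multiply + add) against roughly 12 bytes read
# (8-byte value + 4-byte column index). This is an assumed, simplified count.
spmv_intensity = 2.0 / 12.0

bound = attainable_gflops(PEAK, BW, spmv_intensity)
fraction_of_peak = bound / PEAK
print(f"SpMV bound: {bound:.1f} GFLOP/s ({fraction_of_peak:.1%} of peak)")
```

Under these assumed numbers the kernel is bounded at about 33 GFLOP/s, roughly 1% of the hypothetical peak, which is why sparse FEM solvers are usually treated as memory-bound rather than compute-bound.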

Load balancing presents another formidable challenge, particularly for adaptive mesh refinement techniques. Dynamic mesh adaptation during simulation creates computational imbalances across processing units, with some processors becoming overloaded while others remain underutilized. Current domain decomposition strategies struggle to maintain optimal workload distribution throughout the simulation lifecycle, resulting in reduced parallel efficiency and increased execution time.
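
A common way to quantify this imbalance is the ratio of the busiest rank's load to the mean load. The sketch below compares a naive contiguous assignment with a greedy longest-processing-time reassignment. The cost values and function names are hypothetical, and the heuristic deliberately ignores mesh connectivity, which a real repartitioner must respect to keep communication low.

```python
import heapq

def imbalance(loads):
    """Busiest rank's load divided by the mean load (1.0 means perfect balance)."""
    return max(loads) / (sum(loads) / len(loads))

def greedy_rebalance(costs, n_ranks):
    """Longest-processing-time heuristic: hand each item to the least-loaded rank."""
    heap = [(0.0, rank) for rank in range(n_ranks)]
    heapq.heapify(heap)
    loads = [0.0] * n_ranks
    for cost in sorted(costs, reverse=True):
        load, rank = heapq.heappop(heap)
        loads[rank] = load + cost
        heapq.heappush(heap, (loads[rank], rank))
    return loads

# Hypothetical per-subdomain costs after refinement concentrated work in one region.
costs = [6.0, 5.0, 1.0, 1.0, 2.0, 1.0, 2.0, 2.0]

# Naive assignment: contiguous blocks of two subdomains per rank (4 ranks).
naive = [sum(costs[i:i + 2]) for i in range(0, len(costs), 2)]
balanced = greedy_rebalance(costs, 4)

print(f"naive imbalance:    {imbalance(naive):.2f}")
print(f"balanced imbalance: {imbalance(balanced):.2f}")
```

For these assumed costs the imbalance factor drops from 2.2 to 1.2. Since every rank waits for the slowest one at each synchronization point, that factor is approximately the slowdown relative to a perfectly balanced run.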

Scalability issues become increasingly pronounced as simulations expand to utilize thousands of computing cores. Communication overhead grows non-linearly with the number of processors, creating diminishing returns beyond certain scale thresholds. Studies indicate that many FEM applications achieve only 10-30% of theoretical peak performance on large supercomputing systems due to these scalability limitations.
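
The diminishing returns can be reproduced with a simple strong-scaling model: an Amdahl-style compute term plus a communication term that grows with the processor count. All parameters below are hypothetical, and the `log2(p)` communication cost is an assumption (it resembles a tree-based collective), so the numbers illustrate the trend rather than any particular code.

```python
import math

def run_time(p, t1=1000.0, serial_frac=0.01, comm_cost=0.5):
    """Modelled wall time on p processors (all parameters are assumed values)."""
    compute = t1 * (serial_frac + (1.0 - serial_frac) / p)
    comm = comm_cost * math.log2(p) if p > 1 else 0.0
    return compute + comm

def efficiency(p, **kw):
    """Parallel efficiency: speedup divided by processor count."""
    return run_time(1, **kw) / (p * run_time(p, **kw))

effs = {p: efficiency(p) for p in (1, 16, 256, 4096)}
for p, e in effs.items():
    print(f"p={p:5d}  time={run_time(p):8.2f}  efficiency={e:.3f}")
```

With these assumed parameters efficiency falls from about 0.85 on 16 processors to about 0.22 on 256 and under 0.02 on 4096, because the serial fraction and communication term stop shrinking while the parallel work per processor keeps falling.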

Energy consumption has emerged as a critical concern for sustained large-scale FEM simulations. Power requirements for complex simulations can reach megawatt levels, imposing significant operational costs and environmental impacts. Current HPC architectures lack efficient power management mechanisms specifically optimized for the irregular computation and communication patterns characteristic of FEM workloads.

Software complexity and portability challenges further complicate FEM implementations. Legacy code bases often struggle to efficiently utilize heterogeneous computing resources such as GPUs, FPGAs, and specialized accelerators. The diversity of hardware architectures requires significant code adaptation and optimization, creating substantial development overhead and maintenance challenges for simulation software.

Fault tolerance becomes increasingly critical as simulation scale increases. With thousands of components operating simultaneously, the probability of hardware failures during extended simulation runs approaches certainty. Current checkpoint-restart mechanisms introduce significant overhead, sometimes consuming up to 25% of total computation time in large-scale simulations.
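
The trade-off between checkpoint cost and lost work is often estimated with Young's first-order approximation, in which the optimal interval between checkpoints is the square root of twice the checkpoint cost times the system mean time between failures (MTBF). The sketch below applies it under stated assumptions: independent node failures, and hypothetical values for node MTBF, node count, and checkpoint duration.

```python
import math

def system_mtbf(node_mtbf_s: float, n_nodes: int) -> float:
    """With independent, exponentially distributed failures, MTBF shrinks as 1/N."""
    return node_mtbf_s / n_nodes

def young_interval(checkpoint_cost_s: float, mtbf_s: float) -> float:
    """Young's approximation of the optimal compute time between checkpoints."""
    return math.sqrt(2.0 * checkpoint_cost_s * mtbf_s)

# Hypothetical system: 5-year node MTBF, 4096 nodes, 10-minute checkpoint write.
node_mtbf = 5 * 365 * 24 * 3600.0
checkpoint_cost = 600.0

mtbf = system_mtbf(node_mtbf, 4096)
interval = young_interval(checkpoint_cost, mtbf)
# Fraction of wall time spent writing checkpoints (rework after a failure not included).
overhead = checkpoint_cost / (interval + checkpoint_cost)

print(f"system MTBF: {mtbf / 3600:.1f} h")
print(f"checkpoint every {interval / 3600:.1f} h, write overhead {overhead:.1%}")
```

For these assumed values the system fails roughly every 10.7 hours, the suggested interval is about 1.9 hours, and checkpoint writes alone take about 8% of wall time. Larger node counts or slower storage push that share higher.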

Memory bandwidth constraints represent one of the most significant barriers. As FEM models grow in size, the memory requirements increase dramatically, often exceeding available system memory. This leads to excessive data movement between memory hierarchies, creating performance bottlenecks that significantly reduce computational efficiency. Even with high-performance interconnects, the latency associated with data transfer can account for up to 70% of total simulation time in large-scale problems.

Load balancing presents another formidable challenge, particularly for adaptive mesh refinement techniques. Dynamic mesh adaptation during simulation creates computational imbalances across processing units, with some processors becoming overloaded while others remain underutilized. Current domain decomposition strategies struggle to maintain optimal workload distribution throughout the simulation lifecycle, resulting in reduced parallel efficiency and increased execution time.

Scalability issues become increasingly pronounced as simulations expand to utilize thousands of computing cores. Communication overhead grows non-linearly with the number of processors, creating diminishing returns beyond certain scale thresholds. Studies indicate that many FEM applications achieve only 10-30% of theoretical peak performance on large supercomputing systems due to these scalability limitations.

Energy consumption has emerged as a critical concern for sustained large-scale FEM simulations. Power requirements for complex simulations can reach megawatt levels, imposing significant operational costs and environmental impacts. Current HPC architectures lack efficient power management mechanisms specifically optimized for the irregular computation and communication patterns characteristic of FEM workloads.

Software complexity and portability challenges further complicate FEM implementations. Legacy code bases often struggle to efficiently utilize heterogeneous computing resources such as GPUs, FPGAs, and specialized accelerators. The diversity of hardware architectures requires significant code adaptation and optimization, creating substantial development overhead and maintenance challenges for simulation software.

Fault tolerance becomes increasingly critical as simulation scale increases. With thousands of components operating simultaneously, the probability of hardware failures during extended simulation runs approaches certainty. Current checkpoint-restart mechanisms introduce significant overhead, sometimes consuming up to 25% of total computation time in large-scale simulations.

State-of-the-Art Parallel Computing Approaches

01 Resource allocation and optimization in HPC systems

Efficient resource allocation is critical for high-performance computing systems. This includes techniques for optimizing CPU, memory, and network resources across distributed computing environments. Advanced algorithms can dynamically allocate resources based on workload demands, ensuring optimal utilization while maintaining performance levels. These approaches help balance computational loads across multiple nodes and prevent bottlenecks that could degrade overall system performance.

- Workload management and scheduling techniques: Effective workload management and scheduling are essential for maximizing HPC performance. This involves sophisticated algorithms that prioritize tasks, manage job queues, and distribute computational workloads across available resources. Advanced scheduling techniques can predict resource requirements, minimize wait times, and optimize execution paths. These systems can adapt to changing conditions and workload characteristics to maintain high throughput and efficiency in complex computing environments.

- Performance monitoring and analytics: Comprehensive monitoring and analytics tools enable real-time assessment of HPC system performance. These solutions collect metrics across various system components, analyze performance patterns, and identify potential bottlenecks or inefficiencies. Advanced analytics can provide insights into system behavior, predict performance issues before they impact operations, and recommend optimization strategies. This data-driven approach allows administrators to fine-tune configurations and improve overall computing efficiency.

- Virtualization and containerization for HPC: Virtualization and containerization technologies enable flexible deployment and isolation of HPC workloads. These approaches allow for better resource utilization, improved portability across different computing environments, and simplified management of complex software dependencies. By abstracting the underlying hardware, these technologies facilitate scalability and provide consistent execution environments. They also support hybrid cloud deployments, allowing HPC workloads to seamlessly span on-premises and cloud resources.

- Network optimization for distributed computing: Network performance is crucial for distributed HPC systems where data must be efficiently transferred between compute nodes. Advanced techniques focus on minimizing latency, optimizing bandwidth utilization, and implementing efficient communication protocols. This includes intelligent data routing, network topology optimization, and specialized hardware acceleration. By reducing communication overhead and improving data transfer rates, these approaches significantly enhance the overall performance of distributed computing applications.

02 Workload management and scheduling techniques

Effective workload management and scheduling are essential for maximizing HPC performance. This involves sophisticated algorithms that prioritize tasks, manage job queues, and distribute computational workloads across available resources. Advanced scheduling techniques can predict resource requirements, identify potential conflicts, and optimize execution sequences to minimize idle time and maximize throughput. These systems adapt to changing conditions and workload characteristics to maintain optimal performance.

03 Performance monitoring and analytics

Comprehensive monitoring and analytics tools enable real-time assessment of HPC system performance. These solutions collect metrics across various system components, analyze performance patterns, and identify potential bottlenecks or inefficiencies. Advanced analytics can predict performance degradation before it impacts users, allowing for proactive optimization. Visualization tools help administrators understand complex performance data and make informed decisions to maintain optimal system operation.

04 Virtualization and containerization for HPC

Virtualization and containerization technologies enable flexible deployment and isolation of HPC workloads. These approaches allow for consistent execution environments across heterogeneous infrastructure while minimizing overhead. Container orchestration systems can automatically scale resources based on demand, improving resource utilization and performance. These technologies facilitate portability of HPC applications across different computing environments while maintaining performance characteristics.

05 Parallel processing and acceleration techniques

Advanced parallel processing techniques significantly enhance HPC performance by distributing computational tasks across multiple processing units. This includes methods for data parallelism, task parallelism, and hybrid approaches that optimize for specific workloads. Hardware accelerators such as GPUs, FPGAs, and specialized processors can be integrated to speed up specific computational tasks. Efficient algorithms for work distribution and synchronization minimize overhead and maximize the benefits of parallel execution.

Leading HPC and FEM Software Providers

The market for high-performance computing in large-scale finite element simulation is currently in a growth phase, expanding rapidly as demand for complex engineering simulation rises across industries. The global market size is estimated to exceed $5 billion, driven by automotive, aerospace, and energy sectors that require advanced simulation capabilities. Leading commercial vendors such as ANSYS, Siemens, and Autodesk offer mature software with sophisticated parallel computing capabilities, while academic institutions such as Tsinghua University and Zhejiang University are advancing fundamental research. Major industrial corporations including Boeing, Mercedes-Benz, and IBM are investing heavily in proprietary HPC infrastructure to gain a competitive advantage through faster simulation cycles and more accurate results. Together these players form a diverse ecosystem of specialized solutions across application domains.

ANSYS, Inc.

Technical Solution: ANSYS has developed a comprehensive high-performance computing (HPC) solution for large-scale finite element simulations through their ANSYS Mechanical platform. Their approach utilizes domain decomposition methods where large models are divided into smaller subdomains that can be solved in parallel across multiple computing nodes. ANSYS implements advanced sparse matrix solvers including direct sparse solvers for smaller problems and iterative solvers with preconditioners for larger models. Their Distributed ANSYS technology enables scaling across hundreds of cores with near-linear speedup for certain problem classes. ANSYS has also integrated GPU acceleration capabilities, allowing computationally intensive operations like matrix assembly and equation solving to leverage NVIDIA GPU hardware, achieving up to 5x performance improvements for certain simulation types[1]. Their HPC framework supports both shared-memory parallelism within nodes and distributed-memory parallelism across nodes using MPI (Message Passing Interface), enabling efficient utilization of modern heterogeneous computing architectures.

Strengths: Industry-leading scalability across multiple nodes with proven performance on problems with hundreds of millions of degrees of freedom; mature ecosystem with pre/post-processing tools optimized for HPC workflows; extensive validation across industries. Weaknesses: Proprietary licensing model can be cost-prohibitive for some organizations; performance scaling may plateau for certain problem types; requires specialized expertise to fully optimize HPC configurations.
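
To make the idea of an iterative solver with a preconditioner concrete, the sketch below implements conjugate gradients with a diagonal (Jacobi) preconditioner on a small 1D stiffness matrix. It is a generic textbook method written in plain Python for illustration only and is not a description of ANSYS's solvers; the function names and the test problem are assumptions.

```python
import math

def tridiag_matvec(n, v):
    """y = A v for the 1D stiffness matrix tridiag(-1, 2, -1), stored implicitly."""
    y = [2.0 * v[i] for i in range(n)]
    for i in range(n - 1):
        y[i] -= v[i + 1]
        y[i + 1] -= v[i]
    return y

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def jacobi_pcg(matvec, diag, b, tol=1e-10, max_iter=1000):
    """Conjugate gradients for a symmetric positive definite system, Jacobi-preconditioned."""
    x = [0.0] * len(b)
    r = list(b)                                   # residual for the zero initial guess
    z = [ri / di for ri, di in zip(r, diag)]      # apply the preconditioner M^-1 = diag(A)^-1
    p = list(z)
    rz = dot(r, z)
    b_norm = math.sqrt(dot(b, b))
    for it in range(1, max_iter + 1):
        Ap = matvec(p)
        alpha = rz / dot(p, Ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, Ap)]
        if math.sqrt(dot(r, r)) <= tol * b_norm:
            return x, it
        z = [ri / di for ri, di in zip(r, diag)]
        rz_new = dot(r, z)
        p = [zi + (rz_new / rz) * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x, max_iter

n = 50
b = [1.0] * n
x, iterations = jacobi_pcg(lambda v: tridiag_matvec(n, v), [2.0] * n, b)
residual = max(abs(bi - yi) for bi, yi in zip(b, tridiag_matvec(n, x)))
print(f"converged in {iterations} iterations, max residual {residual:.2e}")
```

Each iteration needs only matrix-vector products, dot products, and vector updates. In a distributed setting the first maps onto neighbour exchanges between subdomains and the second onto global reductions, which is one reason iterative methods are preferred over direct factorization for very large models.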

International Business Machines Corp.

Technical Solution: IBM has pioneered high-performance computing solutions for large-scale finite element simulations through their IBM Spectrum LSF platform and Power Systems architecture. Their approach combines hardware and software innovations specifically designed for computationally intensive engineering workloads. IBM's Power9 and Power10 processors feature high memory bandwidth (up to 230GB/s per socket) and specialized acceleration interfaces that significantly reduce data movement bottlenecks in finite element analysis[2]. Their implementation leverages OpenMP for shared-memory parallelism and optimized MPI libraries for distributed computing across large clusters. IBM has developed adaptive mesh refinement techniques that dynamically allocate computational resources to critical regions of the simulation domain, improving both accuracy and efficiency. Their systems utilize hierarchical storage management with automatic data migration between high-performance flash storage and traditional disk storage to optimize I/O performance for the massive datasets generated in large-scale simulations[3]. IBM's solutions also incorporate machine learning techniques to predict simulation convergence and optimize solver parameters.

Strengths: Superior memory bandwidth architecture particularly beneficial for memory-bound FEA problems; mature enterprise-grade job scheduling and resource management; strong integration with cloud resources for hybrid computing models. Weaknesses: Higher initial investment compared to commodity x86 clusters; requires specialized expertise to fully leverage Power architecture advantages; ecosystem of compatible software may be more limited than x86 alternatives.

Breakthrough Algorithms for FEM Acceleration

Finite element methods and systems

Patent (Active): US10394978B2

Innovation

- The FEM Multigrid Gaussian Belief Propagation (FMGaBP) algorithm reformulates FEM problems using probabilistic inference with graphical models, eliminating the need for sparse data-structures and global algebraic operations by employing distributed message communications and localized computations, suitable for various parallel computing architectures.

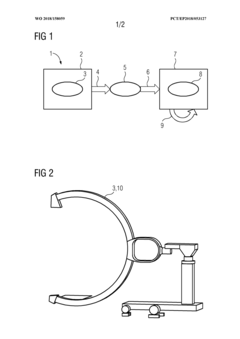

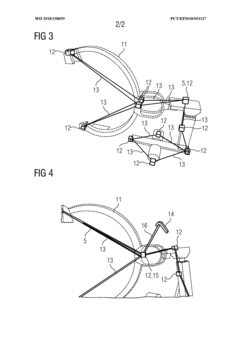

Method and simulation device for simulating at least one component

Patent: WO2018158059A1

Innovation

- A method that generates a reduced-complexity model from a detailed finite element model using model reduction techniques, allowing for real-time simulation with minimal loss of accuracy, enabling faster calculation and analysis without the need for separate real-time capable models.

Hardware-Software Co-design for FEM Optimization

Hardware-software co-design represents a paradigm shift in optimizing Finite Element Method (FEM) simulations, addressing the computational bottlenecks that traditional approaches face when handling large-scale problems. This integrated approach synchronizes hardware architecture development with software algorithm design, creating systems specifically tailored for FEM workloads.

The co-design methodology begins with comprehensive workload characterization, identifying computation and memory access patterns unique to FEM operations. These patterns typically involve sparse matrix operations, irregular memory access, and varying degrees of parallelism across different simulation phases. By understanding these characteristics, hardware components can be specifically engineered to accelerate critical FEM operations.
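
The sparse, irregular access pattern mentioned above is easiest to see in a sparse matrix-vector product over compressed sparse row (CSR) storage. The sketch below is a minimal plain-Python version, with a tiny assembled stiffness matrix chosen for illustration; real FEM codes use optimized library kernels.

```python
def csr_matvec(row_ptr, col_idx, values, x):
    """y = A x for a matrix in CSR form."""
    y = [0.0] * (len(row_ptr) - 1)
    for i in range(len(y)):
        acc = 0.0
        for k in range(row_ptr[i], row_ptr[i + 1]):
            # values[k] and col_idx[k] stream contiguously, but x[col_idx[k]]
            # is an indirect read whose address depends on the mesh connectivity.
            acc += values[k] * x[col_idx[k]]
        y[i] = acc
    return y

# Stiffness matrix of a 1D bar with 3 nodes and 2 unit-stiffness elements:
# [[ 1, -1,  0],
#  [-1,  2, -1],
#  [ 0, -1,  1]]
row_ptr = [0, 2, 5, 7]
col_idx = [0, 1, 0, 1, 2, 1, 2]
values = [1.0, -1.0, -1.0, 2.0, -1.0, -1.0, 1.0]

y = csr_matvec(row_ptr, col_idx, values, [1.0, 2.0, 4.0])
print(y)
```

On an unstructured 3D mesh the column indices of a row can be far apart, so the indirect reads of `x` defeat hardware prefetching and waste cache lines. That is the behaviour specialized memory hierarchies and node-renumbering schemes try to mitigate.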

Custom processor architectures have emerged as a key component in this co-design approach. Field-Programmable Gate Arrays (FPGAs) and Application-Specific Integrated Circuits (ASICs) are being developed with specialized execution units optimized for matrix assembly and solver operations. These architectures incorporate high-bandwidth memory interfaces and custom cache hierarchies that align with FEM data structures, significantly reducing memory latency issues.

On the software side, algorithms are being redesigned to exploit hardware capabilities. This includes domain decomposition methods that match the memory hierarchy of target systems, matrix assembly techniques that maximize cache utilization, and solver algorithms that leverage specialized vector processing units. Compiler technologies have also evolved to automatically transform FEM code to utilize hardware-specific features without requiring extensive manual optimization.

Memory system optimization represents another critical aspect of co-design efforts. Novel memory architectures, including High Bandwidth Memory (HBM) and compute-near-memory designs, are being integrated with FEM software that employs locality-aware data structures and access patterns. These innovations significantly reduce the memory wall problem that traditionally limits FEM performance.

Communication optimization techniques bridge hardware interconnect capabilities with algorithmic requirements. Hardware-aware partitioning algorithms minimize cross-node communication while specialized network topologies support the communication patterns common in FEM simulations. This synergy between network hardware design and distributed FEM algorithms has enabled scaling to unprecedented problem sizes.
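
One simple proxy for cross-node communication is the edge cut: the number of adjacent element pairs that end up on different ranks. The sketch below compares two equally balanced partitions of a small structured mesh. The mesh, the two partitions, and the function name are hypothetical, and production codes typically delegate this step to a graph partitioner such as METIS rather than hand-written rules.

```python
def edge_cut(edges, part):
    """Count mesh-graph edges whose two elements are assigned to different ranks."""
    return sum(1 for u, v in edges if part[u] != part[v])

# 2 x 4 grid of elements numbered row by row; edges join face-adjacent elements.
cols, rows = 4, 2
edges = []
for r in range(rows):
    for c in range(cols):
        e = r * cols + c
        if c + 1 < cols:
            edges.append((e, e + 1))      # right neighbour
        if r + 1 < rows:
            edges.append((e, e + cols))   # neighbour in the next row

n_elems = rows * cols
striped = [e % 2 for e in range(n_elems)]                         # alternate columns
blocked = [0 if (e % cols) < 2 else 1 for e in range(n_elems)]    # left half / right half

print("striped cut:", edge_cut(edges, striped))
print("blocked cut:", edge_cut(edges, blocked))
```

Both partitions give each rank four elements, yet the striped one cuts 6 of the 10 edges while the blocked one cuts 2. Each cut edge implies shared degrees of freedom that must be exchanged every solver iteration, so compact subdomains reduce traffic without changing the compute load.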

The co-design approach has yielded remarkable performance improvements, with some specialized systems demonstrating 10-100x speedups over general-purpose computing platforms for specific FEM applications. As simulation demands continue to grow, this integrated hardware-software methodology will likely become the standard approach for next-generation high-performance FEM systems.

The co-design methodology begins with comprehensive workload characterization, identifying computation and memory access patterns unique to FEM operations. These patterns typically involve sparse matrix operations, irregular memory access, and varying degrees of parallelism across different simulation phases. By understanding these characteristics, hardware components can be specifically engineered to accelerate critical FEM operations.

Custom processor architectures have emerged as a key component in this co-design approach. Field-Programmable Gate Arrays (FPGAs) and Application-Specific Integrated Circuits (ASICs) are being developed with specialized execution units optimized for matrix assembly and solver operations. These architectures incorporate high-bandwidth memory interfaces and custom cache hierarchies that align with FEM data structures, significantly reducing memory latency issues.

On the software side, algorithms are being redesigned to exploit hardware capabilities. This includes domain decomposition methods that match the memory hierarchy of target systems, matrix assembly techniques that maximize cache utilization, and solver algorithms that leverage specialized vector processing units. Compiler technologies have also evolved to automatically transform FEM code to utilize hardware-specific features without requiring extensive manual optimization.

Memory system optimization represents another critical aspect of co-design efforts. Novel memory architectures, including High Bandwidth Memory (HBM) and compute-near-memory designs, are being paired with FEM software that employs locality-aware data structures and access patterns. Together, these measures mitigate the memory wall that traditionally limits FEM performance.

Communication optimization techniques bridge hardware interconnect capabilities with algorithmic requirements. Hardware-aware partitioning algorithms minimize cross-node communication while specialized network topologies support the communication patterns common in FEM simulations. This synergy between network hardware design and distributed FEM algorithms has enabled scaling to unprecedented problem sizes.
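One simple way to quantify how well a partition limits cross-node communication is the edge cut: the number of mesh-graph edges whose endpoints are assigned to different partitions. The sketch below compares two hand-written partitions of a small grid; the function name `edge_cut` and the example mesh are assumptions for illustration, and production codes would typically delegate the partitioning itself to a graph partitioning library.

```python
def edge_cut(edges, part):
    """Count mesh-graph edges whose endpoints live on different partitions."""
    return sum(1 for u, v in edges if part[u] != part[v])


# Hypothetical 2 x 4 grid of nodes, numbered row-major:
#   0 1 2 3
#   4 5 6 7
edges = [
    (0, 1), (1, 2), (2, 3),          # top row
    (4, 5), (5, 6), (6, 7),          # bottom row
    (0, 4), (1, 5), (2, 6), (3, 7),  # vertical links
]

contiguous = [0, 0, 1, 1, 0, 0, 1, 1]   # left half vs. right half
interleaved = [0, 1, 0, 1, 0, 1, 0, 1]  # alternating columns

cut_contiguous = edge_cut(edges, contiguous)
cut_interleaved = edge_cut(edges, interleaved)
print(cut_contiguous, cut_interleaved)
```

Both partitions give each node the same amount of work (four grid nodes each), but the contiguous split cuts fewer edges and therefore needs less halo exchange per iteration.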

The co-design approach has yielded substantial performance improvements, with some specialized systems reported to achieve 10-100x speedups over general-purpose computing platforms for specific FEM applications. As simulation demands continue to grow, this integrated hardware-software methodology will likely become the standard approach for next-generation high-performance FEM systems.

Energy Efficiency in Large-Scale Simulations

Energy efficiency has become a critical concern in large-scale finite element simulations as computational demands and the associated power consumption continue to grow. Modern high-performance computing (HPC) facilities running complex finite element analyses can consume megawatts of power, resulting in substantial operational costs and environmental impact. This consumption is split mainly among processing units (60-70%), cooling infrastructure (20-30%), and peripheral components (10-15%).

Recent advancements in energy-efficient computing architectures have demonstrated promising results for finite element applications. GPU-accelerated computing has shown energy efficiency improvements of 3-7x compared to traditional CPU-only approaches for specific finite element workloads. Similarly, field-programmable gate arrays (FPGAs) offer 5-15x better performance-per-watt for certain matrix operations common in finite element analysis.

Software-level optimizations present another avenue for energy reduction. Adaptive mesh refinement techniques can reduce computational requirements by 30-50% by focusing resources on critical regions of the simulation domain. Algorithm-level improvements, such as mixed-precision computing and approximate computing, have demonstrated energy savings of 20-40% with minimal impact on simulation accuracy for many engineering applications.
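As an illustration of mixed-precision computing, the sketch below applies iterative refinement to a small 1D model problem: the linear solves run in emulated single precision, while residuals and solution updates are kept in double precision. Everything here is an assumption made for the example: the helper names (`f32`, `solve_laplace_1d`, `residual`), the tridiagonal (-1, 2, -1) matrix, and the use of `struct` to round values to 32-bit floats. It does not model the energy savings themselves, only why accuracy can be preserved.

```python
import math
import struct


def f32(v):
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", v))[0]


def solve_laplace_1d(rhs, rnd=lambda v: v):
    """Thomas algorithm for the tridiagonal stencil (-1, 2, -1).

    `rnd` is applied after each arithmetic step; passing `f32` emulates
    a single-precision solver, the default keeps double precision.
    """
    n = len(rhs)
    c = [0.0] * n  # modified super-diagonal
    d = [0.0] * n  # modified right-hand side
    c[0] = rnd(-1.0 / 2.0)
    d[0] = rnd(rnd(rhs[0]) / 2.0)
    for i in range(1, n):
        denom = rnd(2.0 + c[i - 1])
        c[i] = rnd(-1.0 / denom)
        d[i] = rnd(rnd(rnd(rhs[i]) + d[i - 1]) / denom)
    x = [0.0] * n
    x[n - 1] = d[n - 1]
    for i in range(n - 2, -1, -1):
        x[i] = rnd(d[i] - rnd(c[i] * x[i + 1]))
    return x


def residual(x, b):
    """Return r = b - A x in double precision for the (-1, 2, -1) matrix."""
    n = len(x)
    r = []
    for i in range(n):
        left = x[i - 1] if i > 0 else 0.0
        right = x[i + 1] if i < n - 1 else 0.0
        r.append(b[i] - (2.0 * x[i] - left - right))
    return r


def max_error(x, ref):
    return max(abs(a - b) for a, b in zip(x, ref))


n = 50
b = [math.sin(0.1 * (i + 1)) for i in range(n)]
x_ref = solve_laplace_1d(b)            # double-precision reference solution

x = solve_laplace_1d(b, rnd=f32)       # cheap low-precision solve
err_low = max_error(x, x_ref)

for _ in range(5):                     # refinement sweeps
    r = residual(x, b)                 # residual in high precision
    dx = solve_laplace_1d(r, rnd=f32)  # correction in low precision
    x = [xi + di for xi, di in zip(x, dx)]
err_mixed = max_error(x, x_ref)

print(err_low, err_mixed)
```

The design choice is that the expensive part (the solve) uses the cheaper precision, while the inexpensive residual evaluation uses the higher one. A real implementation would factorize once and reuse the factors for each correction rather than repeating the full solve.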

Dynamic power management strategies have proven effective in large-scale deployments. Techniques such as frequency scaling, workload consolidation, and selective component deactivation can reduce energy consumption by 15-25% during simulation runtime. These approaches are particularly valuable for long-running finite element simulations with varying computational intensity phases.
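The benefit of frequency scaling in phases of varying computational intensity can be seen in a deliberately simplified energy model. The sketch below assumes that dynamic power scales with the cube of the clock frequency ratio, that static power is constant, and that only the compute-bound share of a phase slows down when the clock is lowered. The function `phase_energy` and all wattage and timing values are hypothetical and not calibrated against any real system.

```python
def phase_energy(freq_ratio, t_nominal, compute_fraction,
                 p_static=40.0, p_dynamic_nominal=160.0):
    """Return (energy in joules, runtime in seconds) for one simulation phase.

    freq_ratio        clock frequency relative to nominal (1.0 = nominal)
    t_nominal         phase runtime at nominal frequency, in seconds
    compute_fraction  share of the runtime that scales with 1 / frequency
    """
    # Only the compute-bound share stretches when the clock is lowered;
    # the memory-bound share is assumed to be unaffected.
    runtime = t_nominal * (compute_fraction / freq_ratio
                           + (1.0 - compute_fraction))
    # Assumed power model: constant static part plus cubic dynamic part.
    power = p_static + p_dynamic_nominal * freq_ratio ** 3
    return power * runtime, runtime


# Hypothetical memory-bound phase: 20% of the time scales with frequency.
e_nominal, t_nominal = phase_energy(1.0, 100.0, 0.2)
e_scaled, t_scaled = phase_energy(0.8, 100.0, 0.2)

saving = 1.0 - e_scaled / e_nominal
print(f"runtime {t_nominal:.1f}s -> {t_scaled:.1f}s, energy saving {saving:.0%}")
```

Under these assumptions a memory-bound phase loses little runtime at the lower clock while drawing noticeably less power, which is the trade-off that dynamic power management exploits. For a compute-bound phase the same model gives a much larger runtime penalty.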

Cooling innovations represent a significant opportunity for system-level efficiency gains. Liquid cooling solutions have demonstrated 30-45% reduction in cooling energy compared to traditional air cooling for high-density HPC clusters running finite element workloads. Emerging technologies like immersion cooling show potential for even greater efficiency improvements, with early implementations reporting 40-60% total energy savings.

The industry is witnessing a paradigm shift toward energy-aware simulation workflows. This includes energy consumption modeling, simulation scheduling based on energy availability, and integration with renewable energy sources. Research indicates that energy-aware scheduling can reduce carbon footprint by 20-30% while maintaining simulation throughput in large-scale environments.
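Scheduling based on energy availability can be sketched as a sliding-window search: given an hourly forecast of grid carbon intensity, pick the start hour that minimizes the summed intensity over the job's duration. The function `best_start` and the forecast values are hypothetical; a real scheduler would also account for queue state, deadlines, and varying power draw.

```python
def best_start(carbon_intensity, duration):
    """Return (start_index, total) of the contiguous window of length
    `duration` with the lowest summed carbon intensity."""
    if duration <= 0 or duration > len(carbon_intensity):
        raise ValueError("duration must fit inside the forecast")
    window = sum(carbon_intensity[:duration])
    best_idx, best_total = 0, window
    for start in range(1, len(carbon_intensity) - duration + 1):
        # Slide the window one hour: add the new hour, drop the oldest one.
        window += carbon_intensity[start + duration - 1]
        window -= carbon_intensity[start - 1]
        if window < best_total:
            best_idx, best_total = start, window
    return best_idx, best_total


# Hypothetical hourly forecast in gCO2 per kWh.
forecast = [450, 430, 400, 380, 300, 220, 180, 200, 260, 350, 420, 460]

start, total = best_start(forecast, duration=3)
print(start, total)
```

The search runs in time linear in the forecast length, so it is cheap enough to re-evaluate whenever the forecast is updated.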
