Optimizing Hall Effect Sensor Electronics for Signal Fidelity

SEP 22, 2025 · 9 MIN READ

Hall Effect Sensor Technology Background and Objectives

The Hall effect, discovered by Edwin Hall in 1879, underpins sensors that have become integral components in a wide range of electronic systems. First observed as a transverse voltage across a current-carrying conductor placed in a magnetic field, the phenomenon has since been harnessed for precise magnetic field detection and measurement. The technology has progressed from basic magnetic field detection to sophisticated integrated circuits capable of high-precision measurements in challenging environments.

The evolution of Hall Effect sensor technology has been marked by several key advancements, including miniaturization, increased sensitivity, and improved signal processing capabilities. Early implementations were limited by material constraints and signal noise issues, but modern sensors incorporate advanced semiconductor materials and integrated signal conditioning circuits that significantly enhance performance. Recent developments have focused on reducing power consumption, improving temperature stability, and enhancing immunity to external electromagnetic interference.

Current market trends indicate a growing demand for Hall Effect sensors with superior signal fidelity across diverse applications including automotive systems, industrial automation, consumer electronics, and medical devices. The push toward Industry 4.0 and IoT integration has further accelerated the need for sensors that can provide reliable, high-quality signals in increasingly complex electronic environments.

The primary technical objective in optimizing Hall Effect sensor electronics for signal fidelity is to maximize the signal-to-noise ratio while maintaining operational stability across varying environmental conditions. This involves addressing challenges related to offset voltage, temperature drift, electromagnetic interference, and power supply variations that can compromise measurement accuracy.

Another critical goal is to develop signal conditioning architectures that can effectively filter unwanted noise components while preserving the integrity of the desired signal. This includes implementing advanced amplification techniques, filtering methods, and digital signal processing algorithms tailored specifically for Hall Effect sensor outputs.

The integration of Hall Effect sensors with modern microcontrollers and communication interfaces represents another important objective, enabling seamless data acquisition and processing in complex systems. This integration must maintain signal fidelity throughout the entire signal chain, from sensor to final application.

Looking forward, the technology roadmap aims to achieve higher levels of integration, with Hall Effect sensing elements combined with sophisticated signal processing capabilities in single-chip solutions. These advancements will support emerging applications in fields such as robotics, autonomous vehicles, and precision manufacturing, where accurate magnetic field measurements with minimal signal degradation are essential for system performance and reliability.

Market Applications and Demand Analysis

The Hall Effect sensor market has experienced significant growth in recent years, driven primarily by the automotive industry's increasing demand for precise position sensing and contactless measurement solutions. The global Hall Effect sensor market was valued at approximately 2.1 billion USD in 2022 and is projected to reach 3.5 billion USD by 2028, representing a compound annual growth rate of 8.7% during the forecast period.

Automotive applications constitute the largest market segment, accounting for nearly 40% of the total market share. The transition toward electric vehicles and advanced driver assistance systems (ADAS) has substantially increased the need for high-fidelity Hall Effect sensors in applications such as motor commutation, position sensing, and current measurement. The automotive industry's stringent requirements for signal accuracy and reliability under harsh environmental conditions have become key drivers for innovations in signal fidelity optimization.

Industrial automation represents the second-largest application segment, with a market share of approximately 25%. In this sector, Hall Effect sensors are extensively used for speed detection, position sensing, and current monitoring in factory automation systems. The Industry 4.0 paradigm has accelerated demand for sensors with enhanced signal fidelity to support predictive maintenance and real-time monitoring capabilities.

Consumer electronics applications have shown the fastest growth rate at 12.3% annually, particularly in smartphones, wearables, and home appliances. These applications require miniaturized Hall Effect sensors with low power consumption and high signal-to-noise ratios, creating unique challenges for signal fidelity optimization.

Market analysis reveals a growing demand for integrated Hall Effect sensor solutions that combine sensing elements with advanced signal processing capabilities. End-users increasingly require sensors that can provide accurate measurements in noisy electromagnetic environments, with 78% of surveyed industrial customers citing signal fidelity as a critical selection criterion.

Regional market distribution shows Asia-Pacific leading with 45% market share, followed by North America (28%) and Europe (22%). China and South Korea have emerged as manufacturing hubs for Hall Effect sensors, while North America and Europe focus on high-performance applications requiring superior signal fidelity.

The market is witnessing a shift toward programmable Hall Effect sensors that allow for calibration and compensation of signal variations due to temperature fluctuations and aging effects. This trend aligns with the broader industry movement toward more adaptive and intelligent sensing solutions that can maintain signal fidelity across varying operational conditions.

Current Challenges in Hall Sensor Signal Fidelity

Despite significant advancements in Hall effect sensor technology, several critical challenges persist in achieving optimal signal fidelity. The primary issue remains signal-to-noise ratio (SNR) degradation, particularly in industrial environments where electromagnetic interference (EMI) is prevalent. Current Hall sensor systems struggle to maintain signal integrity when exposed to high-frequency noise sources from nearby power electronics, motors, or wireless communication systems.

Temperature drift represents another substantial challenge, with typical Hall sensors exhibiting sensitivity variations of 500-1500 ppm/°C. This thermal dependency creates significant measurement errors across industrial operating temperature ranges (-40°C to 125°C), necessitating complex compensation algorithms that increase system complexity and cost.
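To put the quoted coefficients in perspective, the short sketch below converts a sensitivity drift in ppm/°C into a percentage error and applies a first-order correction. It assumes a purely linear drift model and a 25 °C reference temperature; both are illustrative assumptions, and the function names are hypothetical rather than part of any vendor API.

```python
def sensitivity_error_percent(tc_ppm_per_c, temp_c, ref_temp_c=25.0):
    """Sensitivity error in percent for a linear temperature coefficient."""
    return tc_ppm_per_c * 1e-6 * (temp_c - ref_temp_c) * 100.0


def compensate_first_order(raw_reading, tc_ppm_per_c, temp_c, ref_temp_c=25.0):
    """Divide out the modeled gain change to refer the reading back to ref_temp_c."""
    gain_change = 1.0 + tc_ppm_per_c * 1e-6 * (temp_c - ref_temp_c)
    return raw_reading / gain_change


# Sweep the coefficient range quoted above at both ends of the temperature range.
for tc in (500.0, 1500.0):
    for temp in (-40.0, 125.0):
        err = sensitivity_error_percent(tc, temp)
        print(f"{tc:6.0f} ppm/°C at {temp:6.1f} °C: {err:+6.2f} %")
```

Under this linear model, an uncompensated part at 125 °C reads between 5 % and 15 % high relative to 25 °C, which is why even a simple correction of this kind is usually worthwhile. Real devices tend to show some curvature, so higher-order terms or a lookup table may be needed.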

Offset voltage instability continues to plague Hall sensor performance, with random fluctuations occurring due to package stress, aging effects, and thermal cycling. These offset variations can reach magnitudes of several millivolts, which becomes particularly problematic when measuring weak magnetic fields where the actual Hall voltage may be in the microvolt range.

Bandwidth limitations present obstacles for applications requiring high-speed magnetic field measurements. Current amplifier designs in Hall sensor signal chains typically restrict bandwidth to 20-100 kHz, insufficient for emerging applications in power electronics switching at frequencies exceeding 500 kHz. This limitation stems from inherent trade-offs between bandwidth extension and noise performance.
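The bandwidth versus noise trade-off can be illustrated with a rough estimate. The sketch below assumes white noise and a single-pole low-pass response, for which the equivalent noise bandwidth is π/2 times the cutoff frequency. The noise density used in the loop is a hypothetical placeholder, not a figure for any specific part.

```python
import math


def equivalent_noise_bandwidth_hz(cutoff_hz):
    """Equivalent noise bandwidth of a single-pole low-pass filter."""
    return (math.pi / 2.0) * cutoff_hz


def integrated_noise_rms(noise_density_per_rt_hz, cutoff_hz):
    """RMS noise for a white noise density integrated over the filter bandwidth."""
    return noise_density_per_rt_hz * math.sqrt(equivalent_noise_bandwidth_hz(cutoff_hz))


# Hypothetical white noise density, in tesla per root hertz.
DENSITY_T_PER_RT_HZ = 100e-9

for cutoff in (20e3, 100e3, 500e3):
    noise_ut = integrated_noise_rms(DENSITY_T_PER_RT_HZ, cutoff) * 1e6
    print(f"cutoff {cutoff / 1e3:5.0f} kHz: about {noise_ut:.1f} µT rms")
```

Because RMS noise grows with the square root of bandwidth, extending the cutoff from 20 kHz to 500 kHz raises the integrated noise by a factor of five under these assumptions.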

Linearity errors constitute another significant challenge, with most commercial Hall sensors exhibiting non-linearity errors between 0.5% and 2% across their full measurement range. These non-linearities become particularly problematic in precision applications such as current sensing for battery management systems or motor control, where accurate power calculations depend on highly linear measurements.
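One common way to express a non-linearity figure like the one above is the worst-case deviation from a best-fit straight line, as a percentage of the output span. The sketch below shows that calculation on synthetic data. The transfer curve with a small cubic term is invented for illustration, and other definitions (for example end-point linearity) give different numbers.

```python
def best_fit_line(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def nonlinearity_percent_fs(x, y):
    """Worst-case deviation from the best-fit line, in percent of output span."""
    slope, intercept = best_fit_line(x, y)
    span = max(y) - min(y)
    worst = max(abs(yi - (slope * xi + intercept)) for xi, yi in zip(x, y))
    return 100.0 * worst / span


# Hypothetical sensor: 2.5 V mid-rail, 20 mV/mT, with a small cubic distortion term.
fields_mt = [float(b) for b in range(-50, 51, 10)]
outputs_v = [2.5 + 0.02 * b - 2e-7 * b ** 3 for b in fields_mt]

print(f"non-linearity: {nonlinearity_percent_fs(fields_mt, outputs_v):.3f} % of span")
```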

Power consumption optimization remains difficult, especially for battery-operated IoT applications. Current low-power Hall sensor solutions sacrifice either response time or resolution to achieve power savings, creating an unresolved design tension between performance and energy efficiency.

Integration challenges persist when incorporating Hall sensors into complex systems-on-chip (SoC) designs. The analog front-end circuitry requires careful isolation from digital switching noise, while maintaining small die area and low manufacturing cost. Current isolation techniques often demand additional silicon area or specialized process steps that increase production costs.

Calibration complexity represents a final major hurdle, with most Hall sensor systems requiring multi-point calibration procedures to address individual device variations. These calibration requirements significantly impact manufacturing throughput and increase end-product costs, particularly for high-volume consumer applications.

Signal Conditioning Circuit Solutions

01 Signal conditioning and amplification techniques

Various signal conditioning and amplification techniques are employed to improve Hall effect sensor signal fidelity. These include differential amplification, chopper stabilization, and specialized integrated circuits that reduce noise and increase sensitivity. Advanced filtering methods help eliminate unwanted frequency components while preserving the integrity of the Hall sensor output signal, resulting in more accurate magnetic field measurements.

- Signal conditioning and amplification techniques: Various signal conditioning and amplification techniques are employed to improve Hall effect sensor signal fidelity. These include specialized amplifier circuits, differential amplification methods, and filtering techniques to reduce noise and enhance the quality of the output signal. Such techniques help in maintaining signal integrity even in environments with electromagnetic interference, ensuring accurate measurement of magnetic fields.

- Noise reduction and interference mitigation: Hall effect sensor systems incorporate specific noise reduction and interference mitigation strategies to enhance signal fidelity. These include shielding techniques, common-mode rejection circuits, and specialized filtering to eliminate unwanted electromagnetic interference. Advanced signal processing algorithms are also implemented to distinguish between actual signals and noise, thereby improving the overall accuracy and reliability of the sensor output.

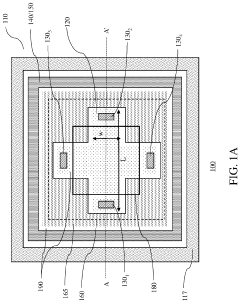

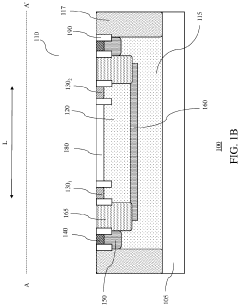

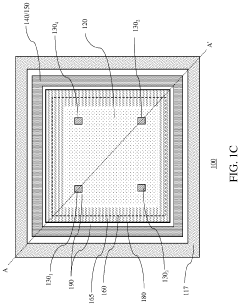

- Integrated circuit design for Hall sensors: Specialized integrated circuit designs are developed specifically for Hall effect sensors to improve signal fidelity. These designs incorporate on-chip signal processing, temperature compensation, and calibration capabilities. The integration of sensor elements with processing circuitry on a single chip reduces signal path length, minimizes external connections, and enhances overall signal quality while reducing susceptibility to external interference.

- Calibration and compensation techniques: Various calibration and compensation techniques are employed to enhance Hall effect sensor signal fidelity. These include temperature compensation circuits, offset voltage correction, and sensitivity adjustment methods. Dynamic calibration techniques allow for real-time adjustment of sensor parameters to account for environmental variations and aging effects, ensuring consistent and accurate measurements over the sensor's operational lifetime.

- Power management and supply stabilization: Effective power management and supply stabilization techniques are crucial for maintaining Hall effect sensor signal fidelity. These include voltage regulation circuits, power filtering, and isolation techniques to prevent power supply noise from affecting sensor performance. Low-power design approaches and specialized power sequencing methods ensure stable operation while minimizing power consumption, particularly important for battery-operated and portable applications.

02 Temperature compensation methods

Temperature variations can significantly affect Hall effect sensor performance and signal fidelity. Various compensation methods are implemented to mitigate these effects, including integrated temperature sensors, calibration circuits, and specialized materials with reduced temperature coefficients. These approaches help maintain consistent sensor output across a wide operating temperature range, ensuring reliable signal fidelity in varying environmental conditions.

03 Sensor design and packaging innovations

Innovations in Hall effect sensor design and packaging contribute significantly to signal fidelity. These include optimized semiconductor materials, specialized magnetic concentrators, and shielding techniques that enhance sensitivity while reducing interference. Advanced packaging methods protect the sensor elements from environmental factors while maintaining precise positioning relative to the magnetic field source, resulting in more stable and accurate signal output.

04 Digital signal processing and calibration

Digital signal processing techniques and calibration methods significantly improve Hall effect sensor signal fidelity. These include analog-to-digital conversion with high resolution, digital filtering algorithms, and programmable gain amplifiers. Factory and self-calibration routines compensate for manufacturing variations and aging effects, while digital interfaces provide robust communication with host systems, ensuring accurate magnetic field measurements under various operating conditions.

05 Noise reduction and interference mitigation

Specialized techniques for noise reduction and interference mitigation are crucial for maintaining Hall effect sensor signal fidelity. These include electromagnetic shielding, common-mode rejection circuits, and strategic sensor placement. Advanced power supply filtering and isolation methods prevent external electrical noise from affecting sensor performance. Chopper stabilization and spinning current techniques are employed to reduce offset and 1/f noise, resulting in cleaner signals with improved signal-to-noise ratio.
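The spinning current technique mentioned above can be sketched with an idealized model: as the bias and sense contacts are rotated through four phases, the Hall voltage keeps its sign while the plate offset alternates, so averaging the phases cancels the offset. The `mismatch` parameter is a hypothetical way to represent imperfect offset reversal, and the voltages are illustrative only.

```python
def spinning_current_average(hall_v, offset_v, mismatch=0.0):
    """Average four spinning-current phases of an idealized Hall plate.

    Even phases see +offset_v; odd phases see the reversed offset, scaled by
    (1 - mismatch) to model imperfect reversal (0.0 means perfect cancellation).
    """
    samples = []
    for phase in range(4):
        if phase % 2 == 0:
            offset = offset_v
        else:
            offset = -(1.0 - mismatch) * offset_v
        samples.append(hall_v + offset)
    return sum(samples) / len(samples)


hall = 20e-6    # hypothetical 20 µV Hall signal
offset = 3e-3   # hypothetical 3 mV plate offset

print(f"single phase : {(hall + offset) * 1e6:8.1f} µV")
print(f"ideal spin   : {spinning_current_average(hall, offset) * 1e6:8.1f} µV")
print(f"1 % mismatch : {spinning_current_average(hall, offset, 0.01) * 1e6:8.1f} µV")
```

In this model the residual offset equals half the mismatch times the raw offset, which suggests why practical designs pay close attention to switch and plate symmetry.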

Key Industry Players and Competitive Landscape

The Hall Effect Sensor Electronics market is currently in a growth phase, with increasing demand driven by automotive, industrial, and consumer electronics applications. The global market size is estimated to reach approximately $2 billion by 2025, growing at a CAGR of 8-10%. Technologically, the field is maturing with key players like Texas Instruments, Infineon Technologies, and Allegro MicroSystems leading innovation in signal fidelity optimization. Companies such as STMicroelectronics, Honeywell, and Robert Bosch are advancing sensor miniaturization and integration capabilities, while Melexis and ams-OSRAM are focusing on specialized applications. Academic institutions including Southeast University and research organizations like Fraunhofer-Gesellschaft are contributing to fundamental advancements in Hall sensor technology, particularly in noise reduction and temperature compensation techniques.

Texas Instruments Incorporated

Technical Solution: Texas Instruments has pioneered Hall Effect sensor signal conditioning through their DRV5x series, which integrates sophisticated analog front-end circuitry with digital processing capabilities. Their approach employs a chopper-stabilized amplification stage with auto-zeroing techniques that periodically sample and cancel offset voltages, achieving offset drift below 2.5 μV/°C[2]. TI's solution incorporates programmable gain amplifiers with digitally controlled feedback networks that maintain optimal signal-to-noise ratios across varying magnetic field strengths[4]. The architecture includes specialized EMI filtering stages with common-mode rejection exceeding 80 dB across a wide frequency range, making it particularly robust in electrically noisy automotive and industrial environments[6]. TI has also implemented advanced temperature compensation using on-chip temperature sensors and calibration tables stored in non-volatile memory, allowing real-time correction of both gain and offset parameters across the entire operating temperature range.

Strengths: Exceptional EMI immunity with integrated filtering that exceeds automotive standards. Comprehensive development ecosystem with evaluation modules and simulation tools accelerates design cycles. Weaknesses: Higher complexity requires more extensive configuration during system integration, and some solutions have higher quiescent current than competing options.
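Table-based temperature compensation of the kind described above can be sketched as linear interpolation between stored calibration points. The table values, units, and function names below are hypothetical and chosen only to illustrate the mechanism. They do not describe the contents of any TI device.

```python
from bisect import bisect_right

# Hypothetical calibration table: (temperature in °C, gain correction, offset in mV).
CAL_TABLE = [
    (-40.0, 1.060, 0.80),
    (25.0, 1.000, 0.00),
    (85.0, 0.950, -0.50),
    (125.0, 0.910, -1.10),
]


def interpolate_cal(temp_c, table=CAL_TABLE):
    """Linearly interpolate gain and offset corrections, clamping at the table ends."""
    temps = [row[0] for row in table]
    temp_c = min(max(temp_c, temps[0]), temps[-1])
    hi = min(bisect_right(temps, temp_c), len(table) - 1)
    lo = hi - 1
    t0, g0, o0 = table[lo]
    t1, g1, o1 = table[hi]
    frac = (temp_c - t0) / (t1 - t0)
    return g0 + frac * (g1 - g0), o0 + frac * (o1 - o0)


def correct_reading(raw_mv, temp_c):
    """Remove the interpolated offset, then apply the interpolated gain correction."""
    gain, offset_mv = interpolate_cal(temp_c)
    return (raw_mv - offset_mv) * gain


for temp in (-40.0, 25.0, 55.0, 125.0):
    print(f"{temp:6.1f} °C -> corrected {correct_reading(100.0, temp):8.3f} mV")
```

A sparse table with interpolation keeps non-volatile memory use small. The trade-off is residual error between calibration points if the true drift is strongly curved.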

Infineon Technologies AG

Technical Solution: Infineon Technologies has developed the TLE49xx family of Hall Effect sensors with integrated signal conditioning optimized for high-fidelity applications. Their approach centers on a monolithic design that combines the Hall sensing element with specialized analog processing circuits on a single silicon die, minimizing parasitic effects and thermal gradients that could degrade signal quality[3]. Infineon's architecture employs a proprietary dynamic offset cancellation technique that samples the Hall voltage at multiple phases and uses digital averaging to eliminate systematic offset errors, achieving typical residual offset below 100 μT[7]. Their sensors incorporate an advanced biasing scheme that maintains the Hall element in its optimal operating region despite temperature and supply voltage variations, ensuring consistent sensitivity across the entire operating range. For applications requiring precise threshold detection, Infineon implements a digitally controlled comparator with programmable hysteresis that prevents output chatter near the switching threshold, with response times under 2 μs for rapid position detection applications[9].

Strengths: Exceptional thermal stability through integrated temperature compensation circuits that maintain accuracy across -40°C to +150°C. Highly optimized power consumption with typical operating current below 2mA. Weaknesses: Limited configurability in some product variants, and relatively higher cost compared to basic Hall sensors without advanced signal conditioning.
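The effect of comparator hysteresis on output chatter can be shown with a small behavioral model. This is a generic sketch of a two-threshold comparator, not Infineon's implementation. The operate and release points are hypothetical values in millitesla.

```python
class HysteresisComparator:
    """Switch on at operate_point and off at release_point (release < operate)."""

    def __init__(self, operate_point, release_point, initial_state=False):
        if release_point >= operate_point:
            raise ValueError("release_point must be below operate_point")
        self.operate_point = operate_point
        self.release_point = release_point
        self.state = initial_state

    def update(self, field):
        if not self.state and field >= self.operate_point:
            self.state = True
        elif self.state and field <= self.release_point:
            self.state = False
        return self.state


def count_transitions(states, initial=False):
    """Count how many times a sequence of output states changes value."""
    count, previous = 0, initial
    for state in states:
        if state != previous:
            count += 1
        previous = state
    return count


# A noisy field hovering around a 10 mT threshold (hypothetical samples).
noisy_field_mt = [9.8, 10.2, 9.9, 10.1, 9.7, 10.3]

plain = [b >= 10.0 for b in noisy_field_mt]
comparator = HysteresisComparator(operate_point=10.0, release_point=7.0)
with_hysteresis = [comparator.update(b) for b in noisy_field_mt]

print("plain threshold transitions:", count_transitions(plain))
print("hysteresis transitions     :", count_transitions(with_hysteresis))
```

With these samples the plain threshold toggles on every reading, while the hysteresis version switches once and holds. Making the thresholds programmable lets the hysteresis band be matched to the expected noise amplitude.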

Core Patents and Innovations in Hall Sensor Electronics

Hall effect sensors with tunable sensitivity and/or resistance

Patent · Active · US20200292631A1

Innovation

- A Hall effect sensor design with a tunable Hall plate thickness, achieved through adjustable implants in the separation layer and bias voltage applied to the separation layer, allowing for customizable current sensitivity and resistance, enabling high voltage and current sensitivity within the same device.

High speed densor circuit for stabilized hall effect sensor

Patent · Inactive · US6265864B1

Innovation

- A four-terminal Hall effect sensor with orthogonally paired terminals and a circuitry that uses pass gate transistors and a timing generator to control the charging and discharging of multiple capacitor pairs, minimizing the size and response time of capacitors to reduce timing delays and correct for error components in the output voltage signal.

Noise Reduction Techniques and Methodologies

Noise reduction in Hall Effect sensor systems represents a critical aspect of signal fidelity optimization. The inherent sensitivity of these sensors to external electromagnetic interference necessitates comprehensive noise mitigation strategies. Traditional approaches include hardware-based solutions such as shielding and filtering, which create protective barriers against ambient electromagnetic fields that would otherwise corrupt sensor readings.

Advanced filtering techniques have evolved significantly, with digital signal processing (DSP) methods now complementing analog filters. Particularly effective are adaptive filters that dynamically adjust their parameters based on real-time noise characteristics, enabling more precise separation of signal from noise even in variable environments. These systems can identify and eliminate periodic noise patterns while preserving the integrity of the actual measurement signal.
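As one concrete instance of an adaptive filter removing periodic noise, the sketch below implements a two-weight LMS noise canceller. It assumes the interference frequency is known (50 Hz mains is used as an example) so that sine and cosine references can be generated. The signal amplitudes, sample rate, and step size `mu` are illustrative assumptions.

```python
import math


def lms_cancel(primary, ref_sin, ref_cos, mu=0.01):
    """Two-weight LMS canceller: subtract an adaptively scaled sine/cosine pair."""
    w_sin = 0.0
    w_cos = 0.0
    cleaned = []
    for d, xs, xc in zip(primary, ref_sin, ref_cos):
        estimate = w_sin * xs + w_cos * xc   # current interference estimate
        err = d - estimate                   # cleaned sample, also the error signal
        w_sin += 2.0 * mu * err * xs         # LMS weight updates
        w_cos += 2.0 * mu * err * xc
        cleaned.append(err)
    return cleaned


def mean_square(values):
    return sum(v * v for v in values) / len(values)


FS = 1000.0   # sample rate in Hz (assumed)
N = 4000
t = [i / FS for i in range(N)]
wanted = [0.5 * math.sin(2 * math.pi * 2.0 * ti) for ti in t]            # slow field signal
hum = [1.0 * math.sin(2 * math.pi * 50.0 * ti + 0.7) for ti in t]        # mains pickup
primary = [w + h for w, h in zip(wanted, hum)]
ref_sin = [math.sin(2 * math.pi * 50.0 * ti) for ti in t]
ref_cos = [math.cos(2 * math.pi * 50.0 * ti) for ti in t]

cleaned = lms_cancel(primary, ref_sin, ref_cos)

# Compare residual error power over the last 1000 samples, after convergence.
before = mean_square([p - w for p, w in zip(primary[-1000:], wanted[-1000:])])
after = mean_square([c - w for c, w in zip(cleaned[-1000:], wanted[-1000:])])
print(f"error power before: {before:.4f}, after: {after:.6f}")
```

The weights track the amplitude and phase of the interference without prior knowledge of either, which is what makes the approach tolerant of slowly changing coupling. A smaller `mu` narrows the notch at the cost of slower convergence.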

Differential sensing configurations have emerged as another powerful methodology, wherein two or more Hall sensors are arranged so that they see the same magnetic field with opposite polarities. Subtracting their outputs cancels common-mode noise that affects both sensors equally, while the field-dependent components add. This approach has demonstrated noise reduction of up to 20 dB in industrial applications.
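The cancellation can be checked numerically with an idealized two-sensor model. The sketch assumes perfectly matched sensitivities and an interference term that is identical on both sensors. The sensitivity, field waveform, and noise level are hypothetical.

```python
import math
import random


def differential_readout(sensor_a, sensor_b):
    """Half the difference of two opposite-polarity sensor outputs."""
    return [(a - b) / 2.0 for a, b in zip(sensor_a, sensor_b)]


rng = random.Random(42)
SENSITIVITY_MV_PER_MT = 20.0   # hypothetical, identical for both sensors

field_mt = [5.0 * math.sin(2 * math.pi * i / 100.0) for i in range(200)]
common_noise_mv = [rng.gauss(0.0, 30.0) for _ in field_mt]   # same on both sensors

sensor_a = [SENSITIVITY_MV_PER_MT * b + n for b, n in zip(field_mt, common_noise_mv)]
sensor_b = [-SENSITIVITY_MV_PER_MT * b + n for b, n in zip(field_mt, common_noise_mv)]

recovered = differential_readout(sensor_a, sensor_b)
worst_error = max(abs(r - SENSITIVITY_MV_PER_MT * b) for r, b in zip(recovered, field_mt))
print(f"worst-case error after subtraction: {worst_error:.2e} mV")
```

In practice the rejection is limited by sensitivity mismatch between the sensors and by noise that is not common to both, such as each element's own thermal noise.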

Chopper stabilization techniques address the particularly troublesome 1/f noise (flicker noise) that dominates at low frequencies. By modulating the Hall voltage to a higher frequency band where 1/f noise is minimal, then demodulating back to baseband after amplification, these systems significantly improve signal-to-noise ratios in precision measurement applications. Recent implementations have achieved noise floors below 100 nT/√Hz.
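The modulate, amplify, demodulate sequence can be sketched in discrete time. In this simplified model the amplifier's low-frequency error is represented by a constant offset plus a slow linear drift, standing in for true 1/f noise. The gain, voltages, and drift rate are hypothetical.

```python
def chopped_measure(hall_v, amp_offset_v, gain, n_cycles=100):
    """Input-referred estimate of hall_v using square-wave chopping.

    amp_offset_v is a function of the sample index that returns the amplifier's
    input-referred error at that instant.
    """
    acc = 0.0
    for k in range(2 * n_cycles):
        chop = 1.0 if k % 2 == 0 else -1.0
        amp_out = gain * (chop * hall_v + amp_offset_v(k))   # modulate, then amplify
        acc += chop * amp_out                                # demodulate and accumulate
    return acc / (2 * n_cycles * gain)                       # average acts as a low-pass


def slow_error(k):
    """Hypothetical amplifier error: 2 mV offset plus 10 nV of drift per sample."""
    return 2e-3 + 1e-8 * k


HALL_V = 50e-6
GAIN = 100.0

unchopped = HALL_V + slow_error(0)
chopped = chopped_measure(HALL_V, slow_error, GAIN)
print(f"unchopped estimate: {unchopped * 1e6:9.2f} µV")
print(f"chopped estimate  : {chopped * 1e6:9.2f} µV")
```

The constant offset cancels exactly because it is multiplied by an alternating sign during demodulation. The slow drift leaves a small residual that shrinks as the chopping rate rises relative to the drift rate.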

Integration of on-chip compensation circuits represents the cutting edge of noise reduction technology. These systems incorporate temperature sensors, offset calibration mechanisms, and dynamic range adjustment capabilities directly within the sensor package. Real-time calibration algorithms continuously monitor and adjust for drift factors, maintaining measurement accuracy across varying environmental conditions.

Frequency domain analysis tools have become increasingly important in identifying and characterizing noise sources. Spectral analysis techniques allow engineers to isolate specific frequency components contributing to measurement error, enabling targeted filtering approaches rather than broadband solutions that might compromise signal integrity.
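A minimal example of spectral analysis for locating a noise source is shown below. It uses a direct discrete Fourier transform from the standard library, which is adequate for short records. The 50 Hz interference, its amplitude, and the sample rate are assumptions made for the demonstration.

```python
import cmath
import math
import random


def magnitude_spectrum(samples, sample_rate_hz):
    """Return (frequency, amplitude) pairs for bins 1 .. N/2 - 1 of a direct DFT."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]   # remove DC so it does not mask peaks
    spectrum = []
    for k in range(1, n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(centered))
        spectrum.append((k * sample_rate_hz / n, 2.0 * abs(acc) / n))
    return spectrum


def dominant_component(samples, sample_rate_hz):
    """Frequency and amplitude of the largest non-DC spectral component."""
    return max(magnitude_spectrum(samples, sample_rate_hz), key=lambda pair: pair[1])


rng = random.Random(0)
FS = 1000.0
N = 500   # 2 Hz bin spacing, so 50 Hz falls exactly on a bin

# Hypothetical sensor record: 2.5 V DC level, 0.2 V of 50 Hz pickup, small random noise.
record = [
    2.5 + 0.2 * math.sin(2 * math.pi * 50.0 * i / FS) + rng.gauss(0.0, 0.02)
    for i in range(N)
]

peak_freq, peak_amp = dominant_component(record, FS)
print(f"dominant interference: {peak_freq:.1f} Hz, amplitude about {peak_amp:.3f} V")
```

Once the dominant component is identified, a narrow notch or synchronous sampling can be aimed at that frequency instead of lowering the overall bandwidth.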

The combination of these methodologies in modern Hall Effect sensor systems has enabled unprecedented levels of measurement precision, with some advanced implementations achieving noise rejection ratios exceeding 80 dB. This progress has expanded the application range of Hall sensors into previously inaccessible domains requiring extreme sensitivity, such as biomedical imaging and quantum computing support systems.

Advanced filtering techniques have evolved significantly, with digital signal processing (DSP) methods now complementing analog filters. Particularly effective are adaptive filters that dynamically adjust their parameters based on real-time noise characteristics, enabling more precise separation of signal from noise even in variable environments. These systems can identify and eliminate periodic noise patterns while preserving the integrity of the actual measurement signal.

Differential sensing configurations have emerged as another powerful methodology, wherein multiple Hall sensors are arranged to detect the same magnetic field but with opposite polarities. By subtracting these signals, common-mode noise affecting both sensors equally is effectively canceled, while the desired differential signal is amplified. This approach has demonstrated noise reduction factors of up to 20dB in industrial applications.

Chopper stabilization techniques address the particularly troublesome 1/f noise (flicker noise) that dominates at low frequencies. By modulating the Hall voltage to a higher frequency band where 1/f noise is minimal, then demodulating back to baseband after amplification, these systems significantly improve signal-to-noise ratios in precision measurement applications. Recent implementations have achieved noise floors below 100nT/√Hz.

Integration of on-chip compensation circuits represents the cutting edge of noise reduction technology. These systems incorporate temperature sensors, offset calibration mechanisms, and dynamic range adjustment capabilities directly within the sensor package. Real-time calibration algorithms continuously monitor and adjust for drift factors, maintaining measurement accuracy across varying environmental conditions.

Frequency domain analysis tools have become increasingly important in identifying and characterizing noise sources. Spectral analysis techniques allow engineers to isolate specific frequency components contributing to measurement error, enabling targeted filtering approaches rather than broadband solutions that might compromise signal integrity.
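To make the spectral approach concrete, the following sketch computes an amplitude spectrum with a direct discrete Fourier transform and lists the strongest components. The synthetic record (a DC level plus two interference tones), the sample rate, and the function names are assumptions made for the example. A production tool would use an FFT library and windowing.

```python
import cmath
import math

def amplitude_spectrum(samples, fs):
    """Return (frequency_hz, amplitude) pairs for bins 1 .. n/2 - 1."""
    n = len(samples)
    spectrum = []
    for k in range(1, n // 2):  # skip the DC bin
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(samples))
        spectrum.append((k * fs / n, 2.0 * abs(acc) / n))
    return spectrum

def strongest(spectrum, count=2):
    """Pick the `count` largest spectral components."""
    return sorted(spectrum, key=lambda fa: fa[1], reverse=True)[:count]

FS = 1000.0  # sample rate in Hz (assumed)
N = 500      # gives 2 Hz frequency resolution
samples = [1.0
           + 0.2 * math.sin(2 * math.pi * 50 * i / FS)
           + 0.05 * math.sin(2 * math.pi * 120 * i / FS)
           for i in range(N)]

peaks = strongest(amplitude_spectrum(samples, FS))
for freq, amp in peaks:
    print(f"{freq:.0f} Hz: amplitude {amp:.3f}")
```

Once the dominant components are known, a narrow notch or an adaptive canceller can target them directly instead of a broadband filter that would also attenuate the measurement signal.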

Environmental Factors Affecting Hall Sensor Performance

Hall Effect sensors, while robust in design, are significantly influenced by environmental factors that can compromise their signal fidelity and measurement accuracy. Temperature variation is one of the most critical, because it directly affects the carrier mobility and concentration of the semiconductor material. This dependency shows up as sensitivity drift, offset voltage fluctuation, and general measurement instability across the operating temperature range; standard silicon-based sensors typically exhibit a sensitivity change of 0.1% to 0.5% per degree Celsius.
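To give a sense of scale, the quoted coefficients can be turned into an error estimate over a temperature excursion. The sketch assumes the drift is linear in temperature and referenced to 25 °C, which is a simplification. The 85 °C operating point is an arbitrary example.

```python
def sensitivity_error_pct(tc_pct_per_c, temp_c, ref_c=25.0):
    """Linear estimate of sensitivity error relative to the reference temperature."""
    return tc_pct_per_c * (temp_c - ref_c)

# Error at 85 degrees C for the low and high ends of the quoted range
for tc in (0.1, 0.5):
    print(f"{tc} %/degC -> {sensitivity_error_pct(tc, 85.0):.1f} % at 85 degC")
```

Under these assumptions, an uncompensated sensor drifts by about 6% to 30% over a 60 °C rise, which is why temperature compensation matters in precision applications.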

Electromagnetic interference (EMI) constitutes another substantial environmental concern for Hall sensor implementations. External magnetic fields from nearby power lines, motors, transformers, or other electronic equipment can introduce noise and distortion into the sensor's output signal. This interference becomes particularly problematic in industrial environments where multiple electromagnetic sources operate simultaneously, potentially leading to false readings or reduced measurement precision.

Mechanical stress and vibration also significantly impact Hall sensor performance. Physical deformation of the semiconductor material due to mounting pressure, thermal expansion, or vibration can alter the material's electrical properties through the piezoresistive effect. These mechanical factors typically manifest as baseline drift and sensitivity changes that are difficult to predict or compensate for in dynamic operating environments.

Humidity and corrosive atmospheres present long-term reliability challenges for Hall sensor electronics. While the semiconductor element itself may be protected, the supporting circuitry and connections remain vulnerable to moisture ingress and corrosion. Protective encapsulation techniques must balance environmental protection with minimal impact on the sensor's magnetic field detection capabilities.

Radiation exposure represents a specialized environmental concern in aerospace, nuclear, and certain medical applications. High-energy particles can cause both transient effects (single event upsets) and cumulative damage (total ionizing dose effects) to the semiconductor material and supporting electronics, potentially leading to permanent sensitivity shifts or complete device failure.

Power supply variations, though often overlooked, can significantly affect measurement accuracy. Hall sensors require stable supply voltages to maintain consistent performance, as fluctuations directly impact the biasing current through the semiconductor element and consequently affect the Hall voltage generated. Modern sensor designs incorporate voltage regulators and reference circuits to mitigate these effects, but residual sensitivity to power supply variations remains a consideration in precision applications.
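One widely used way to reduce residual supply sensitivity, not described in the text above and included here only as an illustrative assumption, is ratiometric measurement: if the sensor output scales with the supply, dividing by the measured supply removes the dependence. The output model and sensitivity value below are hypothetical.

```python
SENS_PER_MT = 0.01  # hypothetical: output shifts by 1% of supply per mT

def sensor_output_v(b_mT, v_supply):
    """Hypothetical ratiometric sensor: mid-supply at zero field."""
    return v_supply * (0.5 + SENS_PER_MT * b_mT)

def field_ratiometric_mT(v_out, v_supply):
    """Normalize by the measured supply before converting to field."""
    return (v_out / v_supply - 0.5) / SENS_PER_MT

def field_fixed_ref_mT(v_out, nominal_supply=5.0):
    """Conversion that wrongly assumes the supply never moves."""
    return (v_out / nominal_supply - 0.5) / SENS_PER_MT

# 10 mT field measured while the supply sits 5% above nominal
v_out = sensor_output_v(10.0, v_supply=5.25)
print(field_fixed_ref_mT(v_out), field_ratiometric_mT(v_out, 5.25))
```

In this model the fixed-reference conversion reads about 13 mT for a 10 mT field, while the ratiometric conversion recovers 10 mT. In practice the same effect is often obtained by using the sensor supply as the ADC reference.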
