Video description method based on cyclic convolution decoding of a complementary attention mechanism

A video description technology based on cyclic convolution, applied in the field of computer vision. It addresses the weakened ability of recurrent neural networks to transmit information, improves the modeling of long-distance dependencies and the accuracy of the generated descriptions, and has good application value.

Embodiment Construction

[0040] To make the objects, technical solutions, and advantages of the present invention clearer, the present invention is described in further detail below with reference to the accompanying drawings and embodiments. It should be understood that the specific embodiments described here serve only to explain the present invention, not to limit it.

[0041] On the contrary, the invention covers any alternatives, modifications, equivalent methods, and schemes within the spirit and scope of the invention as defined by the claims. Further, to give the public a better understanding of the present invention, some specific details are set out in the detailed description below. Those skilled in the art can fully understand the present invention even without the description of these details.

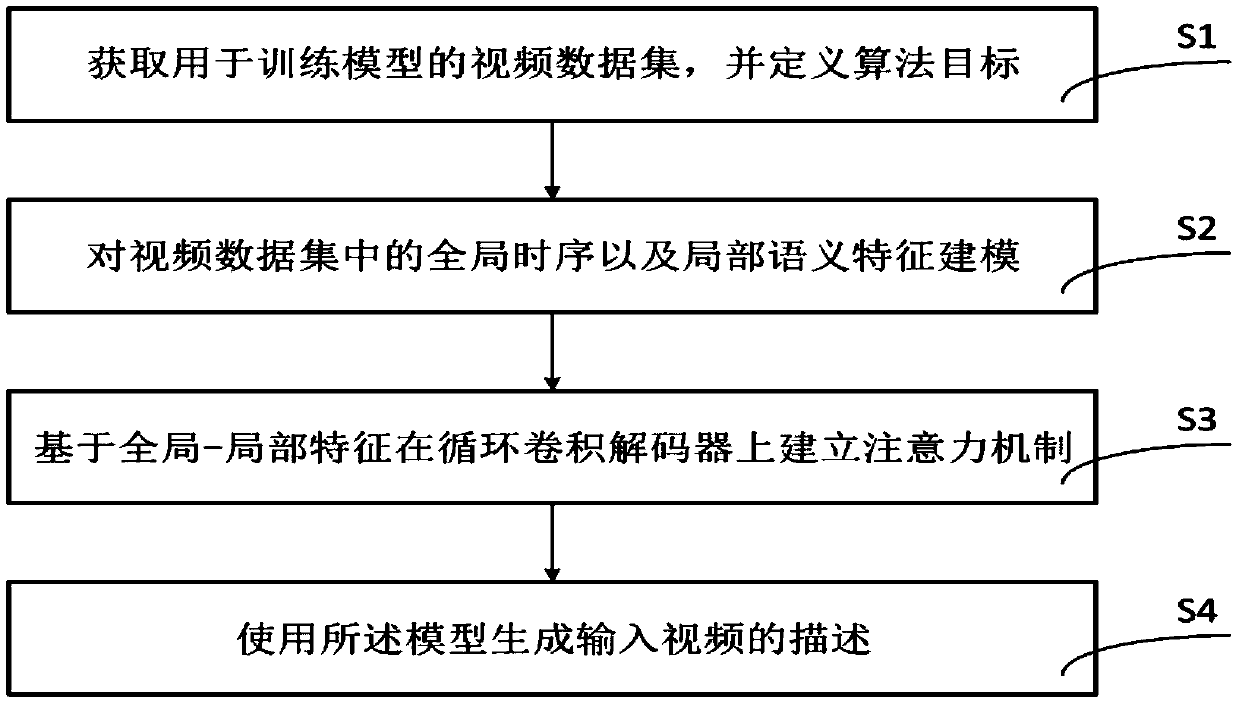

[0042] Referring to Figure 1, in a preferred embodiment of the present invention, the video description g...
