Feature extraction method, device, terminal device and storage medium

A feature extraction and feature fusion technique, applied in the field of computer vision, that addresses low extraction accuracy, avoids feature confusion, and improves detection accuracy.

Examples

Embodiment 1

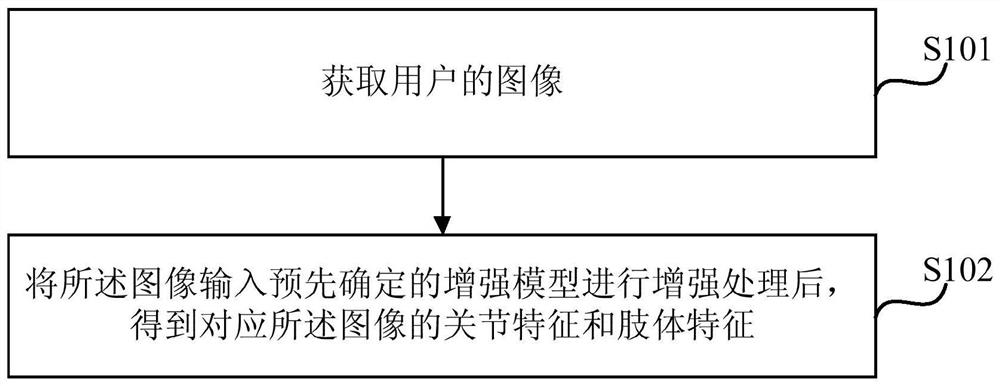

[0043] Figure 1 is a schematic flow chart of a feature extraction method provided in Embodiment 1 of the present invention. The method is applicable to extracting features from a user, specifically the user's body features and joint features. The method can be performed by a feature extraction device, which can be implemented in software and/or hardware and is generally integrated on a terminal device. In this embodiment, the terminal device includes but is not limited to computers, personal digital assistants, and other devices.

[0044] As shown in Figure 1, the feature extraction method provided in Embodiment 1 of the present invention includes the following steps:

[0045] S101. Acquire an image of a user.

[0046] In this embodiment, the image may include a photo of the user. The image can be used to estimate the user's pose, for example to analyze the user's behavior. The i...

Embodiment 2

[0081] Figure 2a is a schematic flowchart of a feature extraction method provided by Embodiment 2 of the present invention. Embodiment 2 is optimized on the basis of the foregoing embodiments. In this embodiment, performing feature fusion and feature migration on the target element area to obtain enhanced joint features and enhanced limb features is further embodied as: inputting the target element area into the joint model to obtain the initial joint feature and the joint fusion feature, where the joint fusion feature is obtained by fusing the features of the joint convolution layer set in the joint model, the joint convolution layer set consists of the convolutional layers in the joint model other than the convolutional layer that outputs the initial joint feature, and the initial joint feature is obtained by convolving the joint fusion feature;

[0082] Inputting the target element area into the limb model to obtain the initial limb feature and the limb fusion feature. The limb fus...
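The relationship between the joint convolution layer set, the joint fusion feature, and the initial joint feature can be illustrated with a minimal pure-Python sketch. It is not the patented implementation. The names `conv1x1` and `joint_model`, the use of 1x1 convolutions, and the choice of channel-wise concatenation as the fusion operation are all assumptions made for illustration; the text only states that the features of the layer set are fused and that the fused feature is then convolved.

```python
from typing import List, Tuple

# A feature map is stored as channels x height x width (nested lists).
FeatureMap = List[List[List[float]]]


def conv1x1(x: FeatureMap, weights: List[List[float]]) -> FeatureMap:
    """1x1 convolution: each output channel is a weighted sum of the input channels.

    weights[o][c] is the weight from input channel c to output channel o.
    """
    height, width = len(x[0]), len(x[0][0])
    return [
        [
            [sum(w * x[c][i][j] for c, w in enumerate(row)) for j in range(width)]
            for i in range(height)
        ]
        for row in weights
    ]


def joint_model(
    region: FeatureMap,
    layer_set_weights: List[List[List[float]]],
    output_weights: List[List[float]],
) -> Tuple[FeatureMap, FeatureMap]:
    """Hypothetical joint model returning (initial joint feature, joint fusion feature)."""
    intermediate = []
    current = region
    # The "joint convolution layer set": every layer except the output layer.
    for weights in layer_set_weights:
        current = conv1x1(current, weights)
        intermediate.append(current)
    # Assumption: fusion is channel-wise concatenation of the layer outputs.
    fusion = [channel for feature in intermediate for channel in feature]
    # The output layer convolves the fused feature into the initial joint feature.
    initial = conv1x1(fusion, output_weights)
    return initial, fusion


# Toy input: one channel, 2x2 target element area.
region = [[[1.0, 2.0], [3.0, 4.0]]]
layer_set_weights = [[[2.0]], [[1.0], [-1.0]]]  # layer 1: 1->1 channel, layer 2: 1->2 channels
output_weights = [[1.0, 1.0, 1.0]]              # output layer: 3 fused channels -> 1 channel
initial, fusion = joint_model(region, layer_set_weights, output_weights)
```

Under the same assumptions, a limb model would follow the same pattern with its own layer set and output layer.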

Embodiment 3

[0139] Figure 3 is a schematic structural diagram of a feature extraction device provided by Embodiment 3 of the present invention. The device is applicable to extracting features from a user, specifically the user's body features and joint features. The device can be implemented in software and/or hardware and is generally integrated on a terminal device.

[0140] As shown in Figure 3, the device includes an acquisition module 31 and a processing module 32;

[0141] The acquisition module 31 is used to obtain the image of the user;

[0142] The processing module 32 is configured to input the image into a predetermined enhancement model for enhancement processing to obtain the joint features and limb features corresponding to the image, where the enhancement processing includes at least one of the following: feature fusion, feature migration, and feature extraction.
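The two-module structure described above can be sketched as follows. This is only an illustration of how the modules relate. The class names, the `source` callable, and the `enhancement_model` callable are hypothetical stand-ins, and the enhancement model is treated as an opaque function that returns a pair of joint features and limb features.

```python
from dataclasses import dataclass
from typing import Any, Callable, Tuple

Image = Any
Features = Any
# Hypothetical signature: an enhancement model maps an image to (joint, limb) features.
EnhancementModel = Callable[[Image], Tuple[Features, Features]]


@dataclass
class AcquisitionModule:
    """Corresponds to acquisition module 31: obtains the image of the user."""
    source: Callable[[], Image]

    def acquire(self) -> Image:
        return self.source()


@dataclass
class ProcessingModule:
    """Corresponds to processing module 32: runs the predetermined enhancement model."""
    enhancement_model: EnhancementModel

    def process(self, image: Image) -> Tuple[Features, Features]:
        return self.enhancement_model(image)


@dataclass
class FeatureExtractionDevice:
    """Wires the two modules together in the order the text describes."""
    acquisition: AcquisitionModule
    processing: ProcessingModule

    def extract(self) -> Tuple[Features, Features]:
        image = self.acquisition.acquire()
        return self.processing.process(image)


# Usage with placeholder callables standing in for a camera and a trained model.
device = FeatureExtractionDevice(
    AcquisitionModule(source=lambda: "user_image"),
    ProcessingModule(enhancement_model=lambda img: (("joint", img), ("limb", img))),
)
joint_features, limb_features = device.extract()
```

Keeping the model behind a callable means the same device skeleton works whether the enhancement processing performs feature fusion, feature migration, feature extraction, or a combination of them.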

[0143] In this embodiment...
