Multi-person behavior recognition system based on key point detection and working method

A recognition system and working method, applied in character and pattern recognition, instruments, and computing. It addresses reduced recognition efficiency, missed detections, and failed classification and recognition, and it improves the accuracy rate.


Examples

Embodiment 1

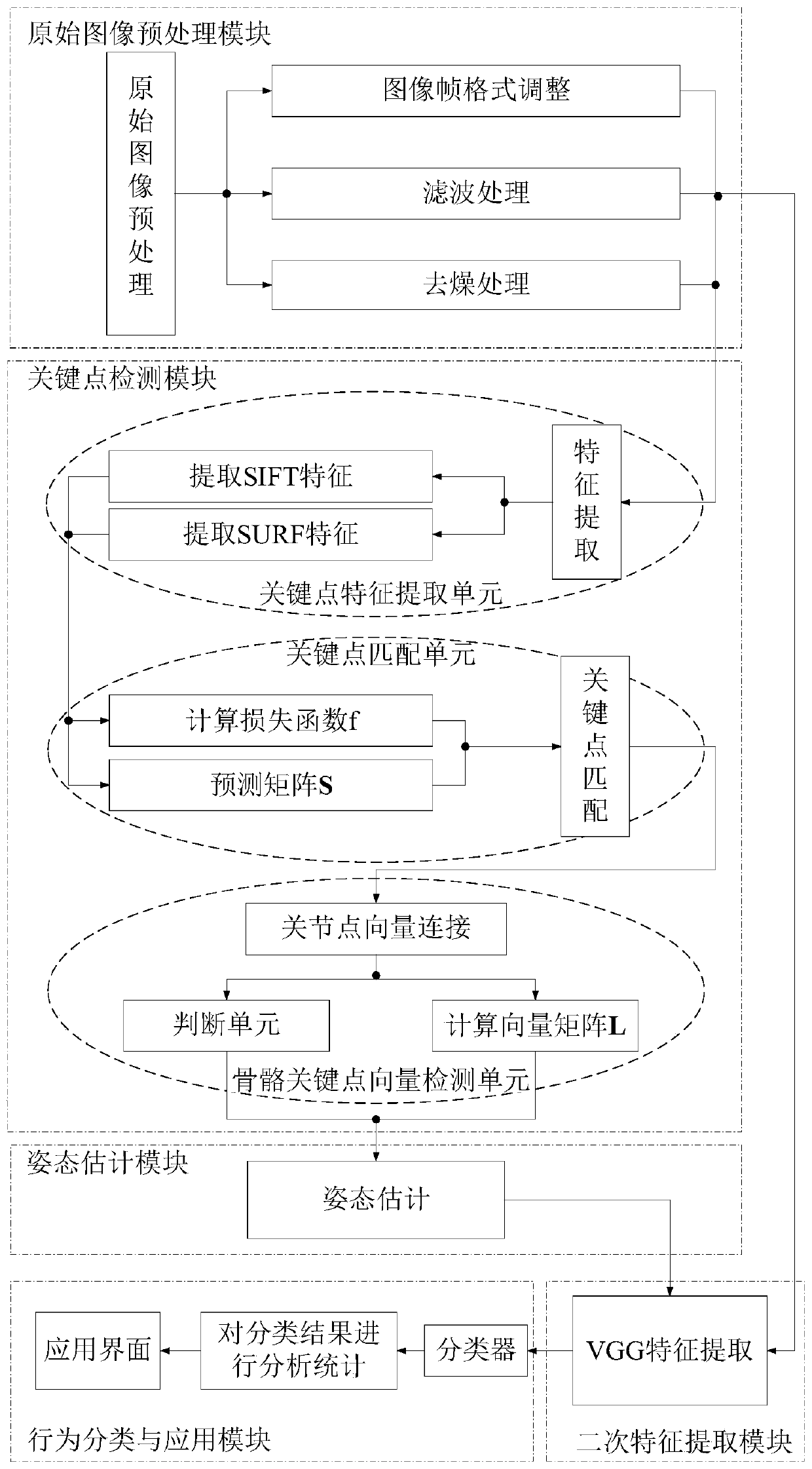

[0041] In one or more embodiments, a multi-person behavior recognition system based on key point detection is disclosed. As shown in Figure 1, it includes:

[0042] (1) The original image preprocessing module adjusts the format of the input image frame and filters and denoises the input image. Adjusting the format includes adjusting the size, the gray values, and the storage format of the input image. The input image is denoised by a filter, and a smoothing operation then removes the influence of irrelevant image information on subsequent results;

[0043] (2) The key point detection module (see Figure 3), including:

[0044] ① The key point feature extraction unit, which extracts SIFT and SURF key point features from each image frame to obtain the key point feature matrix;

[0045] Specifically, a Gaussian function is used to establish a scale space for each frame o...

Embodiment 2

[0060] In one or more implementations, a method of multi-person behavior recognition based on key point detection is disclosed. As shown in Figure 2, it includes the following steps:

[0061] Step S01: Raw image preprocessing operation

[0062] Two multi-person behavior datasets, the MPII Human Pose Dataset and the MSCOCO Dataset, are used as input. Together they contain 25k image frames, a number of which are annotated. The image frames are filtered and denoised, converted to grayscale, and adjusted to the format required for network input.

[0063] In this embodiment, the MPII Human Pose Dataset and the MSCOCO Dataset are chosen as the original images only to make the method easier to describe. In actual applications, the original images are the captured images to be classified and recognized.
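The preprocessing in Step S01 (grayscale conversion, format adjustment, smoothing) can be sketched as follows. This is a minimal illustration, not the patent's implementation: the luma weights, the nearest-neighbour resize, the 3x3 mean filter, and all function names are assumptions, since the text does not name a specific filter or target size. Plain Python lists are used so the sketch runs without dependencies; a real pipeline would typically use an image library.

```python
def to_gray(rgb):
    """Convert an RGB image (rows of (r, g, b) tuples) to 8-bit grayscale.
    The 0.299/0.587/0.114 luma weights are an assumed, conventional choice."""
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for r, g, b in row]
            for row in rgb]


def resize_nearest(img, new_h, new_w):
    """Resize a grayscale image with nearest-neighbour sampling (assumed method)."""
    h, w = len(img), len(img[0])
    return [[img[y * h // new_h][x * w // new_w] for x in range(new_w)]
            for y in range(new_h)]


def mean_filter3(img):
    """Smooth with a 3x3 mean filter; border pixels are replicated at the edges."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            total = 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)  # clamp row index
                    xx = min(max(x + dx, 0), w - 1)  # clamp column index
                    total += img[yy][xx]
            row.append(round(total / 9))
        out.append(row)
    return out


def preprocess(rgb, size=(4, 4)):
    """Grayscale -> resize to the (hypothetical) network input size -> smooth."""
    gray = to_gray(rgb)
    resized = resize_nearest(gray, *size)
    return mean_filter3(resized)
```

Calling `preprocess(frame, size=(height, width))` on each dataset frame yields a smoothed grayscale image at the chosen input size; the default `size` here is only a placeholder.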

[0064] Step S02: Extract SIFT and SURF features of the image frame ...

Embodiment 3

[0113] This embodiment takes real-time recognition of student behavior in class as an example. The classroom camera captures the students in the current classroom, and their in-class status over that period is analyzed in real time. The steps are as follows:

[0114] Step S01: Acquired image preprocessing operation

[0115] The classroom camera uploads one image frame per second to the host computer, and the collected images are then preprocessed;
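The one-frame-per-second acquisition in Step S01 can be illustrated with a small sampler. This is a sketch under assumptions: the patent does not say how frames are timed or selected, so the `(timestamp, frame)` input format and the function name `sample_one_per_second` are hypothetical.

```python
def sample_one_per_second(frames):
    """Yield the first frame seen in each whole second.

    `frames` is an iterable of (timestamp_seconds, frame) pairs in
    ascending time order (an assumed input format).
    """
    last_second = None
    for ts, frame in frames:
        second = int(ts)
        if second != last_second:  # first frame of a new second
            last_second = second
            yield ts, frame
```

Each yielded frame would then be passed to the preprocessing operation before feature extraction.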

[0116] Step S02: Extract SIFT and SURF features of the image frame

[0117] The collected image is used as input to extract SIFT features. The specific steps are as follows:

[0118] 1) Use the Gaussian function G(x, y, σ) to establish the scale space; the Gaussian function is given by formula (I):

[0119] $$G(x, y, \sigma) = \frac{1}{2\pi\sigma^{2}}\, e^{-\frac{x^{2}+y^{2}}{2\sigma^{2}}} \tag{I}$$

[0120] Among them, G(x, y, σ) represents a Gaussian function, (x, y) is a spatial coordinate, and σ is a scal...
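As a rough illustration of the Gaussian function G(x, y, σ) used to build the scale space, the sketch below evaluates it on a small grid and normalizes the result into a blur kernel. It assumes the standard two-dimensional Gaussian form; the kernel radius, the base scale `sigma0`, and the scale multiplier `k` are illustrative assumptions and are not taken from the patent text.

```python
import math


def gaussian(x, y, sigma):
    """Standard 2-D Gaussian G(x, y, sigma) at spatial coordinate (x, y)."""
    return math.exp(-(x * x + y * y) / (2 * sigma * sigma)) / (2 * math.pi * sigma * sigma)


def gaussian_kernel(sigma, radius):
    """Sample G on a (2*radius+1)^2 grid and normalize so the weights sum to 1."""
    kernel = [[gaussian(x, y, sigma) for x in range(-radius, radius + 1)]
              for y in range(-radius, radius + 1)]
    total = sum(sum(row) for row in kernel)
    return [[v / total for v in row] for row in kernel]


def scale_space_sigmas(sigma0, levels, k=2 ** 0.5):
    """Scales sigma0 * k**i for successive scale-space levels (assumed schedule)."""
    return [sigma0 * k ** i for i in range(levels)]
```

Convolving a frame with `gaussian_kernel(s, radius)` for each `s` in `scale_space_sigmas(...)` gives progressively blurred copies of the frame, which is the usual way a Gaussian scale space is assembled for SIFT.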
