23055 results about "Processing" patented technology

Processing is an open-source graphical library and integrated development environment (IDE) built for the electronic arts, new media art, and visual design communities with the purpose of teaching non-programmers the fundamentals of computer programming in a visual context.

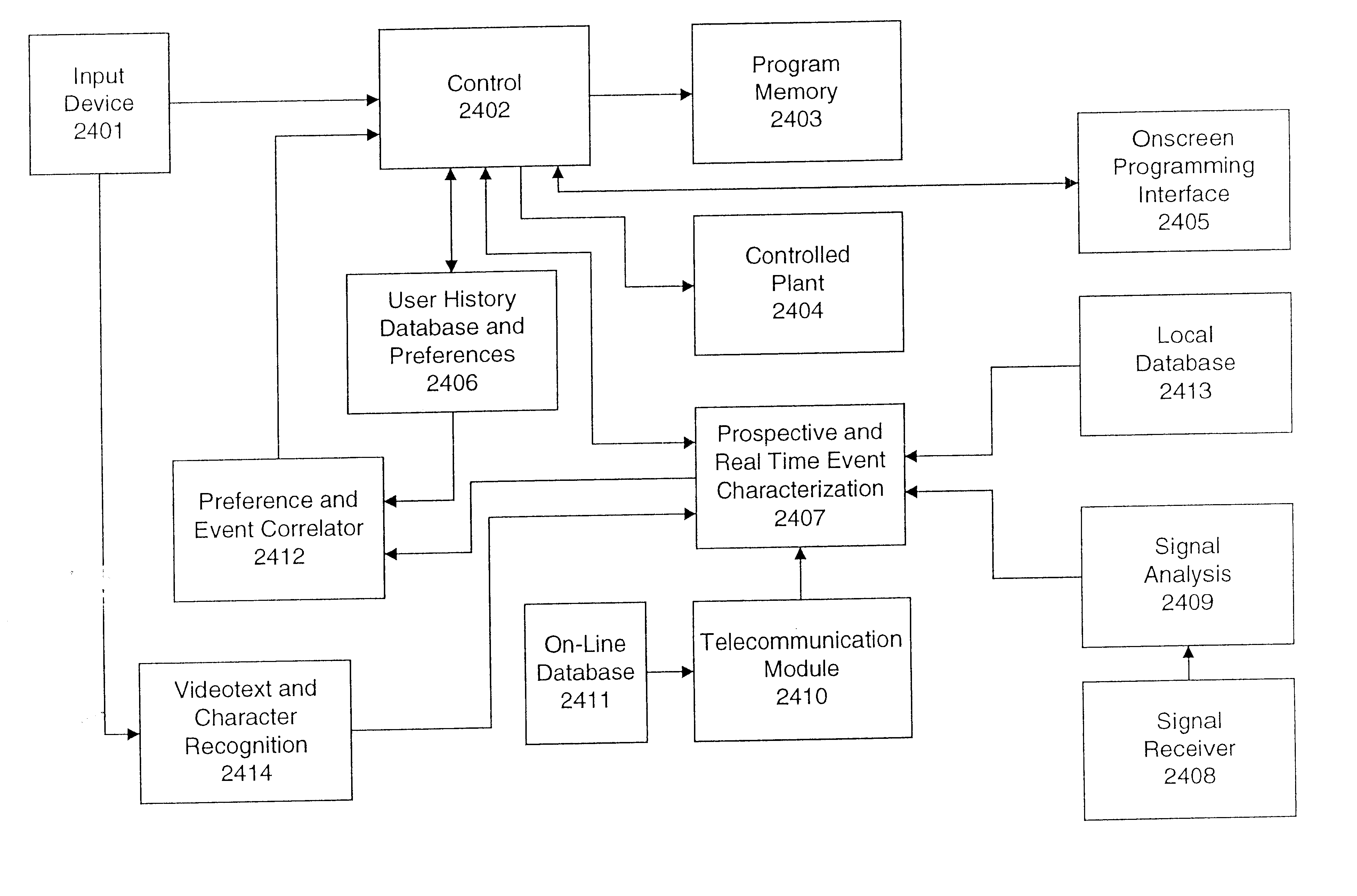

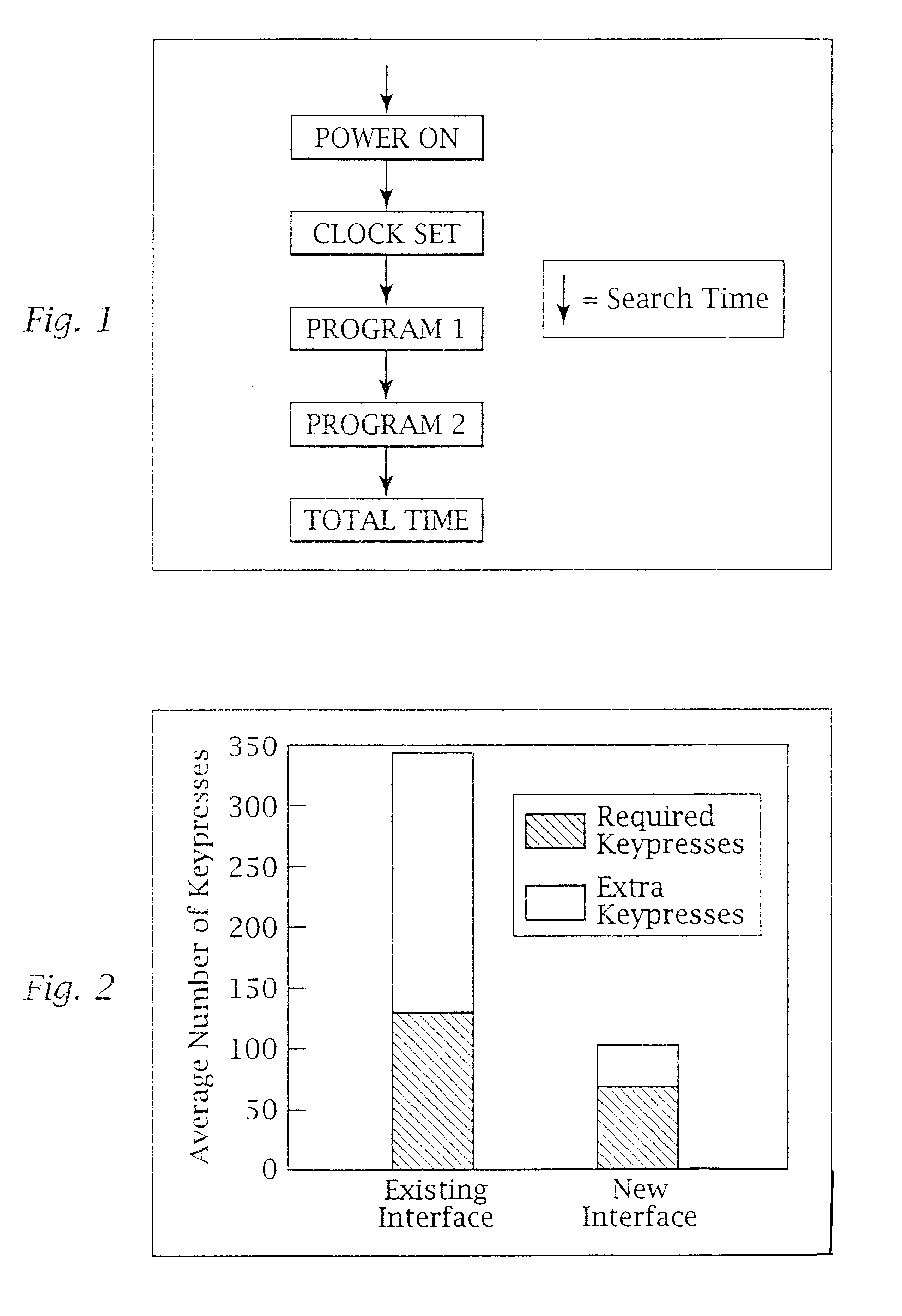

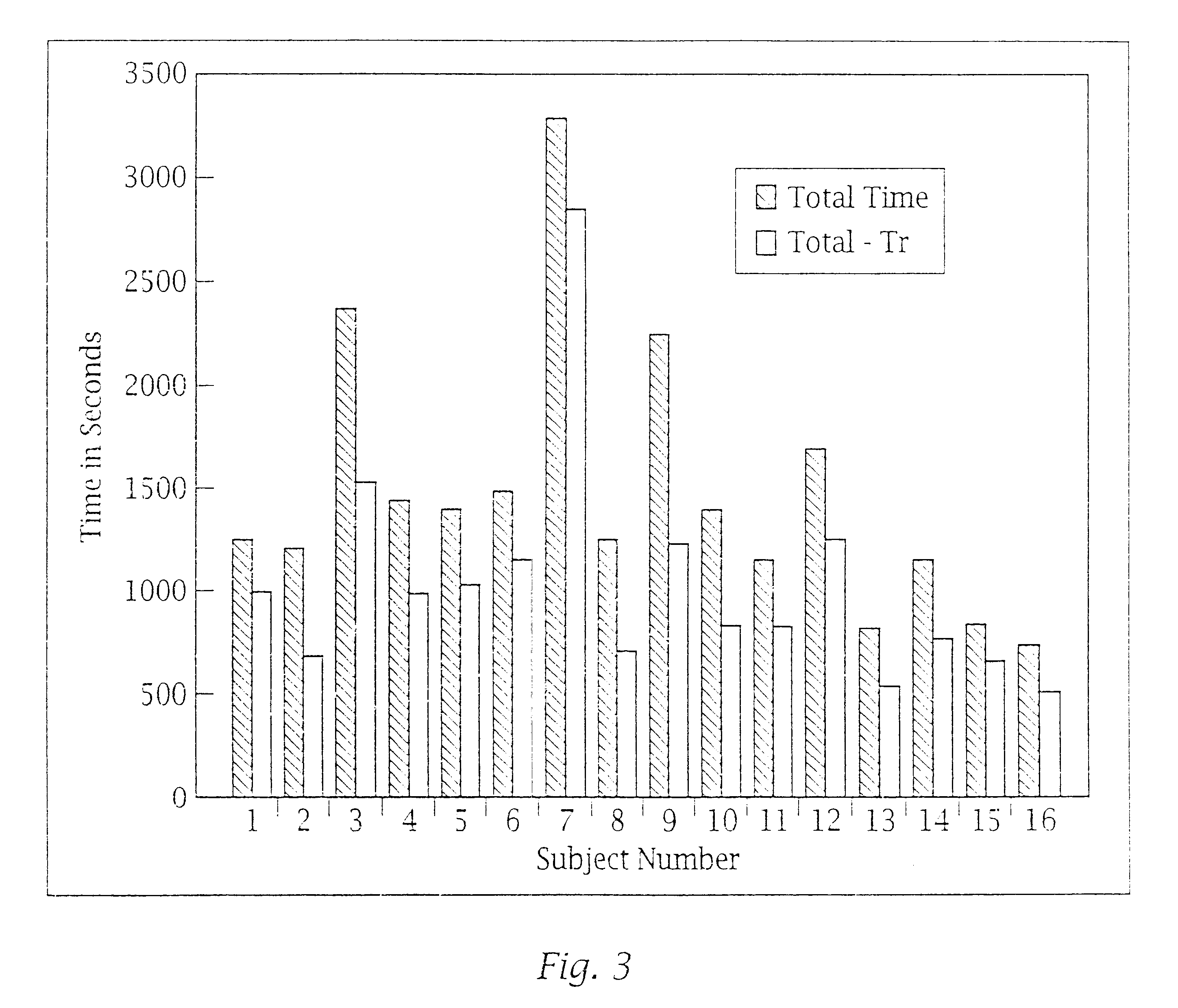

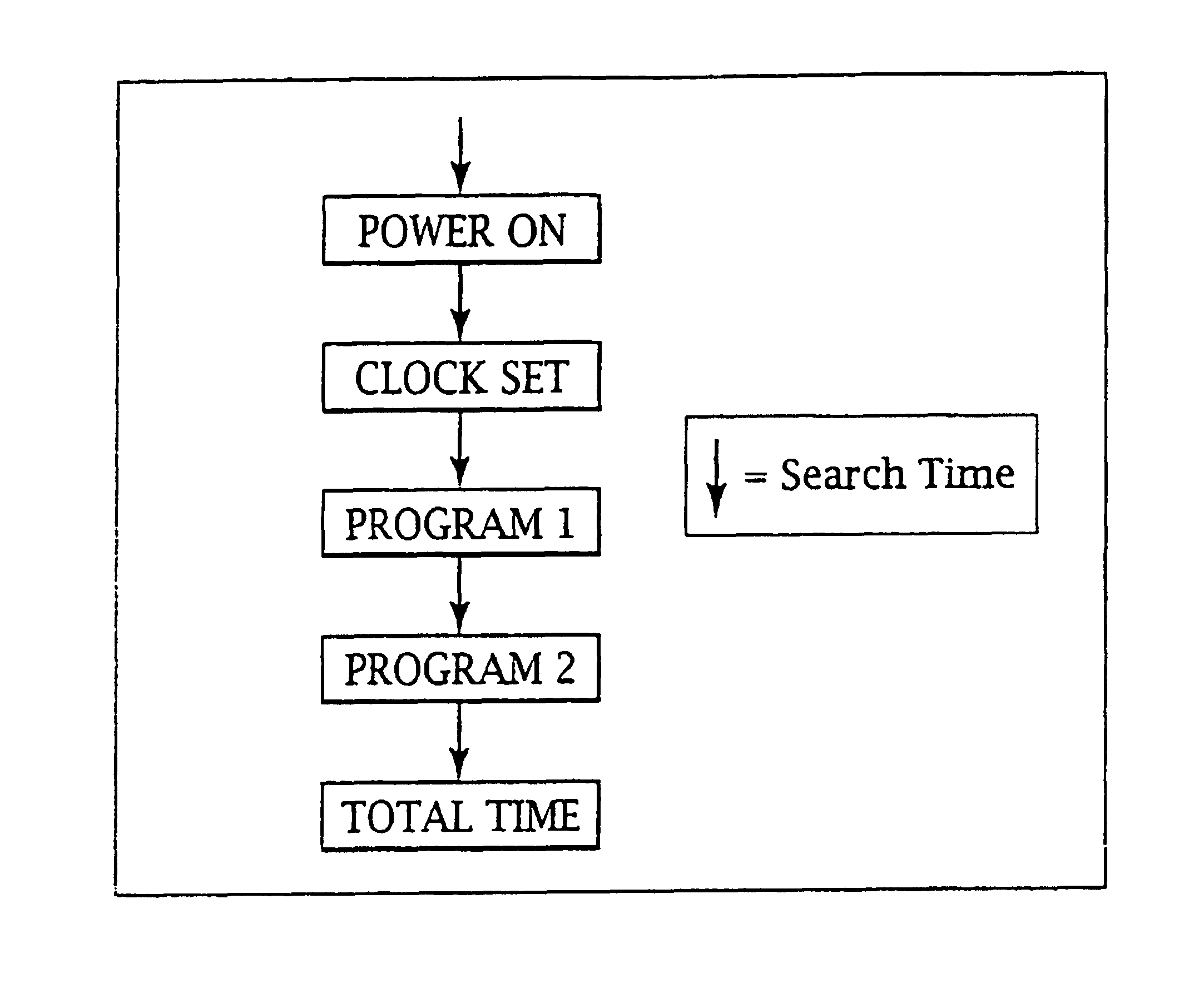

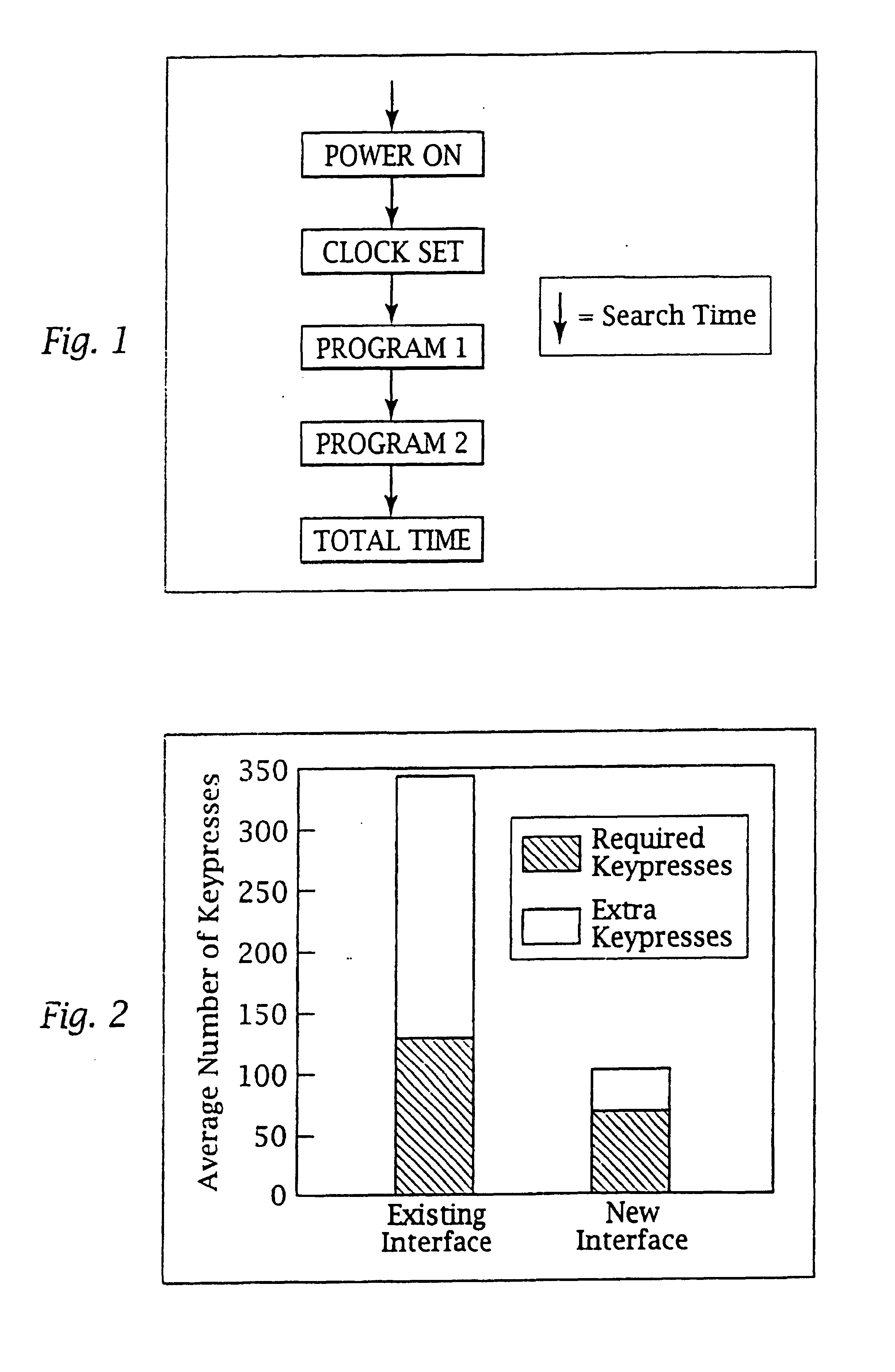

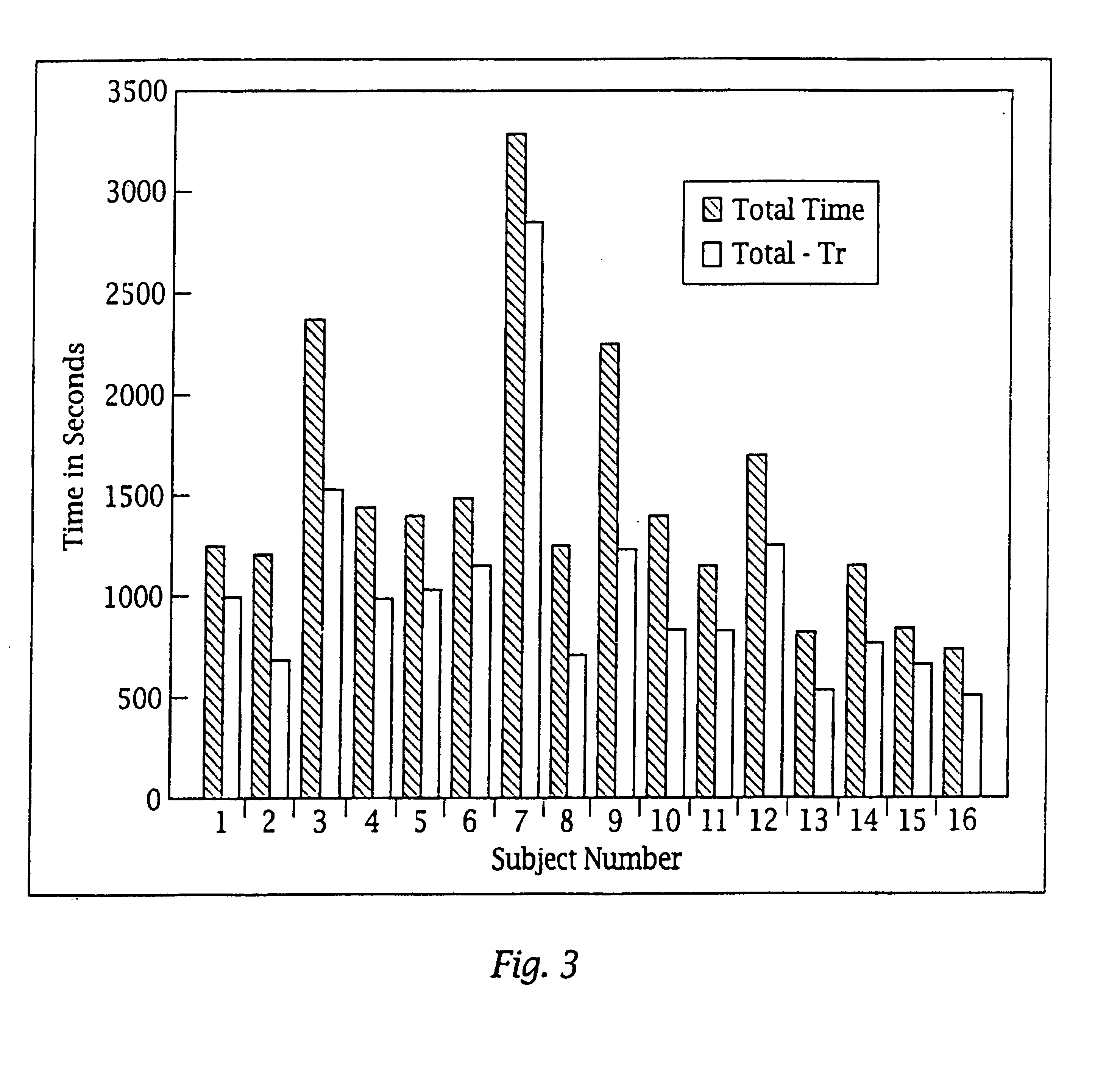

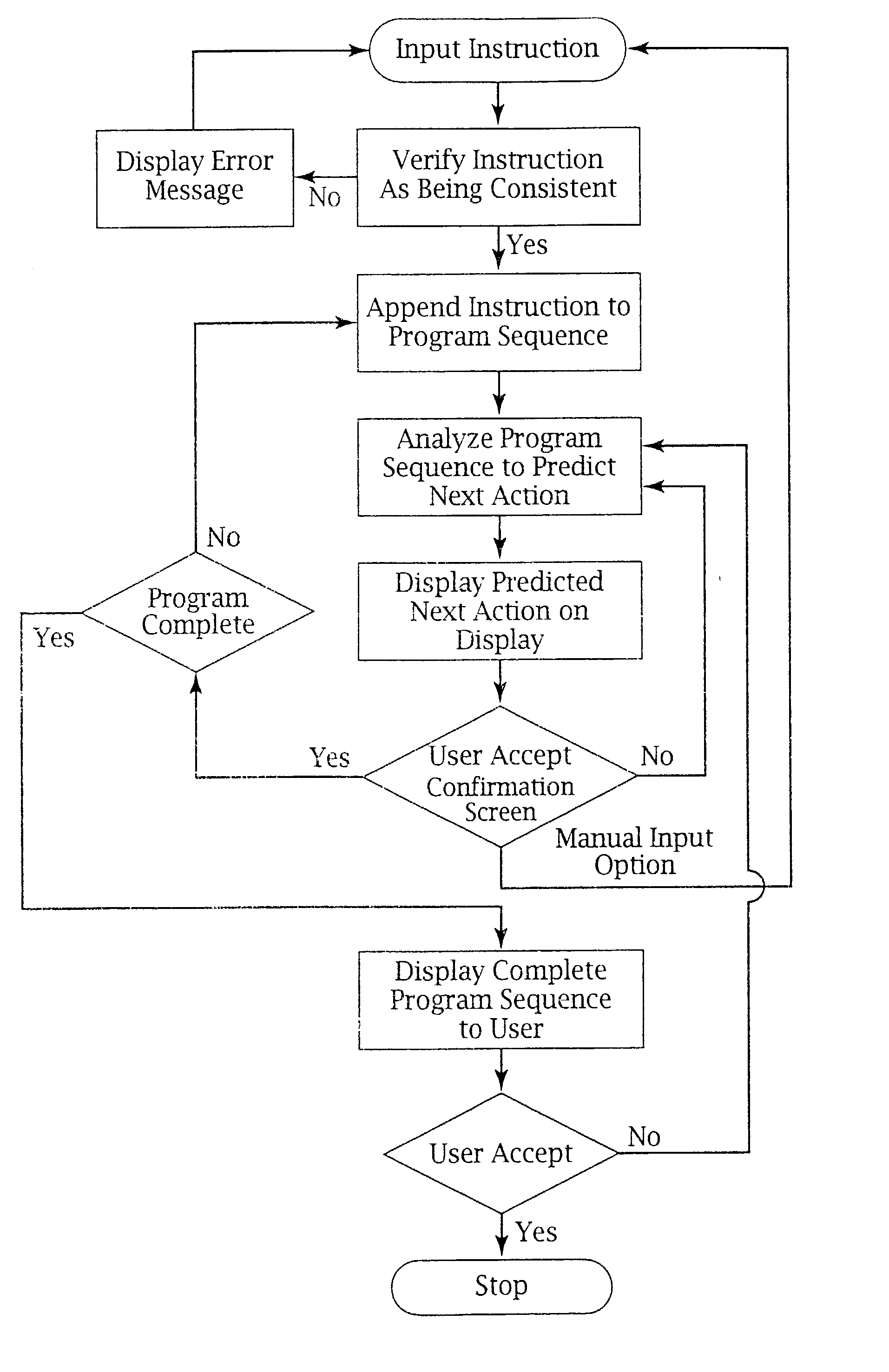

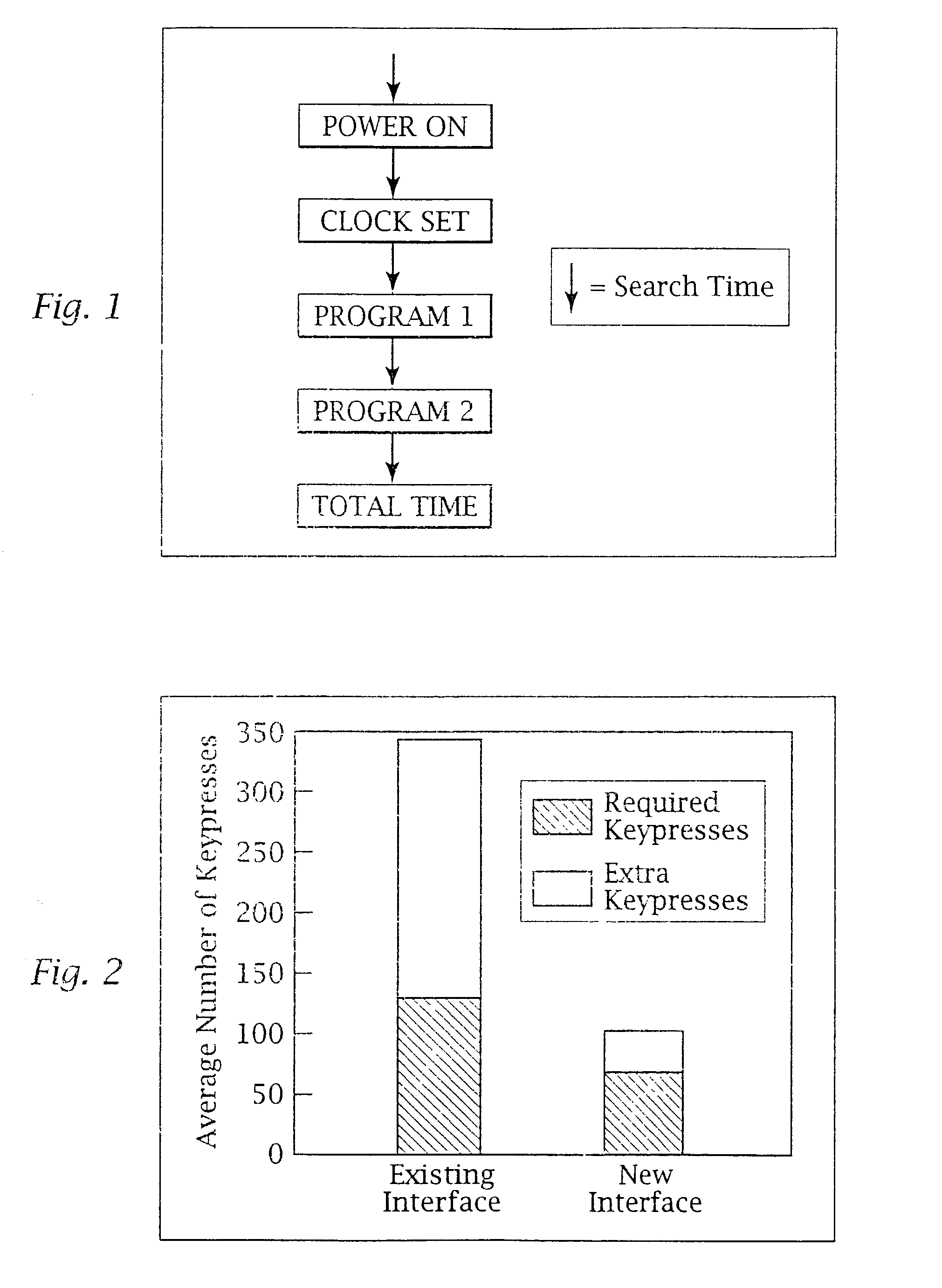

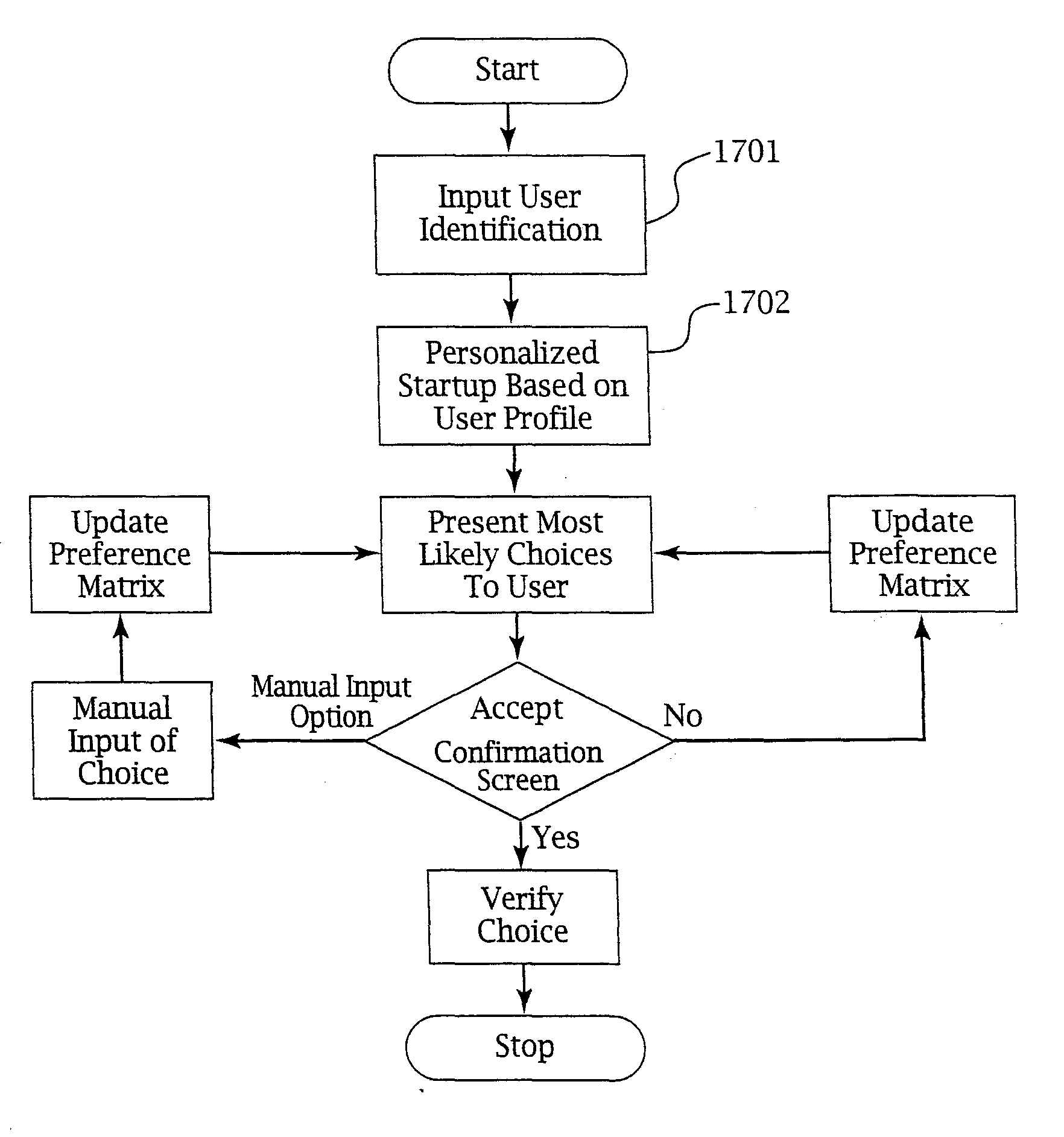

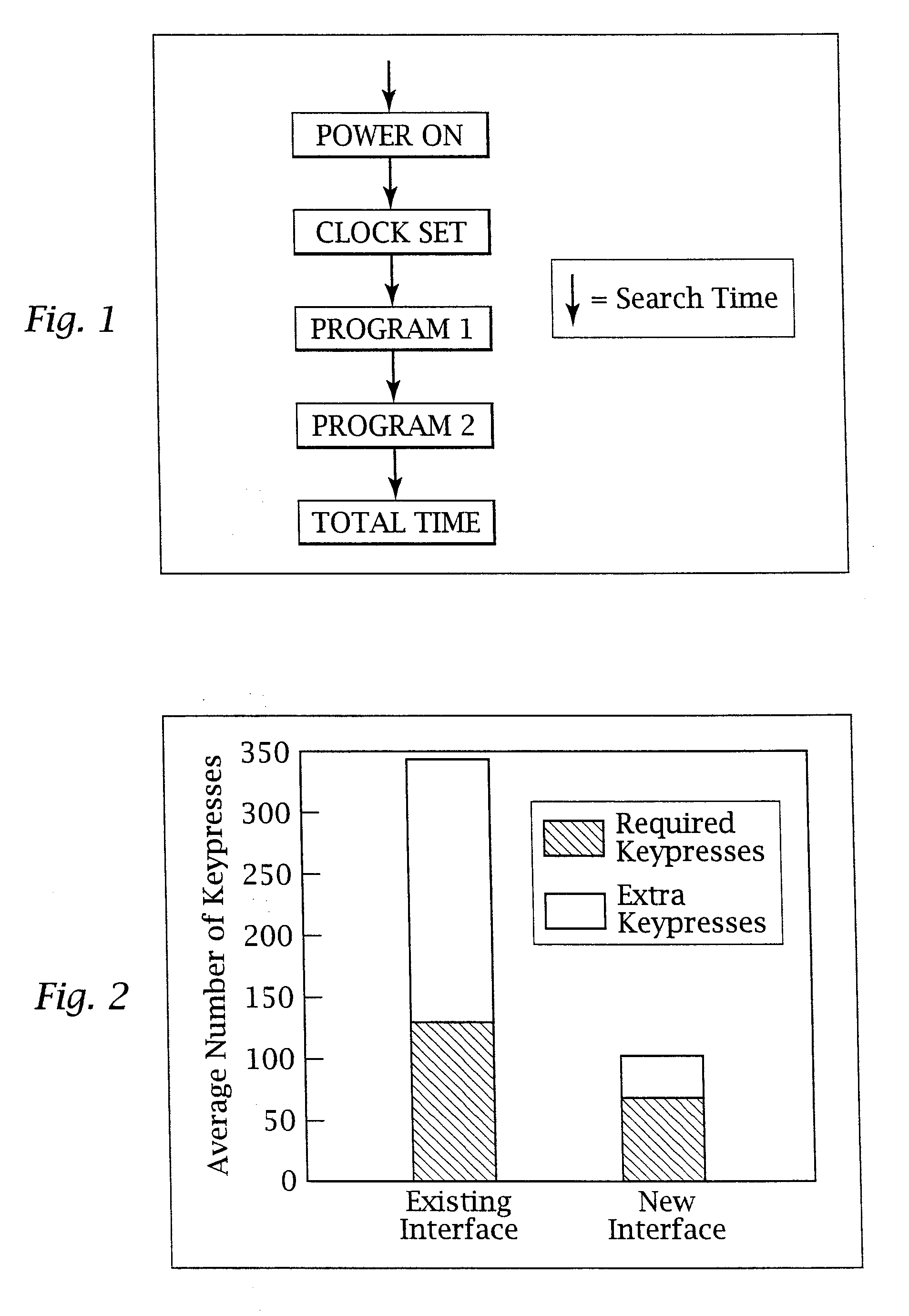

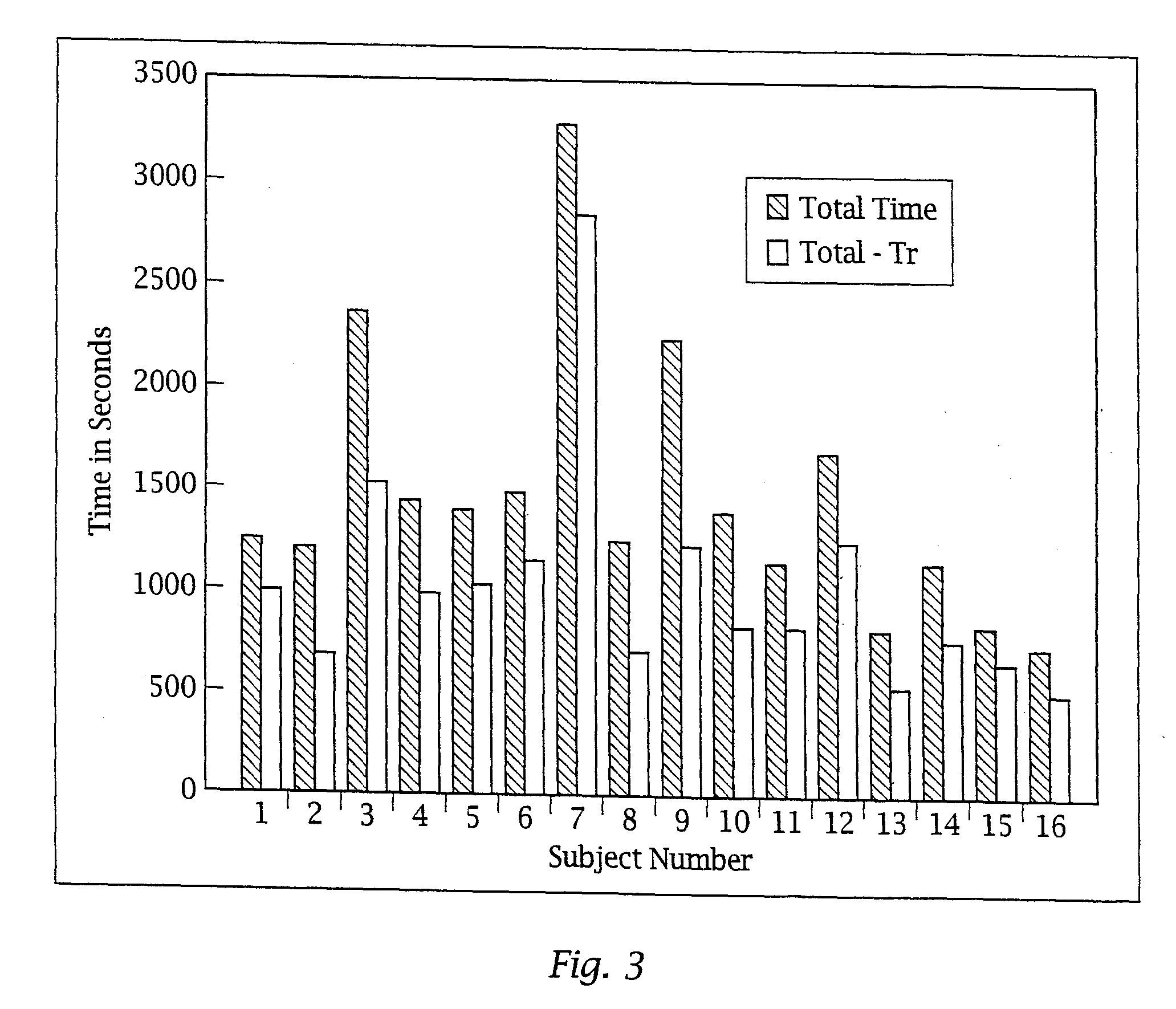

Ergonomic man-machine interface incorporating adaptive pattern recognition based control system

Inactive · US6418424B1 · Minimal cost · Avoid the need · Television system details · Digital data processing details · Human–machine interface · Data stream

An adaptive interface for a programmable system, for predicting a desired user function, based on user history, as well as machine internal status and context. The apparatus receives an input from the user and other data. A predicted input is presented for confirmation by the user, and the predictive mechanism is updated based on this feedback. Also provided is a pattern recognition system for a multimedia device, wherein a user input is matched to a video stream on a conceptual basis, allowing inexact programming of a multimedia device. The system analyzes a data stream for correspondence with a data pattern for processing and storage. The data stream is subjected to adaptive pattern recognition to extract features of interest to provide a highly compressed representation which may be efficiently processed to determine correspondence. Applications of the interface and system include a VCR, medical device, vehicle control system, audio device, environmental control system, securities trading terminal, and smart house. The system optionally includes an actuator for effecting the environment of operation, allowing closed-loop feedback operation and automated learning.

Owner:BLANDING HOVENWEEP

Ergonomic man-machine interface incorporating adaptive pattern recognition based control system

Active · US7136710B1 · Significant to use · Improve computing power · Computer control · Analogue secrecy/subscription systems · Conceptual basis · Human–machine interface

An adaptive interface for a programmable system, for predicting a desired user function, based on user history, as well as machine internal status and context. The apparatus receives an input from the user and other data. A predicted input is presented for confirmation by the user, and the predictive mechanism is updated based on this feedback. Also provided is a pattern recognition system for a multimedia device, wherein a user input is matched to a video stream on a conceptual basis, allowing inexact programming of a multimedia device. The system analyzes a data stream for correspondence with a data pattern for processing and storage. The data stream is subjected to adaptive pattern recognition to extract features of interest to provide a highly compressed representation which may be efficiently processed to determine correspondence. Applications of the interface and system include a VCR, medical device, vehicle control system, audio device, environmental control system, securities trading terminal, and smart house. The system optionally includes an actuator for effecting the environment of operation, allowing closed-loop feedback operation and automated learning.

Owner:BLANDING HOVENWEEP +1

Media recording device with packet data interface

An adaptive interface for a programmable system, for predicting a desired user function, based on user history, as well as machine internal status and context. The apparatus receives an input from the user and other data. A predicted input is presented for confirmation by the user, and the predictive mechanism is updated based on this feedback. Also provided is a pattern recognition system for a multimedia device, wherein a user input is matched to a video stream on a conceptual basis, allowing inexact programming of a multimedia device. The system analyzes a data stream for correspondence with a data pattern for processing and storage. The data stream is subjected to adaptive pattern recognition to extract features of interest to provide a highly compressed representation that may be efficiently processed to determine correspondence. Applications of the interface and system include a video cassette recorder (VCR), medical device, vehicle control system, audio device, environmental control system, securities trading terminal, and smart house. The system optionally includes an actuator for effecting the environment of operation, allowing closed-loop feedback operation and automated learning.

Owner:BLANDING HOVENWEEP

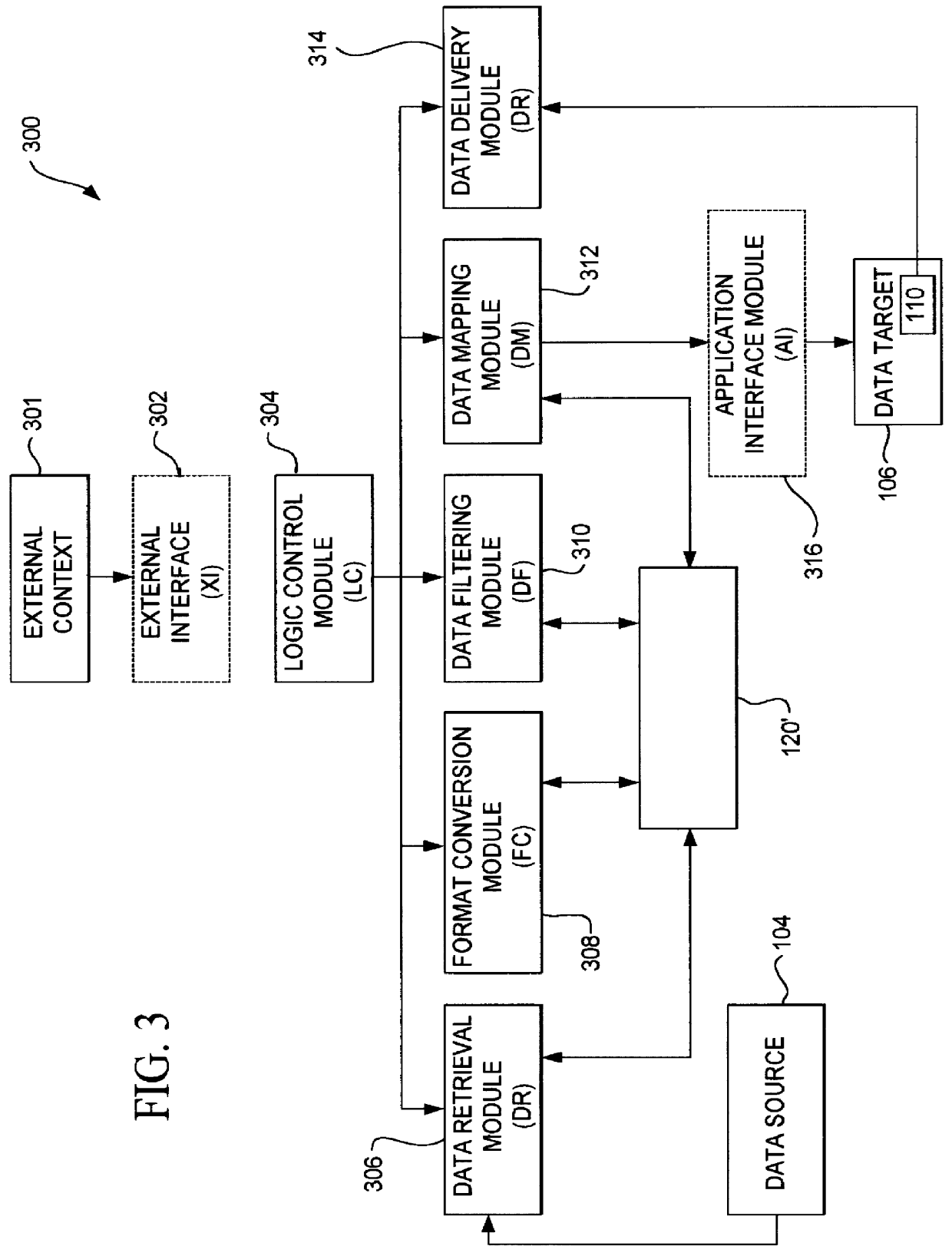

Method and apparatus for data communication

Inactive · US6094684A · Interprogram communication · Multiple digital computer combinations · Graphics · Graphical user interface

A data acquisition and delivery system for performing data delivery tasks is disclosed. This system uses a computer running software to acquire source data from a selected data source, to process (e.g., filter, format convert) the data, if desired, and to deliver the resulting delivered data to a data target. The system is designed to access remote and/or local data sources and to deliver data to remote and/or local data targets. The data target might be an application program that delivers the data to a file or the data target may simply be a file, for example. To obtain the delivered data, the software performs processing of the source data as appropriate for the particular type of data being retrieved, for the particular data target and as specified by a user, for example. The system can communicate directly with a target application program, telling the target application to place the delivered data in a particular location in a particular file. The system provides an external interface to an external context. If the external context is a human, the external interface may be a graphical user interface, for example. If the external context is another software application, the external interface may be an OLE interface, for example. Using the external interface, the external context is able to vary a variety of parameters to define data delivery tasks as desired. The system uses a unique notation that includes a plurality of predefined parameters to define the data delivery tasks and to communicate them to the software.

Owner:E BOTZ COM INC A DELAWARE +2

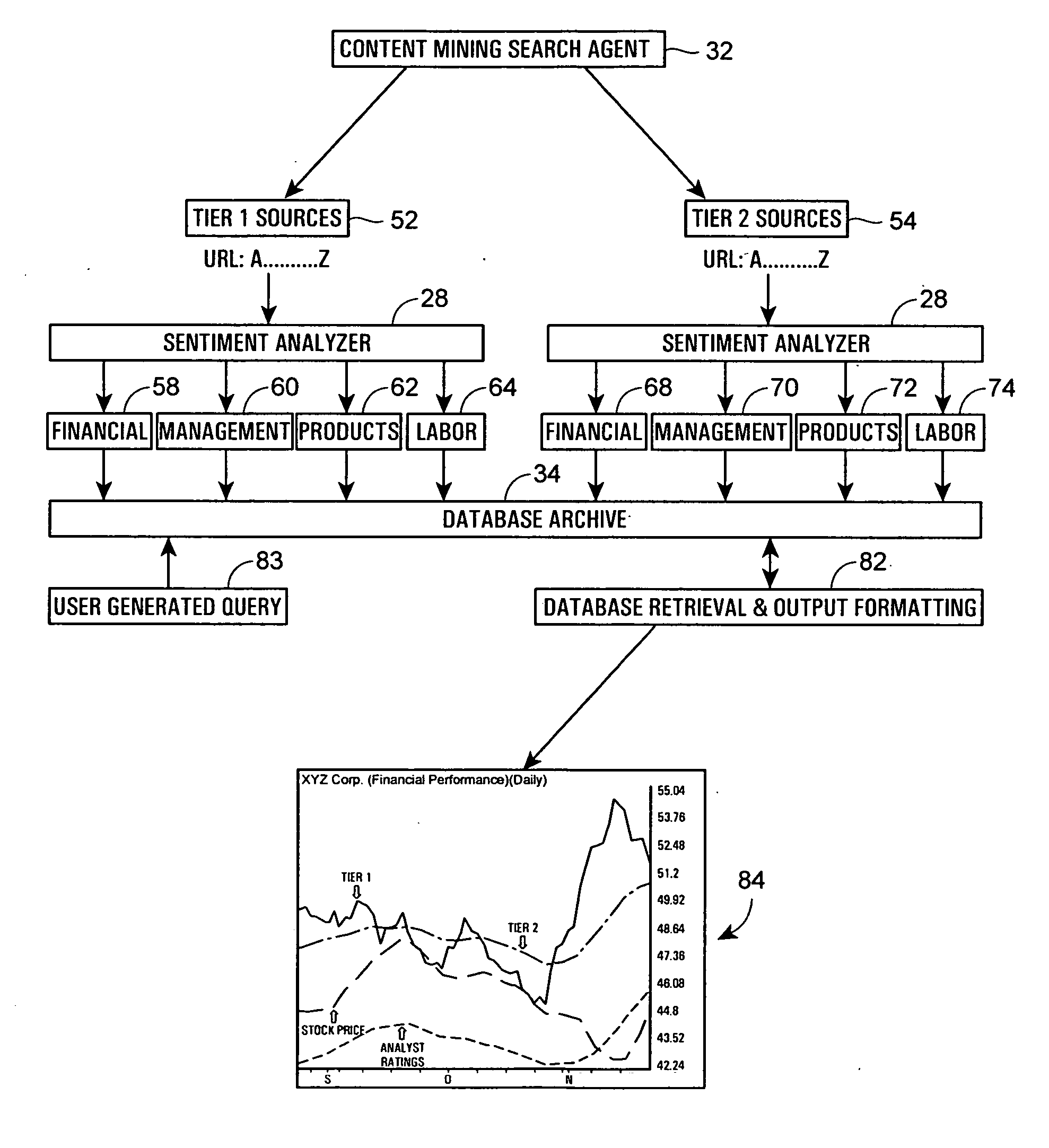

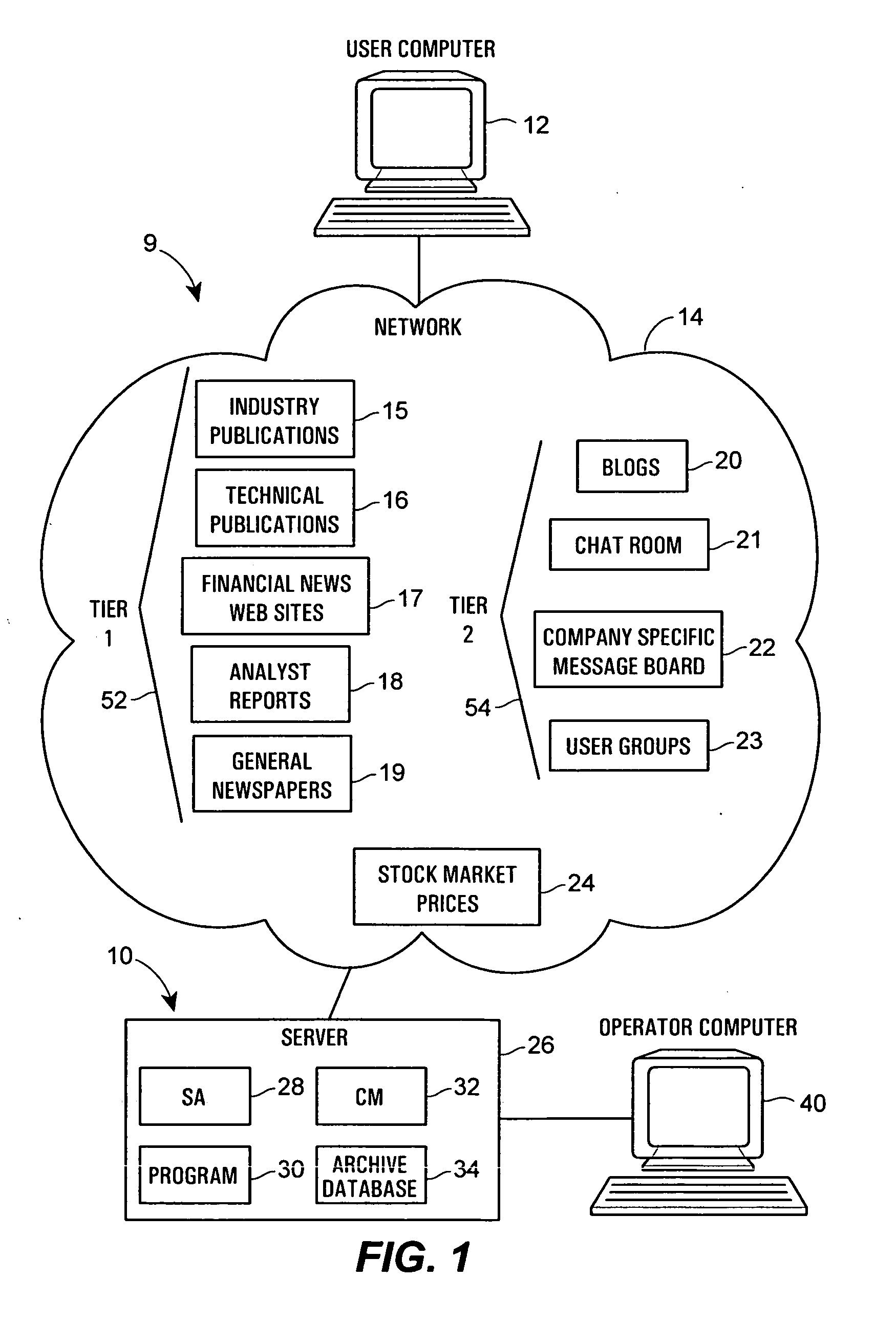

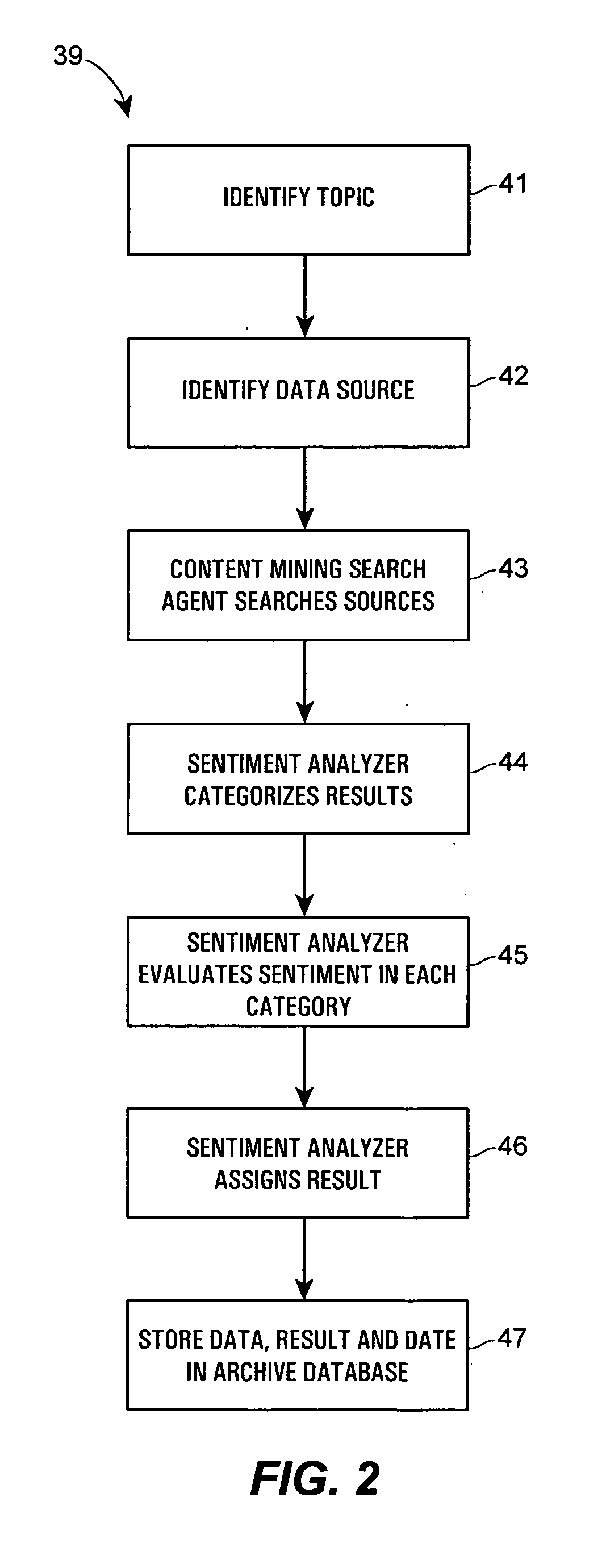

Method and system for conducting sentiment analysis for securities research

A computer system performs financial analysis on one or more financial entities, which may be corporations, securities, etc., based on the sentiment expressed about the one or more financial entities within raw textual data stored in one or more electronic data sources containing information or text related to one or more financial entities. The computer system includes a content mining search agent that identifies one or more words or phrases within raw textual data in the data sources using natural language processing to identify relevant raw textual data related to the one or more financial entities, a sentiment analyzer that analyzes the relevant raw textual data to determine the nature or the strength of the sentiment expressed about the one or more financial entities within the relevant raw textual data and that assigns a value to the nature or strength of the sentiment expressed about the one or more financial entities within the relevant raw textual data, and a user interface program that controls the content mining search agent and the sentiment analyzer and that displays, to a user, the values of the nature or strength of the sentiment expressed about the one or more financial entities within the data sources. This computer system enables a user to make better decisions regarding whether or not to purchase or invest in the one or more financial entities.

Owner:AIM HLDG LLC

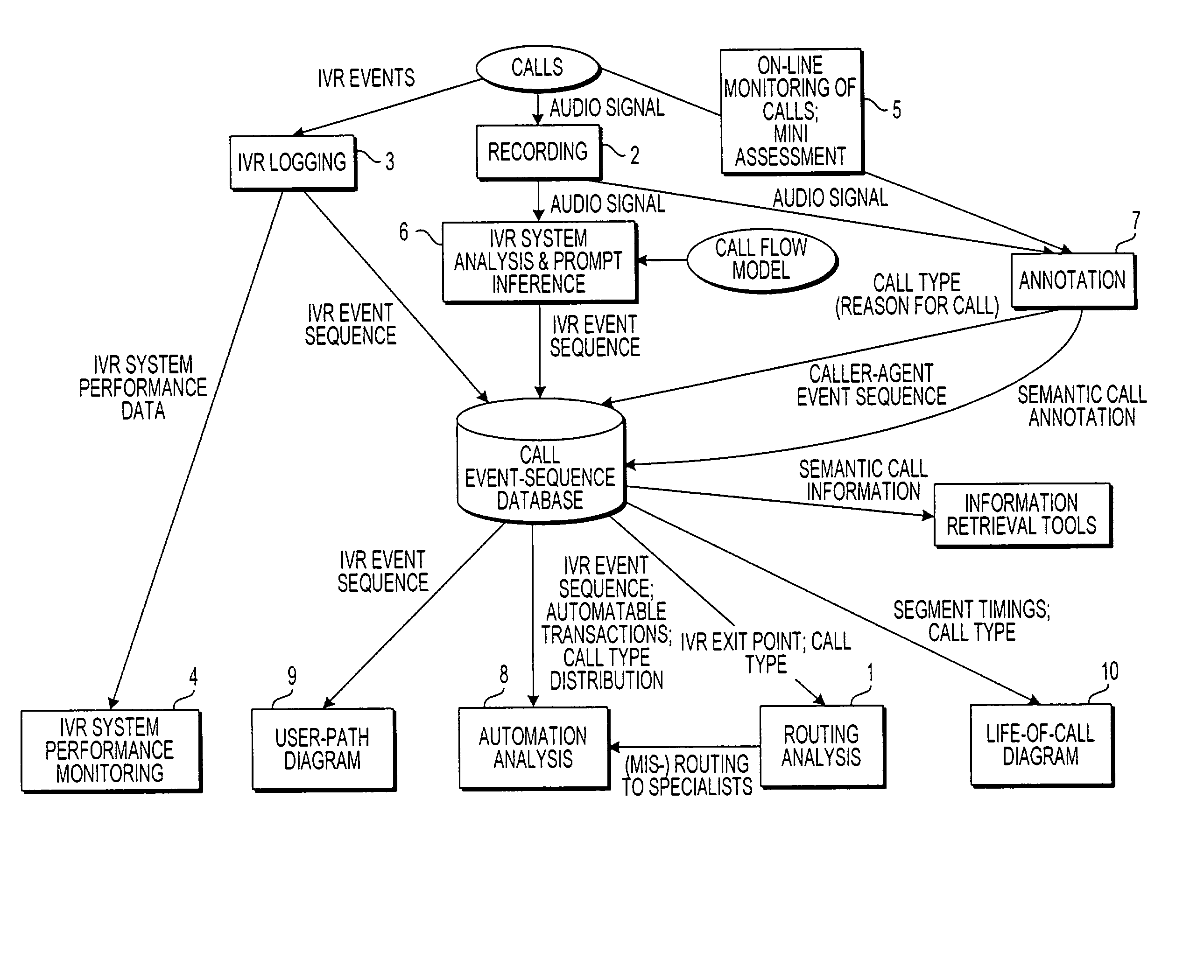

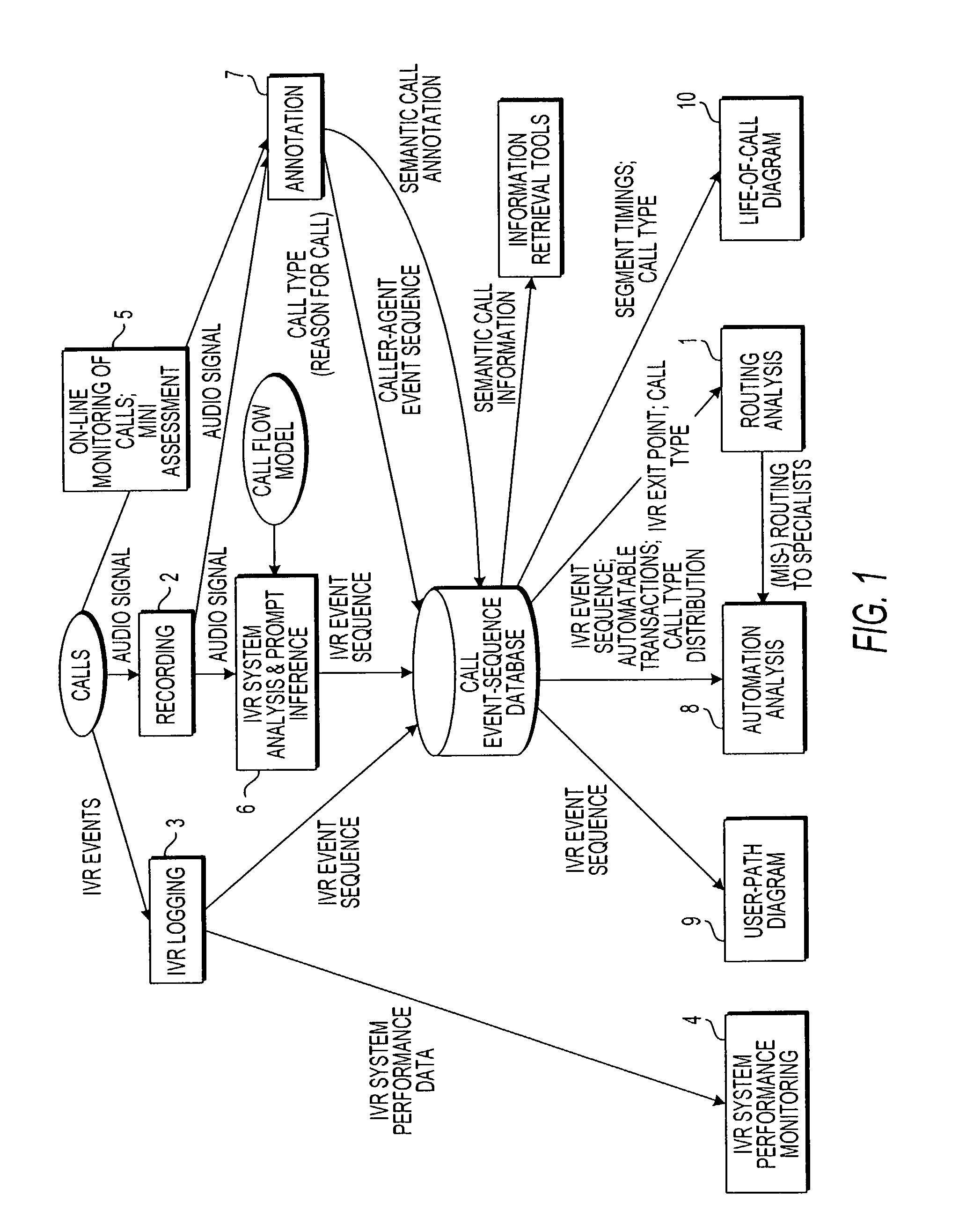

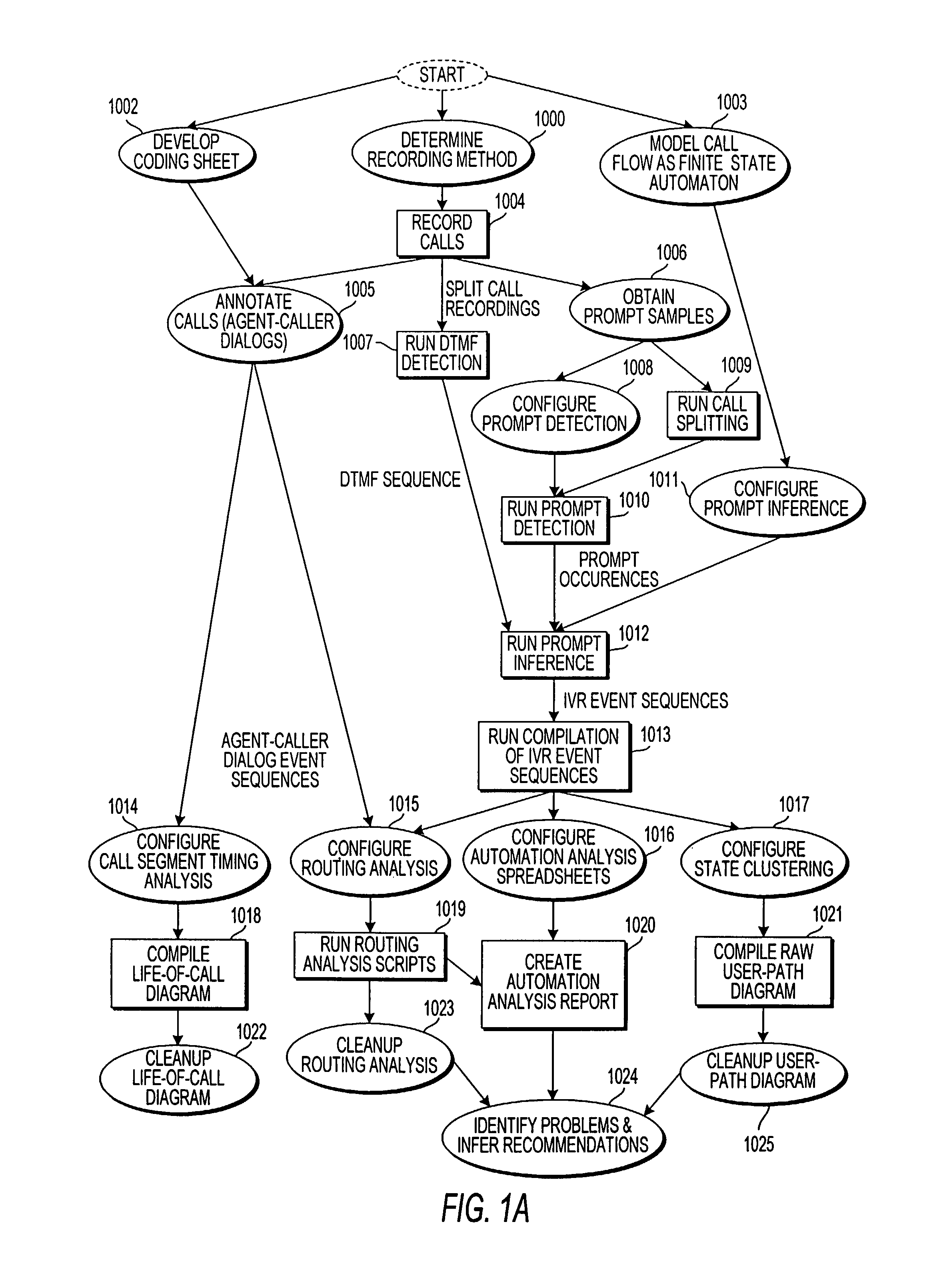

Apparatus and method for visually representing behavior of a user of an automated response system

Inactive · US7039166B1 · Overcome deficiencies · Speech analysis · Manual exchanges · User input · Finite-state machine

A system for visually representing user behavior within an interactive voice response (IVR) system of a call processing center generates a complete sequence of events within the IVR system for plural calls to the call processing center, the plurality of calls being recorded from end to end. A call flow of the IVR system is modeled as a non-deterministic finite-state machine, such that a start state of the finite-state machine represents a first prompt of the IVR system, other states of the finite-state machine represent subsequent prompts at which a branching occurs in the call flow of the IVR system, exit conditions are represented as end states, and transitions of the finite-state machine represent transitions between call flow states triggered by data inputted by a user or by internal processing of the IVR system. The complete sequences of events for the plural calls are provided to the finite-state machine to produce a two-way matrix of several counters. The data from the two-way matrix is represented as a state-transition diagram.

Owner:CX360 INC +1
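The abstract above describes feeding complete call event sequences through a finite-state machine to produce a two-way matrix of counters. A minimal sketch of that counting step follows. The state names (`welcome`, `main_menu`, and so on) and the function `transition_counts` are hypothetical, chosen only for illustration, and the nested-dictionary layout is an assumption rather than the patent's actual data structure.

```python
from collections import defaultdict


def transition_counts(calls):
    """Count state-to-state transitions across recorded call event sequences.

    Each call is a list of state names ordered from the first prompt to the
    exit condition. The result maps source state -> destination state -> count.
    """
    counts = defaultdict(lambda: defaultdict(int))
    for events in calls:
        # Pair every state with the state that followed it in the same call.
        for src, dst in zip(events, events[1:]):
            counts[src][dst] += 1
    return {src: dict(dsts) for src, dsts in counts.items()}


# Hypothetical end-to-end call recordings (illustrative data only).
calls = [
    ["welcome", "main_menu", "billing", "hangup"],
    ["welcome", "main_menu", "agent"],
    ["welcome", "main_menu", "billing", "agent"],
]

matrix = transition_counts(calls)
for src in sorted(matrix):
    for dst in sorted(matrix[src]):
        print(f"{src} -> {dst}: {matrix[src][dst]}")
```

Each nonzero counter corresponds to one edge of a state-transition diagram, so the same structure could be handed to a graph-drawing tool to render the diagram the abstract mentions.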

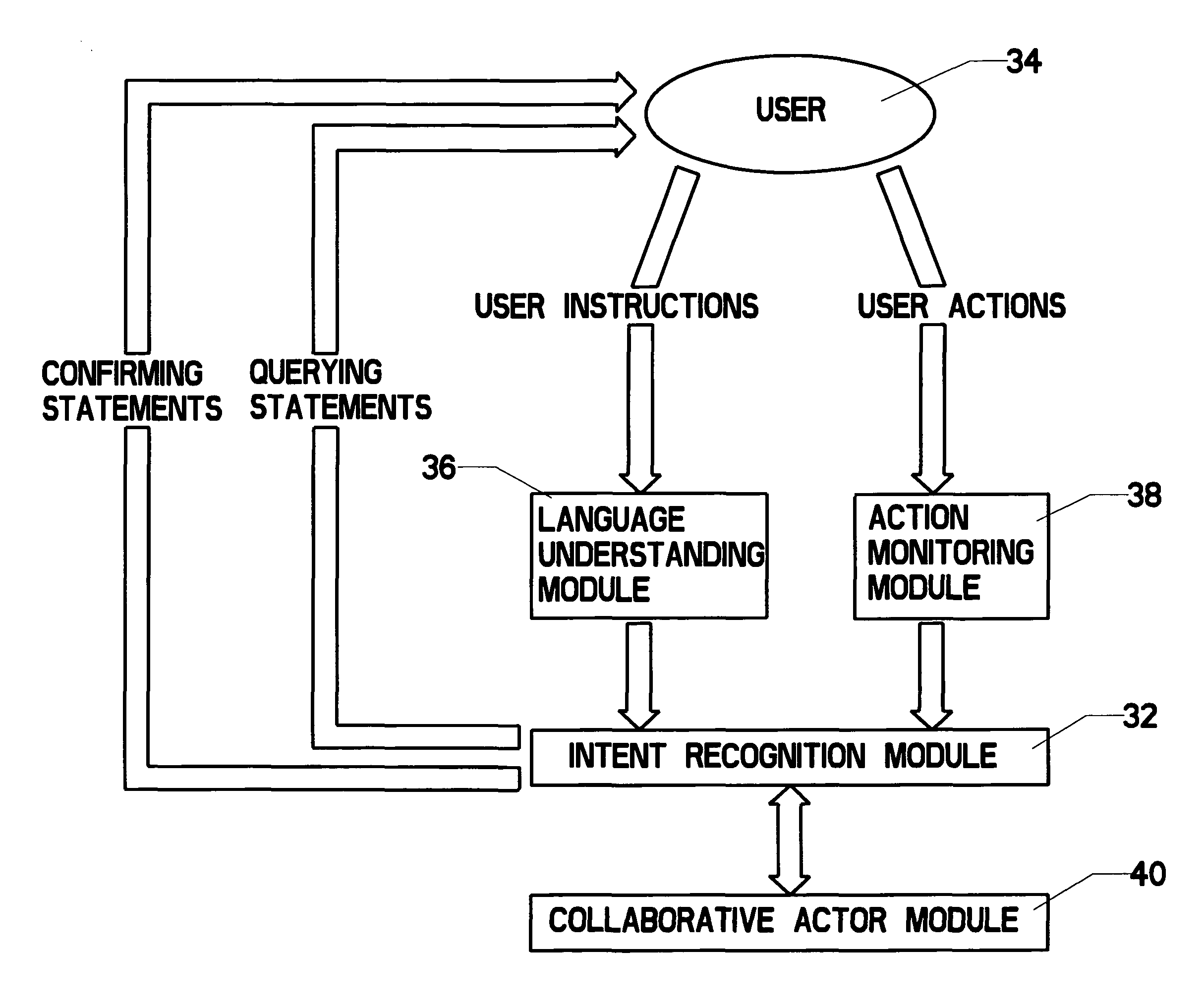

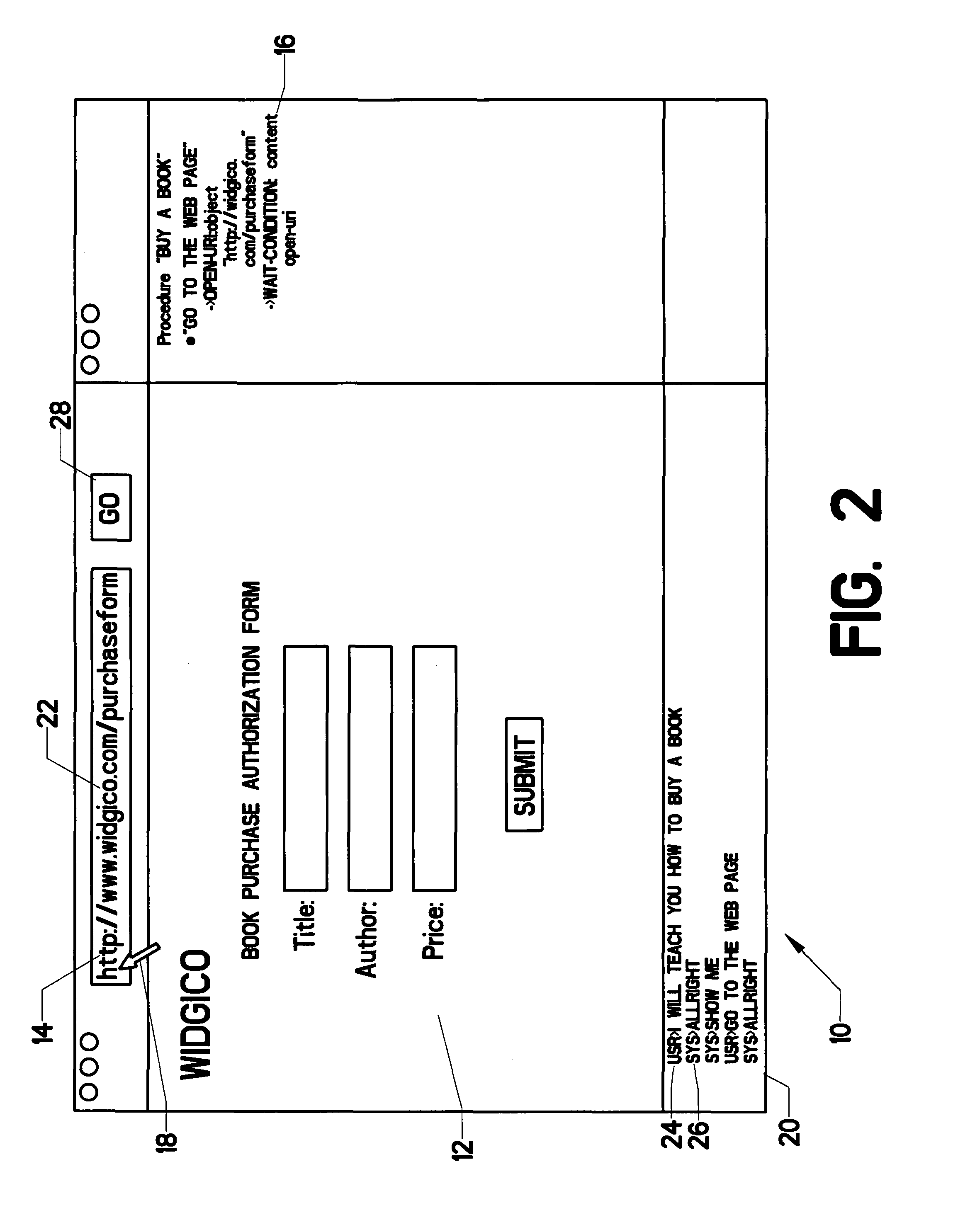

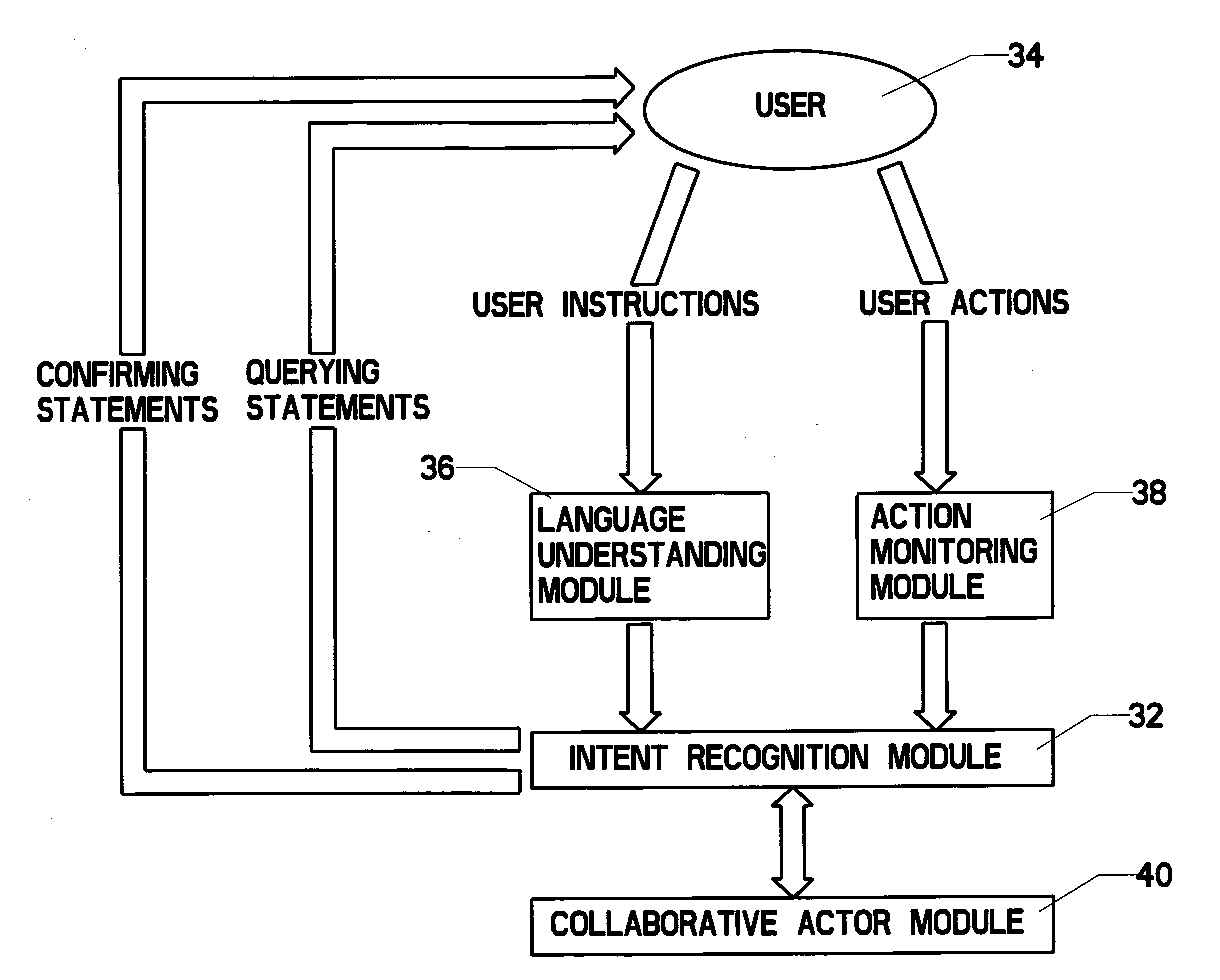

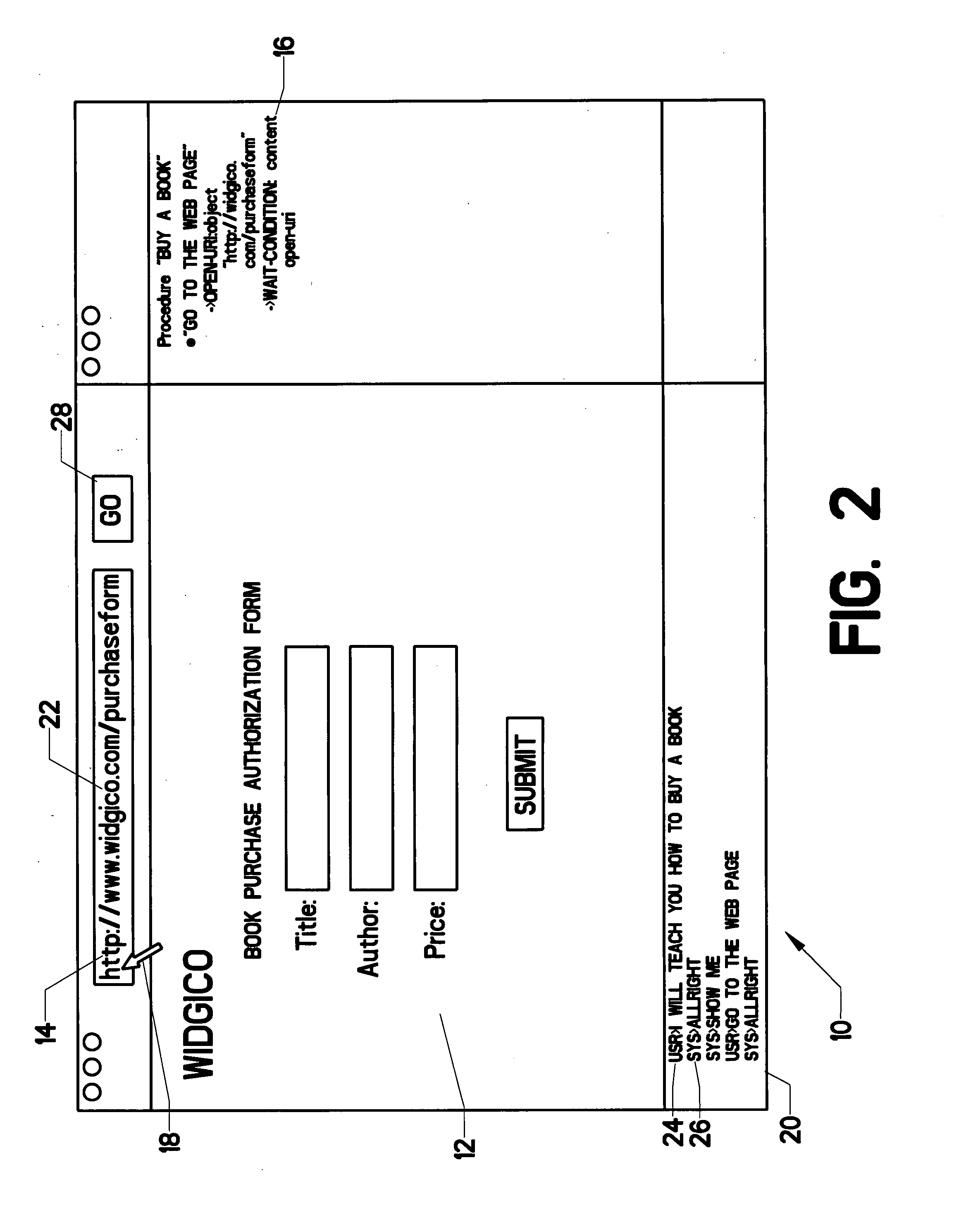

Interactive complex task teaching system that allows for natural language input, recognizes a user's intent, and automatically performs tasks in document object model (DOM) nodes

Active · US7983997B2 · Error detection/correction · Digital computer details · Repetitive task · Component Object Model

A system which allows a user to teach a computational device how to perform complex, repetitive tasks that the user usually would perform using the device's graphical user interface (GUI), which is often, but not limited to, a web browser. The system includes software running on a user's computational device. The user “teaches” task steps by inputting natural language and demonstrating actions with the GUI. The system uses a semantic ontology and natural language processing to create an explicit representation of the task that is stored on the computer. After a complete task has been taught, the system is able to automatically execute the task in new situations. Because the task is represented in terms of the ontology and user's intentions, the system is able to adapt to changes in the computer code while still pursuing the objectives taught by the user.

Owner:FLORIDA INST FOR HUMAN & MACHINE COGNITION

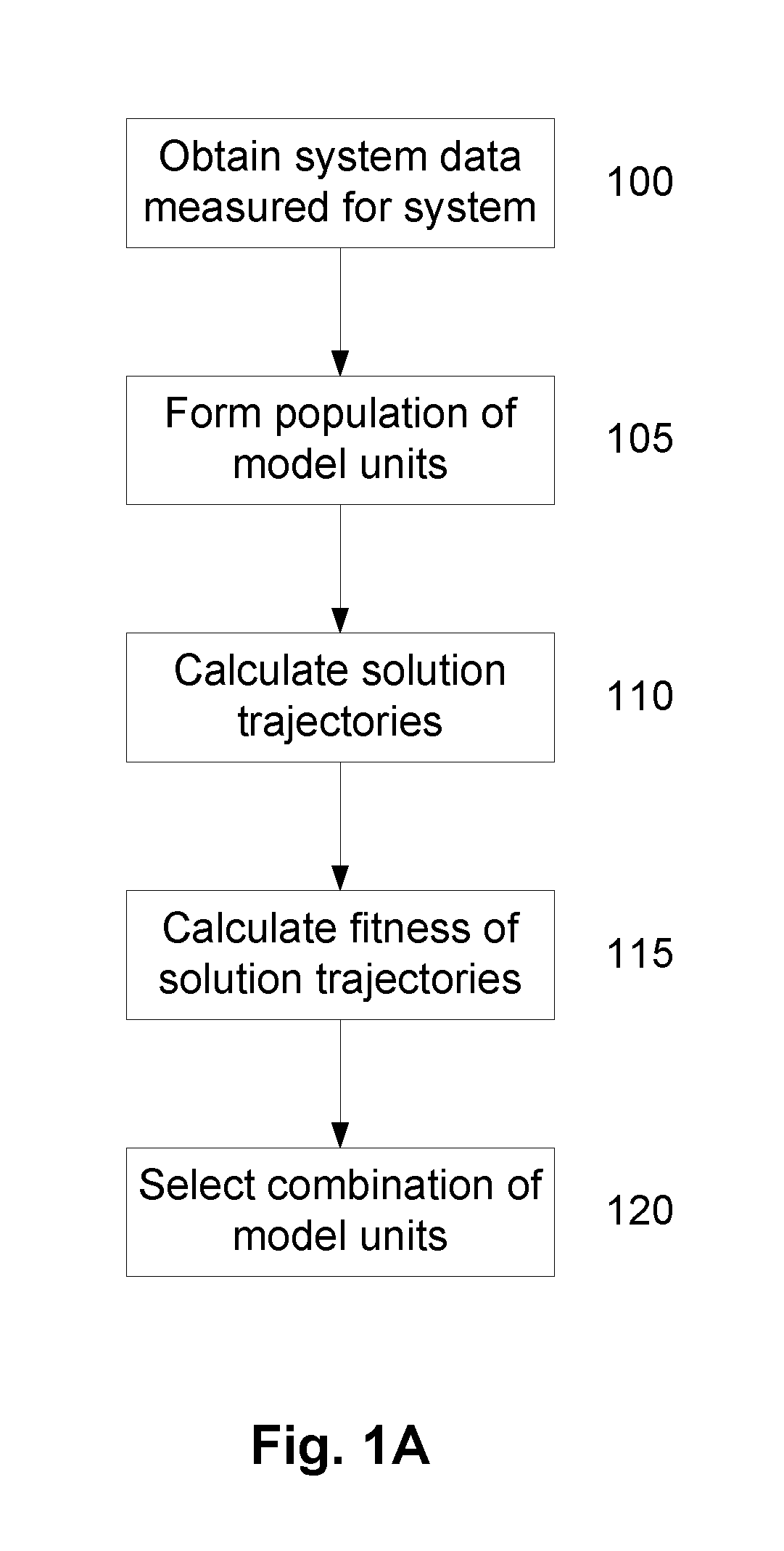

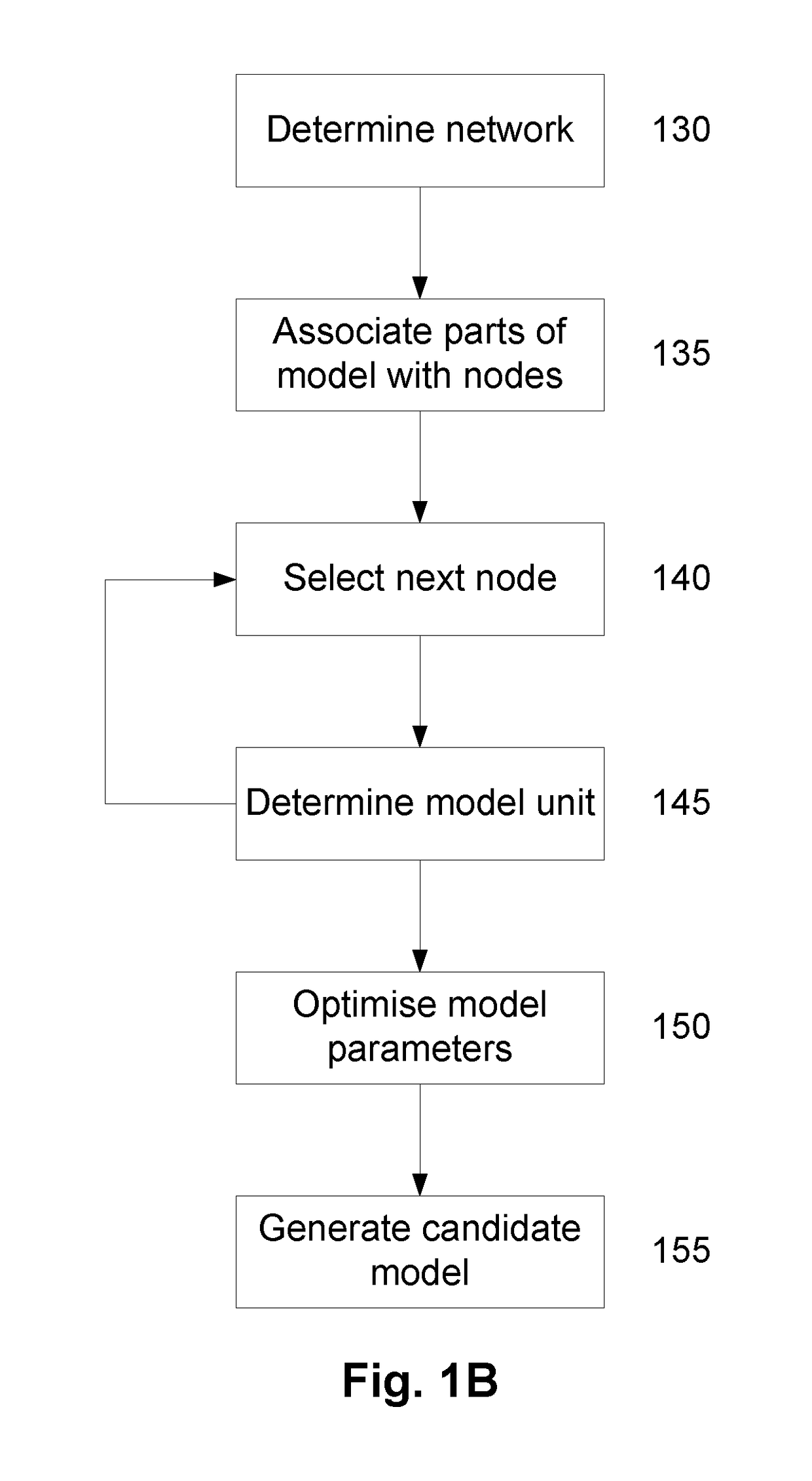

A System and Method for Modelling System Behaviour

Active · US20170147722A1 · Reduce the impact · Reduce impact · Medical simulation · Design optimisation/simulation · Collective model · Model system

A method of modelling system behaviour of a physical system, the method including, in one or more electronic processing devices: obtaining quantified system data measured for the physical system, the quantified system data being at least partially indicative of the system behaviour for at least a time period; forming at least one population of model units, each model unit including model parameters and at least part of a model, the model parameters being at least partially based on the quantified system data, each model including one or more mathematical equations for modelling system behaviour; for each model unit, calculating at least one solution trajectory for at least part of the at least one time period and determining a fitness value based at least in part on the at least one solution trajectory; and selecting a combination of model units using the fitness values of each model unit, the combination of model units representing a collective model that models the system behaviour.

Owner:EVOLVING MACHINE INTELLIGENCE

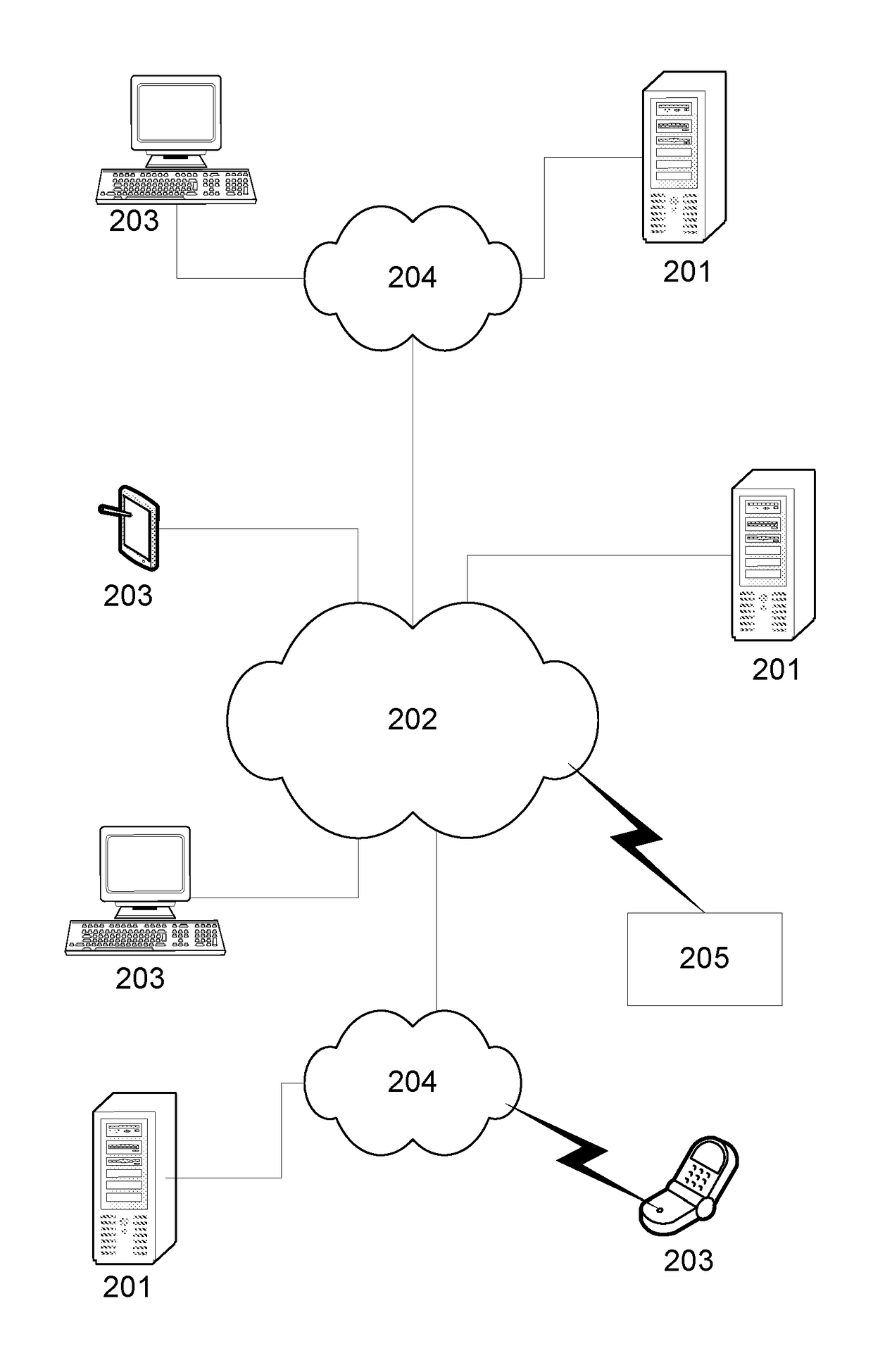

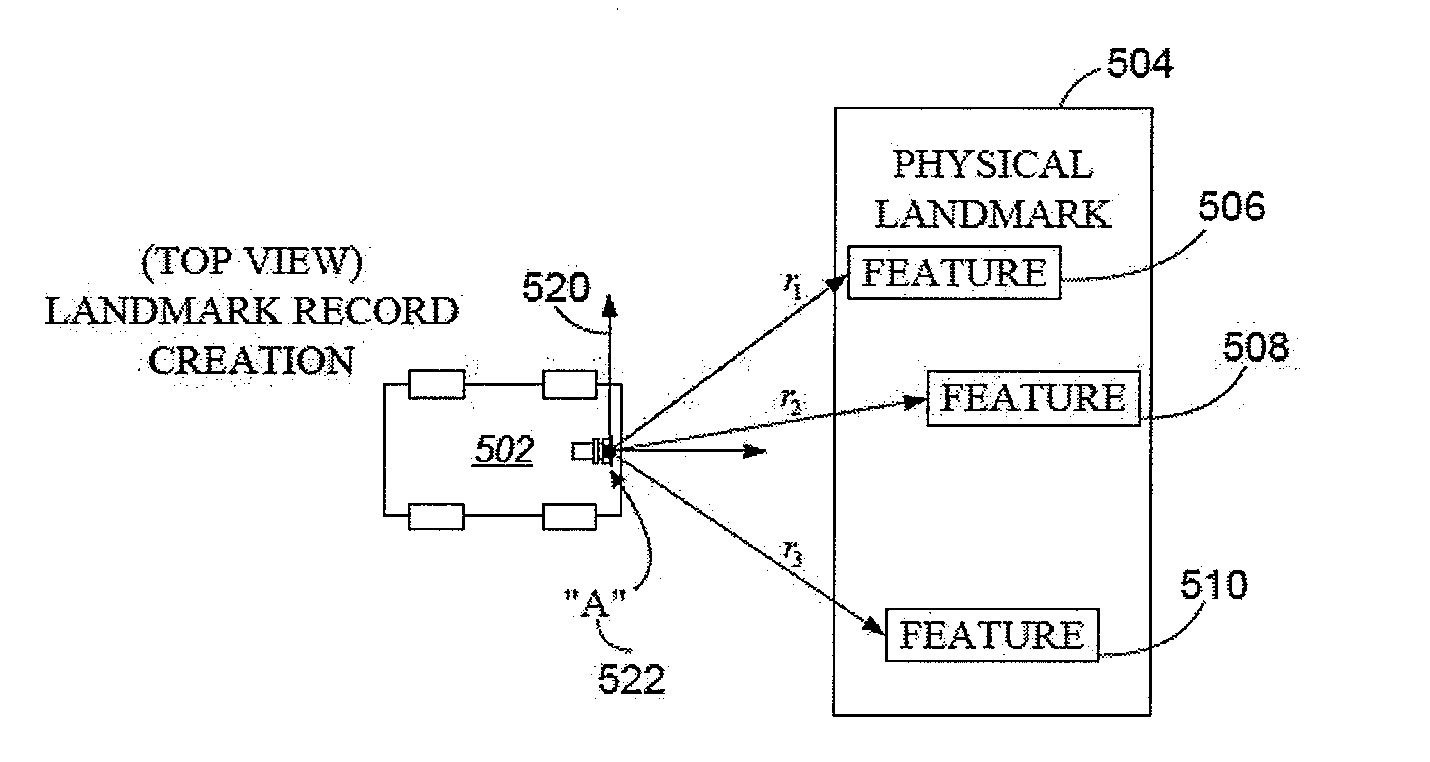

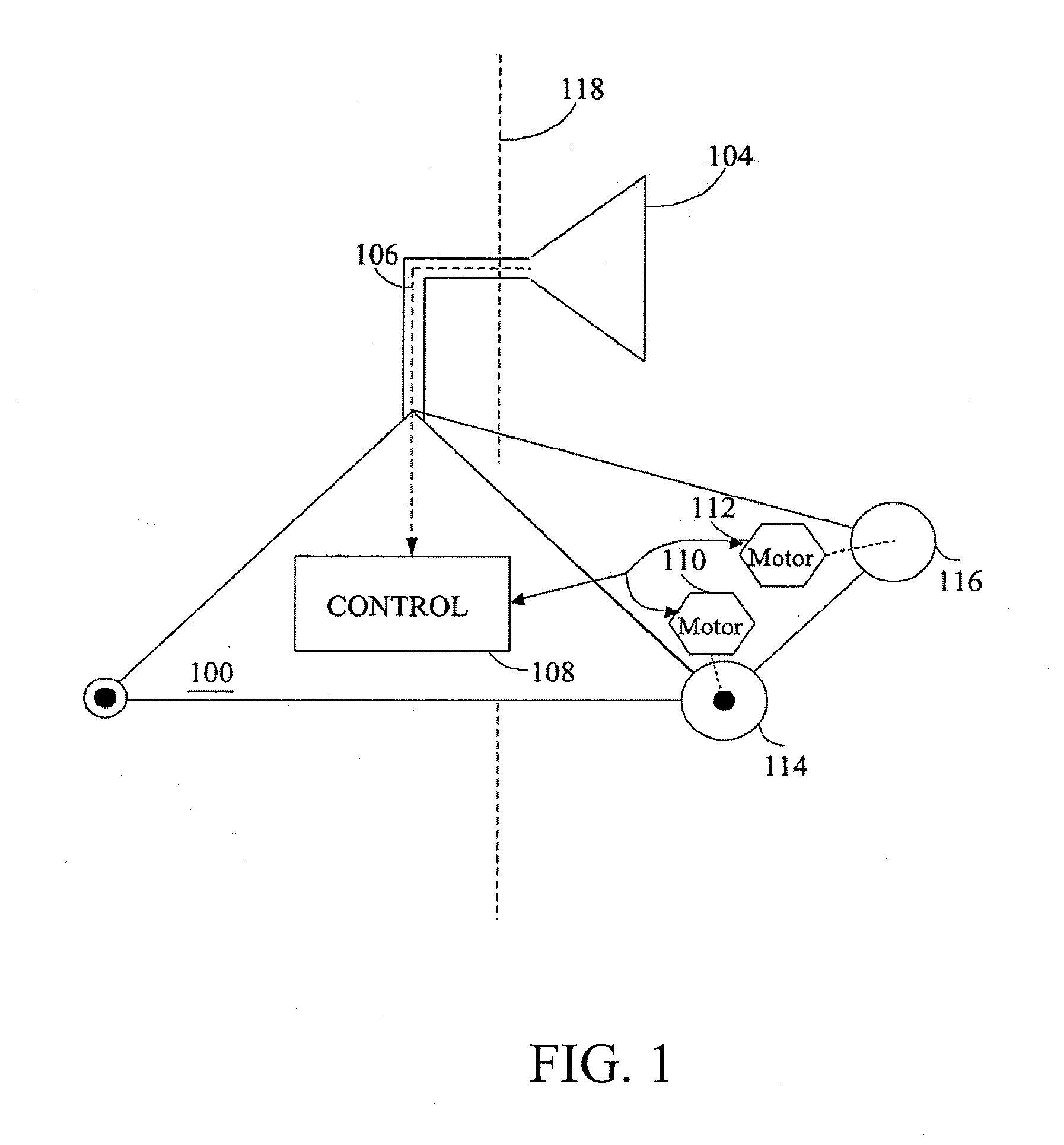

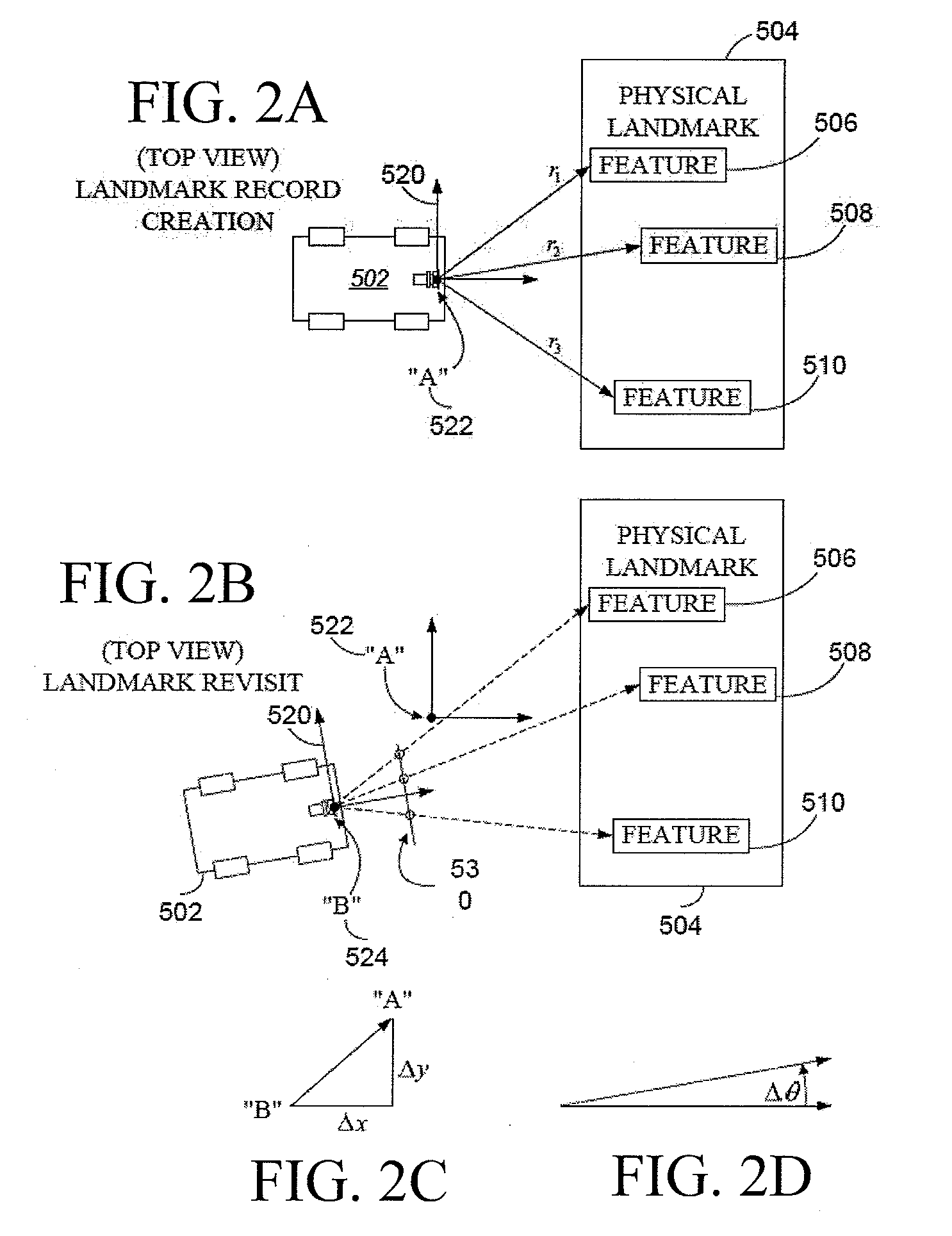

Systems and methods for VSLAM optimization

Active · US20120121161A1 · Database querying · Character and pattern recognition · Simultaneous localization and mapping · Landmark matching

The invention is related to methods and apparatus that use a visual sensor and dead reckoning sensors to process Simultaneous Localization and Mapping (SLAM). These techniques can be used in robot navigation. Advantageously, such visual techniques can be used to autonomously generate and update a map. Unlike with laser rangefinders, the visual techniques are economically practical in a wide range of applications and can be used in relatively dynamic environments, such as environments in which people move. Certain embodiments contemplate improvements to the front-end processing in a SLAM-based system. Particularly, certain of these embodiments contemplate a novel landmark matching process. Certain of these embodiments also contemplate a novel landmark creation process. Certain embodiments contemplate improvements to the back-end processing in a SLAM-based system. Particularly, certain of these embodiments contemplate algorithms for modifying the SLAM graph in real-time to achieve a more efficient structure.

Owner:IROBOT CORP

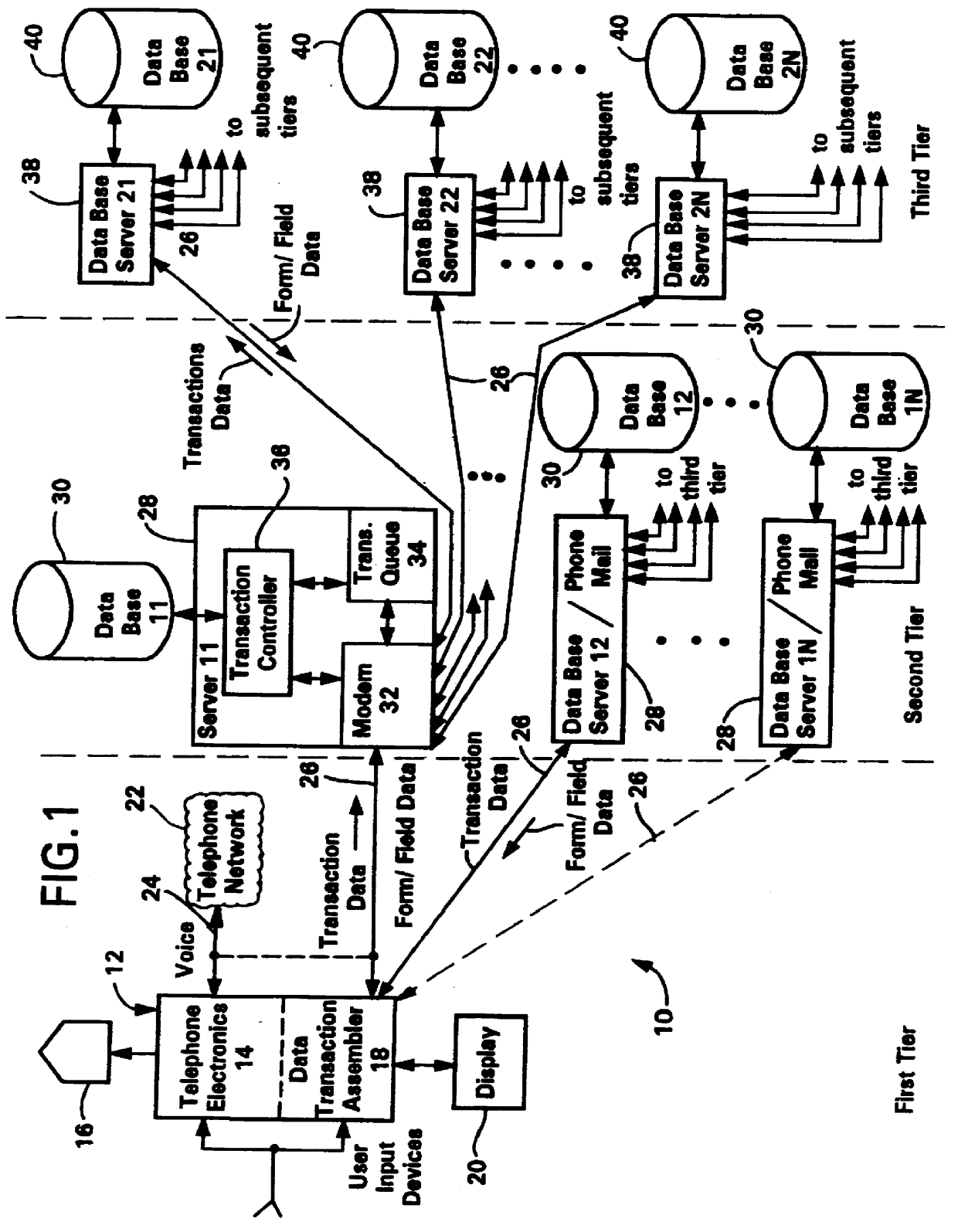

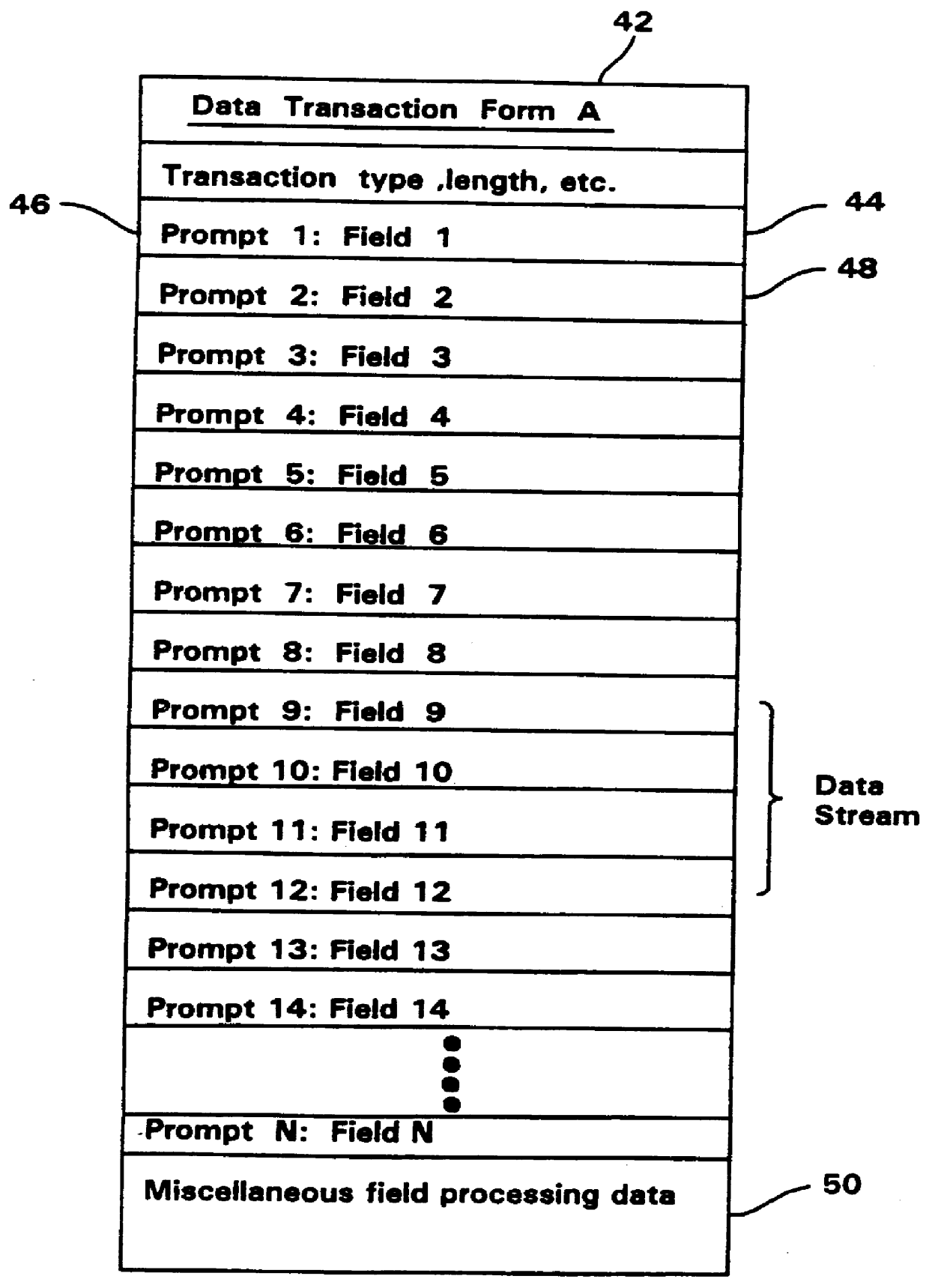

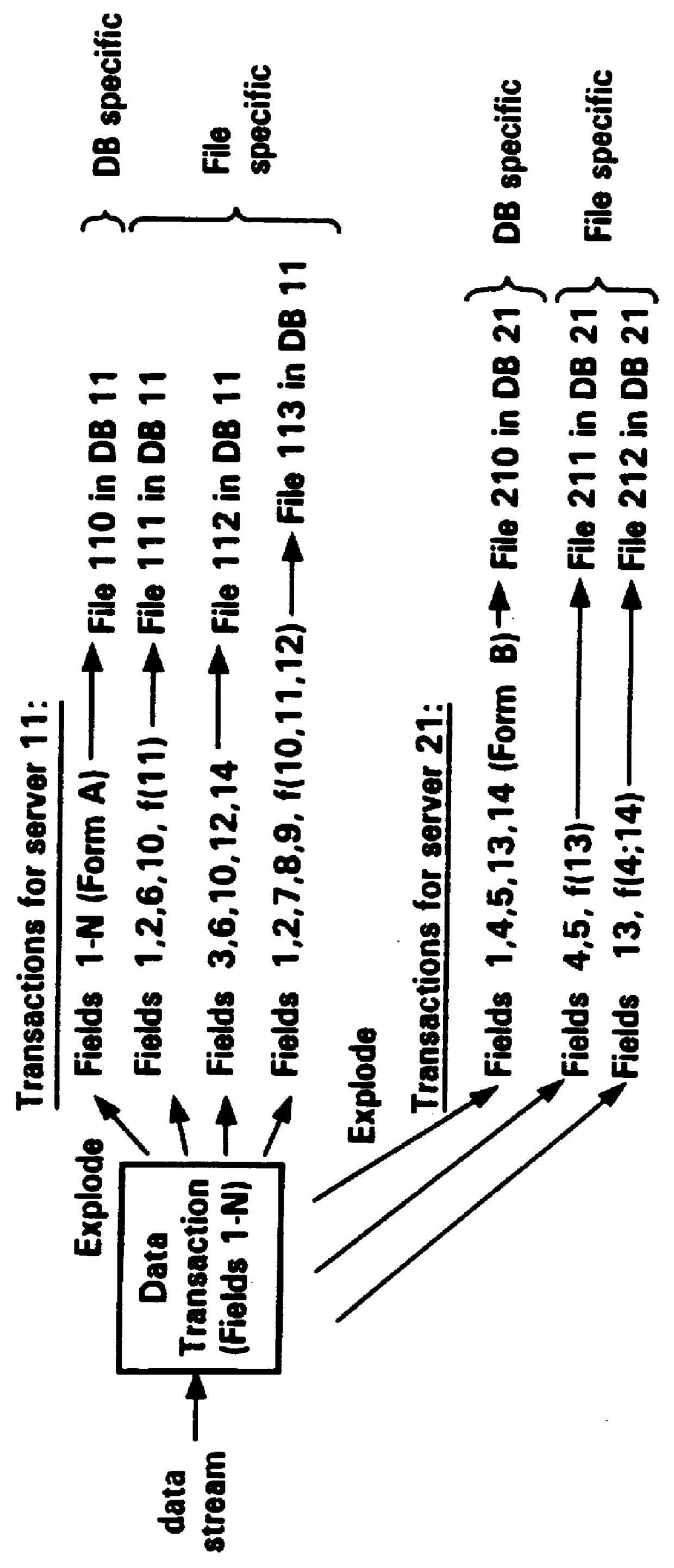

Data transaction assembly server

Inactive · US6044382A · Special service for subscribers · Two-way working systems · Data buffer · Application software

A form driven operating system which permits dynamic reconfiguration of any host processor into a virtual machine which supports any of a number of operating system independent applications. A data transaction assembly server (TAS) downloads menus and forms which are unique to each application requiring data to be input for local or remote processing. The data transactions and forms are exchanged between the TAS, which functions as a form driven operating system of the host computer, and a remote processor in a real-time fashion so that virtually any operating system independent software application may be implemented in which a form driven operating system may be used to facilitate input, and in which the data input into the form may be processed locally or remotely, returned as a data stream, and displayed to the user. The TAS merely requires a flash PROM for storing the TAS control firmware, a RAM for storing the data streams making up the forms and menus, and a small RAM which operates as an input/output transaction buffer for storing the data streams of the template and the user replies to the prompts during assembly of a data transaction.

Owner:CYBERFONE TECH

Interactive complex task teaching system

A system which allows a user to teach a computational device how to perform complex, repetitive tasks that the user usually would perform using the device's graphical user interface (GUI), which is often, but not limited to, a web browser. The system includes software running on a user's computational device. The user “teaches” task steps by inputting natural language and demonstrating actions with the GUI. The system uses a semantic ontology and natural language processing to create an explicit representation of the task that is stored on the computer. After a complete task has been taught, the system is able to automatically execute the task in new situations. Because the task is represented in terms of the ontology and user's intentions, the system is able to adapt to changes in the computer code while still pursuing the objectives taught by the user.

Owner:FLORIDA INST FOR HUMAN & MACHINE COGNITION

Ergonomic man-machine interface incorporating adaptive pattern recognition based control system

Inactive · US20070061735A1 · Decrease productivity · Improve the environment · Television system details · Recording carrier details · Human–machine interface · Data stream

An adaptive interface for a programmable system, for predicting a desired user function, based on user history, as well as machine internal status and context. The apparatus receives an input from the user and other data. A predicted input is presented for confirmation by the user, and the predictive mechanism is updated based on this feedback. Also provided is a pattern recognition system for a multimedia device, wherein a user input is matched to a video stream on a conceptual basis, allowing inexact programming of a multimedia device. The system analyzes a data stream for correspondence with a data pattern for processing and storage. The data stream is subjected to adaptive pattern recognition to extract features of interest to provide a highly compressed representation which may be efficiently processed to determine correspondence. Applications of the interface and system include a VCR, medical device, vehicle control system, audio device, environmental control system, securities trading terminal, and smart house. The system optionally includes an actuator for effecting the environment of operation, allowing closed-loop feedback operation and automated learning.

Owner:BLANDING HOVENWEEP

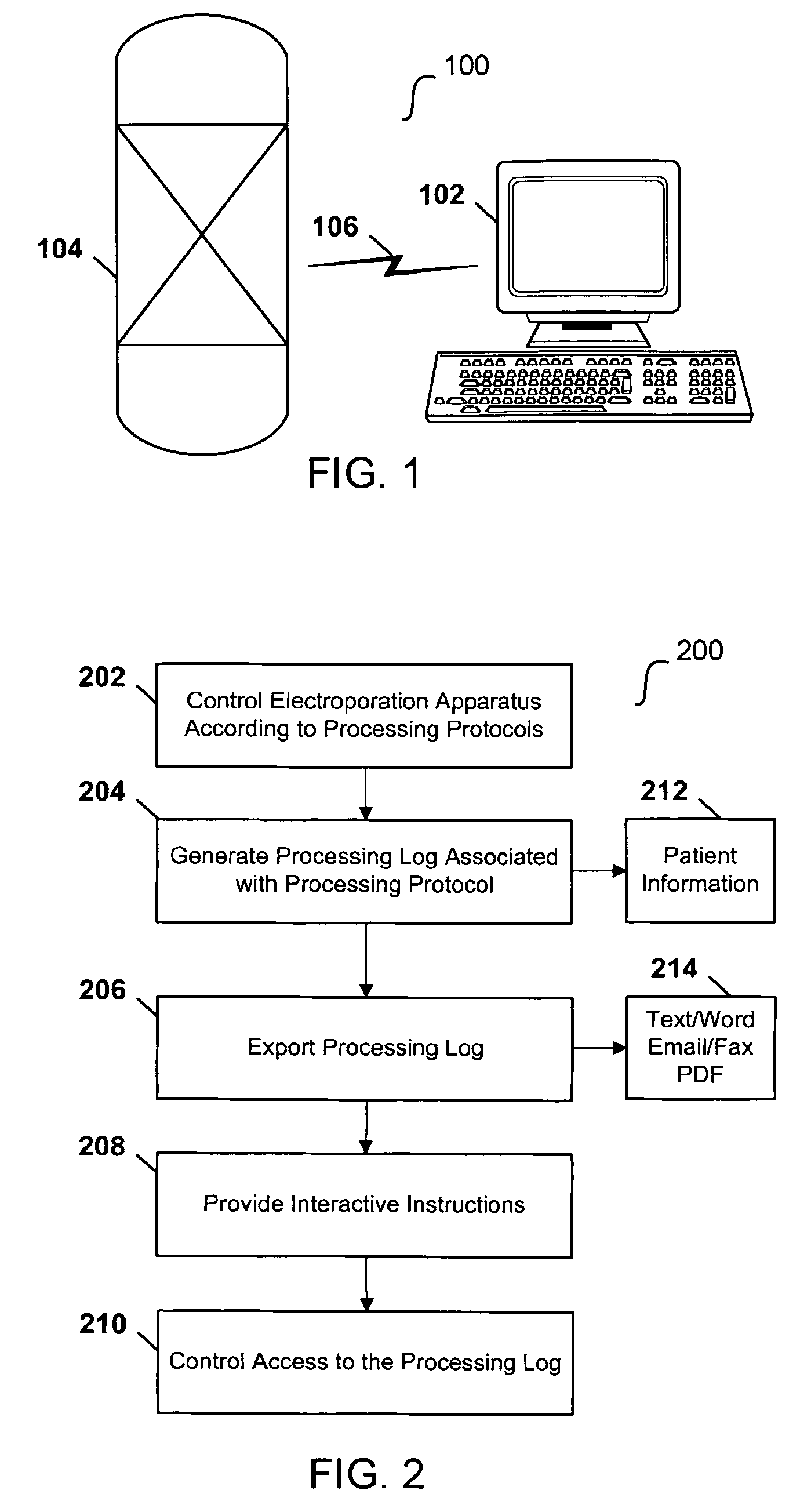

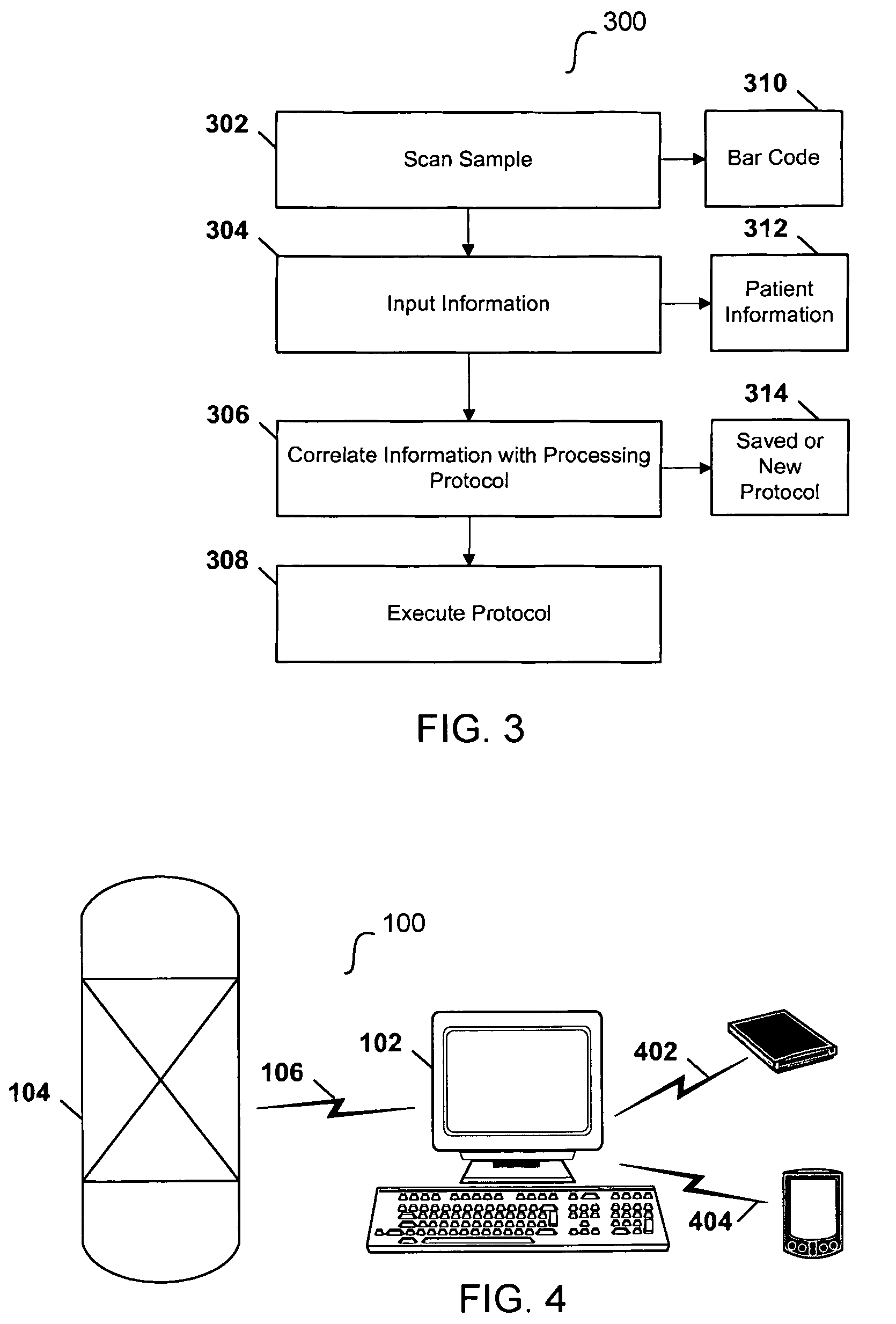

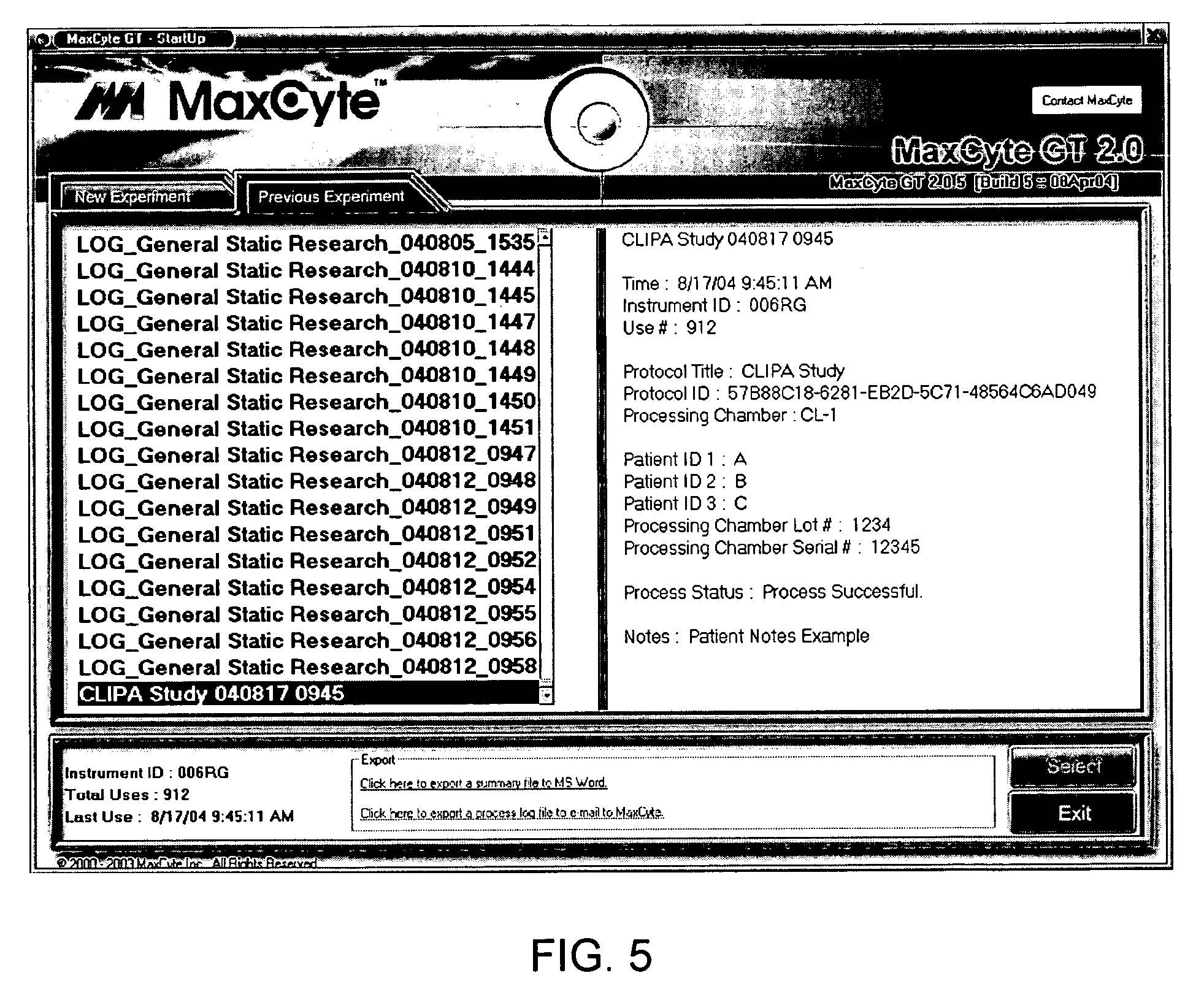

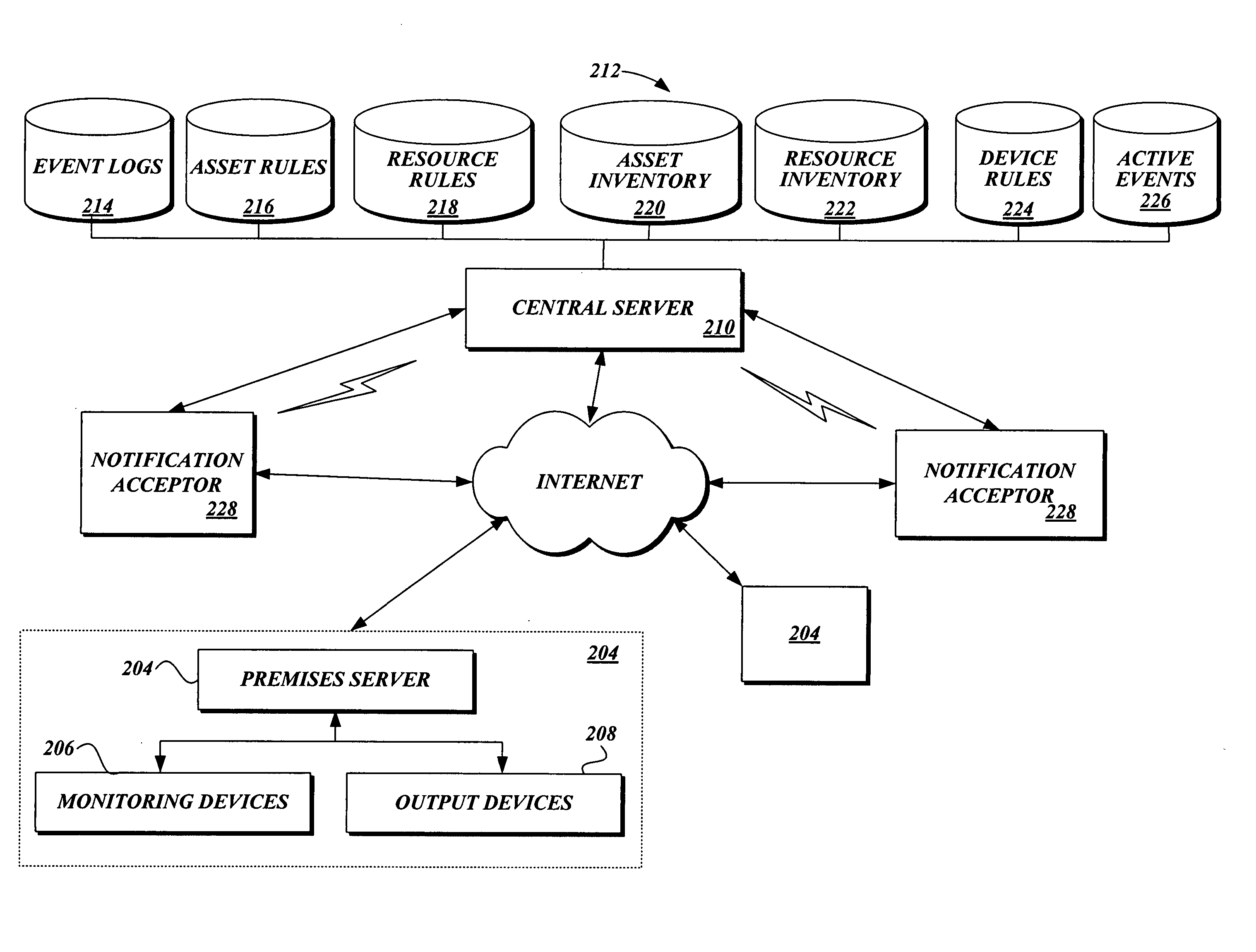

Computerized electroporation

Active · US7991559B2 · General purpose stored program computer · Other foreign material introduction processes · Information processing · Processing

Techniques for computerized electroporation. An electroporation apparatus may be controlled according to one of a plurality of previously-saved, user-defined processing protocols. A processing log associated with a processing protocol may be generated, and the processing log may include patient or sample specific information. The processing log or a summary of the processing log may be exported to a user. Interactive instructions may be provided to a user. Those instructions may correspond to one or more steps of a processing protocol.

Owner:MAXCYTE INC

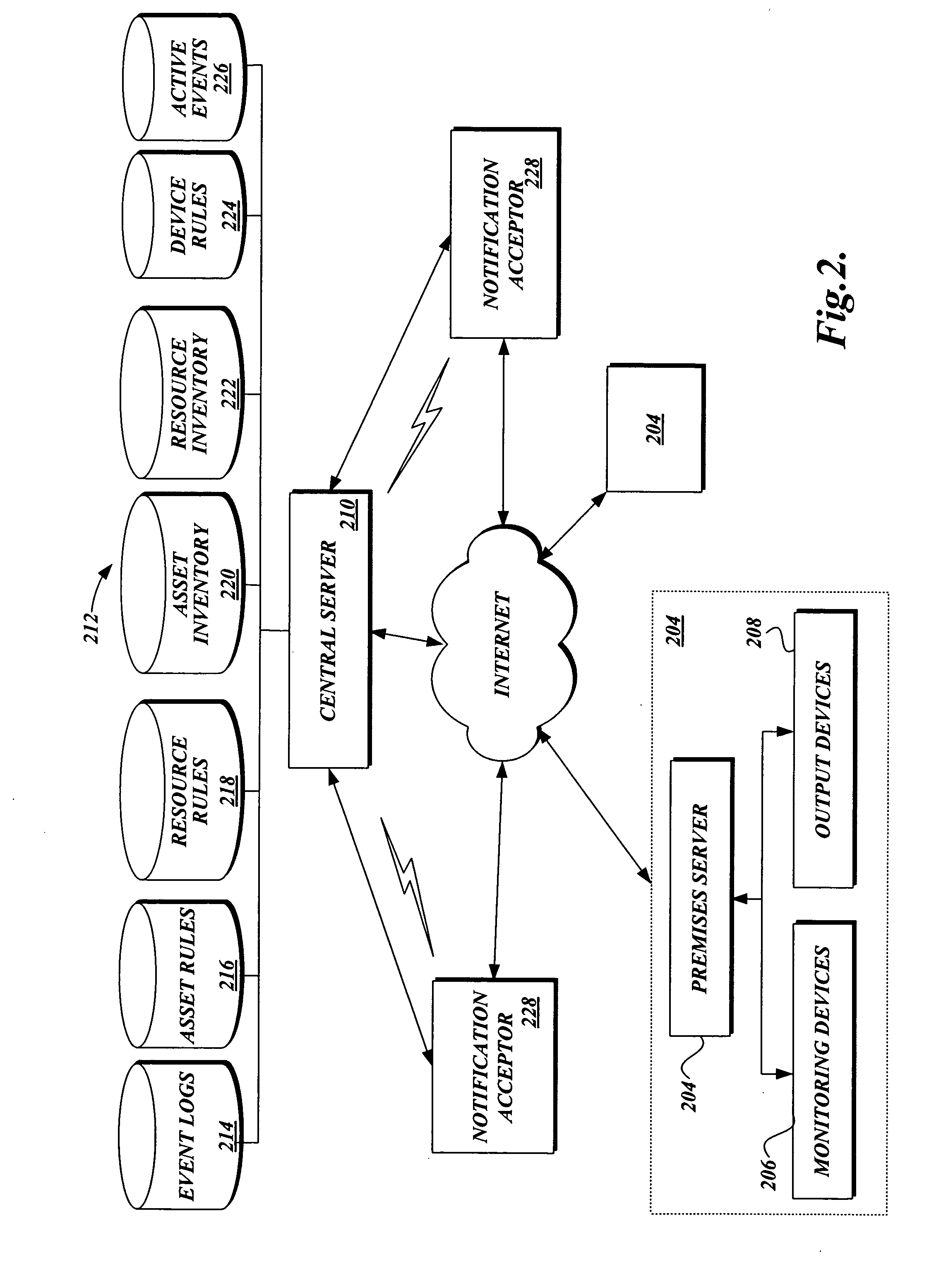

Method and process for configuring a premises for monitoring

A system and method for configuring an integrated information system through a common user interface are provided. A user accesses a graphical user interface and selects client, premises, location, monitoring device, and processing rule information. The graphical user interface transmits the user selection to a processing server, which configures one or more monitoring devices according to the user selections.

Owner:VIG ACQUISITIONS

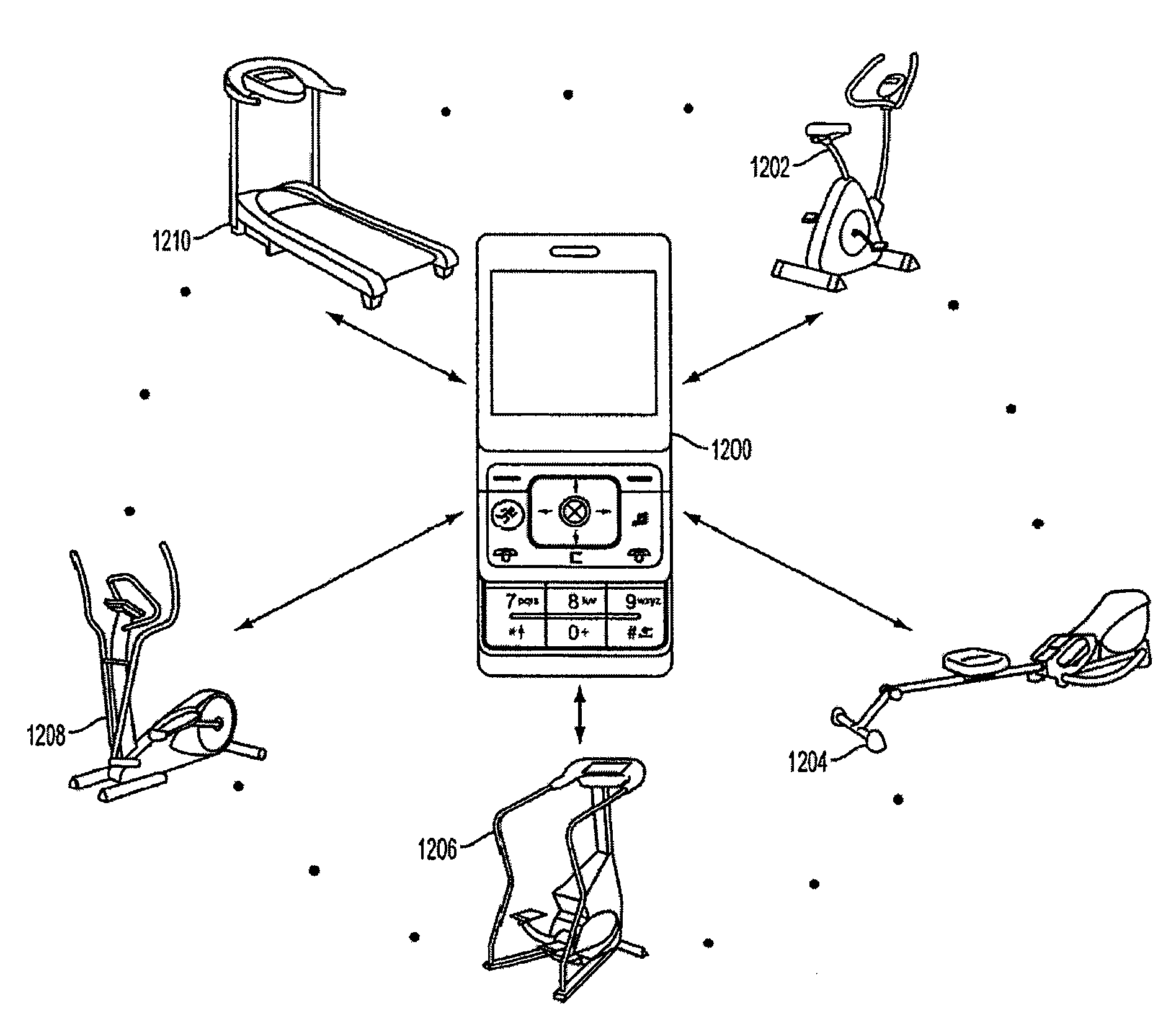

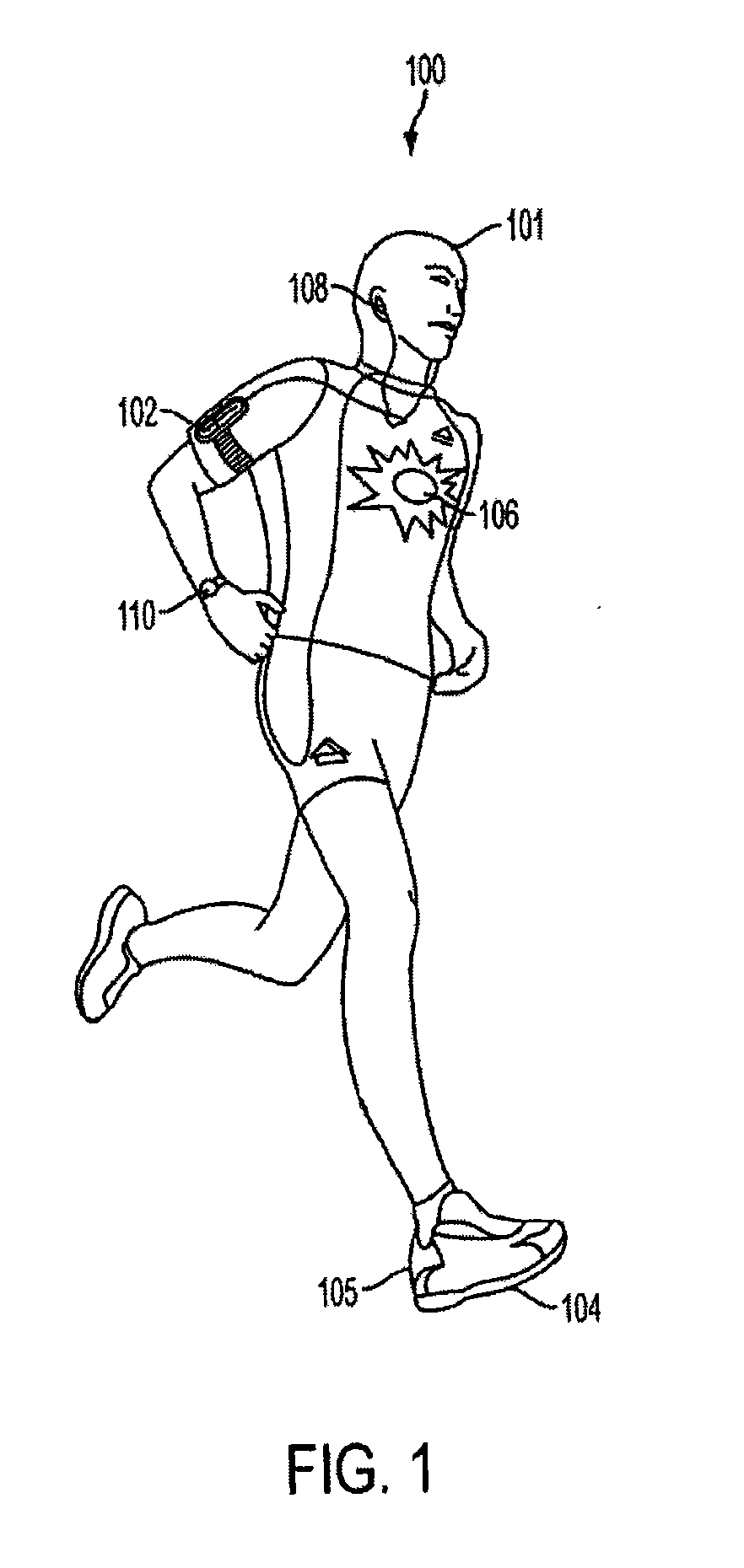

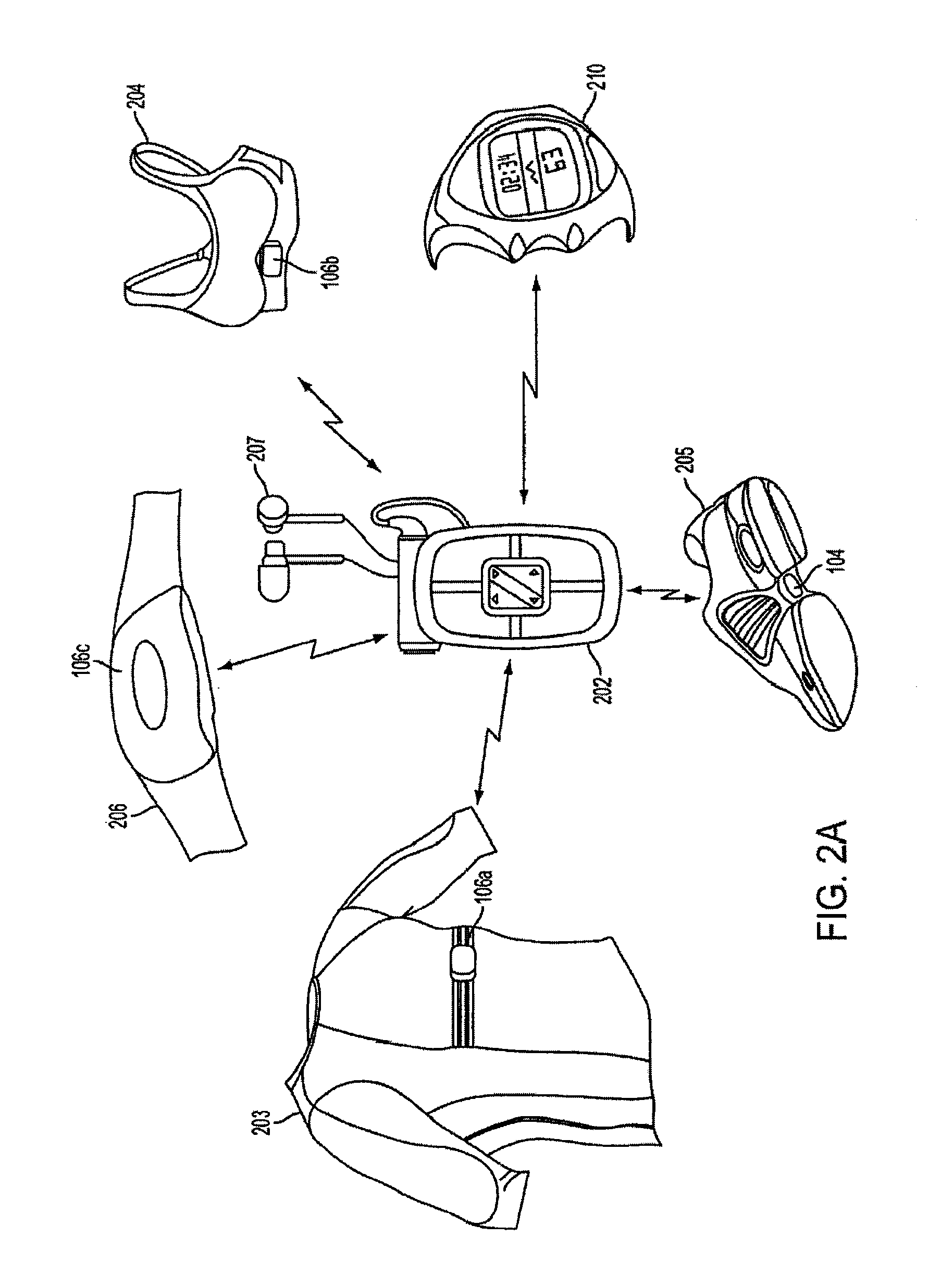

Sports Electronic Training System With Electronic Gaming Features, And Applications Thereof

Active · US20090233770A1 · Function increase · Assess a fitness level for an individual · Physical therapies and activities · Position fixation · Game based · Human–computer interaction

A sports electronic training system with electronic gaming features, and applications thereof, are disclosed. In an embodiment, the system comprises at least one monitor and a portable electronic processing device for receiving data from the at least one monitor and providing feedback to an individual based on the received data. The monitor can be a motion monitor that measures an individual's performance such as, for example, speed, pace and distance for a runner. Other monitors might include a heart rate monitor, a temperature monitor, an altimeter, et cetera. In an embodiment, an input is provided to an electronic game based on data obtained from the at least one monitor that effects, for example, an avatar, a digitally created character, an action within the game, or a game score of the electronic game.

Owner:ADIDAS

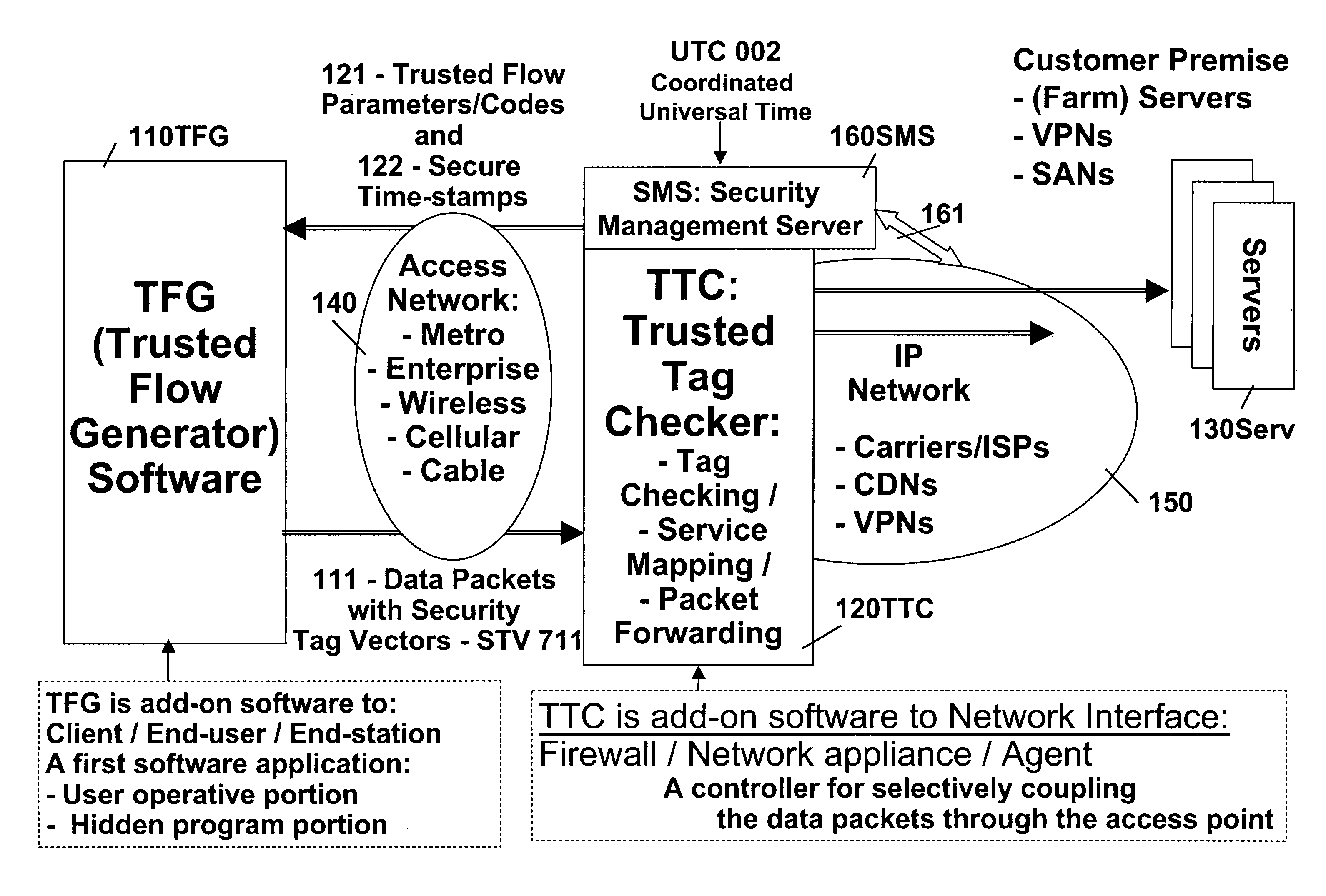

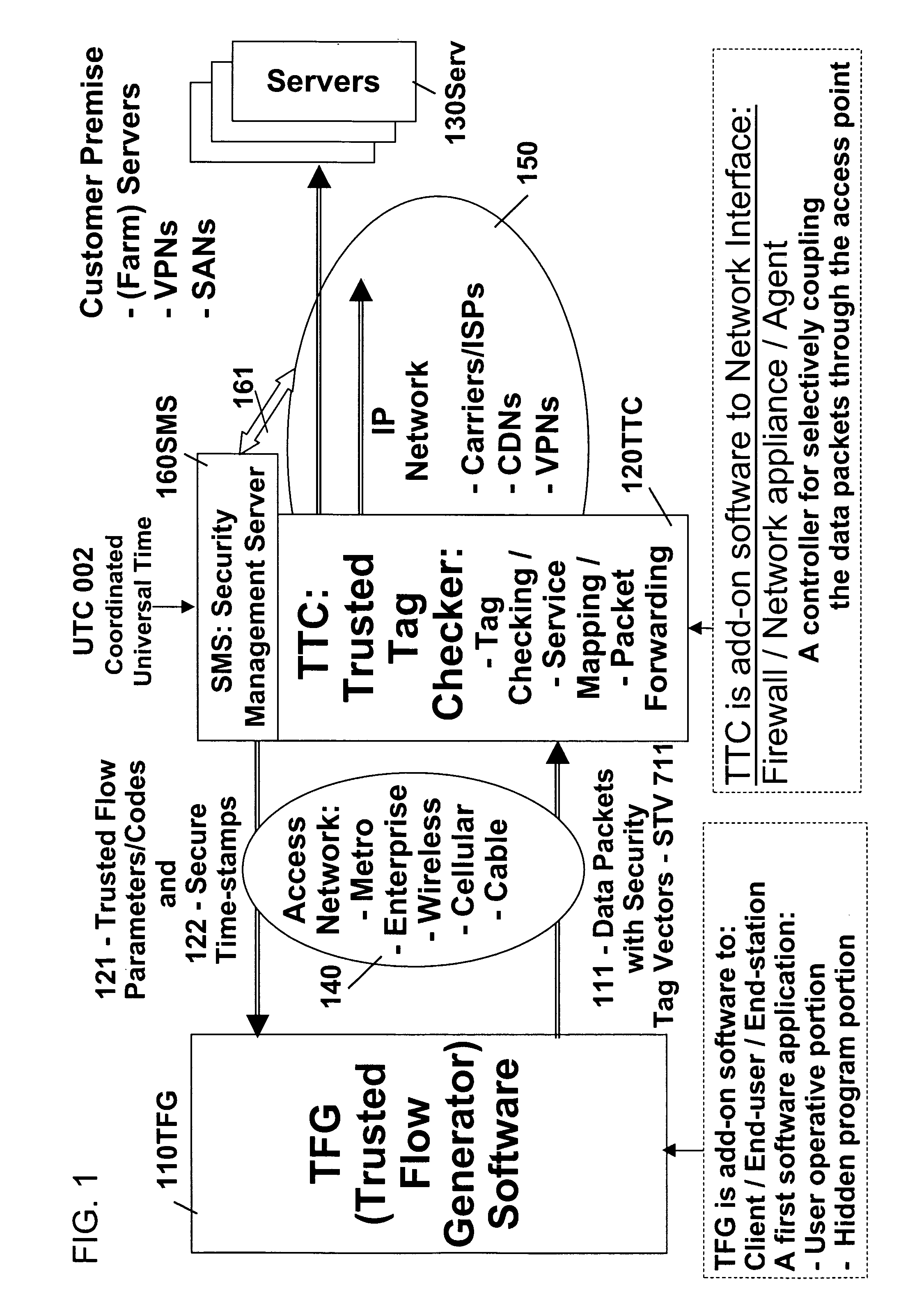

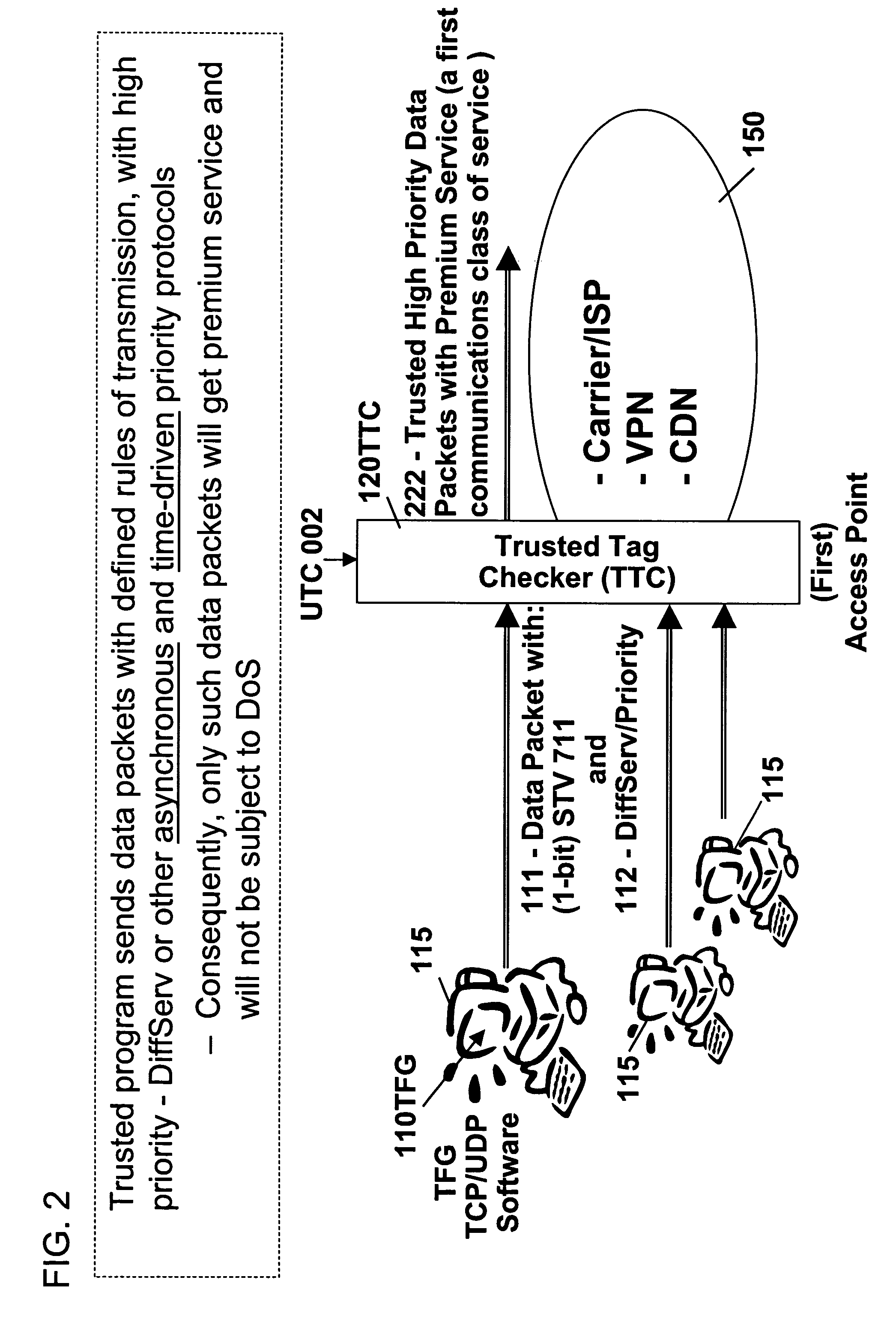

Remotely authenticated operation method

Inactive · US7509687B2 · Improvement efforts · Efficient use of · Digital data processing details · User identity/authority verification · Trusted Computing · Media server

The objective of this invention is to provide continuous remote authenticated operations for ensuring proper content processing and management in a remote untrusted computing environment. The method is based on using a program that was hidden within the content protection program at the remote untrusted computing environment, e.g., an end station. The hidden program can be updated dynamically and it includes an inseparable and interlocked functionality for generating a pseudo-random sequence of security signals. Only the media server that sends the content knows how the pseudo-random sequence of security signals was generated; therefore, the media server is able to check the validity of the security signals, and thereby verify the authenticity of the programs used to process content at the remote untrusted computing environment. If the verification operation fails, the media server will stop the transmission of content to the remote untrusted computing environment.

Owner:ATTESTWAVE LLC
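The verification loop described above (the end station emits a pseudo-random sequence of security signals, and the media server checks each one and stops transmission on failure) can be illustrated with a short sketch. This is not the patent's method: the use of HMAC-SHA256 over a shared secret and a counter is a swapped-in, assumed construction, and the names `security_signal` and `MediaServer` are hypothetical.

```python
import hashlib
import hmac


def security_signal(secret: bytes, counter: int) -> bytes:
    """Derive the counter-th signal of a pseudo-random sequence.

    Assumption: HMAC-SHA256 keyed with a shared secret stands in for the
    hidden program's generator, which the abstract does not specify.
    """
    return hmac.new(secret, counter.to_bytes(8, "big"), hashlib.sha256).digest()


class MediaServer:
    """Hypothetical server that keeps streaming only while signals verify."""

    def __init__(self, secret: bytes):
        self.secret = secret
        self.counter = 0
        self.streaming = True

    def check(self, signal: bytes) -> bool:
        expected = security_signal(self.secret, self.counter)
        if hmac.compare_digest(signal, expected):
            self.counter += 1       # advance to the next expected signal
        else:
            self.streaming = False  # verification failed: stop transmission
        return self.streaming


server = MediaServer(b"shared-secret")
print(server.check(security_signal(b"shared-secret", 0)))  # valid first signal
print(server.check(security_signal(b"shared-secret", 1)))  # valid second signal
print(server.check(b"\x00" * 32))                           # forged signal
```

In this sketch a single failed check is permanent: once `streaming` is cleared, later valid signals do not resume transmission, which mirrors the "stop the transmission" behaviour in the abstract.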

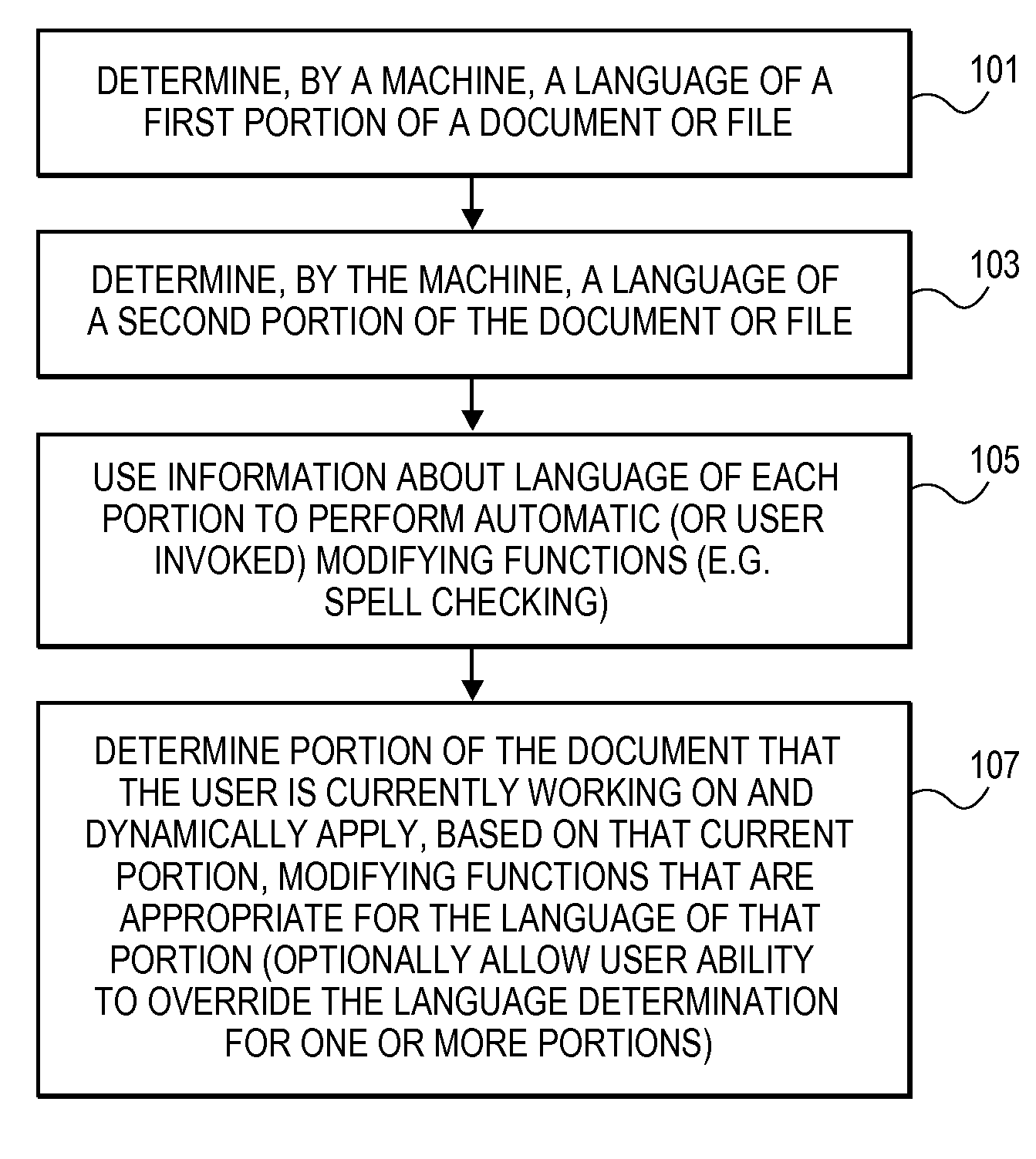

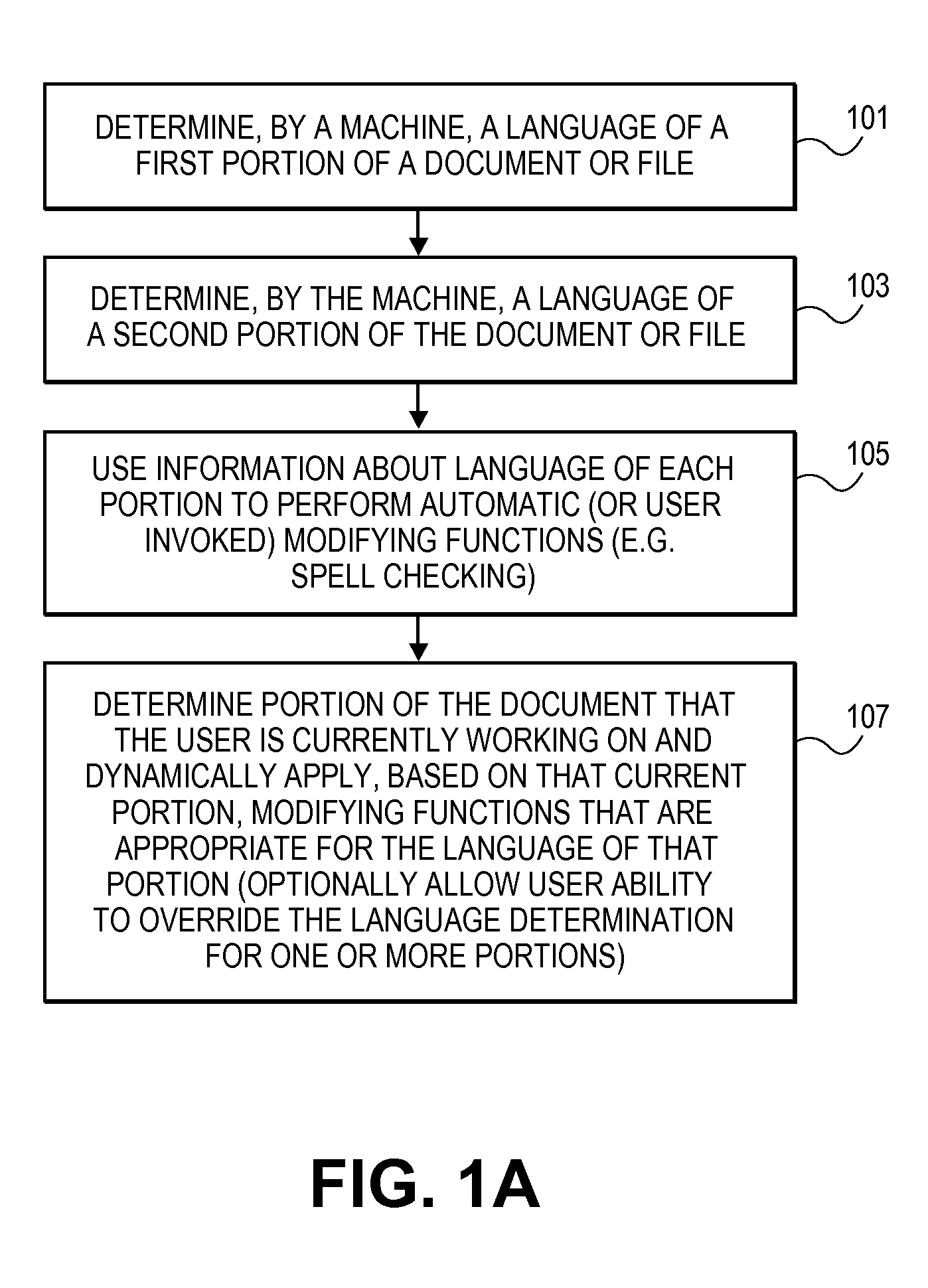

Automatic language identification for dynamic text processing

Active · US20090307584A1 · Natural language translation · Special data processing applications · Human language · Dynamic text

Methods and systems which utilize, in one embodiment, automatic language identification, including automatic language identification for dynamic text processing. In at least certain embodiments, automatic language identification can be applied to spellchecking in real time as the user types.

Owner:APPLE INC

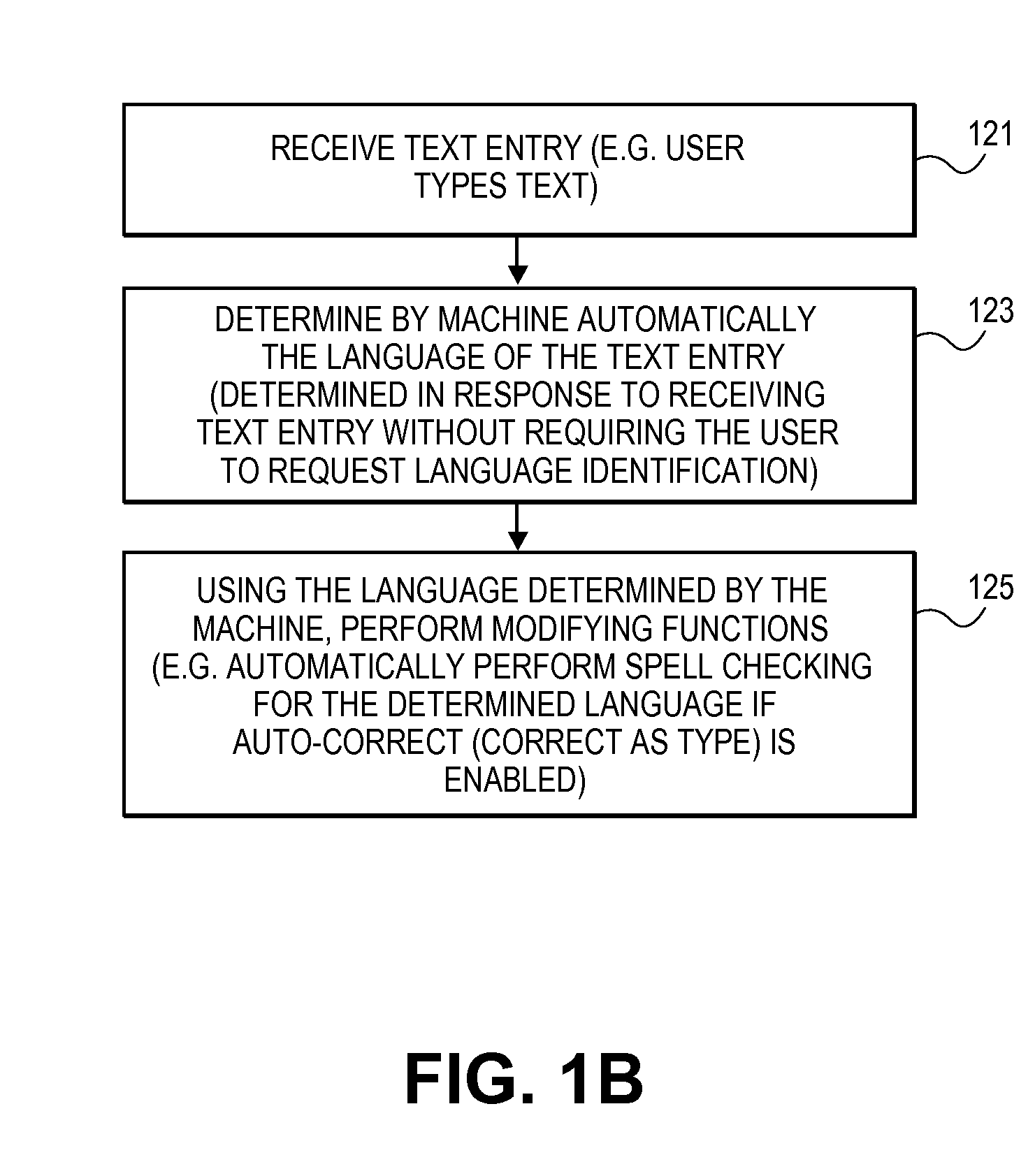

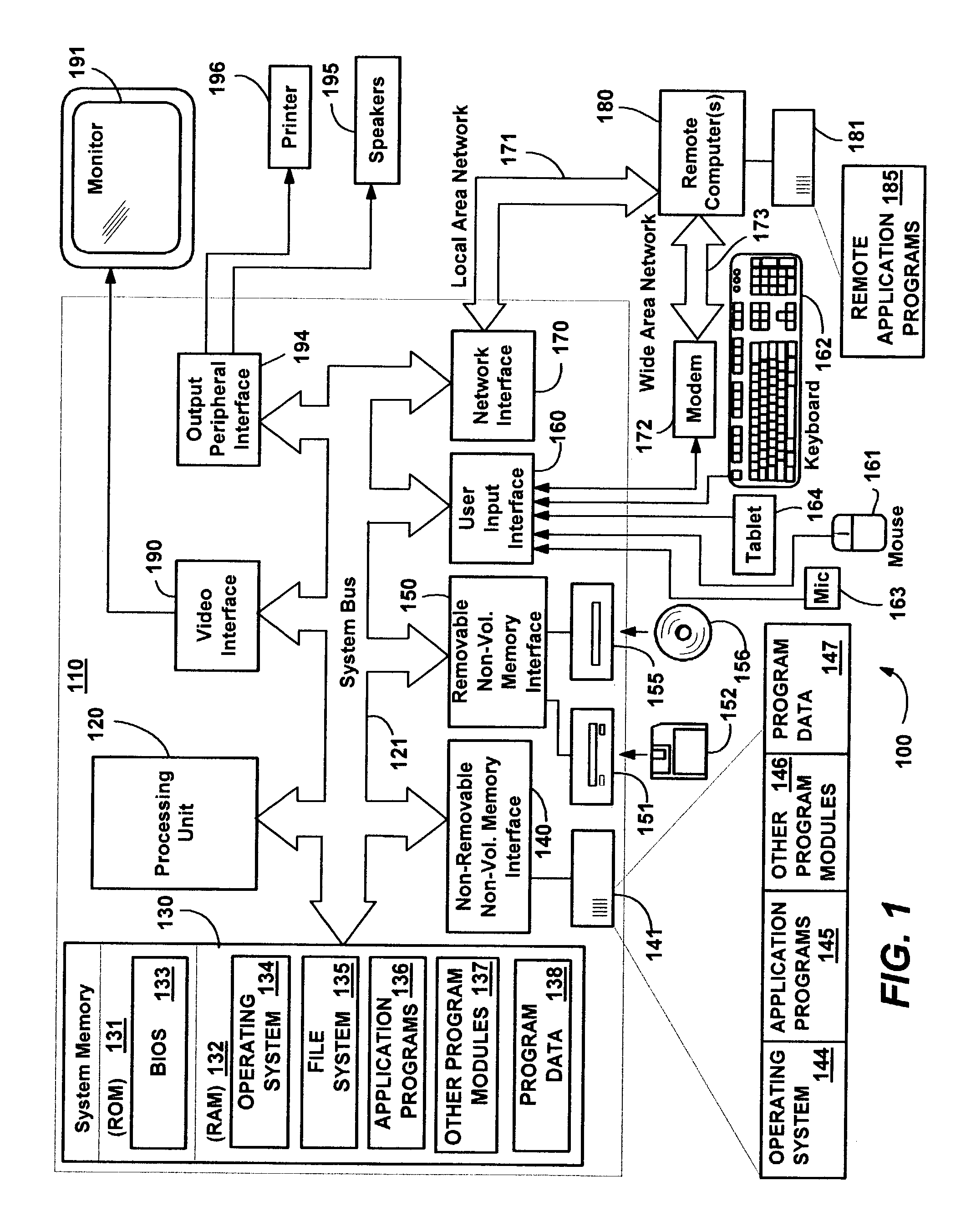

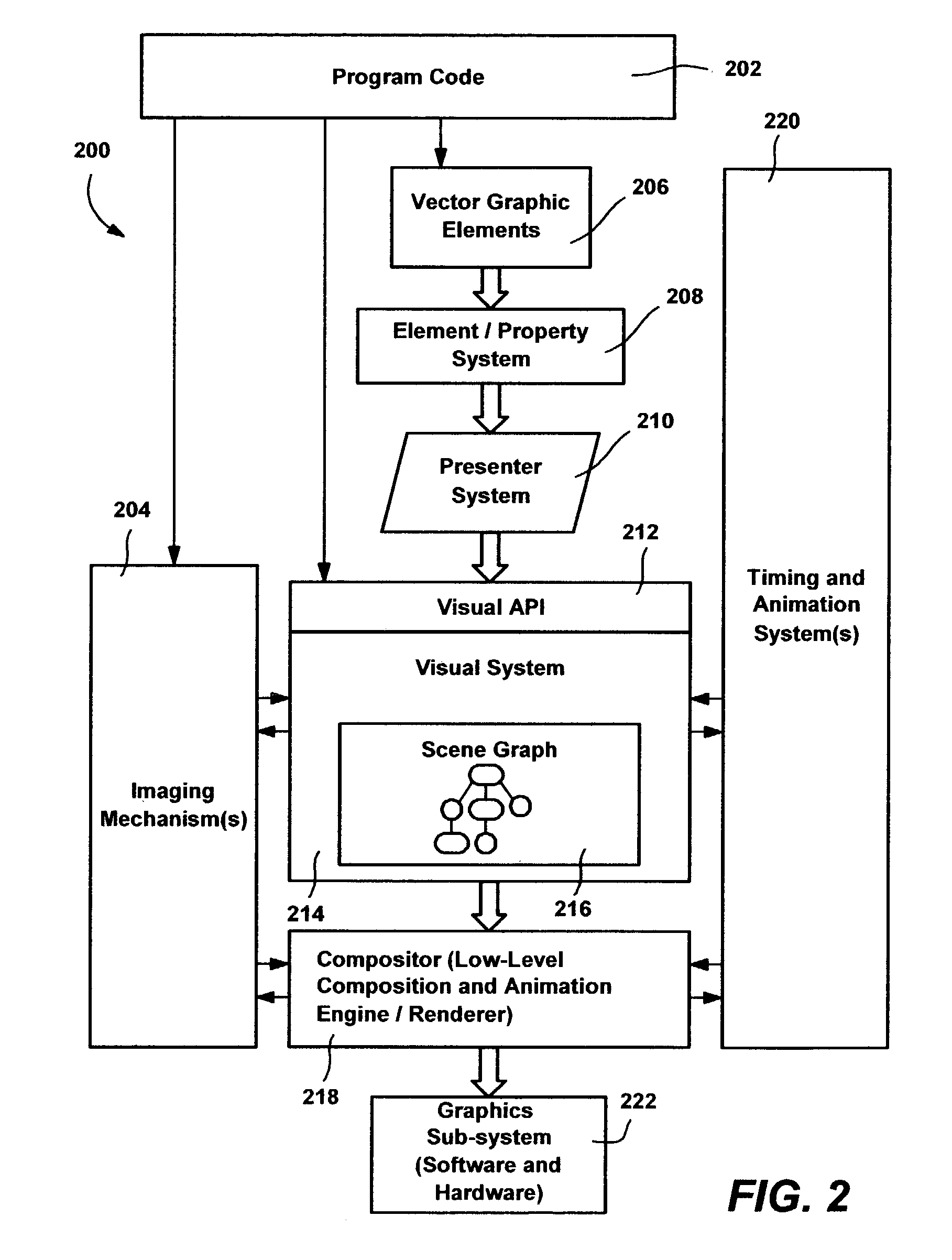

System and method for managing visual structure, timing, and animation in a graphics processing system

Inactive · US7088374B2 · Smooth animation · Drawing from basic elements · Cathode-ray tube indicators · Graphics · Animation

A visual tree structure as specified by a program is constructed and maintained by a visual system's user interface thread. As needed, the tree structure is traversed on the UI thread, with changes compiled into change queues. A secondary rendering thread that handles animation and graphical composition takes the content from the change queues, to construct and maintain a condensed visual tree. Static visual subtrees are collapsed, leaving a condensed tree with only animated attributes such as transforms as parent nodes, such that animation data is managed on the secondary thread, with references into the visual tree. When run, the rendering thread processes the change queues, applies changes to the condensed trees, and updates the structure of the animation list as necessary by resampling animated values at their new times. Content in the condensed visual tree is then rendered and composed. Animation and a composition communication protocol are also provided.

Owner:MICROSOFT TECH LICENSING LLC

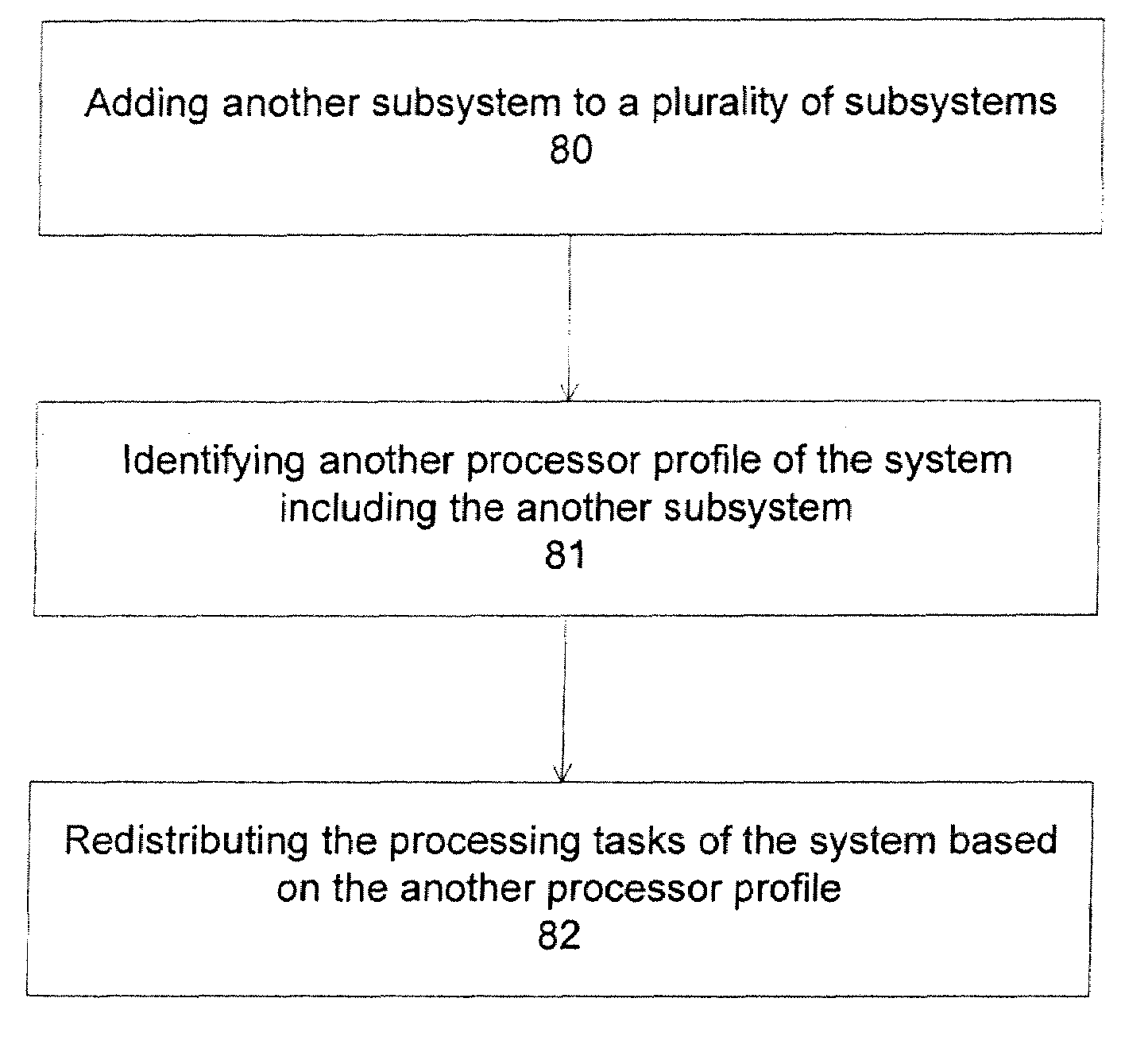

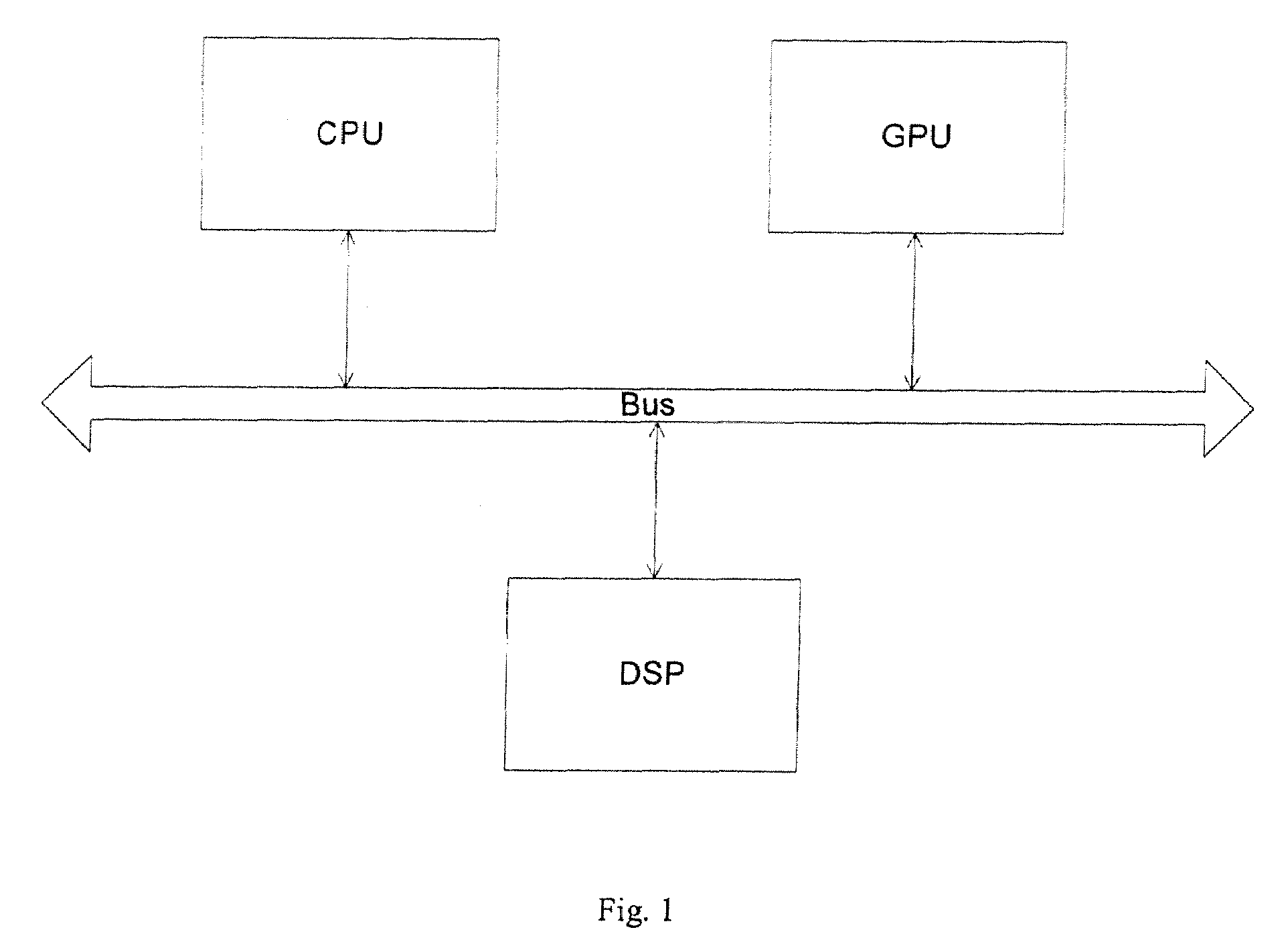

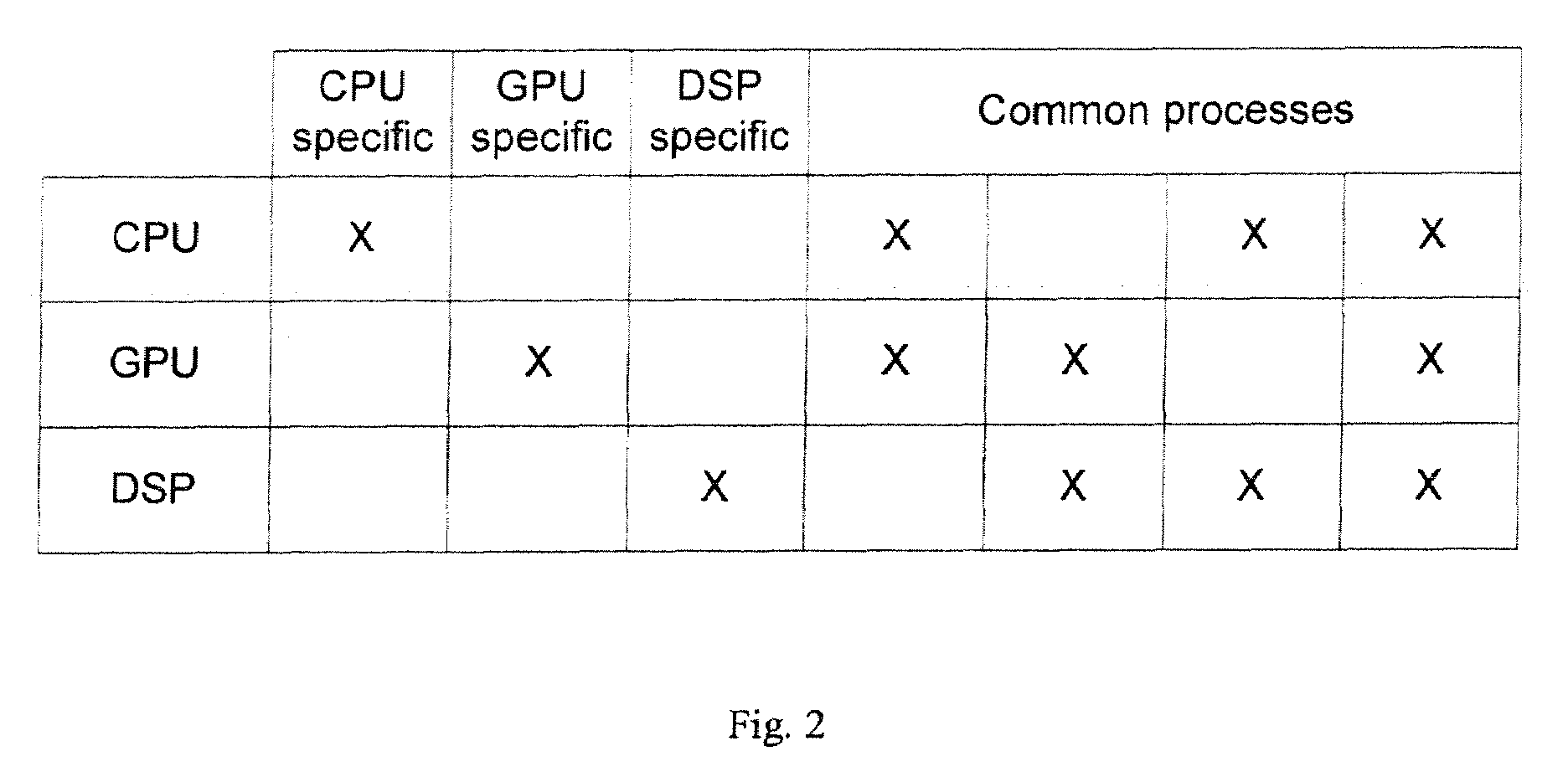

Methods and apparatuses for load balancing between multiple processing units

Active · US20090109230A1 · Performance · Processing · Energy efficient ICT · Digital data processing details · Digital signal processing · Graphics

Exemplary embodiments of methods and apparatuses to dynamically redistribute computational processes in a system that includes a plurality of processing units are described. The power consumption, the performance, and the power/performance value are determined for various computational processes between a plurality of subsystems where each of the subsystems is capable of performing the computational processes. The computational processes are, for example, a graphics rendering process, image processing process, signal processing process, Bayer decoding process, or video decoding process, which can be performed by a central processing unit, a graphics processing unit, or a digital signal processing unit. In one embodiment, the distribution of computational processes between capable subsystems is based on a power setting, a performance setting, a dynamic setting, or a value setting.

Owner:APPLE INC

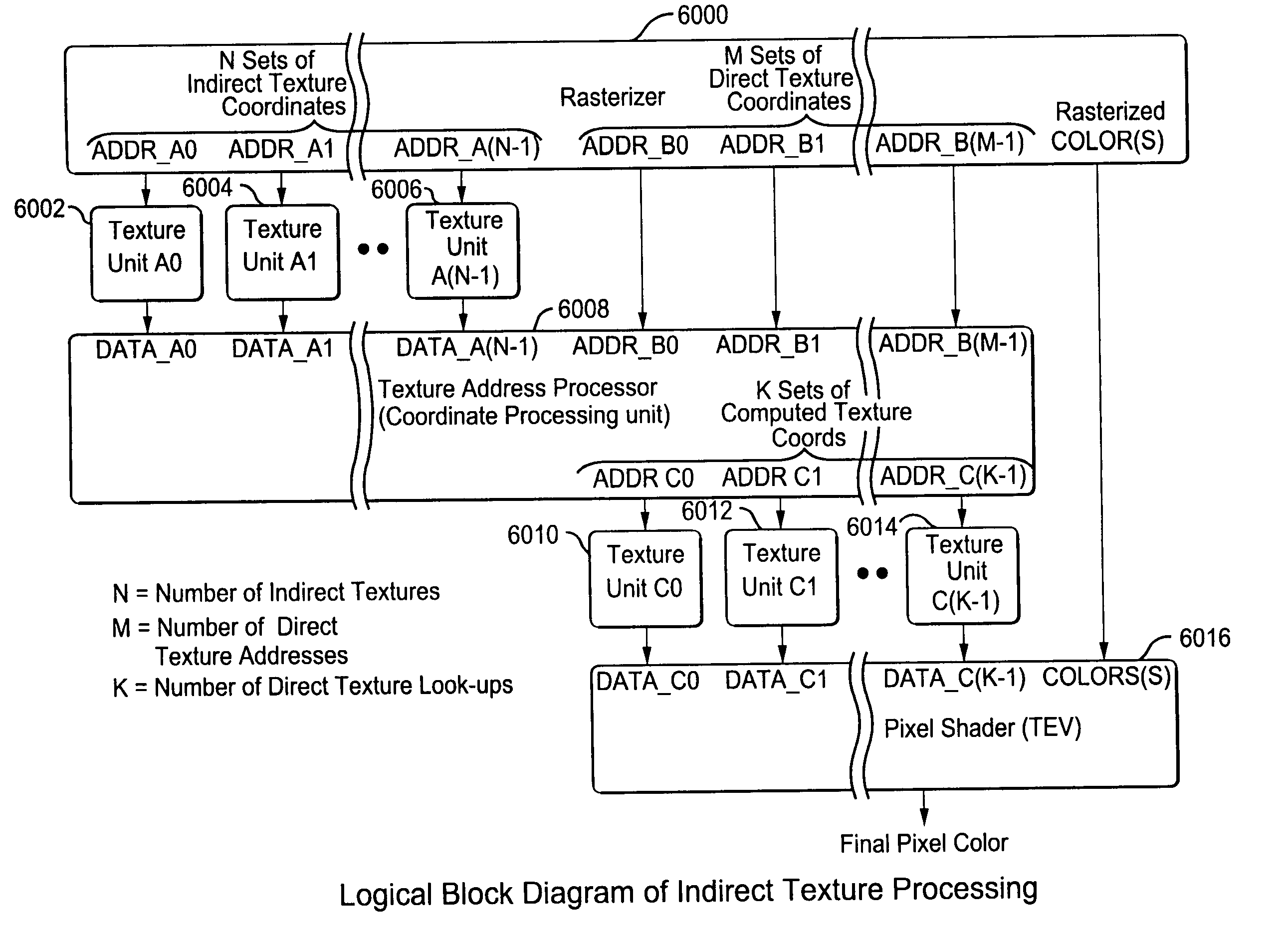

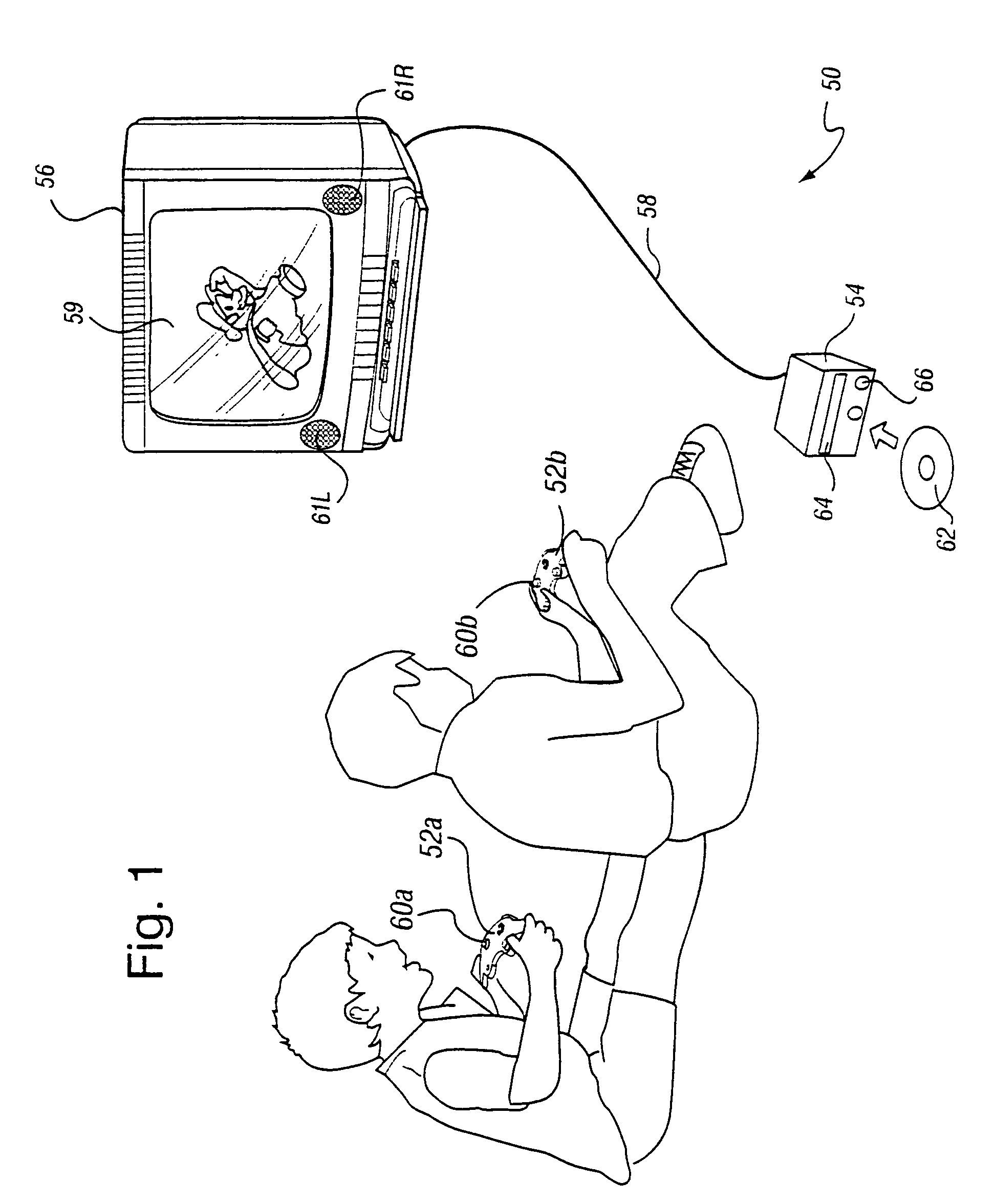

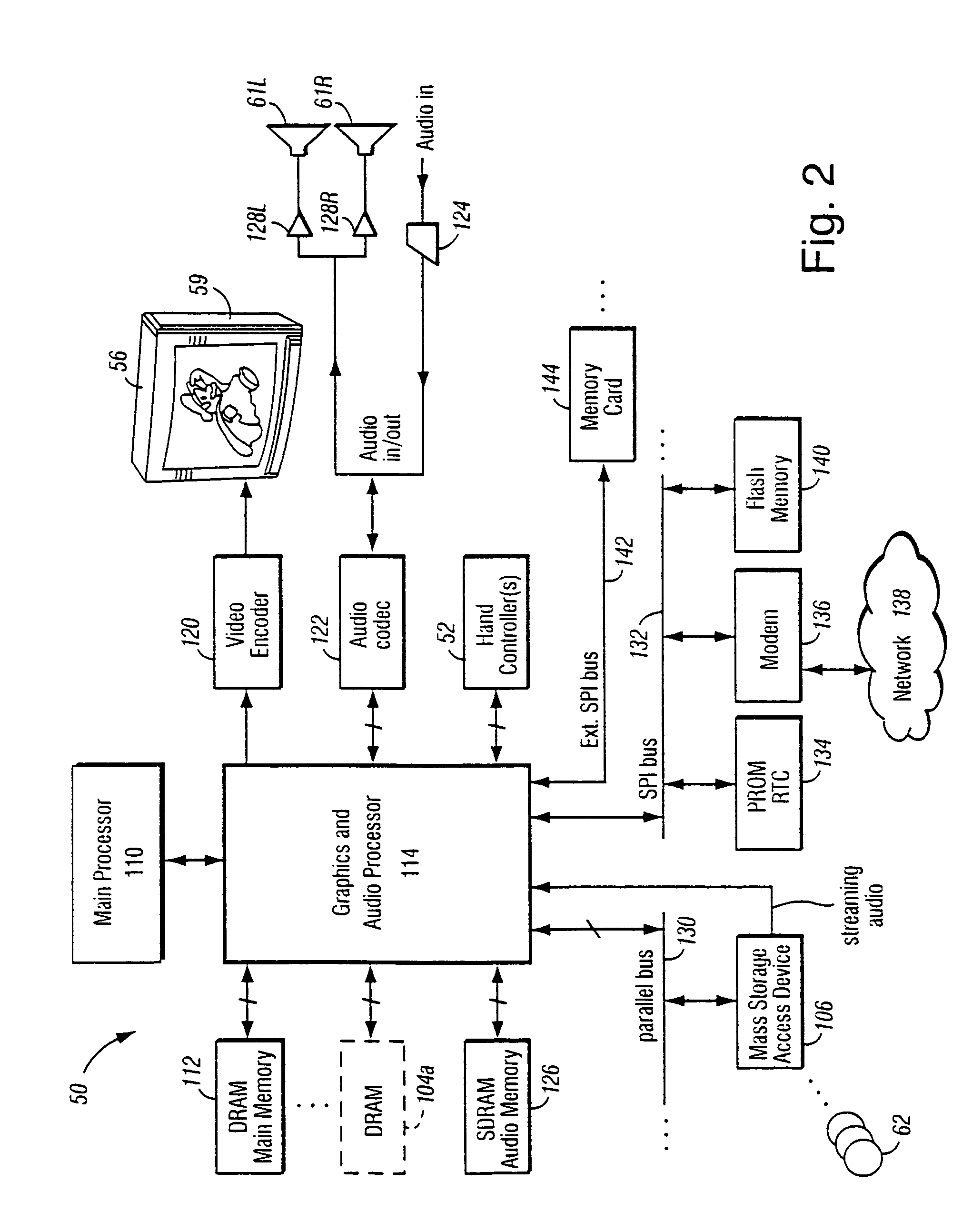

Method and apparatus for interleaved processing of direct and indirect texture coordinates in a graphics system

Inactive · US7002591B1 · Efficient implementation · Increase in texture mapping hardware complexity · Cathode-ray tube indicators · 3D-image rendering · Pattern recognition · Processing

A graphics system including a custom graphics and audio processor produces exciting 2D and 3D graphics and surround sound. The system includes a graphics and audio processor including a 3D graphics pipeline and an audio digital signal processor. The graphics pipeline renders and prepares images for display at least in part in response to polygon vertex attribute data and texel color data stored as texture images in an associated memory. An efficient texturing pipeline arrangement achieves a relatively low chip-footprint by utilizing a single texture coordinate/data processing unit that interleaves the processing of logical direct and indirect texture coordinate data and a texture lookup data feedback path for “recirculating” indirect texture lookup data retrieved from a single texture retrieval unit back to the texture coordinate/data processing unit. Versatile indirect texture referencing is achieved by using the same texture coordinate/data processing unit to transform the recirculated texture lookup data into offsets that may be added to the texture coordinates of a direct texture lookup. A generalized indirect texture API function is provided that supports defining at least four indirect texture referencing operations and allows for selectively associating one of at least eight different texture images with each indirect texture defined. Retrieved indirect texture lookup data is processed as multi-bit binary data triplets of three, four, five, or eight bits. The data triplets are multiplied by a 3×2 texture coordinate offset matrix before being optionally combined with regular non-indirect coordinate data or coordinate data from a previous cycle/stage of processing. Values of the offset matrix elements are variable and may be dynamically defined for each cycle/stage using selected constants. Two additional variable matrix configurations are also defined containing element values obtained from current direct texture coordinates. Circuitry for optionally biasing and scaling retrieved texture data is also provided.

Owner:NINTENDO CO LTD
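The offset step in the abstract above (a data triplet multiplied by a 3×2 texture coordinate offset matrix, then added to the direct texture coordinates) can be worked through in a few lines. This is a minimal sketch: the function names, the row-vector convention, and the matrix values are assumptions made for illustration, and the optional bias and scale stage is left out.

```python
def indirect_offset(triplet, matrix):
    """Multiply a 1x3 data triplet by a 3x2 matrix, giving an (ds, dt) offset."""
    return tuple(
        sum(triplet[i] * matrix[i][j] for i in range(3)) for j in range(2)
    )


def apply_indirect(direct_st, triplet, matrix):
    """Add the indirect offset to a direct (s, t) texture coordinate."""
    ds, dt = indirect_offset(triplet, matrix)
    return (direct_st[0] + ds, direct_st[1] + dt)


# Hypothetical 3x2 offset matrix and retrieved indirect-texture triplet.
offset_matrix = [
    [0.5, 0.0],
    [0.0, 0.5],
    [0.25, 0.25],
]
triplet = (2, 4, 0)

# ds = 2*0.5 + 4*0.0 + 0*0.25 = 1.0 and dt = 2*0.0 + 4*0.5 + 0*0.25 = 2.0
print(apply_indirect((10.0, 20.0), triplet, offset_matrix))  # (11.0, 22.0)
```

Because the matrix entries are plain values here, changing them per call loosely mirrors the abstract's statement that the offset matrix may be redefined for each cycle or stage.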

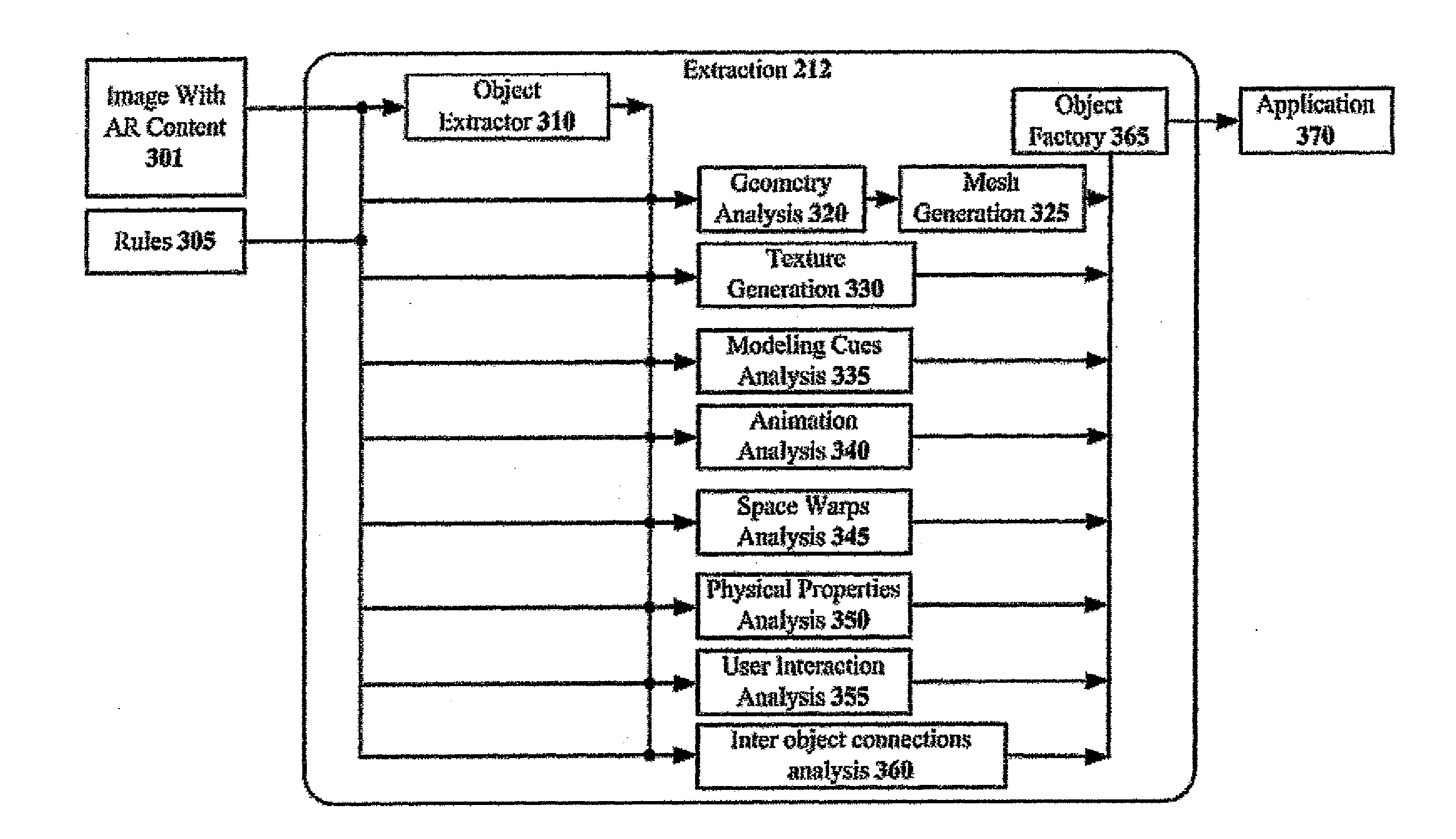

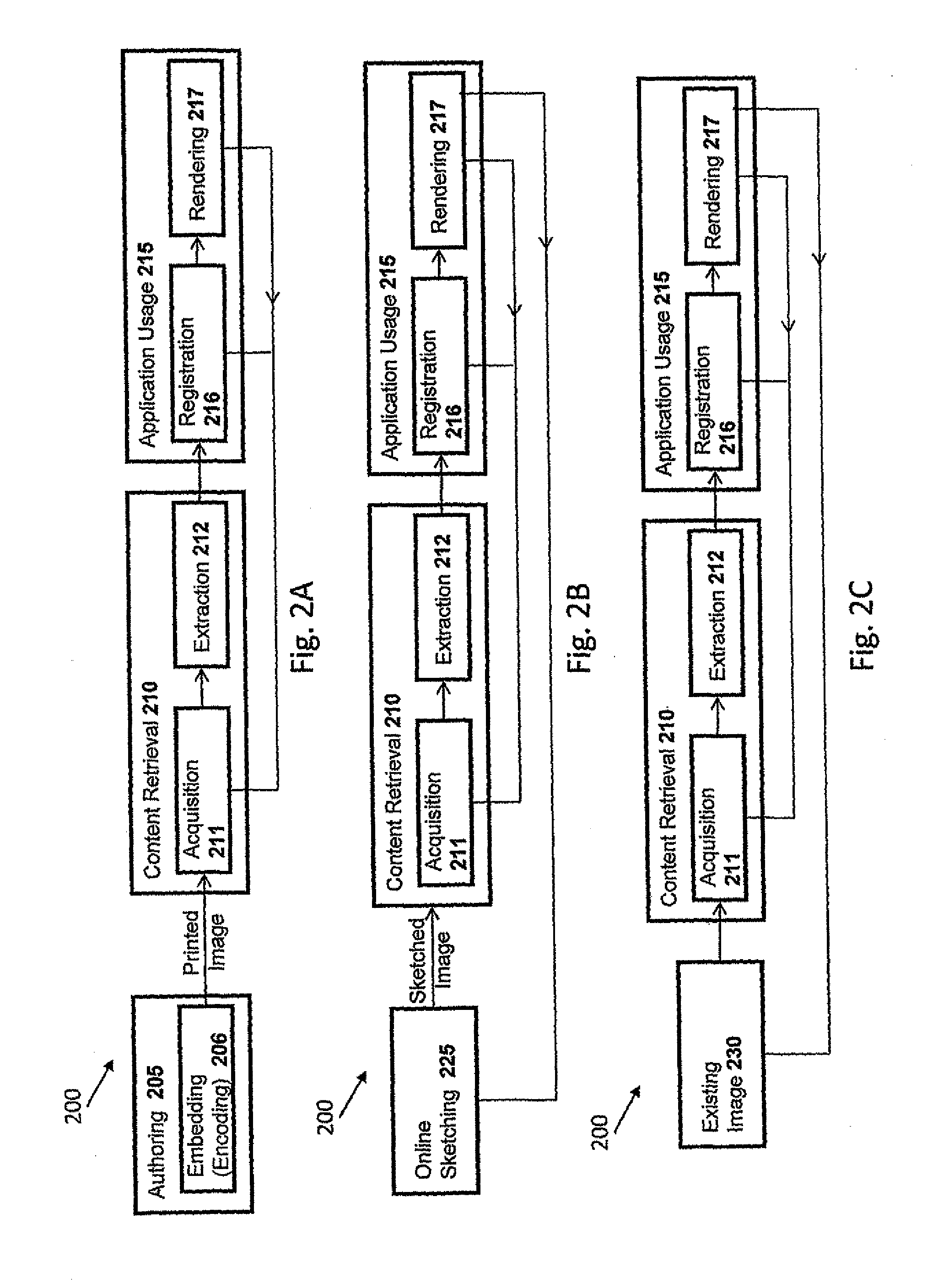

Method and System for Compositing an Augmented Reality Scene

Active · US20120069051A1 · Eliminate need · Cathode-ray tube indicators · Image data processing · Computer graphics (images) · Virtual model

Disclosed are systems and methods for compositing an augmented reality scene, the methods including the steps of extracting, by an extraction component into a memory of a data-processing machine, at least one object from a real-world image detected by a sensing device; geometrically reconstructing at least one virtual model from at least one object; and compositing AR content from at least one virtual model in order to augment the AR content on the real-world image, thereby creating an AR scene. Preferably, the method further includes: extracting at least one annotation from the real-world image into the memory of the data-processing machine for modifying at least one virtual model according to at least one annotation. Preferably, the method further includes: interacting with the AR scene by modifying AR content based on modification of at least one object and/or at least one annotation in the real-world image.

Owner:APPLE INC

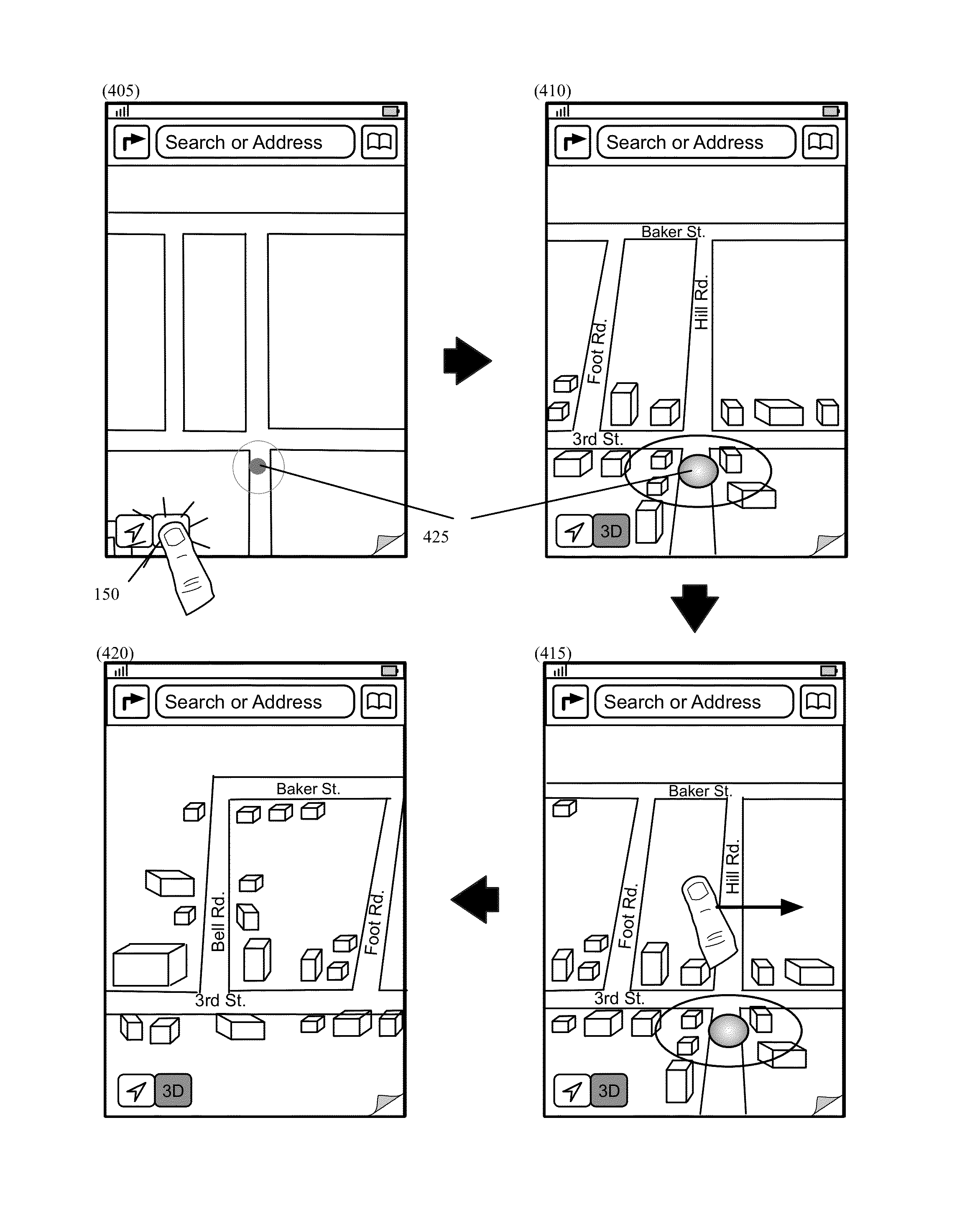

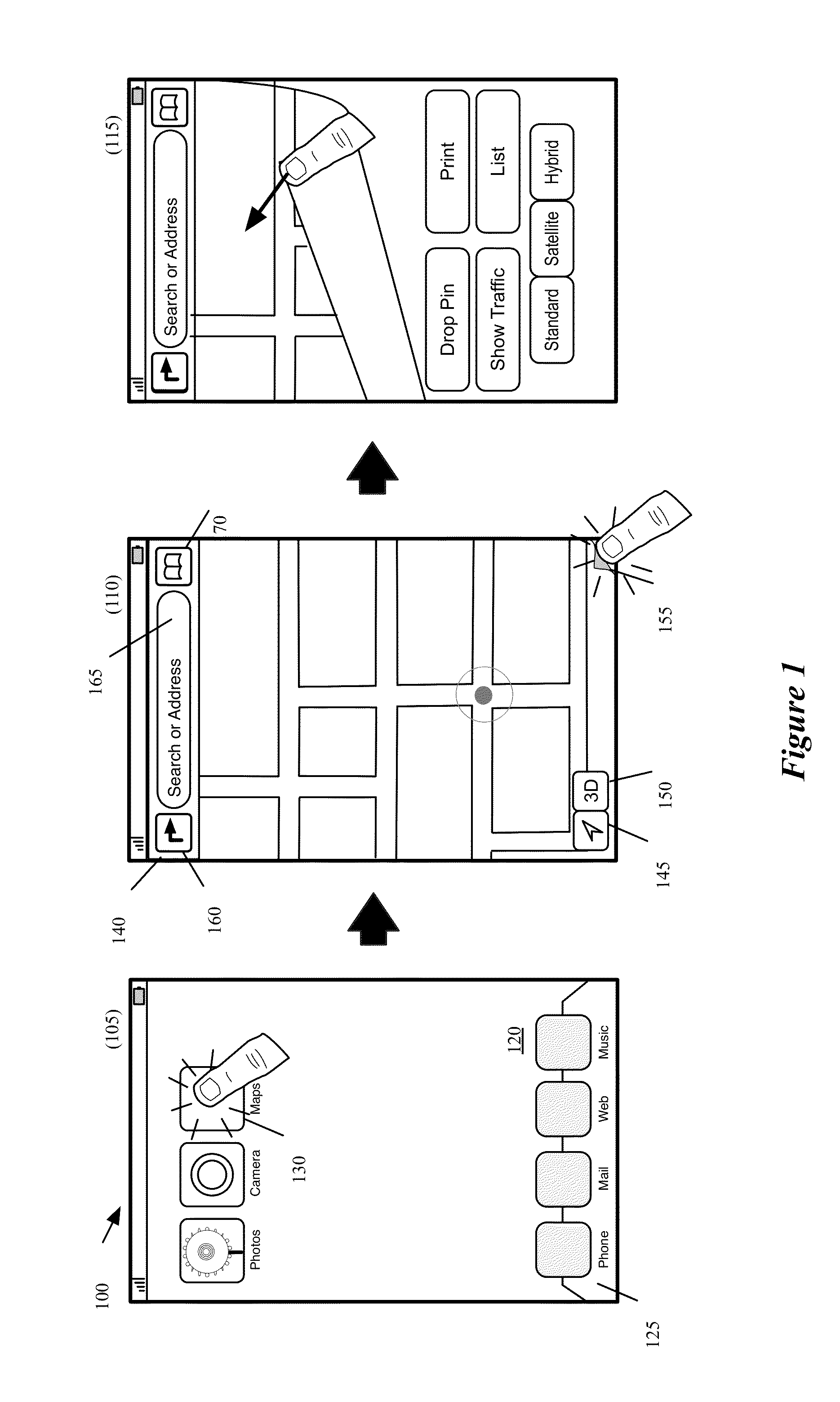

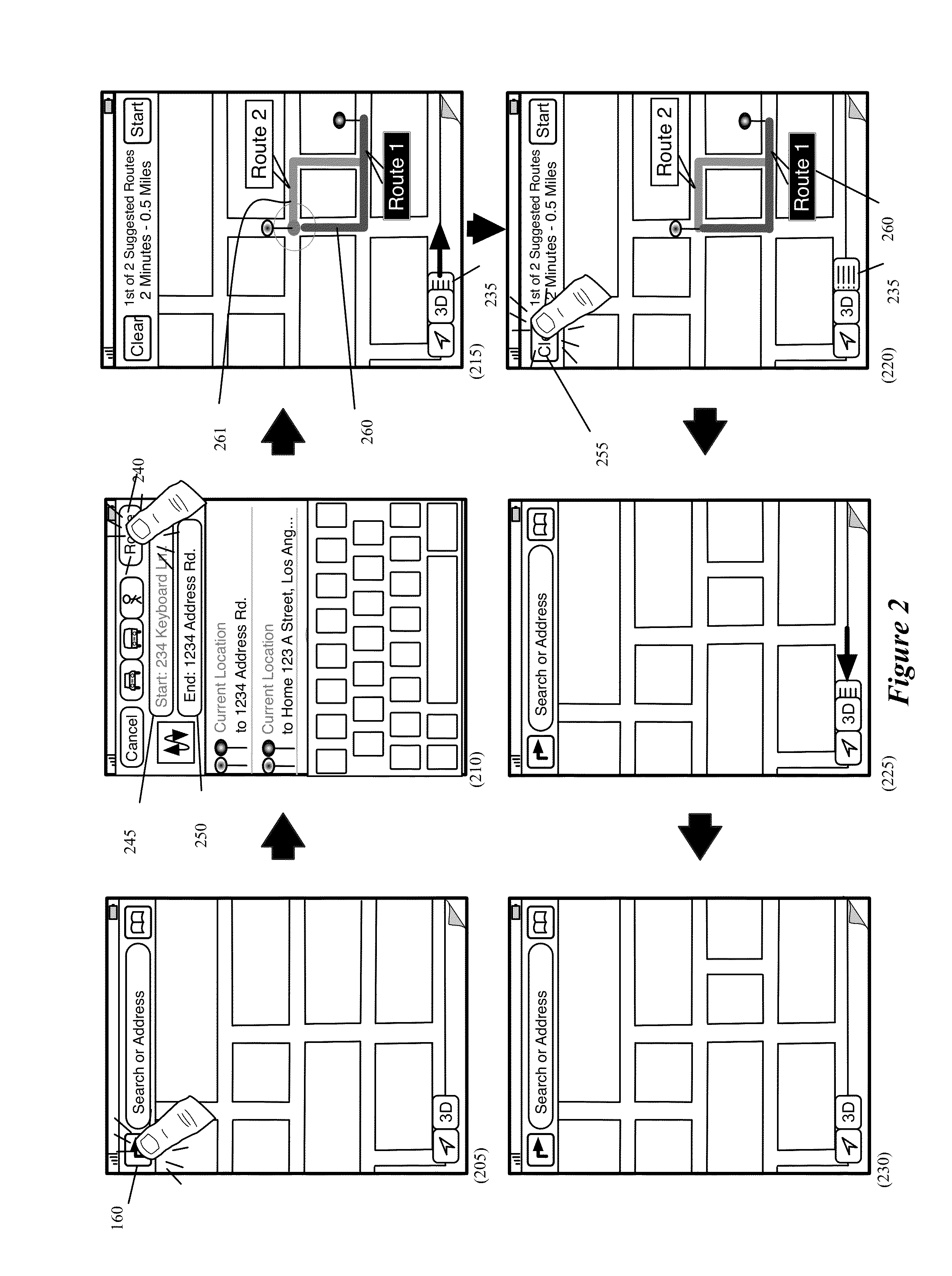

Mapping application with 3D presentation

A device that includes at least one processing unit and stores a multi-mode mapping program for execution by the at least one processing unit is described. The program includes a user interface (UI). The UI includes a display area for displaying a two-dimensional (2D) presentation of a map or a three-dimensional (3D) presentation of the map. The UI includes a selectable 3D control for directing the program to transition between the 2D and 3D presentations.

Owner:APPLE INC

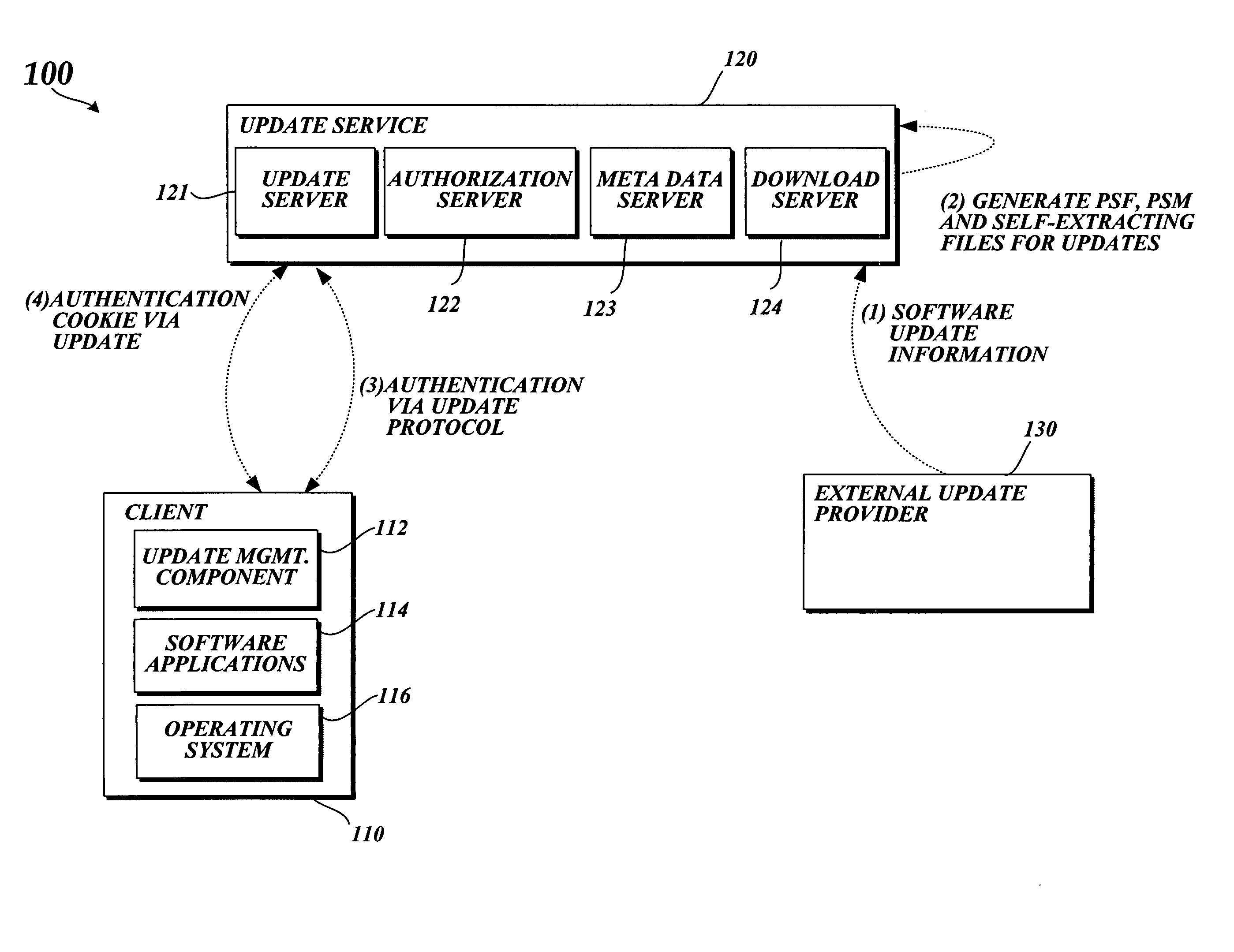

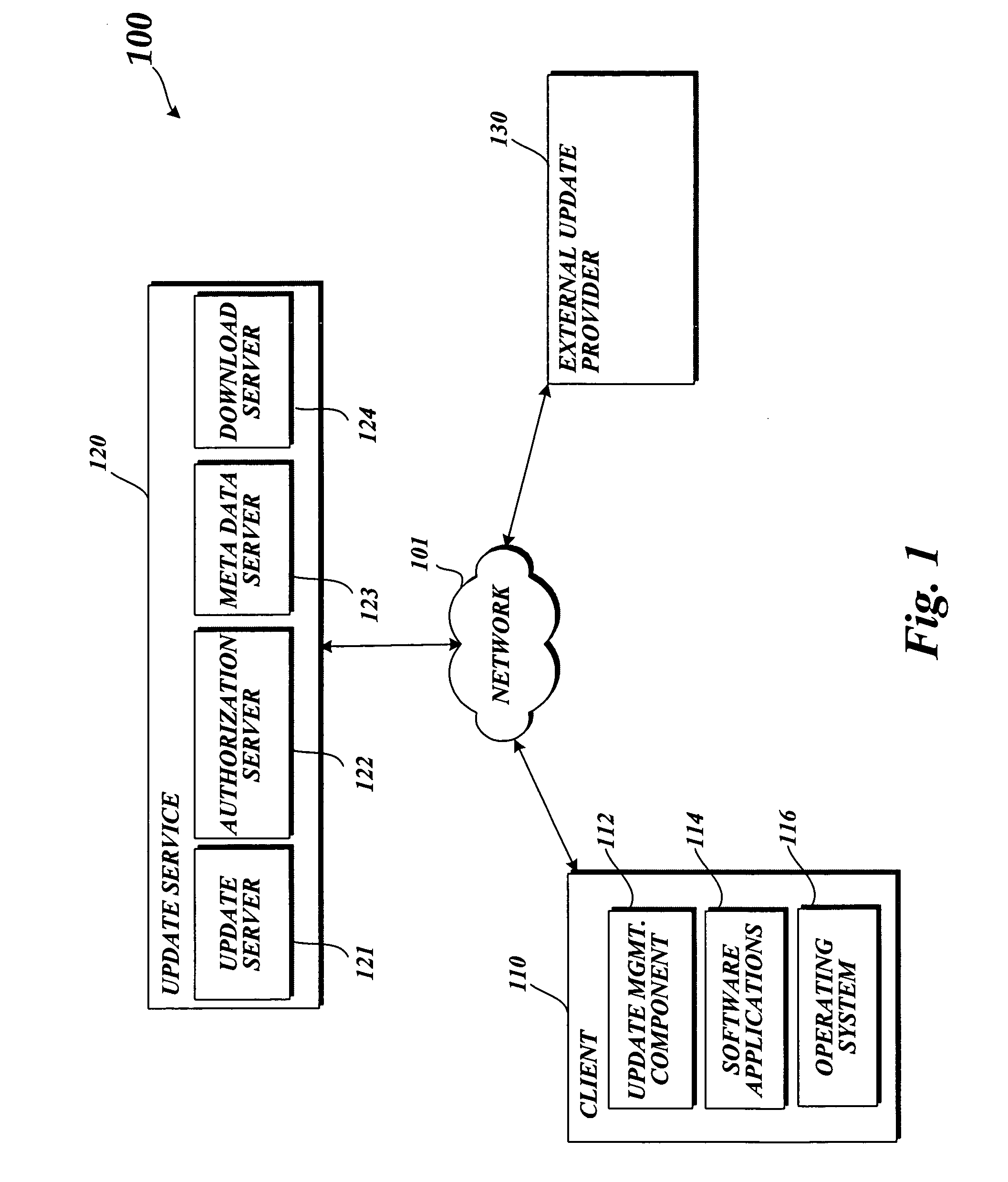

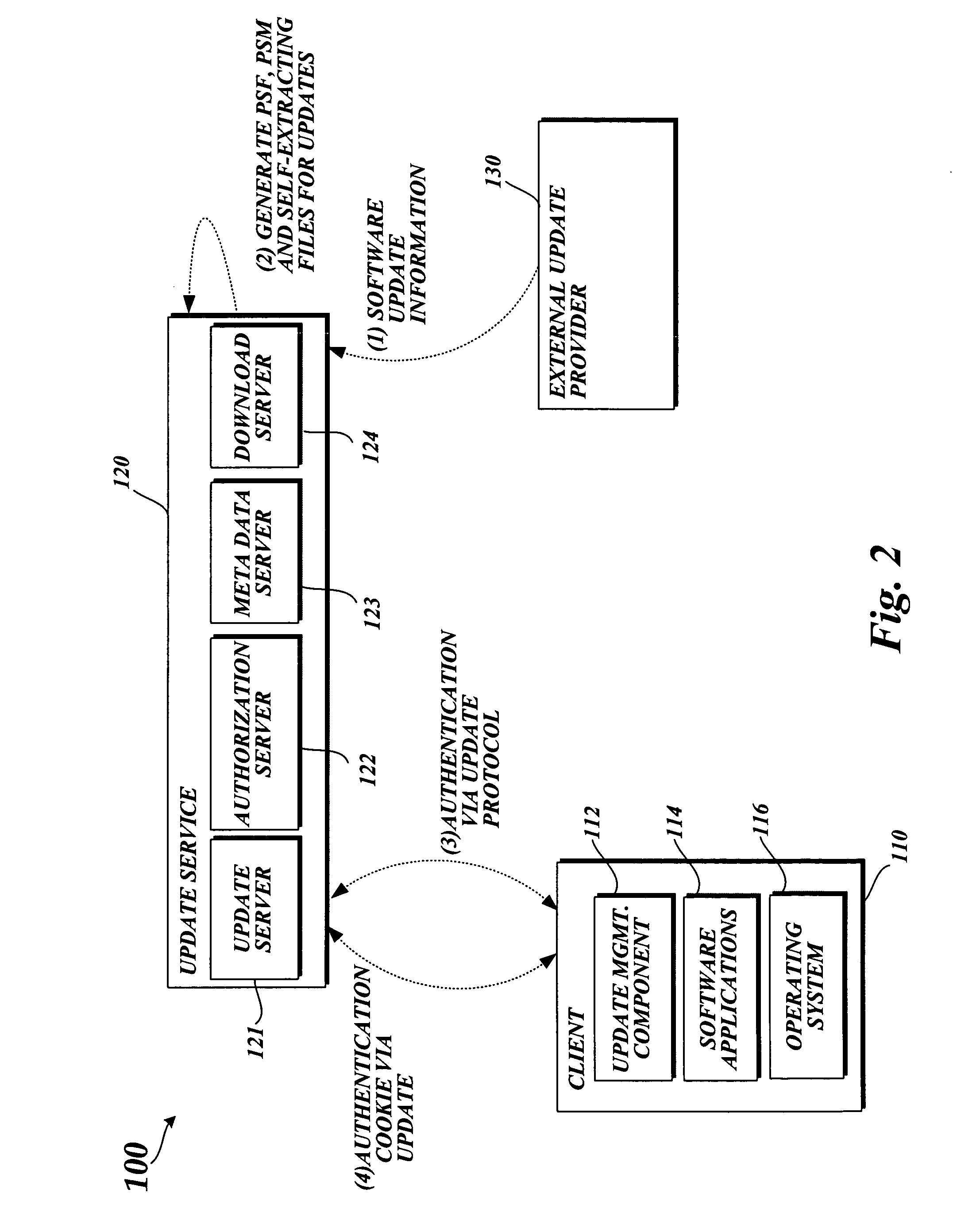

System and method for managing and communicating software updates

Inactive · US20050132348A1 · Minimize bandwidth and/or processing resource · Facilitating selection and implementation · Program loading/initiating · Transmission · Service control · Software update

A system and method for facilitating the selection and implementation of software updates while minimizing the bandwidth and processing resources required to select and implement the software updates. In one embodiment, an update service controls access to software updates, or other types of software, stored on a server.

Owner:MICROSOFT TECH LICENSING LLC

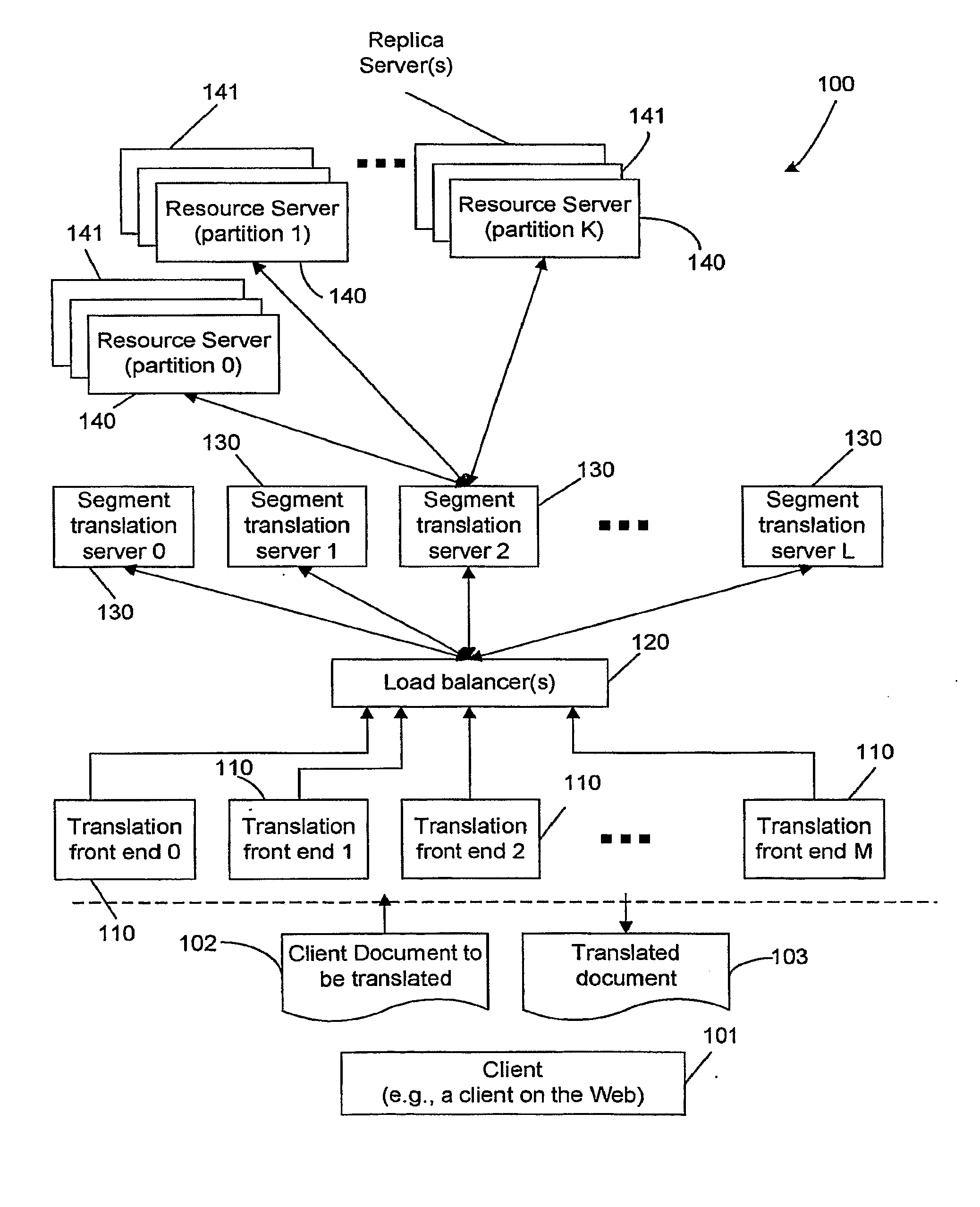

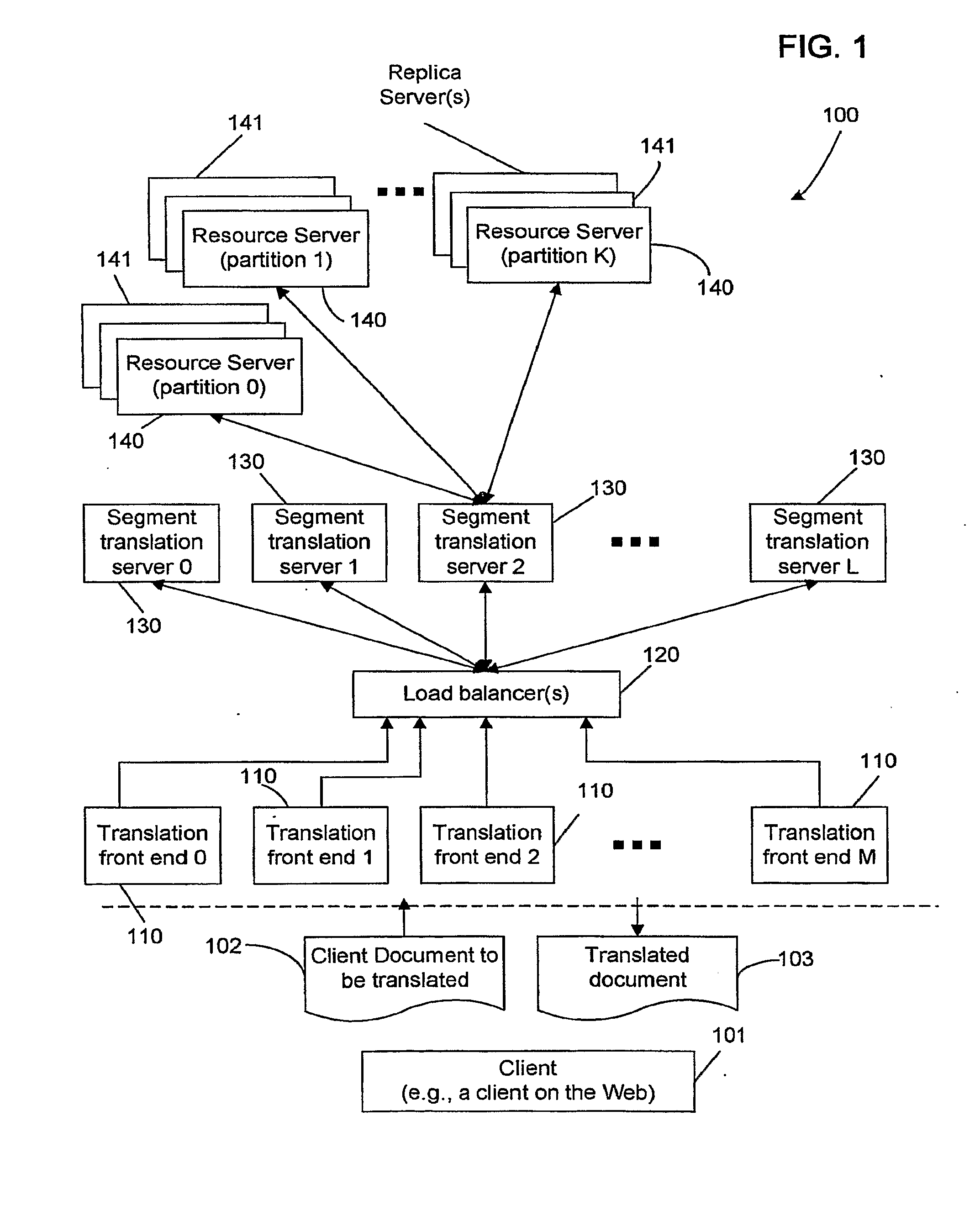

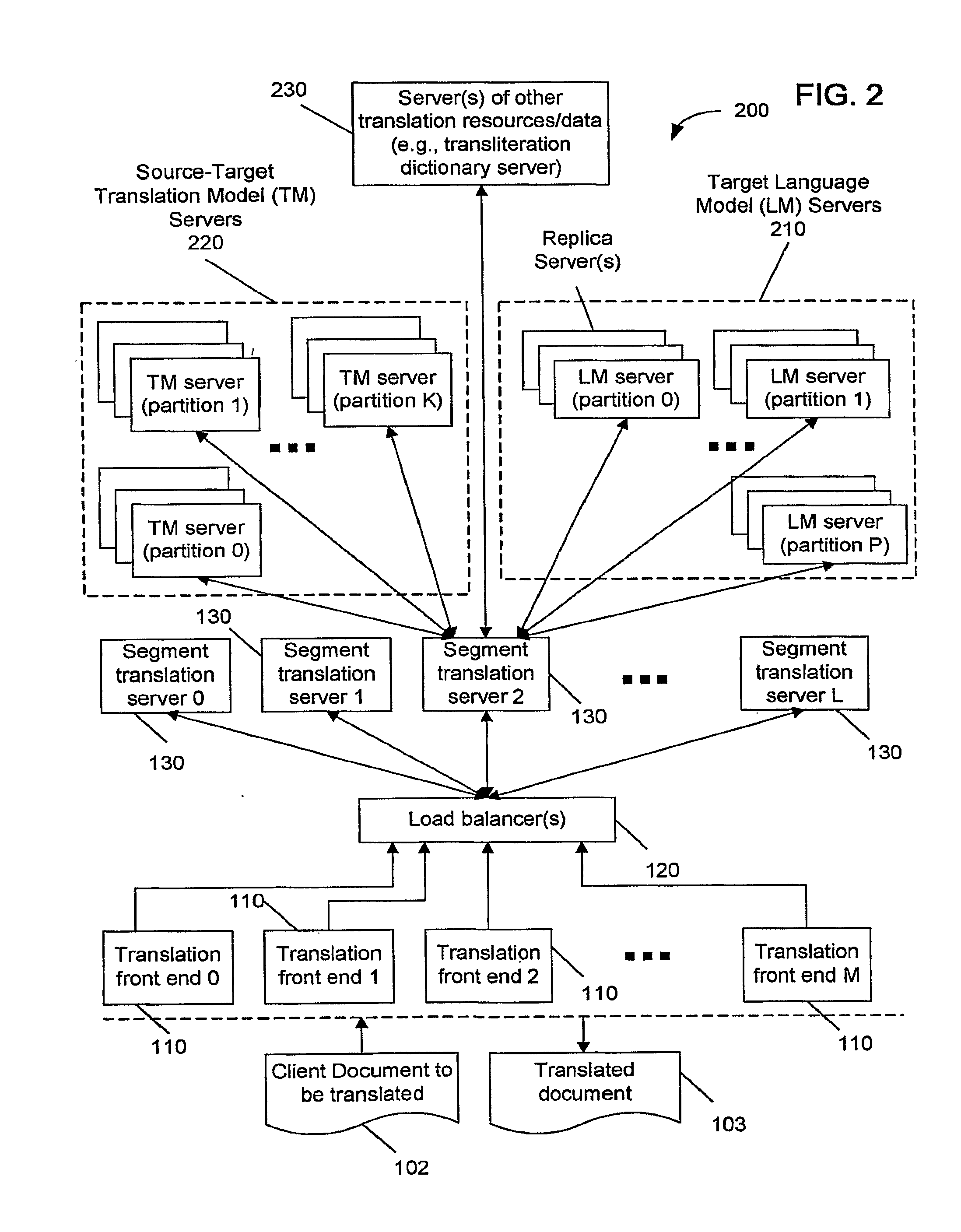

Encoding and Adaptive, Scalable Accessing of Distributed Models

Active · US20080262828A1 · Quality improvement · Improve translation speed · Natural language translation · Transformation of program code · Theoretical computer science · Machine translation

Systems, methods, and apparatus for accessing distributed models in automated machine processing, including using large language models in machine translation, speech recognition and other applications.

Owner:GOOGLE LLC

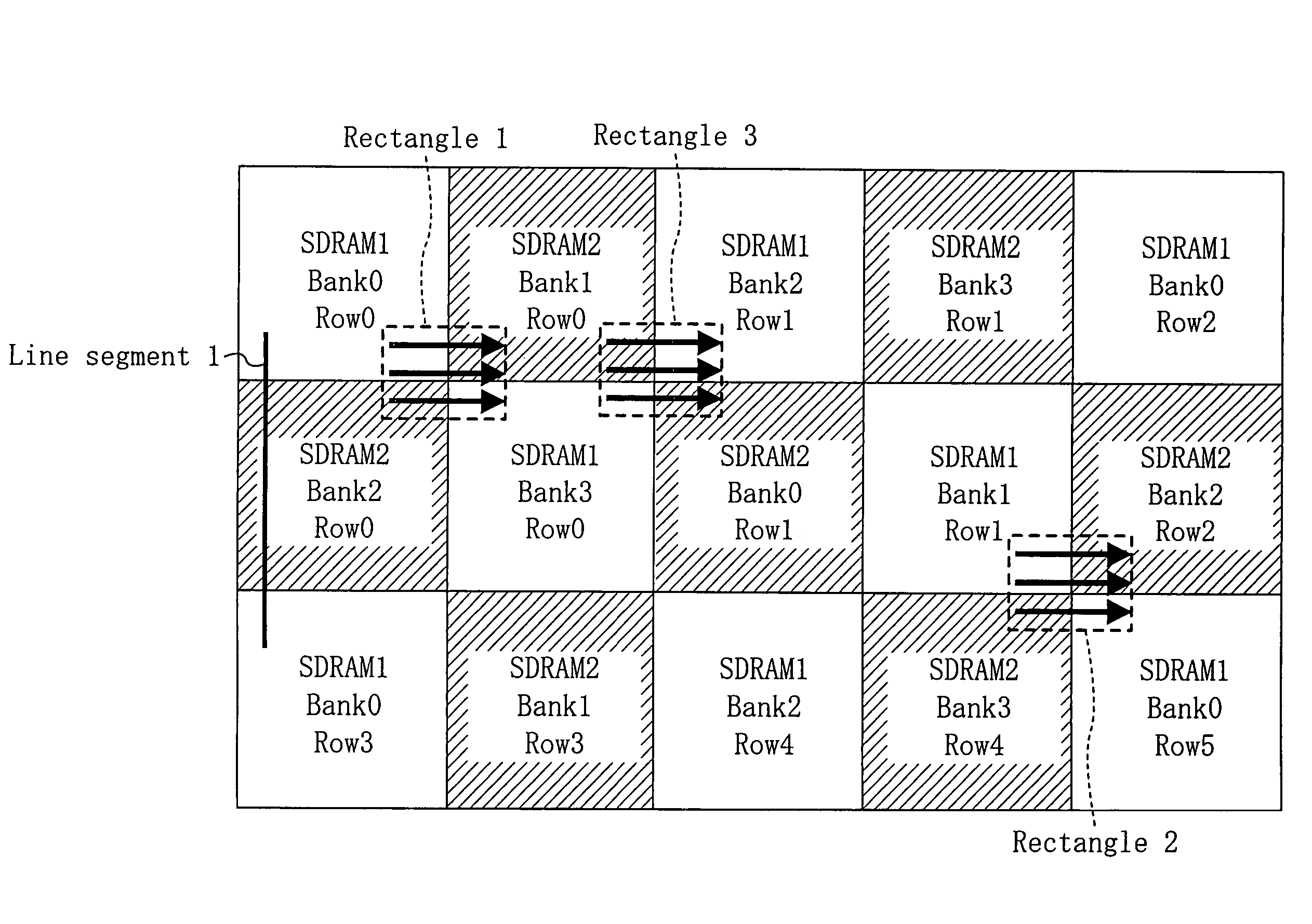

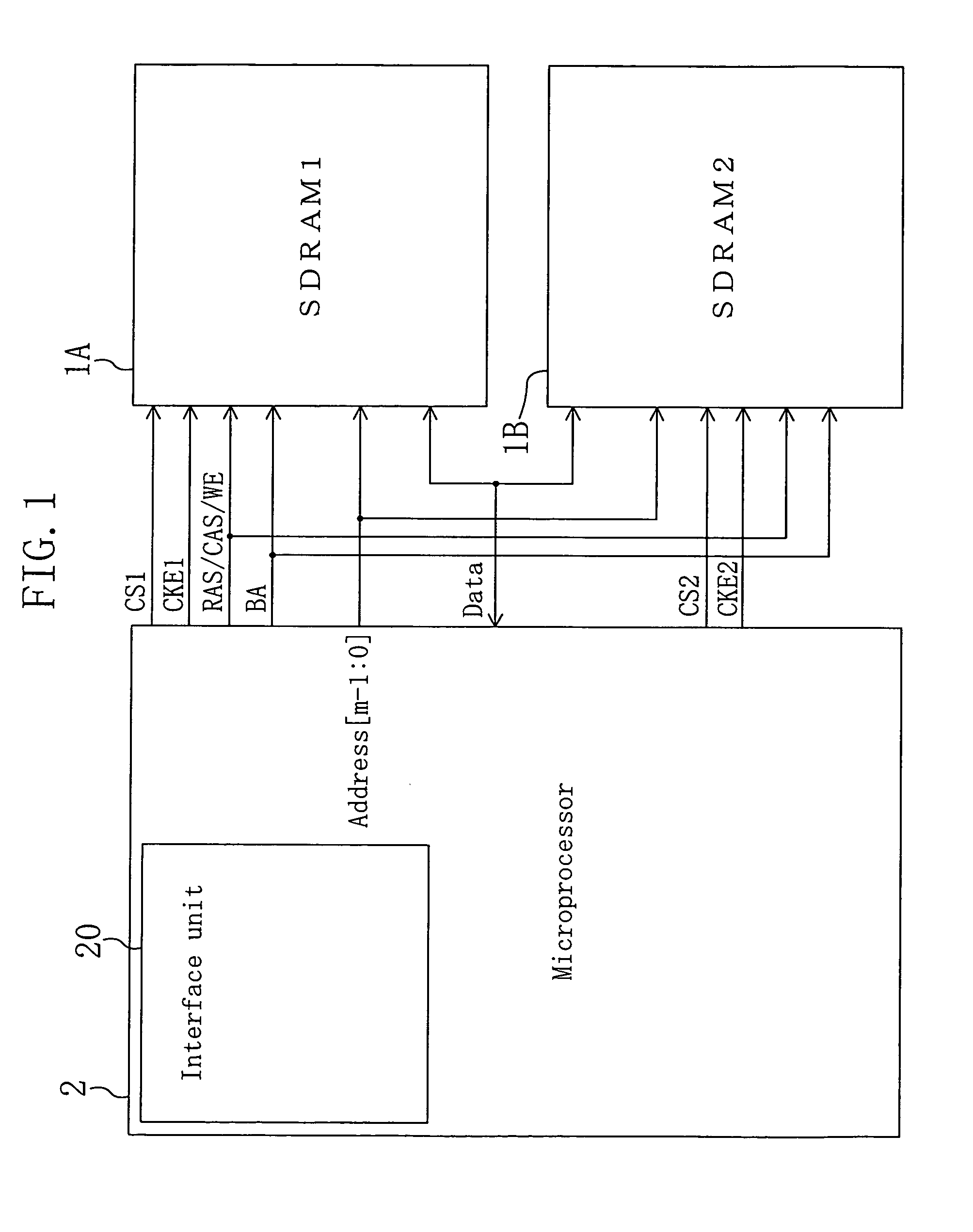

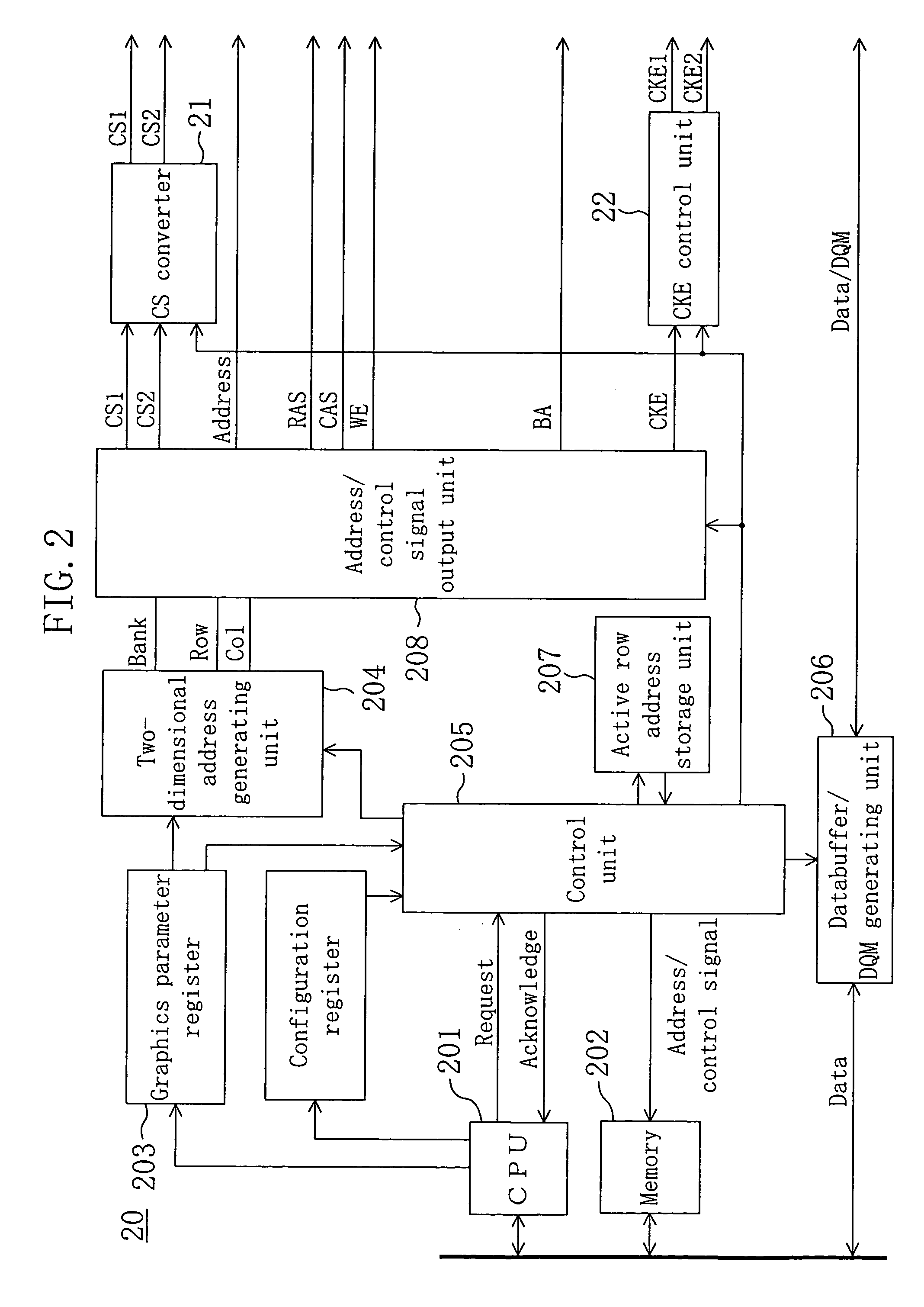

DRAM controller for graphics processing operable to enable/disable burst transfer

Active · US7562184B2 · Reduce in quantity · Reduce overhead · Memory addressing/allocation/relocation · Cathode-ray tube indicators · Graphics · Computer science

An interface unit 20 assigns different SDRAMs 1 and 2 to adjacent drawing blocks in a frame-buffer area. In processing that extends across the adjacent drawing blocks, active commands, for example, are issued alternately to the SDRAMs 1 and 2 to reduce waiting cycles resulting from the issue interval restriction. Furthermore, since individual clock enable signals CKE1 and CKE2 are output to the SDRAMs 1 and 2 so that burst transfers of the SDRAMs 1 and 2 can be stopped individually, no cycle is necessary to stop the burst transfers.

Owner:SOCIONEXT INC

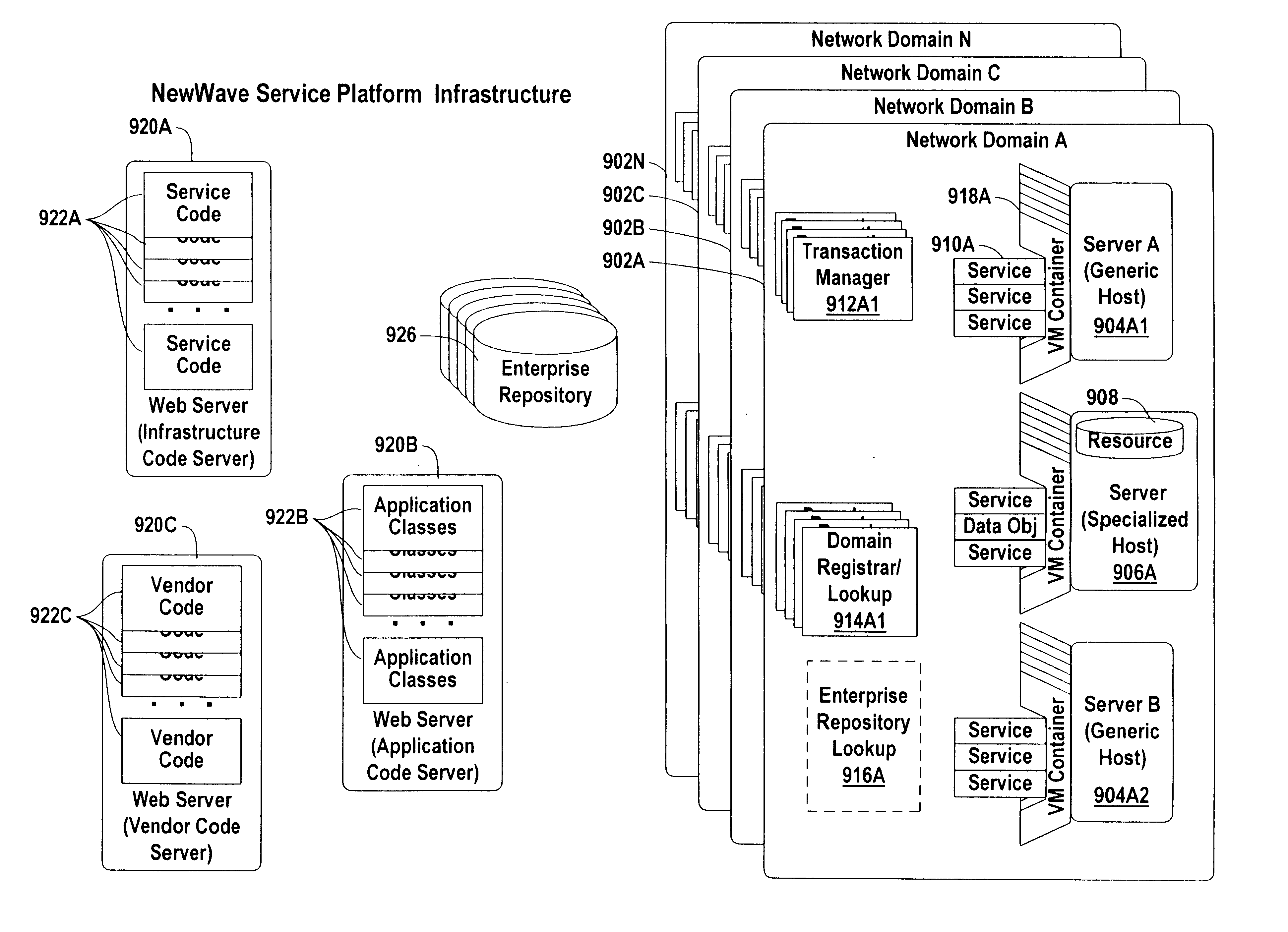

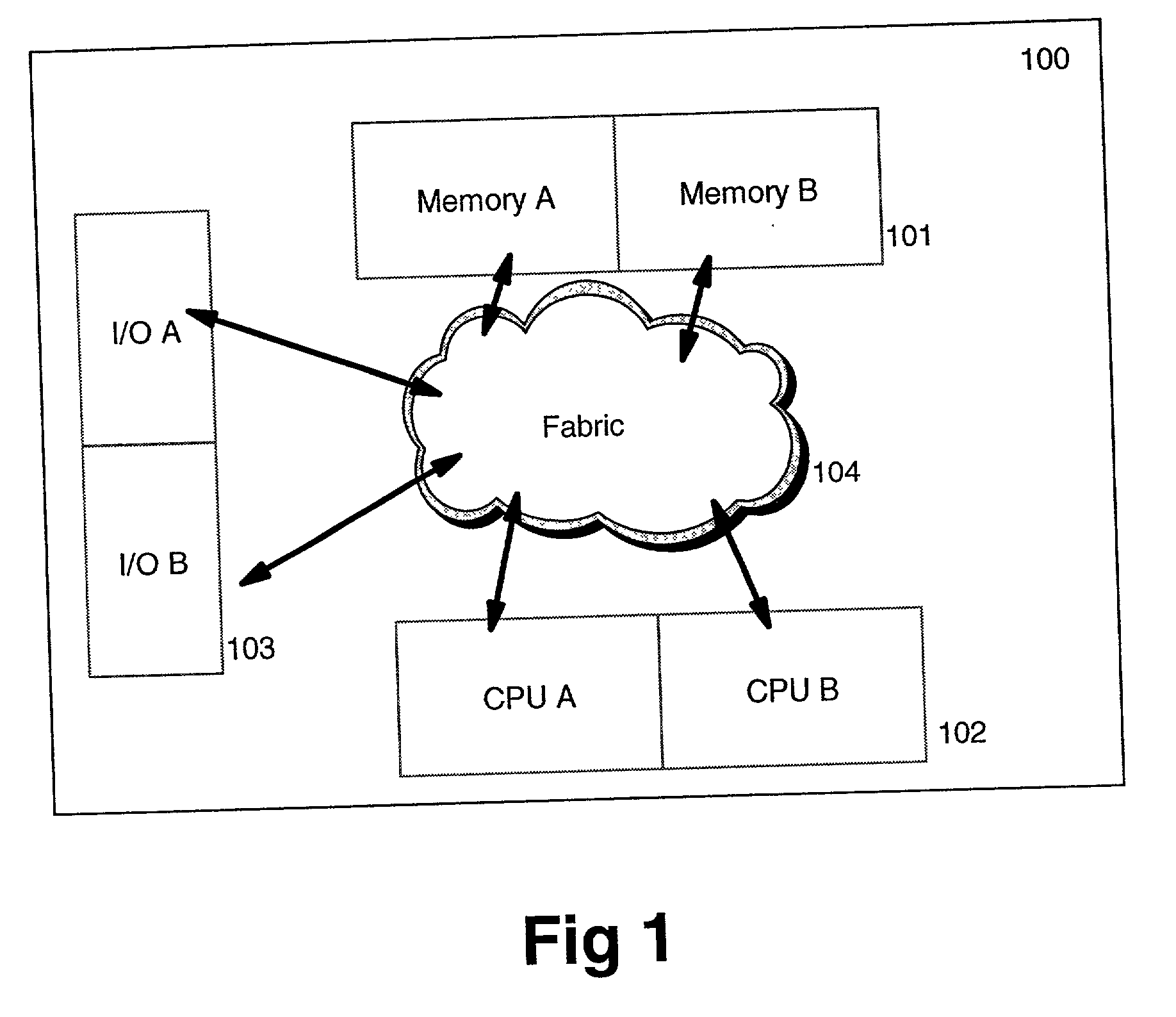

Method and system for implementing a global ecosystem of interrelated services

Inactive · US6868441B2 · Easy access · Enhances ease of interaction · Finance · Digital computer details · Operational system · Geolocation

The present invention is directed to a method and management service platform for implementing a global ecosystem of interrelated services. The service platform is comprised of three distinct layers: a physical machine layer, a virtual machine layer, and a layer of interrelated services. The physical machine layer may be deployed on large numbers of small generic servers in many geographic locations distributed for enterprise use. Associated with one or more servers are particular resources managed by that particular server. Any server in any geographic location can process any service needed by any client in any other geographic area. The operating system of each physical server is not used directly in the operating environment; instead, each server runs a platform-independent programming language virtual machine on top of the operating system. This is the virtual machine layer. Services are location-independent processing entities that are managed dynamically, configured dynamically, load their code remotely, and are found and communicated with dynamically. A generic service container is a CPU process into which arbitrary software services may be homed to a host server at runtime. Thus, the virtual machine layer, rather than the operating system of the physical machines, is the interface layer that supports the layer of interrelated services.

Owner:EKMK LLC

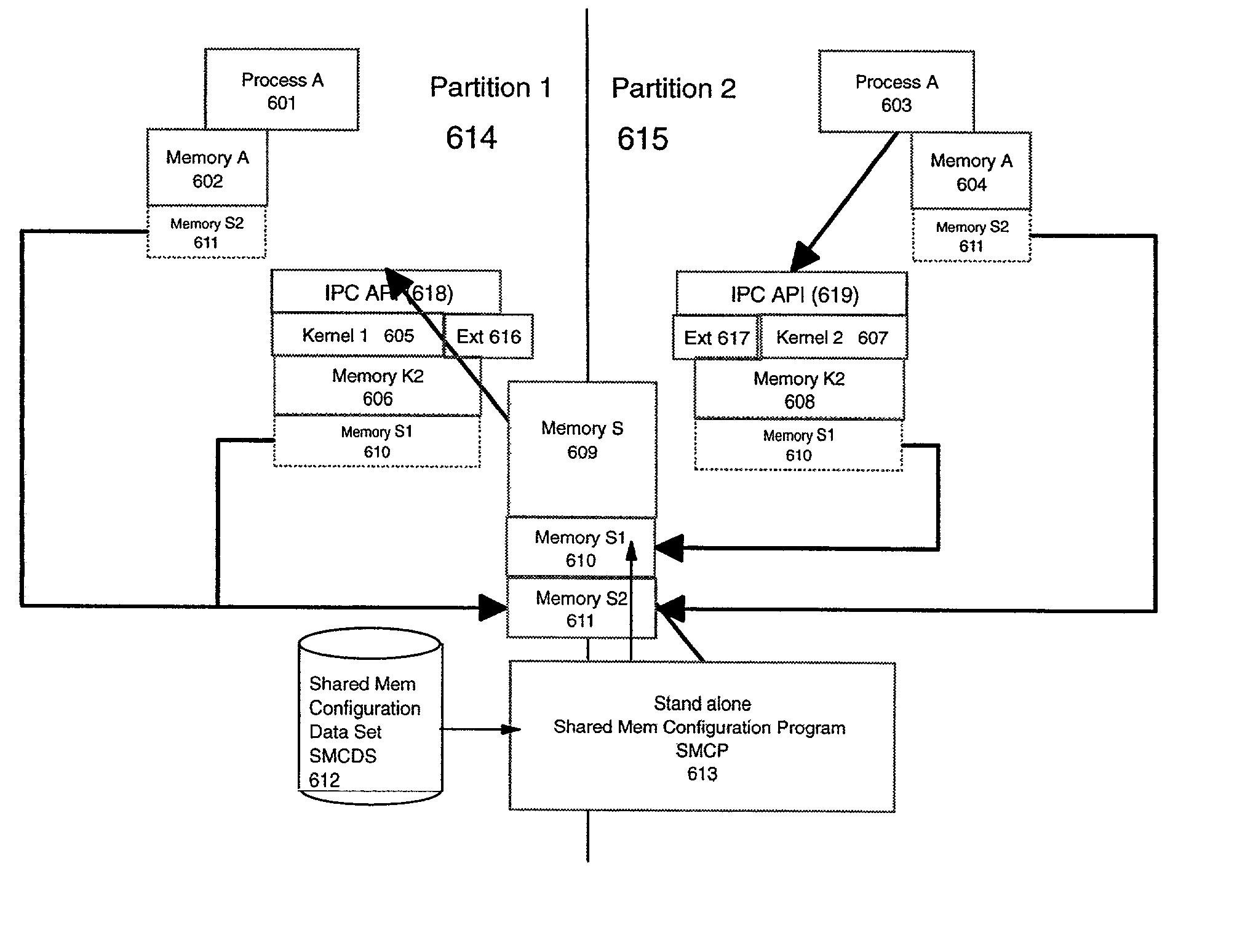

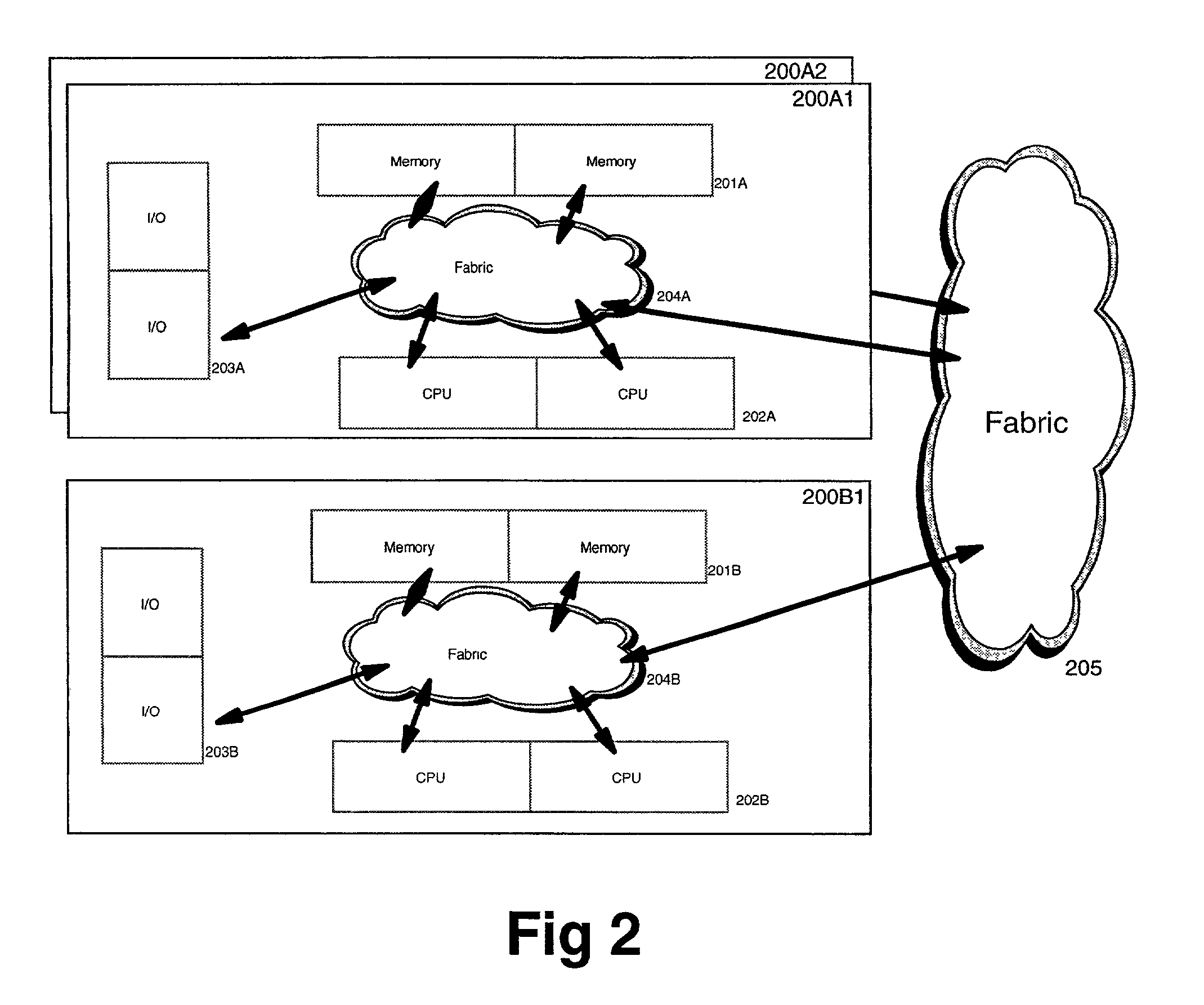

Inter-partition message passing method, system and program product for managing workload in a partitioned processing environment

Inactive | US20020129085A1 | Easy to move | Direct to transfer | Resource allocation | Multiple digital computer combinations | Operational system | Resource assignment

A partitioned processing system capable of supporting diverse operating system partitions is disclosed wherein throughput information is passed from a partition to a partition resource manager. The throughput information is used to create resource balancing directives for the partitioned resource. The processing system includes at least a first partition and a second partition. A partition resource manager is provided for receiving information about throughput from the second partition and determining resource balancing directives. A communicator communicates the resource balancing directives from the partition manager to a kernel in the second partition which allocates resources to the second partition according to the resource balancing directives received from the partition manager.
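The flow from throughput reports to resource balancing directives might look like the sketch below. The abstract does not say how directives are computed, so the proportional rule, the `target_per_sec` field, and all names here are assumptions made for illustration only.

```python
from dataclasses import dataclass


@dataclass
class ThroughputReport:
    partition: str
    completed_per_sec: float  # throughput reported by the partition
    target_per_sec: float     # hypothetical goal, not part of the abstract


def balancing_directives(reports, total_shares=100.0):
    """Map each partition to a resource share.

    Assumed rule: a partition that falls further behind its target
    receives a proportionally larger share.
    """
    weights = {
        r.partition: r.target_per_sec / max(r.completed_per_sec, 1e-9)
        for r in reports
    }
    total_weight = sum(weights.values())
    return {name: total_shares * w / total_weight for name, w in weights.items()}


reports = [
    ThroughputReport("A", completed_per_sec=50.0, target_per_sec=100.0),
    ThroughputReport("B", completed_per_sec=100.0, target_per_sec=100.0),
]
directives = balancing_directives(reports)
```

In the system described, a communicator would deliver such directives to the kernel of the affected partition, which then applies them.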

Owner:IBM CORP

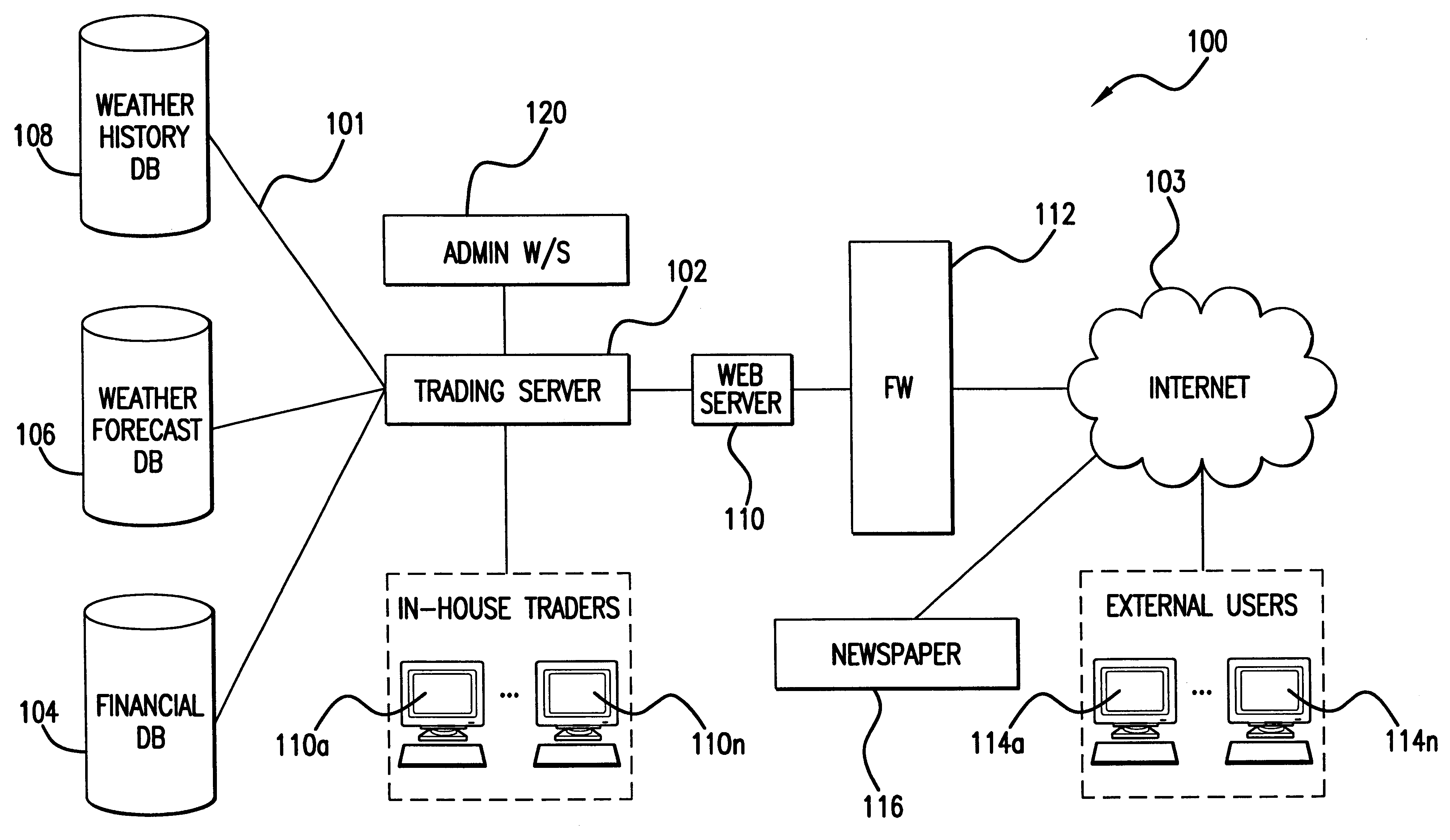

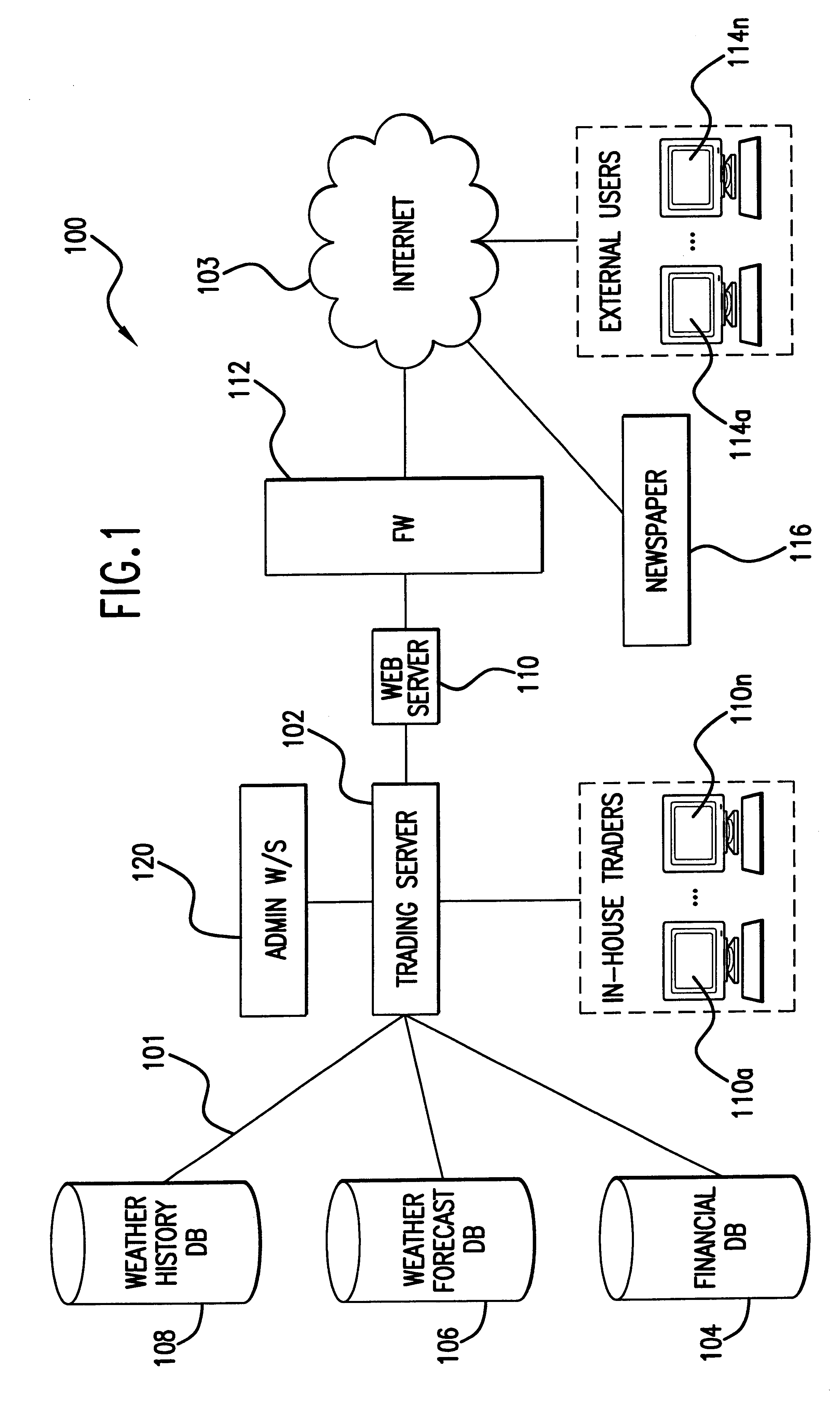

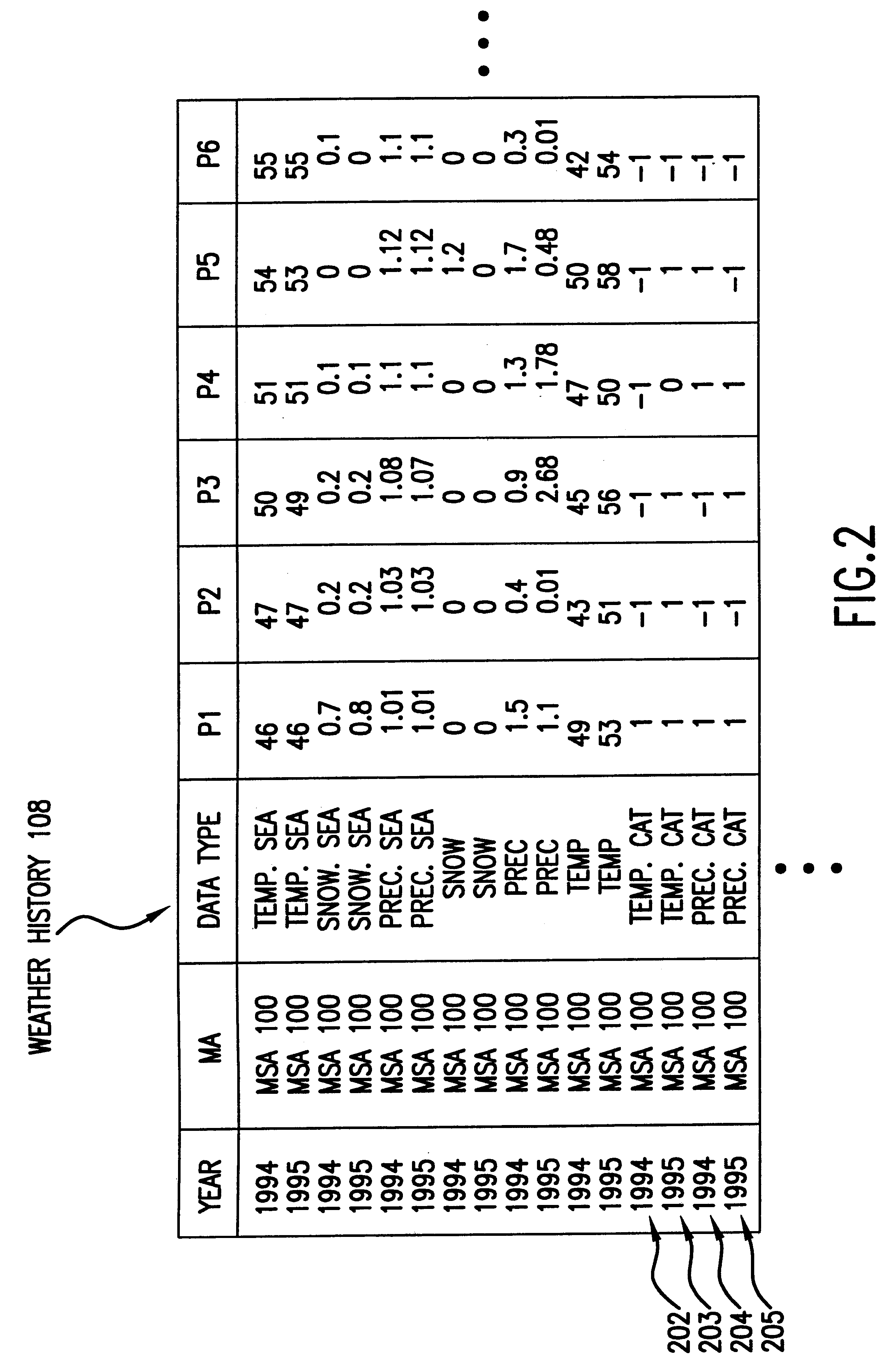

System, method, and computer program product for valuating weather-based financial instruments

Inactive | US6418417B1 | Finance | Special data processing applications | Graphical user interface | Financial transaction

A system and method for valuating weather-based financial instruments including weather futures, options, swaps, and the like. The system includes weather forecast, weather history, and financial databases. Also included in the system is a central processing trading server that is accessible via a plurality of internal and external workstations. The workstations provide a graphical user interface for users to enter a series of inputs and receive information (i.e., output) concerning a financial instrument. The method involves collecting the series of inputs affecting the value of the financial instrument (start date, maturity date, geographic location(s), risk-free rate, and base weather condition) and applying a pricing model modified to account for weather.
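To make the listed inputs concrete, here is a rough sketch of one way they could feed a valuation. The abstract does not disclose the pricing model, so this substitutes a simple historical-average approach on a degree-day index; the degree-day index, `strike`, `tick`, `valuation_date`, and the use of a base temperature as the "base weather condition" are all assumptions.

```python
import math
from dataclasses import dataclass
from datetime import date


@dataclass
class InstrumentInputs:
    start_date: date
    maturity_date: date
    location: str
    risk_free_rate: float    # annual, continuously compounded (assumption)
    base_temperature: float  # stand-in for the "base weather condition"


def degree_days(daily_mean_temps, base):
    """Sum each day's shortfall of mean temperature below the base."""
    return sum(max(base - t, 0.0) for t in daily_mean_temps)


def value_call(inputs, history, strike, tick, valuation_date):
    """Discounted average payoff of a call on the index over past seasons.

    `history` holds one list of daily mean temperatures per past season
    for the instrument's location and date window.
    """
    payoffs = [
        max(degree_days(season, inputs.base_temperature) - strike, 0.0) * tick
        for season in history
    ]
    years = (inputs.maturity_date - valuation_date).days / 365.0
    discount = math.exp(-inputs.risk_free_rate * years)
    return discount * sum(payoffs) / len(payoffs)
```

The weather history database mentioned in the abstract would supply `history`, and the risk-free rate enters only through the discount factor.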

Owner:PANALEC

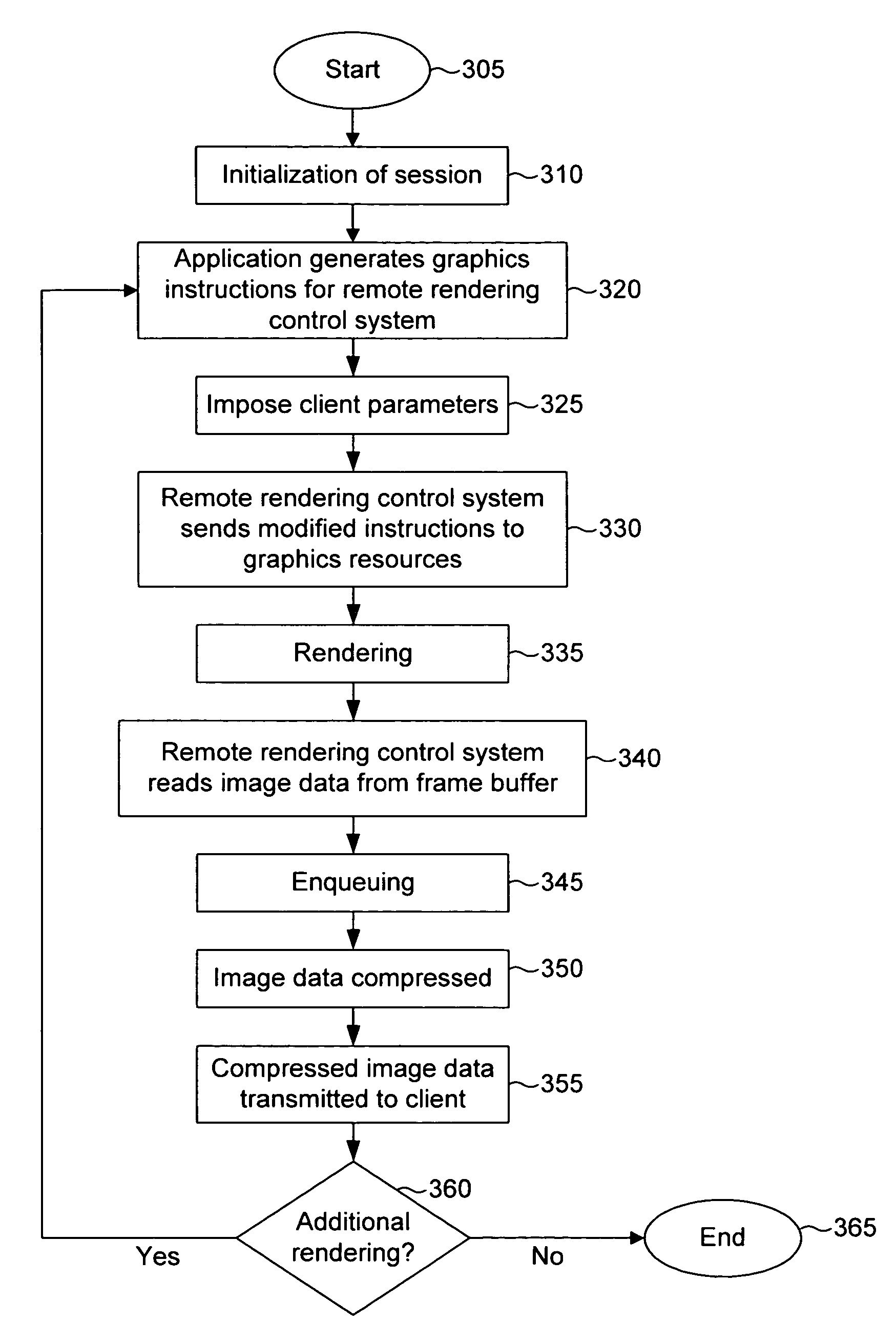

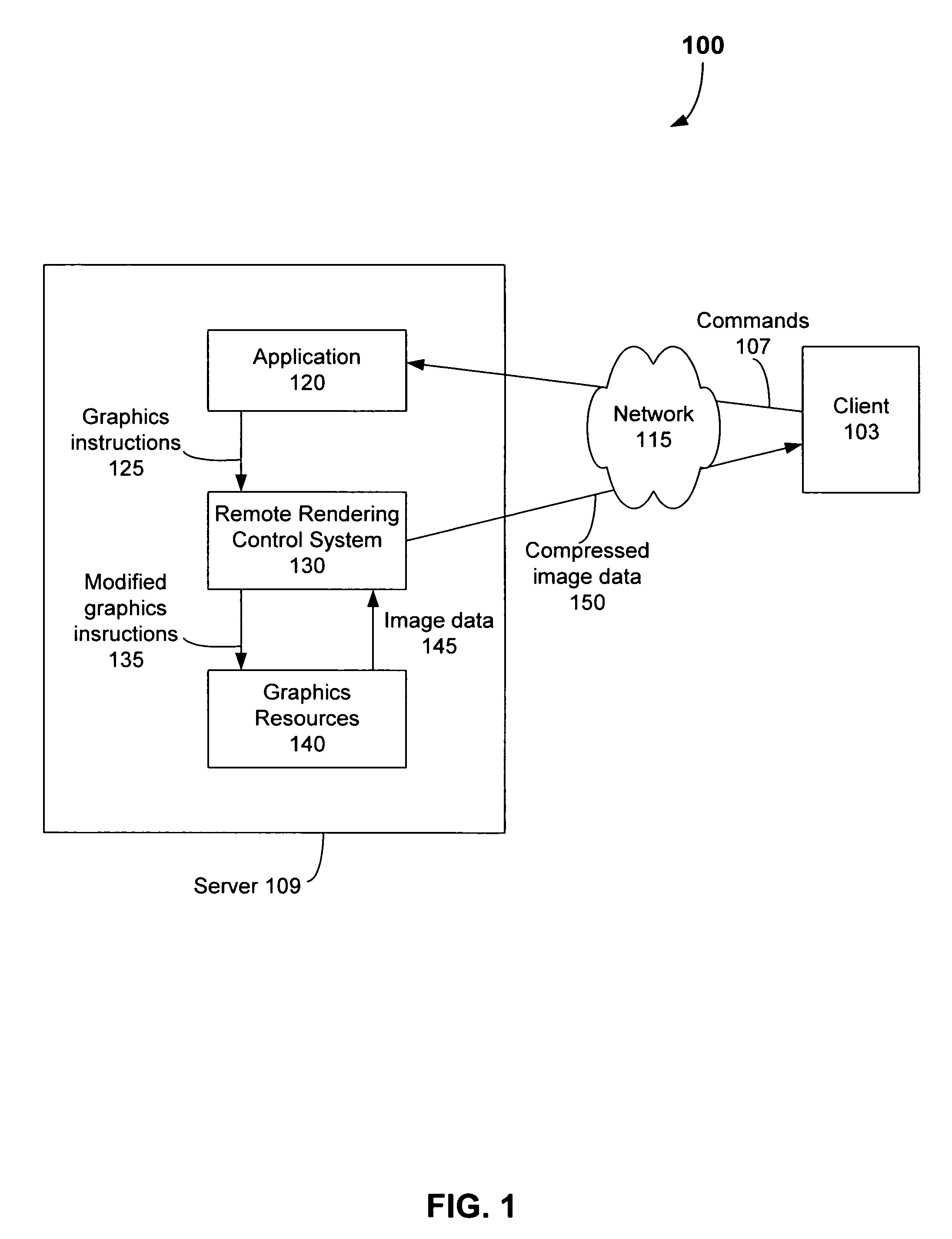

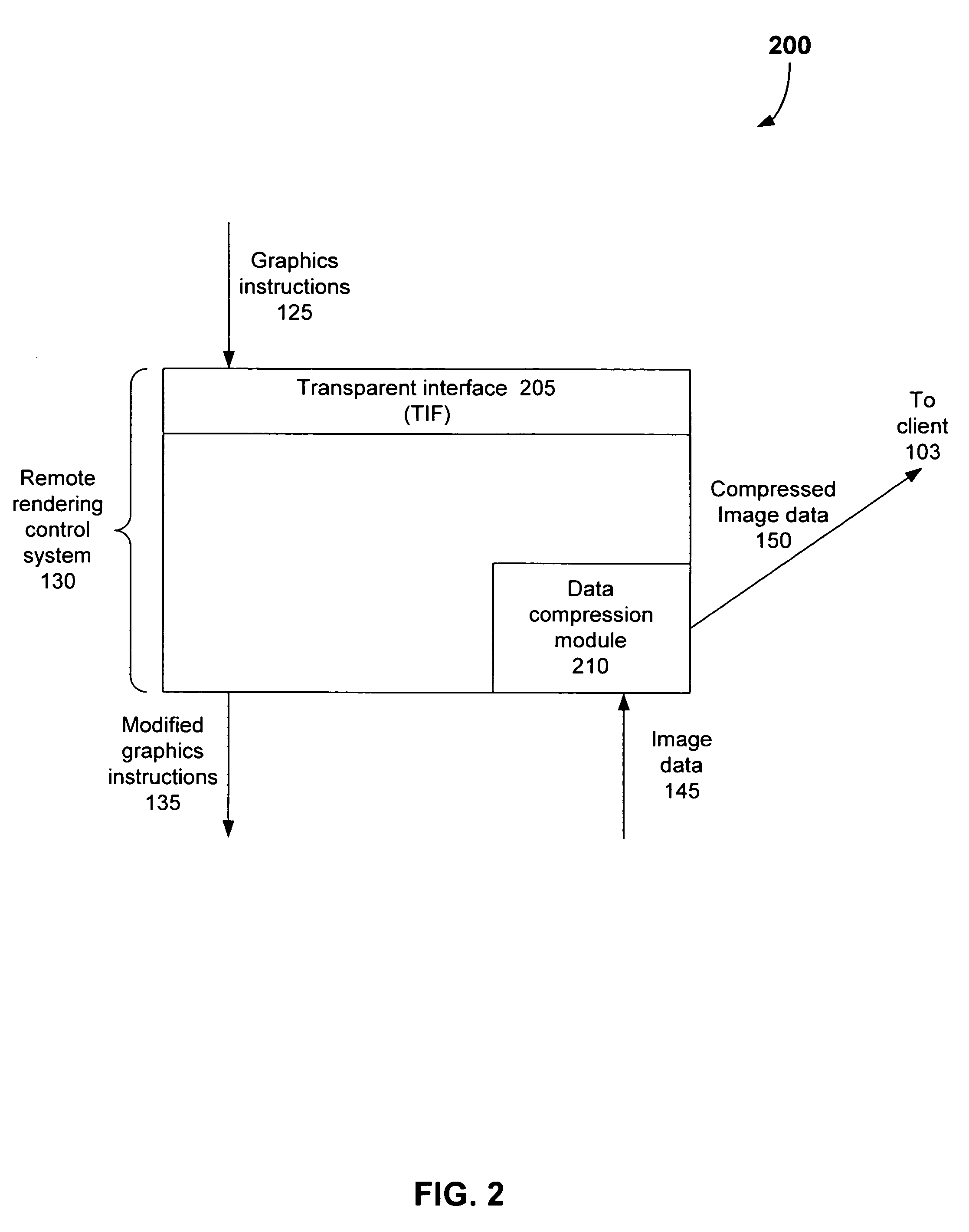

System method and computer program product for remote graphics processing

Inactive | US7274368B1 | Great utility | Cathode-ray tube indicators | Multiple digital computer combinations | Client-side | Framebuffer

A system, method, and computer program product are provided for remote rendering of computer graphics. The system includes a graphics application program resident at a remote server. The graphics application is invoked by a user or process located at a client. The invoked graphics application proceeds to issue graphics instructions. The graphics instructions are received by a remote rendering control system. Given that the client and server differ with respect to graphics context and image processing capability, the remote rendering control system modifies the graphics instructions in order to accommodate these differences. The modified graphics instructions are sent to graphics rendering resources, which produce one or more rendered images. Data representing the rendered images is written to one or more frame buffers. The remote rendering control system then reads this image data from the frame buffers. The image data is transmitted to the client for display or processing. In an embodiment of the system, the image data is compressed before being transmitted to the client. In such an embodiment, the steps of rendering, compression, and transmission can be performed asynchronously in a pipelined manner.
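The asynchronous, pipelined arrangement of rendering, compression, and transmission can be sketched with three threads joined by bounded queues. This is only an illustration: the placeholder frame contents, the use of `zlib`, and the list standing in for the network link are assumptions, not details from the patent.

```python
import queue
import threading
import zlib


def render_stage(frame_count, out_q):
    """Produce placeholder frame-buffer contents (stand-in for real rendering)."""
    for i in range(frame_count):
        out_q.put(bytes([i % 256]) * 1024)
    out_q.put(None)  # sentinel: no more frames


def compress_stage(in_q, out_q):
    """Compress each frame as soon as it is available."""
    while (frame := in_q.get()) is not None:
        out_q.put(zlib.compress(frame))
    out_q.put(None)


def transmit_stage(in_q, sent):
    """Append packets to a list that stands in for the link to the client."""
    while (packet := in_q.get()) is not None:
        sent.append(packet)


def run_pipeline(frame_count):
    rendered = queue.Queue(maxsize=2)
    compressed = queue.Queue(maxsize=2)
    sent = []
    stages = [
        threading.Thread(target=render_stage, args=(frame_count, rendered)),
        threading.Thread(target=compress_stage, args=(rendered, compressed)),
        threading.Thread(target=transmit_stage, args=(compressed, sent)),
    ]
    for stage in stages:
        stage.start()
    for stage in stages:
        stage.join()
    return sent
```

The bounded queues let each stage work on a different frame at the same time while keeping a slow stage from being flooded by a faster one upstream.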

Owner:GOOGLE LLC +1

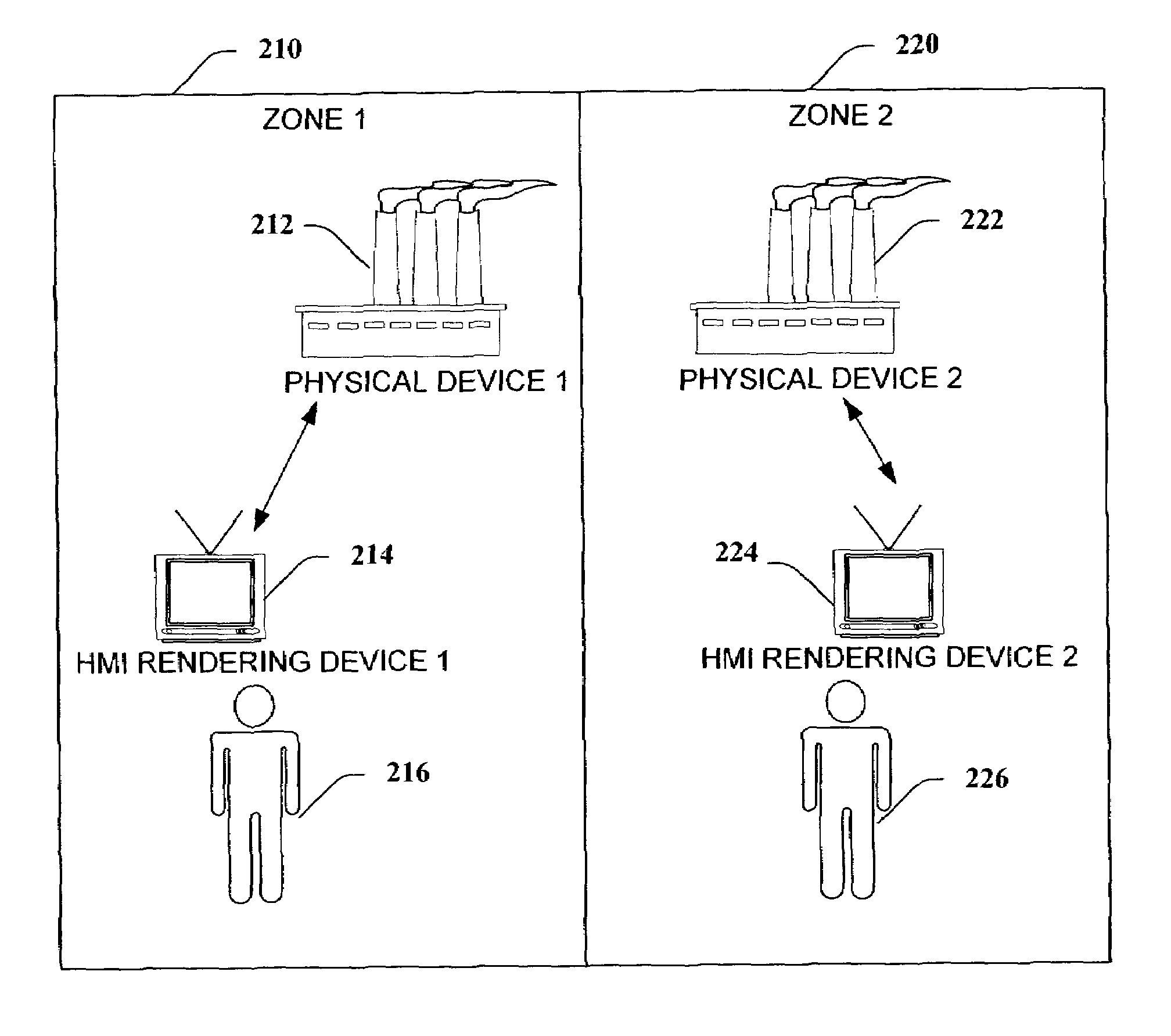

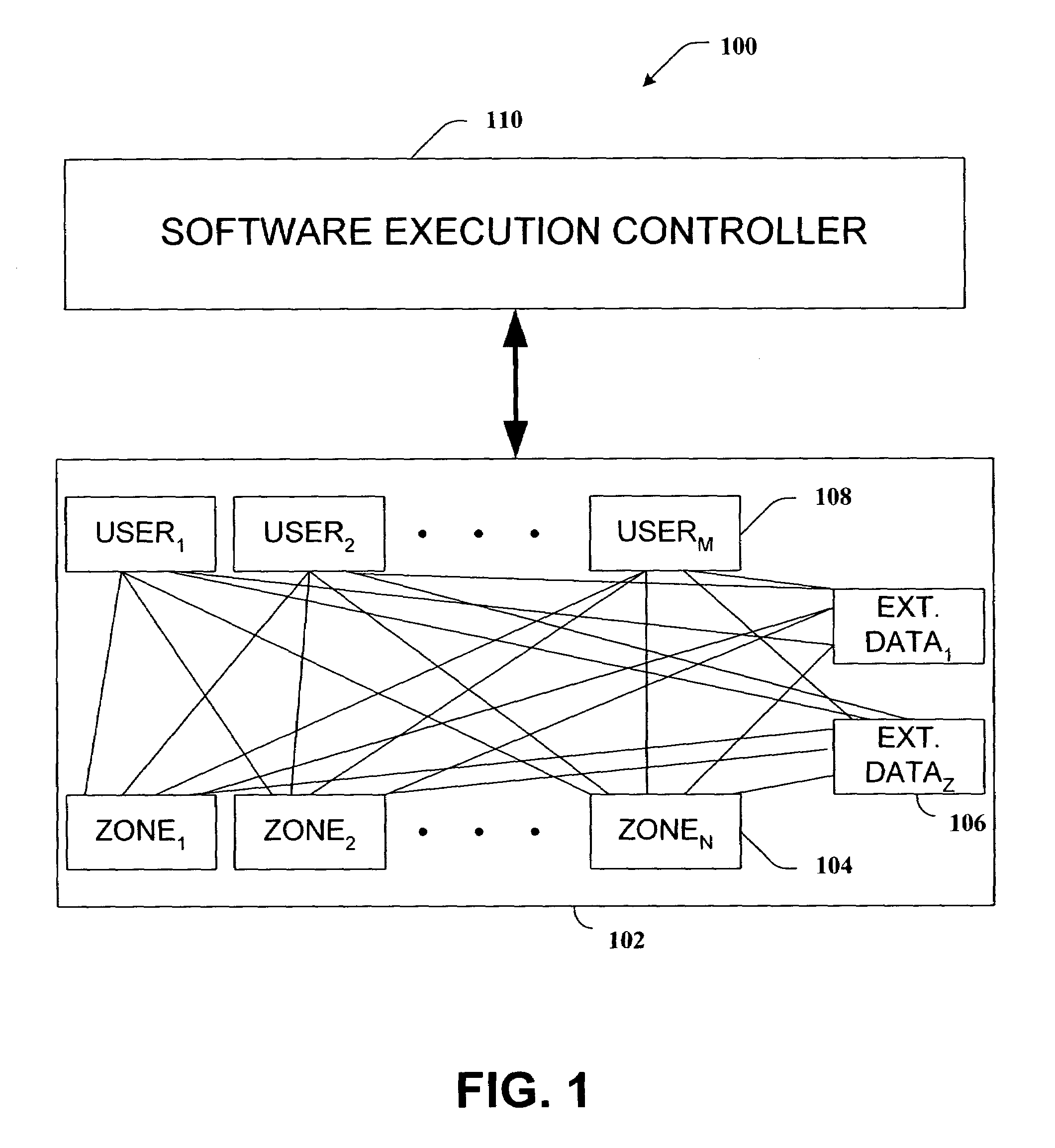

Location-based execution of software/HMI

Active | US7194446B1 | Consistent and reliable | Facilitate HMI rendering | Programme control | Digital computer details | Human–machine interface | Automation

A system and/or method that configures an HMI based at least upon a current state of parameters and a predefined protocol. A processing component queries an industrial automation environment and receives a current state of parameters relating to a human-machine interface (HMI). A rendering component automatically configures the HMI to function in accordance with a predefined protocol.

Owner:ROCKWELL SOFTWARE