9443 results about "Source data" patented technology

Source data is raw data (sometimes called atomic data) that has not yet been processed into meaningful information.

Digital data storage system

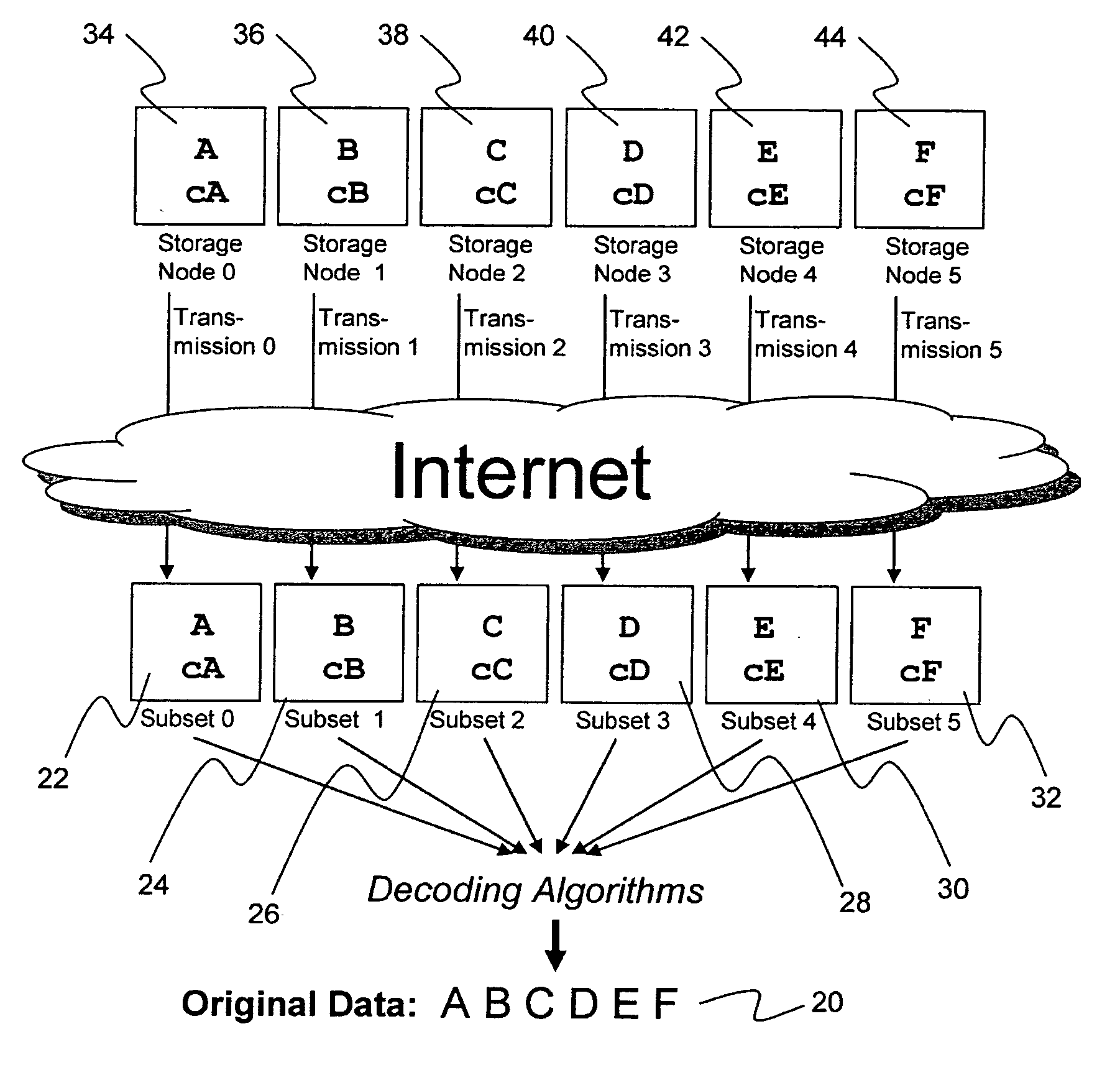

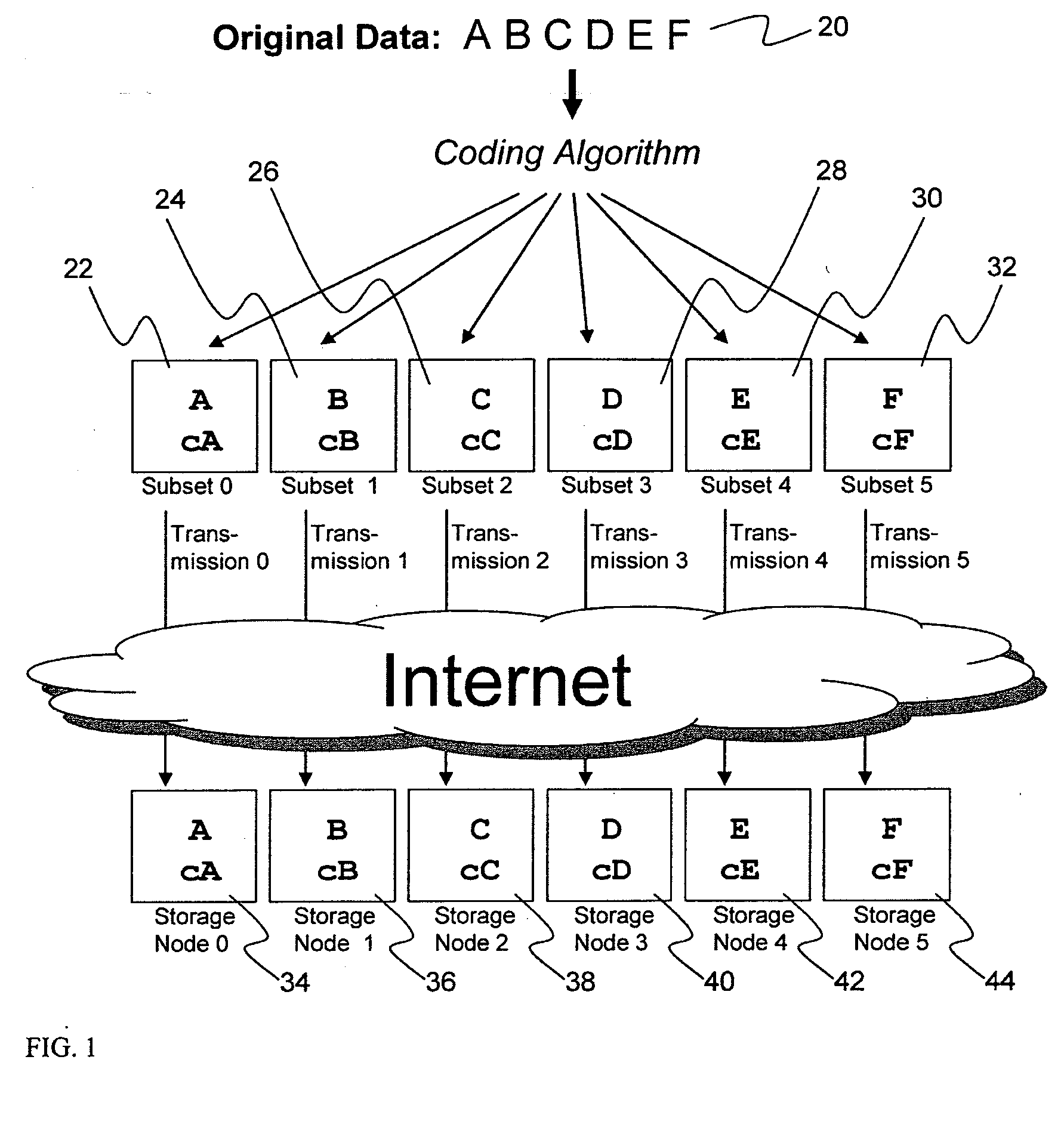

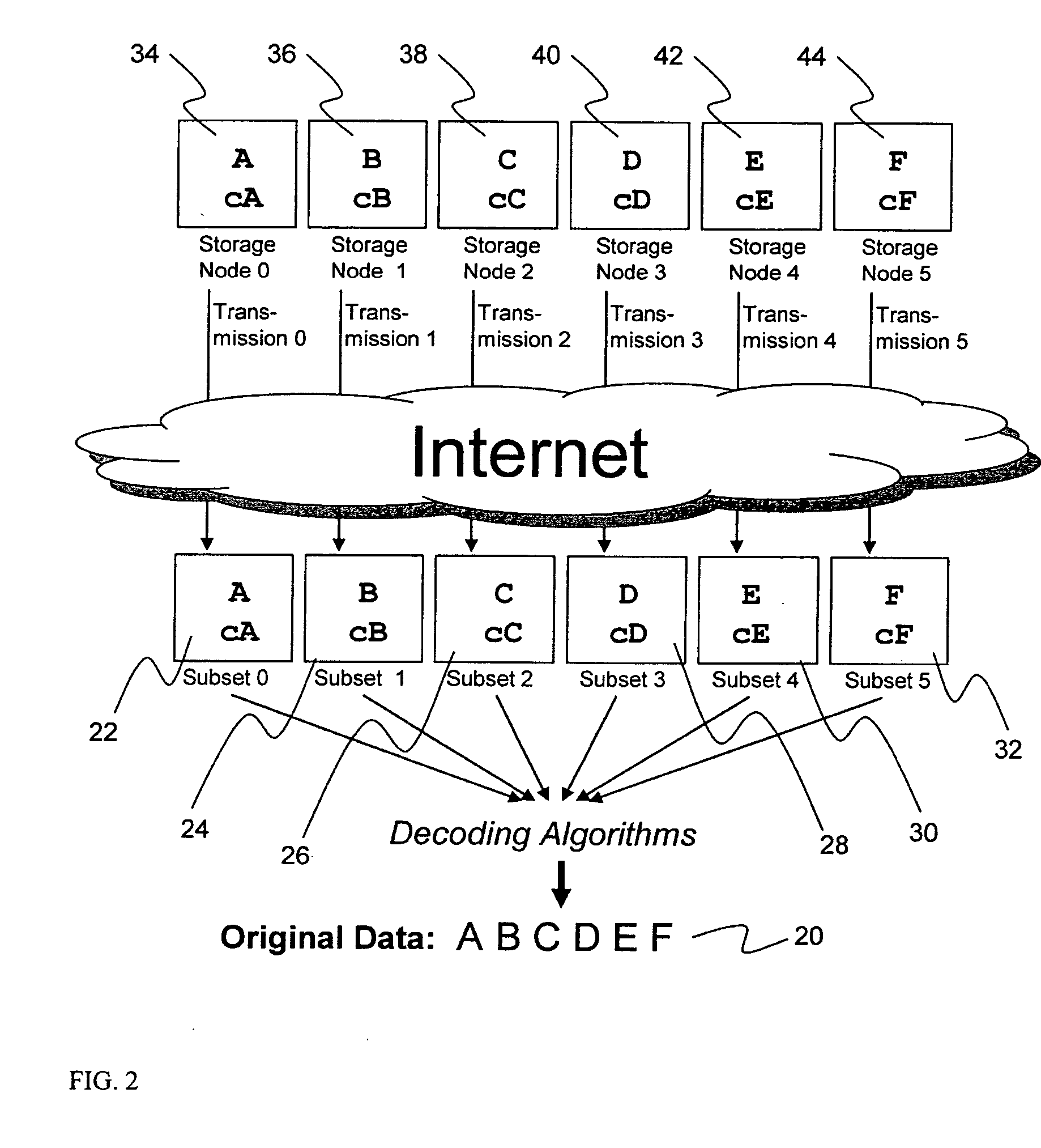

Active | US20070079081A1 | Less usable | Improve privacy | Computer security arrangements | Memory systems | Original data | Small data

An efficient method for breaking source data into smaller data subsets and storing those subsets along with coded information about some of the other data subsets on different storage nodes such that the original data can be recreated from a portion of those data subsets in an efficient manner.

Owner:PURE STORAGE
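
The idea of recreating the original data from only a portion of the stored subsets can be illustrated with a deliberately simple stand-in: a single XOR parity shard. This is not the coding scheme of the patent, which the abstract does not specify; the function names `split_with_parity` and `recover` are hypothetical, and the sketch tolerates the loss of only one shard.

```python
from functools import reduce
from typing import List, Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def split_with_parity(data: bytes, k: int) -> List[bytes]:
    """Split data into k equal-size shards (zero-padded) plus one XOR parity shard."""
    size = -(-len(data) // k)  # ceiling division
    padded = data.ljust(size * k, b"\0")
    shards = [padded[i * size:(i + 1) * size] for i in range(k)]
    parity = reduce(xor_bytes, shards)
    return shards + [parity]


def recover(shards: List[Optional[bytes]], length: int) -> bytes:
    """Rebuild the original data when at most one shard (data or parity) is missing."""
    missing = [i for i, s in enumerate(shards) if s is None]
    if len(missing) > 1:
        raise ValueError("a single parity shard tolerates only one lost shard")
    shards = list(shards)
    if missing:
        present = [s for s in shards if s is not None]
        # The XOR of all k+1 shards is zero, so the missing one is the XOR of the rest.
        shards[missing[0]] = reduce(xor_bytes, present)
    return b"".join(shards[:-1])[:length]


data = b"source data example"
nodes = split_with_parity(data, 4)  # imagine each element on a different storage node
nodes[2] = None                     # one node becomes unavailable
restored = recover(nodes, len(data))
```

Production systems generally use stronger erasure codes so that several nodes can fail at once; the parity shard here only shows the principle.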

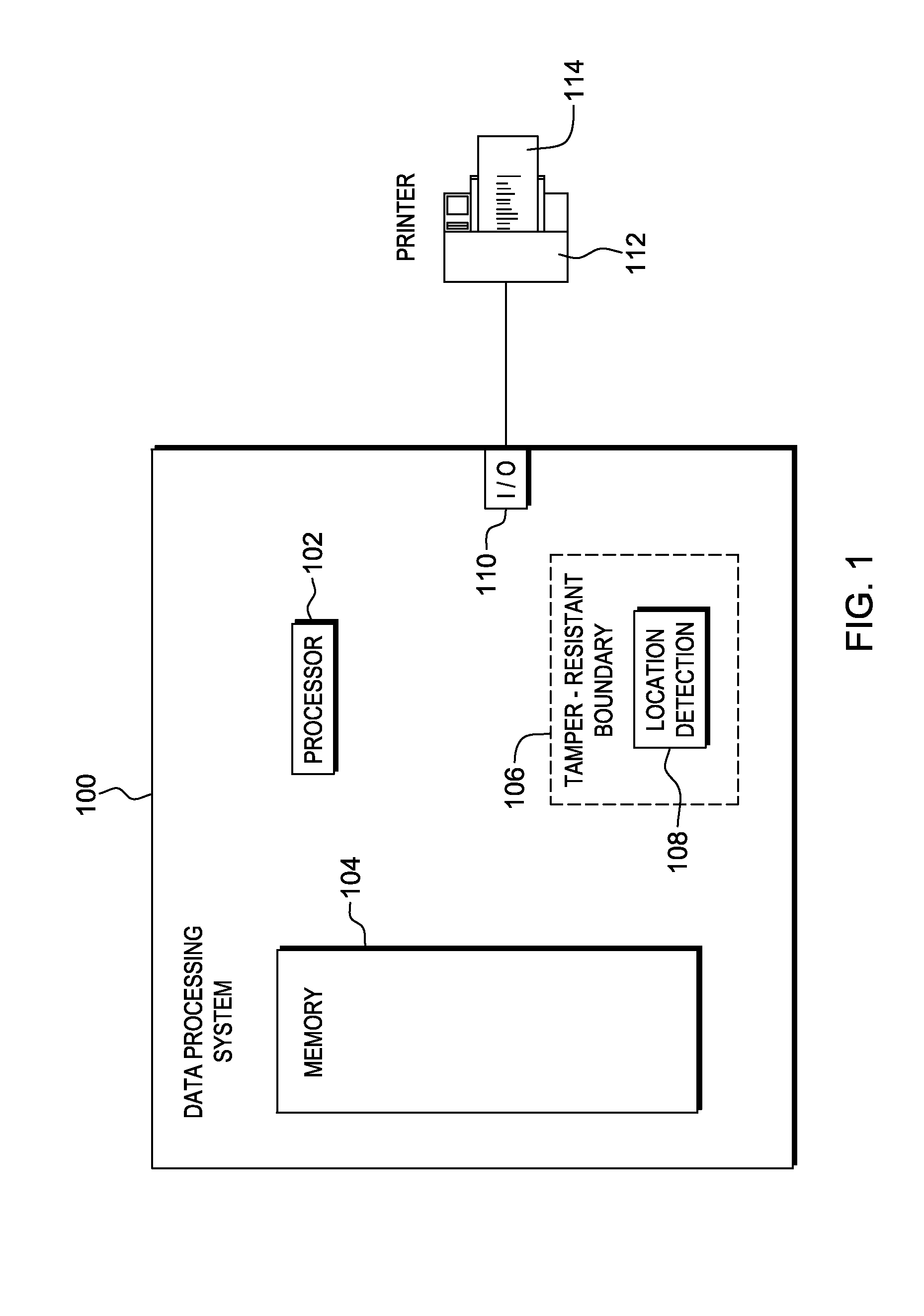

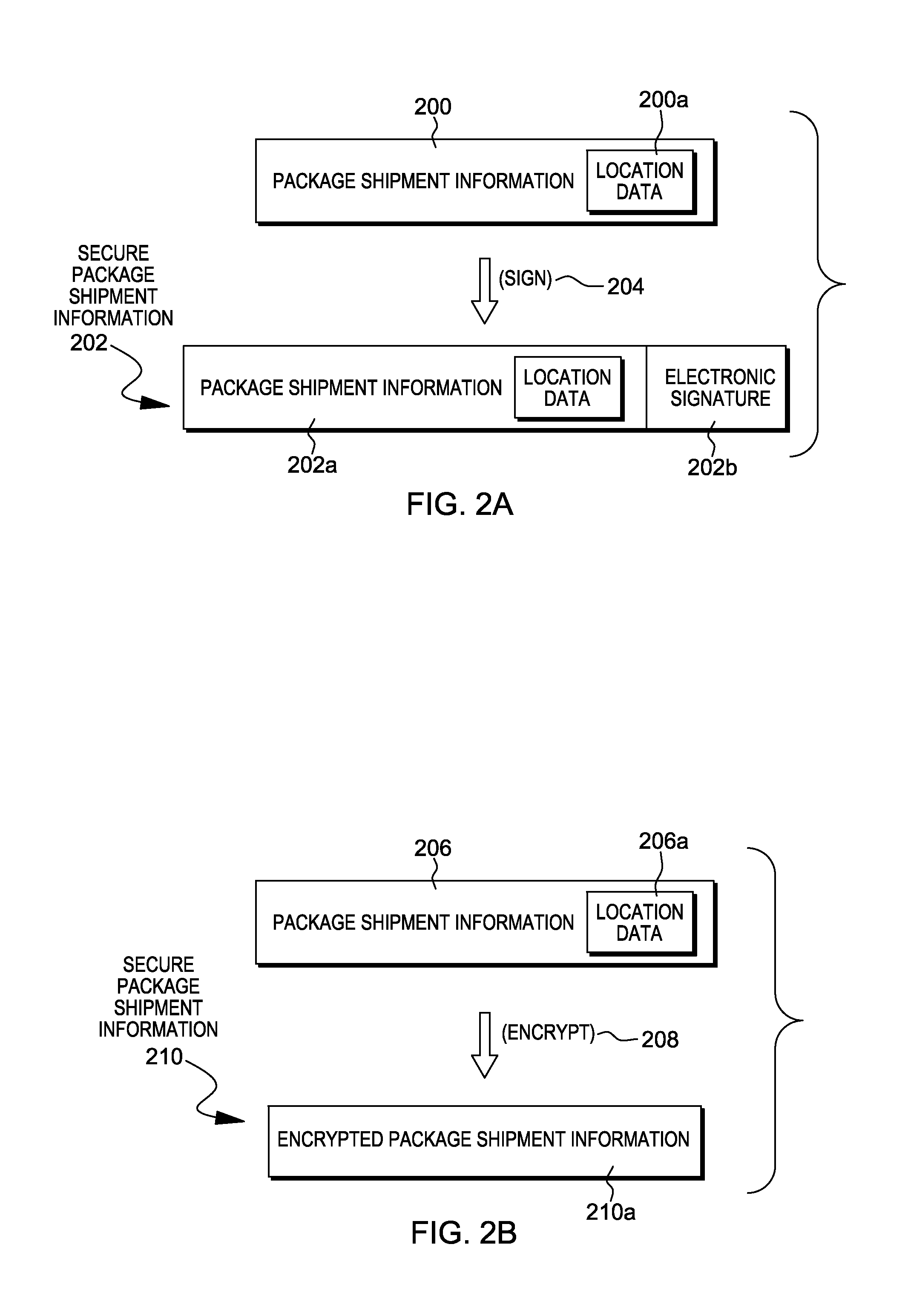

Package source verification

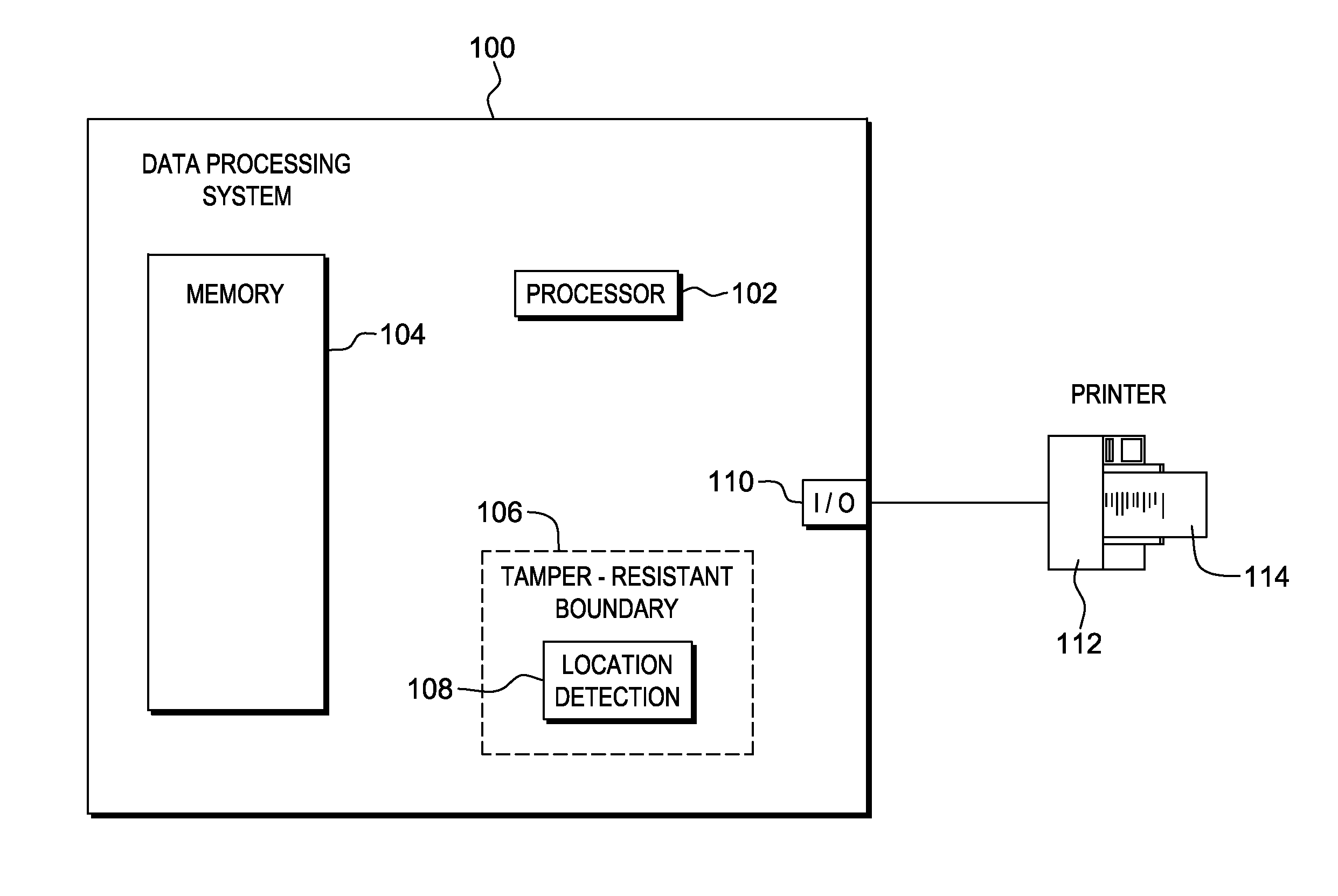

Inactive | US20140074746A1 | Convenient verification | Overcomes shortcoming | Commerce | Logistics | Data terminal | Tamper resistance

Verification of a source of a package is facilitated. A data terminal certified by an authority obtains location data from a location detection component. The location data indicates a source location from which the package is to be shipped, and is detected by the location detection component at the source location. Secure package shipment information, including the location data, is provided with the package to securely convey the detected source location to facilitate verifying the source of the package. The data terminal can be a portable data terminal certified by the authority and have a tamper-proof boundary behind which resides the location detection component and one or more keys for securing the package shipment information. Upon tampering with the tamper-resistant boundary, the certification of the portable data terminal can be nullified.

Owner:HAND HELD PRODS

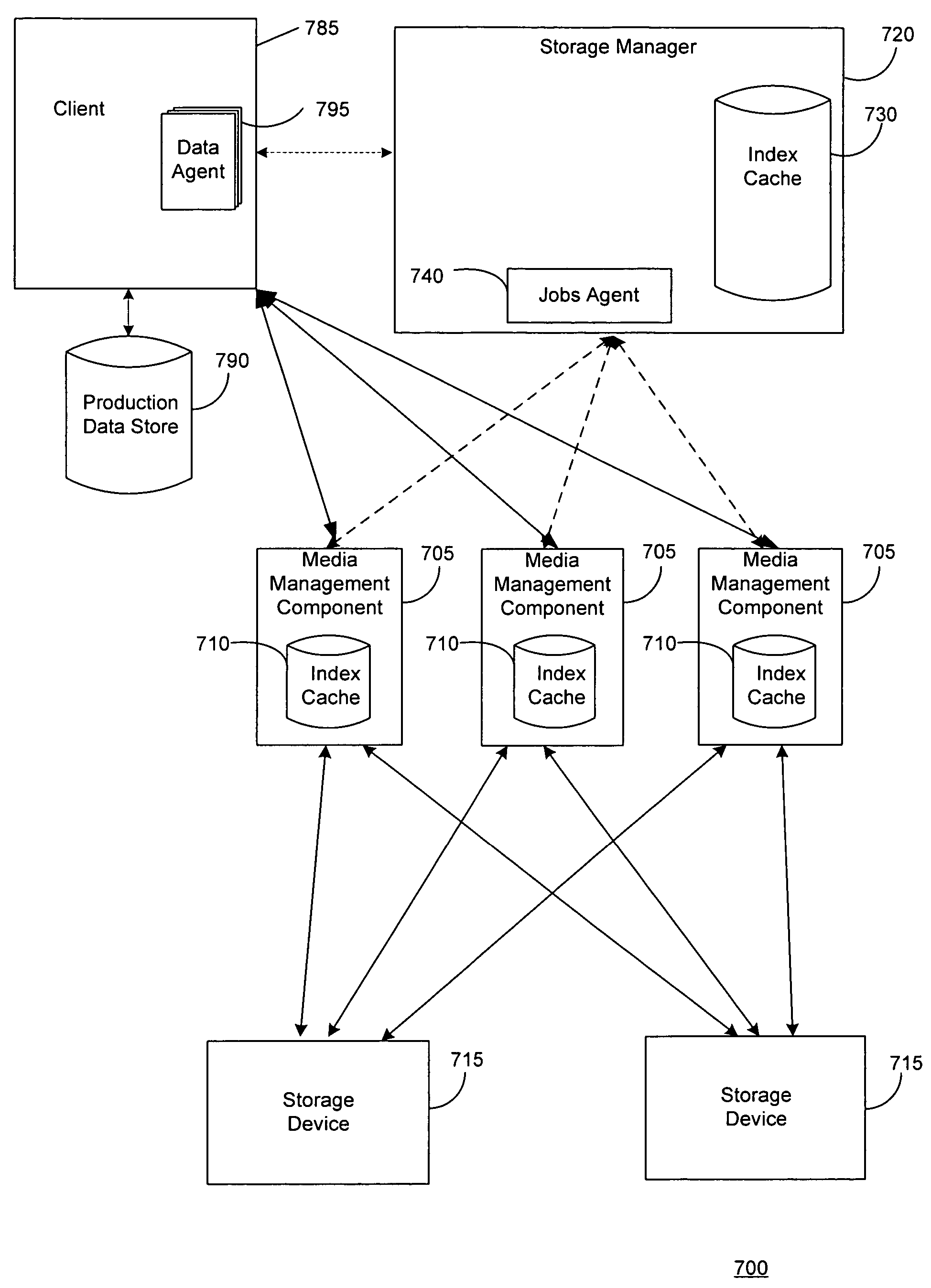

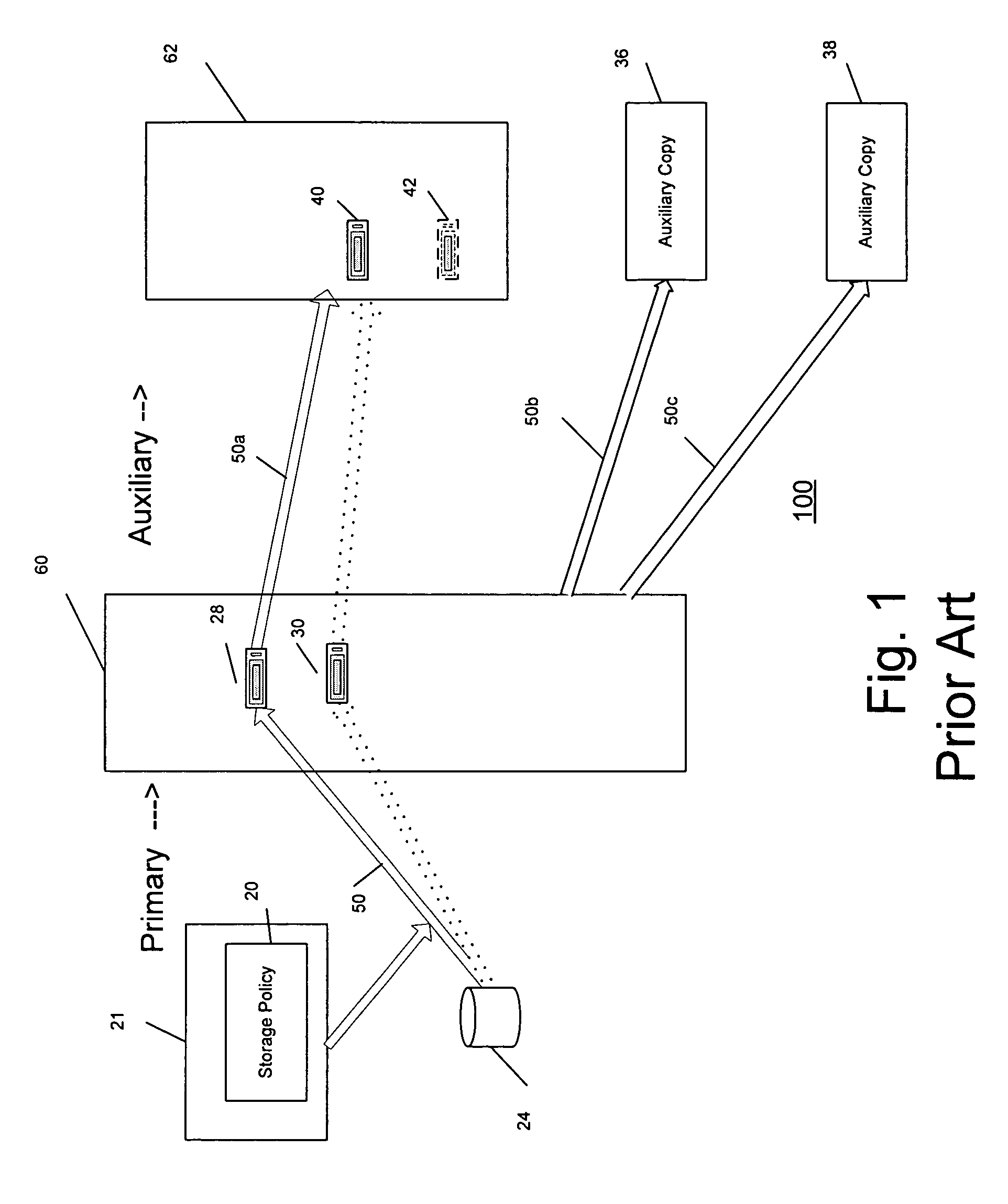

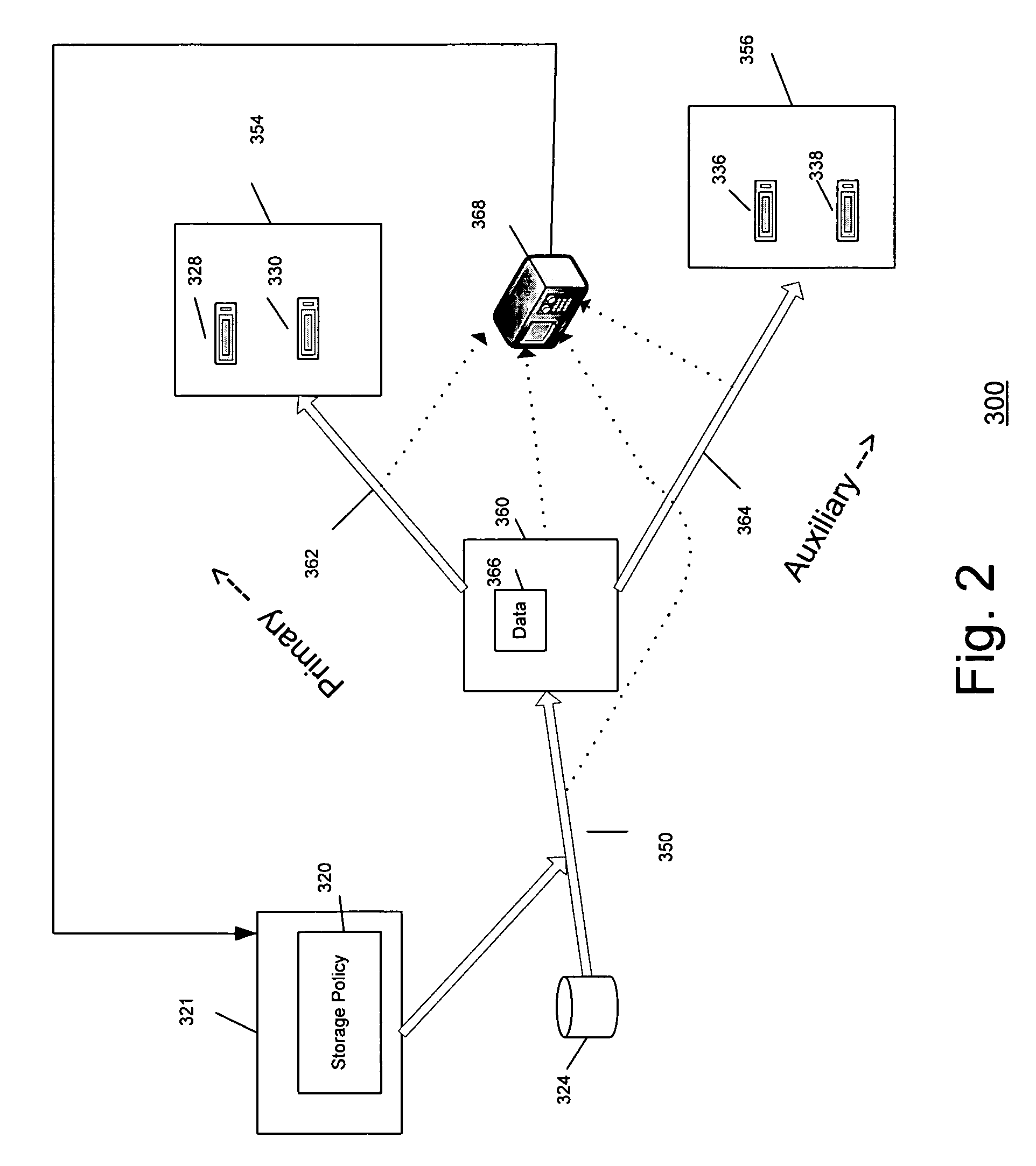

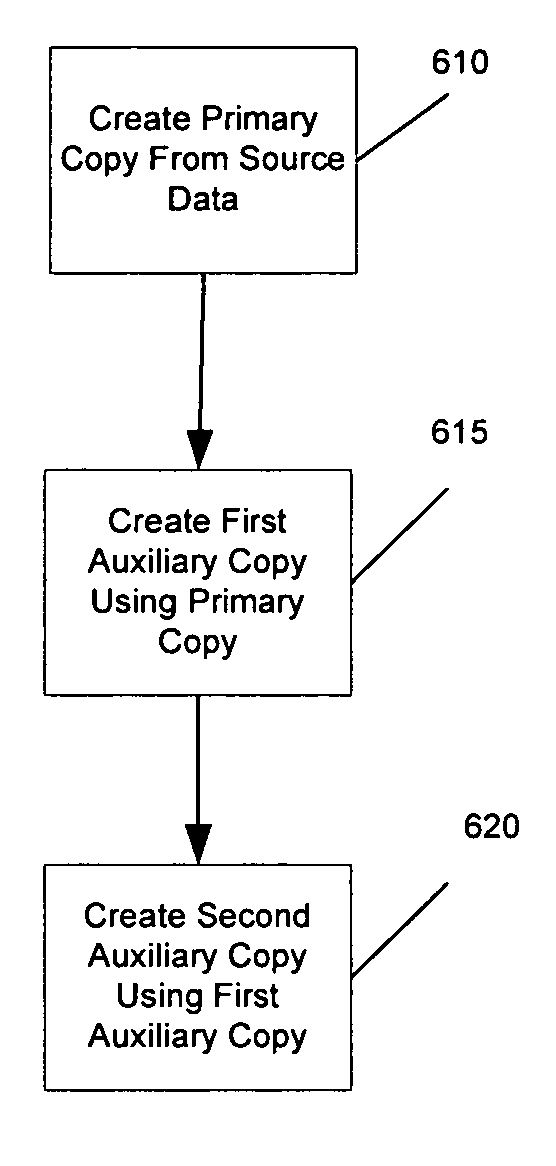

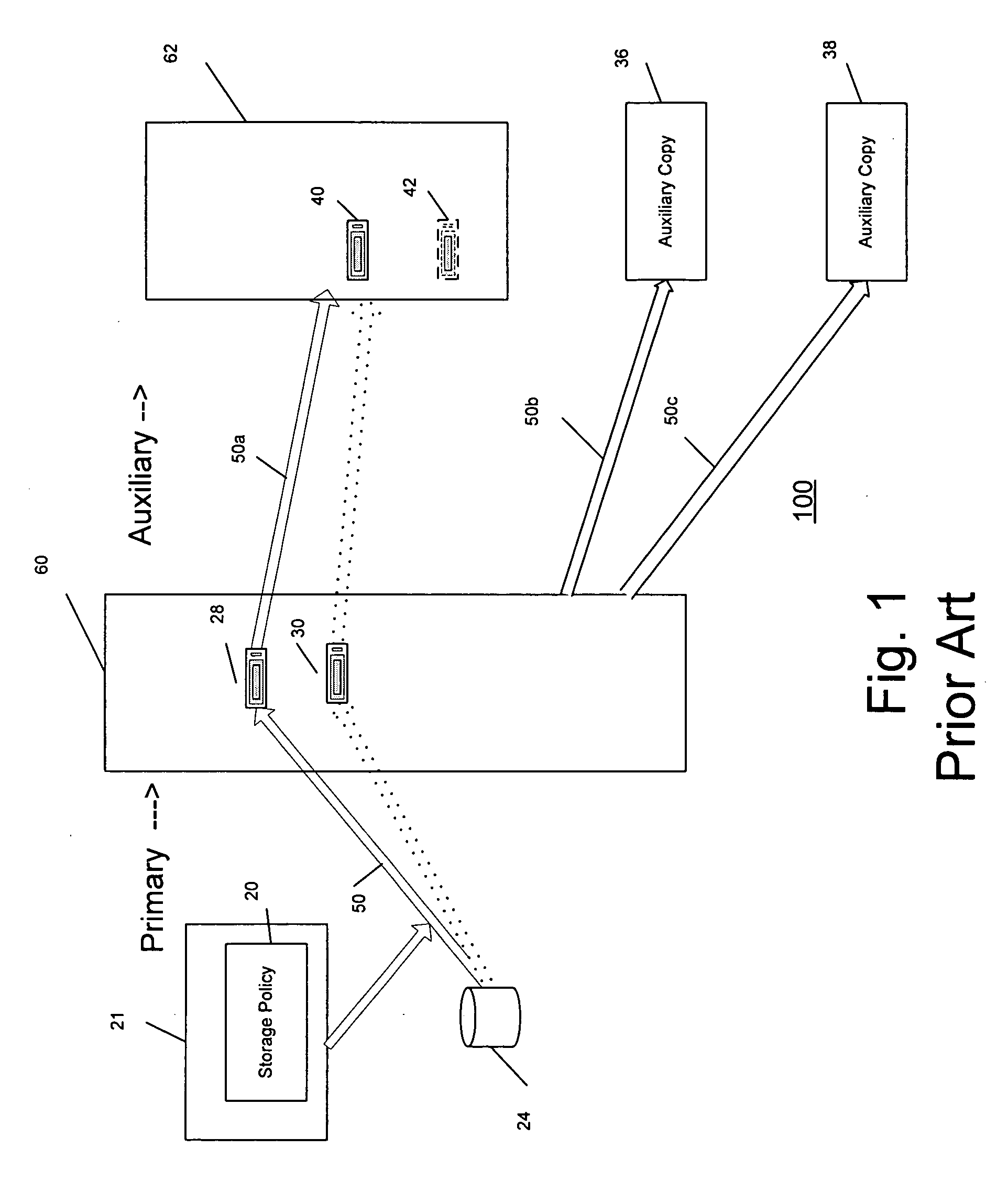

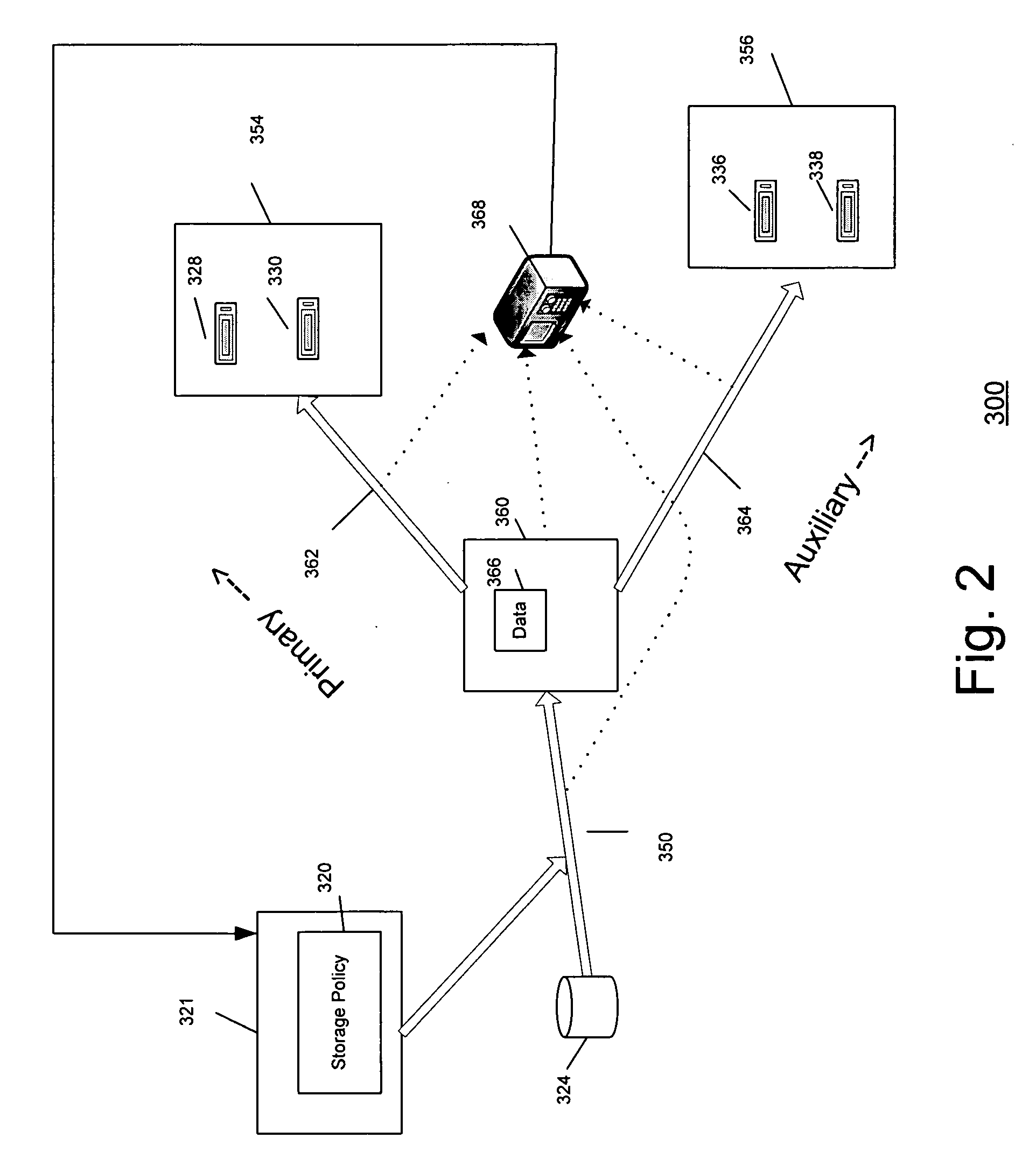

System and method for performing auxiliary storage operations

Systems and methods for protecting data in a tiered storage system are provided. The storage system comprises a management server, a media management component connected to the management server, a plurality of storage media connected to the media management component, and a data source connected to the media management component. Source data is copied from a source to a buffer to produce intermediate data. The intermediate data is copied to both a first and second medium to produce a primary and auxiliary copy, respectively. An auxiliary copy may be made from another auxiliary copy. An auxiliary copy may also be made from a primary copy right before the primary copy is pruned.

Owner:COMMVAULT SYST INC

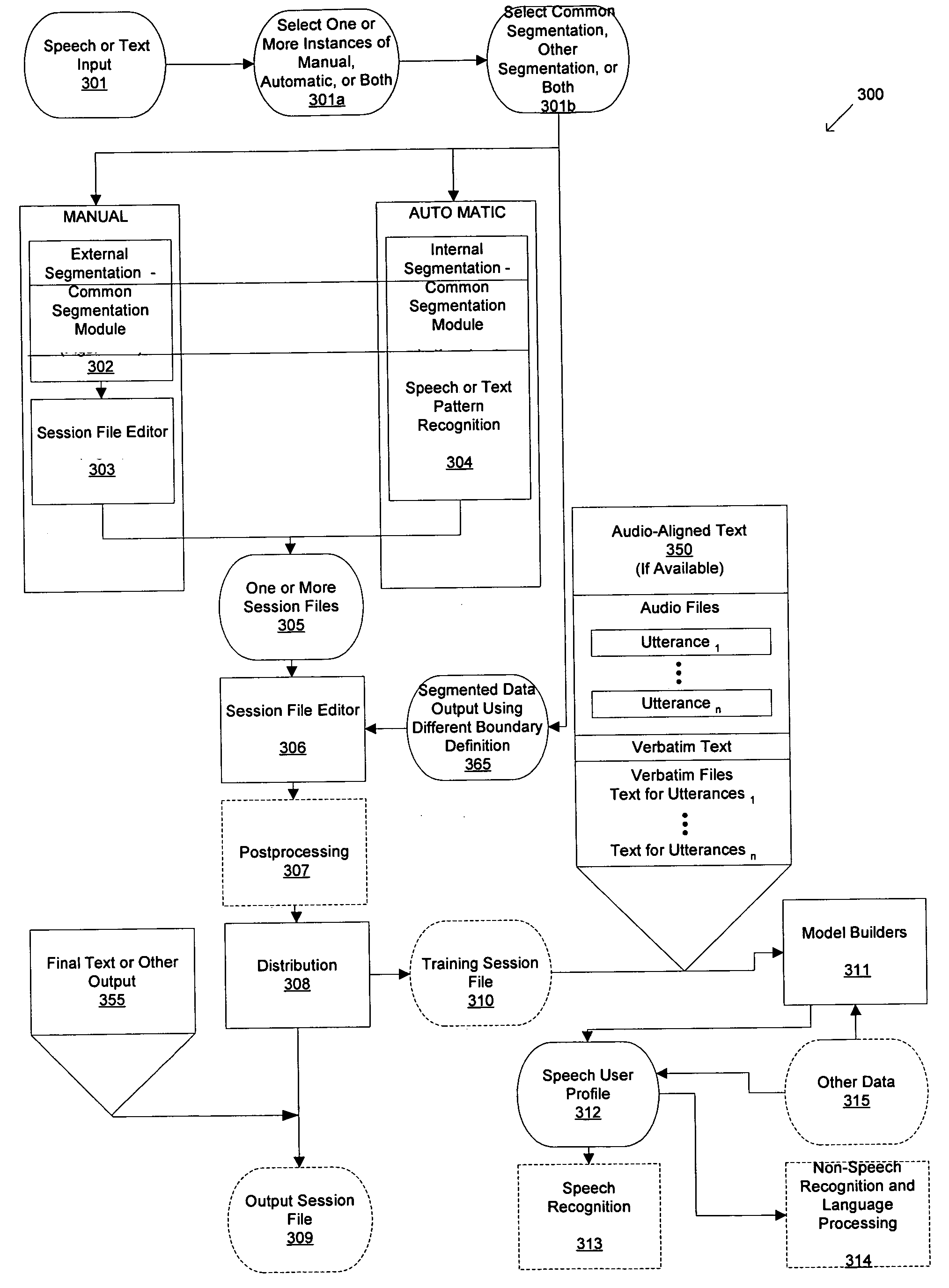

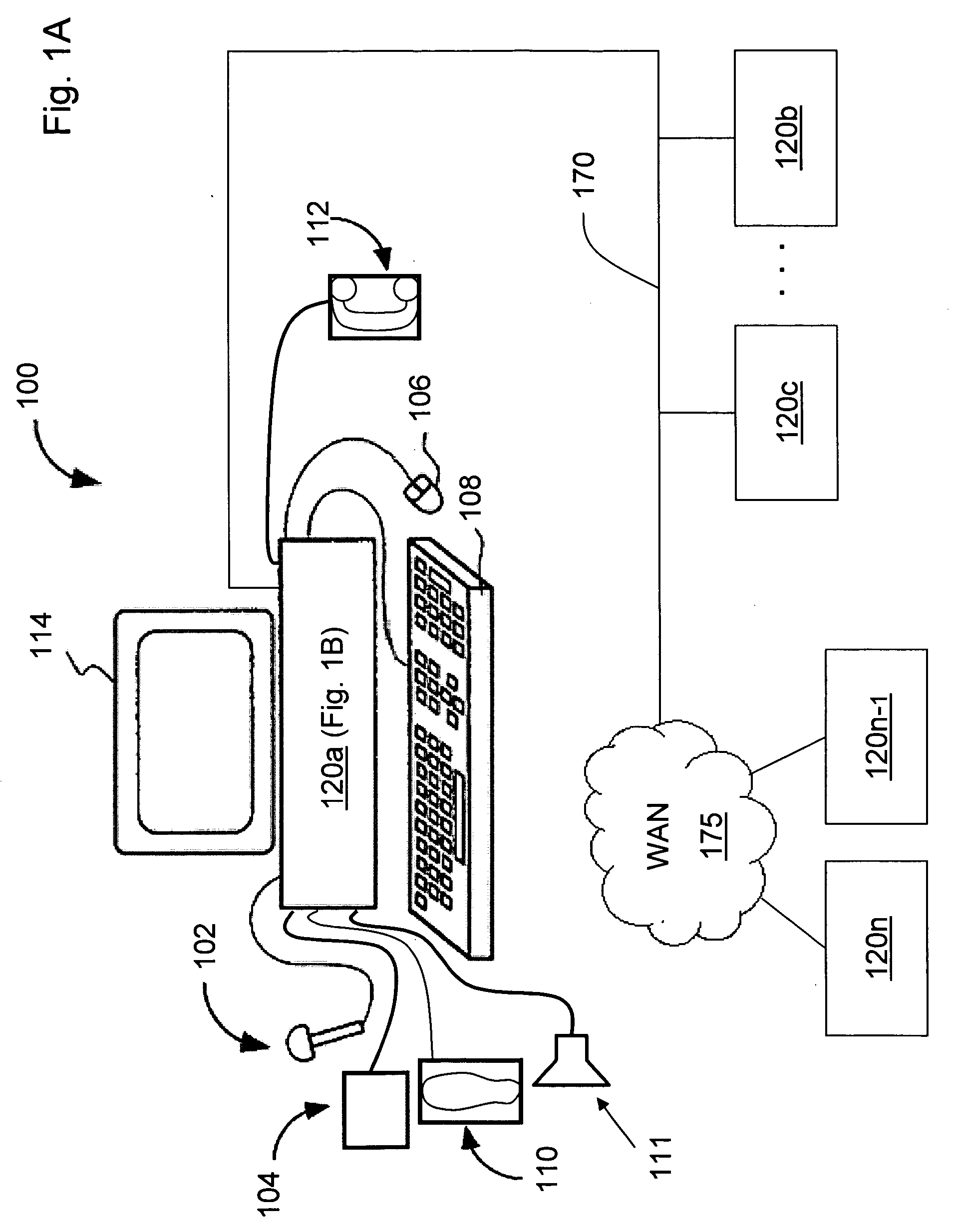

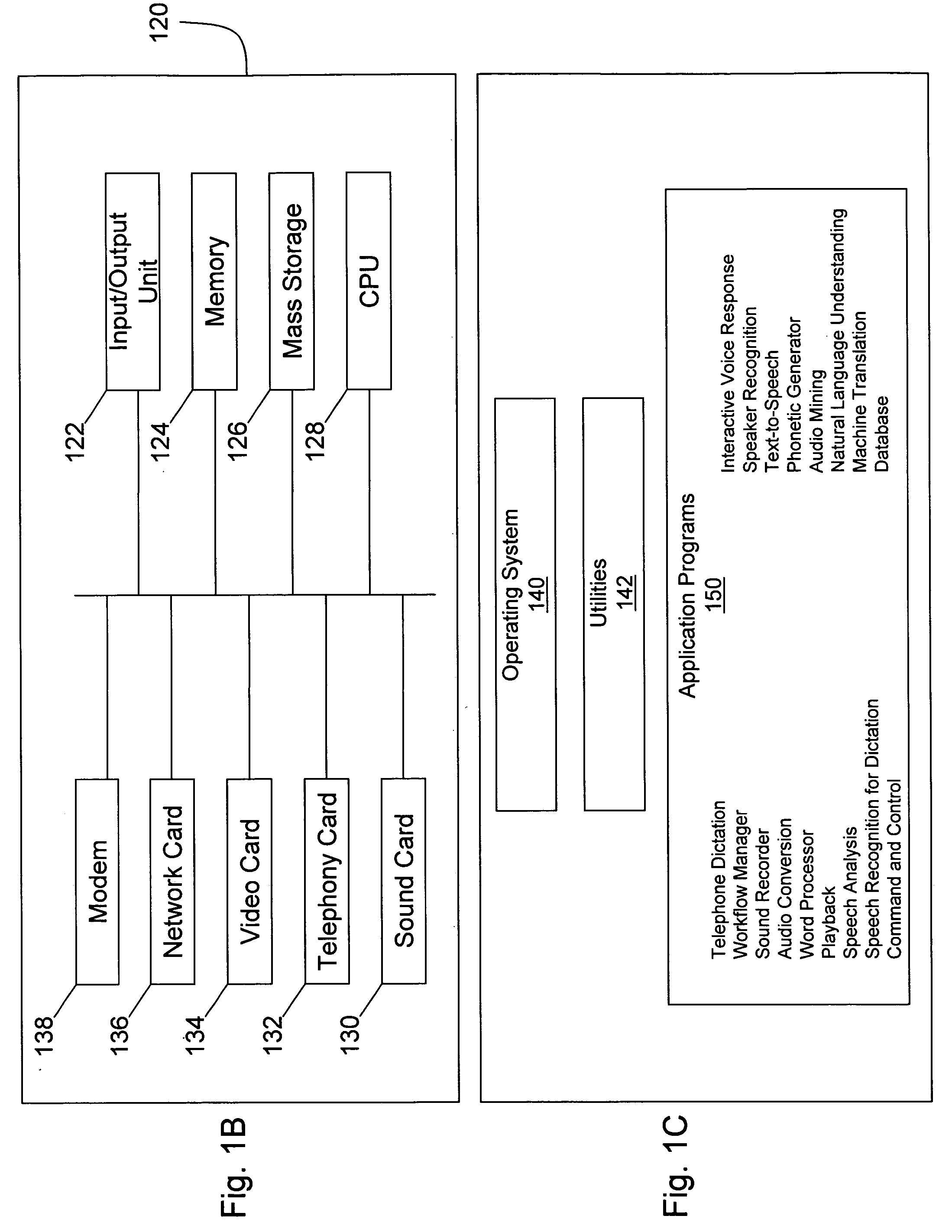

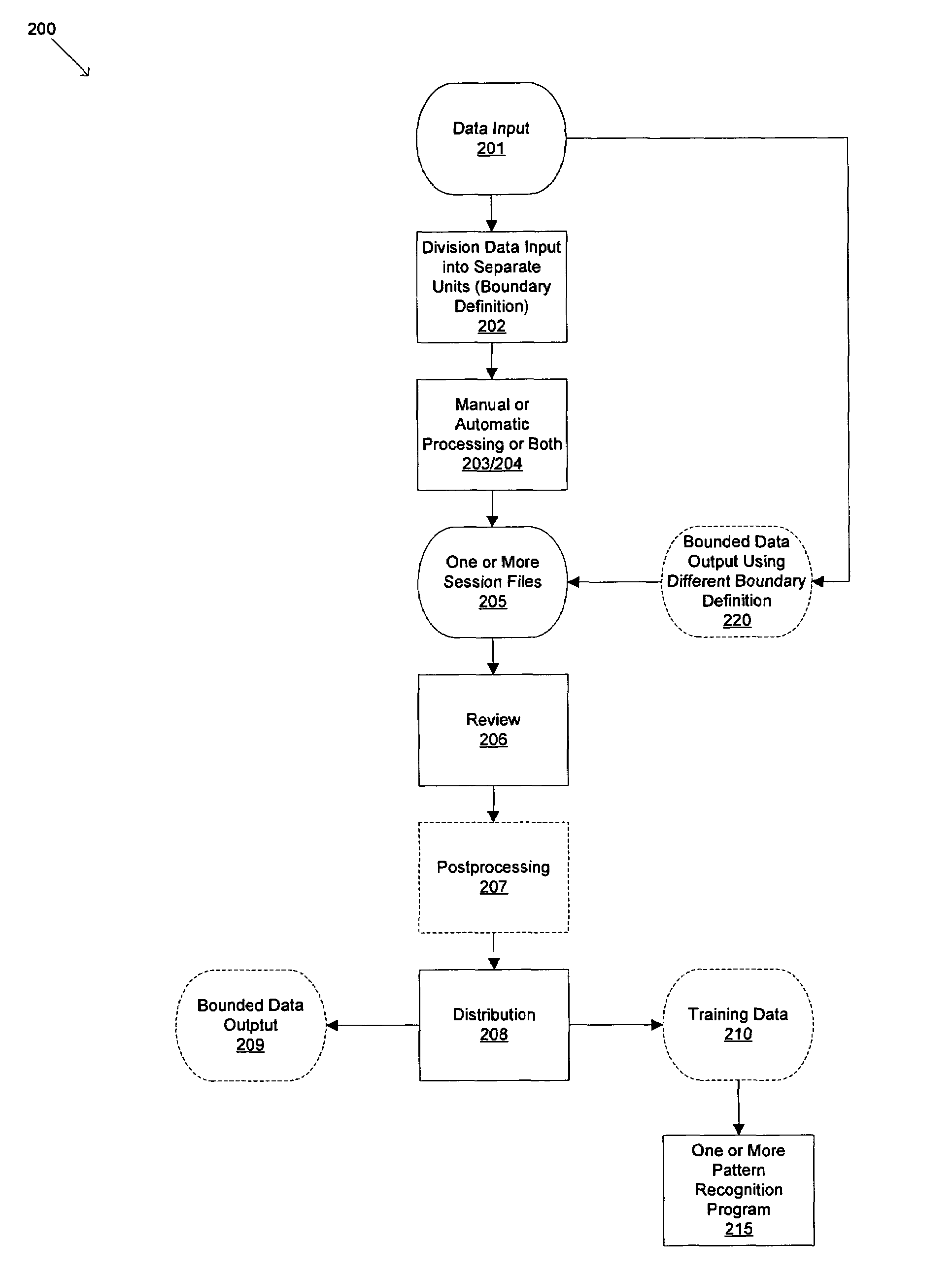

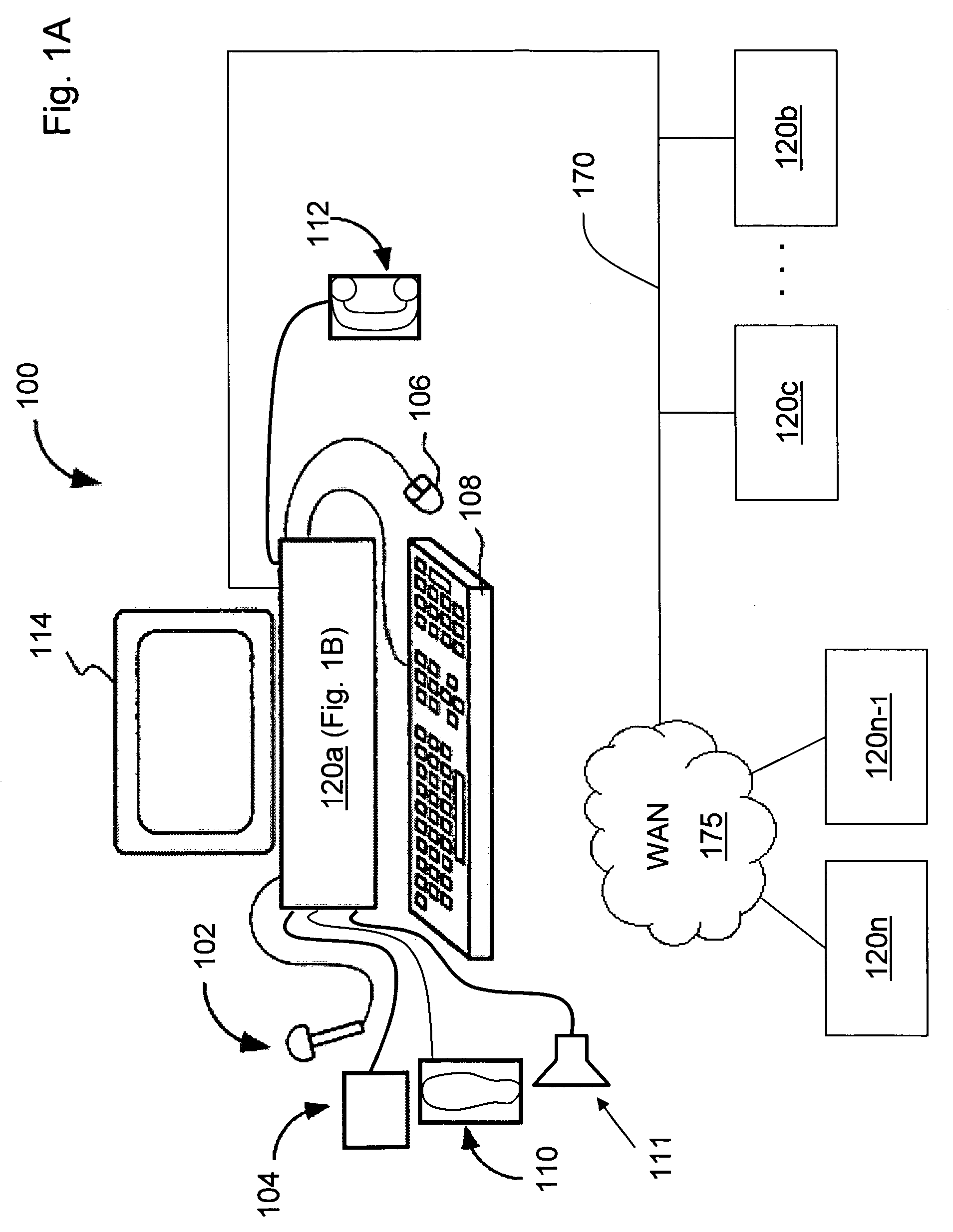

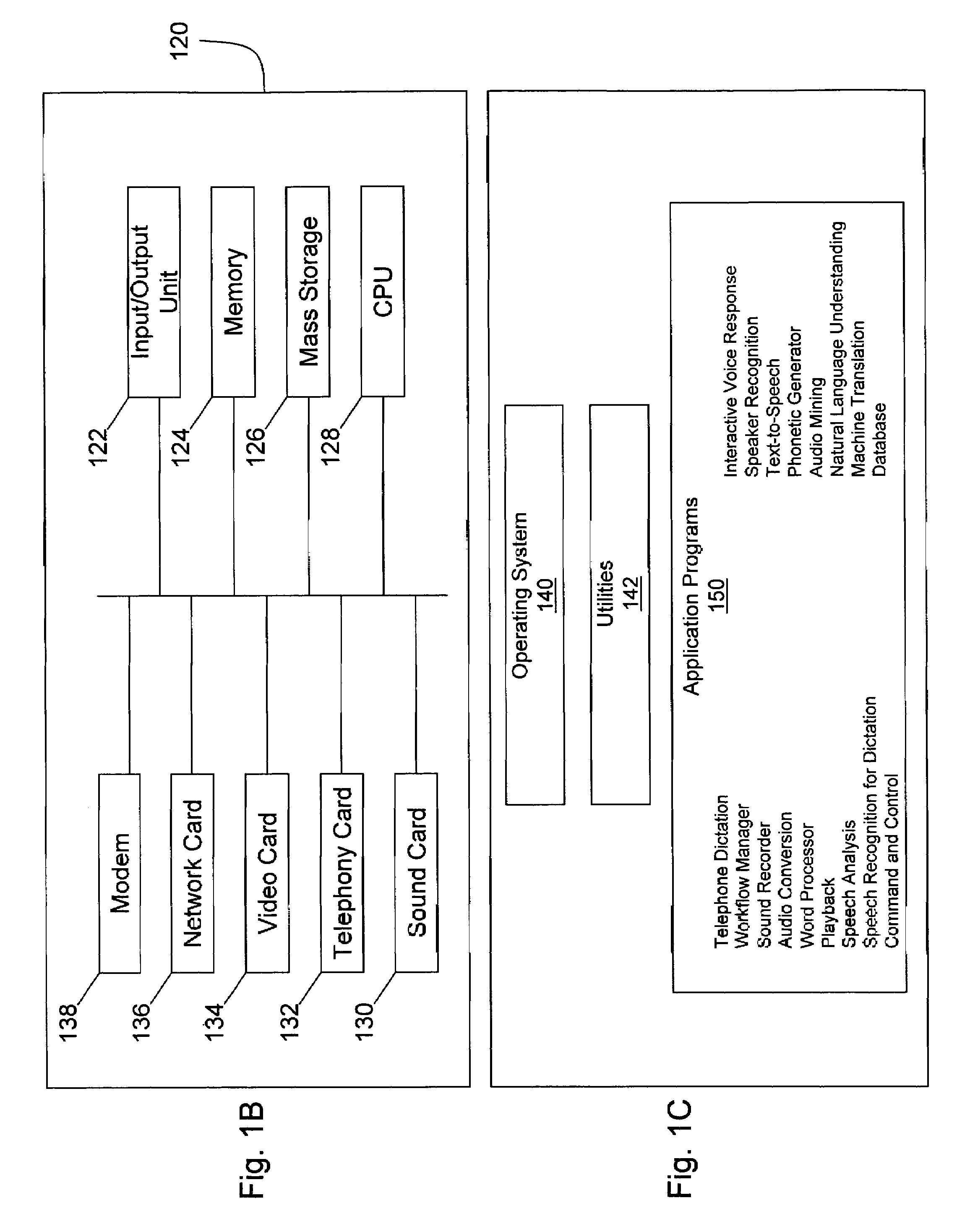

Synchronized pattern recognition source data processed by manual or automatic means for creation of shared speaker-dependent speech user profile

Inactive | US20060149558A1 | Avoids time-consuming generation | Maximize likelihood | Speech recognition | Graphics | Data segment

An apparatus for collecting data from a plurality of diverse data sources, the diverse data sources generating input data selected from the group including text, audio, and graphics, the diverse data sources selected from the group including real-time and recorded, human and mechanically-generated audio, single-speaker and multispeaker, the apparatus comprising: means for dividing the input data into one or more data segments, the dividing means acting separately on the input data from each of the plurality of diverse data sources, each of the data segments being associated with at least one respective data buffer such that each of the respective data buffers would have the same number of segments given the same data; means for selective processing of the data segments within each of the respective data buffers; and means for distributing at least one of the respective data buffers such that the collected data associated therewith may be used for further processing.

Owner:CUSTOM SPEECH USA

Performance/capacity management framework over many servers

Inactive | US6148335A | Digital computer details | Data switching networks | Application programming interface | Colour coding

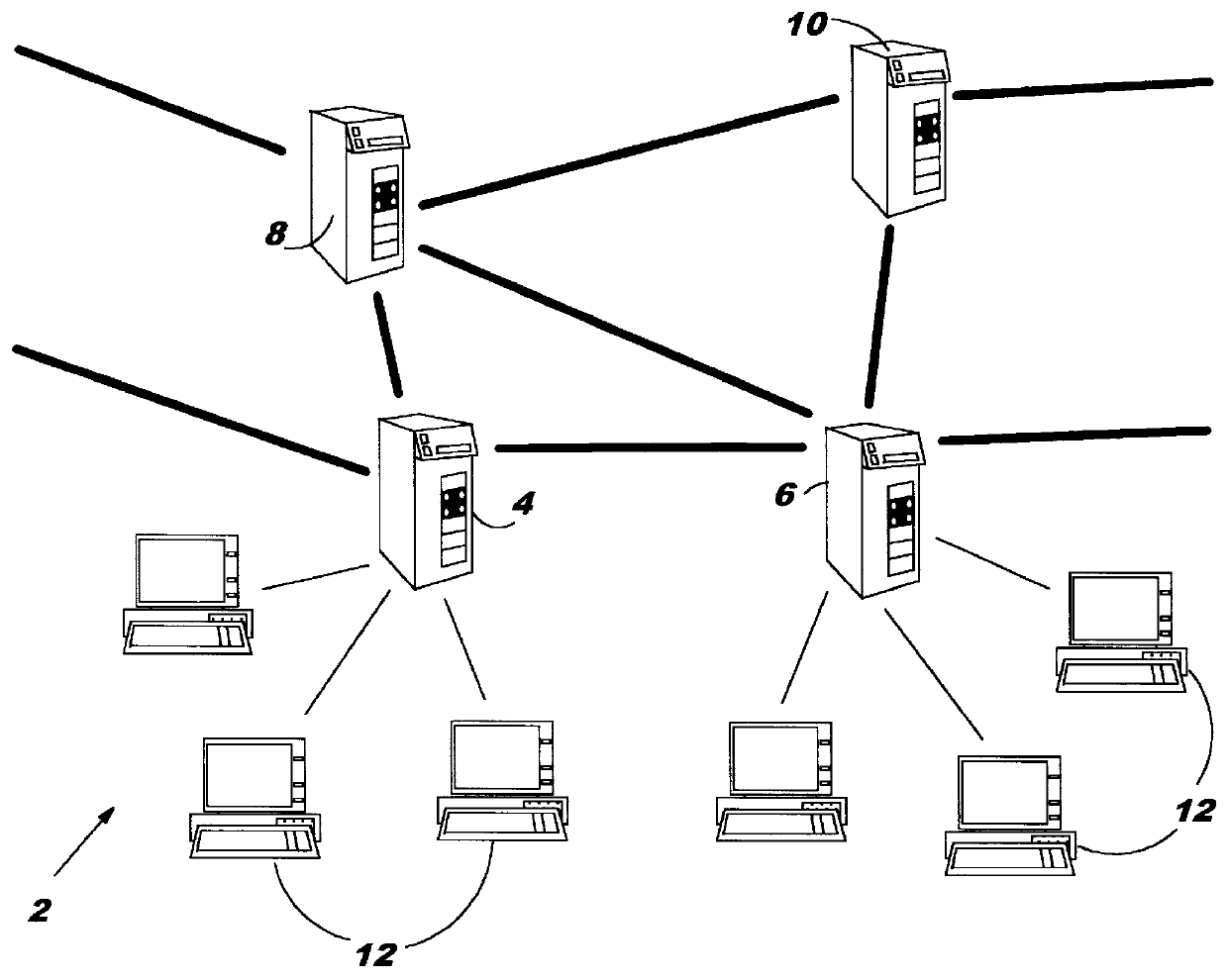

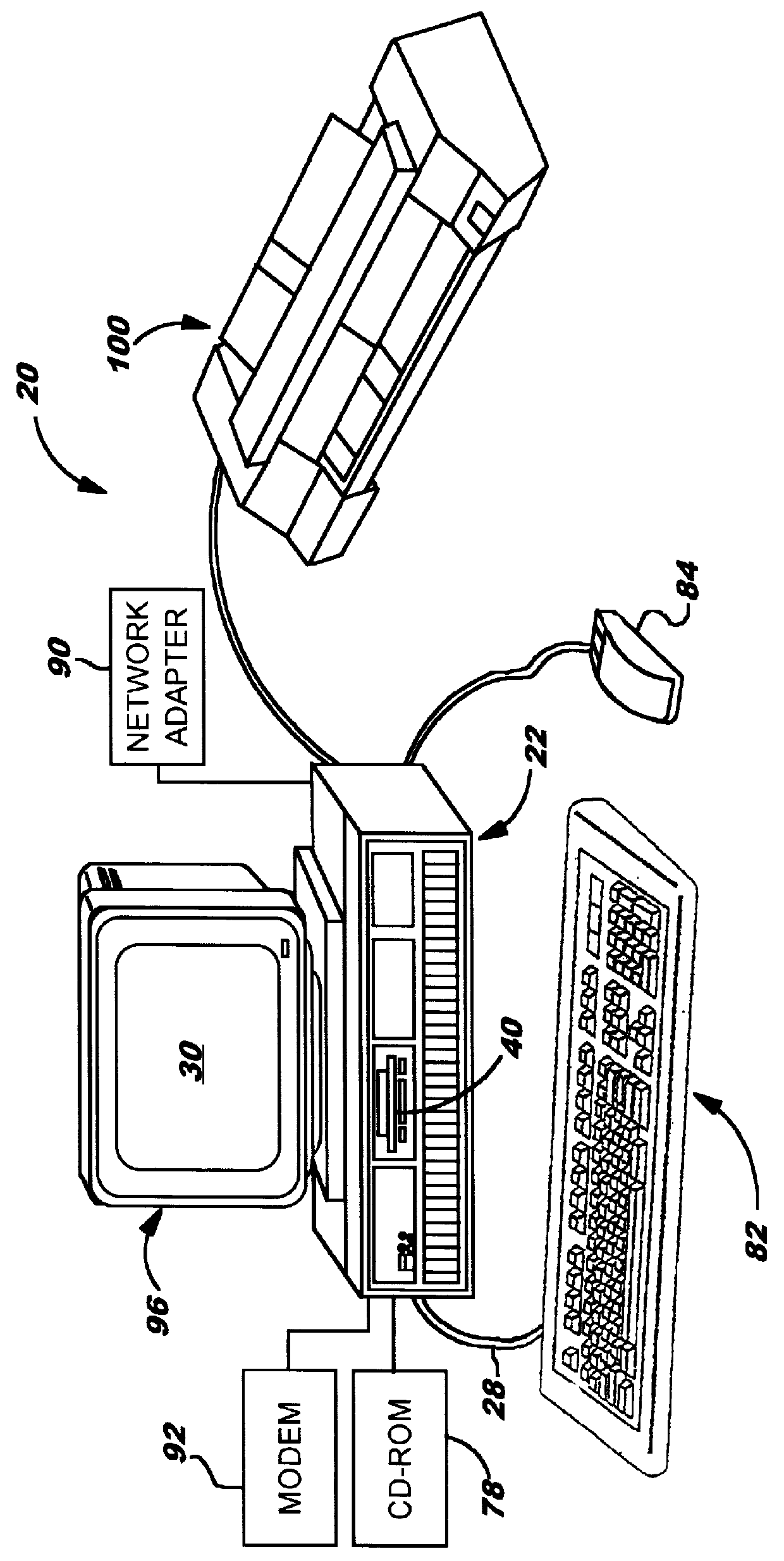

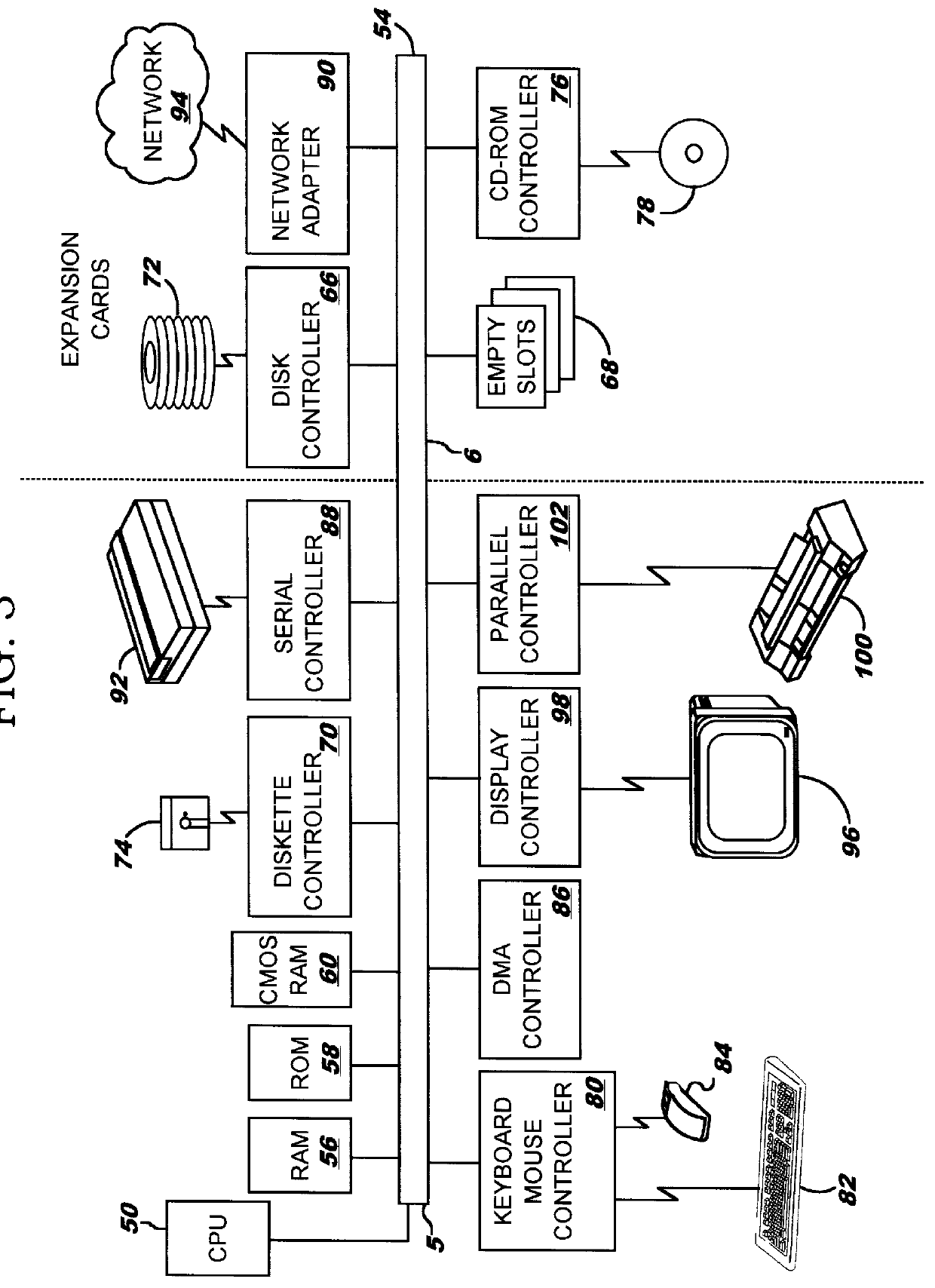

A method of monitoring a computer network by collecting resource data from a plurality of network nodes, analyzing the resource data to generate historical performance data, and reporting the historical performance data to another network node. The network nodes can be servers operating on different platforms, and resource data is gathered using separate programs having different application programming interfaces for the respective platforms. The analysis can generate daily, weekly, and monthly historical performance data, based on a variety of resources including CPU utilization, memory availability, I/O usage, and permanent storage capacity. The report may be constructed using a plurality of documents related by hypertext links. The hypertext links can be color-coded in response to at least one performance parameter in the historical performance data surpassing an associated threshold. An action list can also be created in response to such an event.

Owner:IBM CORP

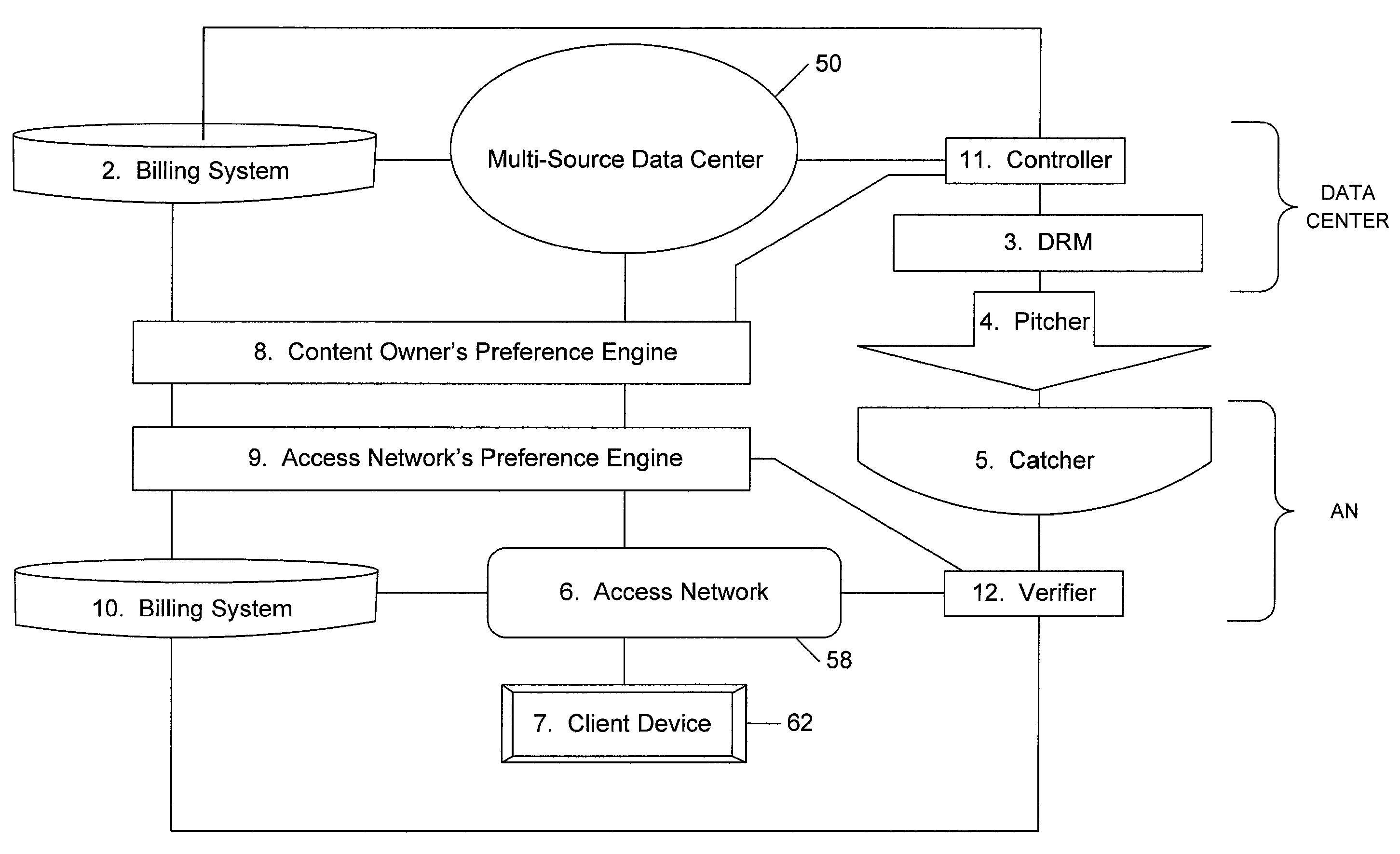

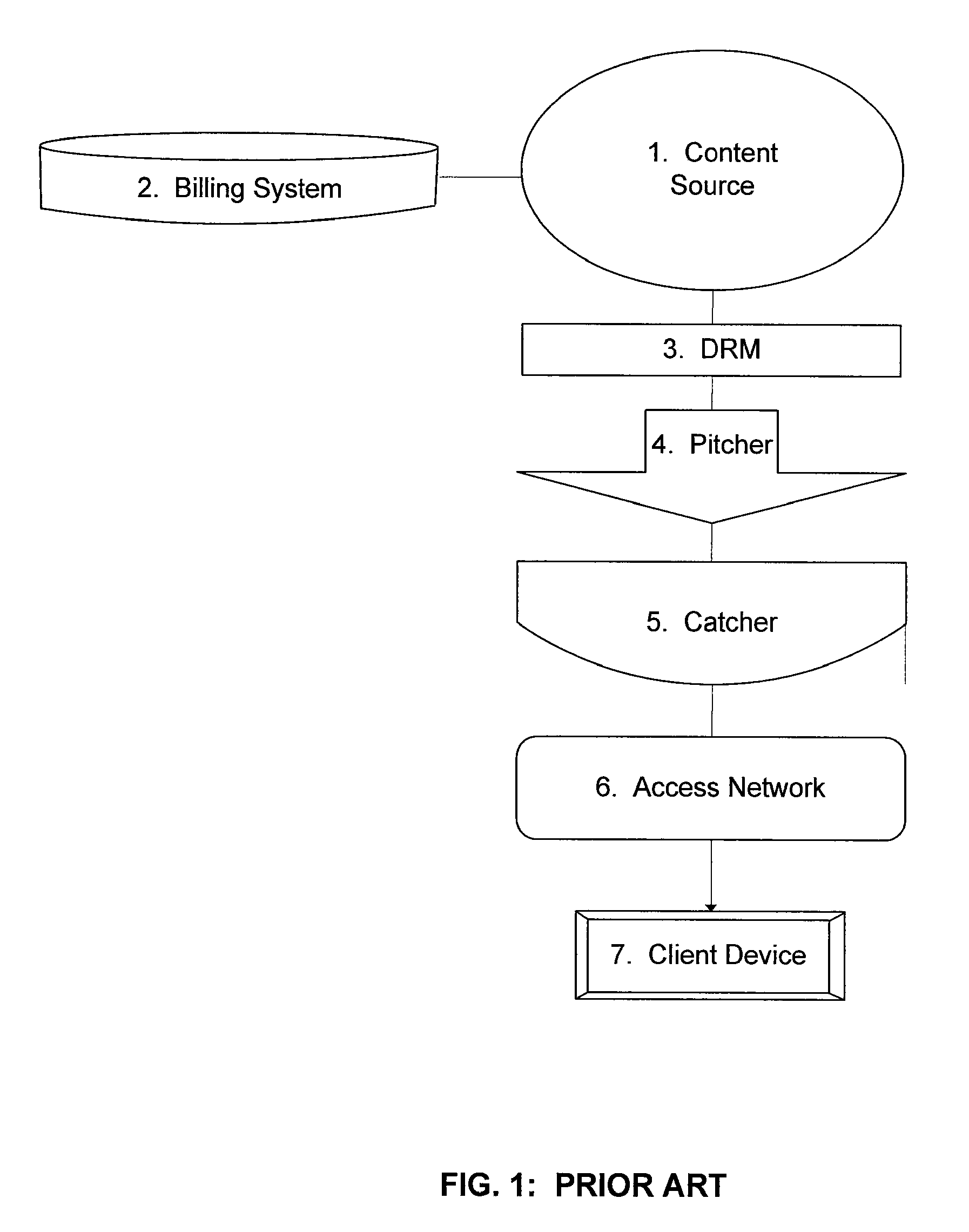

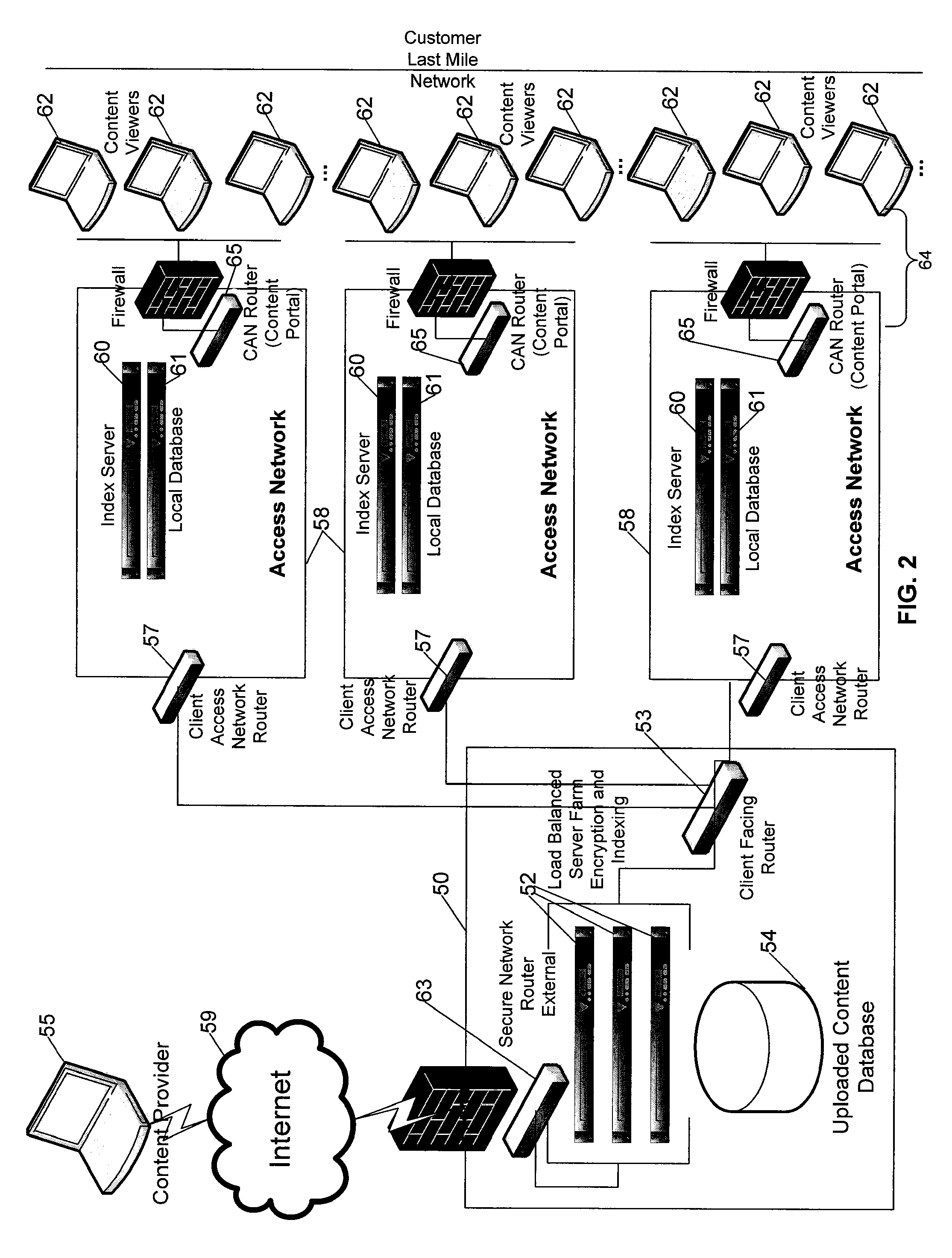

Multi-source bridge content distribution system and method

Active | US20080155614A1 | Appropriately shared | Complete banking machines | Advertisements | Content distribution | Access network

A multi-source bridge content distribution system links multiple content owners with access network operators or content distribution providers leasing space on access networks so that multi-media content can be provided from multiple content owners to consumers through a multi-source bridge or data center. Content files and associated content owner preference settings are provided from a plurality of content sources or providers to the multi-source data center. Files stored at the data center or locally at an access network are provided to subscribers through the local access network. Content files are provided if the content owner preference settings are a sufficient match with service provider access network preference settings set up by the service provider using the access network to provide content to subscribers.

Owner:VERIMATRIX INC

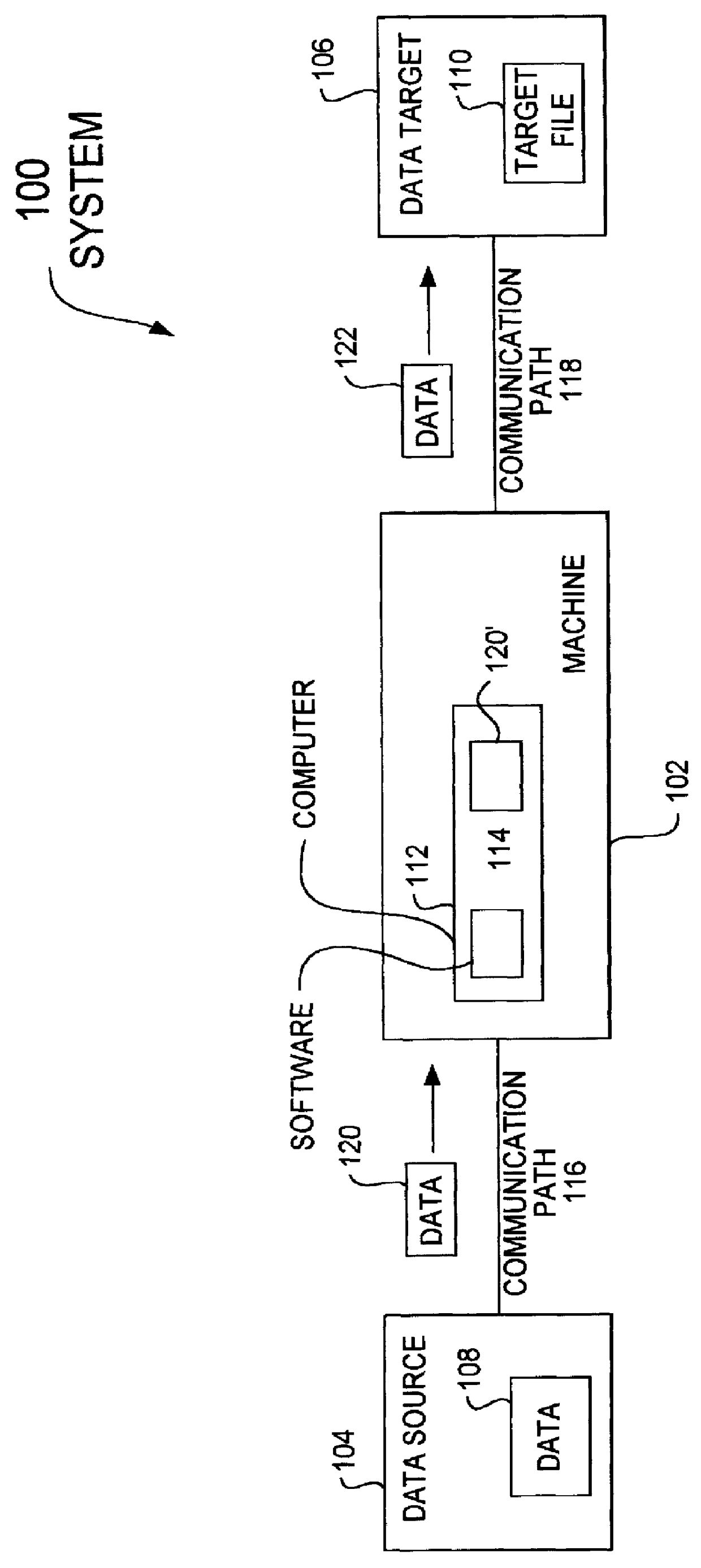

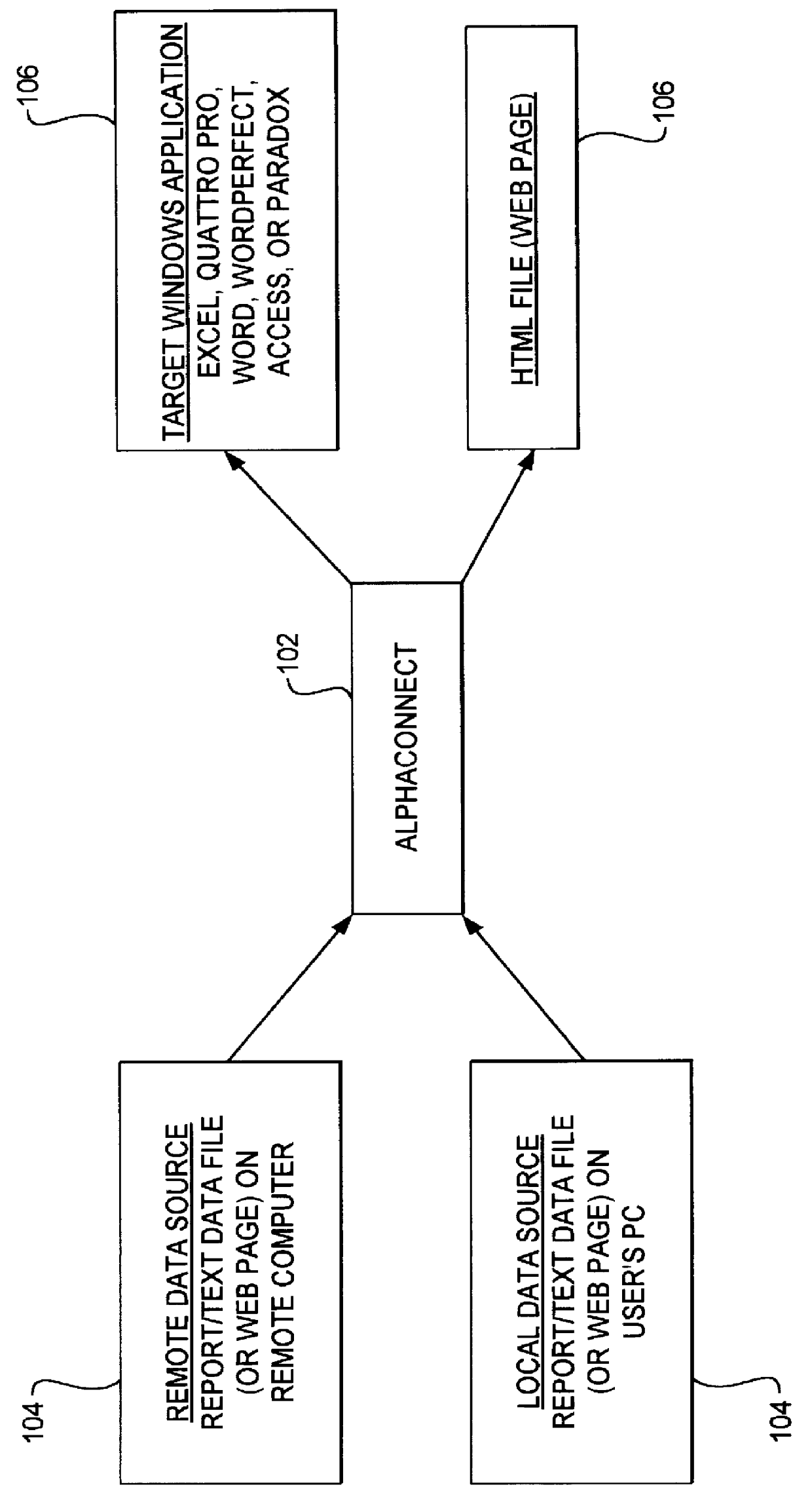

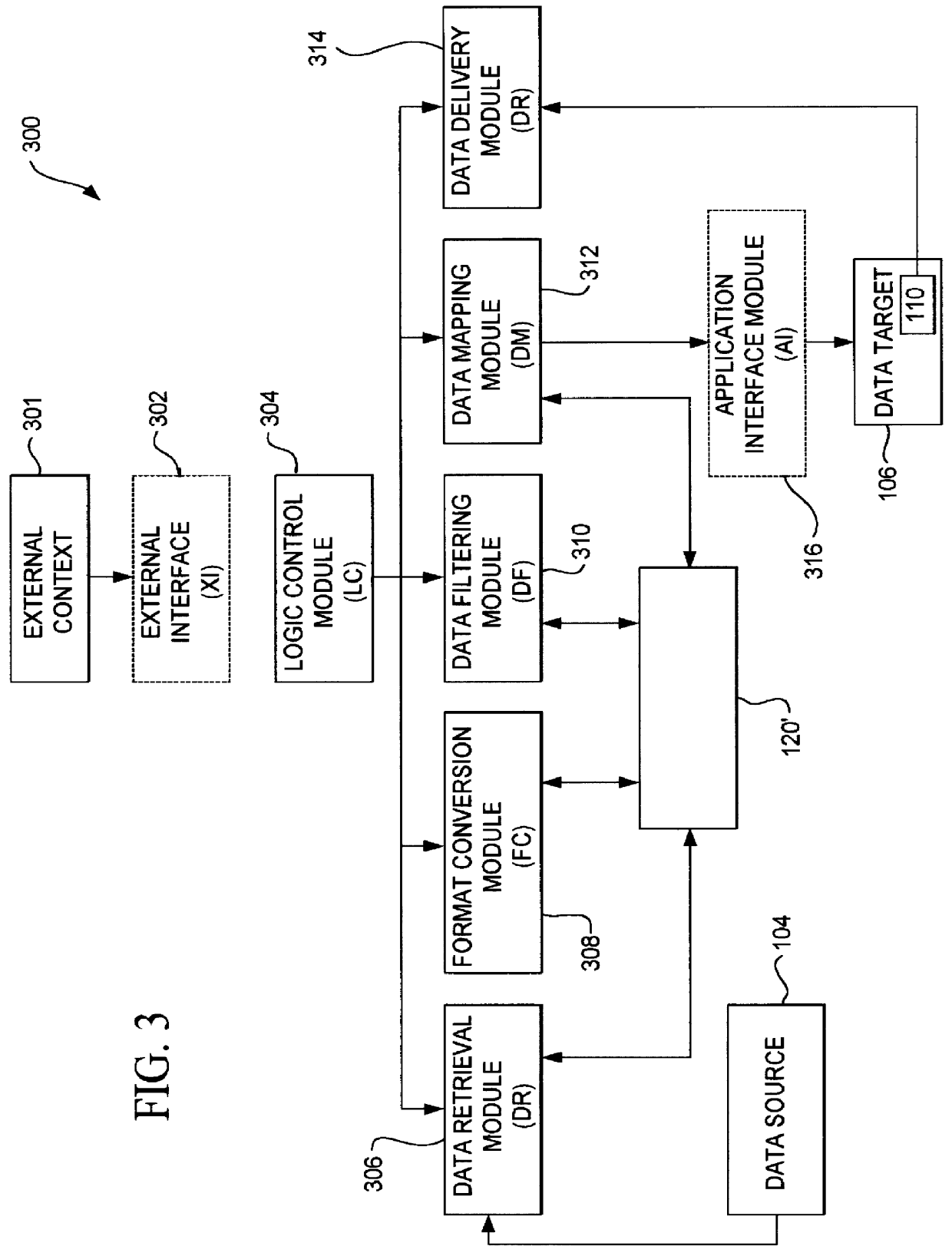

Method and apparatus for data communication

Inactive | US6094684A | Interprogram communication | Multiple digital computer combinations | Graphics | Graphical user interface

A data acquisition and delivery system for performing data delivery tasks is disclosed. This system uses a computer running software to acquire source data from a selected data source, to process (e.g. filter, format convert) the data, if desired, and to deliver the resulting delivered data to a data target. The system is designed to access remote and/or local data sources and to deliver data to remote and/or local data targets. The data target might be an application program that delivers the data to a file or the data target may simply be a file, for example. To obtain the delivered data, the software performs processing of the source data as appropriate for the particular type of data being retrieved, for the particular data target and as specified by a user, for example. The system can communicate directly with a target application program, telling the target application to place the delivered data in a particular location in a particular file. The system provides an external interface to an external context. If the external context is a human, the external interface may be a graphical user interface, for example. If the external context is another software application, the external interface may be an OLE interface, for example. Using the external interface, the external context is able to vary a variety of parameters to define data delivery tasks as desired. The system uses a unique notation that includes a plurality of predefined parameters to define the data delivery tasks and to communicate them to the software.

Owner:E BOTZ COM INC A DELAWARE +2

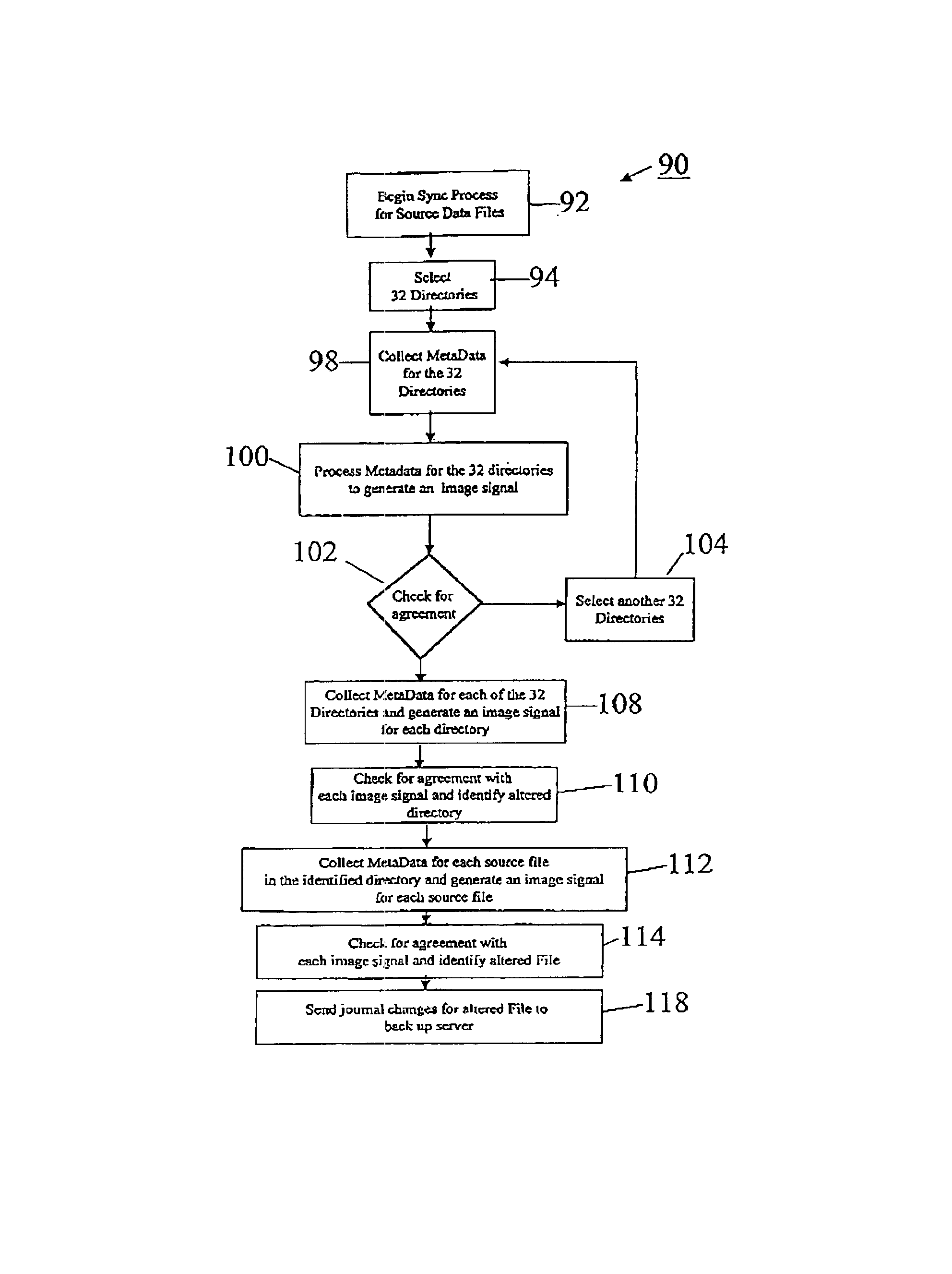

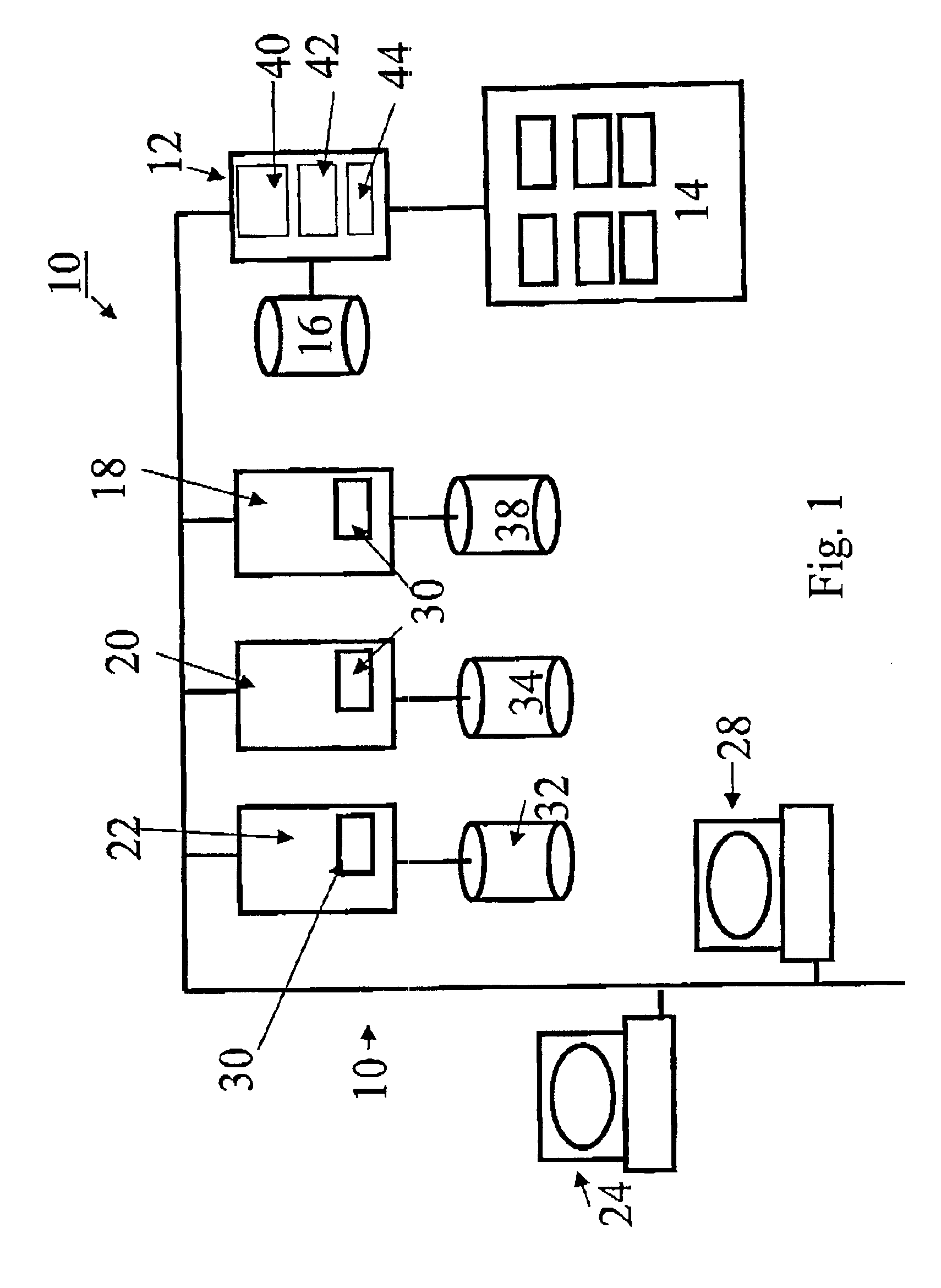

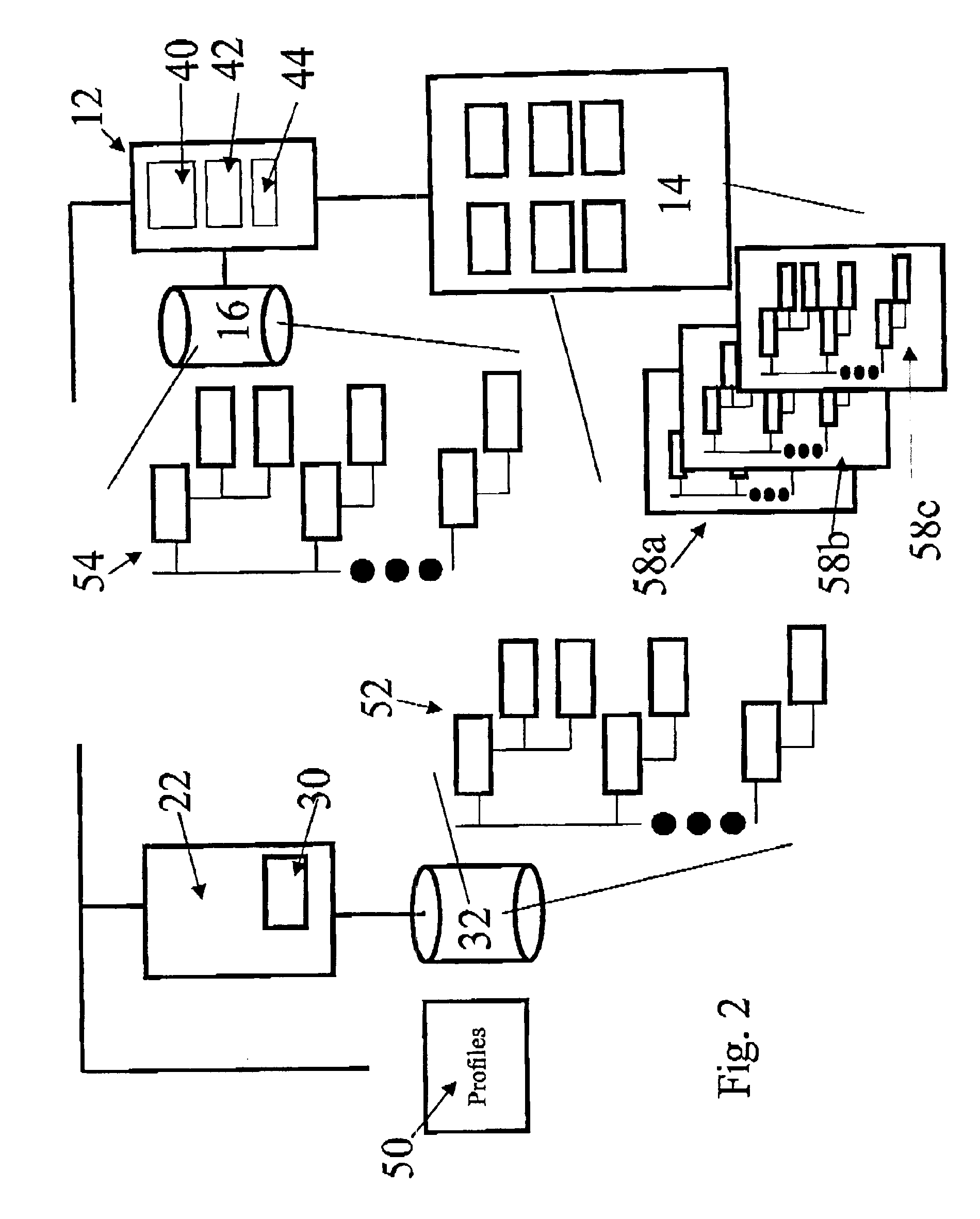

Systems and methods for backing up data files

Inactive | US6847984B1 | Provide integrity | Reduce demand | Data processing applications | Error detection/correction | Baseline data | Data file

The invention provides systems and methods for continuous backup of data stored on a computer network. To this end, the systems of the invention include a synchronization process that replicates selected source data files stored on the network to create a corresponding set of replicated data files, called the target data files, that are stored on a backup server. This synchronization process builds a baseline data structure of target data files. In parallel with this synchronization process, the system includes a dynamic replication process that includes a plurality of agents, each of which monitors a portion of the source data files to detect and capture, at the byte level, changes to the source data files. Each agent may record the changes to a respective journal file, and as the dynamic replication process detects that the journal files contain data, the journal files are transferred or copied to the backup server so that the captured changes can be written to the appropriate ones of the target data files.

Owner:KEEPITSAFE INC
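
The baseline-plus-journal flow in the abstract above can be sketched as follows. This is only an illustration under stated assumptions: the `Change` record, `capture_changes`, and `apply_journal` are hypothetical names, and a real agent would intercept writes as they happen rather than compare two full copies.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Change:
    offset: int   # byte position in the file where the change starts
    data: bytes   # new bytes written at that position


def capture_changes(old: bytes, new: bytes) -> List[Change]:
    """Record each contiguous run of differing bytes, plus any appended tail."""
    changes: List[Change] = []
    start = None
    common = min(len(old), len(new))
    for i in range(common):
        if old[i] != new[i]:
            if start is None:
                start = i
        elif start is not None:
            changes.append(Change(start, new[start:i]))
            start = None
    if start is not None:
        changes.append(Change(start, new[start:common]))
    if len(new) > common:
        changes.append(Change(common, new[common:]))
    return changes


def apply_journal(target: bytes, journal: List[Change], final_length: int) -> bytes:
    """Replay journaled changes onto the target copy held by the backup server."""
    buf = bytearray(target)
    for change in journal:
        end = change.offset + len(change.data)
        if end > len(buf):
            buf.extend(b"\0" * (end - len(buf)))
        buf[change.offset:end] = change.data
    return bytes(buf[:final_length])


baseline = b"hello world"                # target file after the initial synchronization
source_now = b"hellO wOrld!!"            # source file after later writes
journal = capture_changes(baseline, source_now)
target_now = apply_journal(baseline, journal, len(source_now))
```

Shipping only the journal keeps the transfer proportional to the size of the changes rather than the size of the file.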

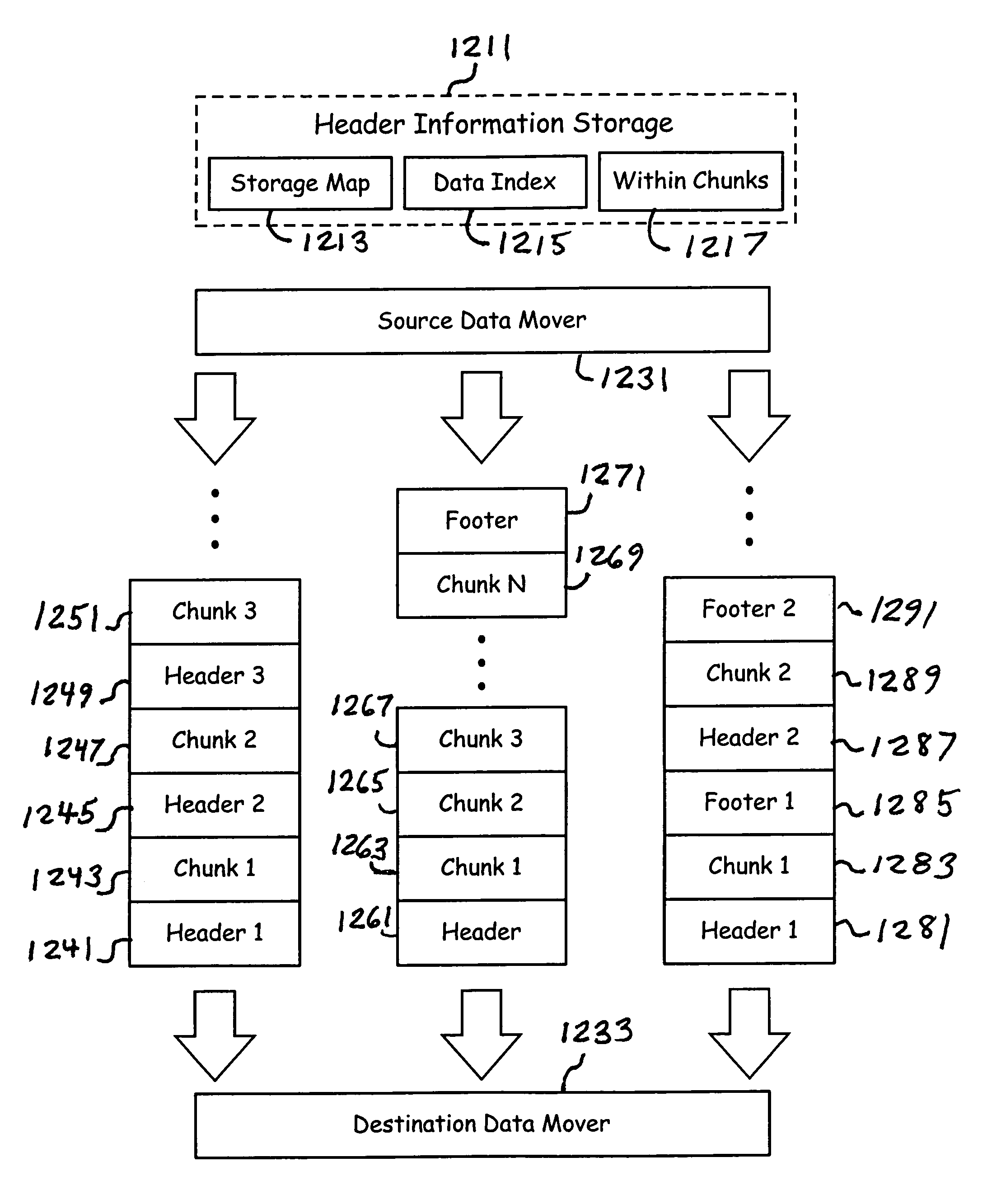

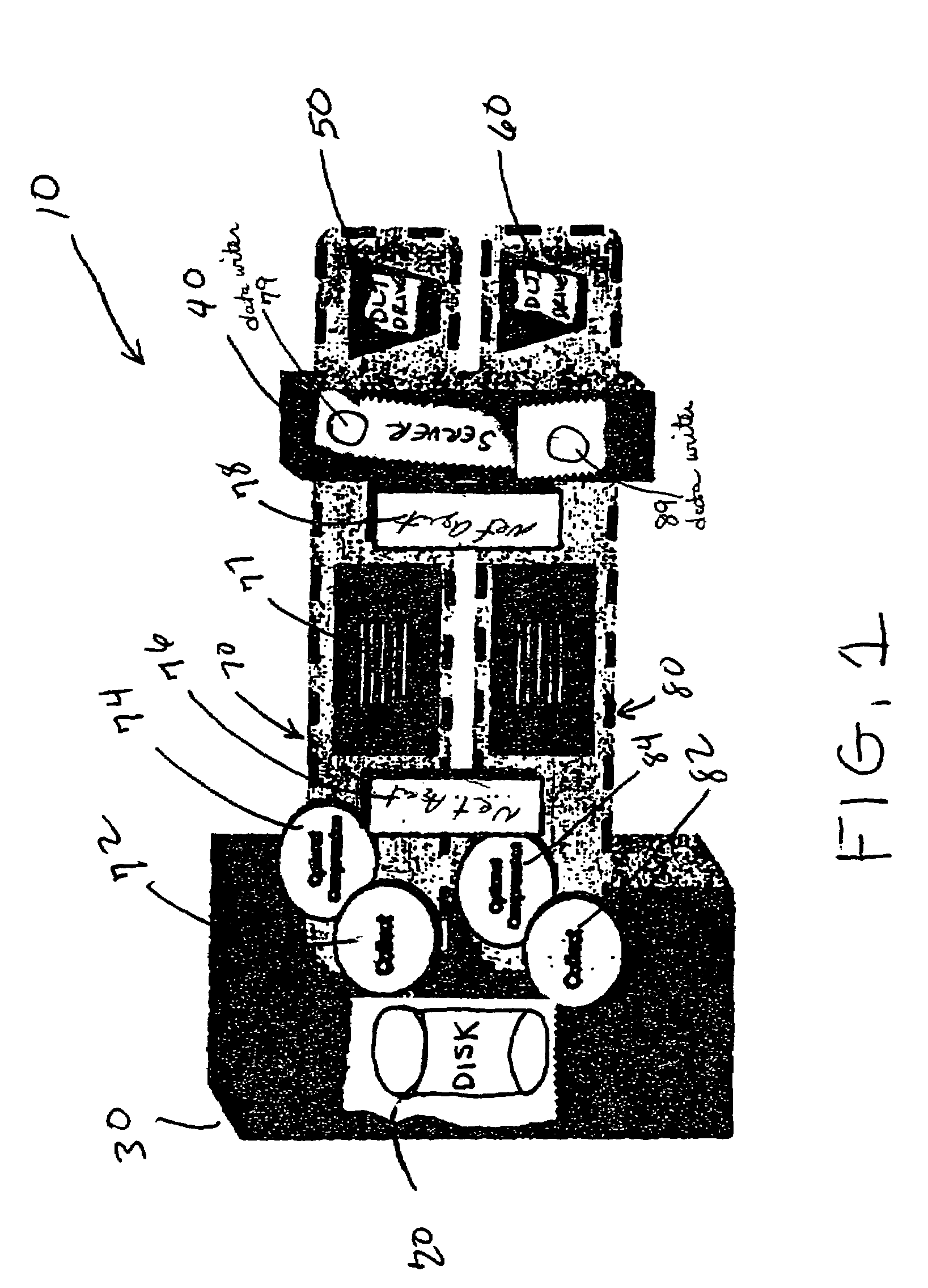

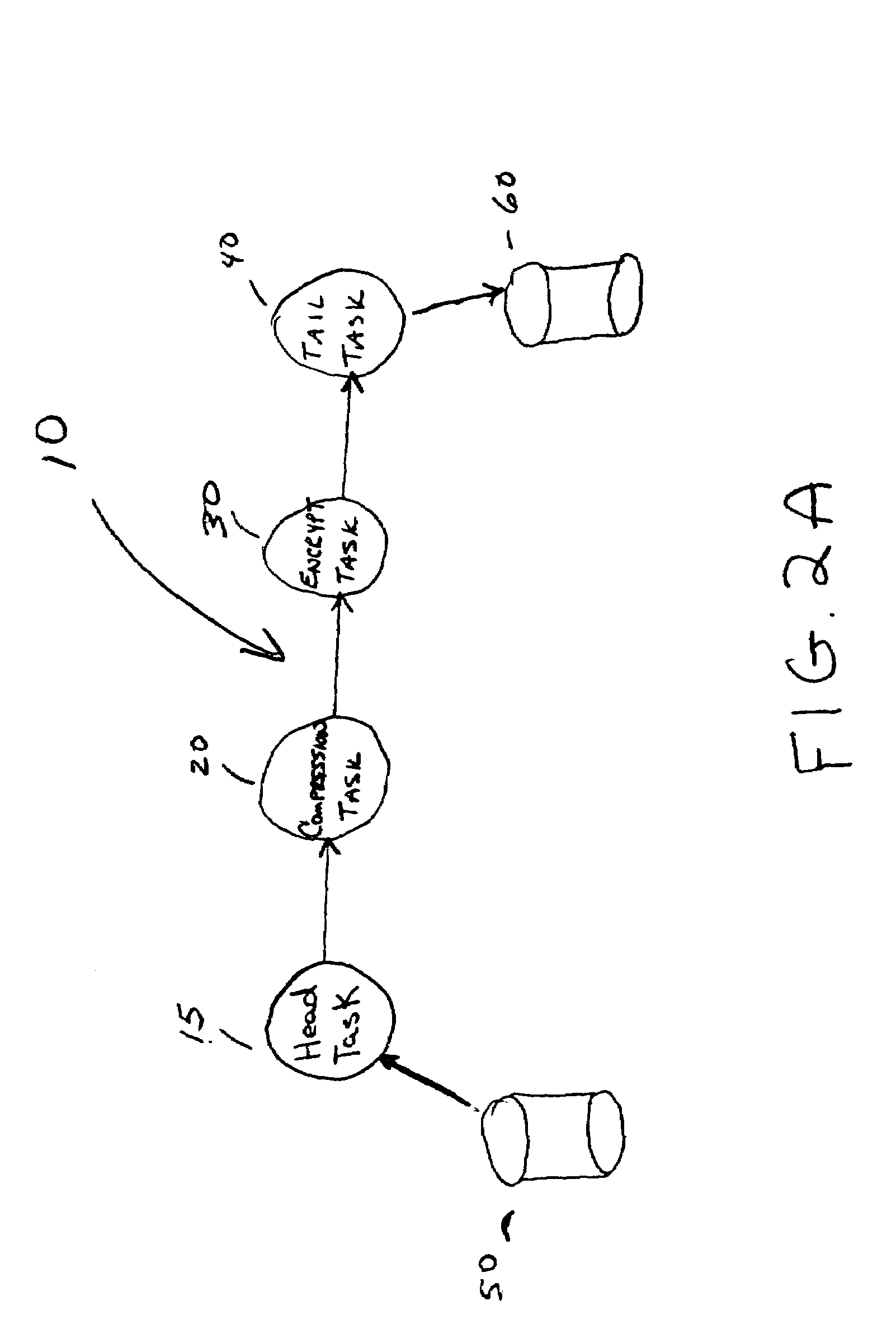

High speed data transfer mechanism

Inactive | US7209972B1 | Input/output to record carriers | Data switching by path configuration | Media type | Operational system

A storage and data management system establishes a data transfer pipeline between an application and a storage media using a source data mover and a destination data mover. The data movers are modular software entities which compartmentalize the differences between operating systems and media types. In addition, they independently interact to perform encryption, compression, etc., based on the content of a file as it is being communicated through the pipeline. Headers and chunking of data are applied when beneficial, without the application ever having to be aware of them. Faster access times and storage mapping offer enhanced user interaction.

Owner:COMMVAULT SYST INC

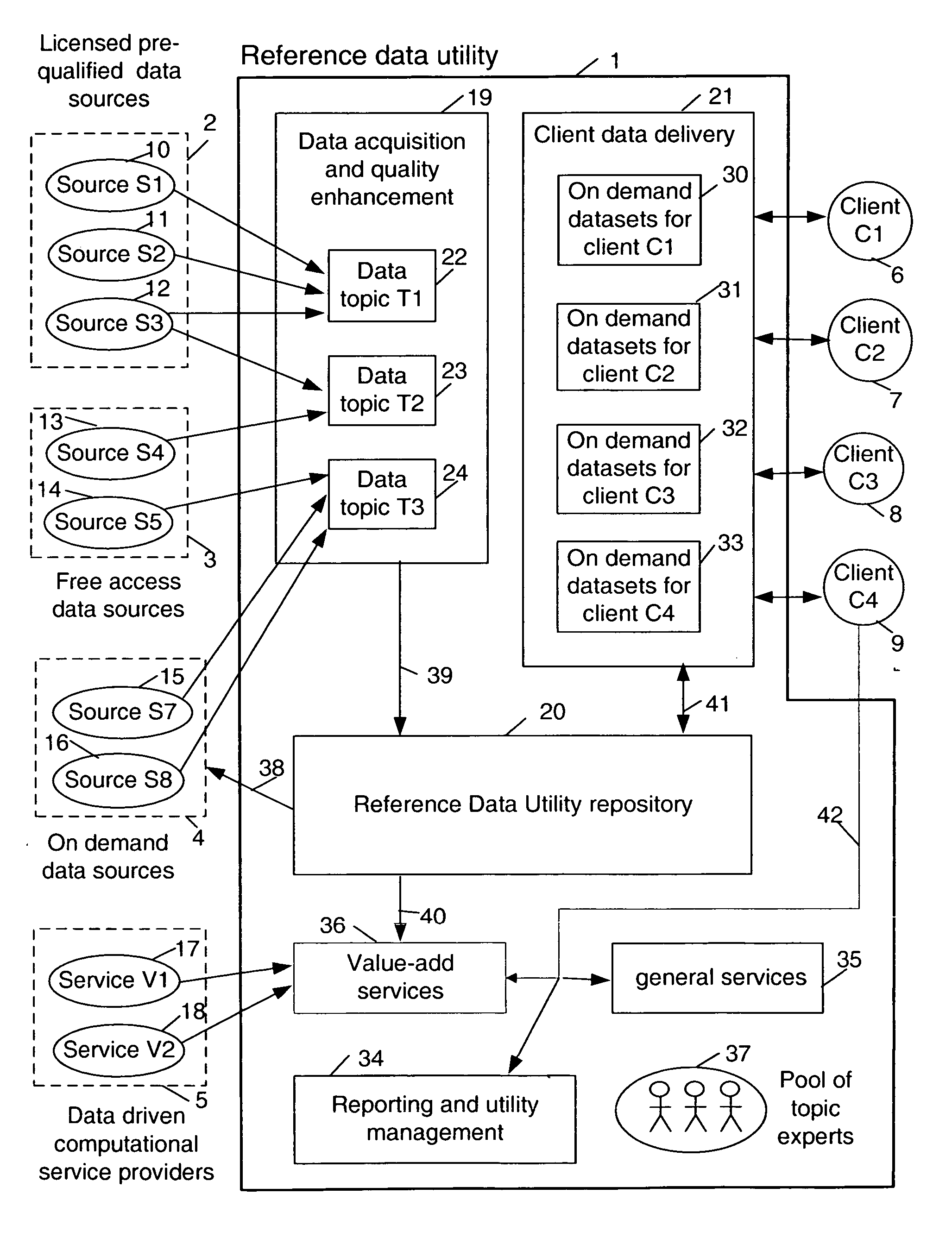

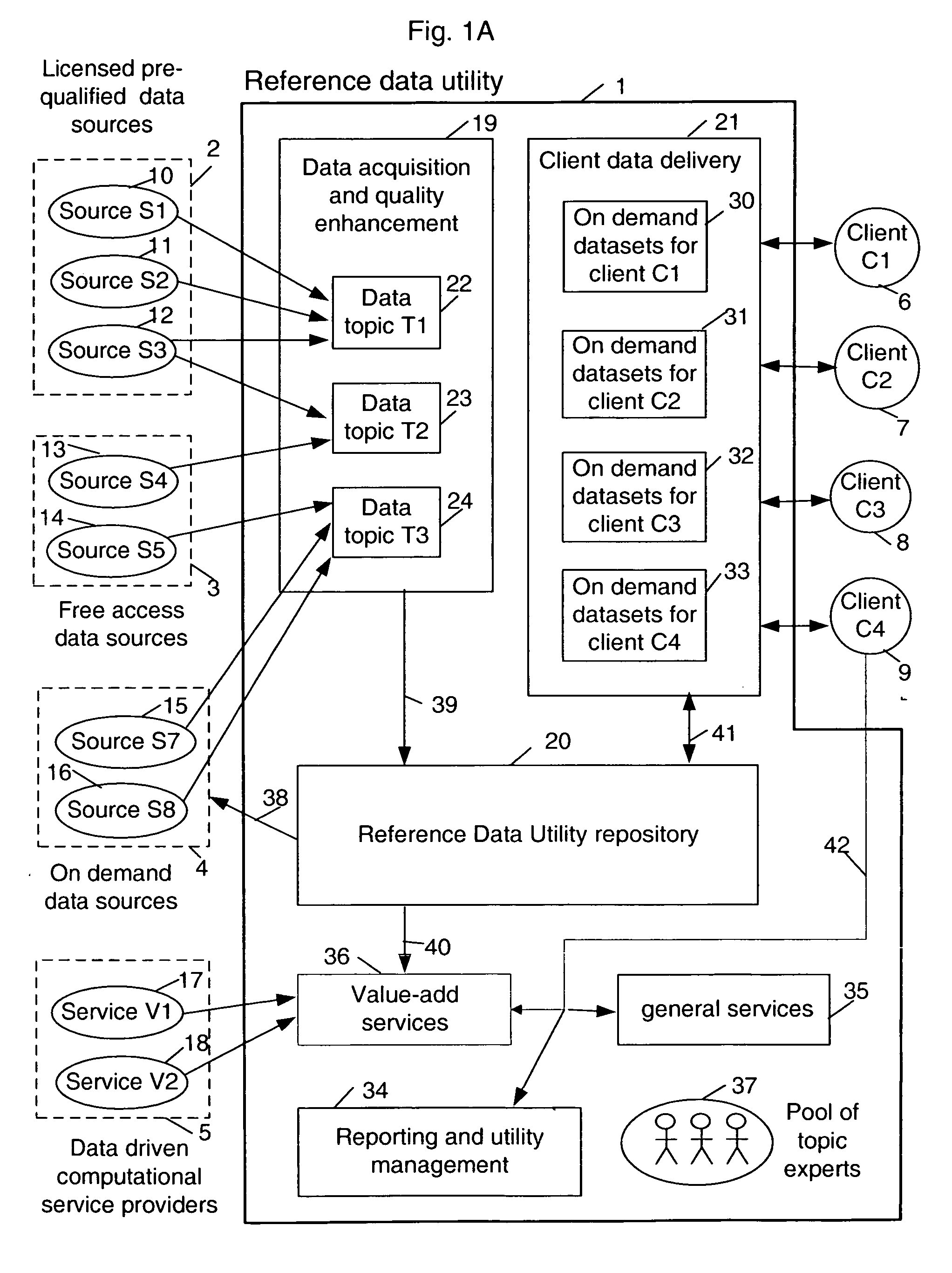

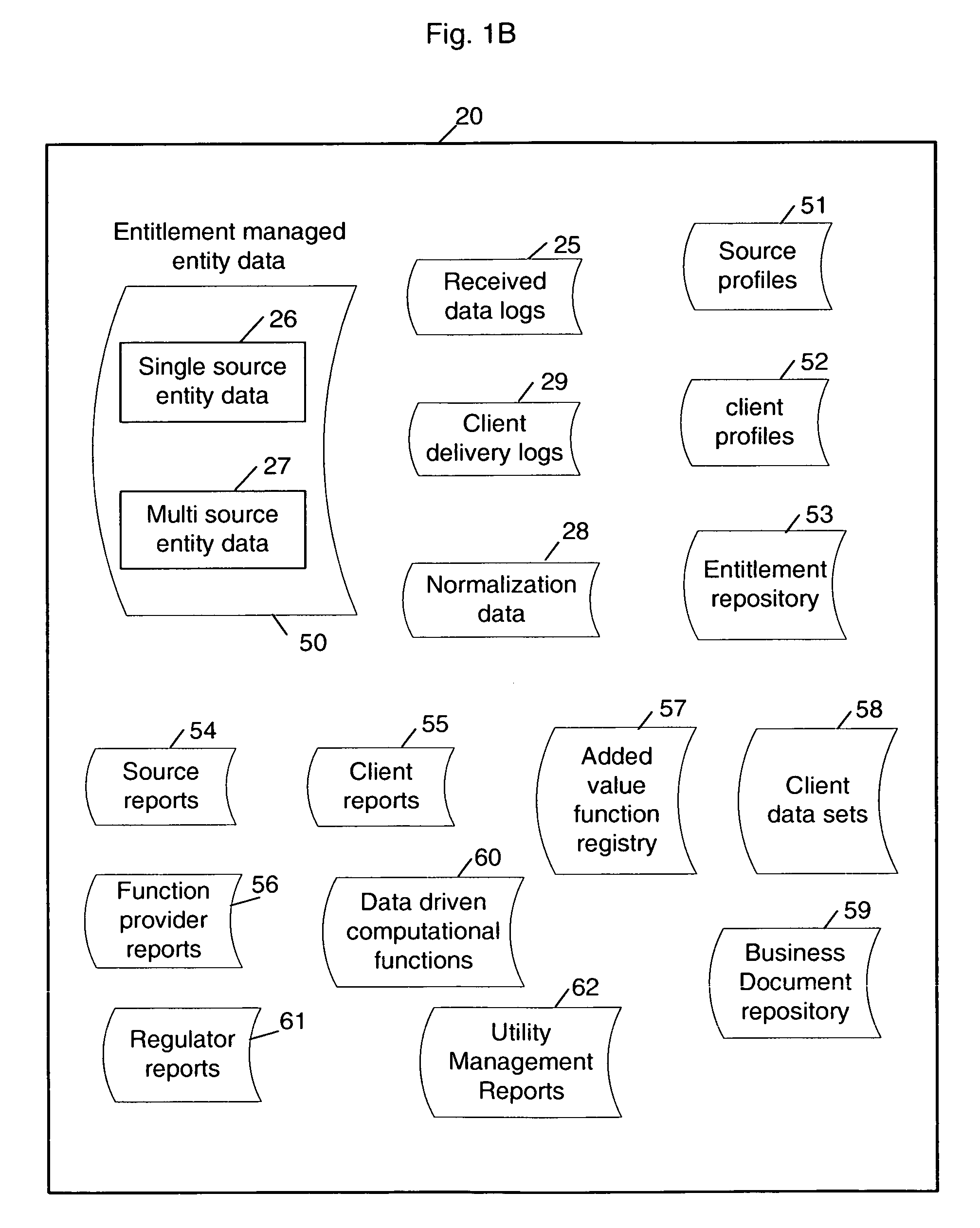

Multi-source multi-tenant entitlement enforcing data repository and method of operation

Inactive | US20060235831A1 | Easy to optimize | Low cost | Finance | Special data processing applications | Data quality | Data store

Forming and maintaining a multi-source multi-tenant data repository on behalf of multiple tenants. Information in the multi-source multi-tenant data repository is received from multiple sources. Different sources and different data quality enhancement processes may yield different values for attributes of the same referred entity. Information in the multi-source multi-tenant data repository is tagged with annotations documenting the sources of the information, and any data quality processing actions applied to it. Tenants of the multi-source multi-tenant data repository have entitlement to values from some sources and to the results of some quality enhancement processes. Aspects of the method maintain this entitlement information; employ evolutionarily tracked source data tags; receive requests for information, locate the requested information, apply any sourcing preference, enforce entitlements and return entitled values to the requester. An outsourced reference data utility is one context where such a multi-source multi-tenant data repository is useful.

Owner:ADINOLFI RONALD EMMETT +9

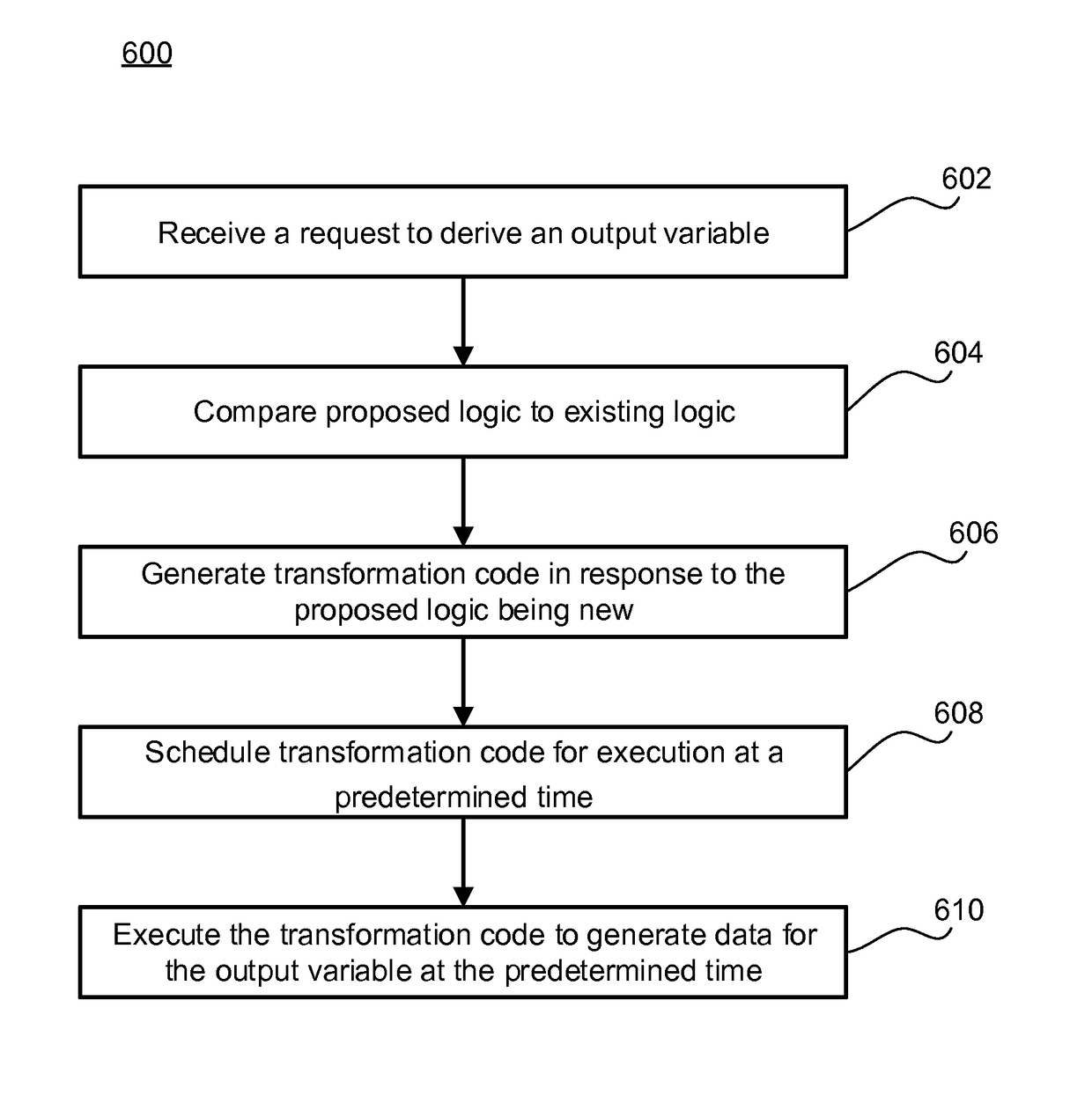

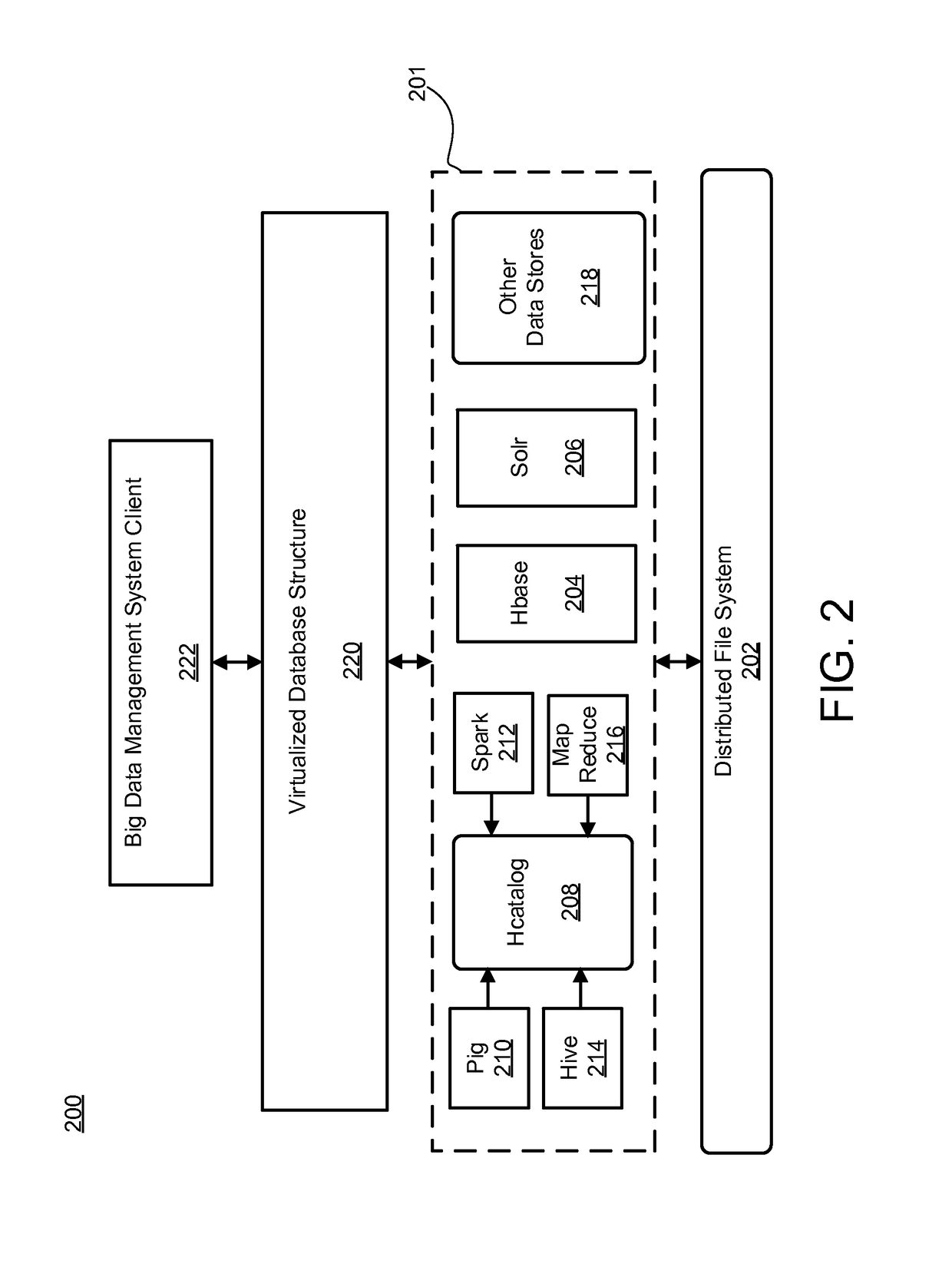

System and method transforming source data into output data in big data environments

Active | US10055426B2 | Digital data information retrieval | Requirement analysis | Theoretical computer science | Source data

A system may receive a request to derive an output variable from a source variable. The request may include proposed logic to derive the output variable from the source variable. The system may then compare the proposed logic to existing logic to determine the proposed logic is new. In response to the proposed logic being new, the system may generate transformation code configured to execute the proposed logic. The system may further schedule the transformation code for execution at a predetermined time, and then execute the transformation code to generate data for the output variable.

Owner:AMERICAN EXPRESS TRAVEL RELATED SERVICES CO INC
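
One way to picture the "compare proposed logic to existing logic" step from the abstract above is a registry keyed by a fingerprint of the logic text. The abstract does not say how the comparison is done, so everything here is an assumption: the `TransformationRegistry` class, the whitespace-and-case normalization, and the use of SHA-256 are illustrative choices only.

```python
import hashlib
from typing import Dict


def fingerprint(logic: str) -> str:
    """Hash the logic after naive normalization (case and whitespace folded).

    Assumption: two requests count as the same logic if they differ only in
    letter case or spacing. Real logic with case-sensitive literals needs a
    proper parser instead.
    """
    normalized = " ".join(logic.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class TransformationRegistry:
    """Tracks which derivation logic has already been registered."""

    def __init__(self) -> None:
        self._known: Dict[str, str] = {}  # fingerprint -> output variable name

    def submit(self, output_var: str, logic: str) -> bool:
        """Return True if the logic is new (and register it), False otherwise."""
        key = fingerprint(logic)
        if key in self._known:
            return False
        self._known[key] = output_var
        return True


registry = TransformationRegistry()
first = registry.submit("spend_90d", "SUM(amount) WHERE days_ago <= 90")
repeat = registry.submit("spend_90d_copy", "sum(amount)  where days_ago <= 90")
```

Only a submission that returns `True` would go on to code generation and scheduling in the flow the abstract describes.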

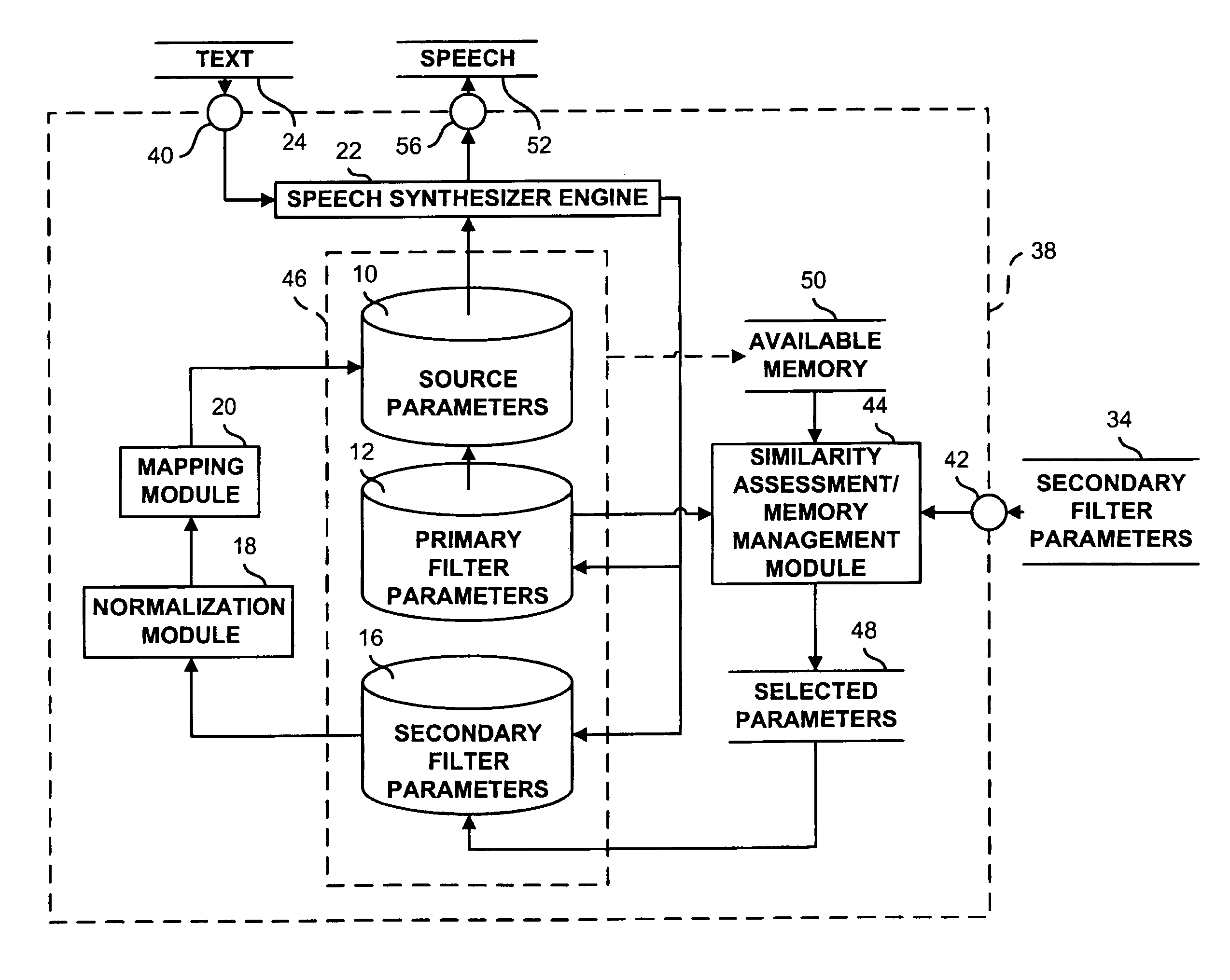

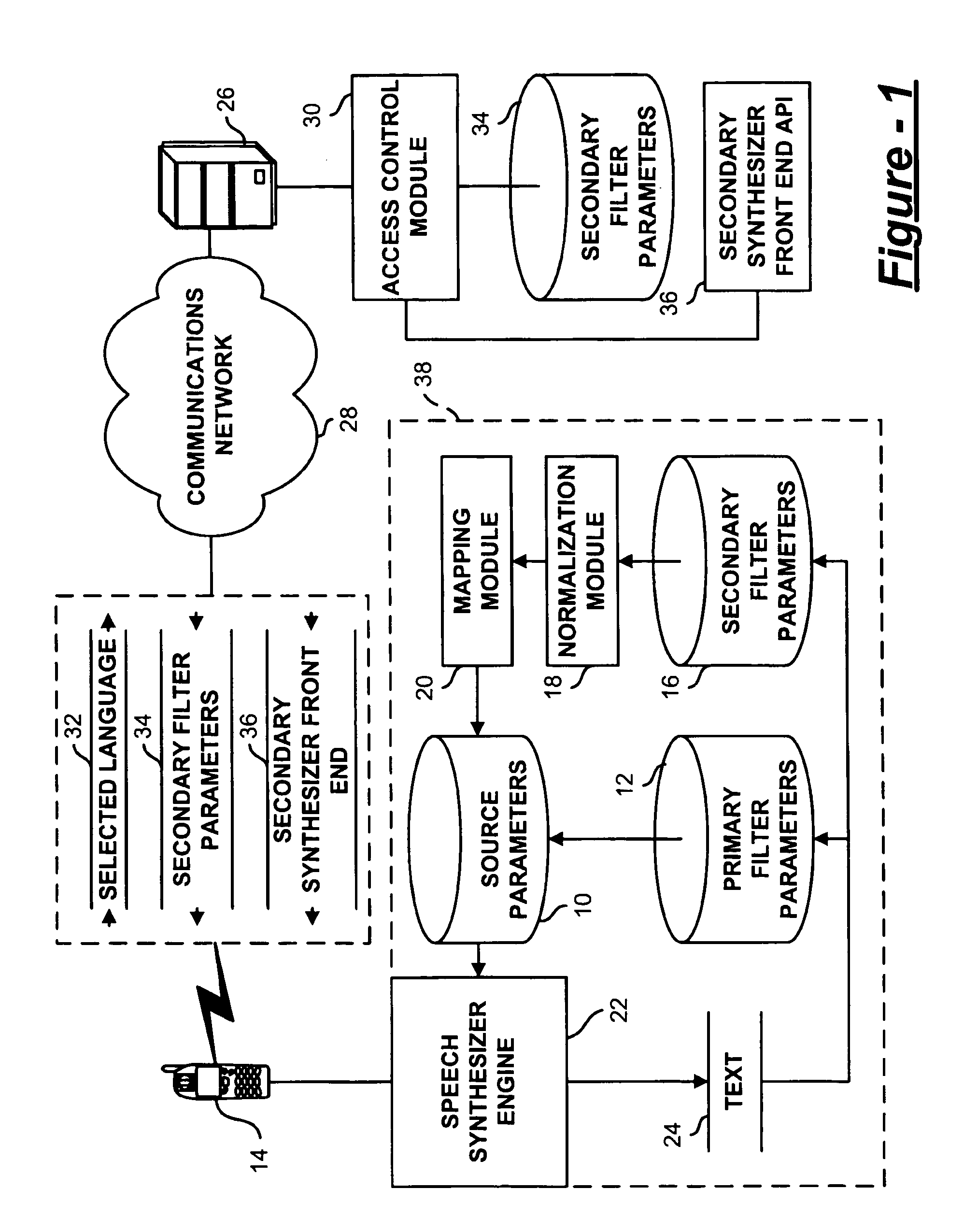

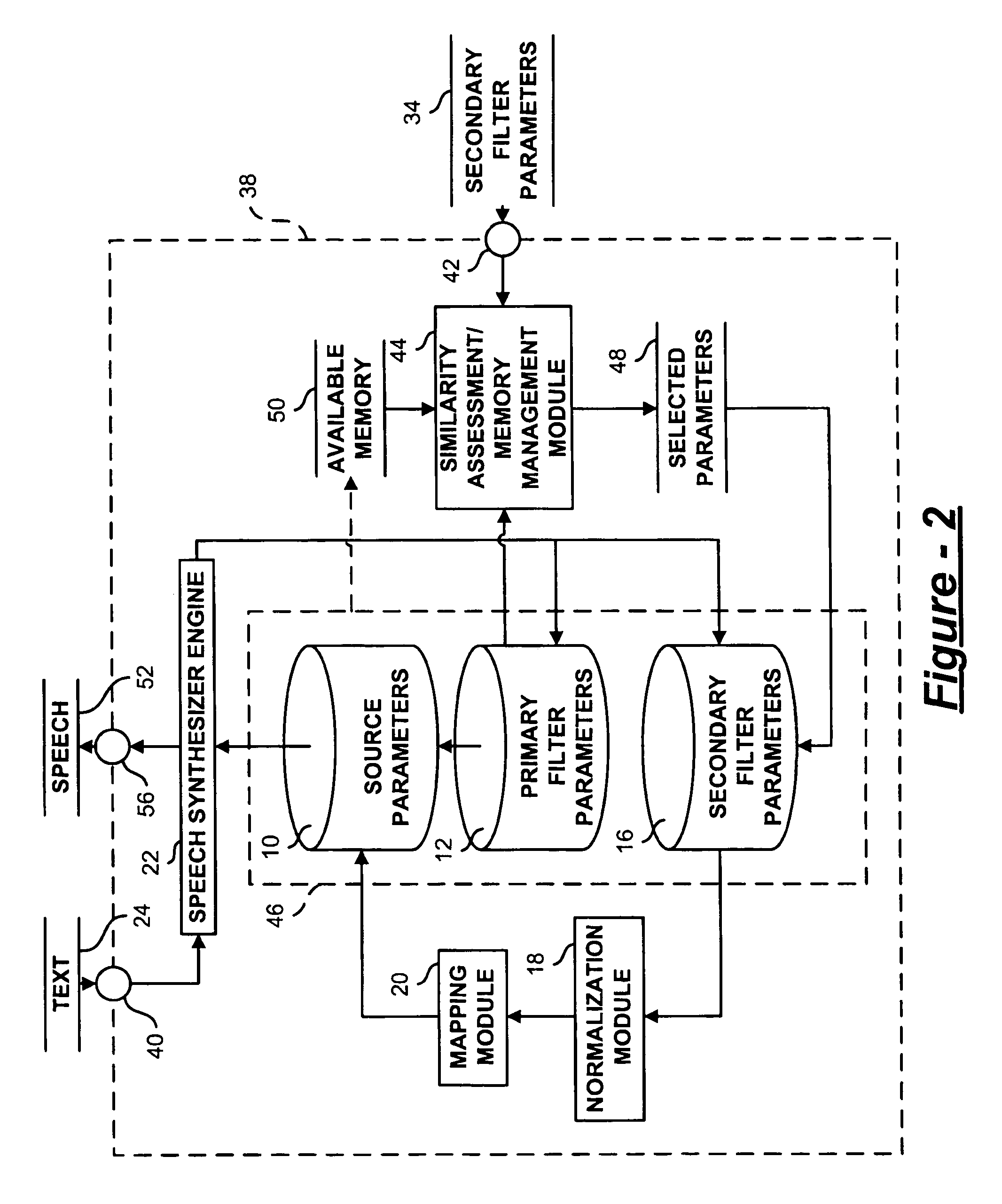

Multilingual text-to-speech system with limited resources

A multilingual text-to-speech system includes a source datastore of primary source parameters providing information about a speaker of a primary language. A plurality of primary filter parameters provides information about sounds in the primary language. A plurality of secondary filter parameters provides information about sounds in a secondary language. One or more secondary filter parameters is normalized to the primary filter parameters and mapped to a primary source parameter.

Owner:SOVEREIGN PEAK VENTURES LLC

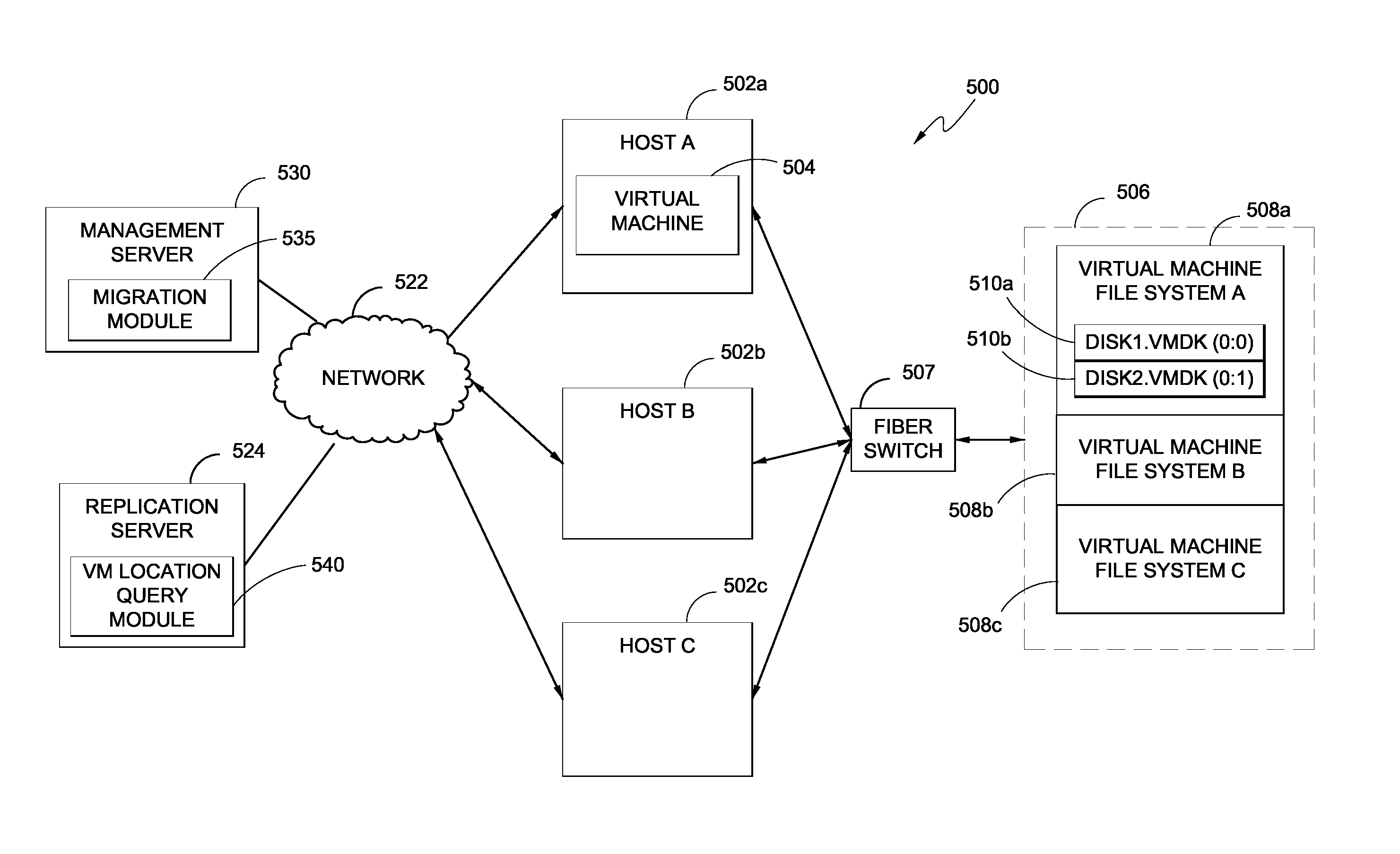

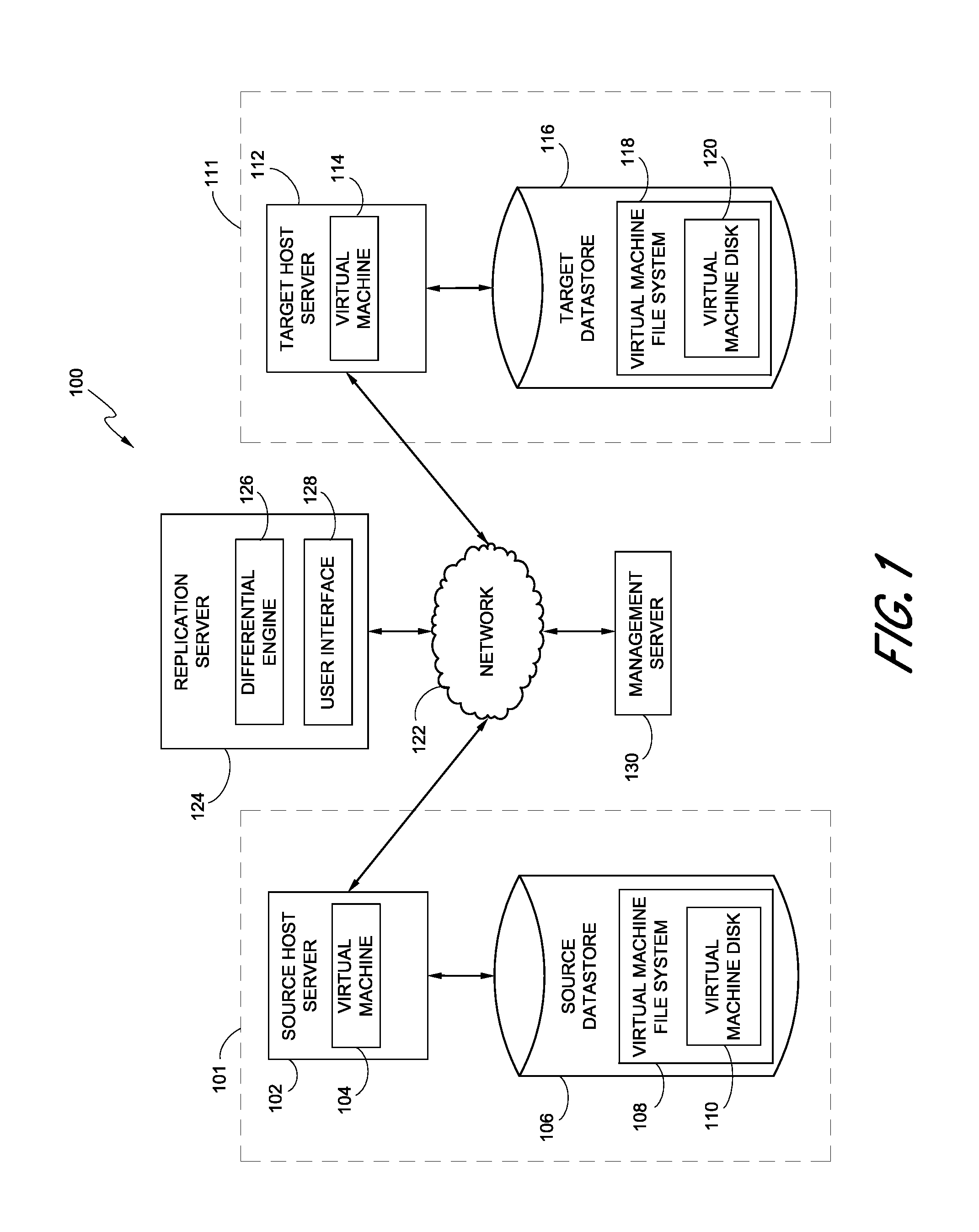

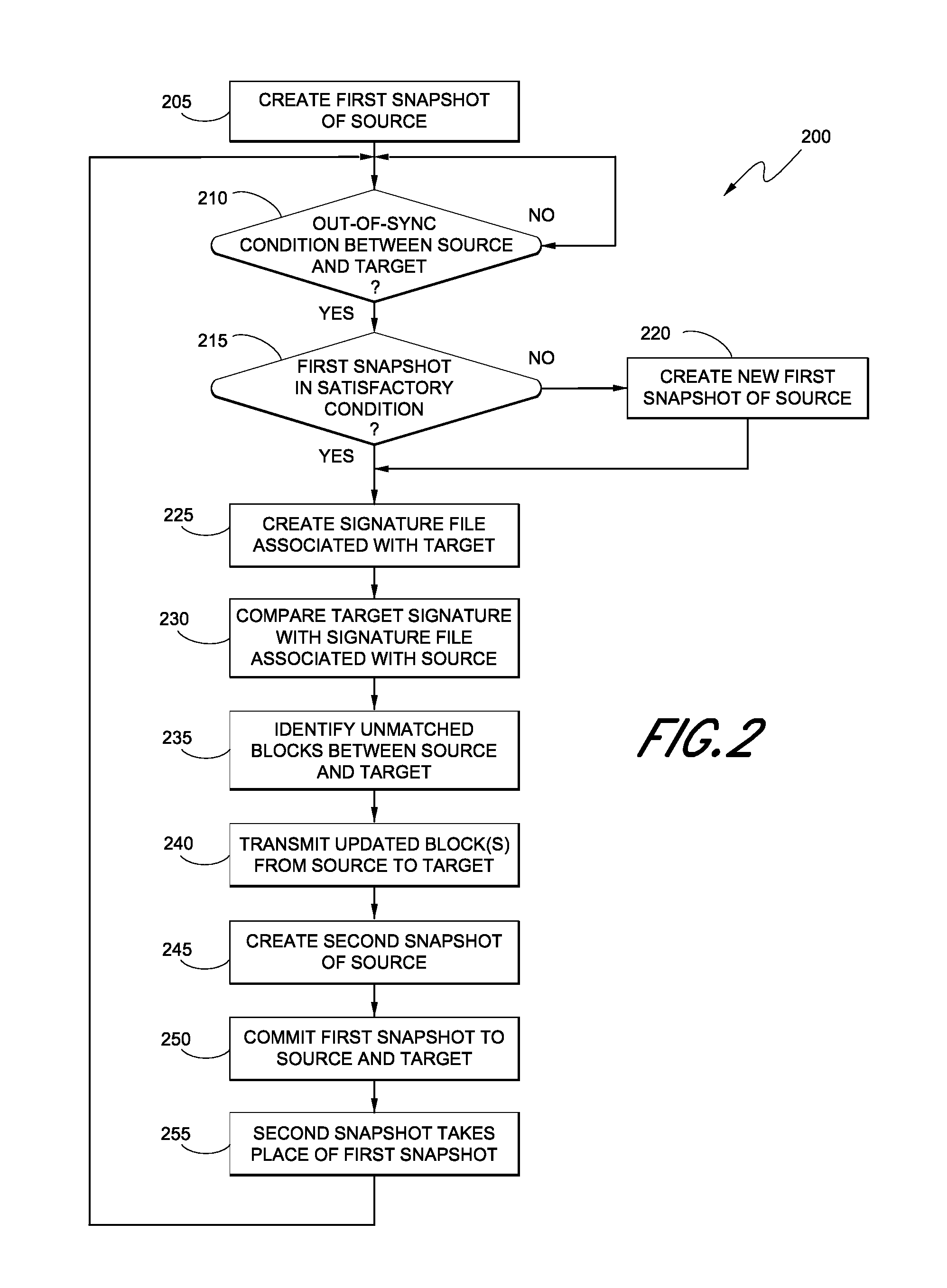

Replication systems and methods for a virtual computing environment

Hybrid replication systems and methods for a virtual computing environment utilize snapshot rotation and differential replication. During snapshot rotation, data modifications intended for a source virtual machine disk (VMDK) are captured by a primary snapshot. Once a particular criterion is satisfied, the data modifications are redirected to a secondary snapshot while the primary snapshot is committed to both source and target VMDKs. The secondary snapshot is then promoted to primary, and a new secondary snapshot is created with writes redirected thereto. If the VMDKs become out-of-sync, disclosed systems can automatically perform a differential scan of the source data and send only the required changes to the target server. Once the two data sets are synchronized, snapshot replication can begin at the previously configured intervals. Certain systems further provide for planned failover copy operations and/or account for migration of a virtual machine during the copying of multiple VMDKs.

Owner:QUEST SOFTWARE INC

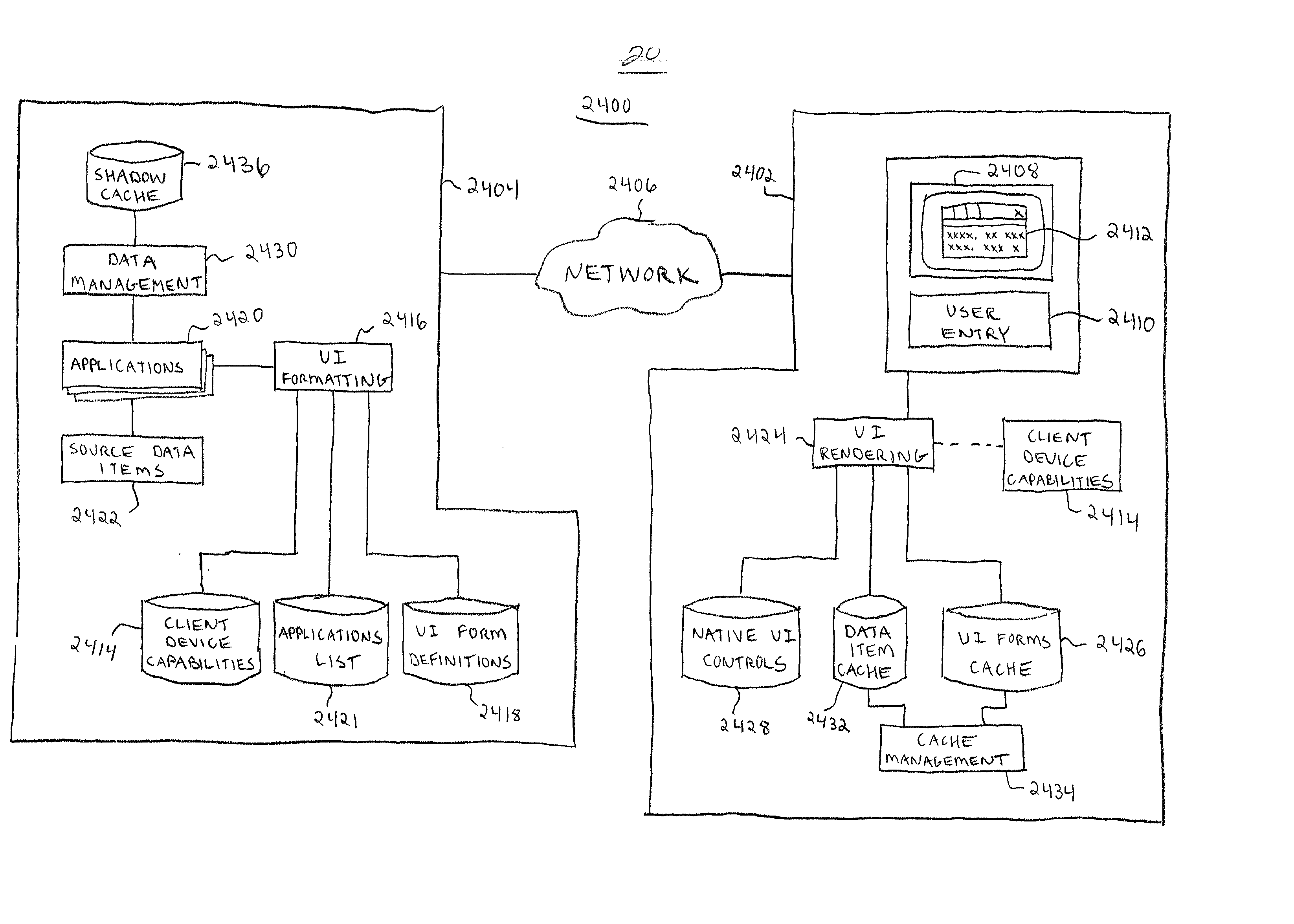

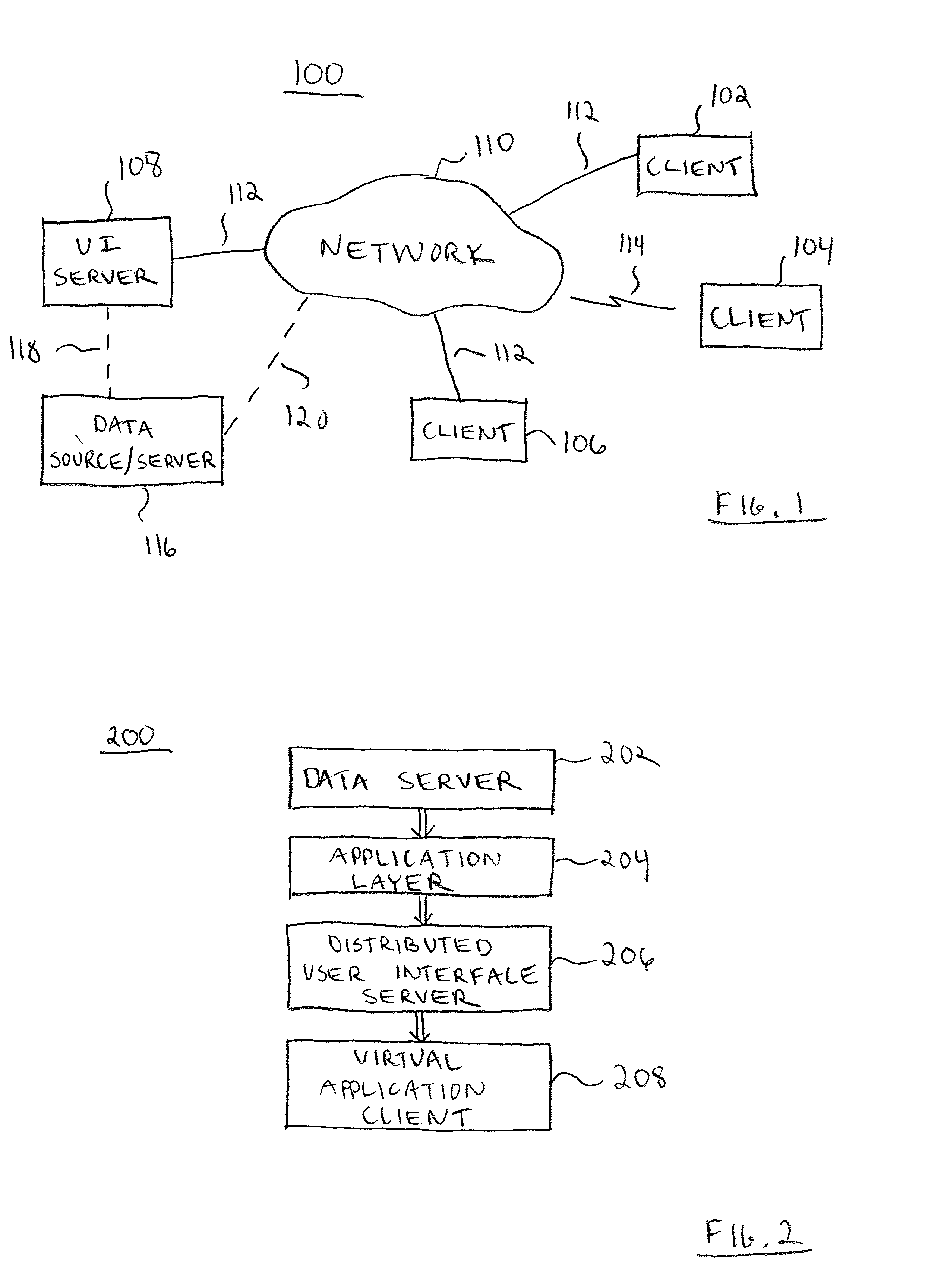

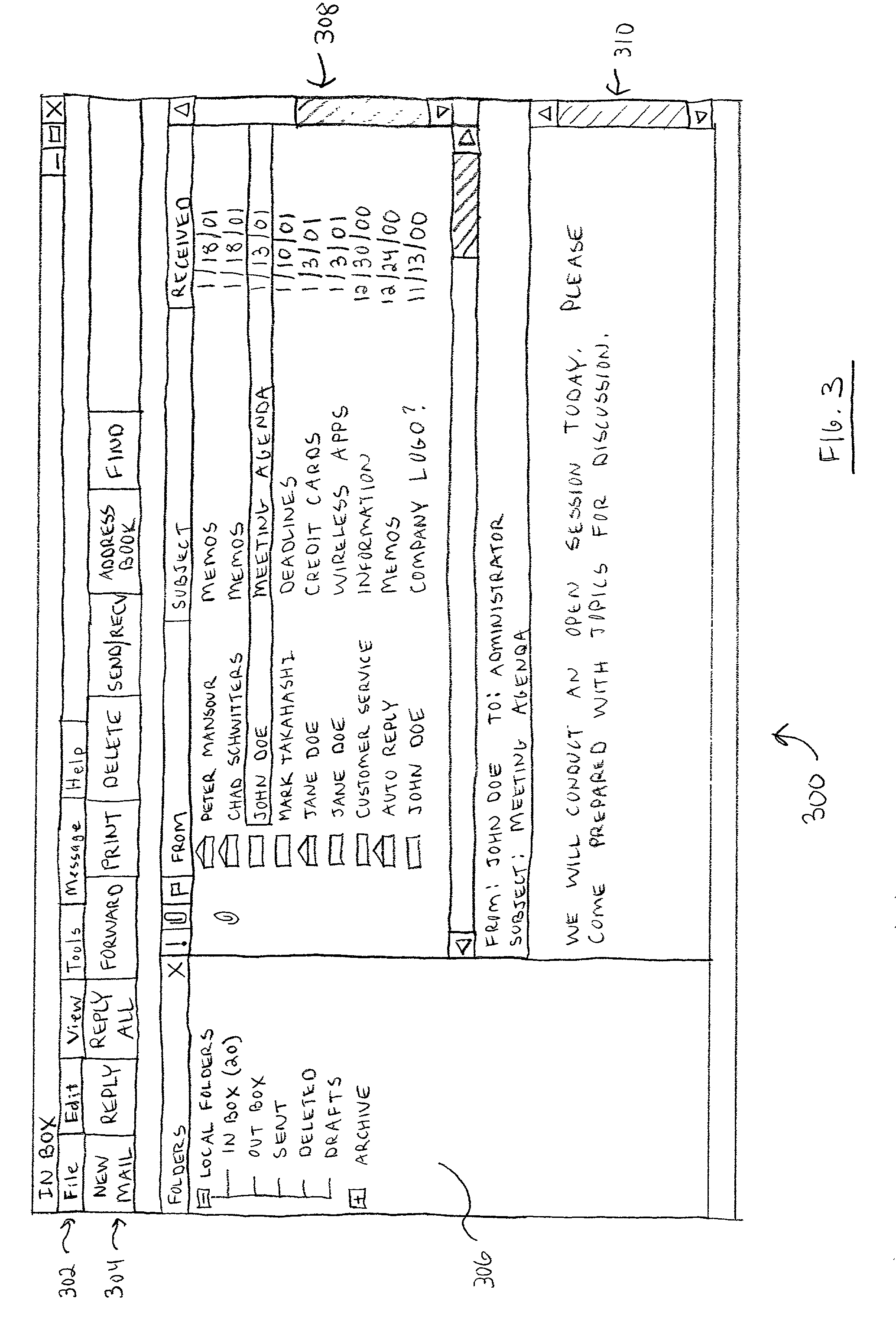

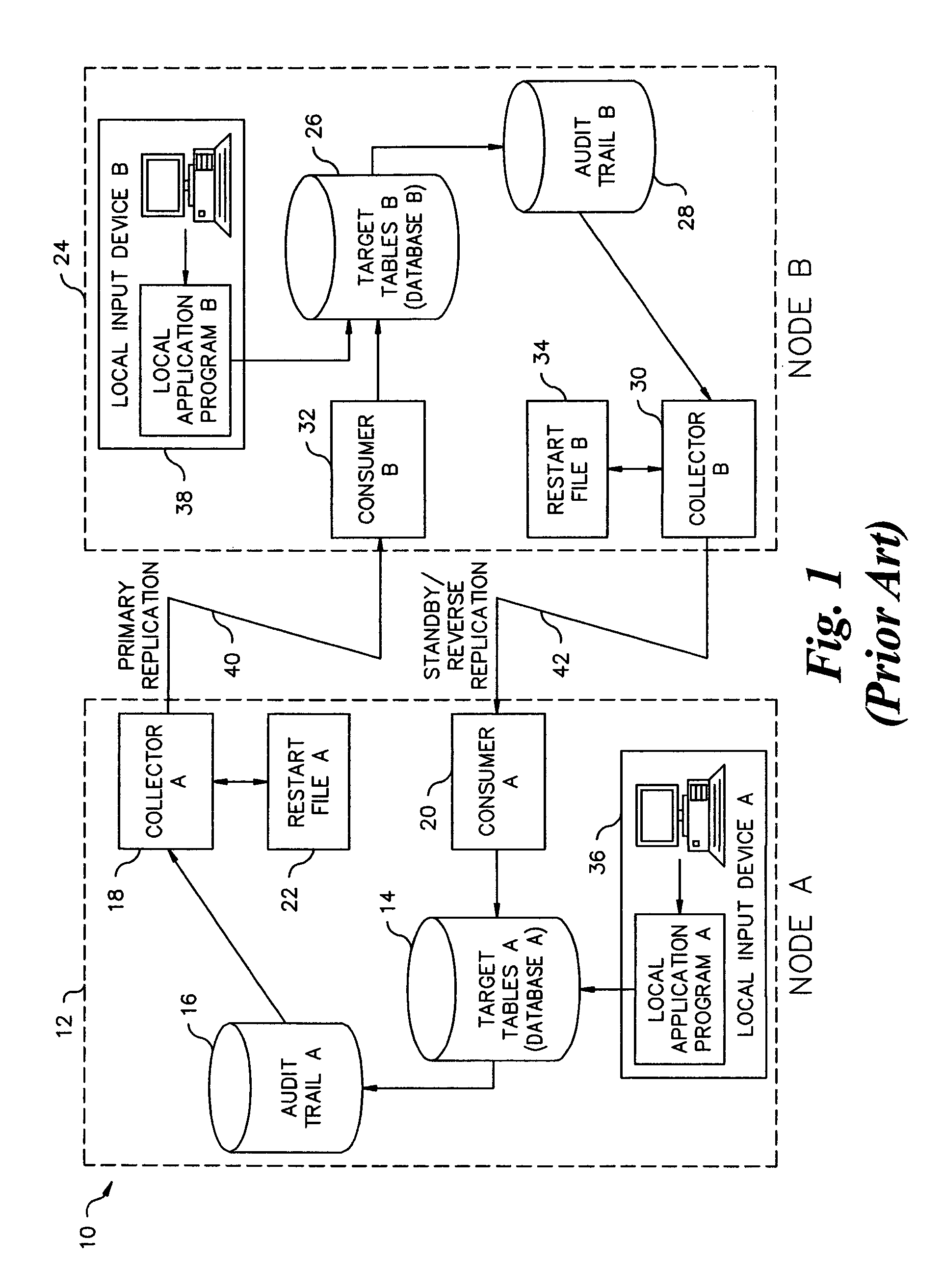

Platform-independent distributed user interface client architecture

Inactive | US20020129096A1 | Reduce demand | Lower-bandwidth | Cathode-ray tube indicators | Multiple digital computer combinations | Distributed user interface | The Internet

A distributed user interface (UI) system includes a client device configured to render a UI for a server-based application. The client device communicates with a UI server over a network such as the Internet. The UI server performs formatting for the UI, which preferably utilizes a number of native UI controls that are available locally at the client device. In this manner, the client device need only be responsible for the actual rendering of the UI. The source data items are downloaded from the UI server to the client device when necessary, and the client device populates the UI with the downloaded source data items. The client device employs a cache to store the source data items locally for easy retrieval.

Owner:SPROQIT TECHNOLOGIES

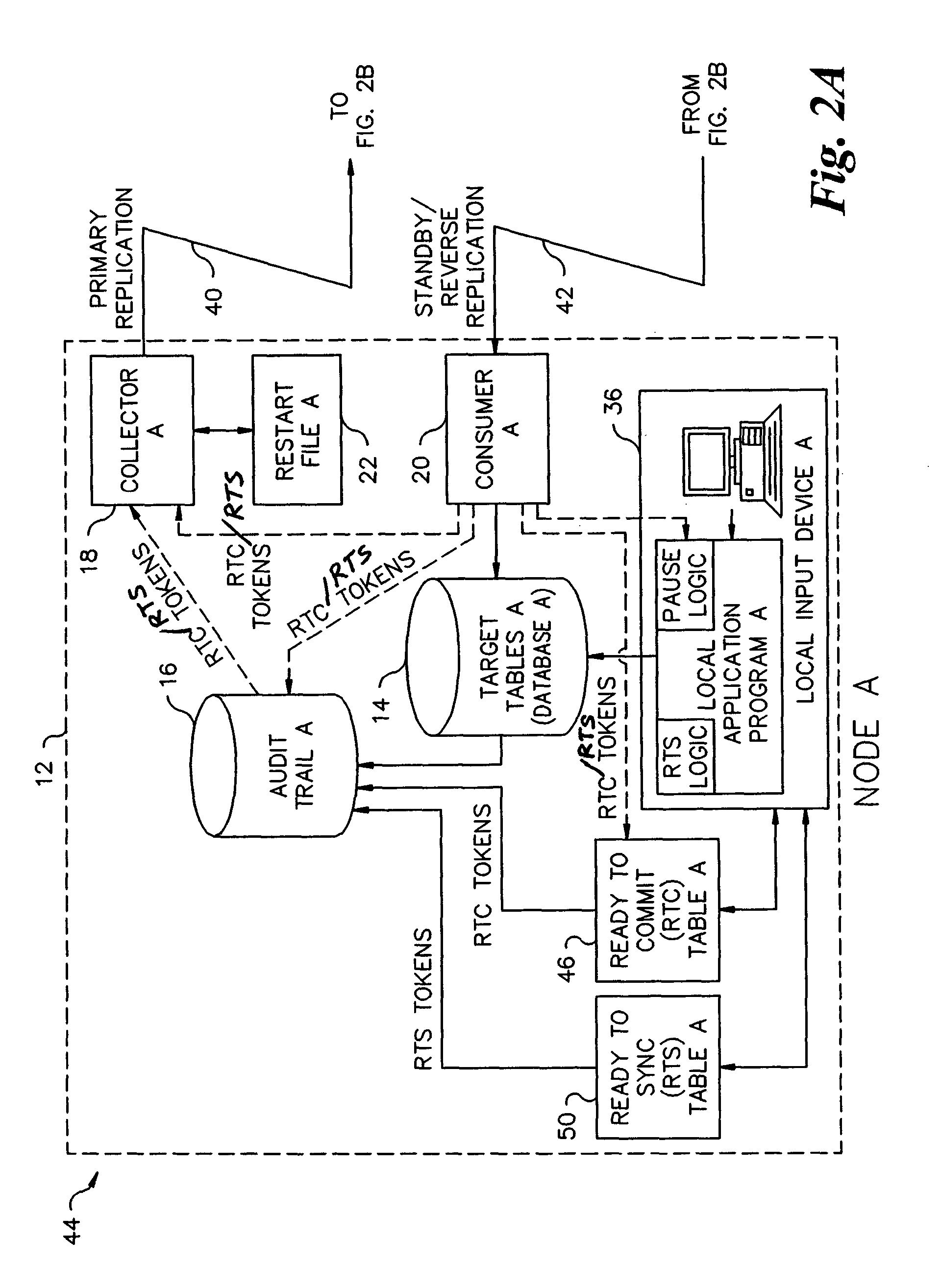

Asynchronous coordinated commit replication and dual write with replication transmission and locking of target database on updates only

Inactive | US7177866B2 | Digital data information retrieval | Data processing applications | Data records | Target database

Tokens are used to prepare a target database for replication from a source database and to confirm the preparation in an asynchronous coordinated commit replication process. During a dual write replication process, transmission of the replicated data and locking of data records in the target database occurs only on updates.

Owner:RPX CORP

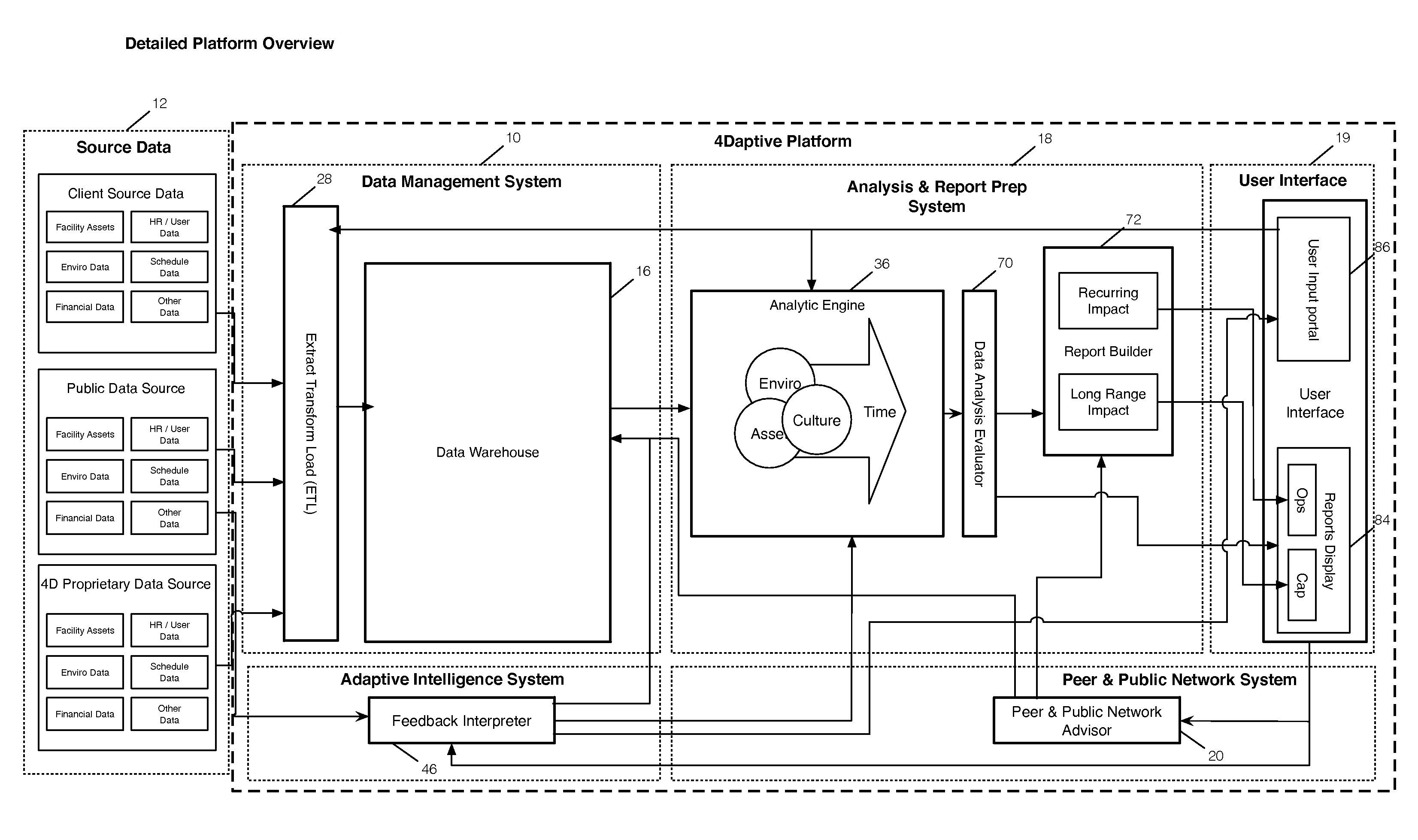

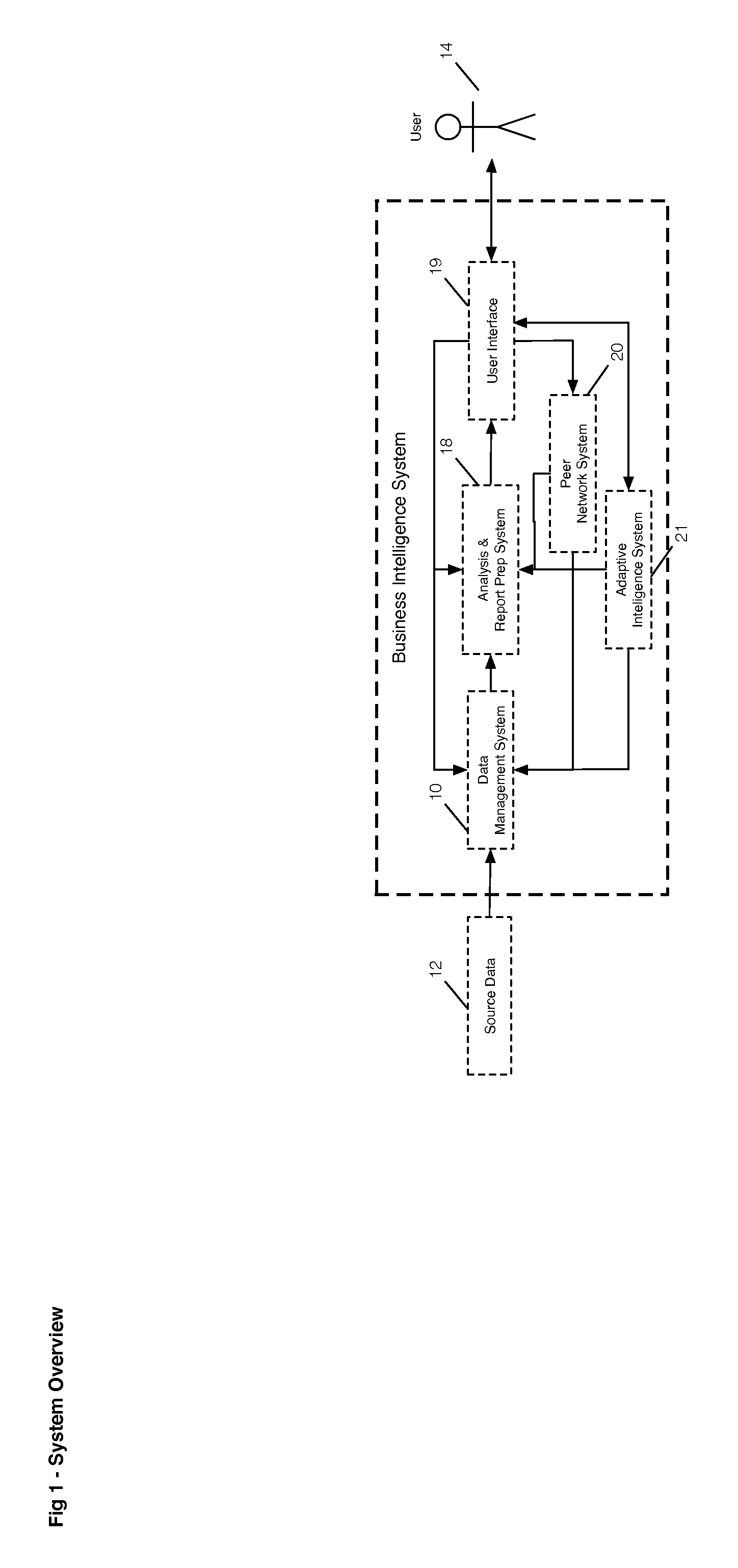

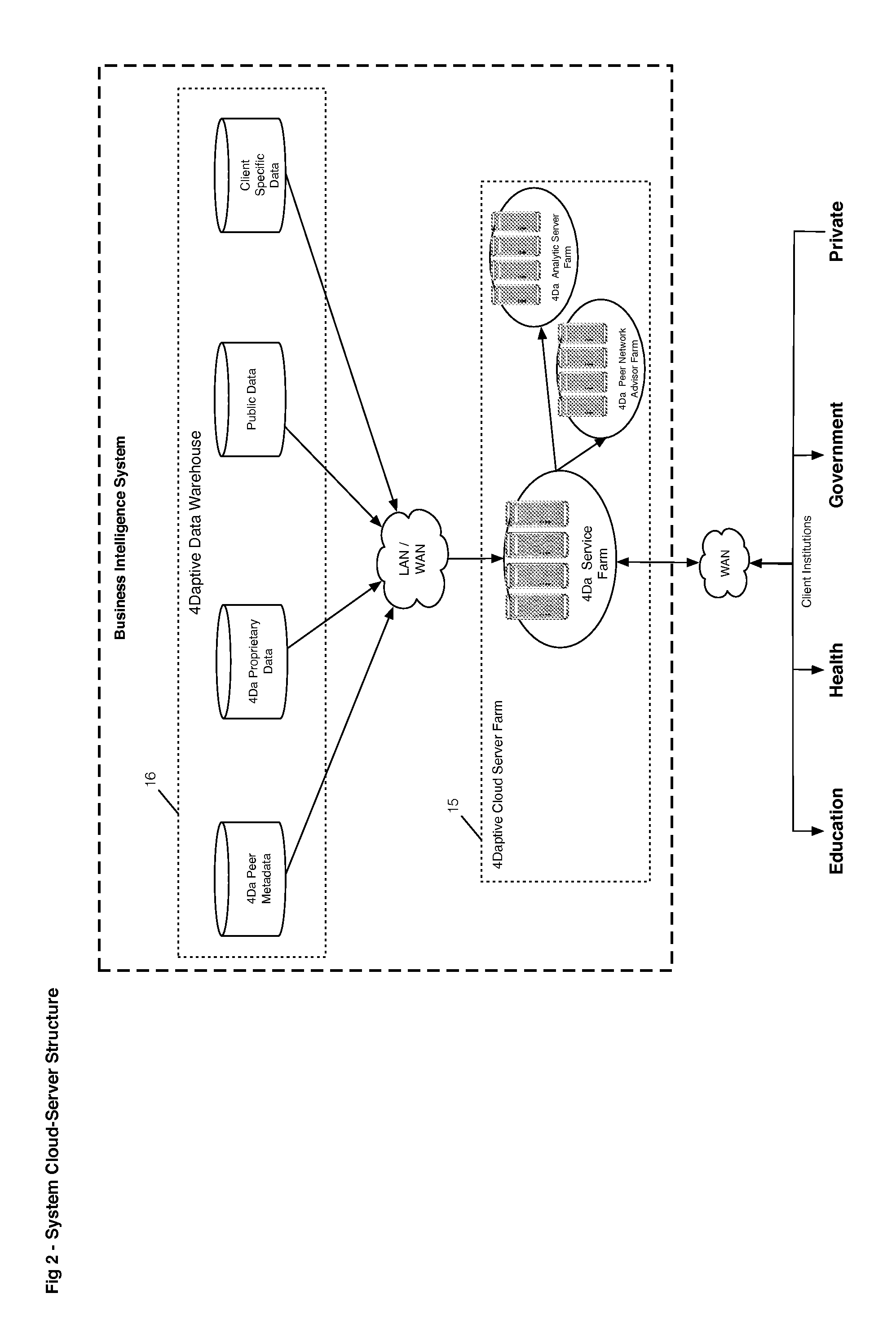

Business intelligence system and method utilizing multidimensional analysis of a plurality of transformed and scaled data streams

A method is provided for qualifying and analyzing business intelligence. A first part of a data management system receives first, second and third streams of data. The first stream is client-provided source data, the second is public source data, and the third is internal source data previously collected and managed in the data management system. The three streams of data are organized into items and their attributes at the data management system. The source data is transformed at a data warehouse, where it becomes normalized. Logic is applied to provide multi-dimensional analysis of the transformed source data relative to a scale for at least one business intelligence. The data warehouse includes updated data from the multi-dimensional analysis. A user interface communicates with the data management system to create statistical information that illustrates impact over time and value.

Owner:ROUNDHOUSE ONE

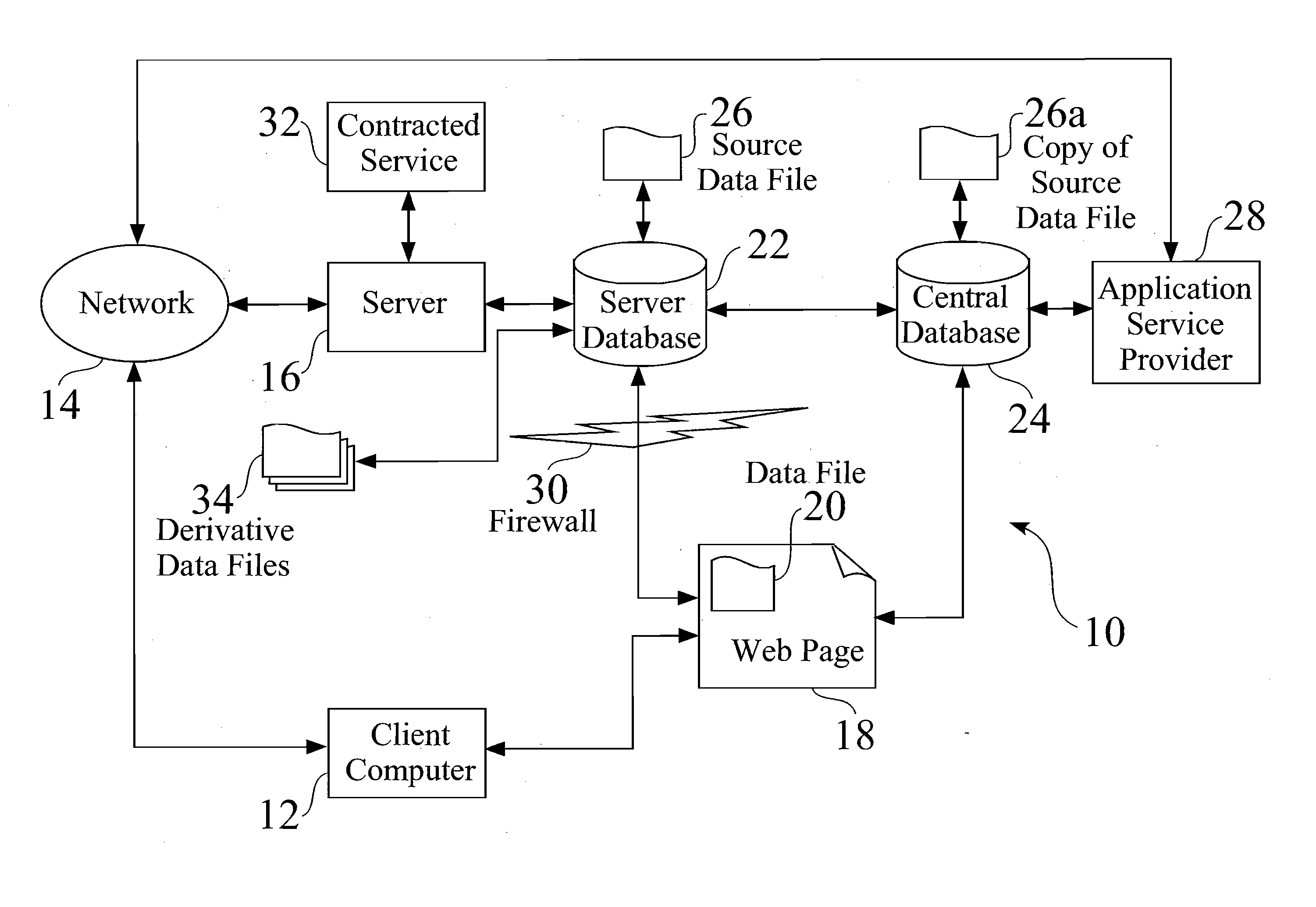

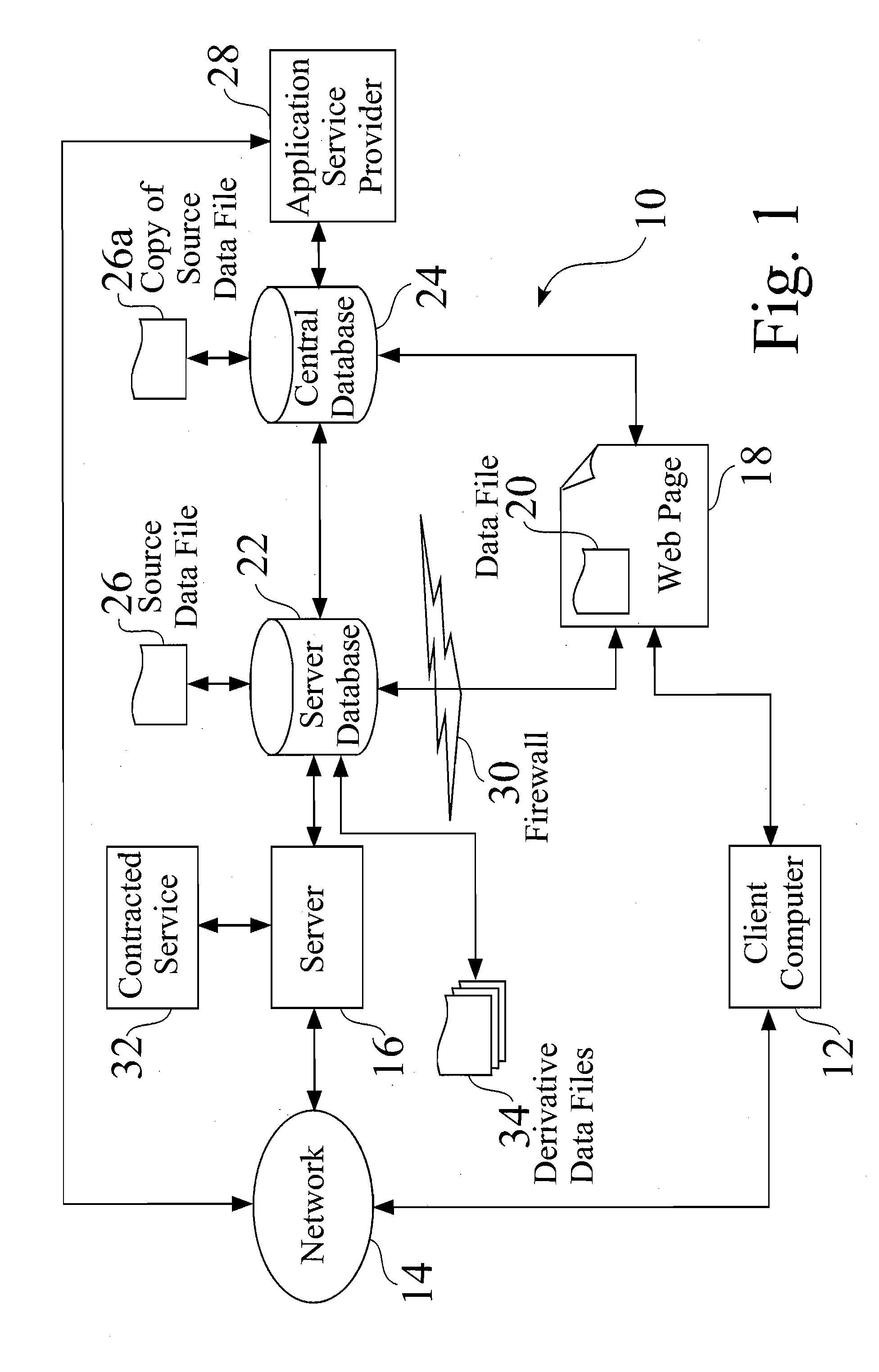

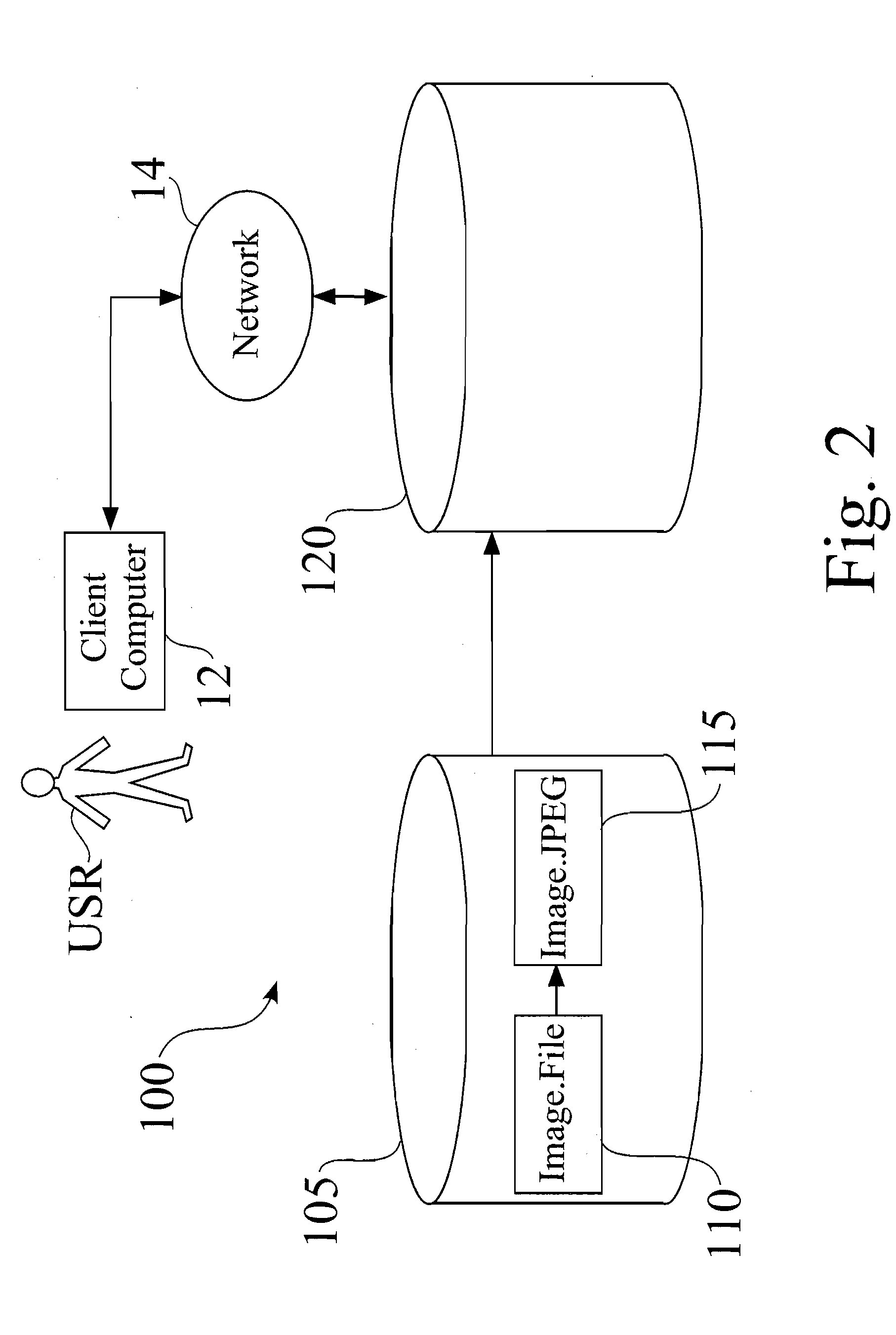

System for management of source and derivative data

Source data is centralized in a database and derivative data sets are formed from the source data. When it is desired to modify derivative data, the source data can be accessed and modified to form a new derivative data set, instead of modifying the prior data set, such that source data integrity is maintained. Tags are associated with derivative data, which can be embedded in the derivative data or associated with the derivative data as an attached element. Tags identify information such as the server that generated the derivative data, the source data and any tasks or transformations that were applied to the source data to generate the derivative data. Users with assigned access privileges to source data can be given access to a source data repository, whereby a number of users can access the source files and modify derivative data files by changes in the source data file.

Owner:MEEK BRIAN GUNNAR +2

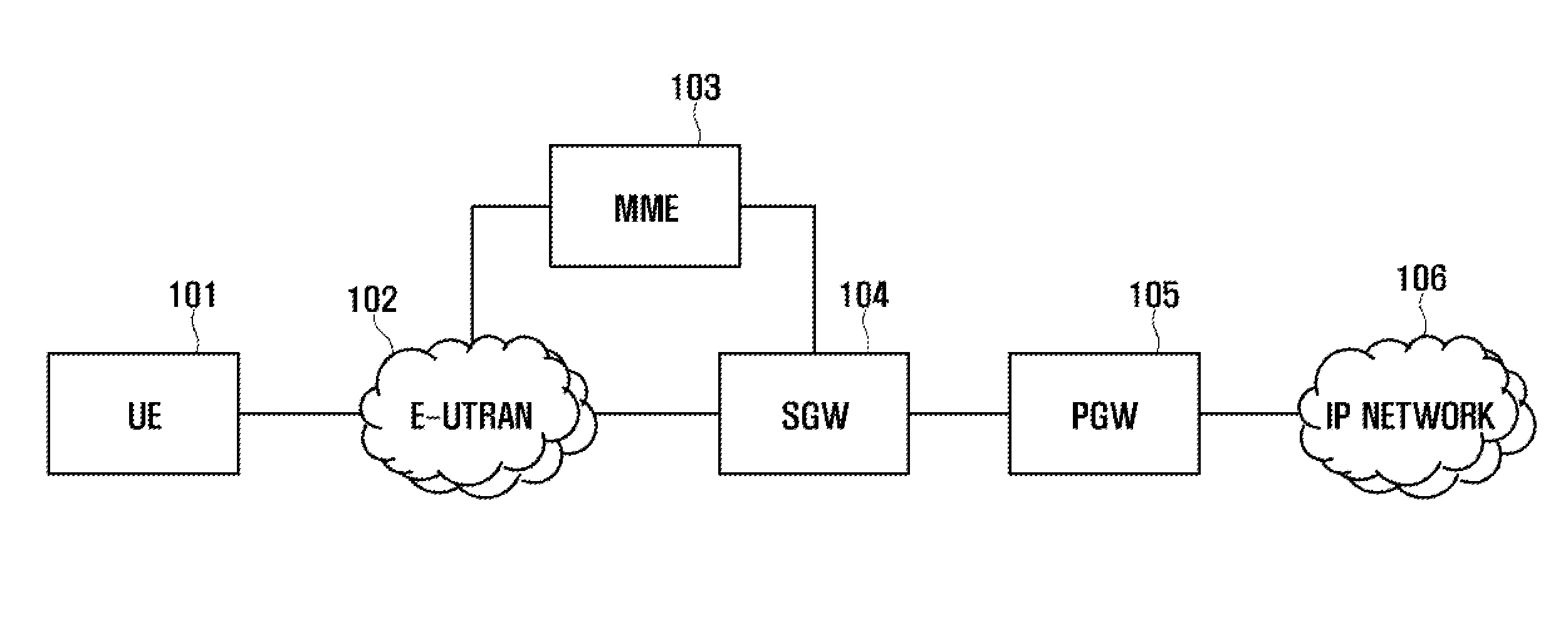

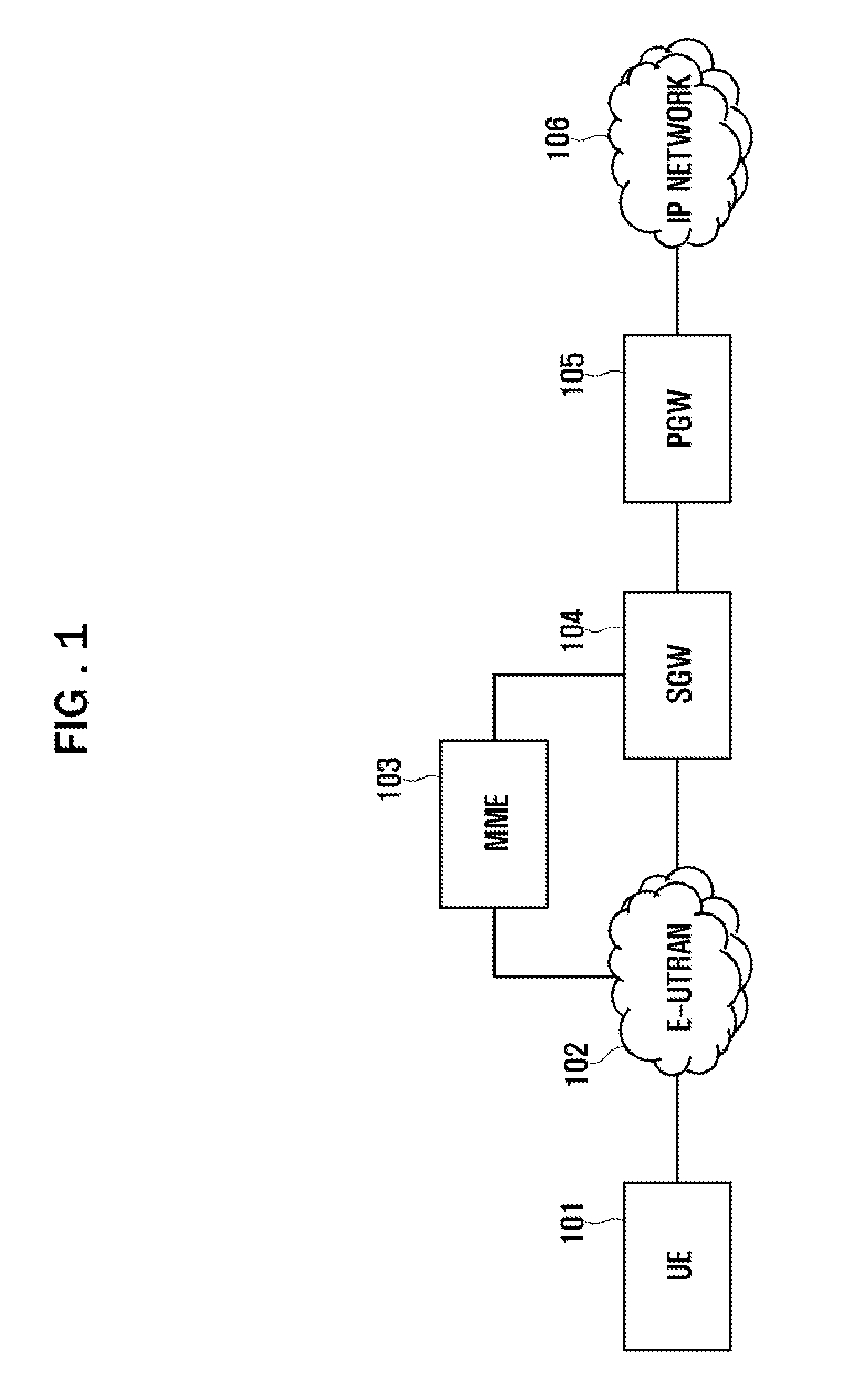

Communication system and data transmission method thereof

Active | US8711806B2 | Guaranteed normal transmission | Time-division multiplex | Data switching by path configuration | Communications system | Data transmission

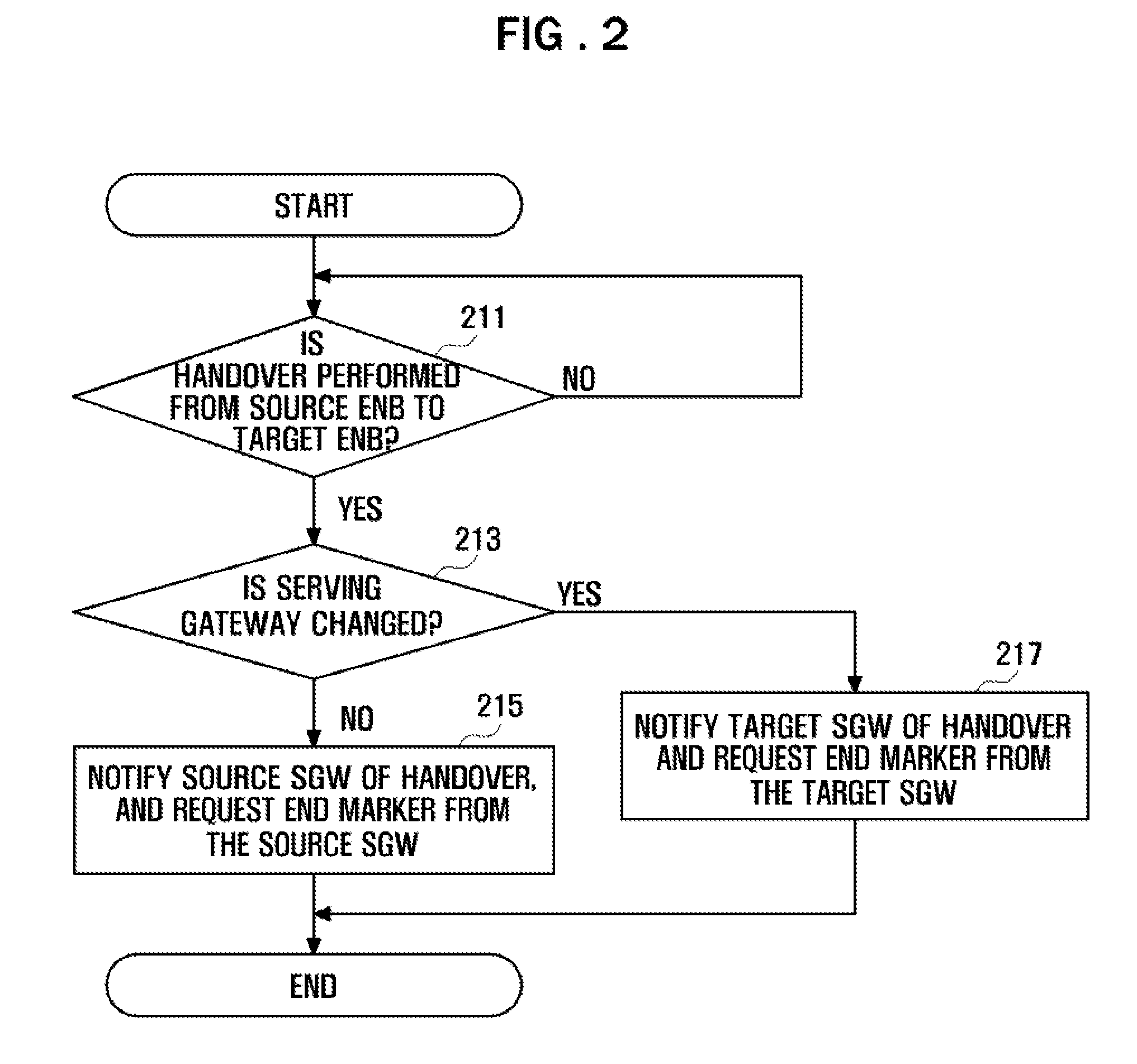

A communication system and data transmission method thereof are provided. The method includes adding an end marker to the end of source data and transmitting the source data and the end marker from a Packet data network GateWay (PGW) to a source evolved Node B (eNB) if a handover is carried out from the source eNB to a target eNB while the PGW is transmitting the source data to the source eNB, forwarding the source data and the end marker from the source eNB to the target eNB, transmitting target data immediately following the source data from the PGW to the target eNB, and transmitting the source data and the target data, which is distinguished from the source data by the end marker and immediately follows the end of the source data, from the target eNB to user equipment.

Owner:SAMSUNG ELECTRONICS CO LTD
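
The role of the end marker in the abstract above can be sketched from the target eNB's point of view. The buffering strategy shown is an assumption for illustration, as are the names `TargetNode`, `on_forwarded`, and `on_direct`; the abstract only states that the marker separates source data from the target data that follows it.

```python
from typing import List

END_MARKER = object()  # sentinel appended after the last source-path packet


class TargetNode:
    """Delivers forwarded source data first, then data sent directly by the gateway."""

    def __init__(self) -> None:
        self.delivered: List[str] = []        # packets handed to the user equipment
        self._pending_direct: List[str] = []  # direct packets held until the marker arrives
        self._end_seen = False

    def on_forwarded(self, packet) -> None:
        """Packet forwarded from the source node (old path)."""
        if packet is END_MARKER:
            self._end_seen = True
            self.delivered.extend(self._pending_direct)
            self._pending_direct.clear()
        else:
            self.delivered.append(packet)

    def on_direct(self, packet: str) -> None:
        """Packet sent by the gateway straight to the target node (new path)."""
        if self._end_seen:
            self.delivered.append(packet)
        else:
            self._pending_direct.append(packet)


node = TargetNode()
node.on_forwarded("s1")
node.on_direct("t1")          # arrives early on the new path, so it is held back
node.on_forwarded("s2")
node.on_forwarded(END_MARKER)
node.on_direct("t2")
```

After these calls `node.delivered` holds the packets in the order s1, s2, t1, t2, so the user equipment never sees new-path data ahead of old-path data.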

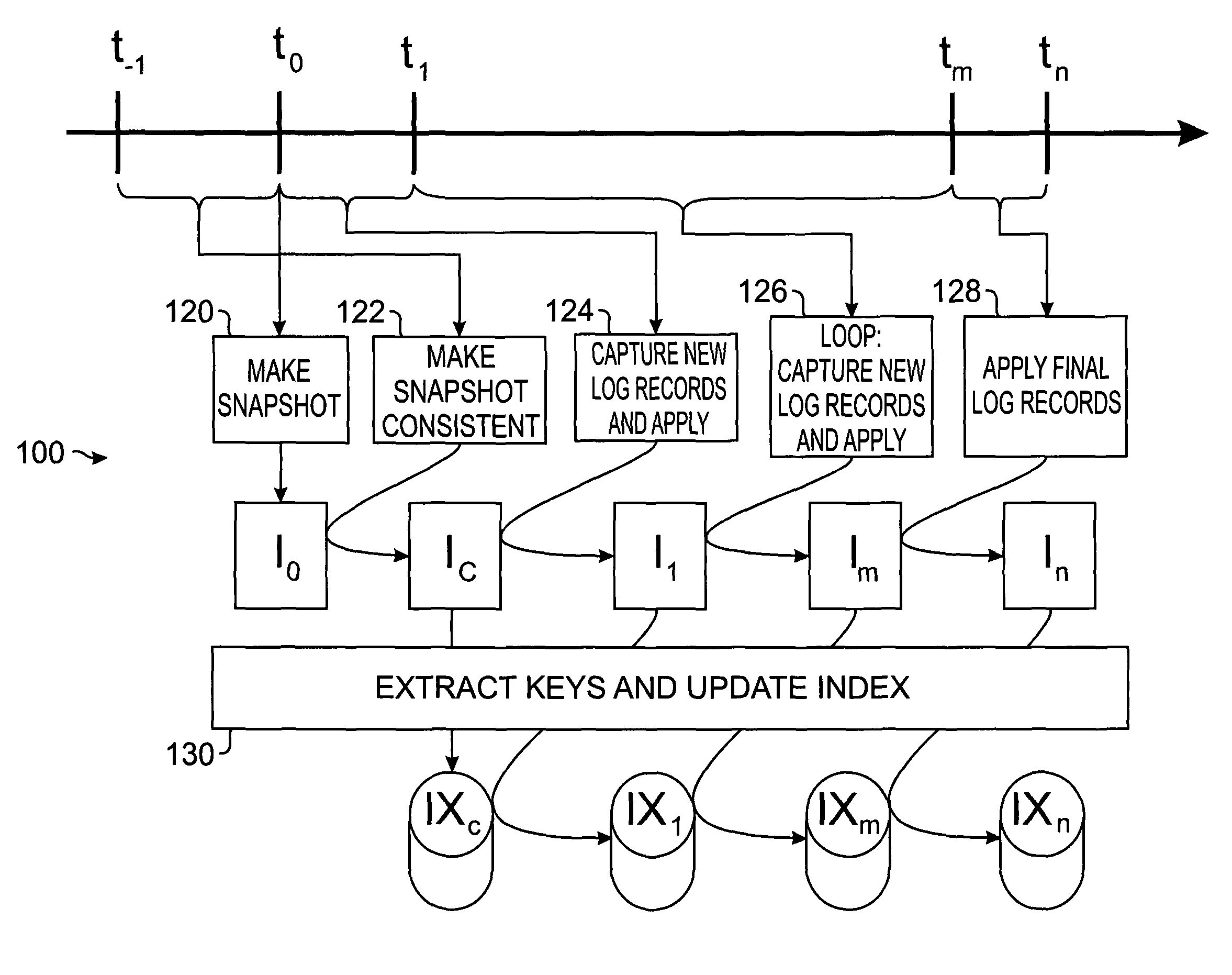

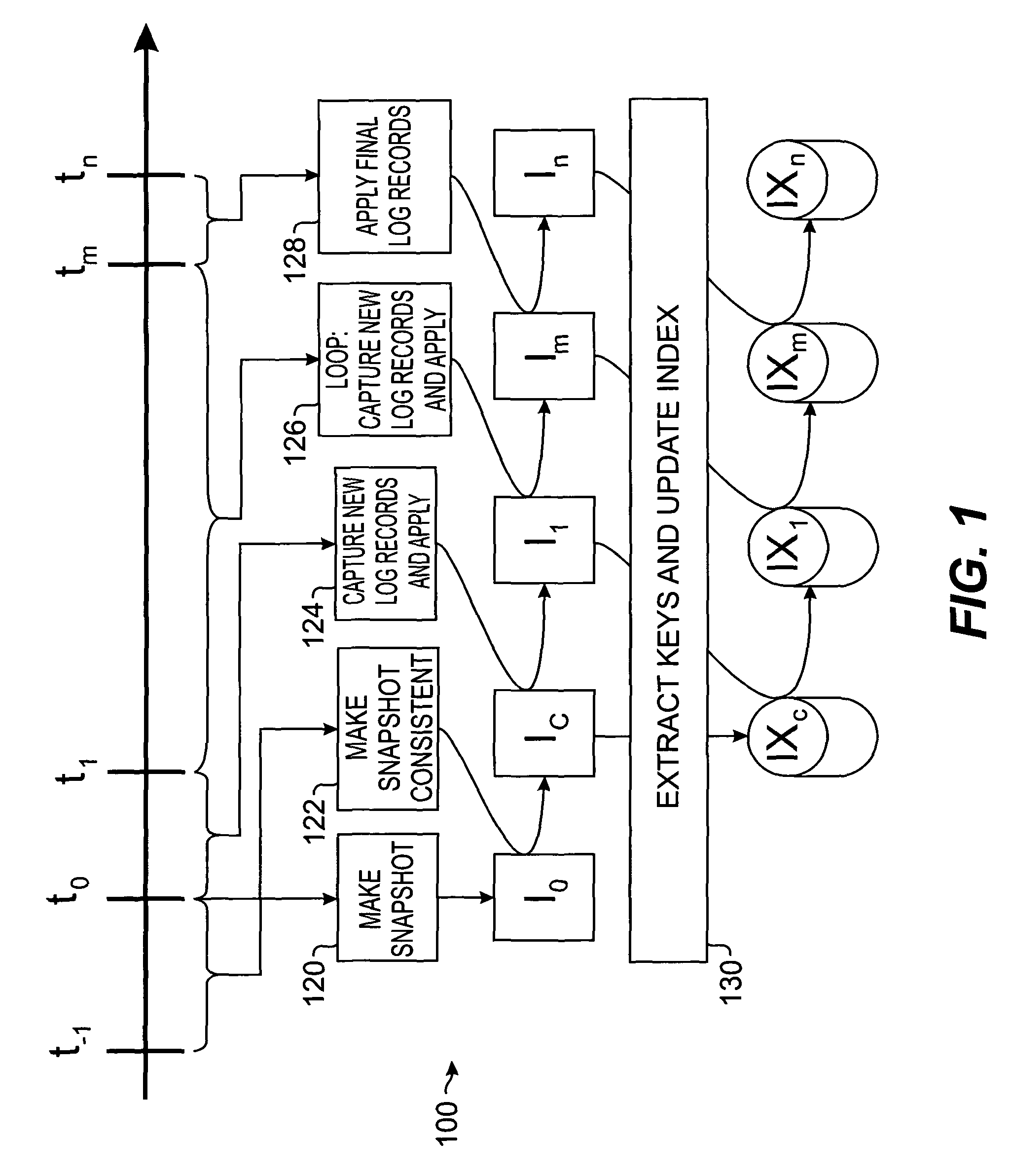

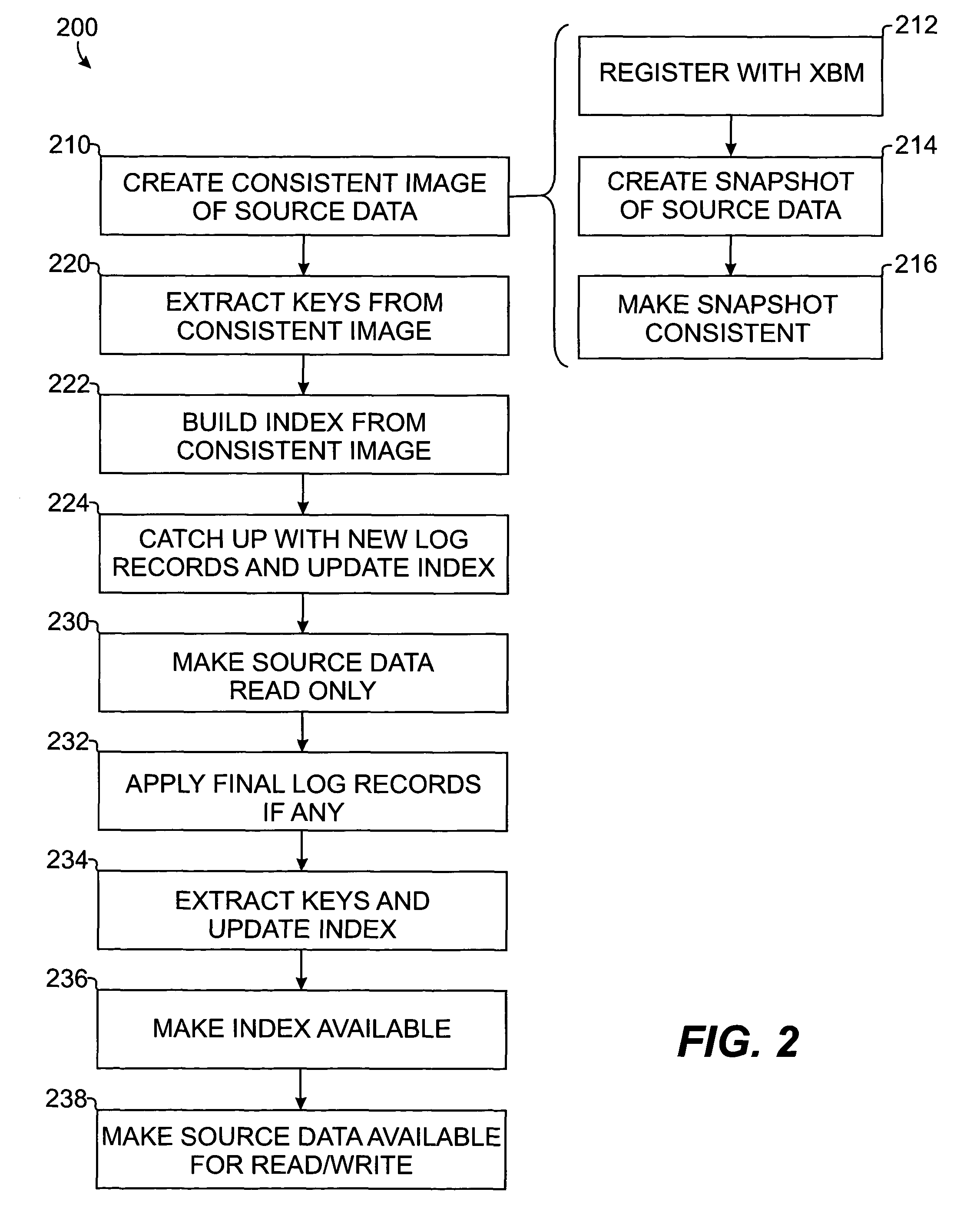

Method and apparatus for building index of source data

Active | US7933927B2 | Digital data information retrieval | Data processing applications | Relational database | Tablespace

An online index building operation is disclosed for building an index from source data with minimal loss of availability to the source data. The source data can be maintained in a relational database system, such as in a tablespace of a DB2® environment. The disclosed operation creates a consistent image of the source data as of a point-in-time and creates an index from the consistent image. Then, the disclosed operation repeats the acts of making the image consistent as of a subsequent point-in-time and updating the index to reflect the subsequent consistent image until substantially caught up with the current changes to the source data. If not caught up, the disclosed operation continues unless it is falling behind at which point the operation terminates. If it is caught up, the disclosed operation locks access to the source data, updates the image to reflect any final changes, updates the index, and allows access to the index.

Owner:BMC SOFTWARE

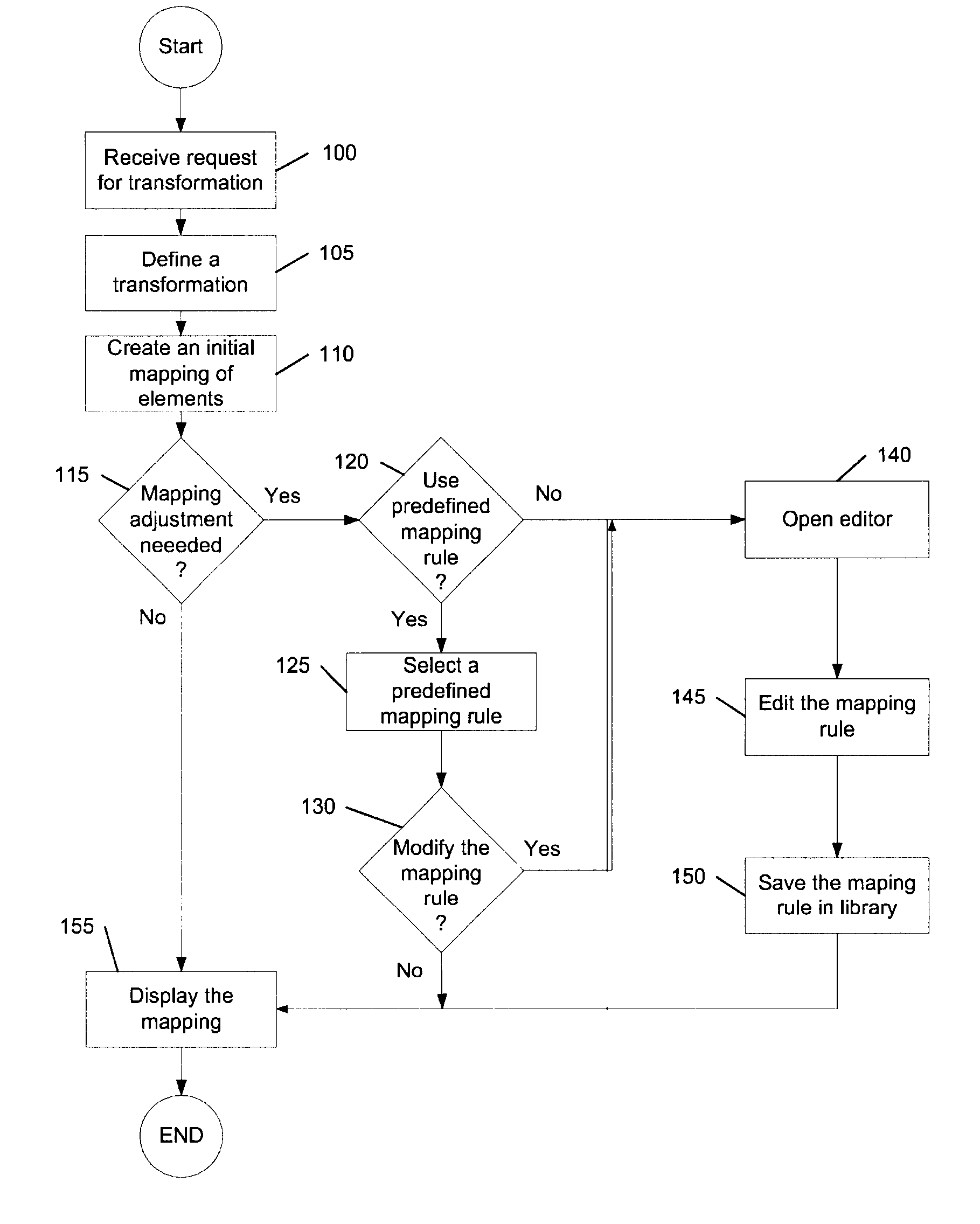

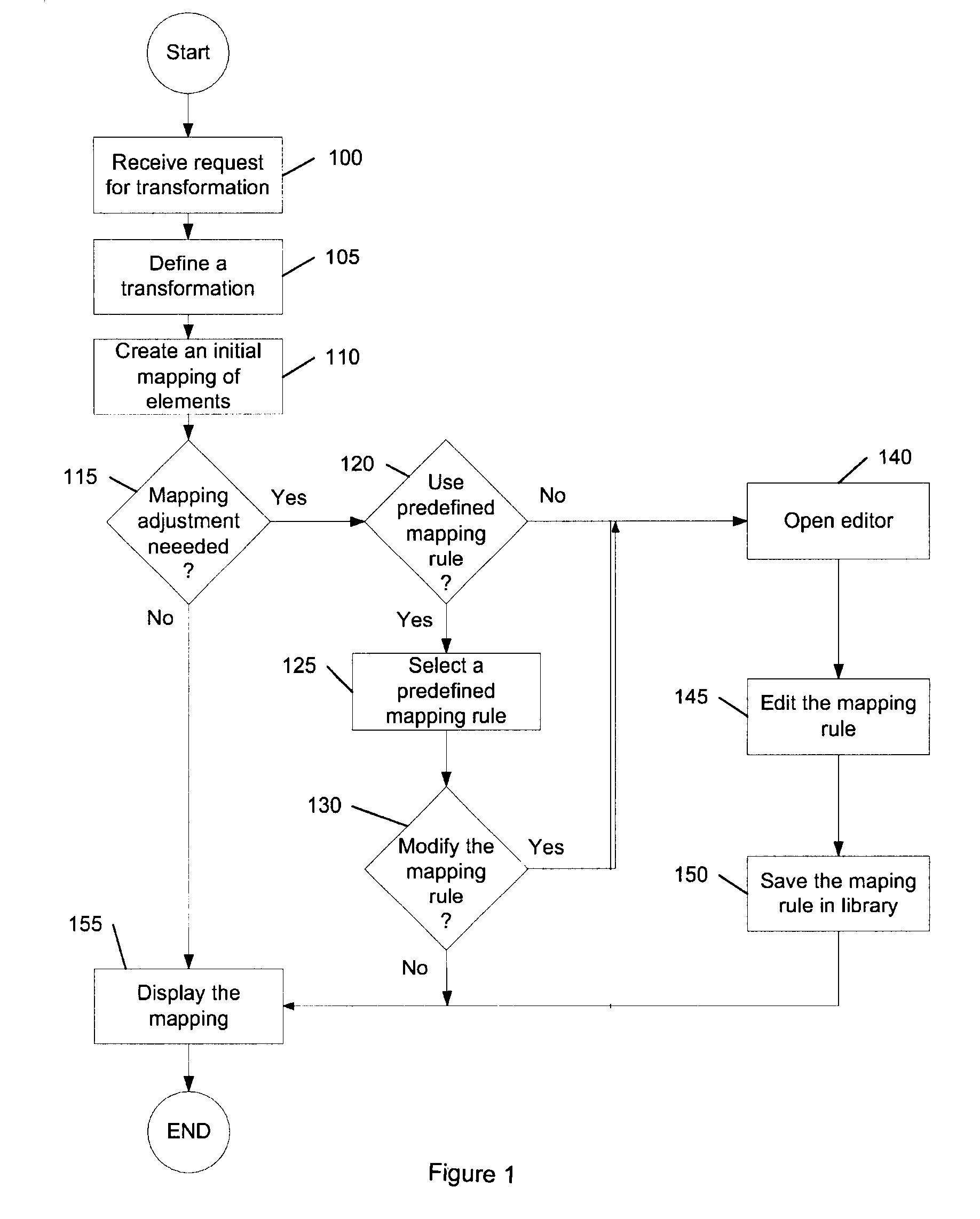

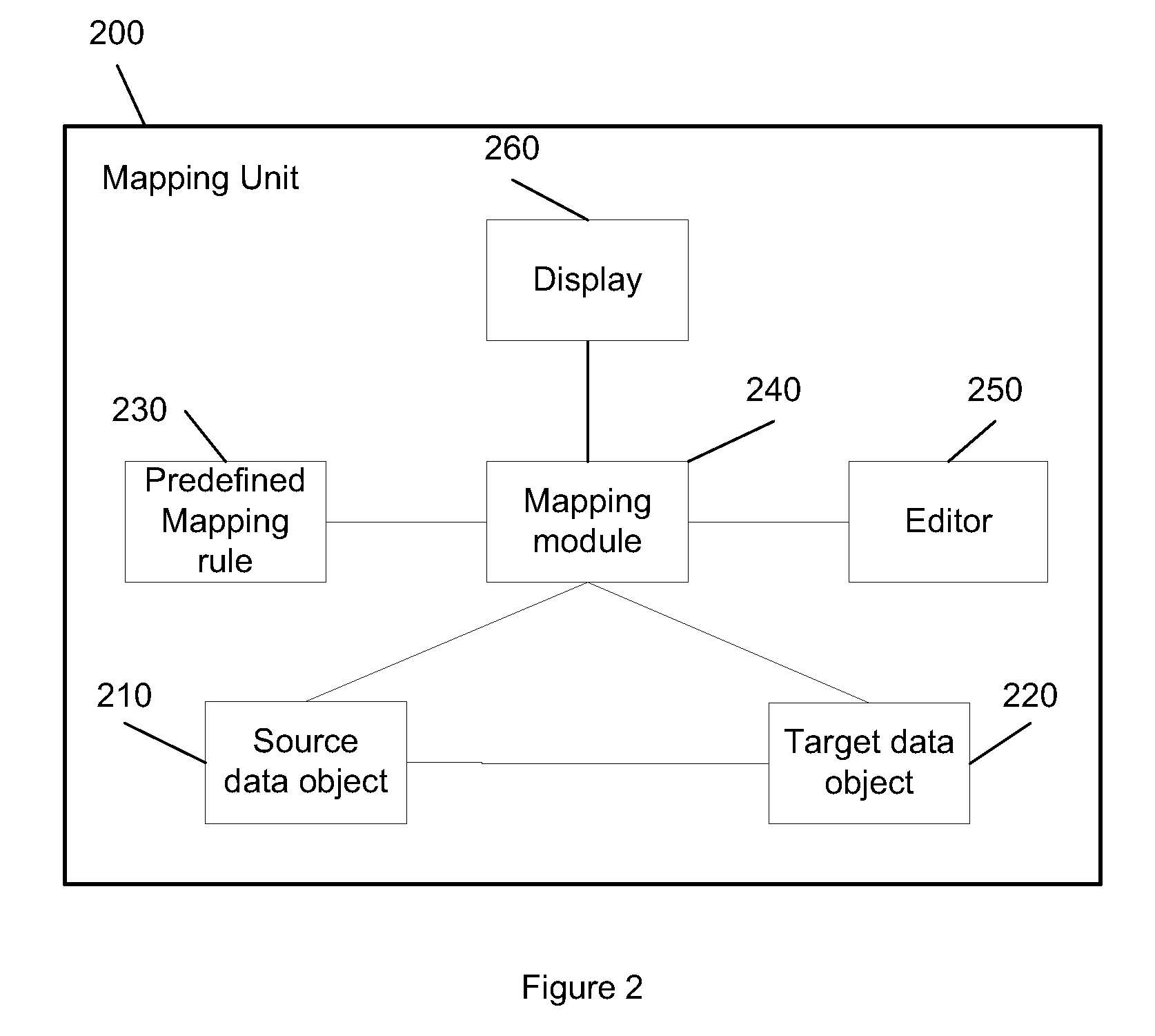

Reusable mapping rules for data to data transformation

Inactive | US20100057673A1 | Digital data information retrieval | Special data processing applications | Graphics | Data transformation

What is described is a method and a system for data transformation by using predefined mapping rules. A transformation between a source data object and a target data object is defined and an initial mapping of elements from the source data object to the target data object is created. A predefined mapping rule is applied as a subsequent mapping between the source data object and the target data object to adjust the transformation. The mapping from the source data object to the target data object is displayed via a graphical user interface.

Owner:SAP AG

Synchronized pattern recognition source data processed by manual or automatic means for creation of shared speaker-dependent speech user profile

An apparatus for transforming data input by dividing the data input into a uniform dataset with one or more data divisions, processing the uniform dataset to produce a first processed dataset with one or more data divisions, processing the uniform dataset to produce a second processed dataset with one or more data divisions, wherein the first and second processed datasets have the same number of data divisions, and editing data selectively within each one of the one or more divisions of the first and second processed dataset. This apparatus has particular utility in error-spotting in processed datasets, and toward training a pattern recognition application, such as speech recognition, to produce more accurate processed datasets.

Owner:CUSTOM SPEECH USA

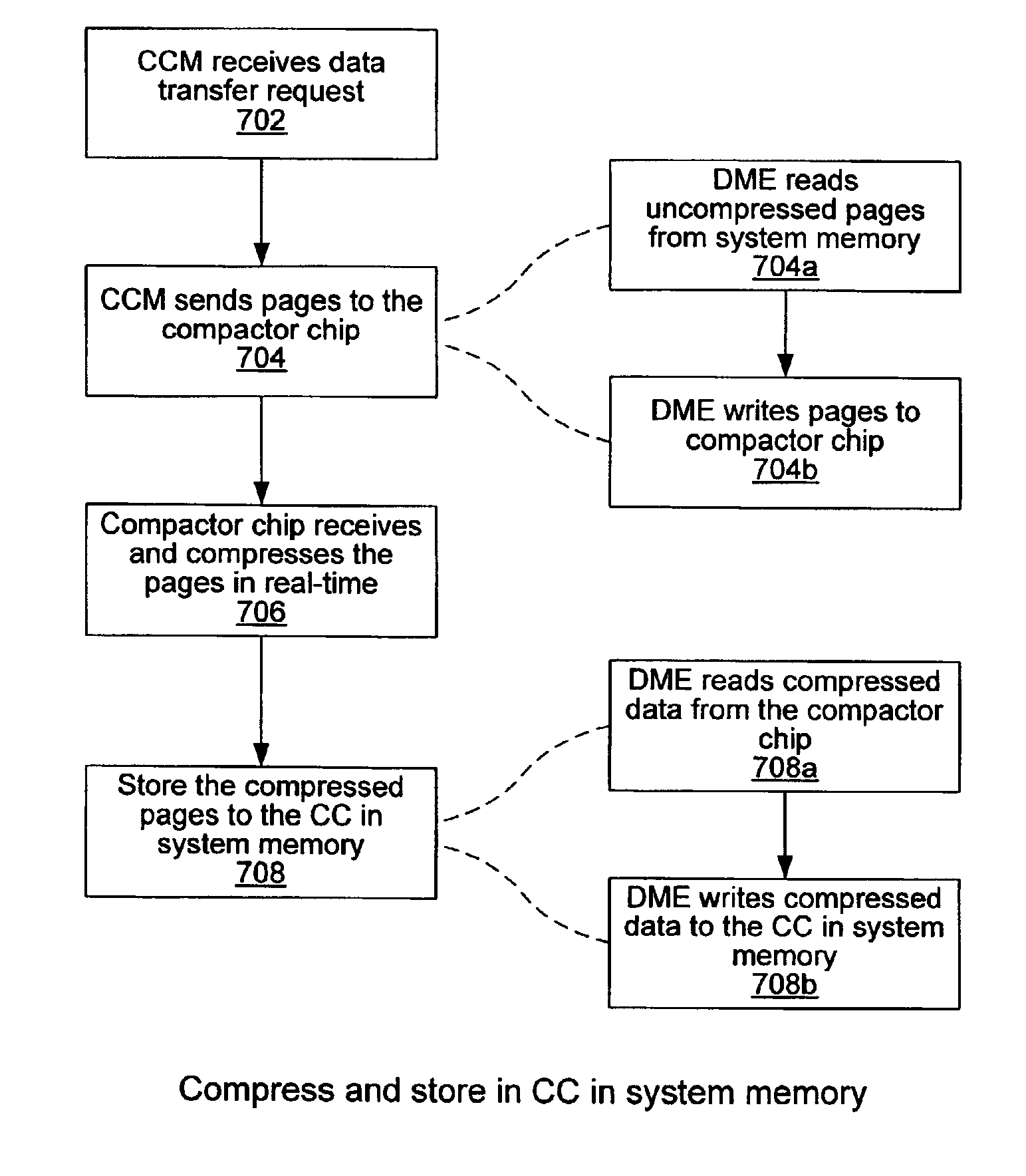

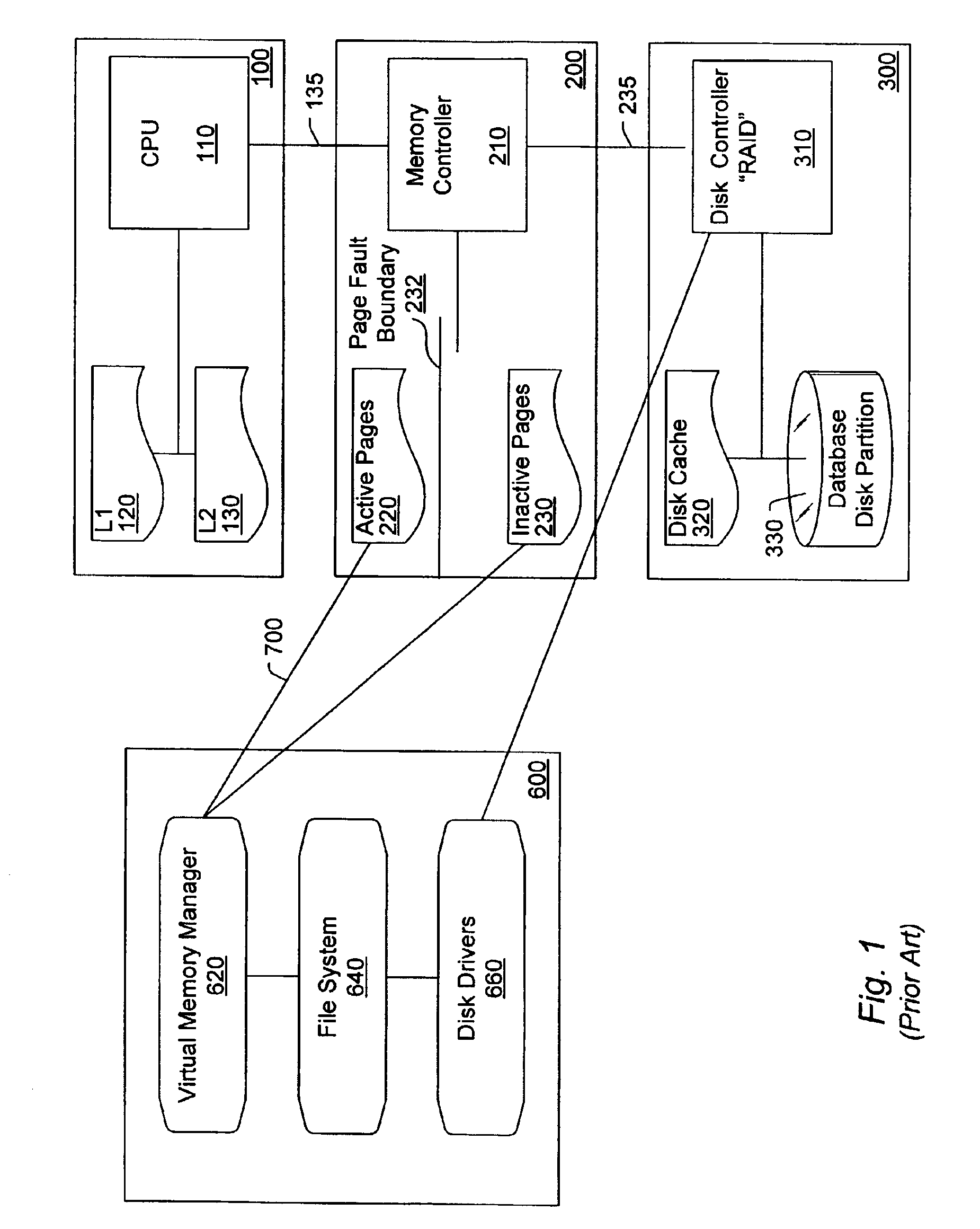

Managing a codec engine for memory compression/decompression operations using a data movement engine

Inactive | US7089391B2 | Memory architecture accessing/allocation | Memory addressing/allocation/relocation | Computerized system | Memory controller

A system and method for managing a functional unit in a system using a data movement engine. An exemplary system may comprise a CPU coupled to a memory controller. The memory controller may include or couple to a data movement engine (DME). The memory controller may in turn couple to a system memory or other device which includes at least one functional unit. The DME may operate to transfer data to/from the system memory and/or the functional unit, as described herein. In one embodiment, the DME may also include multiple DME channels or multiple DME contexts. The DME may operate to direct the functional unit to perform operations on data in the system memory. For example, the DME may read source data from the system memory, the DME may then write the source data to the functional unit, the functional unit may operate on the data to produce modified data, the DME may then read the modified data from the functional unit, and the DME may then write the modified data to a destination in the system memory. Thus the DME may direct the functional unit to perform an operation on data in system memory using four data movement operations. The DME may also perform various other data movement operations in the computer system, e.g., data movement operations that are not involved with operation of the functional unit.

Owner:INTELLECTUAL VENTURES I LLC

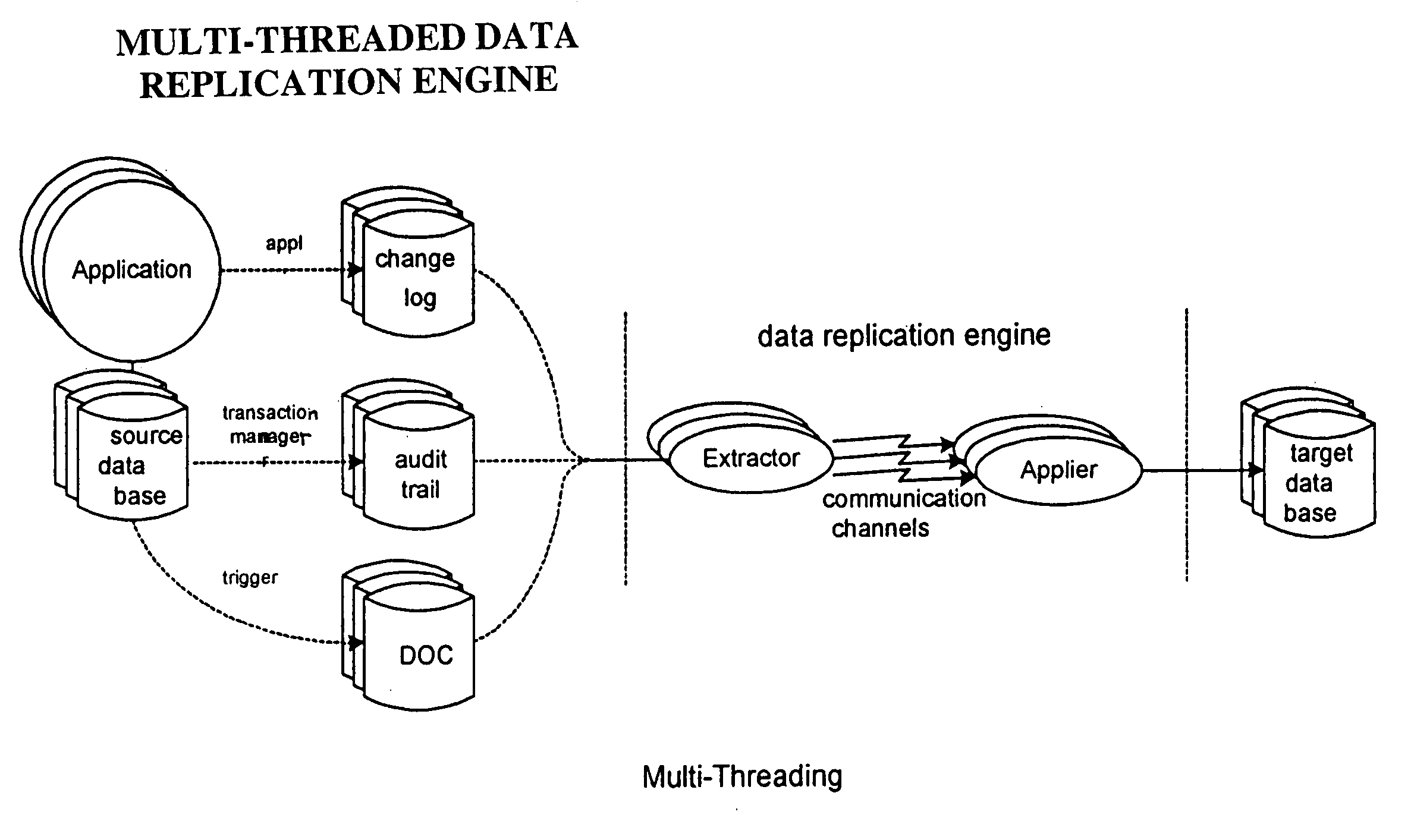

Method for ensuring referential integrity in multi-threaded replication engines

Active | US20050021567A1 | Digital data information retrieval | Digital data processing details | Transaction data | Load capacity

During replication of transaction data from a source database to a target database via a change queue associated with the source database, one or more multiple paths are provided between the change queue and the target database. The one or more multiple paths cause at least some of the transaction data to become unserialized. At least some of the unserialized data is reserialized prior to or upon applying the originally unserialized transaction data to the target database. If the current transaction load is close or equal to the maximum transaction load capacity of a path between the change queue and the target database, another path is provided. If the maximum transaction threshold limit of an applier associated with the target database has been reached, open transactions may be prematurely committed.

Owner:INTEL CORP
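
Reserializing data that arrived out of order over multiple paths, as the abstract above describes, can be sketched with a priority queue keyed by sequence number. This assumes each change carries a unique, gap-free sequence number assigned at the change queue, which the abstract does not state; the `Reserializer` class is hypothetical.

```python
import heapq
from typing import List, Tuple


class Reserializer:
    """Releases changes to the applier strictly in sequence-number order."""

    def __init__(self, first_seq: int = 0) -> None:
        self._next = first_seq                   # next sequence number to release
        self._heap: List[Tuple[int, str]] = []   # changes that arrived early

    def receive(self, seq: int, change: str) -> List[str]:
        """Accept one change from any path and return the changes now safe to apply."""
        heapq.heappush(self._heap, (seq, change))
        ready: List[str] = []
        while self._heap and self._heap[0][0] == self._next:
            ready.append(heapq.heappop(self._heap)[1])
            self._next += 1
        return ready


r = Reserializer()
held = r.receive(1, "UPDATE b")      # arrives first on a faster path, so it is held
released = r.receive(0, "INSERT a")  # fills the gap, so both are released in order
```

The heap keeps early arrivals cheap to store and lets the applier drain every consecutive change as soon as a gap closes.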

Stereoplexing for film and video applications

A method for multiplexing a stream of stereoscopic image source data into a series of left images and a series of right images combinable to form a series of stereoscopic images, both the stereoscopic image source data and series of left images and series of right images conceptually defined to be within frames. The method includes compressing stereoscopic image source data at varying levels across the frame, thereby forming left images and right images, and providing a series of single frames divided into portions, each single frame containing one right image in a first portion and one left image in a second portion. Alternately, single frames may contain two right images in a first two portions of each single frame and two left images in a second two portions of each single frame, wherein each set of right and left images may be processed differently. Multiplexing processes such as staggering, alternating, filtering, variable scaling, and sharpening from original, uncompressed right and left images may be employed.

Owner:REALD INC

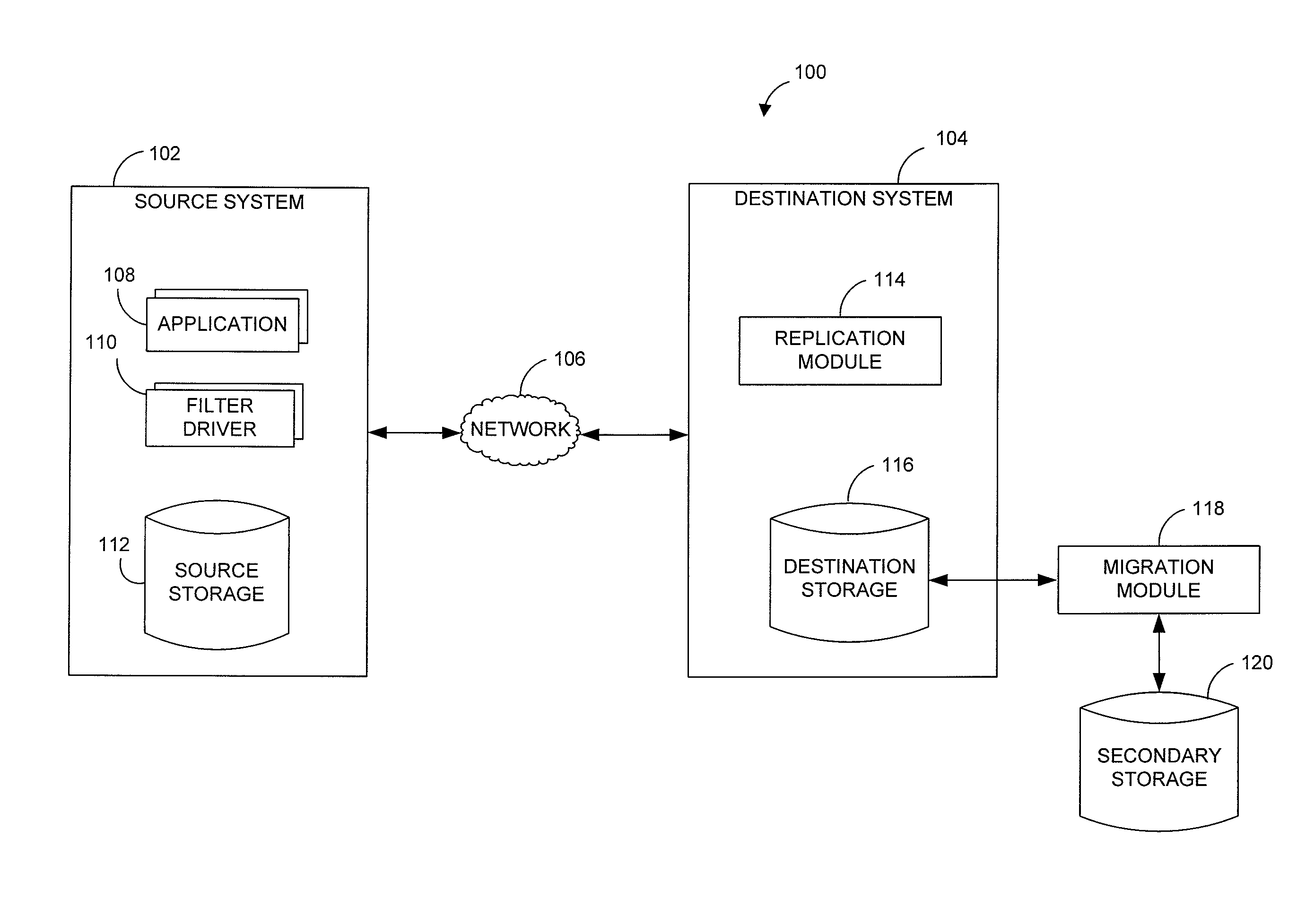

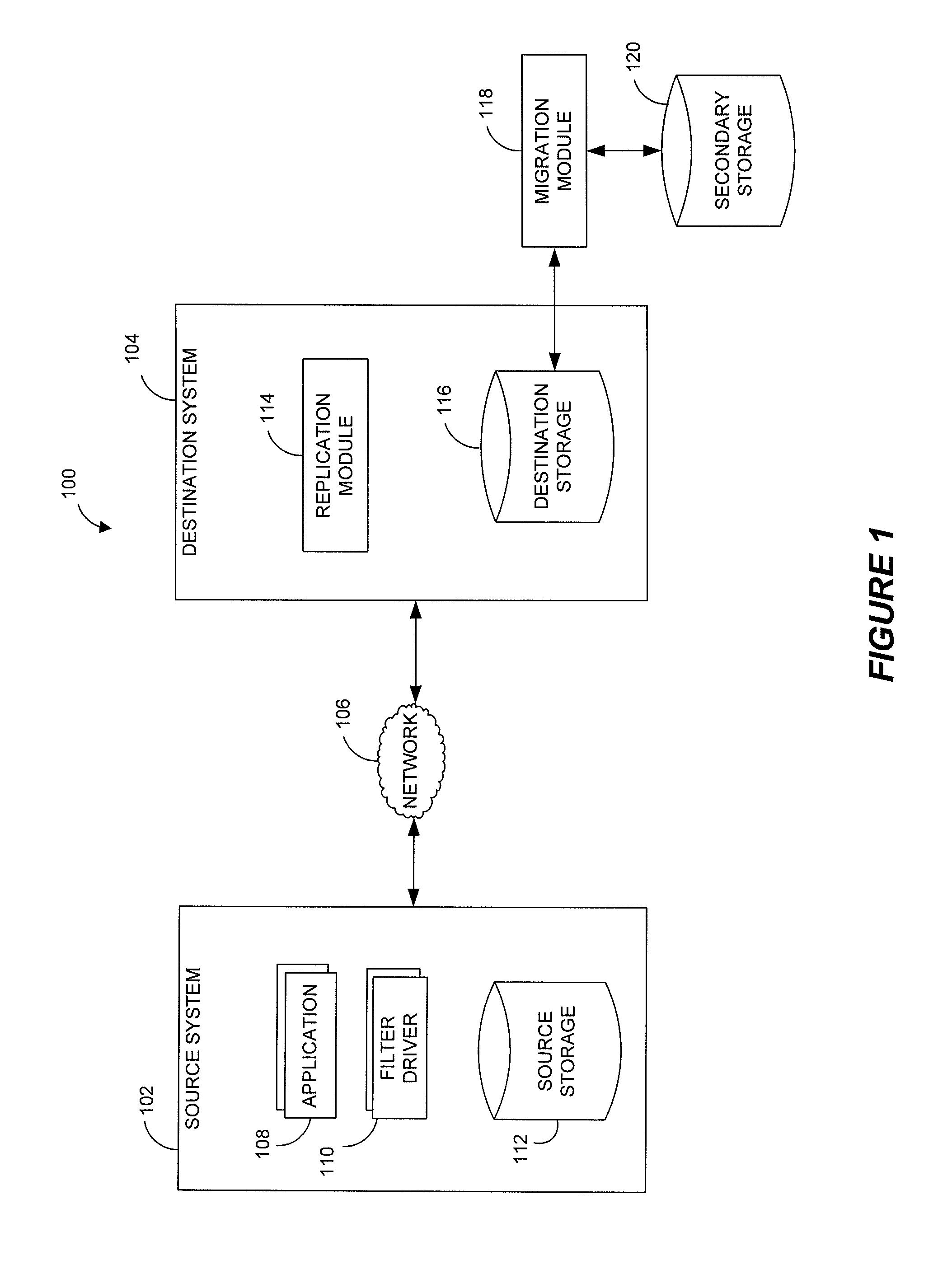

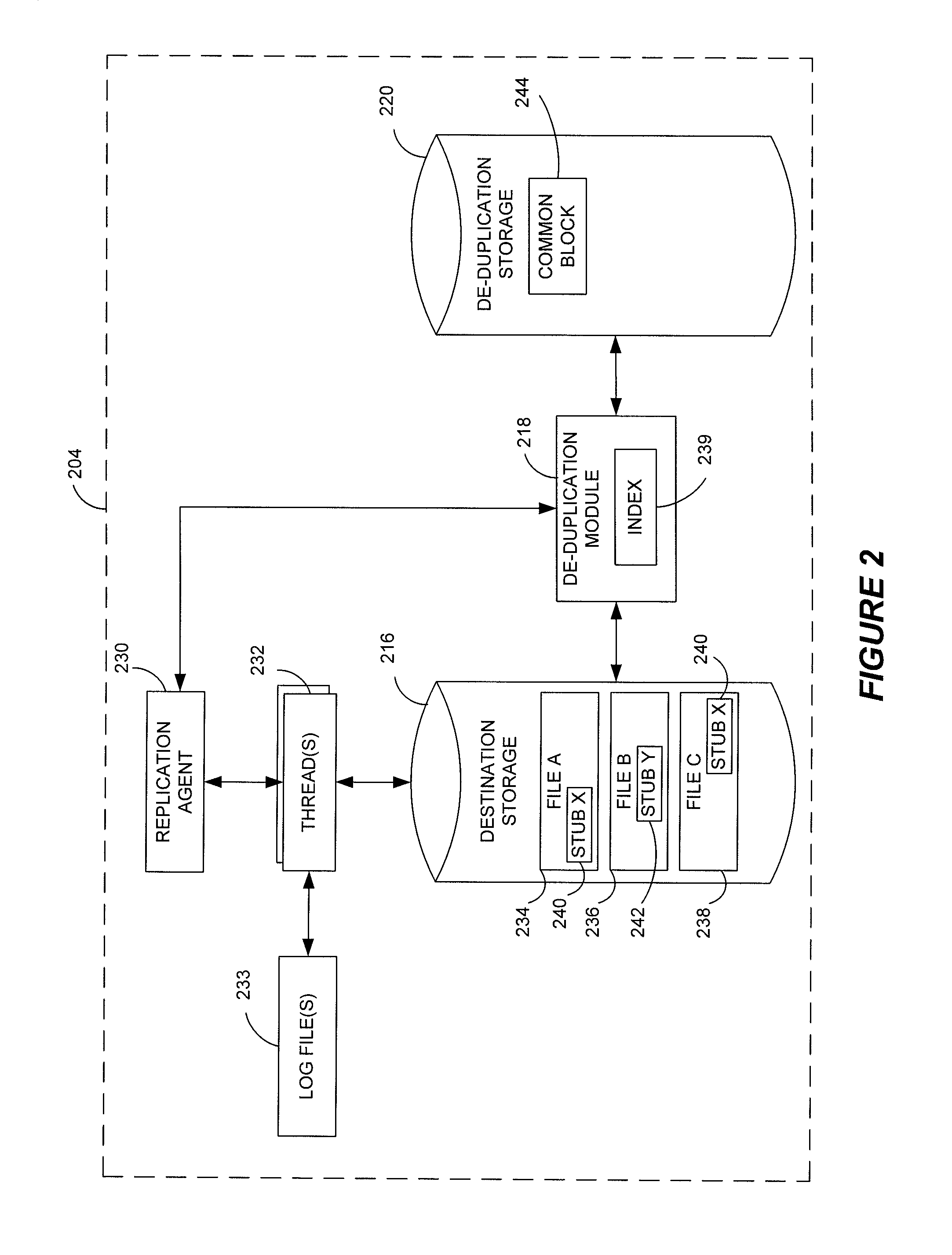

Data restore systems and methods in a replication environment

Active | US8352422B2 | Digital data information retrieval | Digital data processing details | Data management | Goal system

Stubbing systems and methods are provided for intelligent data management in a replication environment, such as by reducing the space occupied by replication data on a destination system. In certain examples, stub files or like objects replace migrated, de-duplicated or otherwise copied data that has been moved from the destination system to secondary storage. Access is further provided to the replication data in a manner that is transparent to the user and/or without substantially impacting the base replication process. In order to distinguish stub files representing migrated replication data from replicated stub files, priority tags or like identifiers can be used. Thus, when accessing a stub file on the destination system, such as to modify replication data or perform a restore process, the tagged stub files can be used to recall archived data prior to performing the requested operation so that an accurate copy of the source data is generated.

Owner:COMMVAULT SYST INC

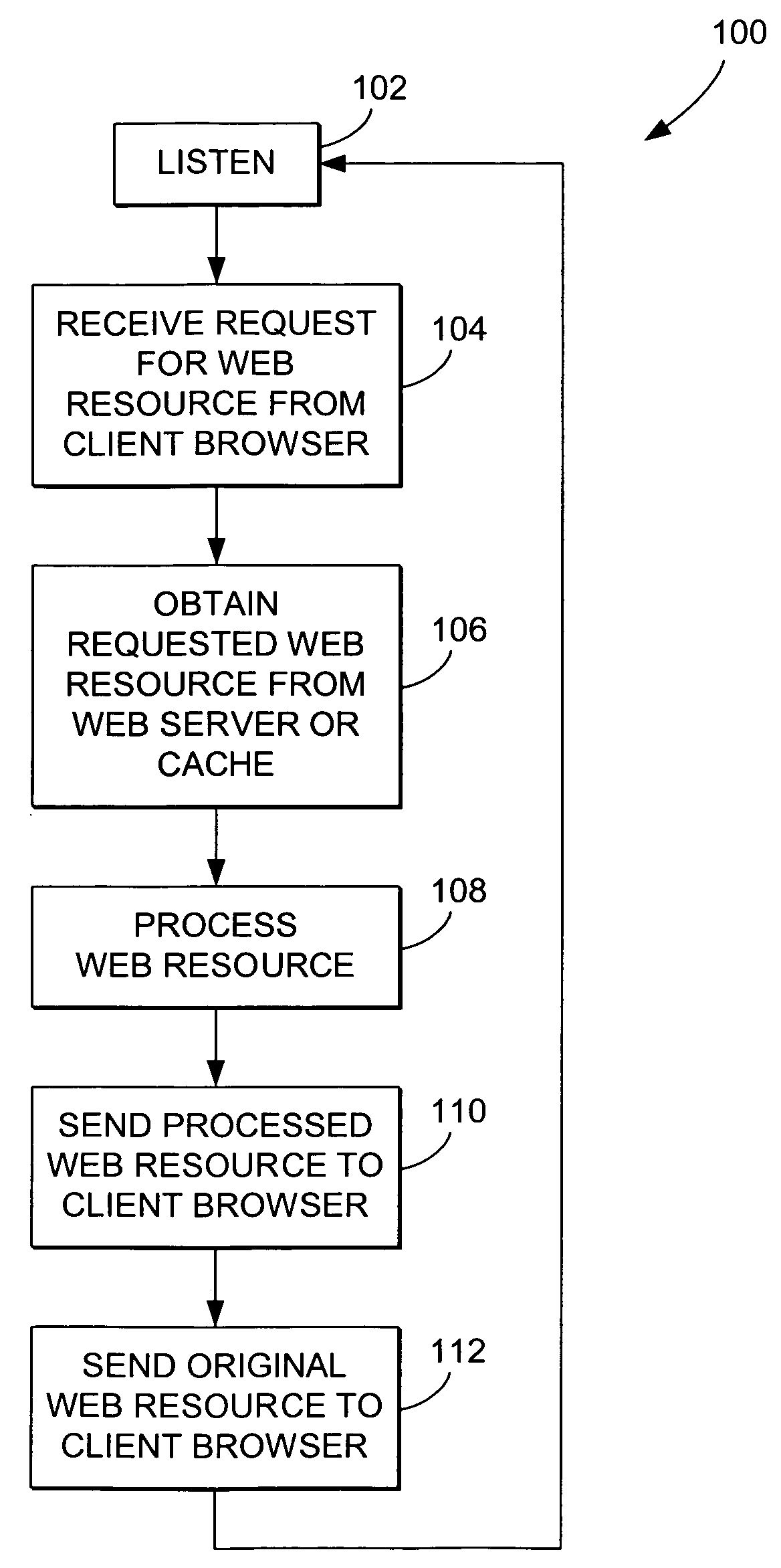

Web page source file transfer system and method

A method for transmitting web page source data over a computer network. The method typically includes receiving a request for the web page source data from a remote client. The web page source data contains renderable and non-renderable data. The request is received at an acceleration device positioned on the computer network intermediate the web page source data and an associated web server. The method further includes filtering at least a portion of the non-renderable data from the requested web page source data, thereby creating modified web page source data, and sending the modified web page source data to the remote client. The non-renderable data is selected from the group consisting of whitespace, comments, hard returns, meta tags, keywords configured to be interpreted by a search engine, and commands not interpretable by the remote client.

Owner:JUNIPER NETWORKS INC
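
Filtering non-renderable data out of web page source, as in the abstract above, can be sketched with a few regular expressions. This is a naive illustration, not the patented method: the function name `strip_non_renderable` is hypothetical, only comments and whitespace are handled, and whitespace-sensitive content such as `<pre>` blocks or inline scripts would need special treatment.

```python
import re

COMMENT = re.compile(r"<!--.*?-->", re.DOTALL)   # HTML comments
BETWEEN_TAGS = re.compile(r">\s+<")              # whitespace and hard returns between tags
WHITESPACE_RUN = re.compile(r"\s+")              # remaining runs of whitespace


def strip_non_renderable(html: str) -> str:
    """Remove comments and redundant whitespace from page source before sending it."""
    html = COMMENT.sub("", html)
    html = BETWEEN_TAGS.sub("><", html)
    html = WHITESPACE_RUN.sub(" ", html)
    return html.strip()


page = "<html>\n  <!-- note -->\n  <body>  <p>Hi   there</p>\n</body>\n</html>\n"
smaller = strip_non_renderable(page)
```

For the sample page this yields `<html><body><p>Hi there</p></body></html>`, which renders the same while using fewer bytes on the wire.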

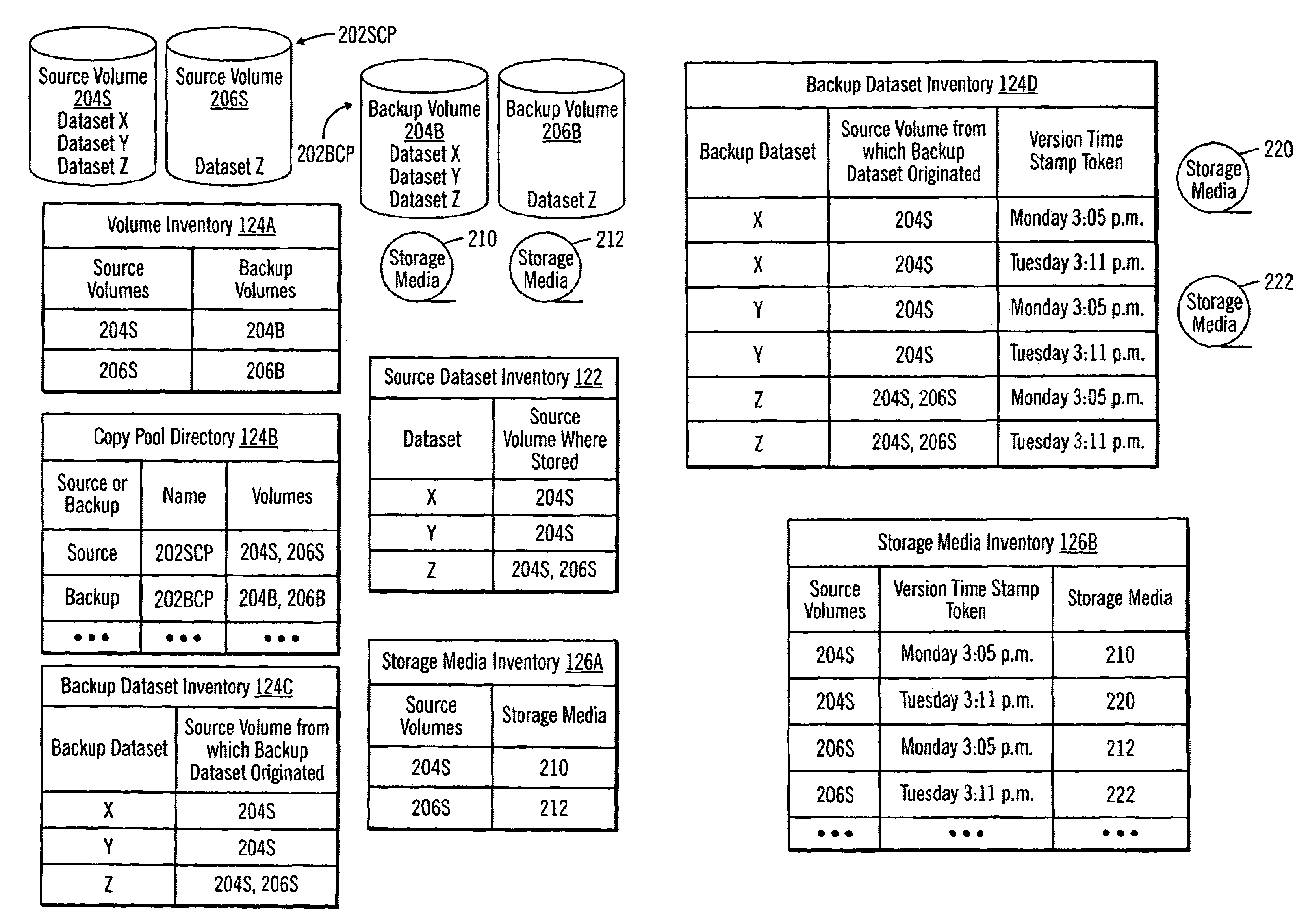

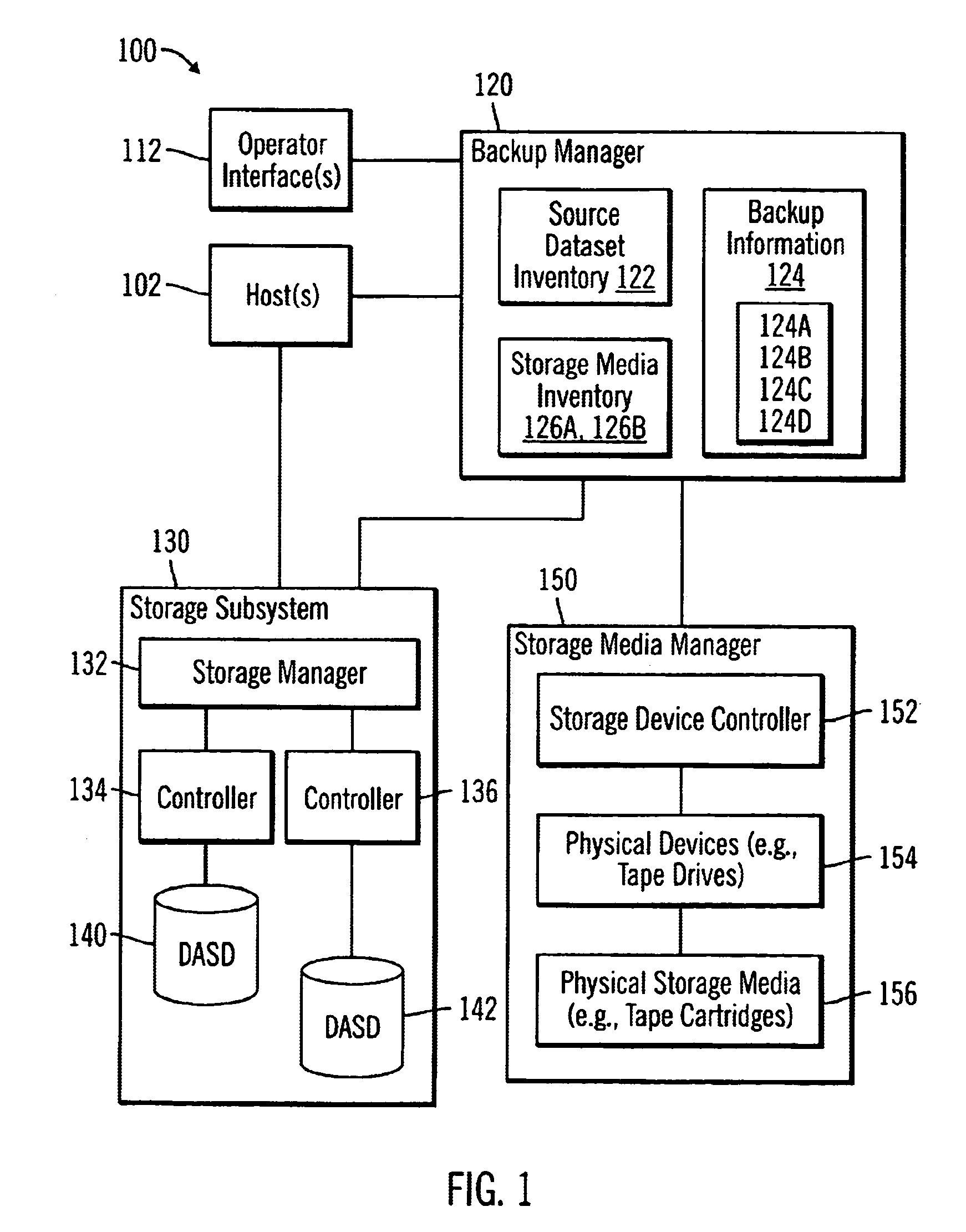

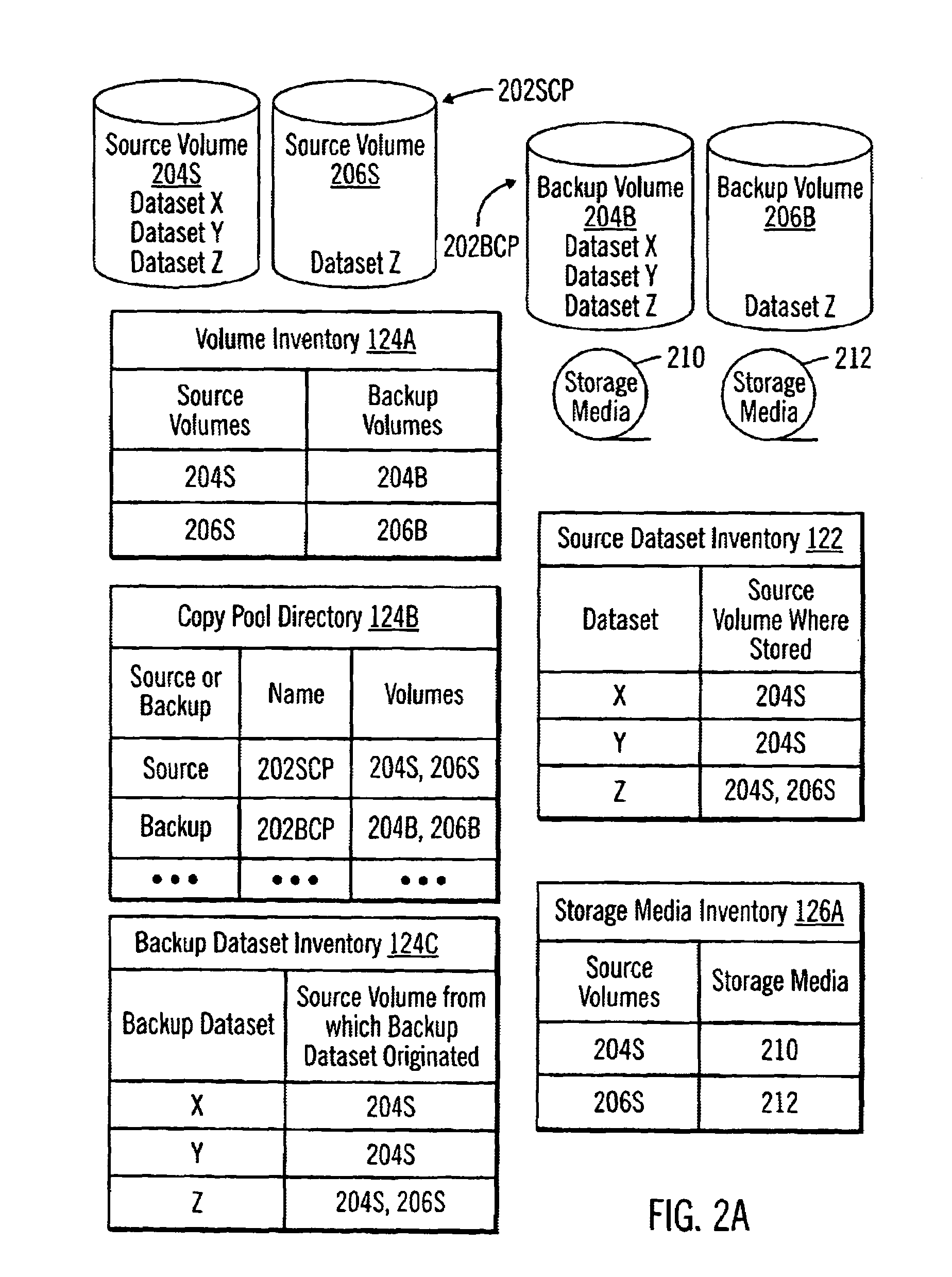

Method, system, and program for data backup

Active | US6959369B1 | Eliminate need | Memory loss protection | Error detection/correction | Data set | Application software

Disclosed is a system, method, and program for data backup. A backup copy of source data is created. A backup dataset inventory is created when the backup copy is created. The backup dataset inventory includes a backup dataset identifier and an originating source volume identifier for each dataset of the source data. The backup copy is copied to a storage medium. A storage media inventory is created when copying the backup copy to the storage medium. The storage media inventory includes the originating source volume identifier and a storage media identifier for each dataset of the source data. This single backup scheme eliminates having to issue both image copies for individual dataset recovery and separate full volume dumps for recovery of failed physical volumes or of an entire application.

Owner:GOOGLE LLC

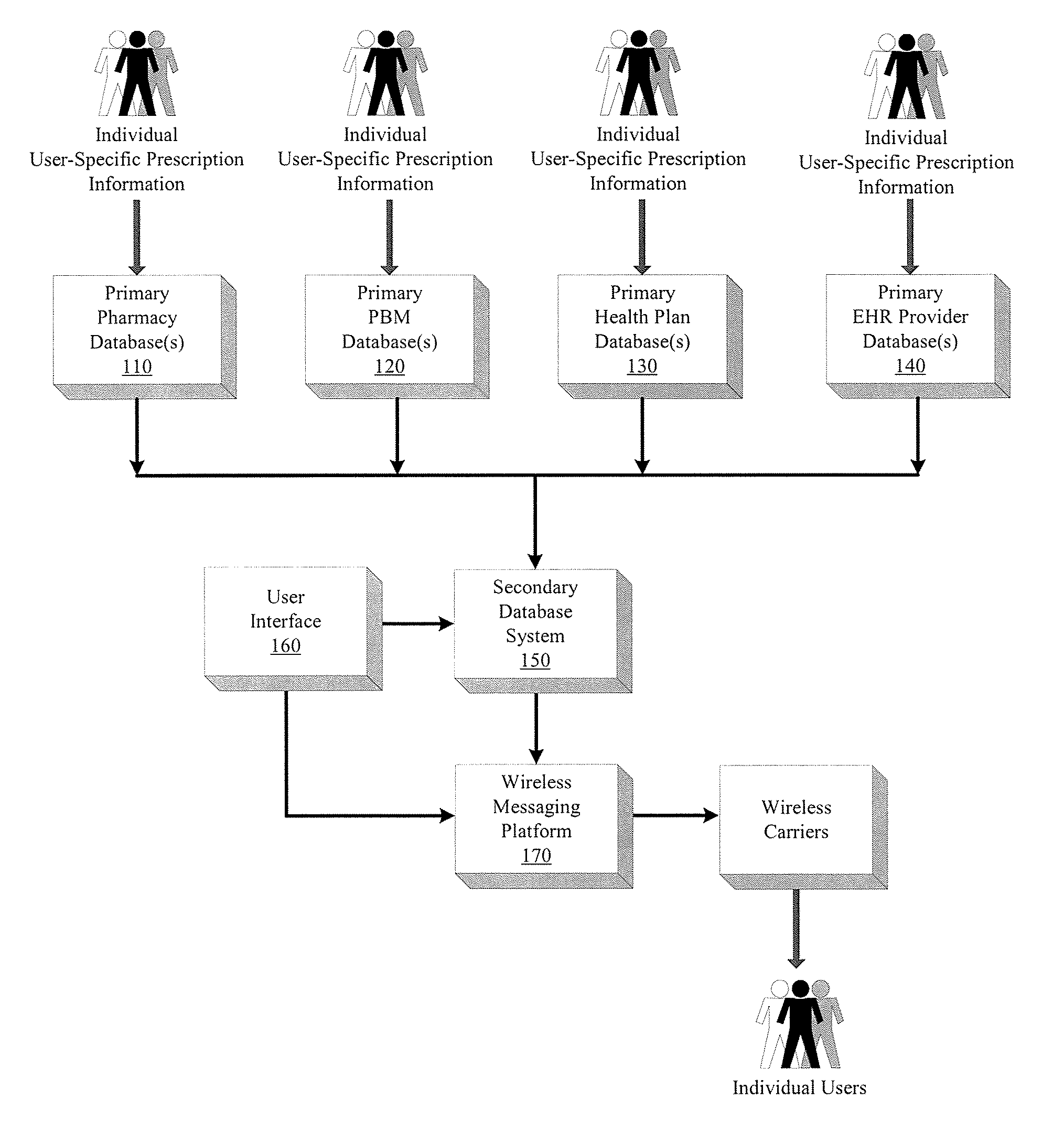

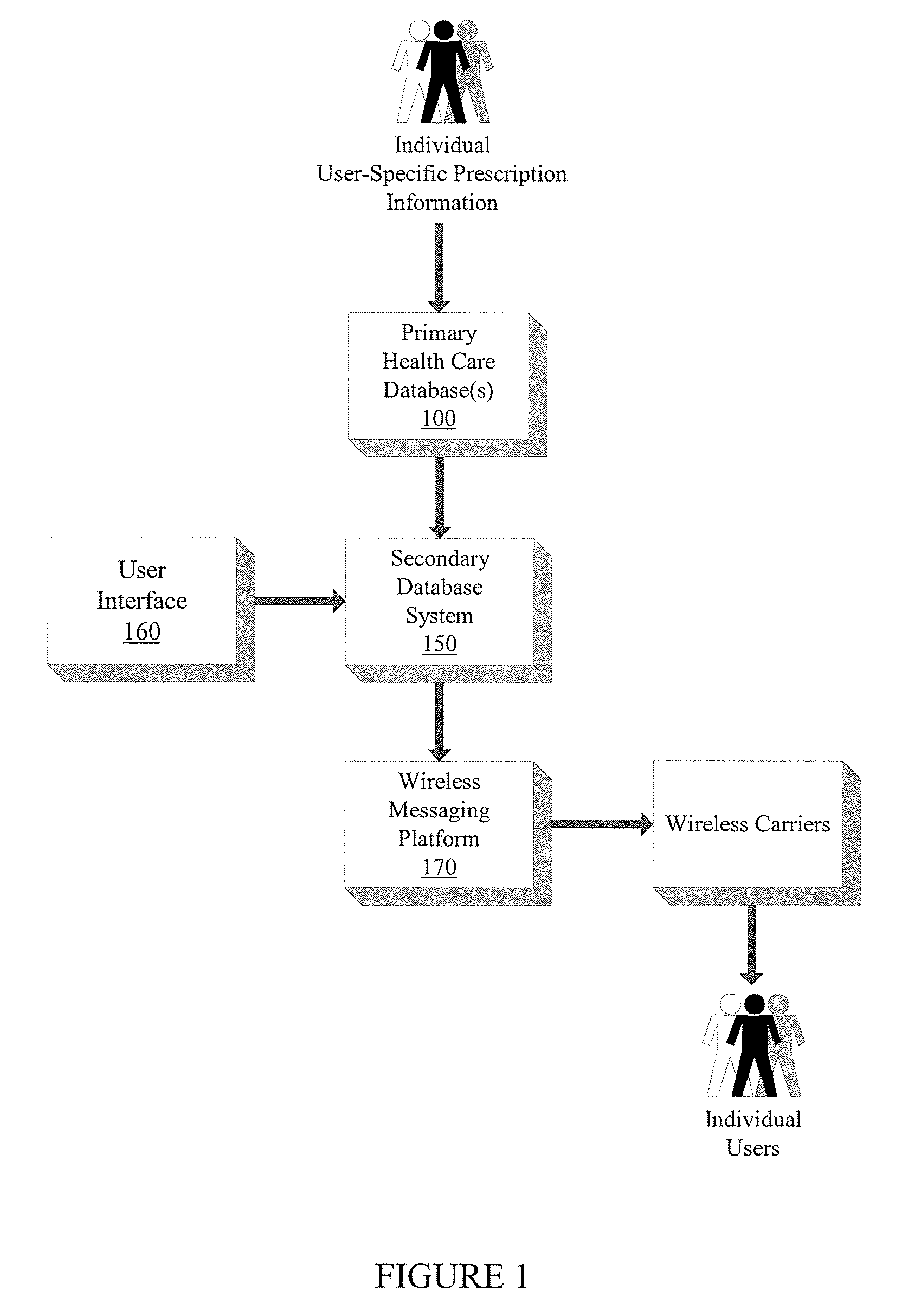

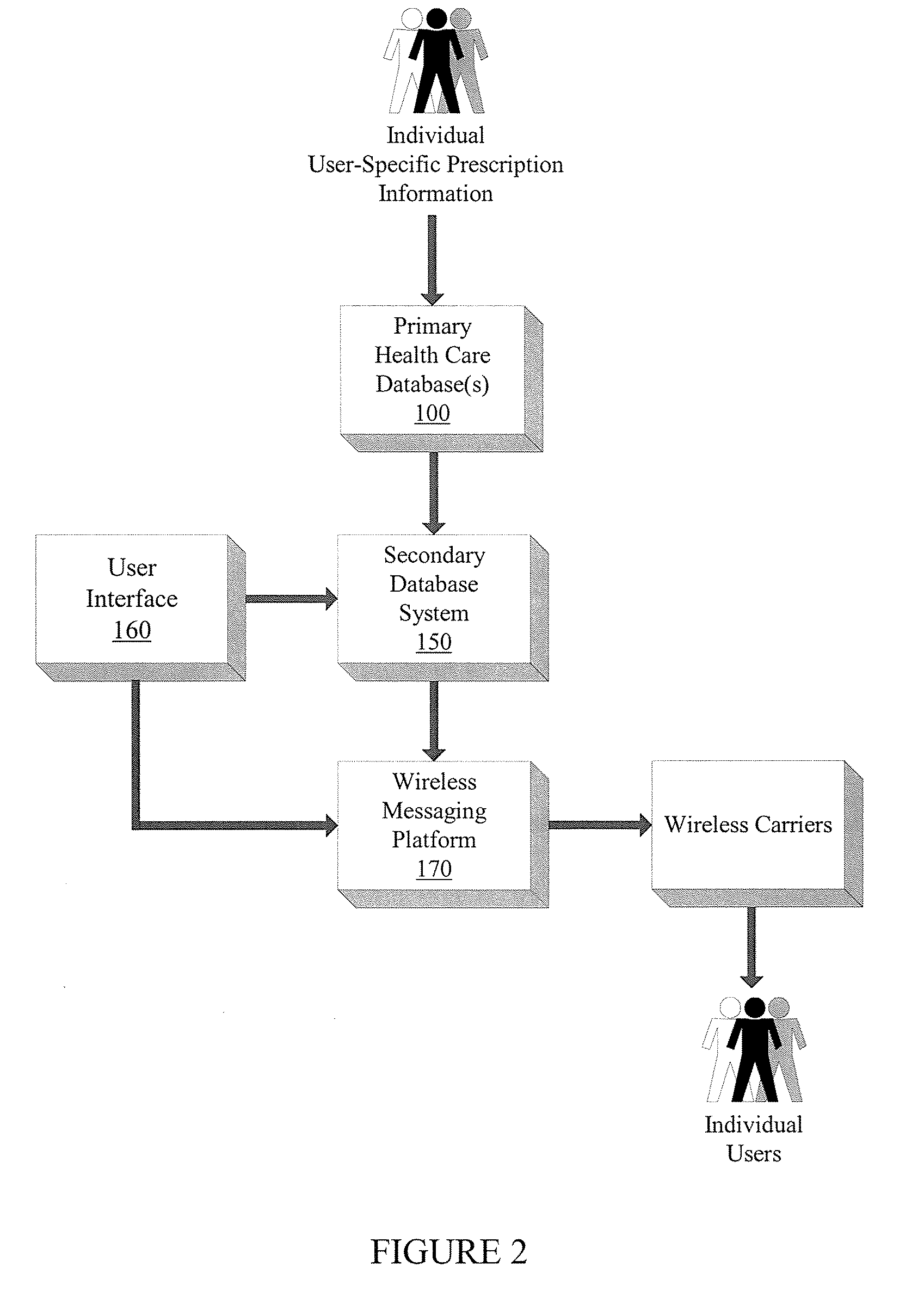

Integrated prescription management and compliance system

A system and method for prescription therapy management and compliance are provided. The system framework integrates primary databases from pharmacies, pharmacy benefit managers (PBMs), health plans, and EHR providers with a secondary database system, user interface, and wireless messaging platform, in order to extract and aggregate user-specific prescription data and make it available to users through a personalized account and as a part of a wireless prescription reminder service. The system helps users access, aggregate, manage, update, automate, and schedule the prescriptions they are currently taking. Users receive real-time wireless prescription dosing reminders based on their prescribed drug, dosage, and other indications, tailored to each individual's daily schedule. These wireless dosing reminders (i) include additional instructional content such as pill images, compliance tools, and Web or WAP drug links, (ii) are automatically scheduled and transmitted based on the user's prescription source data, in conjunction with selections indicated on their personal account, and (iii) are transmitted via SMS, EMS, MMS, WAP, email, and other formats to the user's mobile phone, PDA, or other wireless device. The integrated interface and secondary database system enable a number of additional system features which focus on compliance and management of the underlying source prescription data.

Owner:LAWLESS OLIVER CHARLES

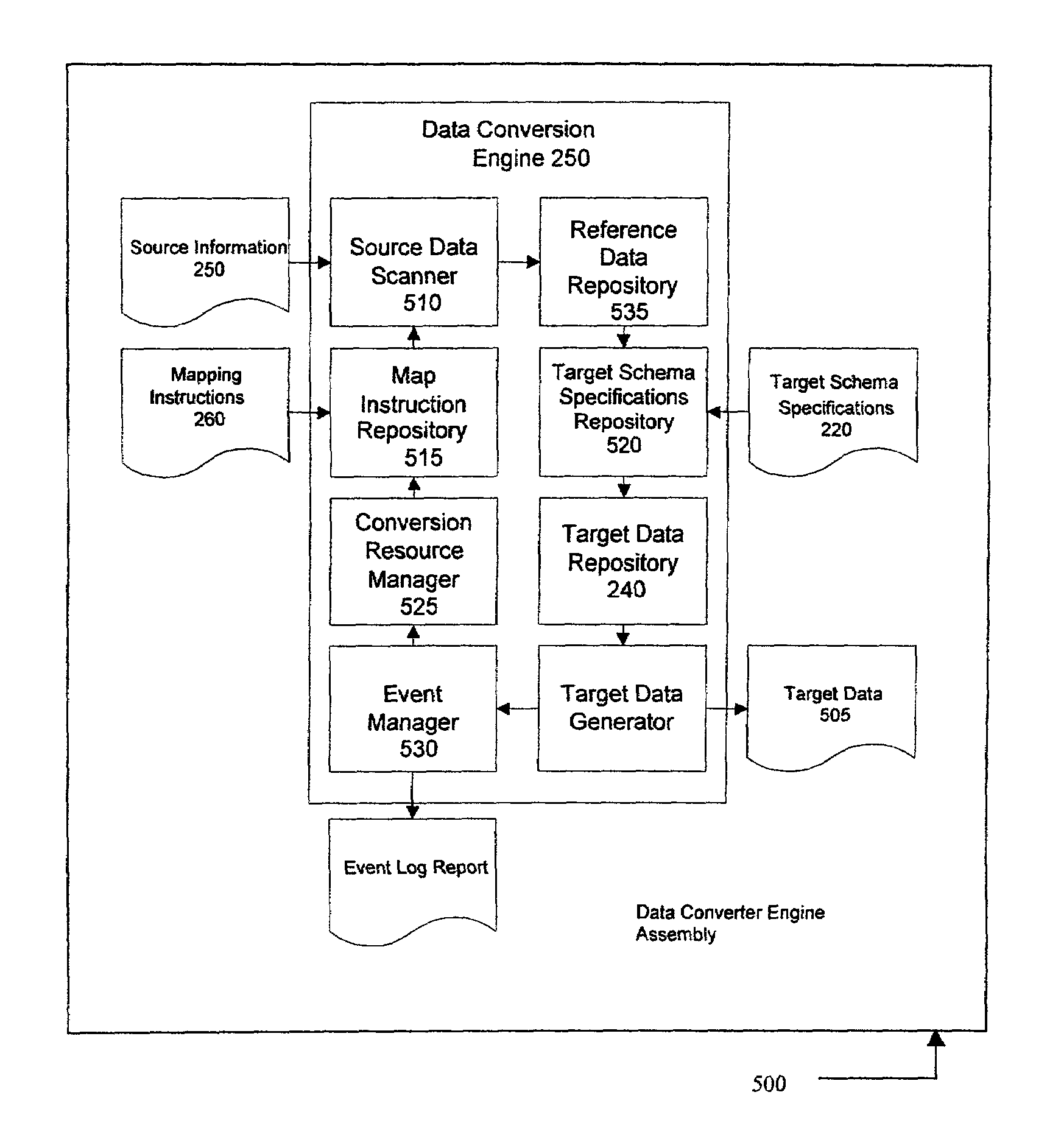

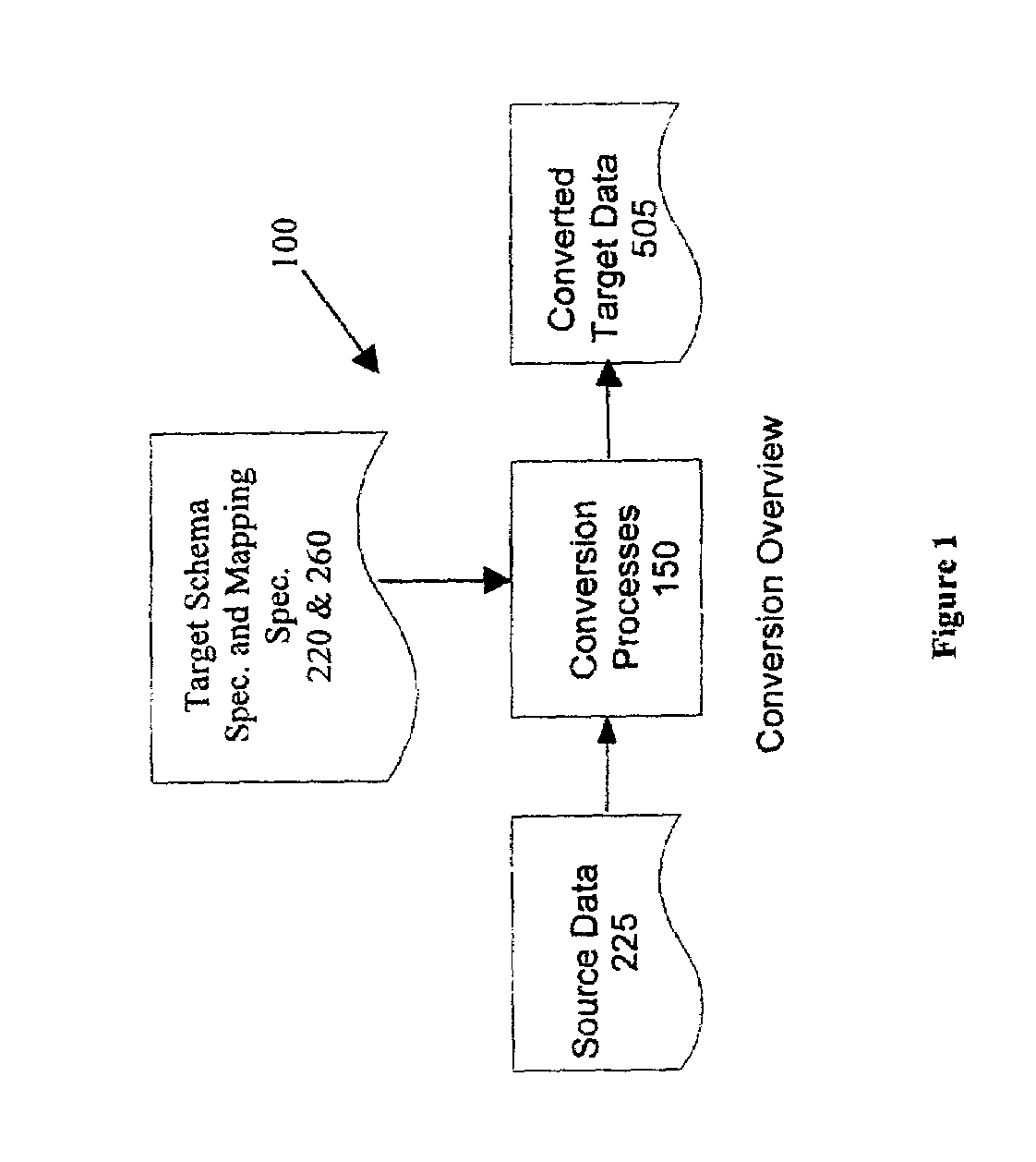

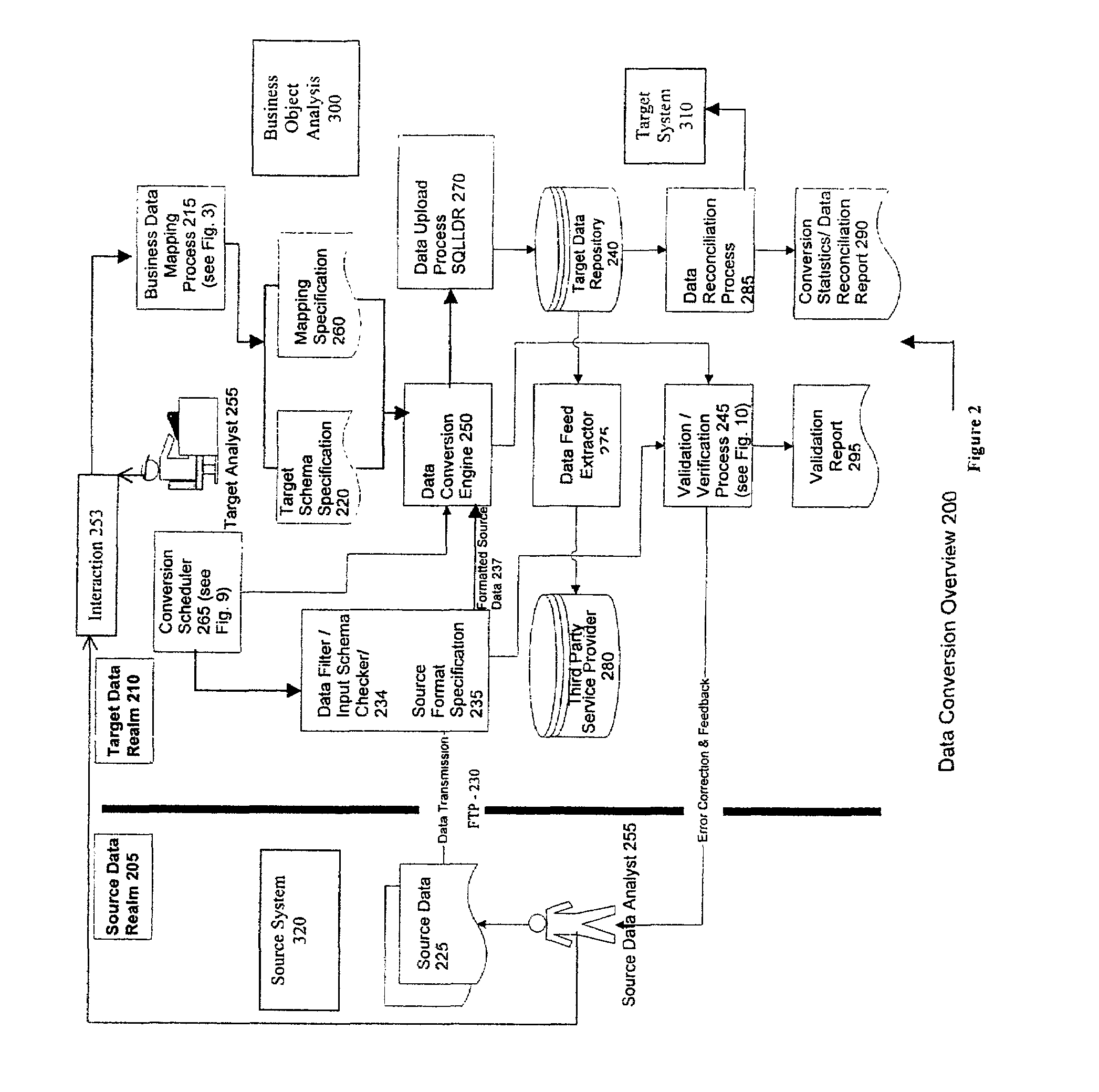

System and method for database conversion

Inactive | US6996589B1 | Improve performance | Flexibly | Data processing applications | Digital data information retrieval | Data feed | Extensible markup

A database conversion engine comprising a method and system to convert business information residing on one system to another system. A generic, extensible, scalable conversion engine may perform conversion of source data to target data as per mapping instructions/specifications, target schema specifications, and a source extract format specification without the need for code changes to the engine itself for subsequent conversions. A scheduler component may implement a scalable architecture capable of voluminous data crunching operations. Multi-level validation of the incoming source data may also be provided by the system. A mechanism may provide data feeds to third-party systems as a part of business data conversion. An English-like, XML-based (extensible markup language), user-friendly, extensible data markup language may be further provided to specify the mapping instructions directly or via a GUI (graphical user interface). The system and method employ a business-centric approach to data conversion that determines the basic business object that is the building block of a given conversion. This approach facilitates identification of the basic minimum required data for conversion, leading to efficiencies in volume of data, performance, validations, reusability, and conversion turnover time.

Owner:NETCRACKER TECH SOLUTIONS